Skeleton-Based Human Action Recognition
Wei Hsu

Outline
• Introduction
• RNN-based approach
  – Spatio-Temporal LSTM with Trust Gate
• CNN-based approaches
  – Joint Trajectory Maps
  – Joint Distance Maps

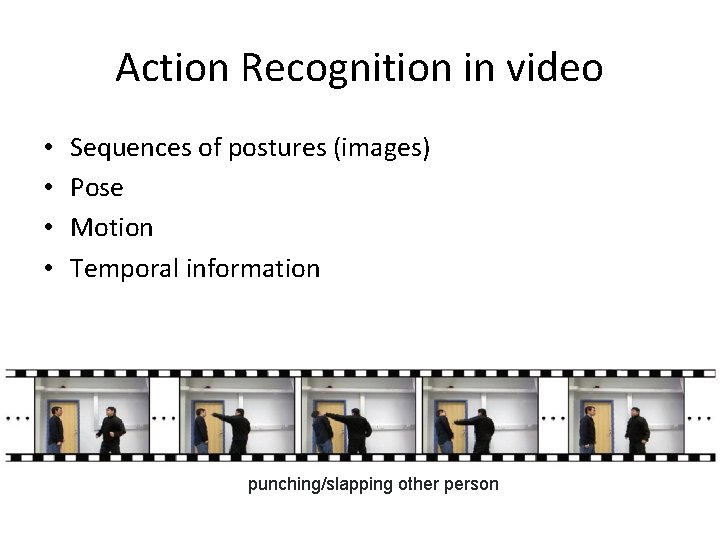

Action Recognition in Video
• Sequences of postures (images)
• Pose, motion, and temporal information
(Figure example: punching/slapping the other person)

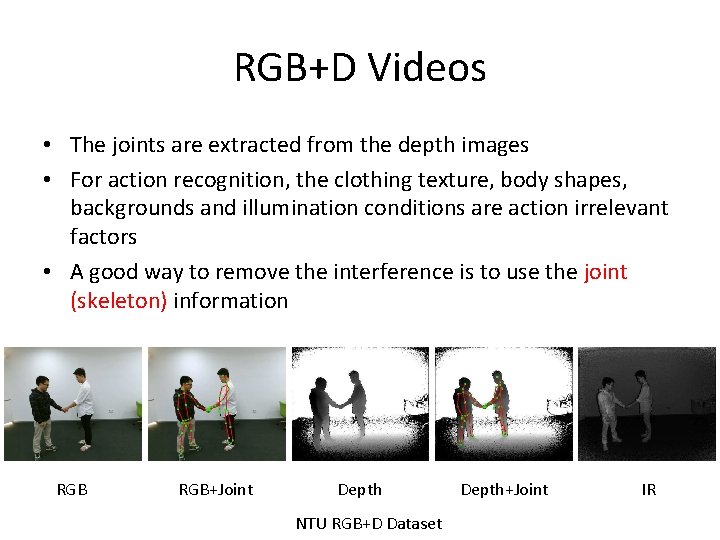

RGB+D Videos
• The joints are extracted from the depth images
• For action recognition, clothing texture, body shape, background, and illumination conditions are action-irrelevant factors
• A good way to remove this interference is to use only the joint (skeleton) information
(Figure: NTU RGB+D dataset modalities: RGB+joints, depth, depth+joints, IR)

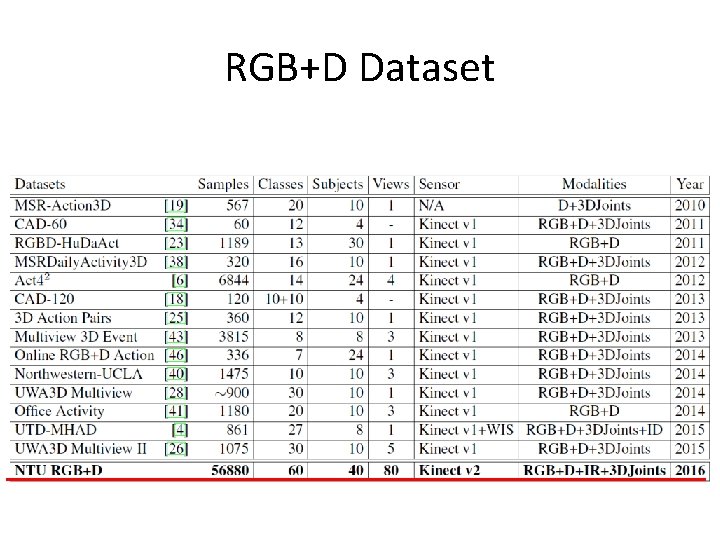

RGB+D Dataset

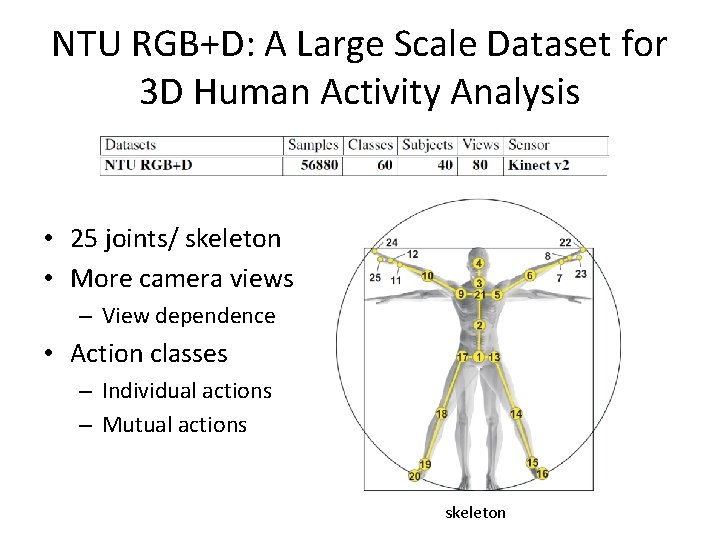

NTU RGB+D: A Large Scale Dataset for 3D Human Activity Analysis
• 25 joints per skeleton
• Multiple camera views
  – View dependence
• Action classes
  – Individual actions
  – Mutual actions

NTU RGB+D: Drink
• Isolated action

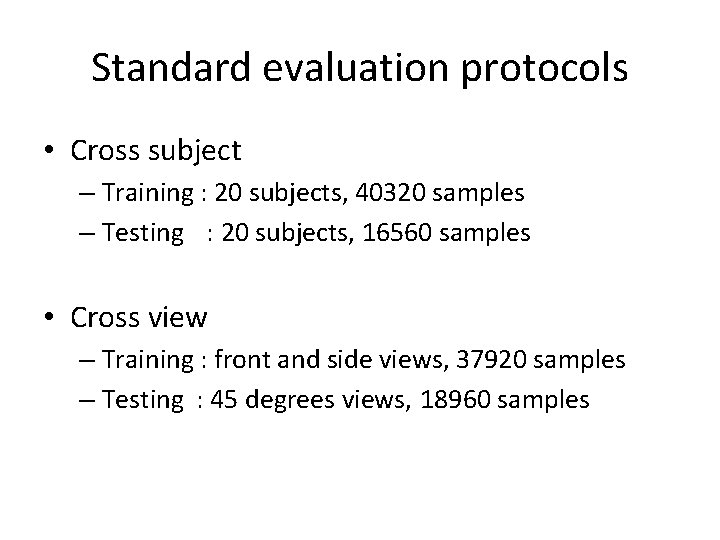

Standard evaluation protocols
• Cross subject
  – Training: 20 subjects, 40,320 samples
  – Testing: 20 subjects, 16,560 samples
• Cross view
  – Training: front and side views, 37,920 samples
  – Testing: 45-degree views, 18,960 samples
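Both protocols reduce to a filter over sample names. The sketch below assumes the NTU RGB+D file-naming scheme `SsssCcccPpppRrrrAaaa` (setup, camera, performer, replication, action), that the cross-view split uses cameras 2 and 3 for training and camera 1 for testing, and the list of 20 training performer IDs as it is commonly used in public code; all three should be checked against [1] before use. The helper names are hypothetical.

```python
import re

# Assumed NTU RGB+D naming scheme: S<setup>C<camera>P<performer>R<replication>A<action>
NAME_RE = re.compile(r"S(\d{3})C(\d{3})P(\d{3})R(\d{3})A(\d{3})")

# Cross subject: the 20 training performer IDs (assumed list; verify against [1]).
TRAIN_SUBJECTS = {1, 2, 4, 5, 8, 9, 13, 14, 15, 16, 17, 18, 19, 25, 27, 28, 31, 34, 35, 38}
# Cross view: cameras 2 and 3 (front and side views) train; camera 1 (45-degree views) tests.
TRAIN_CAMERAS = {2, 3}


def parse_sample_name(name: str) -> dict:
    """Split an NTU-style sample name into its integer fields."""
    match = NAME_RE.search(name)
    if match is None:
        raise ValueError(f"not an NTU-style sample name: {name!r}")
    setup, camera, performer, replication, action = map(int, match.groups())
    return {"setup": setup, "camera": camera, "performer": performer,
            "replication": replication, "action": action}


def is_training_sample(name: str, protocol: str) -> bool:
    """Return True if the sample belongs to the training split of the given protocol."""
    info = parse_sample_name(name)
    if protocol == "cross_subject":
        return info["performer"] in TRAIN_SUBJECTS
    if protocol == "cross_view":
        return info["camera"] in TRAIN_CAMERAS
    raise ValueError(f"unknown protocol: {protocol!r}")
```

Because each name encodes both the camera and the performer, the same file list can be split under either protocol without extra metadata.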

Why RNN?
• RNNs are powerful at modeling the temporal relationships in sequential data
• However, RNNs tend to overemphasize the temporal information, especially when the training data is insufficient, which leads to over-fitting

Spatio-Temporal LSTM with Trust Gates for 3D Human Action Recognition Jun Liu, Amir Shahroudy, Dong Xu, and Gang Wang

Motivation
• RNN methods mainly model long-term contextual information in the temporal domain, but there is also strong dependency between joints in the spatial domain
  – Spatio-Temporal LSTM (ST-LSTM)
• Handling noise and occlusion in 3D skeleton data
  – Trust gate

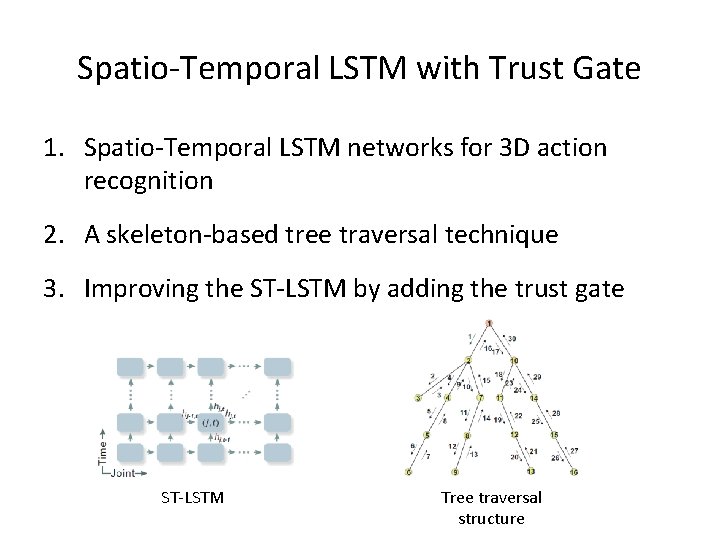

Spatio-Temporal LSTM with Trust Gate
1. Spatio-temporal LSTM networks for 3D action recognition
2. A skeleton-based tree traversal technique
3. Improving the ST-LSTM by adding a trust gate
(Figure: ST-LSTM; tree traversal structure)

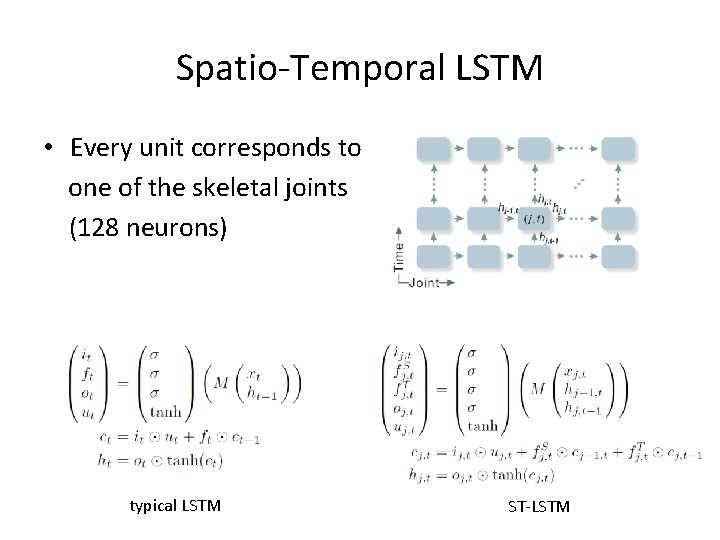

Spatio-Temporal LSTM
• Each unit corresponds to one skeletal joint at one time step (128 neurons per unit)
(Figure: typical LSTM vs. ST-LSTM)
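As we read [2], an ST-LSTM unit at joint j and frame t receives context from two directions, from the previous joint in the same frame (j−1, t) and from the same joint in the previous frame (j, t−1), and therefore has two forget gates. The NumPy sketch below illustrates that recurrence. The packed weight matrix `W`, the function names, and the simple frame-by-frame unrolling are our assumptions, not the authors' code; the paper uses 128 neurons per unit, while the example keeps dimensions small.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def st_lstm_cell(x, h_spatial, c_spatial, h_temporal, c_temporal, W, b):
    """One ST-LSTM step for joint j at frame t.

    x:                      input feature of joint j at frame t, shape (d,)
    h_spatial, c_spatial:   hidden/cell state of the previous joint (j-1, t), shape (H,)
    h_temporal, c_temporal: hidden/cell state of the same joint at frame t-1, shape (H,)
    W, b:                   packed weights (5H, d + 2H) and bias (5H,) for all gates
    """
    H = h_spatial.shape[0]
    z = W @ np.concatenate([x, h_spatial, h_temporal]) + b
    i = sigmoid(z[0:H])              # input gate
    f_s = sigmoid(z[H:2 * H])        # spatial forget gate (context from joint j-1)
    f_t = sigmoid(z[2 * H:3 * H])    # temporal forget gate (context from frame t-1)
    o = sigmoid(z[3 * H:4 * H])      # output gate
    u = np.tanh(z[4 * H:5 * H])      # candidate input
    c = i * u + f_s * c_spatial + f_t * c_temporal
    h = o * np.tanh(c)
    return h, c


def st_lstm_layer(X, W, b, H):
    """Unroll the cell over a (frames, joints, features) array, frame by frame.

    Joints are visited in the array's order (a joint chain, or a tree-traversal
    sequence if X has been reordered that way). Missing contexts are zeros.
    """
    T, J, _ = X.shape
    h = np.zeros((T, J, H))
    c = np.zeros((T, J, H))
    zero = np.zeros(H)
    for t in range(T):
        for j in range(J):
            hs, cs = (h[t, j - 1], c[t, j - 1]) if j > 0 else (zero, zero)
            ht, ct = (h[t - 1, j], c[t - 1, j]) if t > 0 else (zero, zero)
            h[t, j], c[t, j] = st_lstm_cell(X[t, j], hs, cs, ht, ct, W, b)
    return h
```

Compared with a standard LSTM, the only structural change is the second (spatial) context, which adds a forget gate and a second memory term to the cell update.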

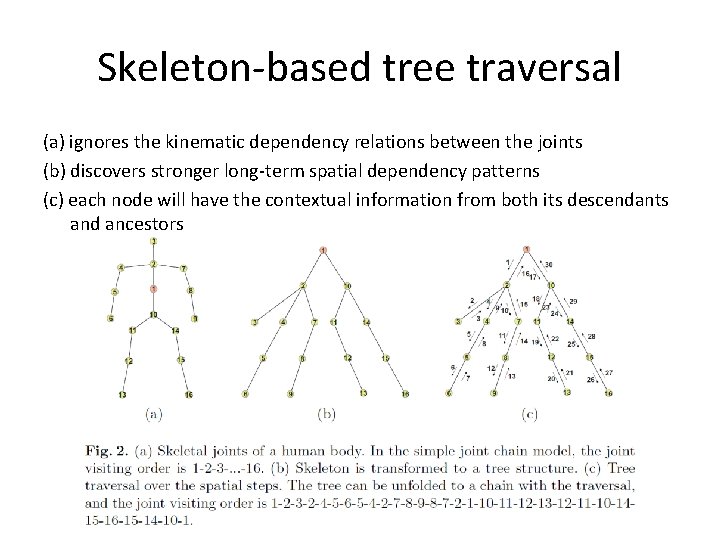

Skeleton-Based Tree Traversal
(a) A simple joint chain ignores the kinematic dependency relations between the joints
(b) The tree structure discovers stronger long-term spatial dependency patterns
(c) With the traversal, each node receives contextual information from both its descendants and its ancestors
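The traversal in (c) can be produced by a depth-first walk that re-emits a joint every time the walk returns to it from a child, so the network revisits each joint after seeing its descendants. The 16-joint tree below is a hypothetical numbering chosen for illustration; the actual joint layout and visiting order used in [2] should be taken from the paper.

```python
def tree_traversal(children: dict, root: int) -> list:
    """Depth-first visiting order that returns to the parent after each child subtree."""
    order = [root]
    for child in children.get(root, []):
        order += tree_traversal(children, child)
        order.append(root)  # walk back up to the parent
    return order


# Hypothetical 16-joint skeleton tree (parent -> children), rooted at joint 1.
SKELETON_TREE = {
    1: [2, 10],
    2: [3, 4, 7],
    4: [5], 5: [6],
    7: [8], 8: [9],
    10: [11, 14],
    11: [12], 12: [13],
    14: [15], 15: [16],
}

visiting_order = tree_traversal(SKELETON_TREE, 1)
```

Every edge is walked once downward and once upward, so a tree with N joints yields a sequence of 2N − 1 steps (31 for 16 joints); reordering the per-frame joint features in this order is what lets a chain-shaped ST-LSTM see both ancestors and descendants.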

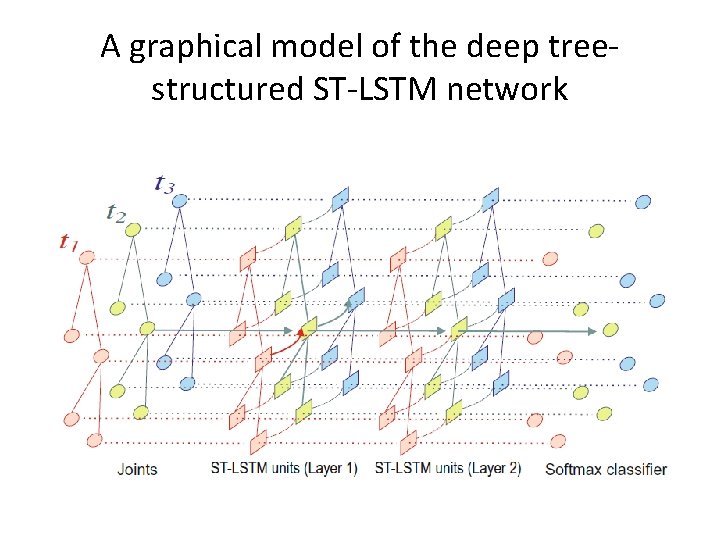

A graphical model of the deep tree-structured ST-LSTM network

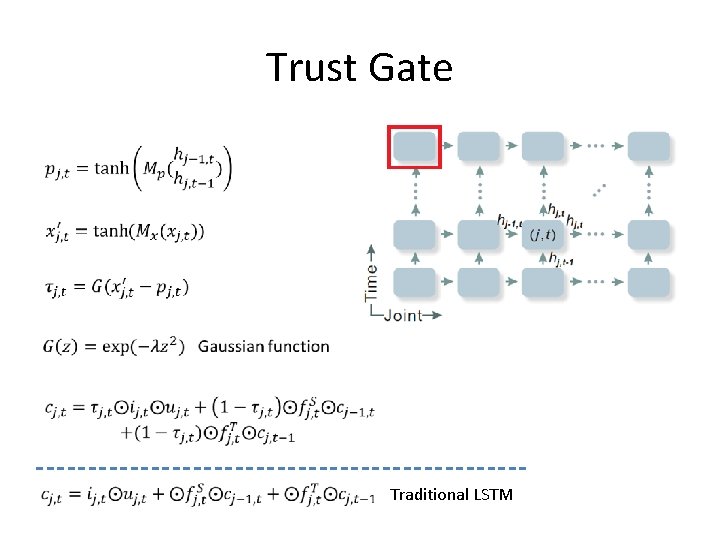

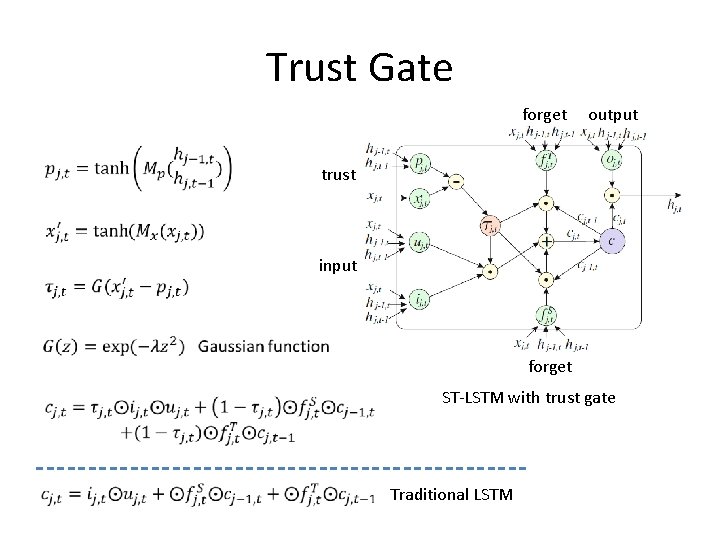

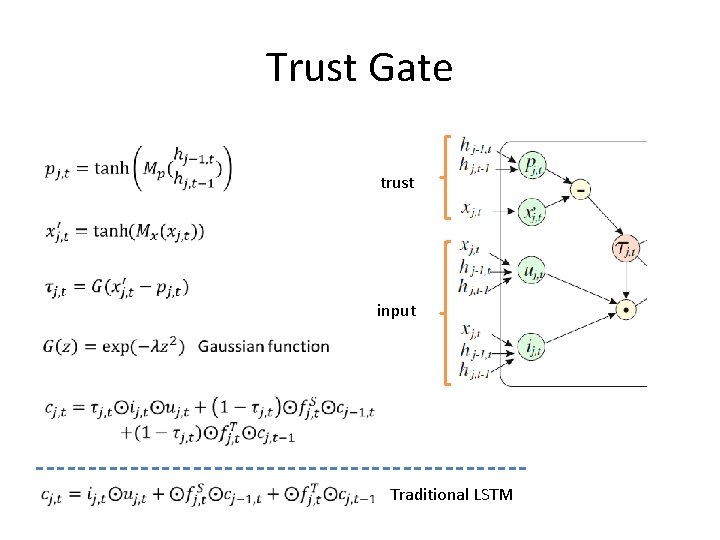

Trust Gate
(Figure: a traditional LSTM compared with the ST-LSTM with trust gate, whose unit has an input gate, two forget gates, an output gate, and a trust gate)
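As we understand [2], the trust gate compares the current input with a prediction of it made from the spatial and temporal context: the context predicts p = tanh(M_p [h_{j−1,t}; h_{j,t−1}]), the input is mapped to x' = tanh(M_x x_{j,t}), and the gate is τ = exp(−λ (p − x')²). A large prediction error (a likely noisy or occluded joint) gives a small τ, so the cell relies more on its memory than on the new input. The sketch below shows only that gate and the modified cell update; the matrix names, the default λ = 0.5, and the function names are assumptions for illustration.

```python
import numpy as np


def trust_gate(x, h_spatial, h_temporal, M_p, M_x, lam=0.5):
    """Trust value in (0, 1] per hidden unit; close to 1 when the context predicts x well."""
    # Prediction of the (mapped) input from the spatial and temporal context.
    p = np.tanh(M_p @ np.concatenate([h_spatial, h_temporal]))
    # Actual input mapped into the same H-dimensional space.
    x_mapped = np.tanh(M_x @ x)
    # Gaussian of the prediction error: large error -> low trust.
    return np.exp(-lam * (p - x_mapped) ** 2)


def trusted_cell_state(tau, i, u, f_s, c_spatial, f_t, c_temporal):
    """Cell update in which tau weighs the new input against the two memory terms."""
    return tau * i * u + (1.0 - tau) * (f_s * c_spatial + f_t * c_temporal)
```

With τ = 1 the update keeps only the gated new input, and as τ approaches 0 it falls back to the spatial and temporal memories, which is the intended behaviour for unreliable joints.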

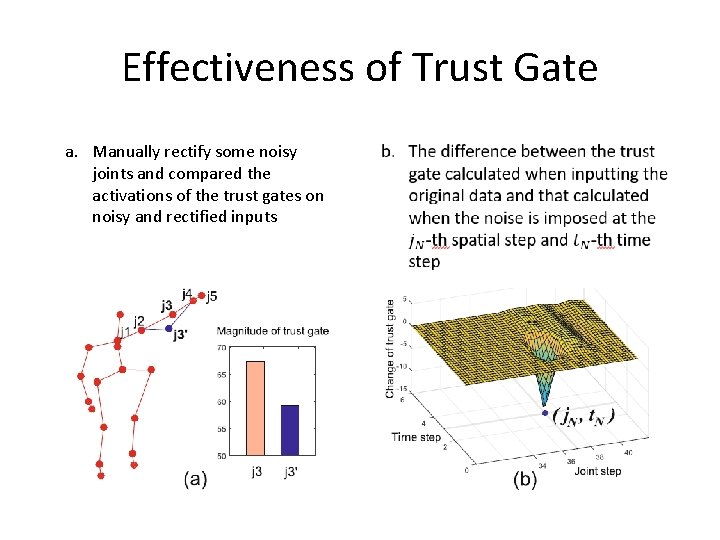

Effectiveness of Trust Gate
a. Some noisy joints were manually rectified, and the activations of the trust gates on the noisy and the rectified inputs were compared

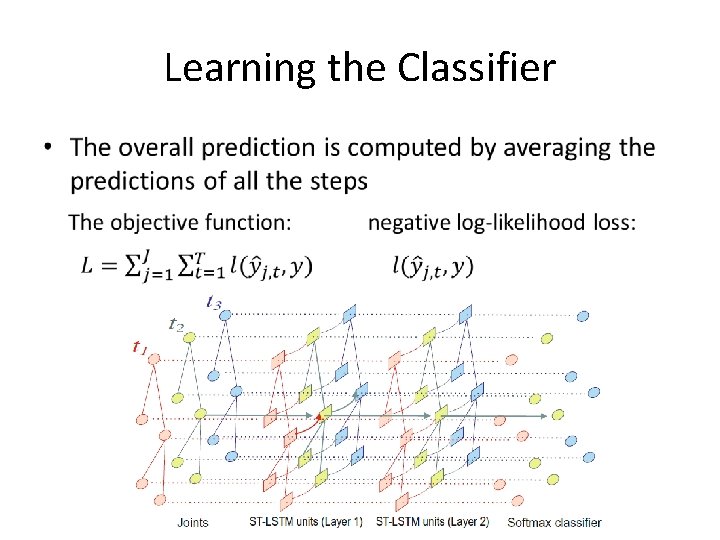

Learning the Classifier

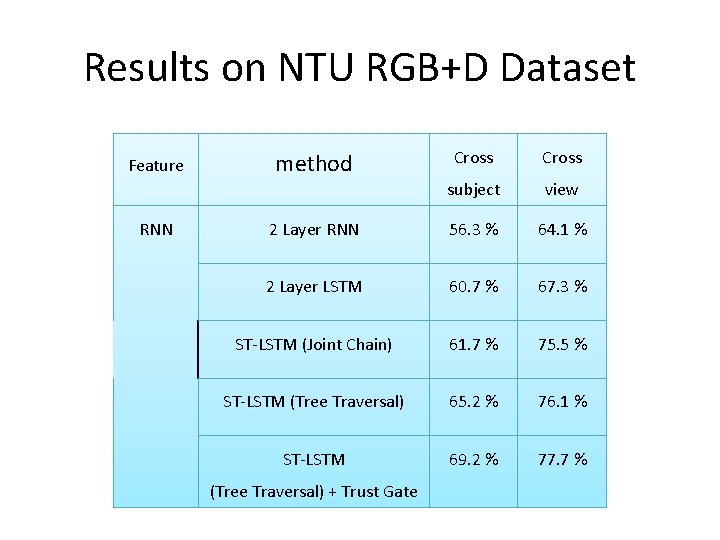

Results on NTU RGB+D Dataset

| Method | Feature | Cross Subject | Cross View |
|---|---|---|---|
| 2 Layer RNN | RNN | 56.3% | 64.1% |
| 2 Layer LSTM | RNN | 60.7% | 67.3% |
| ST-LSTM (Joint Chain) | RNN | 61.7% | 75.5% |
| ST-LSTM (Tree Traversal) | RNN | 65.2% | 76.1% |
| ST-LSTM (Tree Traversal) + Trust Gate | RNN | 69.2% | 77.7% |

Why CNN?
• CNNs can automatically explore distinctive local patterns in images, so encoding a spatio-temporal skeleton sequence as images is an effective way to exploit them
• However, CNN-based methods may lose some important information when the 3D skeleton is projected into 2D images

Action Recognition Based on Joint Trajectory Maps Using Convolutional Neural Networks Pichao Wang, Zhaoyang Li, Yonghong Hou, Wanqing Li

Motivation
• To propose a compact, effective, yet simple method that encodes the spatio-temporal information carried in 3D skeleton sequences into multiple 2D images
1. The joints or groups of joints should be distinct in the image so that the spatial information of the joints is well preserved
2. The image should effectively encode the temporal evolution, i.e. the trajectories of the joints, including the direction and speed of joint motions
3. The image should be able to encode the difference in motion among different joints or body parts, to reflect how the joints are synchronized during the action

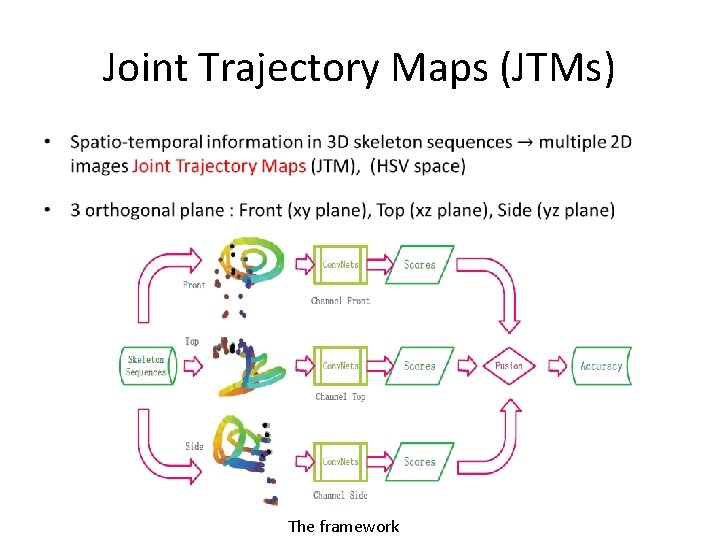

Joint Trajectory Maps (JTMs)
• The framework

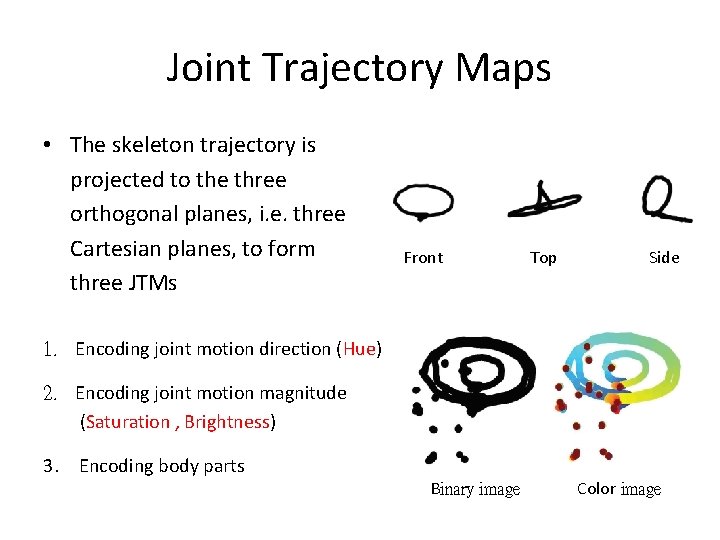

Joint Trajectory Maps
• The skeleton trajectory is projected onto the three orthogonal Cartesian planes to form three JTMs (front, top, side)
1. Encoding joint motion direction (hue)
2. Encoding joint motion magnitude (saturation, brightness)
3. Encoding body parts
(Figure: binary image vs. color image)
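Before any color is added, a JTM is a binary image of the joint trajectories projected onto one plane. The sketch below draws, for every joint, the segments between its positions in consecutive frames. The plane-to-axis mapping follows the slide's labels (front xy, top yz, side xz); the 64-pixel resolution, the single scale factor that preserves aspect ratio, and the dense-sampling line drawing are our assumptions rather than details from [4].

```python
import numpy as np

# Plane labels as given on the slide: front = xy, top = yz, side = xz.
PLANES = {"front": (0, 1), "top": (1, 2), "side": (0, 2)}


def draw_segment(img, p, q, value=1):
    """Rasterize the segment p -> q (integer (x, y) pixels) by dense sampling."""
    n = int(max(np.abs(q - p).max(), 1)) + 1
    for s in np.linspace(0.0, 1.0, n):
        x, y = np.round(p + s * (q - p)).astype(int)
        img[y, x] = value


def joint_trajectory_map(seq, plane, size=64):
    """Binary trajectory image of all joints of a (T, J, 3) sequence on one plane."""
    a, b = PLANES[plane]
    pts = seq[:, :, [a, b]]                       # (T, J, 2) projected coordinates
    flat = pts.reshape(-1, 2)
    lo = flat.min(axis=0)
    span = max(float((flat.max(axis=0) - lo).max()), 1e-8)  # one scale keeps aspect ratio
    pix = np.round((pts - lo) / span * (size - 1)).astype(int)
    img = np.zeros((size, size), dtype=np.uint8)
    T, J, _ = pix.shape
    for j in range(J):
        for t in range(1, T):
            draw_segment(img, pix[t - 1, j], pix[t, j])
    return img
```

Calling `joint_trajectory_map(seq, p)` for each of the three planes gives the three binary JTMs; the color encodings on the next slides then replace the constant `value` per segment.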

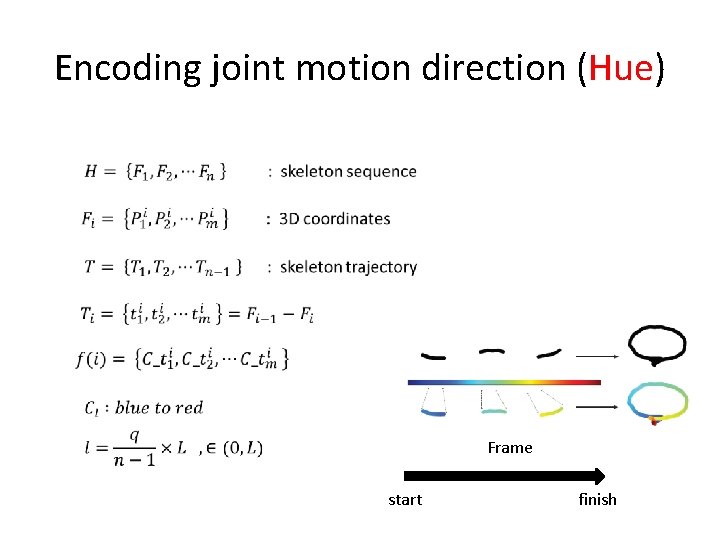

Encoding joint motion direction (hue)
(Figure: the color along each trajectory changes with the frame index, from start to finish)
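Direction can be encoded by letting the hue of each trajectory segment depend on when it occurs, so the color sequence along a trajectory shows where the motion started and where it finished. The sketch below assumes a linear frame-to-hue mapping with full saturation and brightness; the `hue_max` of 0.8 is a hypothetical choice that stops short of 1.0, because hue 1.0 wraps back to red and the start and finish colors would coincide. The exact color sequence used in [4] may differ.

```python
import colorsys


def segment_colors(num_frames, hue_max=0.8):
    """RGB color (floats in [0, 1]) for each of the num_frames - 1 trajectory segments.

    Hue grows linearly from 0 at the first segment to hue_max at the last, so the
    color along a trajectory distinguishes the start from the finish.
    """
    n = num_frames - 1
    colors = []
    for k in range(n):
        hue = hue_max * k / max(n - 1, 1)
        colors.append(colorsys.hsv_to_rgb(hue, 1.0, 1.0))
    return colors
```

Because the hue depends on time rather than on position, two actions that trace the same path in opposite directions produce differently colored maps.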

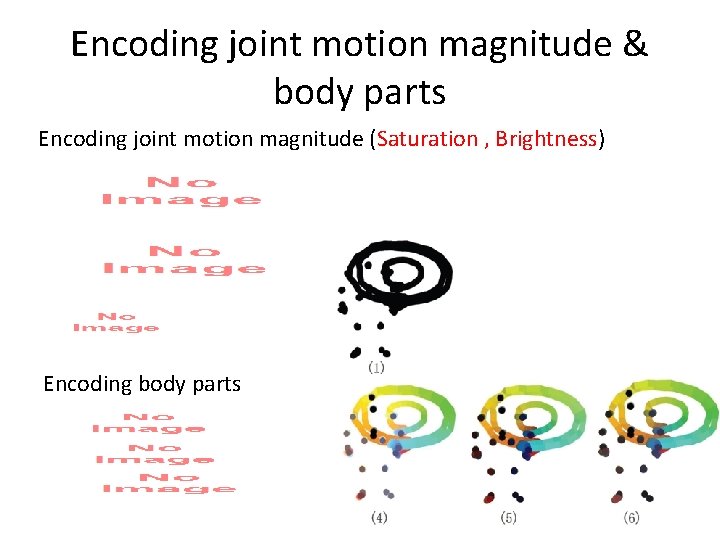

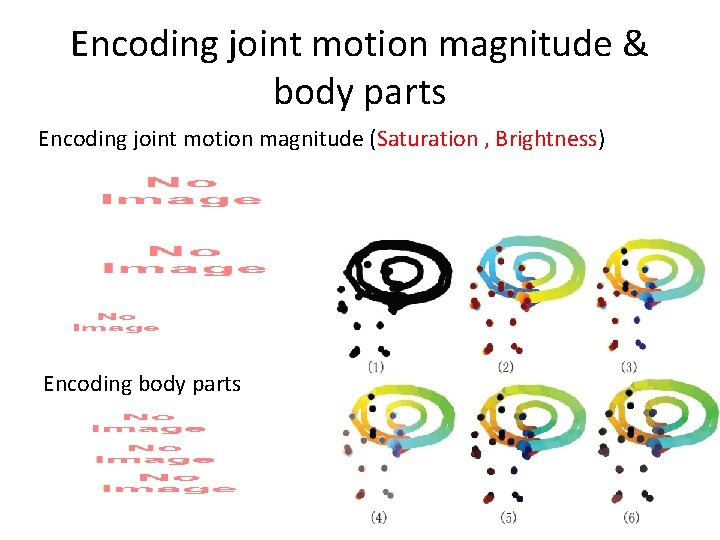

Encoding Joint Motion Magnitude & Body Parts
• Encoding joint motion magnitude (saturation, brightness)
• Encoding body parts
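Motion magnitude can be expressed through the other two HSV channels: faster segments become more saturated and brighter. The sketch below assumes a linear mapping of per-frame joint speed into hypothetical ranges [0.3, 1.0] for both saturation and brightness, and, for body parts, a simple split of the hue circle into equal sub-ranges, one per part. Both mappings are illustrative assumptions; [4] gives the exact ones.

```python
import numpy as np


def motion_saturation_brightness(seq, s_range=(0.3, 1.0), b_range=(0.3, 1.0)):
    """Per-segment saturation and brightness from joint speed.

    seq: (T, J, 3) joint coordinates. Returns two (T - 1, J) arrays, one value per
    trajectory segment, growing linearly with the segment's speed.
    """
    speed = np.linalg.norm(np.diff(seq, axis=0), axis=-1)   # displacement per frame
    v_max = speed.max()
    v = speed / v_max if v_max > 0 else np.zeros_like(speed)
    sat = s_range[0] + v * (s_range[1] - s_range[0])
    bright = b_range[0] + v * (b_range[1] - b_range[0])
    return sat, bright


def body_part_hue_range(part_index, num_parts):
    """Split the hue circle into equal, non-overlapping sub-ranges, one per body part."""
    width = 1.0 / num_parts
    return part_index * width, (part_index + 1) * width
```

Normalizing by the maximum speed within the sample keeps the full saturation/brightness range in use regardless of how fast the subject moves overall.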

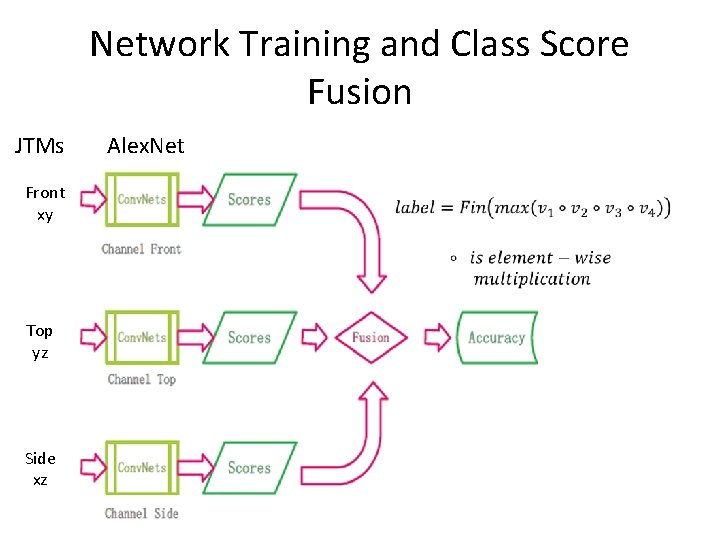

Network Training and Class Score Fusion
(Figure: the three JTMs, front (xy), top (yz), and side (xz), are each fed to an AlexNet, and the class scores are fused)
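Late score fusion combines the class probabilities produced by the per-map networks into a single prediction. The slide does not state the fusion rule, so the sketch below offers element-wise multiplication, with averaging as an alternative; which rule [4] and [5] actually use should be checked in the papers. The function names are hypothetical.

```python
import numpy as np


def softmax(logits):
    """Convert raw class scores (logits) of one network into probabilities."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def fuse_scores(prob_list, rule="multiply"):
    """Late fusion of per-network class probabilities into one distribution."""
    P = np.stack(prob_list)            # (num_networks, num_classes)
    if rule == "multiply":
        fused = np.prod(P, axis=0)
    elif rule == "average":
        fused = P.mean(axis=0)
    else:
        raise ValueError(f"unknown fusion rule: {rule!r}")
    return fused / fused.sum()


views = [np.array([0.9, 0.1]), np.array([0.9, 0.1]), np.array([0.01, 0.99])]
multiply_pick = fuse_scores(views).argmax()
average_pick = fuse_scores(views, "average").argmax()
```

In this toy case multiplication picks class 1 (0.1 × 0.1 × 0.99 = 0.0099 > 0.9 × 0.9 × 0.01 = 0.0081) while averaging picks class 0: under multiplicative fusion, one network that is confident a class is wrong can effectively veto it.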

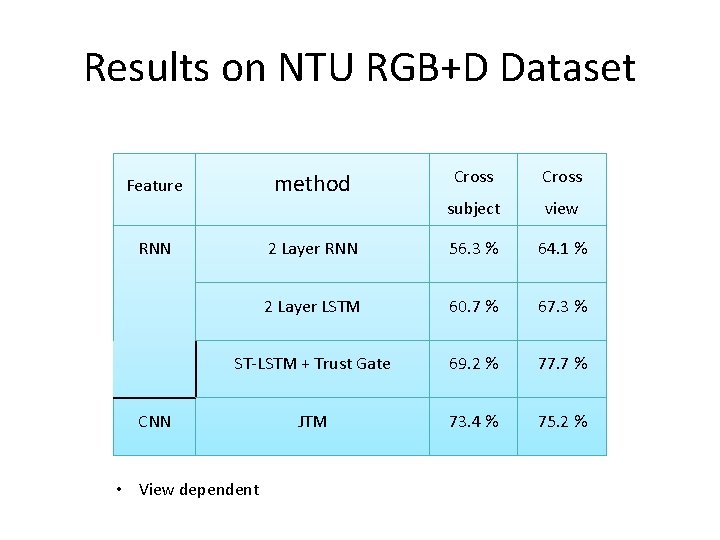

Results on NTU RGB+D Dataset

| Method | Feature | Cross Subject | Cross View |
|---|---|---|---|
| 2 Layer RNN | RNN | 56.3% | 64.1% |
| 2 Layer LSTM | RNN | 60.7% | 67.3% |
| ST-LSTM + Trust Gate | RNN | 69.2% | 77.7% |
| JTM | CNN | 73.4% | 75.2% |

• JTMs are view dependent

Joint Distance Maps Based Action Recognition With Convolutional Neural Networks Chuankun Li, Yonghong Hou, Pichao Wang, Wanqing Li

Motivation
• Distances between joints in three-dimensional (3-D) space are view independent
• The size of JDMs is comparable to the image size used to train existing ConvNets, so JDMs can be used to fine-tune a pretrained model

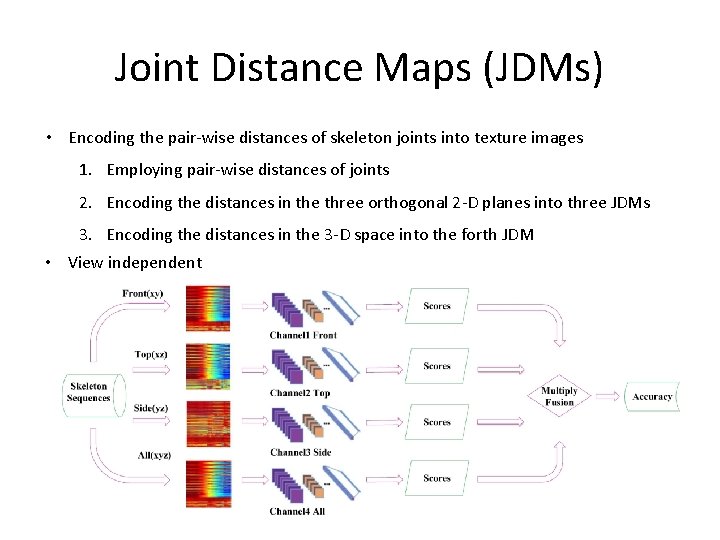

Joint Distance Maps (JDMs)
• Encoding the pair-wise distances of skeleton joints into texture images
1. Employing pair-wise distances of joints
2. Encoding the distances in the three orthogonal 2-D planes into three JDMs
3. Encoding the distances in 3-D space into the fourth JDM
• View independent
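The four JDMs differ only in which coordinates enter the distance: two of x, y, z for the planar maps, all three for the 3-D map. The sketch below computes one (pairs × frames) matrix per map, assuming one row per joint pair and one column per frame; the dictionary keys and function name are hypothetical, and the joint selection and ordering used in [5] are not reproduced.

```python
from itertools import combinations

import numpy as np

# Coordinate subsets for the four JDMs: three 2-D planes plus full 3-D space.
JDM_AXES = {"xy": [0, 1], "yz": [1, 2], "xz": [0, 2], "xyz": [0, 1, 2]}


def joint_distance_maps(seq):
    """Map a (T, J, 3) sequence to four (num_pairs, T) distance matrices."""
    T, J, _ = seq.shape
    pairs = list(combinations(range(J), 2))          # J * (J - 1) / 2 joint pairs
    first = np.array([p for p, _ in pairs], dtype=int)
    second = np.array([q for _, q in pairs], dtype=int)
    maps = {}
    for name, axes in JDM_AXES.items():
        sub = seq[:, :, axes]                        # (T, J, len(axes))
        diff = sub[:, first, :] - sub[:, second, :]  # (T, P, len(axes))
        maps[name] = np.linalg.norm(diff, axis=-1).T  # rows: pairs, columns: frames
    return maps
```

Only the 3-D map is strictly view independent; the planar maps still depend on the camera's axes, which is why the fourth map is added. For a single subject with 12 joints, each map has 12 × 11 / 2 = 66 rows.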

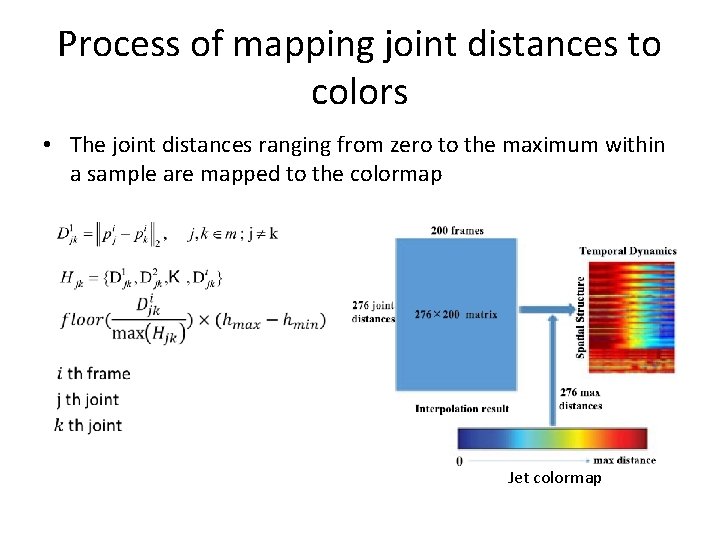

Process of mapping joint distances to colors
• Selecting 12 joints per subject

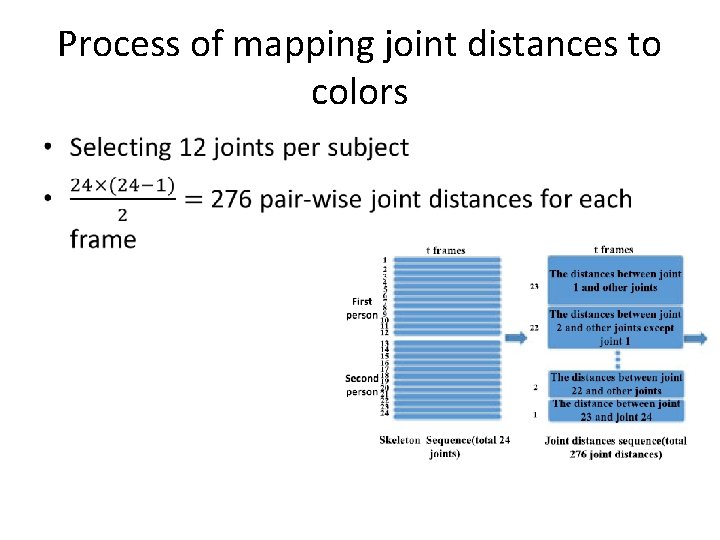

Process of mapping joint distances to colors

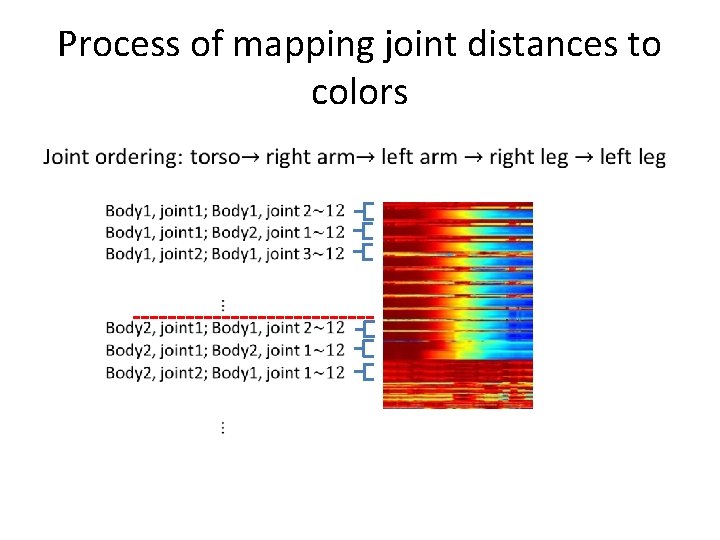

Process of mapping joint distances to colors

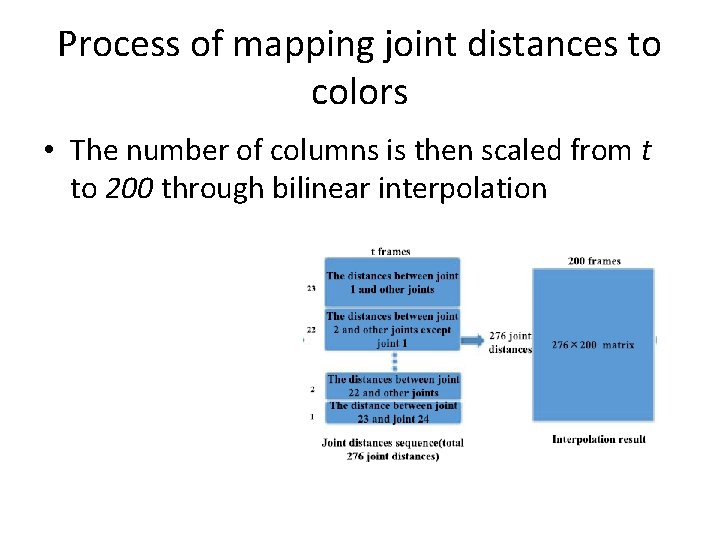

Process of mapping joint distances to colors
• The number of columns is then scaled from t (the number of frames in the sample) to 200 through bilinear interpolation
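When only the number of columns changes, bilinear interpolation reduces to linear interpolation along each row, so a per-row `numpy.interp` is enough for a sketch. The sampling convention here, with the first and last columns kept as endpoints, is an assumption; image libraries that use half-pixel centers give slightly different values.

```python
import numpy as np


def resize_columns(M, target_cols=200):
    """Resample each row of a (rows, t) matrix to target_cols columns.

    With the row count unchanged, bilinear interpolation reduces to linear
    interpolation along each row; first and last columns are kept as endpoints.
    """
    rows, t = M.shape
    src = np.linspace(0.0, t - 1, target_cols)   # fractional source column positions
    return np.stack([np.interp(src, np.arange(t), row) for row in M])
```

Resizing every sample to the same 200 columns gives the CNN a fixed input width regardless of how many frames the action lasts.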

Process of mapping joint distances to colors
• The joint distances, ranging from zero to the maximum within a sample, are mapped to colors using the jet colormap
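The color mapping normalizes distances by the sample maximum and looks them up in a jet colormap. To stay dependency-free, the sketch below uses a common piecewise-linear approximation of jet instead of a plotting library's colormap; its exact values differ slightly from MATLAB's or matplotlib's jet, and the function name is hypothetical.

```python
import numpy as np


def distances_to_jet(D):
    """Map a distance matrix to a uint8 RGB image with a jet-like colormap.

    Distances are scaled by the maximum within the sample, so 0 maps to dark
    blue and the sample maximum maps to dark red.
    """
    d_max = D.max()
    x = D / d_max if d_max > 0 else np.zeros_like(D, dtype=float)
    # Piecewise-linear approximation of the jet colormap.
    r = np.clip(1.5 - np.abs(4.0 * x - 3.0), 0.0, 1.0)
    g = np.clip(1.5 - np.abs(4.0 * x - 2.0), 0.0, 1.0)
    b = np.clip(1.5 - np.abs(4.0 * x - 1.0), 0.0, 1.0)
    return (np.stack([r, g, b], axis=-1) * 255).astype(np.uint8)
```

Because the scale is set per sample, the colors encode relative rather than absolute distances, which removes differences in subject size and distance from the camera.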

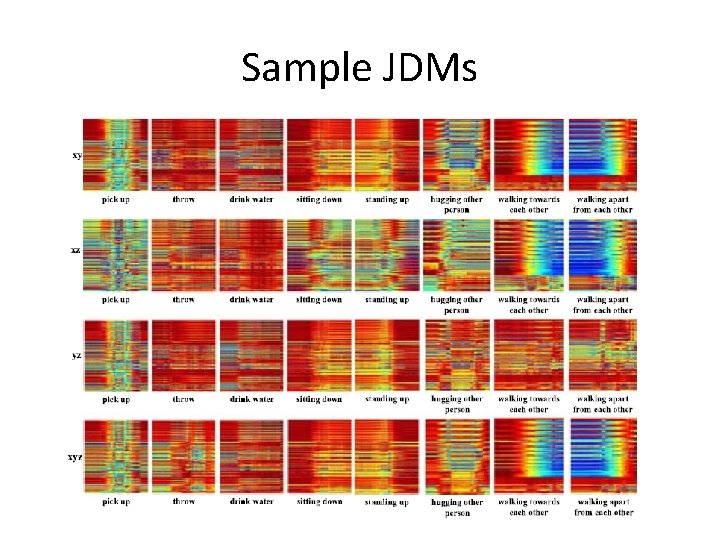

Sample JDMs

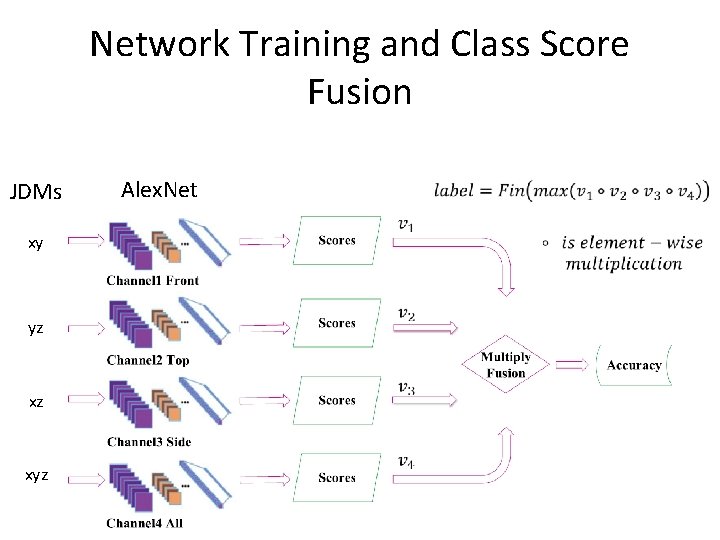

Network Training and Class Score Fusion
(Figure: the four JDMs, xy, yz, xz, and xyz, are each fed to an AlexNet, and the class scores are fused)

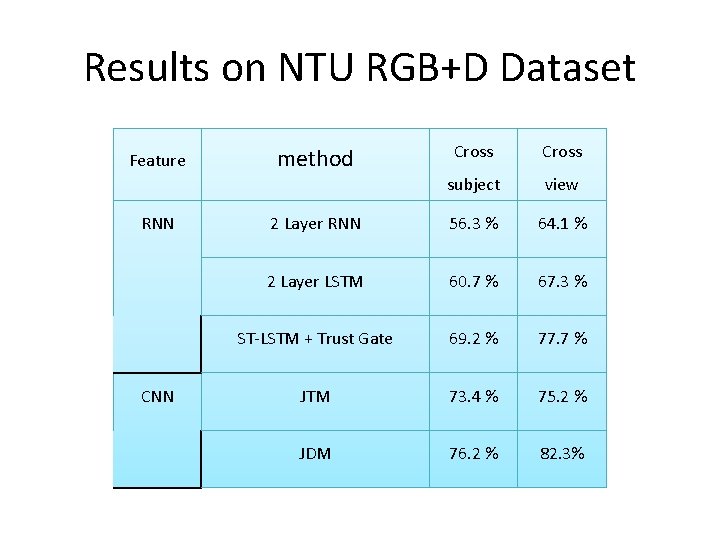

Results on NTU RGB+D Dataset

| Method | Feature | Cross Subject | Cross View |
|---|---|---|---|
| 2 Layer RNN | RNN | 56.3% | 64.1% |
| 2 Layer LSTM | RNN | 60.7% | 67.3% |
| ST-LSTM + Trust Gate | RNN | 69.2% | 77.7% |
| JTM | CNN | 73.4% | 75.2% |
| JDM | CNN | 76.2% | 82.3% |

Conclusion
• RNN-based approach
  – LSTM is a powerful model for sequential data modeling and feature extraction
  – Handling spatial information: learning both the spatial and the temporal relationships among joints performs better
• CNN-based approach
  – How to effectively represent 3D skeleton data and feed it into deep CNNs is still an open problem
• Open problem: skeleton reliability

References
[1] NTU RGB+D: A Large Scale Dataset for 3D Human Activity Analysis, Amir Shahroudy, Jun Liu, Tian-Tsong Ng, Gang Wang
[2] Spatio-Temporal LSTM with Trust Gates for 3D Human Action Recognition, Jun Liu, Amir Shahroudy, Dong Xu, Gang Wang
[3] An End-to-End Spatio-Temporal Attention Model for Human Action Recognition from Skeleton Data, Sijie Song, Cuiling Lan, Junliang Xing, Wenjun Zeng, Jiaying Liu
[4] Action Recognition Based on Joint Trajectory Maps Using Convolutional Neural Networks, Pichao Wang, Zhaoyang Li, Yonghong Hou, Wanqing Li
[5] Joint Distance Maps Based Action Recognition With Convolutional Neural Networks, Chuankun Li, Yonghong Hou, Pichao Wang, Wanqing Li
