Probabilistic Graphical Models Learning Parameter Estimation Maximum Likelihood

Maximum Likelihood Estimation (Daphne Koller)

Biased Coin Example

$P$ is a Bernoulli distribution: $P(X=1) = \theta$, $P(X=0) = 1 - \theta$

$D = \{x[1], \ldots, x[M]\}$ is sampled IID from $P$:
• Tosses are independent of each other
• Tosses are sampled from the same distribution (identically distributed)

![IID as a PGM](http://slidetodoc.com/presentation_image_h/054f2a07fb6e59b2e109513f855f7cb9/image-3.jpg)

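IID as a PGM

[Figure: plate model in which the parameter $\theta$ is the parent of $X$ inside a plate over the data instances $m$, shown next to the equivalent unrolled network in which $\theta$ is the parent of each of $X[1], \ldots, X[M]$.]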

![Maximum Likelihood Estimation](http://slidetodoc.com/presentation_image_h/054f2a07fb6e59b2e109513f855f7cb9/image-4.jpg)

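Maximum Likelihood Estimation

• Goal: find $\theta \in [0, 1]$ that predicts $D$ well
• Prediction quality = likelihood of $D$ given $\theta$:

$$L(D:\theta) = P(D \mid \theta) = \prod_{m=1}^{M} P(x[m] \mid \theta)$$

[Plot: the likelihood $L(D:\theta)$ as a function of $\theta$, with $\theta$ ranging from 0 to 1 on the horizontal axis.]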
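The likelihood curve described above can be sketched numerically. This is a minimal illustration, not part of the original slides: the dataset `tosses` (H, T, T, H, H encoded as 1/0) and the coarse grid of $\theta$ values are assumptions chosen for the example.

```python
def likelihood(tosses, theta):
    """L(D:theta): product over tosses of P(x[m] | theta) for a Bernoulli coin."""
    value = 1.0
    for x in tosses:
        value *= theta if x == 1 else (1.0 - theta)
    return value


# Hypothetical dataset: 1 = heads, 0 = tails (H, T, T, H, H).
tosses = [1, 0, 0, 1, 1]

# Evaluate the likelihood on a coarse grid of theta values in [0, 1].
grid = [i / 10 for i in range(11)]
for theta in grid:
    print(f"theta = {theta:.1f}   L(D:theta) = {likelihood(tosses, theta):.5f}")

# The grid point that predicts D best.
best_theta = max(grid, key=lambda t: likelihood(tosses, t))
print("best theta on the grid:", best_theta)
```

On this dataset the likelihood is $\theta^3(1-\theta)^2$, so the grid search picks the value closest to the fraction of heads.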

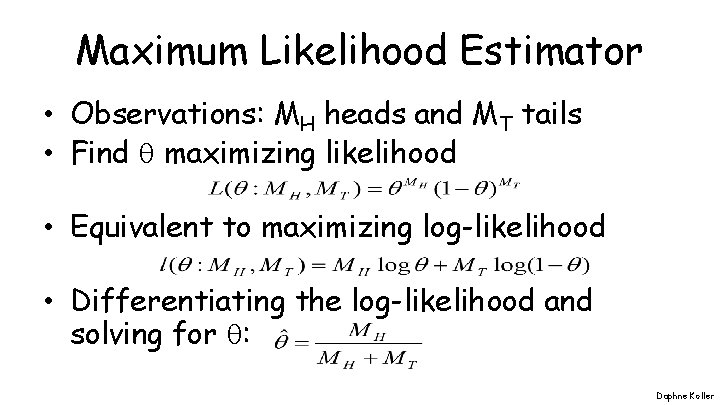

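Maximum Likelihood Estimator

• Observations: $M_H$ heads and $M_T$ tails
• Find $\theta$ maximizing the likelihood

$$L(D:\theta) = \theta^{M_H} (1 - \theta)^{M_T}$$

• Equivalent to maximizing the log-likelihood

$$\ell(D:\theta) = M_H \log \theta + M_T \log(1 - \theta)$$

• Differentiating the log-likelihood and solving for $\theta$:

$$\hat{\theta} = \frac{M_H}{M_H + M_T}$$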
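As a quick check of the closed-form estimator, the sketch below computes $\hat{\theta} = M_H / (M_H + M_T)$ and confirms that nearby values of $\theta$ do not give a higher log-likelihood. The function names and the counts (3 heads, 2 tails) are hypothetical, chosen only for illustration.

```python
import math


def bernoulli_mle(m_heads, m_tails):
    """Closed-form maximizer of theta^M_H * (1 - theta)^M_T."""
    total = m_heads + m_tails
    if total == 0:
        raise ValueError("need at least one toss")
    return m_heads / total


def log_likelihood(theta, m_heads, m_tails):
    """l(D:theta) = M_H log(theta) + M_T log(1 - theta), for 0 < theta < 1."""
    return m_heads * math.log(theta) + m_tails * math.log(1.0 - theta)


m_heads, m_tails = 3, 2  # hypothetical counts
theta_hat = bernoulli_mle(m_heads, m_tails)

# Compare the log-likelihood at the estimate with values slightly to either side.
eps = 1e-3
at_hat = log_likelihood(theta_hat, m_heads, m_tails)
below = log_likelihood(theta_hat - eps, m_heads, m_tails)
above = log_likelihood(theta_hat + eps, m_heads, m_tails)
print(theta_hat, at_hat >= below and at_hat >= above)
```

Working with the log-likelihood rather than the raw product also avoids numerical underflow when the number of tosses is large.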

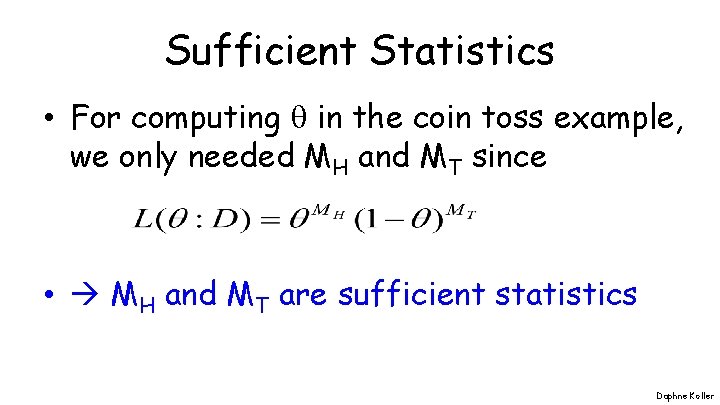

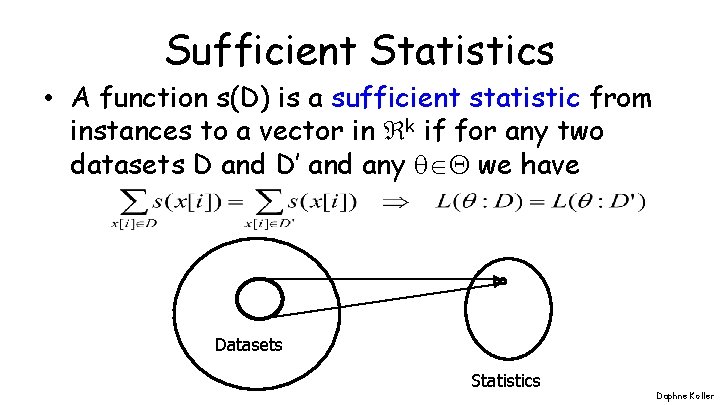

Sufficient Statistics

• For computing $\theta$ in the coin toss example, we only needed $M_H$ and $M_T$, since

$$L(D:\theta) = \theta^{M_H} (1 - \theta)^{M_T}$$

• $M_H$ and $M_T$ are sufficient statistics

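Sufficient Statistics

• A function $s$ from instances to a vector in $\mathbb{R}^k$ is a sufficient statistic if, for any two datasets $D$ and $D'$ and any $\theta \in \Theta$, we have

$$\sum_{x[i] \in D} s(x[i]) = \sum_{x[i] \in D'} s(x[i]) \;\Longrightarrow\; L(D:\theta) = L(D':\theta)$$

[Figure: mapping from the space of datasets to the space of statistics.]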
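The definition can be illustrated with the coin example: two datasets with the same counts have the same likelihood for every $\theta$. The datasets `d1`, `d2`, `d3` and the helper names below are assumptions made for this sketch.

```python
import math


def stats(tosses):
    """Sufficient statistics (M_H, M_T) of a toss sequence (1 = heads, 0 = tails)."""
    m_heads = sum(tosses)
    return m_heads, len(tosses) - m_heads


def likelihood(tosses, theta):
    """L(D:theta) as a product of per-toss probabilities."""
    return math.prod(theta if x == 1 else 1.0 - theta for x in tosses)


d1 = [1, 0, 0, 1, 1]  # hypothetical dataset
d2 = [1, 1, 1, 0, 0]  # same counts as d1, different order
d3 = [1, 1, 1, 1, 0]  # different counts

thetas = [0.1, 0.3, 0.5, 0.7, 0.9]
same_12 = all(
    math.isclose(likelihood(d1, t), likelihood(d2, t)) for t in thetas
)
same_13 = all(
    math.isclose(likelihood(d1, t), likelihood(d3, t)) for t in thetas
)
print(stats(d1), stats(d2), same_12)  # equal statistics, equal likelihoods
print(stats(d3), same_13)             # different statistics
```

The comparison uses `math.isclose` because multiplying the same factors in a different order can differ in the last floating-point digit.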

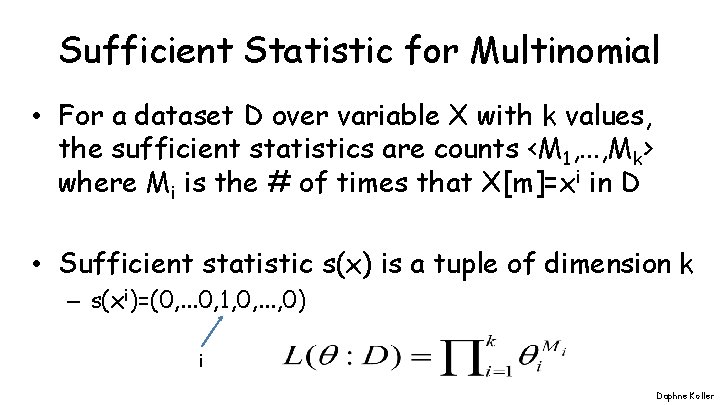

Sufficient Statistic for Multinomial

• For a dataset $D$ over a variable $X$ with $k$ values, the sufficient statistics are the counts $\langle M_1, \ldots, M_k \rangle$, where $M_i$ is the number of times that $X[m] = x^i$ in $D$, so that

$$L(D:\theta) = \prod_{i=1}^{k} \theta_i^{M_i}$$

• The sufficient statistic $s(x)$ is a tuple of dimension $k$:
  – $s(x^i) = (0, \ldots, 0, 1, 0, \ldots, 0)$, with the 1 in position $i$
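Summing the one-hot tuples $s(x[m])$ over the dataset yields exactly the count vector. A minimal sketch, assuming a hypothetical three-valued variable with values `"a"`, `"b"`, `"c"`:

```python
def one_hot(value, values):
    """s(x^i): a tuple with 1 in the position of `value` and 0 elsewhere."""
    return tuple(1 if value == v else 0 for v in values)


def sufficient_statistics(data, values):
    """Sum the one-hot tuples over the dataset to obtain the counts <M_1, ..., M_k>."""
    totals = [0] * len(values)
    for x in data:
        for i, bit in enumerate(one_hot(x, values)):
            totals[i] += bit
    return tuple(totals)


values = ("a", "b", "c")                # hypothetical value set, k = 3
data = ["a", "c", "a", "b", "a", "c"]   # hypothetical dataset
counts = sufficient_statistics(data, values)
print(counts)
```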

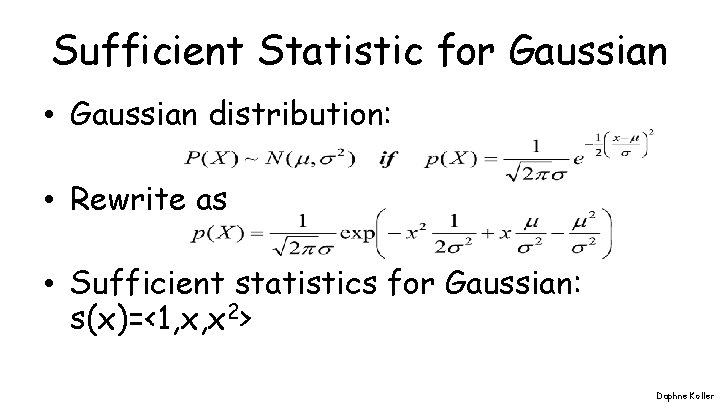

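Sufficient Statistic for Gaussian

• Gaussian distribution:

$$p(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left( -\frac{(x - \mu)^2}{2\sigma^2} \right)$$

• Rewrite as

$$p(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left( -x^2 \frac{1}{2\sigma^2} + x \frac{\mu}{\sigma^2} - \frac{\mu^2}{2\sigma^2} \right)$$

• Sufficient statistics for Gaussian: $s(x) = \langle 1, x, x^2 \rangle$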
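The rewritten density implies that the Gaussian log-likelihood can be computed from the summed statistics $\langle M, \sum x, \sum x^2 \rangle$ alone. The sketch below compares that computation with a direct sum over the data; the dataset and the parameter values are assumptions made for the example.

```python
import math


def gaussian_stats(data):
    """Accumulate s(x) = <1, x, x^2> over the dataset."""
    return (len(data), sum(data), sum(x * x for x in data))


def log_likelihood_from_stats(stats, mu, sigma):
    """Log-likelihood using only the summed sufficient statistics."""
    m, sum_x, sum_x2 = stats
    return (
        -sum_x2 / (2 * sigma**2)
        + mu * sum_x / sigma**2
        - m * (mu**2 / (2 * sigma**2) + math.log(math.sqrt(2 * math.pi) * sigma))
    )


def log_likelihood_direct(data, mu, sigma):
    """Log-likelihood as a sum of per-instance Gaussian log-densities."""
    return sum(
        -math.log(math.sqrt(2 * math.pi) * sigma) - (x - mu) ** 2 / (2 * sigma**2)
        for x in data
    )


data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # hypothetical dataset
mu, sigma = 4.5, 1.5                              # arbitrary parameter values

from_stats = log_likelihood_from_stats(gaussian_stats(data), mu, sigma)
direct = log_likelihood_direct(data, mu, sigma)
print(math.isclose(from_stats, direct))
```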

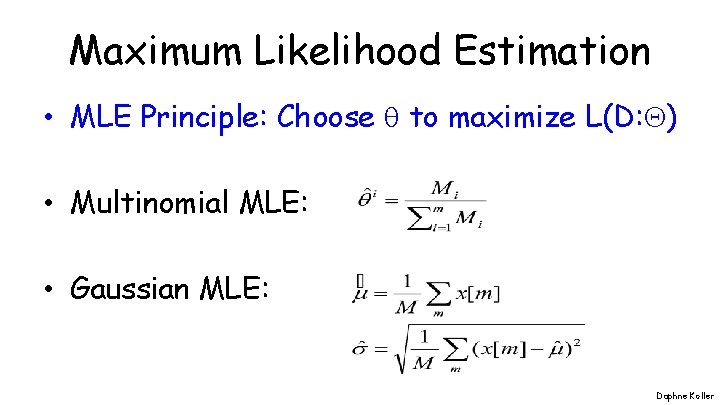

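Maximum Likelihood Estimation

• MLE Principle: choose $\theta$ to maximize $L(D:\theta)$
• Multinomial MLE:

$$\hat{\theta}_i = \frac{M_i}{\sum_{j=1}^{k} M_j}$$

• Gaussian MLE:

$$\hat{\mu} = \frac{1}{M} \sum_{m} x[m], \qquad \hat{\sigma} = \sqrt{\frac{1}{M} \sum_{m} \left( x[m] - \hat{\mu} \right)^2}$$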
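Both closed-form estimators are short to write down in code. This is a sketch under stated assumptions: the function names and both datasets are hypothetical, and the Gaussian estimate divides by $M$ (the maximum likelihood form), not by $M - 1$.

```python
import math
from collections import Counter


def multinomial_mle(data):
    """theta_i = M_i / sum_j M_j for every value observed in the data."""
    counts = Counter(data)
    total = sum(counts.values())
    return {value: count / total for value, count in counts.items()}


def gaussian_mle(data):
    """Empirical mean and the square root of the (1/M) empirical variance."""
    m = len(data)
    mu = sum(data) / m
    sigma = math.sqrt(sum((x - mu) ** 2 for x in data) / m)
    return mu, sigma


theta_hat = multinomial_mle(["a", "c", "a", "b", "a", "c"])
mu_hat, sigma_hat = gaussian_mle([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0])
print(theta_hat)
print(mu_hat, sigma_hat)
```

Values that never appear in the data are simply absent from `theta_hat`, which corresponds to an estimated probability of zero.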

Summary

• Maximum likelihood estimation is a simple principle for parameter selection given $D$
• The likelihood function is uniquely determined by sufficient statistics that summarize $D$
• MLE has a closed-form solution for many parametric distributions

