
Maximum Likelihood & Missing Data
Rachael Bedford
Mplus: Longitudinal Analysis Workshop, 23/06/2015

Overview
• Maximum Likelihood (ML)
• Maximum Likelihood with Robust Standard Errors (MLR)
• Missing data
  ◦ MCAR
  ◦ MAR
  ◦ NMAR
• Full Information Maximum Likelihood (FIML)
• Maximum likelihood vs. multiple imputation
• Summary

Maximum Likelihood (ML) Estimation
• ML is an estimator (like ordinary least squares, OLS)
• Why is estimation important?
  ◦ The estimator influences the quality and validity of the estimates
• Most estimation techniques:
  ◦ Minimise something, e.g. OLS minimises the sum of squared residuals
  ◦ Maximise something, e.g. ML maximises the likelihood of the data
  ◦ Use simulation
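The "minimise vs. maximise" contrast can be made concrete. The sketch below assumes a simple linear regression with normally distributed errors and uses hypothetical data; the function names `ols_fit` and `normal_loglik` are illustrative only. It shows that the intercept and slope that minimise the squared residuals (OLS) are also the values that maximise the normal log-likelihood: nudging either parameter away lowers the likelihood.

```python
import math


def ols_fit(x, y):
    """Closed-form OLS intercept and slope for simple linear regression."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sxy / sxx
    return my - slope * mx, slope


def normal_loglik(x, y, a, b):
    """Log-likelihood of y = a + b*x + e with e ~ N(0, s2),
    where s2 is set to its ML value SSR / n for the given (a, b)."""
    n = len(x)
    ssr = sum((yi - a - b * xi) ** 2 for xi, yi in zip(x, y))
    s2 = ssr / n
    return -0.5 * n * (math.log(2 * math.pi * s2) + 1)


# Hypothetical data for illustration only
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2]

a_hat, b_hat = ols_fit(x, y)
best = normal_loglik(x, y, a_hat, b_hat)

# Moving away from the OLS solution always lowers the likelihood
for da, db in [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.05), (0.0, -0.05)]:
    assert normal_loglik(x, y, a_hat + da, b_hat + db) < best

print(f"intercept={a_hat:.3f}, slope={b_hat:.3f}, max loglik={best:.3f}")
```

Because the log-likelihood is a decreasing function of the sum of squared residuals, minimising one is the same as maximising the other under normal errors.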

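Maximum Likelihood (ML) Estimation: properties
• Asymptotic consistency: as sample size increases, the estimator converges on the true population value
• Asymptotic normality: as sample size increases, the sampling distribution of the estimator approaches a normal distribution
• Efficiency: in large samples (and under standard regularity conditions), ML estimates have the smallest standard errors achievable by a consistent estimator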
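Asymptotic consistency can be illustrated with a small simulation. This sketch assumes normally distributed data, for which the ML estimate of the mean is the sample mean; the function name `mean_abs_error`, the true mean (10), SD (2) and seed are hypothetical choices. It only illustrates consistency, not normality or efficiency.

```python
import random
import statistics


def mean_abs_error(n, reps, true_mu=10.0, sd=2.0, seed=1):
    """Average |mu_hat - true_mu| over `reps` simulated samples of size n.
    For normal data the ML estimate of the mean is the sample mean."""
    rng = random.Random(seed)
    errors = []
    for _ in range(reps):
        sample = [rng.gauss(true_mu, sd) for _ in range(n)]
        mu_hat = statistics.fmean(sample)
        errors.append(abs(mu_hat - true_mu))
    return statistics.fmean(errors)


# The typical estimation error shrinks as the sample size grows
for n in (10, 100, 1000):
    print(n, round(mean_abs_error(n, reps=200), 4))
```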

Maximum Likelihood
• What does ML do?
  ◦ ML identifies the population parameter values (e.g. the population mean) that are most ‘likely’, i.e. most consistent with the observed data (e.g. the sample mean)
  ◦ It uses the observed data to find the parameter values with the highest likelihood (best fit)
• How does ML estimate parameters?
  ◦ By assuming a distribution for the data (typically the normal distribution) and searching for the parameter values that maximise the likelihood under that distribution
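The search described above can be sketched as a brute-force grid search. This is a minimal illustration assuming normally distributed data, a hypothetical sample, and a standard deviation held fixed at 1.5 (the maximising mean does not depend on that choice); real software uses numerical optimisation rather than a grid.

```python
import math


def normal_loglik(data, mu, sigma):
    """Log-likelihood of the data under a normal distribution N(mu, sigma^2)."""
    n = len(data)
    ss = sum((x - mu) ** 2 for x in data)
    return -n * math.log(sigma * math.sqrt(2 * math.pi)) - ss / (2 * sigma ** 2)


# Hypothetical sample
data = [4.0, 5.5, 6.0, 6.5, 8.0]

# Candidate population means 3.00, 3.01, ..., 9.00
candidates = [i / 100 for i in range(300, 901)]
best_mu = max(candidates, key=lambda mu: normal_loglik(data, mu, 1.5))

# The most likely population mean equals the sample mean (6.0)
print(best_mu, sum(data) / len(data))
```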

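Maximum Likelihood Robust (MLR)
• Robust Maximum Likelihood (MLR) still assumes the data follow a multivariate normal distribution.
• BUT its standard errors are robust to non-normality such as kurtosis (“peakedness” or heavy tails) of the data
• MLR in Mplus uses a sandwich estimator to give robust standard errors; the parameter estimates themselves are the same as under ML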
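To show what the sandwich correction does, here is a one-parameter sketch for the variance of a single variable, using hypothetical heavy-tailed data. It illustrates the sandwich idea only and is not a reproduction of Mplus's internal MLR computation. The model-based ML standard error assumes normality; the sandwich standard error uses the spread of each case's contribution to the score and grows when the data are more peaked with heavier tails.

```python
import math


def variance_standard_errors(data):
    """ML variance estimate with two standard errors.

    - ML (model-based): assumes normality, SE = s2 * sqrt(2 / n)
    - Sandwich (robust): A^-1 B A^-1 built from per-case scores. At the ML
      solution the information matrix for (mean, variance) is diagonal, so the
      variance entry reduces to sqrt(sum((d_i^2 - s2)^2)) / n.
    """
    n = len(data)
    mu = sum(data) / n
    dev2 = [(x - mu) ** 2 for x in data]
    s2 = sum(dev2) / n  # ML variance estimate (divides by n)
    se_ml = s2 * math.sqrt(2 / n)
    se_robust = math.sqrt(sum((d - s2) ** 2 for d in dev2)) / n
    return s2, se_ml, se_robust


# Hypothetical high-kurtosis data: mostly near 0, with two extreme values
heavy = [-10, -1, -1, -1, 0, 0, 0, 0, 1, 1, 1, 10]
s2, se_ml, se_robust = variance_standard_errors(heavy)
print(f"s2={s2:.2f}  ML SE={se_ml:.2f}  robust SE={se_robust:.2f}")
```

For these data the robust standard error is noticeably larger than the normal-theory one, which is the kind of adjustment MLR makes when kurtosis is present.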

Full Information Maximum Likelihood
• ML can be applied to incomplete as well as complete data records
  ◦ i.e. where data are missing on response (dependent) variables
• This is called Full Information Maximum Likelihood (FIML).
• If data are missing at random (MAR), we can use FIML to estimate model parameters.
• “Missing at random” DOES NOT MEAN the data are missing purely by chance (that would be missing completely at random, MCAR).
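FIML works casewise: each record contributes the likelihood of whatever it has observed. The sketch below assumes two variables (x, y) with a bivariate normal distribution and y sometimes missing; the function names, data and parameter values are hypothetical. It only evaluates the FIML log-likelihood at given parameters; estimation would search for the parameters that maximise this sum.

```python
import math


def case_loglik(x, y, mu_x, mu_y, var_x, var_y, cov_xy):
    """FIML casewise log-likelihood for a bivariate normal (x, y).
    Complete cases use the joint density; cases with y missing (None)
    contribute only the marginal density of the observed x."""
    if y is None:
        return -0.5 * (math.log(2 * math.pi * var_x) + (x - mu_x) ** 2 / var_x)
    det = var_x * var_y - cov_xy ** 2
    dx, dy = x - mu_x, y - mu_y
    # Mahalanobis distance using the closed-form inverse of the 2x2 covariance
    quad = (var_y * dx ** 2 - 2 * cov_xy * dx * dy + var_x * dy ** 2) / det
    return -0.5 * (2 * math.log(2 * math.pi) + math.log(det) + quad)


def fiml_loglik(rows, params):
    """Sum of casewise contributions: every record is used, complete or not."""
    return sum(case_loglik(x, y, *params) for x, y in rows)


# Hypothetical data: y is missing for two records
rows = [(1.0, 2.0), (2.0, 2.5), (3.0, 4.0), (0.5, None), (1.5, None)]
params = (1.6, 2.8, 0.8, 1.0, 0.6)  # mu_x, mu_y, var_x, var_y, cov_xy
print(fiml_loglik(rows, params))
```

Unlike listwise deletion, the two incomplete records still inform the estimates of the x parameters and, through the covariance, of the y parameters.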

Missing Data
• Missing completely at random (MCAR)
  ◦ Missingness does not depend on the values of either observed or latent variables
  ◦ IF this holds, listwise deletion can be used
• Missing at random (MAR) (confusing name!)
  ◦ Missingness is related to observed variables, but not to latent variables or to the missing values themselves
• Non-ignorable missing (NMAR)
  ◦ Missingness can be related to observed variables but also to the missing values themselves and to latent variables

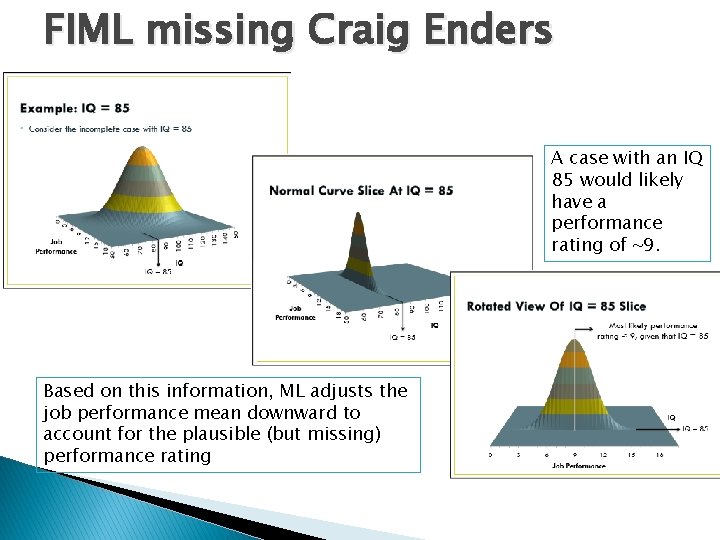
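The practical difference between MCAR and MAR shows up when listwise deletion is used. This simulation sketch makes several hypothetical choices: y depends on x with a true mean of 0, MCAR removes each y with probability 0.5, and MAR removes y whenever the observed x is above 0 (a deterministic rule, chosen for clarity). NMAR is not simulated here.

```python
import random
import statistics

rng = random.Random(2015)
n = 20000
x = [rng.gauss(0, 1) for _ in range(n)]
# y depends on x; its true population mean is 0
y = [0.6 * xi + rng.gauss(0, 0.8) for xi in x]

# MCAR: each y is missing with probability 0.5, unrelated to anything
y_mcar = [yi if rng.random() < 0.5 else None for yi in y]
# MAR: y is missing whenever the observed x is above 0
y_mar = [yi if xi <= 0 else None for xi, yi in zip(x, y)]


def listwise_mean(values):
    """Mean of y after listwise deletion (dropping records with y missing)."""
    return statistics.fmean(v for v in values if v is not None)


print("MCAR listwise mean:", round(listwise_mean(y_mcar), 3))  # close to 0
print("MAR  listwise mean:", round(listwise_mean(y_mar), 3))   # biased downward
```

Under MCAR the complete cases are a random subset, so their mean stays near 0; under MAR the retained cases all have low x, so the listwise mean of y is biased.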

FIML and missing data (example from Craig Enders)
Because IQ is observed and correlated with job performance, a case with an IQ of 85 would likely have a performance rating of about 9. Based on this information, ML adjusts the job performance mean downward to account for the plausible (but missing) performance rating.

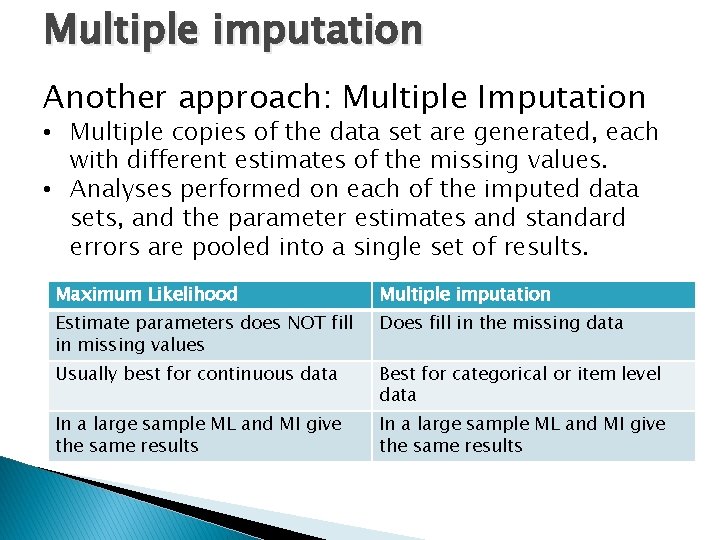
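The downward adjustment in this example can be reproduced on simulated data. The sketch assumes bivariate normal data where x (IQ) is always observed and y (job performance) is missing for IQ below 95 (MAR); the data are hypothetical, not Enders' data set, and `ml_mean_y` is an illustrative name. For this simple pattern the ML estimate of the mean of y has a closed form (the factored likelihood): regress y on x in the complete cases, then shift the complete-case mean of y by the slope times the gap between the full-sample and complete-case means of x.

```python
import random
import statistics


def ml_mean_y(x, y):
    """ML estimate of the mean of y when x is complete and some y are None."""
    cc = [(xi, yi) for xi, yi in zip(x, y) if yi is not None]
    xc = [p[0] for p in cc]
    yc = [p[1] for p in cc]
    mx_c, my_c = statistics.fmean(xc), statistics.fmean(yc)
    # Complete-case regression slope of y on x
    sxy = sum((a - mx_c) * (b - my_c) for a, b in cc)
    sxx = sum((a - mx_c) ** 2 for a in xc)
    slope = sxy / sxx
    # Shift the complete-case mean using information from all x values
    return my_c + slope * (statistics.fmean(x) - mx_c)


# Hypothetical IQ / job-performance data (true performance mean is 10)
rng = random.Random(7)
iq = [rng.gauss(100, 15) for _ in range(5000)]
perf = [10 + 0.1 * (q - 100) + rng.gauss(0, 1) for q in iq]
# MAR: performance is unobserved for cases with IQ below 95
perf_obs = [p if q >= 95 else None for q, p in zip(iq, perf)]

complete_case_mean = statistics.fmean(p for p in perf_obs if p is not None)
print("complete-case mean:", round(complete_case_mean, 2))  # biased upward
print("ML mean:           ", round(ml_mean_y(iq, perf_obs), 2))  # near 10
```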

Multiple imputation
Another approach: Multiple Imputation (MI)
• Multiple copies of the data set are generated, each with different estimates of the missing values.
• Analyses are performed on each imputed data set, and the parameter estimates and standard errors are pooled into a single set of results.

| Maximum Likelihood | Multiple imputation |
|---|---|
| Estimates parameters; does NOT fill in missing values | Does fill in the missing data |
| Usually best for continuous data | Best for categorical or item-level data |

In a large sample, ML and MI give essentially the same results.
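The pooling step is usually done with Rubin's rules: the pooled estimate is the average of the m estimates, and its variance combines the average within-imputation variance with the between-imputation variance. A minimal sketch, with hypothetical estimates and standard errors from m = 5 imputed data sets and an illustrative function name:

```python
import math
import statistics


def pool_rubin(estimates, std_errors):
    """Pool m imputed-data results with Rubin's rules.
    Returns the pooled estimate and its total standard error."""
    m = len(estimates)
    q_bar = statistics.fmean(estimates)                 # pooled point estimate
    w = statistics.fmean(se ** 2 for se in std_errors)  # within-imputation variance
    b = statistics.variance(estimates)                  # between-imputation variance (m - 1 denominator)
    t = w + (1 + 1 / m) * b                             # total variance
    return q_bar, math.sqrt(t)


# Hypothetical results from m = 5 imputed data sets
est = [0.52, 0.48, 0.55, 0.50, 0.45]
se = [0.10, 0.11, 0.10, 0.12, 0.10]
pooled, pooled_se = pool_rubin(est, se)
print(f"pooled estimate = {pooled:.3f}, pooled SE = {pooled_se:.3f}")
```

The between-imputation term is what carries the extra uncertainty due to the missing values into the pooled standard error.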

References
• http://vkc.library.uu.nl/vkc/ms/SiteCollectionDocuments/Mplussite/1%20nov%2011/Enders%20Pre%20Conference%20Workshop.pdf
• http://jonathantemplin.com/files/sem13psyc948/sem13psyc948_lecture02.pdf

Considerations
• Recoverability
  ◦ Is it possible to estimate what scores would have been if they were not missing?
• Bias
  ◦ Are statistics (e.g. means, variances, and covariances/correlations) the same as they would have been had there not been any missing data?
• Power
  ◦ Do we have the same or similar power (1 − Type II error rate) as we would have without missing data?
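The power question can be made concrete with a rough calculation. This sketch assumes a two-sided one-sample z-test on a standardized effect of 0.2, a hypothetical study of 200 cases, and 30% of cases lost under listwise deletion; the function name `approx_power` is illustrative. It ignores the negligible rejection probability in the opposite tail.

```python
import math
from statistics import NormalDist


def approx_power(effect_size, n, alpha=0.05):
    """Approximate power of a two-sided one-sample z-test for a
    standardized mean difference `effect_size` with n cases."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    return z.cdf(effect_size * math.sqrt(n) - z_crit)


# Hypothetical study: 200 cases planned, 60 dropped by listwise deletion
full = approx_power(0.2, 200)
listwise = approx_power(0.2, 140)
print(f"power with all cases: {full:.2f}, after listwise deletion: {listwise:.2f}")
```

Methods such as FIML that keep the incomplete cases in the analysis aim to avoid this loss of power.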
