Preventive Replication in Database Cluster
Esther Pacitti, Cedric Coulon, Patrick Valduriez, M. Tamer Özsu*
LINA / INRIA – Atlas Group, University of Nantes - France
* University of Waterloo - Canada
July 2005

Outline
§ Motivations
§ Cluster Architecture
§ Preventive Replication
§ Multi-Master Partially Replicated Configurations
§ Replication Manager Architecture
§ Optimizations
§ RepDB* Prototype
§ Experiments
§ Conclusions, Current and Future Work

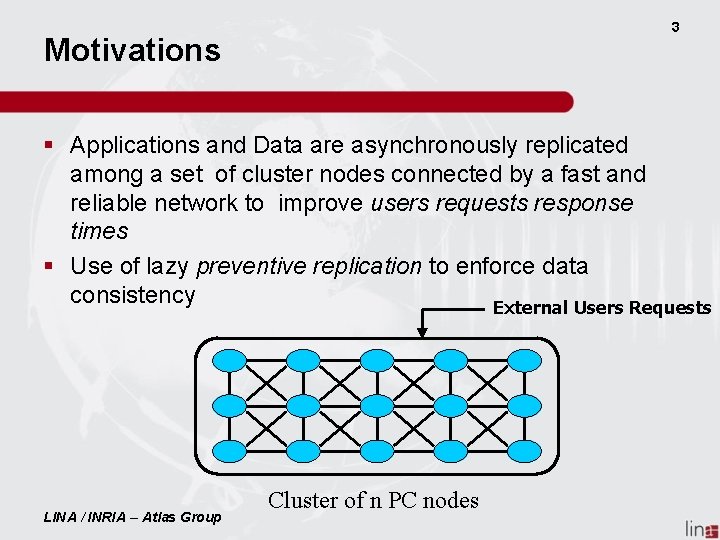

Motivations
§ Applications and data are asynchronously replicated among a set of cluster nodes connected by a fast and reliable network, to improve the response times of user requests
§ Lazy preventive replication is used to enforce data consistency
Figure: external user requests arriving at a cluster of n PC nodes

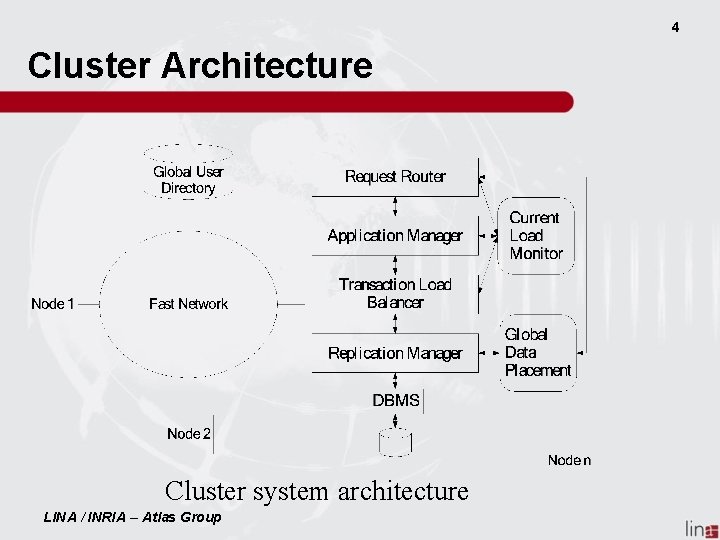

Cluster Architecture
Figure: cluster system architecture

Preventive Replication (1)
Properties:
§ Strong consistency
§ Non-blocking
§ Scales up and speeds up
§ High data availability

Preventive Replication (2)
Assumptions:
§ The network interface provides FIFO reliable multicast
§ Max is the upper bound on the time needed to multicast a message from a node i until it is received at a node j
§ Clocks are ε-synchronized
§ Each transaction T has a timestamp value C (its arrival time)

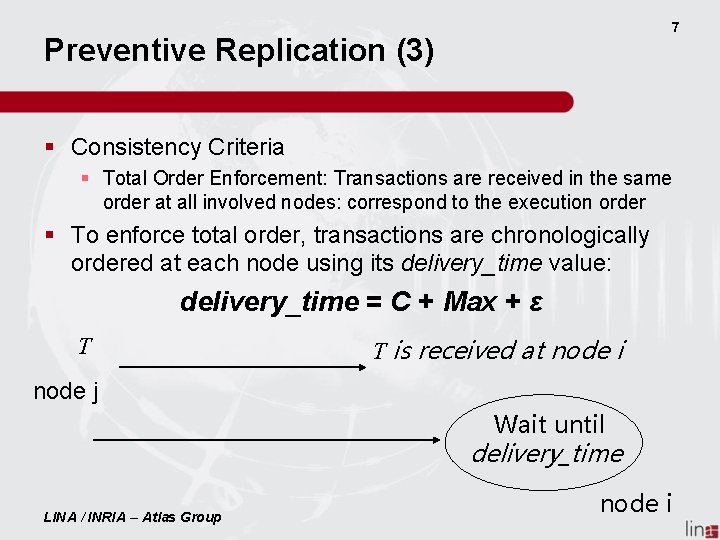

Preventive Replication (3)
§ Consistency criteria
  § Total order enforcement: transactions are received in the same order at all involved nodes, and this order corresponds to the execution order
  § To enforce total order, transactions are chronologically ordered at each node using their delivery_time value:
  delivery_time = C + Max + ε
Figure: T is received at node i; node i and node j wait until delivery_time
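
As a worked illustration of the formula, with values that are made up for this example and do not come from the experiments, suppose T arrives with C = 100 ms, Max = 5 ms and ε = 1 ms:

```latex
\[
\text{delivery\_time}(T) = C + \mathit{Max} + \varepsilon = 100 + 5 + 1 = 106\ \text{ms}
\]
```

A transaction T' with C' = 102 ms would get delivery_time 108 ms, so every involved node executes T before T', whichever message happened to arrive first.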

Preventive Replication (4)
§ Whenever a node i receives T:
  § Propagation: it multicasts T to all nodes, including itself
  § Scheduling: at each node, T's delivery_time expires if and only if T is the oldest pending transaction
  § Execution: when T's delivery_time expires, T is executed in its entirety
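
The scheduling and execution steps above can be sketched as a per-node queue ordered by timestamp. This is a minimal illustration and not the RepDB* code: the class and method names are hypothetical, time is passed in explicitly instead of being read from a clock, and the tie-break on transaction id for equal timestamps is an assumption the slides do not state.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.PriorityQueue;

/** Hypothetical sketch of the per-node scheduler; all names are illustrative. */
class PreventiveScheduler {
    /** A transaction with its timestamp C (arrival time at the origin node). */
    static final class Txn {
        final String id;
        final long c;
        Txn(String id, long c) { this.id = id; this.c = c; }
    }

    private final long max;      // Max: upper bound on multicast time
    private final long epsilon;  // ε: clock synchronization precision
    // Pending transactions, oldest timestamp first.
    // Assumption: equal timestamps are ordered by id so that all nodes agree.
    private final PriorityQueue<Txn> pending = new PriorityQueue<>(
            (a, b) -> a.c != b.c ? Long.compare(a.c, b.c) : a.id.compareTo(b.id));

    PreventiveScheduler(long max, long epsilon) {
        this.max = max;
        this.epsilon = epsilon;
    }

    /** delivery_time = C + Max + ε */
    long deliveryTime(Txn t) {
        return t.c + max + epsilon;
    }

    /** Called when a multicast transaction is received at this node. */
    void receive(Txn t) {
        pending.add(t);
    }

    /**
     * Returns, in execution order, the transactions whose delivery_time
     * has expired at time now. Only the oldest pending transaction is
     * ever considered, so a younger one cannot overtake it.
     */
    List<Txn> deliverUpTo(long now) {
        List<Txn> ready = new ArrayList<>();
        while (!pending.isEmpty() && deliveryTime(pending.peek()) <= now) {
            ready.add(pending.poll());
        }
        return ready;
    }
}
```

With Max = 5 and ε = 1, a node that receives T2 (C = 102) before T1 (C = 100) still delivers nothing at time 105, T1 at time 106 and T2 at time 108.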

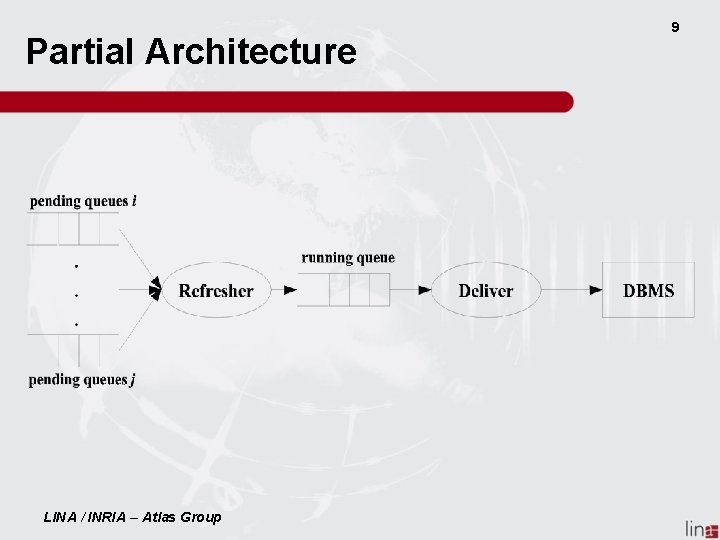

Partial Architecture

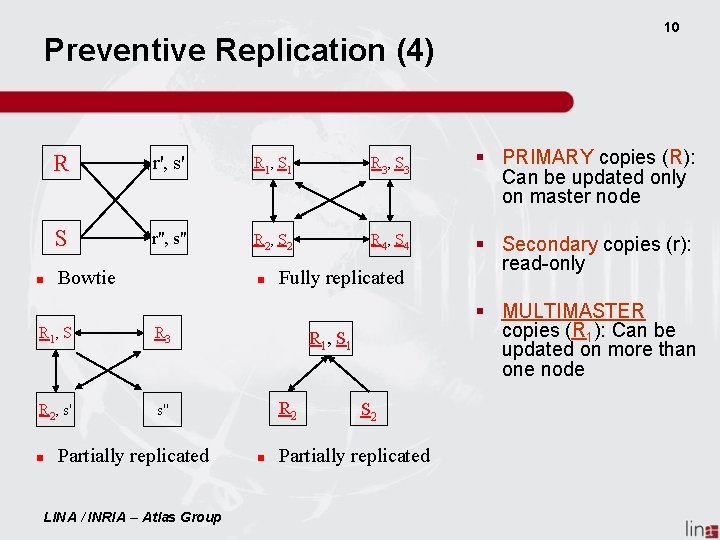

Preventive Replication (4)
§ Primary copies (R): can be updated only on the master node
§ Secondary copies (r): read-only
§ Multi-master copies (R1): can be updated on more than one node
Figure: example configurations, one group of copies per node
§ Bowtie: R / S / r', s' / r'', s''
§ Fully replicated: R1, S1 / R2, S2 / R3, S3 / R4, S4
§ Partially replicated: R1, S / R2, s' / R3 / s''
§ Partially replicated: R1, S1 / R2 / S2

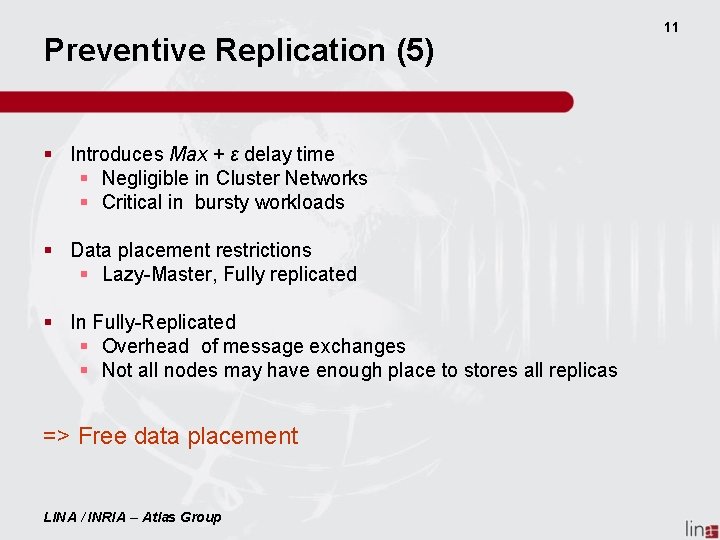

Preventive Replication (5)
§ Introduces a Max + ε delay
  § Negligible in cluster networks
  § Critical in bursty workloads
§ Data placement restrictions
  § Lazy-master, fully replicated
§ In fully replicated configurations
  § Overhead of message exchanges
  § Not all nodes may have enough space to store all replicas
=> Free data placement

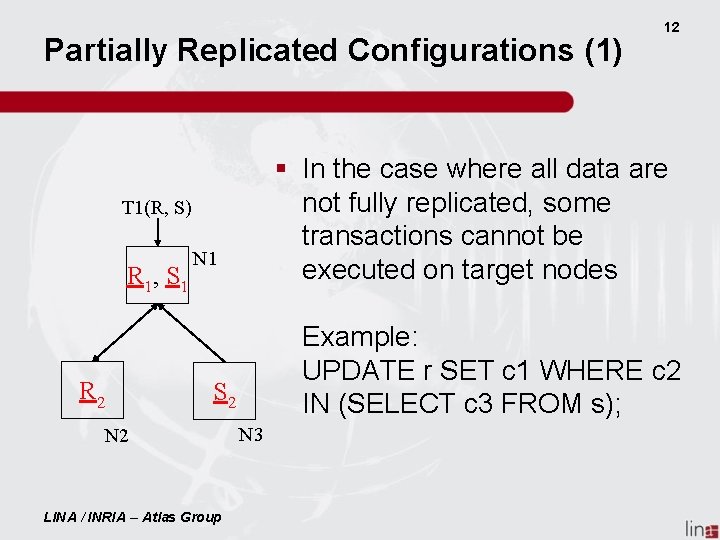

Partially Replicated Configurations (1)
§ When the data are not fully replicated, some transactions cannot be executed on target nodes
Figure: T1(R, S) with N1 holding R1 and S1, N2 holding R2, and N3 holding S2
Example: UPDATE r SET c1 WHERE c2 IN (SELECT c3 FROM s);

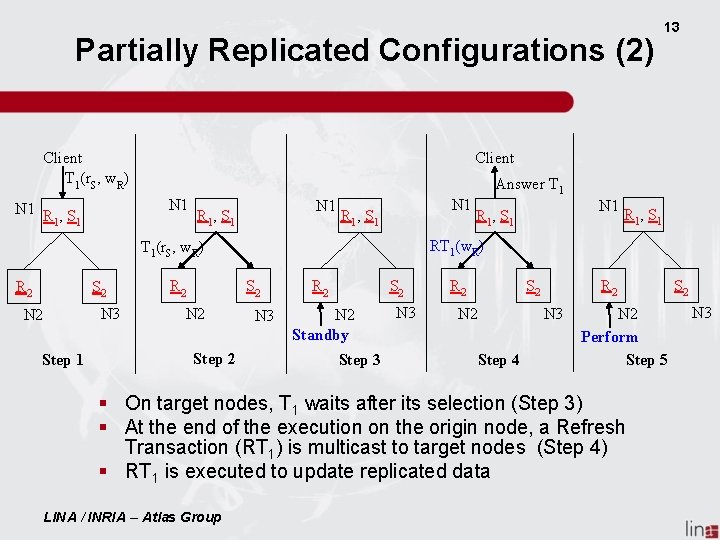

Partially Replicated Configurations (2)
Figure: five steps of executing T1(rS, wR), submitted by a client to N1, on nodes N1 (R1, S1), N2 (R2) and N3 (S2); labels shown include Standby (Step 3), RT1(wR) (Step 4), Perform (Step 5) and Answer
§ On target nodes, T1 waits after it has been selected (Step 3)
§ At the end of execution on the origin node, a refresh transaction (RT1) is multicast to the target nodes (Step 4)
§ RT1 is executed to update the replicated data
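
The distinction between nodes that can execute T1 entirely and nodes that must wait for the refresh transaction can be sketched as a check on which relations each node holds. This is a hedged illustration: the class, the method names and the placement map are hypothetical, and relations are identified by name (R, S) instead of by copy (R1, R2).

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical helper; not part of RepDB*. */
class PartialReplicationPlanner {
    /** Nodes holding every relation T reads or writes: they can execute T entirely. */
    static List<String> fullExecutors(Map<String, Set<String>> placement,
                                      Set<String> reads, Set<String> writes) {
        Set<String> needed = new HashSet<>(reads);
        needed.addAll(writes);
        List<String> result = new ArrayList<>();
        for (Map.Entry<String, Set<String>> e : placement.entrySet()) {
            if (e.getValue().containsAll(needed)) {
                result.add(e.getKey());
            }
        }
        return result;
    }

    /**
     * Nodes holding at least one written relation but not everything T needs:
     * they cannot run T themselves and wait for the refresh transaction.
     */
    static List<String> refreshTargets(Map<String, Set<String>> placement,
                                       Set<String> reads, Set<String> writes) {
        Set<String> needed = new HashSet<>(reads);
        needed.addAll(writes);
        List<String> result = new ArrayList<>();
        for (Map.Entry<String, Set<String>> e : placement.entrySet()) {
            Set<String> held = e.getValue();
            boolean holdsWritten = false;
            for (String w : writes) {
                if (held.contains(w)) {
                    holdsWritten = true;
                }
            }
            if (holdsWritten && !held.containsAll(needed)) {
                result.add(e.getKey());
            }
        }
        return result;
    }
}
```

For the configuration on this slide (N1 holds R and S, N2 holds R, N3 holds S) and T1 reading S and writing R, `fullExecutors` returns N1 and `refreshTargets` returns N2. N3 holds no written relation, so it is in neither list.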

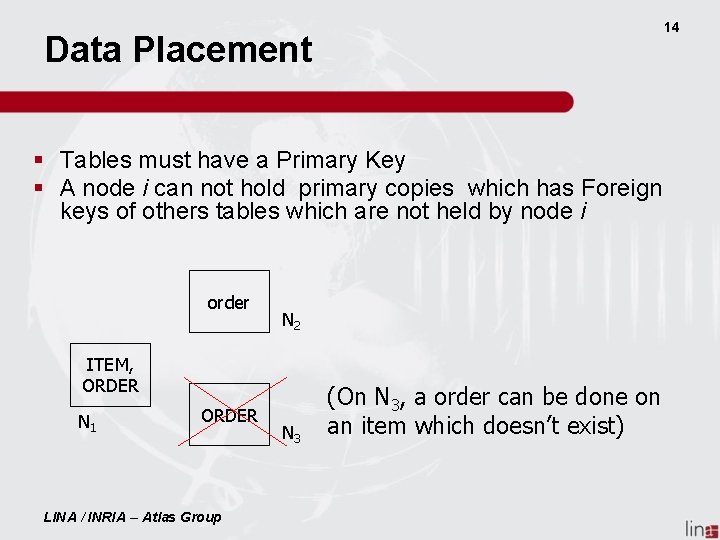

Data Placement
§ Tables must have a primary key
§ A node i cannot hold a primary copy of a table whose foreign keys reference tables that are not held by node i
Figure: N1 holds ITEM and ORDER, N2 holds the secondary copy order, and N3 holds ORDER only (on N3, an order could be placed on an item that does not exist)
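
The foreign-key rule above can be checked mechanically against a placement. The sketch below is illustrative only: the class name, the three maps and the message format are assumptions made for this example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical checker for the foreign-key placement rule; not part of RepDB*. */
class PlacementChecker {
    /**
     * @param primaries   node name -> tables held as primary copies on that node
     * @param held        node name -> all tables held on that node (any kind of copy)
     * @param foreignKeys table -> tables referenced by its foreign keys
     * @return one message per violation, e.g. "N3: ORDER references ITEM"
     */
    static List<String> violations(Map<String, Set<String>> primaries,
                                   Map<String, Set<String>> held,
                                   Map<String, Set<String>> foreignKeys) {
        List<String> result = new ArrayList<>();
        for (Map.Entry<String, Set<String>> e : primaries.entrySet()) {
            String node = e.getKey();
            Set<String> heldHere = held.getOrDefault(node, Collections.<String>emptySet());
            for (String table : e.getValue()) {
                Set<String> referencedTables =
                        foreignKeys.getOrDefault(table, Collections.<String>emptySet());
                for (String referenced : referencedTables) {
                    // A primary copy may be updated locally, so every table it
                    // references must be present to validate the foreign key.
                    if (!heldHere.contains(referenced)) {
                        result.add(node + ": " + table + " references " + referenced);
                    }
                }
            }
        }
        return result;
    }
}
```

Applied to the example on this slide, with ORDER referencing ITEM, N1 passes and N3 is reported as "N3: ORDER references ITEM".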

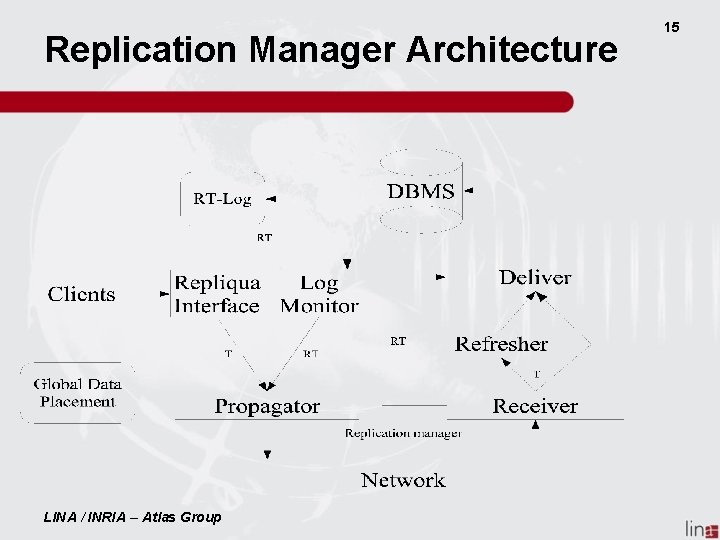

Replication Manager Architecture

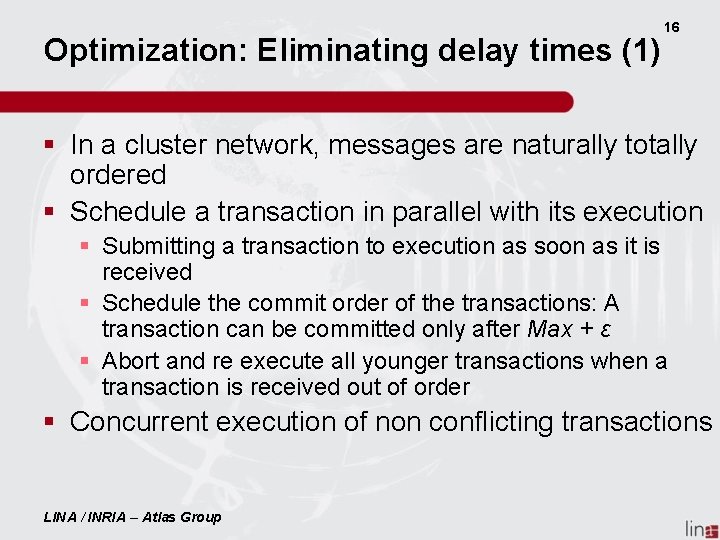

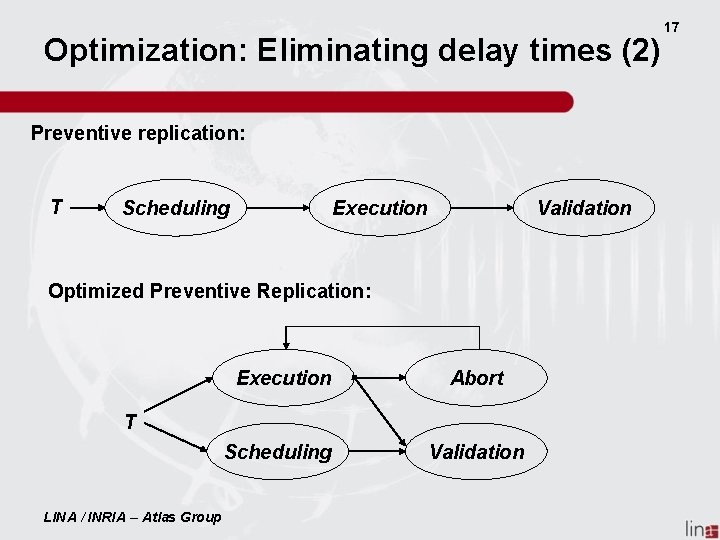

Optimization: Eliminating delay times (1)
§ In a cluster network, messages are naturally totally ordered
§ Schedule a transaction in parallel with its execution
  § Submit a transaction for execution as soon as it is received
  § Schedule the commit order of the transactions: a transaction can be committed only after the Max + ε delay has elapsed
  § Abort and re-execute all younger transactions when a transaction is received out of order
§ Concurrent execution of non-conflicting transactions

Optimization: Eliminating delay times (2)
Figure: phases of a transaction T
§ Preventive replication: Scheduling, Execution, Validation
§ Optimized preventive replication: Execution (with possible Abort) alongside Scheduling, then Validation

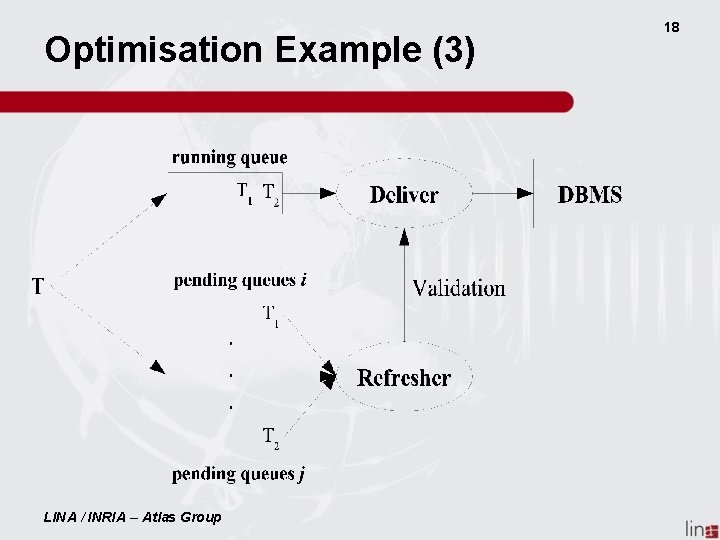

Optimization Example (3)

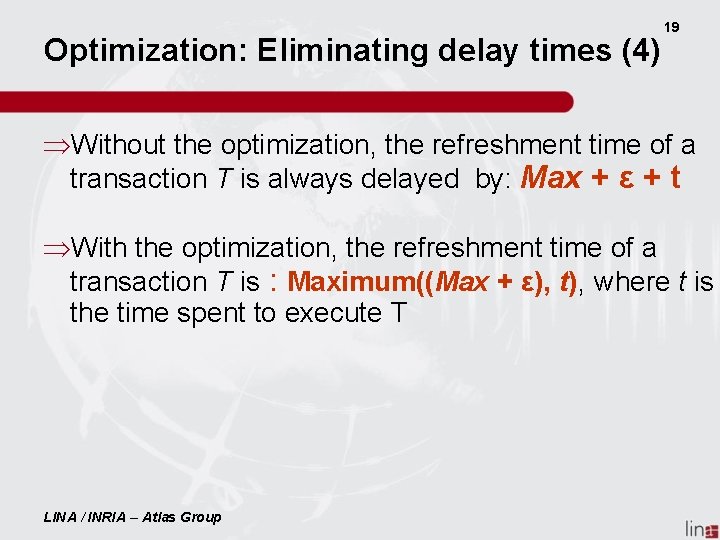

Optimization: Eliminating delay times (4)
=> Without the optimization, the refreshment time of a transaction T is always Max + ε + t
=> With the optimization, the refreshment time of a transaction T is max(Max + ε, t)
where t is the time spent executing T
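
The two refreshment-time formulas can be compared with a small helper. This is only a sketch: the class and method names are hypothetical, and the values in the note below are illustrative, not measurements from the experiments.

```java
/** Hypothetical helper comparing the two refreshment-time formulas. */
class RefreshTime {
    /** Without the optimization: wait Max + ε, then execute for t. */
    static long withoutOptimization(long max, long epsilon, long t) {
        return max + epsilon + t;
    }

    /** With the optimization: execution overlaps the Max + ε wait. */
    static long withOptimization(long max, long epsilon, long t) {
        return Math.max(max + epsilon, t);
    }
}
```

With Max = 5, ε = 1 and t = 20 (in the same time unit), the unoptimized refreshment time is 26 and the optimized one is 20. For a short transaction with t = 2, the values are 8 and 6: the optimized time can never drop below Max + ε.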

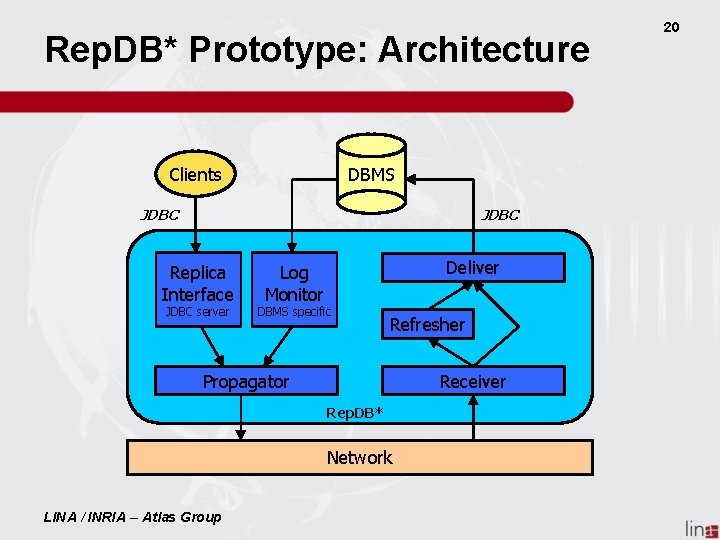

RepDB* Prototype: Architecture
Figure: components shown are Clients, JDBC server, Replica Interface, Propagator, Receiver, Refresher, Deliver, Log Monitor (DBMS specific), DBMS (through JDBC), RepDB* and Network

RepDB* Prototype: Implementation
§ Java (around 10,000 lines)
§ The DBMS is a black box
§ JDBC interface (RMI-JDBC)
§ Use of the Spread toolkit to manage the network (Center for Networking and Distributed Systems - CNDS)
§ Simulation version (SimJava)
§ http://www.sciences.univ-nantes.fr/ATLAS/RepDB

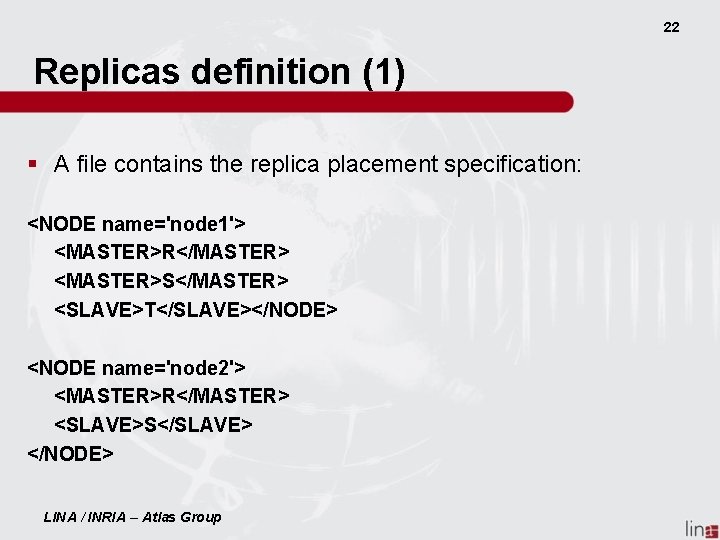

Replicas definition (1)
§ A file contains the replica placement specification:

    <NODE name='node1'>
      <MASTER>R</MASTER>
      <MASTER>S</MASTER>
      <SLAVE>T</SLAVE>
    </NODE>
    <NODE name='node2'>
      <MASTER>R</MASTER>
      <SLAVE>S</SLAVE>
    </NODE>
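
A placement specification of this shape could be read with the standard Java DOM parser, as in the sketch below. It is not the RepDB* loader: the class name is hypothetical, and because the slide shows the NODE elements without a common root, the sketch assumes they are wrapped in a single root element (called PLACEMENT here, a made-up name) so that the input is well-formed XML.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical reader for the placement file; not the RepDB* implementation. */
class PlacementReader {
    /** Returns node name -> copies, each written as "MASTER:R" or "SLAVE:T". */
    static Map<String, List<String>> read(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            Map<String, List<String>> placement = new LinkedHashMap<>();
            NodeList nodes = doc.getElementsByTagName("NODE");
            for (int i = 0; i < nodes.getLength(); i++) {
                Element node = (Element) nodes.item(i);
                List<String> copies = new ArrayList<>();
                NodeList children = node.getChildNodes();
                for (int j = 0; j < children.getLength(); j++) {
                    // Skip whitespace text nodes; keep MASTER and SLAVE elements.
                    if (children.item(j) instanceof Element) {
                        Element copy = (Element) children.item(j);
                        copies.add(copy.getTagName() + ":" + copy.getTextContent().trim());
                    }
                }
                placement.put(node.getAttribute("name"), copies);
            }
            return placement;
        } catch (Exception e) {
            throw new IllegalArgumentException("invalid placement specification", e);
        }
    }
}
```

Called on the specification above wrapped in the assumed root element, `read` maps node1 to MASTER:R, MASTER:S, SLAVE:T and node2 to MASTER:R, SLAVE:S.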

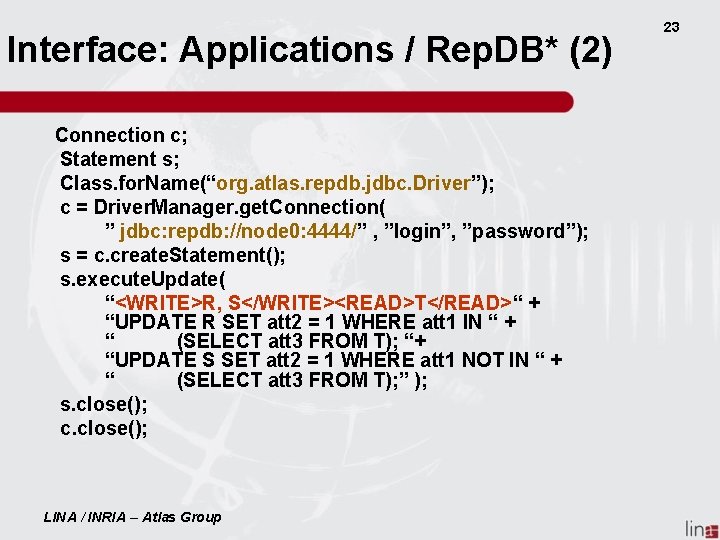

Interface: Applications / RepDB* (2)

    import java.sql.Connection;
    import java.sql.DriverManager;
    import java.sql.Statement;

    Connection c;
    Statement s;
    // Load the RepDB* JDBC driver
    Class.forName("org.atlas.repdb.jdbc.Driver");
    c = DriverManager.getConnection("jdbc:repdb://node0:4444/", "login", "password");
    s = c.createStatement();
    // The <WRITE> and <READ> tags declare the relations the transaction updates and reads
    s.executeUpdate(
        "<WRITE>R, S</WRITE><READ>T</READ>" +
        "UPDATE R SET att2 = 1 WHERE att1 IN " +
        " (SELECT att3 FROM T); " +
        "UPDATE S SET att2 = 1 WHERE att1 NOT IN " +
        " (SELECT att3 FROM T); ");
    s.close();
    c.close();

Experiments (1): TPC-C benchmark
§ 1 / 5 / 10 warehouses
§ 10 clients per warehouse
§ Transaction inter-arrival time: 1 s / 200 ms / 100 ms
§ 4 types of transactions:
  § New-order: read-write, high frequency (45%)
  § Payment: read-write, high frequency (45%)
  § Order-status: read-only, low frequency (5%)
  § Stock-level: read-only, low frequency (5%)

Experiments (2)
§ Cluster of 64 nodes
§ PostgreSQL 7.3.2
§ 1 Gb/s network
§ 2 configurations
  § Fully Replicated (FR)
  § Partially Replicated (PR): each type of TPC-C transaction runs on ¼ of the nodes

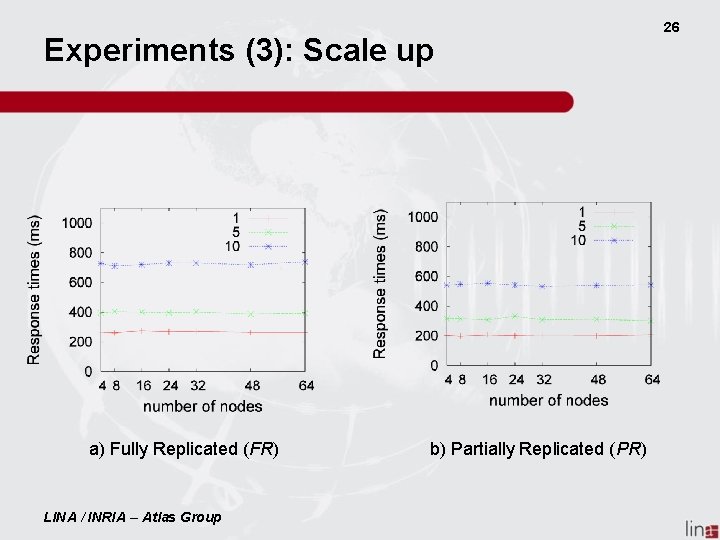

Experiments (3): Scale up
Figure panels: a) Fully Replicated (FR), b) Partially Replicated (PR)

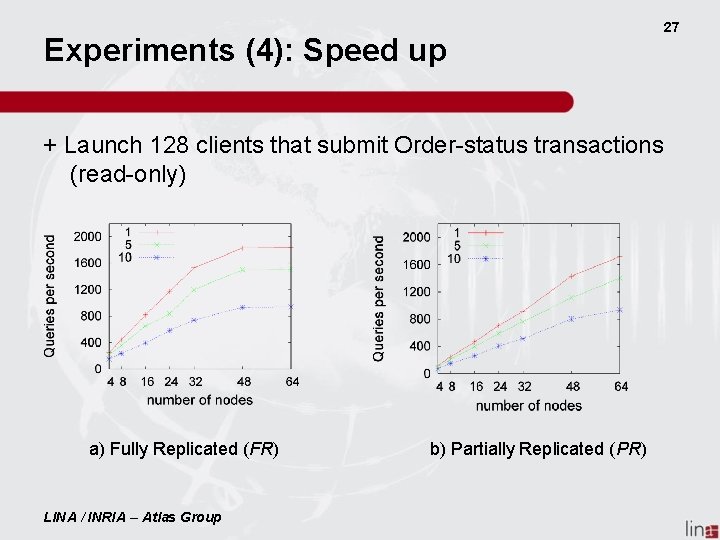

Experiments (4): Speed up
§ Launch 128 clients that submit Order-status transactions (read-only)
Figure panels: a) Fully Replicated (FR), b) Partially Replicated (PR)

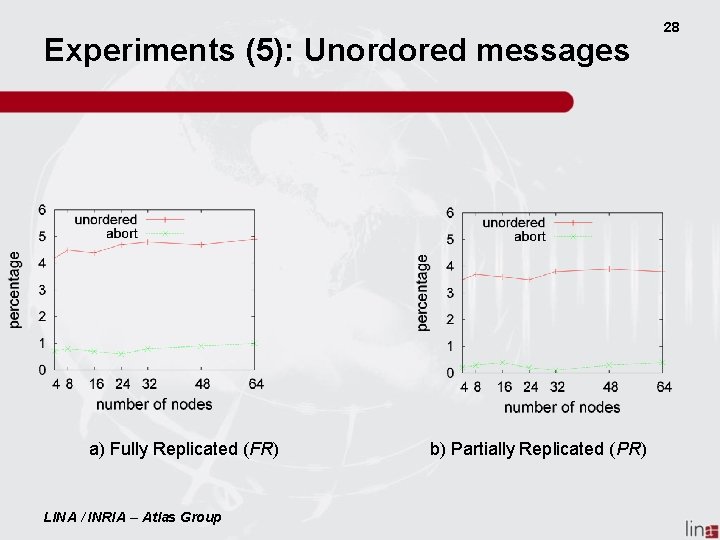

Experiments (5): Unordered messages
Figure panels: a) Fully Replicated (FR), b) Partially Replicated (PR)

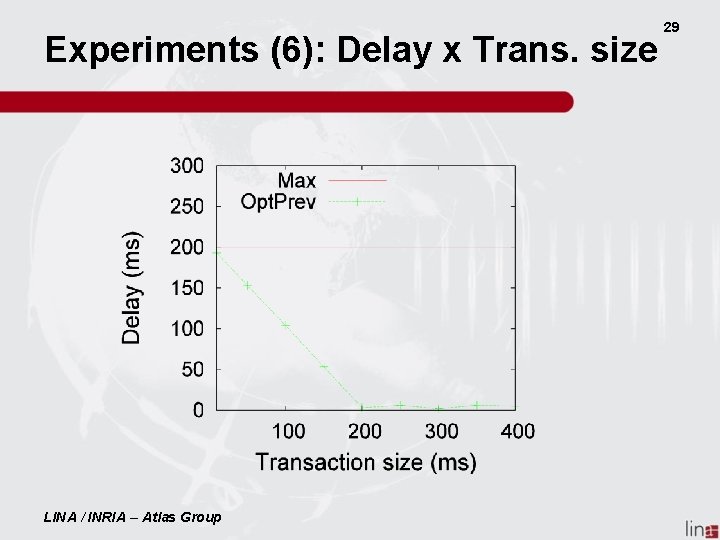

Experiments (6): Delay vs. transaction size

Conclusions
§ Preventive replication
  § Strong consistency
  § Prevents conflicts for partially replicated databases
  § Full node autonomy
  § Scales up and speeds up
§ Experiments show that the configuration and the placement of the copies should be tuned to the selected types of transactions

Current and Future Work
§ Preventive replication for P2P systems
  § Small and dynamic multi-master groups
  § Max is computed dynamically
  § Small and dynamic slave groups
§ Optimistic replication
  § Distributed semantic reconciliation

Thanks! Merci! Obrigado!
Questions?
