Part 3 Decision Trees Decision tree representation n

![Which Attribute is ”best”? [29+, 35 -] A 1=? True [21+, 5 -] A Which Attribute is ”best”? [29+, 35 -] A 1=? True [21+, 5 -] A](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-12.jpg)

![Information Gain Entropy([21+, 5 -]) = 0. 71 Entropy([8+, 30 -]) = 0. 74 Information Gain Entropy([21+, 5 -]) = 0. 71 Entropy([8+, 30 -]) = 0. 74](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-16.jpg)

![Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Humidity Wind High [3+, 4 Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Humidity Wind High [3+, 4](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-18.jpg)

![Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Outlook Sunny Over cast Rain Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Outlook Sunny Over cast Rain](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-19.jpg)

![ID 3 Algorithm [D 1, D 2, …, D 14] [9+, 5 -] Outlook ID 3 Algorithm [D 1, D 2, …, D 14] [9+, 5 -] Outlook](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-21.jpg)

![ID 3 Algorithm Outlook Sunny Humidity High No [D 1, D 2] Overcast Rain ID 3 Algorithm Outlook Sunny Humidity High No [D 1, D 2] Overcast Rain](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-22.jpg)

- Slides: 29

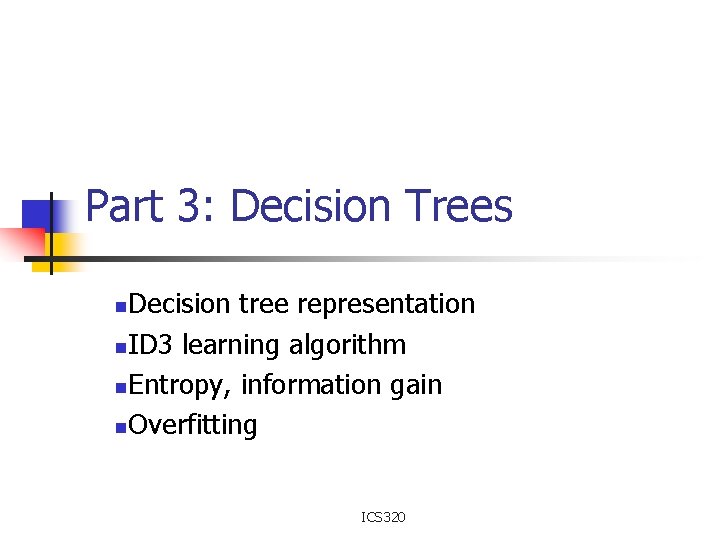

Part 3: Decision Trees Decision tree representation n. ID 3 learning algorithm n. Entropy, information gain n. Overfitting n ICS 320

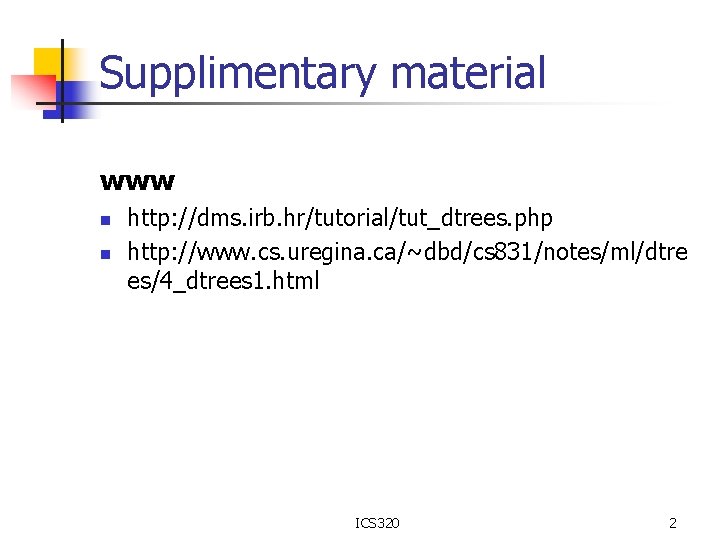

Supplimentary material www n n http: //dms. irb. hr/tutorial/tut_dtrees. php http: //www. cs. uregina. ca/~dbd/cs 831/notes/ml/dtre es/4_dtrees 1. html ICS 320 2

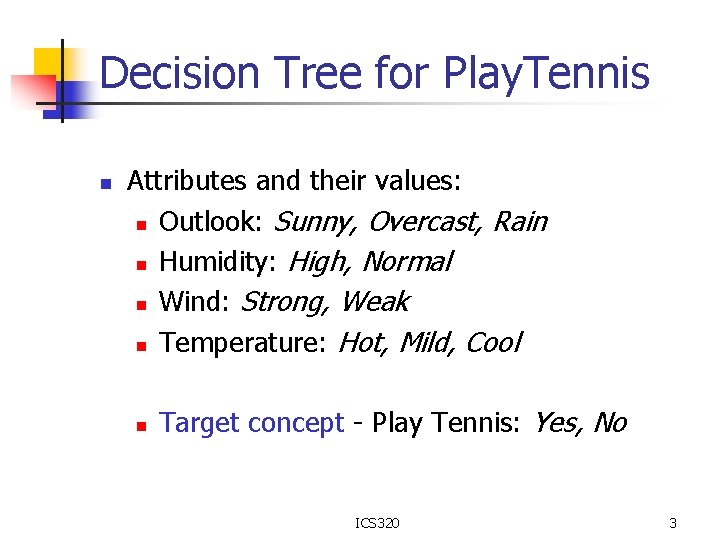

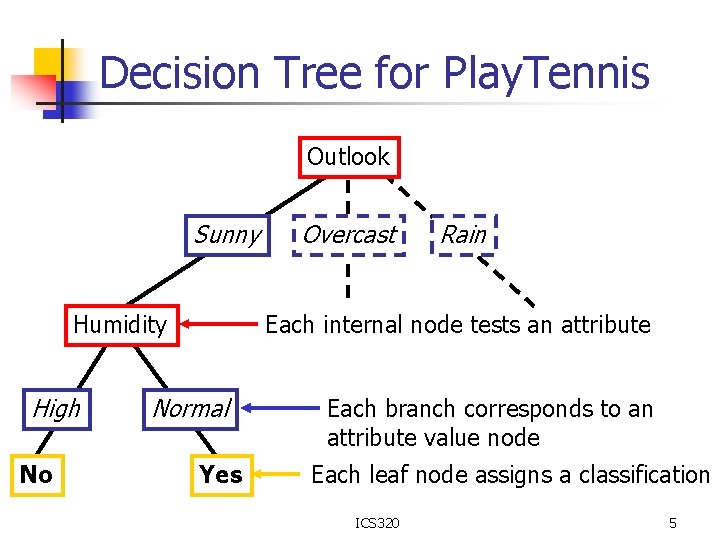

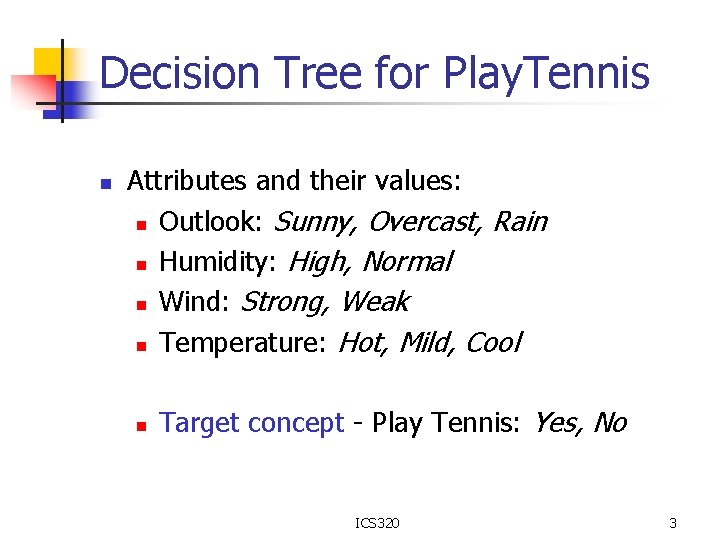

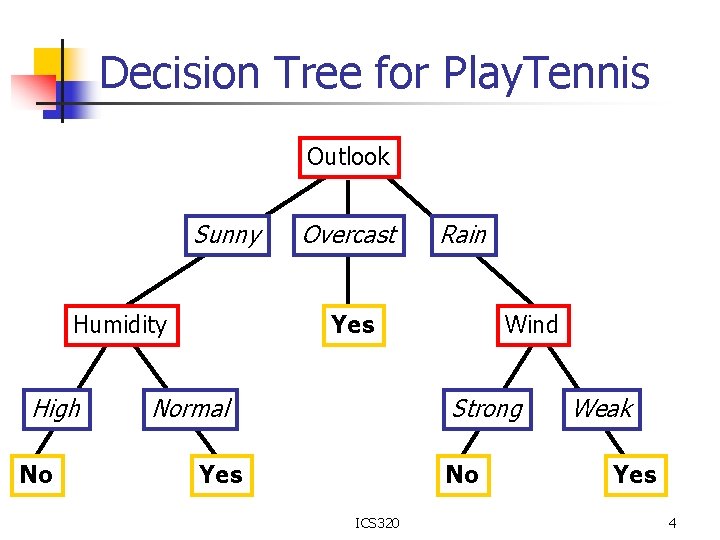

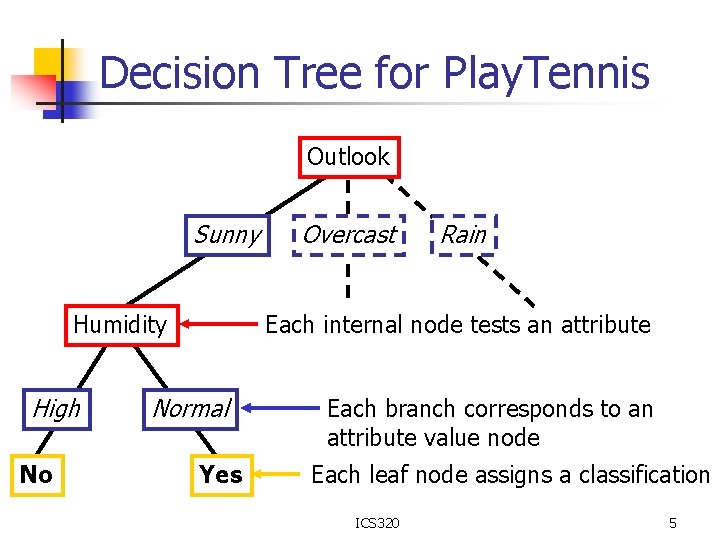

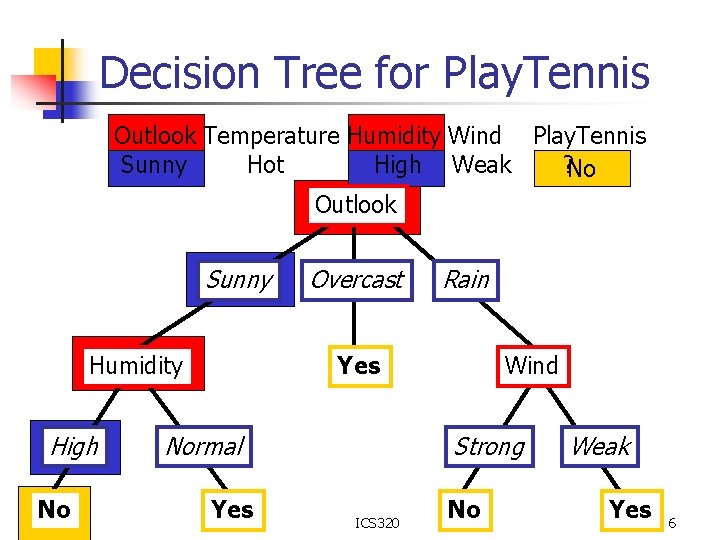

Decision Tree for Play. Tennis n Attributes and their values: n Outlook: Sunny, Overcast, Rain n Humidity: High, Normal n Wind: Strong, Weak n Temperature: Hot, Mild, Cool n Target concept - Play Tennis: Yes, No ICS 320 3

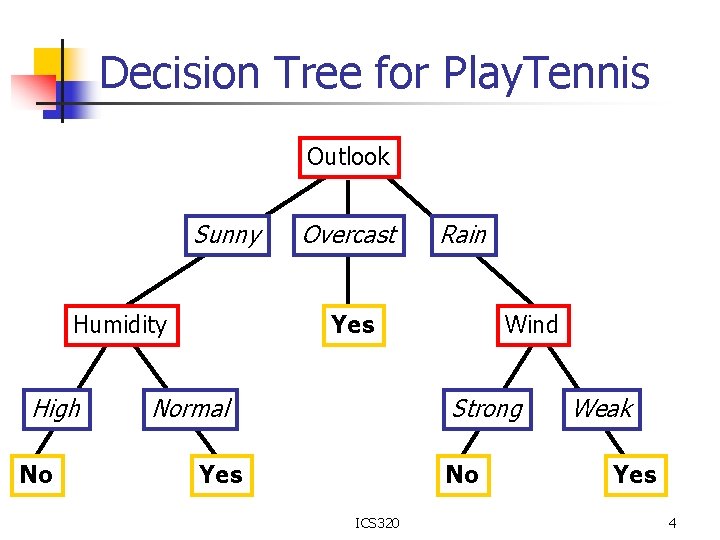

Decision Tree for Play. Tennis Outlook Sunny Humidity High No Overcast Rain Yes Normal Wind Strong Yes No ICS 320 Weak Yes 4

Decision Tree for Play. Tennis Outlook Sunny Humidity High No Overcast Rain Each internal node tests an attribute Normal Yes Each branch corresponds to an attribute value node Each leaf node assigns a classification ICS 320 5

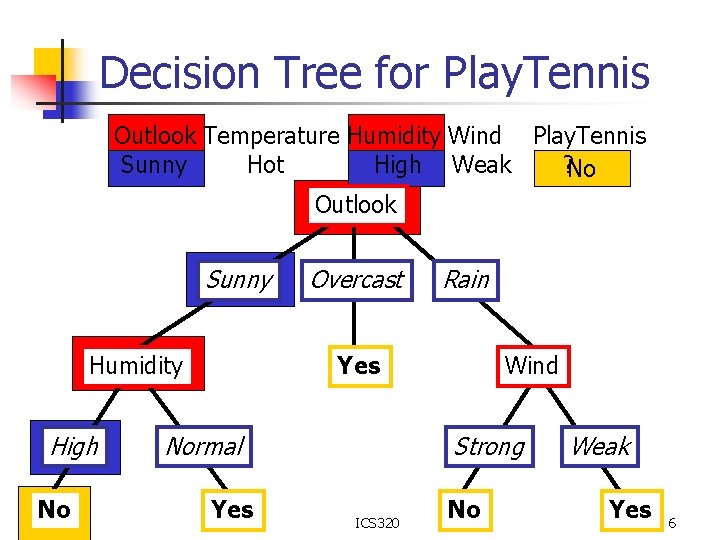

Decision Tree for Play. Tennis Outlook Temperature Humidity Wind Play. Tennis Sunny Hot High Weak ? No Outlook Sunny Humidity High No Overcast Rain Yes Normal Yes Wind Strong ICS 320 No Weak Yes 6

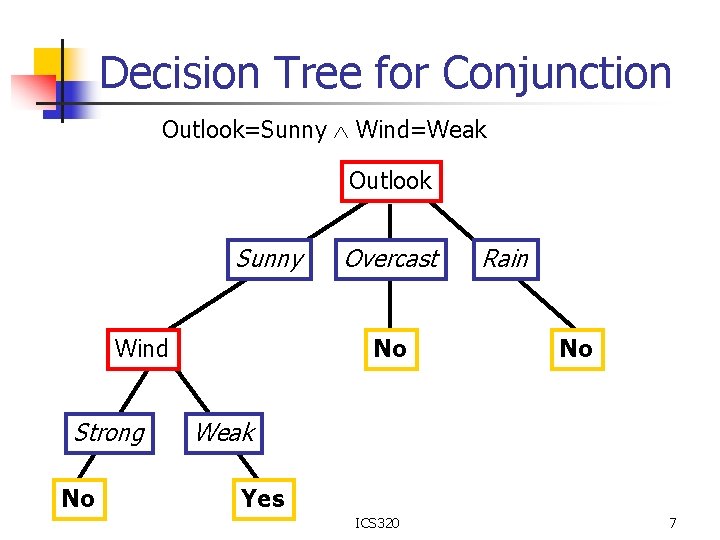

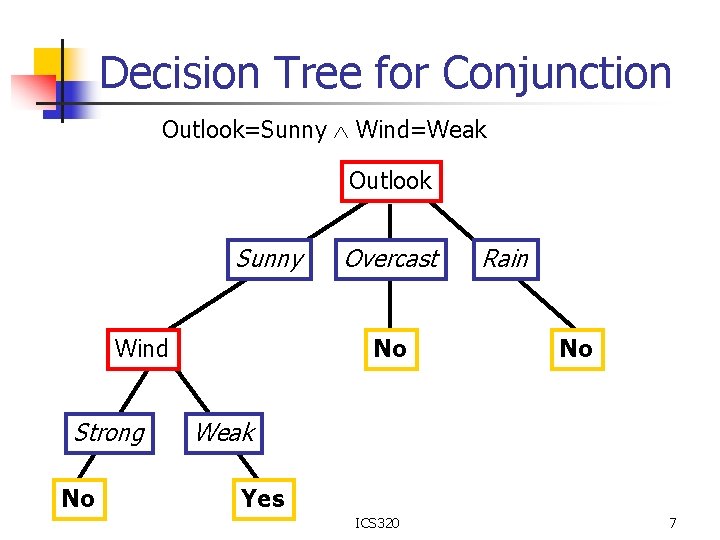

Decision Tree for Conjunction Outlook=Sunny Wind=Weak Outlook Sunny Wind Strong No Overcast No Rain No Weak Yes ICS 320 7

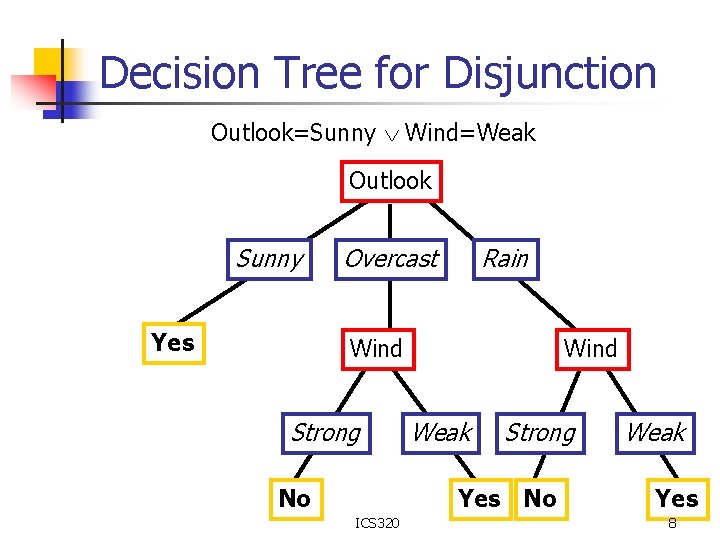

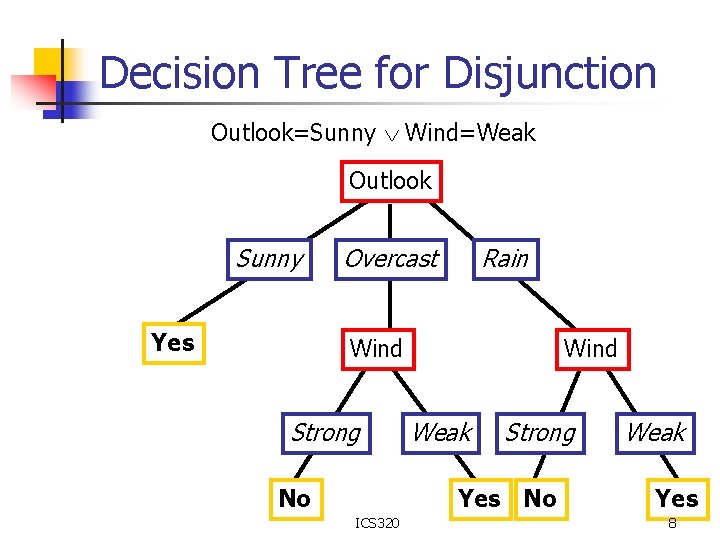

Decision Tree for Disjunction Outlook=Sunny Wind=Weak Outlook Sunny Yes Overcast Rain Wind Strong No Wind Weak Strong Yes No ICS 320 Weak Yes 8

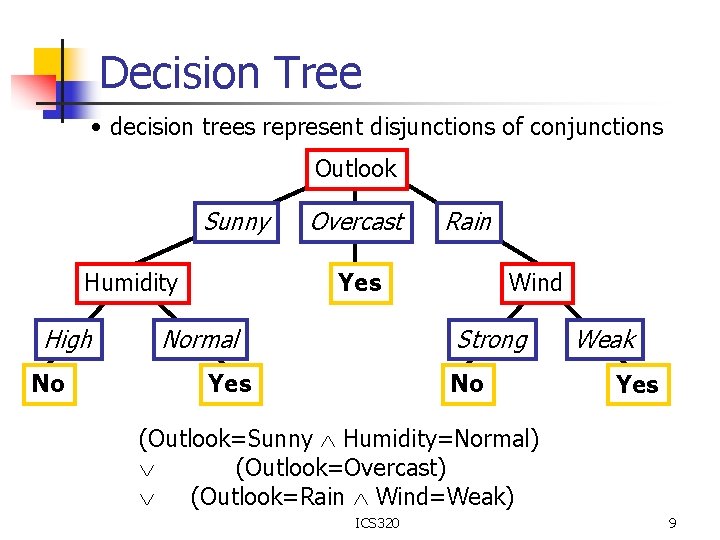

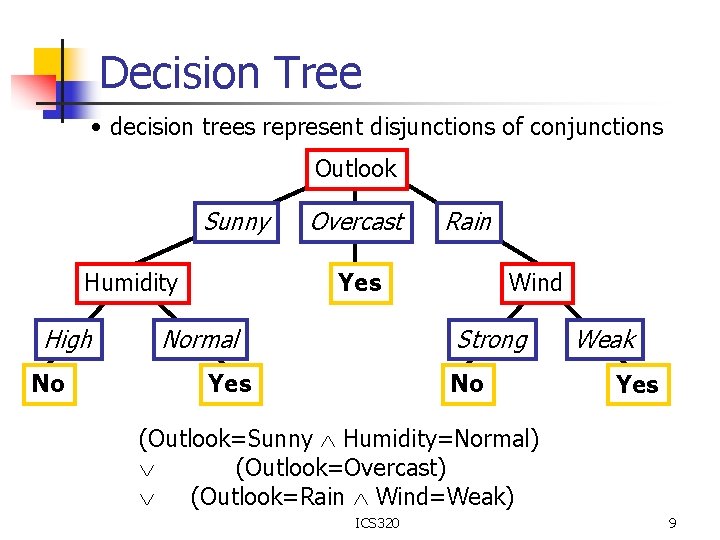

Decision Tree • decision trees represent disjunctions of conjunctions Outlook Sunny Humidity High No Overcast Rain Yes Normal Wind Strong Yes No Weak Yes (Outlook=Sunny Humidity=Normal) (Outlook=Overcast) (Outlook=Rain Wind=Weak) ICS 320 9

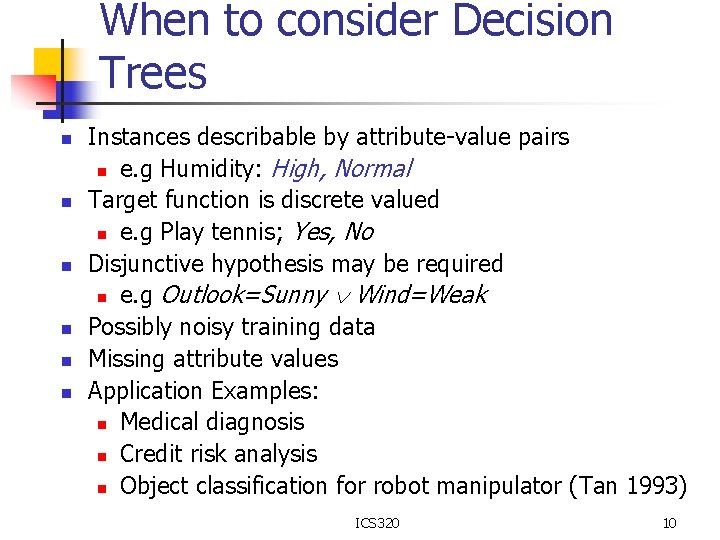

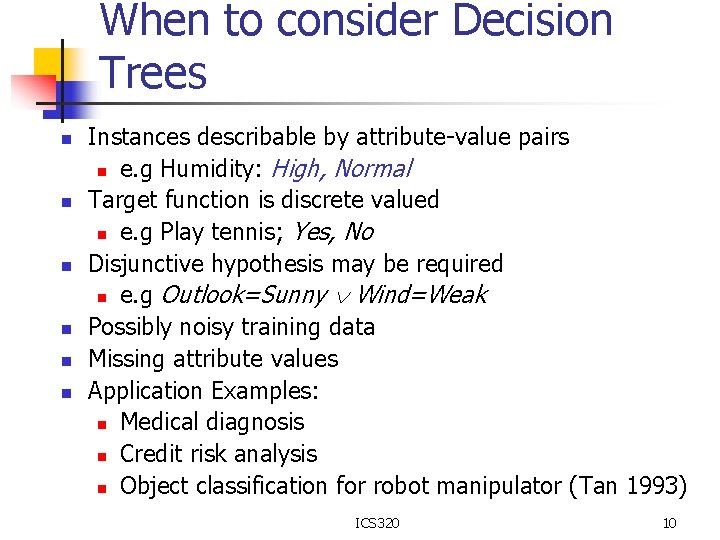

When to consider Decision Trees n n n Instances describable by attribute-value pairs n e. g Humidity: High, Normal Target function is discrete valued n e. g Play tennis; Yes, No Disjunctive hypothesis may be required n e. g Outlook=Sunny Wind=Weak Possibly noisy training data Missing attribute values Application Examples: n Medical diagnosis n Credit risk analysis n Object classification for robot manipulator (Tan 1993) ICS 320 10

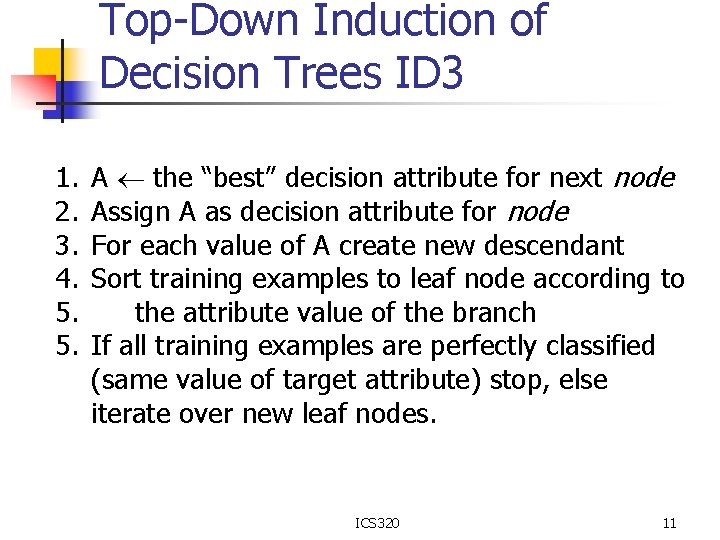

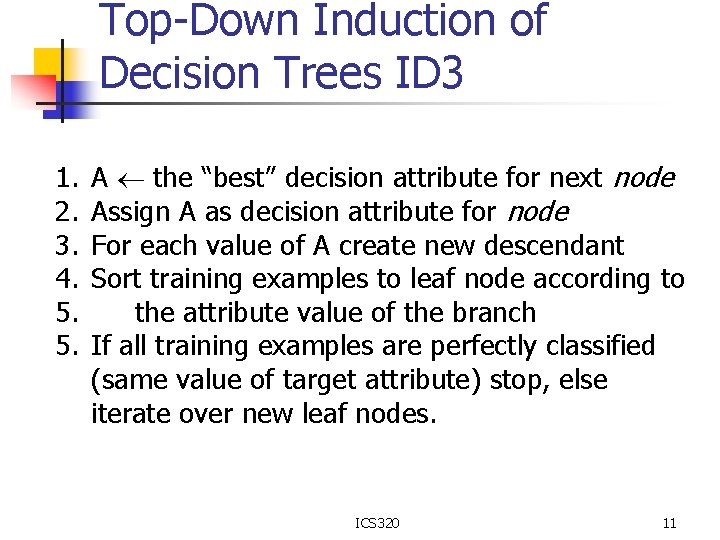

Top-Down Induction of Decision Trees ID 3 1. 2. 3. 4. 5. 5. A the “best” decision attribute for next node Assign A as decision attribute for node For each value of A create new descendant Sort training examples to leaf node according to the attribute value of the branch If all training examples are perfectly classified (same value of target attribute) stop, else iterate over new leaf nodes. ICS 320 11

![Which Attribute is best 29 35 A 1 True 21 5 A Which Attribute is ”best”? [29+, 35 -] A 1=? True [21+, 5 -] A](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-12.jpg)

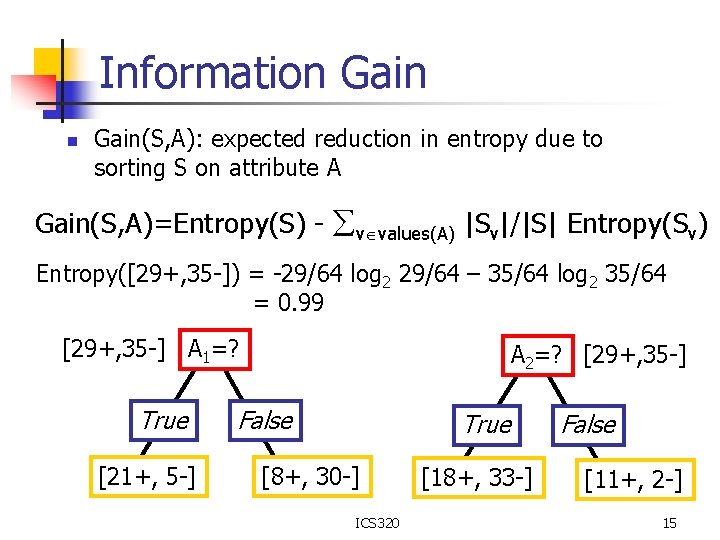

Which Attribute is ”best”? [29+, 35 -] A 1=? True [21+, 5 -] A 2=? [29+, 35 -] False [8+, 30 -] True [18+, 33 -] ICS 320 False [11+, 2 -] 12

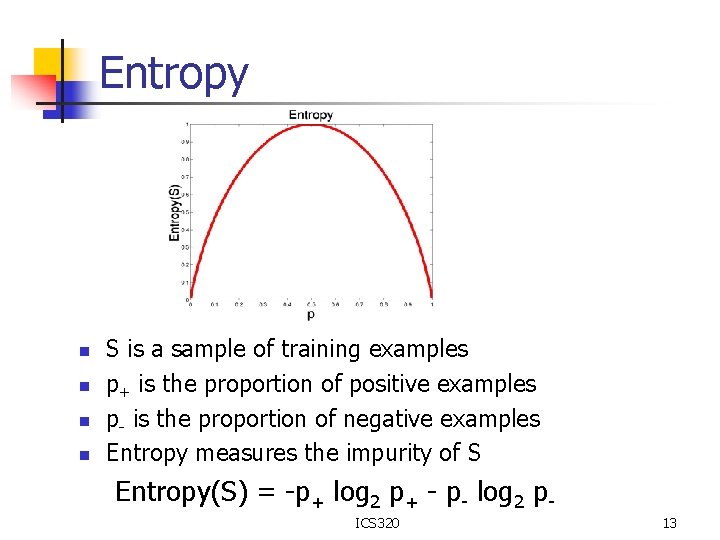

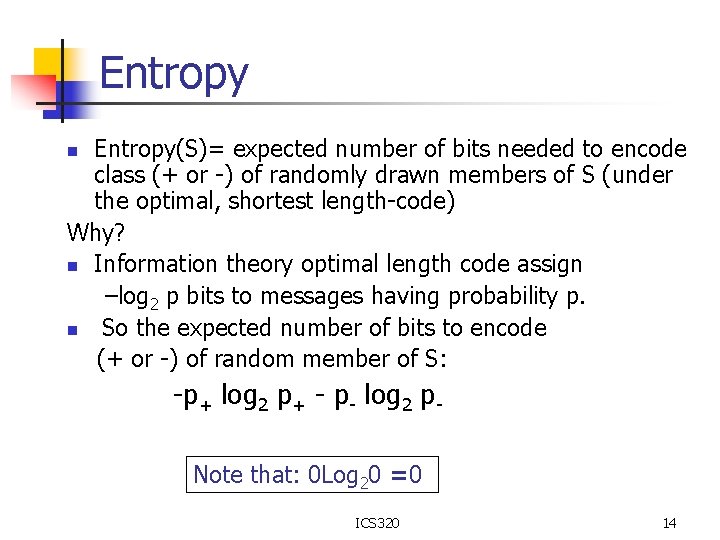

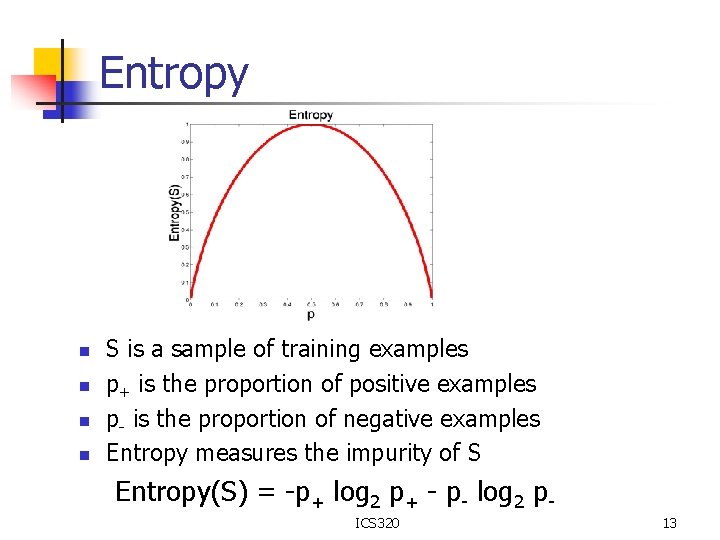

Entropy n n S is a sample of training examples p+ is the proportion of positive examples p- is the proportion of negative examples Entropy measures the impurity of S Entropy(S) = -p+ log 2 p+ - p- log 2 p. ICS 320 13

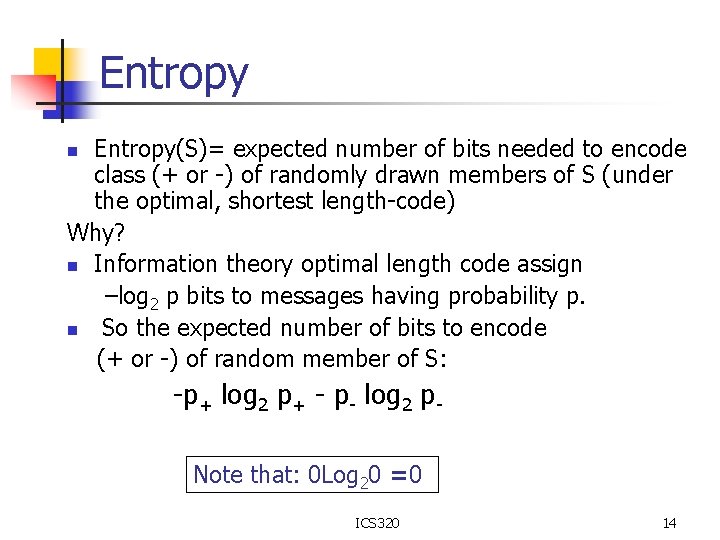

Entropy(S)= expected number of bits needed to encode class (+ or -) of randomly drawn members of S (under the optimal, shortest length-code) Why? n Information theory optimal length code assign –log 2 p bits to messages having probability p. n So the expected number of bits to encode (+ or -) of random member of S: n -p+ log 2 p+ - p- log 2 p. Note that: 0 Log 20 =0 ICS 320 14

Information Gain(S, A): expected reduction in entropy due to sorting S on attribute A Gain(S, A)=Entropy(S) - v values(A) |Sv|/|S| Entropy(Sv) Entropy([29+, 35 -]) = -29/64 log 2 29/64 – 35/64 log 2 35/64 = 0. 99 [29+, 35 -] A 1=? True [21+, 5 -] A 2=? [29+, 35 -] False True [8+, 30 -] ICS 320 [18+, 33 -] False [11+, 2 -] 15

![Information Gain Entropy21 5 0 71 Entropy8 30 0 74 Information Gain Entropy([21+, 5 -]) = 0. 71 Entropy([8+, 30 -]) = 0. 74](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-16.jpg)

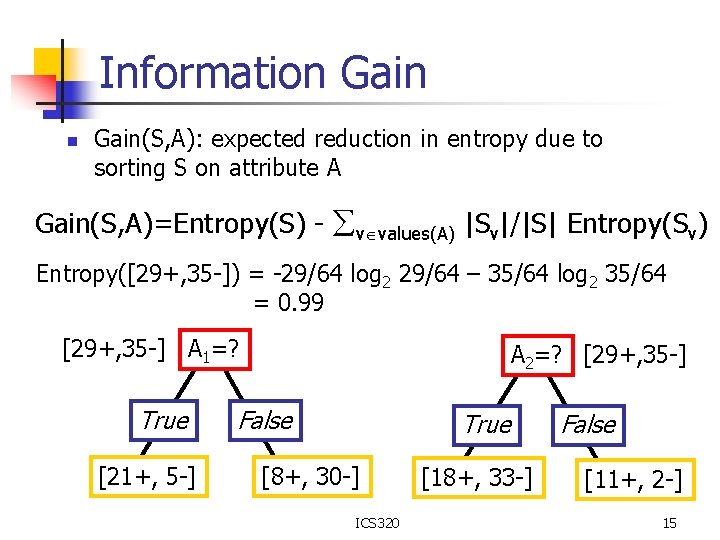

Information Gain Entropy([21+, 5 -]) = 0. 71 Entropy([8+, 30 -]) = 0. 74 Gain(S, A 1)=Entropy(S) -26/64*Entropy([21+, 5 -]) -38/64*Entropy([8+, 30 -]) =0. 27 Entropy([18+, 33 -]) = 0. 94 Entropy([8+, 30 -]) = 0. 62 Gain(S, A 2)=Entropy(S) -51/64*Entropy([18+, 33 -]) -13/64*Entropy([11+, 2 -]) =0. 12 [29+, 35 -] A 1=? True [21+, 5 -] A 2=? [29+, 35 -] True False [8+, 30 -] [18+, 33 -] ICS 320 False [11+, 2 -] 16

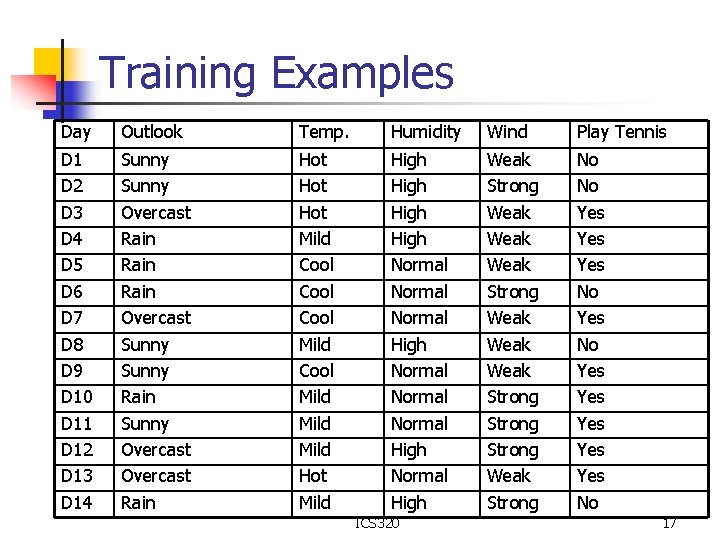

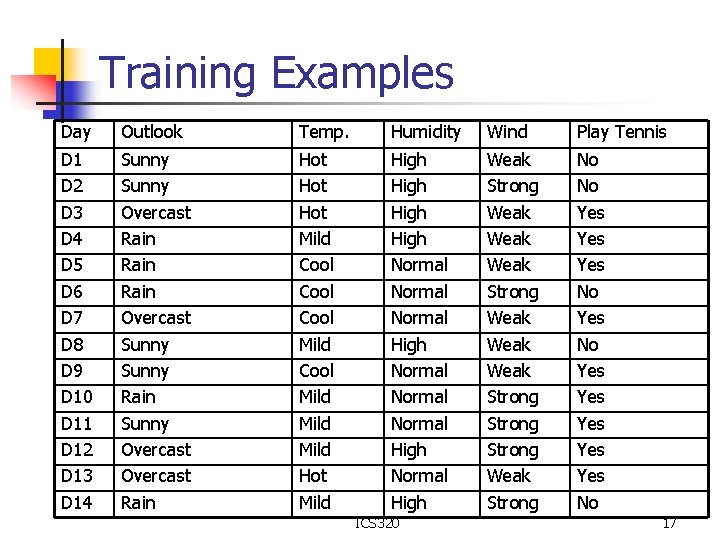

Training Examples Day Outlook Temp. Humidity Wind Play Tennis D 1 D 2 D 3 D 4 D 5 D 6 D 7 D 8 D 9 D 10 D 11 D 12 D 13 D 14 Sunny Overcast Rain Overcast Sunny Rain Sunny Overcast Rain Hot Hot Mild Cool Mild Hot Mild High Normal Normal High Weak Strong Weak Weak Strong Weak Strong No No Yes Yes Yes No ICS 320 17

![Selecting the Next Attribute S9 5 E0 940 Humidity Wind High 3 4 Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Humidity Wind High [3+, 4](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-18.jpg)

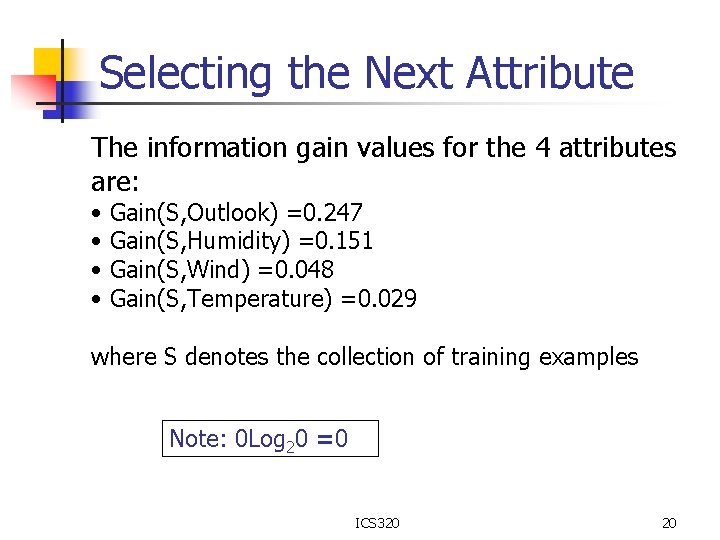

Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Humidity Wind High [3+, 4 -] E=0. 985 Normal Weak Strong [6+, 1 -] [6+, 2 -] E=0. 592 E=0. 811 E=1. 0 Gain(S, Wind) =0. 940 -(8/14)*0. 811 – (6/14)*1. 0 =0. 048 Gain(S, Humidity) =0. 940 -(7/14)*0. 985 – (7/14)*0. 592 =0. 151 [3+, 3 -] Humidity provides greater info. gain. ICS 320 than Wind, w. r. t target classification. 18

![Selecting the Next Attribute S9 5 E0 940 Outlook Sunny Over cast Rain Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Outlook Sunny Over cast Rain](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-19.jpg)

Selecting the Next Attribute S=[9+, 5 -] E=0. 940 Outlook Sunny Over cast Rain [2+, 3 -] [4+, 0] [3+, 2 -] E=0. 971 E=0. 0 E=0. 971 Gain(S, Outlook) =0. 940 -(5/14)*0. 971 -(4/14)*0. 0 – (5/14)*0. 0971 =0. 247 ICS 320 19

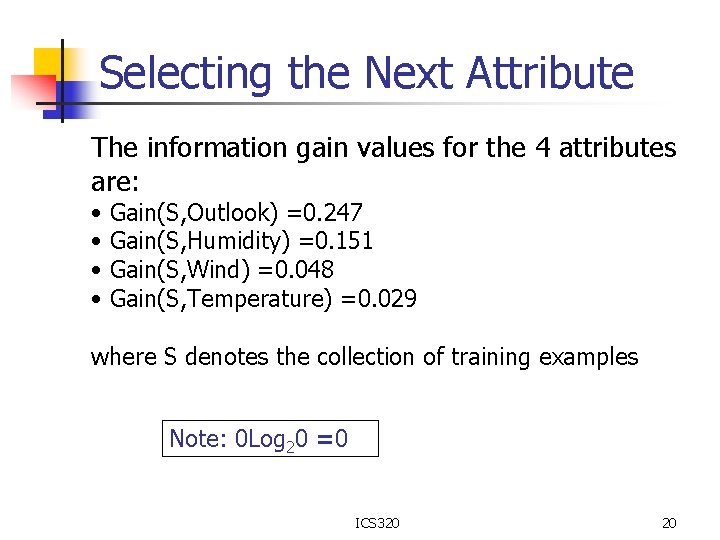

Selecting the Next Attribute The information gain values for the 4 attributes are: • • Gain(S, Outlook) =0. 247 Gain(S, Humidity) =0. 151 Gain(S, Wind) =0. 048 Gain(S, Temperature) =0. 029 where S denotes the collection of training examples Note: 0 Log 20 =0 ICS 320 20

![ID 3 Algorithm D 1 D 2 D 14 9 5 Outlook ID 3 Algorithm [D 1, D 2, …, D 14] [9+, 5 -] Outlook](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-21.jpg)

ID 3 Algorithm [D 1, D 2, …, D 14] [9+, 5 -] Outlook Sunny Overcast Note: 0 Log 20 =0 Rain Ssunny=[D 1, D 2, D 8, D 9, D 11] [D 3, D 7, D 12, D 13] [D 4, D 5, D 6, D 10, D 14] [2+, 3 -] [4+, 0 -] [3+, 2 -] Test for this node ? Yes ? Gain(Ssunny , Humidity)=0. 970 -(3/5)0. 0 – 2/5(0. 0) = 0. 970 Gain(Ssunny , Temp. )=0. 970 -(2/5)0. 0 – 2/5(1. 0)-(1/5)0. 0 = 0. 570 Gain(Ssunny , Wind)=0. 970= -(2/5)1. 0 – 3/5(0. 918) = 0. 019 ICS 320 21

![ID 3 Algorithm Outlook Sunny Humidity High No D 1 D 2 Overcast Rain ID 3 Algorithm Outlook Sunny Humidity High No [D 1, D 2] Overcast Rain](https://slidetodoc.com/presentation_image_h/c4af86784ee93eb352ff2f6647f72459/image-22.jpg)

ID 3 Algorithm Outlook Sunny Humidity High No [D 1, D 2] Overcast Rain Yes [D 3, D 7, D 12, D 13] Normal Strong Yes [D 8, D 9, D 11] Wind ICS 320 Weak No Yes [D 6, D 14] [D 4, D 5, D 10] 22

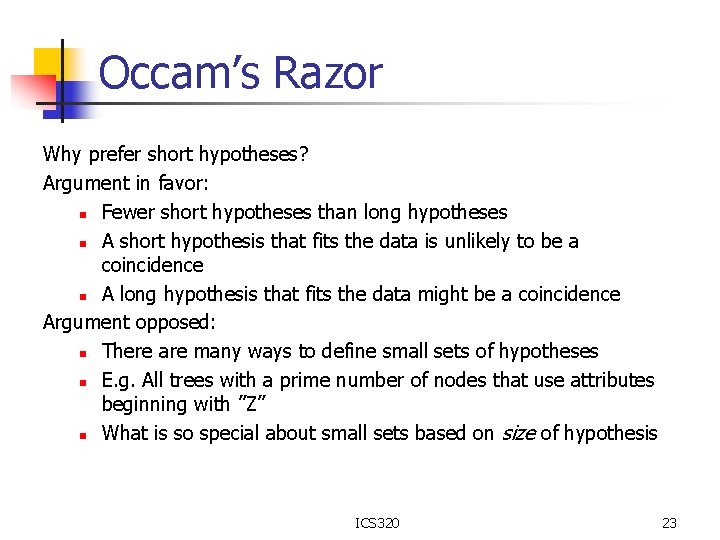

Occam’s Razor Why prefer short hypotheses? Argument in favor: n Fewer short hypotheses than long hypotheses n A short hypothesis that fits the data is unlikely to be a coincidence n A long hypothesis that fits the data might be a coincidence Argument opposed: n There are many ways to define small sets of hypotheses n E. g. All trees with a prime number of nodes that use attributes beginning with ”Z” n What is so special about small sets based on size of hypothesis ICS 320 23

Overfitting n One of the biggest problems with decision trees is Overfitting ICS 320 24

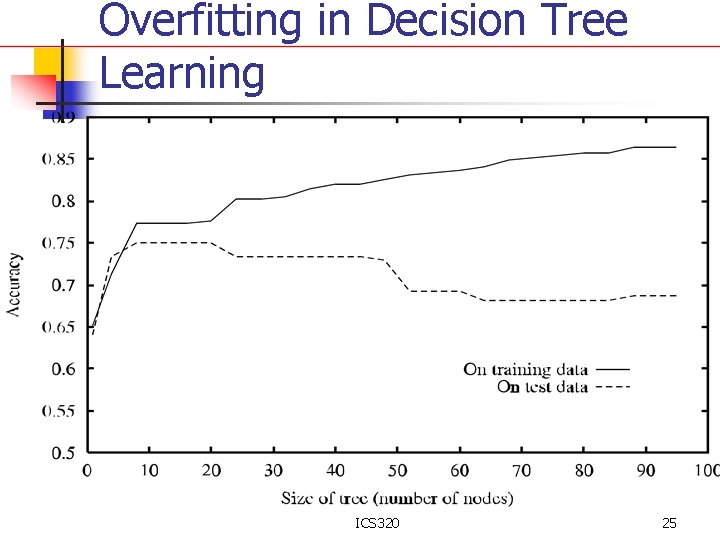

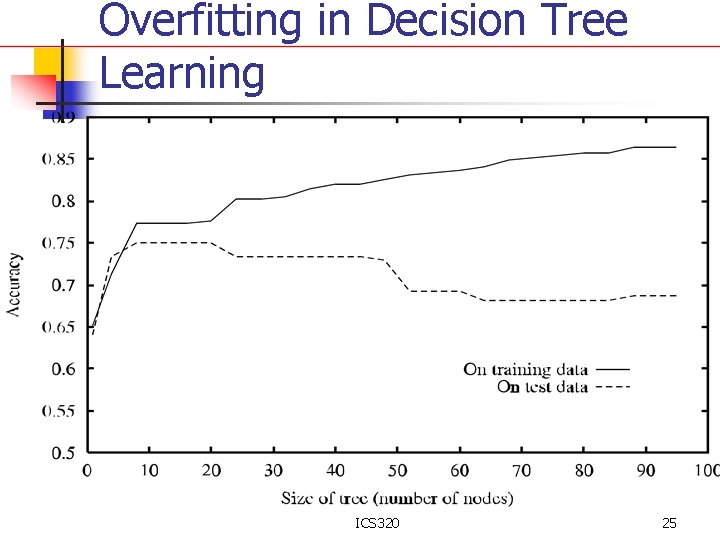

Overfitting in Decision Tree Learning ICS 320 25

Avoid Overfitting How can we avoid overfitting? n Stop growing when data split not statistically significant n Grow full tree then post-prune n Minimum description length (MDL): Minimize: size(tree) + size(misclassifications(tree)) ICS 320 26

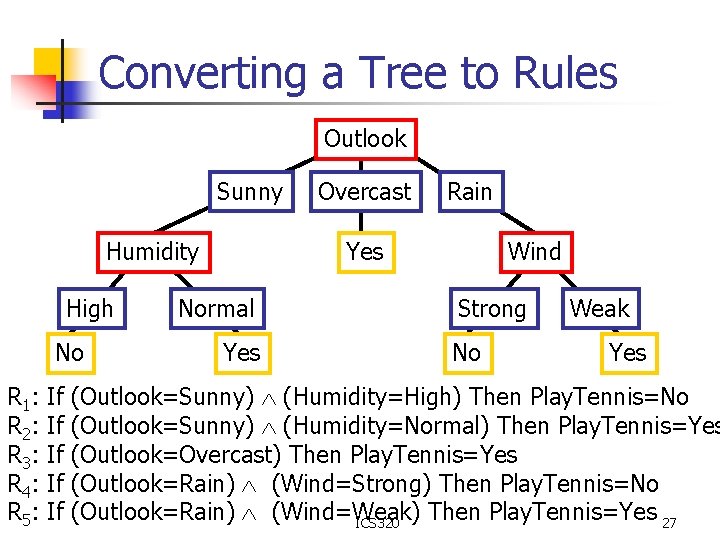

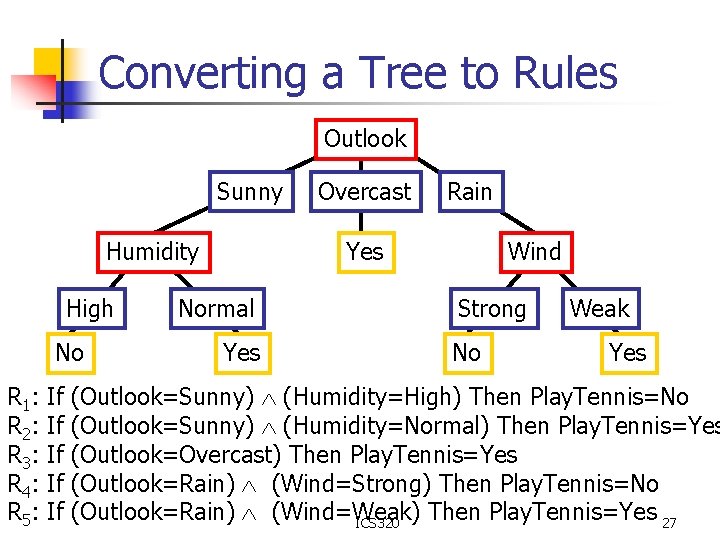

Converting a Tree to Rules Outlook Sunny Humidity High No R 1: R 2: R 3: R 4: R 5: If If If Overcast Rain Yes Normal Yes Wind Strong No Weak Yes (Outlook=Sunny) (Humidity=High) Then Play. Tennis=No (Outlook=Sunny) (Humidity=Normal) Then Play. Tennis=Yes (Outlook=Overcast) Then Play. Tennis=Yes (Outlook=Rain) (Wind=Strong) Then Play. Tennis=No (Outlook=Rain) (Wind=Weak) Then Play. Tennis=Yes 27 ICS 320

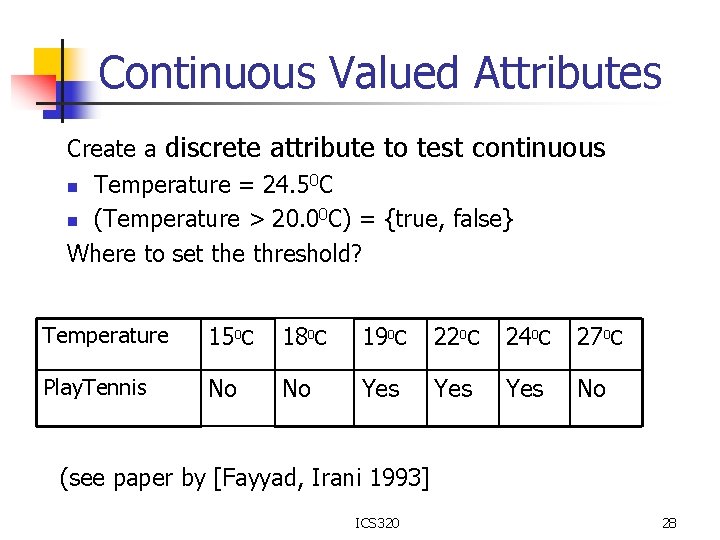

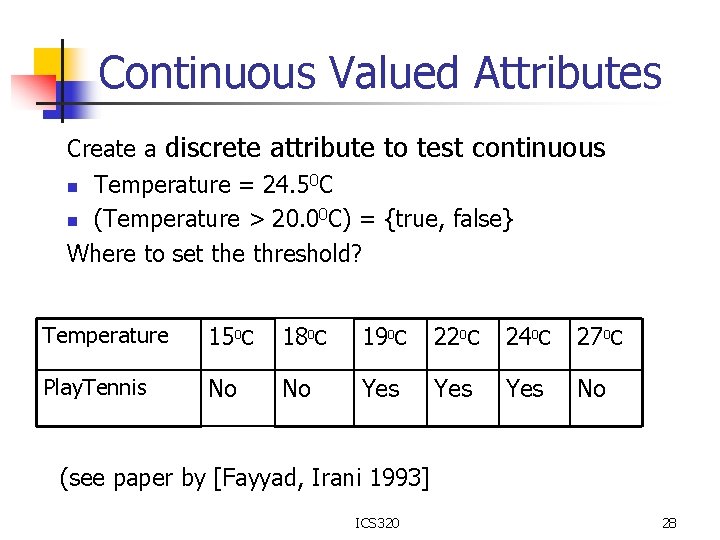

Continuous Valued Attributes Create a discrete attribute to test continuous n Temperature = 24. 50 C n (Temperature > 20. 00 C) = {true, false} Where to set the threshold? Temperature 150 C 180 C 190 C 220 C 240 C 270 C Play. Tennis No No Yes Yes No (see paper by [Fayyad, Irani 1993] ICS 320 28

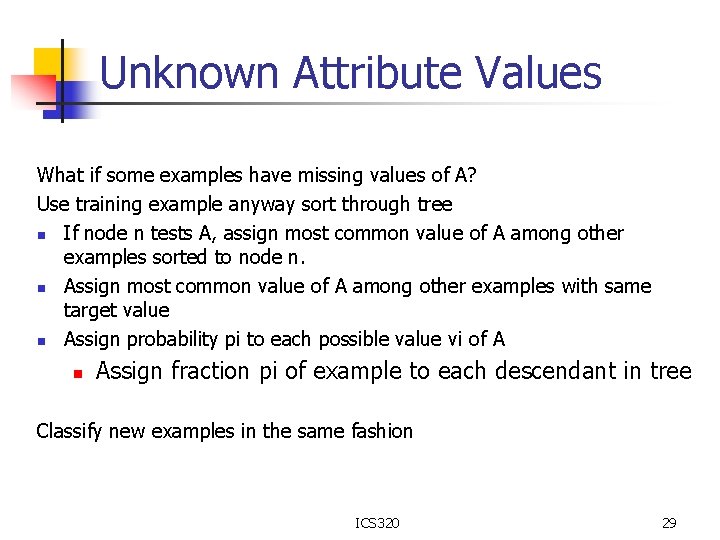

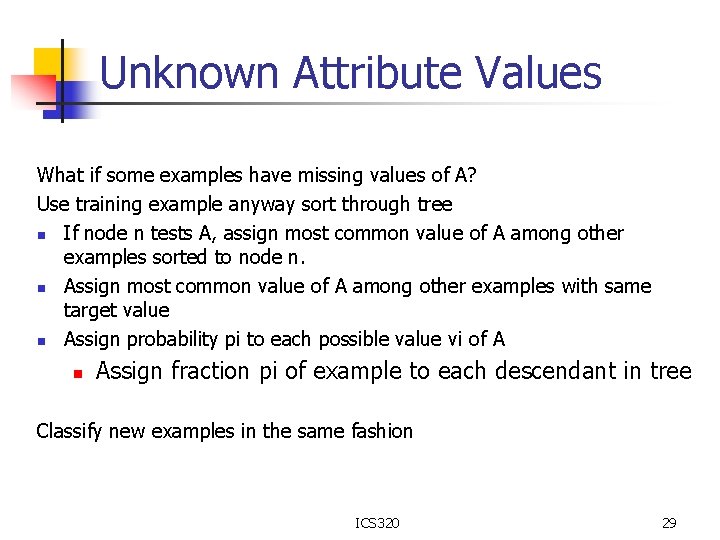

Unknown Attribute Values What if some examples have missing values of A? Use training example anyway sort through tree n If node n tests A, assign most common value of A among other examples sorted to node n. n Assign most common value of A among other examples with same target value n Assign probability pi to each possible value vi of A n Assign fraction pi of example to each descendant in tree Classify new examples in the same fashion ICS 320 29