
Parallelizing Spacetime Discontinuous Galerkin Methods

Jonathan Booth
University of Illinois at Urbana/Champaign

In conjunction with: L. Kale, R. Haber, S. Thite, J. Palaniappan

This research was made possible by NSF grant DMR 01-21695.

http://charm.cs.uiuc.edu

Parallel Programming Lab

• Led by Professor Laxmikant Kale
• Application-oriented
  – Research is driven by real applications and their needs
    • NAMD
    • CSAR Rocket Simulation (Roc*)
    • Spacetime Discontinuous Galerkin
    • Petaflops Performance Prediction (Blue Gene)
  – Focus on scalable performance for real applications

Charm++ Overview

• In development for roughly ten years
• Based on C++
• Runs on many platforms
  – Desktops
  – Clusters
  – Supercomputers
• Overlays a C layer called Converse
  – Allows multiple languages to work together

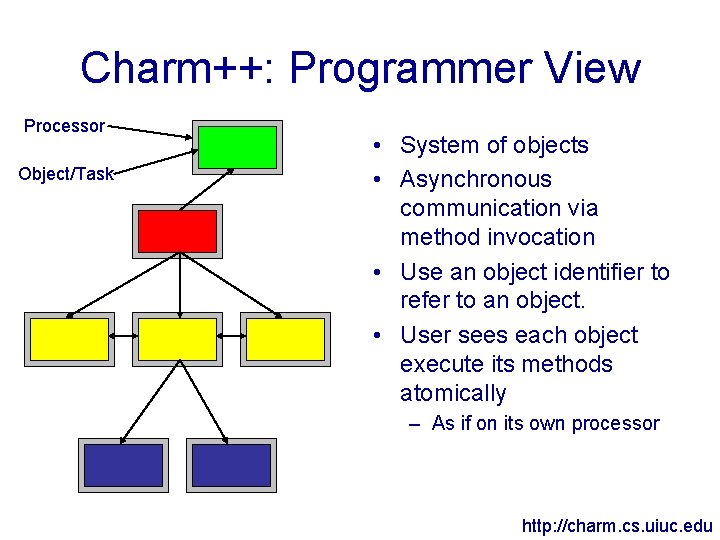

Charm++: Programmer View

• System of objects
• Asynchronous communication via method invocation
• Use an object identifier to refer to an object
• User sees each object execute its methods atomically
  – As if on its own processor

(Figure legend: Processor, Object/Task)

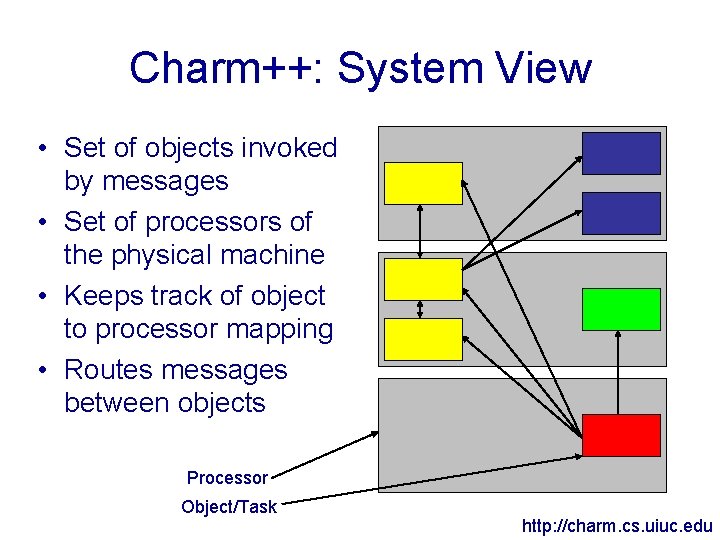

Charm++: System View

• Set of objects invoked by messages
• Set of processors of the physical machine
• Keeps track of the object-to-processor mapping
• Routes messages between objects

(Figure legend: Processor, Object/Task)
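The system view can be illustrated with a toy, single-process model. This is a minimal sketch and not the Charm++ API: `ToyRuntime`, `Counter`, and every method name here are hypothetical. It shows only the two ideas on the slide: messages are addressed to an object identifier, and a location table decides which processor currently hosts that object.

```python
from collections import deque

class ToyRuntime:
    """Toy stand-in for a message-driven runtime (hypothetical, not Charm++)."""

    def __init__(self, num_processors):
        self.num_processors = num_processors
        self.location = {}    # object id -> processor index
        self.objects = {}     # object id -> object instance
        self.queue = deque()  # pending (object id, method name, args)

    def register(self, obj_id, obj, processor):
        self.objects[obj_id] = obj
        self.location[obj_id] = processor

    def send(self, obj_id, method, *args):
        # Asynchronous: the caller only enqueues a message and returns.
        self.queue.append((obj_id, method, args))

    def migrate(self, obj_id, new_processor):
        # Only the table changes; senders keep using the same object id.
        self.location[obj_id] = new_processor

    def run(self):
        log = []
        while self.queue:
            obj_id, method, args = self.queue.popleft()
            processor = self.location[obj_id]  # route by current location
            getattr(self.objects[obj_id], method)(*args)
            log.append((obj_id, method, processor))
        return log

class Counter:
    def __init__(self):
        self.total = 0

    def add(self, amount):
        self.total += amount

runtime = ToyRuntime(num_processors=2)
counter = Counter()
runtime.register("c0", counter, processor=0)
runtime.send("c0", "add", 5)
first = runtime.run()
runtime.migrate("c0", 1)
runtime.send("c0", "add", 7)
second = runtime.run()
```

The sender's code is identical before and after `migrate`; only the routing entry changes.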

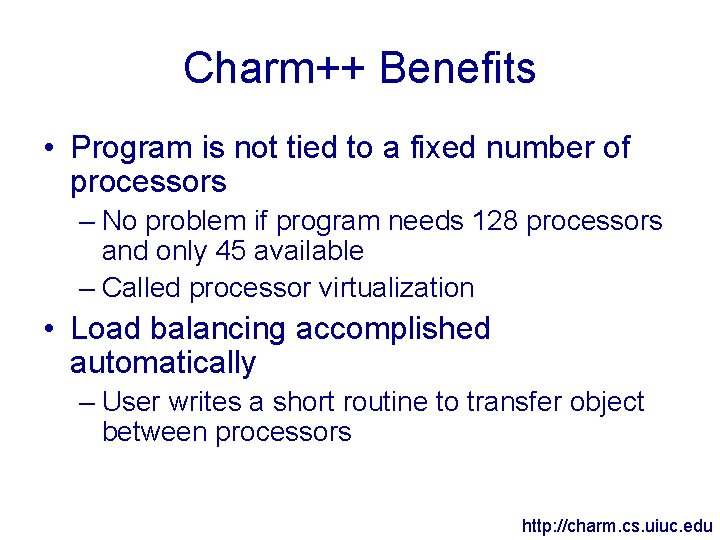

Charm++ Benefits

• Program is not tied to a fixed number of processors
  – No problem if the program needs 128 processors and only 45 are available
  – Called processor virtualization
• Load balancing is accomplished automatically
  – User writes a short routine to transfer an object between processors
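As a rough illustration of processor virtualization, the sketch below places 128 objects on 45 physical processors. The round-robin rule and the name `map_objects` are assumptions made for the example; the slides do not say how the runtime chooses its initial placement.

```python
def map_objects(num_objects, num_processors):
    """Round-robin placement of virtual processors (objects) onto physical ones."""
    placement = {p: [] for p in range(num_processors)}
    for obj in range(num_objects):
        placement[obj % num_processors].append(obj)
    return placement

placement = map_objects(128, 45)
counts = [len(objs) for objs in placement.values()]
```

Every physical processor ends up hosting two or three objects, so the program written for 128 "processors" runs unchanged on 45.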

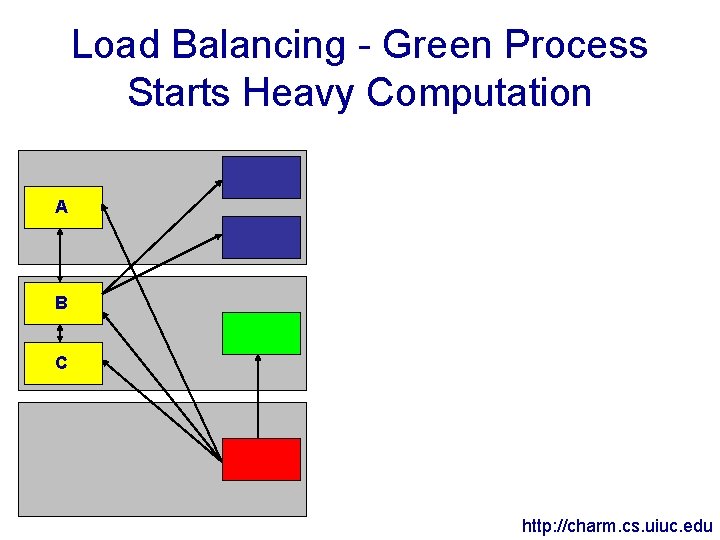

Load Balancing: Green Process Starts Heavy Computation

(Figure labels: A, B, C)

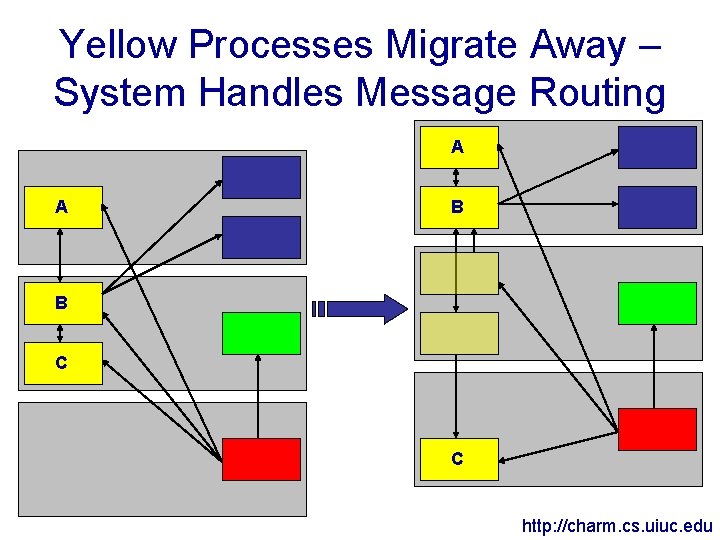

Yellow Processes Migrate Away – System Handles Message Routing

(Figure labels: A, B, C)

Load Balancing

• Load balancing isn’t solely dependent on CPU usage
• Balancers consider network usage as well
  – Can move objects to lessen network bandwidth usage
• Migrating an object to disk instead of to another processor gives checkpoint/restart and out-of-core execution
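A minimal sketch of one common balancing heuristic, assuming measured per-object CPU loads: assign the heaviest objects first, each to the currently least-loaded processor. The function name and the sample loads are hypothetical, and the sketch ignores network usage, which the balancers described above also weigh.

```python
import heapq

def greedy_balance(object_loads, num_processors):
    """Assign heaviest objects first, each to the least-loaded processor so far."""
    heap = [(0.0, p) for p in range(num_processors)]
    heapq.heapify(heap)
    assignment = {}
    for obj, load in sorted(object_loads.items(), key=lambda kv: -kv[1]):
        total, proc = heapq.heappop(heap)
        assignment[obj] = proc
        heapq.heappush(heap, (total + load, proc))
    return assignment

# One heavy object ("A") and several light ones, as in the migration pictures.
loads = {"A": 9.0, "B": 1.0, "C": 1.0, "D": 4.0, "E": 3.0}
assignment = greedy_balance(loads, 2)

per_processor = {}
for obj, proc in assignment.items():
    per_processor[proc] = per_processor.get(proc, 0.0) + loads[obj]
```

With these loads the heavy object keeps one processor to itself and the lighter objects all land on the other.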

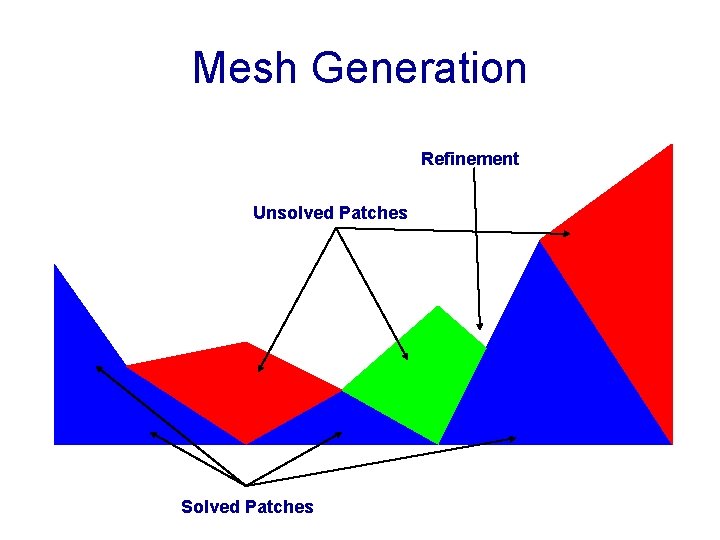

Parallel Spacetime Discontinuous Galerkin

• Mesh generation is an advancing-front algorithm
  – Adds an independent set of elements called patches to the mesh
• Spacetime methods are set up in such a way that they are easy to parallelize
  – Each patch depends only on its inflow elements
    • The cone constraint ensures there are no other dependencies
  – The amount of data per patch is small
    • Inexpensive to send a patch and its inflow elements to another processor
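The advancing front can be sketched in one space dimension. This is an illustrative model only, assuming a front stored as one time value per vertex and a single wave speed; the function names are hypothetical and the slides do not give the actual mesh generator. A vertex may advance when it is a local minimum of the front, and the cone constraint limits how far it can rise above each neighbor.

```python
def pitchable_vertices(times):
    """Local minima of the front (ties allowed), with no two adjacent."""
    chosen = []
    n = len(times)
    for i in range(n):
        left_ok = i == 0 or times[i] <= times[i - 1]
        right_ok = i == n - 1 or times[i] <= times[i + 1]
        if left_ok and right_ok and (not chosen or chosen[-1] != i - 1):
            chosen.append(i)
    return chosen

def pitch(times, xs, i, wave_speed):
    """New time for vertex i, limited by the cone constraint on both neighbors."""
    limits = []
    if i > 0:
        limits.append(times[i - 1] + (xs[i] - xs[i - 1]) / wave_speed)
    if i < len(xs) - 1:
        limits.append(times[i + 1] + (xs[i + 1] - xs[i]) / wave_speed)
    return min(limits)

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
times = [0.0] * 5
front = pitchable_vertices(times)
new_times = list(times)
for i in front:
    # Each pitch reads only neighbor times, which no other pitch in this
    # set modifies, so the patches can be solved independently.
    new_times[i] = pitch(times, xs, i, wave_speed=1.0)
```

Because no two chosen vertices are adjacent, the patches in one set share no unsolved data, which is what makes shipping them to different processors cheap.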

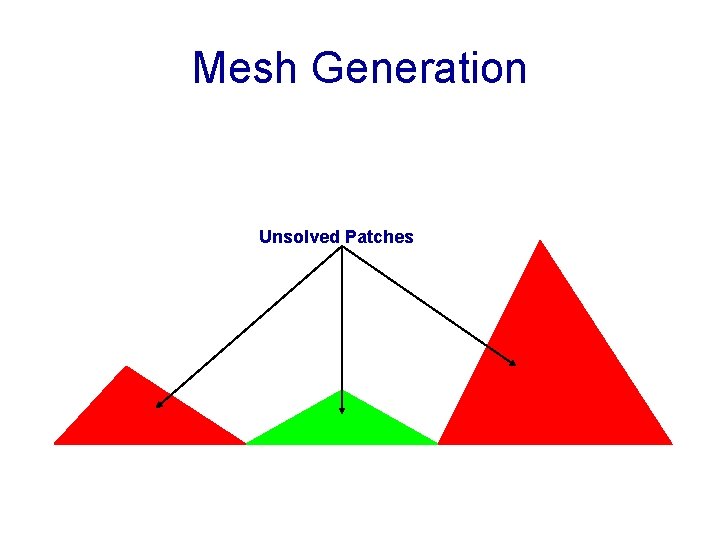

Mesh Generation

(Figure legend: Unsolved Patches)

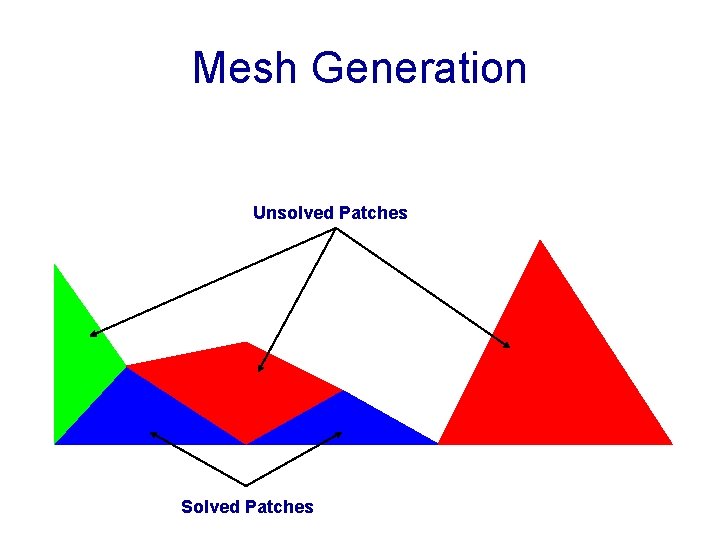

Mesh Generation

(Figure legend: Unsolved Patches, Solved Patches)

Mesh Generation: Refinement

(Figure legend: Unsolved Patches, Solved Patches)

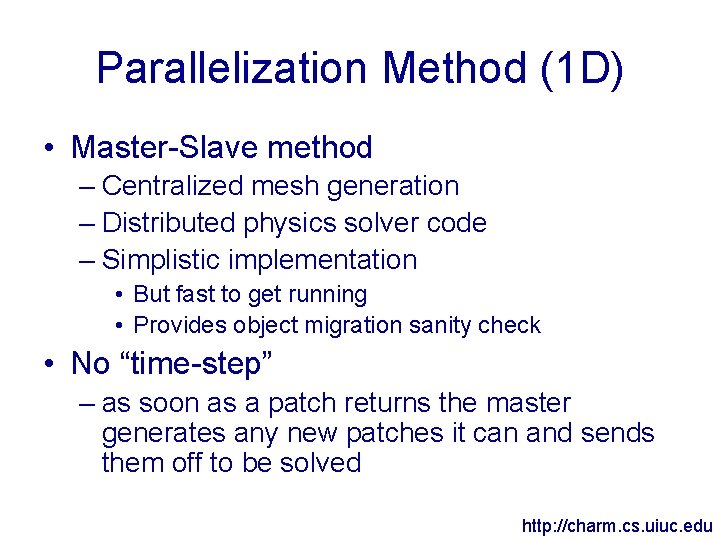

Parallelization Method (1D)

• Master-slave method
  – Centralized mesh generation
  – Distributed physics solver code
  – Simplistic implementation
    • But fast to get running
    • Provides an object migration sanity check
• No “time-step”: as soon as a patch returns, the master generates any new patches it can and sends them off to be solved
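The "no time-step" control flow can be sketched with a thread pool standing in for the slave processors. This is an assumption-laden illustration, not the project's code: `solve_patch`, `run_master`, and the dependency map are hypothetical, and the mesh generator is reduced to a table of which patches each patch needs as inflow.

```python
from concurrent.futures import ThreadPoolExecutor, FIRST_COMPLETED, wait

def solve_patch(patch_id):
    """Placeholder for the physics solve a worker would run on one patch."""
    return patch_id

def run_master(dependencies, num_workers):
    """dependencies maps each patch id to the set of patch ids it needs as inflow."""
    remaining = {p: set(deps) for p, deps in dependencies.items()}
    solved_order = []
    in_flight = {}
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        def dispatch_ready():
            # Send off every patch whose inflow patches are all solved.
            for patch in [p for p, deps in remaining.items() if not deps]:
                del remaining[patch]
                in_flight[pool.submit(solve_patch, patch)] = patch

        dispatch_ready()
        while in_flight:
            # React as soon as any patch returns; there is no global barrier.
            done, _ = wait(list(in_flight), return_when=FIRST_COMPLETED)
            for future in done:
                patch = in_flight.pop(future)
                solved_order.append(future.result())
                for deps in remaining.values():
                    deps.discard(patch)
            dispatch_ready()
    return solved_order

order = run_master({"a": set(), "b": set(), "c": {"a", "b"}, "d": {"c"}}, 2)
```

Patches `a` and `b` may finish in either order, but `c` is only dispatched once both have returned, and `d` only after `c`.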

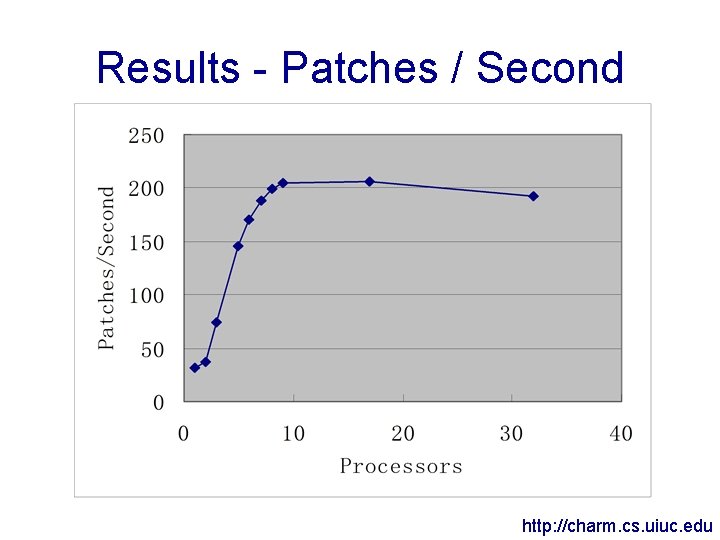

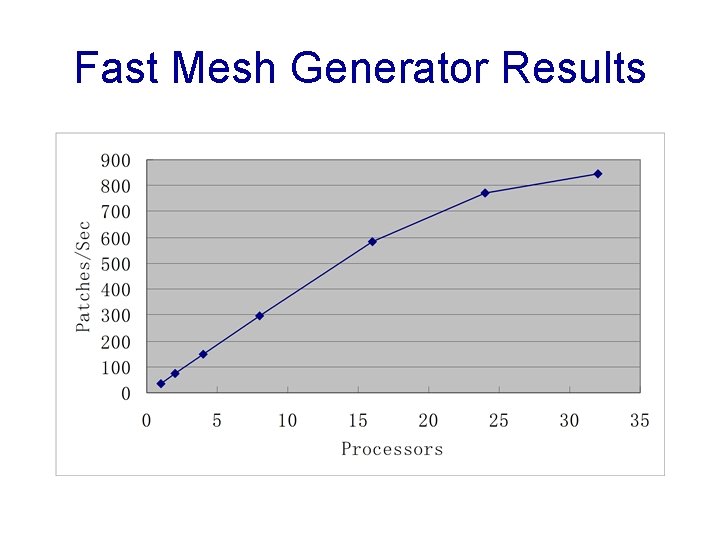

Results: Patches / Second

Scaling Problems

• Speedup is ideal up to 4 slave processors
• After 4 slaves, diminishing speedup occurs
• Possible sources:
  – Network bandwidth overload
  – Charm++ system overhead (grainsize control)
  – Mesh generator overload
• Performance does not degrade
  – Adding processors does not slow the computation down

Network Bandwidth

• Sending a patch both ways takes 2048 bytes (a very conservative estimate)
• Can compute 36 patches/(second*CPU)
• Each CPU needs 72 kbytes/second
• 100 Mbit Ethernet provides about 10 Mbyte/sec
• Network can support ~130 CPUs
  – So the bottleneck is not a lack of network bandwidth
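The bandwidth estimate can be reproduced directly from the slide's figures. The only assumption added here is that 10 Mbyte/sec means 10,000,000 bytes per second; the constant names are illustrative.

```python
PATCH_BYTES = 2048                  # round-trip size per patch, from the slide
PATCHES_PER_SECOND_PER_CPU = 36     # solver rate, from the slide
NETWORK_BYTES_PER_SECOND = 10 * 1000 * 1000  # assumed decimal megabytes

bytes_per_second_per_cpu = PATCH_BYTES * PATCHES_PER_SECOND_PER_CPU
max_cpus = NETWORK_BYTES_PER_SECOND // bytes_per_second_per_cpu
```

This gives 73,728 bytes/second per CPU (72 kbytes) and room for about 135 CPUs, in line with the ~130 quoted above and far beyond the point where speedup flattens.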

Charm++ System Overhead (Grainsize Control)

• Grainsize is a measure of the smallest unit of work
• Too small and overhead dominates
  – Network latency overhead
  – Object creation overhead
• Each patch takes 1.7 ms to set up the connection to send (both ways)
• Can send ~550 patches/sec to remote processors
  – Again, higher than the observed patches/second rate
• Grainsize can be increased by sending multiple patches at once
  – Speeds up the computation, but speedup still flattens out after 8 processors
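A back-of-the-envelope model of the overhead bound, assuming the 1.7 ms setup cost is paid once per message and does not depend on payload size (an assumption the slides do not state). `overhead_limited_rate` is a hypothetical helper.

```python
SETUP_SECONDS_PER_MESSAGE = 1.7e-3  # per-message setup cost, from the slide

def overhead_limited_rate(patches_per_message):
    """Upper bound on patches/second if only message setup cost mattered."""
    return patches_per_message / SETUP_SECONDS_PER_MESSAGE

single = overhead_limited_rate(1)   # one patch per message
batched = overhead_limited_rate(4)  # four patches share one setup cost
```

A plain reciprocal gives roughly 590 patches/second for one patch per message, the same order as the ~550 on the slide. Under this model, batching four patches per message raises the overhead-limited ceiling fourfold, so message setup alone does not explain the flattening.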

Mesh Generation

• With 0 slave processors, 31 ms/patch
• With 1 slave processor, 27 ms/patch
• Geometry code takes 4 ms to generate a patch
  – Mesh generator needs a bit more time due to Charm++ message-sending overhead
• Leads to fewer than 250 patches/second
• Can’t trivially speed this up
  – Would have to parallelize mesh generation
  – Parallel mesh generation would also lighten the network load if the mesh were fully distributed to the slave nodes
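The master-side bound follows from the 4 ms geometry cost. The sketch below combines it with the 36 patches/(second*CPU) solver rate quoted in the bandwidth estimate; the saturation model (`predicted_rate`) is a simplifying assumption that ignores messaging overhead on the master.

```python
GEOMETRY_SECONDS_PER_PATCH = 4e-3        # from the slide
SOLVER_PATCHES_PER_SECOND_PER_CPU = 36   # from the bandwidth estimate

master_limit = 1.0 / GEOMETRY_SECONDS_PER_PATCH  # patches/second ceiling
saturating_workers = master_limit / SOLVER_PATCHES_PER_SECOND_PER_CPU

def predicted_rate(num_workers):
    """Throughput if workers scale linearly until the master saturates."""
    return min(num_workers * SOLVER_PATCHES_PER_SECOND_PER_CPU, master_limit)
```

Under these assumptions the single mesh generator saturates at about 250 patches/second, which roughly seven solver CPUs can consume. Any extra time the master spends on message sending lowers that ceiling further.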

Testing the Mesh Generator Bottleneck

• Does speeding up the mesh generator give better results?
• Leaves the question of how to speed up the mesh generator
  – The cluster used is a P3 Xeon 500 MHz
  – So run the mesh generator on something faster (a P4 2.8 GHz)
  – Everything is still on the 100 Mbit network

Fast Mesh Generator Results

Future Directions

• Parallelize geometry/mesh generation
  – Easy to do in theory
  – More complex in practice with refinement and coarsening
  – Lessens network bandwidth consumption
    • Only have to send border elements of all meshes
    • Compared to all elements sent right now
  – Better cache performance

More Future Directions

• Send only necessary data
  – Currently send everything, needed or not
• Use migration to balance load rather than slaves
  – Means we’ll also get checkpoint/restart and out-of-core execution for free
  – Also means we can load balance away some of the network communication
• Integrate 2D mesh generation/physics code
  – Nothing in the parallel code knows the dimensionality
