
NLP and ML in Scala with Breeze
David Hall, UC Berkeley
9/18/2012
dlwh@cs.berkeley.edu

What Is Breeze?

• Dense Vectors, Matrices, Sparse Vectors, Counters, Decompositions, Graphing, Numerics
• Stemming, Segmentation, Part of Speech Tagging, Parsing (Soon)
• Nonlinear Optimization, Logistic Regression, SVMs, Probability Distributions
• Breeze = Scalala + ScalaNLP/Core

What are Breeze’s goals?
• Build a powerful library that is as flexible as Matlab but still well suited to building large-scale software projects.
• Build a community of machine learning and NLP practitioners who provide building blocks for both research and industrial code.

This talk
• Quick overview of Scala
• Tour of some of the highlights:
  – Linear Algebra
  – Optimization
  – Machine Learning
  – Some basic NLP
• A simple sentiment classifier

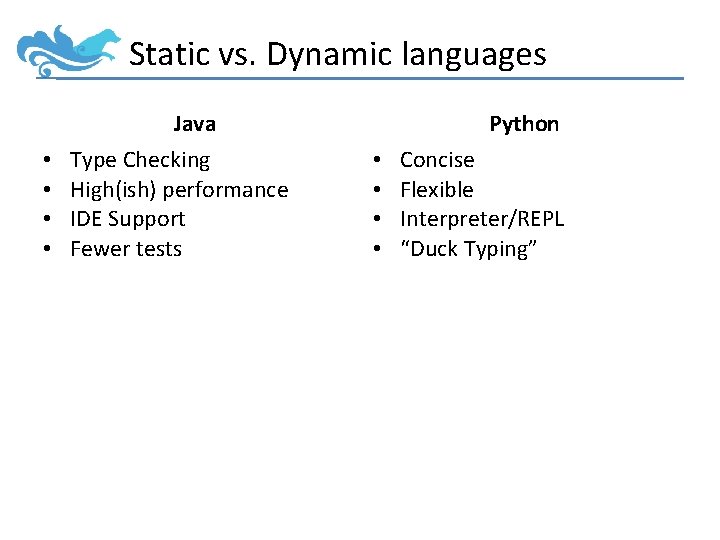

Static vs. Dynamic languages

Java
• Type checking
• High(ish) performance
• IDE support
• Fewer tests

Python
• Concise
• Flexible
• Interpreter/REPL
• “Duck Typing”

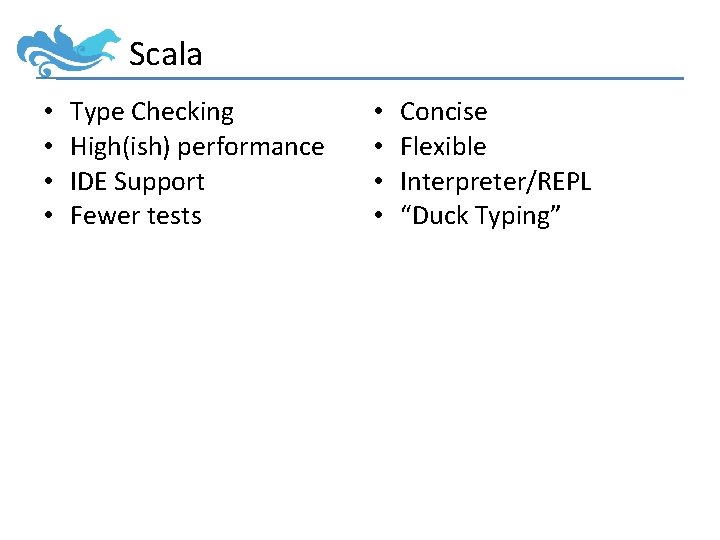

Scala
• Type checking
• High(ish) performance
• IDE support
• Fewer tests
• Concise
• Flexible
• Interpreter/REPL
• “Duck Typing”

= Concise

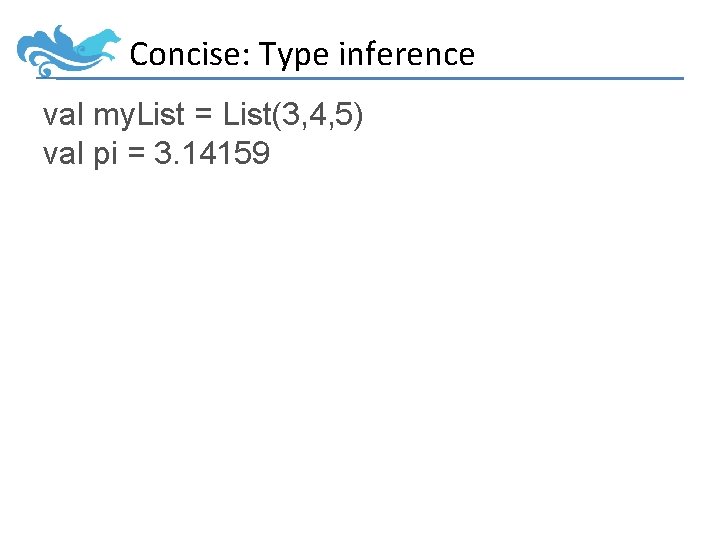

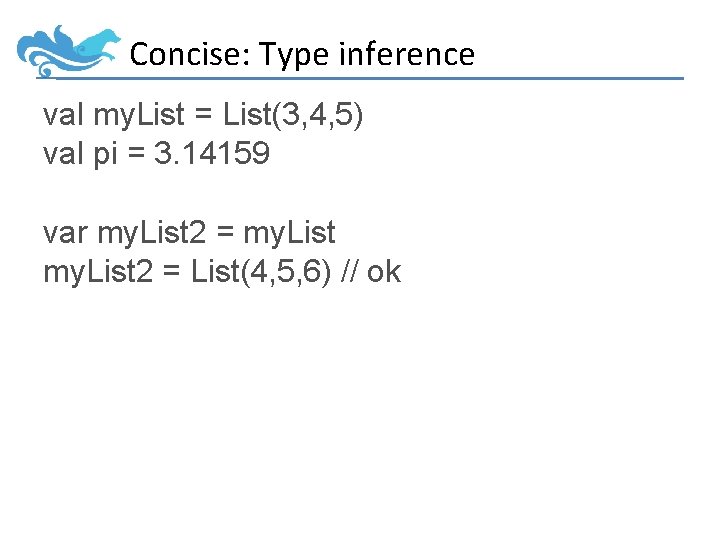


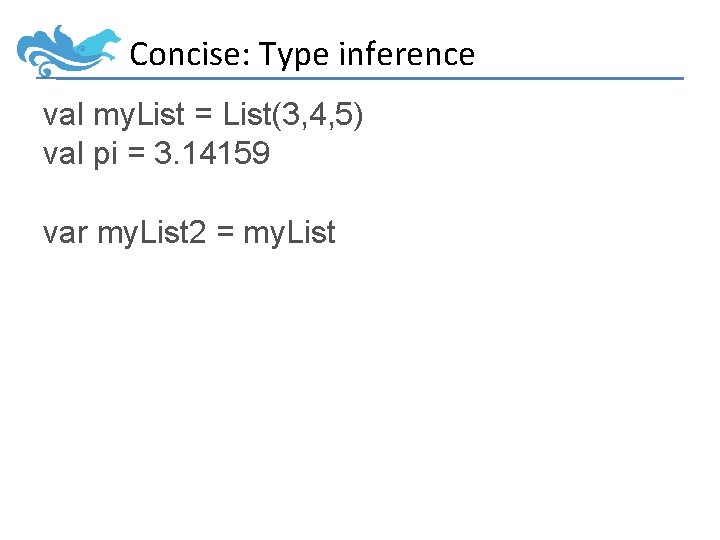


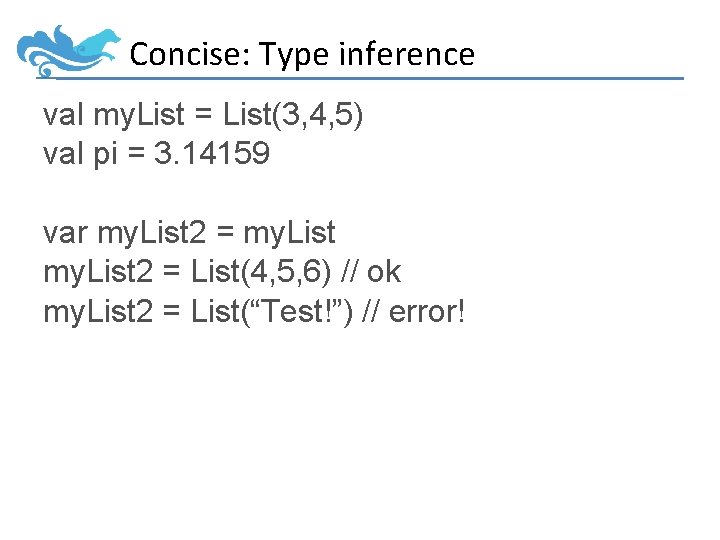


Concise: Type inference

    val myList = List(3, 4, 5)
    val pi = 3.14159
    var myList2 = List(4, 5, 6) // ok
    myList2 = List("Test!")     // error!

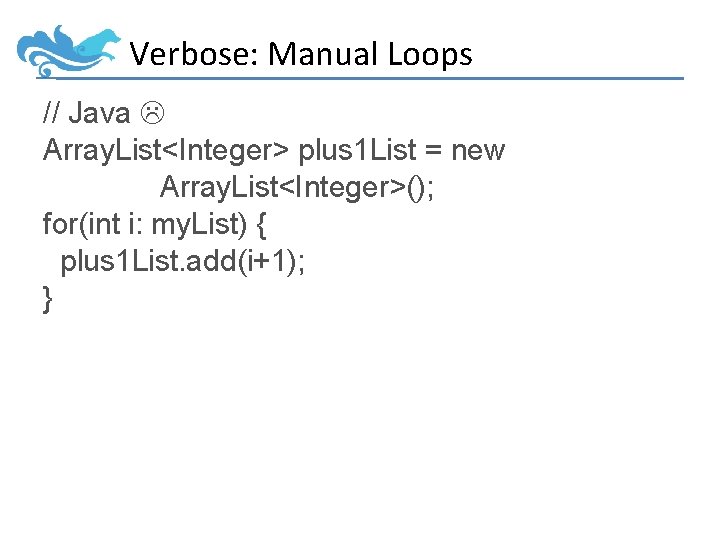

Verbose: Manual Loops

    // Java
    ArrayList<Integer> plus1List = new ArrayList<Integer>();
    for (int i : myList) {
        plus1List.add(i + 1);
    }

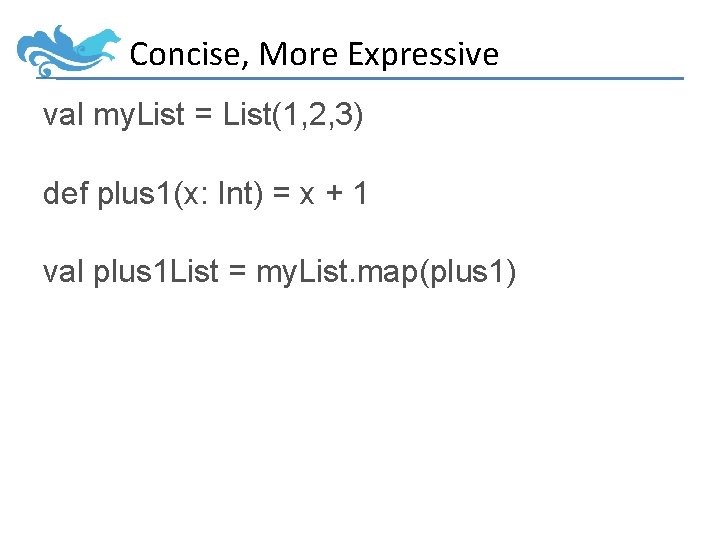

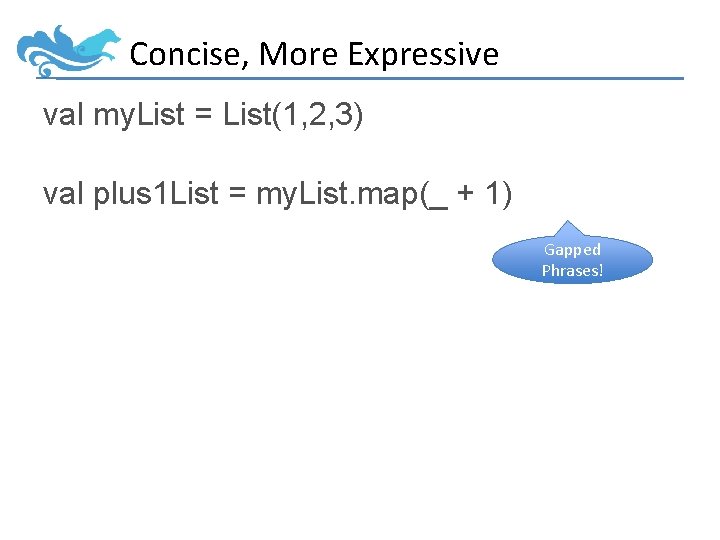

Concise, More Expressive

    val myList = List(1, 2, 3)
    def plus1(x: Int) = x + 1
    val plus1List = myList.map(plus1)

Concise, More Expressive

    val myList = List(1, 2, 3)
    val plus1List = myList.map(_ + 1)

Gapped Phrases!

Verbose, Less Expressive

    // Java
    int sum = 0;
    for (int i : myList) {
        sum += i;
    }


Concise, More Expressive

    val sum = myList.reduce(_ + _)
    val alsoSum = myList.sum

Concise, More Expressive

    val sum = myList.par.reduce(_ + _) // Parallelized!
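As a plain-Scala sketch (no Breeze needed; the object name `ReduceDemo` is only for illustration), here is how `reduce`, `sum` and `foldLeft` differ: `reduce` has no starting value and throws on an empty collection, while `sum` and `foldLeft` start from one. The sketch leaves out `.par` because, since Scala 2.13, parallel collections live in the separate scala-parallel-collections module.

```scala
object ReduceDemo {
  val myList = List(1, 2, 3)

  // reduce combines elements pairwise; it throws on an empty collection
  val sum1: Int = myList.reduce(_ + _)

  // sum uses the Numeric instance for Int and returns 0 for an empty list
  val sum2: Int = myList.sum

  // foldLeft makes the starting value explicit, so it is safe on empty input
  val sum3: Int = myList.foldLeft(0)(_ + _)

  // reduceOption returns None instead of throwing on an empty list
  val emptySum: Option[Int] = List.empty[Int].reduceOption(_ + _)
}
```

For an associative operation like `+`, all three give the same answer on non-empty input; `foldLeft` or `reduceOption` is the safer default when the input may be empty.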

Document
• Title: String
• Body: String
• Location: URL

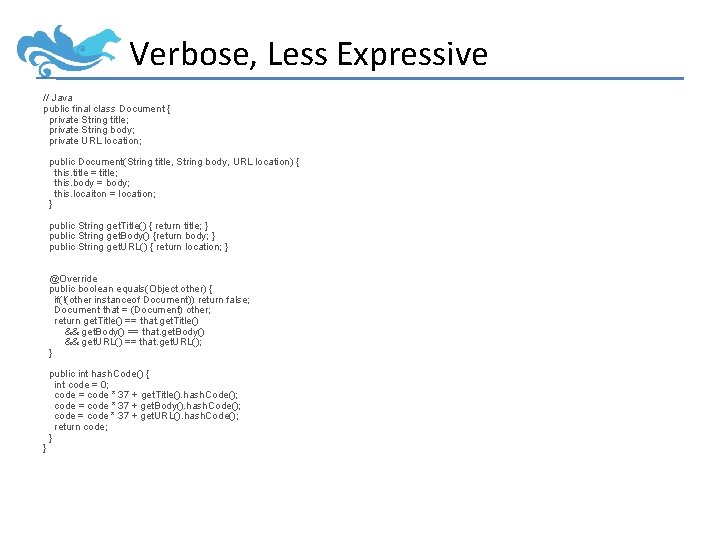

Verbose, Less Expressive

    // Java
    import java.net.URL;

    public final class Document {
        private final String title;
        private final String body;
        private final URL location;

        public Document(String title, String body, URL location) {
            this.title = title;
            this.body = body;
            this.location = location;
        }

        public String getTitle() { return title; }
        public String getBody() { return body; }
        public URL getURL() { return location; }

        @Override
        public boolean equals(Object other) {
            if (!(other instanceof Document)) return false;
            Document that = (Document) other;
            return getTitle().equals(that.getTitle())
                && getBody().equals(that.getBody())
                && getURL().equals(that.getURL());
        }

        @Override
        public int hashCode() {
            int code = 0;
            code = code * 37 + getTitle().hashCode();
            code = code * 37 + getBody().hashCode();
            code = code * 37 + getURL().hashCode();
            return code;
        }
    }

Concise, More Expressive

    // Scala
    import java.net.URL

    case class Document(title: String, body: String, url: URL)
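A quick sketch of what the one-line case class buys you: structural `==`, a consistent `hashCode`, `copy`, and pattern matching. It assumes a `java.net.URI` field instead of `URL`, because `URL.equals` and `URL.hashCode` may resolve host names over the network; `DocumentDemo` and its values are made up for illustration.

```scala
import java.net.URI

// URI instead of URL: URL's equals/hashCode can trigger host-name resolution
case class Document(title: String, body: String, url: URI)

object DocumentDemo {
  val a = Document("Breeze", "NLP and ML in Scala", new URI("http://scalanlp.org"))
  val b = Document("Breeze", "NLP and ML in Scala", new URI("http://scalanlp.org"))

  // Structural equality and a matching hashCode are generated by the compiler
  val sameValue: Boolean = a == b
  val sameHash: Boolean = a.hashCode == b.hashCode

  // copy builds a modified instance without mutating the original
  val retitled: Document = a.copy(title = "Breeze 0.2")

  // Pattern matching deconstructs the fields
  val titleOf: String = a match { case Document(t, _, _) => t }
}
```

All of this is what the Java version above spells out by hand in `equals` and `hashCode`.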

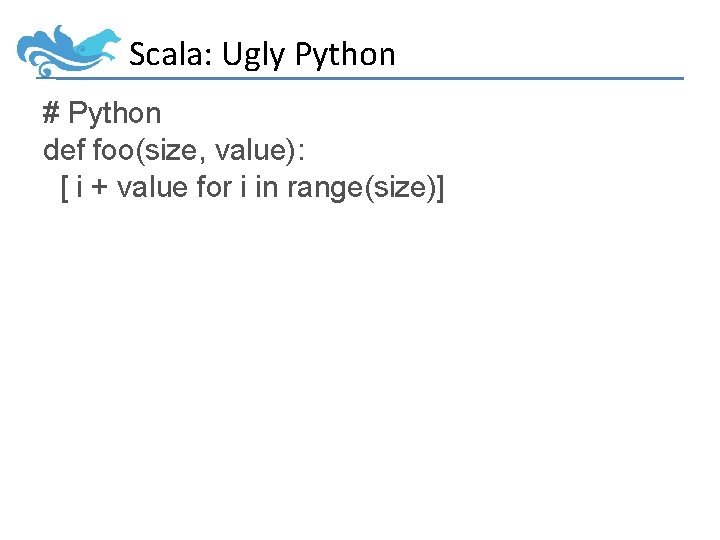

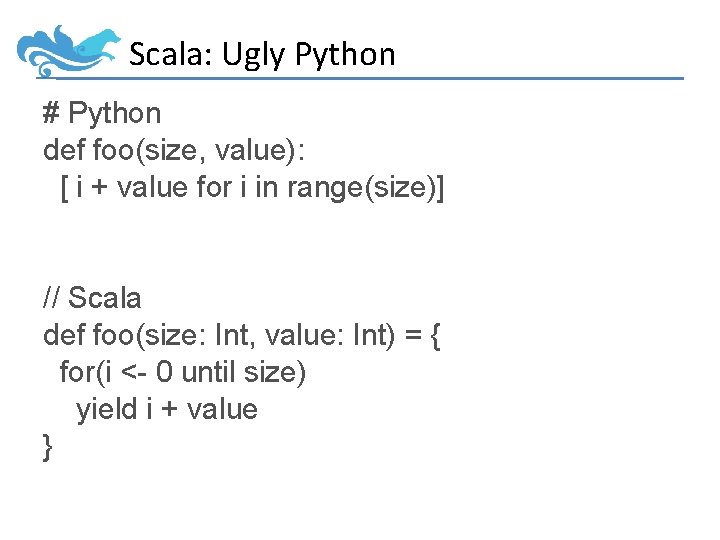


Scala: Ugly Python

    # Python
    def foo(size, value):
        return [i + value for i in range(size)]

    // Scala
    def foo(size: Int, value: Int) = {
      for (i <- 0 until size) yield i + value
    }

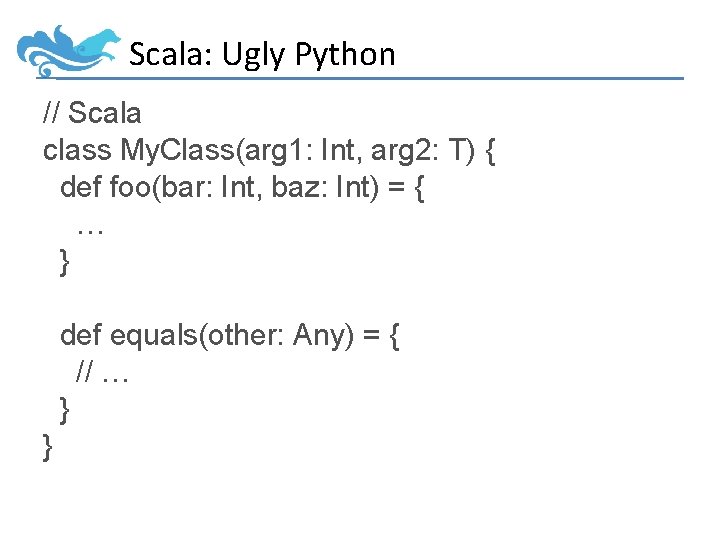

Scala: Ugly Python

    // Scala
    class MyClass[T](arg1: Int, arg2: T) {
      def foo(bar: Int, baz: Int) = { … }
      override def equals(other: Any): Boolean = {
        // …
      }
    }

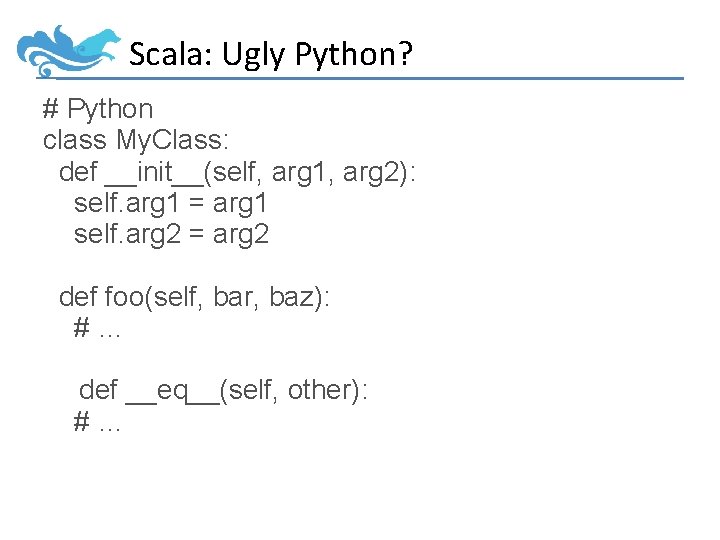

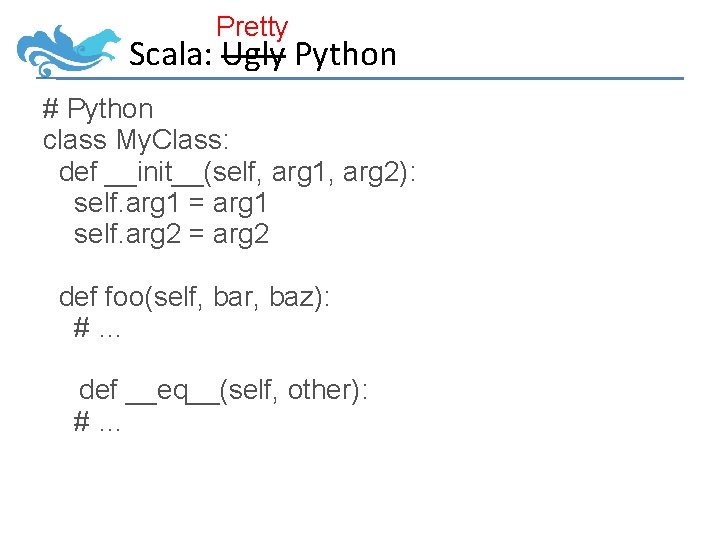


Pretty Scala: Ugly Python

    # Python
    class MyClass:
        def __init__(self, arg1, arg2):
            self.arg1 = arg1
            self.arg2 = arg2
        def foo(self, bar, baz):
            # …
        def __eq__(self, other):
            # …

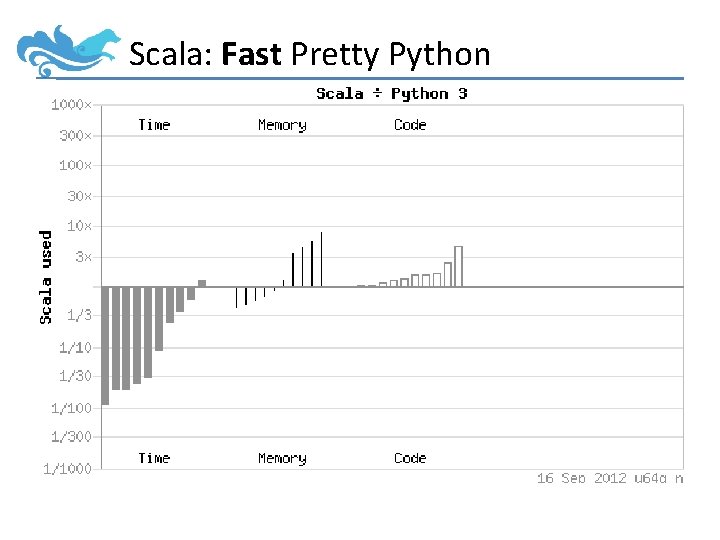

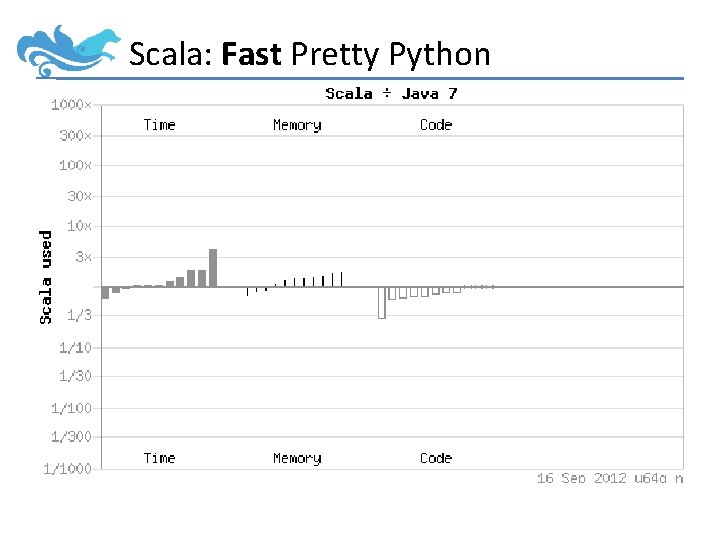

Scala: Fast Pretty Python


Scala: Performant, Concise, Fun
• Usually within 10% of Java's speed, with about half the code.
• Usually 20-30x faster than Python, with roughly the same amount of code.
• Tight inner loops can be written to run as fast as Java
  – Great for NLP’s dynamic programs
  – Typically pretty ugly, though
• Outer loops can be written idiomatically
  – aka more slowly, but prettier
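To make the inner-loop versus outer-loop contrast concrete, here is a sketch (not code from the talk) of a classic NLP dynamic program, Levenshtein edit distance, written twice: once Java-style with `while` loops over two rolling arrays, and once idiomatically with `foldLeft`/`scanLeft`. Both compute the same recurrence; the object `EditDistance` is hypothetical, and no timing claims are made for it.

```scala
object EditDistance {
  // Tight inner loop: two rolling Int arrays and while loops,
  // no closures or intermediate collections in the hot path.
  def fast(a: String, b: String): Int = {
    var prev = new Array[Int](b.length + 1)
    var cur = new Array[Int](b.length + 1)
    var j = 0
    while (j <= b.length) { prev(j) = j; j += 1 } // distance from "" to b.take(j)
    var i = 1
    while (i <= a.length) {
      cur(0) = i
      j = 1
      while (j <= b.length) {
        val cost = if (a.charAt(i - 1) == b.charAt(j - 1)) 0 else 1
        // deletion, insertion, substitution
        cur(j) = math.min(math.min(prev(j) + 1, cur(j - 1) + 1), prev(j - 1) + cost)
        j += 1
      }
      val tmp = prev; prev = cur; cur = tmp // previous row becomes the current row
      i += 1
    }
    prev(b.length)
  }

  // Idiomatic outer-loop style: the same recurrence, one row per character of a.
  def pretty(a: String, b: String): Int = {
    val firstRow = (0 to b.length).toVector
    val lastRow = a.zipWithIndex.foldLeft(firstRow) { case (prev, (ca, i)) =>
      b.zipWithIndex.scanLeft(i + 1) { case (left, (cb, j)) =>
        val cost = if (ca == cb) 0 else 1
        math.min(math.min(prev(j + 1) + 1, left + 1), prev(j) + cost)
      }.toVector
    }
    lastRow(b.length)
  }
}
```

For example, `EditDistance.fast("kitten", "sitting")` and `EditDistance.pretty("kitten", "sitting")` both give 3. The second version allocates a new `Vector` per row, which is the kind of cost the slide's "more slowly, but prettier" refers to.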

Scala: Some Downsides
• IDE support isn’t as strong as for Java.
  – Getting better all the time
• The compiler is much slower.

Learn more about Scala
https://www.coursera.org/course/progfun
Starts today!

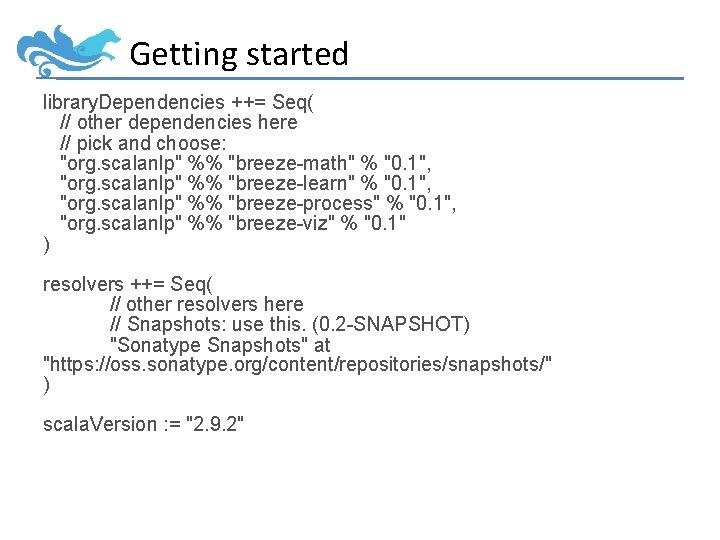

Getting started

    libraryDependencies ++= Seq(
      // other dependencies here
      // pick and choose:
      "org.scalanlp" %% "breeze-math" % "0.1",
      "org.scalanlp" %% "breeze-learn" % "0.1",
      "org.scalanlp" %% "breeze-process" % "0.1",
      "org.scalanlp" %% "breeze-viz" % "0.1"
    )

    resolvers ++= Seq(
      // other resolvers here
      // Snapshots: use this. (0.2-SNAPSHOT)
      "Sonatype Snapshots" at "https://oss.sonatype.org/content/repositories/snapshots/"
    )

    scalaVersion := "2.9.2"

Breeze-Math


Linear Algebra

    import breeze.linalg._

    val x = DenseVector.zeros[Int](5) // DenseVector(0, 0, 0, 0, 0)
    val m = DenseMatrix.zeros[Int](5, 5)
    val r = DenseMatrix.rand(5, 5)

    m.t     // transpose
    x + x   // addition
    m * x   // multiplication by vector
    m * 3   // by scalar
    m * m   // by matrix
    m :* m  // element-wise mult, Matlab .*

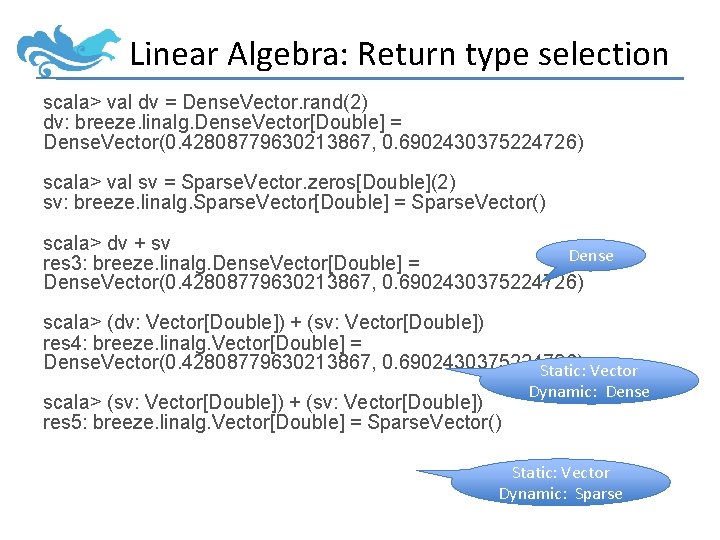

Linear Algebra: Return type selection

    scala> val dv = DenseVector.rand(2)
    dv: breeze.linalg.DenseVector[Double] = DenseVector(0.42808779630213867, 0.6902430375224726)

    scala> val sv = SparseVector.zeros[Double](2)
    sv: breeze.linalg.SparseVector[Double] = SparseVector()

    scala> dv + sv                                      // Dense
    res3: breeze.linalg.DenseVector[Double] = DenseVector(0.42808779630213867, 0.6902430375224726)

    scala> (dv: Vector[Double]) + (sv: Vector[Double])  // Static: Vector, Dynamic: Dense
    res4: breeze.linalg.Vector[Double] = DenseVector(0.42808779630213867, 0.6902430375224726)

    scala> (sv: Vector[Double]) + (sv: Vector[Double])  // Static: Vector, Dynamic: Sparse
    res5: breeze.linalg.Vector[Double] = SparseVector()

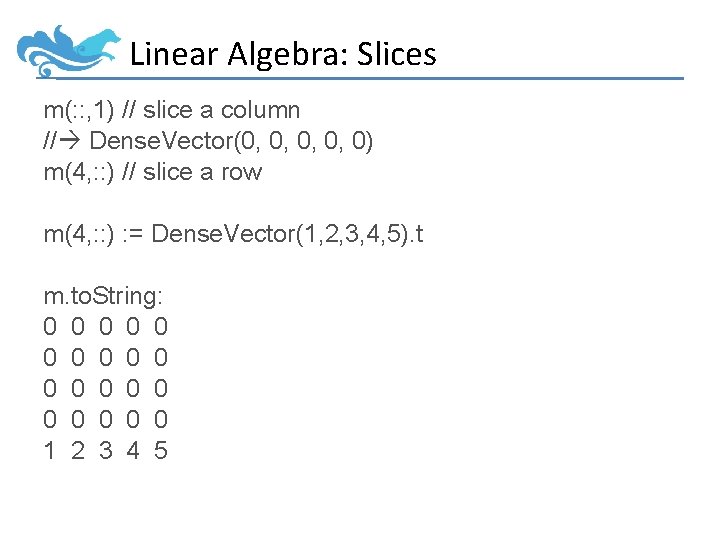

Linear Algebra: Slices

    m(::, 1)   // slice a column
    // DenseVector(0, 0, 0, 0, 0)
    m(4, ::)   // slice a row
    m(4, ::) := DenseVector(1, 2, 3, 4, 5).t
    m.toString:
    // 0 0 0 0 0
    // 0 0 0 0 0
    // 0 0 0 0 0
    // 0 0 0 0 0
    // 1 2 3 4 5

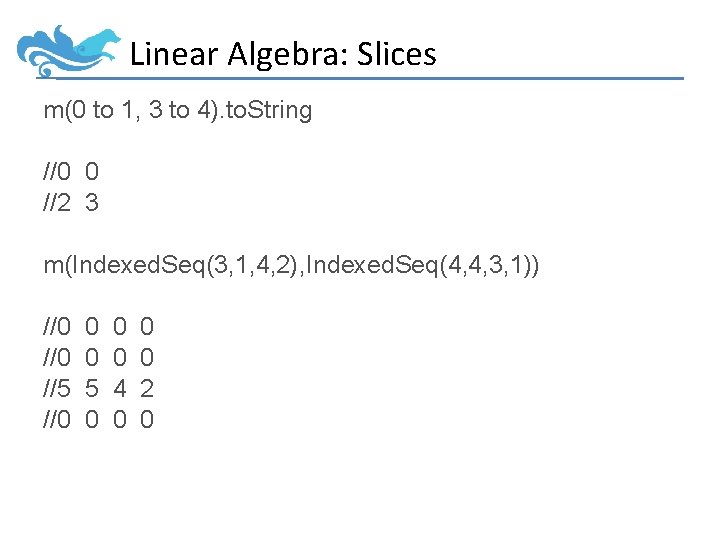

Linear Algebra: Slices

    m(0 to 1, 3 to 4).toString
    // 0 0
    // 2 3
    m(IndexedSeq(3, 1, 4, 2), IndexedSeq(4, 4, 3, 1))
    // 0
    // 5
    // 0 0 0 5 0 0 0 4 0 0 0 2 0

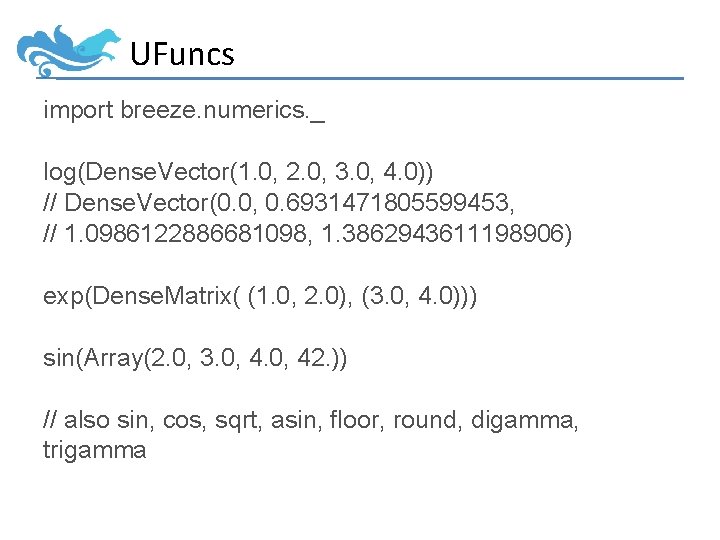

UFuncs

    import breeze.linalg._
    import breeze.numerics._

    log(DenseVector(1.0, 2.0, 3.0, 4.0))
    // DenseVector(0.0, 0.6931471805599453,
    //             1.0986122886681098, 1.3862943611198906)
    exp(DenseMatrix((1.0, 2.0), (3.0, 4.0)))
    sin(Array(2.0, 3.0, 42.0))
    // also sin, cos, sqrt, asin, floor, round, digamma, trigamma


UFuncs: Implementation

    trait UFunc[-V, +V2] {
      def apply(v: V): V2
      def apply[T, U](t: T)(implicit cmv: CanMapValues[T, V, V2, U]): U = {
        cmv.map(t, apply _)
      }
    }

    // elsewhere:
    val exp = UFunc(scala.math.exp _)


UFuncs: Implementation

    new CanMapValues[DenseVector[V], V, V2, DenseVector[V2]] {
      def map(from: DenseVector[V], fn: (V) => V2) = {
        val arr = new Array[V2](from.length)
        val d = from.data
        val stride = from.stride
        var i = 0
        var j = from.offset
        while (i < arr.length) {
          arr(i) = fn(d(j))
          i += 1
          j += stride
        }
        new DenseVector[V2](arr)
      }
    }
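The loop above reads element `i` at `data(offset + i * stride)`. A minimal plain-Scala sketch of that indexing, using a hypothetical `StridedVec` class rather than Breeze's `DenseVector`, shows how rows and columns of a column-major matrix can be views over one shared array:

```scala
import scala.reflect.ClassTag

// Hypothetical stand-in for a dense vector view: element i lives at
// data(offset + i * stride) in a (possibly shared) backing array.
final class StridedVec[V](val data: Array[V], val offset: Int, val stride: Int, val length: Int) {
  def apply(i: Int): V = data(offset + i * stride)

  // Same loop shape as the CanMapValues implementation:
  // i walks the output, j walks the strided input.
  def map[V2: ClassTag](fn: V => V2): Array[V2] = {
    val arr = new Array[V2](length)
    var i = 0
    var j = offset
    while (i < length) {
      arr(i) = fn(data(j))
      i += 1
      j += stride
    }
    arr
  }
}
```

For a 3×2 column-major matrix stored as `Array(1.0, 2.0, 3.0, 4.0, 5.0, 6.0)`, column 1 is `new StridedVec(data, 3, 1, 3)` (values 4, 5, 6) and row 2 is `new StridedVec(data, 2, 3, 2)` (values 3, 6); only the offset and stride differ, so slicing copies nothing.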

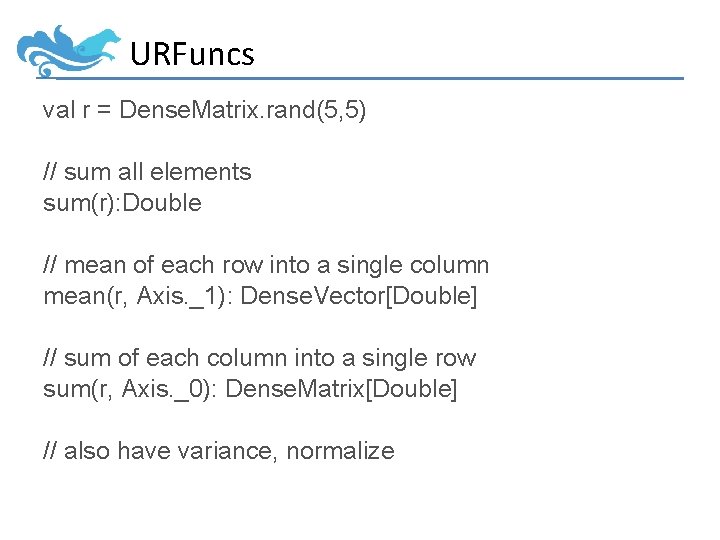

URFuncs

    val r = DenseMatrix.rand(5, 5)
    // sum all elements
    sum(r): Double
    // mean of each row into a single column
    mean(r, Axis._1): DenseVector[Double]
    // sum of each column into a single row
    sum(r, Axis._0): DenseMatrix[Double]
    // also have variance, normalize
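A plain-Scala sketch of what these axis reductions compute, assuming the column-major layout Breeze's `DenseMatrix` uses (entry `(i, j)` stored at `data(i + j * rows)`). The `ColMajor` class and its method names are hypothetical, and it returns plain arrays rather than Breeze vectors or matrices:

```scala
// Hypothetical column-major matrix: entry (i, j) is data(i + j * rows).
final class ColMajor(val rows: Int, val cols: Int, val data: Array[Double]) {
  require(data.length == rows * cols, "data must hold rows * cols entries")

  def apply(i: Int, j: Int): Double = data(i + j * rows)

  // Reduce over every element (cf. sum(r))
  def sumAll: Double = data.sum

  // Collapse the row axis: one sum per column (cf. sum(r, Axis._0))
  def columnSums: Array[Double] =
    Array.tabulate(cols)(j => (0 until rows).map(i => apply(i, j)).sum)

  // Collapse the column axis: one mean per row (cf. mean(r, Axis._1))
  def rowMeans: Array[Double] =
    Array.tabulate(rows)(i => (0 until cols).map(j => apply(i, j)).sum / cols)
}
```

For the 2×3 matrix with rows (1, 2, 3) and (4, 5, 6), stored as `Array(1.0, 4.0, 2.0, 5.0, 3.0, 6.0)`, this gives a total of 21, column sums (5, 7, 9) and row means (2, 5).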


URFuncs: the magic

    trait URFunc[A, +B] {
      def apply(cc: TraversableOnce[A]): B

      def apply[T](c: T)(implicit urable: UReduceable[T, A]): B = {
        urable(c, this)
      }

      // Optional specialized impls
      def apply(arr: Array[A]): B = apply(arr, arr.length)
      def apply(arr: Array[A], length: Int): B = apply(arr, 0, 1, length, {_ => true})
      def apply(arr: Array[A], offset: Int, stride: Int, length: Int, isUsed: Int => Boolean): B = {
        apply((0 until length).filter(isUsed).map(i => arr(offset + i * stride)))
      }
      def apply(as: A*): B = apply(as)

      // How Axis stuff works
      def apply[T2, Axis, TA, R](c: T2, axis: Axis)
                                (implicit collapse: CanCollapseAxis[T2, Axis, TA, B, R],
                                 ured: UReduceable[TA, A]): R = {
        collapse(c, axis)(ta => this.apply[TA](ta))
      }
    }


URFuncs: the magic

    trait Tensor[K, V] {
      // …
      def ureduce[A](f: URFunc[V, A]) = {
        f(this.valuesIterator)
      }
    }

    trait DenseVector[E] … {
      override def ureduce[A](f: URFunc[E, A]) = {
        if (offset == 0 && stride == 1) f(data, length)
        else f(data, offset, stride, length, {(_: Int) => true})
      }
    }

Breeze-Viz

Breeze-Viz
• VERY ALPHA API
• 2-D plotting, via JFreeChart
• import breeze.plot._

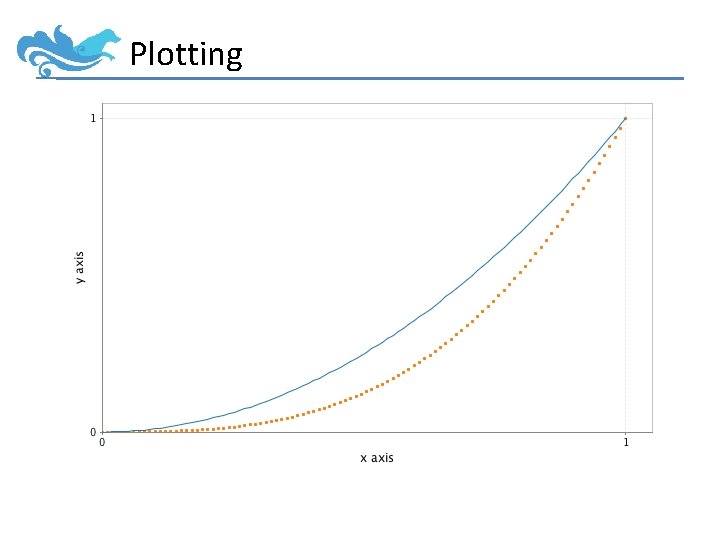

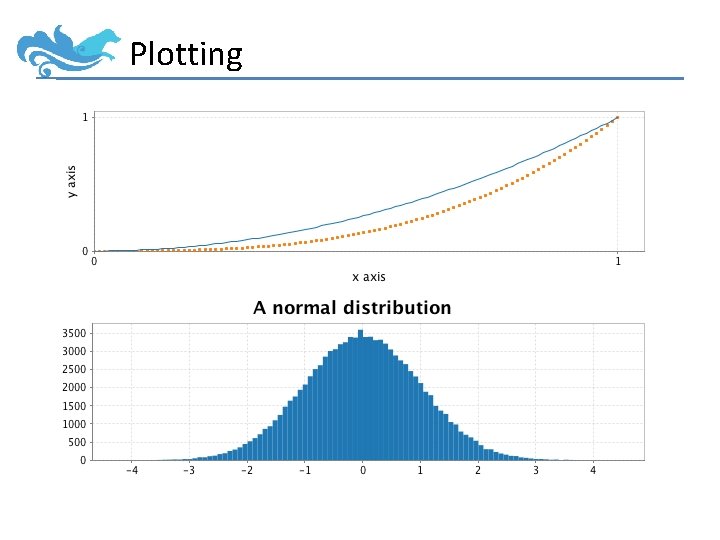

Plotting

    val f = Figure()
    val p = f.subplot(0)
    val x = linspace(0.0, 1.0)
    p += plot(x, x :^ 2.0)
    p += plot(x, x :^ 3.0, '.')
    p.xlabel = "x axis"
    p.ylabel = "y axis"
    f.saveas("lines.png") // also pdf, eps

Plotting

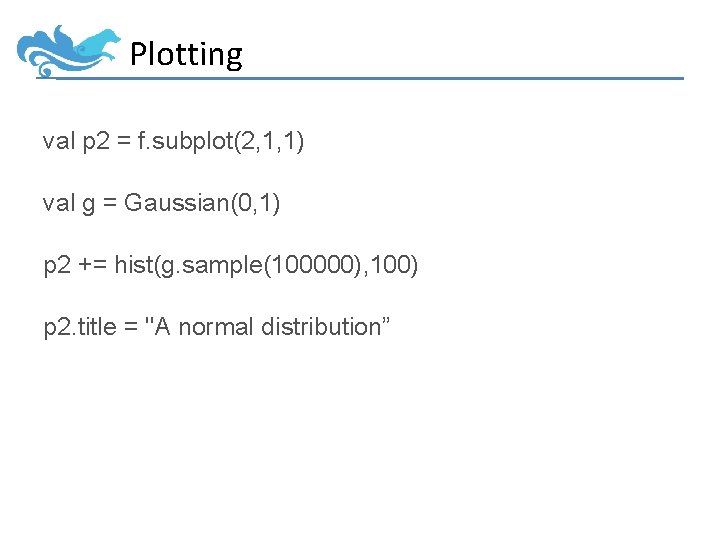

Plotting

    val p2 = f.subplot(2, 1, 1)
    val g = Gaussian(0, 1)
    p2 += hist(g.sample(100000), 100)
    p2.title = "A normal distribution"

Plotting

Breeze-Learn

Breeze-Learn
• Optimization
• Machine Learning
• Probability Distributions

Breeze-Learn
• Optimization
  – Convex optimization: LBFGS, OWLQN
  – Stochastic gradient descent: adaptive gradient descent
  – Linear program DSL, solver
  – Bipartite matching

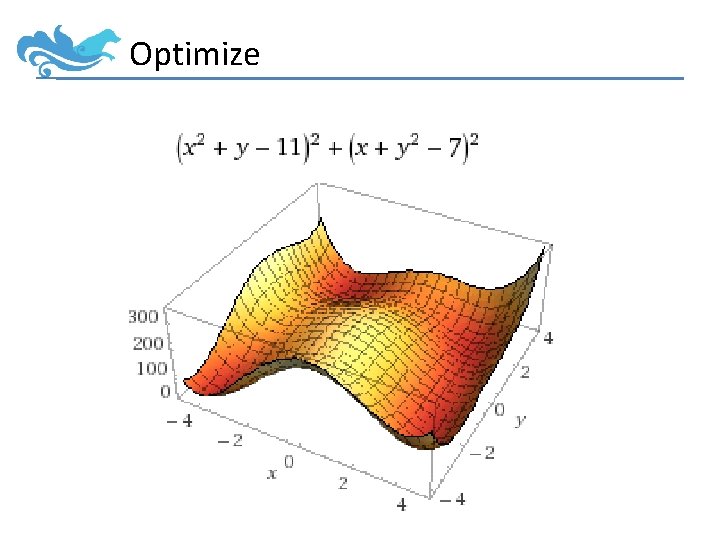

Optimize


Optimize

    trait DiffFunction[T] extends (T => Double) {
      /** Calculates both the value and the gradient at a point */
      def calculate(x: T): (Double, T)
    }


Optimize

    import breeze.linalg.{DenseVector => DV}
    import breeze.optimize.DiffFunction
    import scala.math.pow

    val df = new DiffFunction[DV[Double]] {
      def calculate(values: DV[Double]) = {
        val gradient = DV.zeros[Double](2)
        val (x, y) = (values(0), values(1))
        val value = pow(x * x + y - 11, 2) + pow(x + y * y - 7, 2)
        gradient(0) = 4 * x * (x * x + y - 11) + 2 * (x + y * y - 7)
        gradient(1) = 2 * (x * x + y - 11) + 4 * y * (x + y * y - 7)
        (value, gradient)
      }
    }
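Before handing a hand-written gradient to an optimizer it is worth checking it numerically. This sketch (plain Scala; `HimmelblauCheck` is a hypothetical name) re-implements the objective above, which is Himmelblau's function, and compares its analytic gradient with central finite differences. It also confirms that (3, 2), the point the L-BFGS run below approaches, has value 0 and zero gradient.

```scala
object HimmelblauCheck {
  // Himmelblau's function: (x^2 + y - 11)^2 + (x + y^2 - 7)^2
  def value(x: Double, y: Double): Double = {
    val a = x * x + y - 11
    val b = x + y * y - 7
    a * a + b * b
  }

  // Analytic gradient, same formulas as gradient(0) and gradient(1) in df
  def gradient(x: Double, y: Double): (Double, Double) = {
    val a = x * x + y - 11
    val b = x + y * y - 7
    (4 * x * a + 2 * b, 2 * a + 4 * y * b)
  }

  // Central finite differences: (f(p + h e_k) - f(p - h e_k)) / (2h)
  def numericGradient(x: Double, y: Double, h: Double = 1e-5): (Double, Double) = {
    val gx = (value(x + h, y) - value(x - h, y)) / (2 * h)
    val gy = (value(x, y + h) - value(x, y - h)) / (2 * h)
    (gx, gy)
  }

  // Largest absolute difference between the analytic and numeric gradients
  def maxError(x: Double, y: Double): Double = {
    val (ax, ay) = gradient(x, y)
    val (nx, ny) = numericGradient(x, y)
    math.max(math.abs(ax - nx), math.abs(ay - ny))
  }
}
```

A sign or factor error in either gradient component would show up as a large `maxError` at almost any test point.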


Optimize

    val lbfgs = new LBFGS[DenseVector[Double]]
    lbfgs.minimize(df, DenseVector.rand(2))
    // DenseVector(2.999983, 2.000046)
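The talk uses Breeze's `LBFGS`. As a library-free stand-in, here is plain steepest descent with Armijo backtracking (a simpler and usually slower method than L-BFGS, swapped in only for illustration) on the same function. The start point (0.5, 0.5) is chosen here to mimic a `DenseVector.rand(2)` draw; `HimmelblauDescent` and its tolerances are hypothetical. Himmelblau's function has four minima, so a different start can end at a different one.

```scala
object HimmelblauDescent {
  def value(x: Double, y: Double): Double = {
    val a = x * x + y - 11
    val b = x + y * y - 7
    a * a + b * b
  }

  def gradient(x: Double, y: Double): (Double, Double) = {
    val a = x * x + y - 11
    val b = x + y * y - 7
    (4 * x * a + 2 * b, 2 * a + 4 * y * b)
  }

  /** Steepest descent with Armijo backtracking; returns the final point. */
  def minimize(x0: Double, y0: Double, tol: Double = 1e-8, maxIter: Int = 10000): (Double, Double) = {
    var x = x0
    var y = y0
    var iter = 0
    var converged = false
    while (!converged && iter < maxIter) {
      val (gx, gy) = gradient(x, y)
      val g2 = gx * gx + gy * gy
      if (g2 < tol * tol) converged = true // gradient norm below tol
      else {
        val fx = value(x, y)
        var step = 1.0
        // Halve the step until it gives sufficient decrease (Armijo, c = 1e-4)
        while (value(x - step * gx, y - step * gy) > fx - 1e-4 * step * g2)
          step *= 0.5
        x -= step * gx
        y -= step * gy
        iter += 1
      }
    }
    (x, y)
  }
}
```

`HimmelblauDescent.minimize(0.5, 0.5)` ends essentially at (3, 2), the same minimum the L-BFGS output above approaches. L-BFGS typically needs far fewer iterations because it builds a curvature estimate from recent gradients instead of always stepping along the raw gradient.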



Breeze-Learn
• Classify
  – Logistic Classifier
  – SVM
  – Naïve Bayes
  – Perceptron

Breeze-Learn

    val trainingData = Array(
      Example("cat", Counter.count("fuzzy", "claws", "small")),
      Example("bear", Counter.count("fuzzy", "claws", "big")),
      Example("cat", Counter.count("claws", "medium"))
    )
    val testData = Array(
      Example("??", Counter.count("claws", "small"))
    )


Breeze-Learn

    val trainer = new LogisticClassifier.Trainer[L, Counter[T, Double]]()
    val classifier = trainer.train(trainingData)
    classifier(Counter.count("fuzzy", "small")) == "cat"
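The trainer API above belongs to Breeze. As a library-free illustration on the same toy data, here is multinomial Naive Bayes with add-one smoothing (one of the classifiers on the Classify list, swapped in for the logistic classifier because it needs no optimizer). `NaiveBayes` is a hypothetical class, not Breeze's implementation, and examples are plain `(label, words)` pairs instead of `Example`/`Counter`:

```scala
// Multinomial naive Bayes with add-one (Laplace) smoothing over word counts.
final class NaiveBayes(training: Seq[(String, Seq[String])]) {
  private val labels: Seq[String] = training.map(_._1).distinct
  private val vocab: Set[String] = training.flatMap(_._2).toSet

  // log P(label), estimated from label frequencies
  private val logPrior: Map[String, Double] =
    training.groupBy(_._1).map { case (l, exs) => l -> math.log(exs.size.toDouble / training.size) }

  // Word counts per label, and the total number of word tokens per label
  private val counts: Map[String, Map[String, Int]] =
    training.groupBy(_._1).map { case (l, exs) =>
      l -> exs.flatMap(_._2).groupBy(identity).map { case (w, ws) => w -> ws.size }
    }
  private val totals: Map[String, Int] = counts.map { case (l, c) => l -> c.values.sum }

  // log P(word | label), smoothed over the training vocabulary
  private def logLikelihood(word: String, label: String): Double =
    math.log((counts(label).getOrElse(word, 0) + 1.0) / (totals(label) + vocab.size))

  def score(words: Seq[String], label: String): Double =
    logPrior(label) + words.map(w => logLikelihood(w, label)).sum

  // Predict the label with the highest log-posterior score
  def apply(words: Seq[String]): String = labels.maxBy(l => score(words, l))
}
```

With these counts, "fuzzy small" scores 2/3 · 2/10 · 2/10 ≈ 0.027 for cat against 1/3 · 2/8 · 1/8 ≈ 0.010 for bear, so it is labeled cat, matching the expected output on the slide.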

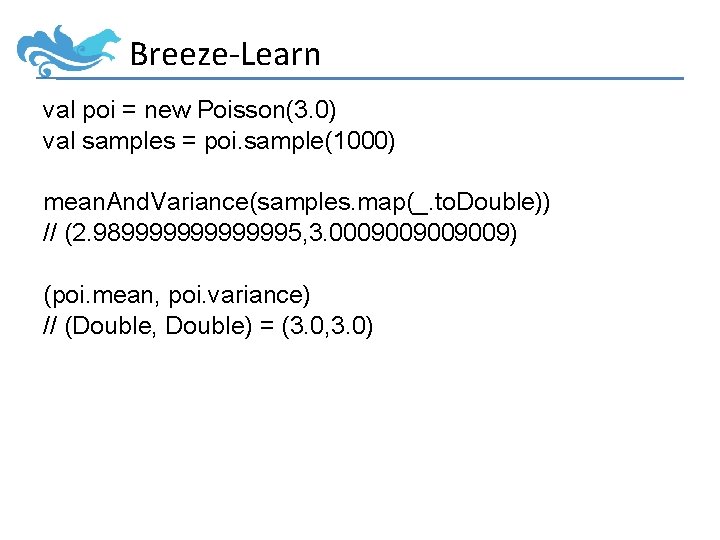

Breeze-Learn
• Distributions
  – Poisson, Gamma, Gaussian, Multinomial, Von Mises…
  – Sampling, PDF, Mean, Variance, Maximum Likelihood Estimation

Breeze-Learn

    val poi = new Poisson(3.0)
    val samples = poi.sample(1000)
    meanAndVariance(samples.map(_.toDouble))
    // (2.989999995, 3.0009009)
    (poi.mean, poi.variance)
    // (Double, Double) = (3.0, 3.0)
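A plain-Scala sketch of what sampling and `meanAndVariance` compute for a Poisson(3) distribution. The sampler uses Knuth's multiplication method, which is simple but draws about λ + 1 uniforms per sample, so it only suits small rates. `PoissonSketch` and its `meanAndVariance` helper are hypothetical re-implementations, not Breeze code. Both sample statistics should land near λ = 3, since a Poisson distribution's mean and variance both equal λ.

```scala
import scala.util.Random

object PoissonSketch {
  // Knuth's method: multiply uniforms until the product drops to e^(-lambda) or below;
  // the number of extra multiplications is Poisson(lambda) distributed.
  def sample(lambda: Double, rng: Random): Int = {
    val limit = math.exp(-lambda)
    var k = 0
    var p = rng.nextDouble()
    while (p > limit) {
      k += 1
      p *= rng.nextDouble()
    }
    k
  }

  // Sample mean and unbiased (n - 1) sample variance
  def meanAndVariance(xs: Seq[Double]): (Double, Double) = {
    val n = xs.size
    val mean = xs.sum / n
    val variance = xs.map(x => (x - mean) * (x - mean)).sum / (n - 1)
    (mean, variance)
  }
}
```

A fixed seed such as `new Random(42)` makes the draws reproducible across runs.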

Let’s build something…
• Sentiment Classification
  – Given a movie review, predict whether it is positive or negative.
• Dataset:
  – Bo Pang, Lillian Lee, and Shivakumar Vaithyanathan, “Thumbs up? Sentiment Classification using Machine Learning Techniques,” EMNLP 2002
  – http://www.cs.cornell.edu/people/pabo/movie-review-data/

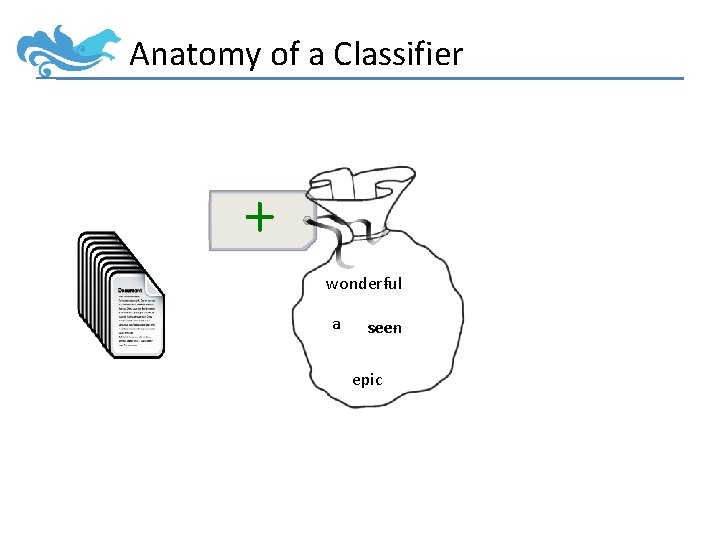

Anatomy of a Classifier +x



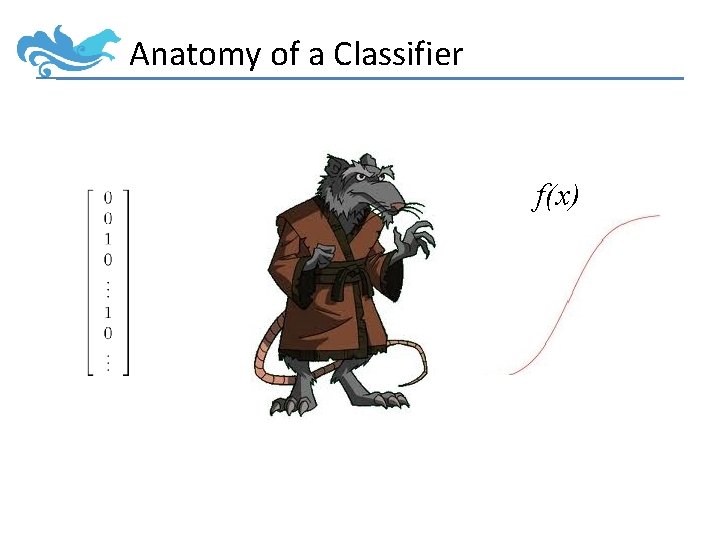

Anatomy of a Classifier

(Diagram: words from a positive (+) review, such as “wonderful”, “seen” and “epic”, mapped through an Index[Feature].)

Anatomy of a Classifier f(x)

Let’s build something…

    object SentimentClassifier {
      case class Params(
        @Help(text = "Path to txt_sentoken in the dataset.")
        train: File,
        help: Boolean = false)
      // …


Parsing command line options

    def main(args: Array[String]) {
      // Read in parameters, ensure they're right and dump help if necessary
      val (config, seqArgs) = CommandLineParser.parseArguments(args)
      val params = config.readIn[Params]("")
      if (params.help) {
        println(GenerateHelp[Params](config))
        sys.exit(1)
      }

Reading in data

    val tokenizer = breeze.text.LanguagePack.English
    val data: Array[Example[Int, IndexedSeq[String]]] = {
      for {
        dir <- params.train.listFiles()
        f <- dir.listFiles()
      } yield {
        val slurped = Source.fromFile(f).mkString
        val text = tokenizer(slurped).toIndexedSeq
        // data is in pos/ and neg/ directories
        val label = if (dir.getName == "pos") 1 else 0
        Example(label, text, id = f.getName)
      }
    }

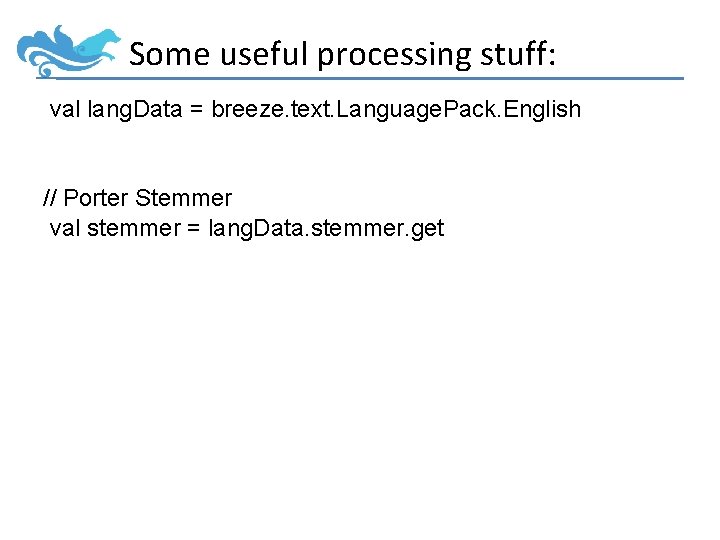

Some useful processing stuff:

    val langData = breeze.text.LanguagePack.English
    // Porter Stemmer
    val stemmer = langData.stemmer.get

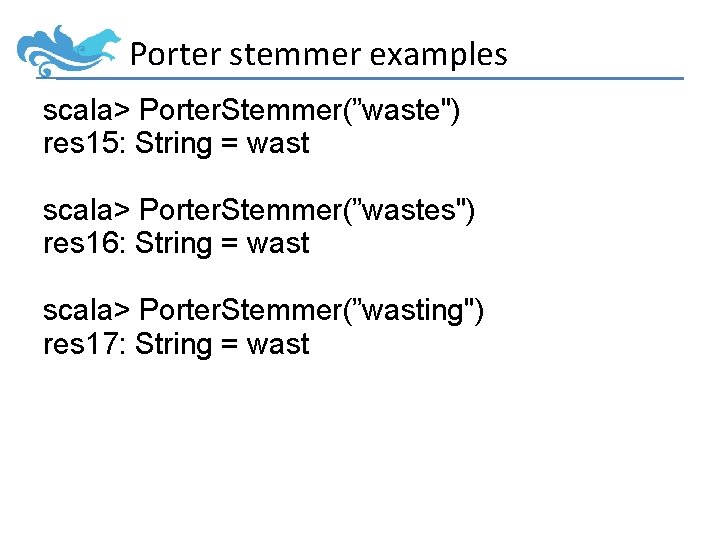

Porter stemmer examples

    scala> PorterStemmer("waste")
    res15: String = wast

    scala> PorterStemmer("wastes")
    res16: String = wast

    scala> PorterStemmer("wasting")
    res17: String = wast

    scala> PorterStemmer("wastetastic")
    res18: String = wastetast

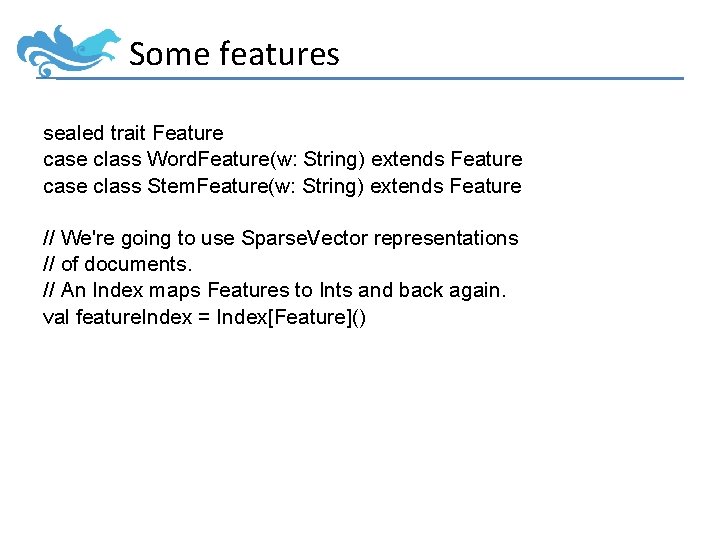

Some features

    sealed trait Feature
    case class WordFeature(w: String) extends Feature
    case class StemFeature(w: String) extends Feature

    // We're going to use SparseVector representations of documents.
    // An Index maps Features to Ints and back again.
    val featureIndex = Index[Feature]()
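A minimal sketch of the `Index` idea: a two-way map that hands out consecutive `Int` ids the first time it sees a key, so each feature gets a fixed position in a sparse vector. `SimpleIndex` is a hypothetical stand-in written for illustration, not Breeze's `Index` class:

```scala
import scala.collection.mutable

// Minimal bidirectional index: assigns each distinct key the next free Int.
final class SimpleIndex[T] {
  private val toInt = mutable.HashMap.empty[T, Int]
  private val toKey = mutable.ArrayBuffer.empty[T]

  // Returns the existing id for t, or appends t and returns its new id
  def index(t: T): Int = toInt.getOrElseUpdate(t, { toKey += t; toKey.size - 1 })

  // Looks up an id without adding anything; -1 if t is unknown
  def apply(t: T): Int = toInt.getOrElse(t, -1)

  // Maps an id back to its key
  def get(i: Int): T = toKey(i)

  def size: Int = toKey.size
}
```

Calling `index` twice with the same key returns the same id, which is what lets the feature extractor below call it for every token without checking first.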


Extract features for each example

    def extractFeatures(ex: Example[Int, ISeq[String]]) = {
      ex.map { words =>
        val builder = new SparseVector.Builder[Double](Int.MaxValue)
        for (w <- words) {
          val fi = featureIndex.index(WordFeature(w))
          val s = stemmer(w)
          val si = featureIndex.index(StemFeature(s))
          builder.add(fi, 1.0)
          builder.add(si, 1.0)
        }
        builder
      }
    }

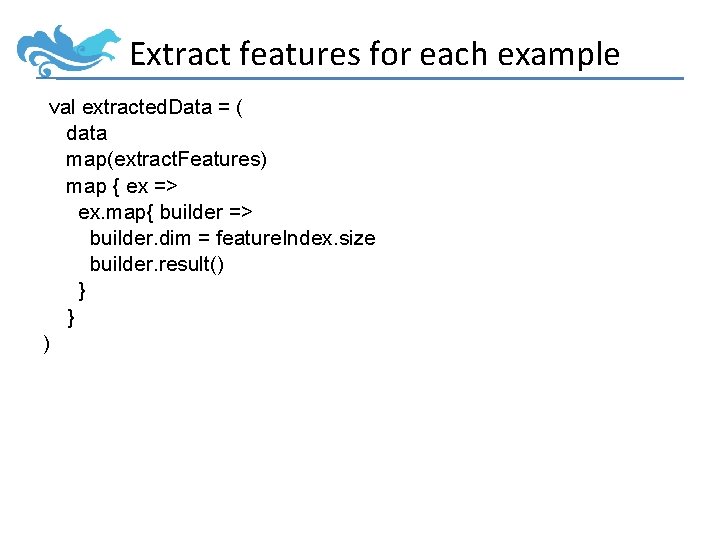

Extract features for each example

    val extractedData = data
      .map(extractFeatures)
      .map { ex =>
        ex.map { builder =>
          builder.dim = featureIndex.size
          builder.result()
        }
      }
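Putting the feature slides together without Breeze: this sketch maps each word to a `WordFeature` and a `StemFeature`, assigns ids through one shared map, and stores each document as a sparse `Map[Int, Double]` of counts (only non-zero entries are kept). The `crudeStem` function is a deliberately naive placeholder (lower-case, drop a trailing "s"), not the Porter stemmer; all names in `FeatureSketch` are hypothetical.

```scala
import scala.collection.mutable

object FeatureSketch {
  sealed trait Feature
  final case class WordFeature(w: String) extends Feature
  final case class StemFeature(w: String) extends Feature

  // Placeholder "stemmer" (NOT Porter): lower-cases and strips a trailing "s"
  def crudeStem(w: String): String = {
    val lw = w.toLowerCase
    if (lw.length > 3 && lw.endsWith("s")) lw.dropRight(1) else lw
  }

  // Feature -> id map shared across documents; new features get the next id
  val featureIndex = mutable.HashMap.empty[Feature, Int]
  private def indexOf(f: Feature): Int = featureIndex.getOrElseUpdate(f, featureIndex.size)

  // Sparse document vector: featureId -> count, one word and one stem feature per token
  def extract(words: Seq[String]): Map[Int, Double] = {
    val counts = mutable.HashMap.empty[Int, Double]
    for (w <- words) {
      val fi = indexOf(WordFeature(w))
      val si = indexOf(StemFeature(crudeStem(w)))
      counts(fi) = counts.getOrElse(fi, 0.0) + 1.0
      counts(si) = counts.getOrElse(si, 0.0) + 1.0
    }
    counts.toMap
  }
}
```

Because the index is shared, the stem feature for "plots" and for "plot" receives the same id, which is what lets a stem feature pool evidence across word forms.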

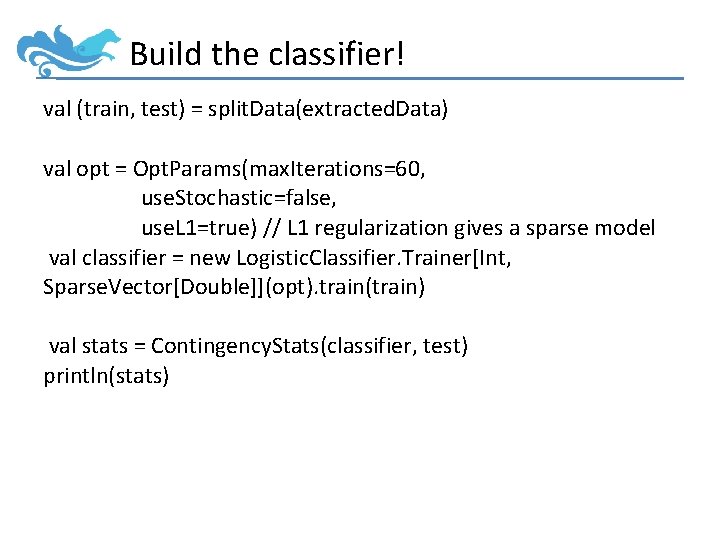

Build the classifier!

    val (train, test) = splitData(extractedData)
    val opt = OptParams(maxIterations = 60, useStochastic = false, useL1 = true)
    // L1 regularization gives a sparse model
    val classifier = new LogisticClassifier.Trainer[Int, SparseVector[Double]](opt).train(train)
    val stats = ContingencyStats(classifier, test)
    println(stats)
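`ContingencyStats` is Breeze's summary of classifier output. For intuition, here is a plain-Scala sketch of the binary contingency table such a summary rests on, with accuracy, precision, recall and F1 computed from it. `BinaryStats` is hypothetical and makes no claim about which numbers Breeze prints:

```scala
object BinaryStats {
  final case class Stats(tp: Int, fp: Int, fn: Int, tn: Int) {
    def accuracy: Double = (tp + tn).toDouble / (tp + fp + fn + tn)
    def precision: Double = if (tp + fp == 0) 0.0 else tp.toDouble / (tp + fp)
    def recall: Double = if (tp + fn == 0) 0.0 else tp.toDouble / (tp + fn)
    def f1: Double =
      if (precision + recall == 0) 0.0 else 2 * precision * recall / (precision + recall)
  }

  // Tally (gold, predicted) pairs, treating `positive` as the positive class
  def tally[L](pairs: Seq[(L, L)], positive: L): Stats =
    pairs.foldLeft(Stats(0, 0, 0, 0)) { case (s, (gold, pred)) =>
      (gold == positive, pred == positive) match {
        case (true, true)   => s.copy(tp = s.tp + 1)
        case (false, true)  => s.copy(fp = s.fp + 1)
        case (true, false)  => s.copy(fn = s.fn + 1)
        case (false, false) => s.copy(tn = s.tn + 1)
      }
    }
}
```

With the slides' labels (1 for pos/, 0 for neg/), `tally(pairs, positive = 1)` scores the positive class; calling it again with `positive = 0` gives the per-class numbers for negative reviews.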

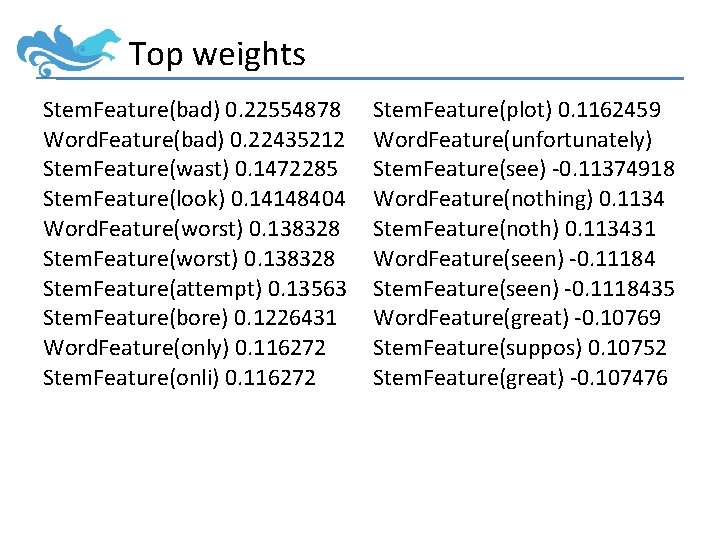

Top weights

    StemFeature(bad)             0.22554878
    WordFeature(bad)             0.22435212
    StemFeature(wast)            0.1472285
    StemFeature(look)            0.14148404
    WordFeature(worst)           0.138328
    StemFeature(attempt)         0.13563
    StemFeature(bore)            0.1226431
    WordFeature(only)            0.116272
    StemFeature(onli)            0.116272
    StemFeature(plot)            0.1162459
    WordFeature(unfortunately)
    StemFeature(see)            -0.11374918
    WordFeature(nothing)         0.1134
    StemFeature(noth)            0.113431
    WordFeature(seen)           -0.11184
    StemFeature(seen)           -0.1118435
    WordFeature(great)          -0.10769
    StemFeature(suppos)          0.10752
    StemFeature(great)          -0.107476
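The slides do not show how this list was produced. One plausible way, sketched here in plain Scala with a hypothetical `topK` helper, is to sort features by the absolute value of their weights, so strongly negative features such as `WordFeature(great)` appear alongside positive ones:

```scala
object TopWeights {
  // Returns the k entries with the largest |weight|, largest first.
  // Sorting by magnitude keeps strongly negative features in the list.
  def topK[F](weights: Map[F, Double], k: Int): Seq[(F, Double)] =
    weights.toSeq
      .sortWith { case ((_, w1), (_, w2)) => math.abs(w1) > math.abs(w2) }
      .take(k)
}
```

Applied to a few of the weights above, `topK` ranks `StemFeature(bad)` (0.2255) ahead of `StemFeature(wast)` (0.1472), `WordFeature(seen)` (-0.1118) and `WordFeature(great)` (-0.1077).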

Breeze: What’s Next?
• Improved tokenization, segmentation
• Cross-lingual stuff
• GPU matrices (via JavaCL or JCUDA)
• More powerful/customizable classification routines
• Epic: platform for “real NLP”
  – Parsing, Named Entity Recognition, POS Tagging, etc.
  – Hall and Klein (2012)

Thanks!
https://github.com/dlwh/breeze
http://scalanlp.org

No really, who is Breeze?
