Network Traffic Anomaly Detection Based on Packet Bytes

Matthew V. Mahoney, Florida Institute of Technology, mmahoney@cs.fit.edu

Limitations of Intrusion Detection
• Host based (audit logs, virus checkers)
  – Cannot be trusted after a compromise
• Network signature detection (SNORT, Bro)
  – Cannot detect novel attacks
  – Alarm floods (network traffic is bursty)
• Address/port anomaly detection (ADAM, SPADE, eBayes)
  – Cannot detect attacks on public servers (web, mail, DNS)

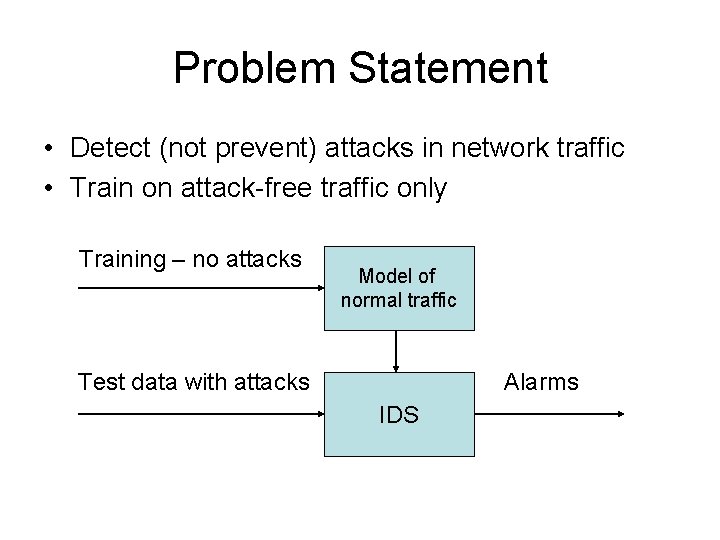

Problem Statement
• Detect (not prevent) attacks in network traffic
• Train on attack-free traffic only
[Diagram: Training (no attacks) → Model of normal traffic; Test data with attacks → IDS → Alarms]

Approach
• Model client protocols via inbound traffic
  – 9 protocols: IP, TCP, HTTP, SMTP …
  – Beginning of request only (~2% of traffic)
• Test each packet independently
• Unusual bytes = hostile (sometimes)
  – Values seen but not often or recently
  – Values never seen in training (higher score)

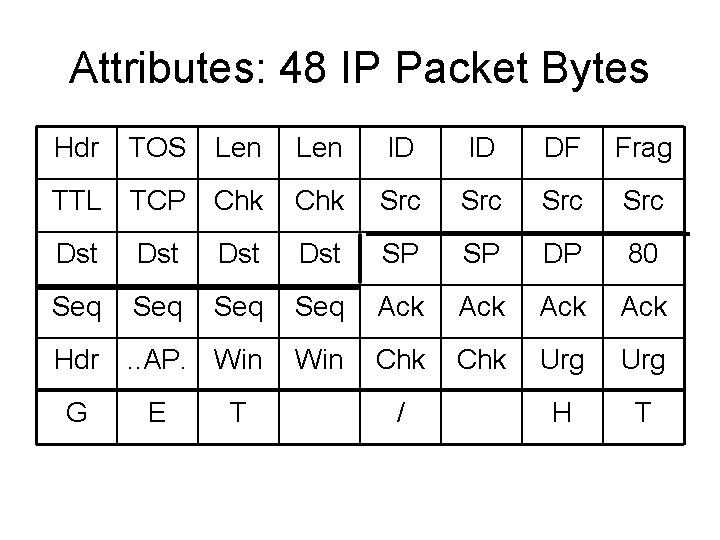

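Attributes: 48 IP Packet Bytes
[Figure: the first 48 bytes of an example packet, one attribute per byte. IP header: header length, TOS, length, ID, DF/fragment, TTL, protocol (TCP), checksum, source address, destination address. TCP header: source port, destination port (80), sequence number, acknowledgment number, header length, flags (AP.), window, checksum, urgent pointer. Payload: begins with "GET /".]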
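As a rough illustration of the attribute layout, the sketch below turns a raw IP packet into 48 one-byte attributes. The function name `packet_attributes` and the zero padding of short packets are assumptions made for this example; the slides do not say how packets shorter than 48 bytes are handled.

```python
N_ATTRIBUTES = 48  # the slide models the first 48 bytes, starting at the IP header


def packet_attributes(ip_packet, pad=0):
    """Return the first 48 bytes of a packet as a list of integers (0-255).

    Padding short packets with `pad` is an assumption of this sketch,
    not something stated in the slides.
    """
    head = bytes(ip_packet[:N_ATTRIBUTES])
    return list(head) + [pad] * (N_ATTRIBUTES - len(head))


# Hypothetical packet: 20-byte IP header, 20-byte TCP header, then the request.
example = bytes(20) + bytes(20) + b"GET / HTTP/1.0\r\n"
attributes = packet_attributes(example)
```

With 20-byte IP and TCP headers (no options), attributes 40 through 47 hold the first eight payload bytes, which is why only the beginning of a request is modeled.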

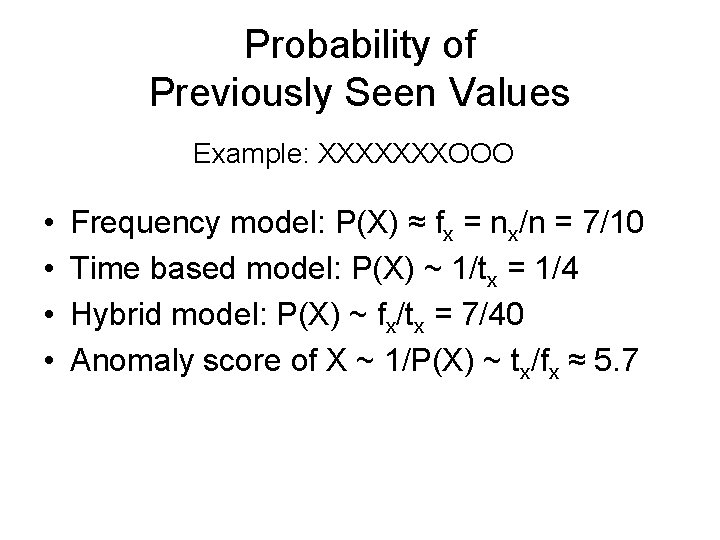

Probability of Previously Seen Values
Example: XXXXXXXOOO
• Frequency model: P(X) ≈ f_x = n_x/n = 7/10
  – n_x = number of times X was observed = 7
• Time-based model: P(X) ~ 1/t_x = 1/4
  – t_x = time since X was last seen = 4
• Hybrid model: P(X) ~ f_x/t_x = 7/40
• Anomaly score of X ~ 1/P(X) ~ t_x/f_x = 40/7 ≈ 5.7
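The arithmetic on this slide can be checked with a short sketch. The function name `seen_value_score` is hypothetical, and time is assumed to advance by one unit per observation, so the value being scored arrives at time n + 1.

```python
from fractions import Fraction


def seen_value_score(history, value):
    """Hybrid anomaly score t_x / f_x for a value already present in history."""
    n = len(history)
    positions = [i for i, v in enumerate(history) if v == value]
    if not positions:
        raise ValueError("value never observed; it needs a novel-value score")
    f_x = Fraction(len(positions), n)  # frequency of the value
    t_x = n - positions[-1]            # observations since the value was last seen
    return t_x / f_x


score_x = seen_value_score("XXXXXXXOOO", "X")  # Fraction(40, 7), about 5.7
score_o = seen_value_score("XXXXXXXOOO", "O")  # Fraction(10, 3), about 3.3
```

X scores higher than O even though it is more frequent, because it has not been seen for longer.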

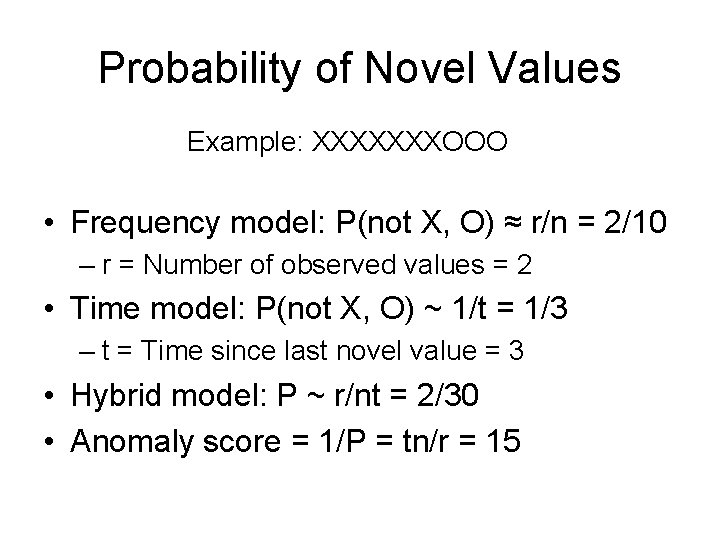

Probability of Novel Values
Example: XXXXXXXOOO
• Frequency model: P(not X, O) ≈ r/n = 2/10
  – r = number of observed values = 2
• Time model: P(not X, O) ~ 1/t = 1/3
  – t = time since last novel value = 3
• Hybrid model: P ~ r/(nt) = 2/30
• Anomaly score = 1/P = tn/r = 15
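A similar sketch reproduces the novel-value score tn/r. The function name `novel_value_score` is hypothetical, and as before one time unit per observation is assumed.

```python
from fractions import Fraction


def novel_value_score(history):
    """Hybrid anomaly score t * n / r for a value never seen in history."""
    n = len(history)
    if n == 0:
        raise ValueError("history is empty")
    seen = set()
    last_novel = 0
    for i, v in enumerate(history):
        if v not in seen:   # first appearance of this value
            seen.add(v)
            last_novel = i
    r = len(seen)           # number of distinct values observed
    t = n - last_novel      # observations since a new value last appeared
    return Fraction(t * n, r)


novel_score = novel_value_score("XXXXXXXOOO")  # t = 3, n = 10, r = 2
```

For the slide's example the last novel value (O) first appeared three observations ago, giving 3 × 10 / 2 = 15.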

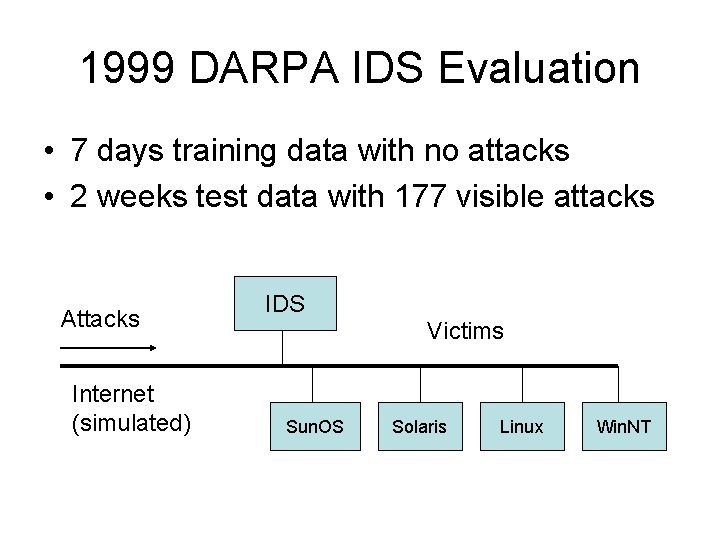

1999 DARPA IDS Evaluation
• 7 days training data with no attacks
• 2 weeks test data with 177 visible attacks
[Diagram: attacks arrive from the Internet (simulated); the IDS monitors traffic to the victims: SunOS, Solaris, Linux, WinNT]

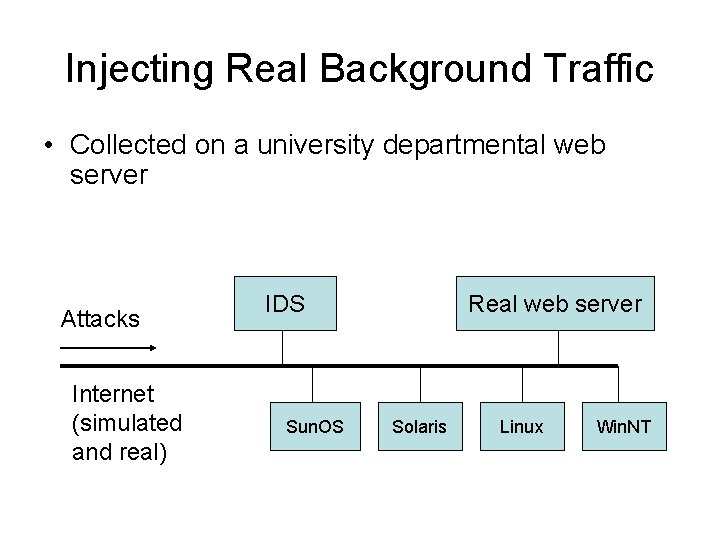

Injecting Real Background Traffic
• Collected on a university departmental web server
[Diagram: attacks arrive from the Internet (simulated and real); the IDS monitors traffic to SunOS, Solaris, Linux, WinNT, and the real web server]

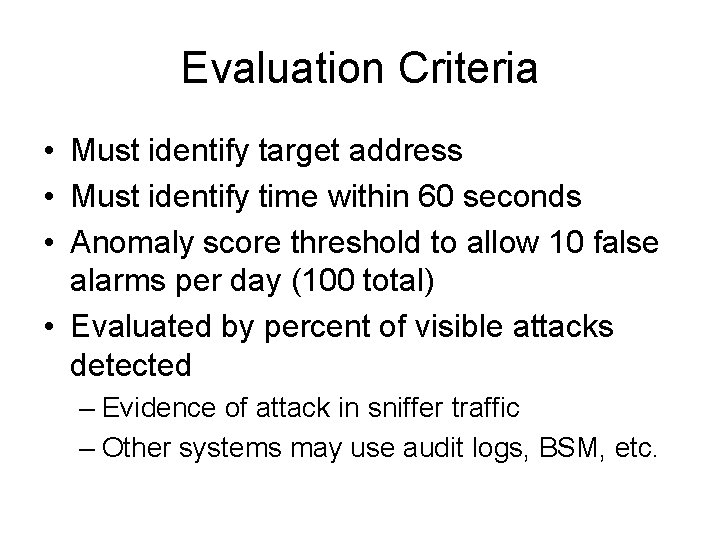

Evaluation Criteria
• Must identify target address
• Must identify time within 60 seconds
• Anomaly score threshold to allow 10 false alarms per day (100 total)
• Evaluated by percent of visible attacks detected
  – Evidence of attack in sniffer traffic
  – Other systems may use audit logs, BSM, etc.
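The matching rule above might be sketched as follows. The `Alarm` and `Attack` records, the function names, and the addresses are all hypothetical, and treating "within 60 seconds" as 60 seconds of slack around the attack's start and end is an assumption of this example.

```python
from dataclasses import dataclass


@dataclass
class Alarm:
    time: float   # seconds since the start of the test data
    target: str   # address the alarm points at
    score: float  # anomaly score


@dataclass
class Attack:
    start: float
    end: float
    target: str


def detects(alarm, attack, slack=60.0):
    """True if the alarm names the attack's target and falls within the time slack."""
    in_window = attack.start - slack <= alarm.time <= attack.end + slack
    return alarm.target == attack.target and in_window


def percent_detected(alarms, attacks, threshold):
    """Percent of attacks matched by at least one alarm scoring at or above threshold."""
    kept = [a for a in alarms if a.score >= threshold]
    hits = sum(any(detects(a, atk) for a in kept) for atk in attacks)
    return 100.0 * hits / len(attacks)


attacks = [Attack(100, 200, "10.0.0.1"), Attack(1000, 1010, "10.0.0.2")]
alarms = [Alarm(250, "10.0.0.1", 5.0), Alarm(1005, "10.0.0.1", 9.0)]
detected = percent_detected(alarms, attacks, threshold=1.0)
```

In an evaluation like the one described, the threshold would be lowered until the unmatched alarms reach the allowed false alarm budget, and the detection percentage is read off at that point.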

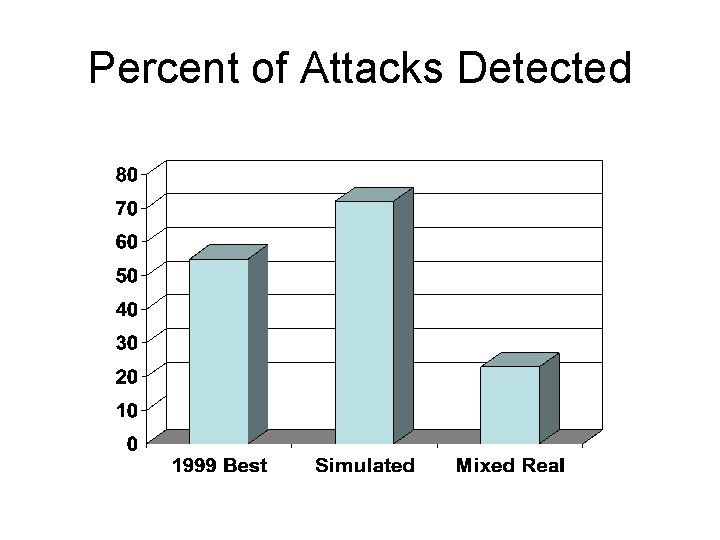

Percent of Attacks Detected

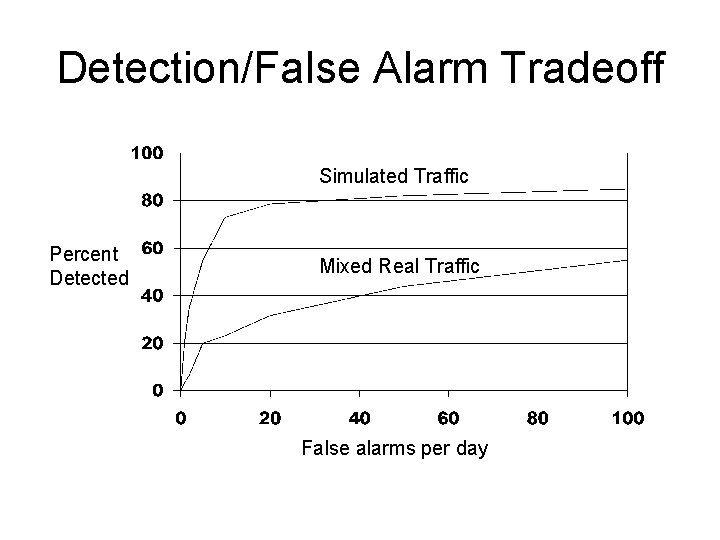

Detection/False Alarm Tradeoff
[Plot: percent of attacks detected versus false alarms per day, with curves for simulated traffic and mixed real traffic]

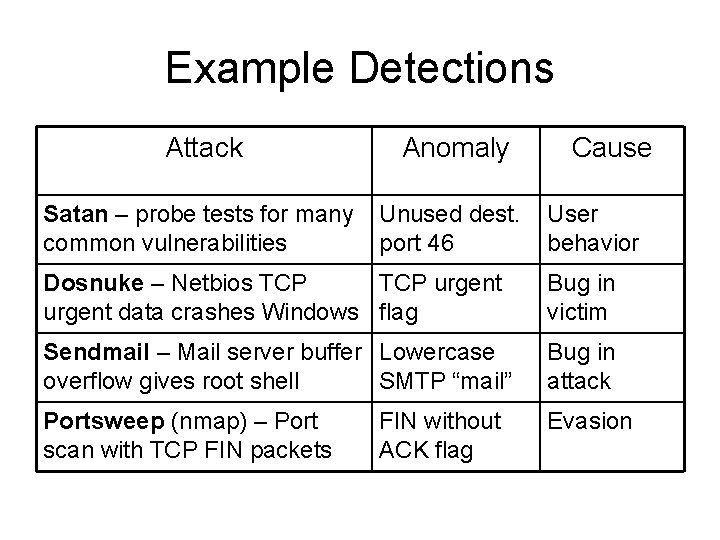

Example Detections

| Attack | Anomaly | Cause |
| --- | --- | --- |
| Satan – probe tests for many common vulnerabilities | Unused dest. port 46 | User behavior |
| Dosnuke – Netbios crashes Windows | TCP urgent data flag | Bug in victim |
| Sendmail – Mail server buffer overflow gives root shell | Lowercase SMTP “mail” | Bug in attack |
| Portsweep (nmap) – Port scan with TCP FIN packets | FIN without ACK flag | Evasion |

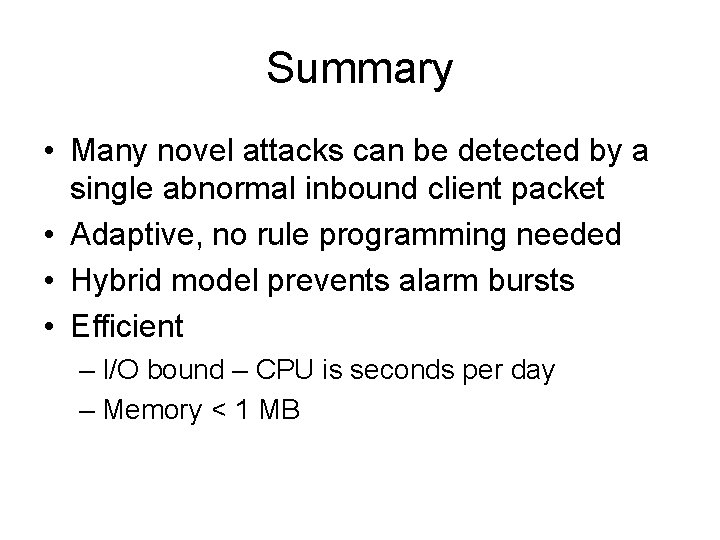

Summary
• Many novel attacks can be detected by a single abnormal inbound client packet
• Adaptive, no rule programming needed
• Hybrid model prevents alarm bursts
• Efficient
  – I/O bound
  – CPU is seconds per day
  – Memory < 1 MB

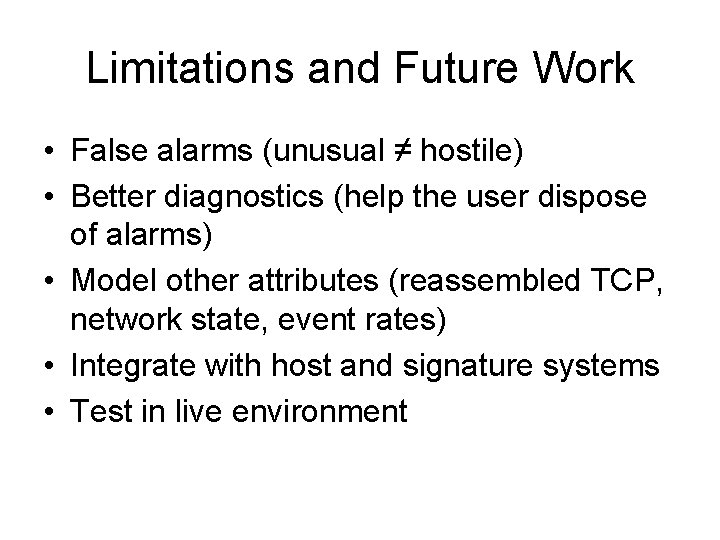

Limitations and Future Work
• False alarms (unusual ≠ hostile)
• Better diagnostics (help the user dispose of alarms)
• Model other attributes (reassembled TCP, network state, event rates)
• Integrate with host and signature systems
• Test in live environment

Thank You
