Towards Anomaly Detection in Network Traffic by Statistical Means and Machine Learning

Roland Kwitt & Tobias Strohmeier | Salzburg Research Forschungsgesellschaft m.b.H. | Jakob-Haringer-Str. 5/III | A-5020 Salzburg | T +43.662.2288-200 | F +43.662.2288-222 | info@salzburgresearch.at | www.salzburgresearch.at

Overview

- Motivation
- Dependency between Anomalies and Attacks
- The Detection Process
- Assumptions
- Statistical Analysis
- Machine Learning
- Results
- Further Work

9/25/2020 | © Salzburg Research

Motivation

- Attacks against computer networks are increasing dramatically every year
- Security solutions based merely on firewalls are no longer enough
- Prevention + detection of malicious activity
- Attacks tend to vary over time, so signature-based approaches lack flexibility
- Anomaly detection provides a means to detect variations of old attacks as well as novel attacks

Dependency between Anomalies and Attacks

There are many possible dependencies. Examples:

- Network probes or scans are necessarily anomalous, since they seek information that legitimate users already possess
- Many attacks rely on the attacker's ability to construct client protocols themselves; in most cases the target environment is not duplicated carefully enough
- Many successfully executed attacks result in so-called response anomalies, for example due to the exploitation of implementation flaws
- Deliberately manipulated protocols designed to pass improperly configured firewall systems

The Detection Process (1): Assumptions

- Many malicious activities deviate from normal activities
- A subset of them can be detected by monitoring the distributions of certain packet header fields
- By monitoring benign traffic, a baseline profile describing normal traffic behavior can be established
- Do we have enough almost anomaly-free traffic (training data)?
- Is the training data representative of normal traffic conditions?
- We assume that the data-generating process is (weakly) stationary. This is an idealization!

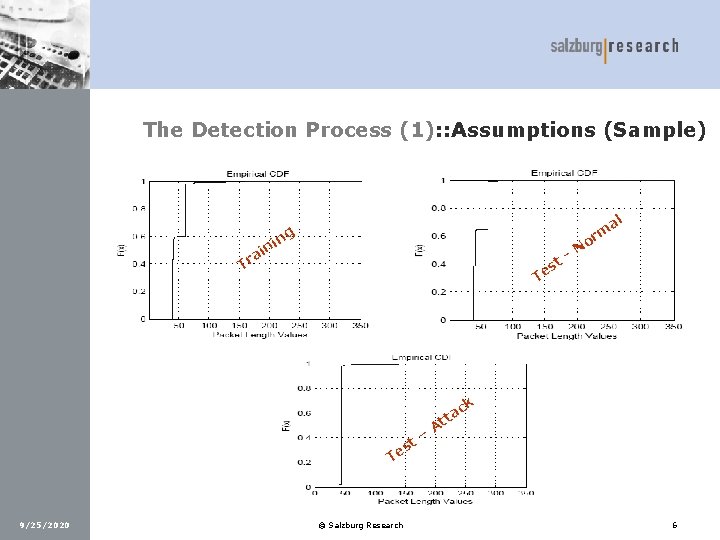

The Detection Process (1): Assumptions (Sample)

[Figure: sample traffic plot; the axis and panel labels were garbled in extraction, recoverable words: Training, Normal, Test, Attack]

The Detection Process (2): Statistical Analysis (1)

- Let a random variable X denote whether a monitored header field takes on a certain value or not. This is a Bernoulli experiment: X ~ Bernoulli(p)
- Let a random variable Y denote the number of successes in v executions of the same experiment, so Y ~ Binomial(v, p)
- In practice, we observe the whole domain D_k of a header field. Each random experiment can thus result in m_k = |D_k| outcomes (k denotes the k-th header field), so the counts follow a multinomial distribution
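The model on this slide can be written out as follows. This is a sketch of the standard definitions only; the symbols X_i, N_j, and p_j are notation assumed here for illustration and do not appear on the slide.

```latex
% One header field, one candidate value: a single Bernoulli trial per packet
X \sim \mathrm{Bernoulli}(p), \qquad P(X = 1) = p

% Number of successes in v independent trials
Y = \sum_{i=1}^{v} X_i \sim \mathrm{Binomial}(v, p), \qquad
P(Y = y) = \binom{v}{y} p^{y} (1 - p)^{v - y}

% Whole domain D_k of the k-th header field, with m_k = |D_k| outcomes
(N_1, \dots, N_{m_k}) \sim \mathrm{Multinomial}(v;\, p_1, \dots, p_{m_k}),
\qquad \sum_{j=1}^{m_k} p_j = 1

% Maximum likelihood estimate: the relative frequency of each outcome
\hat{p}_j = \frac{N_j}{v}, \qquad j = 1, \dots, m_k
```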

The Detection Process (2): Statistical Analysis (2)

- We introduce a learning window L and a (sliding) test window T of n packets
- Calculate the maximum likelihood estimators (MLEs) of both multinomial distributions
- The MLEs of L are the expected probabilities under normal traffic conditions, so the difference between the MLEs of both windows is an indicator of anomalous activity
- Problem: too many fluctuations. Solution: calculate the ECDFs of the MLE differences, using the same scheme: a learning window plus a (sliding) test window of n fluctuations
- Determine the difference between the areas under both ECDFs (complexity O(1)). This yields an anomaly score for each header field
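The steps on this slide can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the authors' implementation: the slide does not say how the two MLE vectors are compared, so the L1 distance is an assumption, and all function names and the integration bound `upper` are hypothetical. One observation that may relate to the O(1) remark: for samples in [0, b], the area under the ECDF on [0, b] equals b minus the sample mean, which a sliding window can maintain incrementally. Whether the authors reach O(1) this way is not stated.

```python
from collections import Counter


def mle(values, domain):
    """Multinomial MLE: relative frequency of each domain value in a window."""
    if not values:
        raise ValueError("window must not be empty")
    counts = Counter(values)
    return [counts[v] / len(values) for v in domain]


def mle_difference(learn_probs, test_probs):
    """Assumption: compare the two MLE vectors by their L1 distance (range 0..2)."""
    return sum(abs(a - b) for a, b in zip(learn_probs, test_probs))


def ecdf_area(samples, upper):
    """Area under the ECDF of `samples` on the interval [0, upper].

    For samples clipped to [0, upper] this equals upper - mean(samples).
    """
    clipped = [min(max(s, 0.0), upper) for s in samples]
    return upper - sum(clipped) / len(clipped)


def anomaly_score(learn_diffs, test_diffs, upper=2.0):
    """Difference of the two ECDF areas; larger test differences give a higher score."""
    return ecdf_area(learn_diffs, upper) - ecdf_area(test_diffs, upper)


# Hypothetical example: destination ports seen in a learning and a test window
domain = [22, 80, 443]
learn = mle([80, 80, 443, 22], domain)
test = mle([443, 443, 443, 443], domain)
print(mle_difference(learn, test))
```

In this sketch, `anomaly_score` would be computed once per header field, giving one component of the k-dimensional anomaly vector.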

The Detection Process (3): Machine Learning

- Problem: reduce the k-dimensional anomaly vector to a 1-dimensional anomaly score
- Solution: Self-Organizing Map (SOM, unsupervised learning)
- In the training phase (normal traffic), the SOM builds cluster centers for normal anomaly vectors; that is, the SOM learns the usual ECDF differences under benign traffic conditions
- Anomalously high ECDF differences result in anomalous vectors for which no suitable cluster center can be found, so the distance (quantization error) to the nearest cluster center is very high
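The idea of using the quantization error as the final score can be illustrated with a very small SOM. The sketch below is an assumption-laden toy, not the authors' setup: it uses a 1-D grid of four units, a linearly decaying learning rate and neighbourhood radius, and synthetic "normal" vectors; all names and parameters are hypothetical.

```python
import math
import random


def distance(a, b):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def best_matching_unit(weights, vector):
    """Index of the unit (cluster center) closest to `vector`."""
    return min(range(len(weights)), key=lambda i: distance(weights[i], vector))


def train_som(vectors, units=4, epochs=50, seed=0):
    """Train a 1-D SOM on `vectors` and return the unit weight vectors."""
    rng = random.Random(seed)
    # Initialise each unit from a randomly chosen training vector
    weights = [list(rng.choice(vectors)) for _ in range(units)]
    for epoch in range(epochs):
        frac = 1.0 - epoch / epochs           # decays linearly towards 0
        rate = 0.5 * frac                     # learning rate
        radius = max(max(1.0, units / 2) * frac, 1e-3)
        for vec in rng.sample(vectors, len(vectors)):
            bmu = best_matching_unit(weights, vec)
            for i, w in enumerate(weights):
                # Gaussian neighbourhood around the best matching unit
                h = math.exp(-((i - bmu) ** 2) / (2 * radius ** 2))
                for d in range(len(w)):
                    w[d] += rate * h * (vec[d] - w[d])
    return weights


def quantization_error(weights, vector):
    """Distance from `vector` to its nearest cluster center: the anomaly score."""
    return min(distance(w, vector) for w in weights)


# Synthetic "normal" anomaly vectors (k = 3) with small ECDF differences
data_rng = random.Random(1)
normal = [[data_rng.uniform(0.0, 0.1) for _ in range(3)] for _ in range(40)]
weights = train_som(normal)

print(quantization_error(weights, [0.05, 0.05, 0.05]))  # vector resembling training data
print(quantization_error(weights, [1.5, 1.5, 1.5]))     # vector far from all centers
```

A threshold on the quantization error would then separate normal from anomalous vectors; how that threshold is chosen is not covered on the slide.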

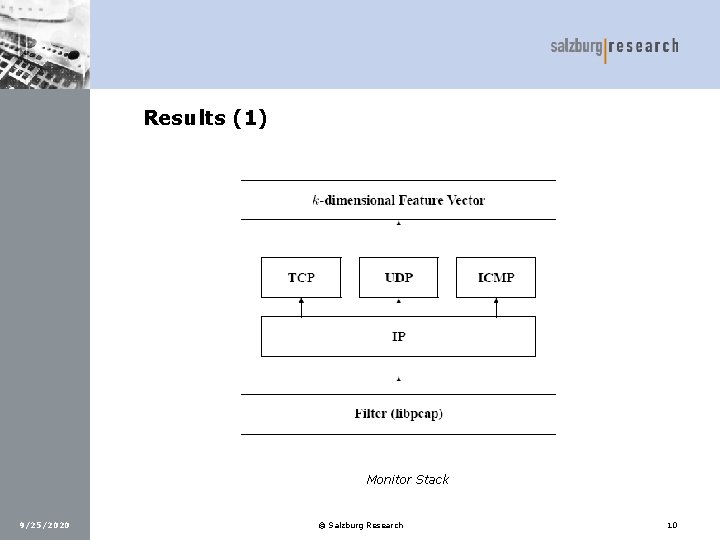

Results (1): Monitor Stack

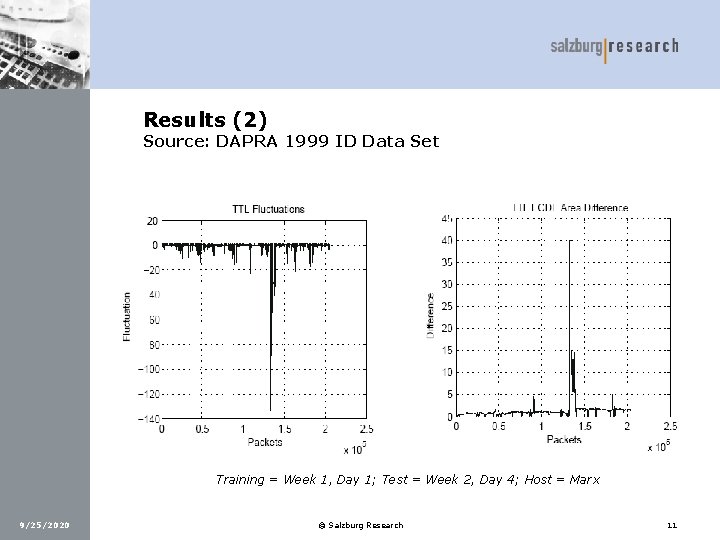

Results (2)

Source: DARPA 1999 ID Data Set. Training = Week 1, Day 1; Test = Week 2, Day 4; Host = Marx

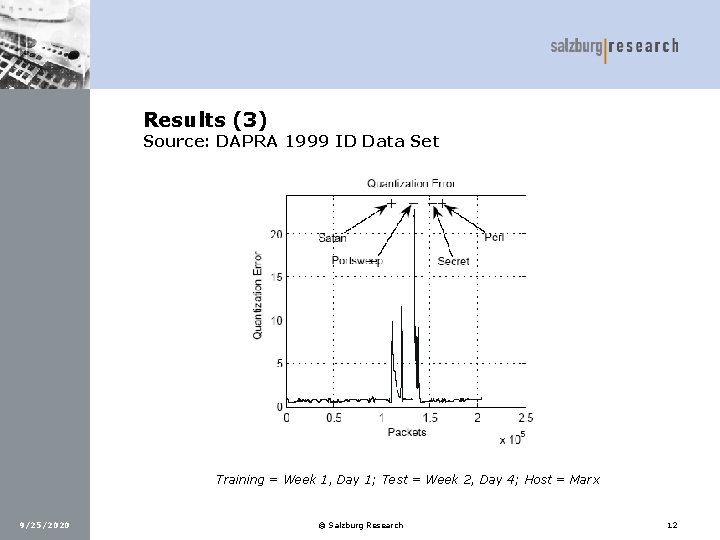

Results (3)

Source: DARPA 1999 ID Data Set. Training = Week 1, Day 1; Test = Week 2, Day 4; Host = Marx

Further Work

- Eliminate the assumption of stationarity: determine stationary intervals (piecewise stationarity)
- Introduce higher-level features such as connection details or temporal statistics (for example, the number of connections in t seconds)
- Evaluate other machine learning methods (Growing Neural Gas, Growing Cell Structures …)
- Test with Endace's DAG card to eliminate the performance bottleneck caused by libpcap
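As an illustration of the temporal statistic mentioned above (number of connections in t seconds), a sliding-window counter could look like the sketch below. The class name, the half-open window (ts - t, ts], and the timestamps are assumptions made for this example; the slides do not specify how such a feature would be computed.

```python
from collections import deque


class ConnectionRateCounter:
    """Counts connections observed within the last `window_seconds` seconds."""

    def __init__(self, window_seconds):
        self.window = window_seconds
        self.timestamps = deque()

    def observe(self, ts):
        """Record a connection at time `ts` (seconds, non-decreasing) and
        return the number of connections in the window (ts - window, ts]."""
        self.timestamps.append(ts)
        # Drop connections that have fallen out of the window
        while self.timestamps and self.timestamps[0] <= ts - self.window:
            self.timestamps.popleft()
        return len(self.timestamps)


# Hypothetical connection start times in seconds
counter = ConnectionRateCounter(window_seconds=10)
counts = [counter.observe(t) for t in [0, 1, 2, 11, 12, 30]]
print(counts)
```

Each observation costs amortized constant time, since every timestamp is appended and removed at most once.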

Thanks for your attention!

- Slides: 14