Nearest Neighbor Classification

Luo Si, Oct 2001, http://www.cs.cmu.edu/~lsi © Luo Si, 2001

Carnegie Mellon

Introduction

What is the nearest neighbor learning algorithm? In contrast to learning methods that construct a general, explicit description of the target function from the training examples, nearest neighbor learning simply stores the training examples. Each time a new query instance is encountered, its relationship to the previously stored examples is examined in order to assign it a target function value. The method is closely related to locally weighted regression, and because it postpones computation until a query arrives (the online test phase), it is a lazy learning algorithm.
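To make the lazy-learning idea concrete, here is a minimal Python sketch, assuming numeric feature vectors, Euclidean distance, and a majority vote among the k nearest stored examples. The class name `KNearestNeighbors` and its methods are illustrative, not a library API. Note that `fit` does nothing beyond storing the data; all computation is deferred to `predict_one`.

```python
import math
from collections import Counter


class KNearestNeighbors:
    """Hypothetical minimal lazy learner: training just stores the examples."""

    def __init__(self, k=1):
        self.k = k
        self.examples = []  # list of (feature_vector, label) pairs

    def fit(self, X, y):
        # No explicit description of the target function is built.
        self.examples = list(zip(X, y))
        return self

    def predict_one(self, x):
        # All work happens at query time: rank stored examples by Euclidean distance.
        nearest = sorted(self.examples, key=lambda e: math.dist(e[0], x))[: self.k]
        # Majority vote among the k nearest labels (assumed voting rule).
        votes = Counter(label for _, label in nearest)
        return votes.most_common(1)[0][0]


model = KNearestNeighbors(k=3).fit([(0, 0), (0, 1), (5, 5), (6, 5)], ["a", "a", "b", "b"])
print(model.predict_one((0.2, 0.3)))  # prints a
```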

Characteristics of nearest neighbor learning

1. It does not need to make assumptions about the model that generated the data, as generative approaches must.
2. It can construct a different approximation to the target function for each distinct query instance.
3. It can also be used with more complex, symbolic representations.
4. The cost of classifying new instances can be high, in both time and space.
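Characteristic 3 only requires a distance between instances, not numeric features. As a sketch, assuming the simple overlap metric (the number of mismatched attribute values), which is one common choice rather than one prescribed here, a 1-NN classifier over symbolic attributes could look like this; the attribute values are made up for illustration.

```python
def overlap_distance(a, b):
    # Count the attributes on which two symbolic instances disagree.
    return sum(1 for u, v in zip(a, b) if u != v)


def nn_classify(examples, query):
    # 1-NN: return the label of the stored example with the smallest overlap distance.
    _, label = min(examples, key=lambda e: overlap_distance(e[0], query))
    return label


examples = [
    (("sunny", "hot", "high"), "no"),
    (("overcast", "mild", "normal"), "yes"),
    (("rain", "mild", "high"), "yes"),
]
print(nn_classify(examples, ("rain", "cool", "high")))  # prints yes
```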

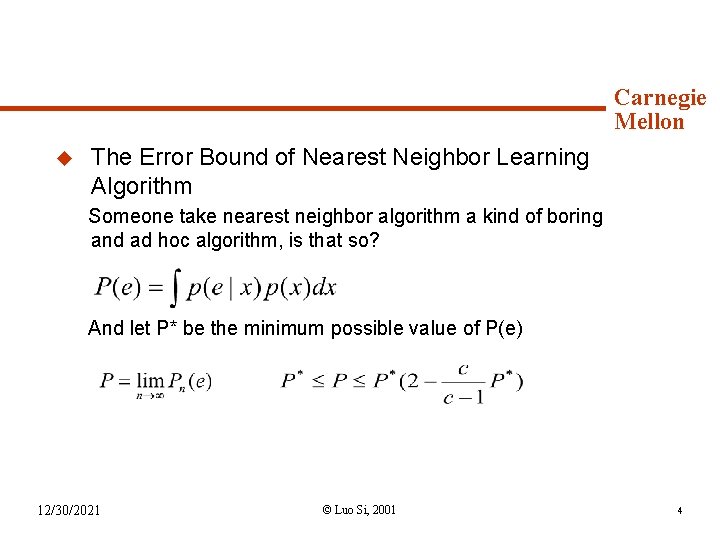

The error bound of the nearest neighbor learning algorithm

Some consider the nearest neighbor algorithm a boring, ad hoc method. Is that so? Let P* be the minimum possible value of the probability of error P(e), that is, the Bayes error rate.
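For reference, the classical asymptotic result for the 1-NN rule (as presented in Duda & Hart) bounds its error rate P, in the limit of infinitely many training samples and under mild conditions on the class densities, in terms of the Bayes rate P* and the number of classes c:

```latex
P^* \;\le\; P \;\le\; P^*\left(2 - \frac{c}{c-1}\,P^*\right) \;\le\; 2P^*
```

For example, with c = 2 and P* = 0.1 the upper bound is 0.1 × (2 − 2 × 0.1) = 0.18. In this asymptotic sense, the 1-NN rule is never worse than twice the Bayes error.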

Instance-based learning algorithms

Instance-based learning algorithms generate classification predictions using only specific stored instances. The stored instance set is a subset of the training examples. The main idea is that not all training examples are equally useful for class label prediction, so we can keep only some of them and still obtain very good performance.

Several algorithms will be presented and compared on the following attributes:

- Average accuracy
- Storage requirement
- Robustness to noise in the data

The instance-based learning 1 (IB1) algorithm is the simplest one: it uses all of the training data as stored instances.

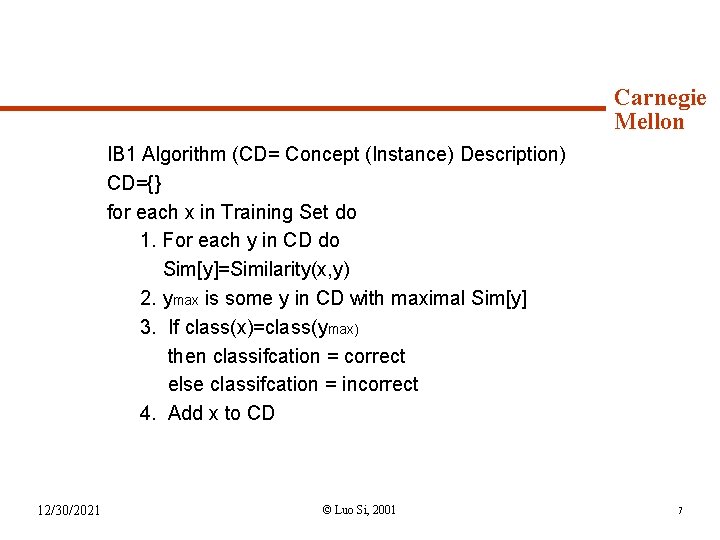

IB1 algorithm (CD = concept description, i.e., the set of stored instances)

Start with CD = {}. Then, for each x in the training set:

1. For each y in CD, compute Sim[y] = Similarity(x, y).
2. Let ymax be some y in CD with maximal Sim[y].
3. If class(x) = class(ymax), then classification = correct; else classification = incorrect.
4. Add x to CD.
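A minimal runnable sketch of IB1 in Python. The steps above do not fix the similarity function, so this assumes similarity is the negative Euclidean distance; it also assumes that the first training instance (when CD is empty) is stored without being classified. The helper names `similarity` and `ib1` are hypothetical.

```python
import math


def similarity(x, y):
    # Assumed similarity: negative Euclidean distance (larger means more similar).
    return -math.dist(x, y)


def ib1(training_set):
    """Run IB1 over (features, label) pairs; return the concept description and
    the number of training instances classified correctly along the way."""
    cd = []       # concept description: the stored instances
    correct = 0
    for x, label in training_set:
        if cd:
            # Steps 1-2: find the stored instance ymax most similar to x.
            _, y_label = max(cd, key=lambda inst: similarity(x, inst[0]))
            # Step 3: record whether x was classified correctly.
            if y_label == label:
                correct += 1
        # Step 4: IB1 stores every training instance.
        cd.append((x, label))
    return cd, correct


train = [((0.0, 0.0), "a"), ((0.1, 0.0), "a"), ((5.0, 5.0), "b"), ((5.1, 5.0), "b")]
cd, correct = ib1(train)
print(len(cd), correct)  # prints 4 2
```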

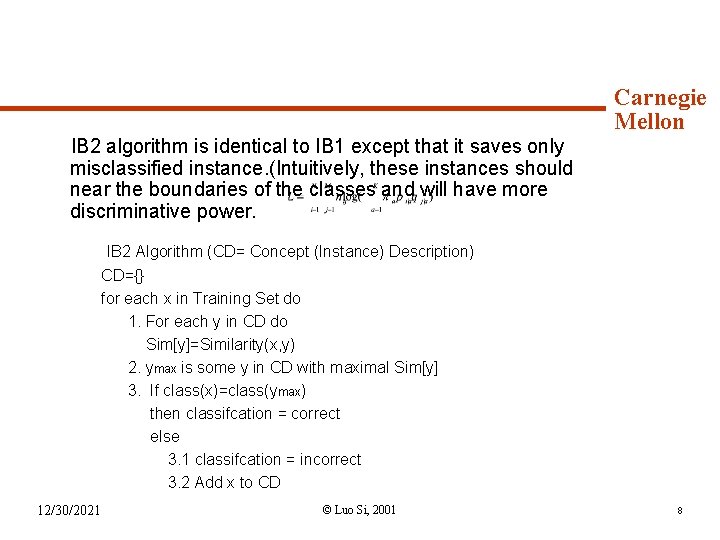

IB2 is identical to IB1 except that it saves only misclassified instances. (Intuitively, these instances should lie near the class boundaries and therefore have more discriminative power.)

IB2 algorithm (CD = concept description)

Start with CD = {}. Then, for each x in the training set:

1. For each y in CD, compute Sim[y] = Similarity(x, y).
2. Let ymax be some y in CD with maximal Sim[y].
3. If class(x) = class(ymax), then classification = correct; else:
   - 3.1 classification = incorrect
   - 3.2 Add x to CD.
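The same style of sketch for IB2, again assuming negative Euclidean distance as the similarity and treating the very first instance as misclassified (so it is stored). Only step 3 differs from IB1.

```python
import math


def similarity(x, y):
    # Assumed similarity: negative Euclidean distance.
    return -math.dist(x, y)


def ib2(training_set):
    """IB2: like IB1, but store a training instance only if it is misclassified."""
    cd = []
    for x, label in training_set:
        if not cd:
            # Assumption: with nothing stored yet, x counts as misclassified.
            cd.append((x, label))
            continue
        # Steps 1-2: most similar stored instance.
        _, y_label = max(cd, key=lambda inst: similarity(x, inst[0]))
        # Step 3: keep x only when the current CD gets it wrong.
        if y_label != label:
            cd.append((x, label))
    return cd


train = [((0.0, 0.0), "a"), ((0.1, 0.0), "a"), ((5.0, 5.0), "b"), ((5.1, 5.0), "b")]
print(ib2(train))  # prints [((0.0, 0.0), 'a'), ((5.0, 5.0), 'b')]
```

On this toy data IB2 keeps two of the four instances, which illustrates the storage savings.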

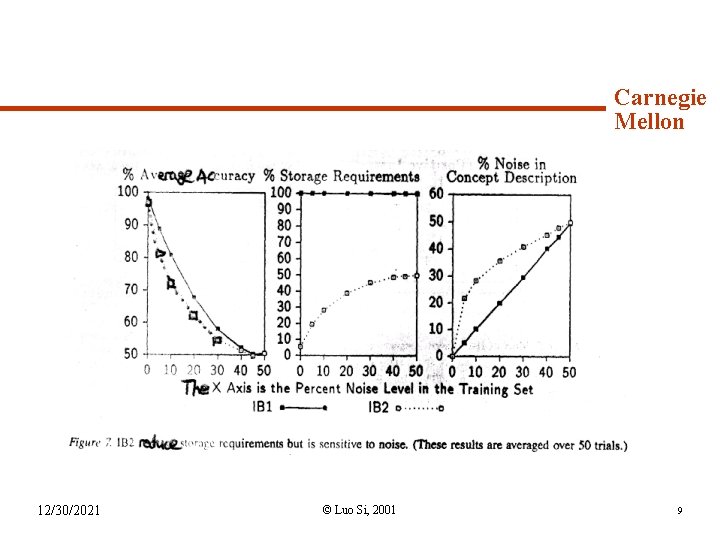


IB3 extends IB2 but employs a “wait and see” evidence-gathering method to determine which of the saved instances are expected to perform well during classification:

1. IB3 maintains a classification record (the number of correct and incorrect classification attempts) with each saved instance. A classification record summarizes an instance’s classification performance on subsequently presented training instances and suggests how it will perform in the future.
2. IB3 employs a significance test to determine which instances are good classifiers and which are believed to be noisy. The former are used to classify subsequently presented instances; the latter are discarded from the concept description.

IB3 algorithm (CD = concept description)

Start with CD = {}. Then, for each x in the training set:

1. For each y in CD, compute Sim[y] = Similarity(x, y).
2. If any y in CD is acceptable, set ymax to the acceptable y in CD with maximal Sim[y]; otherwise, randomly select j in [1, |CD|] and set ymax to the j-th most similar instance to x in CD.
3. If class(x) = class(ymax), the classification is correct; otherwise it is incorrect and x is added to CD.
4. For each y in CD with Sim[y] >= Sim[ymax], update y’s classification record; if y’s record is significantly poor, remove y from CD.
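The following Python sketch of IB3 follows the four steps above. The significance test is not specified here, so the criteria are assumptions: an instance is treated as acceptable when the lower bound of a Wilson score interval on its accuracy exceeds the upper bound of the interval on its class's observed frequency, and as significantly poor when the upper bound of its accuracy interval falls below the lower bound of the class-frequency interval. The z values (1.645 for accepting, 1.15 for dropping), negative Euclidean distance as similarity, and all helper names are likewise assumptions.

```python
import math
import random

Z_ACCEPT = 1.645  # assumed confidence level for accepting an instance (about 90%)
Z_DROP = 1.15     # assumed confidence level for dropping an instance (about 75%)


def wilson(successes, n, z):
    """Wilson score interval (lower, upper) for a proportion; (0, 1) if n == 0."""
    if n == 0:
        return 0.0, 1.0
    p = successes / n
    denom = 1 + z * z / n
    centre = p + z * z / (2 * n)
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return (centre - half) / denom, (centre + half) / denom


def ib3(training_set, seed=0):
    """Return the CD as [features, label, n_correct, n_incorrect] lists."""
    rng = random.Random(seed)
    cd = []
    class_counts = {}  # observed class frequencies among instances seen so far
    seen = 0

    def acceptable(entry):
        n = entry[2] + entry[3]
        acc_lo, _ = wilson(entry[2], n, Z_ACCEPT)
        _, freq_hi = wilson(class_counts[entry[1]], seen, Z_ACCEPT)
        return n > 0 and acc_lo > freq_hi

    def significantly_poor(entry):
        n = entry[2] + entry[3]
        _, acc_hi = wilson(entry[2], n, Z_DROP)
        freq_lo, _ = wilson(class_counts[entry[1]], seen, Z_DROP)
        return n > 0 and acc_hi < freq_lo

    for x, label in training_set:
        seen += 1
        class_counts[label] = class_counts.get(label, 0) + 1
        if not cd:
            cd.append([x, label, 0, 0])  # assumption: first instance stored unconditionally
            continue
        # Step 1: similarity = negative Euclidean distance (assumed).
        sims = [-math.dist(x, entry[0]) for entry in cd]
        # Step 2: best acceptable instance, else the j-th most similar, j random in [1, |CD|].
        accepted = [i for i, entry in enumerate(cd) if acceptable(entry)]
        if accepted:
            i_max = max(accepted, key=lambda i: sims[i])
        else:
            ranked = sorted(range(len(cd)), key=lambda i: sims[i], reverse=True)
            i_max = ranked[rng.randint(1, len(cd)) - 1]
        sim_max = sims[i_max]
        misclassified = cd[i_max][1] != label
        # Step 4 (on previously stored instances): update every y with
        # Sim[y] >= Sim[ymax]; drop y if its record is significantly poor.
        survivors = []
        for entry, s in zip(cd, sims):
            if s >= sim_max:
                entry[2 if entry[1] == label else 3] += 1
                if significantly_poor(entry):
                    continue
            survivors.append(entry)
        cd = survivors
        # Step 3: store x only if it was misclassified.
        if misclassified:
            cd.append([x, label, 0, 0])
    return cd


print(len(ib3([((0.0,), "a"), ((1.0,), "a"), ((2.0,), "a")])))  # prints 1
```

Appending x after the record updates matches the slide's ordering in effect, since Sim[x] is never computed. At test time one would typically classify with the most similar acceptable instance; that step is omitted from the sketch.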

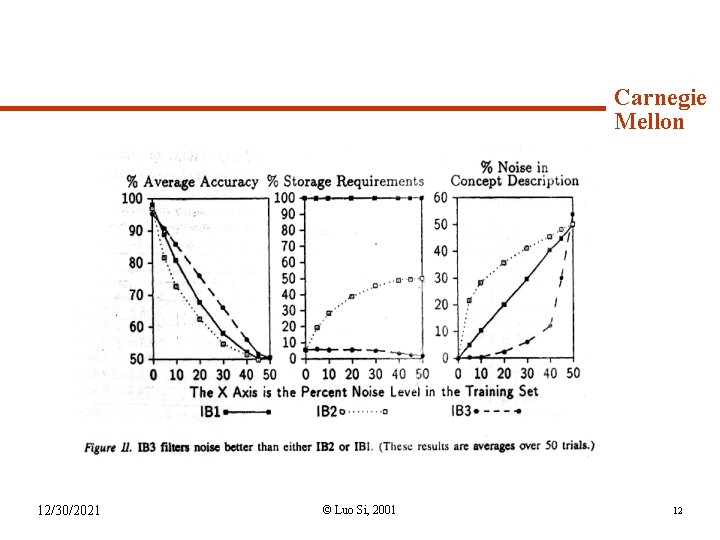


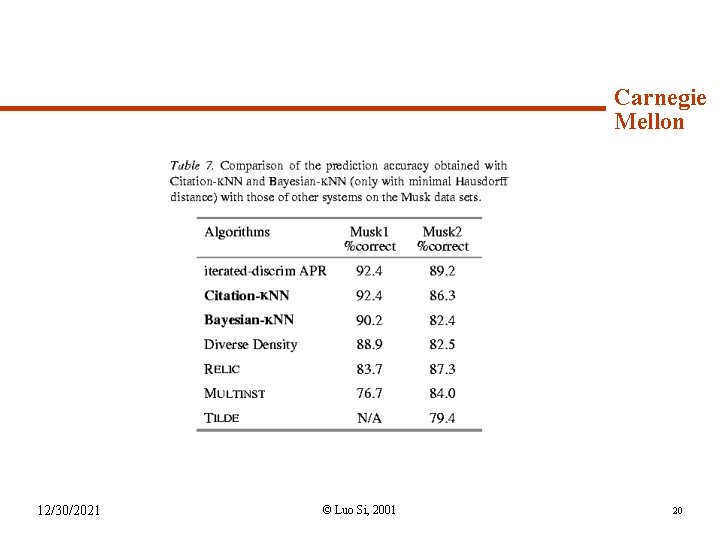

Solving the multiple-instance problem: a lazy learning approach

What is the multiple-instance problem? As opposed to traditional supervised learning, multiple-instance learning concerns the problem of classifying a bag of instances, given training bags that are labeled by a teacher as being positive or negative overall. We investigate the use of lazy learning for the multiple-instance problem and present two variants of the k-nearest neighbor algorithm, called Bayesian-kNN and Citation-kNN.

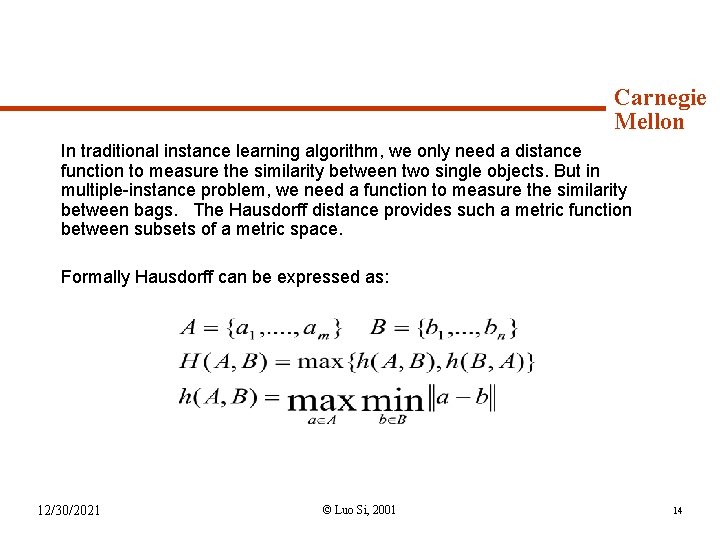

In traditional (single-instance) learning, we only need a distance function to measure the similarity between two individual objects. In the multiple-instance problem, however, we need a function that measures the similarity between bags. The Hausdorff distance provides such a metric between subsets of a metric space. Formally, the Hausdorff distance can be expressed as:

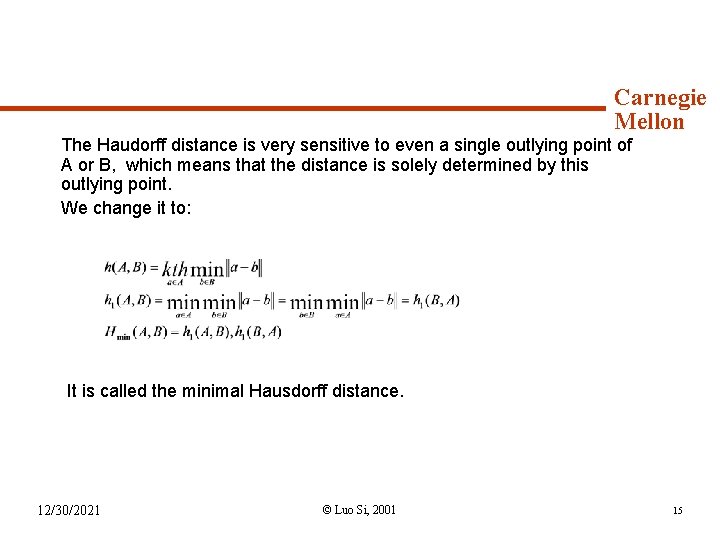
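A direct Python sketch of the Hausdorff distance between two finite bags of points, assuming the standard definition H(A, B) = max(h(A, B), h(B, A)), with directed distance h(A, B) = max over a ∈ A of min over b ∈ B of ||a − b|| and Euclidean ||·||. The toy bags are made up; note how the single outlying point (5, 0) determines the result.

```python
import math


def directed_hausdorff(A, B):
    # h(A, B): the largest distance from a point of A to its nearest point in B.
    return max(min(math.dist(a, b) for b in B) for a in A)


def hausdorff(A, B):
    # H(A, B) = max(h(A, B), h(B, A)).
    return max(directed_hausdorff(A, B), directed_hausdorff(B, A))


A = [(0.0, 0.0), (1.0, 0.0)]
B = [(0.0, 0.0), (5.0, 0.0)]
print(hausdorff(A, B))  # prints 4.0
```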

The Hausdorff distance is very sensitive to even a single outlying point of A or B: the distance can be determined solely by that outlying point. We therefore change it to the following, which is called the minimal Hausdorff distance:

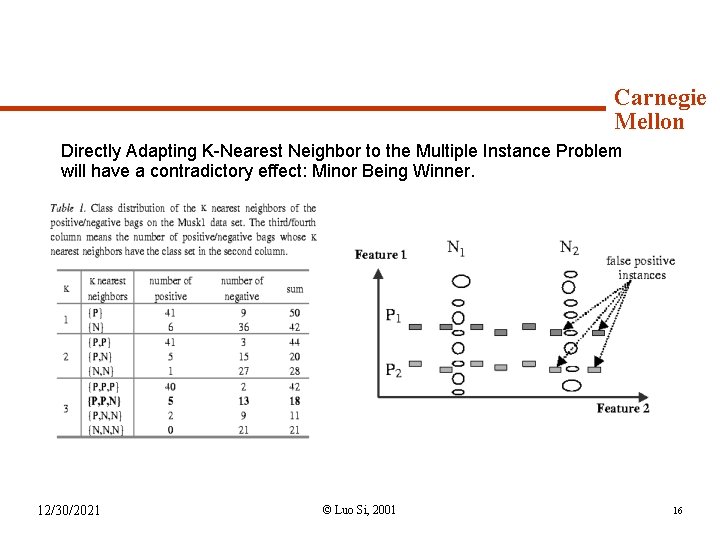
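A sketch of the minimal Hausdorff distance, assuming the min-min form (the smallest distance between any point of A and any point of B) with Euclidean point distances. On the same kind of toy bags, the outlier (5, 0) no longer matters:

```python
import math


def minimal_hausdorff(A, B):
    # Distance between the closest pair of points, one drawn from each bag.
    return min(math.dist(a, b) for a in A for b in B)


A = [(0.0, 0.0), (1.0, 0.0)]
B = [(0.0, 0.0), (5.0, 0.0)]
print(minimal_hausdorff(A, B))  # prints 0.0
```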

Directly adapting k-nearest neighbor to the multiple-instance problem can have a counterintuitive effect: the minority can end up being the winner.

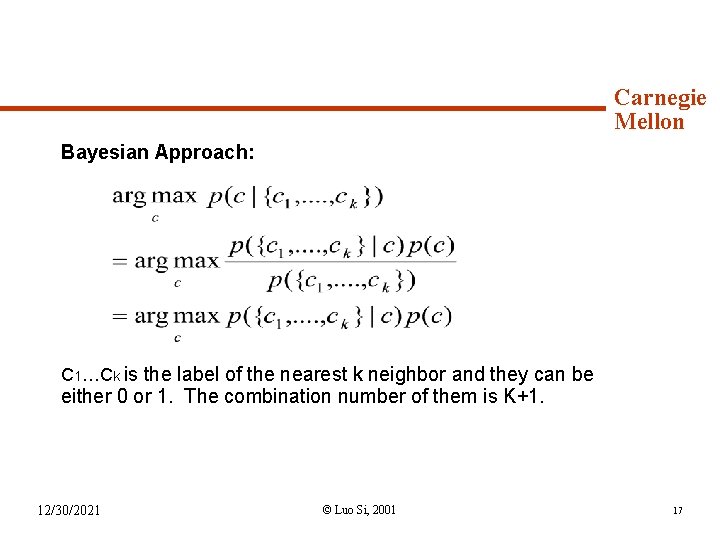

Bayesian approach: c1, …, ck are the labels of the k nearest neighbors, and each can be either 0 (negative) or 1 (positive). Since only the number of positive labels matters, there are k + 1 possible combinations.

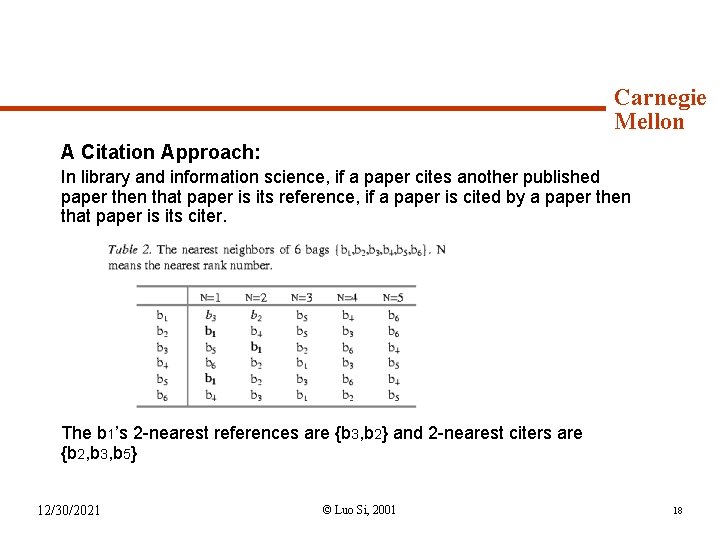
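One way to sketch Bayesian-kNN in Python, under stated assumptions: bags are compared with the minimal Hausdorff distance, the neighbor labels are summarized by m, the number of positive labels among the k nearest bags (one of the k + 1 combinations), and the prediction is the class c maximizing P(c) · P(m | c). Estimating P(m | c) by leave-one-out counts on the training bags with Laplace smoothing is our assumption, not a detail given above, and the function names are hypothetical.

```python
import math
from collections import Counter


def minimal_hausdorff(A, B):
    return min(math.dist(a, b) for a in A for b in B)


def knn_labels(bag, bags, labels, k, skip=None):
    # Labels (0/1) of the k bags nearest to `bag`, optionally excluding index `skip`.
    order = sorted((i for i in range(len(bags)) if i != skip),
                   key=lambda i: minimal_hausdorff(bag, bags[i]))
    return [labels[i] for i in order[:k]]


def fit_bayesian_knn(bags, labels, k):
    # Assumed estimation step: for each training bag, count how many of its k
    # leave-one-out neighbours are positive (m in 0..k), tallied per true class.
    counts = {0: Counter(), 1: Counter()}
    for i, bag in enumerate(bags):
        m = sum(knn_labels(bag, bags, labels, k, skip=i))
        counts[labels[i]][m] += 1
    return counts


def predict_bayesian_knn(bag, bags, labels, k, counts):
    # Choose c maximising P(c) * P(m | c), Laplace-smoothed over the k + 1 values of m.
    m = sum(knn_labels(bag, bags, labels, k))
    best, best_score = 0, -1.0
    for c in (0, 1):
        n_c = sum(counts[c].values())
        score = (n_c / len(labels)) * (counts[c][m] + 1) / (n_c + k + 1)
        if score > best_score:
            best, best_score = c, score
    return best


bags = [[(0, 0), (1, 1)], [(0, 1)], [(10, 10)], [(11, 10), (10, 11)]]
labels = [1, 1, 0, 0]
counts = fit_bayesian_knn(bags, labels, k=1)
print(predict_bayesian_knn([(0.2, 0.1)], bags, labels, 1, counts))  # prints 1
```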

A citation approach: in library and information science, if paper A cites paper B, then B is a reference of A and A is a citer of B. Analogously, a bag's R-nearest references are its R nearest neighbors, and its C-nearest citers are the bags that rank it among their own C nearest neighbors. In the example, b1’s 2-nearest references are {b3, b2} and its 2-nearest citers are {b2, b3, b5}.

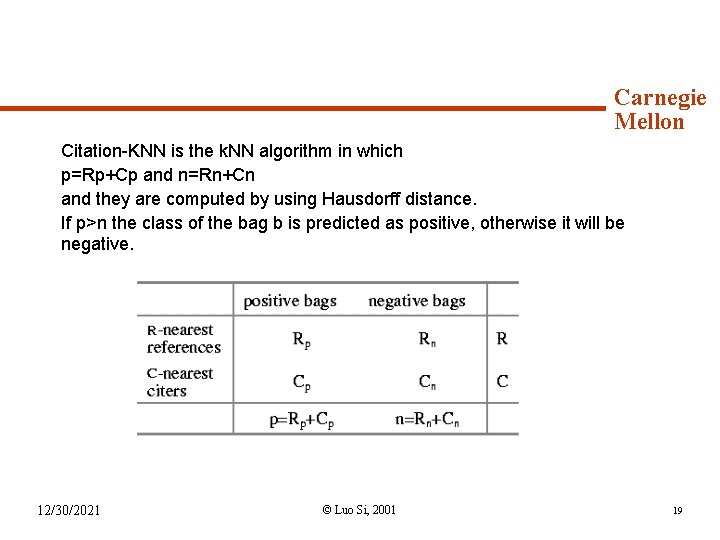
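To make the two definitions concrete, here is a Python sketch that computes R-nearest references and C-nearest citers from a precomputed matrix of bag-to-bag distances (for example, minimal Hausdorff distances). Ties are broken by bag index, which is an assumption; the one-dimensional positions in the usage example are made up and do not reproduce the figure.

```python
def references(dist, b, R):
    # The R bags nearest to bag b (excluding b itself).
    others = sorted((j for j in range(len(dist)) if j != b), key=lambda j: dist[b][j])
    return others[:R]


def citers(dist, b, C):
    # The bags that count b among their own C nearest neighbours.
    return [j for j in range(len(dist)) if j != b and b in references(dist, j, C)]


positions = [0.0, 1.0, 3.0, 10.0]  # toy bags reduced to 1-D points
dist = [[abs(p - q) for q in positions] for p in positions]
print(references(dist, 0, 2), citers(dist, 0, 2))  # prints [1, 2] [1, 2]
```

Bag 3 in this example has two references ([2, 1]) but no citers, since it is far from every other bag.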

Citation-kNN is the kNN variant in which, for a bag b, p = Rp + Cp and n = Rn + Cn, where Rp and Rn are the numbers of positive and negative references of b, Cp and Cn are the numbers of positive and negative citers of b, and all neighbors are computed using the Hausdorff distance. If p > n, the class of bag b is predicted as positive; otherwise it is predicted as negative.
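A self-contained Python sketch of the Citation-kNN decision rule, assuming the minimal Hausdorff distance between bags and 0/1 labels. The query's rank among a training bag's neighbors is taken as 1 plus the number of other training bags strictly closer to that bag, so ties favor the query; this tie-breaking and the function name are assumptions.

```python
import math


def minimal_hausdorff(A, B):
    return min(math.dist(a, b) for a in A for b in B)


def citation_knn(query, bags, labels, R, C):
    """Predict 1 (positive) or 0 (negative) for `query`; labels are 0/1."""
    n_bags = len(bags)
    d_query = [minimal_hausdorff(query, b) for b in bags]
    # References: the R training bags nearest to the query.
    refs = sorted(range(n_bags), key=lambda j: d_query[j])[:R]
    # Citers: training bags j for which the query would rank within their C nearest
    # neighbours; rank = 1 + number of other training bags strictly closer to j.
    cits = []
    for j in range(n_bags):
        closer = sum(1 for i in range(n_bags)
                     if i != j and minimal_hausdorff(bags[j], bags[i]) < d_query[j])
        if closer + 1 <= C:
            cits.append(j)
    neighbours = refs + cits
    p = sum(labels[j] for j in neighbours)   # Rp + Cp
    n = len(neighbours) - p                  # Rn + Cn
    return 1 if p > n else 0


bags = [[(0, 0)], [(0, 1)], [(1, 0)], [(10, 10)], [(10, 11)]]
labels = [1, 1, 1, 0, 0]
print(citation_knn([(0.5, 0.5)], bags, labels, R=2, C=2))  # prints 1
```

A bag that is both a reference and a citer is counted twice, which matches p = Rp + Cp and n = Rn + Cn.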


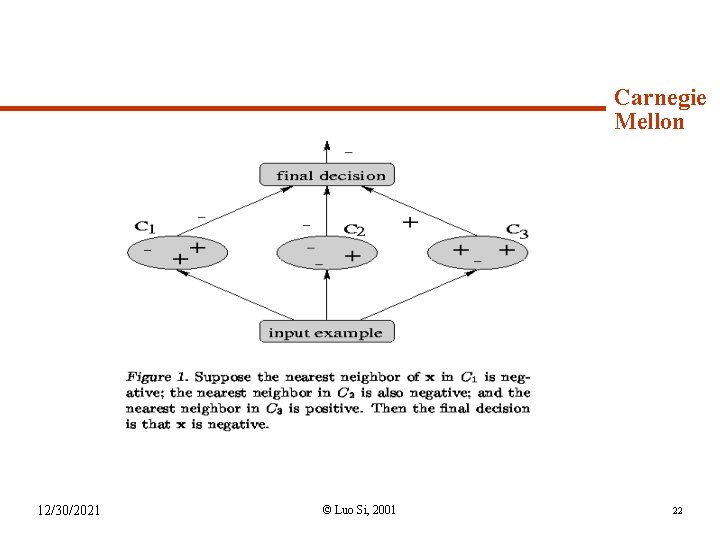

Voting nearest-neighbor subclassifiers

Traditional nearest-neighbor classifiers suffer from capacity-related problems: a very large database cannot be loaded entirely into main memory. This approach selects three very small groups of examples such that, when each group is used as a 1-NN subclassifier, each tends to err in a different part of the instance space.


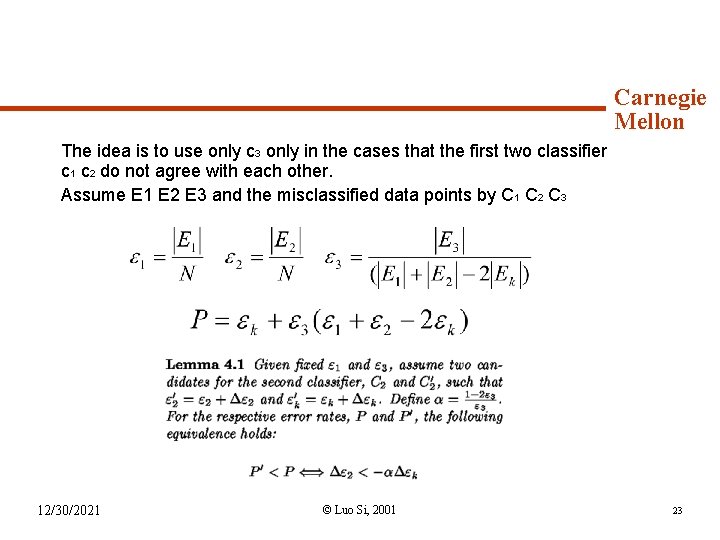

The idea is to use C3 only in cases where the first two classifiers, C1 and C2, disagree with each other. Let E1, E2, and E3 be the sets of data points misclassified by C1, C2, and C3, respectively.
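The combination rule can be sketched in Python as follows. How the three small example groups are selected is not described in this text, so the groups below are arbitrary toy sets and the function names are hypothetical. For two classes, the combined classifier errs exactly on (E1 ∩ E2) ∪ (E3 ∩ (E1 △ E2)), which `combined_errors` computes on sets of point indices; this is why subclassifiers that err in different regions help.

```python
import math


def one_nn(examples, x):
    # 1-NN subclassifier over a small set of (features, label) examples.
    _, label = min(examples, key=lambda e: math.dist(e[0], x))
    return label


def vote(c1, c2, c3, x):
    y1, y2 = one_nn(c1, x), one_nn(c2, x)
    # C3 is consulted only when C1 and C2 disagree.
    return y1 if y1 == y2 else one_nn(c3, x)


def combined_errors(E1, E2, E3):
    # Two-class case: both C1 and C2 wrong (they then agree on the wrong label),
    # or exactly one of them wrong and C3 wrong as well.
    return (E1 & E2) | (E3 & (E1 ^ E2))


c1 = [((0.0,), "a"), ((10.0,), "b")]
c2 = [((0.0,), "a"), ((4.0,), "b")]
c3 = [((0.0,), "a"), ((6.0,), "b")]
print(vote(c1, c2, c3, (2.5,)))  # prints a
```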


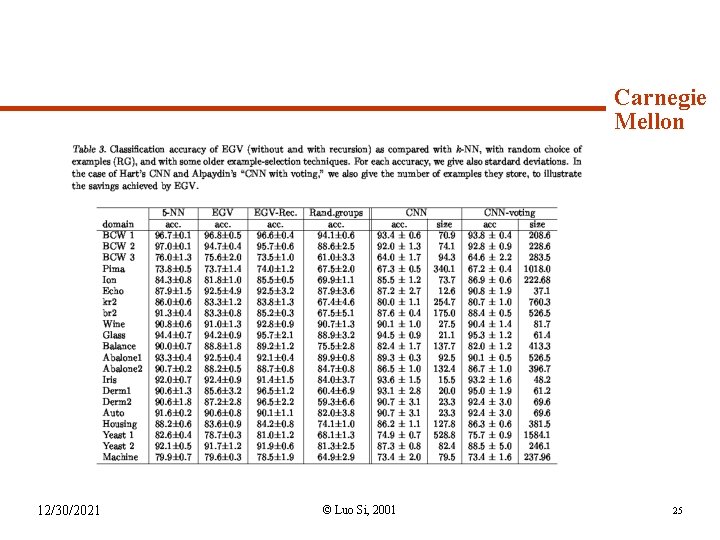


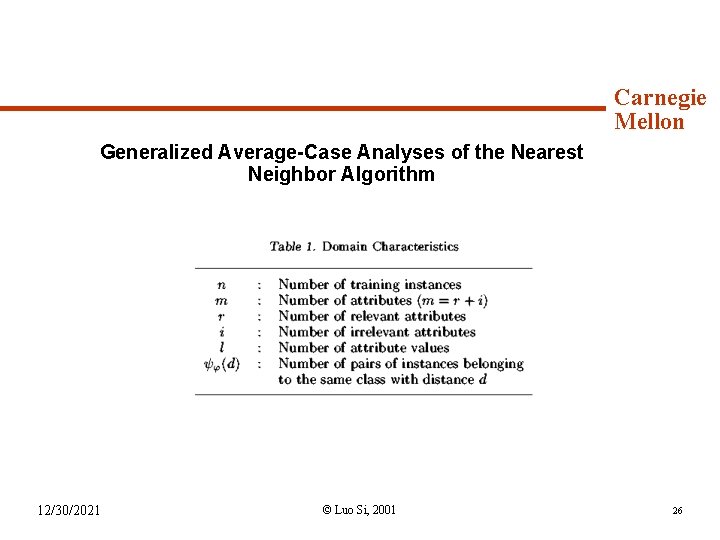

Generalized average-case analyses of the nearest neighbor algorithm

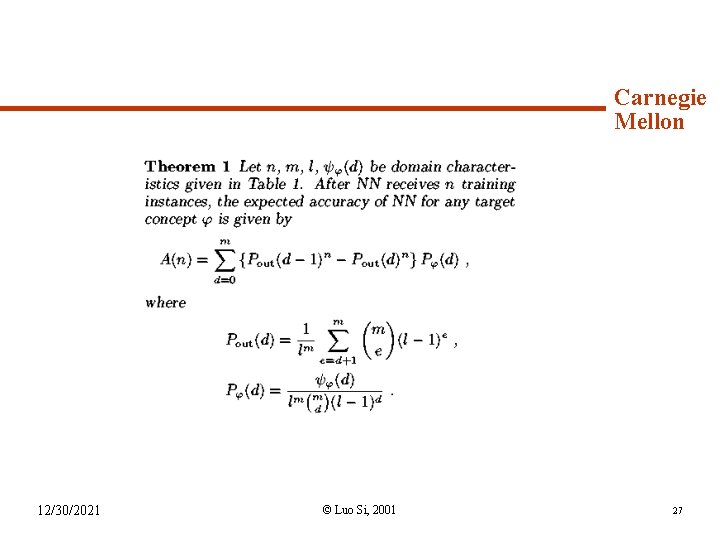


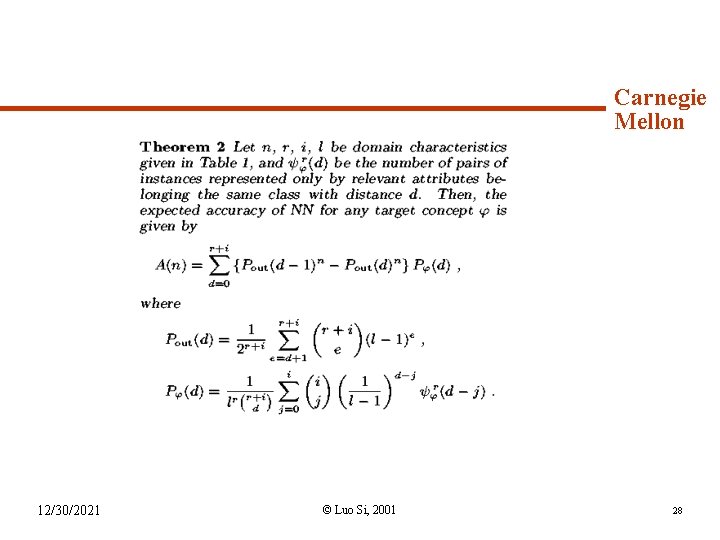


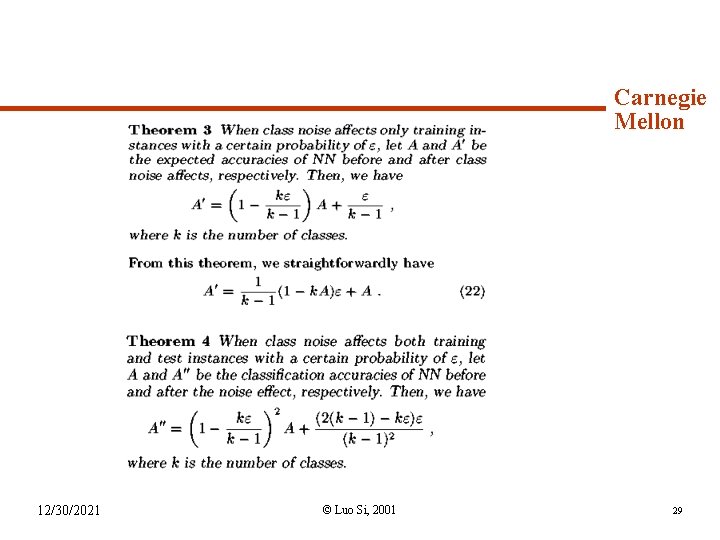


References:

- Duda & Hart, "Pattern Classification."
- D. Aha, D. Kibler, and M. Albert. "Instance-based learning algorithms." Machine Learning, 6:37-66, 1991.
- J. Wang and J. D. Zucker. "Solving the Multiple-Instance Problem: A Lazy Learning Approach." ICML'00, Proceedings, pp. 1119-1134.
- S. Okamoto and N. Yugami. "Generalized Average-Case Analyses of the Nearest Neighbor Algorithm." ICML'00.
- M. Kubat and M. Cooperson, Jr. "Voting Nearest-Neighbor Subclassifiers." ICML'00, pp. 503-510.
