NEAREST NEIGHBOR (NN), KNN AND BAYES CLASSIFIER Guoqing Tang June 10, 2019

Outline of the Presentation
§ Feature spaces and measures of similarity
§ Nearest neighbor classifier: k-nearest neighbors
§ Bayes’ theorem and Bayesian prediction
§ Bayes classifier: naïve Bayes classifier

Similarity-Based Learning
§ Similarity-based approaches to machine learning come from the idea that:
  » Similar examples have similar labels, and
  » New examples should be classified like similar training examples.
§ The fundamental concepts required to build a model based on this idea are:
  » Feature spaces, and
  » Measures of similarity
§ Algorithm:
  » Given some new example x for which we need to predict its class y
  » Find the most similar training examples
  » Classify x “like” these most similar examples
§ Questions:
  » How to determine similarity?
  » How many similar training examples to consider?
  » How to resolve inconsistencies among the training examples?

An Example
§ One day in 1798, after an expedition up the Hawkesbury River in New South Wales, a sailor named Jim Smith from the expedition told his boss, Lt. Col. David Collins, that he had seen a strange animal near the river.
§ Lt. Col. Collins asked Jim to describe the animal. Jim explained that he had not seen it very well because the animal growled at him as he approached, so he did not get too close. But he did notice that the animal had webbed feet and a duck-billed snout.
§ Based on Jim’s description, Lt. Col. Collins decided that he needed to classify the animal so that he could determine whether it was dangerous to approach.
§ He did this by thinking about the animals he could remember coming across before and comparing their features with the features Jim had described.
§ The figure below illustrates this process by listing some of the animals he had encountered before and how they compared with the growling, web-footed, duck-billed animal that Jim described.

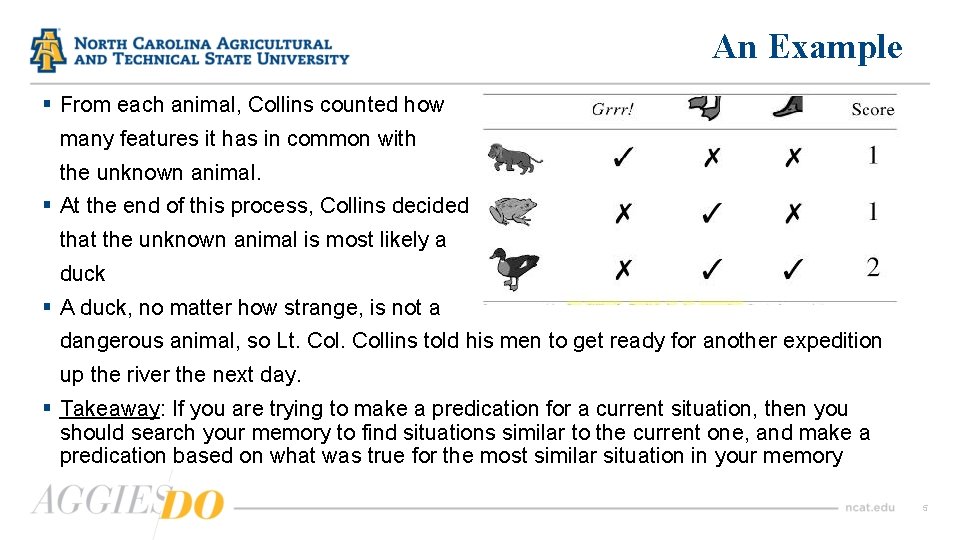

An Example
§ For each animal, Collins counted how many features it has in common with the unknown animal.
§ At the end of this process, Collins decided that the unknown animal is most likely a duck.
§ A duck, no matter how strange, is not a dangerous animal, so Lt. Col. Collins told his men to get ready for another expedition up the river the next day.
§ Takeaway: If you are trying to make a prediction for a current situation, search your memory for situations similar to the current one, and make a prediction based on what was true for the most similar situation in your memory.

Feature Space
§ As illustrated by the above example, a key component of the similarity-based approach to prediction is a computational measure of similarity between instances.
§ Often this measure of similarity is some form of distance measure.
§ If we want to compute distances between instances, we need a concept of feature space in the representation of the domain used by our similarity-based model.
§ We formally define a feature space as an abstract m-dimensional space by:
  » making each descriptive feature in a dataset an axis of an m-dimensional coordinate system; and
  » mapping each instance in the dataset to a point in this coordinate system based on the values of its descriptive features.

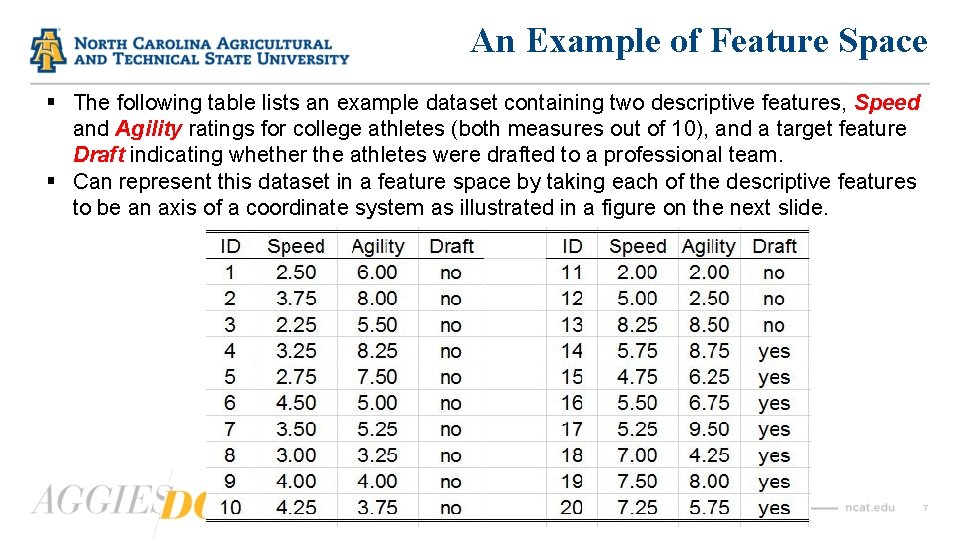

An Example of Feature Space
§ The following table lists an example dataset containing two descriptive features, the Speed and Agility ratings for college athletes (both measured out of 10), and a target feature, Draft, indicating whether the athletes were drafted to a professional team.
§ We can represent this dataset in a feature space by taking each of the descriptive features to be an axis of a coordinate system, as illustrated in the figure on the next slide.

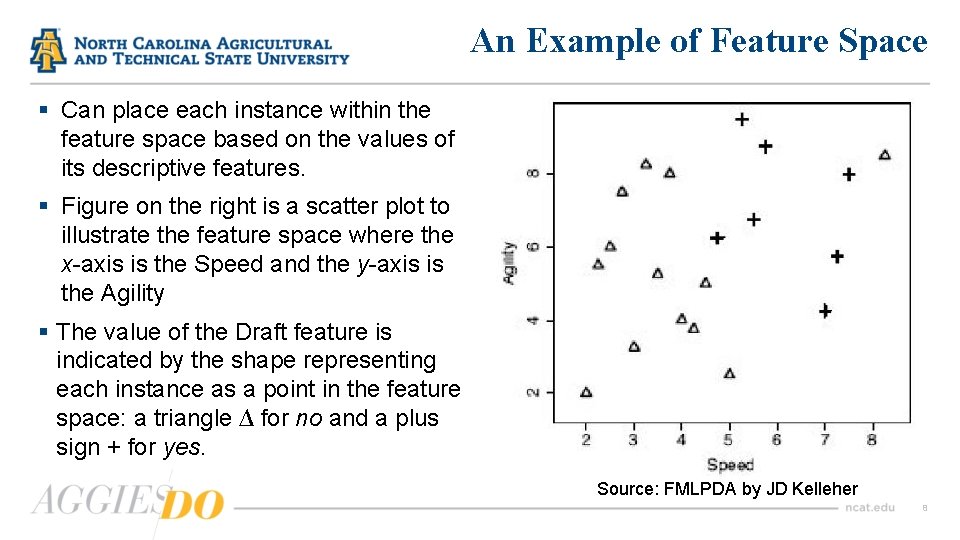

An Example of Feature Space
§ We can place each instance within the feature space based on the values of its descriptive features.
§ The figure on the right is a scatter plot of the feature space, where the x-axis is the Speed and the y-axis is the Agility.
§ The value of the Draft feature is indicated by the shape representing each instance as a point in the feature space: a triangle ∆ for no and a plus sign + for yes.
Source: FMLPDA by JD Kelleher

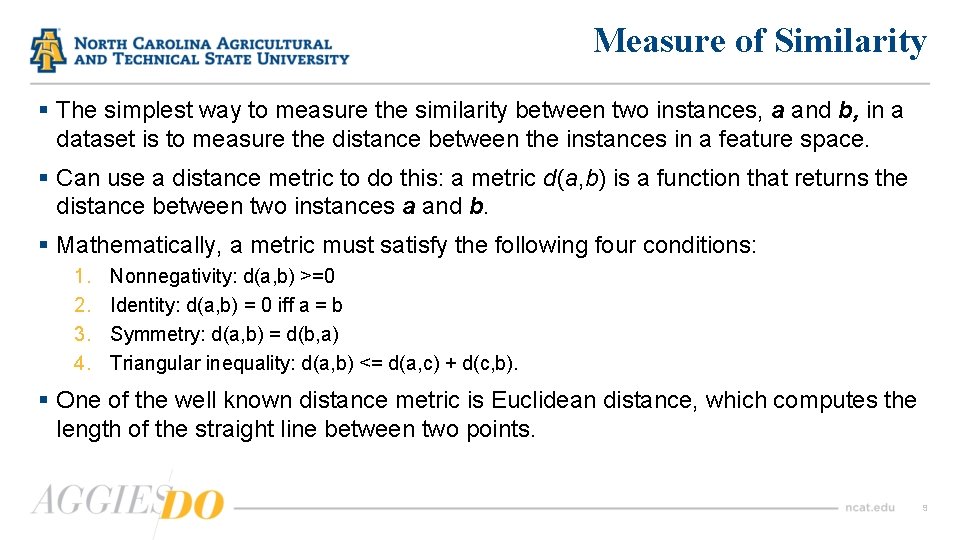

Measure of Similarity
§ The simplest way to measure the similarity between two instances, a and b, in a dataset is to measure the distance between the instances in a feature space.
§ We can use a distance metric to do this: a metric d(a, b) is a function that returns the distance between two instances a and b.
§ Mathematically, a metric must satisfy the following four conditions:
  1. Nonnegativity: $d(a, b) \ge 0$
  2. Identity: $d(a, b) = 0$ iff $a = b$
  3. Symmetry: $d(a, b) = d(b, a)$
  4. Triangle inequality: $d(a, b) \le d(a, c) + d(c, b)$
§ One of the best-known distance metrics is Euclidean distance, which computes the length of the straight line between two points.

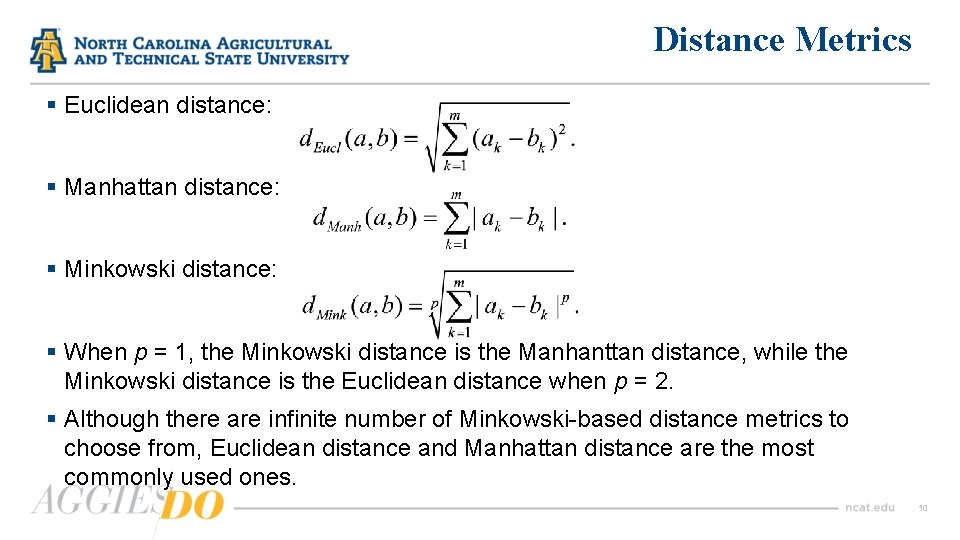

Distance Metrics
§ Euclidean distance: $d(a, b) = \sqrt{\sum_{i=1}^{m} (a_i - b_i)^2}$
§ Manhattan distance: $d(a, b) = \sum_{i=1}^{m} |a_i - b_i|$
§ Minkowski distance: $d(a, b) = \left( \sum_{i=1}^{m} |a_i - b_i|^p \right)^{1/p}$
§ When p = 1, the Minkowski distance is the Manhattan distance; when p = 2, it is the Euclidean distance.
§ Although there is an infinite number of Minkowski-based distance metrics to choose from, Euclidean distance and Manhattan distance are the most commonly used.
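
The relationship between the three metrics can be checked numerically. The following is a minimal MATLAB sketch, not part of the original slides: the helper name `minkowski` is made up for illustration, and the two points are the query (6.75, 3) and athlete 18 from the college athlete draft example used elsewhere in these slides.

```matlab
% Minkowski distance between two feature vectors a and b.
% p = 1 gives the Manhattan distance, p = 2 the Euclidean distance.
% (minkowski is a hypothetical helper name, not a built-in function.)
minkowski = @(a, b, p) sum(abs(a - b).^p)^(1/p);

q   = [6.75, 3.00];   % query athlete: [Speed, Agility]
a18 = [7.00, 4.25];   % athlete with ID 18

dManhattan = minkowski(q, a18, 1);
dEuclidean = minkowski(q, a18, 2);
fprintf('Manhattan: %.2f, Euclidean: %.2f\n', dManhattan, dEuclidean);
```

This prints `Manhattan: 1.50, Euclidean: 1.27`, matching the first row of the distance table in the draft example.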

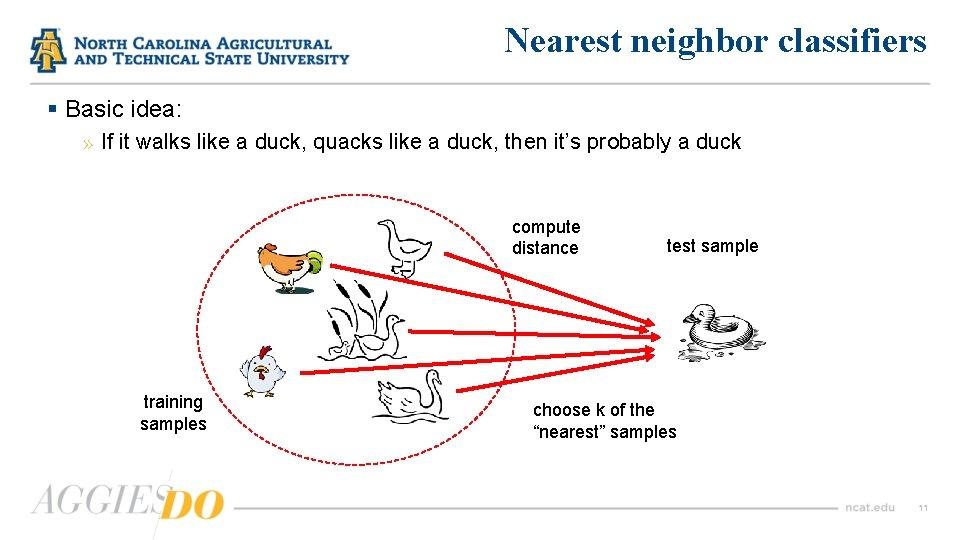

Nearest neighbor classifiers
§ Basic idea:
  » If it walks like a duck and quacks like a duck, then it’s probably a duck
[Figure: compute the distance from the test sample to the training samples, then choose k of the “nearest” samples]

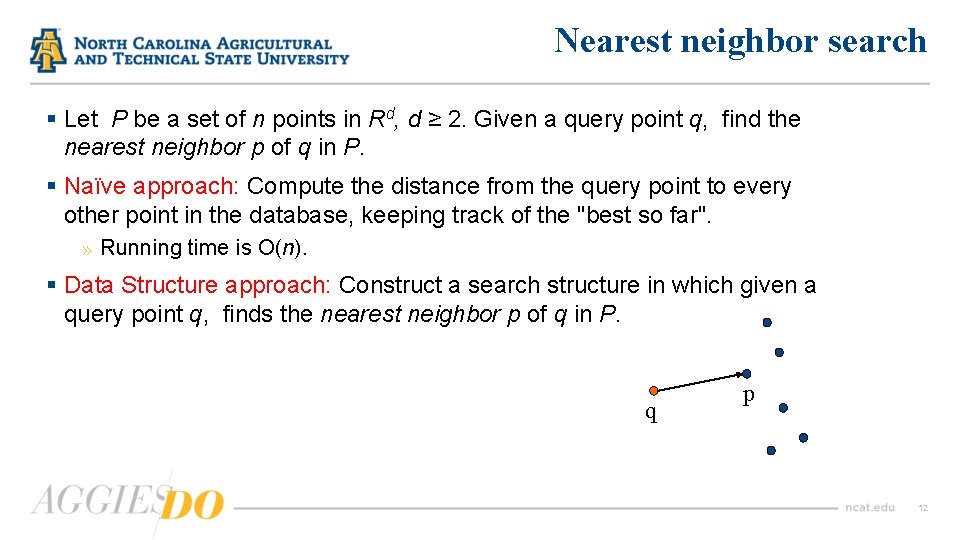

Nearest neighbor search
§ Let P be a set of n points in $\mathbb{R}^d$, $d \ge 2$. Given a query point q, find the nearest neighbor p of q in P.
§ Naïve approach: Compute the distance from the query point to every other point in the database, keeping track of the "best so far".
  » Running time is O(n) per query for a fixed dimension d.
§ Data structure approach: Construct a search structure that, given a query point q, finds the nearest neighbor p of q in P.

Nearest neighbor algorithm
Pseudocode description of the nearest neighbor algorithm
§ Require: a set of training instances
§ Require: a query instance
  1. Iterate across the instances in memory to find the nearest neighbor: this is the instance with the shortest distance across the feature space to the query instance.
  2. Make a prediction for the query instance that is equal to the value of the target feature of the nearest neighbor.
§ The default distance metric used in nearest neighbor models is Euclidean distance.
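
The two steps of the pseudocode can be sketched in a few lines of base MATLAB. This is an illustrative sketch only: it uses three athletes (IDs 18, 12 and 10) and the query (6.75, 3) from the draft example in these slides, and it assumes MATLAB R2016b or later so that `X - q` expands the row vector across all rows.

```matlab
% Training instances: rows are athletes, columns are [Speed, Agility].
X = [7.00 4.25;    % ID 18
     5.00 2.50;    % ID 12
     4.25 3.75];   % ID 10
drafted = [true; false; false];   % target feature Draft for each row

q = [6.75 3.00];   % query instance

% Step 1: Euclidean distance from the query to every stored instance,
% then pick the instance with the shortest distance.
d = sqrt(sum((X - q).^2, 2));
[~, nearest] = min(d);

% Step 2: predict the target value of the nearest neighbor.
prediction = drafted(nearest);
```

Here the nearest instance is the first row (ID 18), so `prediction` is `true` (drafted). The linear scan over all rows is the naïve O(n) search; nothing is precomputed.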

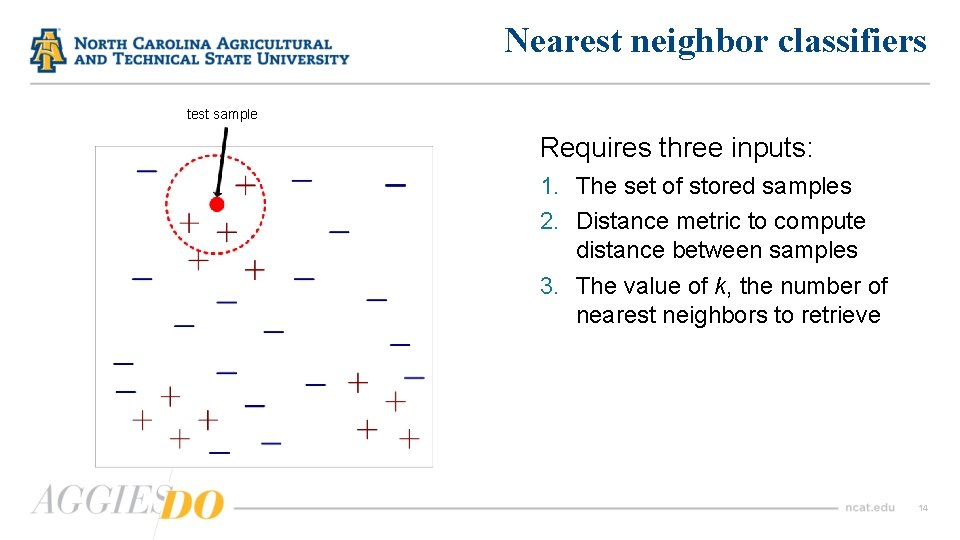

Nearest neighbor classifiers
Requires three inputs:
  1. The set of stored samples
  2. A distance metric to compute the distance between samples
  3. The value of k, the number of nearest neighbors to retrieve

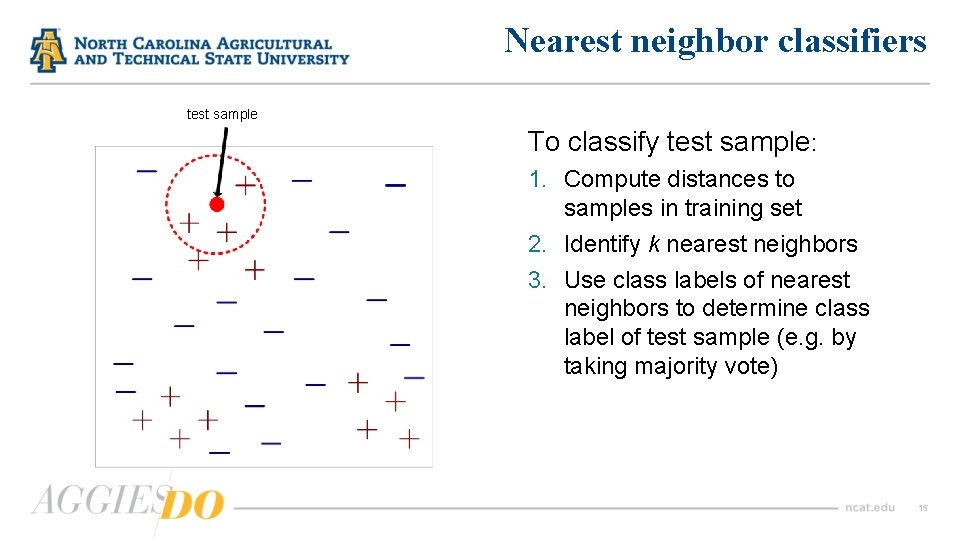

Nearest neighbor classifiers
To classify a test sample:
  1. Compute distances to the samples in the training set
  2. Identify the k nearest neighbors
  3. Use the class labels of the nearest neighbors to determine the class label of the test sample (e.g., by taking a majority vote)

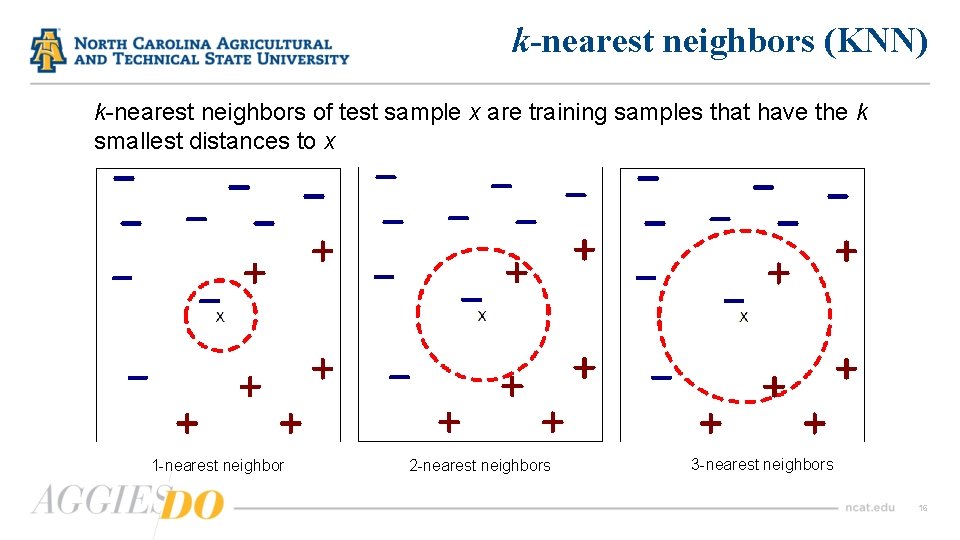

k-nearest neighbors (KNN)
The k-nearest neighbors of a test sample x are the training samples that have the k smallest distances to x.
[Figure panels: 1-nearest neighbor, 2-nearest neighbors, 3-nearest neighbors]

KNN
§ KNN is a non-parametric supervised learning technique in which we classify a data point into a given category with the help of the training set.
§ In simple words, it stores all training cases and classifies new cases based on similarity.
§ Predictions are made for a new instance x by searching through the entire training set for the k most similar cases (neighbors) and summarizing the output variable for those k cases.
§ In classification, this summary is the mode (most common) class value.

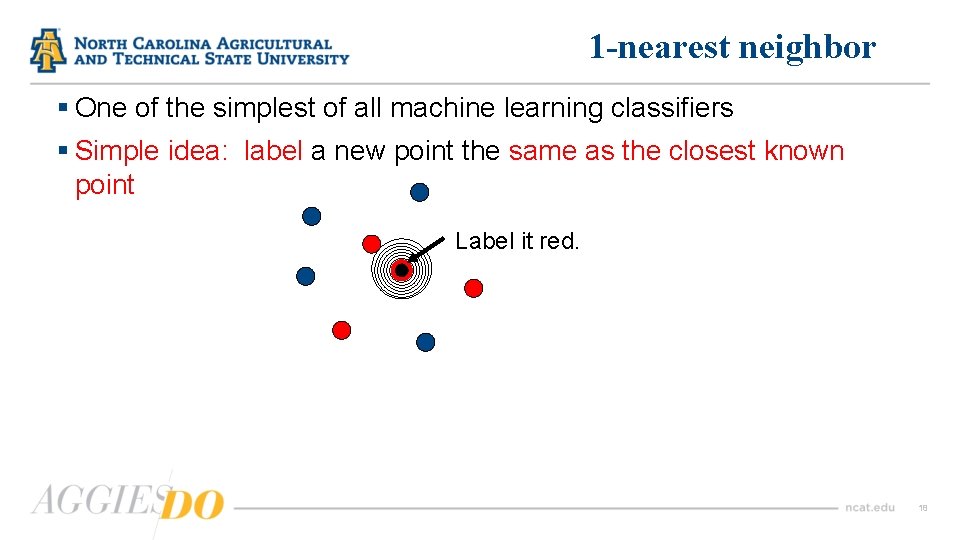

1-nearest neighbor
§ One of the simplest of all machine learning classifiers
§ Simple idea: label a new point the same as the closest known point
[Figure: the new point is labeled red.]

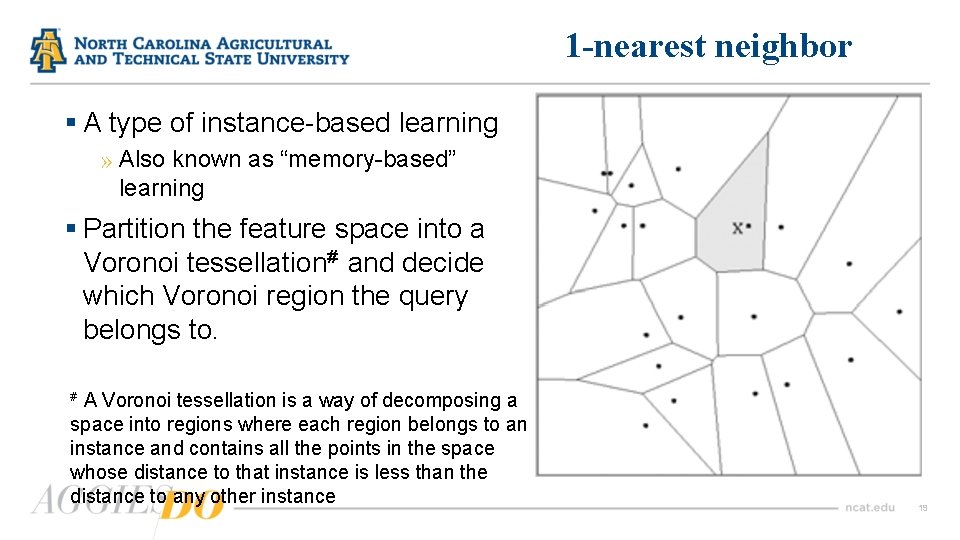

1-nearest neighbor
§ A type of instance-based learning
  » Also known as “memory-based” learning
§ Partition the feature space into a Voronoi tessellation and decide which Voronoi region the query belongs to.
§ A Voronoi tessellation is a way of decomposing a space into regions, where each region belongs to an instance and contains all the points in the space whose distance to that instance is less than the distance to any other instance.

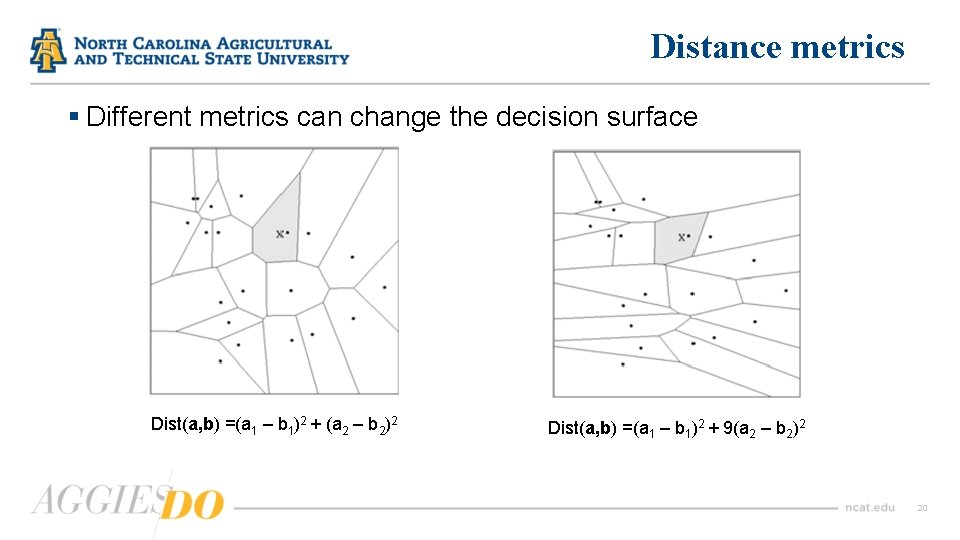

Distance metrics
§ Different metrics can change the decision surface
  » $\mathrm{Dist}(a, b) = (a_1 - b_1)^2 + (a_2 - b_2)^2$
  » $\mathrm{Dist}(a, b) = (a_1 - b_1)^2 + 9(a_2 - b_2)^2$

1-NN as an instance-based learner
§ A distance metric
  » Euclidean
  » When different units are used for each dimension, normalize each dimension by its standard deviation
  » For discrete data, can use Hamming distance: $d(x_1, x_2)$ = number of features on which $x_1$ and $x_2$ differ
  » Others (e.g., normal, cosine)
§ How many nearby neighbors to look at?
  » One
§ How to fit with the local points?
  » Just predict the same output as the nearest neighbor.

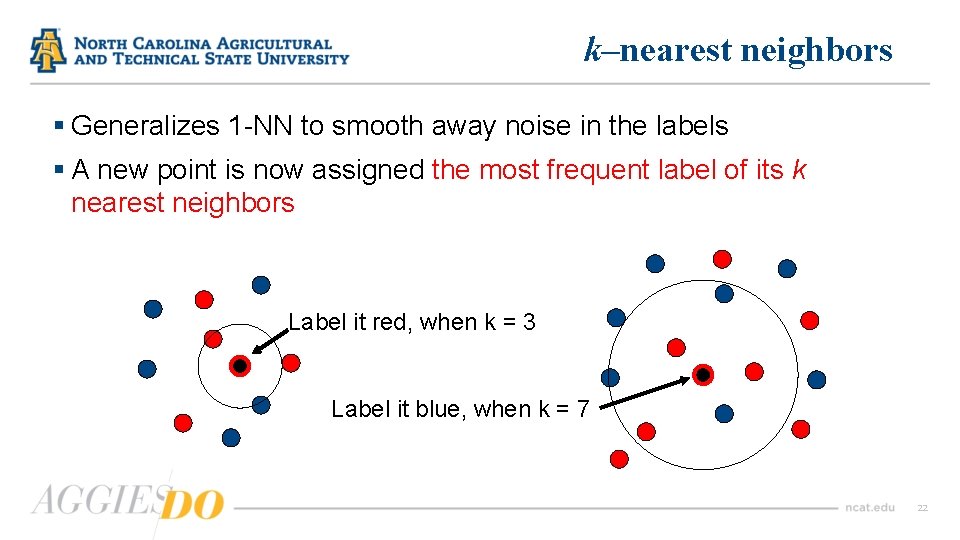

k-nearest neighbors
§ Generalizes 1-NN to smooth away noise in the labels
§ A new point is now assigned the most frequent label of its k nearest neighbors
[Figure: the new point is labeled red when k = 3 and blue when k = 7.]

Selecting k value
§ Increase k:
  » Makes KNN less sensitive to noise
§ Decrease k:
  » Allows capturing finer structure of the space
§ Pick k not too large, but not too small (depends on the data)

Curse of Dimensionality
§ Prediction accuracy can quickly degrade when the number of attributes grows.
  » Irrelevant attributes easily “swamp” information from relevant attributes
  » When there are many irrelevant attributes, the similarity/distance measure becomes less reliable
§ Remedy
  » Try to remove irrelevant attributes in a pre-processing step
  » Weight attributes differently
  » Increase k (but not too much)

Pros and cons of KNN
§ Pros
  » Easy to understand
  » No assumptions about the data
  » Can be applied to both classification and regression
  » Works easily on multi-class problems
§ Cons
  » Memory intensive / computationally expensive
  » Sensitive to the scale of the data
  » Does not work well on a rare-event (skewed) target variable
  » Struggles when there is a high number of independent variables
§ A small value of k will lead to a large variance in predictions.
§ Setting k to a large value may lead to a large model bias.

How to find best k value
§ Cross-validation is a smart way to find the optimal k value. It estimates the validation error rate by holding out a subset of the training set from the model building process.
§ Cross-validation (say, 10-fold) involves randomly dividing the training set into 10 groups, or folds, of approximately equal size. In each round, 90% of the data is used to train the model and the remaining 10% to validate it.
§ The misclassification rate is then computed on the 10% validation data. This procedure repeats 10 times.
§ A different group of observations is treated as the validation set each of the 10 times.
§ This results in 10 estimates of the validation error, which are then averaged.

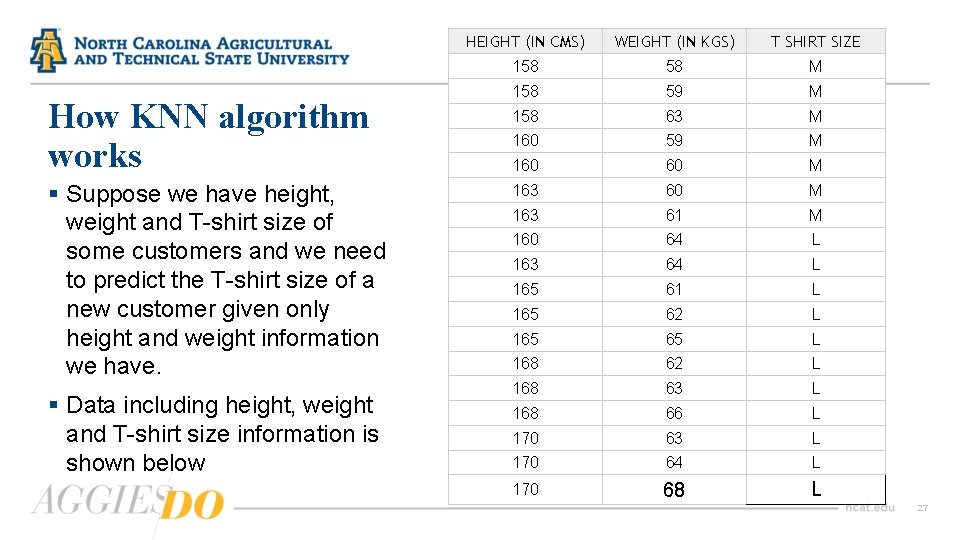

How KNN algorithm works
§ Suppose we have the height, weight and T-shirt size of some customers, and we need to predict the T-shirt size of a new customer given only that customer's height and weight.
§ The data, including height, weight and T-shirt size, is shown below.

| Height (cm) | Weight (kg) | T-shirt size |
|---|---|---|
| 158 | 58 | M |
| 158 | 59 | M |
| 158 | 63 | M |
| 160 | 59 | M |
| 160 | 60 | M |
| 163 | 61 | M |
| 160 | 64 | L |
| 163 | 64 | L |
| 165 | 61 | L |
| 165 | 62 | L |
| 165 | 65 | L |
| 168 | 62 | L |
| 168 | 63 | L |
| 168 | 66 | L |
| 170 | 63 | L |
| 170 | 64 | L |
| 170 | 68 | L |

Step 1: Calculate similarity based on distance function
§ There are many distance functions, but Euclidean is the most commonly used measure. It is mainly used when the data is continuous.
§ The Euclidean distance between two n-dimensional points x and u is $d(x, u) = \sqrt{(x_1 - u_1)^2 + (x_2 - u_2)^2 + \dots + (x_n - u_n)^2}$
§ Manhattan distance is also very common for continuous variables.
§ The Manhattan distance between two n-dimensional points x and u is $d(x, u) = |x_1 - u_1| + |x_2 - u_2| + \dots + |x_n - u_n|$
§ The idea is to use the distance measure to find the distance (similarity) between the new sample and the training cases, and then find the k customers closest to the new customer in terms of height and weight.

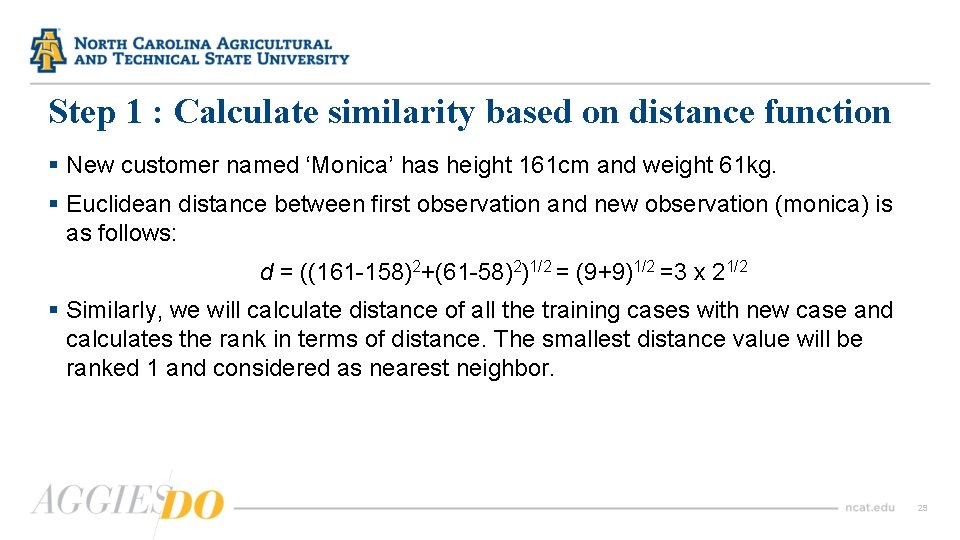

Step 1: Calculate similarity based on distance function
§ A new customer named ‘Monica’ has height 161 cm and weight 61 kg.
§ The Euclidean distance between the first observation and the new observation (Monica) is: $d = \sqrt{(161 - 158)^2 + (61 - 58)^2} = \sqrt{9 + 9} = 3\sqrt{2} \approx 4.24$
§ Similarly, we calculate the distance from every training case to the new case and rank the cases by distance. The smallest distance is ranked 1 and is considered the nearest neighbor.

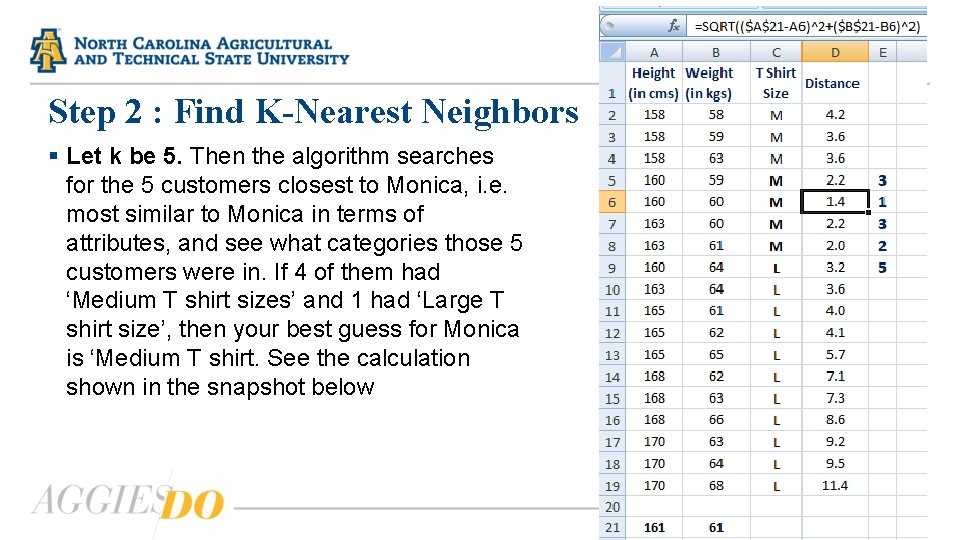

Step 2: Find K-Nearest Neighbors
§ Let k be 5. The algorithm then searches for the 5 customers closest to Monica, i.e., most similar to Monica in terms of attributes, and sees what categories those 5 customers were in. If 4 of them had ‘Medium T-shirt size’ and 1 had ‘Large T-shirt size’, then the best guess for Monica is ‘Medium T-shirt size’. See the calculation shown in the snapshot below.
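
Steps 1 and 2 can be reproduced on the T-shirt table with a short base-MATLAB sketch. This is an illustration under a few assumptions, not the original author's code: sizes are encoded as a logical vector (`true` for L), the variable names are made up, and MATLAB R2016b or later is assumed for the implicit expansion in `X - monica`.

```matlab
% Training data from the T-shirt table: [height_cm, weight_kg].
X = [158 58; 158 59; 158 63; 160 59; 160 60; 163 61; ...
     160 64; 163 64; 165 61; 165 62; 165 65; 168 62; ...
     168 63; 168 66; 170 63; 170 64; 170 68];
isLarge = [false(6, 1); true(11, 1)];   % first 6 rows are M, the rest are L

monica = [161 61];   % new customer
k = 5;

% Step 1: Euclidean distance from Monica to every training case.
d = sqrt(sum((X - monica).^2, 2));

% Step 2: rank by distance (rank 1 = nearest) and keep the k closest.
[~, order] = sort(d);
neighbors = order(1:k);

% Majority vote among the k neighbors.
nLarge = sum(isLarge(neighbors));
if nLarge > k/2
    predictedSize = 'L';
else
    predictedSize = 'M';
end
```

With this table, several cases tie for fifth place; the vote among the five selected neighbors is 4 Medium to 1 Large, so `predictedSize` is `'M'`, in line with the slide.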

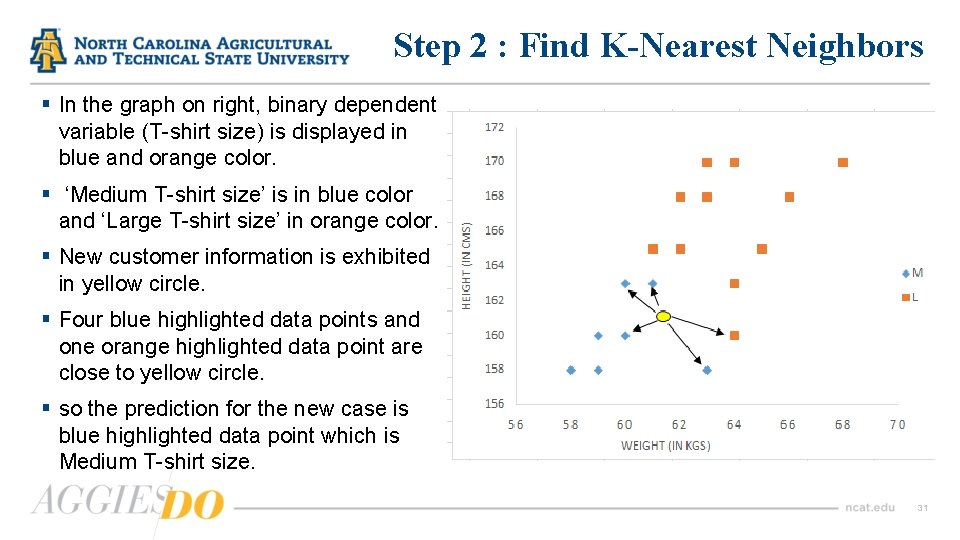

Step 2: Find K-Nearest Neighbors
§ In the graph on the right, the binary dependent variable (T-shirt size) is displayed in blue and orange.
§ ‘Medium T-shirt size’ is in blue and ‘Large T-shirt size’ is in orange.
§ The new customer is shown as a yellow circle.
§ Four highlighted blue data points and one highlighted orange data point are close to the yellow circle.
§ So the prediction for the new case is the blue class, which is Medium T-shirt size.

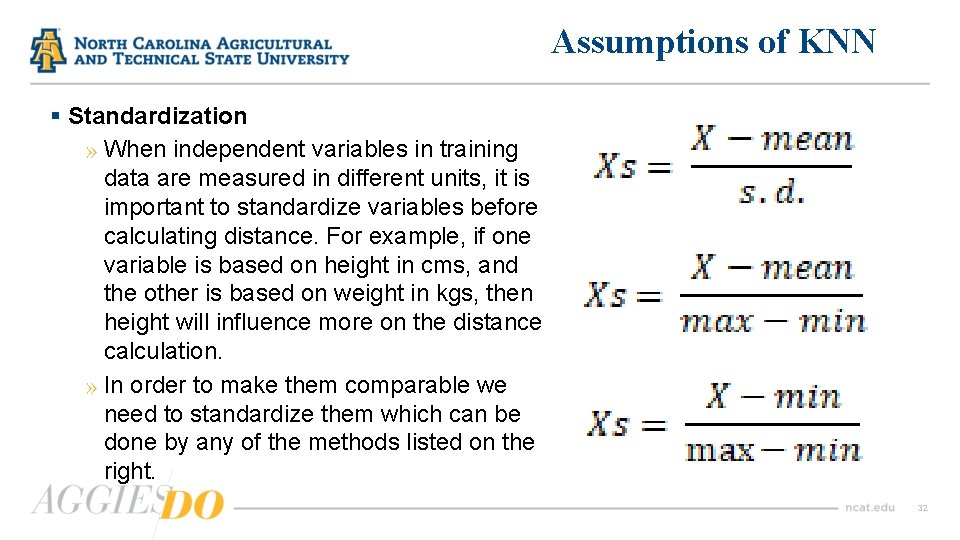

Assumptions of KNN
§ Standardization
  » When the independent variables in the training data are measured in different units, it is important to standardize the variables before calculating distances. For example, if one variable is height in cm and the other is weight in kg, then height will have more influence on the distance calculation.
  » To make them comparable, we need to standardize them, which can be done by any of the methods listed on the right.

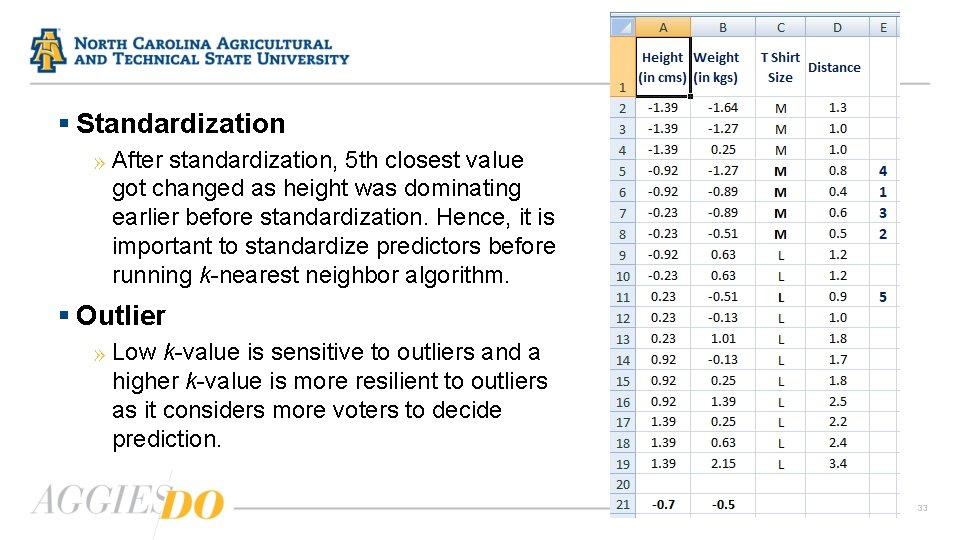
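
As a rough illustration of standardization, the sketch below applies two widely used rescalings, z-score and min-max, to a few rows of the T-shirt table. Which methods the figure lists is not recoverable from the text, so the choice of these two is an assumption, as are the variable names. MATLAB R2016b or later is assumed for implicit expansion.

```matlab
% A few rows of the T-shirt table: [height_cm, weight_kg].
X = [158 58; 160 59; 163 61; 165 65; 170 68];

% Z-score: subtract each column's mean and divide by its standard deviation.
Xz = (X - mean(X, 1)) ./ std(X, 0, 1);

% Min-max: rescale each column to the range [0, 1].
colMin = min(X, [], 1);
colMax = max(X, [], 1);
Xmm = (X - colMin) ./ (colMax - colMin);
```

A query point has to be rescaled with the same column statistics (the training means and standard deviations, or the training minima and maxima) before distances are computed.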

§ Standardization
  » After standardization, the 5th closest neighbor changed, because height was dominating the distance before standardization. Hence, it is important to standardize the predictors before running the k-nearest neighbor algorithm.
§ Outlier
  » A low k value is sensitive to outliers, and a higher k value is more resilient to outliers because it considers more voters when deciding the prediction.

Why KNN is non-parametric
§ Non-parametric means not making any assumptions about the underlying data distribution.
§ Non-parametric methods do not have a fixed number of parameters in the model.
§ Similarly, in KNN the model parameters actually grow with the training data set: you can imagine each training case as a “parameter” in the model.

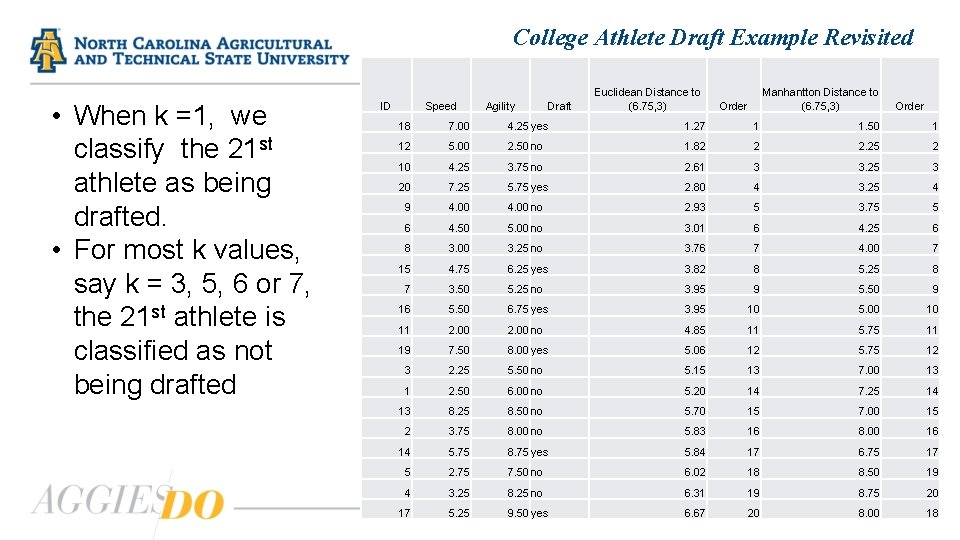

College Athlete Draft Example Revisited
• When k = 1, we classify the 21st athlete as being drafted.
• For most k values, say k = 3, 5, 6 or 7, the 21st athlete is classified as not being drafted.

| ID | Speed | Agility | Draft | Euclidean distance to (6.75, 3) | Order | Manhattan distance to (6.75, 3) | Order |
|---|---|---|---|---|---|---|---|
| 18 | 7.00 | 4.25 | yes | 1.27 | 1 | 1.50 | 1 |
| 12 | 5.00 | 2.50 | no | 1.82 | 2 | 2.25 | 2 |
| 10 | 4.25 | 3.75 | no | 2.61 | 3 | 3.25 | 3 |
| 20 | 7.25 | 5.75 | yes | 2.80 | 4 | 3.25 | 4 |
| 9 | 4.00 | 4.00 | no | 2.93 | 5 | 3.75 | 5 |
| 6 | 4.50 | 5.00 | no | 3.01 | 6 | 4.25 | 6 |
| 8 | 3.00 | 3.25 | no | 3.76 | 7 | 4.00 | 7 |
| 15 | 4.75 | 6.25 | yes | 3.82 | 8 | 5.25 | 8 |
| 7 | 3.50 | 5.25 | no | 3.95 | 9 | 5.50 | 9 |
| 16 | 5.50 | 6.75 | yes | 3.95 | 10 | 5.00 | 10 |
| 11 | 2.00 | 2.00 | no | 4.85 | 11 | 5.75 | 11 |
| 19 | 7.50 | 8.00 | yes | 5.06 | 12 | 5.75 | 12 |
| 3 | 2.25 | 5.50 | no | 5.15 | 13 | 7.00 | 13 |
| 1 | 2.50 | 6.00 | no | 5.20 | 14 | 7.25 | 14 |
| 13 | 8.25 | 8.50 | no | 5.70 | 15 | 7.00 | 15 |
| 2 | 3.75 | 8.00 | no | 5.83 | 16 | 8.00 | 16 |
| 14 | 5.75 | 8.75 | yes | 5.84 | 17 | 6.75 | 17 |
| 5 | 2.75 | 7.50 | no | 6.02 | 18 | 8.50 | 19 |
| 4 | 3.25 | 8.25 | no | 6.31 | 19 | 8.75 | |
| 17 | 5.25 | 9.50 | yes | 6.67 | 20 | 8.00 | 20 |
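
The effect of k on the prediction can be traced directly from the Draft column of the table. The sketch below is illustrative only: it hard-codes the Draft labels of the seven athletes nearest to the query in Euclidean order (IDs 18, 12, 10, 20, 9, 6, 8) and takes a majority vote for a few odd values of k; the variable names are made up.

```matlab
% Draft labels of the seven nearest athletes, ordered by Euclidean distance
% to the query (6.75, 3): IDs 18, 12, 10, 20, 9, 6, 8.
draftedByRank = [true false false true false false false];

ks = [1 3 5 7];
predictedDraft = false(size(ks));
for i = 1:numel(ks)
    k = ks(i);
    yesVotes = sum(draftedByRank(1:k));
    predictedDraft(i) = yesVotes > k/2;   % majority vote
    fprintf('k = %d: %d of %d neighbors drafted\n', k, yesVotes, k);
end
```

Only k = 1 yields a "drafted" prediction; for k = 3, 5 and 7 the "yes" votes (1, 2 and 2 respectively) are a minority.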

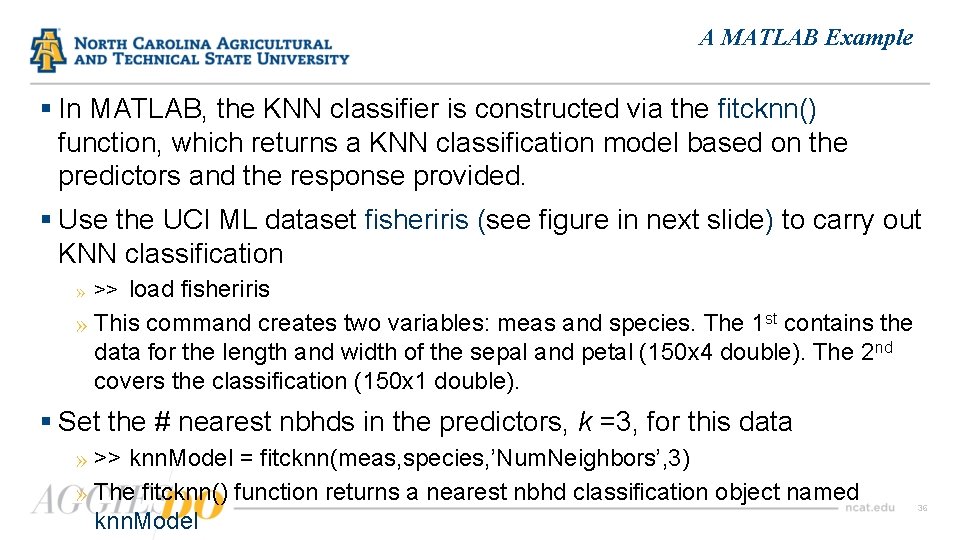

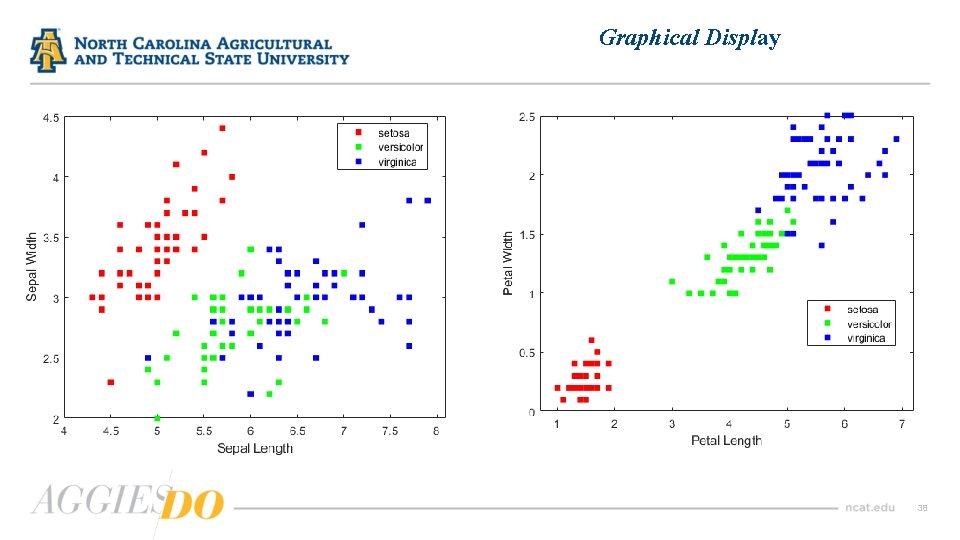

A MATLAB Example
§ In MATLAB, the KNN classifier is constructed via the fitcknn() function, which returns a KNN classification model based on the predictors and the response provided.
§ Use the UCI ML dataset fisheriris (see the figure on the next slide) to carry out KNN classification
  » >> load fisheriris
  » This command creates two variables: meas and species. The first contains the data for the length and width of the sepal and petal (150 x 4 double). The second holds the class labels (150 x 1 cell array).
§ Set the number of nearest neighbors, k = 3, for this data
  » >> knnModel = fitcknn(meas, species, 'NumNeighbors', 3)
  » The fitcknn() function returns a nearest neighbor classification object named knnModel

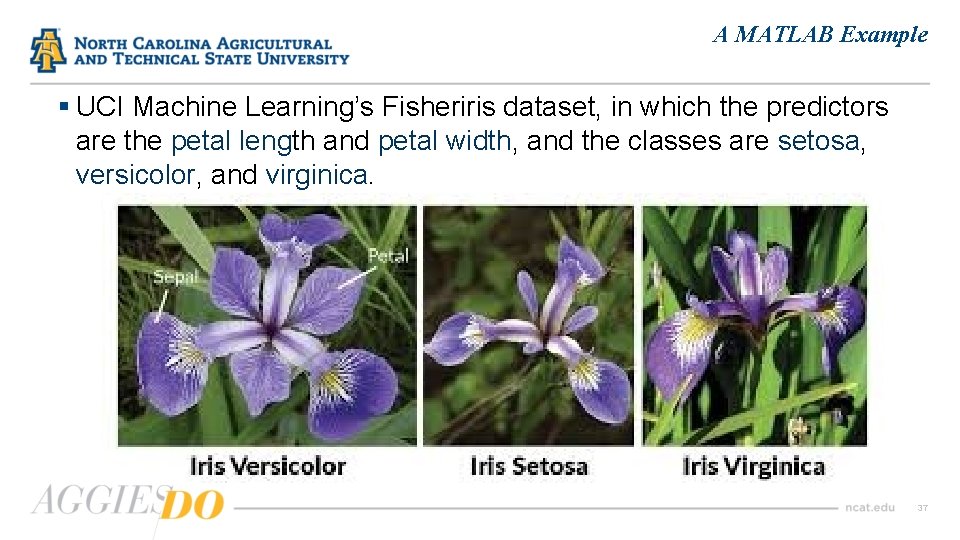

A MATLAB Example
§ UCI Machine Learning’s Fisheriris dataset, in which the predictors are the petal length and petal width, and the classes are setosa, versicolor, and virginica.

Graphical Display

A MATLAB Example
§ We can examine the properties of classification objects by double-clicking knnModel in the Workspace window. This opens the Variables editor, in which a long list of properties is shown.
§ To access the properties of the model just created, use dot notation:
  » >> knnModel.ClassNames
    ans =
      3×1 cell array
        'setosa'
        'versicolor'
        'virginica'

A MATLAB Example
§ To test the performance of the model, compute the resubstitution error:
  » >> knn3ResubErr = resubLoss(knnModel)
    knn3ResubErr = 0.0400
  » This means 4% of the observations are misclassified by the KNN algorithm.
§ To understand how these errors are distributed, we first collect the model predictions for the available data and then calculate the confusion matrix
  » >> predictedValue = predict(knnModel, meas);
  » >> confMat = confusionmat(species, predictedValue)

Cross-Validation
§ In cross-validation, all available data is divided into fixed-size groups, which are used in turn as a test set and as part of a training set.
§ Each pattern is classified at least once and is also used for training.
§ In practice, the sample is subdivided into groups of equal size; one group at a time is excluded, and the model built from the non-excluded groups tries to predict it.
§ This process is repeated k times, such that each subset is used exactly once for validation, to verify the quality of the prediction model used.
§ In MATLAB, cross-validation is performed by the crossval() function, which creates a partitioned model from a fitted KNN classification model.

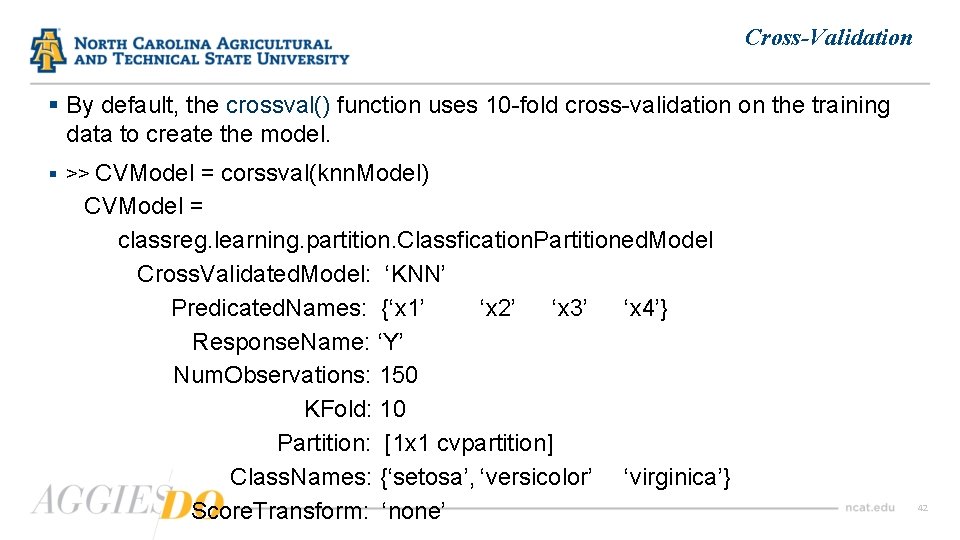

Cross-Validation
§ By default, the crossval() function uses 10-fold cross-validation on the training data to create the model.
§ >> CVModel = crossval(knnModel)
  CVModel =
    classreg.learning.partition.ClassificationPartitionedModel
      CrossValidatedModel: 'KNN'
      PredictorNames: {'x1' 'x2' 'x3' 'x4'}
      ResponseName: 'Y'
      NumObservations: 150
      KFold: 10
      Partition: [1×1 cvpartition]
      ClassNames: {'setosa' 'versicolor' 'virginica'}
      ScoreTransform: 'none'

Cross Validation
§ A ClassificationPartitionedModel object is created with several properties and methods, some of which were shown on the previous page.
§ We can examine the properties of the ClassificationPartitionedModel object by double-clicking CVModel in the Workspace window.
§ We can review the cross-validation loss, which is the average loss of each cross-validation model when prediction is executed on data that was not used for training.
  » >> KLossModel = kfoldLoss(CVModel)
    KLossModel = 0.0333
§ The cross-validation classification accuracy of 96.67% is very close to the resubstitution accuracy of 96%.
§ We therefore expect the classification model to misclassify approximately 4% of new data.

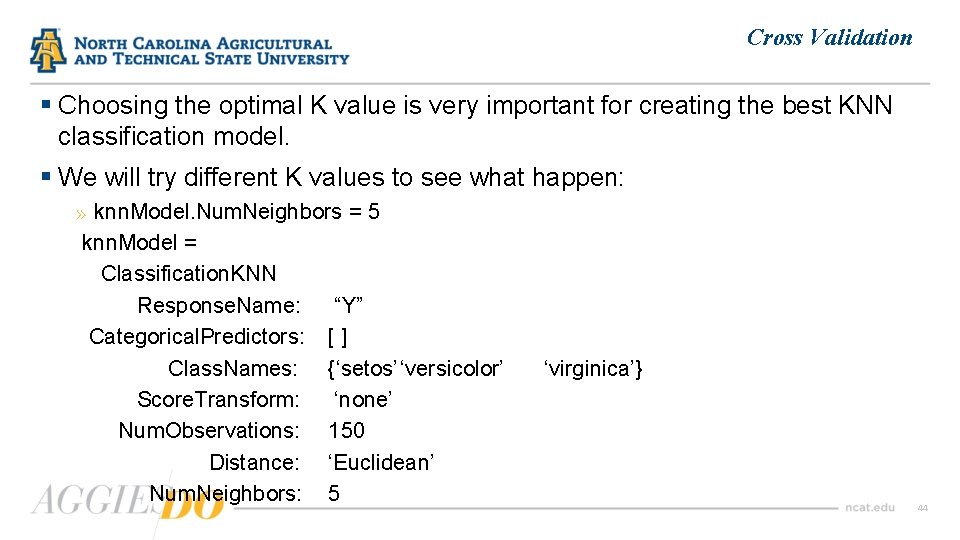

Cross Validation
§ Choosing the optimal k value is very important for creating the best KNN classification model.
§ We will try different k values to see what happens:
  » >> knnModel.NumNeighbors = 5
    knnModel =
      ClassificationKNN
        ResponseName: 'Y'
        CategoricalPredictors: []
        ClassNames: {'setosa' 'versicolor' 'virginica'}
        ScoreTransform: 'none'
        NumObservations: 150
        Distance: 'euclidean'
        NumNeighbors: 5

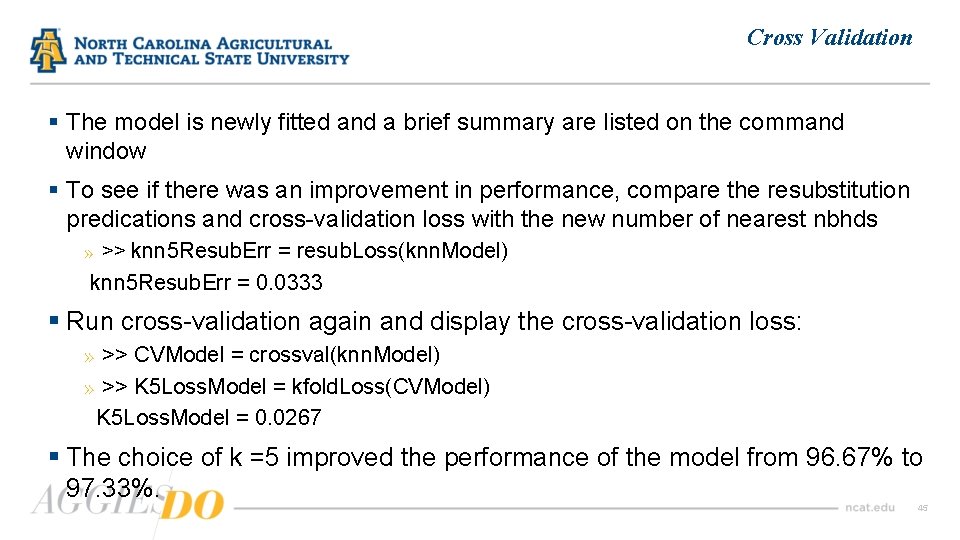

Cross Validation
§ The model is newly fitted and a brief summary is listed in the Command Window.
§ To see if there was an improvement in performance, compare the resubstitution error and cross-validation loss with the new number of nearest neighbors
  » >> knn5ResubErr = resubLoss(knnModel)
    knn5ResubErr = 0.0333
§ Run cross-validation again and display the cross-validation loss:
  » >> CVModel = crossval(knnModel)
  » >> K5LossModel = kfoldLoss(CVModel)
    K5LossModel = 0.0267
§ The choice of k = 5 improved the cross-validated accuracy of the model from 96.67% to 97.33%.

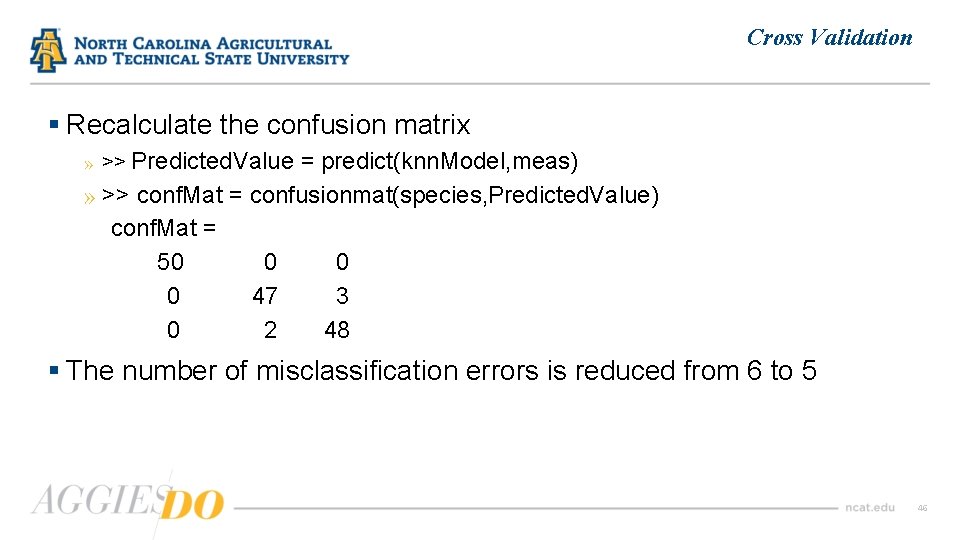

Cross Validation
§ Recalculate the confusion matrix
  » >> PredictedValue = predict(knnModel, meas)
  » >> confMat = confusionmat(species, PredictedValue)
    confMat =
      50   0   0
       0  47   3
       0   2  48
§ The number of misclassification errors is reduced from 6 to 5.
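
The manual comparison of k = 3 and k = 5 can be extended to a loop over several candidate values. The following sketch uses only the functions already introduced in these slides (fitcknn, crossval, kfoldLoss) and requires the Statistics and Machine Learning Toolbox; the candidate list `ks` and the seed passed to `rng` are arbitrary choices made for illustration.

```matlab
load fisheriris                      % provides meas (150x4) and species
rng(1);                              % fix the random folds; the seed is arbitrary

ks = [1 3 5 7 9];                    % candidate numbers of neighbors (assumed)
cvLoss = zeros(size(ks));
for i = 1:numel(ks)
    mdl = fitcknn(meas, species, 'NumNeighbors', ks(i));
    cvMdl = crossval(mdl);           % 10-fold cross-validation by default
    cvLoss(i) = kfoldLoss(cvMdl);    % average misclassification rate over folds
end

[bestLoss, idx] = min(cvLoss);
fprintf('Best k = %d (cross-validation loss %.4f)\n', ks(idx), bestLoss);
```

Because the folds are drawn at random, the losses can differ slightly from the values shown on the slides and from run to run when the seed is changed.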

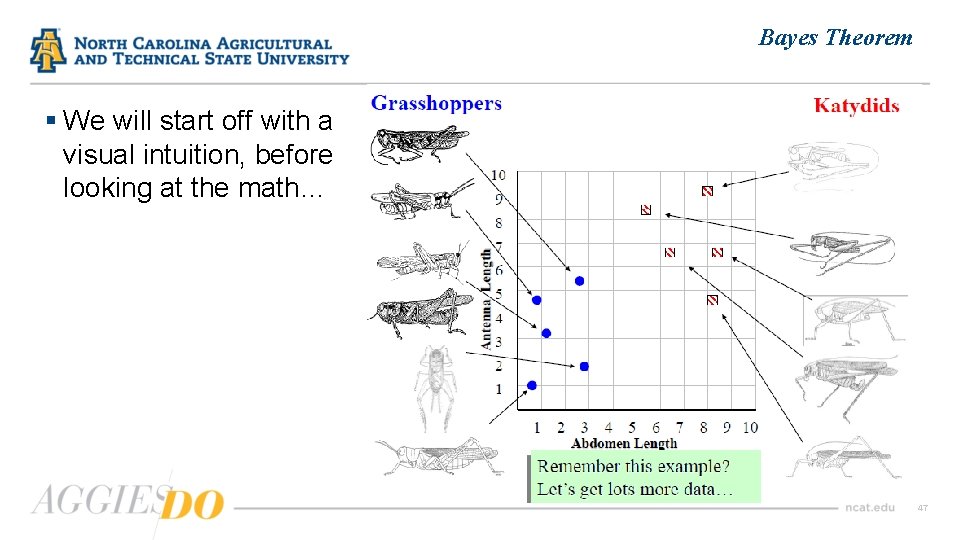

Bayes Theorem
§ We will start off with a visual intuition, before looking at the math…

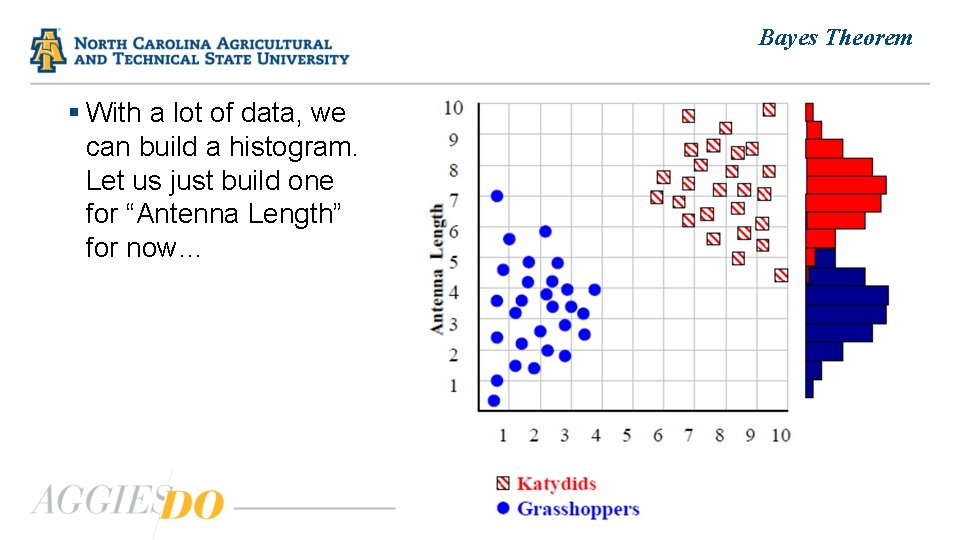

Bayes Theorem
§ With a lot of data, we can build a histogram. Let us just build one for “Antenna Length” for now…

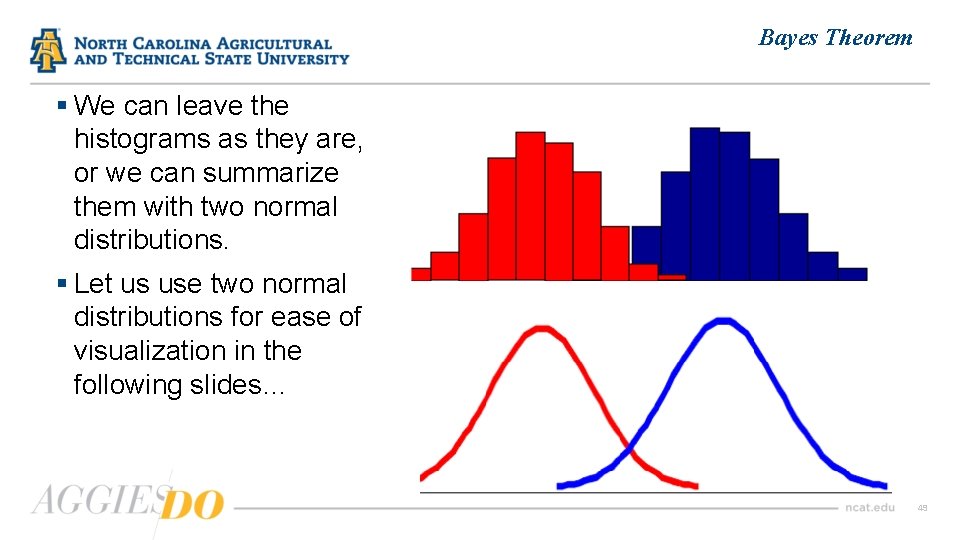

Bayes Theorem
§ We can leave the histograms as they are, or we can summarize them with two normal distributions.
§ Let us use two normal distributions for ease of visualization in the following slides…

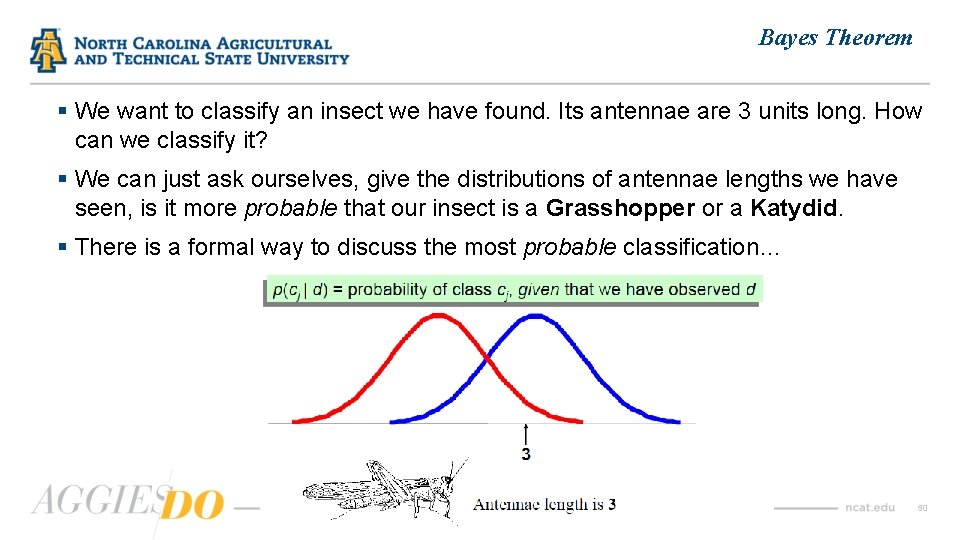

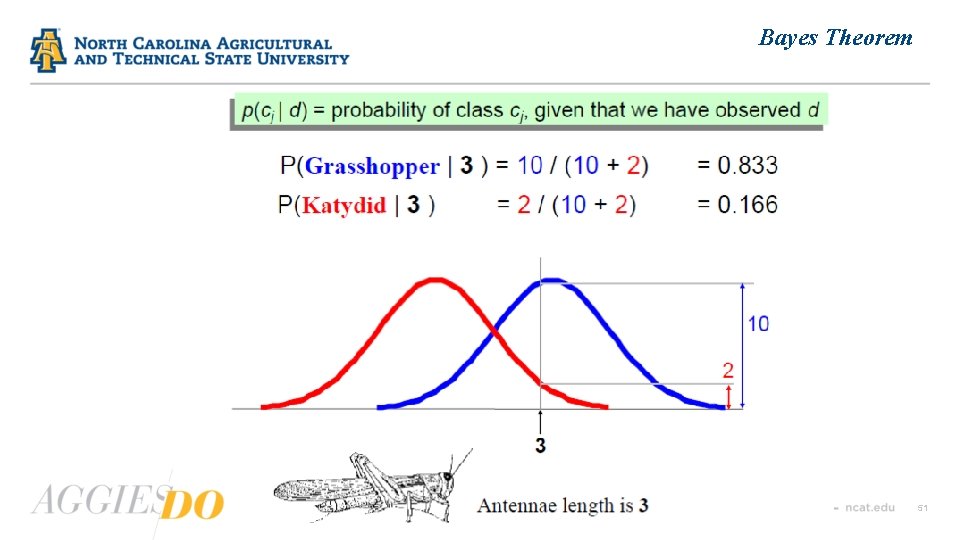

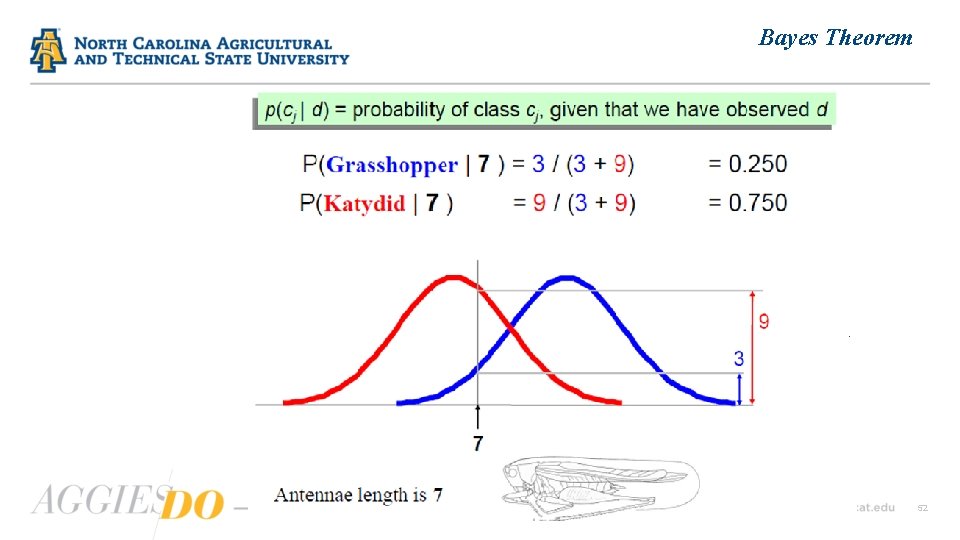

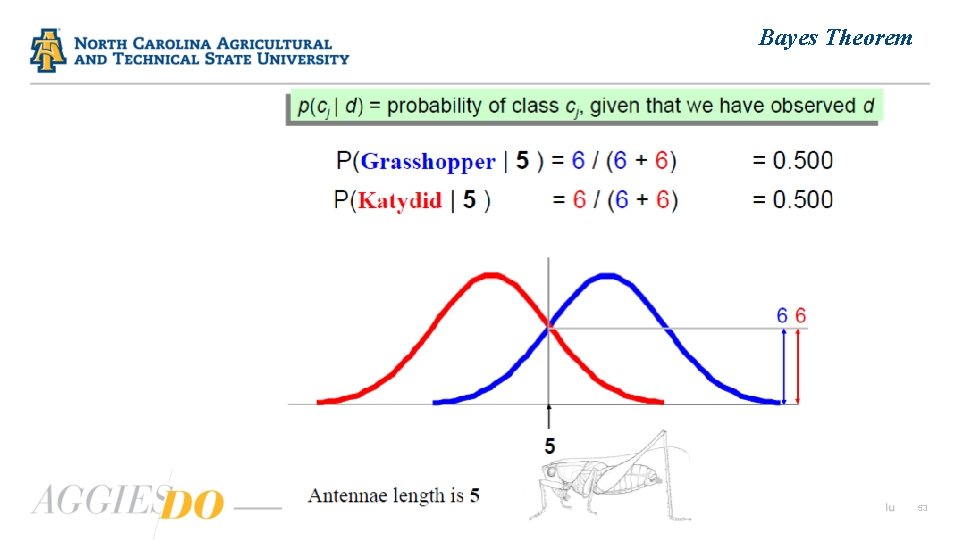

Bayes Theorem
§ We want to classify an insect we have found. Its antennae are 3 units long. How can we classify it?
§ We can just ask ourselves: given the distributions of antennae lengths we have seen, is it more probable that our insect is a Grasshopper or a Katydid?
§ There is a formal way to discuss the most probable classification…

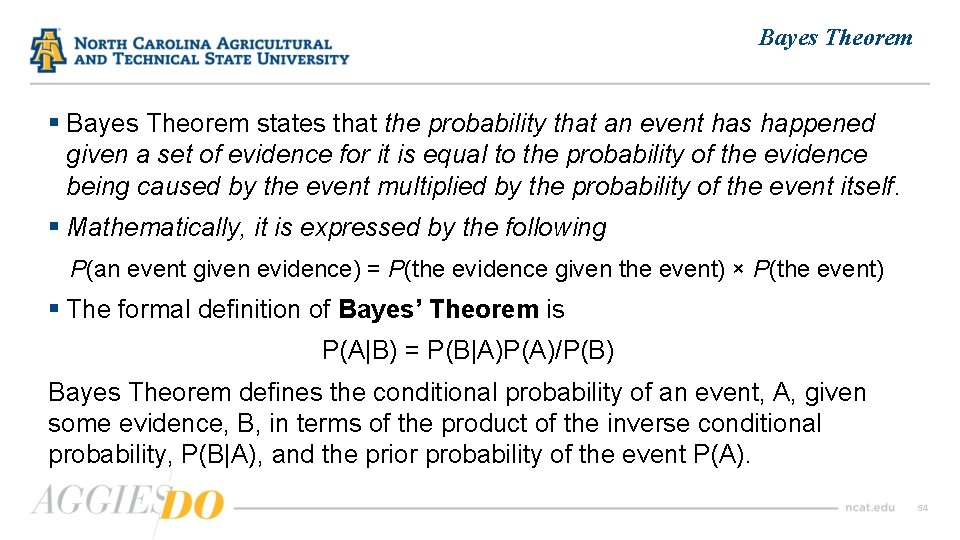

Bayes Theorem
§ Bayes’ Theorem states that the probability that an event has happened, given a set of evidence for it, is equal to the probability of the evidence being caused by the event multiplied by the probability of the event itself, divided by the probability of the evidence.
§ Informally: P(an event given evidence) = P(the evidence given the event) × P(the event) / P(the evidence)
§ The formal definition of Bayes’ Theorem is $P(A \mid B) = \dfrac{P(B \mid A)\,P(A)}{P(B)}$
§ Bayes’ Theorem defines the conditional probability of an event, A, given some evidence, B, in terms of the product of the inverse conditional probability, P(B|A), and the prior probability of the event, P(A).

Bayes Theorem
§ For an illustrative example of Bayes’ Theorem in action, imagine that after a yearly checkup, a doctor informs a patient that there is both bad news and good news.
§ The bad news is that the patient has tested positive for a serious disease and that the test the doctor used is 99% accurate (i.e., the probability of testing positive when a patient has the disease is 0.99, as is the probability of testing negative when a patient does not have the disease).
§ The good news, however, is that the disease is extremely rare, striking only 1 in 10,000 people.
§ So what is the actual probability that the patient has the disease? And why is the rarity of the disease good news given that the patient has tested positive for it?

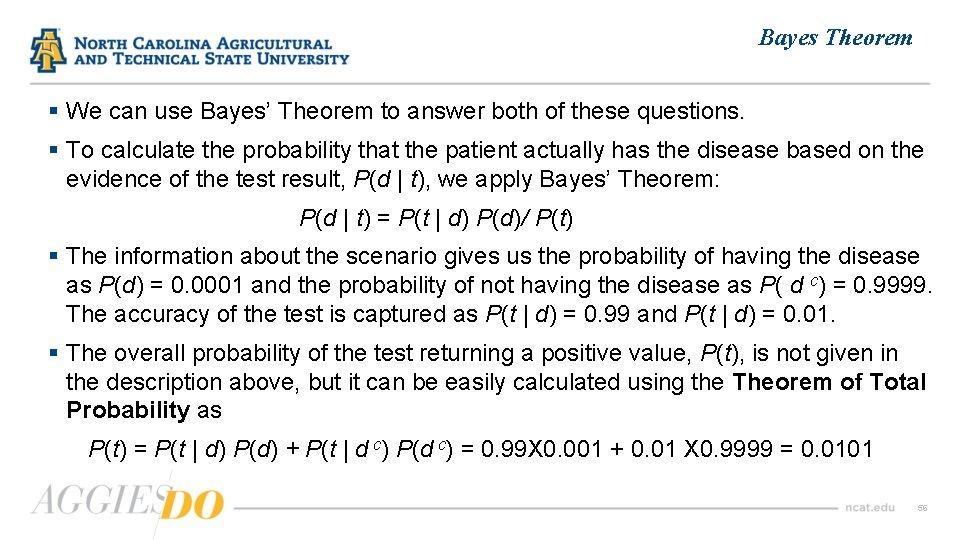

Bayes Theorem
§ We can use Bayes’ Theorem to answer both of these questions.
§ To calculate the probability that the patient actually has the disease based on the evidence of the test result, P(d | t), we apply Bayes’ Theorem: $P(d \mid t) = \dfrac{P(t \mid d)\,P(d)}{P(t)}$
§ The information about the scenario gives us the probability of having the disease as $P(d) = 0.0001$ and the probability of not having the disease as $P(d^c) = 0.9999$. The accuracy of the test is captured as $P(t \mid d) = 0.99$ and $P(t \mid d^c) = 0.01$.
§ The overall probability of the test returning a positive value, P(t), is not given in the description above, but it can be easily calculated using the Theorem of Total Probability as $P(t) = P(t \mid d)\,P(d) + P(t \mid d^c)\,P(d^c) = 0.99 \times 0.0001 + 0.01 \times 0.9999 = 0.0101$

Bayes Theorem
§ Applying Bayes’ Theorem yields: $P(d \mid t) = \dfrac{P(t \mid d)\,P(d)}{P(t)} = \dfrac{0.99 \times 0.0001}{0.0101} = 0.0098$
§ So the probability of actually having the disease, in spite of the positive test result, is less than 1%.
§ This is why the doctor said the rarity of the disease was such good news.
§ One of the important characteristics of Bayes’ Theorem is its explicit inclusion of the prior probability of an event when calculating the likelihood of that event based on evidence.
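
The arithmetic of the medical test example can be reproduced in a few lines of base MATLAB. This is a small sketch with made-up variable names; the three input probabilities are the ones stated in the scenario.

```matlab
pD        = 0.0001;   % prior P(d): 1 in 10,000 people have the disease
pTgivD    = 0.99;     % P(t | d): positive test given disease
pTgivNotD = 0.01;     % P(t | d^c): positive test given no disease

% Theorem of Total Probability: overall chance of a positive test.
pT = pTgivD * pD + pTgivNotD * (1 - pD);

% Bayes' Theorem: probability of disease given a positive test.
pDgivT = pTgivD * pD / pT;

fprintf('P(t) = %.4f, P(d | t) = %.4f\n', pT, pDgivT);
```

This prints `P(t) = 0.0101, P(d | t) = 0.0098`. The posterior is small because the prior P(d) is tiny compared with the false-positive mass 0.01 × 0.9999.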

Bayes Classifiers
§ Bayesian classifiers use Bayes’ theorem, which says $P(c_j \mid d) = \dfrac{P(d \mid c_j)\,P(c_j)}{P(d)}$, where:
  » $P(c_j \mid d)$ = probability of instance d being in class $c_j$ (this is what we are trying to compute);
  » $P(d \mid c_j)$ = probability of generating instance d given class $c_j$ (we can imagine that being in class $c_j$ causes you to have feature d with some probability);
  » $P(c_j)$ = probability of occurrence of class $c_j$ (this is just how frequent the class $c_j$ is in our database); and
  » $P(d)$ = probability of instance d occurring (this can actually be ignored, since it is the same for all classes).

Bayesian Prediction
§ To make Bayesian predictions, we generate the probability of the event that a target feature, t, takes a specific level, l, given the assignment of values to a set of descriptive features, $q = (q_1, q_2, \dots, q_m)$, from a query instance.
§ We generalize the definition of Bayes’ Theorem so that it can take into account more than one piece of evidence (each descriptive feature value is a separate piece of evidence).
§ The Generalized Bayes’ Theorem is defined as $P(t = l \mid q_1, q_2, \dots, q_m) = \dfrac{P(q_1, q_2, \dots, q_m \mid t = l)\,P(t = l)}{P(q_1, q_2, \dots, q_m)}$

Bayesian Prediction
§ To calculate a probability using the Generalized Bayes’ Theorem, we need to calculate three probabilities:
  » $P(t = l)$, the prior probability of the target feature t taking the level l
  » $P(q_1, q_2, \dots, q_m)$, the joint probability of the descriptive features of a query instance taking a specific set of values
  » $P(q_1, q_2, \dots, q_m \mid t = l)$, the conditional probability of the descriptive features of a query instance taking a specific set of values given that the target feature takes the level l
§ The first two of these probabilities are easy to calculate.
  » $P(t = l)$ is simply the relative frequency with which the target feature takes the level l in a dataset.
  » $P(q_1, q_2, \dots, q_m)$ can be calculated as the relative frequency in a dataset of the joint event that the descriptive features of an instance take on the values $q_1, q_2, \dots, q_m$.

Bayesian Prediction
§ The final probability that we need to calculate, $P(q_1, q_2, \dots, q_m \mid t = l)$, can be calculated either directly from a dataset (by calculating the relative frequency of the joint event $q_1, q_2, \dots, q_m$ within the set of instances where t = l), or alternatively, it can be calculated using the probability chain rule.
§ The chain rule states that the probability of a joint event can be rewritten as a product of conditional probabilities. So we can rewrite $P(q_1, q_2, \dots, q_m)$ as $P(q_1, q_2, \dots, q_m) = P(q_1)\,P(q_2 \mid q_1)\,P(q_3 \mid q_1, q_2) \cdots P(q_m \mid q_1, q_2, \dots, q_{m-1})$
§ We can use the chain rule for conditional probabilities by just adding the conditioning term to each term in the expression, so $P(q_1, q_2, \dots, q_m \mid t = l) = P(q_1 \mid t = l)\,P(q_2 \mid q_1, t = l)\,P(q_3 \mid q_1, q_2, t = l) \cdots P(q_m \mid q_1, q_2, \dots, q_{m-1}, t = l)$

Conditional Independence
§ If knowledge of one event has no effect on the probability of another event, and vice versa, then the two events are said to be independent of each other.
§ Mathematically, if two events A and B are independent, then $P(A \cap B) = P(A)\,P(B)$ and $P(A \mid B) = P(A)$.
§ Full independence between events is quite rare. A more common phenomenon is that two or more events may be independent if we know that a third event has happened. This is known as conditional independence.
§ The typical situation where conditional independence holds between events is when the events share the same cause.
§ For two events, A and B, that are conditionally independent given knowledge of a third event, C, we can say that $P(A \cap B \mid C) = P(A \mid C)\,P(B \mid C)$ and $P(A \mid B, C) = P(A \mid C)$.

Naïve Bayes Model
§ The naïve Bayes model is defined as $\mathbb{M}(q) = \underset{l \in levels(t)}{\arg\max} \left( \prod_{i=1}^{m} P(q_i \mid t = l) \right) \times P(t = l)$, where t is a target feature with a set of levels, levels(t), and q is a query instance with a set of descriptive features, $q_1, \dots, q_m$.
§ The naive Bayes model leverages conditional independence to the extreme by assuming conditional independence between the assignment of all the descriptive feature values given the target level.
§ This assumption allows a naive Bayes model to radically reduce the number of probabilities it requires, resulting in a very compact, highly factored representation of a domain.
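
The argmax in the definition can be evaluated by hand on a small example. The base-MATLAB sketch below is illustrative only: the six-instance dataset with two binary features is hypothetical, the variable names are made up, and all probabilities are plain relative frequencies with no smoothing. MATLAB R2016b or later is assumed for the implicit expansion in `X(rows, :) == q`.

```matlab
% Hypothetical training data: each row is an instance, each column a binary
% descriptive feature; t holds the target level (1 or 2) of each instance.
X = [1 0; 1 1; 1 0; 0 1; 0 1; 0 0];
t = [1; 1; 1; 2; 2; 2];
q = [1 0];                          % query instance (q_1, q_2)

levels = unique(t);
score = zeros(numel(levels), 1);
for j = 1:numel(levels)
    rows  = (t == levels(j));
    prior = mean(rows);             % P(t = l) as a relative frequency
    % P(q_i | t = l): share of level-l instances matching each query value
    cond  = mean(X(rows, :) == q, 1);
    score(j) = prod(cond) * prior;  % product of conditionals times prior
end

[~, best] = max(score);             % argmax over the target levels
prediction = levels(best);
```

For this data the scores are 1/3 for level 1 and 0 for level 2, so `prediction` is 1. The zero score shows how a single unseen feature value wipes out a level when raw frequencies are used.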

Naïve Bayes Model
§ We say that the naive Bayes model is naive because the assumption of conditional independence between the features in the evidence given the target level is a simplifying assumption that is made whether or not it is correct.
§ Despite this simplifying assumption, however, the naive Bayes approach has been found to be surprisingly accurate across a large range of domains.
§ This is partly because errors in the calculation of the posterior probabilities for the different target levels do not necessarily result in prediction errors.

Naïve Bayes Model
§ The assumption of conditional independence between the features in the evidence given the level of the target feature also makes the naive Bayes model relatively robust to data fragmentation and the curse of dimensionality.
§ This is particularly important in scenarios with small datasets or with sparse data.
§ One application domain where sparse data is the norm rather than the exception is text analytics (for example, spam filtering), and naive Bayes models are often successful in this domain.
§ Overall, although naive Bayes models may not be as powerful as some other prediction models, they often provide reasonable accuracy for prediction tasks with categorical targets, while being robust to the curse of dimensionality and easy to train.

MATLAB implementation of Naïve Bayes Classifier
§ In MATLAB, the naïve Bayes classifier works with data in two steps:
  » Step 1, training: Using a set of previously classified data, the algorithm estimates the parameters of a probability distribution under the assumption that the predictors are conditionally independent given the class.
  » Step 2, prediction: For a new set of data, the posterior probability of that sample belonging to each class is computed. New data is then classified according to the largest posterior probability.
§ To train a naïve Bayes classifier, we use the fitcnb() function; it returns a multiclass naïve Bayes model trained on the predictors provided.
§ We again consider UCI Machine Learning’s Fisheriris dataset, in which the predictors are the petal length and petal width, and the classes are setosa, versicolor, and virginica.

A MATLAB Example
§ To import data, we simply type
  » >> load fisheriris
§ We extract the 3rd and 4th columns of the meas variable, which correspond to petal length and petal width respectively, and then create a table with these variables so that the model includes the names of the predictors:
  » >> petalLength = meas(:, 3);
  » >> petalWidth = meas(:, 4);
  » >> petalTable = table(petalLength, petalWidth);
§ To train a naïve Bayes classifier, we type
  » >> naiveModelPetal = fitcnb(petalTable, species, 'ClassNames', ...
       {'setosa', 'versicolor', 'virginica'})

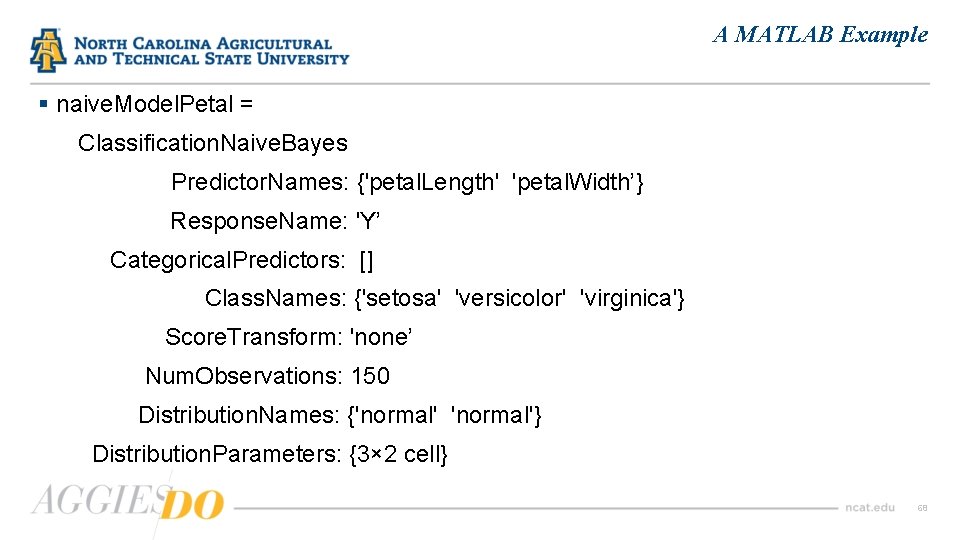

A MATLAB Example § naiveModelPetal = ClassificationNaiveBayes » PredictorNames: {'petalLength' 'petalWidth'} » ResponseName: 'Y' » CategoricalPredictors: [] » ClassNames: {'setosa' 'versicolor' 'virginica'} » ScoreTransform: 'none' » NumObservations: 150 » DistributionNames: {'normal'} » DistributionParameters: {3×2 cell} 68
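Once the model is trained, the prediction step can be tried on a single new sample. The following is a minimal sketch, assuming the Statistics and Machine Learning Toolbox is available; the measurements in newFlower are hypothetical values chosen only for illustration.

```matlab
% Train the model as in the slides (repeated so this block runs on its own).
load fisheriris
petalLength = meas(:, 3);
petalWidth  = meas(:, 4);
petalTable  = table(petalLength, petalWidth);
naiveModelPetal = fitcnb(petalTable, species, ...
    'ClassNames', {'setosa', 'versicolor', 'virginica'});

% Hypothetical new flower; variable names must match the training table.
newFlower = table(4.5, 1.5, 'VariableNames', {'petalLength', 'petalWidth'});

% label is the class with the largest posterior probability;
% posterior holds one probability per class, in the order of ClassNames.
[label, posterior] = predict(naiveModelPetal, newFlower);
disp(label)
disp(posterior)
```

The second output of predict makes the "largest posterior probability" rule visible: the entries sum to 1 and the predicted label corresponds to the largest entry.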

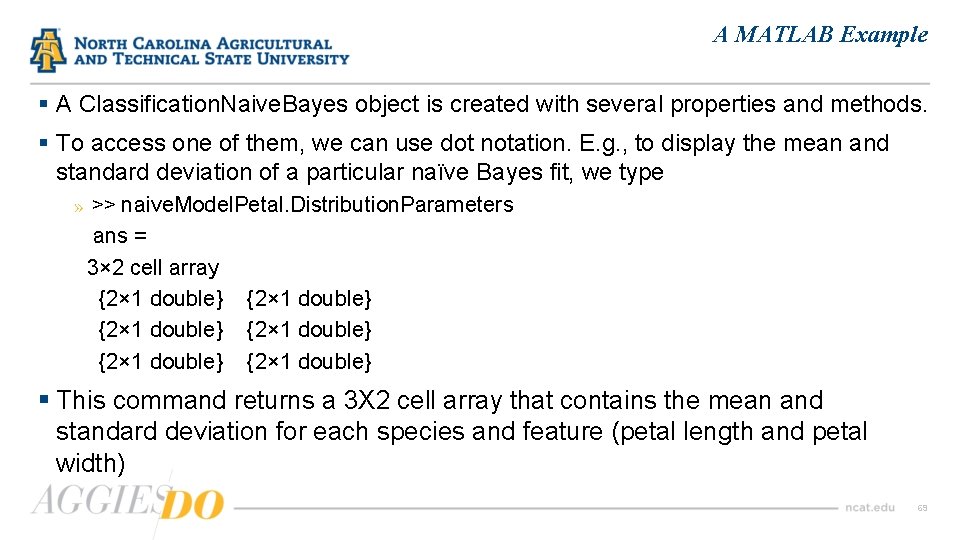

A MATLAB Example § A ClassificationNaiveBayes object is created with several properties and methods. § To access one of them, we can use dot notation. E.g., to display the mean and standard deviation of a particular naïve Bayes fit, we type » >> naiveModelPetal.DistributionParameters ans = 3×2 cell array {2×1 double} {2×1 double} § This command returns a 3×2 cell array that contains the mean and standard deviation for each species and feature (petal length and petal width). 69

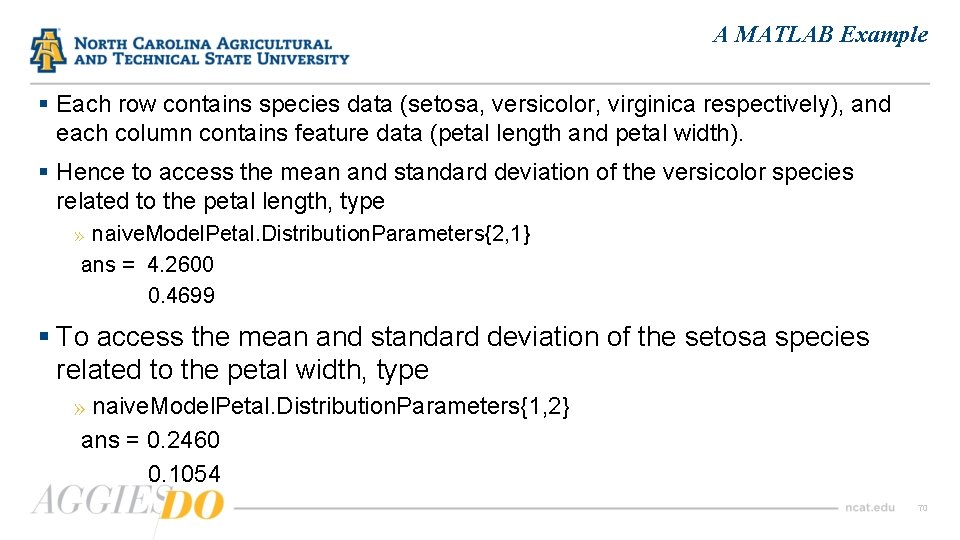

A MATLAB Example § Each row contains species data (setosa, versicolor, virginica respectively), and each column contains feature data (petal length and petal width). § Hence, to access the mean and standard deviation of the petal length for the versicolor species, type » >> naiveModelPetal.DistributionParameters{2, 1} ans = 4.2600 0.4699 § To access the mean and standard deviation of the petal width for the setosa species, type » >> naiveModelPetal.DistributionParameters{1, 2} ans = 0.2460 0.1054 70
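To see how these parameters enter the classifier, one can evaluate the normal density they define. The sketch below assumes the Statistics and Machine Learning Toolbox (for fitcnb and normpdf); xNew is a hypothetical petal length, not a value from the slides.

```matlab
% Train the model as in the slides (repeated so this block runs on its own).
load fisheriris
petalLength = meas(:, 3);
petalWidth  = meas(:, 4);
petalTable  = table(petalLength, petalWidth);
naiveModelPetal = fitcnb(petalTable, species, ...
    'ClassNames', {'setosa', 'versicolor', 'virginica'});

% Row 2 = versicolor, column 1 = petal length; the cell holds [mean; std].
params = naiveModelPetal.DistributionParameters{2, 1};
mu     = params(1);
sigma  = params(2);

% Class-conditional density p(petalLength = xNew | versicolor).
xNew = 4.5;                              % hypothetical petal length in cm
likelihood = normpdf(xNew, mu, sigma);
fprintf('mu = %.4f, sigma = %.4f, density = %.4f\n', mu, sigma, likelihood);
```

Multiplying such densities across the two predictors and by the class prior gives the unnormalized posterior for versicolor.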

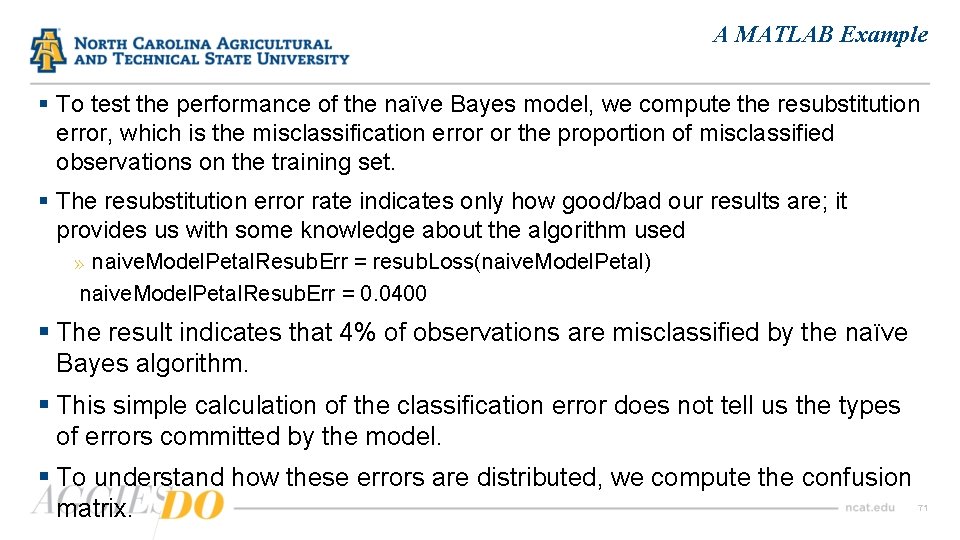

A MATLAB Example § To test the performance of the naïve Bayes model, we compute the resubstitution error, which is the misclassification error, i.e., the proportion of misclassified observations, on the training set. § The resubstitution error only indicates how well the model fits the data it was trained on; because it is computed on the training set, it tends to be an optimistic estimate of the error on new data. » >> naiveModelPetalResubErr = resubLoss(naiveModelPetal) naiveModelPetalResubErr = 0.0400 § The result indicates that 4% of the observations are misclassified by the naïve Bayes algorithm. § This simple calculation of the classification error does not tell us the types of errors committed by the model. § To understand how these errors are distributed, we compute the confusion matrix. 71
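The 4% figure can be checked by hand. In the notation below (introduced here for illustration), $n$ is the number of training observations, $y_i$ the true class and $\hat{y}_i$ the predicted class of observation $i$; the count of 6 misclassified flowers is the one this deck reports for the confusion matrix of the same model.

$$
\text{resubstitution error}
= \frac{1}{n}\sum_{i=1}^{n} \mathbf{1}\left[\hat{y}_i \neq y_i\right]
= \frac{6}{150} = 0.04
$$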

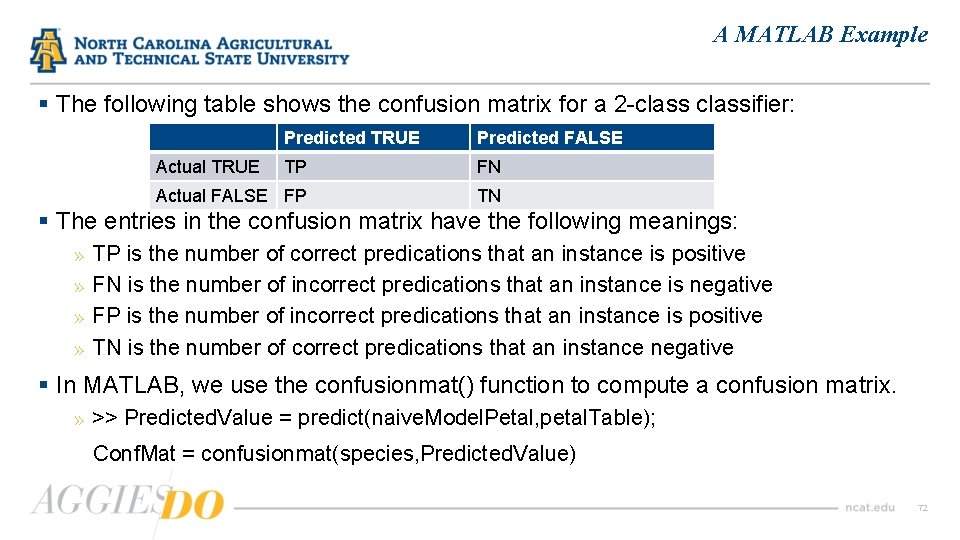

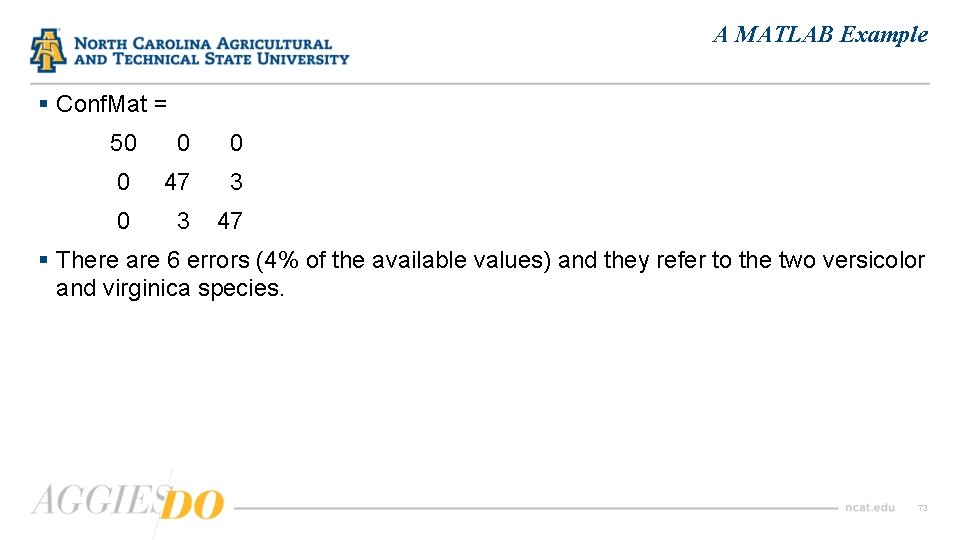

A MATLAB Example § The following table shows the confusion matrix for a two-class classifier:

| | Predicted TRUE | Predicted FALSE |
|---|---|---|
| Actual TRUE | TP | FN |
| Actual FALSE | FP | TN |

§ The entries in the confusion matrix have the following meanings: » TP is the number of correct predictions that an instance is positive » FN is the number of incorrect predictions that an instance is negative » FP is the number of incorrect predictions that an instance is positive » TN is the number of correct predictions that an instance is negative § In MATLAB, we use the confusionmat() function to compute a confusion matrix. » >> PredictedValue = predict(naiveModelPetal, petalTable); » >> ConfMat = confusionmat(species, PredictedValue) 72

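A MATLAB Example § ConfMat = (rows are the actual classes, columns the predicted classes)

| | setosa | versicolor | virginica |
|---|---|---|---|
| setosa | 50 | 0 | 0 |
| versicolor | 0 | 47 | 3 |
| virginica | 0 | 3 | 47 |

§ There are 6 errors (4% of the available values), and all of them are confusions between the versicolor and virginica species: 3 versicolor flowers are classified as virginica and 3 virginica flowers as versicolor. 73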
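Summary measures can be read off the confusion matrix directly. The sketch below hard-codes the matrix reported on this slide and uses only base MATLAB functions; the variable names accuracy, errorRate, recall and precision are introduced here for illustration.

```matlab
% Confusion matrix from the slide: rows = actual class, columns = predicted.
% Class order: setosa, versicolor, virginica.
ConfMat = [50  0  0;
            0 47  3;
            0  3 47];

% Overall accuracy: correctly classified (diagonal) over all observations.
accuracy  = trace(ConfMat) / sum(ConfMat(:));
errorRate = 1 - accuracy;

% Per-class recall: correct predictions divided by the actual class count.
recall = diag(ConfMat) ./ sum(ConfMat, 2);

% Per-class precision: correct predictions divided by the predicted count.
precision = diag(ConfMat) ./ sum(ConfMat, 1)';

fprintf('accuracy = %.2f, error rate = %.2f\n', accuracy, errorRate);
disp(table(recall, precision, ...
    'RowNames', {'setosa', 'versicolor', 'virginica'}));
```

The error rate computed this way agrees with the 4% resubstitution error, and the per-class values show that setosa is separated perfectly while versicolor and virginica each reach 47 out of 50.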

Advantages/Disadvantages of Naïve Bayes § Advantages: » Fast to train (single scan) » Fast to classify » Not sensitive to irrelevant features » Handles real and discrete data » Handles streaming data well § Disadvantages: » Assumes independence of features 74

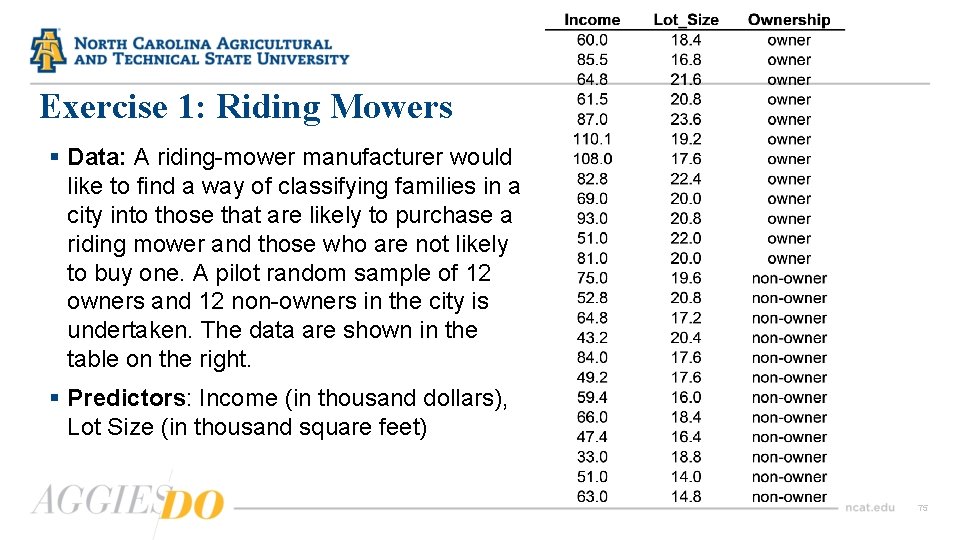

Exercise 1: Riding Mowers § Data: A riding-mower manufacturer would like to find a way of classifying families in a city into those that are likely to purchase a riding mower and those who are not likely to buy one. A pilot random sample of 12 owners and 12 non-owners in the city is undertaken. The data are shown in the table on the right. § Predictors: Income (in thousand dollars), Lot Size (in thousand square feet) 75

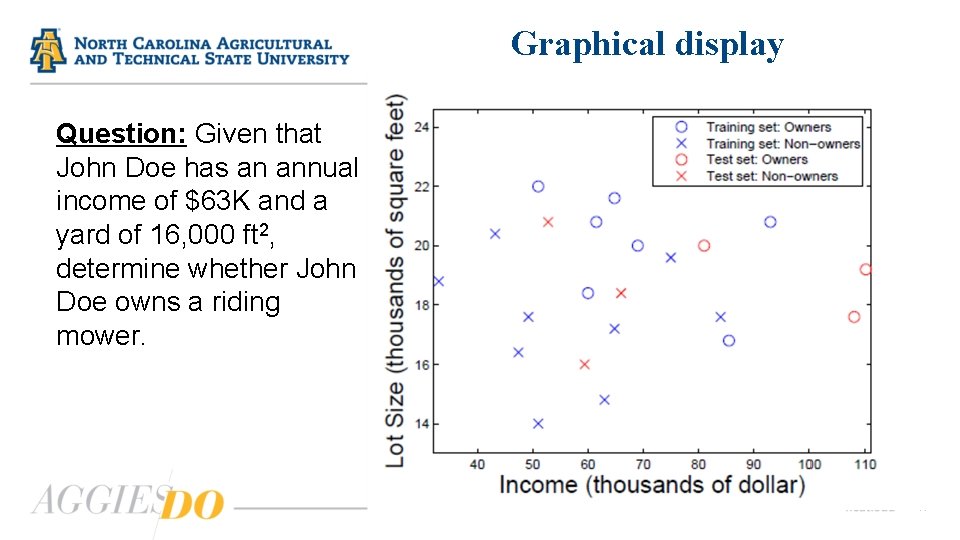

How do we choose k? § In data mining, we classify the cases in the validation set using the data in the training set, and compute the validation error rate for various choices of k; we then pick the k with the lowest error rate. § For our example we have randomly divided the data into a training set with 18 cases and a validation set of 6 cases. Of course, in a real data mining situation we would have sets of much larger sizes. § The validation set consists of samples 6, 7, 12, 14, 19, 20 in the table. The remaining 18 samples constitute the training data. § The figure on the next slide displays the samples in both the training and validation sets. 76
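The selection procedure can be sketched in MATLAB as follows. Because the riding-mower table is only available as an image in this deck, the sketch uses the fisheriris petal measurements as a stand-in; the 25% holdout fraction, the candidate values of k, and the random seed are assumptions made for illustration. It requires the Statistics and Machine Learning Toolbox.

```matlab
% Stand-in data: petal length and width from fisheriris (not the mower data).
load fisheriris
X = meas(:, 3:4);

% Random split into training and validation sets (assumed 75% / 25%).
rng(1);                                       % make the split reproducible
cv = cvpartition(species, 'HoldOut', 0.25);
Xtrain = X(training(cv), :);   ytrain = species(training(cv));
Xval   = X(test(cv), :);       yval   = species(test(cv));

% Validation error rate for several candidate values of k.
kValues = 1:2:15;
valErr  = zeros(size(kValues));
for i = 1:numel(kValues)
    mdl = fitcknn(Xtrain, ytrain, 'NumNeighbors', kValues(i));
    valErr(i) = loss(mdl, Xval, yval, 'LossFun', 'classiferror');
end

% Pick the k with the smallest validation error (first one in case of ties).
[~, best] = min(valErr);
bestK = kValues(best);
disp(table(kValues', valErr', 'VariableNames', {'k', 'validationError'}));
fprintf('Selected k = %d\n', bestK);
```

With a validation set as small as the 6 cases in the exercise, several values of k will often tie, so the chosen k should be read as a rough guide rather than a precise optimum.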

Graphical display § Question: Given that John Doe has an annual income of $63K and a lot size of 16,000 ft², predict whether John Doe owns a riding mower. 77

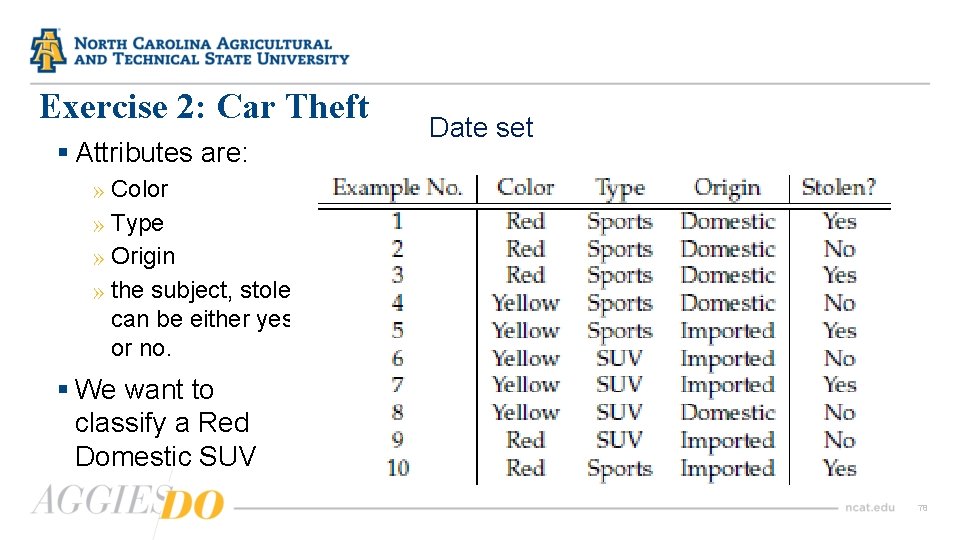

Exercise 2: Car Theft § The attributes in the data set are: » Color » Type » Origin § The target, Stolen, can be either yes or no. § We want to classify a Red Domestic SUV. 78
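One way to set up this exercise with a naïve Bayes classifier is sketched below. The conditional probabilities are to be estimated as relative frequencies from the data set shown on the slide; no numerical values are filled in here because the table is not reproduced in the text.

$$
\begin{aligned}
P(\text{yes} \mid \text{Red}, \text{SUV}, \text{Domestic}) &\propto
P(\text{yes})\,P(\text{Red} \mid \text{yes})\,P(\text{SUV} \mid \text{yes})\,P(\text{Domestic} \mid \text{yes}) \\
P(\text{no} \mid \text{Red}, \text{SUV}, \text{Domestic}) &\propto
P(\text{no})\,P(\text{Red} \mid \text{no})\,P(\text{SUV} \mid \text{no})\,P(\text{Domestic} \mid \text{no})
\end{aligned}
$$

The Red Domestic SUV is assigned to whichever class, yes or no, gives the larger product.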

Questions 79
