A Fast and Scalable Nearest Neighbor Based Classification

Taufik Abidin and William Perrizo
Department of Computer Science, North Dakota State University

Outline
- Nearest Neighbors Classification
- Problems
- SMART-TV (SMall Absolute diffeRence of ToTal Variation): A Fast and Scalable Nearest Neighbors Classification Algorithm
- SMART-TV in Image Classification

Classification

Given a (large) training set R(A1, …, An, C), with C = classes and (A1, …, An) = features, the classification task is to label unclassified objects based on the pre-defined classes.

[Diagram: unclassified object → search for the K nearest neighbors in the training set → vote the class]

Prominent classification algorithms: SVM, KNN, Bayesian, etc.

Problems with KNN
- Finding the k nearest neighbors is expensive when the training set contains millions of objects (a very large training set).
- The classification time is linear in the size of the training set.

Can we make it faster and more scalable?

![P-Tree Vertical Data Structure](http://slidetodoc.com/presentation_image/8f054f56e15c70f27c1fae3cf9fcafc7/image-5.jpg)

P-Tree Vertical Data Structure

The construction steps of P-trees:
1. Convert the data into binary.
2. Vertically project each attribute.
3. Vertically project each bit position.
4. Compress each bit slice into a P-tree.

[Figure: an example relation R(A1, A2, A3, A4) with 3-bit attribute values, its vertical projections R[A1] to R[A4], the bit slices R11 to R43, and the P-trees built from them.]
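Steps 1 to 3 of the construction can be sketched in a few lines of Python. This is only an illustration: the function name `bit_slices` and the sample column are hypothetical, and step 4 (compressing each slice into a P-tree) is left out.

```python
def bit_slices(values, bit_width):
    """Vertically project one attribute into bit slices, most significant bit first.

    Each slice is a list of 0/1 values, one entry per record.
    """
    slices = []
    for pos in range(bit_width - 1, -1, -1):
        slices.append([(v >> pos) & 1 for v in values])
    return slices


# Hypothetical 3-bit attribute column (not taken from the slide figure).
column = [2, 3, 2, 5, 2, 7, 7]
slices = bit_slices(column, 3)

# The number of 1-bits in a slice is what the slides call its root count.
root_counts = [sum(s) for s in slices]
```

Each slice holds one bit position for every record, so a count of 1-bits over a slice summarizes that bit position for the whole column.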

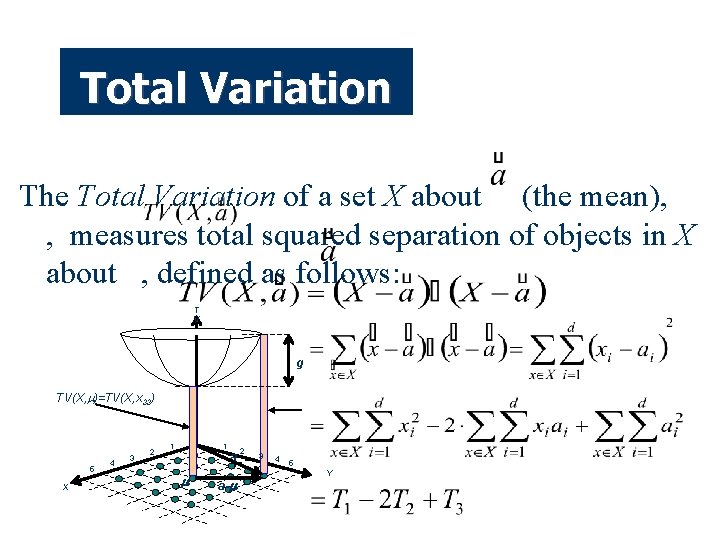

Total Variation

The total variation of a set X about a point a (for example, the mean), written TV(X, a), measures the total squared separation of the objects in X about a.

[Figure: plot of TV(X, a) over a two-dimensional space with axes X and Y, each ranging from 1 to 5.]
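One way to write "total squared separation" as a formula is given below. The notation is an assumption made here for illustration: n is the number of objects in X, d the number of dimensions, x_{ij} the j-th feature of the i-th object, and a_j the j-th component of a.

```latex
TV(X, a) \;=\; \sum_{i=1}^{n} (x_i - a)\cdot(x_i - a)
         \;=\; \sum_{i=1}^{n} \sum_{j=1}^{d} \left(x_{ij} - a_j\right)^2
```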

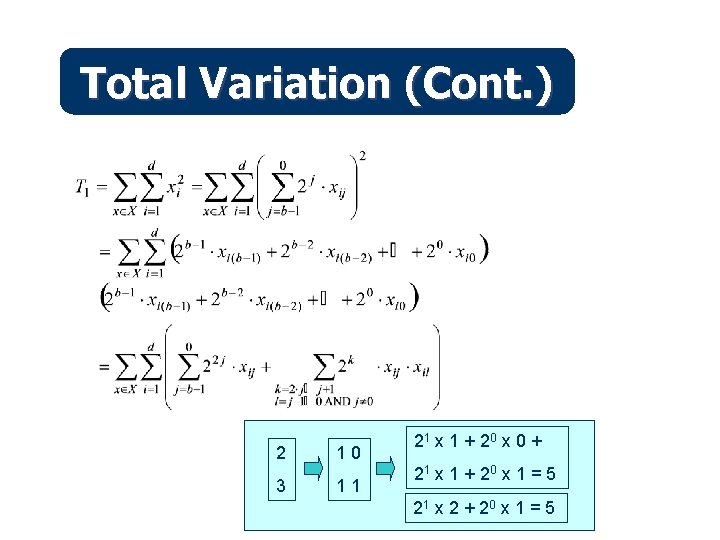

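Total Variation (Cont.)

Example: the values 2 and 3 are 10 and 11 in binary. Summing them value by value gives $2^1 \times 1 + 2^0 \times 0 + 2^1 \times 1 + 2^0 \times 1 = 5$. Summing by bit position, using the count of 1-bits in each position, gives $2^1 \times 2 + 2^0 \times 1 = 5$.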
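The same arithmetic can be checked with a short Python sketch. The function name is hypothetical, and a plain count of 1-bits stands in for a P-tree root count.

```python
def sum_from_root_counts(values, bit_width):
    """Sum a column of integers using only the number of 1-bits per bit position."""
    total = 0
    for pos in range(bit_width):
        # Stand-in for a root count: how many values have a 1 at this position.
        root_count = sum((v >> pos) & 1 for v in values)
        total += (2 ** pos) * root_count
    return total


example_total = sum_from_root_counts([2, 3], bit_width=2)
```

The point of the rewrite is that the sum depends only on one count per bit position, not on the individual values.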

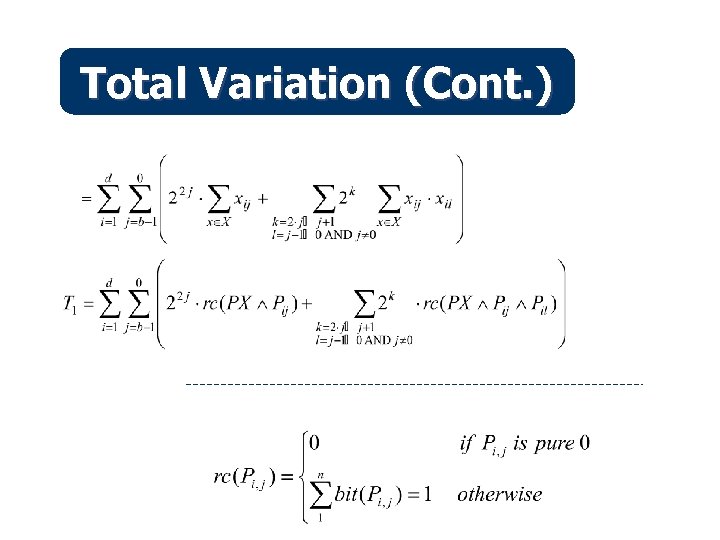

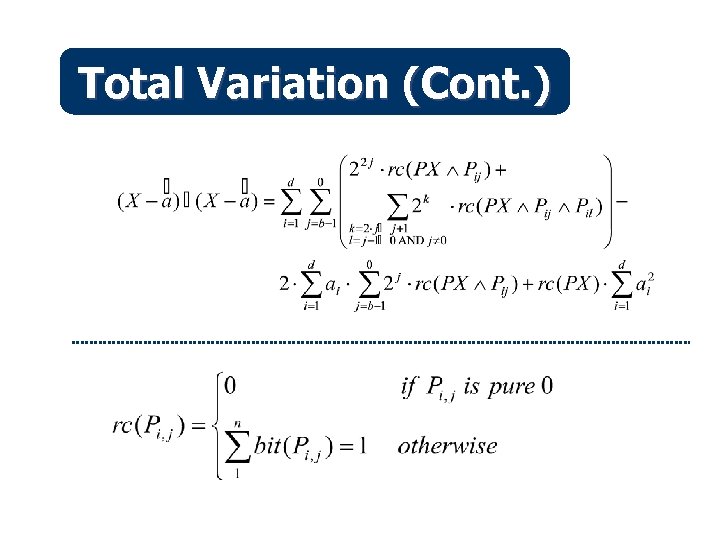

Total Variation (Cont.)

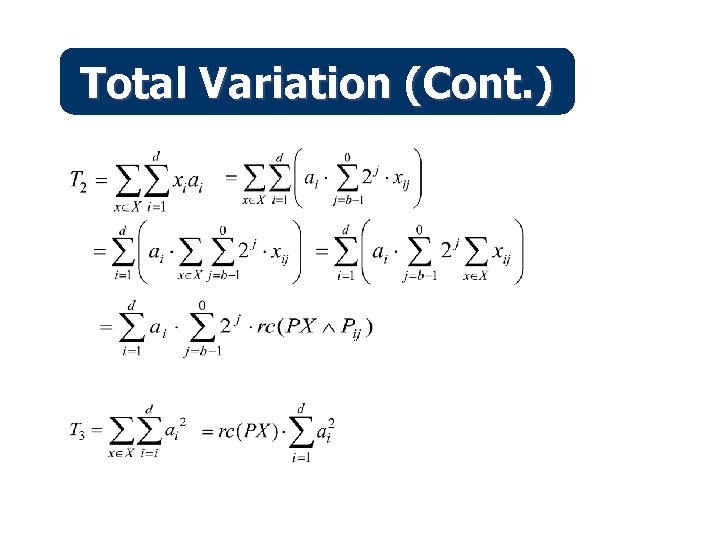

Total Variation (Cont.)

Total Variation (Cont.)

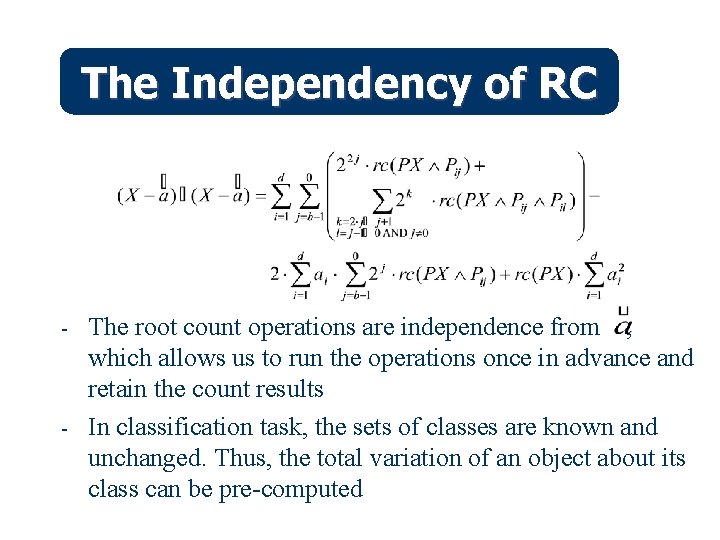

The Independence of the Root Counts (RC)
- The root count operations are independent of the point about which the total variation is measured, which allows us to run the operations once in advance and retain the count results.
- In a classification task, the sets of classes are known and unchanged. Thus, the total variation of an object about its class can be pre-computed.
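The independence can be illustrated by expanding the squared distance: TV(X, a) = Σ‖x‖² − 2·a·Σx + n‖a‖², where the two sums over X do not involve a. The sketch below is an assumption-laden illustration, not the authors' implementation: it computes those sums with ordinary Python arithmetic, whereas the slides obtain them from P-tree root counts, and all function names are hypothetical.

```python
def class_stats(points):
    """Precompute the parts of TV(X, a) that do not depend on a."""
    n = len(points)
    dims = len(points[0])
    sums = [sum(p[j] for p in points) for j in range(dims)]  # per-dimension sum of x
    sum_sq = sum(v * v for p in points for v in p)           # sum of squared norms
    return n, sums, sum_sq


def tv_from_stats(stats, a):
    """TV(X, a) from the precomputed statistics; cost depends on d, not on n."""
    n, sums, sum_sq = stats
    cross = sum(s * aj for s, aj in zip(sums, a))
    return sum_sq - 2 * cross + n * sum(aj * aj for aj in a)


def tv_direct(points, a):
    """Reference computation: total squared separation of the points about a."""
    return sum((xj - aj) ** 2 for x in points for xj, aj in zip(x, a))


points = [(2, 6), (3, 7), (2, 7)]  # hypothetical class members
stats = class_stats(points)        # computed once, in advance
tv_fast = tv_from_stats(stats, (1, 5))
tv_slow = tv_direct(points, (1, 5))
```

Once `stats` is stored, the total variation about any new point costs a handful of multiplications per dimension, regardless of how many objects the class contains.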

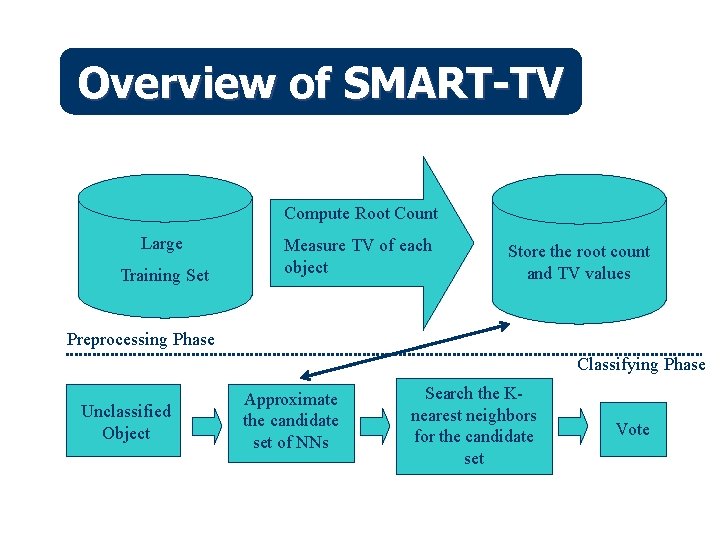

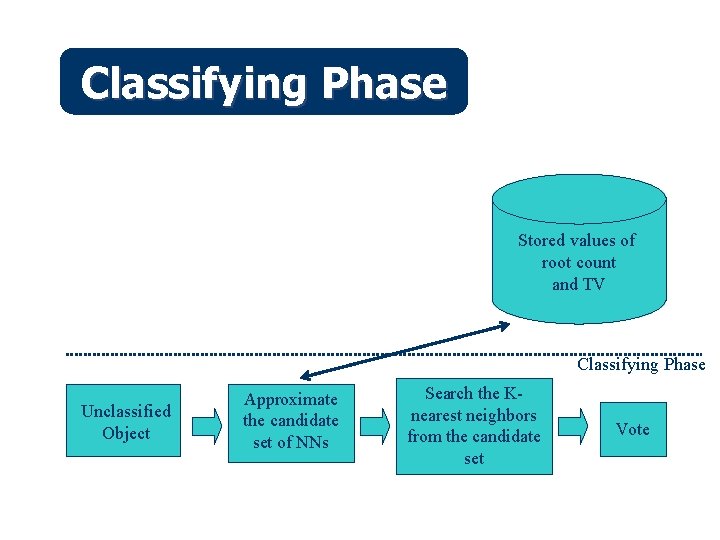

Overview of SMART-TV

Preprocessing Phase (input: large training set)
1. Compute the root counts
2. Measure the TV of each object
3. Store the root count and TV values

Classifying Phase (input: unclassified object)
1. Approximate the candidate set of NNs
2. Search the K nearest neighbors from the candidate set
3. Vote

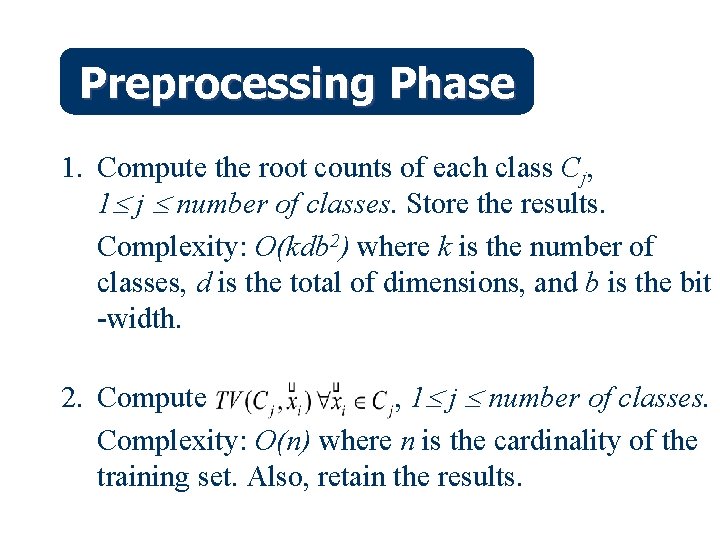

Preprocessing Phase
1. Compute the root counts of each class Cj, 1 ≤ j ≤ number of classes, and store the results. Complexity: $O(kdb^2)$, where k is the number of classes, d is the total number of dimensions, and b is the bit width.
2. Compute the total variation of each training object about its class Cj, 1 ≤ j ≤ number of classes, and retain the results. Complexity: O(n), where n is the cardinality of the training set.

Classifying Phase (inputs: stored values of root count and TV, unclassified object)
1. Approximate the candidate set of NNs
2. Search the K nearest neighbors from the candidate set
3. Vote

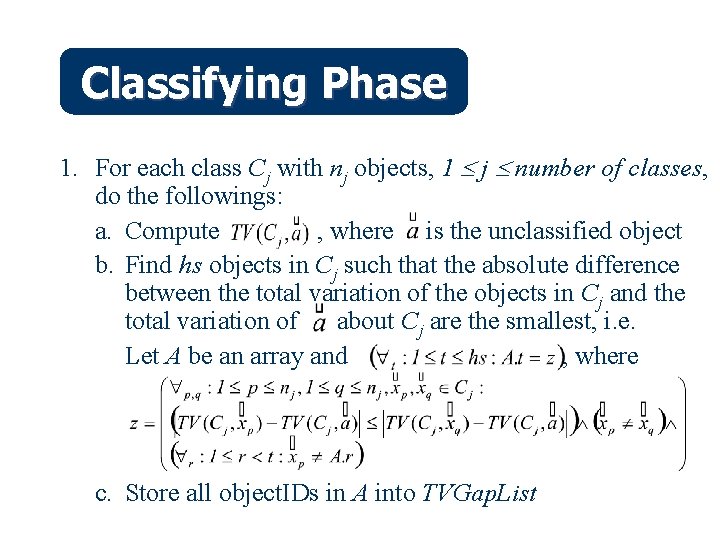

Classifying Phase
1. For each class Cj with nj objects, 1 ≤ j ≤ number of classes, do the following:
   a. Compute the total variation of the unclassified object about Cj.
   b. Find the hs objects in Cj whose total variation about Cj differs least, in absolute value, from the total variation of the unclassified object about Cj. Let A be the array holding these hs objects.
   c. Store all objectIDs in A into TVGapList.

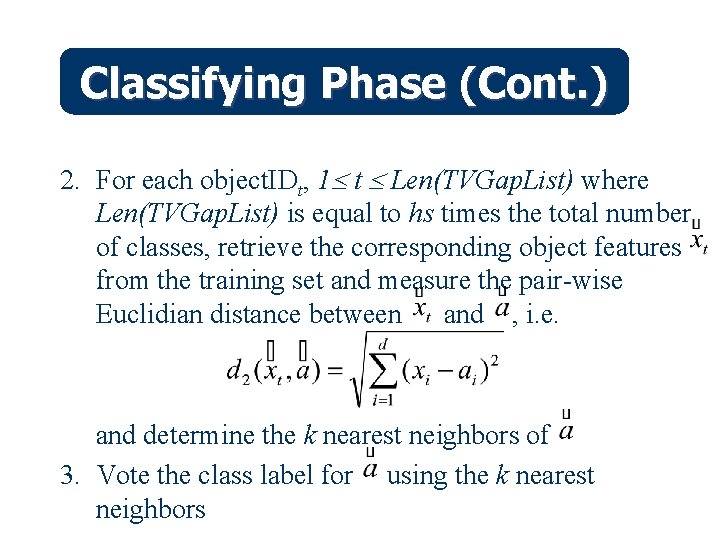

Classifying Phase (Cont.)
2. For each objectID t, 1 ≤ t ≤ Len(TVGapList), where Len(TVGapList) is equal to hs times the total number of classes, retrieve the corresponding object features from the training set and measure the pairwise Euclidean distance between the unclassified object and the retrieved object. Then determine the k nearest neighbors of the unclassified object.
3. Vote the class label for the unclassified object using the k nearest neighbors.
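The three classifying steps can be sketched end to end in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: total variation is computed directly instead of from P-tree root counts, the candidate search sorts the whole class rather than using an index, and the names `preprocess`, `classify`, and the toy data are hypothetical.

```python
import math
from collections import Counter


def total_variation(points, a):
    """Total squared separation of the points about a."""
    return sum((xj - aj) ** 2 for x in points for xj, aj in zip(x, a))


def preprocess(training):
    """For each class, store (TV of object about its class, object index)."""
    return {
        label: [(total_variation(points, p), i) for i, p in enumerate(points)]
        for label, points in training.items()
    }


def classify(training, tv_index, query, k, hs):
    # Step 1: per class, keep the hs objects with the smallest TV gap to the query.
    tv_gap_list = []
    for label, points in training.items():
        tv_query = total_variation(points, query)
        closest = sorted(tv_index[label], key=lambda e: abs(e[0] - tv_query))[:hs]
        tv_gap_list.extend((label, i) for _, i in closest)
    # Step 2: exact Euclidean distance, but only over the candidates.
    nearest = sorted(
        tv_gap_list, key=lambda c: math.dist(training[c[0]][c[1]], query)
    )[:k]
    # Step 3: majority vote among the k nearest candidates.
    votes = Counter(label for label, _ in nearest)
    return votes.most_common(1)[0][0]


# Toy training set with two well-separated classes (hypothetical data).
training = {
    "a": [(0, 0), (0, 1), (1, 0), (1, 1)],
    "b": [(10, 10), (10, 11), (11, 10), (11, 11)],
}
tv_index = preprocess(training)
label_near_a = classify(training, tv_index, (0.2, 0.3), k=3, hs=2)
label_near_b = classify(training, tv_index, (10.5, 10.4), k=3, hs=2)
```

Only hs objects per class reach the distance computation, which is where the speed-up over a full KNN scan is expected to come from.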

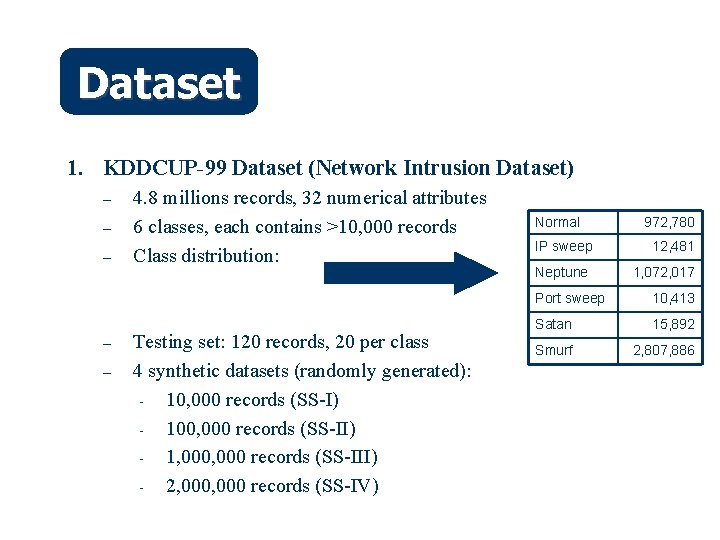

Dataset
1. KDDCUP-99 Dataset (Network Intrusion Dataset)
   - 4.8 million records, 32 numerical attributes
   - 6 classes, each containing more than 10,000 records
   - Class distribution:

     | Class | Records |
     |---|---|
     | Normal | 972,780 |
     | IP sweep | 12,481 |
     | Neptune | 1,072,017 |
     | Port sweep | 10,413 |
     | Satan | 15,892 |
     | Smurf | 2,807,886 |

   - Testing set: 120 records, 20 per class
   - 4 synthetic datasets (randomly generated):
     - 10,000 records (SS-I)
     - 100,000 records (SS-II)
     - 1,000,000 records (SS-III)
     - 2,000,000 records (SS-IV)

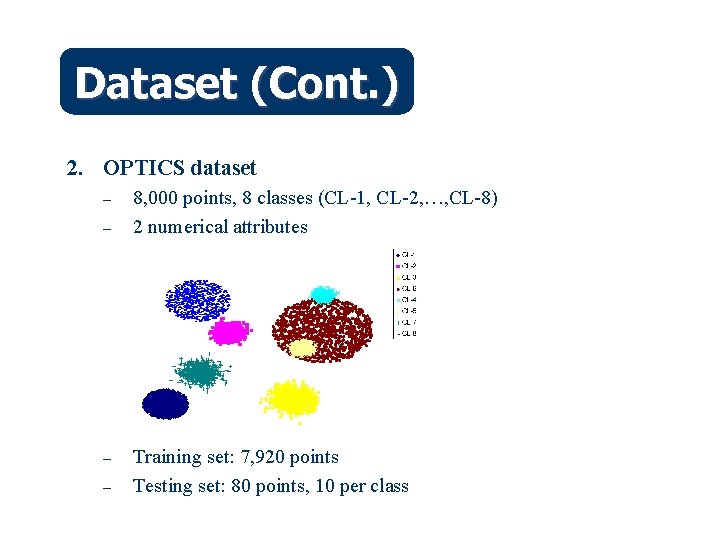

Dataset (Cont.)
2. OPTICS dataset
   - 8,000 points, 8 classes (CL-1, CL-2, …, CL-8)
   - 2 numerical attributes
   - Training set: 7,920 points
   - Testing set: 80 points, 10 per class

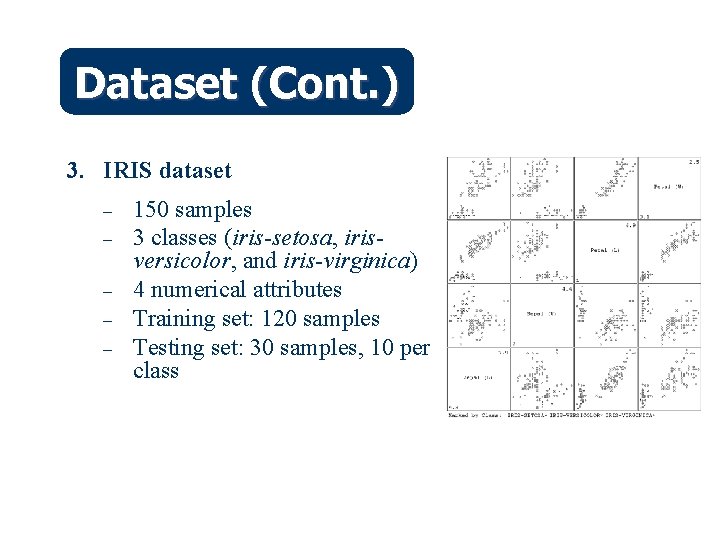

Dataset (Cont.)
3. IRIS dataset
   - 150 samples
   - 3 classes (iris-setosa, iris-versicolor, and iris-virginica)
   - 4 numerical attributes
   - Training set: 120 samples
   - Testing set: 30 samples, 10 per class

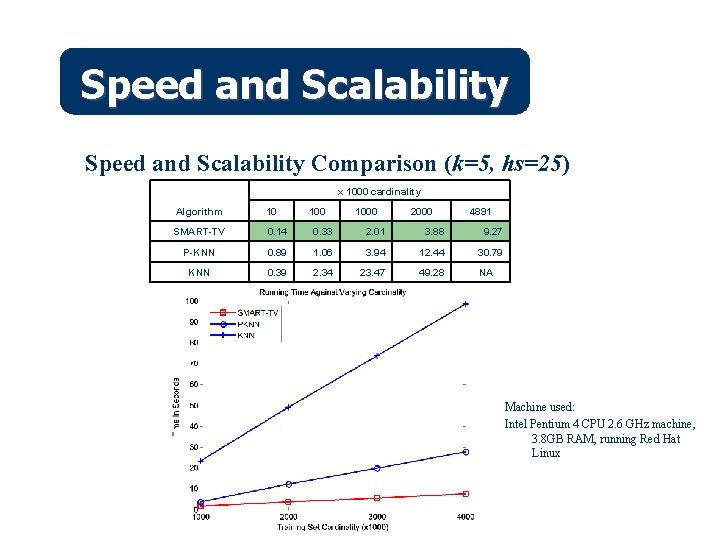

Speed and Scalability Comparison (k=5, hs=25)

| Algorithm | 10 | 100 | 1000 | 2000 | 4891 |
|---|---|---|---|---|---|
| SMART-TV | 0.14 | 0.33 | 2.01 | 3.88 | 9.27 |
| P-KNN | 0.89 | 1.06 | 3.94 | 12.44 | 30.79 |
| KNN | 0.39 | 2.34 | 23.47 | 49.28 | NA |

Column headers give the training set cardinality in thousands (x 1000).

Machine used: Intel Pentium 4 CPU 2.6 GHz, 3.8 GB RAM, running Red Hat Linux

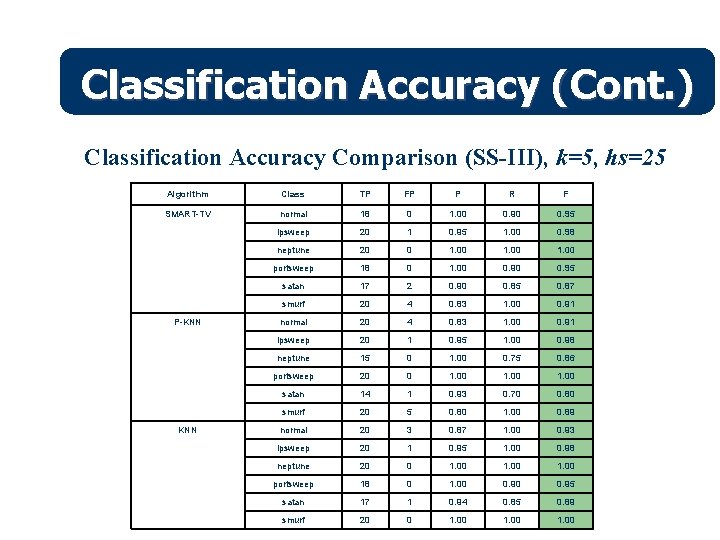

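Classification Accuracy (Cont.)

Classification Accuracy Comparison (SS-III), k=5, hs=25

| Algorithm | Class | TP | FP | P | R | F |
|---|---|---|---|---|---|---|
| SMART-TV | normal | 18 | 0 | 1.00 | | 0.95 |
| SMART-TV | ipsweep | 20 | 1 | 0.95 | 1.00 | 0.98 |
| SMART-TV | neptune | 20 | 0 | 1.00 | 1.00 | 1.00 |
| SMART-TV | portsweep | 18 | 0 | 1.00 | | 0.95 |
| SMART-TV | satan | 17 | 2 | 0.90 | 0.85 | 0.87 |
| SMART-TV | smurf | 20 | 4 | 0.83 | 1.00 | 0.91 |
| P-KNN | normal | 20 | 4 | 0.83 | 1.00 | 0.91 |
| P-KNN | ipsweep | 20 | 1 | 0.95 | 1.00 | 0.98 |
| P-KNN | neptune | 15 | 0 | 1.00 | 0.75 | 0.86 |
| P-KNN | portsweep | 20 | 0 | 1.00 | 1.00 | 1.00 |
| P-KNN | satan | 14 | 1 | 0.93 | 0.70 | 0.80 |
| P-KNN | smurf | 20 | 5 | 0.80 | 1.00 | 0.89 |
| KNN | normal | 20 | 3 | 0.87 | 1.00 | 0.93 |
| KNN | ipsweep | 20 | 1 | 0.95 | 1.00 | 0.98 |
| KNN | neptune | 20 | 0 | 1.00 | 1.00 | 1.00 |
| KNN | portsweep | 18 | 0 | 1.00 | | 0.95 |
| KNN | satan | 17 | 1 | 0.94 | 0.85 | 0.89 |
| KNN | smurf | 20 | 0 | 1.00 | 1.00 | 1.00 |

TP = true positives, FP = false positives, P = precision, R = recall, F = F-measure.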
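The P, R, and F columns can be related to TP and FP with a small Python sketch. Two assumptions are made here and are not stated on the slide: each class has 20 test records (as in the network intrusion testing set), and F is the balanced F-measure. The function name is hypothetical.

```python
def precision_recall_f(tp, fp, class_size):
    """Precision, recall, and balanced F-measure for one class.

    class_size is the number of test records that truly belong to the class
    (assumed to be 20 here).
    """
    precision = tp / (tp + fp)
    recall = tp / class_size
    f_measure = 2 * precision * recall / (precision + recall)
    return precision, recall, f_measure


# A row with TP = 20 and FP = 1, such as the ipsweep rows.
p, r, f = precision_recall_f(tp=20, fp=1, class_size=20)
rounded = (round(p, 2), round(r, 2), round(f, 2))
```

For this row the rounded values agree with the 0.95, 1.00, and 0.98 reported in the comparison.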

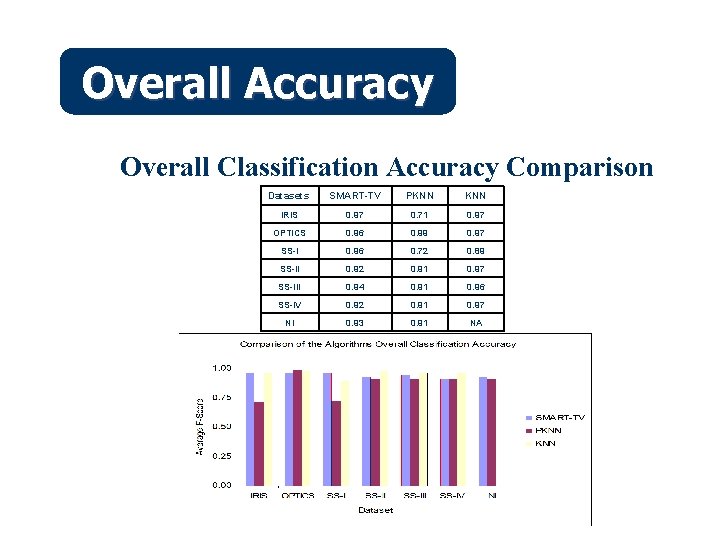

Overall Accuracy

Overall Classification Accuracy Comparison

| Dataset | SMART-TV | P-KNN | KNN |
|---|---|---|---|
| IRIS | 0.97 | 0.71 | 0.97 |
| OPTICS | 0.96 | 0.99 | 0.97 |
| SS-I | 0.96 | 0.72 | 0.89 |
| SS-II | 0.92 | 0.91 | 0.97 |
| SS-III | 0.94 | 0.91 | 0.96 |
| SS-IV | 0.92 | 0.91 | 0.97 |
| NI | 0.93 | 0.91 | NA |

Image Preprocessing
- We extracted color and texture features from the original pixels of the images.
- Color features: we used the HSV color space and quantized the images into 54 bins, i.e., (6 x 3 x 3) bins.
- Texture features: we used multi-resolution Gabor filters with two scales and four orientations (see B. S. Manjunath, IEEE Trans. on Pattern Analysis and Machine Intelligence, 1996).
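A color histogram of this kind could be computed roughly as follows. The sketch assumes a uniform 6 x 3 x 3 split of hue, saturation, and value, which yields the 54 color features listed for the image dataset; the slides do not say how the bin boundaries were chosen. The function names are hypothetical, and `colorsys` is the Python standard library module.

```python
import colorsys

H_BINS, S_BINS, V_BINS = 6, 3, 3  # assumed split: 6 x 3 x 3 = 54 bins


def hsv_bin(r, g, b):
    """Map an 8-bit RGB pixel to one of 54 HSV bins (uniform quantization)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    # min() keeps the maximum component value 1.0 inside the last bin.
    hb = min(int(h * H_BINS), H_BINS - 1)
    sb = min(int(s * S_BINS), S_BINS - 1)
    vb = min(int(v * V_BINS), V_BINS - 1)
    return (hb * S_BINS + sb) * V_BINS + vb


def color_histogram(pixels):
    """Normalized 54-bin color histogram of a non-empty list of RGB pixels."""
    hist = [0] * (H_BINS * S_BINS * V_BINS)
    for r, g, b in pixels:
        hist[hsv_bin(r, g, b)] += 1
    return [count / len(pixels) for count in hist]


# Two hypothetical pixels: pure red and black.
features = color_histogram([(255, 0, 0), (0, 0, 0)])
```

The resulting 54 values would form the color part of an image's feature vector; the Gabor texture features are not covered by this sketch.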

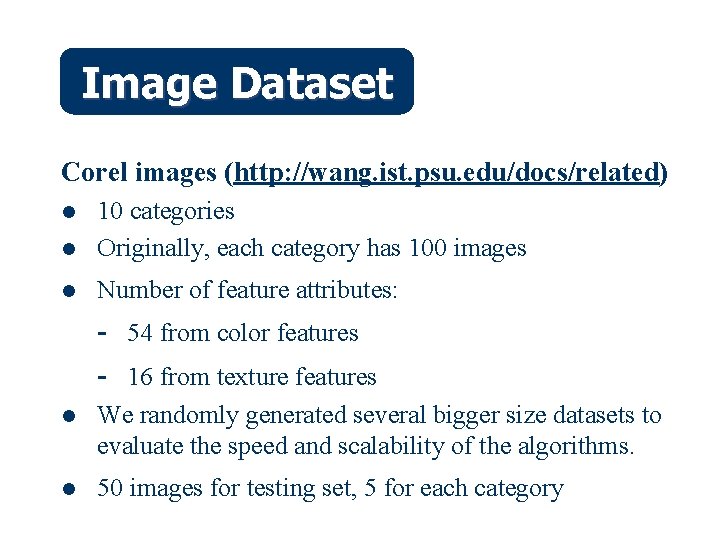

Image Dataset
- Corel images (http://wang.ist.psu.edu/docs/related)
- 10 categories; originally, each category has 100 images
- Number of feature attributes:
  - 54 from color features
  - 16 from texture features
- We randomly generated several larger datasets to evaluate the speed and scalability of the algorithms.
- 50 images for the testing set, 5 for each category

Image Dataset

Example on Corel Dataset

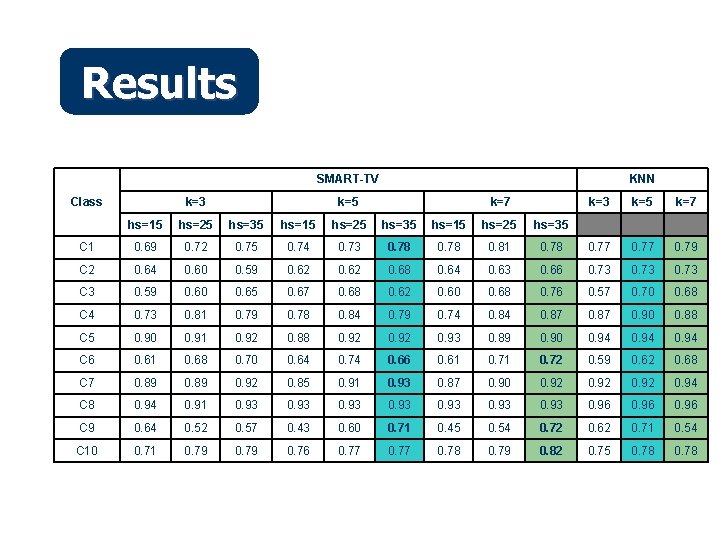

Results

Per-class values (C1 to C10) for SMART-TV with k = 3, 5, 7 and hs = 15, 25, 35, and for KNN with k = 3, 5, 7:
- C1: 0.69, 0.72, 0.75, 0.74, 0.73, 0.78, 0.81, 0.78, 0.77, 0.79
- C2: 0.64, 0.60, 0.59, 0.62, 0.68, 0.64, 0.63, 0.66, 0.73
- C3: 0.59, 0.60, 0.65, 0.67, 0.68, 0.62, 0.60, 0.68, 0.76, 0.57, 0.70, 0.68
- C4: 0.73, 0.81, 0.79, 0.78, 0.84, 0.79, 0.74, 0.87, 0.90, 0.88
- C5: 0.90, 0.91, 0.92, 0.88, 0.92, 0.93, 0.89, 0.90, 0.94
- C6: 0.61, 0.68, 0.70, 0.64, 0.74, 0.66, 0.61, 0.72, 0.59, 0.62, 0.68
- C7: 0.89, 0.92, 0.85, 0.91, 0.93, 0.87, 0.90, 0.92, 0.94
- C8: 0.94, 0.91, 0.93, 0.96
- C9: 0.64, 0.52, 0.57, 0.43, 0.60, 0.71, 0.45, 0.54, 0.72, 0.62, 0.71, 0.54
- C10: 0.71, 0.79, 0.76, 0.77, 0.78, 0.79, 0.82, 0.75, 0.78

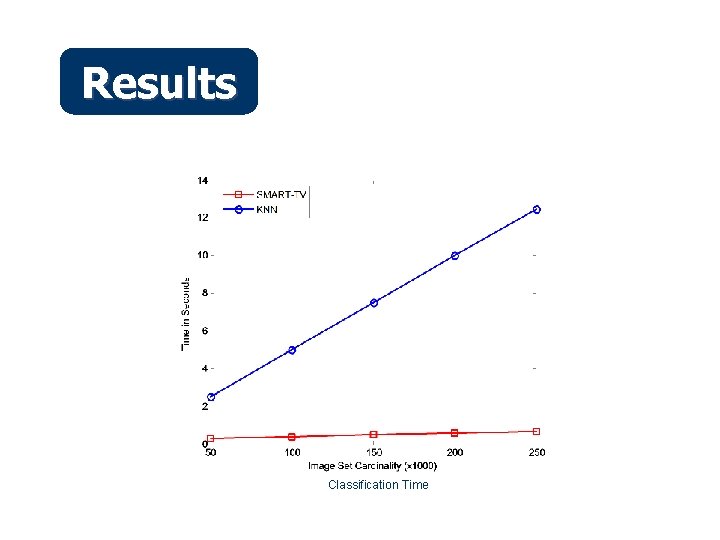

Results: Classification Time

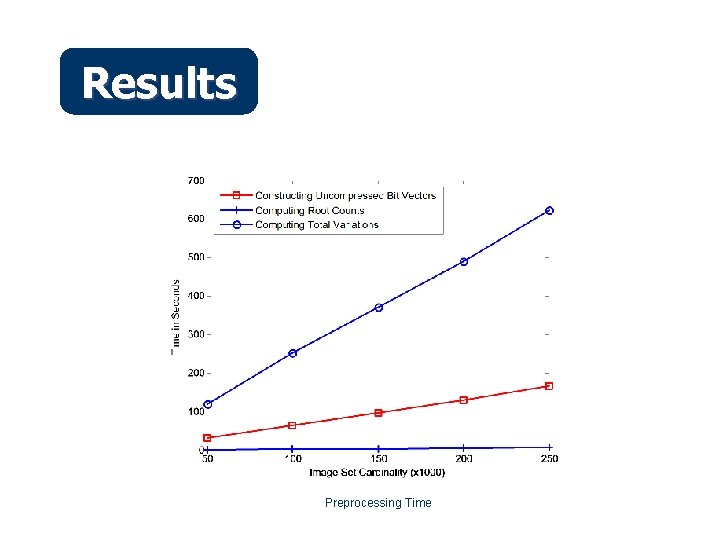

Results: Preprocessing Time

Summary
- SMART-TV is a nearest-neighbor-based classification algorithm that starts its classification steps by approximating a set of candidate nearest neighbors.
- The absolute difference of total variation between the data points in the training set and the unclassified point is used to approximate the candidates.
- The algorithm is fast, and it scales well to very large datasets. Its classification accuracy is comparable to that of the KNN algorithm.
