Multiplicative Data Perturbations Outline o o Introduction Multiplicative

Multiplicative Data Perturbations

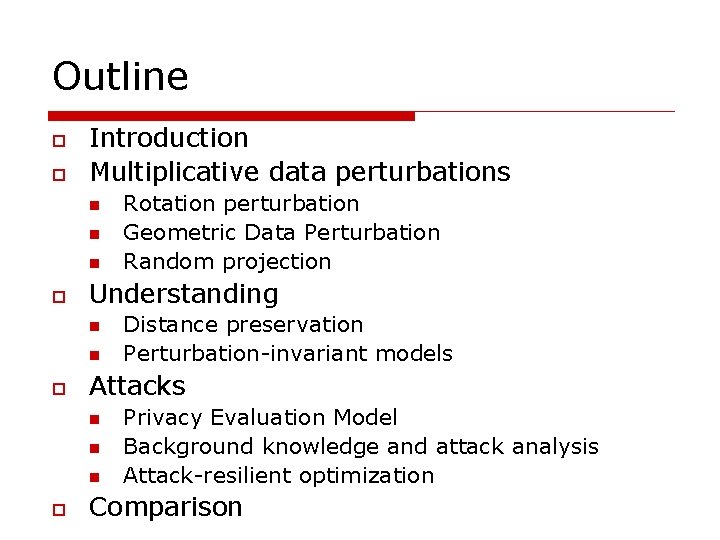

Outline o o Introduction Multiplicative data perturbations n n n o Understanding n n o Distance preservation Perturbation-invariant models Attacks n n n o Rotation perturbation Geometric Data Perturbation Random projection Privacy Evaluation Model Background knowledge and attack analysis Attack-resilient optimization Comparison

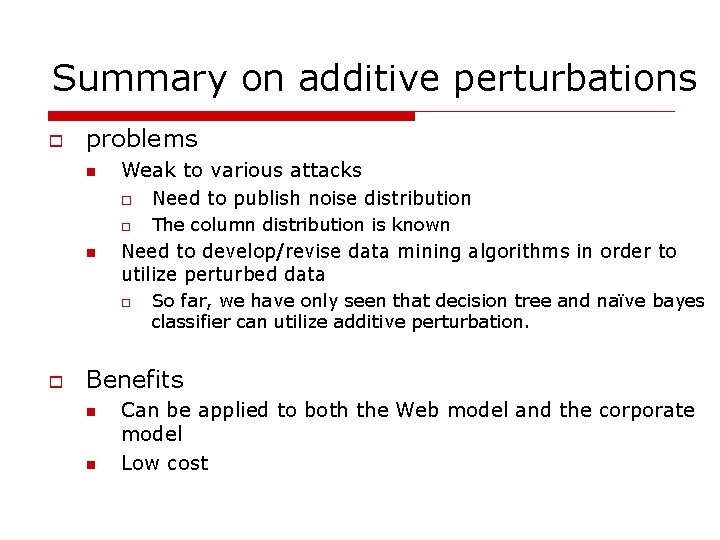

Summary on additive perturbations o problems n n Weak to various attacks o Need to publish noise distribution o The column distribution is known Need to develop/revise data mining algorithms in order to utilize perturbed data o o So far, we have only seen that decision tree and naïve bayes classifier can utilize additive perturbation. Benefits n n Can be applied to both the Web model and the corporate model Low cost

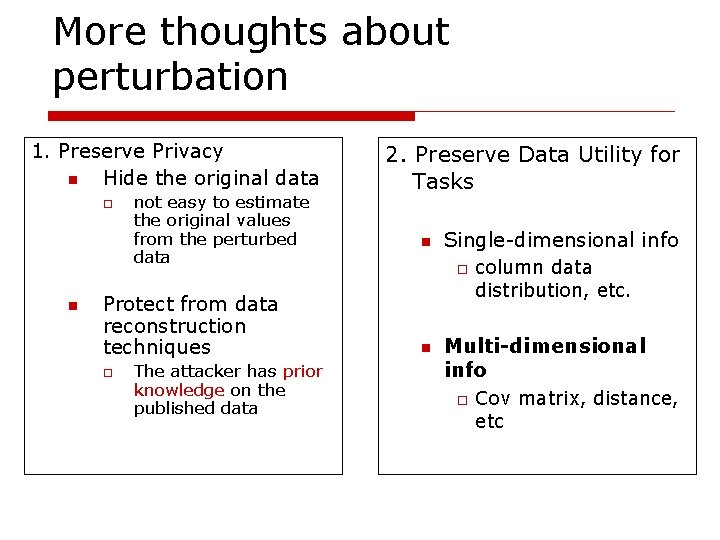

More thoughts about perturbation 1. Preserve Privacy n Hide the original data o n not easy to estimate the original values from the perturbed data Protect from data reconstruction techniques o The attacker has prior knowledge on the published data 2. Preserve Data Utility for Tasks n Single-dimensional info o n column data distribution, etc. Multi-dimensional info o Cov matrix, distance, etc

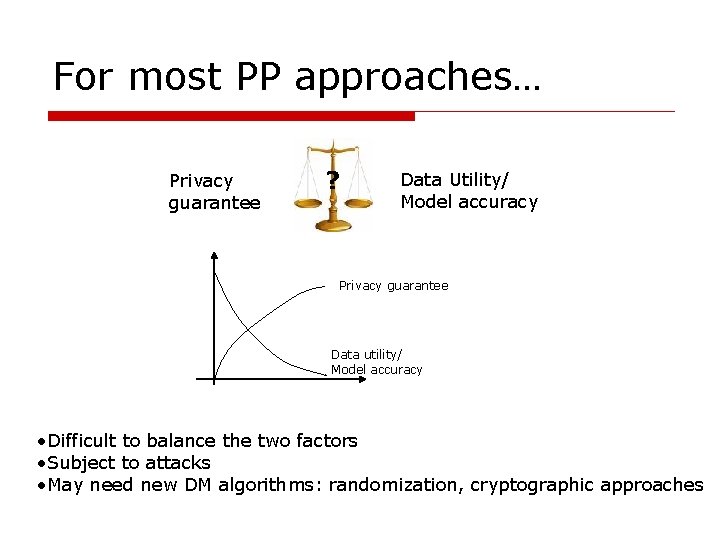

For most PP approaches… Privacy guarantee ? Data Utility/ Model accuracy Privacy guarantee Data utility/ Model accuracy • Difficult to balance the two factors • Subject to attacks • May need new DM algorithms: randomization, cryptographic approaches

Multiplicative perturbations o Geometric data perturbation (GDP) n n n o Rotation data perturbation Translation data perturbation Noise addition Random projection perturbation(RPP)

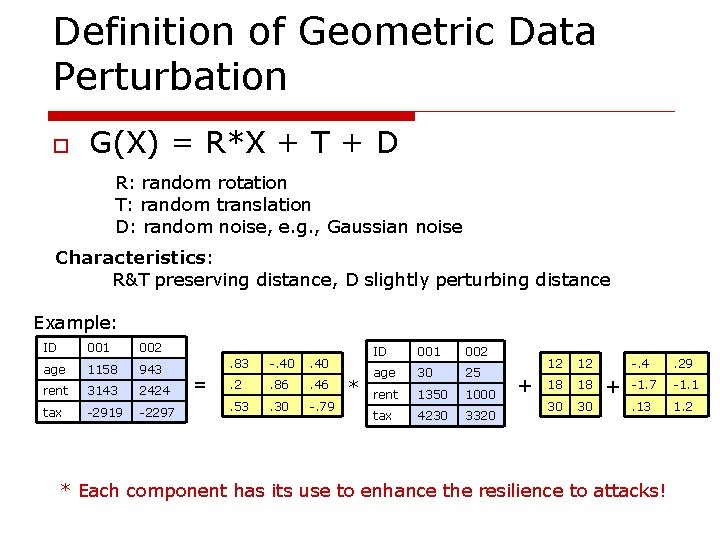

Definition of Geometric Data Perturbation o G(X) = R*X + T + D R: random rotation T: random translation D: random noise, e. g. , Gaussian noise Characteristics: R&T preserving distance, D slightly perturbing distance Example: ID 001 002 age 1158 943 rent 3143 2424 tax -2919 -2297 = . 83 -. 40 . 2 . 86 . 46 . 53 . 30 -. 79 * ID 001 002 age 30 25 rent 1350 1000 tax 4230 3320 + 12 12 18 18 30 30 + -. 4 . 29 -1. 7 -1. 1 . 13 1. 2 * Each component has its use to enhance the resilience to attacks!

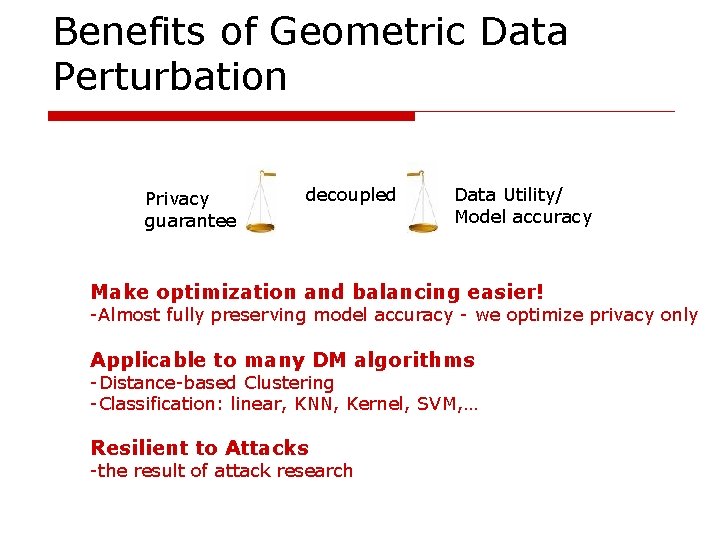

Benefits of Geometric Data Perturbation Privacy guarantee decoupled Data Utility/ Model accuracy Make optimization and balancing easier! -Almost fully preserving model accuracy - we optimize privacy only Applicable to many DM algorithms -Distance-based Clustering -Classification: linear, KNN, Kernel, SVM, … Resilient to Attacks -the result of attack research

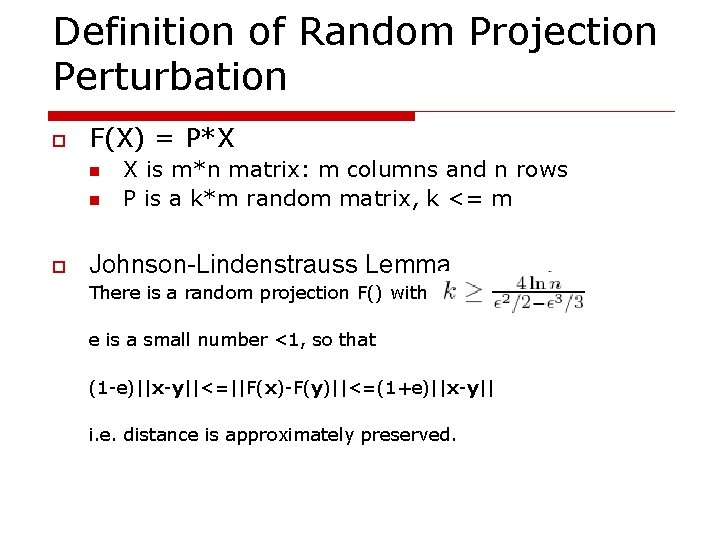

Definition of Random Projection Perturbation o F(X) = P*X n n o X is m*n matrix: m columns and n rows P is a k*m random matrix, k <= m Johnson-Lindenstrauss Lemma There is a random projection F() with e is a small number <1, so that (1 -e)||x-y||<=||F(x)-F(y)||<=(1+e)||x-y|| i. e. distance is approximately preserved.

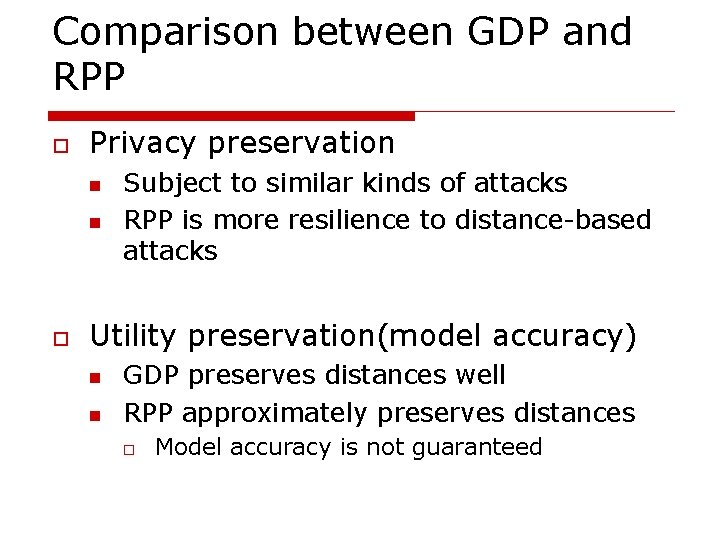

Comparison between GDP and RPP o Privacy preservation n n o Subject to similar kinds of attacks RPP is more resilience to distance-based attacks Utility preservation(model accuracy) n n GDP preserves distances well RPP approximately preserves distances o Model accuracy is not guaranteed

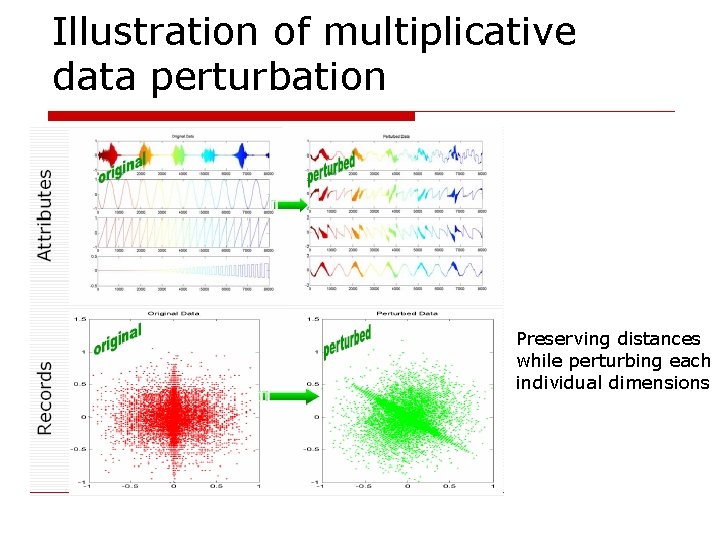

Illustration of multiplicative data perturbation Preserving distances while perturbing each individual dimensions

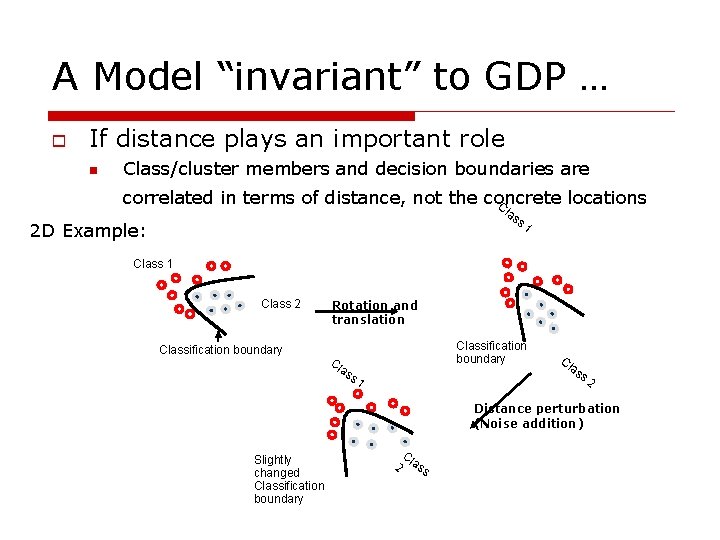

A Model “invariant” to GDP … o If distance plays an important role n Class/cluster members and decision boundaries are correlated in terms of distance, not the concrete locations C la s s 1 2 D Example: Class 1 Class 2 Classification boundary Rotation and translation Classification boundary Cl as s 1 Cl as s 2 Distance perturbation (Noise addition) Slightly changed Classification boundary C 2 lass

Applicable DM algorithms o o Modeling methods that depend on Euclidean geometric properties Models “invariant” to GDP n n all Euclidean distance based clustering algorithms Classification algorithms o o o K Nearest Neighbors Kernel methods Linear classifier Support vector machines Most regression models And potentially more …

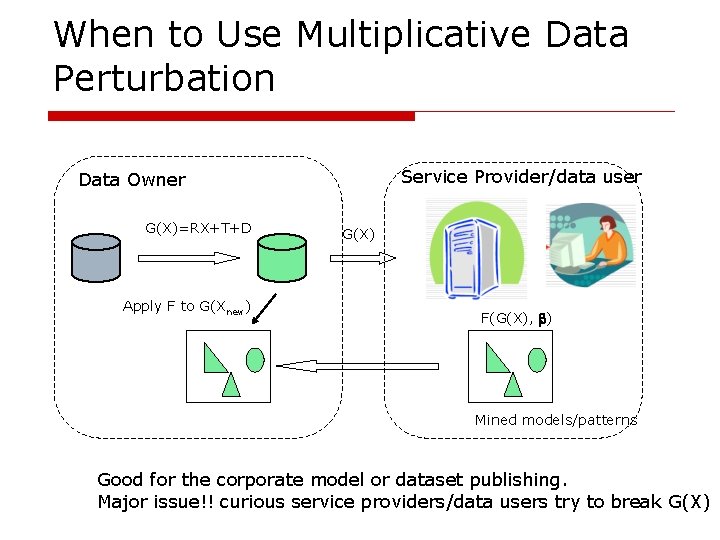

When to Use Multiplicative Data Perturbation Service Provider/data user Data Owner G(X)=RX+T+D Apply F to G(Xnew) G(X) F(G(X), ) Mined models/patterns Good for the corporate model or dataset publishing. Major issue!! curious service providers/data users try to break G(X)

Major issue: attacks!! o Many existing PP methods are found not so effective n n o when attacks are considered Ex: various data reconstruction algorithms to the random noise addition approach [Huang 05][Guo 06] Prior knowledge n Service provider Y has “PRIOR KNOWLEDGE” about X’s domain and nothing stops Y from using it to infer information in the sanitized data

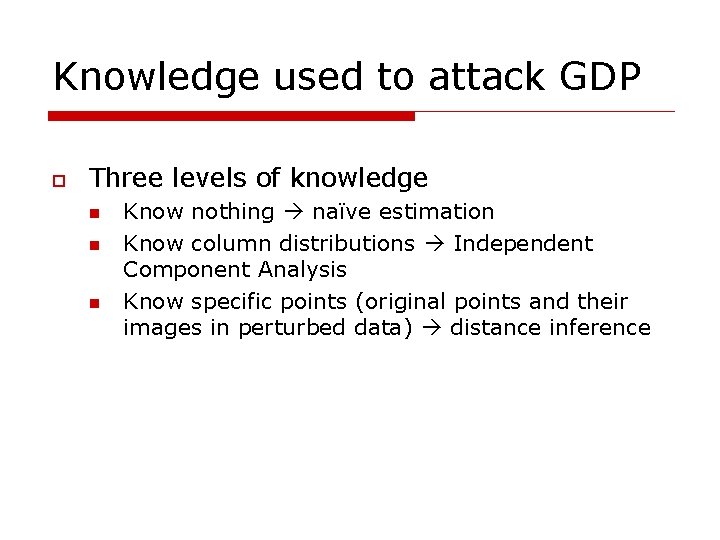

Knowledge used to attack GDP o Three levels of knowledge n n n Know nothing naïve estimation Know column distributions Independent Component Analysis Know specific points (original points and their images in perturbed data) distance inference

Methodology of attack analysis o An attack is an estimate of the original data Original O(x 1, x 2, …, xn) vs. estimate P(x’ 1, x’ 2, …, x’n) How similar are these two series? One of the effective methods is to evaluate the variance/standard deviation of the difference [Rakesh 00] Var (P–O) or std(P-O), P: estimated, O: original

Two multi-column privacy metrics qi : privacy guarantee for column i qi = std(Pi–Oi), Oi normalized column values, Pi estimated column values Min privacy guarantee: the weakest link of all columns min { qi, i=1. . d} Avg privacy guarantee: overall privacy guarantee 1/d qi

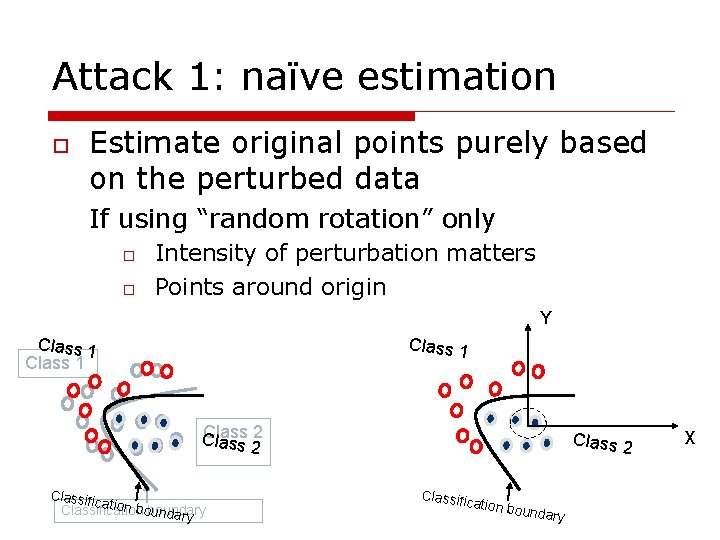

Attack 1: naïve estimation o Estimate original points purely based on the perturbed data If using “random rotation” only o o Intensity of perturbation matters Points around origin Y Class 1 Class 2 Classific ation bo Classification boundary Class 2 Classific ation bo undary X

Counter naïve estimation o Maximize intensity n n o Based on formal analysis of “rotation intensity” Method to maximize intensity o Fast_Opt algorithm in GDP “Random translation” T n n Hide origin Increase difficulty of attacking! o Need to estimate R first, in order to find out T

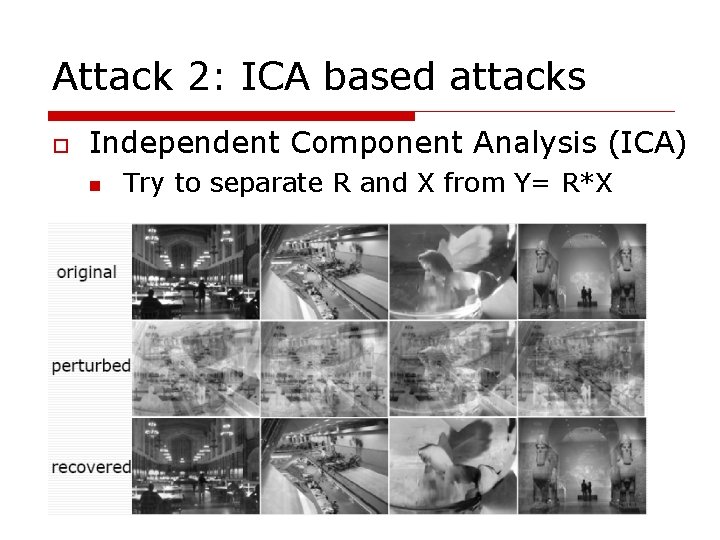

Attack 2: ICA based attacks o Independent Component Analysis (ICA) n Try to separate R and X from Y= R*X

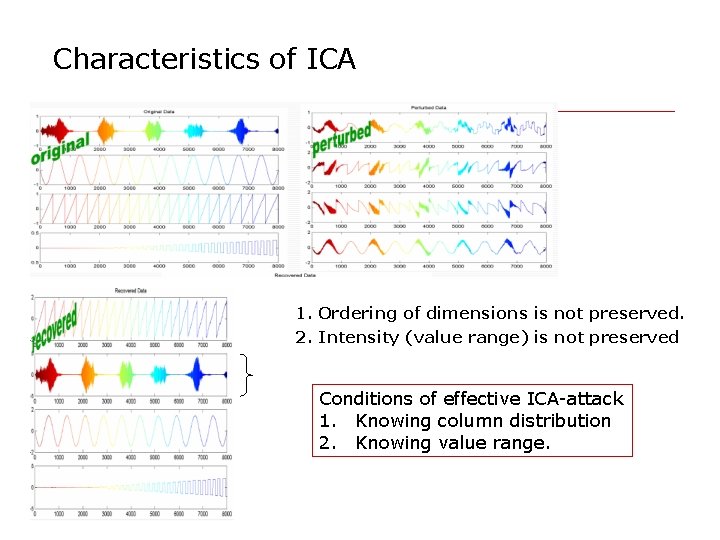

Characteristics of ICA 1. Ordering of dimensions is not preserved. 2. Intensity (value range) is not preserved Conditions of effective ICA-attack 1. Knowing column distribution 2. Knowing value range.

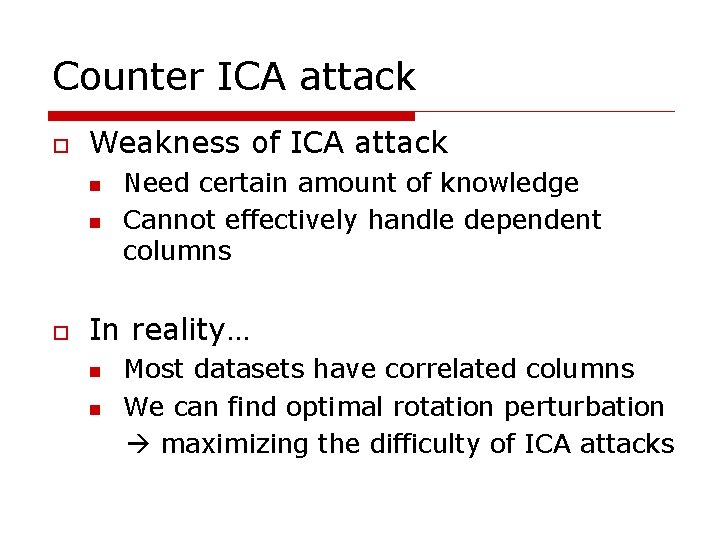

Counter ICA attack o Weakness of ICA attack n n o Need certain amount of knowledge Cannot effectively handle dependent columns In reality… n n Most datasets have correlated columns We can find optimal rotation perturbation maximizing the difficulty of ICA attacks

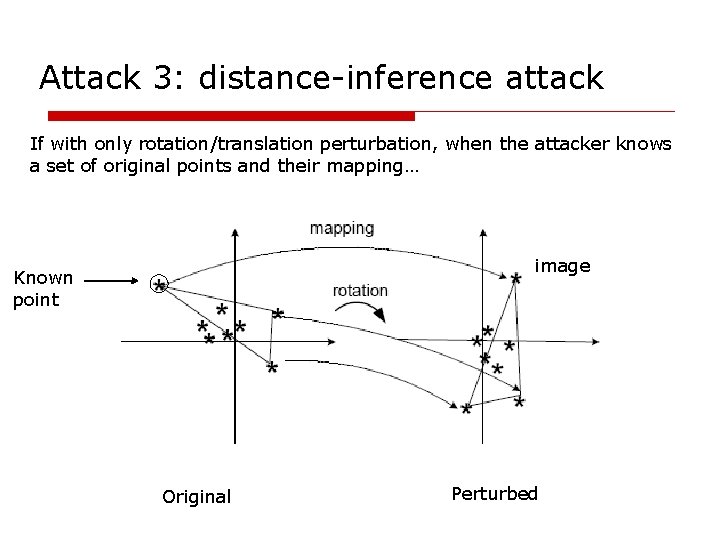

Attack 3: distance-inference attack If with only rotation/translation perturbation, when the attacker knows a set of original points and their mapping… image Known point Original Perturbed

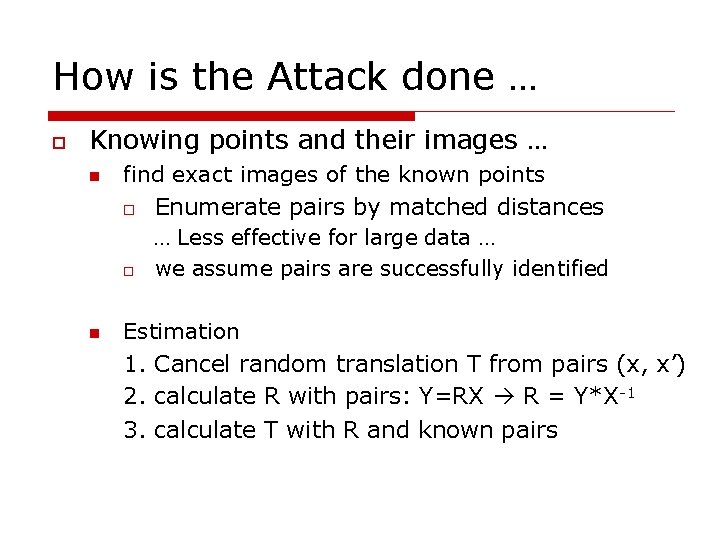

How is the Attack done … o Knowing points and their images … n n find exact images of the known points o Enumerate pairs by matched distances … Less effective for large data … o we assume pairs are successfully identified Estimation 1. Cancel random translation T from pairs (x, x’) 2. calculate R with pairs: Y=RX R = Y*X-1 3. calculate T with R and known pairs

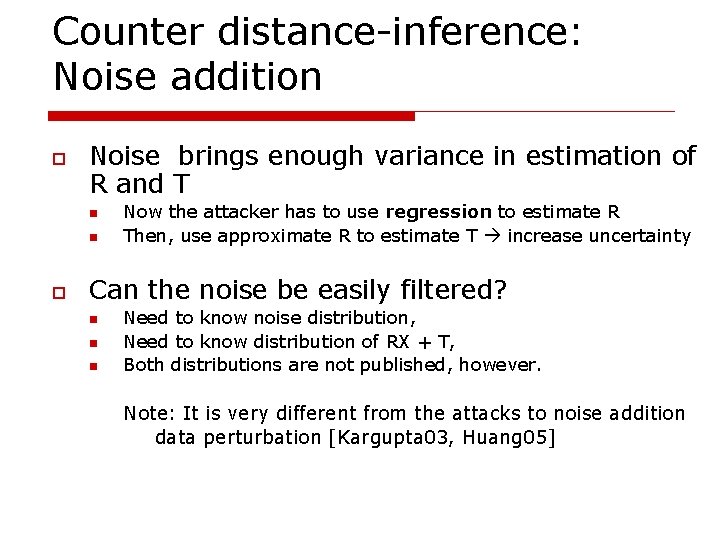

Counter distance-inference: Noise addition o Noise brings enough variance in estimation of R and T n n o Now the attacker has to use regression to estimate R Then, use approximate R to estimate T increase uncertainty Can the noise be easily filtered? n n n Need to know noise distribution, Need to know distribution of RX + T, Both distributions are not published, however. Note: It is very different from the attacks to noise addition data perturbation [Kargupta 03, Huang 05]

Attackers with more knowledge? o What if attackers know large amount of original records? n Able to accurately estimate covariance matrix, column distribution, and column range, etc. , of the original data Methods PCA, AK_ICA, …, etc can be used n What do we do? n You have released so much original information… Stop releasing any kind of data anymore

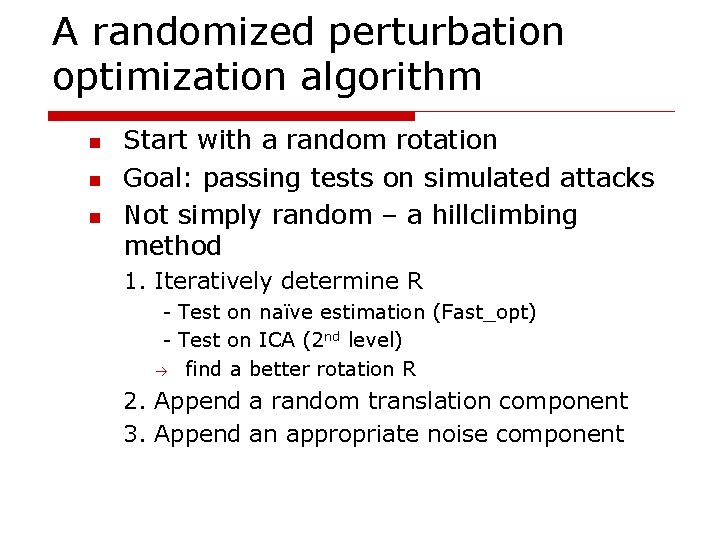

A randomized perturbation optimization algorithm n n n Start with a random rotation Goal: passing tests on simulated attacks Not simply random – a hillclimbing method 1. Iteratively determine R - Test on naïve estimation (Fast_opt) - Test on ICA (2 nd level) find a better rotation R 2. Append a random translation component 3. Append an appropriate noise component

Comparison on methods o Privacy preservation n n In general, RPP should be better than GDP Evaluate the effect of attacks for GDP o o Utility preservation n GDP: o o n R and T exactly preserve distances, The effect of D needs experimental evaluation RPP o o ICA and distance perturbation need experimental evaluation # of perturbed dimensions vs. utility Datasets n 12 datasets from UCI Data Repository

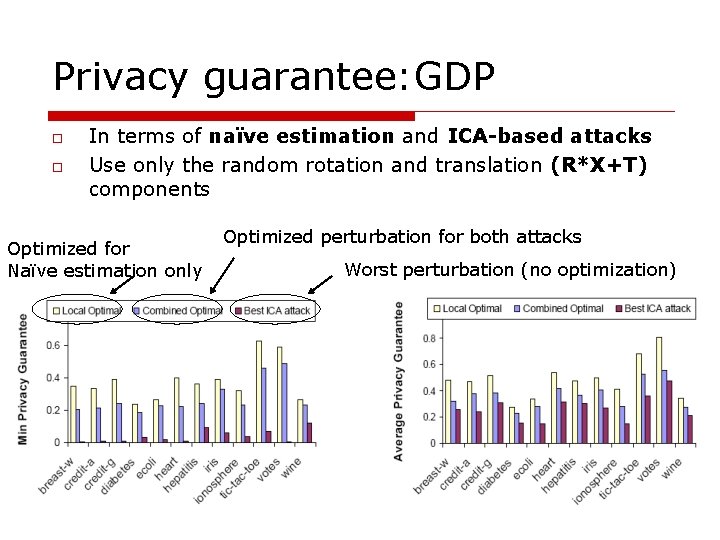

Privacy guarantee: GDP o o In terms of naïve estimation and ICA-based attacks Use only the random rotation and translation (R*X+T) components Optimized for Naïve estimation only Optimized perturbation for both attacks Worst perturbation (no optimization)

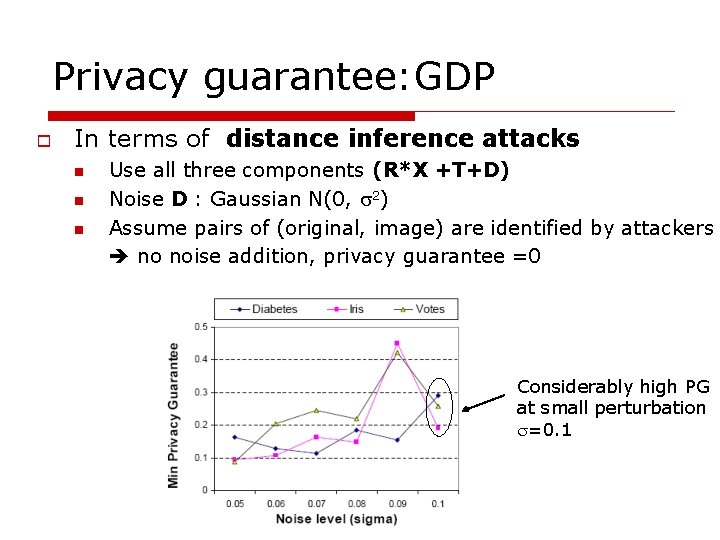

Privacy guarantee: GDP o In terms of distance inference attacks n n n Use all three components (R*X +T+D) Noise D : Gaussian N(0, 2) Assume pairs of (original, image) are identified by attackers no noise addition, privacy guarantee =0 Considerably high PG at small perturbation =0. 1

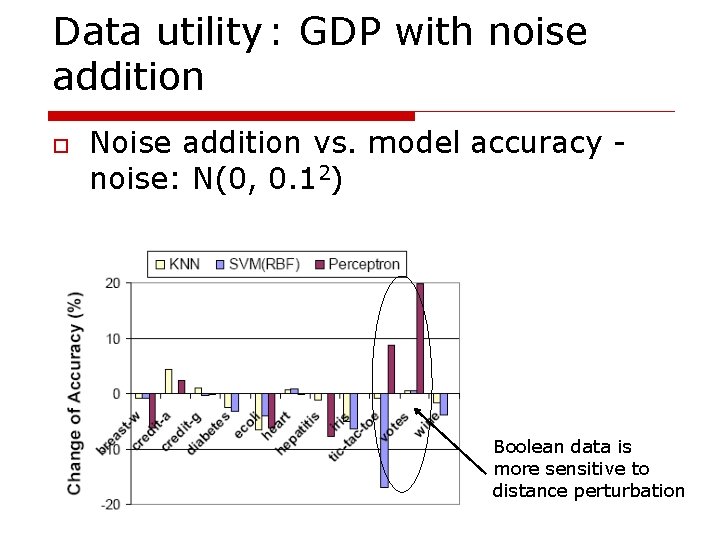

Data utility : GDP with noise addition o Noise addition vs. model accuracy noise: N(0, 0. 12) Boolean data is more sensitive to distance perturbation

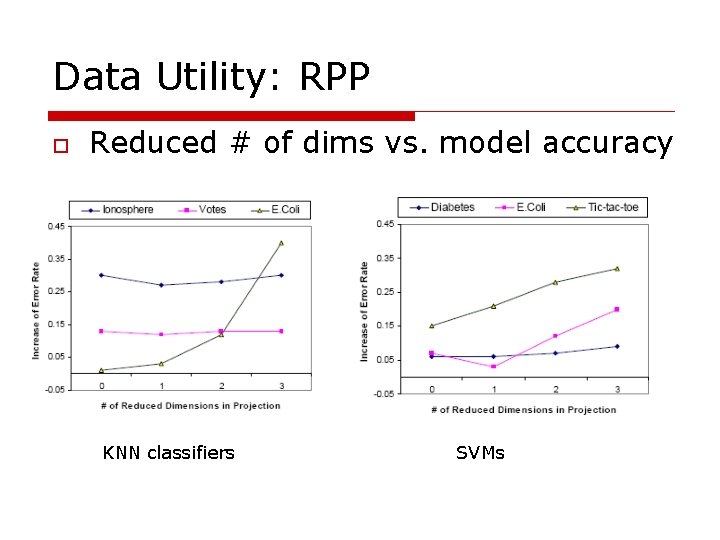

Data Utility: RPP o Reduced # of dims vs. model accuracy KNN classifiers SVMs

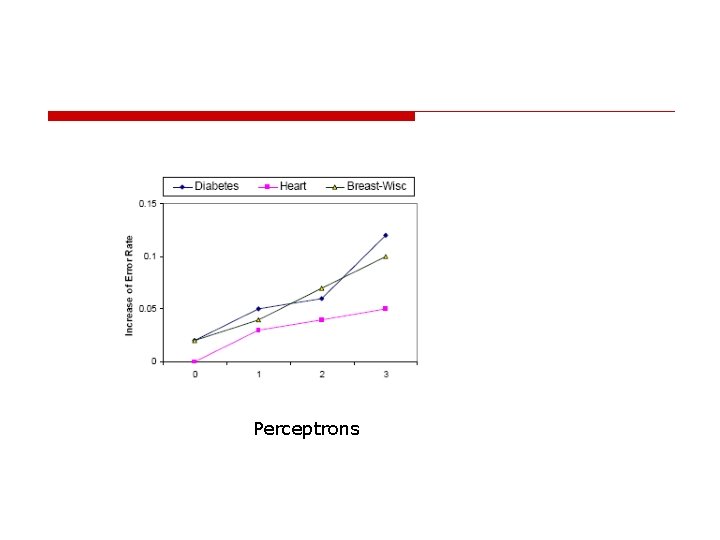

Perceptrons

- Slides: 34