Morphological Smoothing and Extrapolation of Word Embeddings Ryan Cotterell, Hinrich Schütze, Jason Eisner

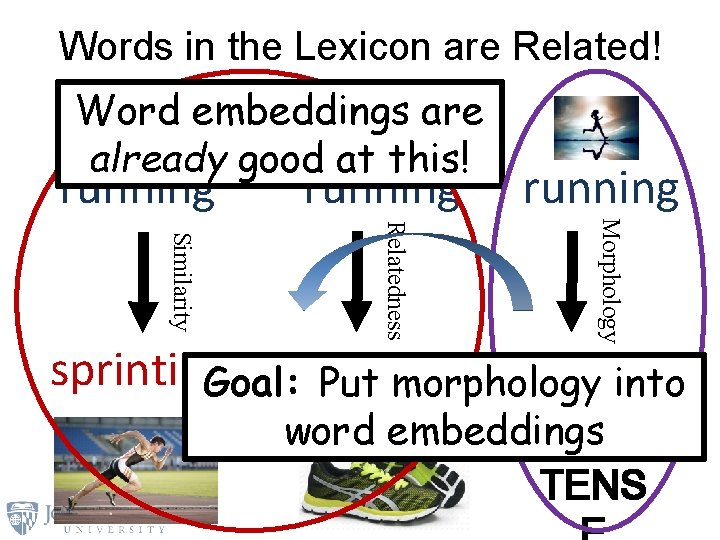

Words in the Lexicon are Related!

Word embeddings are already good at this!

[Figure: “running” is linked to “sprinting” (similarity), to “shoes” (relatedness), and to “ran” (morphology: PAST TENSE).]

Goal: Put morphology into word embeddings

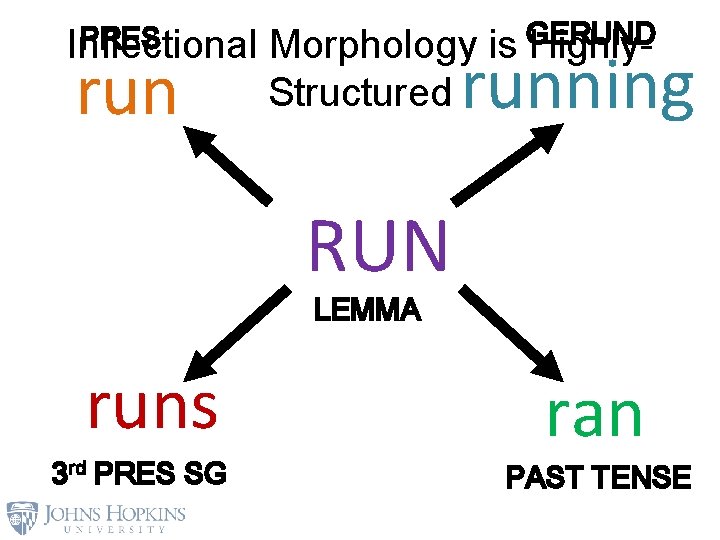

Inflectional Morphology is Highly-Structured

- LEMMA: RUN
- PRES: run
- 3rd PRES SG: runs
- GERUND: running
- PAST TENSE: ran

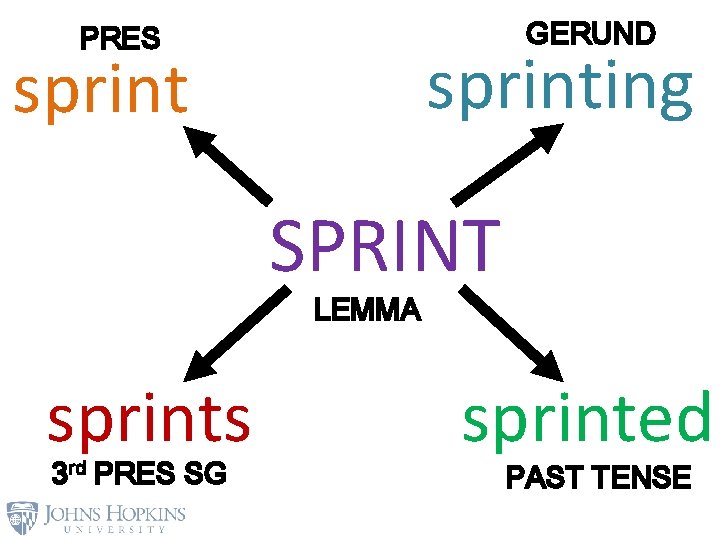

- LEMMA: SPRINT
- PRES: sprint
- 3rd PRES SG: sprints
- GERUND: sprinting
- PAST TENSE: sprinted

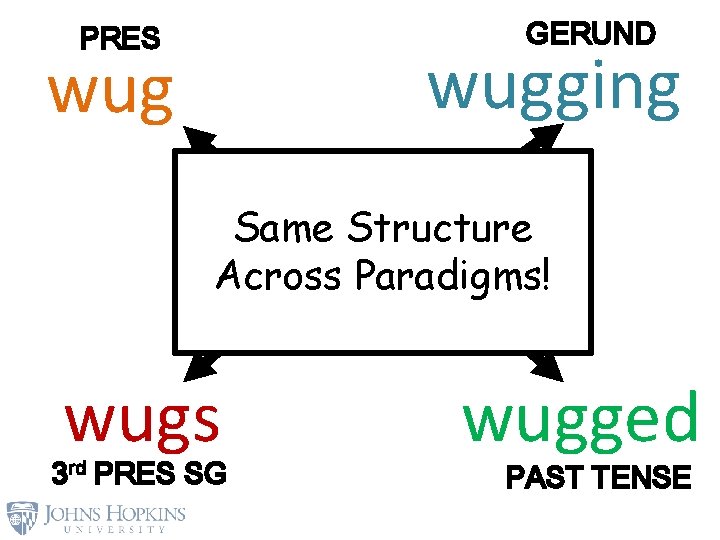

Same Structure Across Paradigms!

- LEMMA: WUG
- PRES: wug
- 3rd PRES SG: wugs
- GERUND: wugging
- PAST TENSE: wugged

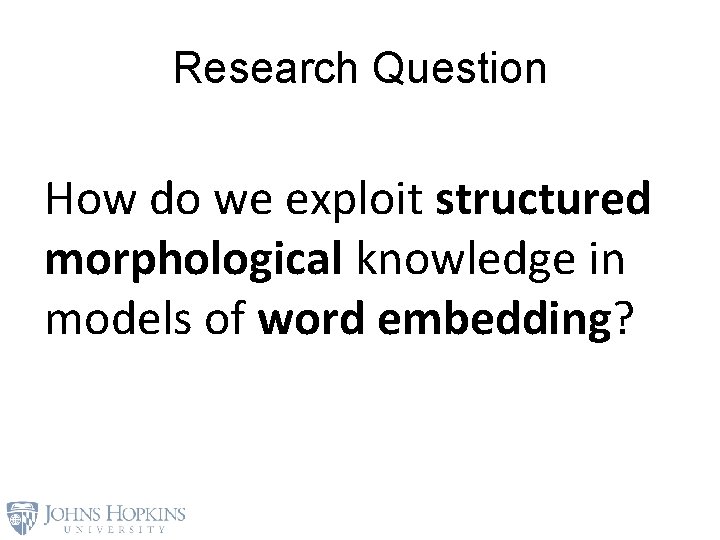

Research Question How do we exploit structured morphological knowledge in models of word embedding?

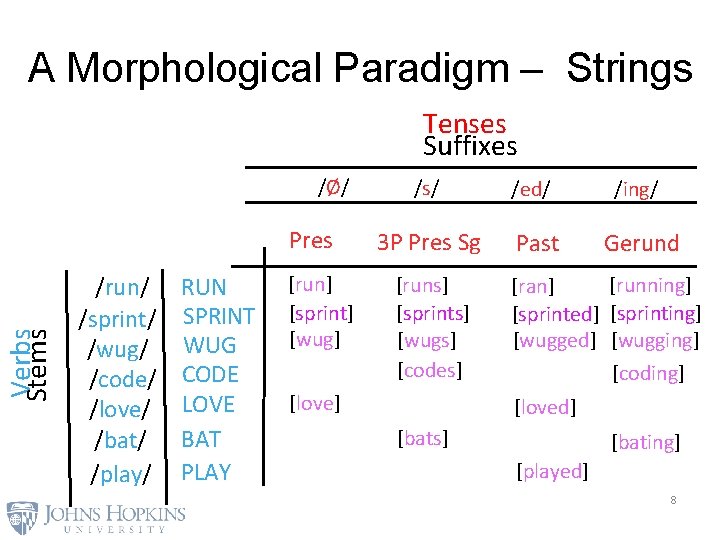

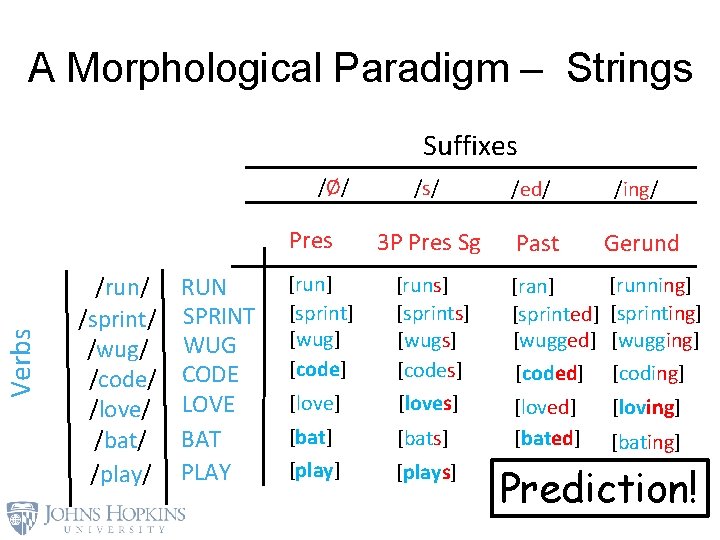

A Morphological Paradigm – Strings

- Suffixes (tenses): /Ø/ Pres, /s/ 3P Pres Sg, /ed/ Past, /ing/ Gerund
- Stems (verbs): /run/ RUN, /sprint/ SPRINT, /wug/ WUG, /code/ CODE, /love/ LOVE, /bat/ BAT, /play/ PLAY
- Observed forms:
  - Pres: [run], [sprint], [wug], [love]
  - 3P Pres Sg: [runs], [sprints], [wugs], [codes], [bats]
  - Past: [ran], [sprinted], [wugged], [loved], [played]
  - Gerund: [running], [sprinting], [wugging], [coding], [bating]

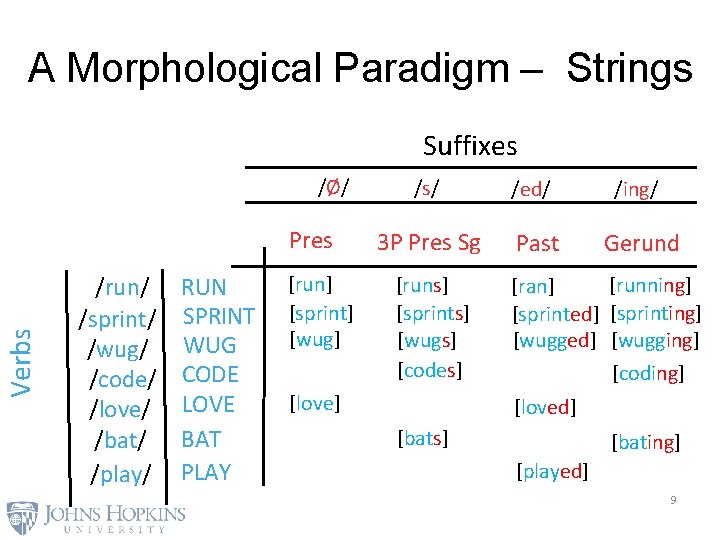

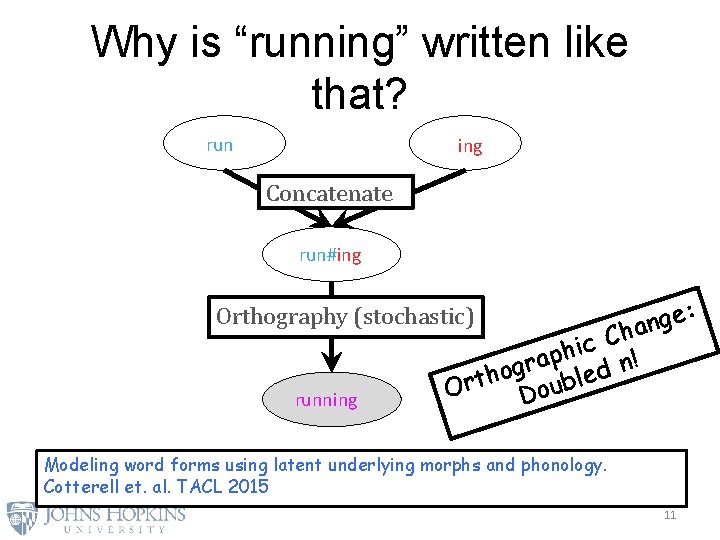

Why is “running” written like that?

- Concatenate: run + ing → run#ing
- Orthography (stochastic): run#ing → running
- Orthographic Change: Doubled n!

Modeling word forms using latent underlying morphs and phonology. Cotterell et al., TACL 2015

A Morphological Paradigm – Strings

- Suffixes: /Ø/ Pres, /s/ 3P Pres Sg, /ed/ Past, /ing/ Gerund
- Stems (verbs): /run/ RUN, /sprint/ SPRINT, /wug/ WUG, /code/ CODE, /love/ LOVE, /bat/ BAT, /play/ PLAY
- Forms:
  - Pres: [run], [sprint], [wug], [code], [love]
  - 3P Pres Sg: [runs], [sprints], [wugs], [codes], [bats], [plays]
  - Past: [ran], [sprinted], [wugged], [coded], [loved], [bated], [played]
  - Gerund: [running], [sprinting], [wugging], [coding], [loving], [bating], [playing]

Prediction!

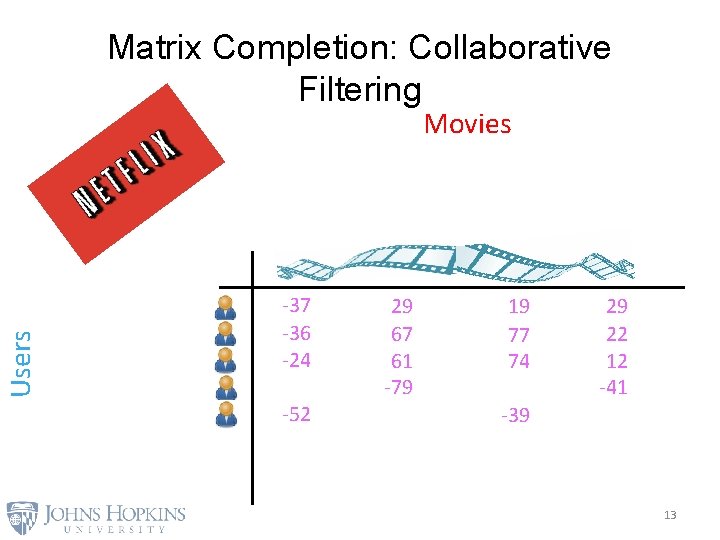

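Matrix Completion: Collaborative Filtering

Partially observed ratings (rows: movies, columns: users; “?” marks a missing entry):

|         | User 1 | User 2 | User 3 | User 4 | User 5 |
|---------|--------|--------|--------|--------|--------|
| Movie 1 | -37    | -36    | -24    | ?      | -52    |
| Movie 2 | 29     | 67     | 61     | -79    | ?      |
| Movie 3 | 19     | 77     | 74     | ?      | -39    |
| Movie 4 | 29     | 22     | 12     | -41    | ?      |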

![Matrix Completion: Collaborative Filtering](http://slidetodoc.com/presentation_image_h2/011e0392ce52d2e98ad02ee9cc287a22/image-12.jpg)

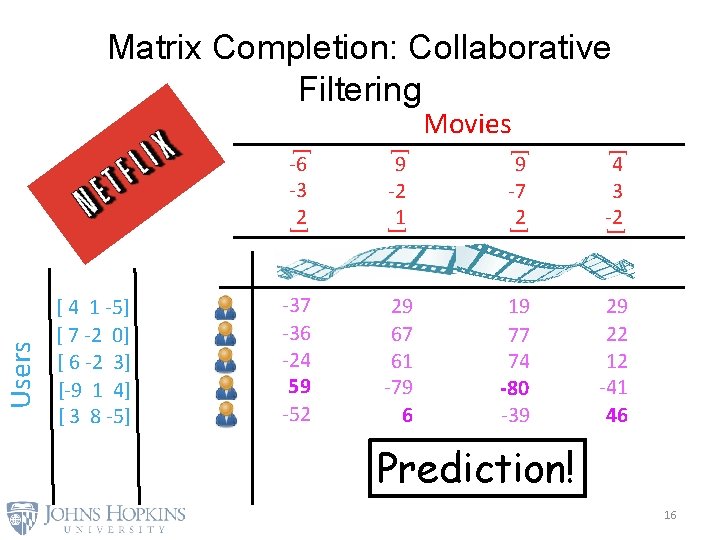

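Matrix Completion: Collaborative Filtering

- User vectors: [4 1 -5], [7 -2 0], [6 -2 3], [-9 1 4], [3 8 -5]
- Movie vectors: [-6 -3 2], [9 -2 1], [9 -7 2], [4 3 -2]
- Observed ratings (one row per movie, one column per user, “?” = missing): [-37 -36 -24 ? -52], [29 67 61 -79 ?], [19 77 74 ? -39], [29 22 12 -41 ?]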

![Matrix Completion: Collaborative Filtering](http://slidetodoc.com/presentation_image_h2/011e0392ce52d2e98ad02ee9cc287a22/image-13.jpg)

Matrix Completion: Collaborative Filtering

- Dot product: [1, -4, 3] · [-5, 2, 1] = -10
- After adding Gaussian noise: -11

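Matrix Completion: Collaborative Filtering

Prediction! The missing ratings (in bold) are filled in from the user vectors [4 1 -5], [7 -2 0], [6 -2 3], [-9 1 4], [3 8 -5] and the movie vectors [-6 -3 2], [9 -2 1], [9 -7 2], [4 3 -2]:

|         | User 1 | User 2 | User 3 | User 4 | User 5 |
|---------|--------|--------|--------|--------|--------|
| Movie 1 | -37    | -36    | -24    | **59** | -52    |
| Movie 2 | 29     | 67     | 61     | -79    | **6**  |
| Movie 3 | 19     | 77     | 74     | **-80** | -39   |
| Movie 4 | 29     | 22     | 12     | -41    | **46** |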
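The numbers on these collaborative-filtering slides are consistent with a rank-3 factorization in which every rating is the dot product of a movie vector and a user vector. The following minimal NumPy sketch checks this on the slide's own numbers; the variable names (`users`, `movies`, `observed`) are chosen here for illustration, and the Gaussian noise term is left out.

```python
import numpy as np

# Latent vectors as shown on the slides (one row per user / per movie).
users = np.array([[4, 1, -5], [7, -2, 0], [6, -2, 3], [-9, 1, 4], [3, 8, -5]])
movies = np.array([[-6, -3, 2], [9, -2, 1], [9, -7, 2], [4, 3, -2]])

# Observed ratings (rows: movies, columns: users); NaN marks a missing cell.
nan = np.nan
observed = np.array([
    [-37, -36, -24, nan, -52],
    [29, 67, 61, -79, nan],
    [19, 77, 74, nan, -39],
    [29, 22, 12, -41, nan],
])

# Every cell is predicted as the dot product of a movie and a user vector.
predicted = movies @ users.T

# The factorization reproduces all observed cells exactly ...
mask = ~np.isnan(observed)
assert np.array_equal(predicted[mask], observed[mask])

# ... and supplies values for the missing ones.
completed = np.where(mask, observed, predicted)
print(completed)
```

The same idea carries over to the morphological paradigm: stems play the role of users, suffixes the role of movies, and the unseen cells are the word forms to predict.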

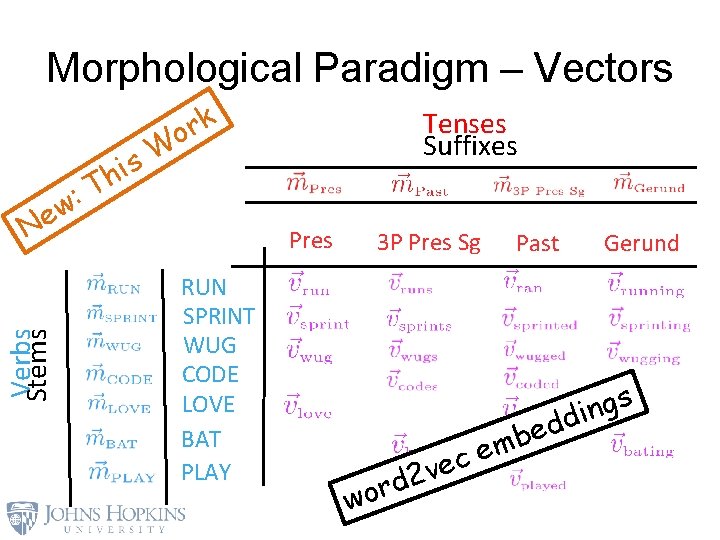

Morphological Paradigm – Vectors

- Stems (verbs): RUN, SPRINT, WUG, CODE, LOVE, BAT, PLAY
- Suffixes (tenses): Pres, 3P Pres Sg, Past, Gerund
- Labels on the figure: “word2vec embeddings” and “New: This Work”

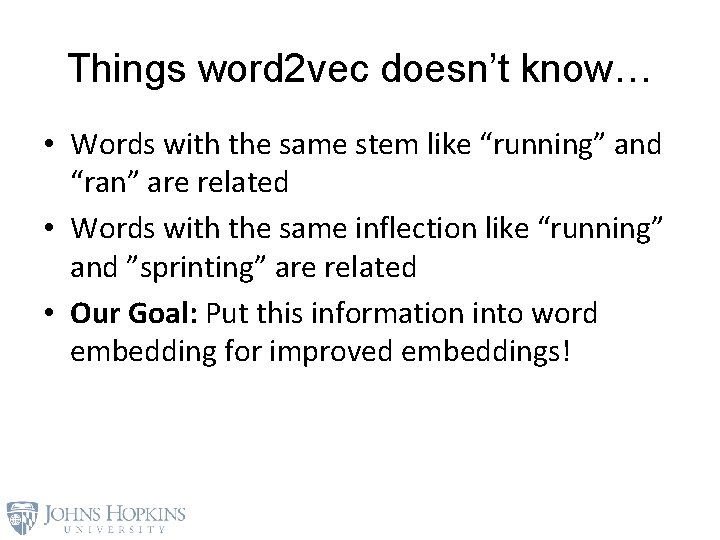

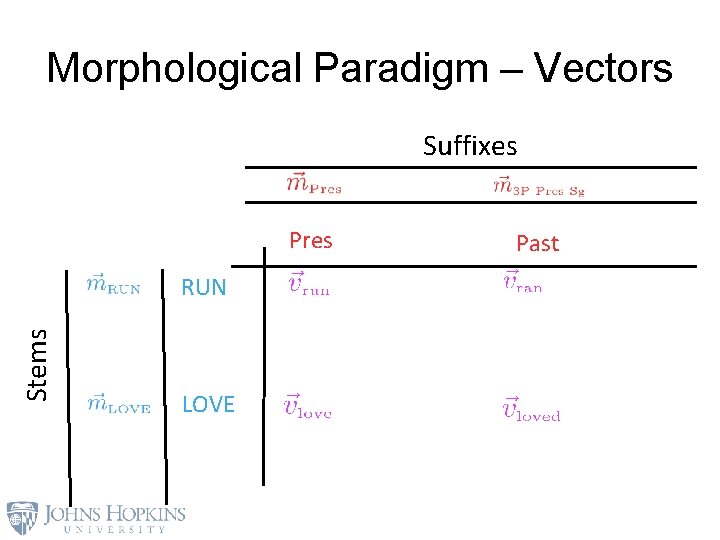

Things word2vec doesn’t know…

- Words with the same stem, like “running” and “ran”, are related
- Words with the same inflection, like “running” and “sprinting”, are related
- Our Goal: Put this information into word embeddings for improved embeddings!

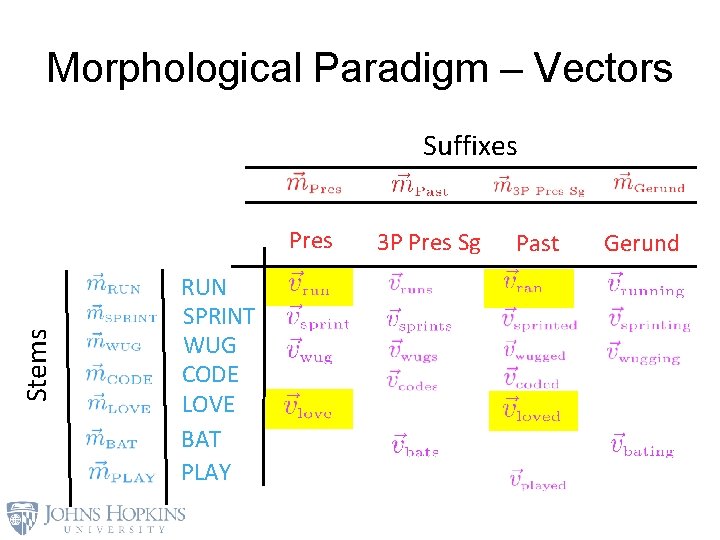

Morphological Paradigm – Vectors

- Stems: RUN, SPRINT, WUG, CODE, LOVE, BAT, PLAY
- Suffixes: Pres, 3P Pres Sg, Past, Gerund

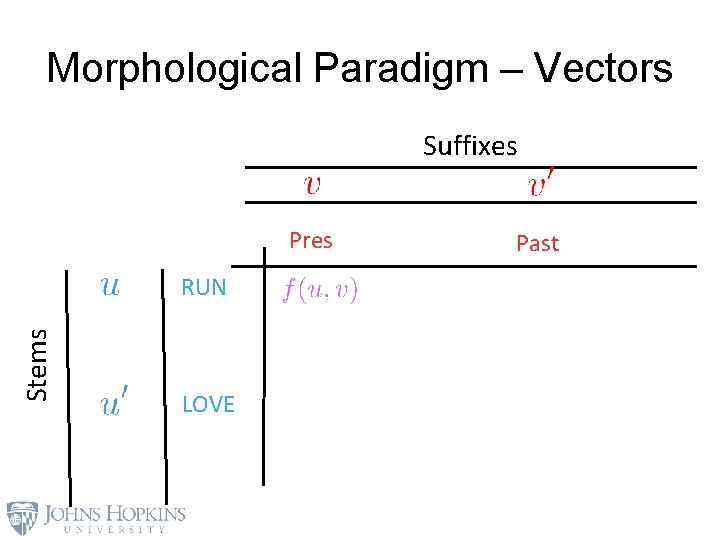

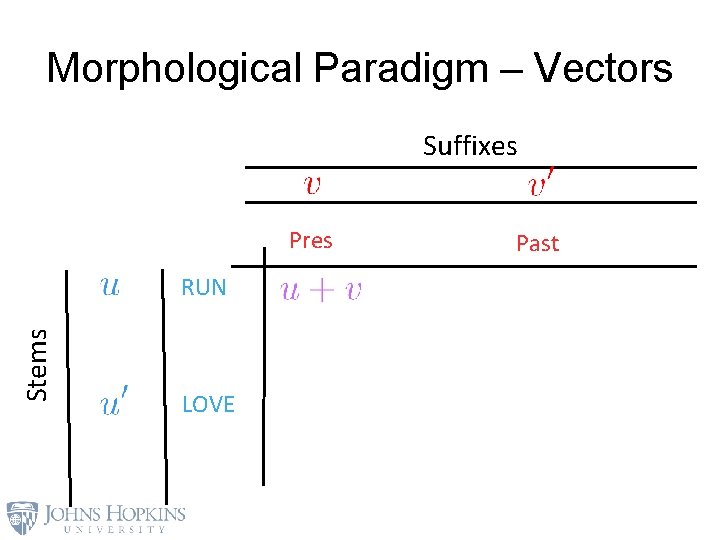

Morphological Paradigm – Vectors

- Stems: RUN, LOVE
- Suffixes: Pres, Past

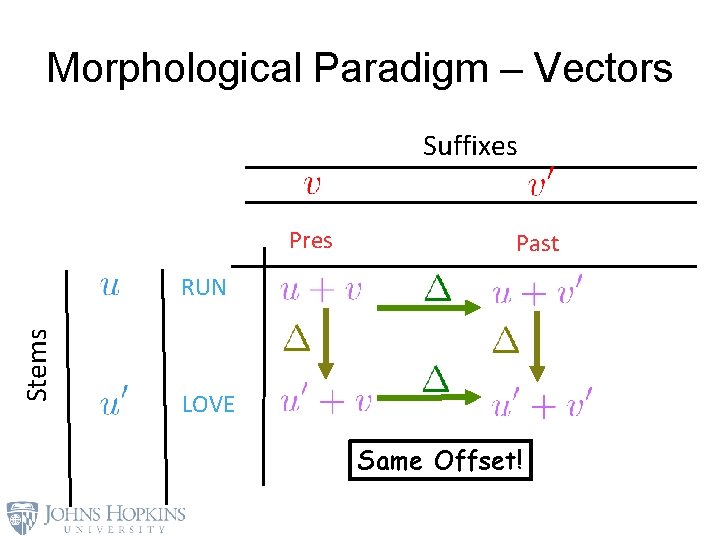

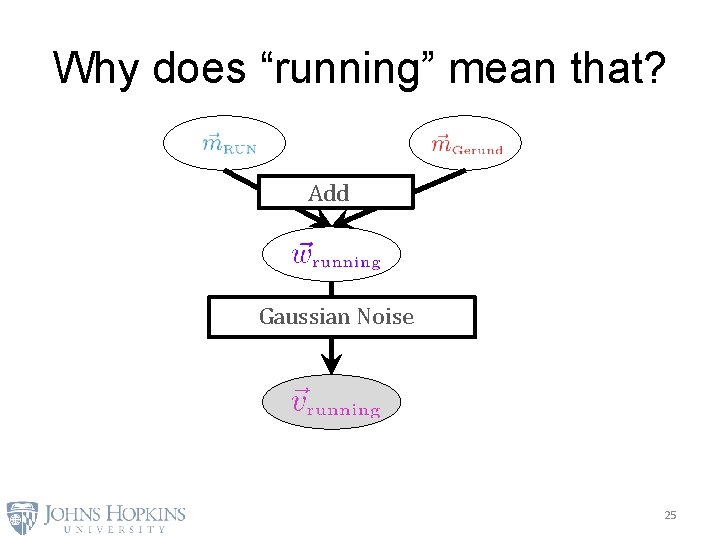

Why does “running” mean that?

Add (the morpheme vectors), then apply Gaussian noise.

Morphological Paradigm – Vectors

- Stems: RUN, LOVE
- Suffixes: Pres, Past

Same Offset!

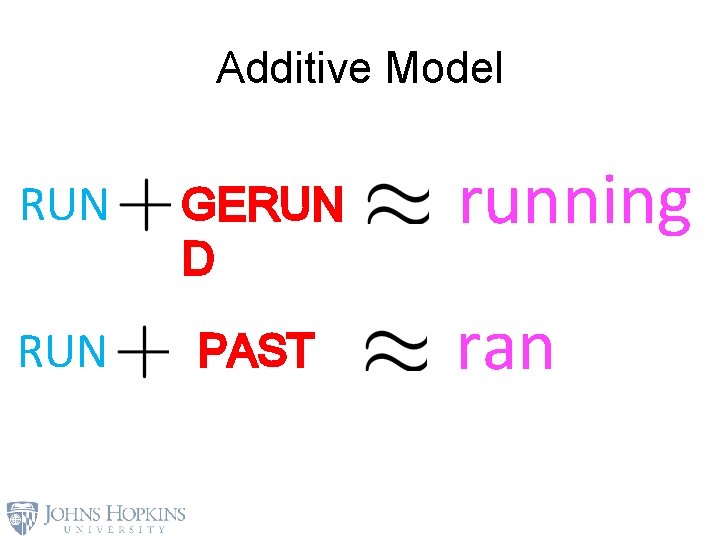

Additive Model

- RUN + GERUND ≈ running
- RUN + PAST ≈ ran

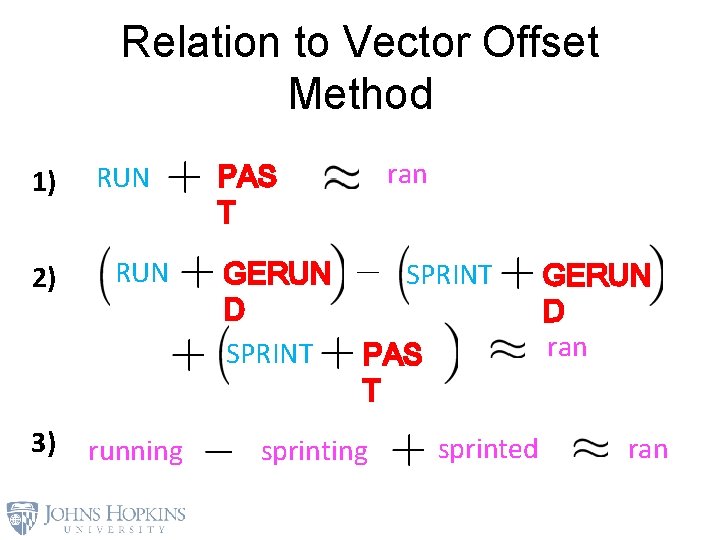

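Relation to Vector Offset Method

1) running − ran ≈ (RUN + GERUND) − (RUN + PAST) = GERUND − PAST
2) sprinting − sprinted ≈ (SPRINT + GERUND) − (SPRINT + PAST) = GERUND − PAST
3) ran ≈ running − sprinting + sprinted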
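The link between the additive model and the vector offset method can be checked numerically. The sketch below is an illustration only: the morpheme vectors are random stand-ins, the noise term is omitted, and the helper name `word_vector` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
dim = 5  # toy dimensionality (the talk uses 200-dimensional embeddings)

# Hypothetical morpheme vectors, drawn at random for illustration only.
morph = {name: rng.normal(size=dim)
         for name in ["RUN", "SPRINT", "GERUND", "PAST"]}

def word_vector(lemma, inflection):
    """Additive model without noise: lemma vector + inflection vector."""
    return morph[lemma] + morph[inflection]

running = word_vector("RUN", "GERUND")
ran = word_vector("RUN", "PAST")
sprinting = word_vector("SPRINT", "GERUND")
sprinted = word_vector("SPRINT", "PAST")

# Both paradigms share the same GERUND - PAST offset ...
same_offset = np.allclose(running - ran, sprinting - sprinted)
# ... so the analogy running - sprinting + sprinted recovers ran.
analogy_holds = np.allclose(running - sprinting + sprinted, ran)
print(same_offset, analogy_holds)
```

With noise added to each word vector, both identities would hold only approximately, which is where smoothing comes in.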

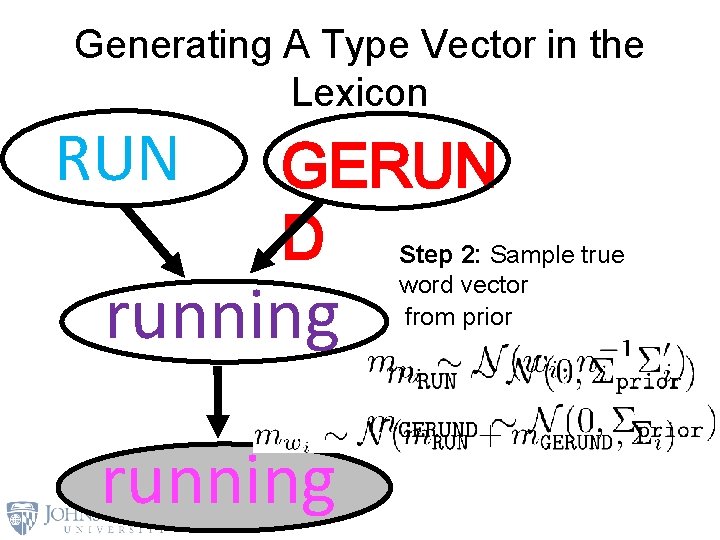

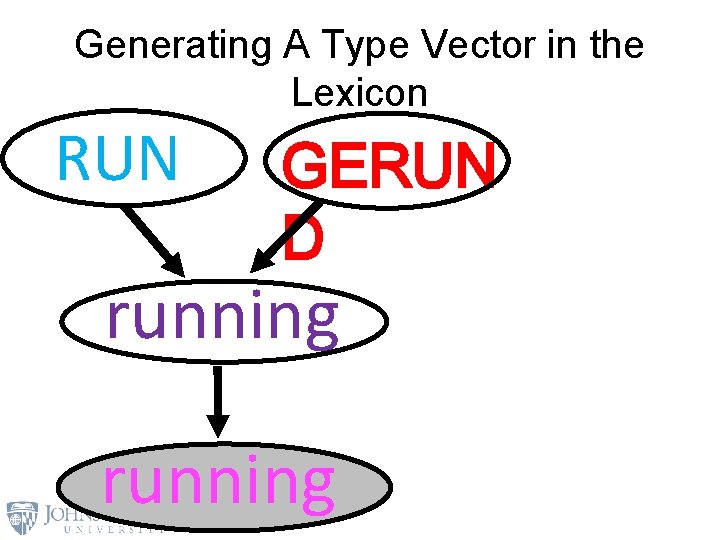

Generating A Type Vector in the Lexicon

- Step 1: Sample morpheme vectors (RUN, GERUND) from prior
- Step 2: Sample true word vector (running)
- Step 3: Sample observed vector
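The three sampling steps can be sketched as follows. This is a toy version under stated assumptions: all distributions are isotropic Gaussians, the standard deviations are made-up values, and the observation noise is assumed to shrink with the number of observed tokens. None of these specifics are given on the slide.

```python
import numpy as np

rng = np.random.default_rng(0)
dim = 4  # toy dimensionality

# Assumed isotropic standard deviations; the talk does not give these values.
SIGMA_MORPH, SIGMA_WORD, SIGMA_OBS = 1.0, 0.1, 0.5

def generate(n_tokens):
    """Sample morphemes, the true vector and the observed vector of one word."""
    # Step 1: sample morpheme vectors from the prior.
    run = rng.normal(0.0, SIGMA_MORPH, size=dim)
    gerund = rng.normal(0.0, SIGMA_MORPH, size=dim)
    # Step 2: sample the true word vector around the sum of its morphemes.
    true_running = rng.normal(run + gerund, SIGMA_WORD)
    # Step 3: sample the observed vector around the true one
    # (assumption: the noise shrinks as more tokens are observed).
    observed_running = rng.normal(true_running, SIGMA_OBS / np.sqrt(n_tokens))
    return run, gerund, true_running, observed_running

run, gerund, true_running, observed_running = generate(n_tokens=10)
print(observed_running)
```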

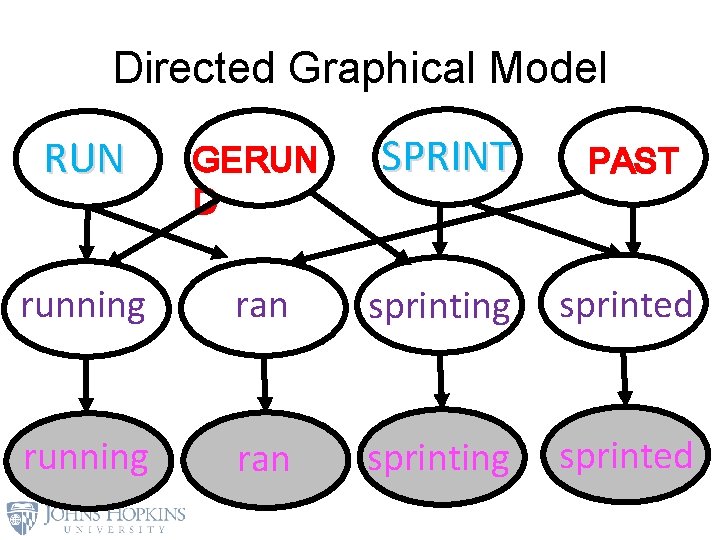

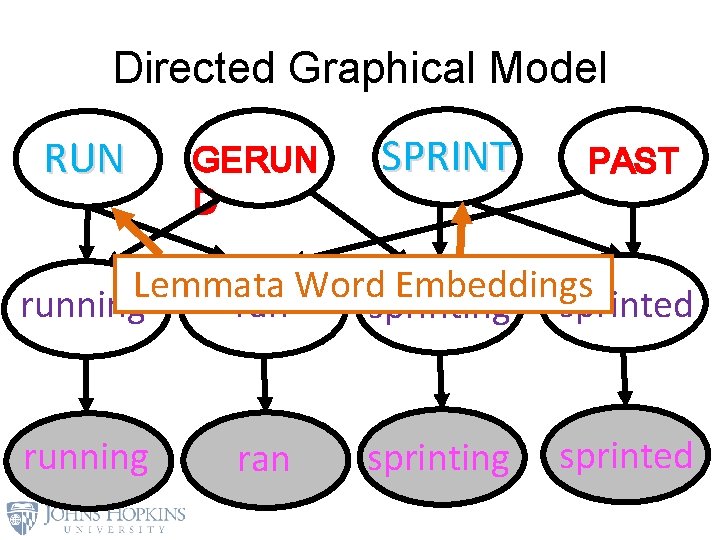

Directed Graphical Model

- Morpheme nodes: RUN, SPRINT, GERUND, PAST
- Word nodes: running, ran, sprinting, sprinted

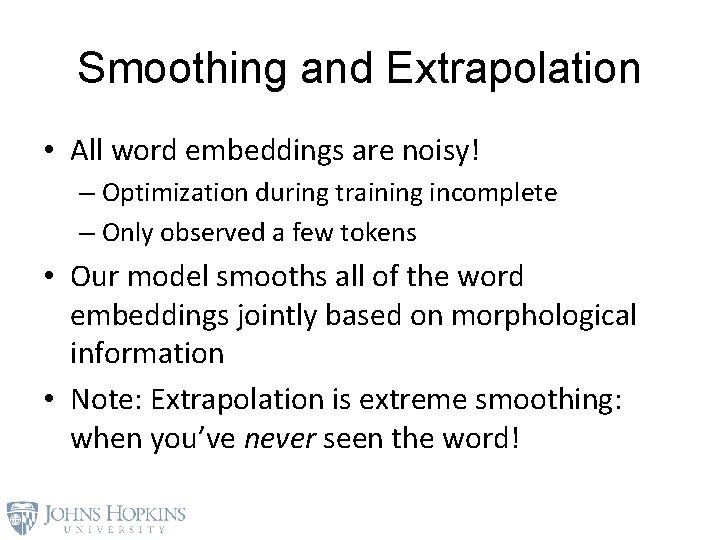

Smoothing and Extrapolation

- All word embeddings are noisy!
  - Optimization during training incomplete
  - Only observed a few tokens
- Our model smooths all of the word embeddings jointly based on morphological information
- Note: Extrapolation is extreme smoothing: when you’ve never seen the word!

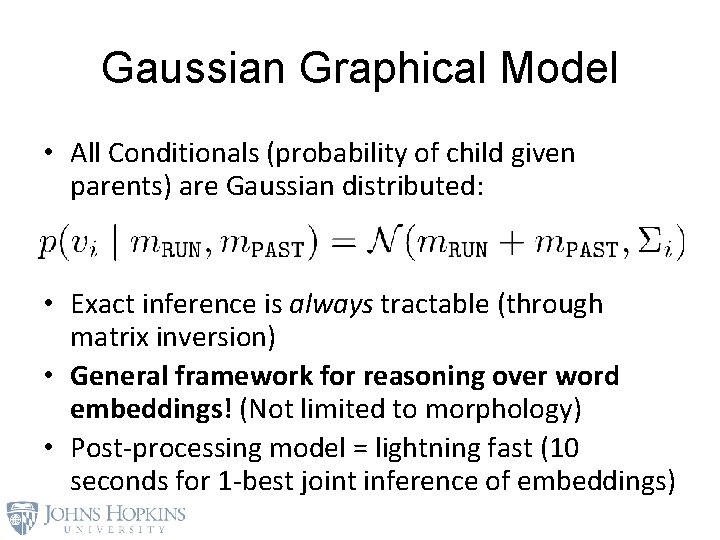

Gaussian Graphical Model

- All conditionals (probability of child given parents) are Gaussian distributed
- Exact inference is always tractable (through matrix inversion)
- General framework for reasoning over word embeddings! (Not limited to morphology)
- Post-processing model = lightning fast (10 seconds for 1-best joint inference of embeddings)
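To illustrate why inference reduces to linear algebra when every conditional is Gaussian, here is a simplified sketch. It assumes isotropic variances (`var_m`, `var_w`, `var_v` are made-up parameters) rather than the covariance matrices the model learns, and the function name `smooth_and_extrapolate` is hypothetical; it should not be read as the authors' implementation.

```python
import numpy as np

def smooth_and_extrapolate(A_obs, V_obs, A_new,
                           var_m=1.0, var_w=0.1, var_v=0.5):
    """Posterior-mean inference in a toy linear-Gaussian model.

    A_obs: (n_obs, n_morph) 0/1 matrix; row i marks the morphemes of word i.
    V_obs: (n_obs, dim) observed embeddings.
    A_new: (n_new, n_morph) morpheme rows for words with no observed vector.
    """
    n_morph = A_obs.shape[1]
    s2 = var_w + var_v  # variance of an observation around its morpheme sum
    # Posterior mean of the morpheme vectors: a ridge-style linear solve.
    precision = A_obs.T @ A_obs / s2 + np.eye(n_morph) / var_m
    M = np.linalg.solve(precision, A_obs.T @ V_obs / s2)
    # Smoothing: precision-weighted average of morpheme sum and observation.
    smoothed = (A_obs @ M / var_w + V_obs / var_v) / (1 / var_w + 1 / var_v)
    # Extrapolation: no observation, so the estimate is the morpheme sum.
    extrapolated = A_new @ M
    return M, smoothed, extrapolated

# Morpheme order (an arbitrary choice): RUN, SPRINT, GERUND, PAST.
A_obs = np.array([[1, 0, 1, 0],   # running
                  [0, 1, 1, 0],   # sprinting
                  [0, 1, 0, 1]],  # sprinted
                 dtype=float)
A_new = np.array([[1, 0, 0, 1]], dtype=float)  # ran, never observed
V_obs = np.random.default_rng(0).normal(size=(3, 5))  # stand-in embeddings
M, smoothed, extrapolated = smooth_and_extrapolate(A_obs, V_obs, A_new)
print(extrapolated.shape)
```

Smoothed vectors are pulled toward the sum of their morpheme vectors, while a word with no observed vector is represented by that sum alone, matching the view of extrapolation as extreme smoothing.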

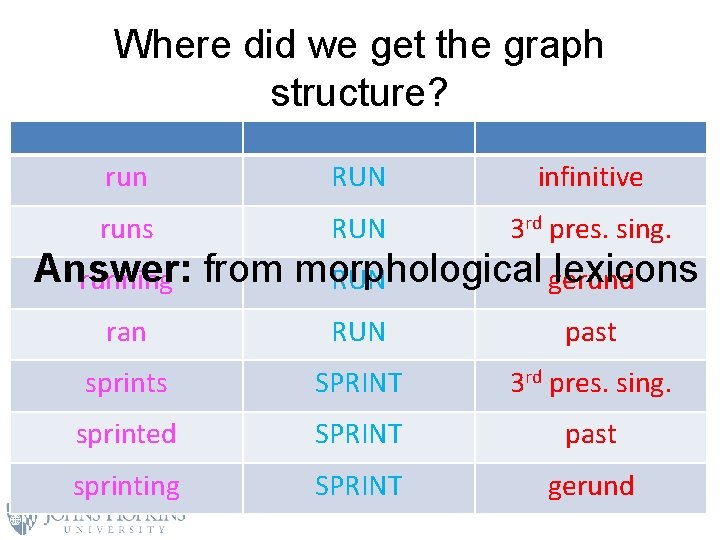

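Where did we get the graph structure?

| Word      | Lemma  | Inflection      |
|-----------|--------|-----------------|
| run       | RUN    | infinitive      |
| runs      | RUN    | 3rd pres. sing. |
| ran       | RUN    | past            |
| running   | RUN    | gerund          |
| sprints   | SPRINT | 3rd pres. sing. |
| sprinted  | SPRINT | past            |
| sprinting | SPRINT | gerund          |

Answer: from morphological lexicons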

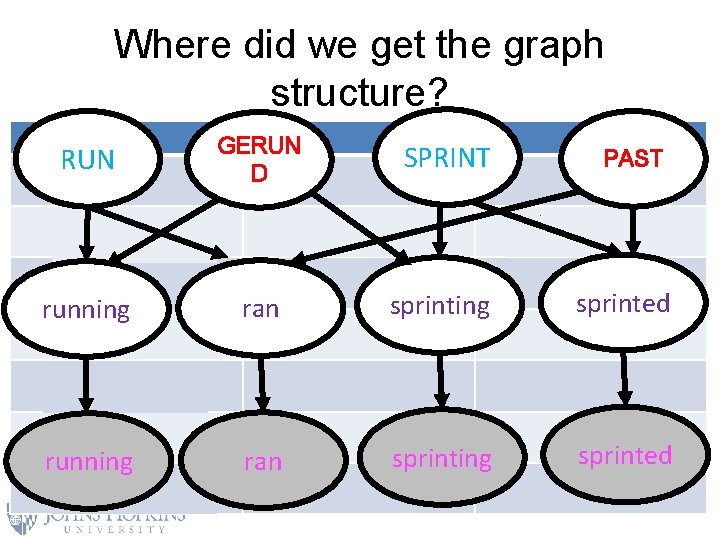

Where did we get the graph structure?

[Figure: the lexicon entries (run, runs, ran, running, sprints, sprinted, sprinting) shown next to the graph they induce, with morpheme nodes RUN, SPRINT, GERUND, PAST and word nodes running, ran, sprinting, sprinted.]
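Reading the graph off a morphological lexicon can be sketched as below. The entries mirror the (word, lemma, inflection) triples on the slide; the function `build_graph` and the dictionary layout are hypothetical choices made for this illustration.

```python
from collections import defaultdict

# Toy lexicon entries in the (word, lemma, inflection) form shown on the slide.
lexicon = [
    ("run", "RUN", "infinitive"),
    ("runs", "RUN", "3rd pres. sing."),
    ("ran", "RUN", "past"),
    ("running", "RUN", "gerund"),
    ("sprints", "SPRINT", "3rd pres. sing."),
    ("sprinted", "SPRINT", "past"),
    ("sprinting", "SPRINT", "gerund"),
]

def build_graph(entries):
    """Return parents (word -> morphemes) and children (morpheme -> words)."""
    parents = {}
    children = defaultdict(list)
    for word, lemma, inflection in entries:
        tag = inflection.upper()  # e.g. "gerund" -> "GERUND"
        parents[word] = (lemma, tag)
        children[lemma].append(word)
        children[tag].append(word)
    return parents, dict(children)

parents, children = build_graph(lexicon)
print(parents["running"])
print(children["PAST"])
```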

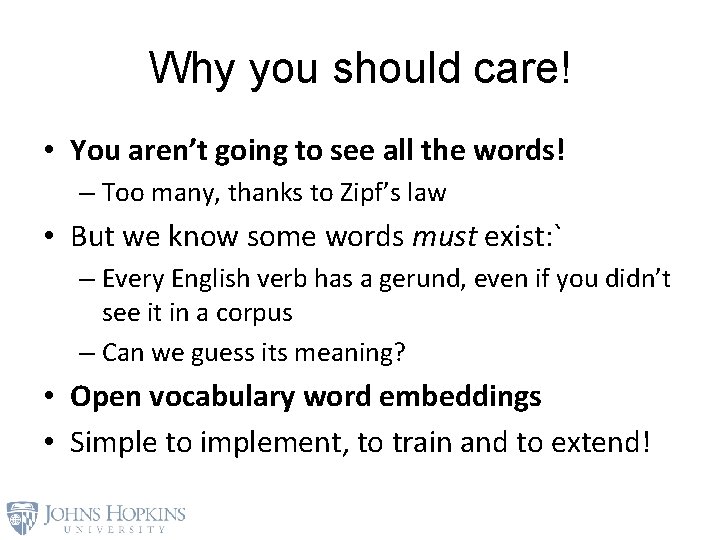

Why you should care!

- You aren’t going to see all the words!
  - Too many, thanks to Zipf’s law
- But we know some words must exist:
  - Every English verb has a gerund, even if you didn’t see it in a corpus
  - Can we guess its meaning?
- Open vocabulary word embeddings
- Simple to implement, to train and to extend!

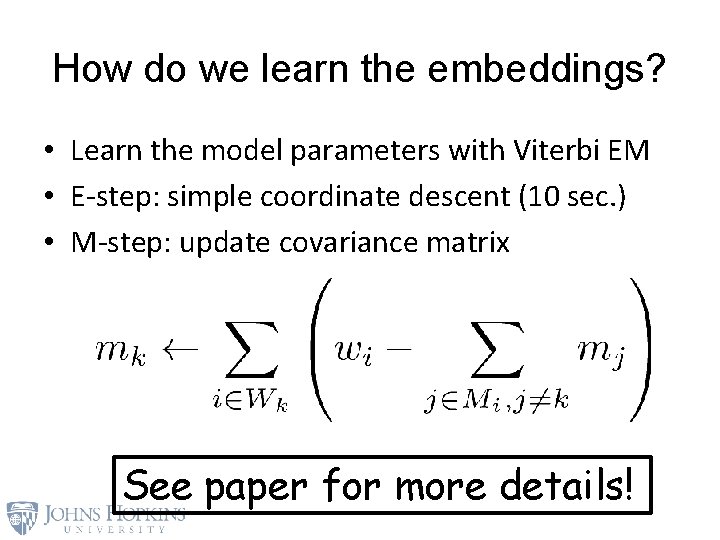

How do we learn the embeddings?

- Learn the model parameters with Viterbi EM
- E-step: simple coordinate descent (10 sec.)
- M-step: update covariance matrix

See paper for more details!
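A rough sketch of this alternation, under strong simplifying assumptions: a single scalar noise variance stands in for the covariance matrix, and the E-step uses a closed-form linear solve in place of coordinate descent. The names `viterbi_em`, `A`, `V` and `var_m` are hypothetical, and the details of the actual procedure are in the paper.

```python
import numpy as np

def viterbi_em(A, V, var_m=1.0, n_iters=20):
    """Alternate a MAP estimate of morpheme vectors with a variance update.

    A: (n_words, n_morph) 0/1 matrix marking each word's morphemes.
    V: (n_words, dim) observed embeddings.
    """
    var = 1.0  # initial guess for the residual variance
    for _ in range(n_iters):
        # E-step (Viterbi): single best morpheme vectors under current variance.
        precision = A.T @ A / var + np.eye(A.shape[1]) / var_m
        M = np.linalg.solve(precision, A.T @ V / var)
        # M-step: re-estimate the variance from the residuals
        # (floored to avoid collapsing to zero).
        residual = V - A @ M
        var = max(float(np.mean(residual ** 2)), 1e-8)
    return M, var

# Toy data: four words built from RUN, SPRINT, GERUND, PAST plus noise.
A = np.array([[1, 0, 1, 0], [1, 0, 0, 1], [0, 1, 1, 0], [0, 1, 0, 1]],
             dtype=float)
rng = np.random.default_rng(0)
V = A @ rng.normal(size=(4, 3)) + 0.1 * rng.normal(size=(4, 3))
M, var = viterbi_em(A, V)
print(M.shape, var)
```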

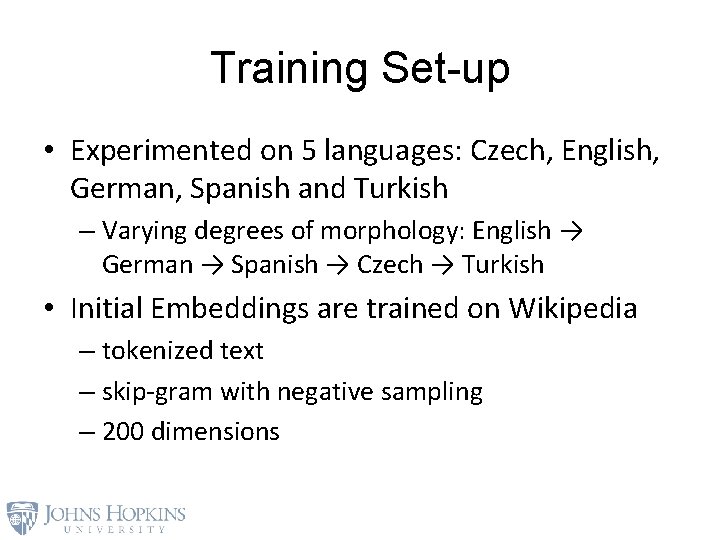

Training Set-up

- Experimented on 5 languages: Czech, English, German, Spanish and Turkish
  - Varying degrees of morphology: English → German → Spanish → Czech → Turkish
- Initial embeddings are trained on Wikipedia
  - tokenized text
  - skip-gram with negative sampling
  - 200 dimensions

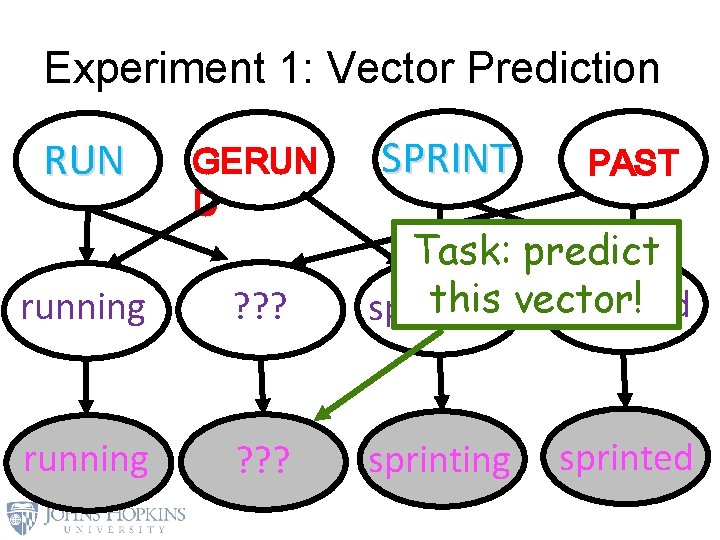

Experiment 1: Vector Prediction

[Figure: graphical model with morpheme nodes RUN, SPRINT, GERUND, PAST and word nodes running, sprinting, sprinted; the fourth word vector is held out and shown as “???”.]

Task: predict this vector!

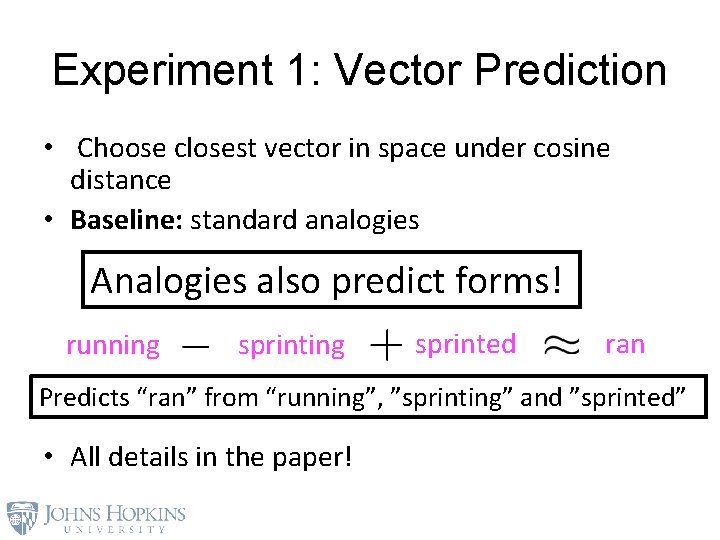

Experiment 1: Vector Prediction

- Choose closest vector in space under cosine distance
- Baseline: standard analogies
  - Analogies also predict forms!
  - Predicts “ran” from “running”, “sprinting” and “sprinted”
- All details in the paper!
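The evaluation step and the analogy baseline can be sketched together. The 2-dimensional vectors below are invented so the arithmetic is easy to follow; they are not real embeddings, and `nearest_word` is a hypothetical helper.

```python
import numpy as np

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def nearest_word(query, vocab):
    """Return the vocabulary word whose vector is closest in cosine terms."""
    return max(vocab, key=lambda w: cosine(query, vocab[w]))

# Hypothetical 2-d toy vectors, not real embeddings.
vocab = {
    "running": np.array([1.0, 1.0]),
    "ran": np.array([1.0, -1.0]),
    "sprinting": np.array([3.0, 1.0]),
    "sprinted": np.array([3.0, -1.0]),
}

# Analogy baseline: running - sprinting + sprinted should land near "ran".
guess = vocab["running"] - vocab["sprinting"] + vocab["sprinted"]
prediction = nearest_word(guess, vocab)
print(prediction)  # prints "ran"
```

A predicted vector from any other method can be scored the same way by passing it to `nearest_word`.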

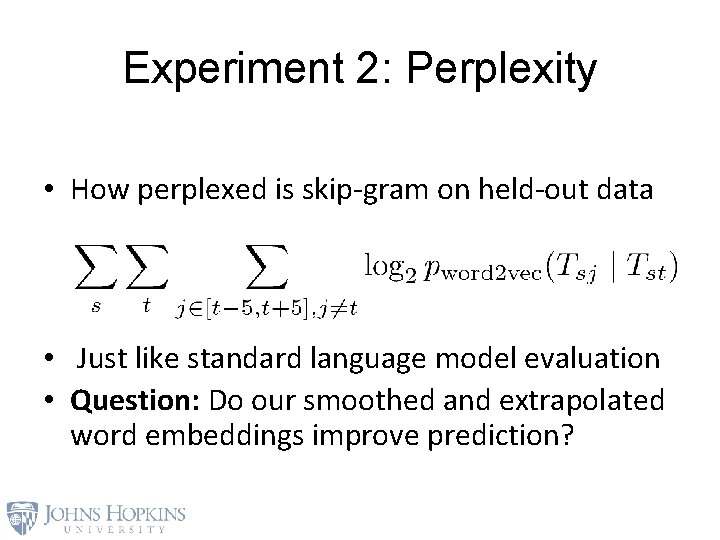

Experiment 2: Perplexity

- How perplexed is skip-gram on held-out data?
- Just like standard language model evaluation
- Question: Do our smoothed and extrapolated word embeddings improve prediction?
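As a reminder of how such an evaluation is computed, the sketch below turns a list of held-out prediction probabilities into bits per prediction and perplexity. How skip-gram assigns those probabilities is not shown on the slide, so the input list here is an invented placeholder.

```python
import math

def bits_per_prediction(probs):
    """Average negative log2 probability of the held-out predictions."""
    return -sum(math.log2(p) for p in probs) / len(probs)

def perplexity(probs):
    """Perplexity is 2 raised to the average number of bits."""
    return 2 ** bits_per_prediction(probs)

# Placeholder probabilities a model might assign to three held-out events.
held_out = [0.5, 0.25, 0.25]
bits = bits_per_prediction(held_out)
print(bits, perplexity(held_out))
```

Lower values mean the model is less surprised by the held-out data.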

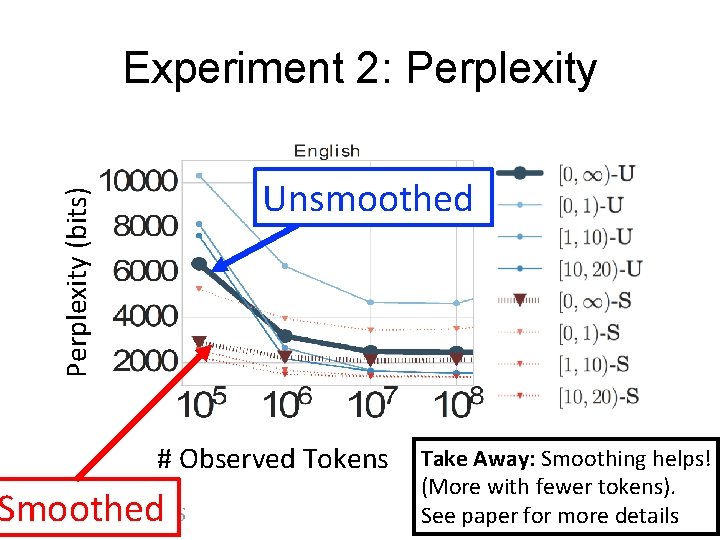

Experiment 2: Perplexity

[Plot: perplexity (bits) against # observed tokens, for unsmoothed and smoothed embeddings.]

Take Away: Smoothing helps! (More with fewer tokens.) See paper for more details.

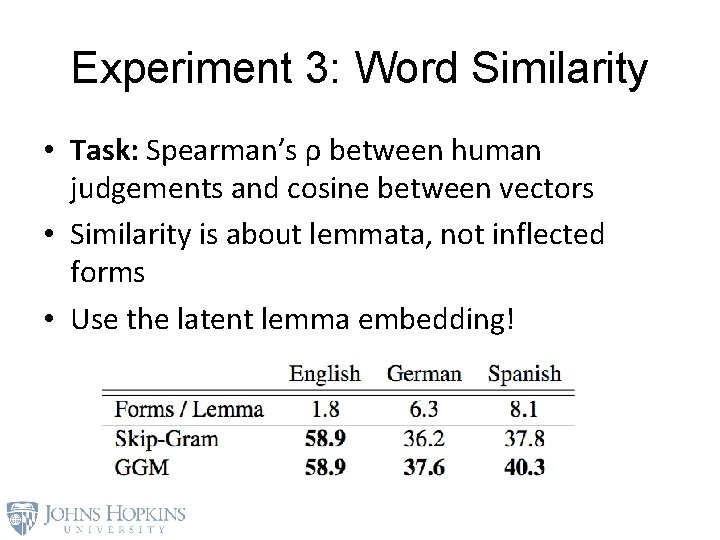

Experiment 3: Word Similarity

- Task: Spearman’s ρ between human judgements and cosine between vectors
- Similarity is about lemmata, not inflected forms
- Use the latent lemma embedding!
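A small sketch of this evaluation: cosine similarities between lemma vectors are compared with human scores using Spearman's ρ, computed here as the Pearson correlation of ranks. The lemma vectors and human scores are invented for illustration, and the rank computation assumes there are no ties.

```python
import numpy as np

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of ranks (assumes no ties)."""
    rank_x = np.argsort(np.argsort(x))
    rank_y = np.argsort(np.argsort(y))
    return float(np.corrcoef(rank_x, rank_y)[0, 1])

# Hypothetical latent lemma embeddings.
lemma_vecs = {
    "RUN": np.array([1.0, 0.0]),
    "SPRINT": np.array([0.9, 0.1]),
    "LOVE": np.array([0.0, 1.0]),
    "CODE": np.array([-1.0, 0.2]),
}
# Hypothetical human similarity judgements for three lemma pairs.
pairs = [("RUN", "SPRINT", 9.0), ("RUN", "LOVE", 2.0), ("SPRINT", "CODE", 1.0)]

model_scores = [cosine(lemma_vecs[a], lemma_vecs[b]) for a, b, _ in pairs]
human_scores = [score for _, _, score in pairs]
rho = spearman_rho(model_scores, human_scores)
print(rho)
```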

Directed Graphical Model

- Lemmata: RUN, SPRINT
- Inflections: GERUND, PAST
- Word Embeddings: running, ran, sprinting, sprinted

Future Work

- Integrate morphological information with character-level models!
- Research Questions:
  - Are character-level models enough or do we need structured morphological information?
  - Can morphology help character-level neural networks?

Fin

Thanks for your attention!
