

Differentiation and Richardson Extrapolation

Douglas Wilhelm Harder, M.Math. LEL
Department of Electrical and Computer Engineering
University of Waterloo, Ontario, Canada
ece.uwaterloo.ca
dwharder@alumni.uwaterloo.ca

© 2012 by Douglas Wilhelm Harder. Some rights reserved.

Outline

This topic discusses numerical differentiation:
– The use of interpolation
– The centred divided-difference approximations of the derivative and second derivative
  • Error analysis using Taylor series
– The backward divided-difference approximation of the derivative
  • Error analysis
– Richardson extrapolation

Outcomes Based Learning Objectives

By the end of this laboratory, you will:
– Understand how to approximate first and second derivatives
– Understand how Taylor series are used to determine the errors of various approximations
– Know how to eliminate successive error terms using Richardson extrapolation
– Have programmed a Matlab routine with appropriate error checking and exception handling

Approximating the Derivative

Suppose we want to approximate the derivative:

$$u^{(1)}(x) = \lim_{h \to 0} \frac{u(x + h) - u(x)}{h}$$

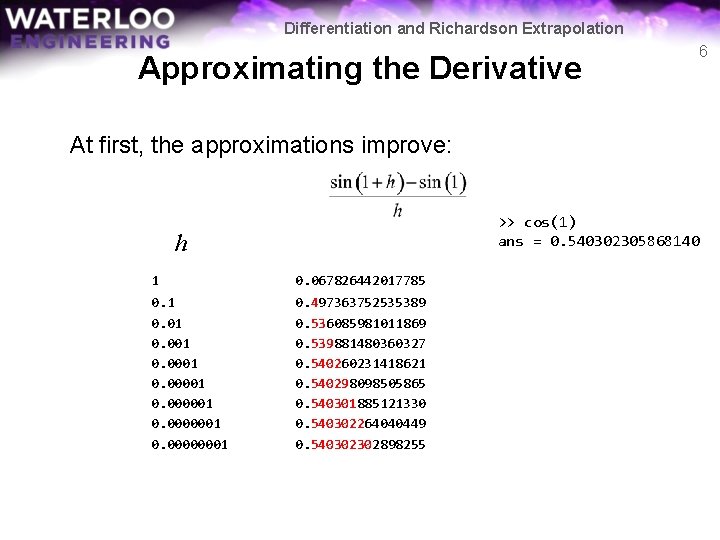

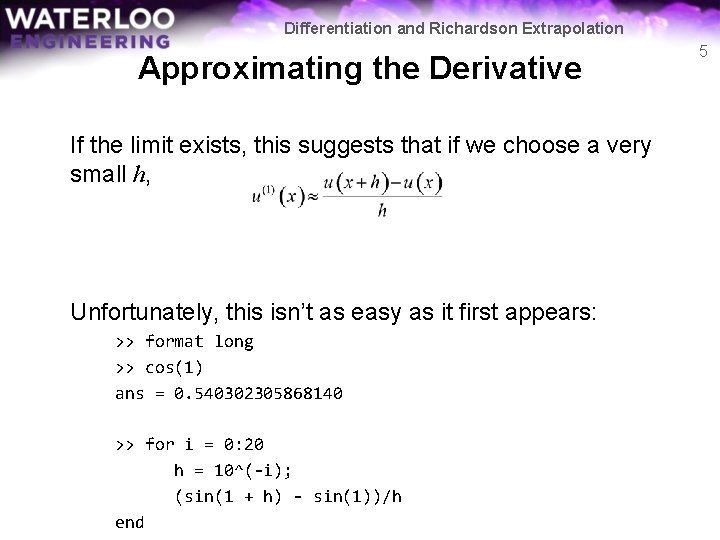

If the limit exists, this suggests that if we choose a very small h,

$$u^{(1)}(x) \approx \frac{u(x + h) - u(x)}{h}$$

Unfortunately, this isn't as easy as it first appears:

    >> format long
    >> cos(1)
    ans = 0.540302305868140
    >> for i = 0:20
           h = 10^(-i);
           (sin(1 + h) - sin(1))/h
       end

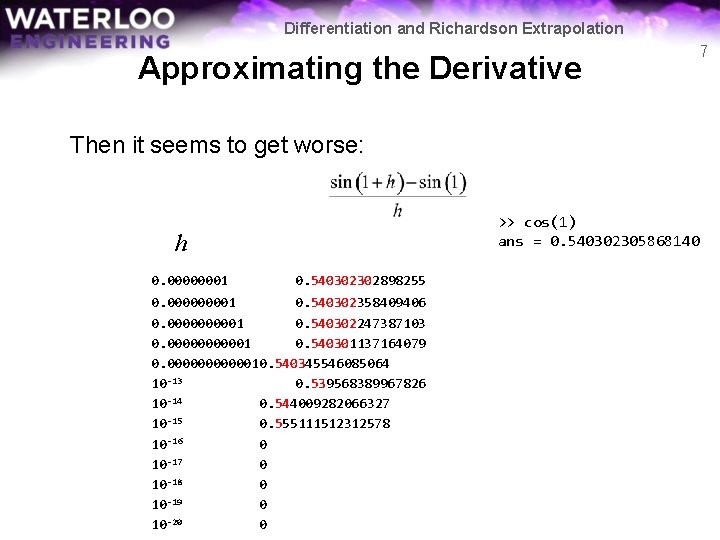

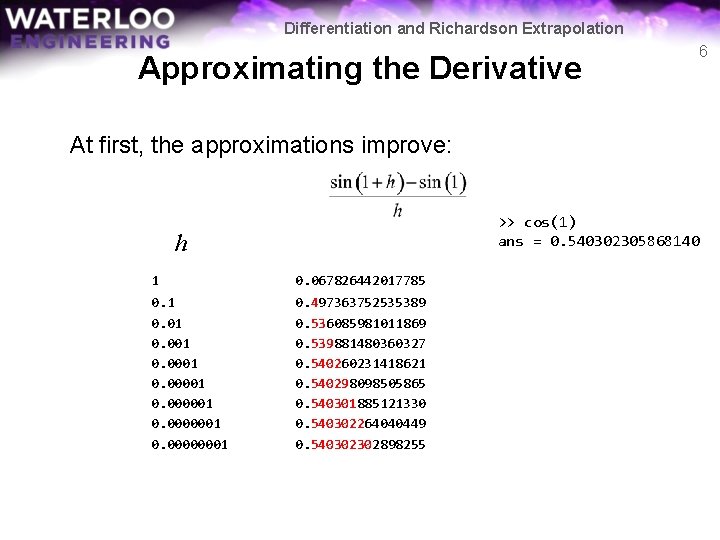

At first, the approximations improve:

    >> cos(1)
    ans = 0.540302305868140

| h | Approximation |
|---|---|
| 1 | 0.067826442017785 |
| 0.1 | 0.497363752535389 |
| 0.01 | 0.536085981011869 |
| $10^{-3}$ | 0.539881480360327 |
| $10^{-4}$ | 0.540260231418621 |
| $10^{-5}$ | 0.540298098505865 |
| $10^{-6}$ | 0.540301885121330 |
| $10^{-7}$ | 0.540302264040449 |
| $10^{-8}$ | 0.540302302898255 |

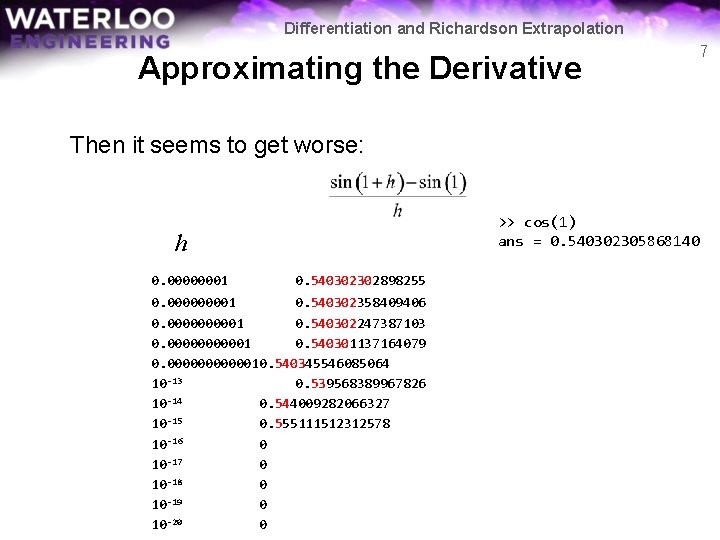

Then it seems to get worse:

    >> cos(1)
    ans = 0.540302305868140

| h | Approximation |
|---|---|
| $10^{-8}$ | 0.540302302898255 |
| $10^{-9}$ | 0.540302358409406 |
| $10^{-10}$ | 0.540302247387103 |
| $10^{-11}$ | 0.540301137164079 |
| $10^{-12}$ | 0.540345546085064 |
| $10^{-13}$ | 0.539568389967826 |
| $10^{-14}$ | 0.544009282066327 |
| $10^{-15}$ | 0.555111512312578 |
| $10^{-16}$ | 0 |
| $10^{-17}$ | 0 |
| $10^{-18}$ | 0 |
| $10^{-19}$ | 0 |
| $10^{-20}$ | 0 |

There are two things that must be explained:
– Why do we, to start with, appear to get one more digit of accuracy every time we divide h by 10?
– Why, after some point, does the accuracy decrease, ultimately rendering the approximation useless?

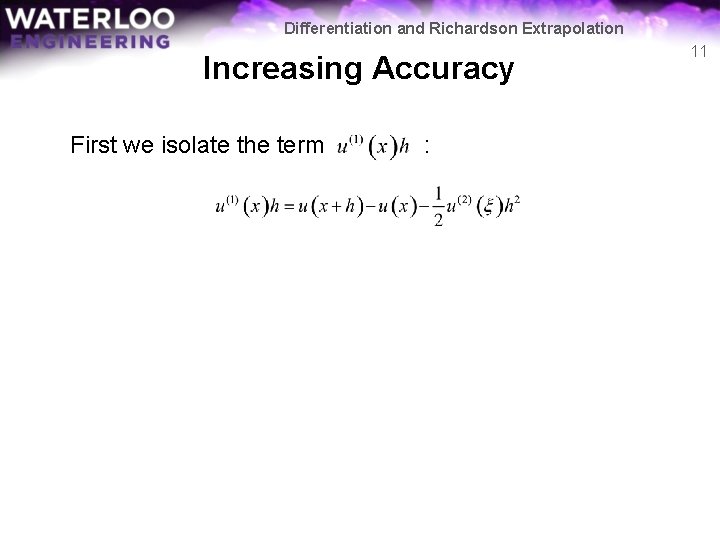

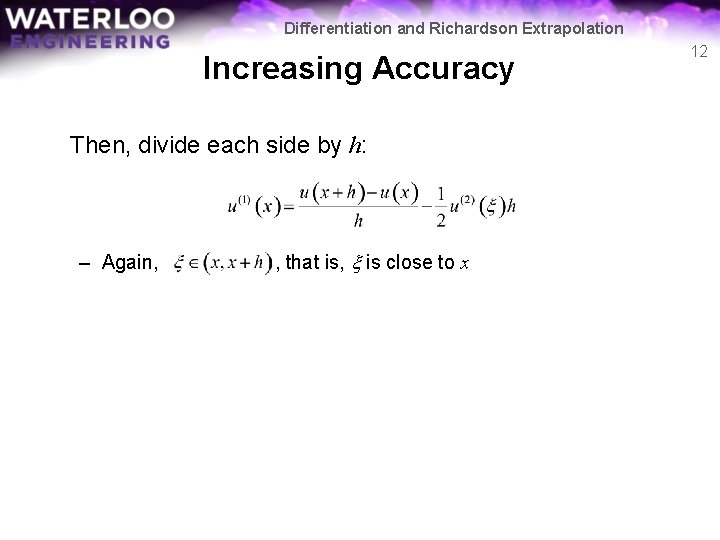

Increasing Accuracy

We will start with why the answer appears to improve:
– Recall Taylor's approximation:

$$u(x + h) = u(x) + u^{(1)}(x)\,h + \frac{1}{2} u^{(2)}(\xi)\,h^2$$

where $\xi \in [x, x + h]$, that is, $\xi$ is close to $x$

– Solve this equation for the derivative $u^{(1)}(x)$

First we isolate the term $u^{(1)}(x)\,h$:

$$u^{(1)}(x)\,h = u(x + h) - u(x) - \frac{1}{2} u^{(2)}(\xi)\,h^2$$

Then, divide each side by h:

$$u^{(1)}(x) = \frac{u(x + h) - u(x)}{h} - \frac{1}{2} u^{(2)}(\xi)\,h$$

– Again, $\xi \in [x, x + h]$, that is, $\xi$ is close to $x$

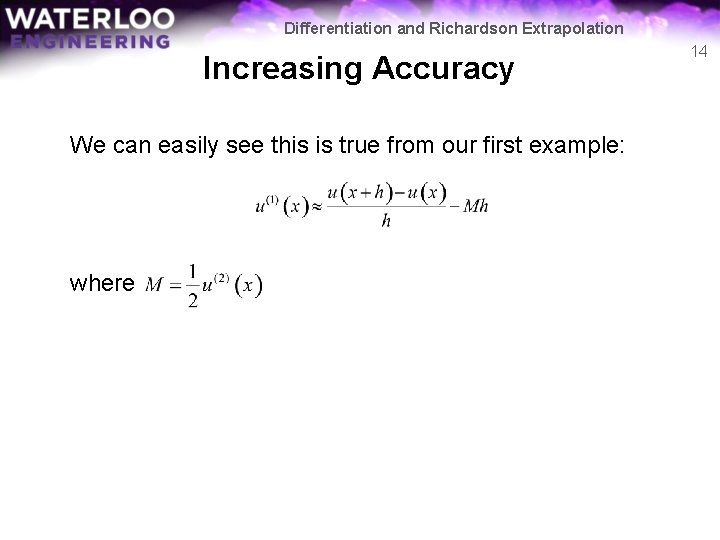

Assuming that $u^{(2)}$ doesn't vary too wildly near $x$, the coefficient of $h$ in this term is approximately a constant:

$$-\frac{1}{2} u^{(2)}(\xi) \approx -\frac{1}{2} u^{(2)}(x)$$

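We can easily see this is true from our first example:

$$\cos(1) = \frac{\sin(1 + h) - \sin(1)}{h} + \frac{1}{2} \sin(\xi)\,h$$

where $\xi \in [1, 1 + h]$, so that $\frac{1}{2}\sin(\xi) \approx \frac{1}{2}\sin(1) \approx 0.42074$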

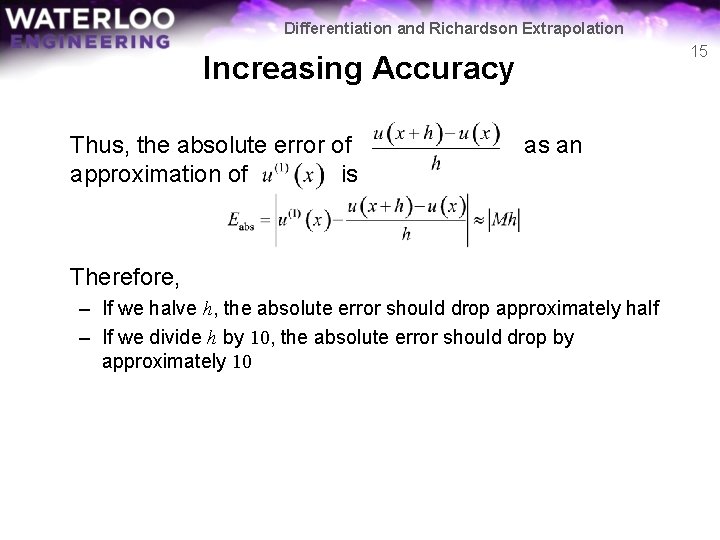

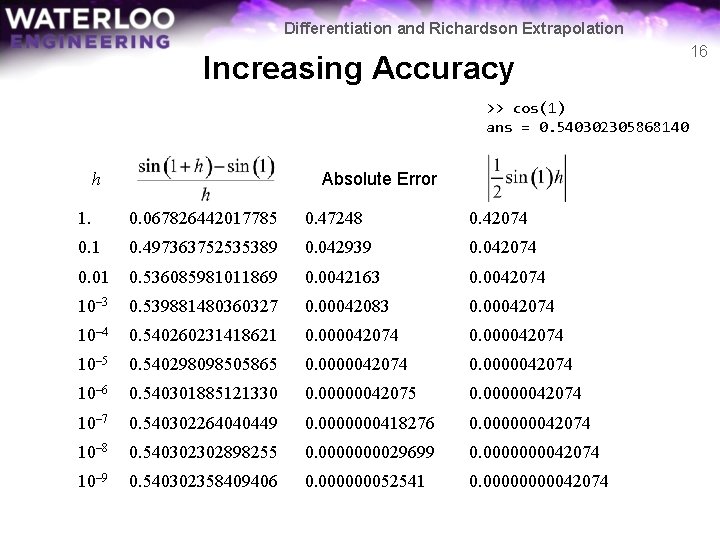

Thus, the absolute error of $\frac{u(x + h) - u(x)}{h}$ as an approximation of $u^{(1)}(x)$ is

$$\left| \frac{1}{2} u^{(2)}(\xi)\,h \right|$$

Therefore,
– If we halve h, the absolute error should drop by approximately half
– If we divide h by 10, the absolute error should drop by approximately a factor of 10
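The linear drop in the error can be checked numerically. The following is a minimal Matlab sketch, assuming the same example as above (sin at x = 1); the variable names `approx`, `err` and `ratios` are illustrative only.

```matlab
% Forward divided-difference approximation of the derivative of sin at x = 1
u = @sin;
x = 1;
exact = cos(x);

h = 10.^(-(1:5));                    % h = 0.1, 0.01, ..., 1e-5
approx = (u(x + h) - u(x))./h;       % evaluated for all step sizes at once
err = abs(approx - exact);

% For an O(h) formula, dividing h by 10 should divide the error by about 10
ratios = err(1:end-1)./err(2:end);
disp([h' approx' err']);
disp(ratios);
```

Each entry of `ratios` should be close to 10 as long as h is large enough that round-off does not yet dominate.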

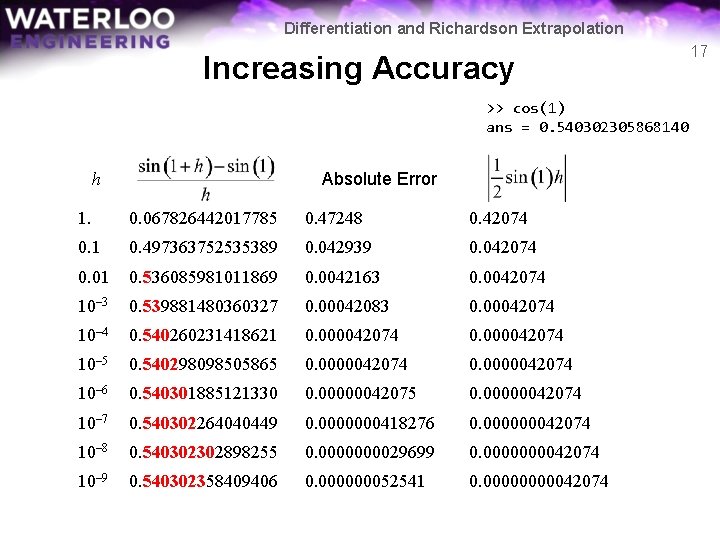

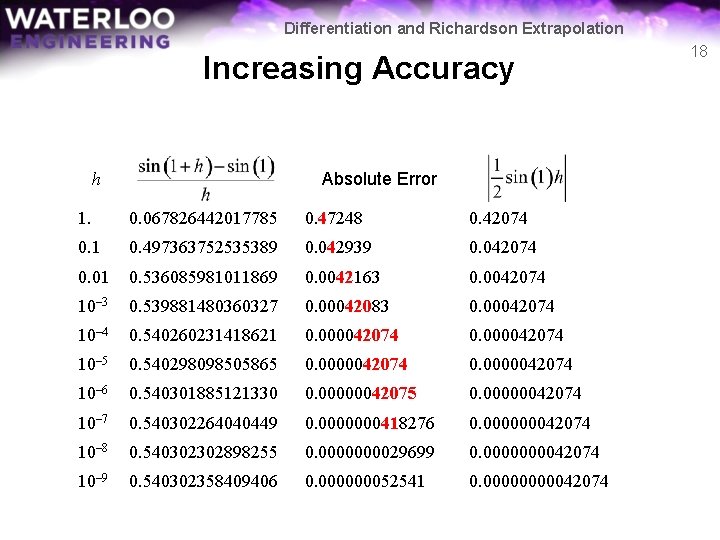

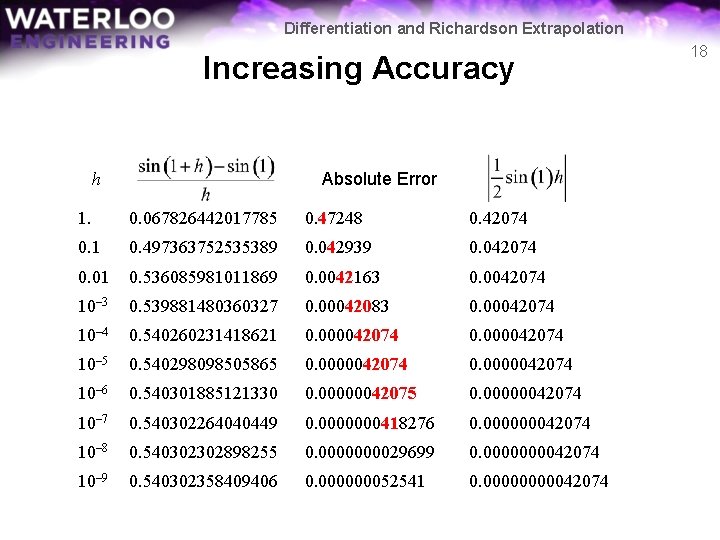

    >> cos(1)
    ans = 0.540302305868140

| h | Approximation | Absolute error | $\frac{1}{2}\sin(1)\,h$ |
|---|---|---|---|
| 1 | 0.067826442017785 | 0.47248 | 0.42074 |
| 0.1 | 0.497363752535389 | 0.042939 | 0.042074 |
| 0.01 | 0.536085981011869 | 0.0042163 | 0.0042074 |
| $10^{-3}$ | 0.539881480360327 | 0.00042083 | 0.00042074 |
| $10^{-4}$ | 0.540260231418621 | 0.000042074 | 0.000042074 |
| $10^{-5}$ | 0.540298098505865 | 0.0000042074 | 0.0000042074 |
| $10^{-6}$ | 0.540301885121330 | 0.00000042075 | 0.00000042074 |
| $10^{-7}$ | 0.540302264040449 | 0.0000000418276 | 0.000000042074 |
| $10^{-8}$ | 0.540302302898255 | 0.0000000029699 | 0.0000000042074 |
| $10^{-9}$ | 0.540302358409406 | 0.000000052541 | 0.00000000042074 |


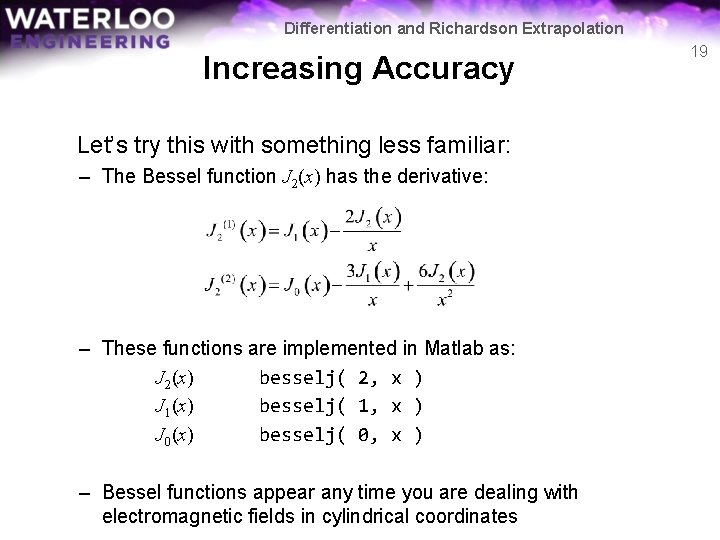

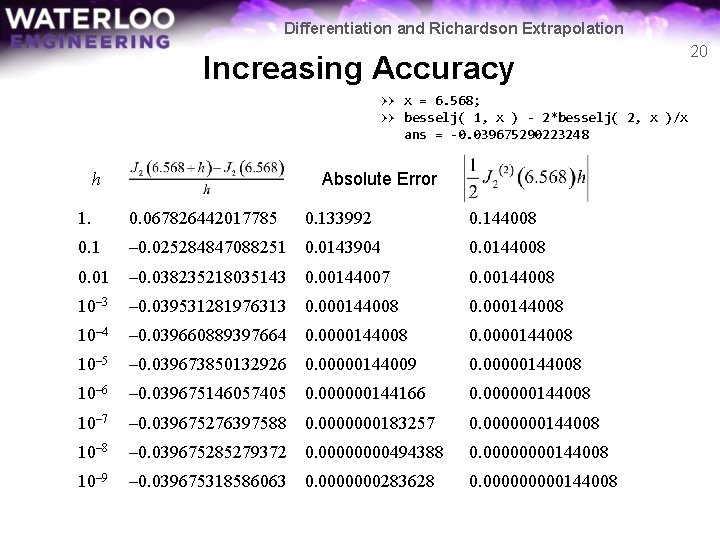

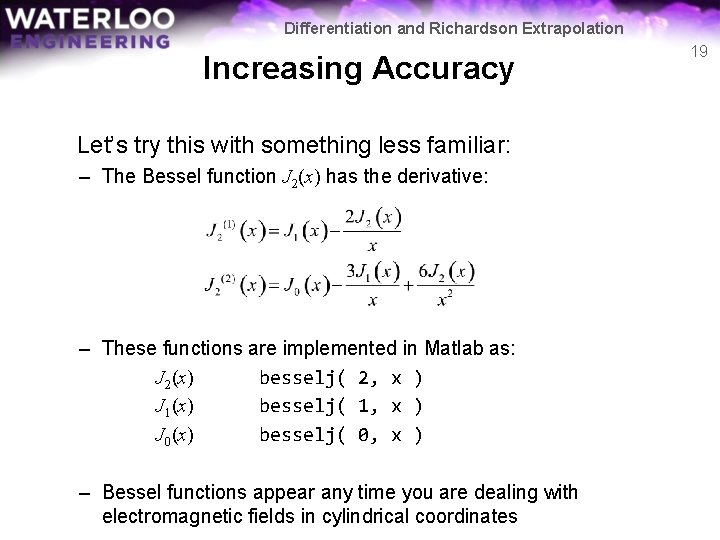

Let's try this with something less familiar:
– The Bessel function $J_2(x)$ has the derivative:

$$J_2^{(1)}(x) = J_1(x) - \frac{2 J_2(x)}{x}$$

– These functions are implemented in Matlab as:

| Function | Matlab |
|---|---|
| $J_2(x)$ | besselj( 2, x ) |
| $J_1(x)$ | besselj( 1, x ) |
| $J_0(x)$ | besselj( 0, x ) |

– Bessel functions appear any time you are dealing with electromagnetic fields in cylindrical coordinates

    >> x = 6.568;
    >> besselj( 1, x ) - 2*besselj( 2, x )/x
    ans = -0.039675290223248

| h | Approximation | Absolute error | $\frac{1}{2}\left|J_2^{(2)}(x)\right| h$ |
|---|---|---|---|
| 1 | 0.09432 | 0.133992 | 0.144008 |
| 0.1 | -0.025284847088251 | 0.0143904 | 0.0144008 |
| 0.01 | -0.038235218035143 | 0.00144007 | 0.00144008 |
| $10^{-3}$ | -0.039531281976313 | 0.000144008 | 0.000144008 |
| $10^{-4}$ | -0.039660889397664 | 0.0000144008 | 0.0000144008 |
| $10^{-5}$ | -0.039673850132926 | 0.00000144009 | 0.00000144008 |
| $10^{-6}$ | -0.039675146057405 | 0.000000144166 | 0.000000144008 |
| $10^{-7}$ | -0.039675276397588 | 0.0000000138257 | 0.0000000144008 |
| $10^{-8}$ | -0.039675285279372 | 0.00000000494388 | 0.00000000144008 |
| $10^{-9}$ | -0.039675318586063 | 0.0000000283628 | 0.000000000144008 |

We could use a rule of thumb: use $h = 10^{-8}$
– It appears to work…

Unfortunately:
– It is not always the best approximation
– It may not give us sufficient accuracy
– We still don't understand why our approximation breaks down…

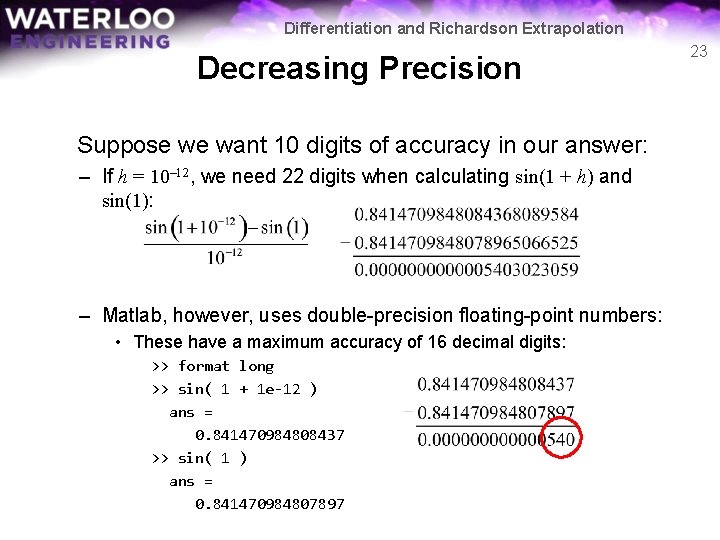

Decreasing Precision

Suppose we want 10 digits of accuracy in our answer:
– If h = 0.01, we need 12 digits when calculating sin(1.01) and sin(1)
– If h = 0.00001, we need 15 digits when calculating sin(1.00001) and sin(1)

– If $h = 10^{-12}$, we need 22 digits when calculating sin(1 + h) and sin(1)
– Matlab, however, uses double-precision floating-point numbers:
  • These have a maximum accuracy of 16 decimal digits:

    >> format long
    >> sin( 1 + 1e-12 )
    ans = 0.841470984808437
    >> sin( 1 )
    ans = 0.841470984807897

Because of the limitations of doubles, our approximation is

$$\frac{0.841470984808437 - 0.841470984807897}{10^{-12}} = \frac{0.000000000000540}{10^{-12}} = 0.540$$

Note: this is not entirely true because Matlab uses base 2 and not base 10, but the analogy is faithful…

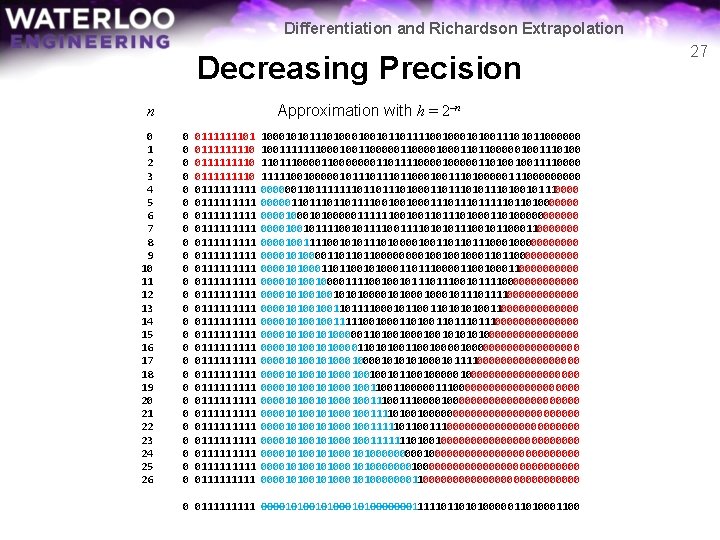

We can view this using the binary representation of doubles:

    >> cos( 1 )
    ans = 3fe14a280fb5068c

    3    f    e    1    4    a    2    8    0    f    b    5    0    6    8    c
    0011 1111 1110 0001 0100 1010 0010 1000 0000 1111 1011 0101 0000 0110 1000 1100

    1.0001010010100010100000001111101101010000011010001100 × 2^(01111111110 − 01111111111)
    = 1.0001010010100010100000001111101101010000011010001100 × 2^(−1)
    = 0.10001010010100010100000001111101101010000011010001100

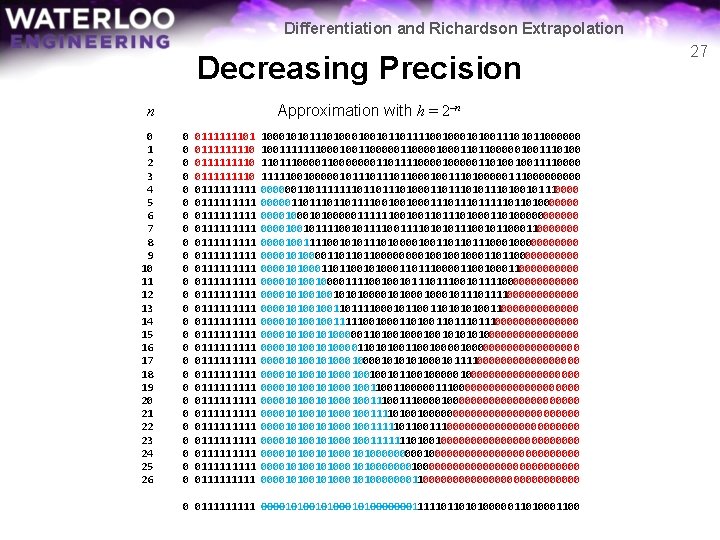

From this, we see:

    0.10001010010100010100000001111101101010000011010001100

    >> format long
    >> 1/2 + 1/32 + 1/128 + 1/1024 + 1/4096 + 1/65536 + 1/262144 + 1/33554432
    ans = 0.540302306413651
    >> cos( 1 )
    ans = 0.540302305868140
    >> format hex
    >> 1/2 + 1/32 + 1/128 + 1/1024 + 1/4096 + 1/65536 + 1/262144 + 1/33554432
    ans = 3fe14a2810000000
    >> cos( 1 )
    ans = 3fe14a280fb5068c

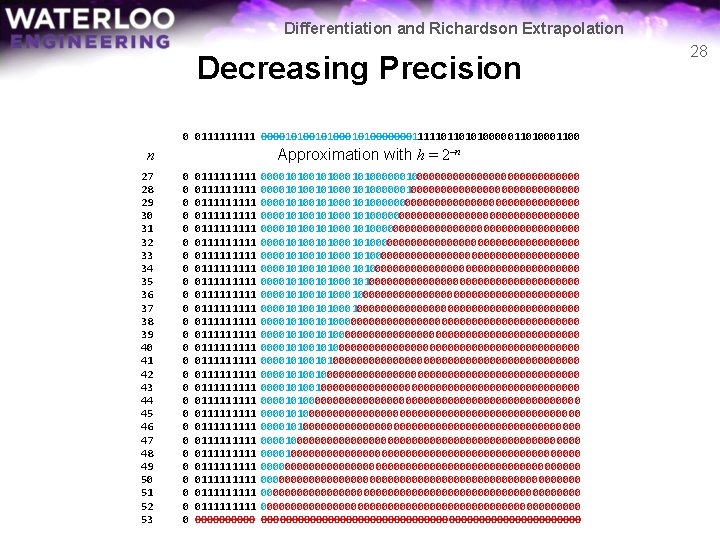

[Table: binary representation (sign, exponent and mantissa) of the approximation with $h = 2^{-n}$ for n = 0, …, 26, compared with the binary representation of cos(1)]

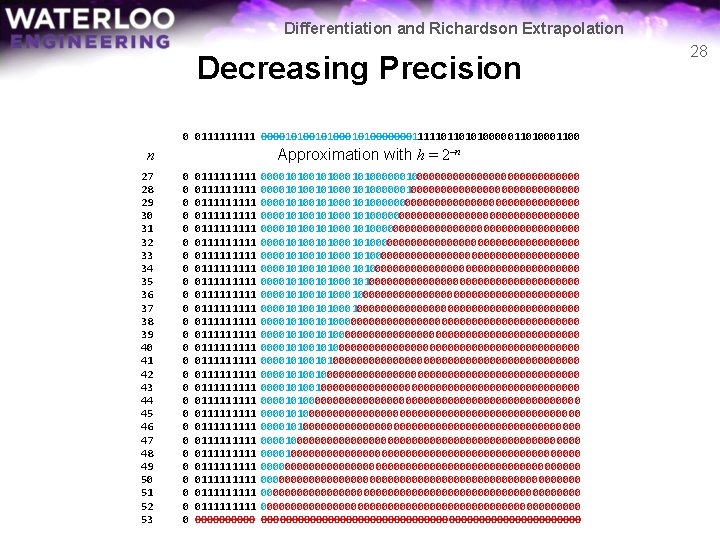

[Table, continued: binary representation of the approximation with $h = 2^{-n}$ for n = 27, …, 53, compared with the binary representation of cos(1)]

This effect when subtracting two similar numbers is called subtractive cancellation.

In industry, it is also referred to as catastrophic cancellation.

Ignoring the effects of subtractive cancellation is one of the most significant sources of numerical error.
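Subtractive cancellation is easy to reproduce. The sketch below is only an illustration under the same assumptions as the slides (sin at x = 1, $h = 10^{-12}$); the variable names are hypothetical.

```matlab
% Two nearly equal doubles: most of their leading digits agree
h = 1e-12;
a = sin(1 + h);
b = sin(1);

% The difference keeps only the few trailing digits in which a and b differ
d = a - b;
approx = d/h;

% Relative error of the resulting derivative approximation
rel_err = abs(approx - cos(1))/abs(cos(1));
fprintf('a       = %.15f\n', a);
fprintf('b       = %.15f\n', b);
fprintf('approx  = %.15f\n', approx);
fprintf('rel_err = %.3e\n', rel_err);
```

Although `a` and `b` are each accurate to about 16 digits, `approx` agrees with cos(1) to only a few digits.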

Consequence:
– Unlike calculus, we cannot make h arbitrarily small

Possible solutions:
– Find better formulas
– Use completely different approaches

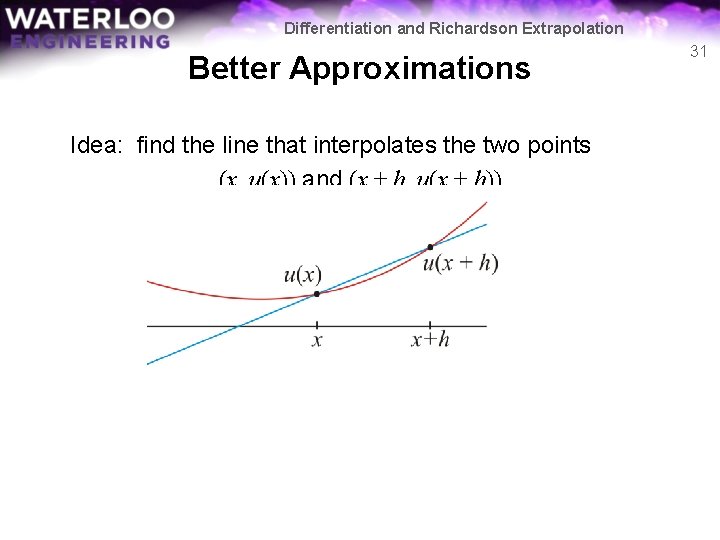

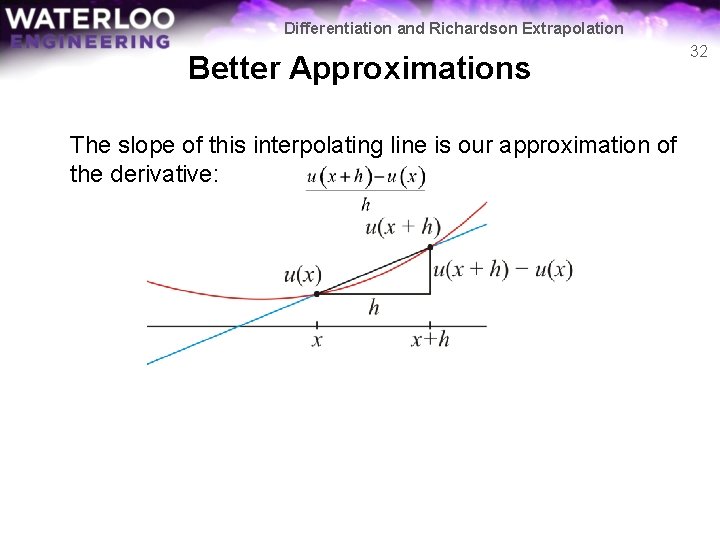

Better Approximations

Idea: find the line that interpolates the two points (x, u(x)) and (x + h, u(x + h))

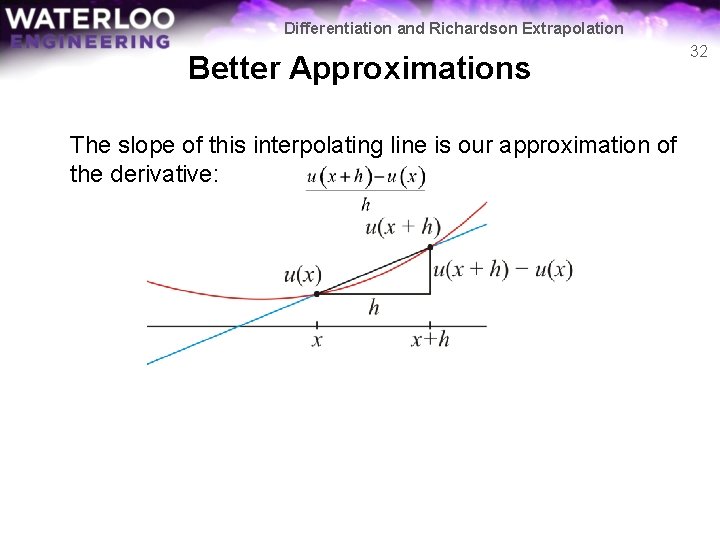

The slope of this interpolating line is our approximation of the derivative:

$$\frac{u(x + h) - u(x)}{h}$$

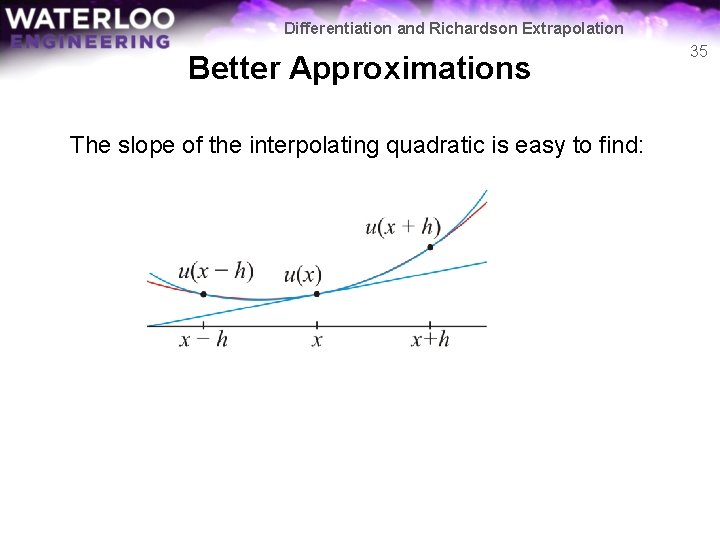

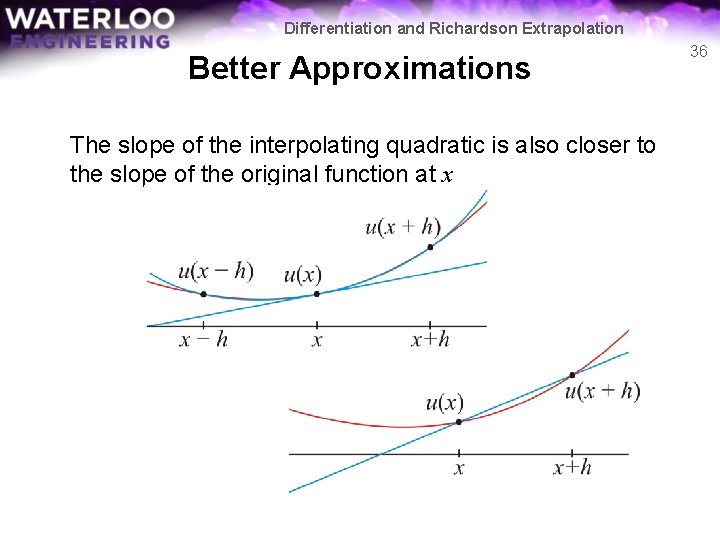

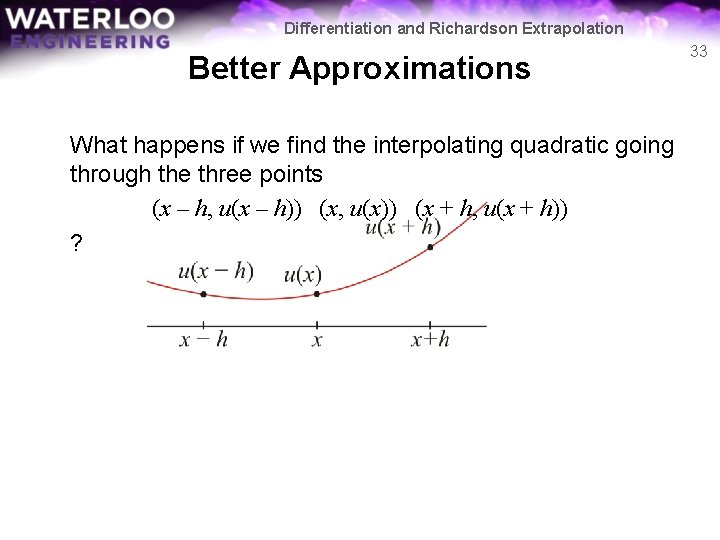

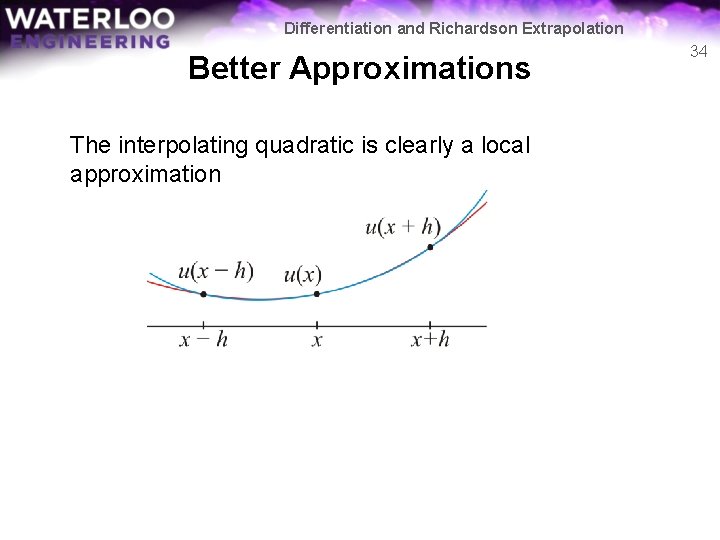

What happens if we find the interpolating quadratic going through the three points (x – h, u(x – h)), (x, u(x)) and (x + h, u(x + h))?

The interpolating quadratic is clearly a local approximation

The slope of the interpolating quadratic is easy to find:

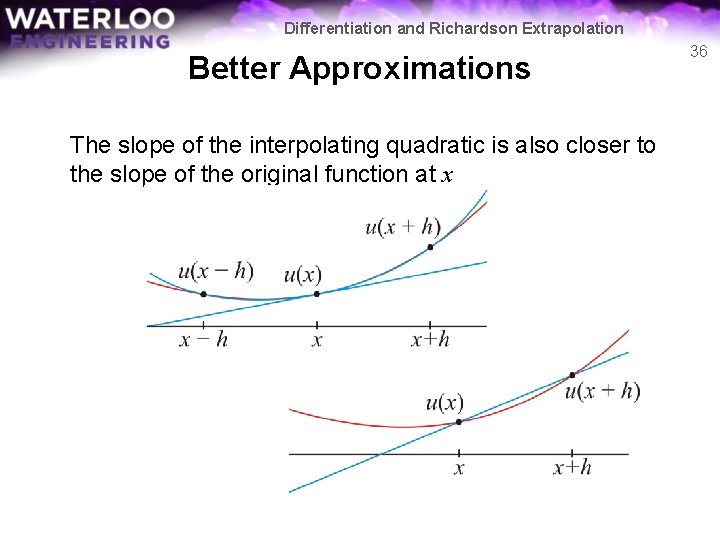

The slope of the interpolating quadratic is also closer to the slope of the original function at x

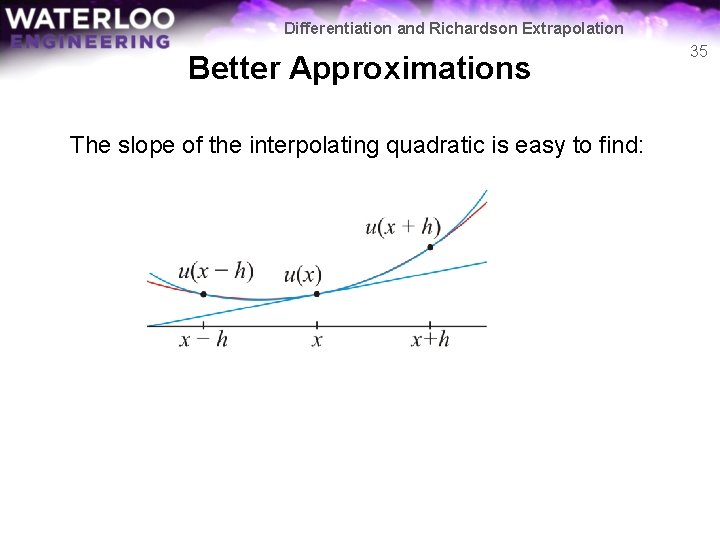

Without going through the process, finding the interpolating quadratic function gives us a similar formula:

$$u^{(1)}(x) \approx \frac{u(x + h) - u(x - h)}{2h}$$

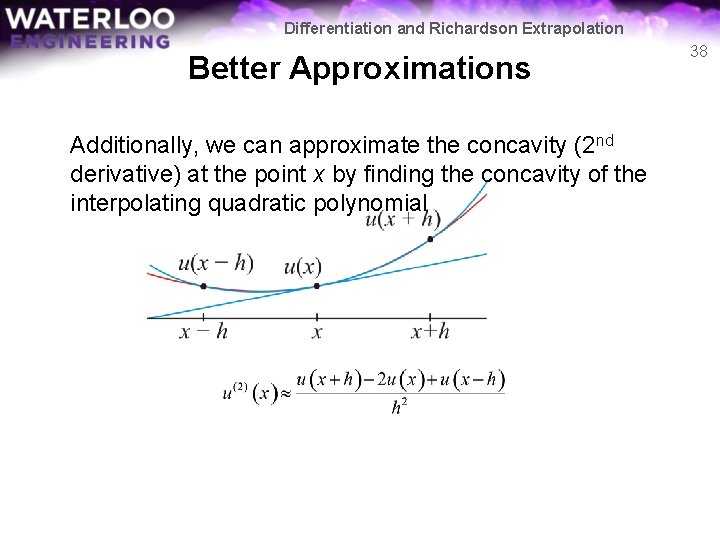

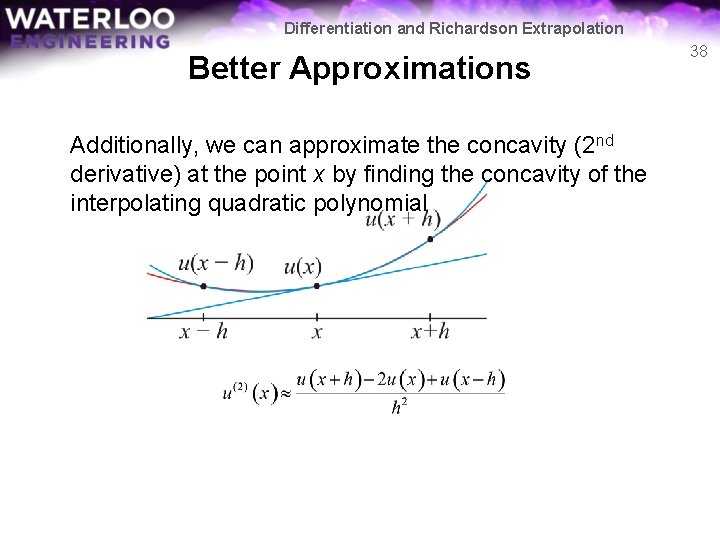

Additionally, we can approximate the concavity (2nd derivative) at the point x by finding the concavity of the interpolating quadratic polynomial:

$$u^{(2)}(x) \approx \frac{u(x + h) - 2u(x) + u(x - h)}{h^2}$$

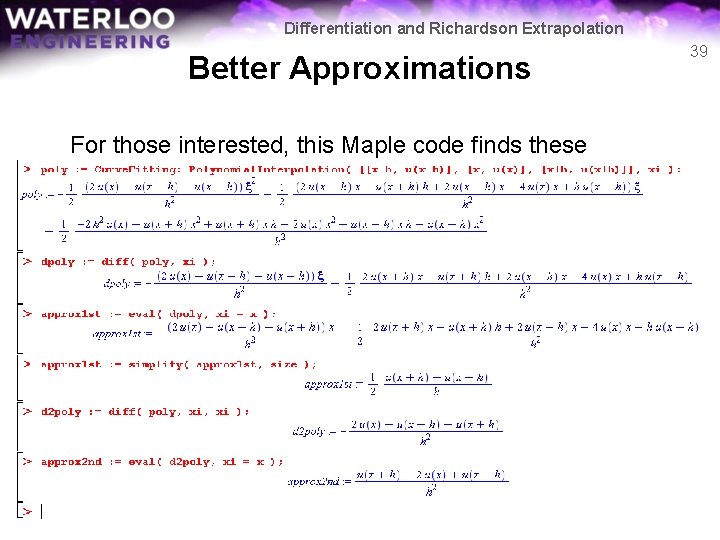

For those interested, this Maple code finds these formulas:

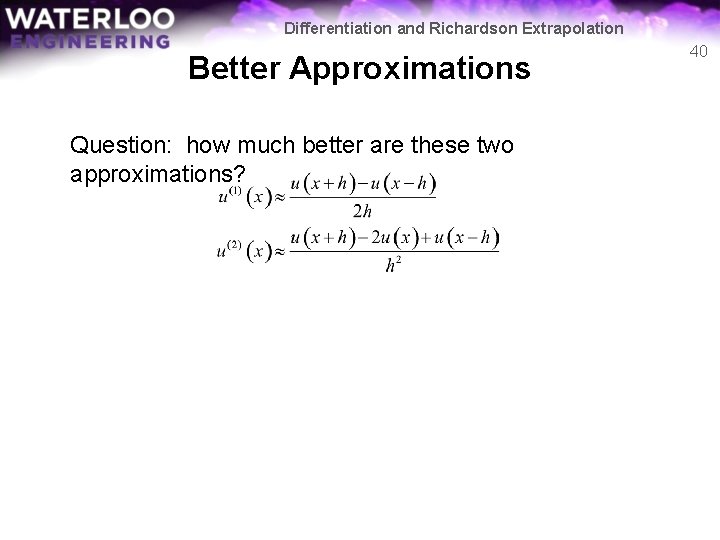

Question: how much better are these two approximations?

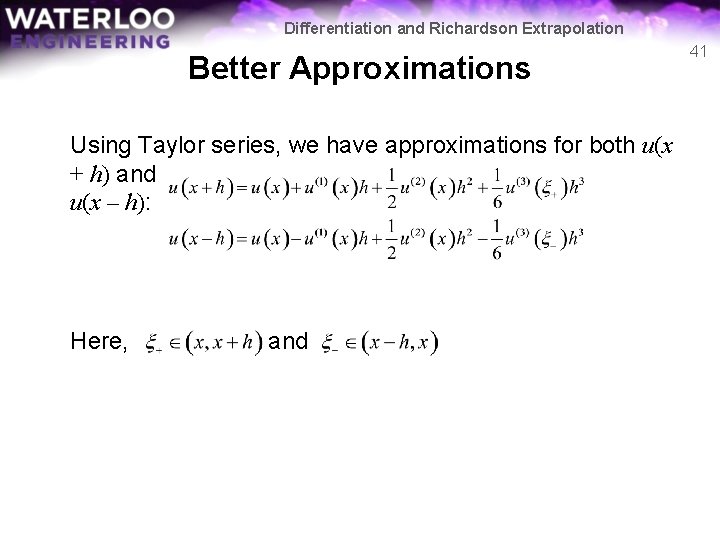

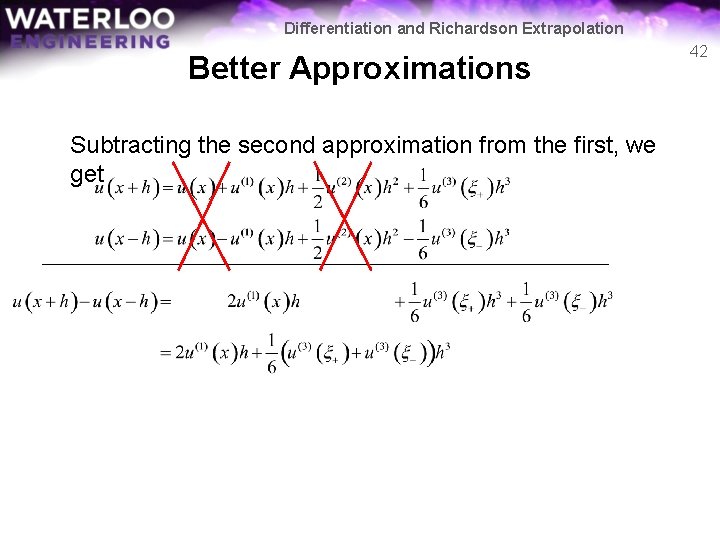

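Using Taylor series, we have approximations for both u(x + h) and u(x – h):

$$u(x + h) = u(x) + u^{(1)}(x)\,h + \frac{1}{2} u^{(2)}(x)\,h^2 + \frac{1}{6} u^{(3)}(\xi_+)\,h^3$$

$$u(x - h) = u(x) - u^{(1)}(x)\,h + \frac{1}{2} u^{(2)}(x)\,h^2 - \frac{1}{6} u^{(3)}(\xi_-)\,h^3$$

Here, $\xi_+ \in [x, x + h]$ and $\xi_- \in [x - h, x]$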

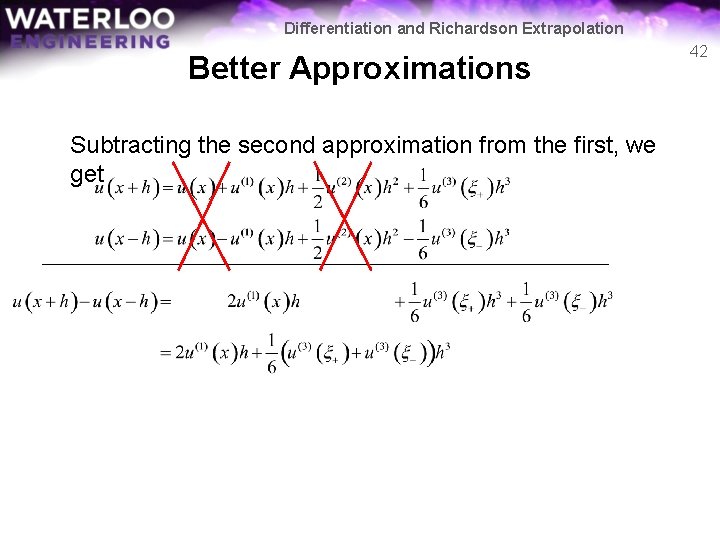

Subtracting the second approximation from the first, we get

$$u(x + h) - u(x - h) = 2u^{(1)}(x)\,h + \frac{1}{6}\left( u^{(3)}(\xi_+) + u^{(3)}(\xi_-) \right) h^3$$

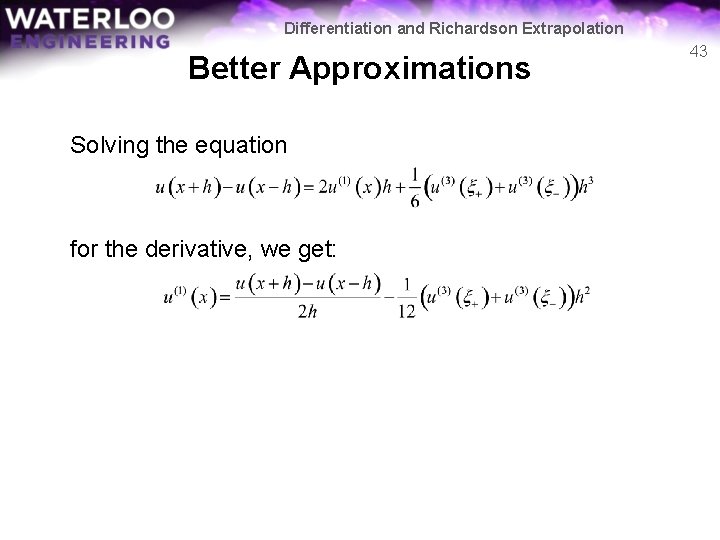

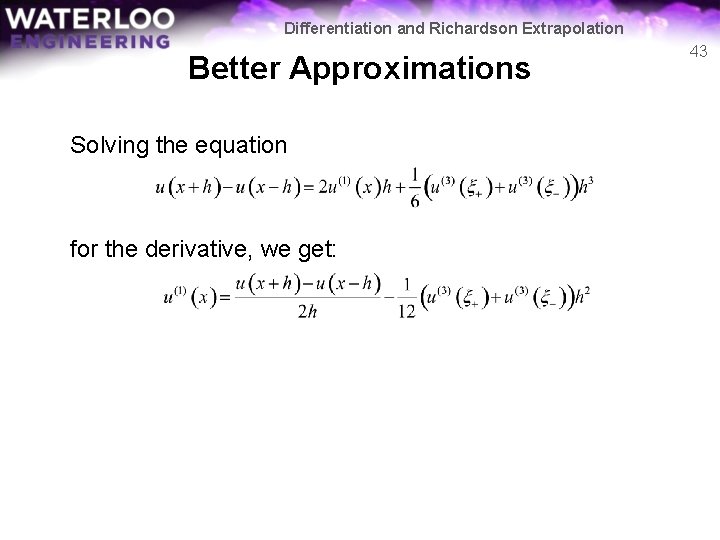

Solving the equation for the derivative, we get:

$$u^{(1)}(x) = \frac{u(x + h) - u(x - h)}{2h} - \frac{1}{6} u^{(3)}(\xi)\,h^2$$

where $\xi \in [x - h, x + h]$

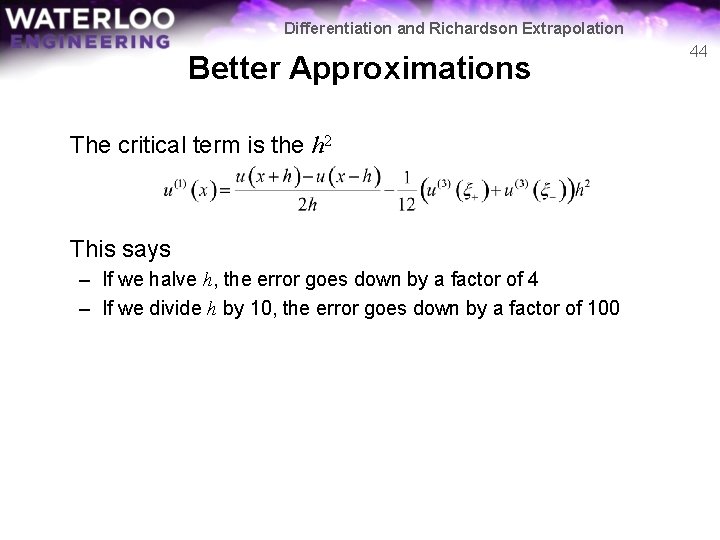

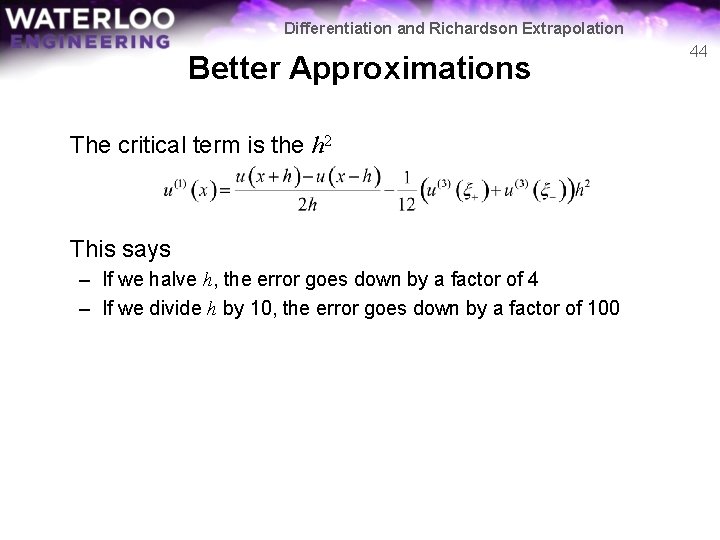

The critical term is the $h^2$

This says
– If we halve h, the error goes down by a factor of 4
– If we divide h by 10, the error goes down by a factor of 100
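The factor of 4 can be observed directly. This is a small sketch, assuming sin at x = 1 as the test function; `Dc` is written here as an anonymous function purely for illustration.

```matlab
% Centred divided-difference formula as an anonymous function
Dc = @(u, x, h) (u(x + h) - u(x - h))/(2*h);

u = @sin;
x = 1;
h = 0.1*2.^(-(0:4));                 % h = 0.1, 0.05, 0.025, ...

% Absolute error for each step size
err = arrayfun(@(hk) abs(Dc(u, x, hk) - cos(x)), h);

% For an O(h^2) formula, halving h should divide the error by about 4
ratios = err(1:end-1)./err(2:end);
disp(ratios);
```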

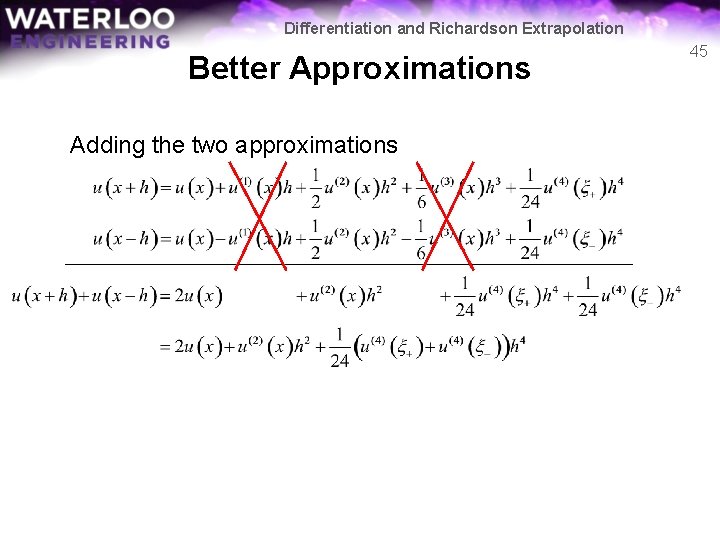

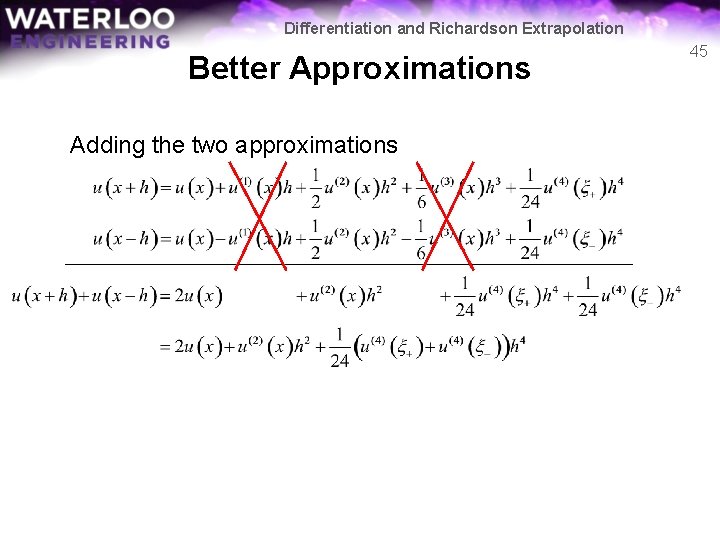

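Adding the two approximations, each carried one term further, the odd-order terms cancel:

$$u(x + h) + u(x - h) = 2u(x) + u^{(2)}(x)\,h^2 + \frac{1}{24}\left( u^{(4)}(\xi_+) + u^{(4)}(\xi_-) \right) h^4$$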

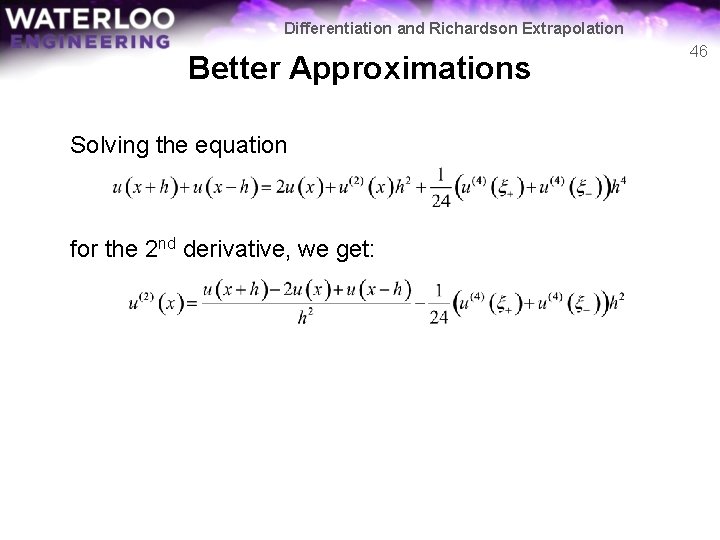

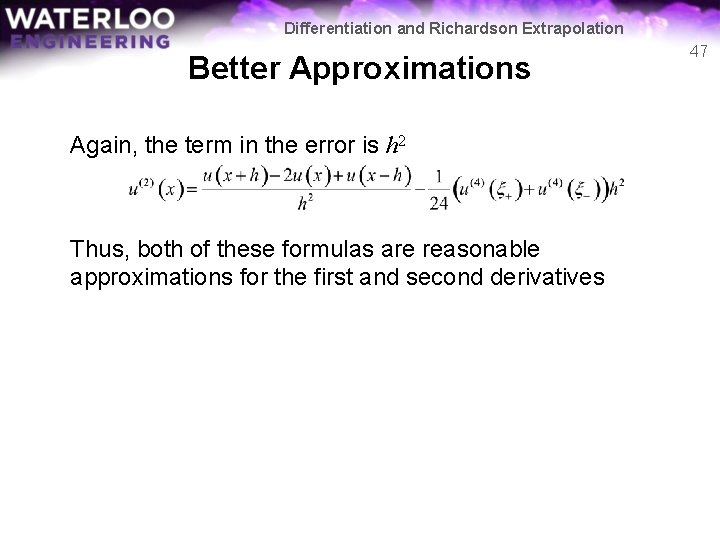

Solving the equation for the 2nd derivative, we get:

$$u^{(2)}(x) = \frac{u(x + h) - 2u(x) + u(x - h)}{h^2} - \frac{1}{12} u^{(4)}(\xi)\,h^2$$

Again, the term in the error is $h^2$

Thus, both of these formulas are reasonable approximations for the first and second derivatives

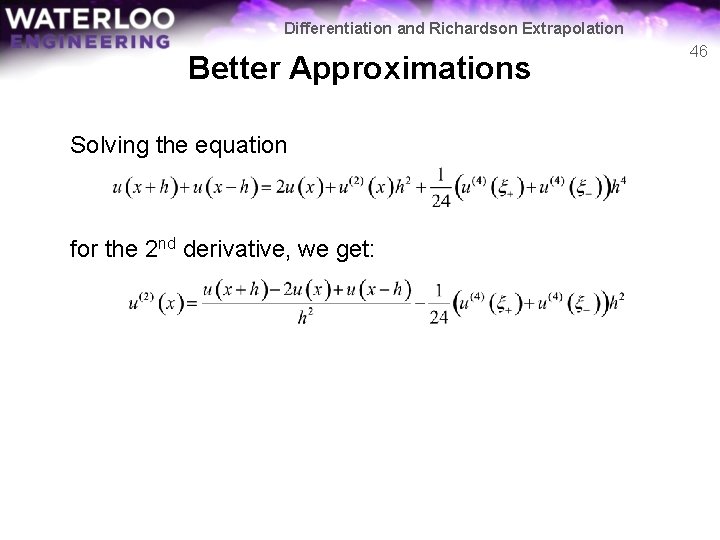

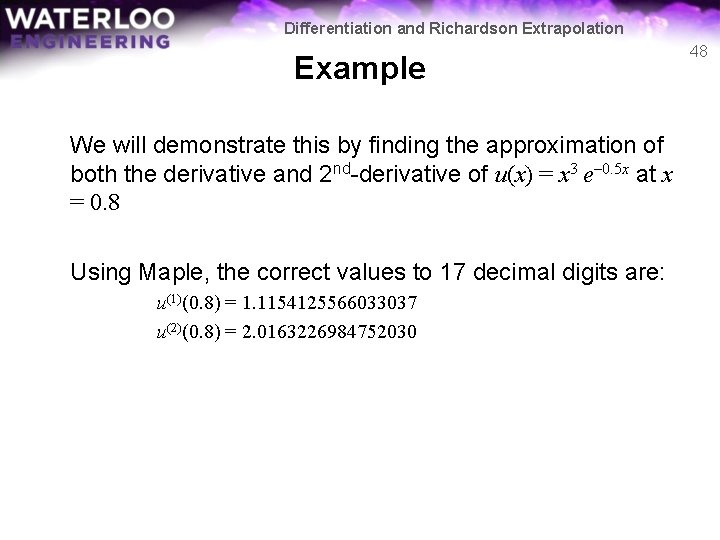

Example

We will demonstrate this by finding the approximation of both the derivative and 2nd derivative of $u(x) = x^3 e^{-0.5x}$ at x = 0.8

Using Maple, the correct values to 17 decimal digits are:

$u^{(1)}(0.8) = 1.1154125566033037$
$u^{(2)}(0.8) = 2.0163226984752030$

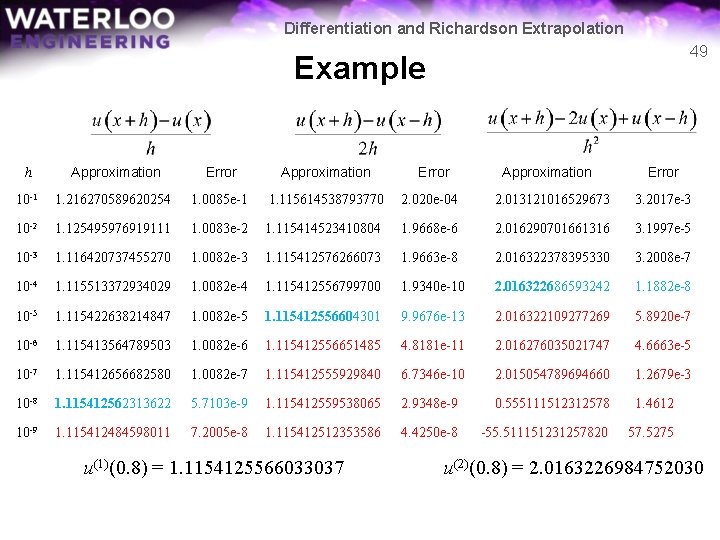

| h | Forward approximation of $u^{(1)}(0.8)$ | Error | Centred approximation of $u^{(1)}(0.8)$ | Error | Centred approximation of $u^{(2)}(0.8)$ | Error |
|---|---|---|---|---|---|---|
| $10^{-1}$ | 1.216270589620254 | 1.0085e-1 | 1.115614538793770 | 2.020e-4 | 2.013121016529673 | 3.2017e-3 |
| $10^{-2}$ | 1.125495976919111 | 1.0083e-2 | 1.115414523410804 | 1.9668e-6 | 2.016290701661316 | 3.1997e-5 |
| $10^{-3}$ | 1.116420737455270 | 1.0082e-3 | 1.115412576266073 | 1.9663e-8 | 2.016322378395330 | 3.2008e-7 |
| $10^{-4}$ | 1.115513372934029 | 1.0082e-4 | 1.115412556799700 | 1.9340e-10 | 2.016322686593242 | 1.1882e-8 |
| $10^{-5}$ | 1.115422638214847 | 1.0082e-5 | 1.115412556604301 | 9.9676e-13 | 2.016322109277269 | 5.8920e-7 |
| $10^{-6}$ | 1.115413564789503 | 1.0082e-6 | 1.115412556651485 | 4.8181e-11 | 2.016276035021747 | 4.6663e-5 |
| $10^{-7}$ | 1.115412656682580 | 1.0082e-7 | 1.115412555929840 | 6.7346e-10 | 2.015054789694660 | 1.2679e-3 |
| $10^{-8}$ | 1.115412562313622 | 5.7103e-9 | 1.115412559538065 | 2.9348e-9 | 0.555111512312578 | 1.4612 |
| $10^{-9}$ | 1.115412484598011 | 7.2005e-8 | 1.115412512353586 | 4.4250e-8 | -55.511151231257820 | 57.5275 |

$u^{(1)}(0.8) = 1.1154125566033037$
$u^{(2)}(0.8) = 2.0163226984752030$
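A table of this kind can be generated with a short loop. The following is a sketch only, assuming the three formulas above and the reference values quoted from Maple; the names `err`, `fwd`, `ctr` and `sec` are illustrative.

```matlab
% Example function and reference values (quoted above from Maple)
u = @(x) x.^3.*exp(-0.5*x);
x = 0.8;
du_exact  = 1.1154125566033037;
d2u_exact = 2.0163226984752030;

err = zeros(9, 3);
for k = 1:9
    h = 10^(-k);
    fwd = (u(x + h) - u(x))/h;                   % forward, 1st derivative
    ctr = (u(x + h) - u(x - h))/(2*h);           % centred, 1st derivative
    sec = (u(x + h) - 2*u(x) + u(x - h))/h^2;    % centred, 2nd derivative
    err(k, :) = abs([fwd - du_exact, ctr - du_exact, sec - d2u_exact]);
    fprintf('1e-%d  %.4e  %.4e  %.4e\n', k, err(k, 1), err(k, 2), err(k, 3));
end
```

For the smallest values of h the printed errors depend on round-off and may differ slightly between platforms.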

To give names to these formulas:

First derivative
– 1st-order forward divided-difference formula: $u^{(1)}(x) \approx \frac{u(x + h) - u(x)}{h}$
– 2nd-order centred divided-difference formula: $u^{(1)}(x) \approx \frac{u(x + h) - u(x - h)}{2h}$

Second derivative
– 2nd-order centred divided-difference formula: $u^{(2)}(x) \approx \frac{u(x + h) - 2u(x) + u(x - h)}{h^2}$

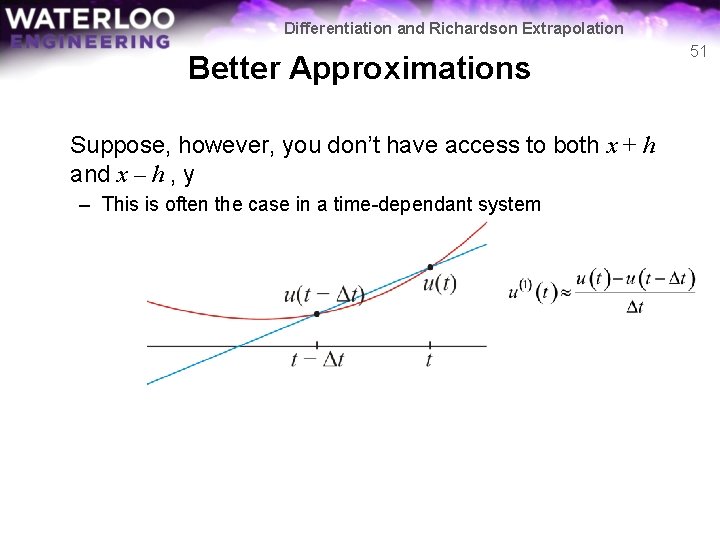

Suppose, however, you don't have access to both x + h and x – h
– This is often the case in a time-dependent system

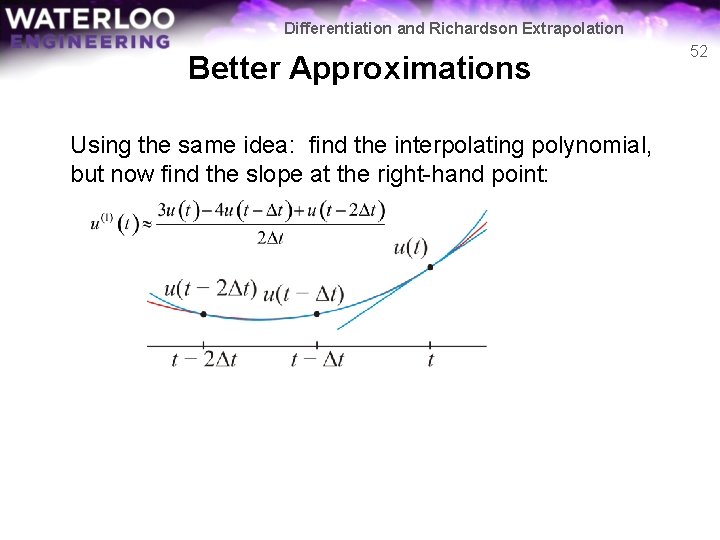

Using the same idea: find the interpolating polynomial, but now find the slope at the right-hand point:

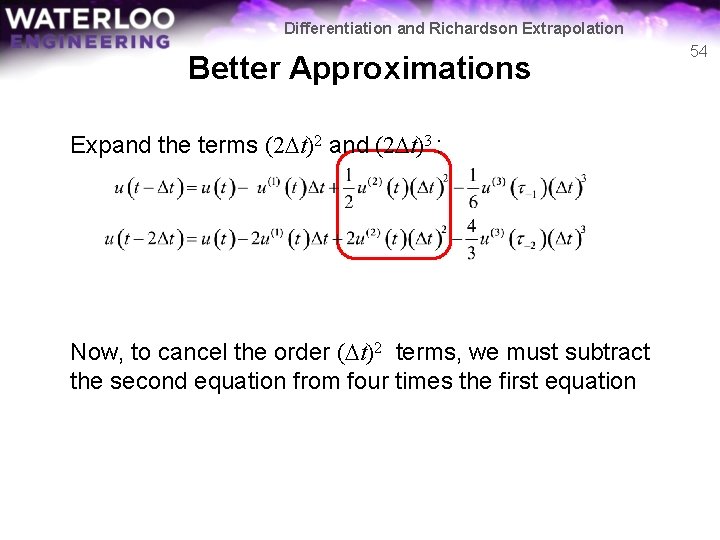

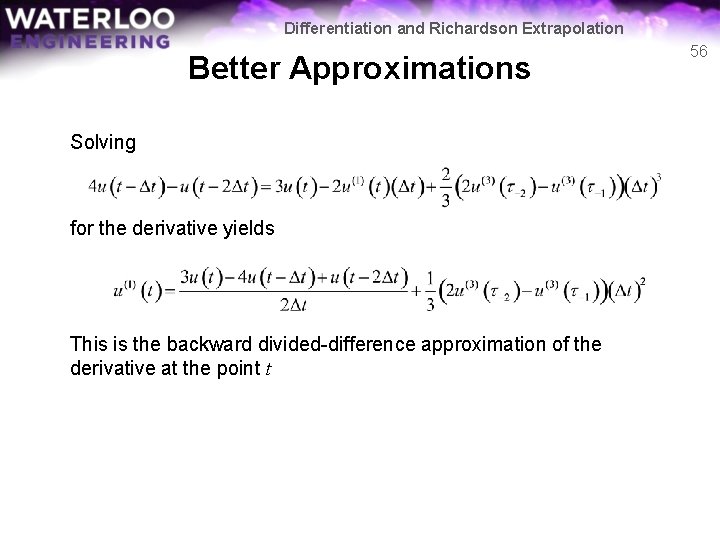

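Using Taylor series, we have approximations for both $u(t - \Delta t)$ and $u(t - 2\Delta t)$:

$$u(t - \Delta t) = u(t) - u^{(1)}(t)\,\Delta t + \frac{1}{2} u^{(2)}(t)\,(\Delta t)^2 - \frac{1}{6} u^{(3)}(\tau_1)\,(\Delta t)^3$$

$$u(t - 2\Delta t) = u(t) - u^{(1)}(t)\,(2\Delta t) + \frac{1}{2} u^{(2)}(t)\,(2\Delta t)^2 - \frac{1}{6} u^{(3)}(\tau_2)\,(2\Delta t)^3$$

Here, $\tau_1 \in [t - \Delta t, t]$ and $\tau_2 \in [t - 2\Delta t, t]$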

Expand the terms $(2\Delta t)^2$ and $(2\Delta t)^3$:

$$u(t - 2\Delta t) = u(t) - 2u^{(1)}(t)\,\Delta t + 2u^{(2)}(t)\,(\Delta t)^2 - \frac{4}{3} u^{(3)}(\tau_2)\,(\Delta t)^3$$

Now, to cancel the order $(\Delta t)^2$ terms, we must subtract the second equation from four times the first equation

This leaves us a formula containing the derivative:

$$4u(t - \Delta t) - u(t - 2\Delta t) = 3u(t) - 2u^{(1)}(t)\,\Delta t - \frac{2}{3} u^{(3)}(\tau_1)\,(\Delta t)^3 + \frac{4}{3} u^{(3)}(\tau_2)\,(\Delta t)^3$$

Solving for the derivative yields

$$u^{(1)}(t) = \frac{3u(t) - 4u(t - \Delta t) + u(t - 2\Delta t)}{2\Delta t} - \frac{1}{3} u^{(3)}(\tau_1)\,(\Delta t)^2 + \frac{2}{3} u^{(3)}(\tau_2)\,(\Delta t)^2$$

This is the backward divided-difference approximation of the derivative at the point t

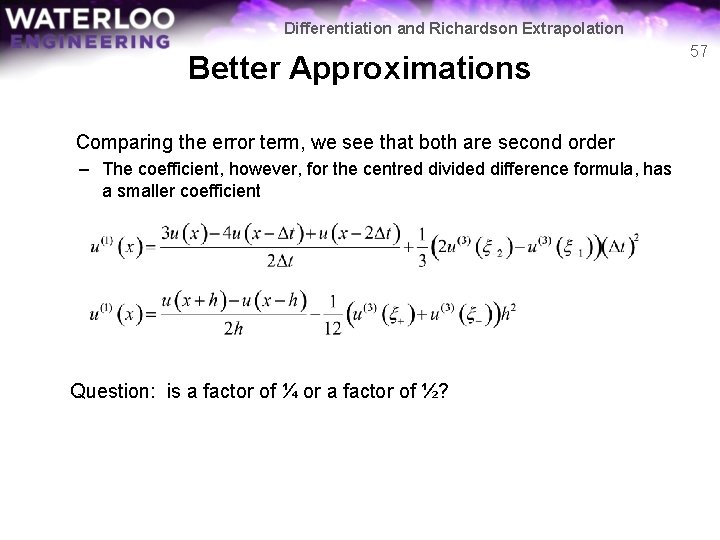

Comparing the error terms, we see that both formulas are second order
– The error term of the centred divided-difference formula, however, has a smaller coefficient

Question: is it smaller by a factor of ¼ or a factor of ½?


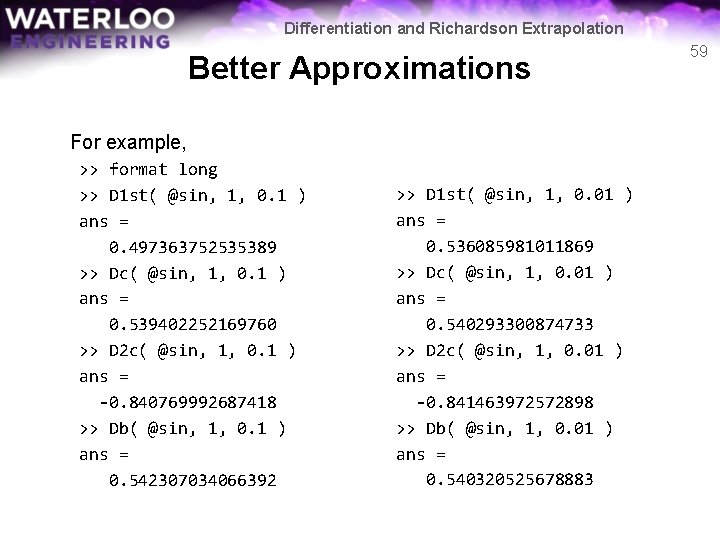

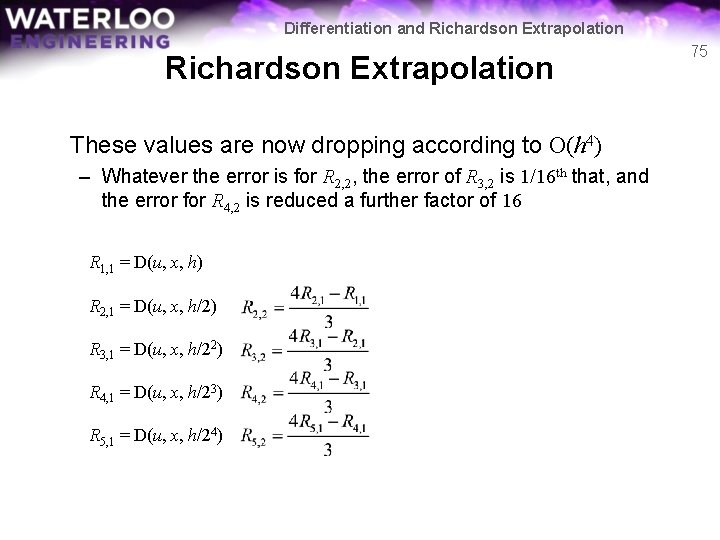

You will write four functions:

    function [dy] = D1st( u, x, h )
    function [dy] = Dc( u, x, h )
    function [dy] = D2c( u, x, h )
    function [dy] = Db( u, x, h )

that implement, respectively, the formulas

$$\frac{u(x + h) - u(x)}{h} \qquad \frac{u(x + h) - u(x - h)}{2h} \qquad \frac{u(x + h) - 2u(x) + u(x - h)}{h^2} \qquad \frac{3u(x) - 4u(x - h) + u(x - 2h)}{2h}$$

Yes, they're all one line…

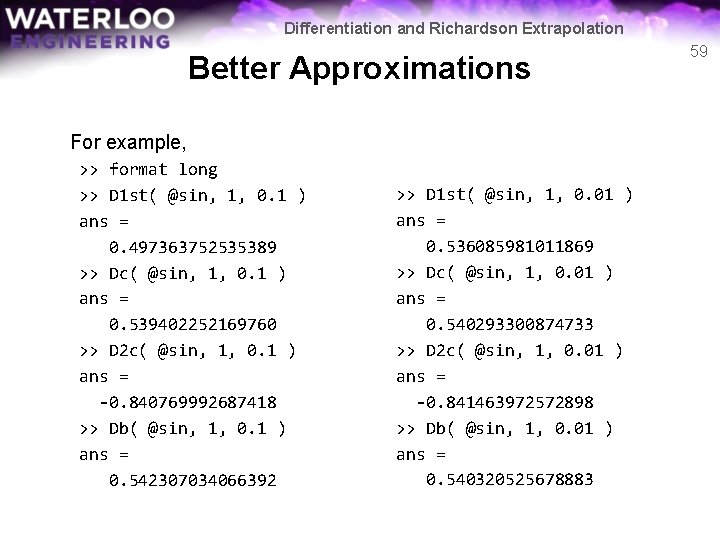

For example,

    >> format long
    >> D1st( @sin, 1, 0.1 )
    ans = 0.497363752535389
    >> Dc( @sin, 1, 0.1 )
    ans = 0.539402252169760
    >> D2c( @sin, 1, 0.1 )
    ans = -0.840769992687418
    >> Db( @sin, 1, 0.1 )
    ans = 0.542307034066392
    >> D1st( @sin, 1, 0.01 )
    ans = 0.536085981011869
    >> Dc( @sin, 1, 0.01 )
    ans = 0.540293300874733
    >> D2c( @sin, 1, 0.01 )
    ans = -0.841463972572898
    >> Db( @sin, 1, 0.01 )
    ans = 0.540320525678883
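One possible shape for the four one-line functions is sketched below. This is an assumption, not the required solution: the laboratory asks for function files, whereas anonymous functions are used here so that the block runs as a single script, and the formulas are the ones derived earlier in this topic.

```matlab
% Forward, centred (1st derivative), centred (2nd derivative) and backward
% divided-difference formulas, written as anonymous functions
D1st = @(u, x, h) (u(x + h) - u(x))/h;
Dc   = @(u, x, h) (u(x + h) - u(x - h))/(2*h);
D2c  = @(u, x, h) (u(x + h) - 2*u(x) + u(x - h))/h^2;
Db   = @(u, x, h) (3*u(x) - 4*u(x - h) + u(x - 2*h))/(2*h);

% Compare against the session shown above
format long
disp(D1st(@sin, 1, 0.1));
disp(Dc(@sin, 1, 0.1));
disp(D2c(@sin, 1, 0.1));
disp(Db(@sin, 1, 0.1));
```

With anonymous functions, the handle is passed by name (for example `Dc`) rather than as `@Dc`, which is the form used when the function lives in its own file.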

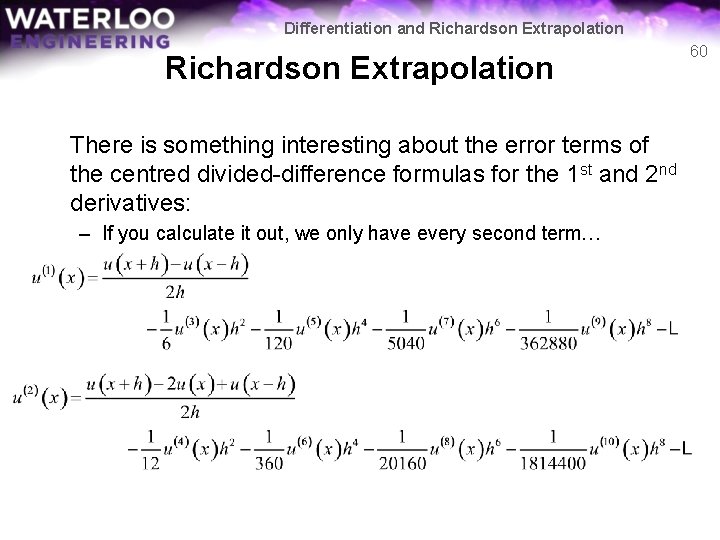

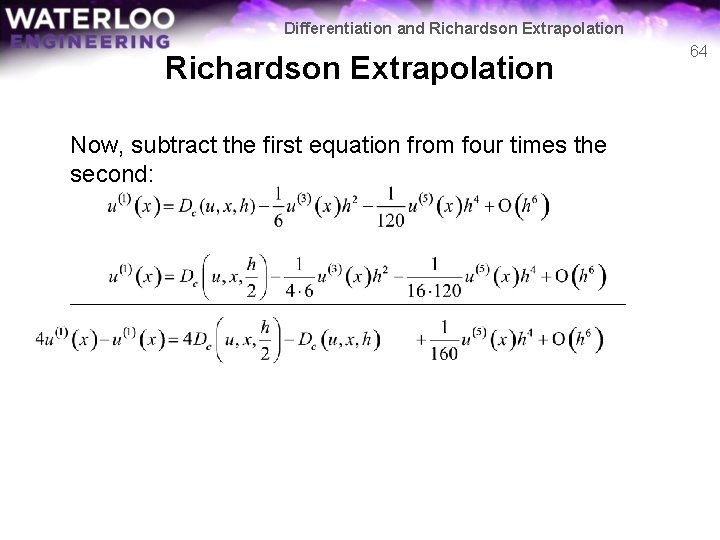

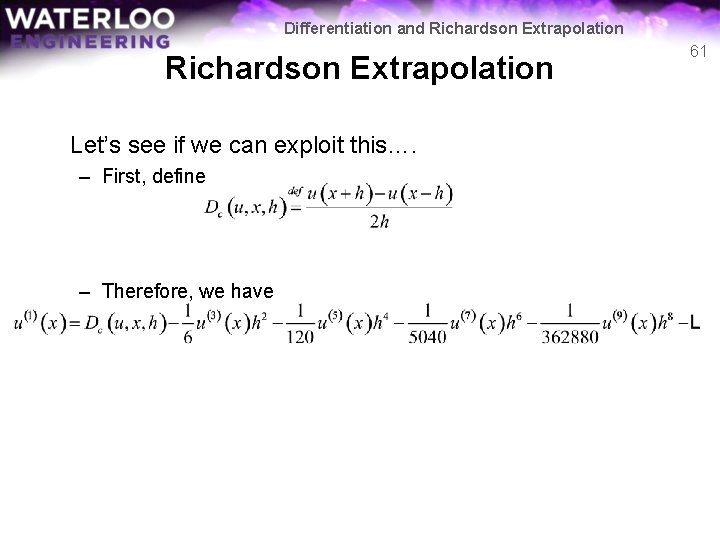

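There is something interesting about the error terms of the centred divided-difference formulas for the 1st and 2nd derivatives:
– If you calculate it out, we only have every second term…

$$u^{(1)}(x) = \frac{u(x + h) - u(x - h)}{2h} - \frac{1}{6} u^{(3)}(x)\,h^2 - \frac{1}{120} u^{(5)}(x)\,h^4 - \cdots$$

$$u^{(2)}(x) = \frac{u(x + h) - 2u(x) + u(x - h)}{h^2} - \frac{1}{12} u^{(4)}(x)\,h^2 - \frac{1}{360} u^{(6)}(x)\,h^4 - \cdots$$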

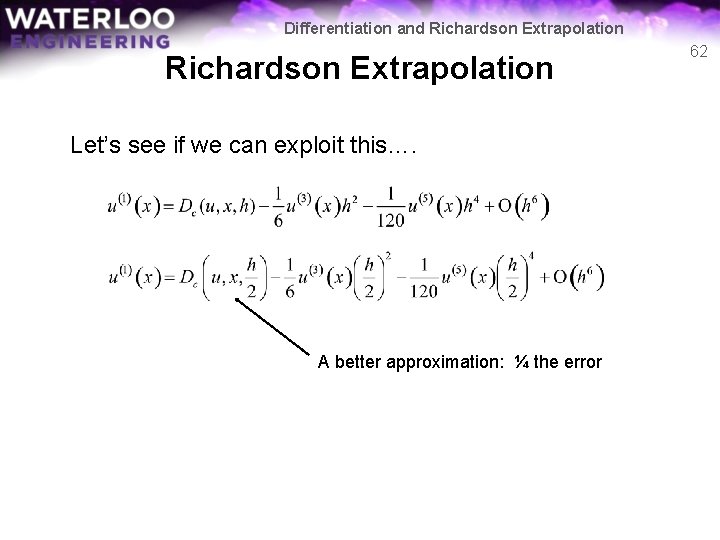

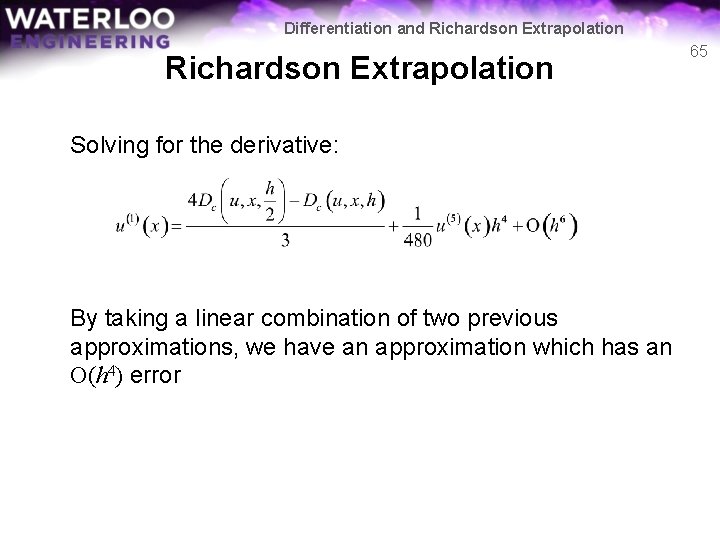

Let's see if we can exploit this…
– First, define

$$D(u, x, h) = \frac{u(x + h) - u(x - h)}{2h}$$

– Therefore, we have

$$u^{(1)}(x) = D(u, x, h) - \frac{1}{6} u^{(3)}(x)\,h^2 - \frac{1}{120} u^{(5)}(x)\,h^4 - \cdots$$

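Using h/2 in place of h gives a better approximation with ¼ the error:

$$u^{(1)}(x) = D(u, x, h) - \frac{1}{6} u^{(3)}(x)\,h^2 - \frac{1}{120} u^{(5)}(x)\,h^4 - \cdots$$

$$u^{(1)}(x) = D(u, x, h/2) - \frac{1}{6} u^{(3)}(x) \left( \frac{h}{2} \right)^2 - \frac{1}{120} u^{(5)}(x) \left( \frac{h}{2} \right)^4 - \cdots$$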

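Expanding the products:

$$u^{(1)}(x) = D(u, x, h) - \frac{1}{6} u^{(3)}(x)\,h^2 - \frac{1}{120} u^{(5)}(x)\,h^4 - \cdots$$

$$u^{(1)}(x) = D(u, x, h/2) - \frac{1}{24} u^{(3)}(x)\,h^2 - \frac{1}{1920} u^{(5)}(x)\,h^4 - \cdots$$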

Now, subtract the first equation from four times the second:

$$3u^{(1)}(x) = 4D(u, x, h/2) - D(u, x, h) + \frac{1}{160} u^{(5)}(x)\,h^4 + \cdots$$

Solving for the derivative:

$$u^{(1)}(x) = \frac{4D(u, x, h/2) - D(u, x, h)}{3} + \frac{1}{480} u^{(5)}(x)\,h^4 + \cdots$$

By taking a linear combination of two previous approximations, we have an approximation which has an $O(h^4)$ error

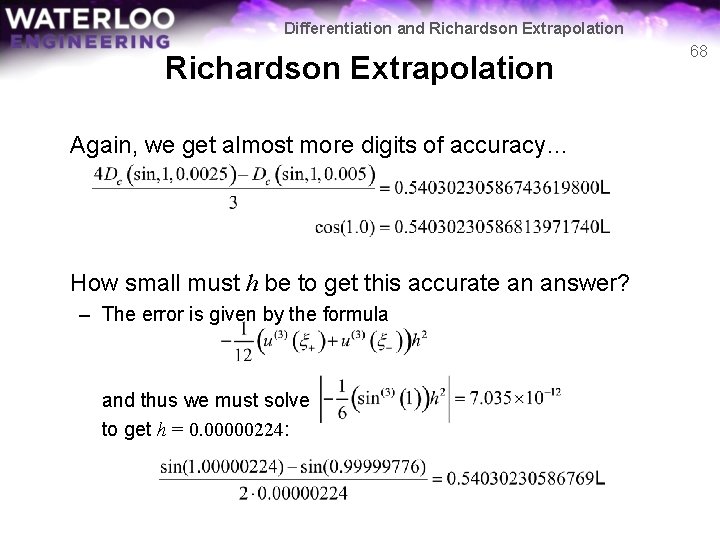

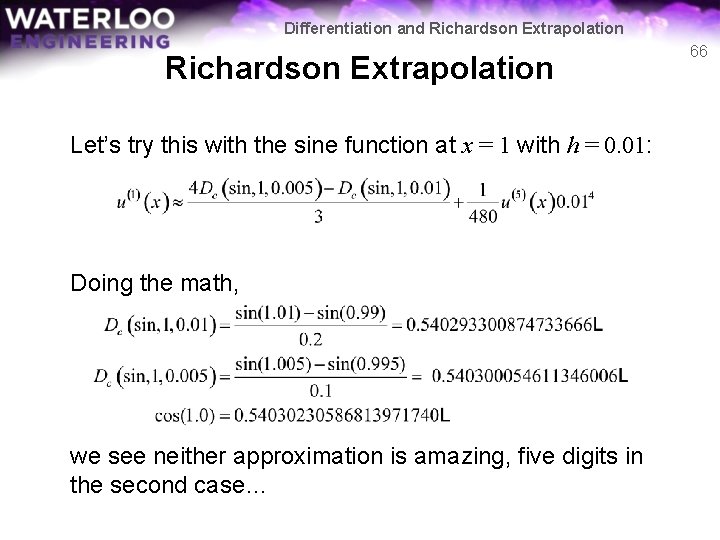

Let's try this with the sine function at x = 1 with h = 0.01, that is, with the two approximations D(sin, 1, 0.01) and D(sin, 1, 0.005)

Doing the math, we see neither approximation is amazing: five digits in the second case…

If we calculate the linear combination, however, we get a much better result

All we did was take a linear combination of two not-so-great approximations, and we get a good approximation…

Let's reduce h by half
– Since the error is $O(h^4)$, reducing h by half should reduce the error to 1/16th
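The effect of this linear combination can be reproduced in a few lines. This is a sketch under the same assumptions as the example (sin at x = 1, h = 0.01), with `Dc` written as an anonymous function and `R1`, `R2`, `R` as illustrative names.

```matlab
% Centred divided-difference formula
Dc = @(u, x, h) (u(x + h) - u(x - h))/(2*h);

h = 0.01;
R1 = Dc(@sin, 1, h);        % error is O(h^2)
R2 = Dc(@sin, 1, h/2);      % about 1/4 the error of R1
R  = (4*R2 - R1)/3;         % Richardson extrapolation, error is O(h^4)

% Absolute errors of the three approximations
err = abs([R1 R2 R] - cos(1));
fprintf('%.3e  %.3e  %.3e\n', err(1), err(2), err(3));
```

The third error should be several orders of magnitude smaller than the first two, even though no new function evaluations are needed beyond those for `R1` and `R2`.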

Again, we get more digits of accuracy…

How small must h be to get this accurate an answer from the centred divided-difference formula alone?
– The error is given by the formula $\frac{1}{6} \left| u^{(3)}(\xi) \right| h^2$, and thus we must solve for h to get h = 0.00000224

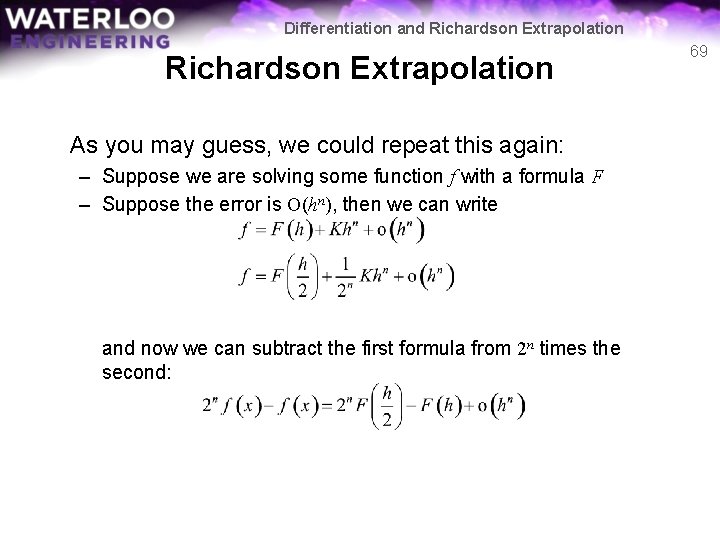

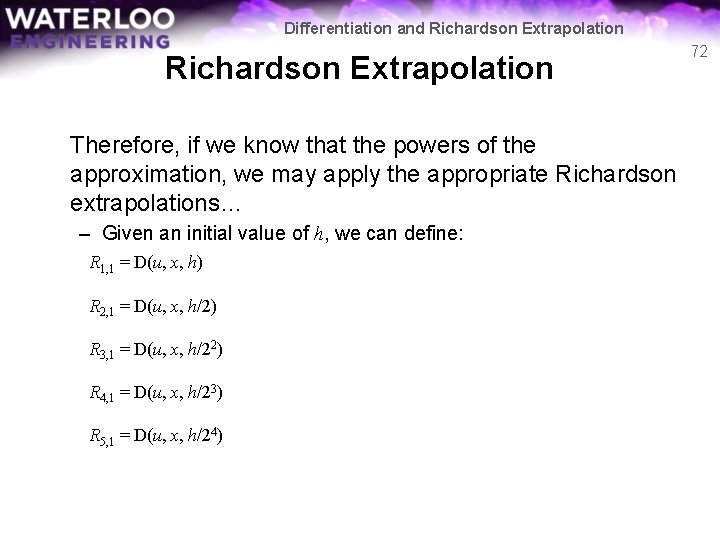

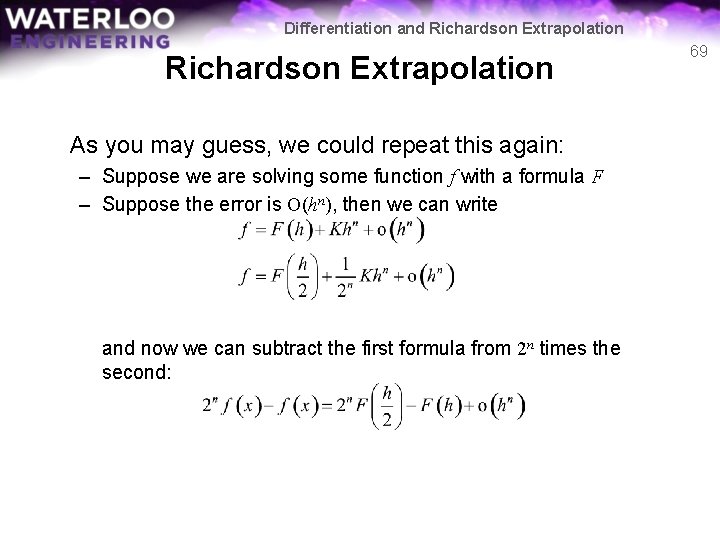

As you may guess, we could repeat this again:
– Suppose we are approximating some value f(x) with a formula F(x, h)
– Suppose the error is $O(h^n)$, then we can write

$$f(x) = F(x, h) + K h^n + o(h^n)$$

$$f(x) = F(x, h/2) + K \left( \frac{h}{2} \right)^n + o(h^n)$$

and now we can subtract the first formula from $2^n$ times the second:

$$(2^n - 1)\,f(x) = 2^n F(x, h/2) - F(x, h) + o(h^n)$$

Solving for f(x), we get

$$f(x) = \frac{2^n F(x, h/2) - F(x, h)}{2^n - 1} + o(h^n)$$

Note that the approximation is a weighted average of two other approximations
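The general formula fits in one line of Matlab. The sketch below uses a hypothetical helper `richardson_step` and applies it with n = 1 to the forward divided-difference formula, whose leading error term is O(h); this choice of example is an assumption made for illustration.

```matlab
% Hypothetical helper: one Richardson extrapolation step for an O(h^n) formula
% F_h is the approximation with step h, F_half the one with step h/2
richardson_step = @(F_h, F_half, n) (2^n*F_half - F_h)/(2^n - 1);

% Forward divided-difference formula, leading error term O(h), so n = 1
D1st = @(u, x, h) (u(x + h) - u(x))/h;

h = 0.01;
F_h    = D1st(@sin, 1, h);
F_half = D1st(@sin, 1, h/2);
R = richardson_step(F_h, F_half, 1);

err_before = abs(F_half - cos(1));
err_after  = abs(R - cos(1));
fprintf('%.3e  %.3e\n', err_before, err_after);
```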

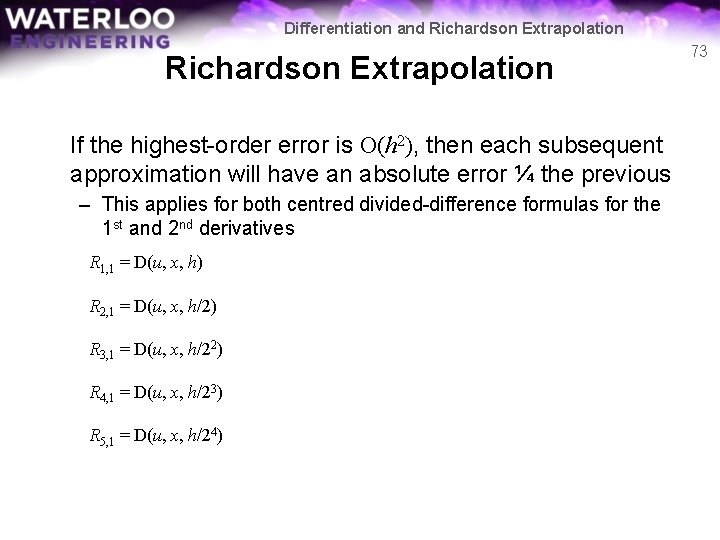

Question:
– Is this formula subject to subtractive cancellation?

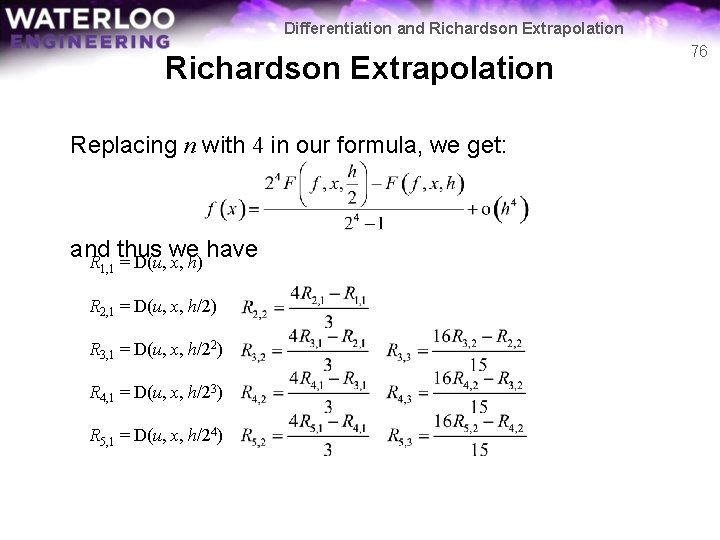

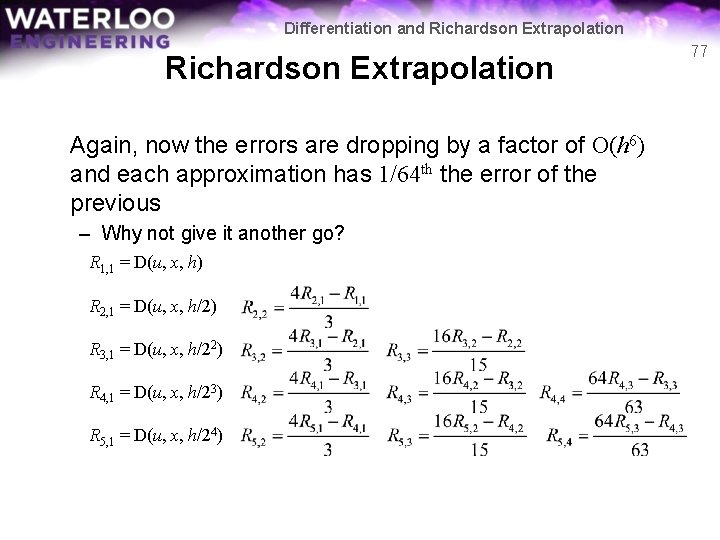

Therefore, if we know the powers of h in the error of the approximation, we may apply the appropriate Richardson extrapolations…
– Given an initial value of h, we can define:

$R_{1,1} = D(u, x, h)$
$R_{2,1} = D(u, x, h/2)$
$R_{3,1} = D(u, x, h/2^2)$
$R_{4,1} = D(u, x, h/2^3)$
$R_{5,1} = D(u, x, h/2^4)$

If the leading error term is $O(h^2)$, then each subsequent approximation $R_{i+1,1}$ will have an absolute error ¼ that of the previous
– This applies for both centred divided-difference formulas for the 1st and 2nd derivatives

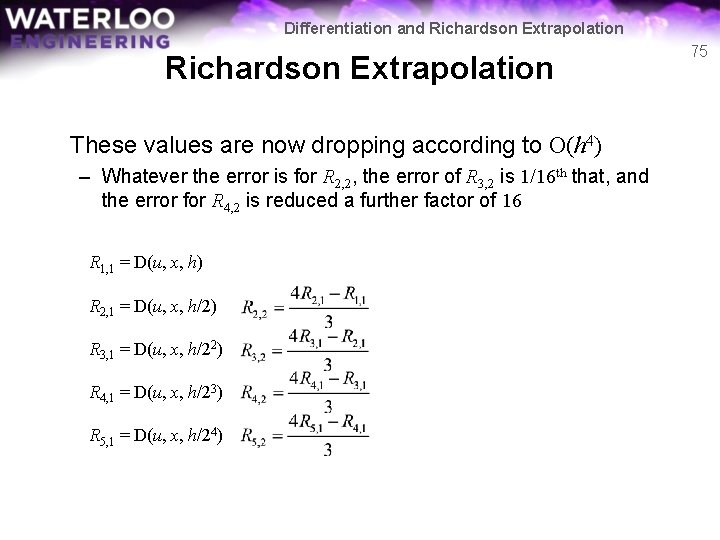

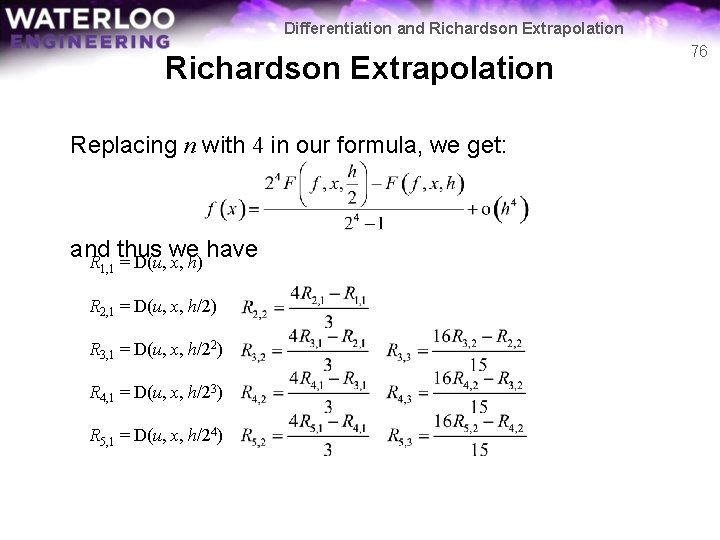

Therefore, we could now calculate further approximations according to our Richardson extrapolation formula:

$R_{2,2} = \frac{4R_{2,1} - R_{1,1}}{3}$
$R_{3,2} = \frac{4R_{3,1} - R_{2,1}}{3}$
$R_{4,2} = \frac{4R_{4,1} - R_{3,1}}{3}$
$R_{5,2} = \frac{4R_{5,1} - R_{4,1}}{3}$

These values are now dropping according to $O(h^4)$
– Whatever the error is for $R_{2,2}$, the error of $R_{3,2}$ is 1/16th that, and the error for $R_{4,2}$ is reduced by a further factor of 16

Replacing n with 4 in our formula, we get:

$$\frac{2^4 F(x, h/2) - F(x, h)}{2^4 - 1} = \frac{16 F(x, h/2) - F(x, h)}{15}$$

and thus we have

$R_{3,3} = \frac{16R_{3,2} - R_{2,2}}{15}$
$R_{4,3} = \frac{16R_{4,2} - R_{3,2}}{15}$
$R_{5,3} = \frac{16R_{5,2} - R_{4,2}}{15}$

Again, the errors are now $O(h^6)$ and each approximation has 1/64th the error of the previous
– Why not give it another go?

We could, again, repeat this process:

$R_{4,4} = \frac{64R_{4,3} - R_{3,3}}{63}$
$R_{5,4} = \frac{64R_{5,3} - R_{4,3}}{63}$
$R_{5,5} = \frac{256R_{5,4} - R_{4,4}}{255}$

Thus, we would have a matrix of entries of which $R_{5,5}$ is the most accurate

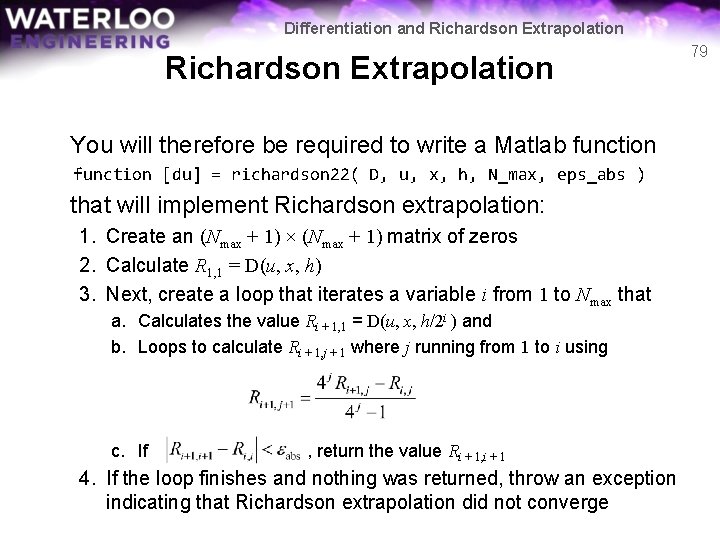

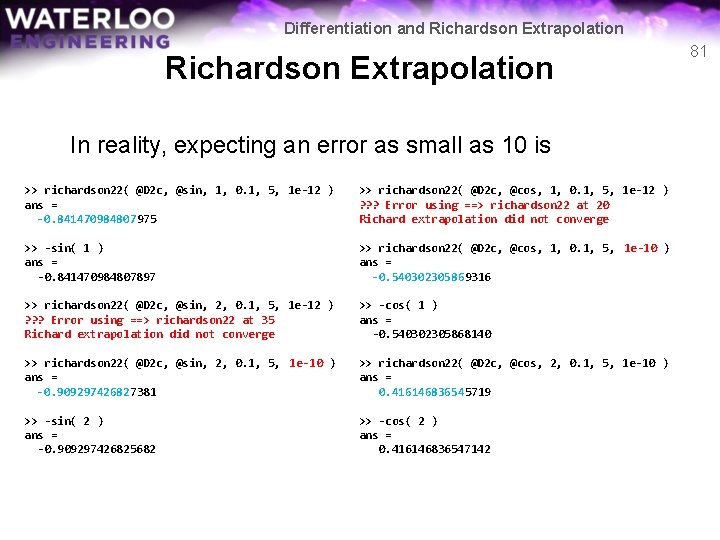

You will therefore be required to write a Matlab function

    function [du] = richardson22( D, u, x, h, N_max, eps_abs )

that will implement Richardson extrapolation:
1. Create an $(N_{max} + 1) \times (N_{max} + 1)$ matrix of zeros
2. Calculate $R_{1,1} = D(u, x, h)$
3. Next, create a loop that iterates a variable $i$ from 1 to $N_{max}$ that
   a. Calculates the value $R_{i+1,1} = D(u, x, h/2^i)$ and
   b. Loops to calculate $R_{i+1,j+1}$, with $j$ running from 1 to $i$, using $R_{i+1,j+1} = \frac{4^j R_{i+1,j} - R_{i,j}}{4^j - 1}$
   c. If $|R_{i+1,i+1} - R_{i,i}| < \varepsilon_{abs}$, return the value $R_{i+1,i+1}$
4. If the loop finishes and nothing was returned, throw an exception indicating that Richardson extrapolation did not converge
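The steps above could be sketched as follows. This is one possible reading of the specification, not a reference solution: the argument checks and the error identifiers are assumptions, and the file is assumed to be saved as `richardson22.m`.

```matlab
function [du] = richardson22( D, u, x, h, N_max, eps_abs )
    % Basic argument checking (the exact checks required are an assumption)
    if ~isa( D, 'function_handle' ) || ~isa( u, 'function_handle' )
        error( 'richardson22:invalidArgument', ...
               'the arguments D and u must be function handles' );
    end

    if ~isscalar( N_max ) || N_max < 1 || N_max ~= round( N_max )
        error( 'richardson22:invalidArgument', ...
               'the argument N_max must be a positive integer' );
    end

    % Steps 1 and 2: table of approximations and the first entry
    R = zeros( N_max + 1, N_max + 1 );
    R(1, 1) = D( u, x, h );

    % Step 3: add one row per iteration
    for i = 1:N_max
        % 3a: new approximation with step size h/2^i
        R(i + 1, 1) = D( u, x, h/2^i );

        % 3b: extrapolate across the row, removing h^2, h^4, h^6, ...
        for j = 1:i
            R(i + 1, j + 1) = (4^j*R(i + 1, j) - R(i, j))/(4^j - 1);
        end

        % 3c: stop once two successive diagonal entries agree
        if abs( R(i + 1, i + 1) - R(i, i) ) < eps_abs
            du = R(i + 1, i + 1);
            return;
        end
    end

    % Step 4: no convergence within N_max iterations
    error( 'richardson22:noConvergence', ...
           'Richardson extrapolation did not converge' );
end
```

A call such as `richardson22( @Dc, @sin, 1, 0.1, 5, 1e-12 )` assumes that `Dc` exists as a function file; with an anonymous function, pass the handle itself without the `@`.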

The accuracy is actually quite impressive

    >> richardson22( @Dc, @sin, 1, 0.1, 5, 1e-12 )
    ans = 0.540302305868148
    >> cos( 1 )
    ans = 0.540302305868140
    >> richardson22( @Dc, @cos, 1, 0.1, 5, 1e-12 )
    ans = -0.841470984807898
    >> -sin( 1 )
    ans = -0.841470984807897
    >> richardson22( @Dc, @sin, 2, 0.1, 5, 1e-12 )
    ans = -0.416146836547144
    >> cos( 2 )
    ans = -0.416146836547142
    >> richardson22( @Dc, @cos, 2, 0.1, 5, 1e-12 )
    ans = -0.909297426825698
    >> -sin( 2 )
    ans = -0.909297426825682

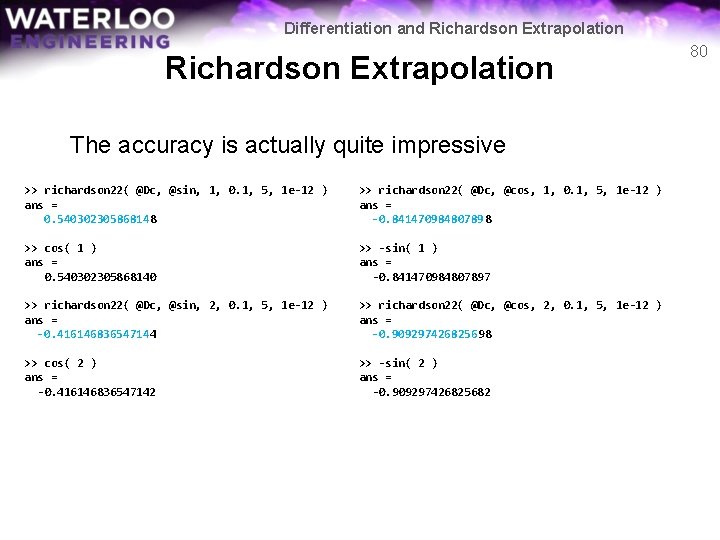

In reality, expecting an error as small as $10^{-12}$ is not always realistic

    >> richardson22( @D2c, @sin, 1, 0.1, 5, 1e-12 )
    ans = -0.841470984807975
    >> -sin( 1 )
    ans = -0.841470984807897
    >> richardson22( @D2c, @cos, 1, 0.1, 5, 1e-12 )
    ??? Error using ==> richardson22 at 20
    Richard extrapolation did not converge
    >> richardson22( @D2c, @cos, 1, 0.1, 5, 1e-10 )
    ans = -0.540302305869316
    >> -cos( 1 )
    ans = -0.540302305868140
    >> richardson22( @D2c, @sin, 2, 0.1, 5, 1e-12 )
    ??? Error using ==> richardson22 at 35
    Richard extrapolation did not converge
    >> richardson22( @D2c, @sin, 2, 0.1, 5, 1e-10 )
    ans = -0.909297426827381
    >> -sin( 2 )
    ans = -0.909297426825682
    >> richardson22( @D2c, @cos, 2, 0.1, 5, 1e-10 )
    ans = 0.416146836545719
    >> -cos( 2 )
    ans = 0.416146836547142

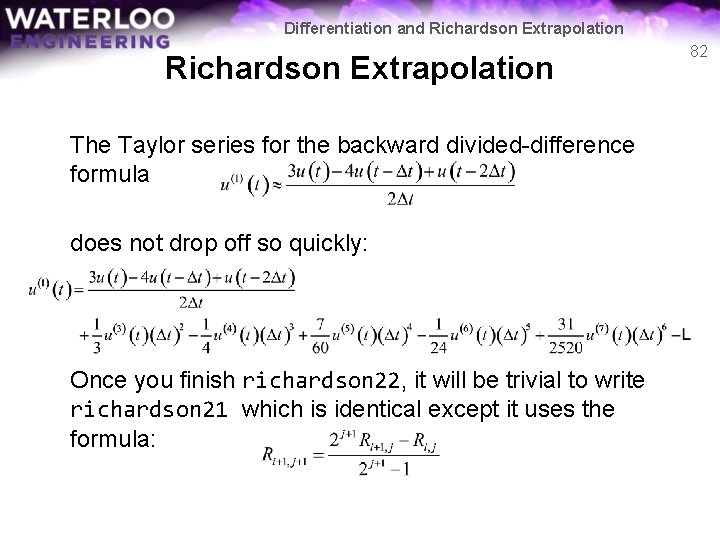

The Taylor series for the backward divided-difference formula does not drop off so quickly: its error terms are $O(h^2)$, $O(h^3)$, $O(h^4)$, …

Once you finish richardson22, it will be trivial to write richardson21, which is identical except it uses the formula:

$$R_{i+1,j+1} = \frac{2^{j+1} R_{i+1,j} - R_{i,j}}{2^{j+1} - 1}$$

Question:
– What happens if an error is larger than that expected by Richardson extrapolation? Will this significantly affect the answer?
– Fortunately, each step is just a linear combination with significant weight placed on the more accurate answer
  • It won't be worse than just calling, for example, Dc( u, x, h/2^N_max )

Summary

In this topic, we've looked at approximating the derivative
– We saw the effect of subtractive cancellation
– Found the centred divided-difference formulas
  • Found an interpolating function
  • Differentiated that interpolating function
  • Evaluated it at the point at which we wish to approximate the derivative
– We also found one backward divided-difference formula
– We then applied Richardson extrapolation

