Modern Topics in Learning Theory
Maria-Florina Balcan, 04/19/2006

Modern Topics in Learning Theory
• Semi-Supervised Learning
• Active Learning
• Kernels and Similarity Functions
• Tighter Data Dependent Bounds

Semi-Supervised Learning
• A hot topic in machine learning in recent years.
• Many applications have lots of unlabeled data, but labeled data is rare or expensive:
  – Web page and document classification
  – OCR, image classification

Combining Labeled and Unlabeled Data
• Several methods have been developed to try to use unlabeled data to improve performance, e.g.:
  – Transductive SVM [J 98]
  – Co-training [BM 98, BBY 04]
  – Graph-based methods [BC 01], [ZGL 03], [BLRR 04]
  – Augmented PAC model for SSL [BB 05, BB 06]

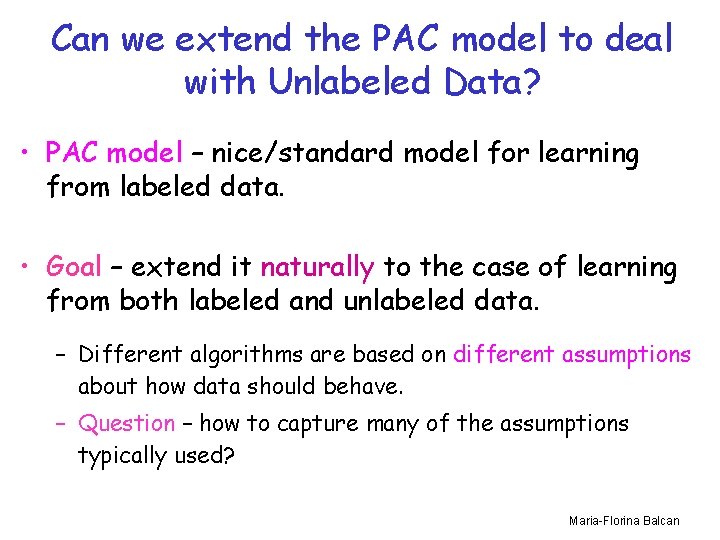

Can we extend the PAC model to deal with unlabeled data?
• PAC model: a nice, standard model for learning from labeled data.
• Goal: extend it naturally to the case of learning from both labeled and unlabeled data.
  – Different algorithms are based on different assumptions about how data should behave.
  – Question: how to capture many of the assumptions typically used?

Example of a "typical" assumption
• The separator goes through low-density regions of the space (large margin).
  – Assume we are looking for a linear separator.
  – Belief: there should exist one with large separation.
[Figure: two panels, "SVM" (labeled data only) and "Transductive SVM"]

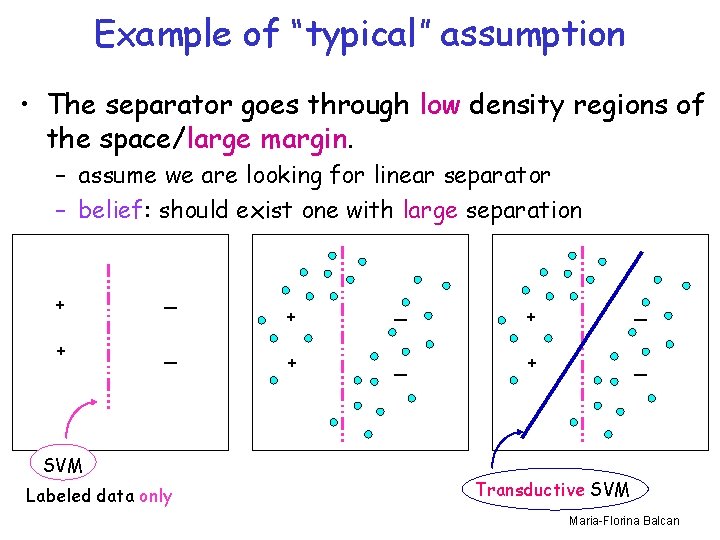

Another Example
• Agreement between two parts: co-training.
  – Examples contain two sufficient sets of features, i.e. an example is $x = \langle x_1, x_2 \rangle$, and the belief is that the two parts of the example are consistent, i.e. $\exists\, c_1, c_2$ such that $c_1(x_1) = c_2(x_2) = c^*(x)$.
  – For example, if we want to classify web pages: $x = \langle x_1, x_2 \rangle$, where $x_1$ is the link info and $x_2$ is the text info.
[Figure: a web page example ("Prof. Avrim Blum", "My Advisor") shown as $x$ (link info and text info) and split into $x_1$ (link info) and $x_2$ (text info)]

Co-Training [BM 98]
• Works by using unlabeled data to propagate learned information.
[Figure: the text-info view $X_2$ and the link-info view $X_1$, with positive (+) examples]

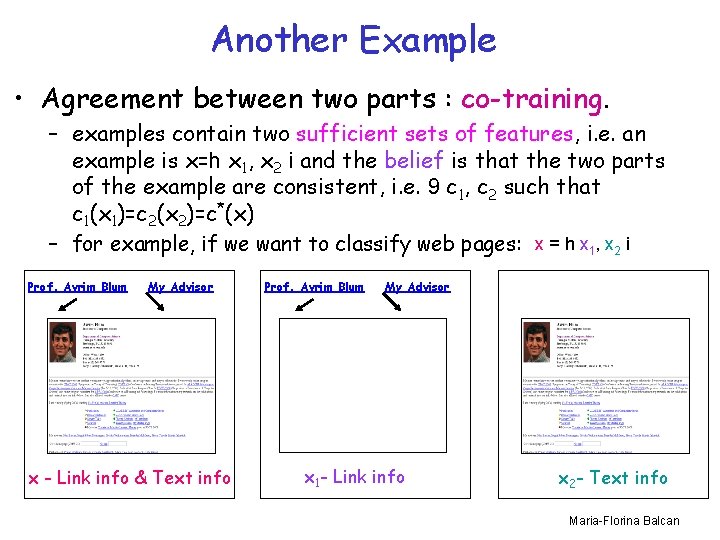

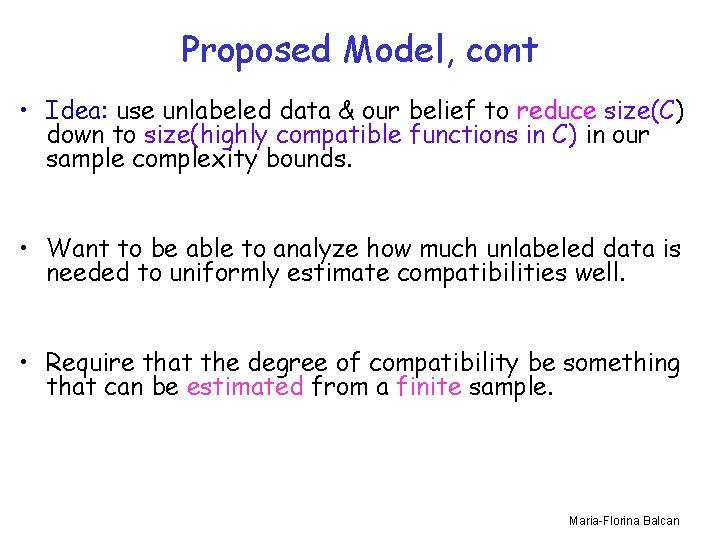

Proposed Model [BB 05, BB 06]
• Augment the notion of a concept class $C$ with a notion of compatibility $\chi$ between a concept and the data distribution.
• "Learn $C$" becomes "learn $(C, \chi)$" (i.e. learn class $C$ under compatibility notion $\chi$).
• Express relationships that one hopes the target function and underlying distribution will possess.
• Idea: use unlabeled data and the belief that the target is compatible to reduce $C$ down to just {the highly compatible functions in $C$}.

Proposed Model, cont.
• Idea: use unlabeled data and our belief to reduce size($C$) down to size(highly compatible functions in $C$) in our sample complexity bounds.
• Want to be able to analyze how much unlabeled data is needed to uniformly estimate compatibilities well.
• Require that the degree of compatibility be something that can be estimated from a finite sample.

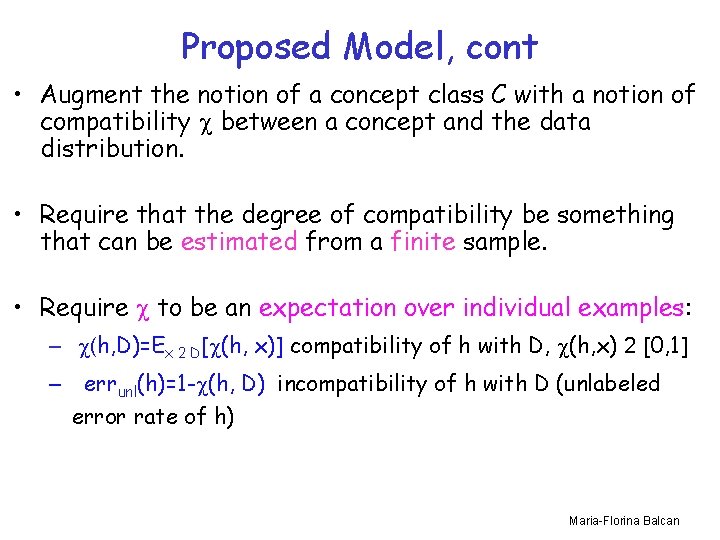

Proposed Model, cont.
• Augment the notion of a concept class $C$ with a notion of compatibility $\chi$ between a concept and the data distribution.
• Require that the degree of compatibility be something that can be estimated from a finite sample.
• Require $\chi$ to be an expectation over individual examples:
  – $\chi(h, D) = \mathbb{E}_{x \in D}[\chi(h, x)]$: compatibility of $h$ with $D$, where $\chi(h, x) \in [0, 1]$
  – $\mathrm{err}_{unl}(h) = 1 - \chi(h, D)$: incompatibility of $h$ with $D$ (the unlabeled error rate of $h$)
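
Because compatibility is an expectation over individual examples, it can be approximated by an average over an unlabeled sample. The following is a minimal sketch of that estimate; the function names and the toy compatibility notion are illustrative assumptions, not taken from the slides.

```python
from typing import Callable, Sequence


def estimate_compatibility(chi_h: Callable[[float], float],
                           unlabeled: Sequence[float]) -> float:
    """Empirical mean of chi(h, x) over an unlabeled sample.

    chi_h stands for chi(h, .) with the hypothesis h held fixed;
    its values are assumed to lie in [0, 1].
    """
    if len(unlabeled) == 0:
        raise ValueError("need at least one unlabeled example")
    return sum(chi_h(x) for x in unlabeled) / len(unlabeled)


def estimate_unlabeled_error(chi_h: Callable[[float], float],
                             unlabeled: Sequence[float]) -> float:
    """Empirical err_unl(h) = 1 - (estimated compatibility of h)."""
    return 1.0 - estimate_compatibility(chi_h, unlabeled)


# Hypothetical toy notion: x counts as compatible with h when |x| > 0.5.
def toy_chi(x: float) -> float:
    return 1.0 if abs(x) > 0.5 else 0.0


toy_sample = [-2.0, -1.0, 0.25, 1.0]
print(estimate_compatibility(toy_chi, toy_sample))
print(estimate_unlabeled_error(toy_chi, toy_sample))
```

How large the unlabeled sample must be for this estimate to be accurate uniformly over all of $C$ is exactly the question the sample complexity bounds address.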

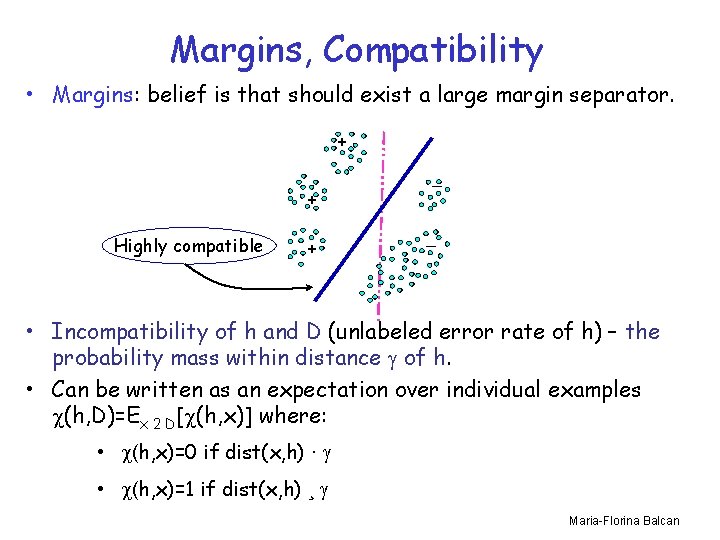

Margins, Compatibility
• Margins: the belief is that there should exist a large-margin separator.
[Figure: a highly compatible separator between + and − points]
• Incompatibility of $h$ and $D$ (unlabeled error rate of $h$): the probability mass within distance $\gamma$ of $h$.
• Can be written as an expectation over individual examples, $\chi(h, D) = \mathbb{E}_{x \in D}[\chi(h, x)]$, where:
  – $\chi(h, x) = 0$ if $\mathrm{dist}(x, h) \le \gamma$
  – $\chi(h, x) = 1$ if $\mathrm{dist}(x, h) > \gamma$
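
As a rough illustration of the margin-based notion, the sketch below computes the empirical unlabeled error rate of a linear separator $w \cdot x + b = 0$, i.e. the fraction of unlabeled points within distance $\gamma$ of it. The function names, the representation of $h$ as `(w, b)`, and the choice to count points at distance exactly $\gamma$ as inside the margin are assumptions made for this example.

```python
import math


def dist_to_hyperplane(w, b, x):
    """Euclidean distance from point x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return abs(sum(wi * xi for wi, xi in zip(w, x)) + b) / norm


def margin_chi(w, b, x, gamma):
    """chi(h, x): 0 if x is within distance gamma of the separator, else 1."""
    return 0.0 if dist_to_hyperplane(w, b, x) <= gamma else 1.0


def margin_unlabeled_error(w, b, unlabeled, gamma):
    """Empirical err_unl(h): fraction of unlabeled points inside the margin."""
    return sum(1.0 - margin_chi(w, b, x, gamma) for x in unlabeled) / len(unlabeled)


# Toy data in R^2 with the separator x_1 = 0 (w = (1, 0), b = 0).
toy_points = [(2.0, 0.0), (-3.0, 1.0), (0.5, 5.0), (-0.25, 0.0)]
print(margin_unlabeled_error((1.0, 0.0), 0.0, toy_points, gamma=1.0))
```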

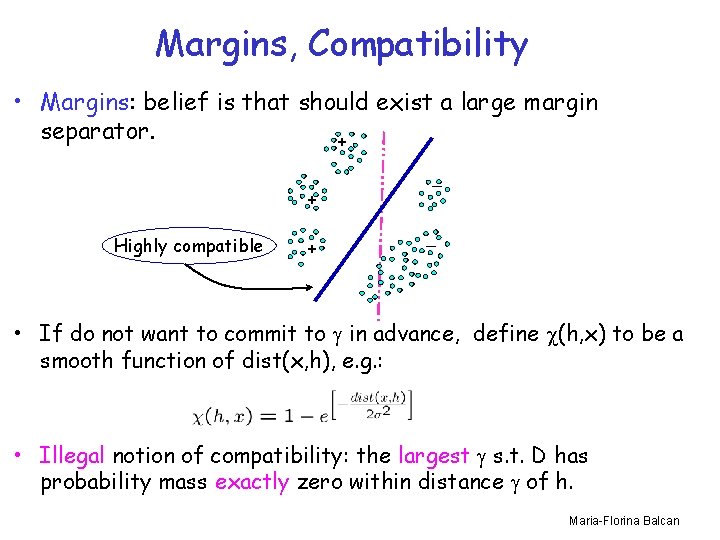

Margins, Compatibility
• Margins: the belief is that there should exist a large-margin separator.
[Figure: a highly compatible separator between + and − points]
• If we do not want to commit to $\gamma$ in advance, define $\chi(h, x)$ to be a smooth function of $\mathrm{dist}(x, h)$, e.g.: [formula shown as an image on the slide]
• Illegal notion of compatibility: the largest $\gamma$ such that $D$ has probability mass exactly zero within distance $\gamma$ of $h$ (this cannot be estimated from a finite sample).

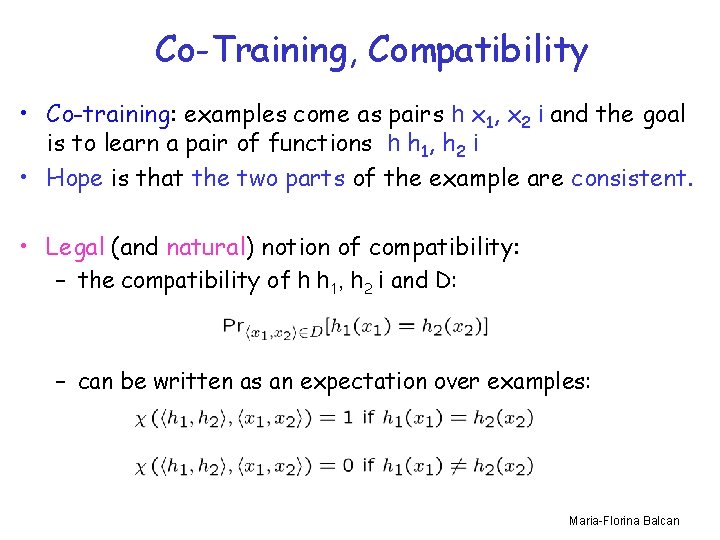

Co-Training, Compatibility
• Co-training: examples come as pairs $\langle x_1, x_2 \rangle$ and the goal is to learn a pair of functions $\langle h_1, h_2 \rangle$.
• The hope is that the two parts of the example are consistent.
• Legal (and natural) notion of compatibility:
  – the compatibility of $\langle h_1, h_2 \rangle$ and $D$: [formula shown as an image on the slide]
  – can be written as an expectation over examples: [formula shown as an image on the slide]
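
The slide's own formulas are not preserved in this transcript. One natural reading of "the two parts of the example are consistent" is to score a pair $\langle h_1, h_2 \rangle$ by how often the two functions agree on the two views of an example. The sketch below assumes that reading; the function names and toy hypotheses are hypothetical.

```python
def agreement_chi(h1, h2, example):
    """Assumed per-example compatibility: 1 if h1 and h2 agree on the two views."""
    x1, x2 = example
    return 1.0 if h1(x1) == h2(x2) else 0.0


def estimate_agreement(h1, h2, unlabeled_pairs):
    """Empirical compatibility: fraction of unlabeled pairs on which h1 and h2 agree."""
    return sum(agreement_chi(h1, h2, p) for p in unlabeled_pairs) / len(unlabeled_pairs)


# Toy views: each view is a single number and each hypothesis is a sign test.
def h1(x1):
    return x1 > 0


def h2(x2):
    return x2 > 0


toy_pairs = [(1, 2), (-1, -3), (2, -1), (-2, -2)]
print(estimate_agreement(h1, h2, toy_pairs))
```

No labels are needed to compute this quantity, which is what lets unlabeled data rule out pairs of functions that frequently disagree.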

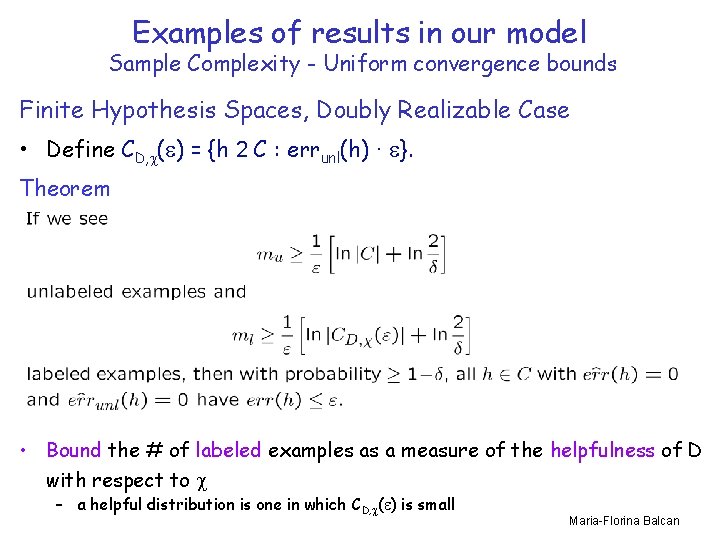

Examples of results in our model
Sample Complexity: Uniform convergence bounds
Finite Hypothesis Spaces, Doubly Realizable Case
• Define $C_{D,\chi}(\epsilon) = \{h \in C : \mathrm{err}_{unl}(h) \le \epsilon\}$.
• Theorem: [statement shown as an image on the slide]
• Bound the number of labeled examples as a measure of the helpfulness of $D$ with respect to $\chi$.
  – A helpful distribution is one in which $C_{D,\chi}(\epsilon)$ is small.
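
To make the set $C_{D,\chi}(\epsilon)$ concrete, here is a small sketch that filters a finite class of threshold functions on the line down to those with low empirical unlabeled error under a margin notion. The class, the data, and the parameter values are made up for illustration, and the empirical error is only a stand-in for the true $\mathrm{err}_{unl}$.

```python
import math


def unlabeled_error(t, points, gamma):
    """Fraction of unlabeled points within distance gamma of threshold t."""
    return sum(abs(x - t) <= gamma for x in points) / len(points)


def compatible_subset(hypotheses, points, gamma, eps):
    """Empirical stand-in for C_{D,chi}(eps): thresholds with err_unl <= eps."""
    return [t for t in hypotheses if unlabeled_error(t, points, gamma) <= eps]


# Hypothetical finite class: integer thresholds from -10 to 10.
hypotheses = list(range(-10, 11))
# Unlabeled data in two clusters with a wide gap around 0.
points = [-8, -7, -6, -5, 5, 6, 7, 8]

compatible = compatible_subset(hypotheses, points, gamma=2, eps=0.0)
print(compatible)
# ln|C| versus ln|C_{D,chi}(eps)|: the quantities compared in the bounds.
print(math.log(len(hypotheses)), math.log(len(compatible)))
```

On this toy data only the thresholds that fall in the gap between the clusters survive, so the class a labeled sample has to choose from is much smaller.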

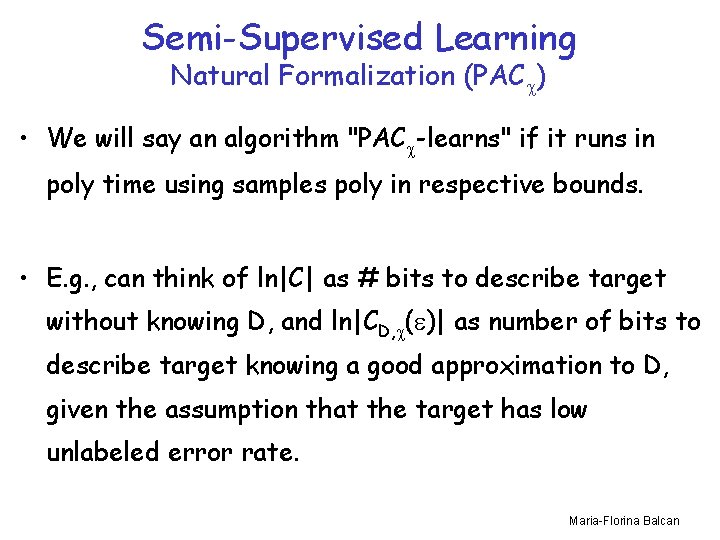

Semi-Supervised Learning: Natural Formalization (PAC$_\chi$)
• We will say an algorithm "PAC$_\chi$-learns" if it runs in polynomial time using a number of samples polynomial in the respective bounds.
• E.g., one can think of $\ln|C|$ as the number of bits needed to describe the target without knowing $D$, and $\ln|C_{D,\chi}(\epsilon)|$ as the number of bits needed to describe the target knowing a good approximation to $D$, given the assumption that the target has low unlabeled error rate.

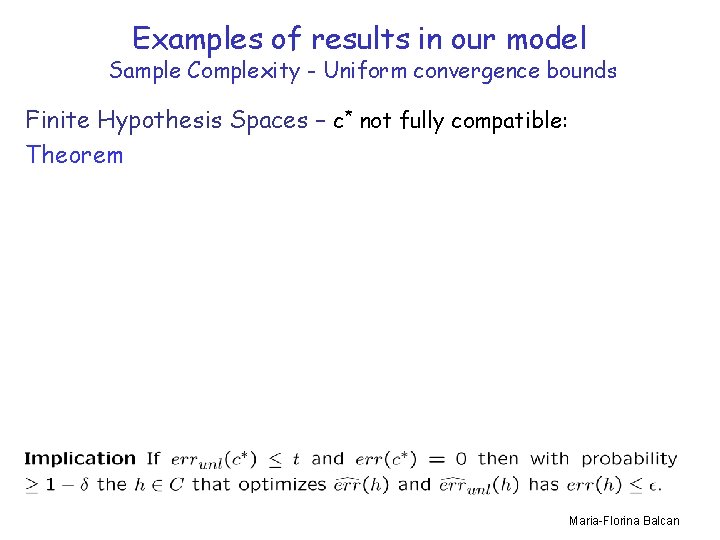

Examples of results in our model
Sample Complexity: Uniform convergence bounds
Finite Hypothesis Spaces, $c^*$ not fully compatible
• Theorem: [statement shown as an image on the slide]

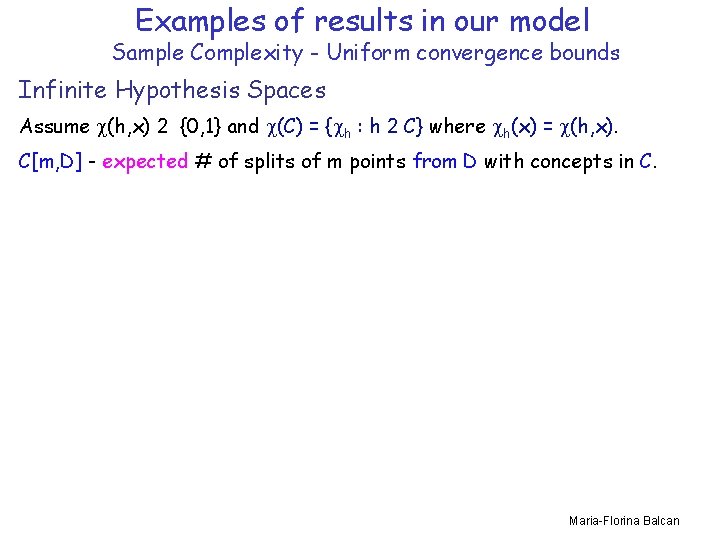

Examples of results in our model
Sample Complexity: Uniform convergence bounds
Infinite Hypothesis Spaces
• Assume $\chi(h, x) \in \{0, 1\}$ and $\chi(C) = \{\chi_h : h \in C\}$, where $\chi_h(x) = \chi(h, x)$.
• $C[m, D]$: the expected number of splits of $m$ points from $D$ with concepts in $C$.

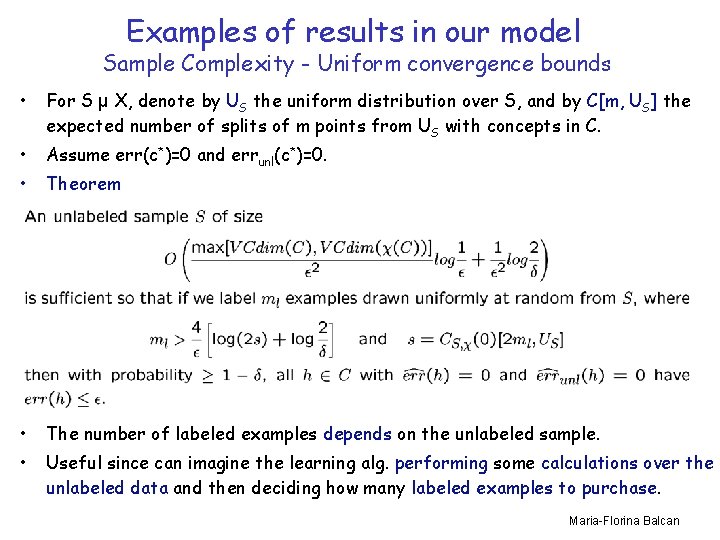

Examples of results in our model
Sample Complexity: Uniform convergence bounds
• For $S \subseteq X$, denote by $U_S$ the uniform distribution over $S$, and by $C[m, U_S]$ the expected number of splits of $m$ points from $U_S$ with concepts in $C$.
• Assume $\mathrm{err}(c^*) = 0$ and $\mathrm{err}_{unl}(c^*) = 0$.
• Theorem: [statement shown as an image on the slide]
• The number of labeled examples depends on the unlabeled sample.
• This is useful since one can imagine the learning algorithm performing some calculations over the unlabeled data and then deciding how many labeled examples to purchase.

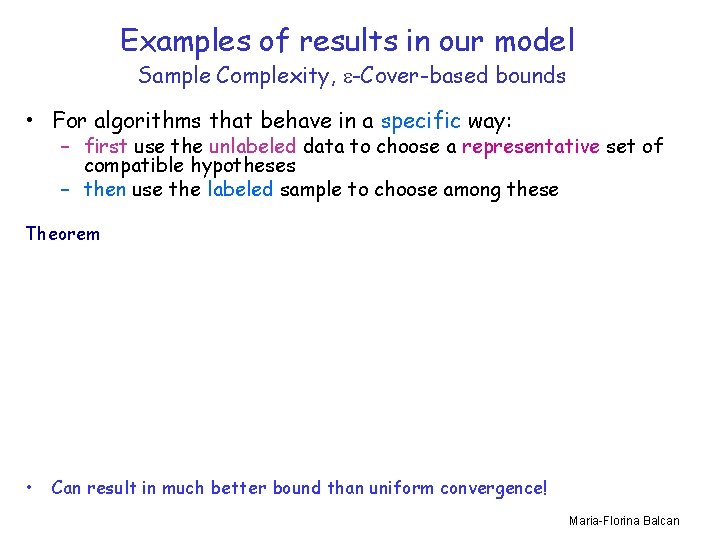

Examples of results in our model
Sample Complexity: $\epsilon$-cover-based bounds
• For algorithms that behave in a specific way:
  – first use the unlabeled data to choose a representative set of compatible hypotheses
  – then use the labeled sample to choose among these
• Theorem: [statement shown as an image on the slide]
• Can result in a much better bound than uniform convergence!
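
The two-stage behaviour described above can be sketched as follows. This is only an illustration under stated assumptions: the representative set is built greedily from empirical disagreement on the unlabeled sample, which is one simple way to obtain a cover and not necessarily the construction used in the theorem. All names and data are hypothetical.

```python
def disagreement(predict, h, g, unlabeled):
    """Fraction of unlabeled points on which hypotheses h and g differ."""
    return sum(predict(h, x) != predict(g, x) for x in unlabeled) / len(unlabeled)


def greedy_cover(predict, hypotheses, unlabeled, eps):
    """Pick representatives so every hypothesis is within eps of one of them."""
    cover = []
    for h in hypotheses:
        # Keep h only if no existing representative is already eps-close to it.
        if all(disagreement(predict, h, g, unlabeled) > eps for g in cover):
            cover.append(h)
    return cover


def pick_by_labeled_error(predict, cover, labeled):
    """Stage two: choose the representative with the fewest labeled mistakes."""
    return min(cover, key=lambda h: sum(predict(h, x) != y for x, y in labeled))


# Toy setup: threshold hypotheses on the line, h_t(x) = (x > t).
def predict(t, x):
    return x > t


compatible = [-2, -1, 0, 1, 2]           # assumed already filtered by compatibility
unlabeled = [-8, -7, -6, -5, 5, 6, 7, 8]
cover = greedy_cover(predict, compatible, unlabeled, eps=0.1)
best = pick_by_labeled_error(predict, cover, [(-6, False), (7, True)])
print(cover, best)
```

In this toy case all compatible thresholds label the unlabeled sample identically, so the cover collapses to a single representative and very few labels are needed to confirm it.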

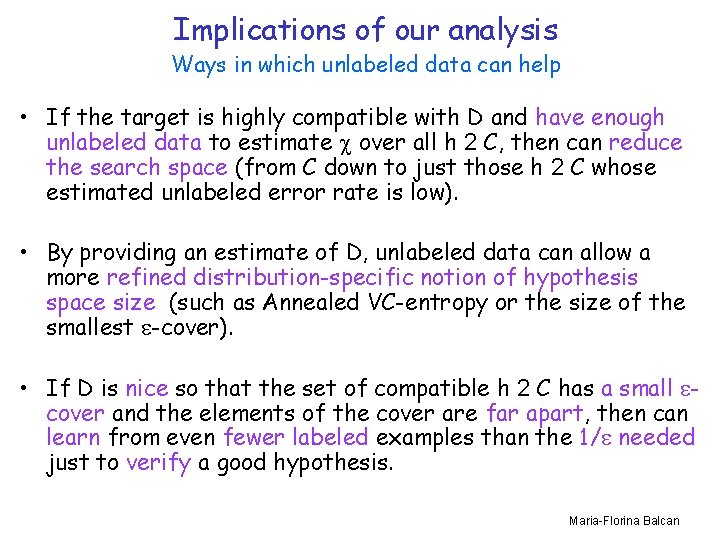

Implications of our analysis
Ways in which unlabeled data can help
• If the target is highly compatible with $D$ and we have enough unlabeled data to estimate $\chi$ over all $h \in C$, then we can reduce the search space (from $C$ down to just those $h \in C$ whose estimated unlabeled error rate is low).
• By providing an estimate of $D$, unlabeled data can allow a more refined distribution-specific notion of hypothesis space size (such as annealed VC-entropy or the size of the smallest $\epsilon$-cover).
• If $D$ is nice, so that the set of compatible $h \in C$ has a small $\epsilon$-cover and the elements of the cover are far apart, then we can learn from even fewer labeled examples than the $1/\epsilon$ needed just to verify a good hypothesis.

Modern Topics in Learning Theory
• Semi-Supervised Learning
• Active Learning
• Kernels and Similarity Functions
• Data Dependent Bounds

Active Learning
• Unlabeled data is cheap and easy to obtain; labeled data is (much) more expensive.
• The learner has the ability to choose specific examples to be labeled:
  – The learner works harder in order to use fewer labeled examples.

Membership queries
• The learner "constructs" the examples.
• [Baum and Lang, 1991] tried fitting a neural net to handwritten characters.
  – The synthetic instances created were incomprehensible to humans.

A PAC-like model [CAL 92]
• Underlying distribution $P$ on the $(x, y)$ data (agnostic setting).
• Learner has two abilities:
  – draw an unlabeled sample from the distribution
  – ask for a label of one of these samples
• Special case: assume the data is separable, i.e. some concept $h \in C$ labels all points perfectly (realizable setting).

Can adaptive querying help? [CAL 92, D 04]
• Consider threshold functions on the real line.
[Figure: the real line labeled − to the left of the threshold $w$ and + to the right]
• Start with $1/\epsilon$ unlabeled points.
• Binary search: need just $\log(1/\epsilon)$ labels, from which the rest can be inferred.
• Output a consistent hypothesis.
• Exponential improvement in sample complexity.
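
The binary-search idea for thresholds can be sketched as below, assuming the realizable case (some threshold labels every point correctly) and the convention that points at or above the threshold are positive. The function name `learn_threshold` and the label oracle are illustrative, not from the slides.

```python
import math


def learn_threshold(points, query_label):
    """Find a threshold consistent with all points using few label queries.

    query_label(x) returns True for positive points. Returns (w, queries),
    where the learned hypothesis is "x >= w is positive".
    """
    xs = sorted(points)
    lo, hi = 0, len(xs)  # invariant: xs[:lo] are negative, xs[hi:] are positive
    queries = 0
    while lo < hi:
        mid = (lo + hi) // 2
        queries += 1
        if query_label(xs[mid]):
            hi = mid        # xs[mid] and everything to its right is positive
        else:
            lo = mid + 1    # xs[mid] and everything to its left is negative
    # lo is now the index of the first positive point (or len(xs) if none).
    w = xs[lo] if lo < len(xs) else math.inf
    return w, queries


# 1000 unlabeled points play the role of 1/eps with eps = 0.001.
points = [i / 1000 for i in range(1000)]
w, queries = learn_threshold(points, lambda x: x >= 0.3705)
print(w, queries)
```

Each query halves the range of candidate positions, so the number of labels grows logarithmically in the number of unlabeled points, while the labels of all remaining points are inferred.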

Region of uncertainty [CAL 92]
• Current version space: the part of $C$ consistent with the labels so far.
• "Region of uncertainty": the part of the data space about which there is still some uncertainty (i.e. disagreement within the version space).
• Example: data lies on a circle in $\mathbb{R}^2$ and hypotheses are linear separators.
[Figure: the current version space and the region of uncertainty in data space]

Region of uncertainty [CAL 92]
• Algorithm: of the unlabeled points which lie in the region of uncertainty, pick one at random to query.
[Figure: the current version space and the region of uncertainty in data space]
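
A minimal pool-based sketch of this querying rule, assuming a finite hypothesis class and the realizable setting so that the version space can be stored explicitly. The function name, the explicit list representation, and the toy threshold class are assumptions for illustration; practical versions do not enumerate the version space.

```python
import random


def region_of_uncertainty_learner(hypotheses, predict, pool, oracle, rng):
    """Query random points from the region of uncertainty until none remain.

    Returns (version_space, number_of_label_queries).
    """
    version_space = list(hypotheses)
    queries = 0
    while True:
        # Region of uncertainty: pool points on which the version space disagrees.
        uncertain = [x for x in pool
                     if len({predict(h, x) for h in version_space}) > 1]
        if not uncertain:
            return version_space, queries
        x = rng.choice(uncertain)
        y = oracle(x)
        queries += 1
        # Keep only hypotheses consistent with the newly revealed label.
        version_space = [h for h in version_space if predict(h, x) == y]


# Toy class: thresholds t in 0..100 with h_t(x) = (x >= t); the target is t = 42.
def predict(t, x):
    return x >= t


pool = list(range(100))
version_space, queries = region_of_uncertainty_learner(
    range(101), predict, pool, lambda x: x >= 42, random.Random(0))
print(version_space, queries)
```

Every query removes at least one hypothesis, because the queried point was chosen where the version space disagrees, so the loop terminates with a version space that agrees on the whole pool.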

Region of uncertainty [CAL 92]
• Number of labels needed depends on $C$ and also on $P$.
• Example: $C$ = linear separators in $\mathbb{R}^d$, $D$ = uniform distribution over the unit sphere.
  – Need only $d^{3/2} \log(1/\epsilon)$ labels to find a hypothesis with error rate $< \epsilon$.
  – Supervised learning: $d/\epsilon$ labels.
• Exponential improvement in sample complexity.
• For a robust version of CAL'92 see BBL'06.
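
To get a feel for the gap between the two label counts, the short sketch below evaluates both expressions for a fixed dimension. It ignores constants and lower-order factors and assumes the natural logarithm, so the numbers are only indicative of the growth rates, not actual label requirements.

```python
import math


def active_label_bound(d, eps):
    """d^(3/2) * log(1/eps), with constants ignored (natural log assumed)."""
    return d ** 1.5 * math.log(1.0 / eps)


def passive_label_bound(d, eps):
    """d / eps, with constants ignored."""
    return d / eps


# Compare the two expressions as the target error rate shrinks, for d = 10.
for eps in (0.1, 0.01, 0.001):
    print(eps, round(active_label_bound(10, eps)), round(passive_label_bound(10, eps)))
```

As $\epsilon$ shrinks, the supervised expression grows like $1/\epsilon$ while the active one grows only like $\log(1/\epsilon)$, which is the sense in which the improvement is exponential.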
