New Theoretical Frameworks for Machine Learning, Maria-Florina Balcan


Thanks to My Committee: Avrim Blum, Yishay Mansour, Manuel Blum, Tom Mitchell, Santosh Vempala

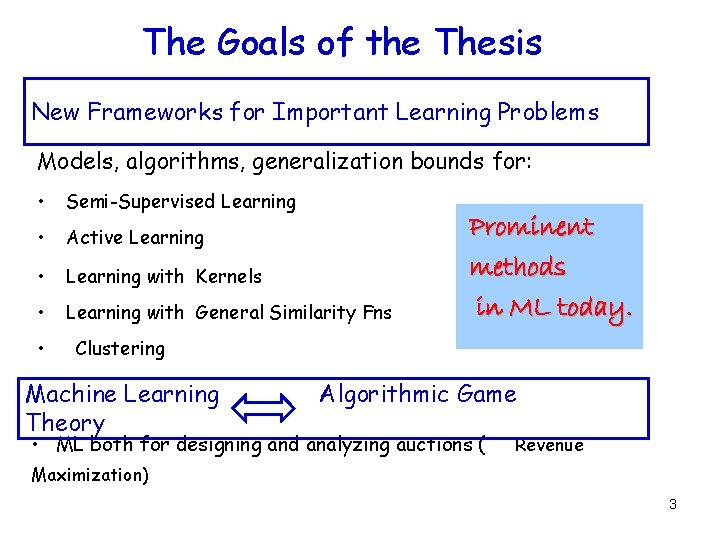

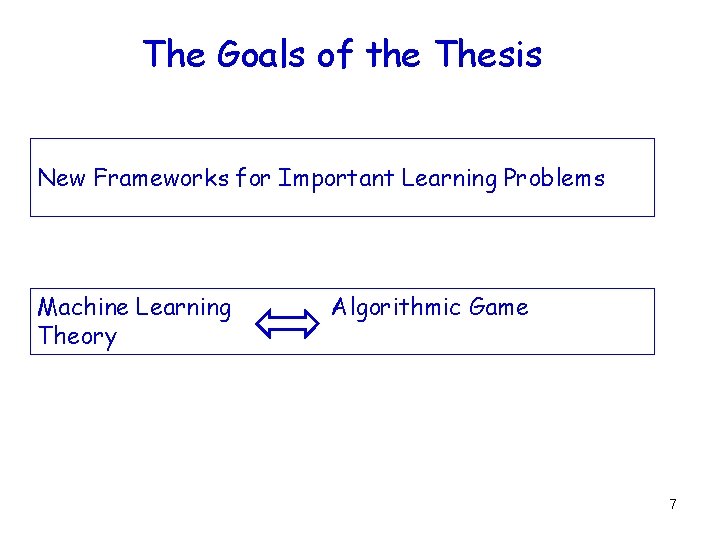

The Goals of the Thesis

New Frameworks for Important Learning Problems (Machine Learning Theory). Models, algorithms, and generalization bounds for:
- Semi-Supervised Learning
- Active Learning
- Learning with Kernels
- Learning with General Similarity Functions
- Clustering

These are prominent methods in ML today.

Algorithmic Game Theory:
- ML both for designing and analyzing auctions (revenue maximization)

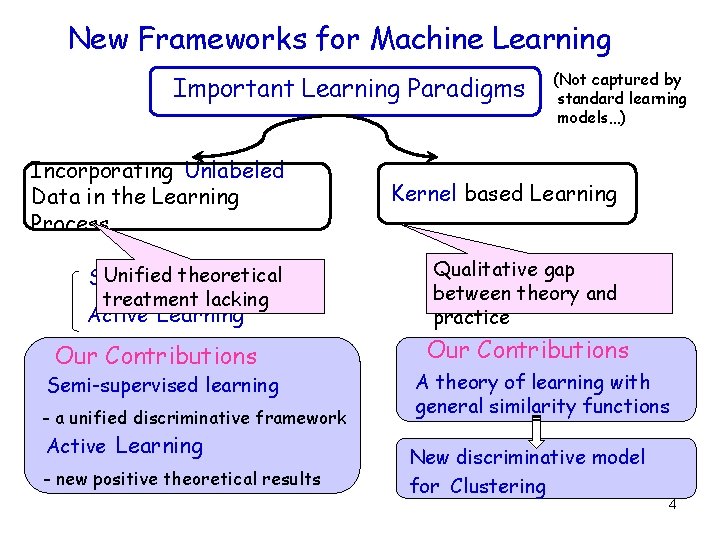

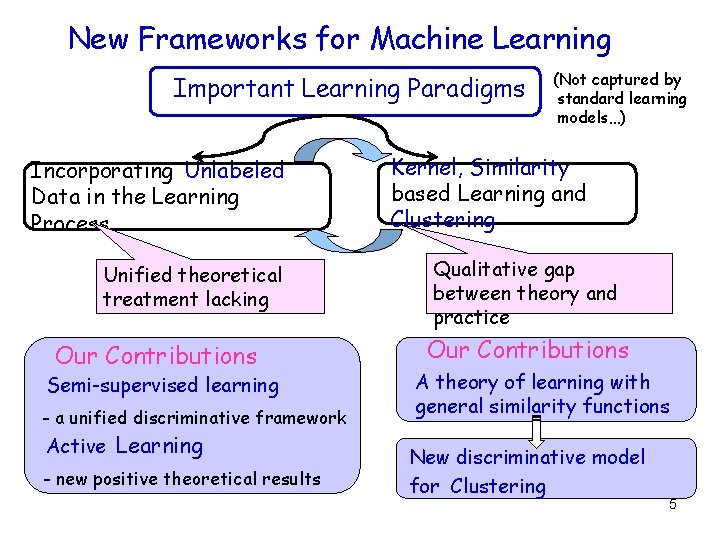

New Frameworks for Machine Learning: Important Learning Paradigms

Incorporating Unlabeled Data in the Learning Process (Semi-supervised Learning, Active Learning)
- Unified theoretical treatment lacking.
- Our contributions:
  - Semi-supervised learning: a unified discriminative framework
  - Active learning: new positive theoretical results (not captured by standard learning models…)

Kernel based Learning
- Qualitative gap between theory and practice.
- Our contributions:
  - A theory of learning with general similarity functions
  - New discriminative model for clustering

New Frameworks for Machine Learning: Important Learning Paradigms

Incorporating Unlabeled Data in the Learning Process
- Unified theoretical treatment lacking.
- Our contributions:
  - Semi-supervised learning: a unified discriminative framework
  - Active learning: new positive theoretical results (not captured by standard learning models…)

Kernel, Similarity based Learning and Clustering
- Qualitative gap between theory and practice.
- Our contributions:
  - A theory of learning with general similarity functions
  - New discriminative model for clustering

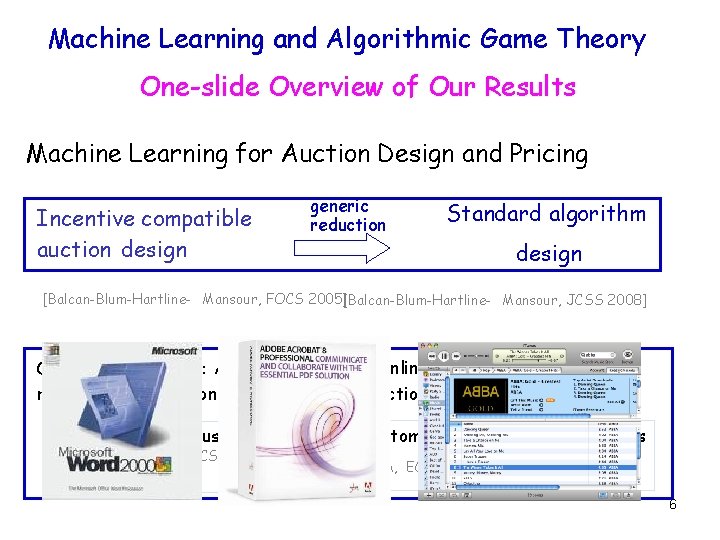

Machine Learning and Algorithmic Game Theory: One-slide Overview of Our Results

Machine Learning for Auction Design and Pricing
- Generic reduction: incentive compatible auction design → standard algorithm design [Balcan-Blum-Hartline-Mansour, FOCS 2005] [Balcan-Blum-Hartline-Mansour, JCSS 2008]

Other related work: Approximation and Online Algorithms
- Pricing for revenue maximization in combinatorial auctions
  - Single-minded customers [BB, EC 2006] [BB, TCS 2007] [BBCH, WINE 2007]
  - Customers with general valuations [BBM, EC 2008]

The Goals of the Thesis: New Frameworks for Important Learning Problems (Machine Learning Theory, Algorithmic Game Theory)

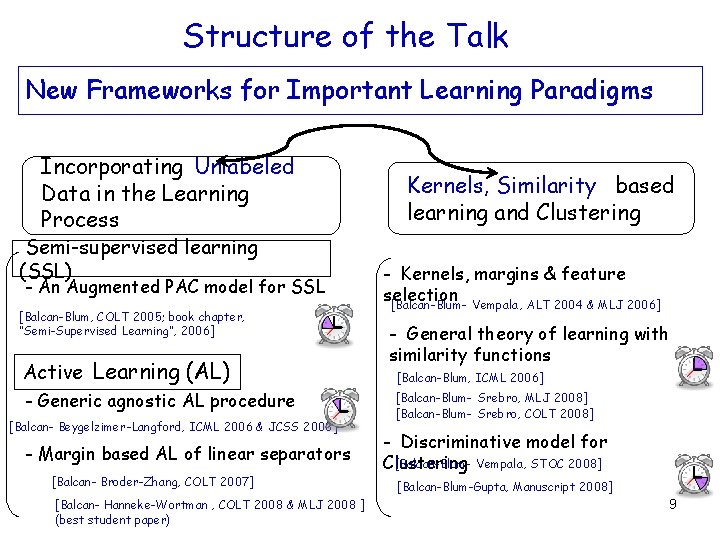

Structure of the Talk

New Frameworks for Important Learning Paradigms

Incorporating Unlabeled Data in the Learning Process
- Semi-supervised learning (SSL)
  - An Augmented PAC model for SSL [Balcan-Blum, COLT 2005; book chapter, “Semi-Supervised Learning”, 2006]
- Active Learning (AL)
  - Generic agnostic AL procedure [Balcan-Beygelzimer-Langford, ICML 2006 & JCSS 2008]
  - Margin based AL of linear separators [Balcan-Broder-Zhang, COLT 2007]
  - [Balcan-Hanneke-Wortman, COLT 2008 & MLJ 2008] (best student paper)

Kernels, Similarity based learning and Clustering
- Kernels, margins & feature selection [Balcan-Blum-Vempala, ALT 2004 & MLJ 2006]
- General theory of learning with similarity functions [Balcan-Blum, ICML 2006] [Balcan-Blum-Srebro, MLJ 2008] [Balcan-Blum-Srebro, COLT 2008]
- Discriminative model for Clustering [Balcan-Blum-Vempala, STOC 2008] [Balcan-Blum-Gupta, Manuscript 2008]

Part I, Incorporating Unlabeled Data in the Learning Process. Semi-Supervised Learning: a general discriminative framework [Balcan-Blum, COLT 2005; book chapter, “Semi-Supervised Learning”, 2006]

Standard Supervised Learning

- $X$: instance/feature space
- $S=\{(x, l)\}$: set of labeled examples, drawn i.i.d. from a distribution $D$ over $X$ and labeled by some target concept $c^*$
- Labels $\in \{-1, 1\}$: binary classification
- Do optimization over $S$, find a hypothesis $h \in C$.
- Goal: $h$ has small error over $D$, where $err(h)=\Pr_{x \sim D}(h(x) \neq c^*(x))$.
- $c^* \in C$: realizable case; $c^* \notin C$: agnostic case.

Classic models for learning from labeled data:
- Statistical Learning Theory (Vapnik)
- PAC (Valiant)

Standard Supervised Learning: Sample Complexity

- E.g., finite hypothesis spaces, realizable case.
- In PAC, can also talk about efficient algorithms.
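For the finite, realizable case, the standard textbook PAC bound says that $m \ge \frac{1}{\epsilon}\left(\ln|C| + \ln\frac{1}{\delta}\right)$ labeled examples suffice for every hypothesis in $C$ consistent with the sample to have error at most $\epsilon$, with probability at least $1-\delta$. The following is a minimal sketch that evaluates this bound; the function name and argument names are illustrative and do not come from the slides.

```python
import math


def pac_sample_bound(hypothesis_count: int, epsilon: float, delta: float) -> int:
    """Number of labeled examples sufficient in the finite, realizable PAC setting.

    Evaluates m >= (1/epsilon) * (ln|C| + ln(1/delta)), rounded up.
    """
    if hypothesis_count < 1:
        raise ValueError("hypothesis_count must be at least 1")
    if not (0.0 < epsilon < 1.0) or not (0.0 < delta < 1.0):
        raise ValueError("epsilon and delta must lie strictly between 0 and 1")
    return math.ceil((math.log(hypothesis_count) + math.log(1.0 / delta)) / epsilon)


# Example: |C| = 1000 hypotheses, error 0.1, confidence 0.95.
m = pac_sample_bound(1000, 0.1, 0.05)
```

The bound grows only logarithmically in $|C|$ and $1/\delta$ but linearly in $1/\epsilon$, which is why shrinking the effective hypothesis class (as the semi-supervised framework does) can reduce the labeled sample size.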

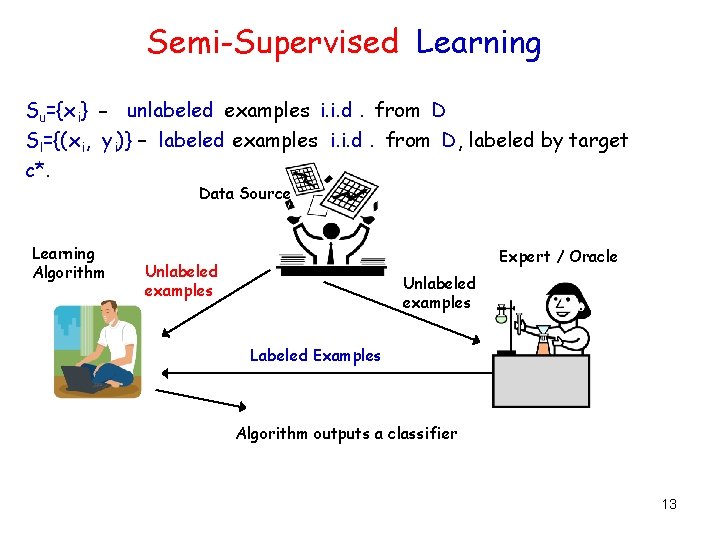

Semi-Supervised Learning

- $S_u=\{x_i\}$: unlabeled examples drawn i.i.d. from $D$
- $S_l=\{(x_i, y_i)\}$: labeled examples drawn i.i.d. from $D$ and labeled by the target $c^*$

Diagram: the data source sends unlabeled examples to the learning algorithm, an expert/oracle supplies labeled examples, and the algorithm outputs a classifier.

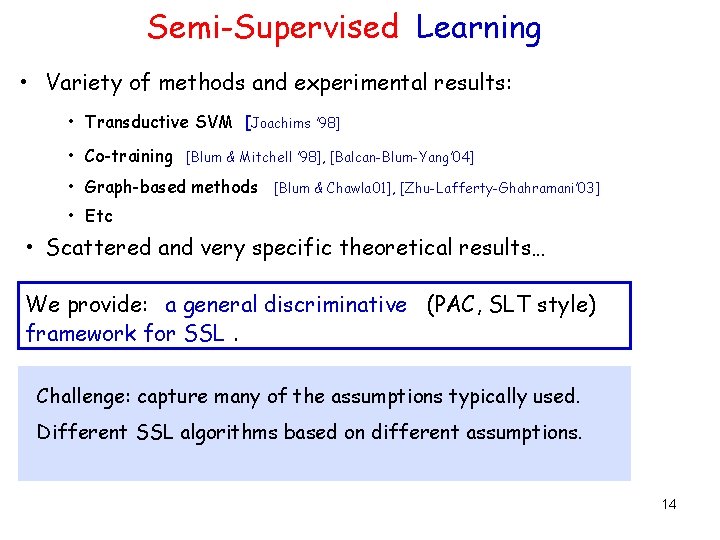

Semi-Supervised Learning

- Variety of methods and experimental results:
  - Transductive SVM [Joachims '98]
  - Co-training [Blum & Mitchell '98], [Balcan-Blum-Yang '04]
  - Graph-based methods [Blum & Chawla '01], [Zhu-Lafferty-Ghahramani '03]
  - Etc.
- Scattered and very specific theoretical results…

We provide: a general discriminative (PAC, SLT style) framework for SSL.

Challenge: capture many of the assumptions typically used. Different SSL algorithms are based on different assumptions.

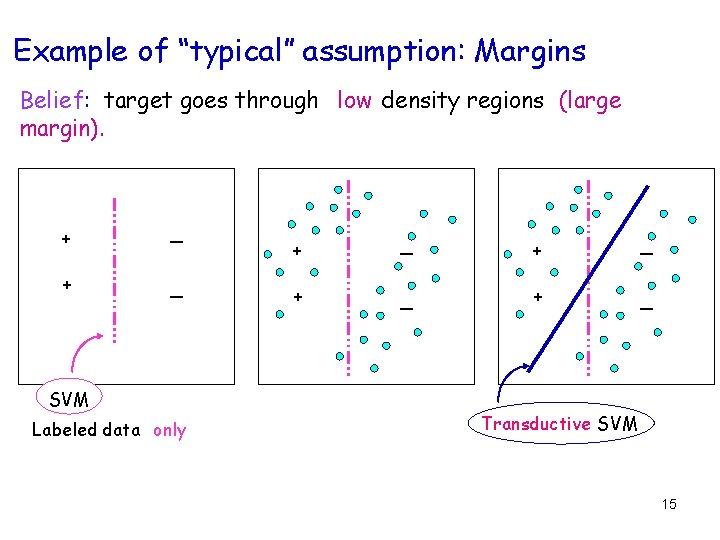

Example of “typical” assumption: Margins

Belief: the target goes through low density regions (large margin).

Figure: SVM on labeled data only versus Transductive SVM.

![Another Example: Self-consistency](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-15.jpg)

Another Example: Self-consistency

Agreement between two parts: co-training [Blum-Mitchell 98].
- Examples contain two sufficient sets of features, $x = \langle x_1, x_2 \rangle$.
- Belief: the parts are consistent, i.e. $\exists\, c_1, c_2$ s.t. $c_1(x_1)=c_2(x_2)=c^*(x)$.

For example, if we want to classify web pages, $x = \langle x_1, x_2 \rangle$ combines link info and text info: $x_1$ is the text info (e.g. "Prof. Avrim Blum") and $x_2$ is the link info (e.g. "My Advisor").

![New discriminative model for SSL [BB 05]](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-16.jpg)

New discriminative model for SSL [BB 05]

Problems with thinking about SSL in standard models:
- PAC or SLT: learn a class $C$ under a (known or unknown) distribution $D$.
- Unlabeled data doesn’t give any info about which $c \in C$ is the target.

Key insight: unlabeled data is useful if we have beliefs not only about the form of the target, but also about its relationship with the underlying distribution.

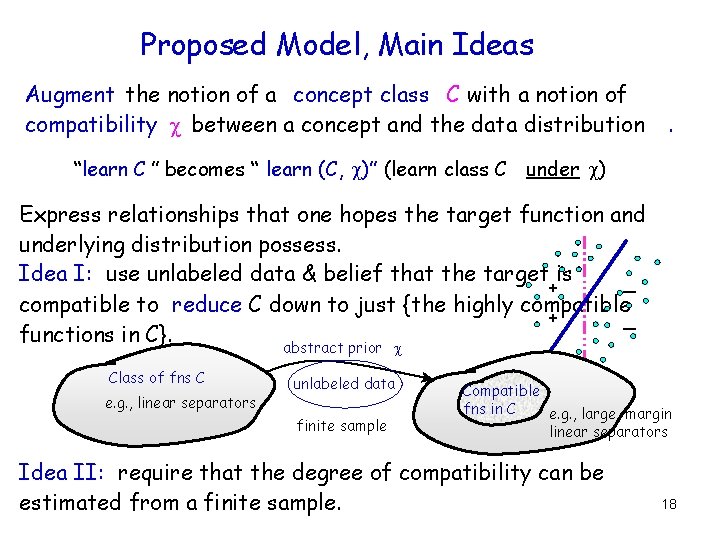

Proposed Model, Main Ideas

Augment the notion of a concept class $C$ with a notion of compatibility $\chi$ between a concept and the data distribution.
- “Learn $C$” becomes “learn $(C, \chi)$” (learn class $C$ under $\chi$).
- Express relationships that one hopes the target function and underlying distribution possess.

Idea I: use unlabeled data and the belief that the target is compatible to reduce $C$ down to just the highly compatible functions in $C$. The abstract prior is a class of functions $C$ (e.g., linear separators); after seeing unlabeled data, what remains is the compatible functions in $C$ (e.g., large margin linear separators).

Idea II: require that the degree of compatibility can be estimated from a finite sample.
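The two ideas above can be sketched on a toy class. This is only an illustration under stated assumptions: the hypothesis class is a finite grid of thresholds on the real line, and the compatibility notion is a margin one (a threshold is incompatible to the extent that unlabeled points fall within `margin` of it). The function names, the grid, and the tolerance `max_incompatibility` are hypothetical and not taken from the thesis.

```python
def unlabeled_incompatibility(threshold, unlabeled, margin):
    """Empirical incompatibility: fraction of unlabeled points within `margin` of the threshold.

    Idea II: this is estimated from a finite unlabeled sample.
    """
    close = sum(1 for x in unlabeled if abs(x - threshold) < margin)
    return close / len(unlabeled)


def prune_by_compatibility(thresholds, unlabeled, margin, max_incompatibility):
    """Idea I: keep only the hypotheses that look highly compatible with the unlabeled data."""
    return [
        t for t in thresholds
        if unlabeled_incompatibility(t, unlabeled, margin) <= max_incompatibility
    ]


# Two well-separated groups of unlabeled points leave only thresholds in the gap.
unlabeled_points = [0.1, 0.15, 0.2, 0.8, 0.85, 0.9]
candidate_thresholds = [i / 10 for i in range(11)]
survivors = prune_by_compatibility(candidate_thresholds, unlabeled_points, 0.15, 0.0)
```

Labeled examples are then needed only to choose among the survivors, which is a much smaller class than the original grid.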

![Types of Results in the [BB 05] Model](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-18.jpg)

Types of Results in the [BB 05] Model

Fundamental sample complexity issues:
- How much unlabeled data we need: depends on both the complexity of $C$ and of the compatibility notion.
- Ability of unlabeled data to reduce the number of labeled examples: depends on the compatibility of the target and on (various) measures of the helpfulness of the distribution.
- Cover bounds are much better than uniform convergence bounds.

Main poly-time algorithmic result: an improved algorithm for co-training of linear separators (improves over BM '98 substantially).

Subsequent work used our framework: P. Bartlett, D. Rosenberg, AISTATS 2007; J. Shawe-Taylor et al., Neurocomputing 2007; Kakade et al., COLT 2008.

Part II, Incorporating Unlabeled Data in the Learning Process. Active Learning: brief overview of the results

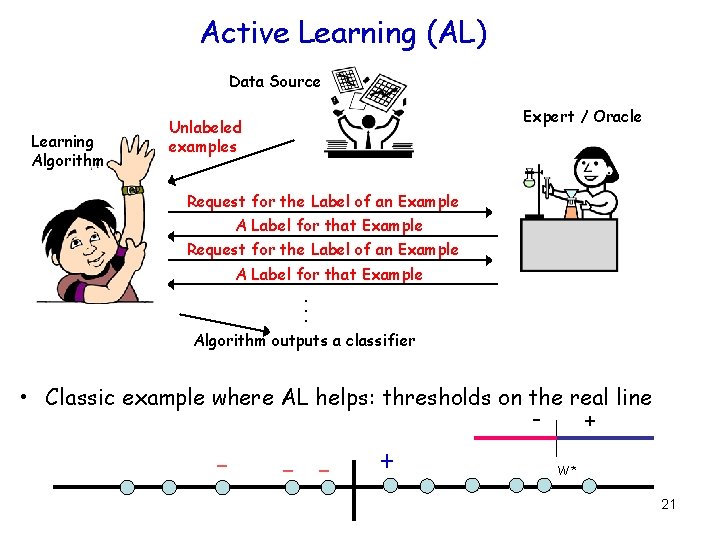

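Active Learning (AL)

Diagram: the data source sends unlabeled examples to the learning algorithm; the algorithm sends the expert/oracle a request for the label of an example and receives a label for that example; this repeats, and the algorithm outputs a classifier.

- Classic example where AL helps: thresholds on the real line (negatives to the left of the target threshold $w^*$, positives to the right).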
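The threshold example can be made concrete with a binary search over a sorted pool of unlabeled points. This is a minimal sketch that assumes the noise-free (realizable) case, where every point left of the target threshold is labeled -1 and every point at or right of it is labeled +1; `query_label` is a hypothetical stand-in for the expert/oracle.

```python
def active_learn_threshold(points, query_label):
    """Find the -/+ boundary in a pool of unlabeled points by binary search.

    Returns (threshold, queries): the smallest pool point labeled +1
    (or float('inf') if there is none) and the number of labels requested.
    """
    pool = sorted(points)
    lo, hi = 0, len(pool)  # invariant: pool[:lo] are -1, pool[hi:] are +1
    queries = 0
    while lo < hi:
        mid = (lo + hi) // 2
        queries += 1
        if query_label(pool[mid]) == 1:
            hi = mid       # boundary is at mid or to its left
        else:
            lo = mid + 1   # boundary is strictly to the right of mid
    threshold = pool[lo] if lo < len(pool) else float("inf")
    return threshold, queries


# 1000 unlabeled points; the oracle hides a target threshold of 0.3714.
unlabeled_pool = [i / 1000 for i in range(1000)]
oracle = lambda x: 1 if x >= 0.3714 else -1
learned_threshold, labels_used = active_learn_threshold(unlabeled_pool, oracle)
```

A passive learner would need labels for on the order of $1/\epsilon$ random points to locate the threshold to accuracy $\epsilon$, while the search above uses about $\log_2$ of the pool size, which is the exponential improvement the slide refers to.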

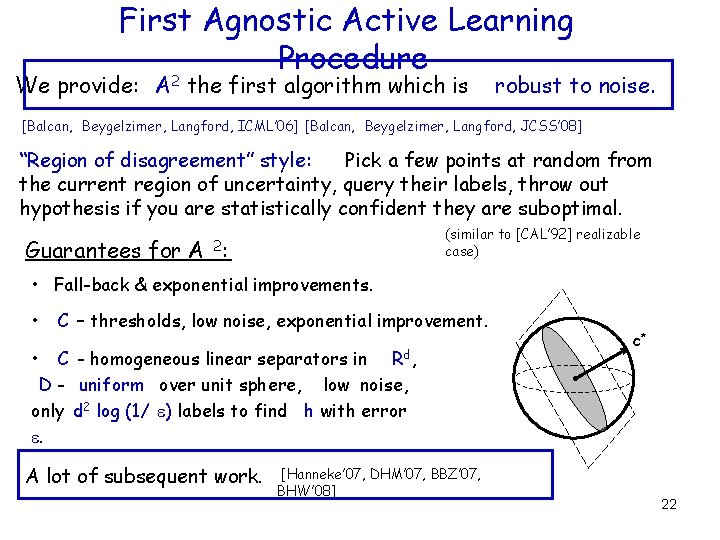

First Agnostic Active Learning Procedure

We provide $A^2$, the first algorithm which is robust to noise. [Balcan, Beygelzimer, Langford, ICML '06] [Balcan, Beygelzimer, Langford, JCSS '08]

“Region of disagreement” style: pick a few points at random from the current region of uncertainty, query their labels, and throw out hypotheses if you are statistically confident they are suboptimal.

Guarantees for $A^2$ (similar to [CAL '92] in the realizable case):
- Fall-back & exponential improvements.
- $C$: thresholds, low noise: exponential improvement.
- $C$: homogeneous linear separators in $R^d$, $D$ uniform over the unit sphere, low noise: only $d^2 \log(1/\epsilon)$ labels to find $h$ with error $\epsilon$.

A lot of subsequent work. [Hanneke '07, DHM '07, BBZ '07, BHW '08]

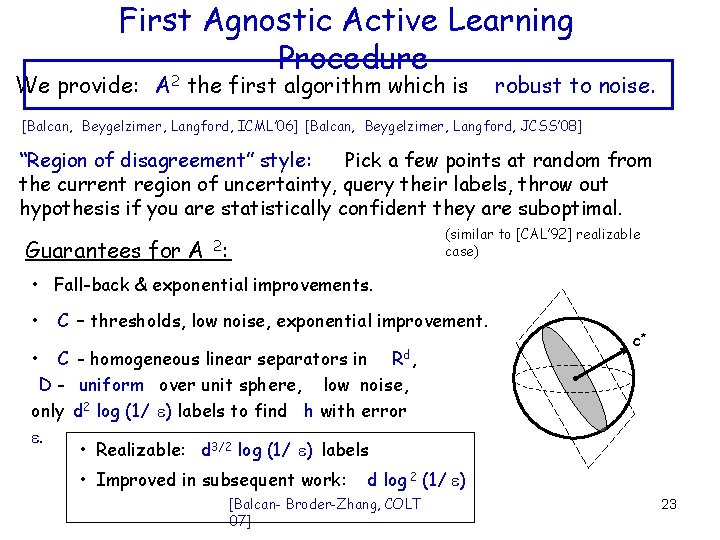

First Agnostic Active Learning Procedure

We provide $A^2$, the first algorithm which is robust to noise. [Balcan, Beygelzimer, Langford, ICML '06] [Balcan, Beygelzimer, Langford, JCSS '08]

“Region of disagreement” style: pick a few points at random from the current region of uncertainty, query their labels, and throw out hypotheses if you are statistically confident they are suboptimal.

Guarantees for $A^2$ (similar to [CAL '92] in the realizable case):
- Fall-back & exponential improvements.
- $C$: thresholds, low noise: exponential improvement.
- $C$: homogeneous linear separators in $R^d$, $D$ uniform over the unit sphere, low noise: only $d^2 \log(1/\epsilon)$ labels to find $h$ with error $\epsilon$.
- Realizable: $d^{3/2} \log(1/\epsilon)$ labels.
- Improved in subsequent work: $d \log^2(1/\epsilon)$ [Balcan-Broder-Zhang, COLT 07]

![Part III, Learning with Kernels and More General Similarity Functions](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-23.jpg)

Part III, Learning with Kernels and More General Similarity Functions [Balcan-Blum, ICML 2006] [Balcan-Blum-Srebro, MLJ 2008] [Balcan-Blum-Srebro, COLT 2008]

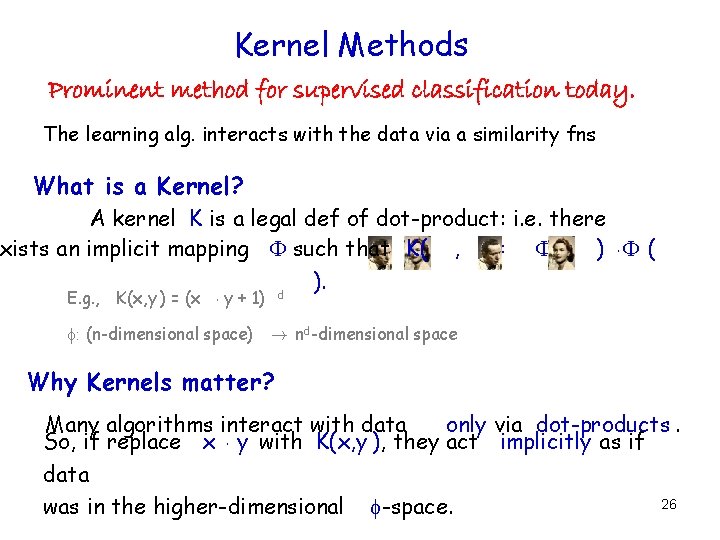

Kernel Methods

Prominent method for supervised classification today. The learning algorithm interacts with the data via a similarity function.

What is a kernel? A kernel $K$ is a legal definition of dot-product: i.e., there exists an implicit mapping $\phi$ such that $K(x, y)=\phi(x) \cdot \phi(y)$.

E.g., $K(x, y) = (x \cdot y + 1)^d$, with $\phi$: ($n$-dimensional space) → ($n^d$-dimensional space).

Why do kernels matter? Many algorithms interact with data only via dot-products. So, if we replace $x \cdot y$ with $K(x, y)$, they act implicitly as if the data was in the higher-dimensional $\phi$-space.

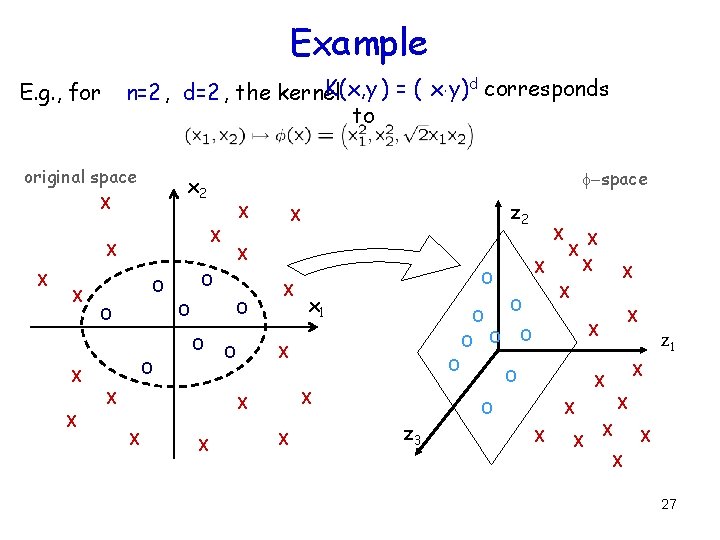

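Example

E.g., for $n=2$, $d=2$, the kernel $K(x, y) = (x \cdot y)^d$ corresponds to a mapping from the original space (axes $x_1$, $x_2$) to a three-dimensional $\phi$-space (axes $z_1$, $z_2$, $z_3$).

Figure: X and O points that are not linearly separable in the original space become linearly separable in the $\phi$-space.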
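The correspondence for $n=2$, $d=2$ can be checked numerically. The explicit map used here, $\phi(x) = (x_1^2,\; x_2^2,\; \sqrt{2}\,x_1 x_2)$, is the standard textbook choice for this kernel and is an assumption about what the slide's figure showed; the function names are illustrative.

```python
import math


def poly_kernel(x, y, d=2):
    """Homogeneous polynomial kernel K(x, y) = (x . y)^d."""
    return sum(a * b for a, b in zip(x, y)) ** d


def phi(x):
    """Explicit feature map for n = 2, d = 2 (assumed form, see lead-in)."""
    x1, x2 = x
    return (x1 * x1, x2 * x2, math.sqrt(2.0) * x1 * x2)


def dot(u, v):
    """Ordinary dot product in the phi-space."""
    return sum(a * b for a, b in zip(u, v))


x, y = (1.0, 2.0), (3.0, -1.0)
kernel_value = poly_kernel(x, y)        # computed in the original 2-D space
explicit_value = dot(phi(x), phi(y))    # computed in the 3-D phi-space
```

Both quantities agree up to floating-point rounding, so an algorithm that only uses dot products never needs to build $\phi(x)$ explicitly.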

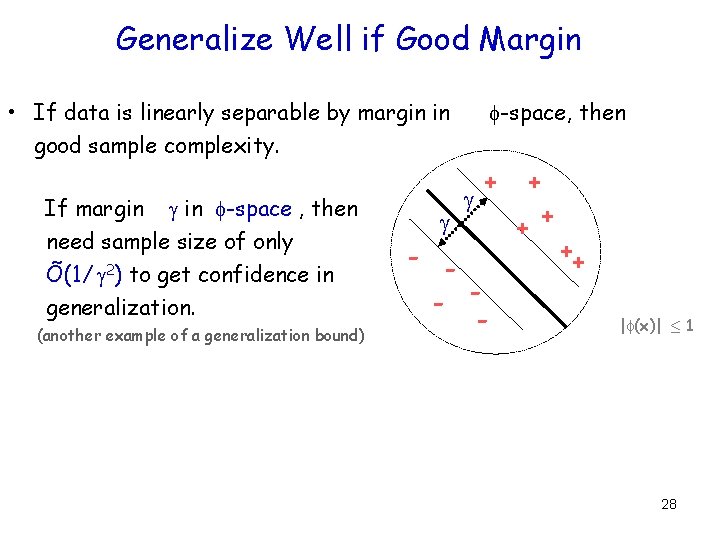

Generalize Well if Good Margin

- If data is linearly separable by margin $\gamma$ in $\phi$-space, then we get good sample complexity.
- If margin $\gamma$ in $\phi$-space (with $|\phi(x)| \le 1$), then need sample size of only $\tilde{O}(1/\gamma^2)$ to get confidence in generalization. (This is another example of a generalization bound.)

Limitations of the Current Theory

- In practice: kernels are constructed by viewing them as measures of similarity.
- Existing theory: in terms of margins in implicit spaces. Difficult to think about, not great for intuition.
- Kernel requirement rules out many natural similarity functions.

Better theoretical explanation?

Better Theoretical Framework

- In practice: kernels are constructed by viewing them as measures of similarity.
- Existing theory: in terms of margins in implicit spaces. Difficult to think about, not great for intuition.
- Kernel requirement rules out natural similarity functions.

Better theoretical explanation? Yes! We provide a more general and intuitive theory that formalizes the intuition that a good kernel is a good measure of similarity. [Balcan-Blum, ICML 2006] [Balcan-Blum-Srebro, MLJ 2008] [Balcan-Blum-Srebro, COLT 2008]

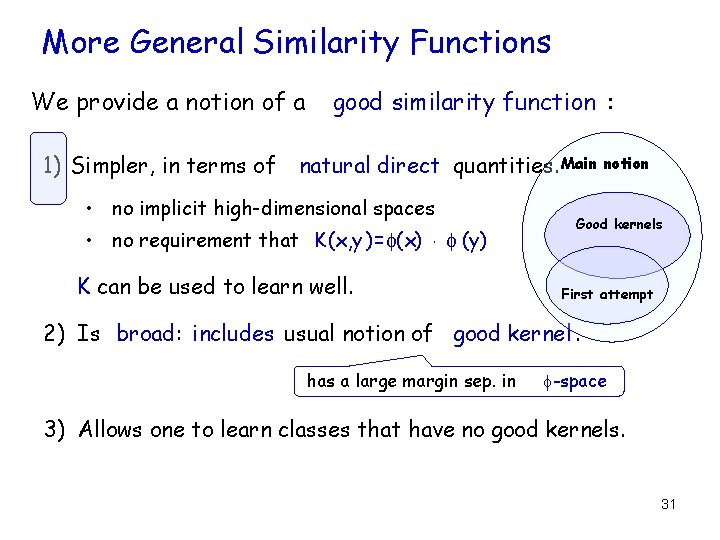

More General Similarity Functions

We provide a notion of a good similarity function that is:
1) Simpler, in terms of natural direct quantities:
   - no implicit high-dimensional spaces
   - no requirement that $K(x, y)=\phi(x) \cdot \phi(y)$
2) Broad: includes the usual notion of a good kernel (one that has a large margin separator in $\phi$-space).
3) Allows one to learn classes that have no good kernels.

Diagram labels: "K can be used to learn well", "Main notion", "Good kernels", "First attempt".

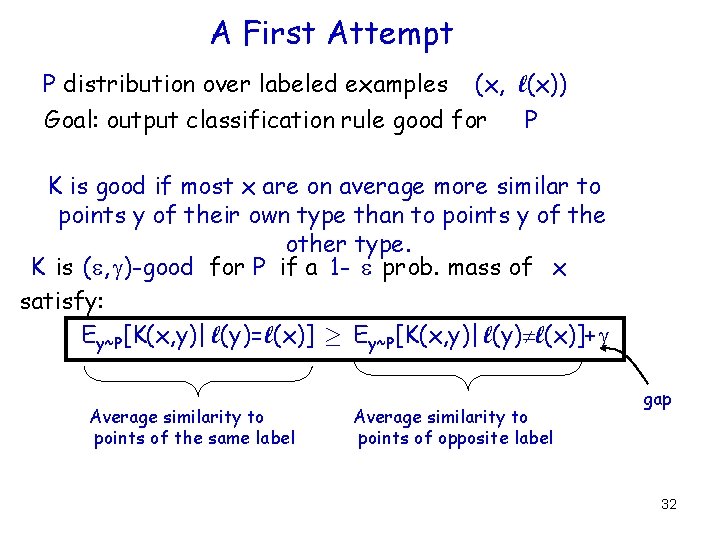

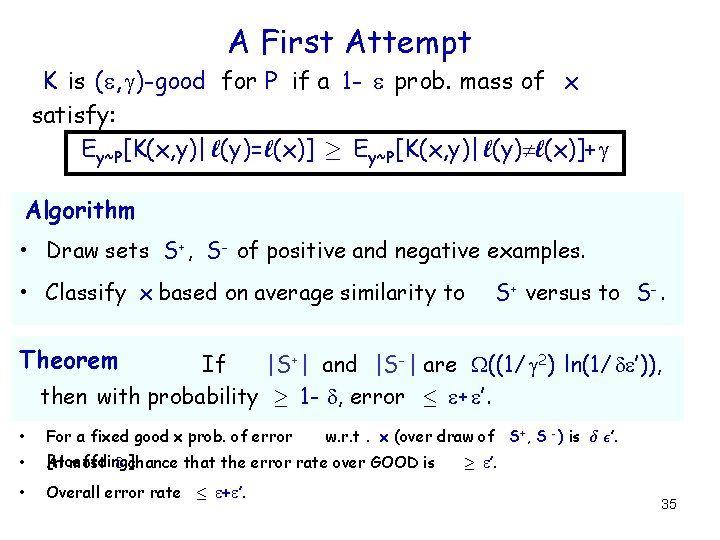

A First Attempt

$P$: distribution over labeled examples $(x, l(x))$. Goal: output a classification rule good for $P$.

$K$ is good if most $x$ are on average more similar to points $y$ of their own type than to points $y$ of the other type.

$K$ is $(\epsilon, \gamma)$-good for $P$ if a $1-\epsilon$ probability mass of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

The left side is the average similarity to points of the same label, the expectation on the right is the average similarity to points of the opposite label, and $\gamma$ is the gap.
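The definition is stated over the distribution $P$, but it can be probed on a finite labeled sample. The sketch below estimates, for a given gap $\gamma$, the fraction of sample points that violate the inequality, which serves as an empirical stand-in for $\epsilon$. Replacing expectations by sample averages and excluding each point from its own same-label average are choices made for this illustration, and the function name is hypothetical.

```python
def violation_rate(sample, K, gamma):
    """Fraction of labeled points whose same-label average similarity fails to
    exceed their other-label average similarity by at least `gamma`.

    `sample` is a list of (x, label) pairs; `K` is any pairwise similarity function.
    """
    violations = 0
    for i, (x, label_x) in enumerate(sample):
        same = [K(x, y) for j, (y, label_y) in enumerate(sample)
                if j != i and label_y == label_x]
        other = [K(x, y) for y, label_y in sample if label_y != label_x]
        if not same or not other:
            raise ValueError("need both labels present and at least two points per label")
        if sum(same) / len(same) < sum(other) / len(other) + gamma:
            violations += 1
    return violations / len(sample)


# Toy 1-D sample with the product similarity K(x, y) = x * y.
toy_sample = [(1.0, 1), (0.9, 1), (0.8, 1), (-1.0, -1), (-0.9, -1), (-0.8, -1)]
product = lambda x, y: x * y
```

On this toy sample every point satisfies the inequality for $\gamma = 1.0$, while asking for the larger gap $\gamma = 1.6$ makes the two points closest to the origin fail, illustrating the tradeoff between $\epsilon$ and $\gamma$.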

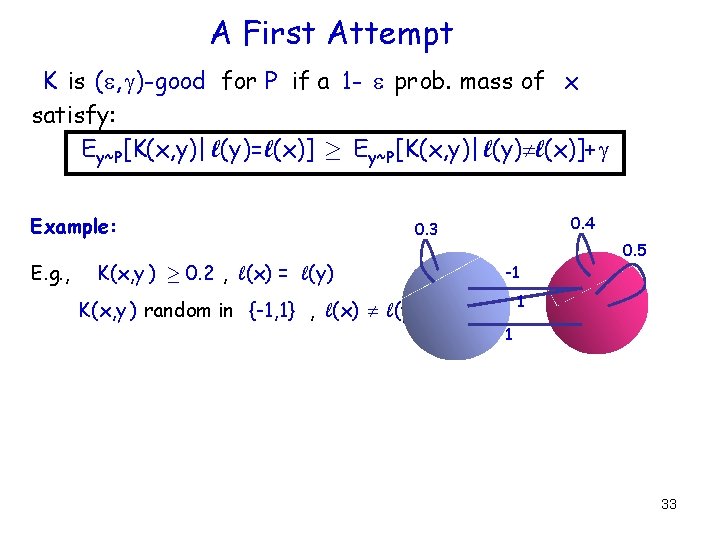

A First Attempt

$K$ is $(\epsilon, \gamma)$-good for $P$ if a $1-\epsilon$ probability mass of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

Example: $K(x, y) \ge 0.2$ when $l(x) = l(y)$, and $K(x, y)$ is random in $\{-1, 1\}$ when $l(x) \neq l(y)$.

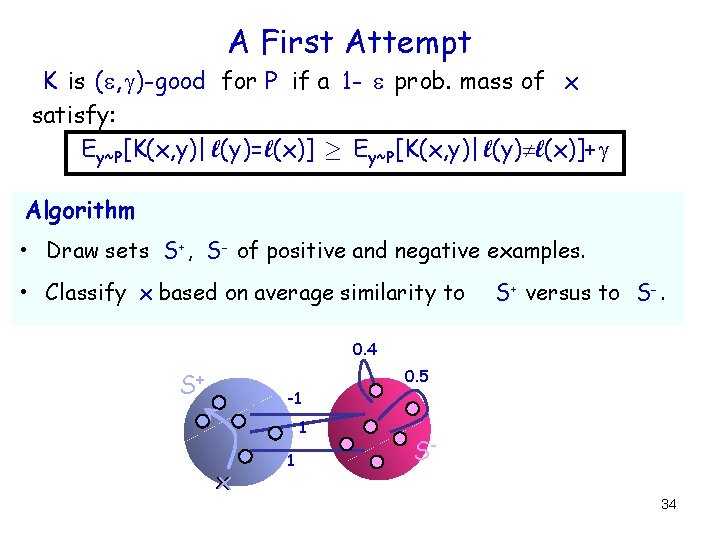

A First Attempt

$K$ is $(\epsilon, \gamma)$-good for $P$ if a $1-\epsilon$ probability mass of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

Algorithm
- Draw sets $S^+$, $S^-$ of positive and negative examples.
- Classify $x$ based on average similarity to $S^+$ versus to $S^-$.

A First Attempt

$K$ is $(\epsilon, \gamma)$-good for $P$ if a $1-\epsilon$ probability mass of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

Algorithm
- Draw sets $S^+$, $S^-$ of positive and negative examples.
- Classify $x$ based on average similarity to $S^+$ versus to $S^-$.

Theorem: if $|S^+|$ and $|S^-|$ are $\Omega\left((1/\gamma^2) \ln(1/(\delta\epsilon'))\right)$, then with probability $\ge 1-\delta$, error $\le \epsilon + \epsilon'$.
- For a fixed good $x$, the probability of error w.r.t. $x$ (over the draw of $S^+$, $S^-$) is at most $\delta\epsilon'$ [Hoeffding].
- So there is at most a $\delta$ chance that the error rate over GOOD is $\ge \epsilon'$.
- Overall error rate $\le \epsilon + \epsilon'$.
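The algorithm on this slide is short enough to sketch directly. The code below is a minimal illustration, not the authors' implementation: the function name is hypothetical, ties are broken toward +1 (a choice made here), and the sample sizes required by the theorem are not enforced.

```python
def average_similarity_classifier(K, s_plus, s_minus):
    """Build a classifier that labels x by comparing its average similarity
    to the positive sample S+ against its average similarity to the negative sample S-."""
    if not s_plus or not s_minus:
        raise ValueError("both S+ and S- must be non-empty")

    def classify(x):
        avg_plus = sum(K(x, y) for y in s_plus) / len(s_plus)
        avg_minus = sum(K(x, y) for y in s_minus) / len(s_minus)
        return 1 if avg_plus >= avg_minus else -1

    return classify


# Toy usage with the dot product in the plane as the similarity function.
dot2 = lambda u, v: u[0] * v[0] + u[1] * v[1]
classify = average_similarity_classifier(
    dot2,
    s_plus=[(1.0, 0.2), (0.9, -0.1)],
    s_minus=[(-1.0, 0.1), (-0.8, -0.3)],
)
```

Note that nothing here requires `K` to be positive semi-definite: any pairwise function satisfying the definition can be plugged in.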

![A First Attempt: Not Broad Enough](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-34.jpg)

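A First Attempt: Not Broad Enough

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

Similarity function $K(x, y)=x \cdot y$:
- has a large margin separator;
- does not satisfy our definition.

Figure: positives and negatives on the unit circle, separated by an angle of 30°. Some positives are more similar to the negatives than to a typical positive: ½ versus ½ · 1 + ½ · (−½) = ¼.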

![A First Attempt: Not Broad Enough](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-35.jpg)

A First Attempt: Not Broad Enough

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x)] + \gamma$$

Broaden: $\exists$ a non-negligible set $R$ s.t. most $x$ are on average more similar to $y \in R$ of the same label than to $y \in R$ of the other label (even if we do not know $R$ in advance).

Figure: the same 30° example, with the region $R$ marked.

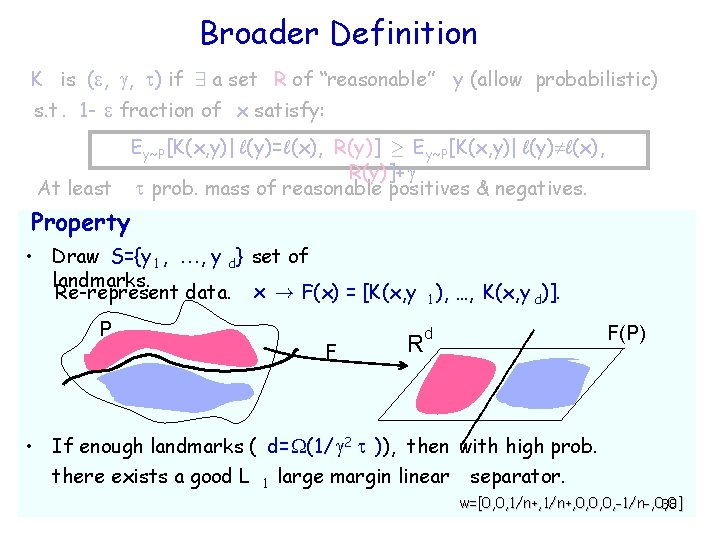

Broader Definition

$K$ is $(\epsilon, \gamma, \tau)$-good if $\exists$ a set $R$ of “reasonable” $y$ (allow probabilistic) s.t. a $1-\epsilon$ fraction of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x), R(y)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x), R(y)] + \gamma$$

and there is at least $\tau$ probability mass of reasonable positives and negatives.

Property
- Draw $S=\{y_1, \dots, y_d\}$, a set of landmarks. Re-represent the data: $x \to F(x) = [K(x, y_1), \dots, K(x, y_d)]$, so $F$ maps $P$ to $F(P)$ in $R^d$.
- If there are enough landmarks ($d=\Omega(1/(\gamma^2\tau))$), then with high probability there exists a good $L_1$ large margin linear separator, e.g. $w=[0, 0, 1/n_+, 0, 0, 0, -1/n_-, 0, 0]$.

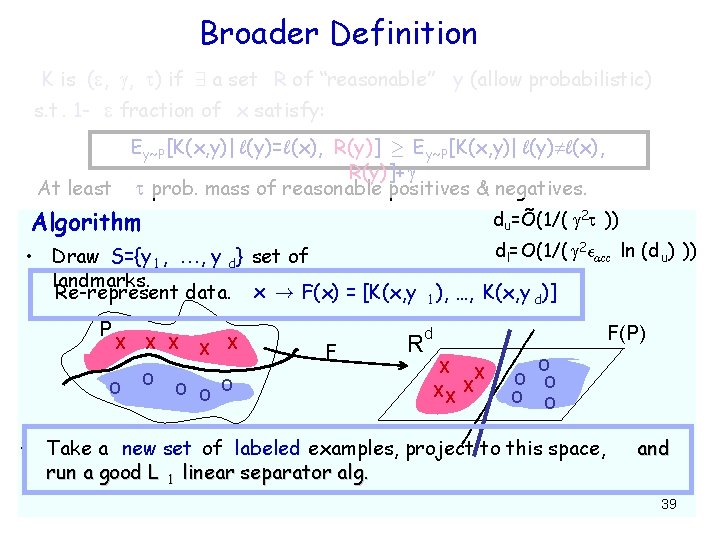

Broader Definition

$K$ is $(\epsilon, \gamma, \tau)$-good if $\exists$ a set $R$ of “reasonable” $y$ (allow probabilistic) s.t. a $1-\epsilon$ fraction of $x$ satisfy:

$$E_{y\sim P}[K(x,y)\mid l(y)=l(x), R(y)] \;\ge\; E_{y\sim P}[K(x,y)\mid l(y)\neq l(x), R(y)] + \gamma$$

and there is at least $\tau$ probability mass of reasonable positives and negatives.

Algorithm, with $d_u=\tilde{O}(1/(\gamma^2\tau))$ unlabeled landmarks and $d_l = O\left(\frac{1}{\gamma^2 \epsilon_{acc}} \ln d_u\right)$ labeled examples:
- Draw $S=\{y_1, \dots, y_d\}$, a set of landmarks. Re-represent the data: $x \to F(x) = [K(x, y_1), \dots, K(x, y_d)]$, so $F$ maps $P$ to $F(P)$ in $R^d$.
- Take a new set of labeled examples, project them to this space, and run a good $L_1$ linear separator algorithm.
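The landmark re-representation can be sketched as follows. The mapping `F` is taken directly from the slide. The weight vector follows one reading of the slide's $w=[0, 0, 1/n_+, 0, \dots, -1/n_-, 0, 0]$: weight $1/n_+$ on each reasonable positive landmark, $-1/n_-$ on each reasonable negative landmark, and 0 elsewhere. Treating the reasonable set $R$ as known is an assumption made purely for illustration; in the actual algorithm $R$ is unknown and an $L_1$ linear separator learner is run on labeled data instead. All function names are hypothetical.

```python
def landmark_map(K, landmarks):
    """Return F with F(x) = [K(x, y_1), ..., K(x, y_d)] for the given landmarks."""
    return lambda x: [K(x, y) for y in landmarks]


def separator_from_reasonable(labels, reasonable):
    """Weights 1/n+ on reasonable positives, -1/n- on reasonable negatives, 0 elsewhere.

    `labels[i]` is +1 or -1 for landmark i; `reasonable[i]` says whether it lies in R
    (assumed known here, which the real algorithm does not require).
    """
    n_pos = sum(1 for l, r in zip(labels, reasonable) if r and l == 1)
    n_neg = sum(1 for l, r in zip(labels, reasonable) if r and l == -1)
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one reasonable landmark of each label")
    return [
        0.0 if not r else (1.0 / n_pos if l == 1 else -1.0 / n_neg)
        for l, r in zip(labels, reasonable)
    ]


def predict(w, features):
    """Sign of the linear score w . F(x), with ties broken toward +1."""
    return 1 if sum(wi * fi for wi, fi in zip(w, features)) >= 0 else -1


# Toy 1-D example with the product similarity and five landmarks.
landmarks = [1.0, 0.1, -1.0, -0.2, 0.9]
F = landmark_map(lambda x, y: x * y, landmarks)
w = separator_from_reasonable([1, 1, -1, -1, 1], [True, False, True, False, True])
```

The score $w \cdot F(x)$ is exactly the average similarity of $x$ to reasonable positives minus its average similarity to reasonable negatives, which is why the broader definition implies that a large-margin linear separator exists in the landmark space.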

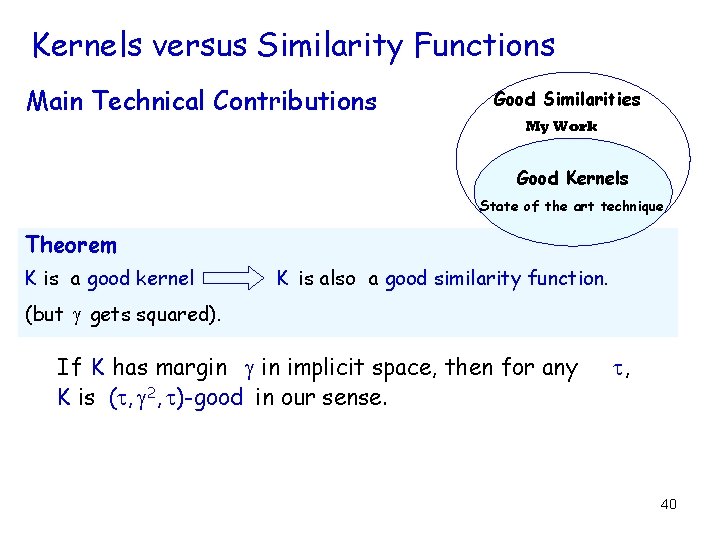

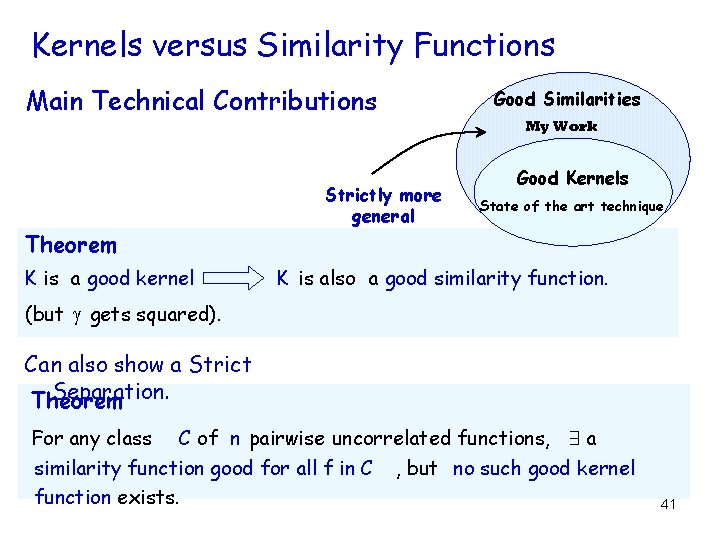

Kernels versus Similarity Functions: Main Technical Contributions

Diagram: good kernels (the state-of-the-art technique) versus good similarities (my work).

Theorem: $K$ is a good kernel ⇒ $K$ is also a good similarity function (but $\gamma$ gets squared). If $K$ has margin $\gamma$ in the implicit space, then for any $\epsilon$, $K$ is $(\epsilon, \gamma^2, \epsilon)$-good in our sense.

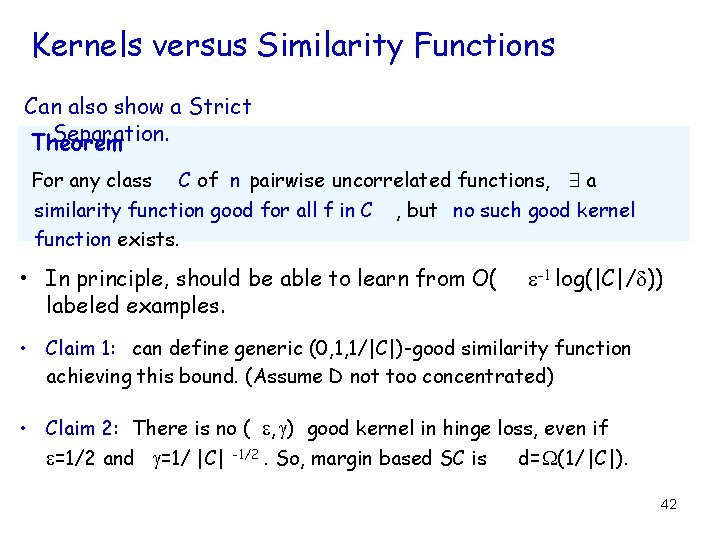

Kernels versus Similarity Functions: Main Technical Contributions

Diagram: good similarities (my work) are strictly more general than good kernels (the state-of-the-art technique).

Theorem: $K$ is a good kernel ⇒ $K$ is also a good similarity function (but $\gamma$ gets squared).

Can also show a strict separation.

Theorem: for any class $C$ of $n$ pairwise uncorrelated functions, $\exists$ a similarity function good for all $f$ in $C$, but no such good kernel function exists.

Kernels versus Similarity Functions

Can also show a strict separation.

Theorem: for any class $C$ of $n$ pairwise uncorrelated functions, $\exists$ a similarity function good for all $f$ in $C$, but no such good kernel function exists.

- In principle, should be able to learn from $O(\epsilon^{-1} \log(|C|/\delta))$ labeled examples.
- Claim 1: can define a generic $(0, 1, 1/|C|)$-good similarity function achieving this bound. (Assume $D$ is not too concentrated.)
- Claim 2: there is no $(\epsilon, \gamma)$-good kernel in hinge loss, even if $\epsilon=1/2$ and $\gamma=|C|^{-1/2}$. So the margin based sample complexity is $d=\Omega(|C|)$.

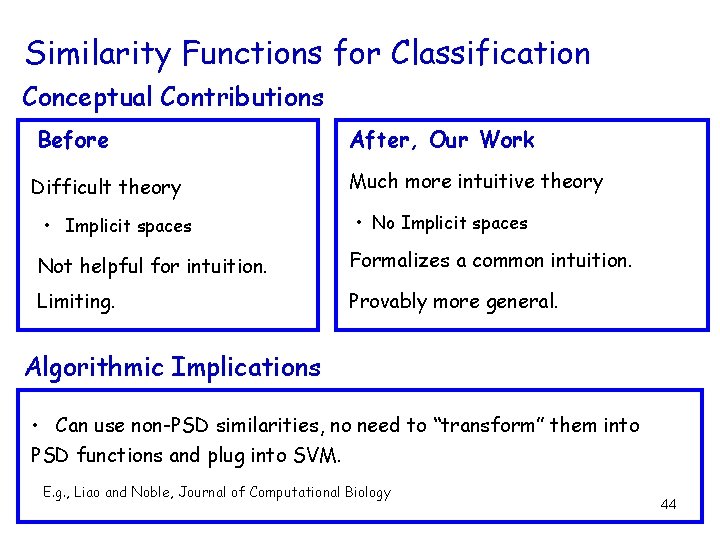

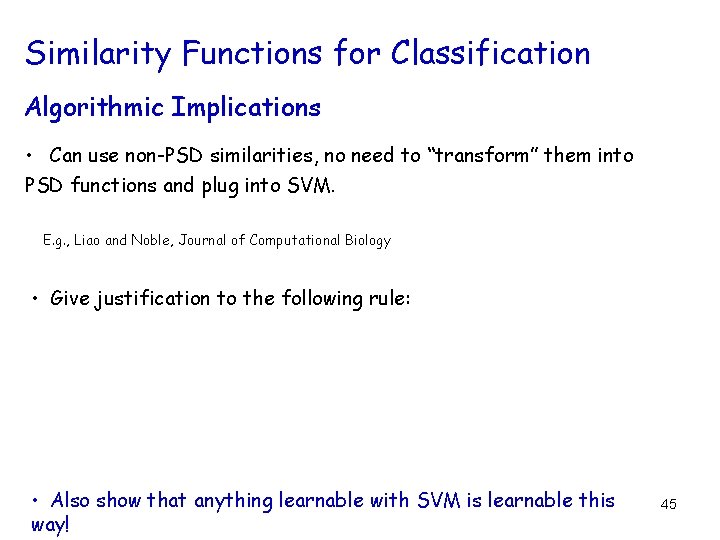

Similarity Functions for Classification

Conceptual Contributions
- Before: difficult theory.
  - Implicit spaces: not helpful for intuition.
  - Limiting.
- After (our work): much more intuitive theory.
  - No implicit spaces: formalizes a common intuition.
  - Provably more general.

Algorithmic Implications
- Can use non-PSD similarities; no need to “transform” them into PSD functions and plug into SVM. E.g., Liao and Noble, Journal of Computational Biology.

Similarity Functions for Classification

Algorithmic Implications
- Can use non-PSD similarities; no need to “transform” them into PSD functions and plug into SVM. E.g., Liao and Noble, Journal of Computational Biology.
- Give justification to the following rule:
- Also show that anything learnable with SVM is learnable this way!

![Part IV, A Novel View on Clustering](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-43.jpg)

Part IV, A Novel View on Clustering [Balcan-Blum-Vempala, STOC 2008]: a general framework for analyzing clustering accuracy without strong probabilistic assumptions

![What if only Unlabeled Examples Available?](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-44.jpg)

What if only Unlabeled Examples Available?

- $S$: set of $n$ objects [documents].
- $\exists$ a ground truth clustering: each $x$ has $l(x)$ in $\{1, \dots, t\}$ [topic], e.g. [sports], [fashion].
- Goal: $h$ of low error, where $err(h) = \min_{\sigma} \Pr_{x\sim S}[\sigma(h(x)) \neq l(x)]$.
- Problem: unlabeled data only! But we have a similarity function!

![What if only Unlabeled Examples Available?](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-45.jpg)

What if only Unlabeled Examples Available?

Protocol
- $\exists$ a ground truth clustering for $S$, i.e., each $x$ in $S$ has $l(x)$ in $\{1, \dots, t\}$ (e.g. [sports], [fashion]).
- Input: $S$, a similarity function $K$.
- Output: a clustering of small error, where $err(h) = \min_{\sigma} \Pr_{x\sim S}[\sigma(h(x)) \neq l(x)]$.

The similarity function $K$ has to be related to the ground truth.
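The error measure minimizes over relabelings $\sigma$ of the cluster names, since a clustering has no canonical naming of its clusters. A minimal brute-force sketch follows; it assumes cluster ids and ground-truth labels are both drawn from $\{0, \dots, t-1\}$ (the slide uses $\{1, \dots, t\}$), and the function name is illustrative. It tries all $t!$ permutations, so it is only suitable for small $t$.

```python
from itertools import permutations


def clustering_error(predicted, truth, t):
    """err(h) = min over permutations sigma of the fraction of points
    with sigma(h(x)) != l(x), for cluster ids and labels in {0, ..., t-1}."""
    if len(predicted) != len(truth) or not truth:
        raise ValueError("predicted and truth must be non-empty and of equal length")
    best = 1.0
    for sigma in permutations(range(t)):
        mistakes = sum(1 for h, l in zip(predicted, truth) if sigma[h] != l)
        best = min(best, mistakes / len(truth))
    return best


# Same grouping under different cluster names, except for one misplaced point.
predicted_ids = [0, 0, 1, 1, 2, 2]
true_labels = [2, 2, 0, 0, 1, 0]
```

Here the best relabeling maps cluster 0 to label 2, cluster 1 to label 0, and cluster 2 to label 1, leaving a single misclustered point out of six.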

![What if only Unlabeled Examples Available?](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-46.jpg)

What if only Unlabeled Examples Available?

Fundamental question: what natural properties on a similarity function would be sufficient to allow one to cluster well?

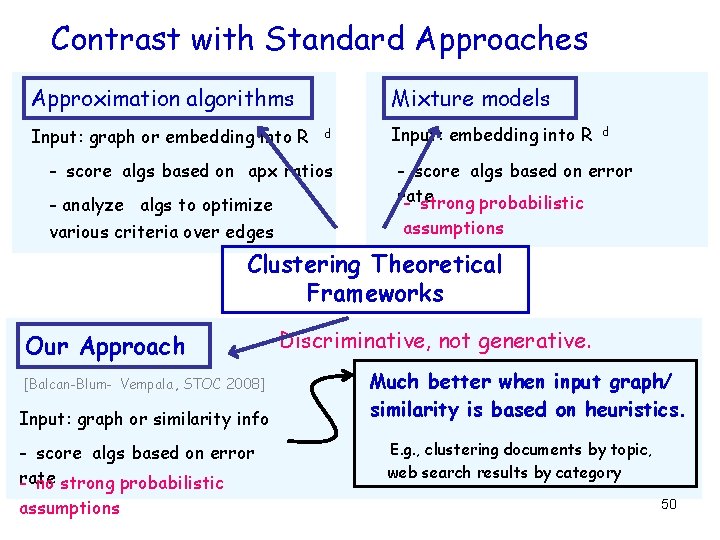

Contrast with Standard Approaches (Clustering Theoretical Frameworks)

Approximation algorithms
- Input: graph or embedding into $R^d$
- Score algorithms based on approximation ratios
- Analyze algorithms to optimize various criteria over edges

Mixture models
- Input: embedding into $R^d$
- Score algorithms based on error rate
- Strong probabilistic assumptions

Our Approach [Balcan-Blum-Vempala, STOC 2008]
- Input: graph or similarity info
- Score algorithms based on error rate
- No strong probabilistic assumptions

Discriminative, not generative. Much better when the input graph/similarity is based on heuristics, e.g., clustering documents by topic or web search results by category.

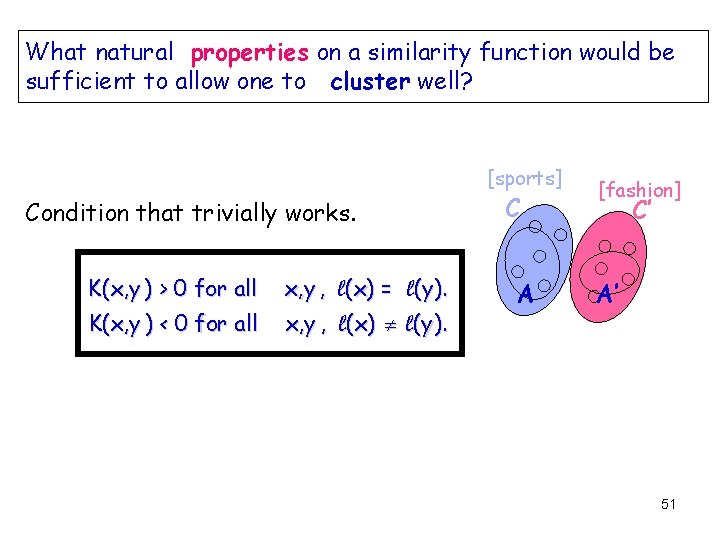

What natural properties on a similarity function would be sufficient to allow one to cluster well?

Condition that trivially works:
- $K(x, y) > 0$ for all $x, y$ with $l(x) = l(y)$.
- $K(x, y) < 0$ for all $x, y$ with $l(x) \neq l(y)$.

Figure: two clusters, [sports] and [fashion].

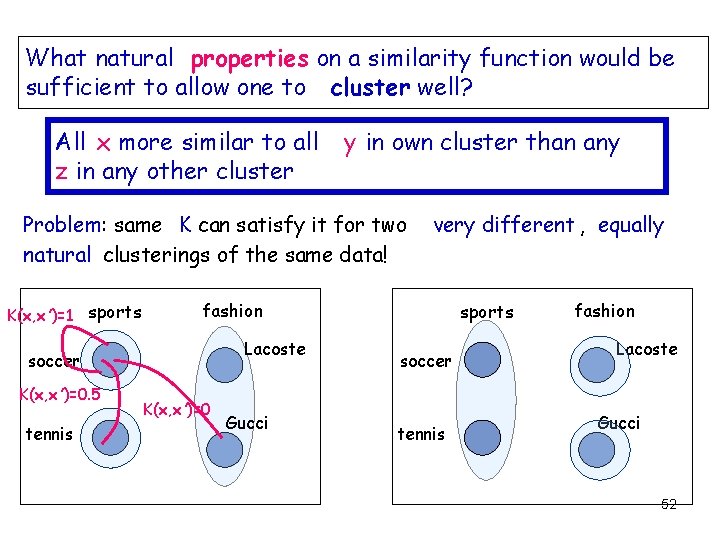

What natural properties on a similarity function would be sufficient to allow one to cluster well?

All $x$ are more similar to all $y$ in their own cluster than to any $z$ in any other cluster.

Problem: the same $K$ can satisfy it for two very different, equally natural clusterings of the same data!

Figure: documents on soccer and tennis (sports) and on Lacoste and Gucci (fashion), with similarity levels $K(x, x')=1$, $K(x, x')=0.5$, and $K(x, x')=0$.

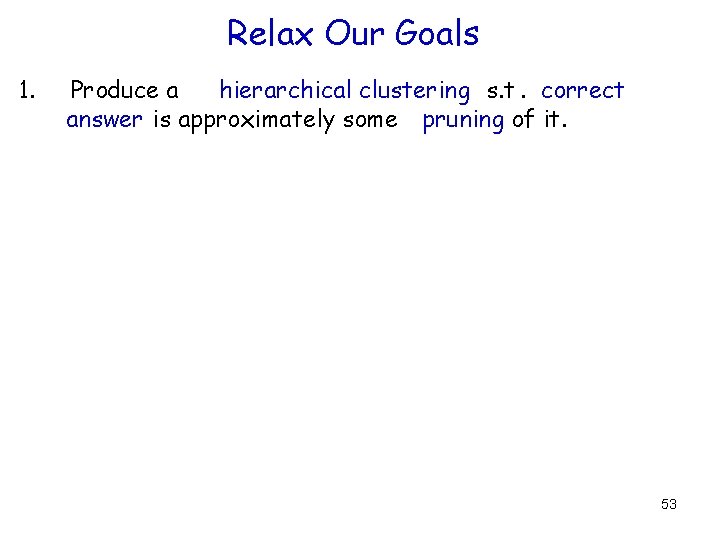

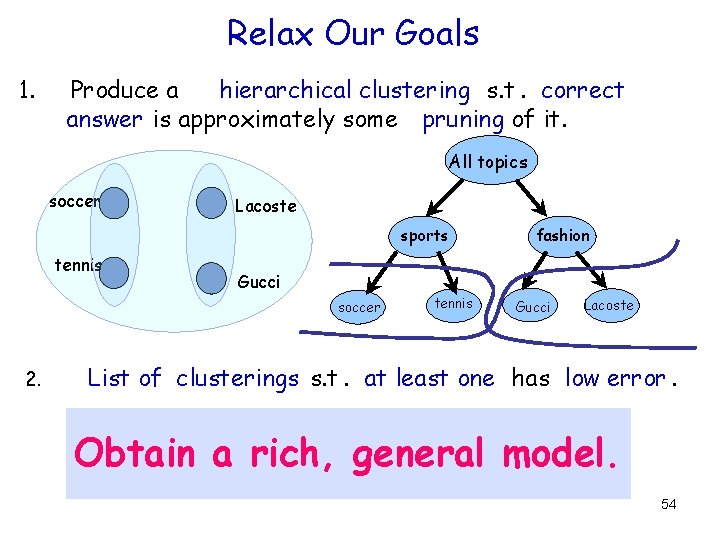

Relax Our Goals

1. Produce a hierarchical clustering s.t. the correct answer is approximately some pruning of it.

Relax Our Goals

1. Produce a hierarchical clustering s.t. the correct answer is approximately some pruning of it. (Figure: a tree with root "All topics", children "sports" and "fashion", and leaves soccer, tennis, Gucci, Lacoste.)
2. Produce a list of clusterings s.t. at least one has low error.

Trade off the strength of the assumption against the size of the list. Obtain a rich, general model.

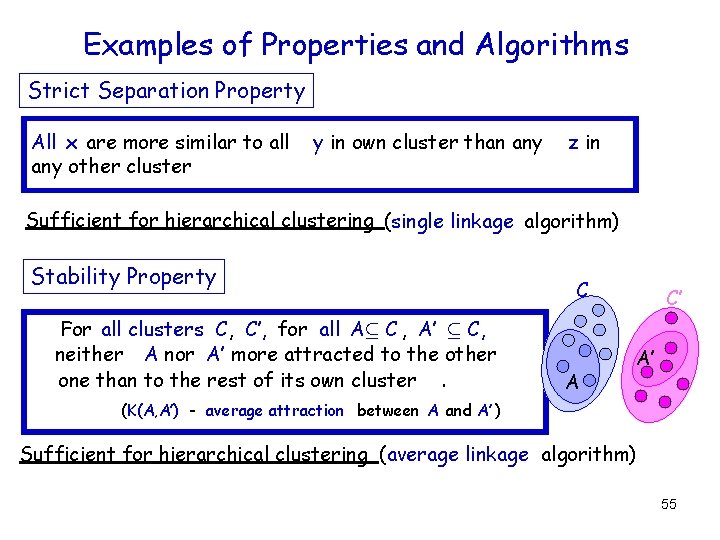

Examples of Properties and Algorithms

Strict Separation Property
- All $x$ are more similar to all $y$ in their own cluster than to any $z$ in any other cluster.
- Sufficient for hierarchical clustering (single linkage algorithm).

Stability Property
- For all clusters $C$, $C'$, for all $A \subset C$, $A' \subset C'$: neither $A$ nor $A'$ is more attracted to the other one than to the rest of its own cluster. ($K(A, A')$ is the average attraction between $A$ and $A'$.)
- Sufficient for hierarchical clustering (average linkage algorithm).
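The strict separation case can be illustrated with a small single linkage implementation. This is a minimal sketch for illustration: the function names are hypothetical, the quadratic search over cluster pairs is written for clarity rather than speed, and the toy similarity (1 for the same subtopic, 0.5 for the same topic, 0 otherwise) is an assumed example in the spirit of the sports/fashion slides.

```python
def single_linkage_tree(points, K):
    """Agglomerative single linkage on a similarity function.

    Repeatedly merges the two clusters containing the most similar cross pair and
    returns every cluster (as a frozenset of point indices) that appears in the tree,
    including the singletons and the root.
    """
    clusters = [frozenset([i]) for i in range(len(points))]
    nodes = list(clusters)
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                sim = max(K(points[i], points[j])
                          for i in clusters[a] for j in clusters[b])
                if best is None or sim > best[0]:
                    best = (sim, a, b)
        _, a, b = best
        merged = clusters[a] | clusters[b]
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)] + [merged]
        nodes.append(merged)
    return nodes


def topic_similarity(doc_a, doc_b):
    """Assumed toy similarity on (topic, subtopic) pairs."""
    if doc_a[1] == doc_b[1]:
        return 1.0
    return 0.5 if doc_a[0] == doc_b[0] else 0.0
```

Under strict separation, every ground-truth cluster shows up as a node of the resulting tree, so the correct clustering is a pruning of it. With the toy similarity, both the coarse clustering (sports, fashion) and the fine one (soccer, tennis, Gucci, Lacoste) satisfy the property, and both appear as prunings of the same tree.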

![Examples of Properties and Algorithms](http://slidetodoc.com/presentation_image_h2/d7b2acd5a879153e78b12acb479f946b/image-53.jpg)

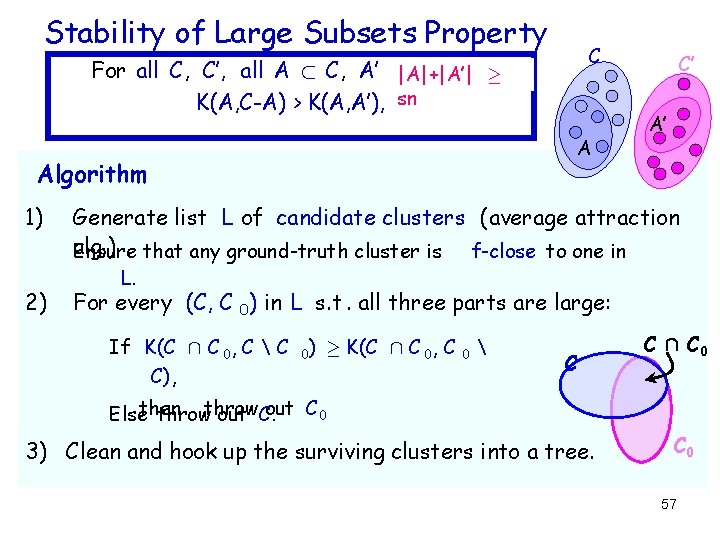

Examples of Properties and Algorithms

Average Attraction Property
- $E_{x' \in C(x)}[K(x, x')] > E_{x' \in C'}[K(x, x')] + \gamma \quad (\forall\, C' \neq C(x))$
- Not sufficient for hierarchical clustering.
- Can produce a small list of clusterings (sampling based algorithm).

Stability of Large Subsets Property
- For all clusters $C$, $C'$, for all $A \subset C$, $A' \subset C'$ with $|A|+|A'| \ge sn$: neither $A$ nor $A'$ is more attracted to the other one than to the rest of its own cluster.
- Sufficient for hierarchical clustering.
- Find the hierarchy using a multi-stage learning-based algorithm.

Stability of Large Subsets Property

For all $C$, $C'$, all $A \subset C$, $A' \subseteq C'$ with $|A|+|A'| \ge sn$: $K(A, C \setminus A) > K(A, A')$.

Algorithm
1) Generate a list $L$ of candidate clusters (average attraction algorithm). Ensure that any ground-truth cluster is f-close to one in $L$.
2) For every pair $(C, C_0)$ in $L$ s.t. all three parts ($C \setminus C_0$, $C \cap C_0$, $C_0 \setminus C$) are large: if $K(C \cap C_0, C \setminus C_0) \ge K(C \cap C_0, C_0 \setminus C)$, then throw out $C_0$; else throw out $C$.
3) Clean and hook up the surviving clusters into a tree.

Similarity Functions for Clustering, Summary

- Minimal conditions on $K$ to be useful for clustering.
- For a robust theory, relax the objective: hierarchy, list.
- A general model that parallels PAC, SLT, Learning with Kernels and Similarity Functions in Supervised Classification.

Similarity Functions, Overall Summary

Supervised Classification
- Generalize and simplify the existing theory of kernels. [Balcan-Blum, ICML 2006] [Balcan-Blum-Srebro, COLT 2008] [Balcan-Blum-Srebro, MLJ 2008]

Unsupervised Learning
- First clustering model for analyzing accuracy without strong probabilistic assumptions. [Balcan-Blum-Vempala, STOC 2008]

Future Directions

Connections between Computer Science and Economics
- Active learning and online learning techniques for better pricing algorithms and auctions.

New Frameworks and Algorithms for Machine Learning
- Similarity functions for learning and clustering:
  - Learn a good similarity based on data from related problems.
  - Other notions of “useful”, other types of feedback.
  - Other navigational structures: e.g., a small DAG.
- Interactive learning

