Lecture 26 Semantics September 30, 2005 3/12/2021 1

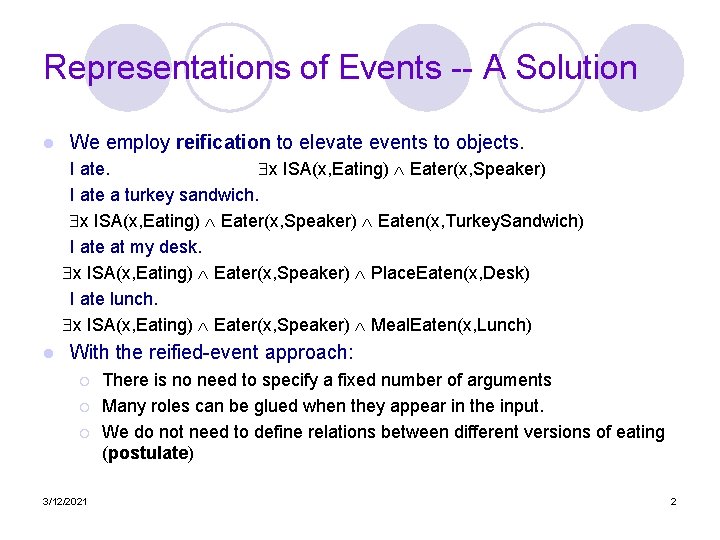

Representations of Events -- A Solution

- We employ reification to elevate events to objects.
  - I ate.
    - ∃x ISA(x, Eating) ∧ Eater(x, Speaker)
  - I ate a turkey sandwich.
    - ∃x ISA(x, Eating) ∧ Eater(x, Speaker) ∧ Eaten(x, TurkeySandwich)
  - I ate at my desk.
    - ∃x ISA(x, Eating) ∧ Eater(x, Speaker) ∧ PlaceEaten(x, Desk)
  - I ate lunch.
    - ∃x ISA(x, Eating) ∧ Eater(x, Speaker) ∧ MealEaten(x, Lunch)
- With the reified-event approach:
  - There is no need to specify a fixed number of arguments.
  - As many roles as needed can be glued on when they appear in the input.
  - We do not need to define relations between different versions of eating (meaning postulates).
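
The reified-event idea can be sketched in a few lines of Python. This is only an illustration, not part of the lecture: the helper names `make_event` and `is_eating_by_speaker` and the event identifiers `x1` to `x3` are hypothetical. Each event is a set of (predicate, event, filler) facts, so roles can be added freely without fixing the arity of an `Eating` predicate.

```python
def make_event(event_id, category, **roles):
    """Build a reified event as a set of (predicate, event, filler) facts."""
    facts = {("ISA", event_id, category)}
    for role, filler in roles.items():
        facts.add((role, event_id, filler))
    return facts


def is_eating_by_speaker(facts, event_id):
    """Check the core facts shared by every version of the eating event."""
    core = {("ISA", event_id, "Eating"), ("Eater", event_id, "Speaker")}
    return core <= facts


# "I ate.", "I ate a turkey sandwich.", "I ate at my desk."
ate = make_event("x1", "Eating", Eater="Speaker")
ate_sandwich = make_event("x2", "Eating", Eater="Speaker", Eaten="TurkeySandwich")
ate_at_desk = make_event("x3", "Eating", Eater="Speaker", PlaceEaten="Desk")
```

Because every variant contains the same two core facts, a query such as `is_eating_by_speaker(ate_at_desk, "x3")` succeeds without any postulate relating the different versions of eating.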

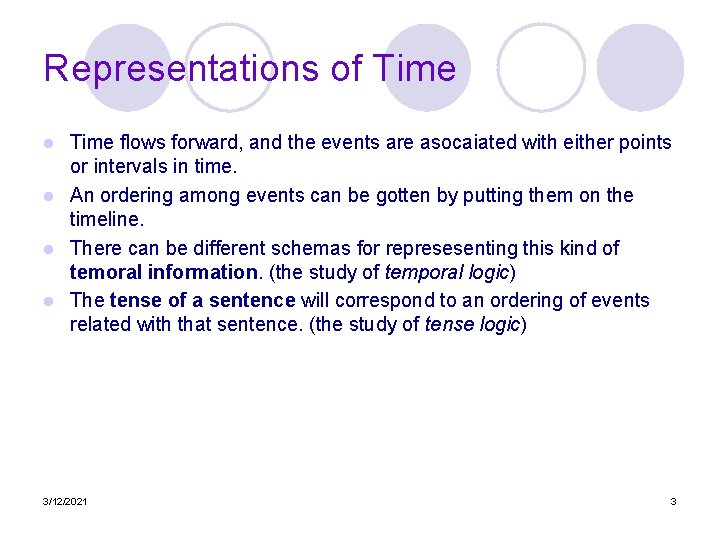

Representations of Time

- Time flows forward, and events are associated with either points or intervals in time.
- An ordering among events can be obtained by placing them on the timeline.
- There can be different schemas for representing this kind of temporal information (the study of temporal logic).
- The tense of a sentence corresponds to an ordering of the events related to that sentence (the study of tense logic).

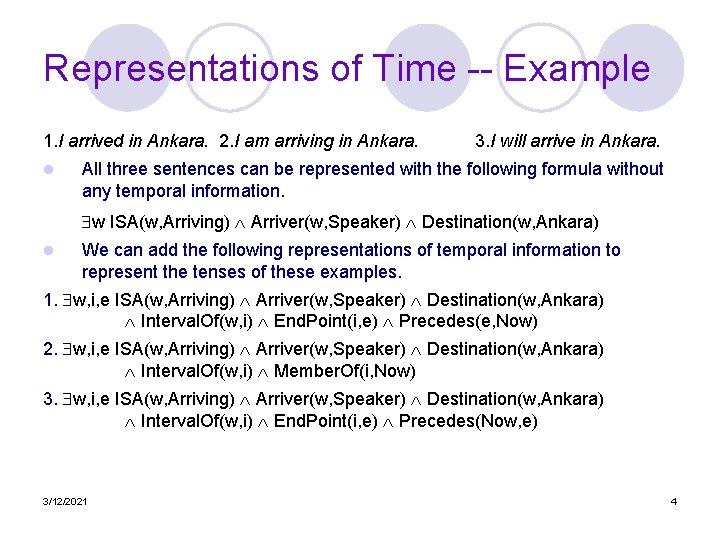

Representations of Time -- Example

1. I arrived in Ankara.
2. I am arriving in Ankara.
3. I will arrive in Ankara.

- All three sentences can be represented with the following formula, which carries no temporal information:
  - ∃w ISA(w, Arriving) ∧ Arriver(w, Speaker) ∧ Destination(w, Ankara)
- We can add the following temporal information to represent the tenses of these examples:

1. ∃w, i, e ISA(w, Arriving) ∧ Arriver(w, Speaker) ∧ Destination(w, Ankara) ∧ IntervalOf(w, i) ∧ EndPoint(i, e) ∧ Precedes(e, Now)
2. ∃w, i, e ISA(w, Arriving) ∧ Arriver(w, Speaker) ∧ Destination(w, Ankara) ∧ IntervalOf(w, i) ∧ MemberOf(i, Now)
3. ∃w, i, e ISA(w, Arriving) ∧ Arriver(w, Speaker) ∧ Destination(w, Ankara) ∧ IntervalOf(w, i) ∧ EndPoint(i, e) ∧ Precedes(Now, e)
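
The three temporal conditions can be sketched as a small Python check. This is a sketch under assumptions that are not in the slides: time points are plain numbers, an interval is a `(start, end)` pair, and the function name `tense_of` is hypothetical.

```python
def tense_of(interval, now):
    """Classify an event interval relative to the utterance time `now`."""
    start, end = interval
    if end < now:
        # EndPoint(i, e) and Precedes(e, Now)
        return "past"
    if start <= now <= end:
        # MemberOf(i, Now)
        return "present"
    # Here start > now, so the end point also follows Now:
    # EndPoint(i, e) and Precedes(Now, e)
    return "future"


arrived = tense_of((1, 2), now=5)
arriving = tense_of((4, 6), now=5)
will_arrive = tense_of((7, 9), now=5)
```

The present case is tested before the future case because an interval that contains Now also has its end point after Now.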

Representations of Time (cont.)

- The relation between simple verb tenses and points in time is not straightforward.
  - We fly from Ankara to Istanbul. -- present tense refers to a future event
  - Flight 12 will be at gate an hour now. -- future tense refers to a past event
- In some formalisms, the tense of a sentence is expressed with the relation among the times of the events in that sentence, the time of a reference point, and the time of utterance.

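Reichenbach's Approach to Representing Tenses

E is the event time, R the reference time, and U the utterance time; points separated by a comma coincide, and "<" means "precedes".

| Tense | Example | Ordering |
| --- | --- | --- |
| Past Perfect | I had eaten. | E < R < U |
| Past | I ate. | R,E < U |
| Present | I eat. | U,R,E |
| Present Perfect | I have eaten. | E < R,U |
| Future | I will eat. | U,R < E |
| Future Perfect | I will have eaten. | U < E < R |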

Representation of Aspect

- The notion of aspect concerns:
  - whether an event has ended or is ongoing
  - whether it is conceptualized as happening at a point in time or over some interval
  - whether a particular state exists because of it
- Event expressions can be divided into four aspectual classes:
  - Stative -- an event participant having a property at a point of time.
    - I know my departure gate.
  - Activity -- an event associated with some interval (without a clear end point).
    - I drove a Ferrari.
  - Accomplishment -- an event with a natural end point that results in a particular state.
    - He booked me a reservation.
  - Achievement -- an event that results in a particular state, but is instantaneous.
    - He found the gate.

Representations of Beliefs

- We can represent a belief as follows:
  - I believe that Mary ate Thai food.
    - ∃u, v ISA(u, Believing) ∧ ISA(v, Eating) ∧ Believer(u, Speaker) ∧ Believed(u, v) ∧ Eater(v, Mary) ∧ Eaten(v, ThaiFood)
- But from this, we can derive the following (which may not be correct):
  - ∃v ISA(v, Eating) ∧ Eater(v, Mary) ∧ Eaten(v, ThaiFood)
- We may think that we can represent this as follows, but it is not a FOPC formula:
  - Believing(Speaker, Eating(Mary, ThaiFood))
- A solution is to augment FOPC with operators (modal logic with modal operators):
  - Believing(Speaker, ∃v ISA(v, Eating) ∧ Eater(v, Mary) ∧ Eaten(v, ThaiFood))
- Inference becomes complicated with modal logic.

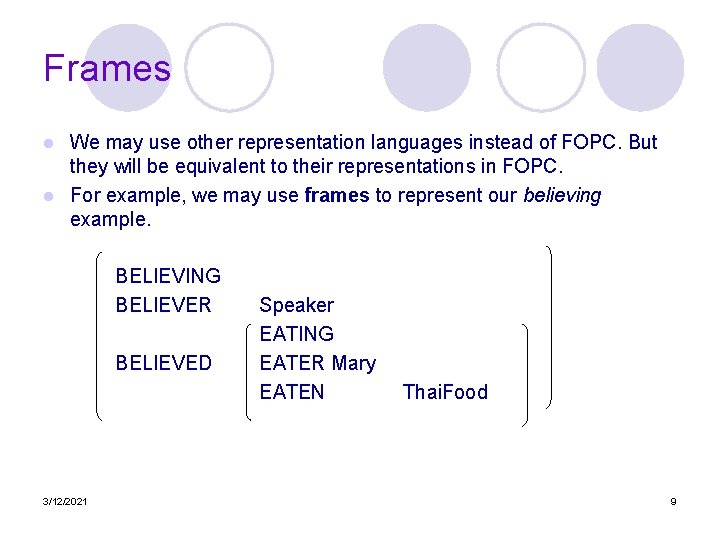

Frames

- We may use other representation languages instead of FOPC, but they will be equivalent to their representations in FOPC.
- For example, we may use frames to represent our believing example:
  - BELIEVING
    - BELIEVER: Speaker
    - BELIEVED: EATING
      - EATER: Mary
      - EATEN: ThaiFood

Semantic Analysis

- Semantic analysis -- meaning representations are assigned to linguistic inputs.
- We need static knowledge from the grammar and the lexicon.
- How much semantic analysis do we need?
  - Deep analysis -- thorough syntactic and semantic analysis of the text to capture all pertinent information in the text.
  - Information extraction -- does not require complete syntactic and semantic analysis; a cascade of FSAs is used to produce a robust semantic analyzer.

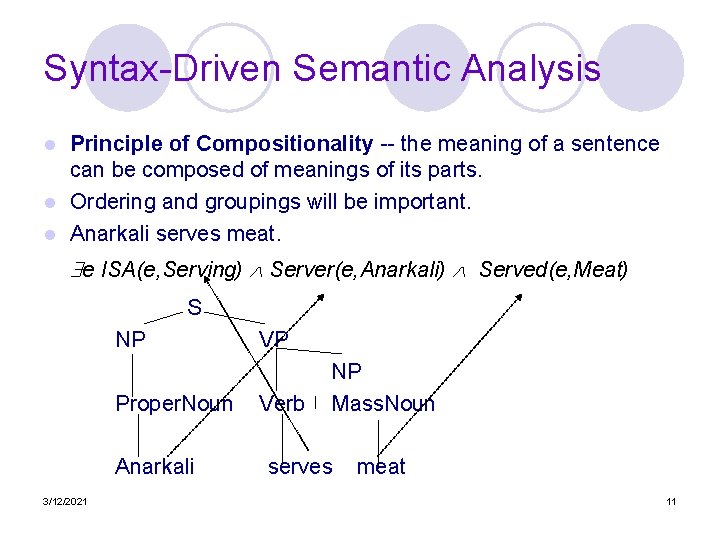

Syntax-Driven Semantic Analysis

- Principle of compositionality -- the meaning of a sentence can be composed from the meanings of its parts.
- Ordering and grouping will be important.
- Anarkali serves meat.
  - ∃e ISA(e, Serving) ∧ Server(e, Anarkali) ∧ Served(e, Meat)
- Parse tree: [S [NP [ProperNoun Anarkali]] [VP [Verb serves] [NP [MassNoun meat]]]]

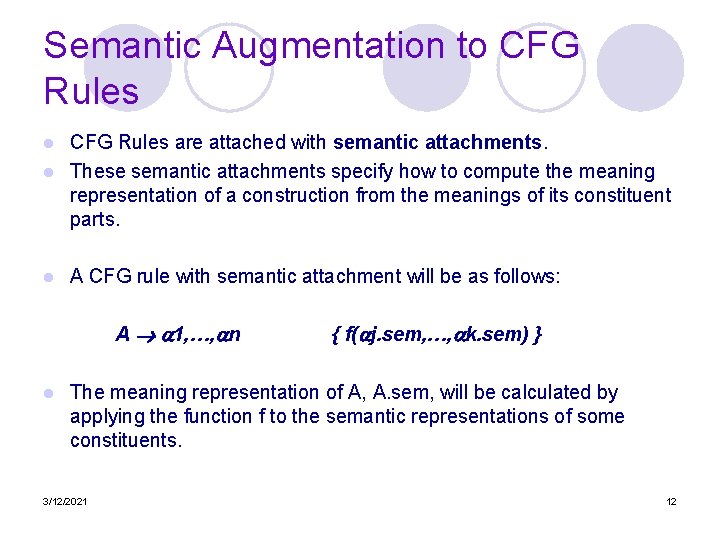

Semantic Augmentation to CFG Rules

- CFG rules are augmented with semantic attachments.
- These semantic attachments specify how to compute the meaning representation of a construction from the meanings of its constituent parts.
- A CFG rule with a semantic attachment looks as follows:
  - A → α1 … αn   { f(αj.sem, …, αk.sem) }
- The meaning representation of A, A.sem, is calculated by applying the function f to the semantic representations of some of the constituents.

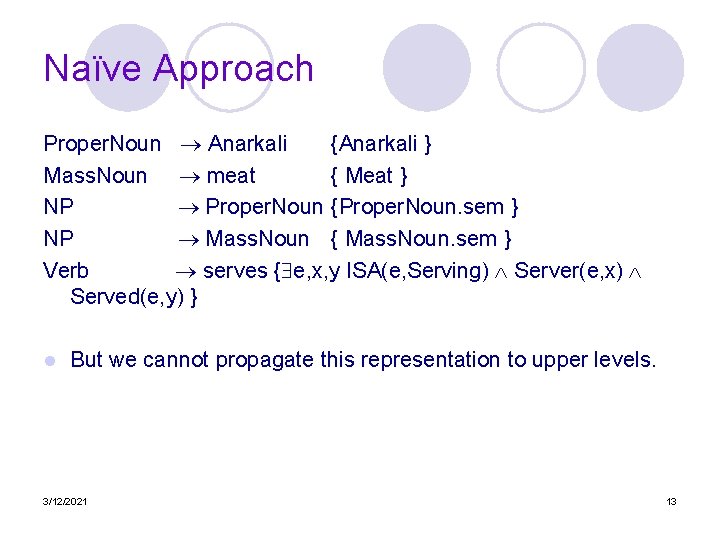

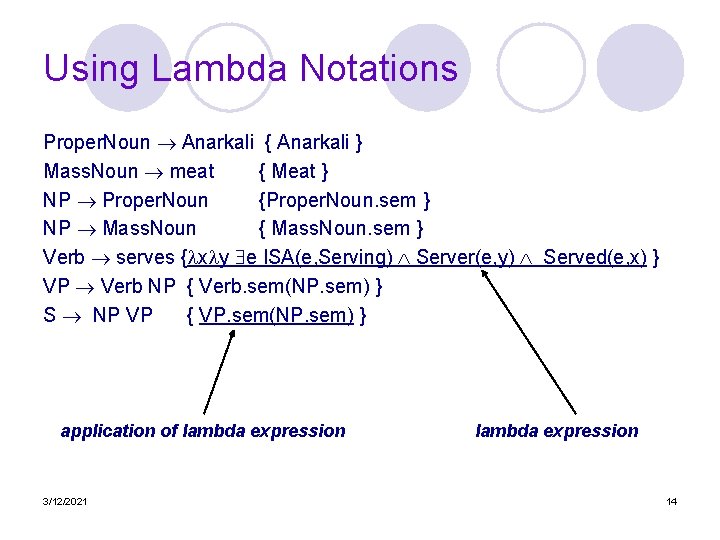

Naïve Approach

- ProperNoun → Anarkali   { Anarkali }
- MassNoun → meat   { Meat }
- NP → ProperNoun   { ProperNoun.sem }
- NP → MassNoun   { MassNoun.sem }
- Verb → serves   { ∃e, x, y ISA(e, Serving) ∧ Server(e, x) ∧ Served(e, y) }
- But we cannot propagate this representation to the upper levels.

Using Lambda Notations

- ProperNoun → Anarkali   { Anarkali }
- MassNoun → meat   { Meat }
- NP → ProperNoun   { ProperNoun.sem }
- NP → MassNoun   { MassNoun.sem }
- Verb → serves   { λx λy ∃e ISA(e, Serving) ∧ Server(e, y) ∧ Served(e, x) }   (lambda expression)
- VP → Verb NP   { Verb.sem(NP.sem) }   (application of lambda expression)
- S → NP VP   { VP.sem(NP.sem) }   (application of lambda expression)
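
The lambda-based attachments map directly onto function application. The following Python sketch mirrors the rules for "Anarkali serves meat"; the variable names and the ASCII rendering of the formula (`exists`, `&`) are illustrative choices, not part of the lecture.

```python
# Lexical attachments: constants are represented as plain strings.
proper_noun_sem = "Anarkali"   # ProperNoun -> Anarkali  { Anarkali }
mass_noun_sem = "Meat"         # MassNoun -> meat        { Meat }


def serves_sem(x):
    """Verb -> serves: lambda x. lambda y. exists e ... (curried)."""
    return lambda y: (
        f"exists e. ISA(e, Serving) & Server(e, {y}) & Served(e, {x})"
    )


# NP rules simply pass the child's semantics upward.
subject_np_sem = proper_noun_sem
object_np_sem = mass_noun_sem

# VP -> Verb NP  { Verb.sem(NP.sem) }
vp_sem = serves_sem(object_np_sem)

# S -> NP VP  { VP.sem(NP.sem) }
s_sem = vp_sem(subject_np_sem)
```

The verb consumes its object first (binding x) and its subject second (binding y), which is why the lambda variables appear in the order λx λy while the Server role is filled by y.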

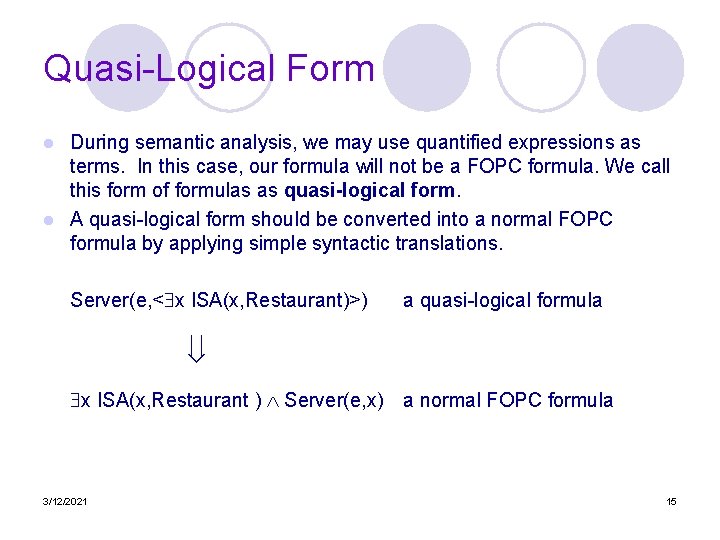

Quasi-Logical Form

- During semantic analysis, we may use quantified expressions as terms. In this case, the formula is not a FOPC formula. We call formulas of this kind quasi-logical forms.
- A quasi-logical form should be converted into a normal FOPC formula by applying simple syntactic translations.
  - Server(e, <∃x ISA(x, Restaurant)>) -- a quasi-logical formula
  - ∃x ISA(x, Restaurant) ∧ Server(e, x) -- a normal FOPC formula
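
The syntactic translation can be sketched as follows. This is a minimal illustration that handles only existentially quantified terms; the class `ExistentialTerm`, the function `to_fopc`, and the ASCII formula syntax are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExistentialTerm:
    """A quantified expression used as a term, e.g. <exists x ISA(x, Restaurant)>."""
    variable: str
    restriction: str


def to_fopc(predicate, args):
    """Pull existential terms out of the argument list of a predicate."""
    prefixes = []
    plain_args = []
    for arg in args:
        if isinstance(arg, ExistentialTerm):
            # The quantifier and its restriction move in front of the predicate;
            # only the bound variable stays in the argument position.
            prefixes.append(f"exists {arg.variable}. {arg.restriction}")
            plain_args.append(arg.variable)
        else:
            plain_args.append(arg)
    body = f"{predicate}({', '.join(plain_args)})"
    return " & ".join(prefixes + [body])


restaurant = ExistentialTerm("x", "ISA(x, Restaurant)")
formula = to_fopc("Server", ["e", restaurant])
```

A universally quantified term would need an implication rather than a conjunction between restriction and body, which this sketch deliberately leaves out.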

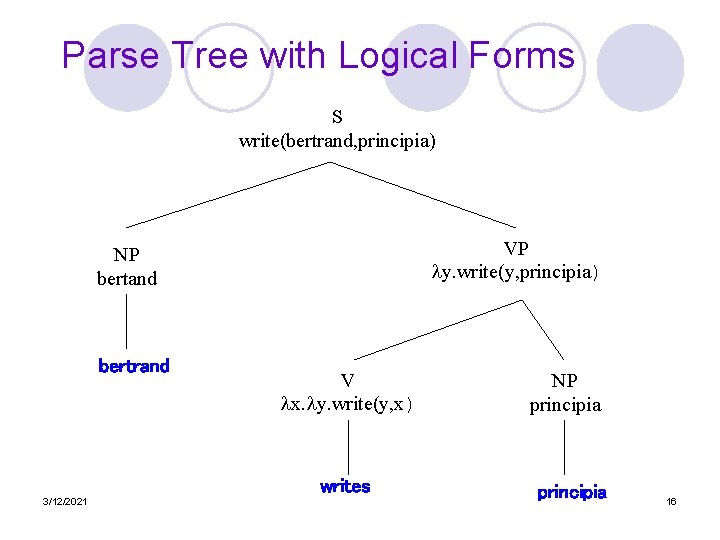

Parse Tree with Logical Forms

- S: write(bertrand, principia)
  - NP: bertrand ("bertrand")
  - VP: λy. write(y, principia)
    - V: λx. λy. write(y, x) ("writes")
    - NP: principia ("principia")

Lecture 24 Lexical Semantics September 28, 2005

Meaning

- Traditionally, meaning in language has been studied from three perspectives:
  - The meaning of a text or discourse
  - The meanings of individual sentences or utterances
  - The meanings of individual words
- We started in the middle; now we'll look at the meanings of individual words.

Word Meaning

- We didn't assume much about the meaning of words when we talked about sentence meanings:
  - Verbs provided a template-like predicate-argument structure
  - Nouns were practically meaningless constants
- There has to be more to it than that:
  - The internal structure of words that determines where they can go and what they can do (syntagmatic)

What's a word?

- Words?: Types, tokens, stems, roots, inflected forms?
- Lexeme -- an entry in a lexicon consisting of a pairing of a form with a single meaning representation
- Lexicon -- a collection of lexemes

Lexical Semantics

- The linguistic study of the systematic, meaning-related structure of lexemes is called lexical semantics.
- A lexeme is an individual entry in the lexicon.
- A lexicon is a meaning structure holding the meaning relations of lexemes.
- A lexeme may have different meanings. Each meaning component of a lexeme is known as one of its senses.
  - Different senses of the lexeme duck: an animal, to lower the head, ...
  - Different senses of the lexeme yüz: face, to swim, to skin, the front of something, hundred, ...

Relations Among Lexemes and Their Senses

- Homonymy
- Polysemy
- Synonymy
- Hypernym

Homonymy

- Homonymy is a relation that holds between words that have the same form but unrelated meanings.
  - Bank -- financial institution, river bank
    - For this, we should create two senses of the lexeme bank.
  - Bat -- (wooden stick-like thing) vs. (flying scary mammal thing)
- The problematic part of understanding homonymy isn't with the forms, it's the meanings.
  - Causes ambiguity.
  - Nothing particularly important would happen to anything else in English if we used a different word for the little flying mammal things.

Polysemy

- Polysemy is the phenomenon of multiple related meanings within the same lexeme.
  - Bank -- financial institution, blood bank
    - These senses are related. Are we going to create a single sense or two different senses?
    - While some banks furnish sperm only to married women, others are less restrictive.
  - Serve
    - Which flights serve breakfast?
    - Does America West serve Philadelphia?
    - Does United serve breakfast and San Jose?
- Most non-rare words have multiple meanings.
- The number of meanings a word has is related to its frequency.
- Verbs tend more to polysemy.
- Distinguishing polysemy from homonymy isn't always easy (or necessary).

Synonymy

- Synonymy is the phenomenon of two different lexemes having the same meaning.
  - Big and large
  - In fact, it is one of the senses of the two lexemes that is the same.
- There aren't any true synonyms.
  - Two lexemes are synonyms if they can be successfully substituted for each other in all situations.
  - What does successfully mean?
    - Preserves the meaning
    - But may not preserve the acceptability based on notions of politeness, slang, ...
- Example -- big and large?
  - That's my big sister / That's my large sister
  - a big plane / a large plane

Hyponymy and Hypernym

- Hyponymy: one lexeme denotes a subclass of the other lexeme.
- The more specific lexeme is a hyponym of the more general lexeme.
- The more general lexeme is a hypernym of the more specific lexeme.
- A hyponymy relation can be asserted between two lexemes when the meanings of the lexemes entail a subset relation.
  - Since dogs are canids:
    - Dog is a hyponym of canid, and
    - Canid is a hypernym of dog.
  - Car is a hyponym of vehicle; vehicle is a hypernym of car.
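
The subset reading of hyponymy makes the relation transitive, which a short Python sketch can show. The taxonomy below is a hypothetical toy (the `canid` to `mammal` link is added only for illustration), and the function names are assumptions.

```python
# Hypothetical toy taxonomy: each lexeme maps to its direct hypernym.
HYPERNYM = {
    "dog": "canid",
    "canid": "mammal",
    "car": "vehicle",
}


def hypernym_chain(lexeme):
    """Return all hypernyms of a lexeme, most specific first."""
    chain = []
    while lexeme in HYPERNYM:
        lexeme = HYPERNYM[lexeme]
        chain.append(lexeme)
    return chain


def is_hyponym(specific, general):
    """True if `specific` denotes a subclass of `general`."""
    return general in hypernym_chain(specific)
```

With this toy data, `is_hyponym("dog", "mammal")` holds through the intermediate lexeme `canid`, while the reverse direction does not.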

Ontology

- The term ontology refers to a set of distinct objects resulting from the analysis of a domain.
- A taxonomy is a particular arrangement of the elements of an ontology into a tree-like class-inclusion structure.
- A lexicon holds different senses of lexemes together with other relations among lexemes.

Lexical Resources

- There are lots of lexical resources available:
  - Word lists
  - On-line dictionaries
  - Corpora
- The most ambitious one is WordNet:
  - A database of lexical relations for English
  - Versions for other languages are under development

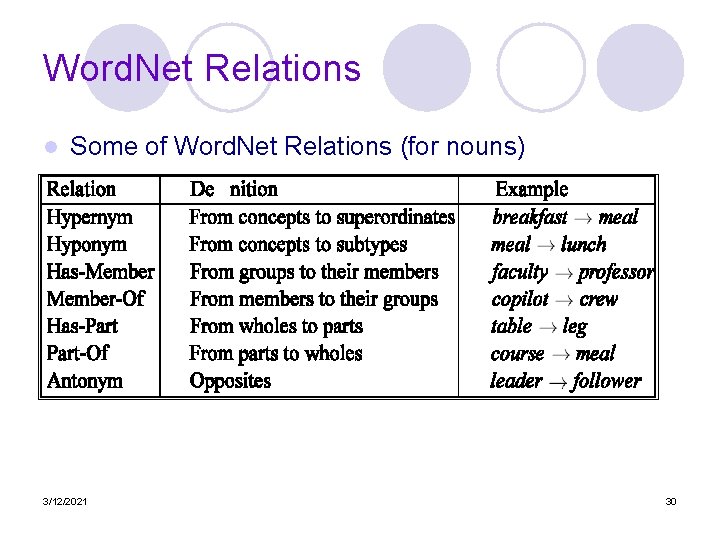

WordNet

- WordNet is a widely used lexical database for English.
- Web page: http://www.cogsci.princeton.edu/~wn/
- It holds:
  - The senses of the lexemes
  - Relations among nouns such as hypernym, hyponym, MemberOf, ...
  - Relations among verbs such as hypernym, ...
- Relations are held for each different sense of a lexeme.

WordNet Relations

- Some WordNet relations (for nouns)

WordNet Hierarchies

- Hyponymy chains for the senses of the lexeme bass

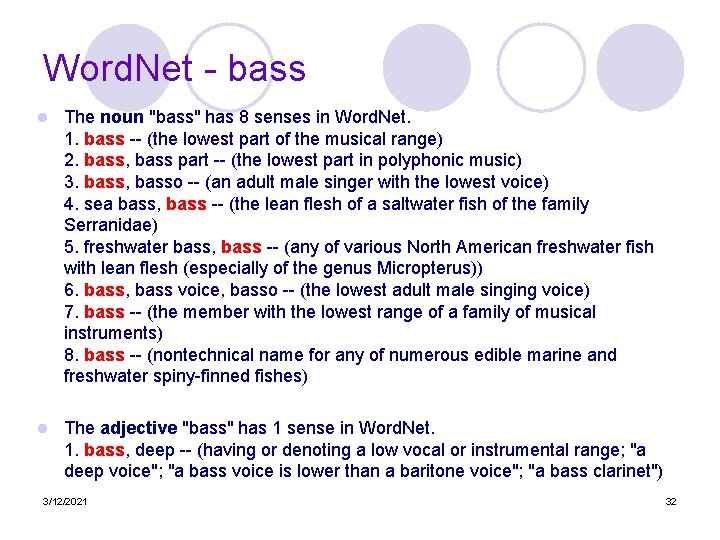

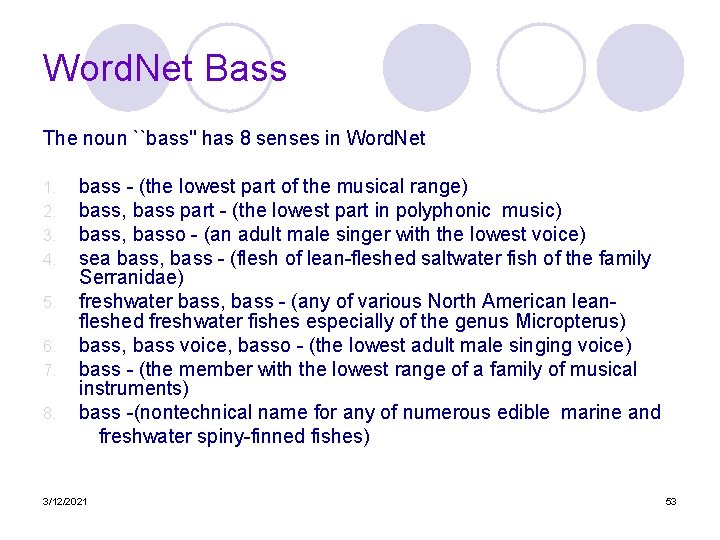

WordNet - bass

- The noun "bass" has 8 senses in WordNet.
  1. bass -- (the lowest part of the musical range)
  2. bass, bass part -- (the lowest part in polyphonic music)
  3. bass, basso -- (an adult male singer with the lowest voice)
  4. sea bass, bass -- (the lean flesh of a saltwater fish of the family Serranidae)
  5. freshwater bass, bass -- (any of various North American freshwater fish with lean flesh (especially of the genus Micropterus))
  6. bass, bass voice, basso -- (the lowest adult male singing voice)
  7. bass -- (the member with the lowest range of a family of musical instruments)
  8. bass -- (nontechnical name for any of numerous edible marine and freshwater spiny-finned fishes)
- The adjective "bass" has 1 sense in WordNet.
  1. bass, deep -- (having or denoting a low vocal or instrumental range; "a deep voice"; "a bass voice is lower than a baritone voice"; "a bass clarinet")

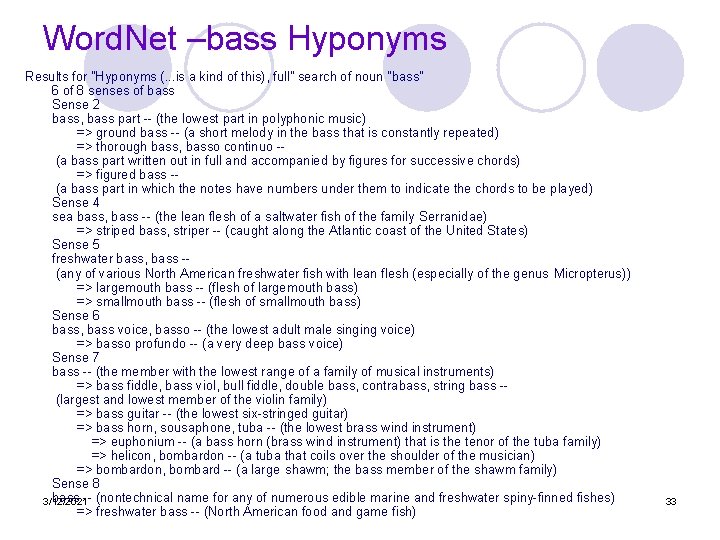

WordNet - bass Hyponyms

Results for "Hyponyms (... is a kind of this), full" search of noun "bass"

6 of 8 senses of bass

- Sense 2: bass, bass part -- (the lowest part in polyphonic music)
  - => ground bass -- (a short melody in the bass that is constantly repeated)
  - => thorough bass, basso continuo -- (a bass part written out in full and accompanied by figures for successive chords)
  - => figured bass -- (a bass part in which the notes have numbers under them to indicate the chords to be played)
- Sense 4: sea bass, bass -- (the lean flesh of a saltwater fish of the family Serranidae)
  - => striped bass, striper -- (caught along the Atlantic coast of the United States)
- Sense 5: freshwater bass, bass -- (any of various North American freshwater fish with lean flesh (especially of the genus Micropterus))
  - => largemouth bass -- (flesh of largemouth bass)
  - => smallmouth bass -- (flesh of smallmouth bass)
- Sense 6: bass, bass voice, basso -- (the lowest adult male singing voice)
  - => basso profundo -- (a very deep bass voice)
- Sense 7: bass -- (the member with the lowest range of a family of musical instruments)
  - => bass fiddle, bass viol, bull fiddle, double bass, contrabass, string bass -- (largest and lowest member of the violin family)
  - => bass guitar -- (the lowest six-stringed guitar)
  - => bass horn, sousaphone, tuba -- (the lowest brass wind instrument)
  - => euphonium -- (a bass horn (brass wind instrument) that is the tenor of the tuba family)
  - => helicon, bombardon -- (a tuba that coils over the shoulder of the musician)
  - => bombardon, bombard -- (a large shawm; the bass member of the shawm family)
- Sense 8: bass -- (nontechnical name for any of numerous edible marine and freshwater spiny-finned fishes)
  - => freshwater bass -- (North American food and game fish)

WordNet - bass Synonyms

Results for "Synonyms, ordered by estimated frequency" search of noun "bass"

8 senses of bass

- Sense 1: bass -- (the lowest part of the musical range)
  - => low pitch, low frequency -- (a pitch that is perceived as below other pitches)
- Sense 2: bass, bass part -- (the lowest part in polyphonic music)
  - => part, voice -- (the melody carried by a particular voice or instrument in polyphonic music; "he tried to sing the tenor part")
- Sense 3: bass, basso -- (an adult male singer with the lowest voice)
  - => singer, vocalist, vocalizer, vocaliser -- (a person who sings)
- Sense 4: sea bass, bass -- (the lean flesh of a saltwater fish of the family Serranidae)
  - => saltwater fish -- (flesh of fish from the sea used as food)
- Sense 5: freshwater bass, bass -- (any of various North American freshwater fish with lean flesh (especially of the genus Micropterus))
  - => freshwater fish -- (flesh of fish from fresh water used as food)
- Sense 6: bass, bass voice, basso -- (the lowest adult male singing voice)
  - => singing voice -- (the musical quality of the voice while singing)
- Sense 7: bass -- (the member with the lowest range of a family of musical instruments)
  - => musical instrument, instrument --

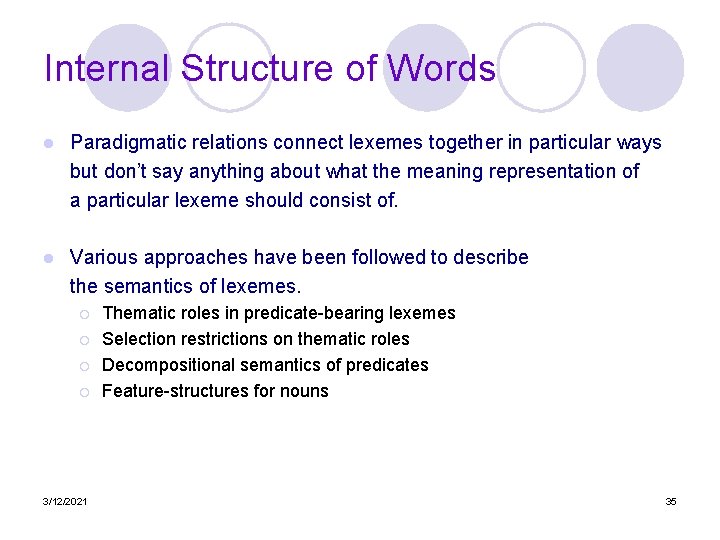

Internal Structure of Words

- Paradigmatic relations connect lexemes together in particular ways but don't say anything about what the meaning representation of a particular lexeme should consist of.
- Various approaches have been followed to describe the semantics of lexemes:
  - Thematic roles in predicate-bearing lexemes
  - Selection restrictions on thematic roles
  - Decompositional semantics of predicates
  - Feature-structures for nouns

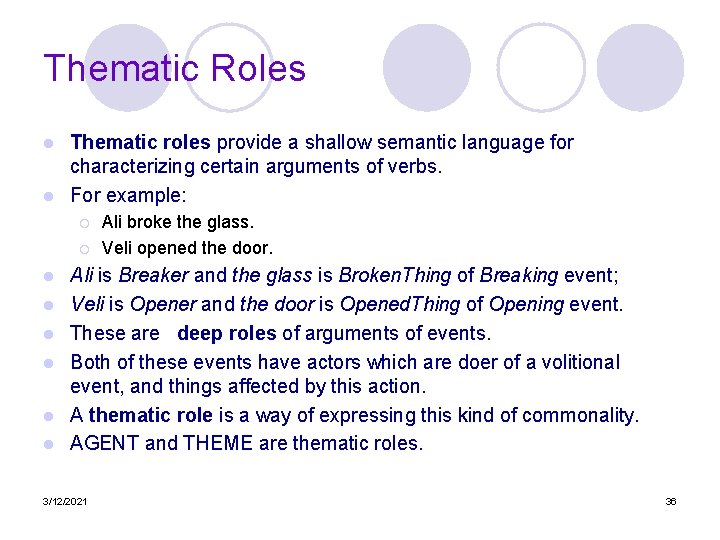

Thematic Roles

- Thematic roles provide a shallow semantic language for characterizing certain arguments of verbs.
- For example:
  - Ali broke the glass.
  - Veli opened the door.
- Ali is the Breaker and the glass is the BrokenThing of the Breaking event; Veli is the Opener and the door is the OpenedThing of the Opening event. These are deep roles of the arguments of events.
- Both of these events have actors, which are the doers of a volitional event, and things affected by the action.
- A thematic role is a way of expressing this kind of commonality.
- AGENT and THEME are thematic roles.

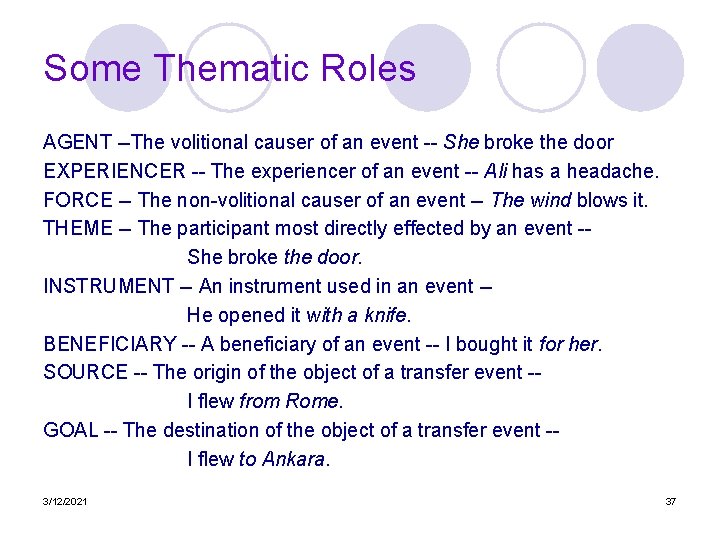

Some Thematic Roles

- AGENT -- the volitional causer of an event -- She broke the door.
- EXPERIENCER -- the experiencer of an event -- Ali has a headache.
- FORCE -- the non-volitional causer of an event -- The wind blows it.
- THEME -- the participant most directly affected by an event -- She broke the door.
- INSTRUMENT -- an instrument used in an event -- He opened it with a knife.
- BENEFICIARY -- a beneficiary of an event -- I bought it for her.
- SOURCE -- the origin of the object of a transfer event -- I flew from Rome.
- GOAL -- the destination of the object of a transfer event -- I flew to Ankara.

Thematic Roles (cont.)

- Takes some of the work away from the verbs.
- It's not the case that every verb is unique and has to completely specify how all of its arguments uniquely behave.
- Provides a mechanism to organize semantic processing.
- It permits us to distinguish near-surface-level semantics from deeper semantics.

Linking

- Thematic roles, syntactic categories, and their positions in larger syntactic structures are all intertwined in complicated ways.
- For example...
  - AGENTS are often subjects.
  - In a VP -> V NP NP rule, the first NP is often a GOAL and the second a THEME.

Deeper Semantics

- He melted her reserve with a husky-voiced paean to her eyes.
- If we label the constituents He and her reserve as the Melter and Melted, then those labels lose any meaning they might have had.
- If we make them Agent and Theme, then we don't have the same problems.

Selectional Restrictions

- A selectional restriction augments thematic roles by allowing lexemes to place certain semantic restrictions on the lexemes and phrases that can accompany them in a sentence.
  - I want to eat someplace near Bilkent.
  - Now we can say that eat is a predicate that has an AGENT and a THEME,
  - and that the AGENT must be capable of eating and the THEME must be capable of being eaten.
- Each sense of a verb can be associated with selectional restrictions.
  - THY serves New York. -- direct object (theme) is a place.
  - THY serves breakfast. -- direct object (theme) is a meal.
- We may use these selectional restrictions to disambiguate a sentence.

As Logical Statements

- For eat...
  - Eating(e) ∧ Agent(e, x) ∧ Theme(e, y) ∧ Isa(y, Food)
  - (adding in all the right quantifiers and lambdas)

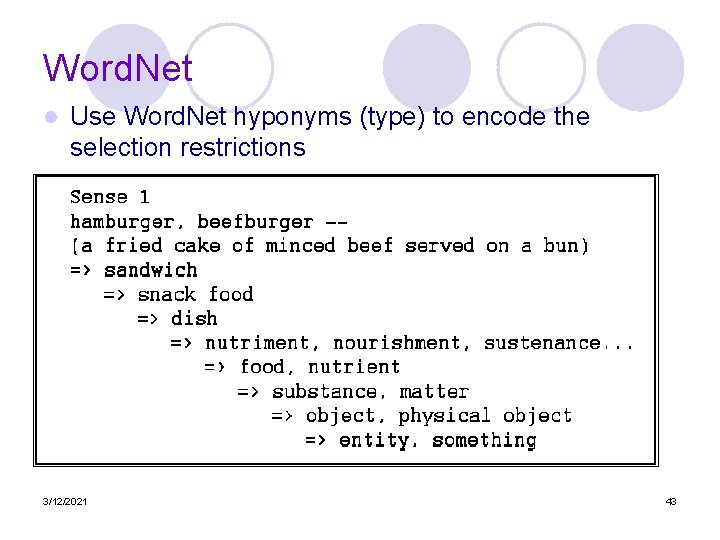

WordNet

- Use WordNet hyponyms (type) to encode the selection restrictions.

Specificity of Restrictions

- What can you say about the THEME in each with respect to the verb?
- Some will be high up in the WordNet hierarchy, others not so high...
- PROBLEMS
  - Unfortunately, verbs are polysemous and language is creative...
    - ... ate glass on an empty stomach accompanied only by water and tea
    - you can't eat gold for lunch if you're hungry
    - ... get it to try to eat Afghanistan

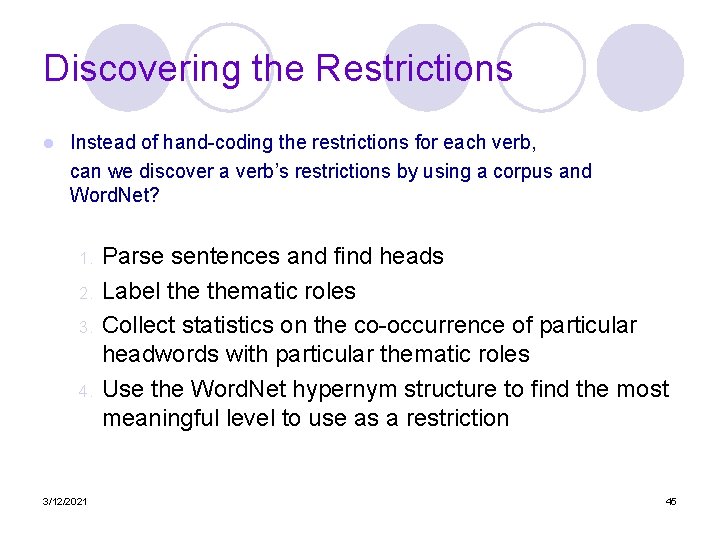

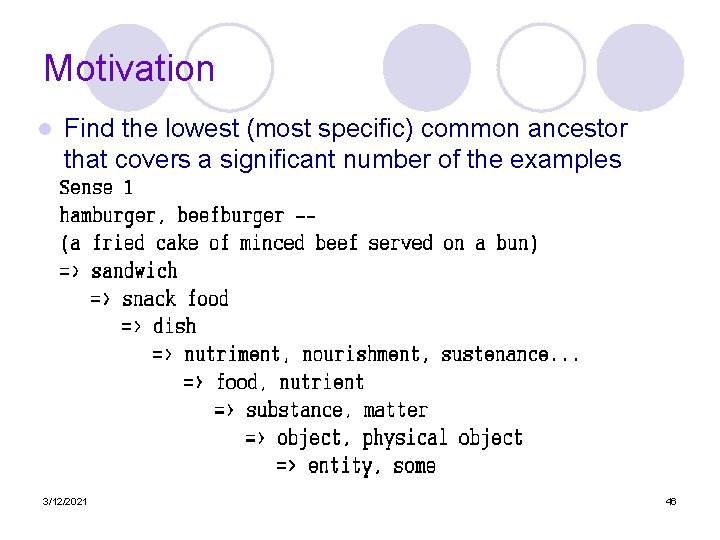

Discovering the Restrictions

- Instead of hand-coding the restrictions for each verb, can we discover a verb's restrictions by using a corpus and WordNet?
  1. Parse sentences and find heads
  2. Label thematic roles
  3. Collect statistics on the co-occurrence of particular headwords with particular thematic roles
  4. Use the WordNet hypernym structure to find the most meaningful level to use as a restriction

Motivation

- Find the lowest (most specific) common ancestor that covers a significant number of the examples.
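
The search for the most specific ancestor that covers most of the observed headwords can be sketched as follows. Everything here is a toy assumption: the taxonomy stands in for the WordNet hypernym structure, the counts stand in for corpus statistics on the THEME of a verb such as eat, and the 0.8 coverage threshold is an arbitrary reading of "a significant number".

```python
from collections import Counter

# Hypothetical toy taxonomy: child -> direct hypernym.
HYPERNYM = {
    "hamburger": "food",
    "soup": "food",
    "food": "entity",
    "glass": "artifact",
    "artifact": "entity",
}


def ancestors(lexeme):
    """Return the lexeme followed by its hypernyms, most specific first."""
    chain = [lexeme]
    while chain[-1] in HYPERNYM:
        chain.append(HYPERNYM[chain[-1]])
    return chain


def best_restriction(headword_counts, min_coverage=0.8):
    """Pick the deepest node whose subtree covers enough of the examples."""
    total = sum(headword_counts.values())
    covered = Counter()
    for head, count in headword_counts.items():
        for node in ancestors(head):
            covered[node] += count
    candidates = [n for n, c in covered.items() if c / total >= min_coverage]
    # Depth is the distance to the root; deeper means more specific.
    return max(candidates, key=lambda n: len(ancestors(n)))


# Hypothetical THEME headword counts observed for one verb.
theme_counts = {"hamburger": 6, "soup": 3, "glass": 1}
restriction = best_restriction(theme_counts)
```

With these counts, `food` covers 9 of 10 examples and is chosen over the more general `entity`; raising the threshold to 0.95 would force the restriction up to `entity` so that the creative "ate glass" example is covered too.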

Word-Sense Disambiguation

- Word sense disambiguation refers to the process of selecting the right sense for a word from among the senses that the word is known to have.
- Semantic selection restrictions can be used to disambiguate:
  - Ambiguous arguments to unambiguous predicates
  - Ambiguous predicates with unambiguous arguments
  - Ambiguity all around

Word-Sense Disambiguation

- We can use selectional restrictions for disambiguation.
  - He cooked simple dishes.
  - He broke the dishes.
- But sometimes, selectional restrictions will not be enough to disambiguate.
  - What kind of dishes do you recommend? -- we cannot know which sense is used.
- There can be two lexemes (or more) with multiple senses.
  - They serve vegetarian dishes.
- Selectional restrictions may block the finding of meaning.
  - If you want to kill Turkey, eat its banks.
  - Kafayı yedim. (Turkish idiom, literally "I ate the head", meaning "I went crazy".)
  - These situations leave the system with no possible meanings, and they can indicate a metaphor.

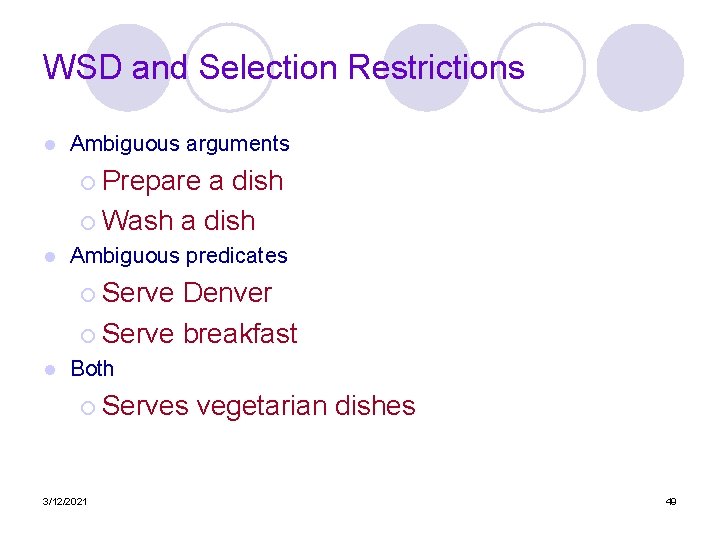

WSD and Selection Restrictions

- Ambiguous arguments
  - Prepare a dish
  - Wash a dish
- Ambiguous predicates
  - Serve Denver
  - Serve breakfast
- Both
  - Serves vegetarian dishes
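
The "ambiguous predicate" case can be sketched by filtering verb senses on the type of their THEME. The sense labels, the type hierarchy, and the function names below are hypothetical; a real system would read the types from WordNet hypernym chains.

```python
# Hypothetical toy type hierarchy: lexeme -> direct hypernym.
HYPERNYM = {
    "Denver": "city",
    "city": "place",
    "breakfast": "meal",
}

# Hypothetical sense inventory for "serve": sense -> required type of the THEME.
SERVE_SENSES = {
    "serve-destination": "place",
    "serve-meal": "meal",
}


def isa(lexeme, category):
    """True if `category` is the lexeme itself or one of its hypernyms."""
    while lexeme is not None:
        if lexeme == category:
            return True
        lexeme = HYPERNYM.get(lexeme)
    return False


def compatible_senses(theme):
    """Keep only the verb senses whose selectional restriction the theme meets."""
    return [
        sense
        for sense, required_type in SERVE_SENSES.items()
        if isa(theme, required_type)
    ]
```

Here `compatible_senses("Denver")` keeps only the destination sense and `compatible_senses("breakfast")` only the meal sense. A theme that satisfies no restriction yields an empty list, which corresponds to the situation where the system is left with no possible meaning.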

WSD and Selection Restrictions

- This approach is complementary to the compositional analysis approach.
  - You need a parse tree and some form of predicate-argument analysis derived from
    - the tree and its attachments
    - all the word senses coming up from the lexemes at the leaves of the tree
  - Ill-formed analyses are eliminated by noting any selection restriction violations.

Problems

- As we saw last time, selection restrictions are violated all the time.
- This doesn't mean that the sentences are ill-formed or preferred less than others.
- This approach needs some way of categorizing and dealing with the various ways that restrictions can be violated.

WSD Tags

- What's a tag?
  - A dictionary sense?
- For example, for WordNet an instance of "bass" in a text has 8 possible tags or labels (bass1 through bass8).

WordNet Bass

The noun "bass" has 8 senses in WordNet:

1. bass -- (the lowest part of the musical range)
2. bass, bass part -- (the lowest part in polyphonic music)
3. bass, basso -- (an adult male singer with the lowest voice)
4. sea bass, bass -- (flesh of lean-fleshed saltwater fish of the family Serranidae)
5. freshwater bass, bass -- (any of various North American lean-fleshed freshwater fishes especially of the genus Micropterus)
6. bass, bass voice, basso -- (the lowest adult male singing voice)
7. bass -- (the member with the lowest range of a family of musical instruments)
8. bass -- (nontechnical name for any of numerous edible marine and freshwater spiny-finned fishes)

Representations

- Most supervised ML approaches require a very simple representation for the input training data.
  - Vectors of sets of feature/value pairs
    - I.e., files of comma-separated values
- So our first task is to extract training data from a corpus with respect to a particular instance of a target word.
  - This typically consists of a characterization of the window of text surrounding the target.

Representations

- This is where ML and NLP intersect.
  - If you stick to trivial surface features that are easy to extract from a text, then most of the work is in the ML system.
  - If you decide to use features that require more analysis (say, parse trees), then the ML part may be doing less work (relatively) if these features are truly informative.

Surface Representations

- Collocational and co-occurrence information
  - Collocational
    - Encode features about the words that appear in specific positions to the right and left of the target word
      - Often limited to the words themselves as well as their part of speech
  - Co-occurrence
    - Features characterizing the words that occur anywhere in the window regardless of position
      - Typically limited to frequency counts

Collocational

- Position-specific information about the words in the window
- guitar and bass player stand
  - [guitar, NN, and, CJC, player, NN, stand, VVB]
  - In other words, a vector consisting of
  - [position n word, position n part-of-speech, ...]
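
A collocational feature vector like the one above can be extracted with a few lines of Python. This is a sketch: the function name, the window size of 2, the `None` padding at sentence edges, and the tag assumed for the target word itself are choices made for the example.

```python
def collocational_features(tagged_tokens, target_index, window=2):
    """Return [word, tag, word, tag, ...] for positions around the target."""
    features = []
    for offset in range(-window, window + 1):
        if offset == 0:
            continue  # the target word itself is not a feature
        position = target_index + offset
        if 0 <= position < len(tagged_tokens):
            word, tag = tagged_tokens[position]
        else:
            word, tag = None, None  # padding beyond the sentence boundary
        features.extend([word, tag])
    return features


# "guitar and bass player stand" with the tags used on the slide.
sentence = [
    ("guitar", "NN"),
    ("and", "CJC"),
    ("bass", "NN"),  # tag of the target is an assumption; it is not used
    ("player", "NN"),
    ("stand", "VVB"),
]
bass_features = collocational_features(sentence, target_index=2)
```

For the target "bass" this yields the same eight-element vector as on the slide, with each word immediately followed by its part-of-speech tag.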

Co-occurrence

- Information about the words that occur within the window.
- First derive a set of terms to place in the vector.
- Then note how often each of those terms occurs in a given window.
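
The two steps above can be sketched as follows. The vocabulary is a hypothetical set of terms chosen for the target "bass"; in practice it would be derived from a corpus.

```python
from collections import Counter


def cooccurrence_vector(window_words, vocabulary):
    """Count how often each vocabulary term occurs in the window."""
    counts = Counter(window_words)
    # Counter returns 0 for terms that never occur in the window.
    return [counts[term] for term in vocabulary]


# Hypothetical vocabulary of informative terms for the target "bass".
vocabulary = ["fishing", "guitar", "player", "rod", "band"]

# Window around "bass" in "guitar and bass player stand" (target removed).
window = ["guitar", "and", "player", "stand"]
vector = cooccurrence_vector(window, vocabulary)
```

Unlike the collocational vector, this representation ignores position: swapping the words in the window leaves the counts unchanged.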

Classifiers

- Once we cast the WSD problem as a classification problem, all sorts of techniques are possible:
  - Naïve Bayes (the right thing to try first)
  - Decision lists
  - Decision trees
  - Neural nets
  - Support vector machines
  - Nearest neighbor methods...

Classifiers

- The choice of technique, in part, depends on the set of features that have been used.
  - Some techniques work better/worse with features with numerical values.
  - Some techniques work better/worse with features that have large numbers of possible values.
    - For example, the feature "the word to the left" has a fairly large number of possible values.

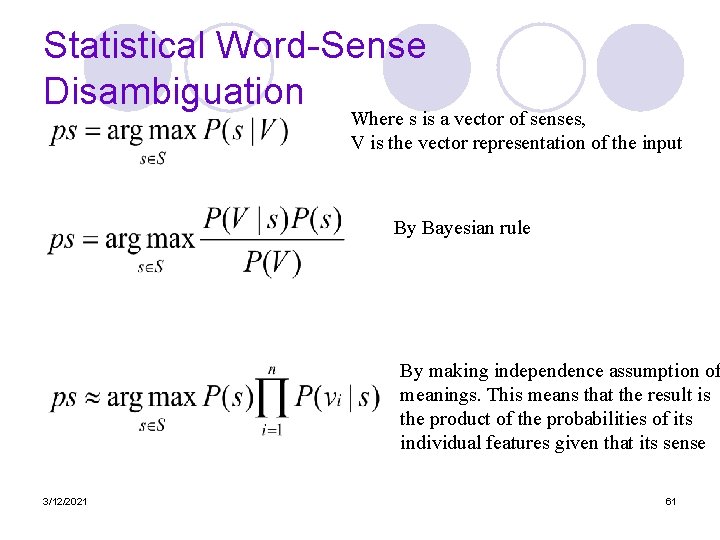

Statistical Word-Sense Disambiguation

- Choose the most probable sense given the input: ŝ = argmax_{s ∈ S} P(s | V), where S is the set of senses and V is the vector representation of the input.
- By Bayes' rule: ŝ = argmax_{s ∈ S} P(V | s) P(s) / P(V)
- By making an independence assumption about the features: P(V | s) ≈ ∏_j P(v_j | s)
- This means that P(V | s) is the product of the probabilities of the individual features given the sense.
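
A minimal Naïve Bayes sense classifier following this decomposition might look as follows. It is a sketch under assumptions: the training examples and sense labels are invented, log probabilities are used to avoid underflow, and add-one smoothing (not mentioned in the slides) is applied so that unseen features do not zero out a sense.

```python
import math
from collections import Counter, defaultdict


def train(examples):
    """examples: list of (sense, feature_list) pairs."""
    sense_counts = Counter()
    feature_counts = defaultdict(Counter)
    vocabulary = set()
    for sense, features in examples:
        sense_counts[sense] += 1
        feature_counts[sense].update(features)
        vocabulary.update(features)
    return sense_counts, feature_counts, vocabulary


def classify(features, model):
    """Return argmax over senses of log P(s) + sum_j log P(v_j | s)."""
    sense_counts, feature_counts, vocabulary = model
    total = sum(sense_counts.values())
    best_sense, best_score = None, -math.inf
    for sense, count in sense_counts.items():
        score = math.log(count / total)  # log P(s)
        denominator = sum(feature_counts[sense].values()) + len(vocabulary)
        for feature in features:
            # Add-one smoothed estimate of P(v_j | s).
            score += math.log((feature_counts[sense][feature] + 1) / denominator)
        if score > best_score:
            best_sense, best_score = sense, score
    return best_sense


# Hypothetical sense-tagged training windows for the target "bass".
training = [
    ("bass_fish", ["fishing", "rod", "river"]),
    ("bass_fish", ["caught", "river", "fish"]),
    ("bass_music", ["guitar", "player", "band"]),
    ("bass_music", ["guitar", "play", "stand"]),
]
model = train(training)
```

P(V) is dropped because it is the same for every sense and so does not affect the argmax. With this toy data, `classify(["guitar", "player"], model)` selects the music sense and `classify(["river", "fish"], model)` the fish sense.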

Problems

- Given these general ML approaches, how many classifiers do I need to perform WSD robustly?
  - One for each ambiguous word in the language
- How do you decide what set of tags/labels/senses to use for a given word?
  - Depends on the application
