
Knowledge-Based Kernel Approximation

Olvi Mangasarian, Jude Shavlik & Edward Wild

University of Wisconsin-Madison & University of California at San Diego

Basic Idea
- Use prior knowledge to improve function approximation
- Standard approach: fit a function to given data points
- Constrained approach: satisfy constraints at given points
- Proposed approach: satisfy constraints over regions
- The proposed approach has not been employed in the extensive approximation literature!

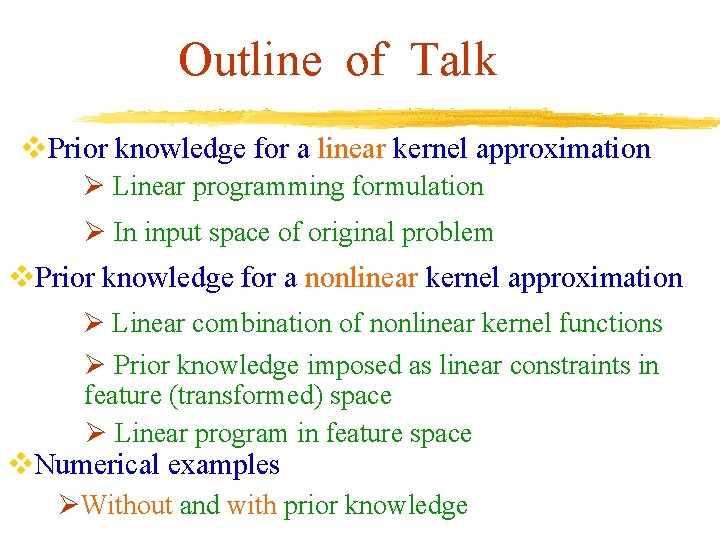

Outline of Talk
- Prior knowledge for a linear kernel approximation
  - Linear programming formulation
  - In the input space of the original problem
- Prior knowledge for a nonlinear kernel approximation
  - Linear combination of nonlinear kernel functions
  - Prior knowledge imposed as linear constraints in feature (transformed) space
  - Linear program in feature space
- Numerical examples
  - Without and with prior knowledge

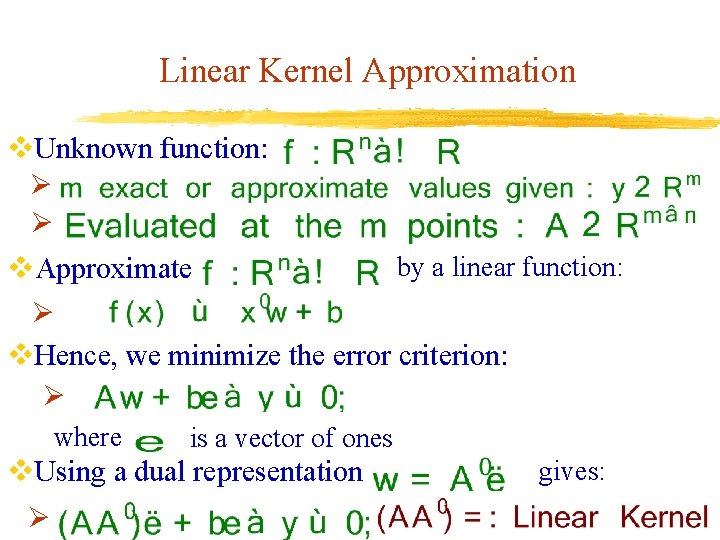

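Linear Kernel Approximation
- Unknown function:
  - $f: R^n \to R$
  - Values $y_i$ given at the $m$ points $A_i'$, where $A_i$ is the $i$-th row of $A \in R^{m \times n}$
- Approximate by a linear function:
  - $f(x) \approx x'w + b$, i.e. $Aw + be \approx y$
- Hence, we minimize the error criterion:
  - $\|Aw + be - y\|$, where $e$ is a vector of ones
- Using a dual representation:
  - $w = A'\alpha$ gives $AA'\alpha + be \approx y$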

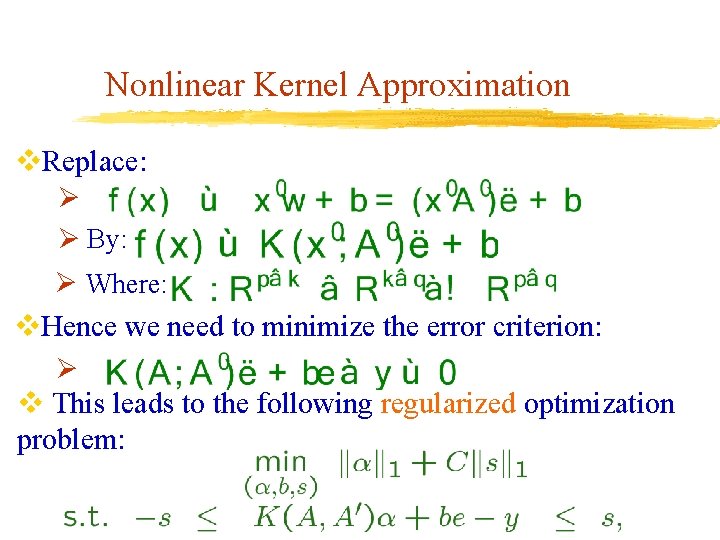
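The dual representation can be checked numerically. The sketch below, in plain Python with made-up values for $A$, $\alpha$ and $b$ (hypothetical, not data from the talk), computes $w = A'\alpha$ and confirms that $Aw + be$ and $AA'\alpha + be$ give the same fitted values.

```python
# Sketch: for f(x) = x'w + b with the dual representation w = A'alpha,
# the fitted values Aw + be coincide with AA'alpha + be.
# A, alpha and b are made-up illustrative values, not data from the talk.

def gram(A):
    """AA': entry (i, j) is the inner product of rows A_i and A_j."""
    return [[sum(p * q for p, q in zip(Ai, Aj)) for Aj in A] for Ai in A]

def primal_fit(A, w, b):
    """Aw + be: values of x'w + b at each row x' of A."""
    return [sum(p * q for p, q in zip(Ai, w)) + b for Ai in A]

def dual_fit(A, alpha, b):
    """AA'alpha + be: the same values expressed through alpha."""
    return [sum(g * a for g, a in zip(row, alpha)) + b for row in gram(A)]

A = [[1.0, 2.0], [0.0, 1.0], [3.0, -1.0]]   # m = 3 points in R^2, one per row
alpha = [0.5, -1.0, 0.25]
b = 0.1
# w = A'alpha: a weighted sum of the data points
w = [sum(A[i][j] * alpha[i] for i in range(len(A))) for j in range(len(A[0]))]

print(primal_fit(A, w, b))
print(dual_fit(A, alpha, b))
```

Both lines print the same values up to floating-point rounding. Only inner products between data points enter `dual_fit`, which is what allows $AA'$ to be swapped for a kernel matrix $K(A, A')$ in the nonlinear case.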

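Nonlinear Kernel Approximation
- Replace:
  - $f(x) \approx x'A'\alpha + b$
  - $AA'\alpha + be \approx y$
- By:
  - $f(x) \approx K(x', A')\alpha + b$ and $K(A, A')\alpha + be \approx y$
- Where:
  - $K(A, B)$ is a kernel mapping $A \in R^{m \times n}$ and $B \in R^{n \times k}$ into $R^{m \times k}$
- Hence we need to minimize the error criterion:
  - $\|K(A, A')\alpha + be - y\|$
- This leads to the following regularized optimization problem:
  - $\min_{\alpha, b}\; \|\alpha\|_1 + C\,\|K(A, A')\alpha + be - y\|_1$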

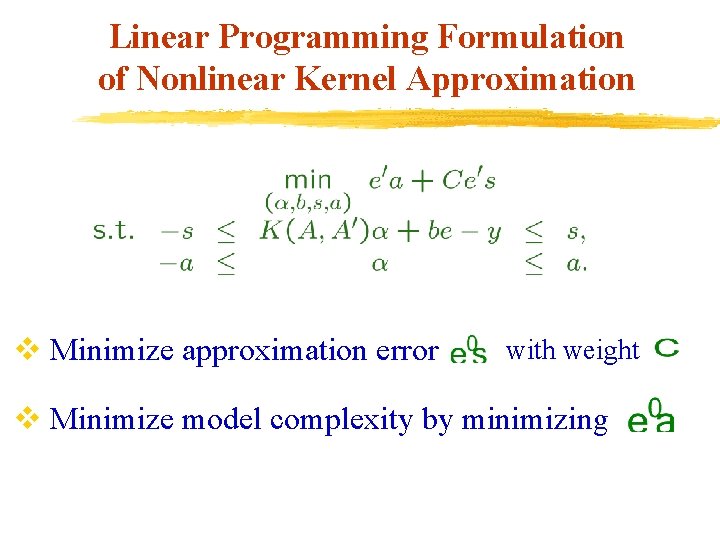
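A minimal sketch of the kernel model and its regularized objective, assuming the 1-norm in both terms (the norm choice is our assumption; it is what lets the problem be written as a linear program). The function names are hypothetical. With the linear kernel $u'v$ the model reduces to the linear case, which the toy data below use.

```python
# Sketch of f(x) = K(x', A')alpha + b and of the regularized objective
# ||alpha||_1 + C ||K(A, A')alpha + be - y||_1 (1-norm assumed in both terms).
from typing import Callable, List

Vector = List[float]
Kernel = Callable[[Vector, Vector], float]

def linear_kernel(u: Vector, v: Vector) -> float:
    """u'v: with this kernel K(A, A') = AA' and the linear case is recovered."""
    return sum(p * q for p, q in zip(u, v))

def kernel_matrix(k: Kernel, A: List[Vector], B: List[Vector]) -> List[Vector]:
    """K(A, B') with entry (i, j) = k(A_i, B_j); points are stored as rows."""
    return [[k(Ai, Bj) for Bj in B] for Ai in A]

def predict(k: Kernel, A: List[Vector], alpha: Vector, b: float, x: Vector) -> float:
    """f(x) = K(x', A')alpha + b: a weighted sum of kernel functions plus a bias."""
    return sum(k(x, Aj) * aj for Aj, aj in zip(A, alpha)) + b

def regularized_objective(k: Kernel, A: List[Vector], y: Vector,
                          alpha: Vector, b: float, C: float) -> float:
    """||alpha||_1 + C * ||K(A, A')alpha + be - y||_1."""
    K = kernel_matrix(k, A, A)
    residual = [sum(kij * aj for kij, aj in zip(Ki, alpha)) + b - yi
                for Ki, yi in zip(K, y)]
    return sum(abs(a) for a in alpha) + C * sum(abs(r) for r in residual)

A = [[0.0], [1.0], [2.0]]     # toy points sampled from f(x) = x
y = [0.0, 1.0, 2.0]
alpha = [0.0, 0.2, 0.4]
print(predict(linear_kernel, A, alpha, 0.0, [3.0]))
print(regularized_objective(linear_kernel, A, y, alpha, 0.0, C=100.0))
```

Here $\alpha$ fits the toy data exactly, so the objective reduces to $\|\alpha\|_1 = 0.6$ up to rounding; swapping `linear_kernel` for a nonlinear kernel changes nothing else in the code.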

Linear Programming Formulation of Nonlinear Kernel Approximation
- Minimize approximation error with weight $C$
- Minimize model complexity by minimizing $\|\alpha\|_1$

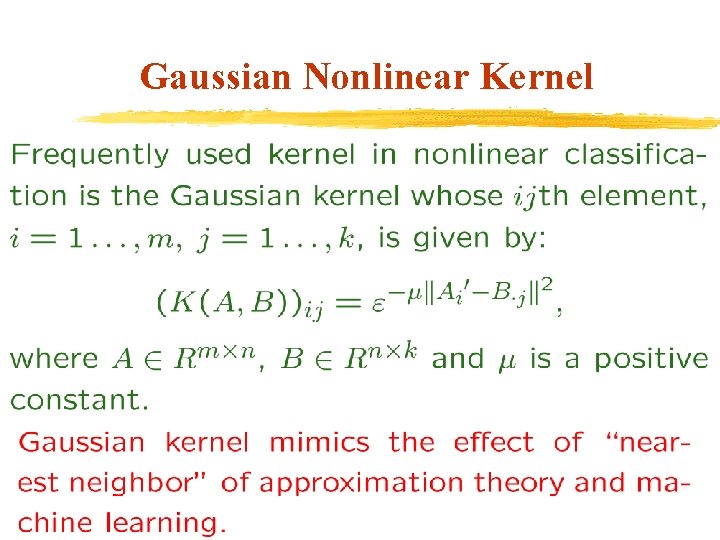
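Why a linear program solves the 1-norm regularized problem: for fixed $(\alpha, b)$, the constraints $-s \le K(A,A')\alpha + be - y \le s$ and $-a \le \alpha \le a$ are tightest at $s = |K(A,A')\alpha + be - y|$ and $a = |\alpha|$, where $e'a + Ce's$ equals $\|\alpha\|_1 + C\|K(A,A')\alpha + be - y\|_1$. The sketch below assumes this slack form of the LP (the names `s`, `a` and the toy kernel matrix are illustrative) and checks the identity; it does not solve the LP.

```python
# Sketch: for fixed (alpha, b), the tightest slacks of the LP
#   min e'a + C e's  s.t.  -s <= K alpha + b e - y <= s,  -a <= alpha <= a
# are s = |K alpha + b e - y| and a = |alpha|, so the LP objective equals
# ||alpha||_1 + C ||K alpha + b e - y||_1. K below is a made-up symmetric kernel matrix.

def residuals(K, y, alpha, b):
    """Entries of K alpha + b e - y."""
    return [sum(kij * aj for kij, aj in zip(Ki, alpha)) + b - yi for Ki, yi in zip(K, y)]

def tightest_slacks(K, y, alpha, b):
    """Smallest feasible slacks for fixed (alpha, b)."""
    s = [abs(r) for r in residuals(K, y, alpha, b)]
    a = [abs(al) for al in alpha]
    return s, a

def lp_constraints_hold(K, y, alpha, b, s, a, tol=1e-12):
    """Check -s <= K alpha + b e - y <= s and -a <= alpha <= a."""
    data_ok = all(-si - tol <= r <= si + tol for r, si in zip(residuals(K, y, alpha, b), s))
    alpha_ok = all(-ai - tol <= al <= ai + tol for al, ai in zip(alpha, a))
    return data_ok and alpha_ok

def lp_objective(s, a, C):
    """e'a + C e's."""
    return sum(a) + C * sum(s)

K = [[1.0, 0.5], [0.5, 1.0]]
y = [1.0, 0.0]
alpha, b, C = [1.0, -0.5], 0.2, 10.0
s, a = tightest_slacks(K, y, alpha, b)
print(lp_constraints_hold(K, y, alpha, b, s, a), lp_objective(s, a, C))
```

Shrinking any slack below these values violates a constraint, so minimizing $e'a + Ce's$ drives the slacks to them; this is why the LP and the 1-norm regularized problem share the same optimal $(\alpha, b)$.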

Gaussian Nonlinear Kernel

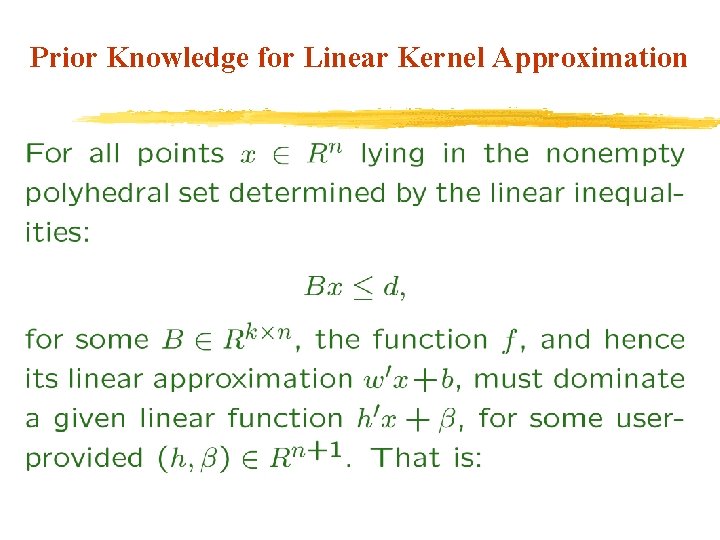
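A sketch of the Gaussian kernel in its common form $K(A, B')_{ij} = \exp(-\mu\|A_i' - B_j'\|^2)$ for points stored as rows of $A$ and $B$, assuming a width parameter $\mu > 0$ (the symbol `mu` and the sample points are our choices, not taken from the slide).

```python
import math

def gaussian_kernel(A, B, mu):
    """K(A, B')_{ij} = exp(-mu * ||A_i - B_j||^2); points are rows of A and B, mu > 0."""
    return [[math.exp(-mu * sum((p - q) ** 2 for p, q in zip(Ai, Bj))) for Bj in B]
            for Ai in A]

A = [[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]]   # illustrative points in R^2
K = gaussian_kernel(A, A, mu=0.5)
for row in K:
    print(row)
```

Each entry lies in $(0, 1]$ and equals 1 on the diagonal. A larger $\mu$ makes the kernel decay faster with distance, so each term of $K(x', A')\alpha$ becomes more local.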

Prior Knowledge for Linear Kernel Approximation

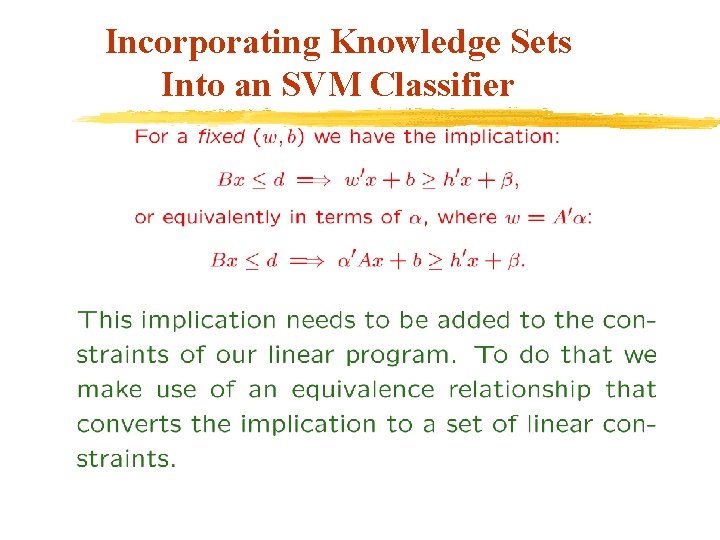
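For concreteness, a sketch of the form such knowledge can take, assuming a polyhedral region $\{x \mid Bx \le d\}$ and a linear lower bound $h'x + \beta$ on the linear approximation $f(x) = x'w + b$ (the symbols $B$, $d$, $h$, $\beta$ are assumed notation):

```latex
Bx \le d \;\Longrightarrow\; x'w + b \ge h'x + \beta,
\qquad B \in R^{k \times n},\; d \in R^{k},\; h \in R^{n},\; \beta \in R
```

The implication has to hold at every point of the region, not only at data points, which is the distinction the Basic Idea slide draws between constraints at given points and constraints over regions.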

Incorporating Knowledge Sets Into an SVM Classifier

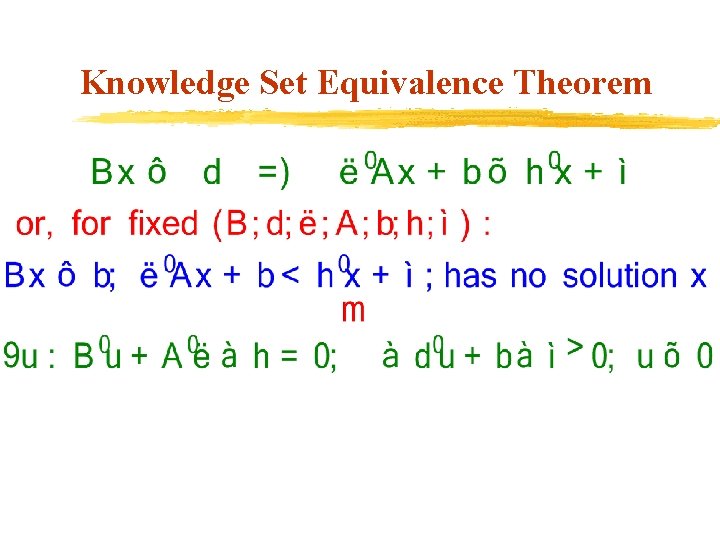

Knowledge Set Equivalence Theorem

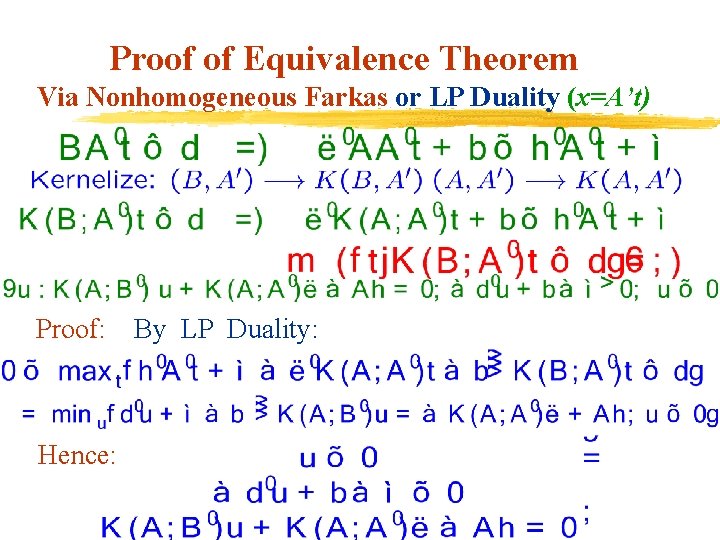
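A sketch of how the equivalence can be used, assuming the input-space form of the theorem: when $\{x \mid Bx \le d\}$ is nonempty, $Bx \le d \Rightarrow x'w + b \ge h'x + \beta$ holds exactly when some $u \ge 0$ satisfies $B'u + w - h = 0$ and $-d'u + b - \beta \ge 0$. The helper names are hypothetical, and the second function is only a sampled sanity check, not a proof.

```python
# Sketch: verify a certificate u for the input-space knowledge implication
#   Bx <= d  ==>  x'w + b >= h'x + beta
# via u >= 0, B'u + w - h = 0, -d'u + b - beta >= 0 (assumed theorem form).
# Function names and the 1-D example are hypothetical.

def certifies_knowledge(B, d, w, b, h, beta, u, tol=1e-9):
    """True if u is a nonnegative certificate for the implication (within tol)."""
    if any(ui < -tol for ui in u):
        return False
    n = len(w)
    Btu = [sum(B[i][j] * u[i] for i in range(len(B))) for j in range(n)]   # B'u
    stationary = all(abs(Btu[j] + w[j] - h[j]) <= tol for j in range(n))
    bound_ok = -sum(di * ui for di, ui in zip(d, u)) + b - beta >= -tol
    return stationary and bound_ok

def implication_holds_on(points, B, d, w, b, h, beta, tol=1e-9):
    """Sampled sanity check only: test the implication at the given points."""
    for x in points:
        in_region = all(sum(bij * xj for bij, xj in zip(Bi, x)) <= di + tol
                        for Bi, di in zip(B, d))
        if in_region:
            lhs = sum(xj * wj for xj, wj in zip(x, w)) + b
            rhs = sum(hj * xj for hj, xj in zip(h, x)) + beta
            if lhs < rhs - tol:
                return False
    return True

B = [[1.0], [-1.0]]          # region 0 <= x <= 1 written as Bx <= d
d = [1.0, 0.0]
w, b, h = [2.0], 0.0, [1.0]  # f(x) = 2x, lower bound h'x + beta = x + beta
u = [0.0, 1.0]
grid = [[i / 10] for i in range(-5, 16)]
print(certifies_knowledge(B, d, w, b, h, 0.0, u), implication_holds_on(grid, B, d, w, b, h, 0.0))  # True True
print(certifies_knowledge(B, d, w, b, h, 0.5, u), implication_holds_on(grid, B, d, w, b, h, 0.5))  # False False
```

With $\beta = 0$ the vector $u = (0, 1)$ certifies $2x \ge x$ on $0 \le x \le 1$. With $\beta = 0.5$ the implication fails at $x = 0$, and indeed no certificate exists, since the two conditions force $-u_1 - 0.5 \ge 0$ with $u_1 \ge 0$.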

Proof of Equivalence Theorem via Nonhomogeneous Farkas or LP Duality ($x = A't$)
- Proof:
- Hence:
- By LP duality:

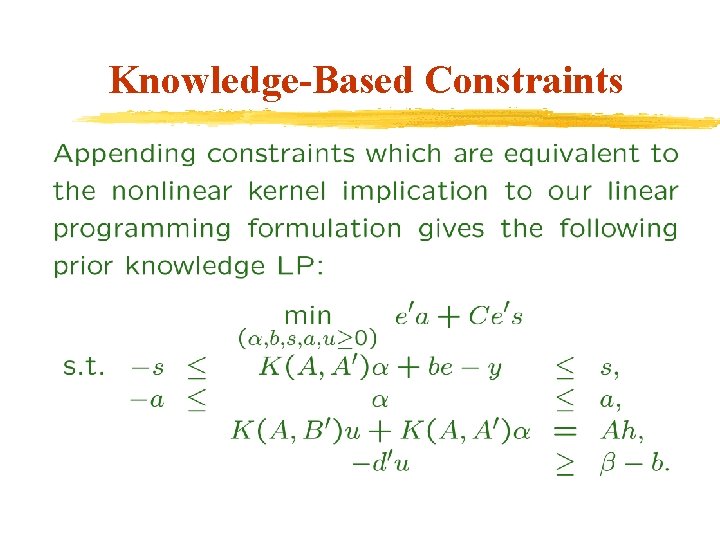
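The duality step can be written out for the input-space form of the theorem (a sketch in assumed notation; the slide carries out the same argument after the substitution $x = A't$). The last line turns a statement about infinitely many points into finitely many linear constraints on $(w, b, u)$.

```latex
\begin{aligned}
&\bigl(Bx \le d \Rightarrow x'w + b \ge h'x + \beta\bigr)
  \iff \min_{x}\{(w - h)'x \mid Bx \le d\} \ge \beta - b,\\
&\text{dual LP: } \max_{u}\{-d'u \mid B'u + w - h = 0,\; u \ge 0\},\\
&\text{weak duality: } Bx \le d,\; u \ge 0,\; B'u = h - w
  \;\Rightarrow\; (w - h)'x = -u'Bx \ge -d'u,\\
&\text{LP duality (nonempty region): }
  \exists\, u \ge 0:\; B'u + w - h = 0,\;\; -d'u + b - \beta \ge 0.
\end{aligned}
```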

Knowledge-Based Constraints

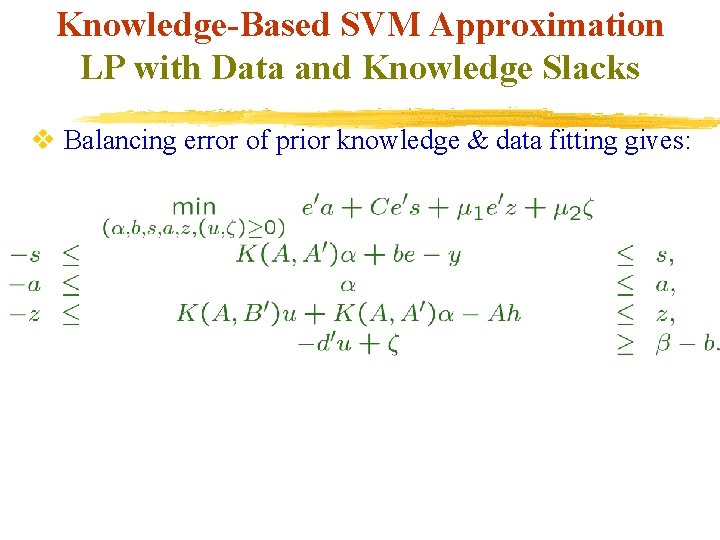
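A sketch of the kernelized constraints, assuming the substitution $x = A't$, $w = A'\alpha$ named on the proof slide, replacing $BA'$ and $AA'$ by $K(B, A')$ and $K(A, A')$, and a kernel with $K(B, A')' = K(A, B')$ and symmetric $K(A, A')$ (true of the Gaussian kernel). Applying the equivalence theorem in the variable $t$, with $\{t \mid K(B, A')t \le d\}$ nonempty, gives:

```latex
\begin{aligned}
&K(B, A')t \le d \;\Longrightarrow\; \alpha' K(A, A')t + b \ge h'A't + \beta\\
&\iff \exists\, v \ge 0:\quad K(A, B')v + K(A, A')\alpha - Ah = 0,
  \qquad -d'v + b - \beta \ge 0
\end{aligned}
```

These constraints are linear in $(\alpha, b, v)$, so adding them to the approximation LP keeps it a linear program, as the outline states.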

Knowledge-Based SVM Approximation: LP with Data and Knowledge Slacks
- Balancing the error in the prior knowledge against the data-fitting error gives:

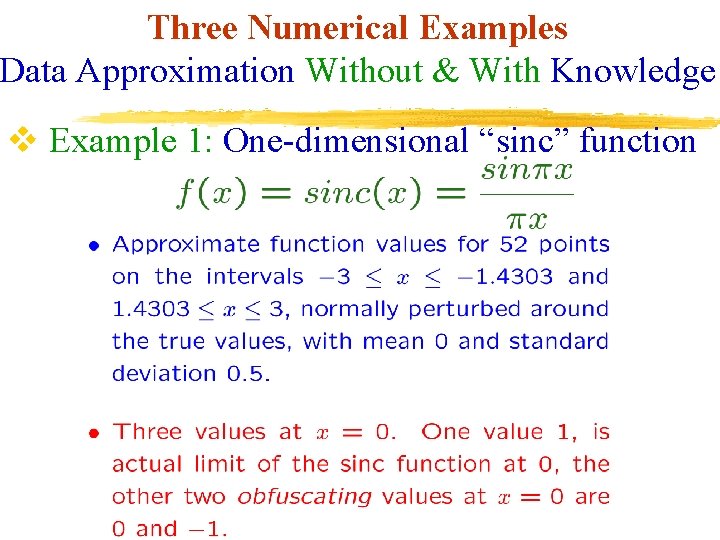
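A sketch of the balancing idea, assuming the knowledge constraints are softened by nonnegative slacks $r$ and $\zeta$ with hypothetical weights `mu1` and `mu2`; the slide's exact formulation is in its figure, and this code only evaluates the objective at the tightest slacks for given $(\alpha, b, v)$ rather than solving the LP.

```python
# Sketch: objective of a knowledge-based LP evaluated at the tightest slacks.
# Assumed formulation (weights mu1, mu2 and slack names r, zeta are hypothetical):
#   min  e'a + C e's + mu1 e'r + mu2 zeta
#   s.t. -s <= K(A,A')alpha + b e - y <= s,         -a <= alpha <= a,
#        -r <= K(A,B')v + K(A,A')alpha - Ah <= r,    d'v - b + beta <= zeta,
#        v >= 0, zeta >= 0.

def dot(p, q):
    return sum(pi * qi for pi, qi in zip(p, q))

def knowledge_lp_objective(K_AA, K_AB, A, y, h, d, beta, alpha, b, v, C, mu1, mu2):
    if any(vl < 0 for vl in v):
        raise ValueError("knowledge multipliers v must be nonnegative")
    s = [abs(dot(Ki, alpha) + b - yi) for Ki, yi in zip(K_AA, y)]       # data slacks
    a = [abs(al) for al in alpha]                                      # |alpha|
    r = [abs(dot(KBi, v) + dot(Ki, alpha) - dot(Ai, h))                # knowledge slacks
         for KBi, Ki, Ai in zip(K_AB, K_AA, A)]
    zeta = max(0.0, dot(d, v) - b + beta)                              # bound slack
    return sum(a) + C * sum(s) + mu1 * sum(r) + mu2 * zeta

# Toy data on f(x) = x with a linear kernel, so K(A,A') = AA' and K(A,B') = AB'.
A, y = [[0.0], [1.0]], [0.0, 1.0]
B, d = [[1.0], [-1.0]], [1.0, 0.0]            # knowledge region 0 <= x <= 1
h = [1.0]                                     # knowledge: f(x) >= x + beta there
K_AA = [[dot(Ai, Aj) for Aj in A] for Ai in A]
K_AB = [[dot(Ai, Bj) for Bj in B] for Ai in A]
alpha, b, v = [0.0, 1.0], 0.0, [0.0, 0.0]
for beta in (0.0, 0.5):
    print(beta, knowledge_lp_objective(K_AA, K_AB, A, y, h, d, beta, alpha, b, v,
                                       C=10.0, mu1=1.0, mu2=2.0))
# prints 0.0 1.0, then 0.5 2.0
```

With $\beta = 0$ the knowledge $f(x) \ge x$ on $[0, 1]$ is met exactly, so only $\|\alpha\|_1 = 1$ remains; raising $\beta$ to 0.5 forces $\zeta = 0.5$ and adds $\mu_2 \cdot 0.5$. The weights trade off trust in the data against trust in the knowledge.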

Three Numerical Examples: Data Approximation Without and With Knowledge
- Example 1: One-dimensional “sinc” function

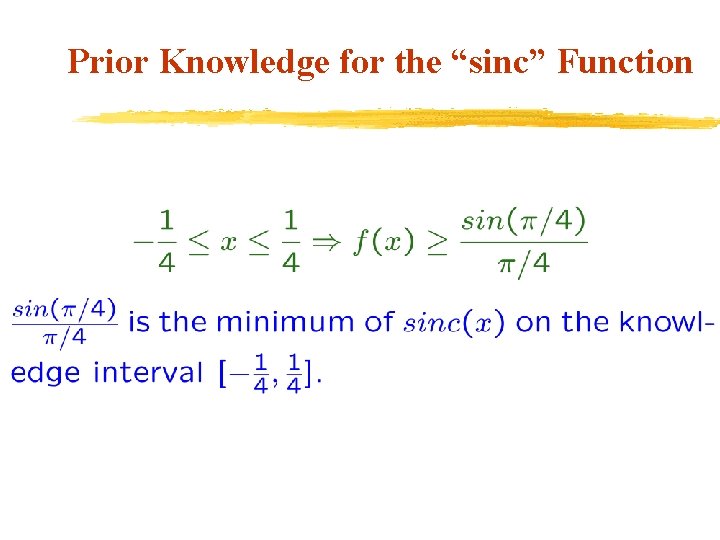
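Reproducing an experiment of this kind starts with the target function. The slides do not say which convention is used, so the sketch assumes the normalized sinc $\sin(\pi x)/(\pi x)$ with $\mathrm{sinc}(0) = 1$ and an evenly spaced sample; the interval and number of points are placeholders, not the talk's settings.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with the removable singularity sinc(0) = 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def sample_sinc(lo, hi, m):
    """m evenly spaced points in [lo, hi] with their sinc values (illustrative sampling only)."""
    step = (hi - lo) / (m - 1)
    xs = [lo + i * step for i in range(m)]
    return xs, [sinc(x) for x in xs]

xs, ys = sample_sinc(-1.0, 1.0, 5)
print(xs)
print(ys)
```

The zeros at nonzero integers and the single peak at the origin make sinc a convenient test case: a few data points leave the shape ambiguous, which is where knowledge over regions can help.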

Prior Knowledge for the “sinc” Function

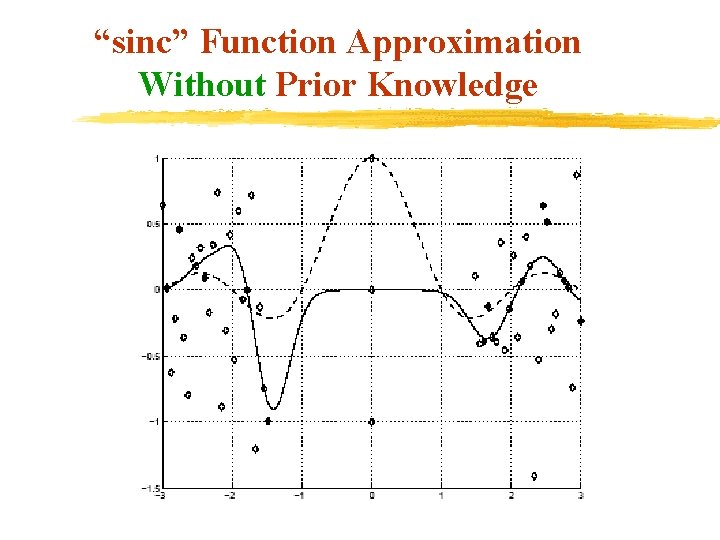

“sinc” Function Approximation Without Prior Knowledge

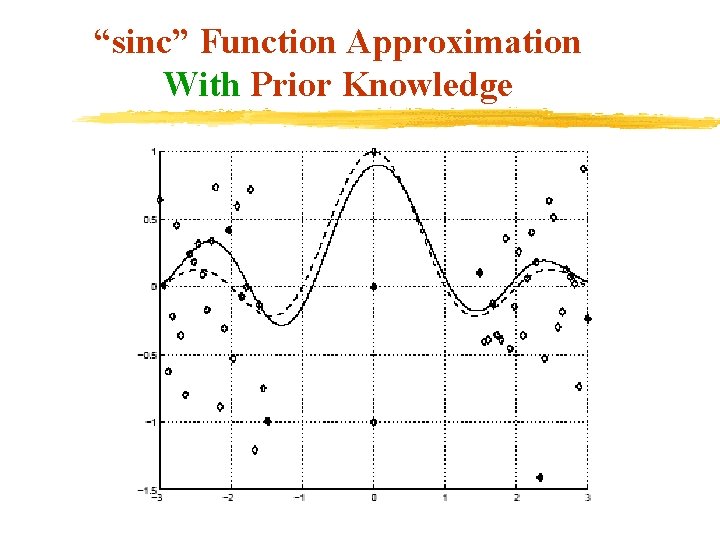

“sinc” Function Approximation With Prior Knowledge

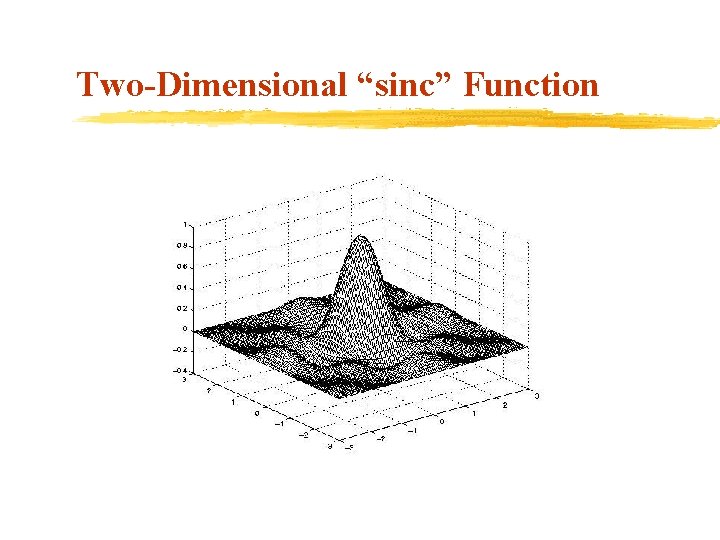

Two-Dimensional “sinc” Function

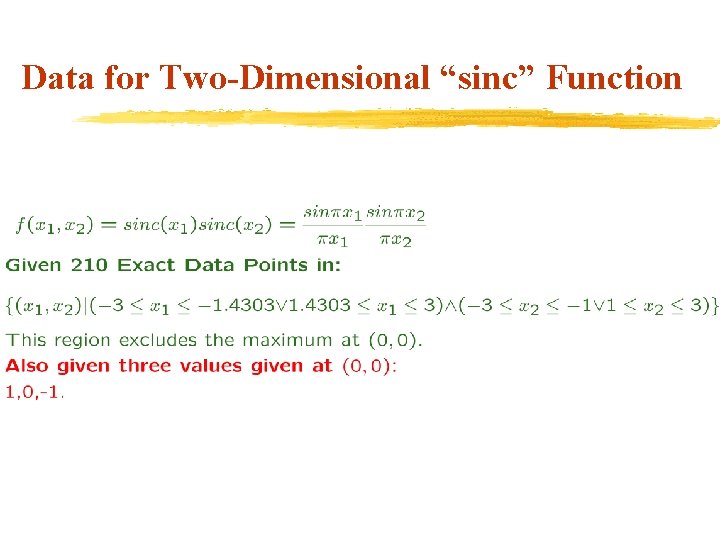

Data for Two-Dimensional “sinc” Function

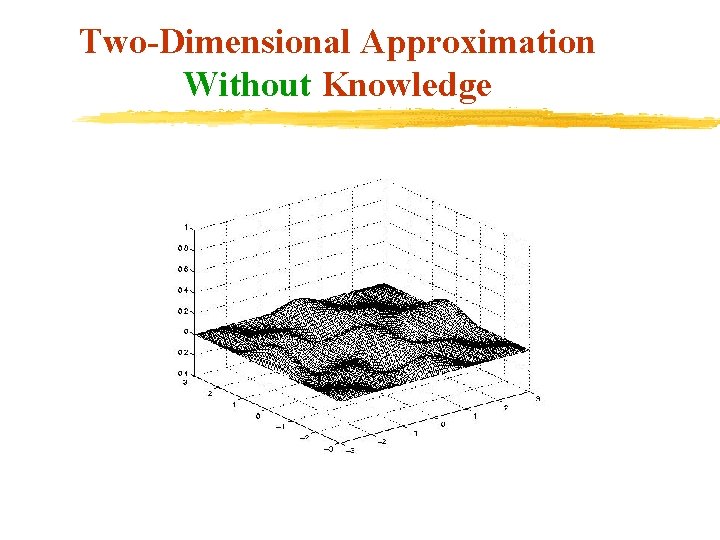

Two-Dimensional Approximation Without Knowledge

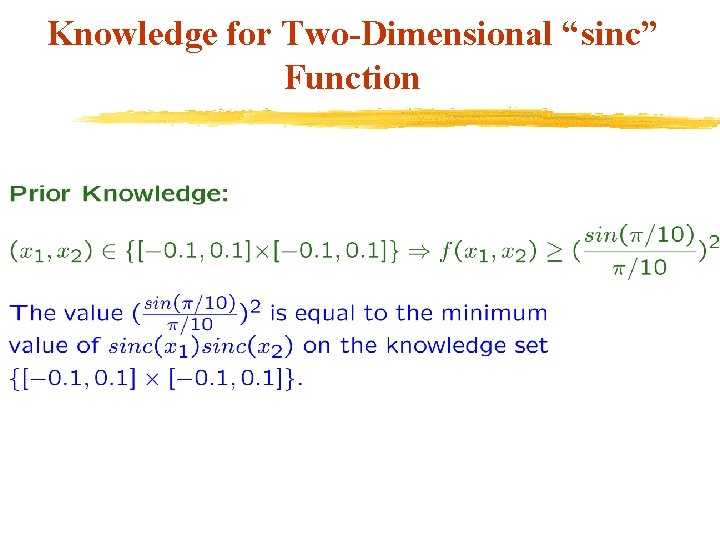

Knowledge for Two-Dimensional “sinc” Function

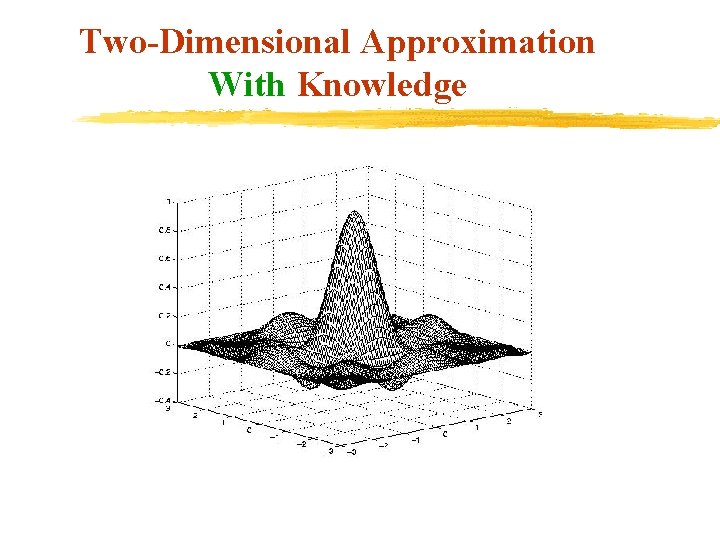

Two-Dimensional Approximation With Knowledge

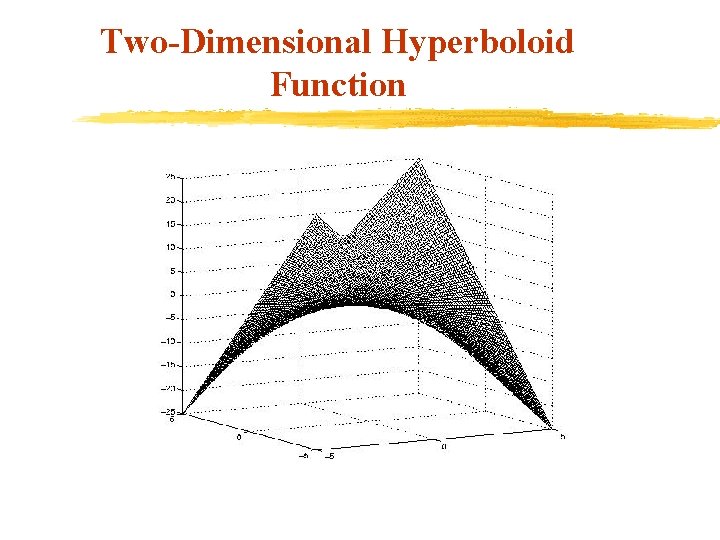

Two-Dimensional Hyperboloid Function

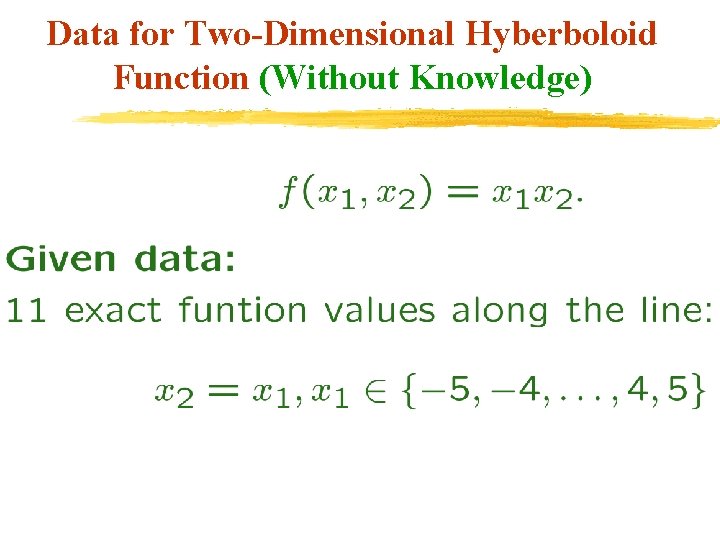

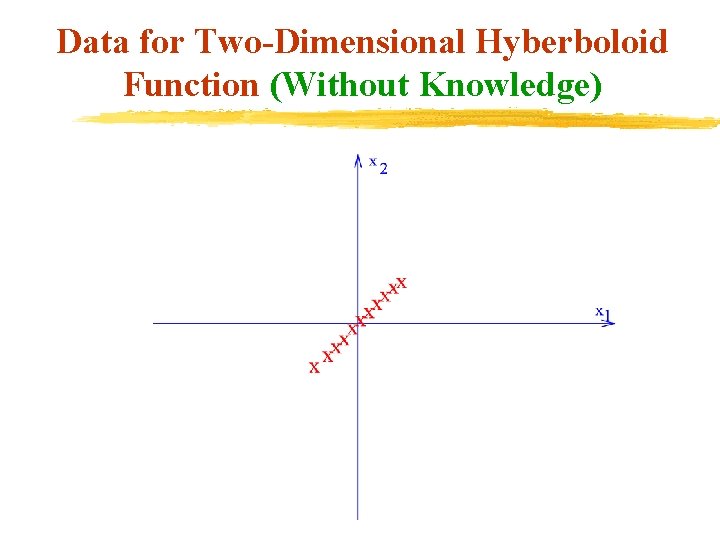

Data for Two-Dimensional Hyperboloid Function (Without Knowledge)


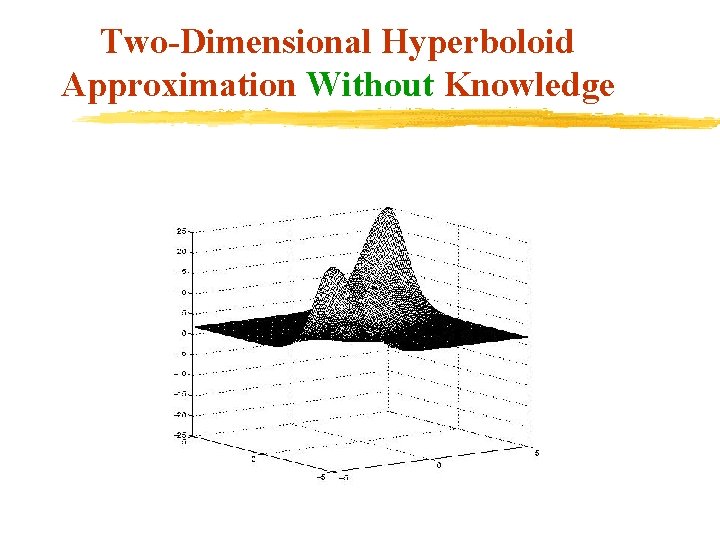

Two-Dimensional Hyperboloid Approximation Without Knowledge

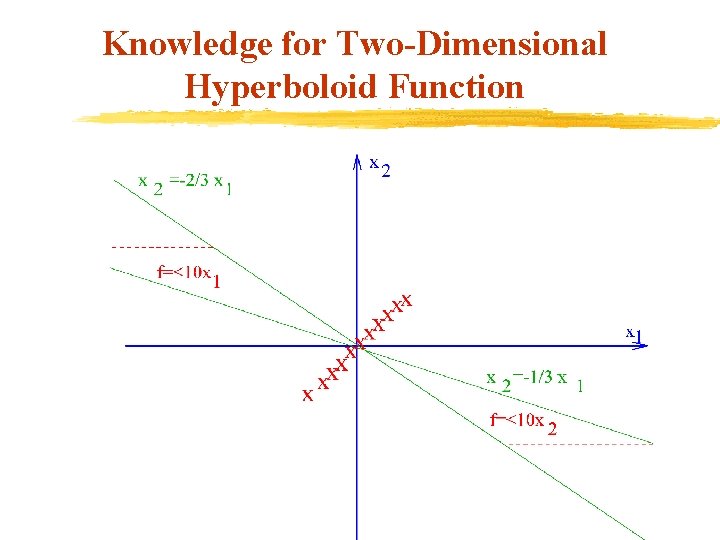

Knowledge for Two-Dimensional Hyperboloid Function


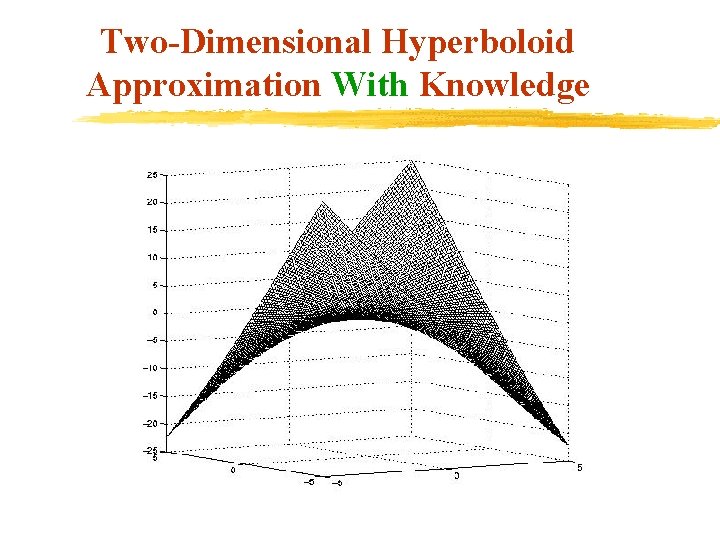

Two-Dimensional Hyperboloid Approximation With Knowledge

Conclusion
- Prior knowledge is easily incorporated into nonlinear approximation through polyhedral knowledge sets.
- The resulting problem is a simple linear program.
- Prior knowledge substantially improved the approximations in the numerical examples.
- Knowledge sets can be used with or without conventional labeled data.

Future Research
- Generate approximations based on prior expert knowledge in various fields
  - Diagnostic rules for various diseases
  - Financial investment rules
  - Intrusion detection rules
- Extend knowledge sets to nonpolyhedral convex sets
- Computer vision and robotic applications
- Extend to implication-constrained mathematical programs

Web Pages
