Graph Mining
Laks V. S. Lakshmanan
Based on: Xifeng Yan, Jiawei Han. gSpan: Graph-Based Substructure Pattern Mining. ICDM 2002. Also see their tech. report (uiuc-cs web site).

What's the relevance to this course?
• Specifically, what does GM have to do with influence analysis?
• The connection is technical.
• We need to build up some background in GM to appreciate the connection.
• We will do influence analysis after covering GM.

The problem
• Given a database of graphs*, find the subgraphs with support >= min_sup (a user-specified threshold).
• *Graphs may be labeled, weighted, etc. We will consider a simple version for clarity.
• There is another problem formulation: given one graph, find frequent pattern subgraphs.

Basics
• Given a database $D = \{G_0, \ldots, G_n\}$ and a pattern graph g, $\mathrm{support}_D(g)$ = number of graphs in D that contain g as a subgraph. We want to find all g with support(g) >= min_sup.
  ◦ We are mainly interested in frequent connected subgraphs. (Why?)
• Analogous to: given a database of market-basket transactions, find all itemsets S with support(S) >= min_sup; these are used for association rules and correlations.
• Quick review of AR mining next.
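
A minimal sketch of the support definition above, assuming a toy representation of a labeled, undirected graph as a pair (vertex labels, edge labels keyed by `frozenset({u, v})`). The names `contains`, `support`, `E`, and the three-graph database are hypothetical. Containment is tested by brute force over injective vertex maps, which is exponential; that cost is exactly the subgraph-isomorphism problem that makes graph mining expensive.

```python
from itertools import permutations

# Hypothetical representation: (vertex labels, edge labels keyed by frozenset({u, v})).
Graph = tuple[dict[int, str], dict[frozenset, str]]


def contains(G: Graph, g: Graph) -> bool:
    """True if pattern g is isomorphic to a (not necessarily induced) subgraph of G."""
    g_vlab, g_elab = g
    G_vlab, G_elab = G
    g_nodes = list(g_vlab)
    # Try every injective map from g's vertices into G's vertices (brute force).
    for image in permutations(G_vlab, len(g_nodes)):
        f = dict(zip(g_nodes, image))
        if any(g_vlab[v] != G_vlab[f[v]] for v in g_nodes):
            continue  # vertex labels must agree
        # Every pattern edge must map onto a graph edge with the same label.
        if all(G_elab.get(frozenset(f[x] for x in e)) == lab
               for e, lab in g_elab.items()):
            return True
    return False


def support(D: list[Graph], g: Graph) -> int:
    """support_D(g): number of graphs in D that contain g as a subgraph."""
    return sum(contains(G, g) for G in D)


def E(u, v):
    return frozenset({u, v})


# Toy database (hypothetical): vertex labels C/O, edge labels s/d.
D = [
    ({0: "C", 1: "C", 2: "O"}, {E(0, 1): "s", E(1, 2): "d"}),
    ({0: "C", 1: "O"}, {E(0, 1): "d"}),
    ({0: "C", 1: "C"}, {E(0, 1): "s"}),
]
c_d_o = ({0: "C", 1: "O"}, {E(0, 1): "d"})
print(support(D, c_d_o))  # 2
```

Only the first two graphs contain a C-d-O edge, so the pattern has support 2; with min_sup = 2 it would be frequent.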

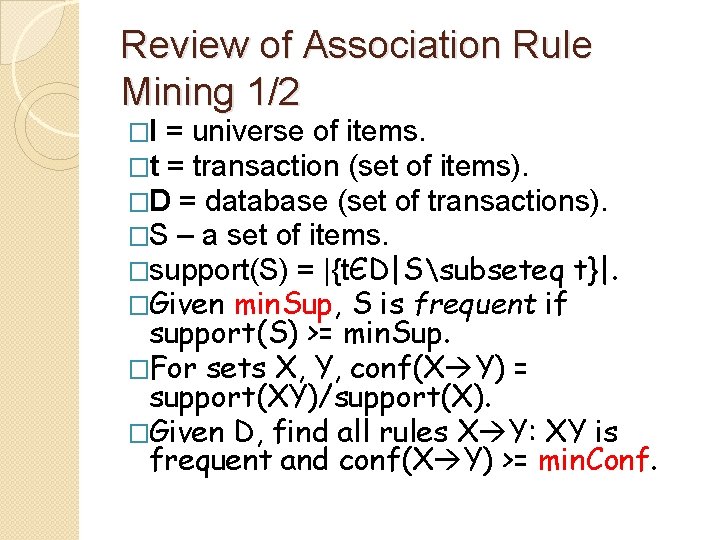

Review of Association Rule Mining 1/2
• I = universe of items.
• t = transaction (a set of items).
• D = database (a set of transactions).
• S = a set of items.
• $\mathrm{support}(S) = |\{t \in D \mid S \subseteq t\}|$.
• Given min_sup, S is frequent if support(S) >= min_sup.
• For sets X, Y: $\mathrm{conf}(X \Rightarrow Y) = \mathrm{support}(X \cup Y) / \mathrm{support}(X)$.
• Given D, find all rules $X \Rightarrow Y$ such that $X \cup Y$ is frequent and $\mathrm{conf}(X \Rightarrow Y) \ge \mathit{min\_conf}$.
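
The two definitions above translate directly into code. This is a minimal sketch on a hypothetical five-transaction database; the function names mirror the slide's notation and are not taken from any library.

```python
# Toy transaction database (hypothetical data, for illustration only).
D = [
    {"bread", "milk"},
    {"bread", "diaper", "beer", "eggs"},
    {"milk", "diaper", "beer", "cola"},
    {"bread", "milk", "diaper", "beer"},
    {"bread", "milk", "diaper", "cola"},
]


def support(S, D):
    """support(S) = |{t in D : S is a subset of t}|."""
    S = set(S)
    return sum(1 for t in D if S <= t)


def conf(X, Y, D):
    """conf(X => Y) = support(X ∪ Y) / support(X)."""
    return support(set(X) | set(Y), D) / support(X, D)


print(support({"diaper", "beer"}, D))   # 3
print(conf({"diaper"}, {"beer"}, D))    # 0.75
```

With min_sup = 3 and min_conf = 0.7, the rule {diaper} ⇒ {beer} would be reported: {diaper, beer} is frequent and the rule's confidence is 0.75.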

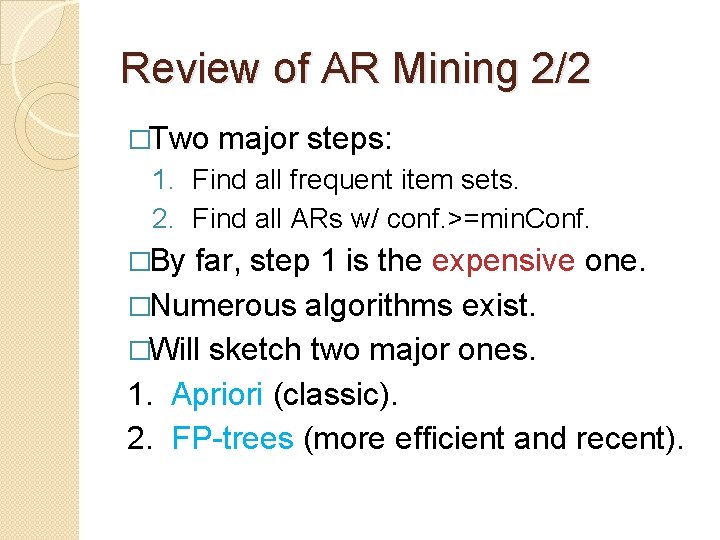

Review of AR Mining 2/2
• Two major steps:
  1. Find all frequent itemsets.
  2. Find all ARs with conf >= min_conf.
• By far, step 1 is the expensive one.
• Numerous algorithms exist.
• We will sketch two major ones:
  1. Apriori (classic).
  2. FP-trees (more efficient and more recent).

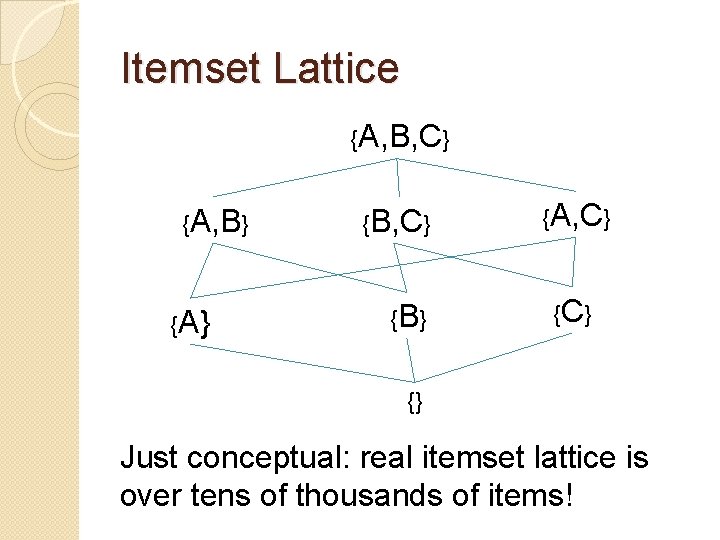

Itemset Lattice (items A, B, C; larger itemsets on top)
{A, B, C}
{A, B}   {A, C}   {B, C}
{A}   {B}   {C}
{}
Just conceptual: the real itemset lattice is over tens of thousands of items!
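
To see why the lattice is "just conceptual", it helps to enumerate it level by level. This sketch lists the lattice over A, B, C and then counts only the 2-itemsets for n = 20,000 items, an illustrative value standing in for "tens of thousands"; `lattice_levels` is a hypothetical helper name.

```python
from itertools import combinations
from math import comb


def lattice_levels(items):
    """Itemset lattice over `items`, as a list of levels (level k = all k-itemsets)."""
    items = sorted(items)
    return [list(combinations(items, k)) for k in range(len(items) + 1)]


for k, level in enumerate(lattice_levels("ABC")):
    print(k, level)
# 0 [()]
# 1 [('A',), ('B',), ('C',)]
# 2 [('A', 'B'), ('A', 'C'), ('B', 'C')]
# 3 [('A', 'B', 'C')]

# With n items the lattice has 2**n nodes; even level 2 alone has comb(n, 2).
n = 20_000
print(comb(n, 2))  # 199990000
```

Nearly 200 million candidate pairs before even reaching 3-itemsets is why no practical algorithm materializes the lattice; Apriori's pruning and FP-trees both exist to avoid it.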

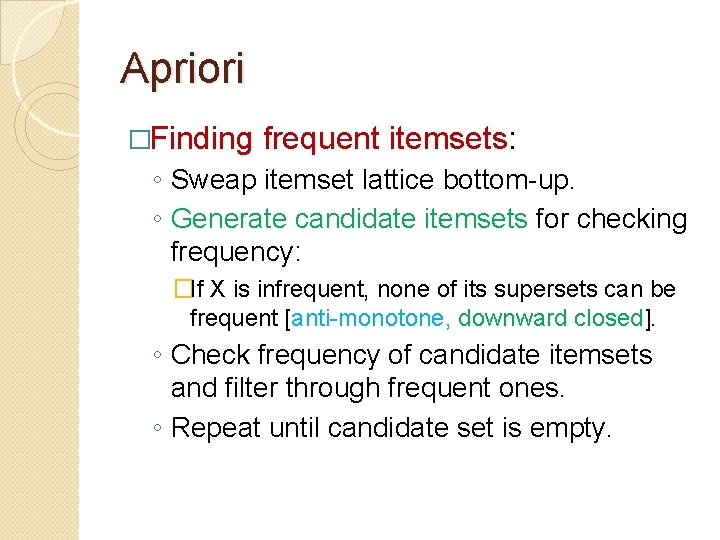

Apriori
• Finding frequent itemsets:
  ◦ Sweep the itemset lattice bottom-up.
  ◦ Generate candidate itemsets for checking frequency:
    • If X is infrequent, none of its supersets can be frequent [anti-monotone, downward closed].
  ◦ Check the frequency of the candidate itemsets and keep only the frequent ones.
  ◦ Repeat until the candidate set is empty.
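
The level-wise loop above can be sketched as follows. This is a minimal, unoptimized sketch assuming transactions are Python sets and min_sup is an absolute count; the join simply unites any two frequent k-itemsets that share k-1 items (a simplification, not the sorted-prefix join of Agrawal & Srikant), and the prune step applies the anti-monotone property. The database is hypothetical.

```python
from itertools import combinations


def apriori(D, min_sup):
    """Level-wise frequent itemset mining in the style of Apriori.

    D: list of transactions (sets of items); min_sup: absolute count.
    Returns {frozenset(itemset): support} for every frequent itemset.
    """
    def frequent(cands):
        # One pass over D per level: count candidates, keep the frequent ones.
        counts = {c: sum(1 for t in D if c <= t) for c in cands}
        return {c: n for c, n in counts.items() if n >= min_sup}

    level = frequent({frozenset([i]) for t in D for i in t})
    result, k = dict(level), 1
    while level:
        # Join: unite two frequent k-itemsets that share k-1 items.
        cands = {a | b for a in level for b in level if len(a | b) == k + 1}
        # Prune: by anti-monotonicity, every k-subset must itself be frequent.
        cands = {c for c in cands
                 if all(frozenset(s) in level for s in combinations(c, k))}
        level = frequent(cands)
        result.update(level)
        k += 1
    return result


# Hypothetical toy database.
D = [
    {"bread", "milk"},
    {"bread", "diaper", "beer", "eggs"},
    {"milk", "diaper", "beer", "cola"},
    {"bread", "milk", "diaper", "beer"},
    {"bread", "milk", "diaper", "cola"},
]
for S, n in sorted(apriori(D, 3).items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(S), n)
```

Here {bread, diaper, beer} never reaches the counting step, because its subset {bread, beer} (support 2) is already infrequent.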

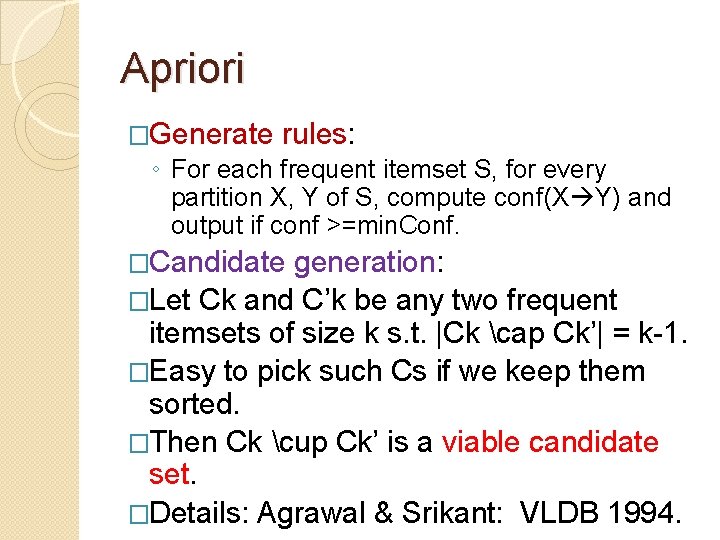

Apriori
• Generate rules:
  ◦ For each frequent itemset S and every partition of S into non-empty X, Y, compute $\mathrm{conf}(X \Rightarrow Y)$ and output the rule if conf >= min_conf.
• Candidate generation:
  ◦ Let $C_k$ and $C'_k$ be any two frequent itemsets of size k such that $|C_k \cap C'_k| = k-1$.
  ◦ Such pairs are easy to pick if we keep the itemsets sorted.
  ◦ Then $C_k \cup C'_k$ is a viable candidate set.
• Details: Agrawal & Srikant, VLDB 1994.
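
The rule-generation step can be sketched as below, assuming the frequent itemsets and their supports are already available in a dict; `gen_rules` and the hard-coded supports are hypothetical. Because of downward closure, every subset X of a frequent S is itself frequent, so support(X) can be looked up instead of recounted.

```python
from itertools import combinations


def gen_rules(freq, min_conf):
    """Rules X => Y with conf >= min_conf from frequent itemsets.

    freq: {frozenset(itemset): support}; assumed to hold *every* frequent
    itemset, so each subset X of a frequent S is also a key.
    """
    rules = []
    for S, sup_S in freq.items():
        # Each non-empty proper subset X of S gives the partition X, Y = S - X.
        for r in range(1, len(S)):
            for X in map(frozenset, combinations(sorted(S), r)):
                c = sup_S / freq[X]   # conf(X => Y) = support(S) / support(X)
                if c >= min_conf:
                    rules.append((set(X), set(S - X), c))
    return rules


# Hypothetical frequent itemsets with their supports (min_sup = 3).
fs = frozenset
freq = {
    fs({"bread"}): 4, fs({"milk"}): 4, fs({"diaper"}): 4, fs({"beer"}): 3,
    fs({"bread", "milk"}): 3, fs({"bread", "diaper"}): 3,
    fs({"milk", "diaper"}): 3, fs({"diaper", "beer"}): 3,
}
for X, Y, c in gen_rules(freq, 0.8):
    print(X, "=>", Y, c)   # {'beer'} => {'diaper'} 1.0
```

Lowering min_conf to 0.75 admits both directions of every frequent pair (8 rules), since each of them has confidence 3/4 or higher.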

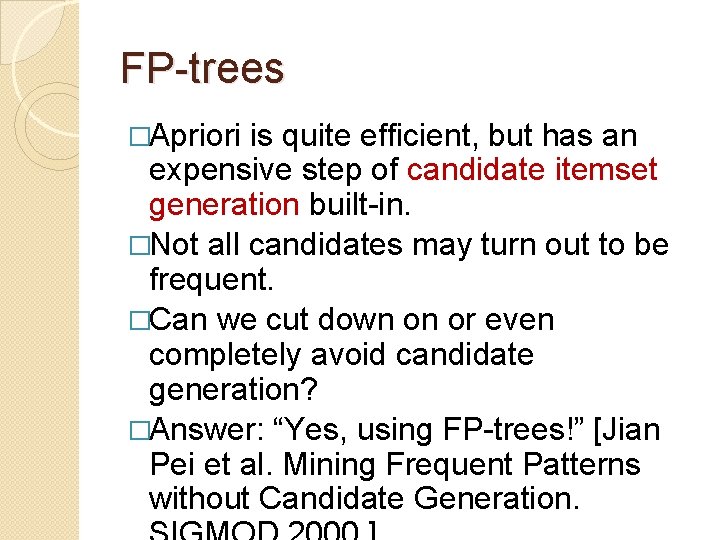

FP-trees
• Apriori is quite efficient, but it has an expensive candidate-generation step built in.
• Not all candidates may turn out to be frequent.
• Can we cut down on, or even completely avoid, candidate generation?
• Answer: "Yes, using FP-trees!" [J. Han, J. Pei, Y. Yin. Mining Frequent Patterns without Candidate Generation. SIGMOD 2000.]

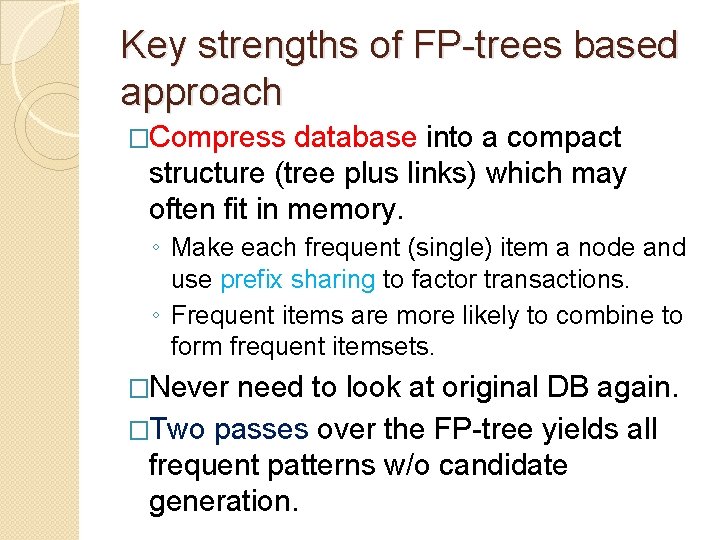

Key strengths of the FP-tree-based approach
• Compress the database into a compact structure (tree plus links) that often fits in memory.
  ◦ Make each frequent (single) item a node and use prefix sharing to factor transactions.
  ◦ Frequent items are more likely to combine to form frequent itemsets.
• Never need to look at the original DB again.
• Two passes over the FP-tree yield all frequent patterns w/o candidate generation.

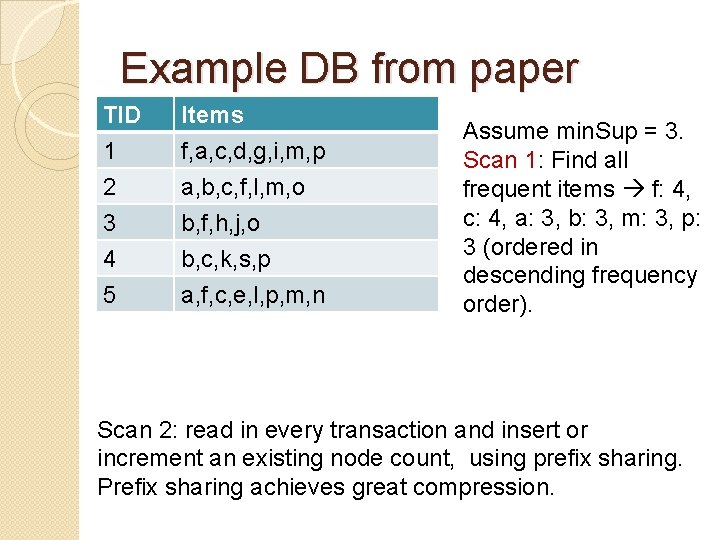

Example DB from the paper
TID  Items
1    f, a, c, d, g, i, m, p
2    a, b, c, f, l, m, o
3    b, f, h, j, o
4    b, c, k, s, p
5    a, f, c, e, l, p, m, n
Assume min_sup = 3.
• Scan 1: find all frequent items: f:4, c:4, a:3, b:3, m:3, p:3 (listed in descending frequency order).
• Scan 2: read in every transaction and, using prefix sharing, either insert new nodes or increment the counts of existing ones.
• Prefix sharing achieves great compression.
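
Scan 1 on the example DB can be sketched as follows. The slide lists f before c and a, b, m, p in that order although they tie on frequency; this sketch reproduces that with an explicit tie-break string, which is an assumption (any fixed tie-break works if applied consistently). `TIE_BREAK` and the one-string-per-transaction encoding are hypothetical choices.

```python
from collections import Counter

# The example database (TIDs 1-5), one string of single-letter items per transaction.
DB = ["facdgimp", "abcflmo", "bfhjo", "bcksp", "afcelpmn"]
MIN_SUP = 3

# Scan 1: count every item and keep the frequent ones.
counts = Counter(item for t in DB for item in t)
freq = {i: c for i, c in counts.items() if c >= MIN_SUP}

# Sort by descending frequency; ties (f/c at 4, a/b/m/p at 3) are broken
# with the slide's order (an assumption, see above).
TIE_BREAK = "fcabmp"
order = sorted(freq, key=lambda i: (-freq[i], TIE_BREAK.index(i)))

# Before Scan 2 inserts them, transactions are filtered to frequent items
# and rewritten in this order.
ordered = ["".join(i for i in order if i in t) for t in DB]
print(order)     # ['f', 'c', 'a', 'b', 'm', 'p']
print(ordered)   # ['fcamp', 'fcabm', 'fb', 'cbp', 'fcamp']
```

Infrequent items such as l and o (support 2) disappear at this point and never enter the tree.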

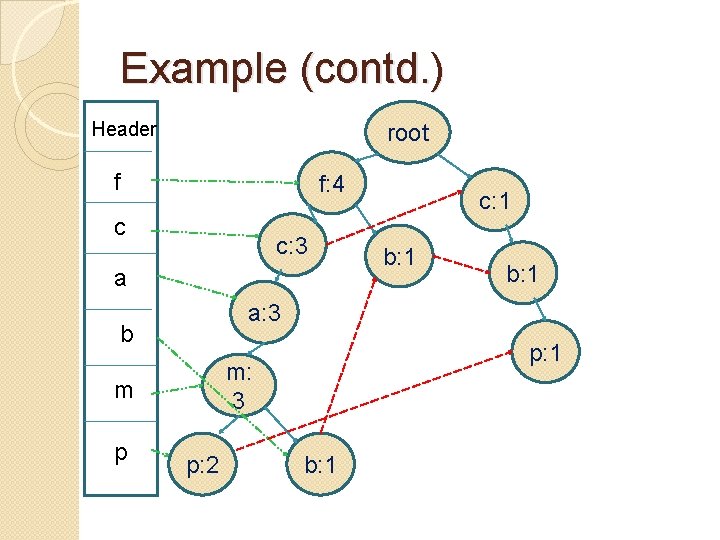

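Example (contd.): the resulting FP-tree
Header table: f, c, a, b, m, p (each entry starts a node-link chain through all tree nodes carrying that item)
root
├── f:4
│   ├── c:3
│   │   └── a:3
│   │       ├── m:2
│   │       │   └── p:2
│   │       └── b:1
│   │           └── m:1
│   └── b:1
└── c:1
    └── b:1
        └── p:1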

FP-tree construction
• Simple recursive algorithm: when you read a transaction (ordered in descending frequency order, keeping only frequent items), reflect each item into the current tree by either:
  ◦ incrementing the count of the matching (prefix) node, OR
  ◦ starting a new branch.
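
The insertion procedure above can be sketched as a prefix tree whose nodes carry counts. This is a hedged sketch: `Node`, `build_fp_tree`, and representing the header table as a list of nodes per item (instead of linked node-links) are illustrative choices, not the paper's data structures. The input is the filtered, ordered transactions of the running example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    item: Optional[str]
    count: int = 0
    parent: Optional["Node"] = None
    children: Dict[str, "Node"] = field(default_factory=dict)


def build_fp_tree(transactions):
    """Insert filtered, frequency-ordered transactions into an FP-tree.

    Returns (root, header); header maps each item to the list of its nodes,
    standing in for the node-link chains of the header table.
    """
    root = Node(None)
    header: Dict[str, List[Node]] = {}
    for t in transactions:
        node = root
        for item in t:
            child = node.children.get(item)
            if child is None:
                # No node matches this prefix: start a new branch.
                child = Node(item, parent=node)
                node.children[item] = child
                header.setdefault(item, []).append(child)
            child.count += 1        # matching (or newly created) node
            node = child
    return root, header


# Filtered, ordered transactions of the running example (order f, c, a, b, m, p).
root, header = build_fp_tree(["fcamp", "fcabm", "fb", "cbp", "fcamp"])
a = root.children["f"].children["c"].children["a"]
print(a.count, a.children["m"].count)       # 3 2
print(sum(n.count for n in header["p"]))    # 3
```

Summing the counts along an item's header entry gives its support, which is how freq(p) = 3 is read off the tree.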

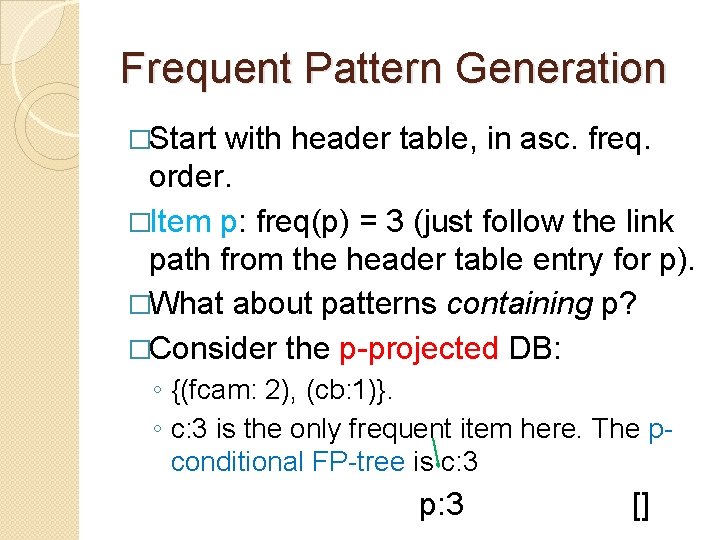

Frequent Pattern Generation
• Start with the header table, in ascending frequency order.
• Item p: freq(p) = 3 (just follow the node-link path from the header-table entry for p and sum the counts).
• What about patterns containing p?
• Consider the p-projected DB:
  ◦ {(fcam: 2), (cb: 1)}.
  ◦ c: 3 is the only frequent item here. The p-conditional FP-tree is the single node c:3, which yields the frequent pattern cp: 3 (besides p: 3 itself).
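
The p-projected DB can be computed as sketched below. Instead of walking node-links in the tree, this sketch works on the ordered transactions directly: the prefix paths reached through an item's node-links carry exactly the prefixes (and counts) of the ordered transactions containing that item, so the result is the same. `projected_db` and `frequent_items` are hypothetical names.

```python
from collections import Counter

# Filtered, frequency-ordered transactions of the running example.
ORDERED = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]
MIN_SUP = 3


def projected_db(item, transactions):
    """Conditional pattern base of `item`: the prefix before `item` in each
    transaction that contains it, with identical prefixes merged and counted."""
    base = Counter()
    for t in transactions:
        if item in t:
            prefix = t[: t.index(item)]
            if prefix:
                base[prefix] += 1
    return dict(base)


def frequent_items(base, min_sup):
    """Frequent items inside a projected DB (these form the conditional FP-tree)."""
    counts = Counter()
    for prefix, n in base.items():
        for i in prefix:
            counts[i] += n
    return {i: c for i, c in counts.items() if c >= min_sup}


p_db = projected_db("p", ORDERED)
print(p_db)                           # {'fcam': 2, 'cb': 1}
print(frequent_items(p_db, MIN_SUP))  # {'c': 3}
```

Running the same two functions for m gives {'fca': 2, 'fcab': 1} and frequent items f, c, a (each 3), matching the m-conditional FP-tree on the next slide.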

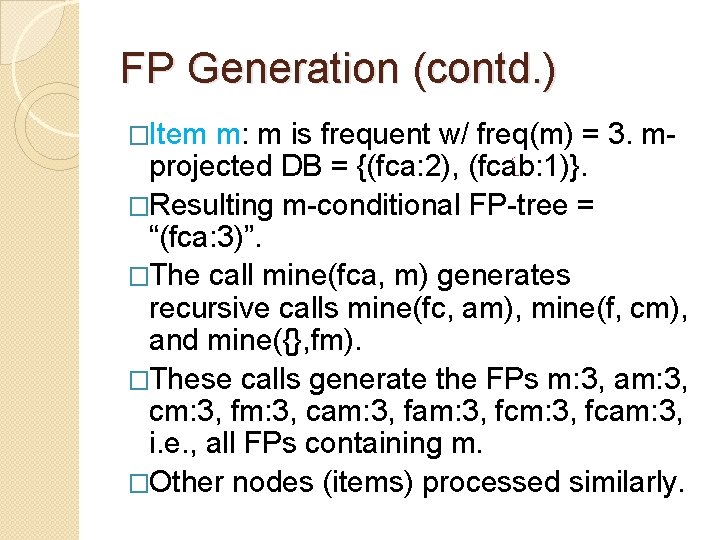

FP Generation (contd.)
• Item m: m is frequent with freq(m) = 3. The m-projected DB = {(fca: 2), (fcab: 1)}.
• Resulting m-conditional FP-tree = "(fca: 3)" (b is dropped, since it occurs only once).
• The call mine(fca, m) generates the recursive calls mine(fc, am), mine(f, cm), and mine({}, fm).
• These calls generate the FPs m: 3, am: 3, cm: 3, fm: 3, cam: 3, fam: 3, fcm: 3, fcam: 3, i.e., all FPs containing m.
• Other nodes (items) are processed similarly.
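
The recursion above can be sketched as pattern growth over projected databases. To stay short, this sketch represents each conditional database as a dict of ordered prefix strings with counts rather than as a real FP-tree, so it mirrors the recursion, not the paper's data structure; its `mine(db, suffix, min_sup, out)` signature is a hypothetical stand-in for the slide's mine(tree, suffix) notation. Patterns are strings with the suffix at the end.

```python
from collections import Counter

ORDERED = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]
ORDER = "fcabmp"   # global item order (descending frequency)
MIN_SUP = 3


def mine(db, suffix, min_sup, out):
    """Pattern growth over a projected DB given as {ordered prefix: count}.

    For each frequent item x (least frequent first, as in the header table),
    report x + suffix, build x's projected DB from the prefixes preceding x,
    and recurse on it.
    """
    counts = Counter()
    for t, n in db.items():
        for i in t:
            counts[i] += n
    for x in sorted(counts, key=ORDER.index, reverse=True):
        if counts[x] < min_sup:
            continue
        pattern = x + suffix
        out[pattern] = counts[x]
        sub = Counter()
        for t, n in db.items():
            if x in t:
                # Keep only items frequent in the current DB (conditional FP-tree).
                prefix = "".join(i for i in t[: t.index(x)] if counts[i] >= min_sup)
                if prefix:
                    sub[prefix] += n
        mine(dict(sub), pattern, min_sup, out)
    return out


patterns = mine(dict(Counter(ORDERED)), "", MIN_SUP, {})
print(sorted(p for p in patterns if p.endswith("m")))
# ['am', 'cam', 'cm', 'fam', 'fcam', 'fcm', 'fm', 'm']
```

All eight patterns ending in m have support 3; over the whole example the sketch finds 18 frequent patterns without ever generating a candidate that turns out infrequent.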

FP-trees: concluding remarks
• Note the avoidance of the expensive candidate-generation step.
• Read both the SIGMOD 2000 paper and the journal version (DMKD 2004) for more details and for ideas on parallelizing using divide and conquer.
• Practical note: on the other hand, Apriori implementations are among the most highly optimized codes for pattern mining.

Additional Topics on Frequent Patterns
• ARs with constraints: e.g., $X \Rightarrow Y$ such that SUM(X.price) <= c and AVG(Y.price) >= c'. (See Ng et al. SIGMOD 98, Lakshmanan et al. SIGMOD 99, and numerous follow-ups.)
• Leung et al. 20??: push constraints into the FP-tree algorithm.
• Closed patterns: background and Galois lattice theory (see Zaki et al. and Pasquier et al.).
• Maximal patterns and patterns with the highest frequency.

Graph Mining Resumed
• Early work on GM followed in the footsteps of Apriori:
  ◦ Generate candidate subgraphs of size (k+1) from frequent subgraphs of size k (expensive).
  ◦ Eliminate infrequent candidates with a subgraph isomorphism test (NP-complete).
• gSpan replaces both by a simple depth-first search (no false positives to prune!).
• Key idea: DFS code.
• Recall: the main interest is frequent connected subgraphs.

gSpan Framework
• G = (V, E, L, l): node- and edge-labeled, undirected.
• Only interested in connected frequent subgraphs.
  ◦ g is frequent iff support(g) >= min_sup.
• Recall the notions of isomorphism, subgraph isomorphism, and automorphism.

DFS and Pruning
• See the tech. report, Fig. 1, p. 5.
• How does it relate to the itemset lattice for frequent itemset mining?
• DFS tree ($G_T$): see TR, Fig. 2, p. 6.
  ◦ Clearly, many DFS trees are possible for a graph.
  ◦ Root, right-most node, right-most path.
  ◦ Forward and backward edges.
• Partial order on fwd & bwd edges.

DFS & Pruning (contd.)
1: PO on fwd edges: $(i_1, j_1) \prec (i_2, j_2)$ iff $j_1 < j_2$; e.g., $(v_0, v_1) \prec (v_2, v_3)$ in Fig. 2b.
2: PO on bwd edges: $(i_1, j_1) \prec (i_2, j_2)$ iff either (a) $i_1 < i_2$, or (b) $i_1 = i_2$ and $j_1 < j_2$; e.g., $(v_2, v_0) \prec (v_3, v_1)$ [Fig. 2b] and $(v_2, v_0) \prec (v_3, v_0)$ [Fig. 2c-d].

DFS & Pruning (contd.)
3: PO between fwd and bwd edges: $e_1 = (i_1, j_1) \prec e_2 = (i_2, j_2)$ iff either $e_1$ is fwd, $e_2$ is bwd, and $j_1 \le i_2$; or $e_1$ is bwd, $e_2$ is fwd, and $i_1 < j_2$.
Theorem: 1-3 together define a total order on the edges of a DFS tree.
Proof: Exercise. Prove that the above total order is equivalent to: "$\prec$ is the transitive closure of (i) $e_1 \prec e_2$ if $i_1 = i_2$ and $j_1 < j_2$; (ii) $e_1 \prec e_2$ if $i_1 < j_1$ and $j_1 = i_2$."
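
Rules 1-3 can be written as a comparator, which also makes the total-order theorem easy to spot-check: sorting a scrambled DFS code with it should recover the original edge sequence. This is a sketch under stated assumptions: edges are (i, j) pairs of DFS discovery indices, forward means i < j, and the comparator is only meant for edges of one DFS code (so it returns 1 whenever e1 does not precede e2). `edge_cmp` and the six-edge code are hypothetical.

```python
from functools import cmp_to_key


def edge_cmp(e1, e2):
    """Compare two edges (i, j) of the same DFS code by rules 1-3.

    Forward edge: i < j; backward edge: i > j. Returns -1 if e1 precedes e2,
    1 otherwise (0 if equal). Only meaningful within one DFS tree.
    """
    if e1 == e2:
        return 0
    (i1, j1), (i2, j2) = e1, e2
    fwd1, fwd2 = i1 < j1, i2 < j2
    if fwd1 and fwd2:            # rule 1: forward vs forward
        less = j1 < j2
    elif not fwd1 and not fwd2:  # rule 2: backward vs backward
        less = i1 < i2 or (i1 == i2 and j1 < j2)
    elif fwd1:                   # rule 3: forward vs backward
        less = j1 <= i2
    else:                        # rule 3: backward vs forward
        less = i1 < j2
    return -1 if less else 1


# A hypothetical six-edge DFS code, listed in its correct order.
code = [(0, 1), (1, 2), (2, 0), (2, 3), (3, 1), (1, 4)]
shuffled = [(3, 1), (1, 4), (0, 1), (2, 3), (2, 0), (1, 2)]
print(sorted(shuffled, key=cmp_to_key(edge_cmp)) == code)  # True
```

Note how rule 3 places the backward edge (2, 0) right after the forward edge (1, 2) that discovered vertex 2, and before the next forward edge (2, 3).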

DFS & Pruning (contd.)
• DFS code: the unique ordering of the edges of a DFS tree under this total order (e.g., Fig. 2b-d).
• Procedure:
  ◦ Pick a start node (root).
  ◦ Repeat {
      add a fwd edge connecting the current "code" to a new node;
      add all bwd edges connecting the new node to old nodes
    } until no edges are left.
• E.g., Fig. 2.
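
The procedure above can be sketched as a recursive DFS that emits each forward edge when it discovers a node, immediately followed by that node's backward edges to already-visited nodes. Assumptions: a connected, simple, labeled, undirected graph stored as vertex labels plus edge labels keyed by `frozenset({u, v})`; neighbors are tried in ascending vertex-id order, an arbitrary choice that picks just one of the graph's many DFS codes. `dfs_code`, `E`, and the example graph are hypothetical.

```python
def dfs_code(vlab, elab, root):
    """One DFS code of a connected, labeled, undirected (simple) graph.

    vlab: {vertex: label}; elab: {frozenset({u, v}): label}.
    Returns 5-tuples (i, j, label_i, edge_label, label_j), where i and j are
    DFS discovery indices.
    """
    adj = {v: set() for v in vlab}
    for e in elab:
        u, v = tuple(e)
        adj[u].add(v)
        adj[v].add(u)

    idx = {root: 0}   # vertex -> discovery index
    code = []

    def tup(a, b):
        return (idx[a], idx[b], vlab[a], elab[frozenset((a, b))], vlab[b])

    def visit(v):
        for w in sorted(adj[v]):
            if w in idx:
                continue
            idx[w] = len(idx)
            code.append(tup(v, w))  # forward edge to the new node
            # Backward edges from the new node to already-visited nodes
            # (other than its parent), in ascending index order.
            for u in sorted((u for u in adj[w] if u in idx and u != v), key=idx.get):
                code.append(tup(w, u))
            visit(w)

    visit(root)
    return code


def E(u, v):
    return frozenset({u, v})


vlab = {0: "X", 1: "Y", 2: "X", 3: "Z"}
elab = {E(0, 1): "a", E(1, 2): "b", E(0, 2): "a", E(2, 3): "c"}
for edge in dfs_code(vlab, elab, root=0):
    print(edge)
# (0, 1, 'X', 'a', 'Y')
# (1, 2, 'Y', 'b', 'X')
# (2, 0, 'X', 'a', 'X')
# (2, 3, 'X', 'c', 'Z')
```

Rooting the same graph at vertex 3 yields a different sequence, which is the multiplicity problem the next slide addresses.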

DFS & Pruning (contd.)
• But a given graph has numerous DFS codes, which makes it complex to check whether a pattern g is isomorphic to a subgraph of some graph $G_i$ in D.
• Solution: in one pass, gather the counts of node labels and edge labels and sort the labels in descending order of frequency.
• For clarity, we will assume frequency order = alphabetical order.

DFS & Pruning (contd.)
• E.g.: X-a->X < X-b->X, X-a->X < X-a->Y, etc.
• Think of simple lexicographic ordering on strings of length 3 (nodeLabel.edgeLabel.nodeLabel).
• Visiting time (w.r.t. DFS) is important too: e.g., (0, 1, ?, ?) < (0, 2, ?, ?), (1, ?, ?), etc.
• So what? We get a unique minimum DFS code for each graph, and two graphs are isomorphic iff their minimum DFS codes are equal. Revisit Fig. 2a: γ is its minimum DFS code.
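
The "unique minimum DFS code" idea can be illustrated by brute force: enumerate every DFS code of a tiny graph (every root, every choice of next forward edge) and take the minimum. Important caveat: gSpan compares codes with its own DFS lexicographic order, whereas this sketch substitutes Python's built-in tuple order. Any fixed total order over all DFS codes still selects a canonical representative, so isomorphic graphs get equal minima here, but the specific code chosen may differ from gSpan's minimum DFS code. Enumeration is exponential; the names and graphs are hypothetical.

```python
def all_dfs_codes(vlab, elab):
    """Yield every DFS code of a small connected, labeled, undirected graph.

    vlab: {vertex: label}; elab: {frozenset({u, v}): label}. Exponential.
    """
    adj = {v: set() for v in vlab}
    for e in elab:
        u, v = tuple(e)
        adj[u].add(v)
        adj[v].add(u)

    def tup(idx, a, b):
        return (idx[a], idx[b], vlab[a], elab[frozenset((a, b))], vlab[b])

    def extend(idx, path, code):
        if len(idx) == len(vlab):
            yield tuple(code)
            return
        # Backtrack along the right-most path to the deepest vertex that
        # still has an unvisited neighbor, as DFS would.
        path = list(path)
        while all(w in idx for w in adj[path[-1]]):
            path.pop()
        v = path[-1]
        for w in [w for w in adj[v] if w not in idx]:
            new_idx = {**idx, w: len(idx)}
            new_code = code + [tup(new_idx, v, w)]            # forward edge
            back = sorted((u for u in adj[w] if u in idx and u != v), key=idx.get)
            new_code += [tup(new_idx, w, u) for u in back]    # backward edges
            yield from extend(new_idx, path + [w], new_code)

    for root in vlab:
        yield from extend({root: 0}, [root], [])


def min_dfs_code(vlab, elab):
    """Smallest DFS code under plain tuple order (not gSpan's exact order)."""
    return min(all_dfs_codes(vlab, elab))


def E(u, v):
    return frozenset({u, v})


# Two hypothetical drawings of the same labeled graph (vertex ids permuted).
g1 = ({0: "X", 1: "Y", 2: "X", 3: "Z"},
      {E(0, 1): "a", E(1, 2): "b", E(0, 2): "a", E(2, 3): "c"})
g2 = ({0: "Z", 1: "X", 2: "X", 3: "Y"},
      {E(2, 3): "a", E(1, 3): "b", E(1, 2): "a", E(0, 1): "c"})
print(min_dfs_code(*g1) == min_dfs_code(*g2))  # True
```

Comparing minimum codes replaces an explicit isomorphism search, which is why gSpan can discard any non-minimum code it generates as a duplicate.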

gSpan Algorithm
1. GraphSet Projection.
2. Subgraph Mining.
   ◦ Makes crucial use of the minimum DFS code.
   ◦ Involves an expensive step: computing the minimum version of a given DFS code.
Remarks: gSpan is one of the best-known algorithms for graph mining. It is much more efficient than prior art (on both synthetic and real data sets), but still quite expensive.

Graph Mining (Final Remarks)
• Considerable work since gSpan.
• Constraints on patterns and pushing constraints into the "mining loop".
• X. Yan et al. Mining significant graph patterns by leap search. SIGMOD 2007.
• M. Deshpande et al. Frequent substructure-based approaches for classifying chemical compounds. IEEE TKDE 17:1036-1050, 2005.
• X. Yan et al. Graph indexing: A frequent structure-based approach. SIGMOD, 335-346, 2004.
• M. Hasan et al. ORIGAMI: Mining representative orthogonal graph patterns. In Proc. of ICDM, 153-162, 2007.
• F. Pennerath and A. Napoli. Mining frequent most informative subgraphs. In the 5th Int. Workshop on Mining and Learning with Graphs, 2007.
• X. Yan and J. Han. CloseGraph: Mining closed frequent graph patterns. SIGKDD, 286-295, 2003.
