Fay: Extensible Distributed Tracing from Kernels to Clusters

Úlfar Erlingsson, Google Inc.
Marcus Peinado, Microsoft Research
Simon Peter, Systems Group, ETH Zurich
Mihai Budiu, Microsoft Research

Wouldn’t it be nice if…
• We could know what our clusters were doing?
• We could ask any question, … easily, using one simple-to-use system.
• We could collect answers extremely efficiently … so cheaply we may even ask continuously.

Let’s imagine...
• Applying data-mining to cluster tracing
• Bag of words technique
  – Compare documents w/o structural knowledge
  – N-dimensional feature vectors
  – K-means clustering
• Can apply to clusters, too!
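The bag-of-words idea above can be sketched in a few lines of Python. This is a minimal illustration, not Fay code: the function name `feature_vectors`, the event tuples, and the call names `read`, `write`, and `close` are all hypothetical. Each "document" is a (machine, interval) pair and each "word" is a traced call, so every document becomes an N-dimensional count vector with no knowledge of call structure.

```python
from collections import Counter

def feature_vectors(events, vocabulary):
    """Turn (document_id, word) pairs into one count vector per document."""
    counts = {}
    for doc_id, word in events:
        counts.setdefault(doc_id, Counter())[word] += 1
    # One dimension per vocabulary entry; words never seen count as zero.
    return {doc: [c[w] for w in vocabulary] for doc, c in counts.items()}

# Hypothetical trace: ((machine, interval), call name) pairs.
events = [
    (("m1", 0), "read"), (("m1", 0), "read"),
    (("m1", 0), "write"), (("m2", 0), "close"),
]
vocabulary = ["close", "read", "write"]
vectors = feature_vectors(events, vocabulary)
```

Vectors built this way can be fed directly to a clustering step such as K-means.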

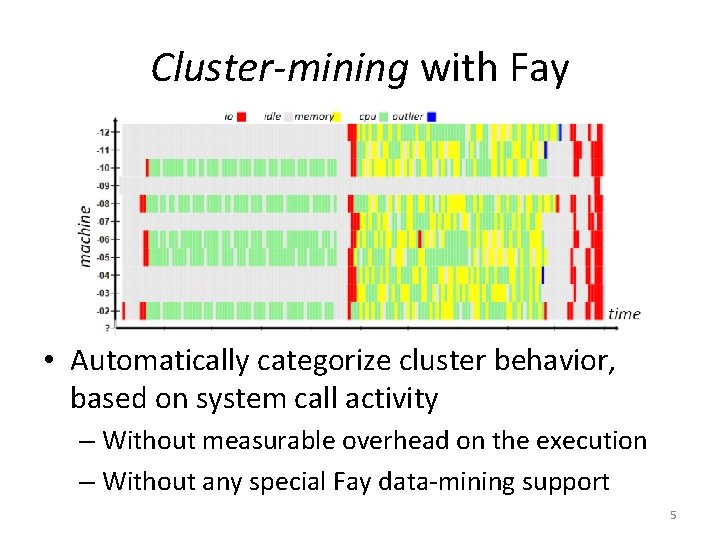


Cluster-mining with Fay
• Automatically categorize cluster behavior, based on system call activity
  – Without measurable overhead on the execution
  – Without any special Fay data-mining support

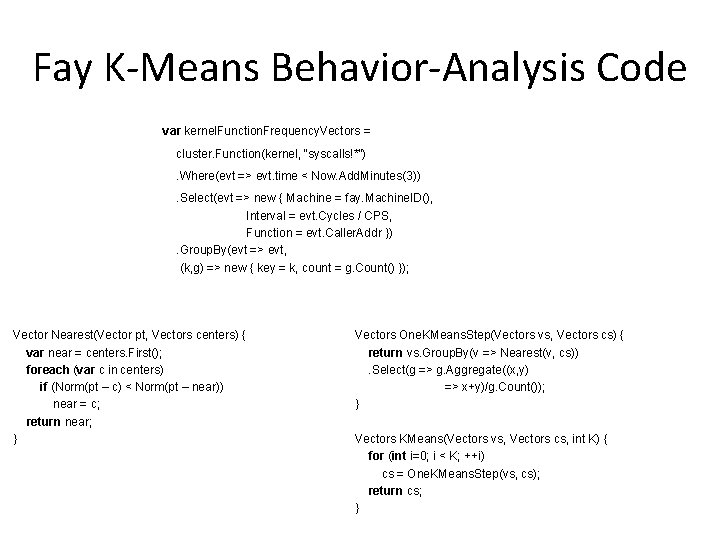

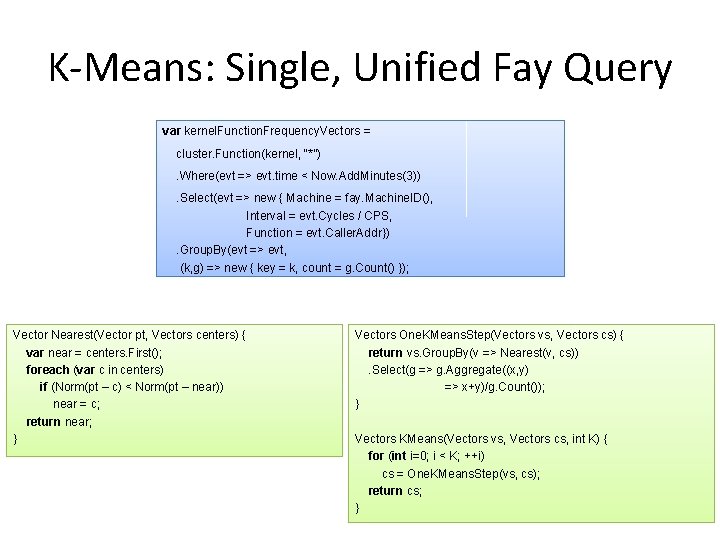

Fay K-Means Behavior-Analysis Code

    var kernelFunctionFrequencyVectors =
        cluster.Function(kernel, "syscalls!*")
        .Where(evt => evt.time < Now.AddMinutes(3))
        .Select(evt => new { Machine = fay.MachineID(),
                             Interval = evt.Cycles / CPS,
                             Function = evt.CallerAddr })
        .GroupBy(evt => evt,
                 (k, g) => new { key = k, count = g.Count() });

    Vector Nearest(Vector pt, Vectors centers) {
        var near = centers.First();
        foreach (var c in centers)
            if (Norm(pt - c) < Norm(pt - near))
                near = c;
        return near;
    }

    Vectors OneKMeansStep(Vectors vs, Vectors cs) {
        return vs.GroupBy(v => Nearest(v, cs))
                 .Select(g => g.Aggregate((x, y) => x+y)/g.Count());
    }

    Vectors KMeans(Vectors vs, Vectors cs, int K) {
        for (int i=0; i < K; ++i)
            cs = OneKMeansStep(vs, cs);
        return cs;
    }
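For readers without the Fay runtime, the three K-means helpers on this slide can be mirrored in plain Python. This is a sketch under assumptions: vectors are tuples of floats, the distance is Euclidean, and the sample points and starting centers are made up for illustration. As in the slide code, a center that attracts no points simply drops out of the next round.

```python
import math

def nearest(pt, centers):
    """Return the center closest to pt (first one wins on ties)."""
    return min(centers, key=lambda c: math.dist(pt, c))

def one_kmeans_step(vs, cs):
    """Group vectors by nearest center, then average each group."""
    groups = {}
    for v in vs:
        groups.setdefault(nearest(v, cs), []).append(v)
    return [tuple(sum(xs) / len(g) for xs in zip(*g)) for g in groups.values()]

def kmeans(vs, cs, steps):
    """Run a fixed number of refinement steps, as the slide's loop does."""
    for _ in range(steps):
        cs = one_kmeans_step(vs, cs)
    return cs

# Hypothetical data: two well-separated groups of 2-D points.
points = [(0.0, 0.0), (0.0, 2.0), (10.0, 10.0), (10.0, 12.0)]
centers = kmeans(points, [(0.0, 0.0), (10.0, 10.0)], steps=3)
```

With this input the centers settle after the first step at the mean of each group.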

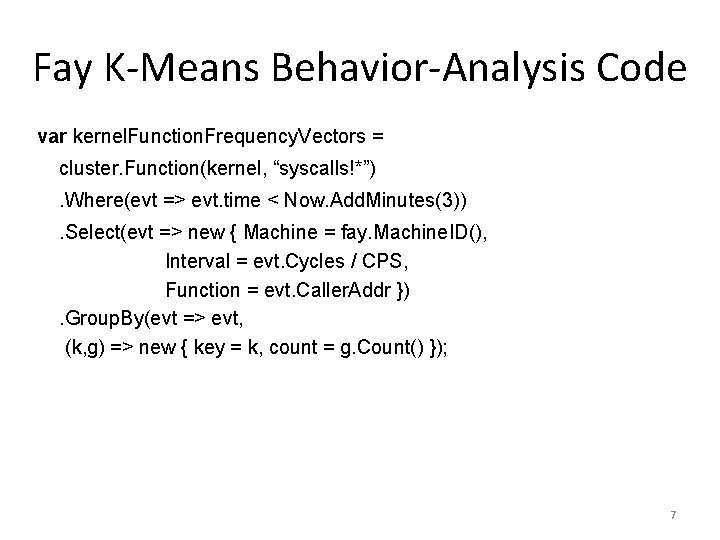


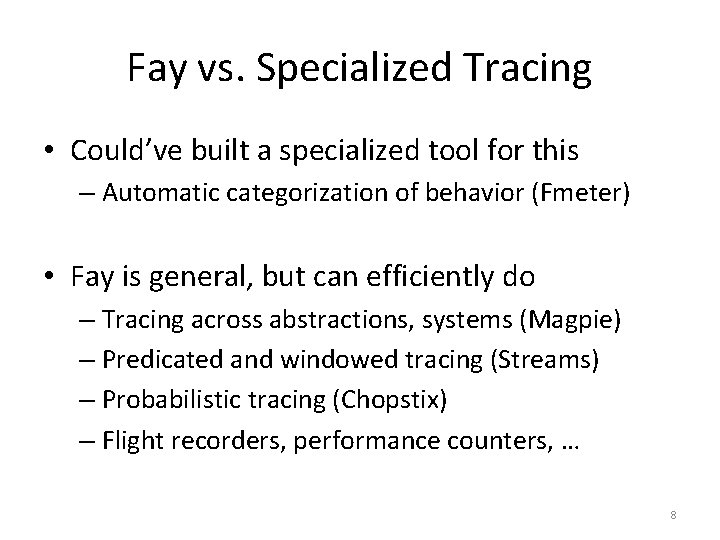

Fay vs. Specialized Tracing
• Could’ve built a specialized tool for this
  – Automatic categorization of behavior (Fmeter)
• Fay is general, but can efficiently do
  – Tracing across abstractions, systems (Magpie)
  – Predicated and windowed tracing (Streams)
  – Probabilistic tracing (Chopstix)
  – Flight recorders, performance counters, …

Key Takeaways
Fay: Flexible monitoring of distributed executions
  – Can be applied to existing, live Windows servers
1. Single query specifies both tracing & analysis
  – Easy to write & enables automatic optimizations
2. Pervasively data-parallel, scalable processing
  – Same model within machines & across clusters
3. Inline, safe machine-code at tracepoints
  – Allows us to do computation right at data source

K-Means: Single, Unified Fay Query

    var kernelFunctionFrequencyVectors =
        cluster.Function(kernel, "*")
        .Where(evt => evt.time < Now.AddMinutes(3))
        .Select(evt => new { Machine = fay.MachineID(),
                             Interval = evt.Cycles / CPS,
                             Function = evt.CallerAddr })
        .GroupBy(evt => evt,
                 (k, g) => new { key = k, count = g.Count() });

    Vector Nearest(Vector pt, Vectors centers) {
        var near = centers.First();
        foreach (var c in centers)
            if (Norm(pt - c) < Norm(pt - near))
                near = c;
        return near;
    }

    Vectors OneKMeansStep(Vectors vs, Vectors cs) {
        return vs.GroupBy(v => Nearest(v, cs))
                 .Select(g => g.Aggregate((x, y) => x+y)/g.Count());
    }

    Vectors KMeans(Vectors vs, Vectors cs, int K) {
        for (int i=0; i < K; ++i)
            cs = OneKMeansStep(vs, cs);
        return cs;
    }

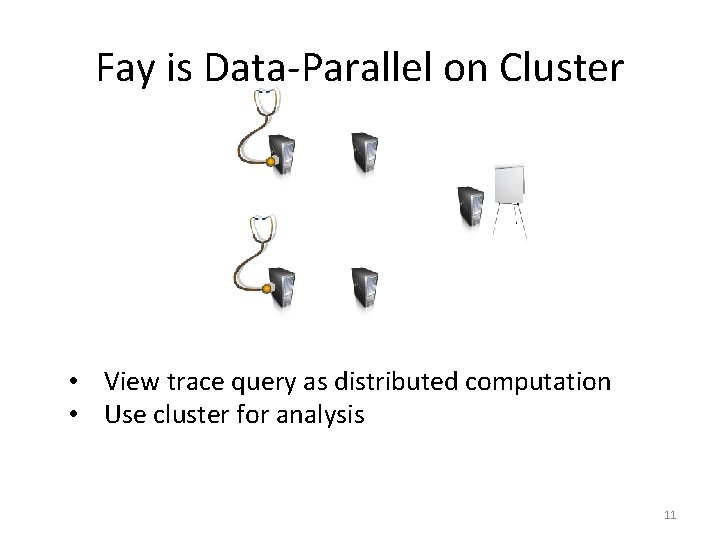

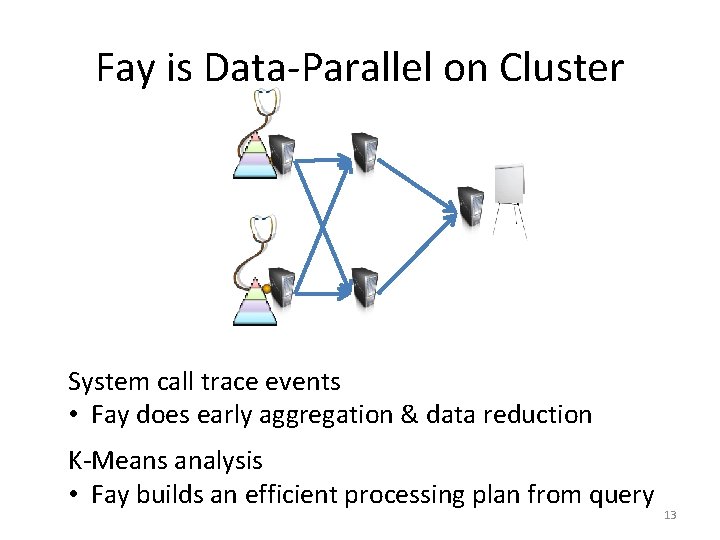

Fay is Data-Parallel on Cluster
• View trace query as distributed computation
• Use cluster for analysis

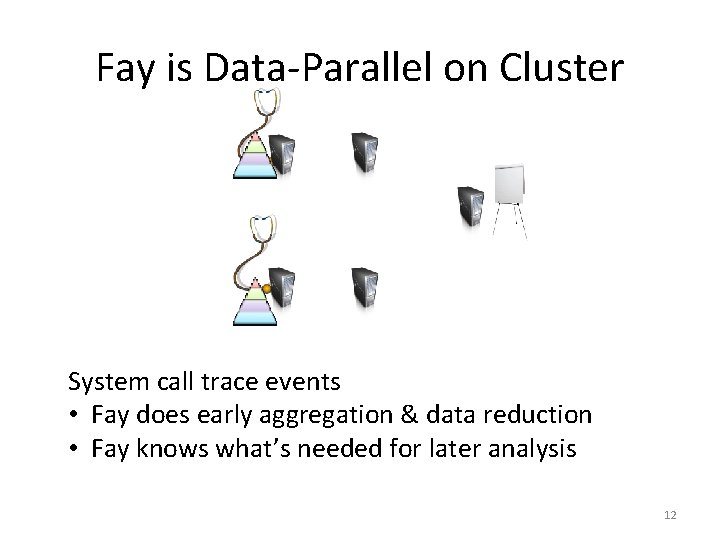

Fay is Data-Parallel on Cluster
System call trace events:
• Fay does early aggregation & data reduction
• Fay knows what’s needed for later analysis

Fay is Data-Parallel on Cluster
System call trace events:
• Fay does early aggregation & data reduction
K-Means analysis:
• Fay builds an efficient processing plan from query

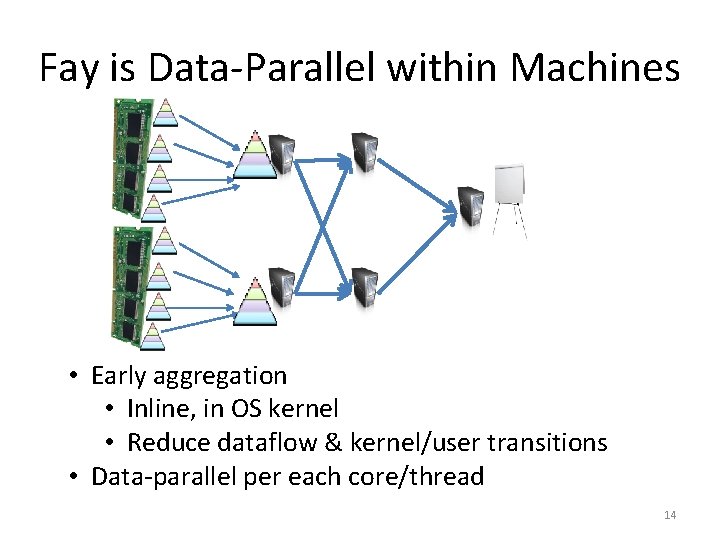

Fay is Data-Parallel within Machines
• Early aggregation
• Inline, in OS kernel
• Reduce dataflow & kernel/user transitions
• Data-parallel per each core/thread
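The effect of early, per-core aggregation can be illustrated with a small Python sketch. It is only an analogy for what the slide describes: the function names and the event lists are hypothetical, and ordinary lists stand in for per-core trace buffers. Each core counts its own events without shared state, and only the compact summaries are merged afterwards.

```python
from collections import Counter

def aggregate_per_core(events_by_core):
    """Each core summarizes its own events locally (no shared state)."""
    return [Counter(events) for events in events_by_core]

def merge(partials):
    """Combine the small per-core summaries into one machine-level summary."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total

# Hypothetical events observed on two cores.
events_by_core = [["read", "read", "write"], ["read", "close"]]
partials = aggregate_per_core(events_by_core)
summary = merge(partials)

raw_events = sum(len(events) for events in events_by_core)
shipped_records = sum(len(partial) for partial in partials)
```

Here five raw events shrink to four summary records; with realistic traces, where the same function fires many times per interval, the reduction is far larger.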

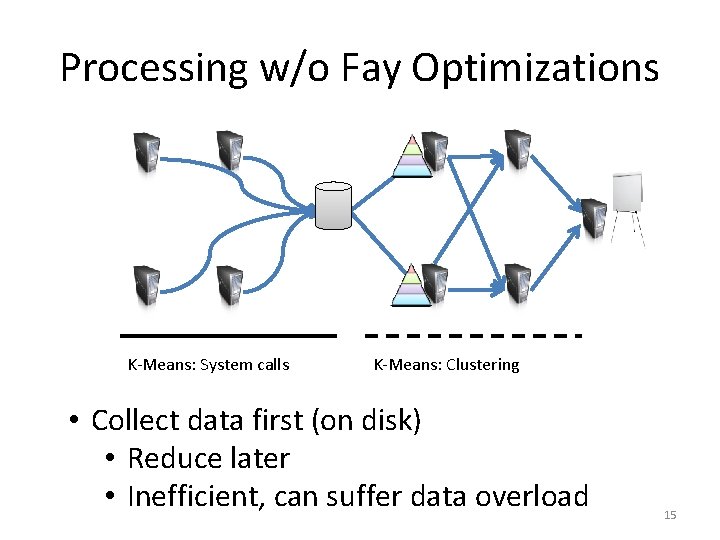

Processing w/o Fay Optimizations
[Diagram stages: K-Means: System calls; K-Means: Clustering]
• Collect data first (on disk)
• Reduce later
• Inefficient, can suffer data overload

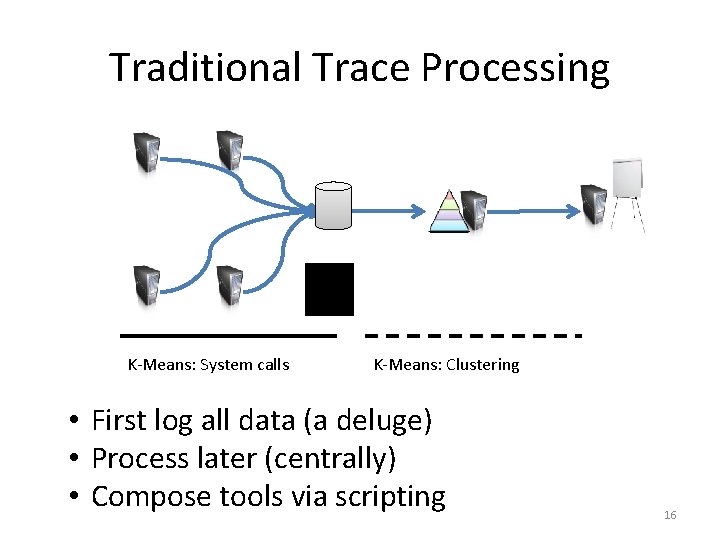

Traditional Trace Processing
[Diagram stages: K-Means: System calls; K-Means: Clustering]
• First log all data (a deluge)
• Process later (centrally)
• Compose tools via scripting

Takeaways so far
Fay: Flexible monitoring of distributed executions
1. Single query specifies both tracing & analysis
2. Pervasively data-parallel, scalable processing

Safety of Fay Tracing Probes
• A variant of XFI used for safety [OSDI ’06]
  – Works well in the kernel or any address space
  – Can safely use existing stacks, etc.
  – Instead of language interpreter (DTrace)
  – Arbitrary, efficient, stateful computation
• Probes can access thread-local/global state
• Probes can try to read any address
  – I/O registers are protected

Key Takeaways, Again
Fay: Flexible monitoring of distributed executions
1. Single query specifies both tracing & analysis
2. Pervasively data-parallel, scalable processing
3. Inline, safe machine-code at tracepoints

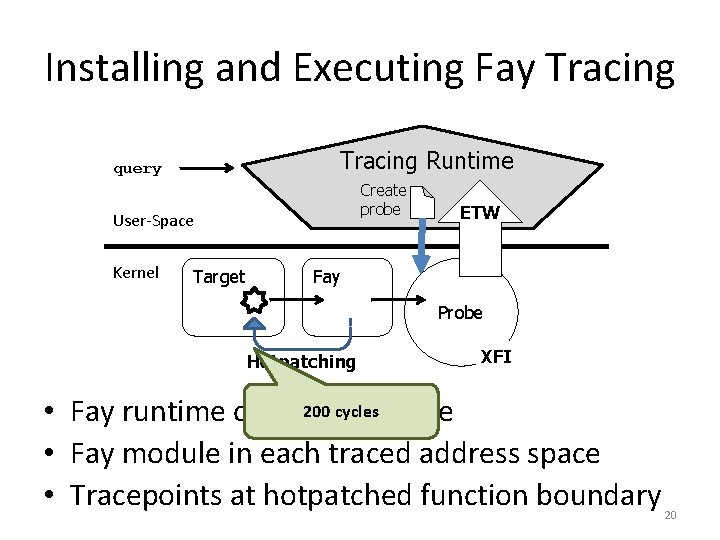

Installing and Executing Fay Tracing
[Diagram labels: Tracing Runtime, query, Create probe, User-Space, Kernel, Target, ETW, Fay, Probe, Hotpatching, XFI, 200 cycles]
• Fay runtime on each machine
• Fay module in each traced address space
• Tracepoints at hotpatched function boundary

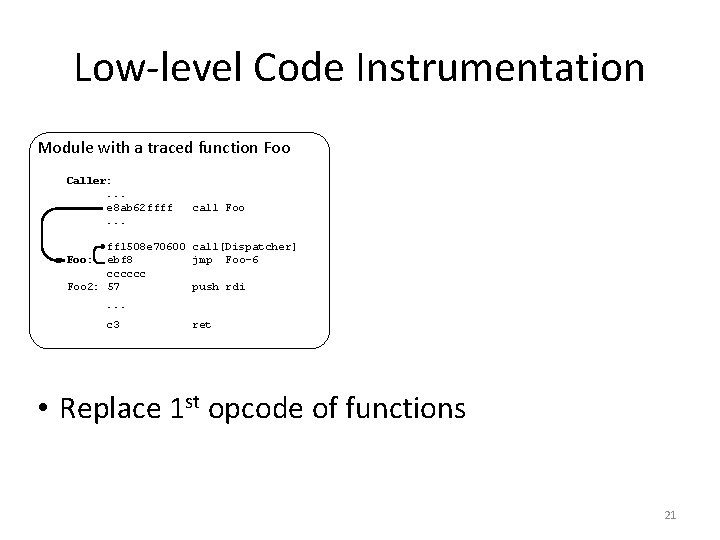

Low-level Code Instrumentation

Module with a traced function Foo:

    Caller:
            ...
            e8ab62ffff      call Foo
            ...

            ff1508e70600    call [Dispatcher]
    Foo:    ebf8            jmp Foo-6
            cccccc
    Foo2:   57              push rdi
            ...
            c3              ret

• Replace 1st opcode of functions

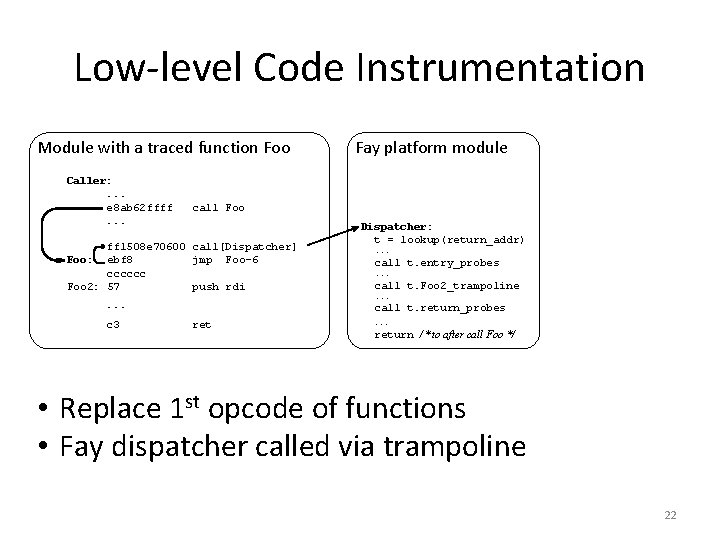

Low-level Code Instrumentation

Module with a traced function Foo:

    Caller:
            ...
            e8ab62ffff      call Foo
            ...

            ff1508e70600    call [Dispatcher]
    Foo:    ebf8            jmp Foo-6
            cccccc
    Foo2:   57              push rdi
            ...
            c3              ret

Fay platform module:

    Dispatcher:
            t = lookup(return_addr)
            ...
            call t.entry_probes
            ...
            call t.Foo2_trampoline
            ...
            call t.return_probes
            ...
            return /* to after call Foo */

• Replace 1st opcode of functions
• Fay dispatcher called via trampoline

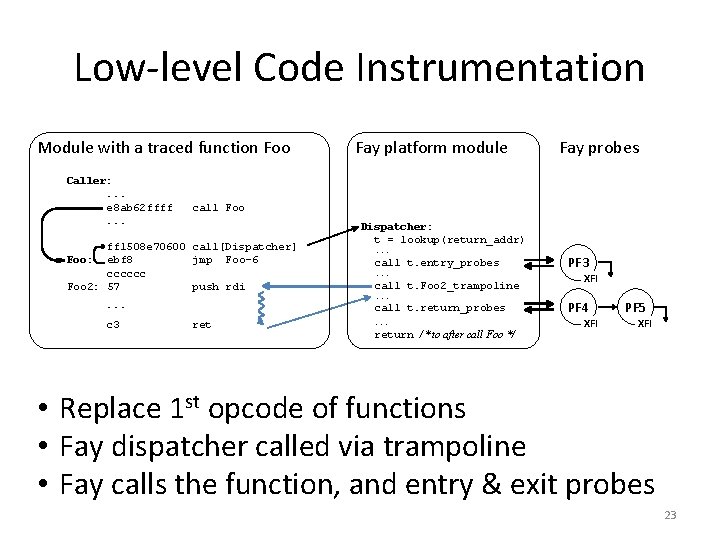

Low-level Code Instrumentation

Module with a traced function Foo:

    Caller:
            ...
            e8ab62ffff      call Foo
            ...

            ff1508e70600    call [Dispatcher]
    Foo:    ebf8            jmp Foo-6
            cccccc
    Foo2:   57              push rdi
            ...
            c3              ret

Fay platform module:

    Dispatcher:
            t = lookup(return_addr)
            ...
            call t.entry_probes
            ...
            call t.Foo2_trampoline
            ...
            call t.return_probes
            ...
            return /* to after call Foo */

Fay probes: PF3 (XFI), PF4 (XFI), PF5 (XFI)

• Replace 1st opcode of functions
• Fay dispatcher called via trampoline
• Fay calls the function, and entry & exit probes
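The dispatcher's control flow can be mimicked at a much higher level in Python. This is only an analogy for the machine-code mechanism on the slide: the `Tracepoint` class, the `hotpatch` decorator, and the name-keyed table are hypothetical stand-ins for the hotpatched jump, the `Foo2` trampoline, and `lookup(return_addr)`.

```python
class Tracepoint:
    def __init__(self, original):
        self.original = original   # stands in for the Foo2 trampoline
        self.entry_probes = []
        self.return_probes = []

tracepoints = {}  # function name -> Tracepoint; stands in for lookup(return_addr)

def dispatcher(name, *args):
    """Run entry probes, the original function, then return probes."""
    t = tracepoints[name]
    for probe in t.entry_probes:
        probe(args)
    result = t.original(*args)
    for probe in t.return_probes:
        probe(result)
    return result  # back to the caller, as if Foo had returned directly

def hotpatch(func):
    """Redirect calls to func through the dispatcher."""
    tracepoints[func.__name__] = Tracepoint(func)
    def patched(*args):
        return dispatcher(func.__name__, *args)
    return patched

@hotpatch
def foo(x):
    return x + 1

log = []
tracepoints["foo"].entry_probes.append(lambda args: log.append(("entry", args)))
tracepoints["foo"].return_probes.append(lambda r: log.append(("return", r)))
value = foo(41)
```

The caller still sees an ordinary call to `foo`; the probes observe the arguments and the return value without the traced function being modified beyond its entry point.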

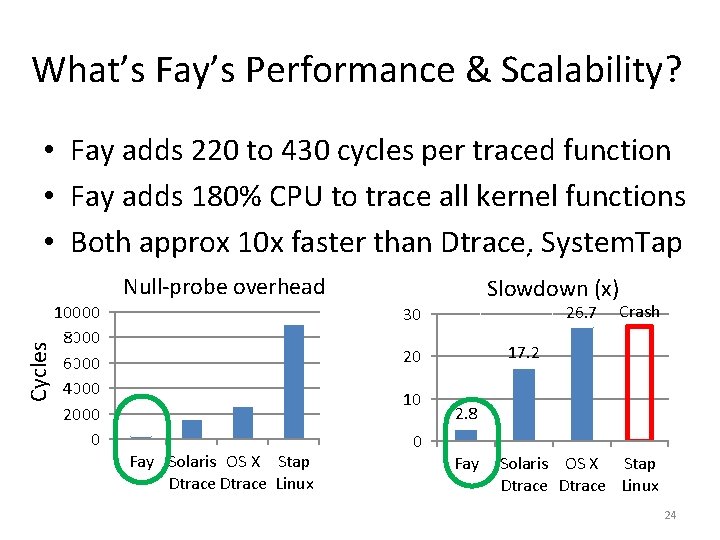

What’s Fay’s Performance & Scalability?
• Fay adds 220 to 430 cycles per traced function
• Fay adds 180% CPU to trace all kernel functions
• Both approx 10x faster than DTrace, SystemTap
[Figure: two bar charts comparing Fay, DTrace (Solaris), DTrace (OS X), and SystemTap (Linux): null-probe overhead in cycles (axis 0 to 10000), and slowdown (x); surviving bar labels: 2.8, 17.2, 26.7, Crash]
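The two headline numbers can be put in more familiar units with a little arithmetic. In the sketch below, the 2.5 GHz clock rate is an assumption chosen only for illustration (the slide does not state the test machine's frequency), and the helper names are hypothetical. Adding 180% CPU corresponds to a 2.8x slowdown, which matches the 2.8 label that survives from the chart.

```python
def slowdown(added_cpu_fraction):
    """Total cost relative to the untraced run: 1 + added overhead."""
    return 1.0 + added_cpu_fraction

def cycles_to_ns(cycles, clock_ghz):
    """Convert a cycle count to nanoseconds at a given clock rate."""
    return cycles / clock_ghz

ASSUMED_CLOCK_GHZ = 2.5  # assumption for illustration, not from the slides

fay_slowdown = slowdown(1.80)                     # 180% extra CPU
low_ns = cycles_to_ns(220, ASSUMED_CLOCK_GHZ)     # cheapest traced call
high_ns = cycles_to_ns(430, ASSUMED_CLOCK_GHZ)    # costliest traced call
```

Under that assumed clock rate, each traced call costs on the order of 90 to 170 nanoseconds.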

Fay Scalability on a Cluster
• Fay tracing memory allocations, in a loop:
  – Ran workload on a 128-node, 1024-core cluster
  – Spread work over 128 to 1,280,000 threads
  – 100% CPU utilization
• Fay overhead was 1% to 11% (mean 7.8%)

More Fay Implementation Details
• Details of query-plan optimizations
• Case studies of different tracing strategies
• Examples of using Fay for performance analysis
• Fay is based on LINQ and Windows specifics
  – Could build on Linux using Ftrace, Hadoop, etc.
• Some restrictions apply currently
  – E.g., skew towards batch processing due to Dryad

Conclusion
• Fay: Flexible tracing of distributed executions
• Both expressive and efficient
  – Unified trace queries
  – Pervasive data-parallelism
  – Safe machine-code probe processing
• Often as efficient as purpose-built tools

Backup

A Fay Trace Query

    from io in cluster.Function("iolib!Read")
    where io.time < Now.AddMinutes(5)
    let size = io.Arg(2) // request size in bytes
    group io by size/1024 into g
    select new { sizeInKilobytes = g.Key,
                 countOfReadIOs = g.Count() };

• Aggregates read activity in iolib module
• Across cluster, both user-mode & kernel
• Over 5 minutes
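The aggregation this query performs can be reproduced offline in Python on a list of request sizes. This is a sketch, not the Fay runtime: the function name and the sample sizes are hypothetical, and the five-minute time window is omitted. Integer division by 1024 puts each request into its kilobyte bucket, just as `size/1024` does in the query.

```python
from collections import Counter

def read_size_histogram(request_sizes_bytes):
    """Count read requests per kilobyte bucket (size // 1024)."""
    return Counter(size // 1024 for size in request_sizes_bytes)

# Hypothetical request sizes, in bytes, as the probe would see them.
sizes = [512, 1024, 1500, 4096, 5000]
histogram = read_size_histogram(sizes)
```

Each key of `histogram` plays the role of `sizeInKilobytes` and each value the role of `countOfReadIOs`.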

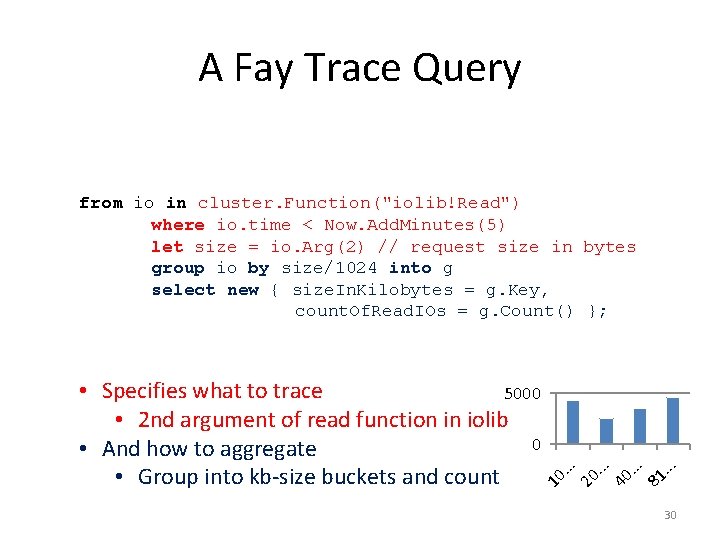

A Fay Trace Query
[Figure: chart of the query’s output]
• Specifies what to trace
  – 2nd argument of read function in iolib
• And how to aggregate
  – Group into KB-size buckets and count

    from io in cluster.Function("iolib!Read")
    where io.time < Now.AddMinutes(5)
    let size = io.Arg(2) // request size in bytes
    group io by size/1024 into g
    select new { sizeInKilobytes = g.Key,
                 countOfReadIOs = g.Count() };
