Fast Multiprocessor Memory Allocation and Garbage Collection
Based on Hans-J. Boehm, HP Laboratories, December 2000
Presented by Yair Sade

About the Author
- Works at HP Labs.
- Researches high-performance GCs.
- The gcj runtime is based on his ideas.
- There are also commercial GCs based on his ideas (Geodesic GC).
- Etc.

Introduction
- Large-scale commercial Java applications are becoming more common.
- Small-scale multiprocessor machines are becoming more economical and popular.
- Multiprocessors are becoming common even on desktop machines.

Introduction – cont.
- Garbage collectors need to be highly efficient.
- Especially in multiprocessor environments.
- Otherwise, the throughput of multi-threaded applications may drop dramatically.

Introduction – cont.
- GC pause time should be minimized.
- The GC itself should be able to run in parallel.

Motivation
- Create a generic, scalable GC.
- The GC should be transparent.
- Emphasis on small-scale (desktop) multiprocessor machines.
- Should not degrade performance on single-processor machines.

Motivation – cont.
- Throughput should not degrade as more clients and processors are added (a global lock is bad).
- The GC itself should be scalable.
- Even single-threaded applications should benefit somewhat from multiprocessors.

This Work
- A new GC that meets the above demands.
- Plugs in as a malloc/free replacement.
- Design based on the existing mark-and-sweep GC of T. Endo, K. Taura and A. Yonezawa (ETY 97).

Roadmap
- Related Work.
- Algorithm description.
- Parallelism and Performance Issues.
- GC-friendly TLS.
- Benchmarks.
- Summary.

Related Work
- Multi-threaded mutators (E. Petrank, 2000).
- Thread-local storage (B. Steensgaard, 2000).
- Taura and Yonezawa's work:
  - Emphasis on high-end systems.
  - No single-threaded support, two different libraries.
  - No malloc/free plug-in.
  - No thread-local storage.

Algorithm Context
- Based on Boehm's mark / lazy-sweep collector.
- The heap is organized as a "big bag of pages".
- Each page holds objects of a single size.
- Each page has a descriptor with mark bits for its allocated objects.

Algorithm Context – cont.
- Unmarked objects are reclaimed incrementally during allocation calls.
- The GC works conservatively and treats everything as a potential pointer.
- Type information (from the compiler or programmer) may be used.
- But practice shows that conservative GCs work fine.
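
To illustrate what "treats everything as a potential pointer" can mean in a big-bag-of-pages heap, here is a minimal sketch. The heap layout, the names `heap`, `page_obj_size` and `object_base`, and the page size are all assumptions made for this example, not details from the paper. A word counts as a pointer only if it falls inside the heap and lands on a page that holds objects; it is then rounded down to the start of the object it points into.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PAGE_BYTES 4096u
#define N_PAGES    4u

/* Hypothetical heap: a few pages, each holding objects of one size. */
static unsigned char heap[N_PAGES * PAGE_BYTES];
static size_t page_obj_size[N_PAGES] = { 16, 32, 0, 64 }; /* 0 = page unused */

/* Treat `word` as a potential pointer. Return the base address of the
 * object it points into, or NULL if it cannot refer to a heap object. */
static void *object_base(uintptr_t word)
{
    uintptr_t lo = (uintptr_t)heap;
    if (word < lo || word >= lo + sizeof heap)
        return NULL;                          /* outside the heap */
    size_t off  = (size_t)(word - lo);
    size_t page = off / PAGE_BYTES;
    assert(page < N_PAGES);
    size_t size = page_obj_size[page];
    if (size == 0)
        return NULL;                          /* page holds no objects */
    size_t in_page = off % PAGE_BYTES;
    return heap + page * PAGE_BYTES + (in_page / size) * size;
}
```

Because every page holds objects of a single size, finding the object base needs only one division by the page's object size.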

Algorithm Context – cont.
- The GC never copies or moves objects.
- Generational GC is currently not supported.
- However, the techniques mentioned can be used in generational GCs.

Mark Phase
- Reachable objects (grey) are pushed onto the stack.
- Each stack entry contains a base address and a mark descriptor.
- The mark descriptor gives the locations of all possible pointers relative to the base address.
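
A minimal sketch of a mark stack with (base address, descriptor) entries follows. It assumes a toy object type `Obj` with a fixed number of words and a bitmap descriptor in which bit i means "word i may hold a pointer"; these types and names are hypothetical. For brevity it also treats every flagged word as an exact pointer to another `Obj`, which a conservative collector would not do.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_WORDS 8          /* hypothetical object size, in words */
#define STACK_CAP 64

typedef struct Obj {
    bool  marked;
    void *words[MAX_WORDS];  /* object payload: pointers or plain data */
} Obj;

typedef struct {
    Obj     *base;           /* base address of the object to scan */
    uint32_t descr;          /* bit i set: word i may hold a pointer */
} MarkEntry;

static MarkEntry mark_stack[STACK_CAP];
static size_t    mark_sp;

static void push(Obj *o, uint32_t descr)
{
    if (o == NULL || o->marked) return;
    assert(mark_sp < STACK_CAP);
    o->marked = true;        /* grey: marked, fields not yet scanned */
    mark_stack[mark_sp++] = (MarkEntry){ o, descr };
}

/* Drain the stack; every object uses descriptor `descr` for simplicity.
 * Returns the number of objects scanned. */
static size_t mark_all(uint32_t descr)
{
    size_t scanned = 0;
    while (mark_sp > 0) {
        MarkEntry e = mark_stack[--mark_sp];
        for (int i = 0; i < MAX_WORDS; i++)
            if (e.descr & (1u << i))
                push((Obj *)e.base->words[i], descr);
        scanned++;
    }
    return scanned;
}
```

Marking an object when it is pushed, rather than when it is popped, keeps cycles from being pushed twice.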

Mark Phase – cont.
- Initially, the roots are pushed onto the stack.
- Marking iterates over the stack contents.
- Empty pages are recycled immediately.
- Nearly full pages are ignored.
- Remaining pages are enqueued by object size for later sweeping.

Allocation
- Large objects are allocated at page level.
- The allocator maintains free lists for the various small object sizes.
- When the free list for an object size is empty, enqueued pages are swept.
- If there are no such pages, a new page is acquired.
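
The small-object path above can be sketched for a single size class as follows. The `Page` layout, the one-flag-per-object marks, and the use of `calloc` to stand in for acquiring a new page are assumptions for illustration only.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdlib.h>

#define OBJS_PER_PAGE 4
#define OBJ_SIZE      32

typedef struct FreeObj { struct FreeObj *next; } FreeObj;

typedef struct Page {
    struct Page *next;                        /* link in the sweep queue */
    bool         mark[OBJS_PER_PAGE];         /* set by the last mark phase */
    union { FreeObj link; unsigned char bytes[OBJ_SIZE]; } data[OBJS_PER_PAGE];
} Page;

static FreeObj *free_list;     /* free objects of this size class */
static Page    *sweep_queue;   /* pages waiting to be swept */

/* Put every unmarked object of `p` on the free list and clear the marks. */
static void sweep_page(Page *p)
{
    assert(p != NULL);
    for (int i = 0; i < OBJS_PER_PAGE; i++) {
        if (!p->mark[i]) {
            FreeObj *f = &p->data[i].link;
            f->next = free_list;
            free_list = f;
        }
        p->mark[i] = false;
    }
}

static void *alloc_small(void)
{
    while (free_list == NULL) {
        Page *p = sweep_queue;
        if (p != NULL) {                      /* lazy sweep, on demand */
            sweep_queue = p->next;
            sweep_page(p);
        } else {                              /* nothing to sweep: new page */
            p = calloc(1, sizeof *p);
            if (p == NULL) return NULL;
            sweep_page(p);                    /* all objects are free */
        }
    }
    FreeObj *f = free_list;
    free_list = f->next;
    return f;
}
```

Sweeping only when a free list runs dry spreads the reclamation cost over allocation calls instead of paying it all at collection time.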

Parallel Allocation
- Some JVMs use per-thread allocation arenas to avoid synchronization on every small allocation request.
- The size of an arena is limited, and an unused arena leads to unnecessary memory consumption.
- Our algorithm uses an improved scheme, with free lists instead of contiguous arenas.

Parallel Allocation – cont.
- Each thread has 64 or 48 free-list headers for different object sizes (no locks).
- Larger objects are allocated from a global free list (lock required).
- Since large-object allocation is expensive anyway, the synchronization cost is amortized.

Parallel Allocation – cont.
- Initially, a thread allocates from the global free list.
- After a threshold, it starts allocating from its thread-local free list.
- This avoids allocating unnecessary storage.

Parallel Allocation – cont.
- Allocating from the thread-local free list does not require synchronization.
- Locks are required only for taking a page from the global list or from the waiting-to-be-swept queue.
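
A minimal sketch of the fast and slow allocation paths follows. It simplifies the scheme described above: instead of taking a whole page under the lock, the hypothetical `refill` moves a small batch of objects from a global list, and `lock_count` exists only to show how rarely the lock is taken. `BATCH` and all names are assumptions.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define N_CLASSES 48            /* per-thread free-list headers */
#define BATCH     8             /* hypothetical refill size */

typedef struct FreeObj { struct FreeObj *next; } FreeObj;

static FreeObj        *global_free[N_CLASSES];
static pthread_mutex_t global_lock = PTHREAD_MUTEX_INITIALIZER;
static unsigned        lock_count;            /* for illustration only */

static _Thread_local FreeObj *local_free[N_CLASSES];

/* Move up to BATCH objects from the global list to this thread's list. */
static void refill(int cls)
{
    pthread_mutex_lock(&global_lock);
    lock_count++;
    for (int i = 0; i < BATCH && global_free[cls] != NULL; i++) {
        FreeObj *f = global_free[cls];
        global_free[cls] = f->next;
        f->next = local_free[cls];
        local_free[cls] = f;
    }
    pthread_mutex_unlock(&global_lock);
}

static void *alloc_local(int cls)
{
    assert(cls >= 0 && cls < N_CLASSES);
    if (local_free[cls] == NULL)
        refill(cls);                  /* slow path: takes the lock */
    FreeObj *f = local_free[cls];
    if (f == NULL) return NULL;       /* global list exhausted too */
    local_free[cls] = f->next;        /* fast path: no synchronization */
    return f;
}
```

With a batch of 8, sixteen allocations from one size class take the lock only twice; the other fourteen touch only thread-local data.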

Parallel Marking
- At process startup, N-1 marker threads are created.
- When a mutator initiates a GC (on allocation), it stops all other mutators (stop the world) and wakes up the marker threads.

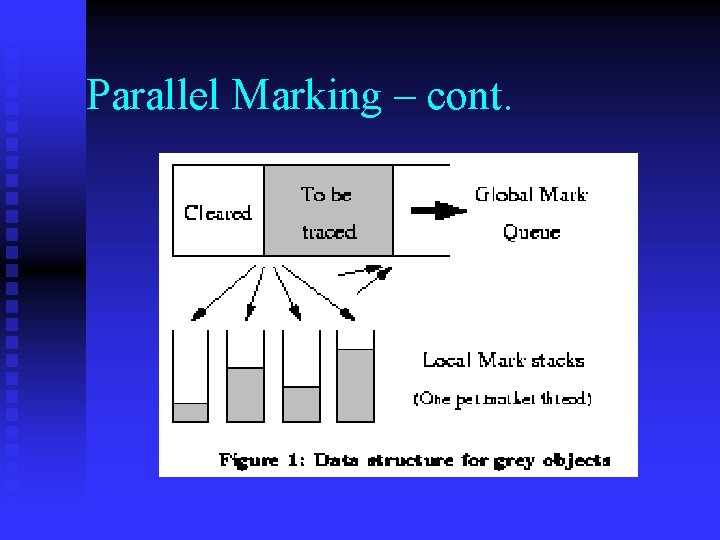

Parallel Marking – cont.
- The mark stack discussed before is used as a global list of waiting mark tasks.
- In effect, the global mark stack acts as a queue.
- Initially, the mark stack is filled with the roots.

Parallel Marking – cont.
- Each marker thread atomically removes 1-5 items from the global stack and inserts them into its thread-local stack.
- The marker thread iteratively marks from its local stack.
- When the local stack is emptied, more items are fetched from the global stack.
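
The batch transfer from the global stack to a marker's local stack might look like the sketch below. The slides only say the removal is atomic; the mutex used here is an assumed stand-in for whatever mechanism the collector actually uses, and `grab_work` and `MarkEntry` are hypothetical names.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define GLOBAL_CAP 256
#define MAX_GRAB   5            /* a marker takes 1-5 entries at a time */

typedef struct { void *base; unsigned descr; } MarkEntry;

static MarkEntry       global_stack[GLOBAL_CAP];
static size_t          global_sp;
static pthread_mutex_t global_lock = PTHREAD_MUTEX_INITIALIZER;

/* Move up to MAX_GRAB entries from the global stack into `local`.
 * Returns the number of entries taken (0 if the global stack is empty). */
static size_t grab_work(MarkEntry *local)
{
    assert(local != NULL);
    pthread_mutex_lock(&global_lock);
    size_t n = global_sp < MAX_GRAB ? global_sp : MAX_GRAB;
    for (size_t i = 0; i < n; i++)
        local[i] = global_stack[--global_sp];
    pthread_mutex_unlock(&global_lock);
    return n;
}
```

Taking a few entries at a time leaves work on the global stack for the other markers while still amortizing the synchronization cost over several objects.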

Parallel Marking – cont.

Parallel Marking – cont.
- Objects might be marked twice.
- Items are returned to the global stack in the following scenarios:
  - When a marker thread discovers that the global stack is empty and there are entries in its local stack (for load balancing).
  - On local stack overflow.
- Those occasions require a lock, but occur rarely.

Parallel Marking – Cheats and Tricks
- A shared pointer to the next mark task is maintained with compare-and-swap (to avoid unneeded scans).
- Large objects are split before the marking phase to increase parallelism.
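
One way to read the compare-and-swap remark is that markers claim the next task by advancing a shared position atomically. The sketch below shows that general pattern with C11 atomics; the fixed task count and the names `next_task` and `claim_task` are assumptions, and the collector's actual data structure is not described on the slides.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>

#define N_TASKS 4

static _Atomic size_t next_task;      /* index of the first unclaimed task */

/* Claim one task index, or return N_TASKS when none is left. */
static size_t claim_task(void)
{
    size_t cur = atomic_load(&next_task);
    while (cur < N_TASKS &&
           !atomic_compare_exchange_weak(&next_task, &cur, cur + 1)) {
        /* CAS failed: `cur` now holds the fresh value; retry. */
    }
    assert(cur <= N_TASKS);
    return cur;
}
```

Each index is handed to exactly one caller, so no two markers start on the same task, and no lock is needed for the hand-off.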

Mark bit representation
- Each page has an array of mark bits.
- A problem may arise when two marker threads attempt to update adjacent mark bits concurrently.
- We need an atomic way to update a mark bit.

Mark bit representation – cont.
- How to avoid two threads updating the same word:
  - By read and compare-and-swap (an expensive operation).
  - By using a byte instead of a bit. (To compensate for the space loss, object sizes are required to be a multiple of 2 words.)
- The mark bytes occupy 1/8 of the heap size.
- This takes extra space and causes more cache misses. However, it reduces the number of instructions.
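
The two options can be contrasted in a short sketch using C11 atomics. The array sizes and function names are assumptions. With one bit per object, setting a bit is a read-modify-write on a shared word and needs a compare-and-swap loop; with one byte per object, each object has its own location, so a single store suffices.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define N_OBJS 64

/* Option 1: one bit per object, updated with a compare-and-swap loop. */
static _Atomic uint32_t mark_bits[N_OBJS / 32];

static void set_mark_bit(size_t i)
{
    assert(i < N_OBJS);
    uint32_t bit = (uint32_t)1 << (i % 32);
    uint32_t old = atomic_load(&mark_bits[i / 32]);
    while (!(old & bit) &&
           !atomic_compare_exchange_weak(&mark_bits[i / 32], &old, old | bit)) {
        /* Another thread changed the word; `old` was reloaded, retry. */
    }
}

static bool bit_is_marked(size_t i)
{
    return (atomic_load(&mark_bits[i / 32]) >> (i % 32)) & 1u;
}

/* Option 2: one byte per object. Neighbouring objects use different
 * bytes, so a plain (relaxed) store cannot clobber another mark. */
static _Atomic unsigned char mark_bytes[N_OBJS];

static void set_mark_byte(size_t i)
{
    assert(i < N_OBJS);
    atomic_store_explicit(&mark_bytes[i], 1, memory_order_relaxed);
}

static bool byte_is_marked(size_t i)
{
    return atomic_load_explicit(&mark_bytes[i], memory_order_relaxed) != 0;
}
```

The byte version trades space for simplicity: on a machine with 4-byte words, one mark byte per 2-word (8-byte) granule gives the 1/8 overhead quoted above.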

GC-friendly TLS
- We need a way to quickly obtain a pointer to the TLS data.
- Most JVMs use a register to hold thread context data.
- The operating system provides services for accessing thread-local storage.

GC-friendly TLS – cont.
- On POSIX:
  - pthread_key_create.
  - pthread_setspecific.
  - pthread_getspecific.
- On Windows:
  - TlsAlloc.
  - TlsSetValue.
  - TlsGetValue.
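
For reference, this is how the POSIX calls listed above are typically combined to give each thread its own state. The `ThreadState` struct and the `thread_state` helper are hypothetical; only the `pthread_*` functions are real API.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>

typedef struct { void *free_list[48]; } ThreadState;   /* hypothetical */

static pthread_key_t  state_key;
static pthread_once_t state_once = PTHREAD_ONCE_INIT;

static void make_key(void)
{
    /* `free` runs on the stored value when a thread exits. */
    int rc = pthread_key_create(&state_key, free);
    assert(rc == 0);
    (void)rc;
}

/* Return this thread's state, creating it on first use. */
static ThreadState *thread_state(void)
{
    pthread_once(&state_once, make_key);
    ThreadState *ts = pthread_getspecific(state_key);
    if (ts == NULL) {
        ts = calloc(1, sizeof *ts);
        if (ts != NULL && pthread_setspecific(state_key, ts) != 0) {
            free(ts);
            ts = NULL;
        }
    }
    return ts;
}
```

Every allocation would pass through a lookup like `pthread_getspecific`, which is why its cost matters so much for a thread-local allocator.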

GC-friendly TLS – cont.
- Getting the TLS value should be as fast as possible.
- It needs to be called on every allocation in order to get the thread's free-list headers.
- The performance of pthread_getspecific is inadequate.

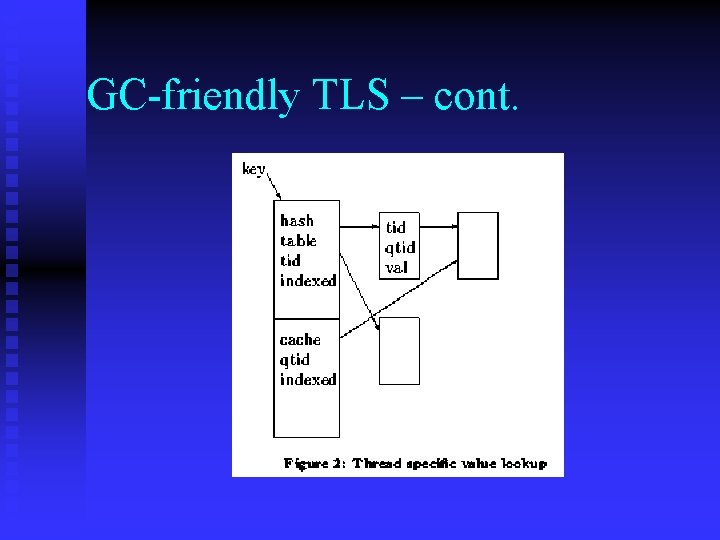

GC-friendly TLS – cont.
- A high-performance TLS implementation.
- Each TLS key is actually a pointer to a data structure containing two kinds of data:
  - A hash table mapping thread IDs to the associated TLS.
  - A hash table for fast lookup (cache).

GC-friendly TLS – cont.
- We calculate a quick thread ID (qtid) and look it up in the fast-lookup cache.
- If the entry is not in the cache, we look it up in the main hash table.
- Access on a cache hit requires only 4 memory references.
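
The two-level lookup can be sketched as below. This is a single-threaded illustration under stated assumptions: the table sizes, the `Entry` layout and the function names are hypothetical, the qtid is passed in as a parameter because the slides do not say how it is computed, and locking on insertion and memory-ordering concerns are left out. The sketch does not try to reproduce the 4-memory-reference figure.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define CACHE_SIZE 64          /* hypothetical sizes */
#define TABLE_SIZE 16

typedef struct Entry {
    uintptr_t     qtid;        /* quick thread id last seen for the owner */
    uintptr_t     thread_id;   /* full thread id, used by the slow path */
    void         *value;       /* that thread's TLS value */
    struct Entry *next;        /* chain in the main hash table */
} Entry;

typedef struct {
    Entry *cache[CACHE_SIZE];  /* fast lookup, indexed by qtid hash */
    Entry *table[TABLE_SIZE];  /* main table, indexed by thread id hash */
} TlsKey;

static unsigned slow_lookups;  /* for illustration only */

static void *tls_get(TlsKey *key, uintptr_t qtid, uintptr_t thread_id)
{
    assert(key != NULL);
    Entry *e = key->cache[qtid % CACHE_SIZE];
    if (e != NULL && e->qtid == qtid)
        return e->value;                       /* cache hit */
    slow_lookups++;
    for (e = key->table[thread_id % TABLE_SIZE]; e != NULL; e = e->next) {
        if (e->thread_id == thread_id) {
            e->qtid = qtid;                    /* remember for next time */
            key->cache[qtid % CACHE_SIZE] = e;
            return e->value;
        }
    }
    return NULL;                               /* thread has no value yet */
}

/* Insert an entry into the main table; a real version would hold a lock. */
static void tls_set(TlsKey *key, Entry *e)
{
    e->next = key->table[e->thread_id % TABLE_SIZE];
    key->table[e->thread_id % TABLE_SIZE] = e;
}
```

A repeated lookup from the same thread with the same qtid is served from the cache and never walks the main table.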

GC-friendly TLS – cont.
- Updates of the hash table require locks; however, the structure always remains consistent.
- Lookups do not require a lock.

GC-friendly TLS – cont.

GC-friendly TLS – cont.
- This implementation is applicable only to a GC environment.
- Upon thread termination, its TLS data is not freed; the hash table entries are reclaimed by the GC.
- In a non-GC environment, freeing the hash entries would force us to synchronize reader access.

Benchmarking
- Intel Pentium Pro 200 running Red Hat 7 Linux.
- Machines with 1-4 processors.
- Single 66 MHz bus (which may cause bus contention).

Benchmarking – cont.
- RH 7.
- RH 7 Single (thread-unsafe).
- Hoard.
- GC Single (thread-unsafe).
- SGC.

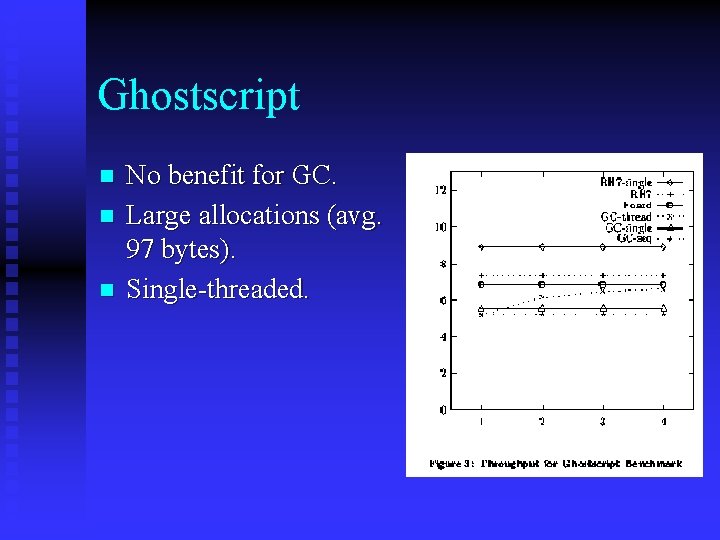

Ghostscript
- No benefit for GC.
- Large allocations (avg. 97 bytes).
- Single-threaded.

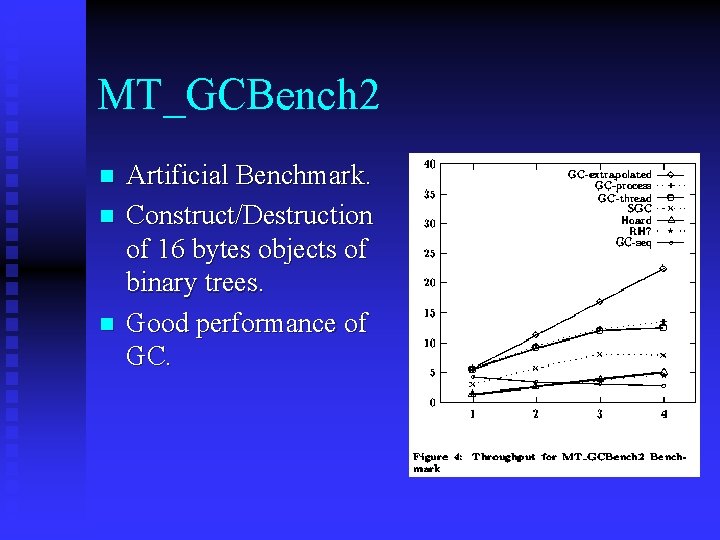

MT_GCBench2
- Artificial benchmark.
- Construction/destruction of binary trees of 16-byte objects.
- Good performance of GC.

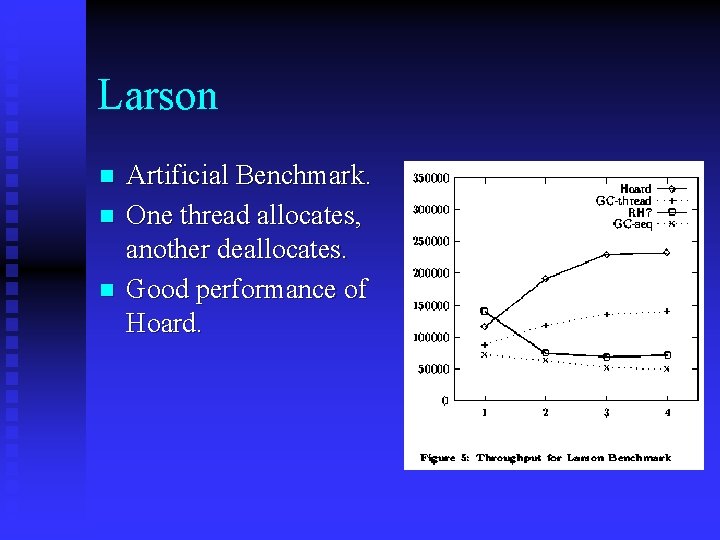

Larson
- Artificial benchmark.
- One thread allocates, another deallocates.
- Good performance of Hoard.

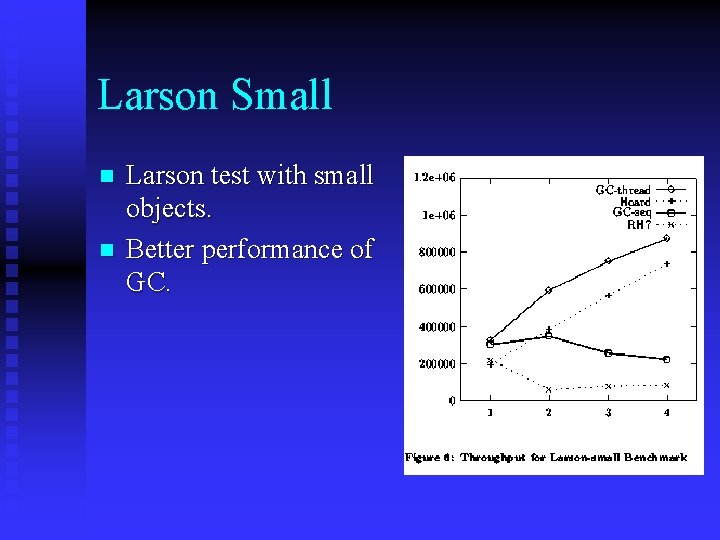

Larson Small
- Larson test with small objects.
- Better performance of GC.

Benchmark Summary
- Our GC is good for small allocated objects.
- Advantages of GC over parallel malloc/free:
  - No per-object lock on deallocations (objects are deallocated together).
  - No per-object lock on allocations (we can reuse memory from thread-local storage).

Summary
- We presented a scalable GC allocator.
- We touched on some GC-related multiprocessor performance issues.
- We saw algorithms that exploit the advantages a GC environment has.
- We saw in the benchmarks that it works quite well.

A Few Words about the Hoard Allocator
- Hoard allocator by E. Berger, K. McKinley, R. Blumofe and P. Wilson (November 2000).
- Not a GC but an allocator.
- A malloc/free replacement.
- "Competes" with the Boehm GC.

Hoard – cont.
- Highly scalable (for high-end machines).
- Low fragmentation (blowup).
- Avoids false sharing of cache lines.
- Based on a global heap and per-processor heaps.

Questions?

The end!
