Decoupling Learning Rates Using Empirical Bayes

Sareh Nabi, University of Washington (work done while at Amazon)
Paper in collaboration with: Houssam Nassif, Joseph Hong, Hamed Mamani, Guido Imbens

Biking competition
Stop biking pointlessly, join team Circle! • • • Join now! Year-round carousel rides! Unlimited laps! All-you-can-eat Pi! No corners to hide! Hurry, don't linger around! Check other teams

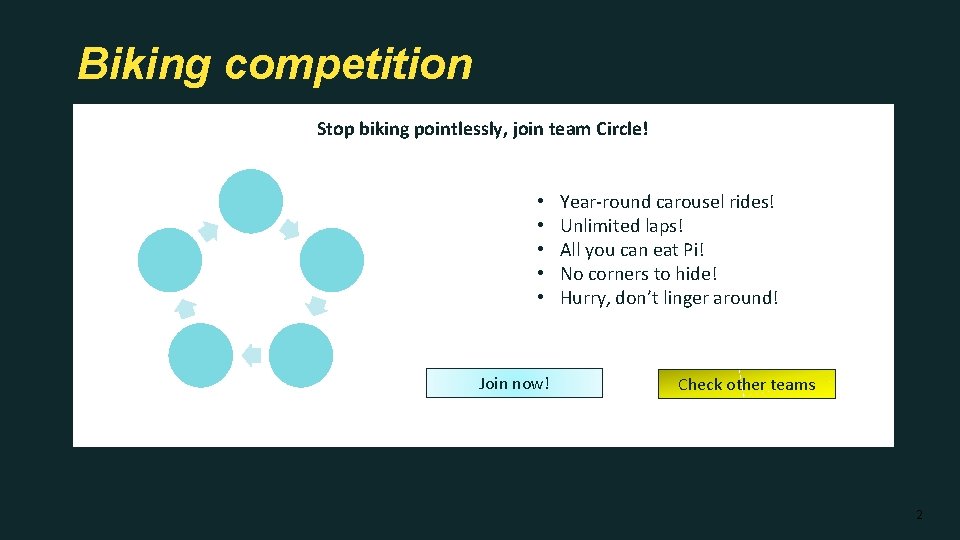

Multiple-slot template
Stop biking pointlessly, join team Circle! Slot • • • Join now! Year-round carousel rides! Unlimited laps! All-you-can-eat Pi! No corners to hide! Hurry, don't linger around! Check other teams

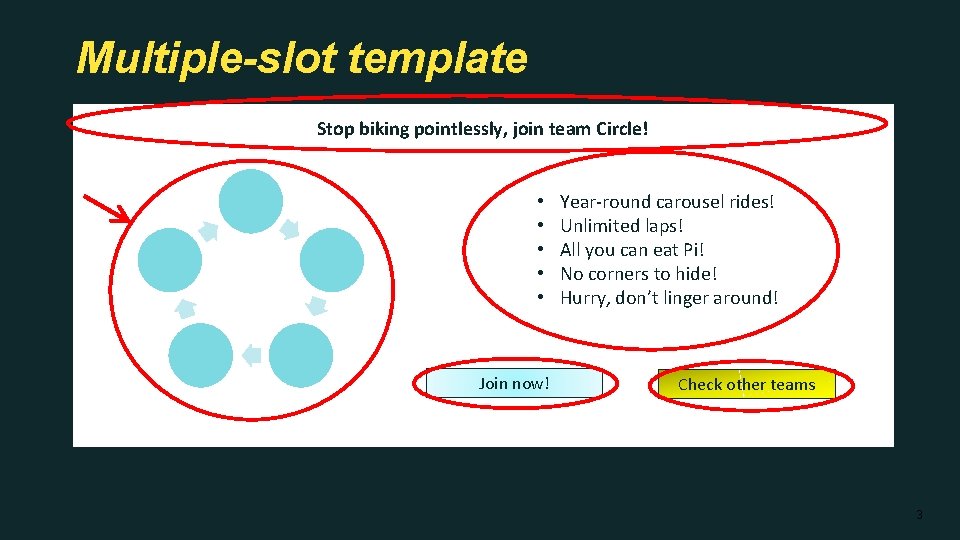

Template combinatorics: 48 layouts
Stop biking pointlessly, join team Circle! x 2 x 3
• Best way to bicycle!
• We cycle and re-cycle!
• No point in not joining!
Join now! Check other teams x 2 x 2

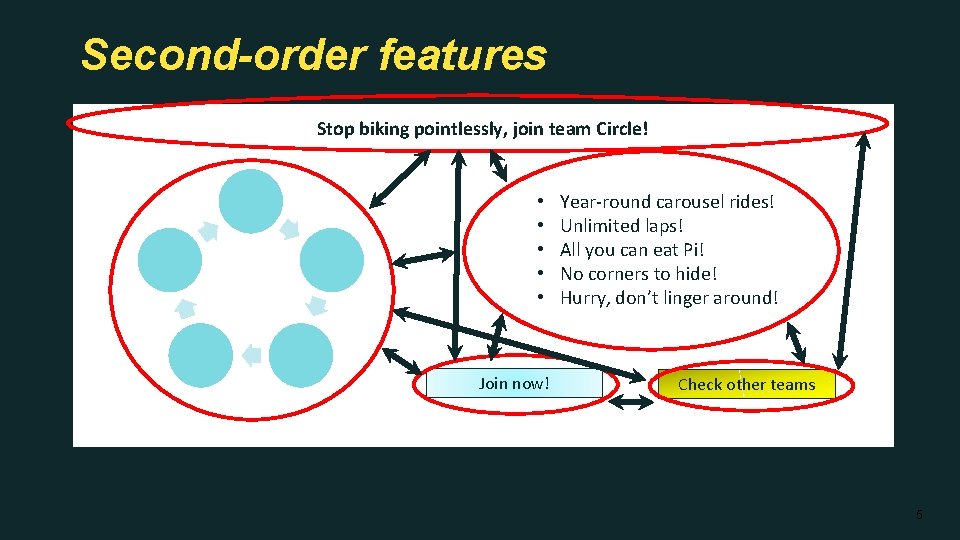

Second-order features
Stop biking pointlessly, join team Circle! • • • Join now! Year-round carousel rides! Unlimited laps! All-you-can-eat Pi! No corners to hide! Hurry, don't linger around! Check other teams

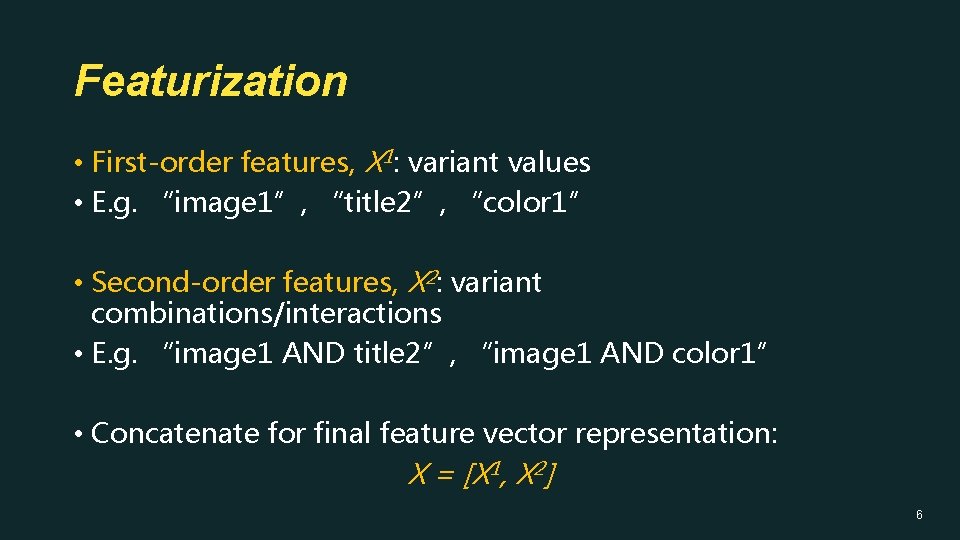

Featurization
• First-order features, $X_1$: variant values
  • E.g., "image 1", "title 2", "color 1"
• Second-order features, $X_2$: variant combinations/interactions
  • E.g., "image 1 AND title 2", "image 1 AND color 1"
• Concatenate for the final feature vector representation: $X = [X_1, X_2]$

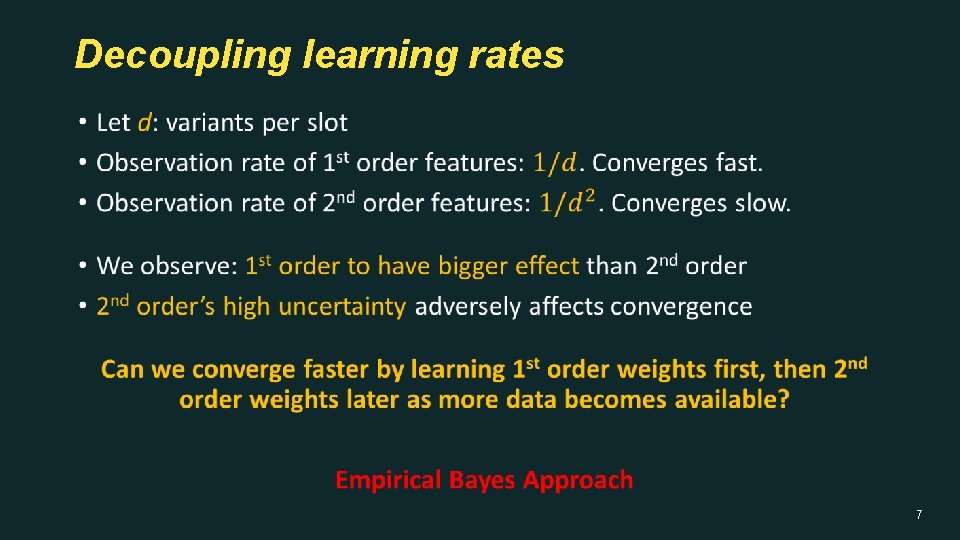
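The featurization above can be sketched as follows. This is a minimal illustration under assumptions: a layout is given as a dict from slot name to chosen variant, and each feature is a binary indicator represented by its name. The helper `featurize` and the slot names are hypothetical. Slots are sorted by name so the same layout always produces the same feature names, which means pair names come out in slot-name order (e.g. "color 1 AND image 1").

```python
from itertools import combinations


def featurize(layout):
    """Return the active binary features X = [X1, X2] for one layout.

    layout: dict mapping slot name -> chosen variant (hypothetical format),
            e.g. {"image": "image 1", "title": "title 2", "color": "color 1"}.
    """
    # First-order features X1: one indicator per chosen variant value.
    # Sorting by slot name makes the output independent of dict order.
    x1 = [layout[slot] for slot in sorted(layout)]
    # Second-order features X2: one indicator per pair of chosen variants.
    x2 = [f"{a} AND {b}" for a, b in combinations(x1, 2)]
    # Concatenate for the final representation.
    return x1 + x2


X = featurize({"image": "image 1", "title": "title 2", "color": "color 1"})
# X == ['color 1', 'image 1', 'title 2',
#       'color 1 AND image 1', 'color 1 AND title 2', 'image 1 AND title 2']
```

With k slots, each layout activates k first-order and k(k-1)/2 second-order features. There are many more distinct second-order features than first-order ones, so each second-order feature is observed in fewer layouts.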

Decoupling learning rates

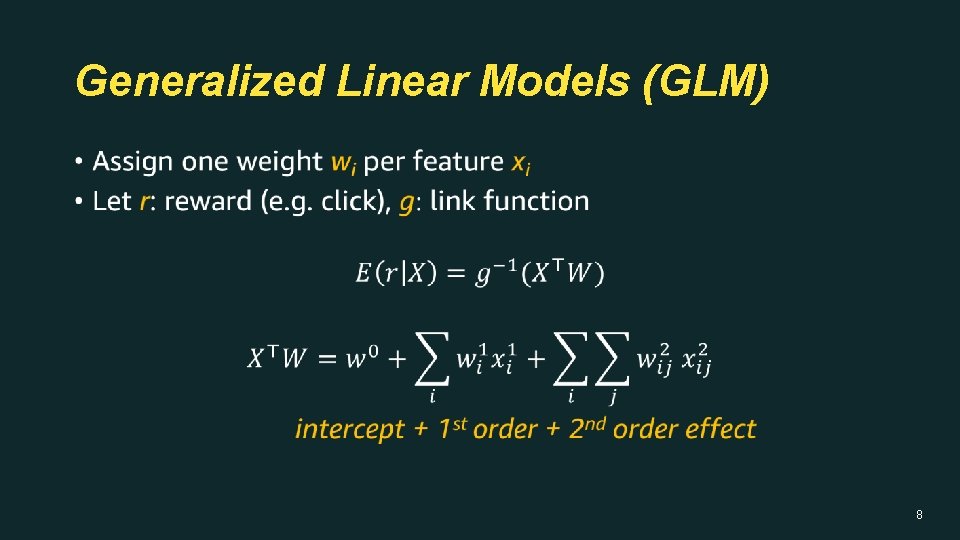

Generalized Linear Models (GLM)

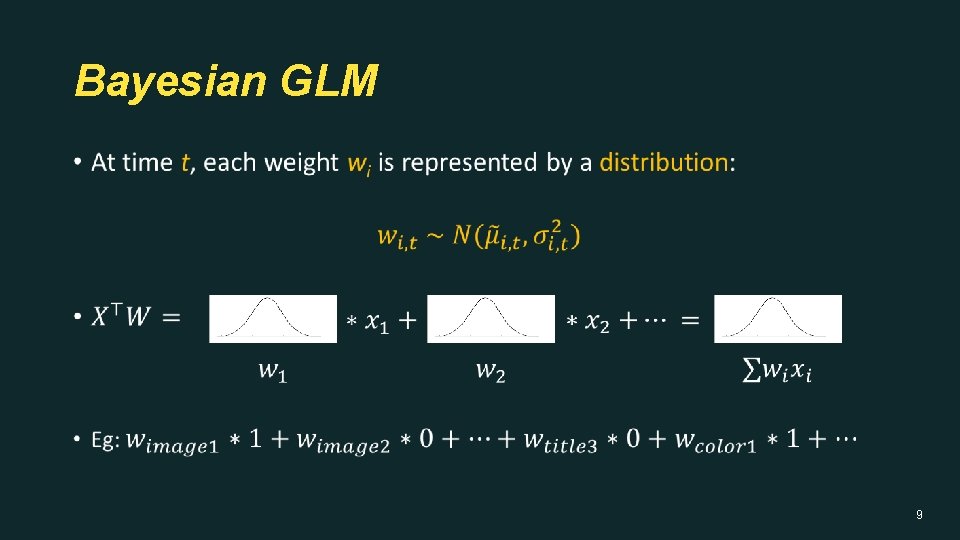
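For reference, the standard GLM form is given below. The slide's own equation is in the image, so this notation is an assumption: $\mathbf{x}$ stands for the feature vector $X$, $\mathbf{w}$ for its weights, and $g$ for the link function.

```latex
\mathbb{E}[y \mid \mathbf{x}] = g^{-1}\!\left(\mathbf{w}^{\top}\mathbf{x}\right)
  = g^{-1}\!\Big(\sum_i w_i x_i\Big),
\qquad
g^{-1}(z) = \frac{1}{1 + e^{-z}} \ \text{(logit link)}
\quad \text{or} \quad
g^{-1}(z) = \Phi(z) \ \text{(probit link)}
```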

Bayesian GLM

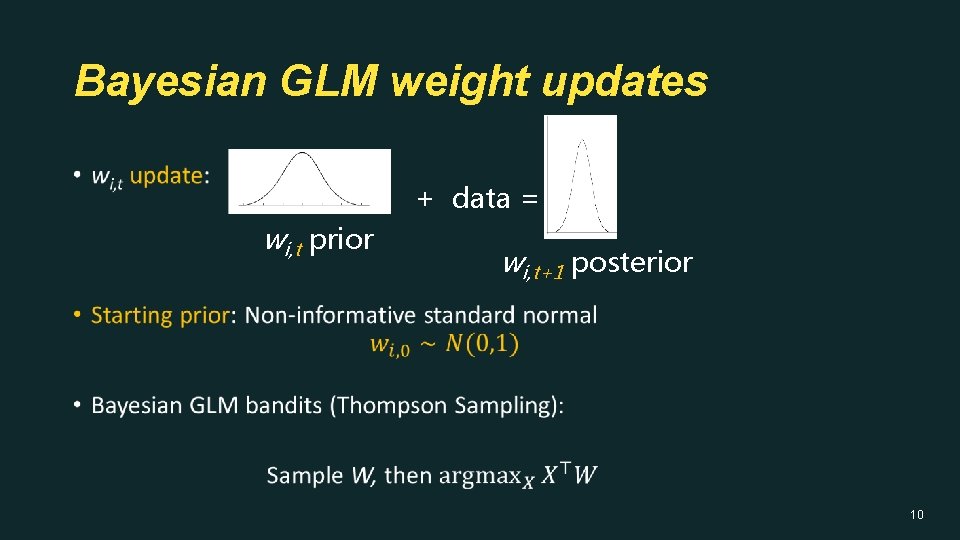

Bayesian GLM weight updates
• $w_{i,t}$ (prior) + data = $w_{i,t+1}$ (posterior)
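In this update scheme the posterior after one batch serves as the prior for the next. The sketch below shows one such update as a concrete, swapped-in example: the assumed-density-filtering update for a probit GLM with independent Gaussian weights, as used in Bayesian click-through models such as Microsoft's AdPredictor. It is not necessarily the model used in this work. The function name `probit_update`, the noise scale `beta`, and the ±1 reward encoding are assumptions.

```python
import math


def _norm_pdf(t):
    """Standard normal density."""
    return math.exp(-0.5 * t * t) / math.sqrt(2.0 * math.pi)


def _norm_cdf(t):
    """Standard normal CDF; the erfc form stays accurate for very negative t."""
    return 0.5 * math.erfc(-t / math.sqrt(2.0))


def probit_update(mu, var, active, y, beta=1.0):
    """One Bayesian update of a probit GLM with independent Gaussian weights.

    mu, var: dict feature -> prior mean / variance of w_{i,t}.
    active:  names of the binary features equal to 1 for this impression.
    y:       +1 for a positive reward (e.g. a click), -1 otherwise.
    beta:    assumed noise scale of the probit link (hypothetical default).
    Returns new (mu, var) dicts holding the posterior of w_{i,t+1}.
    Inactive features keep their prior unchanged.
    """
    total_var = beta ** 2 + sum(var[f] for f in active)
    s = math.sqrt(total_var)
    # Standardized margin of the observed outcome.
    t = y * sum(mu[f] for f in active) / s
    v = _norm_pdf(t) / _norm_cdf(t)  # mean correction factor
    w = v * (v + t)                  # variance correction factor, in (0, 1)
    new_mu, new_var = dict(mu), dict(var)
    for f in active:
        new_mu[f] = mu[f] + y * (var[f] / s) * v
        new_var[f] = var[f] * (1.0 - (var[f] / total_var) * w)
    return new_mu, new_var


# Example: one positive impression for a layout with two variants and their
# interaction active; "image 2" is not shown, so it is left untouched.
active = ["image 1", "title 2", "image 1 AND title 2"]
prior_mu = {f: 0.0 for f in active + ["image 2"]}
prior_var = {f: 1.0 for f in prior_mu}
post_mu, post_var = probit_update(prior_mu, prior_var, active, y=+1)
```

Here every active weight moves from mean 0 to about 0.399 and from variance 1 to about 0.841. Because each step scales with that weight's prior variance, the prior directly controls how fast each weight learns.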

Empirical Bayes Approach

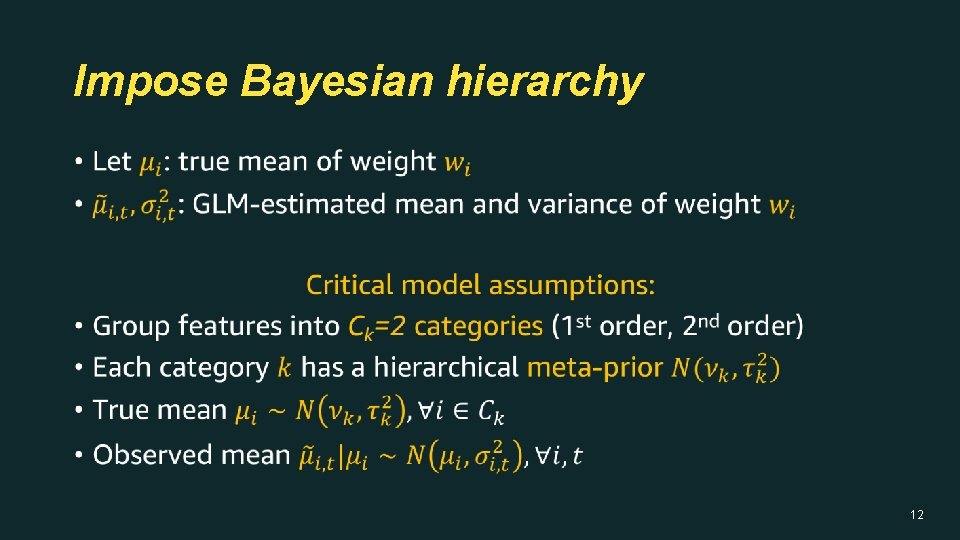

Impose Bayesian hierarchy

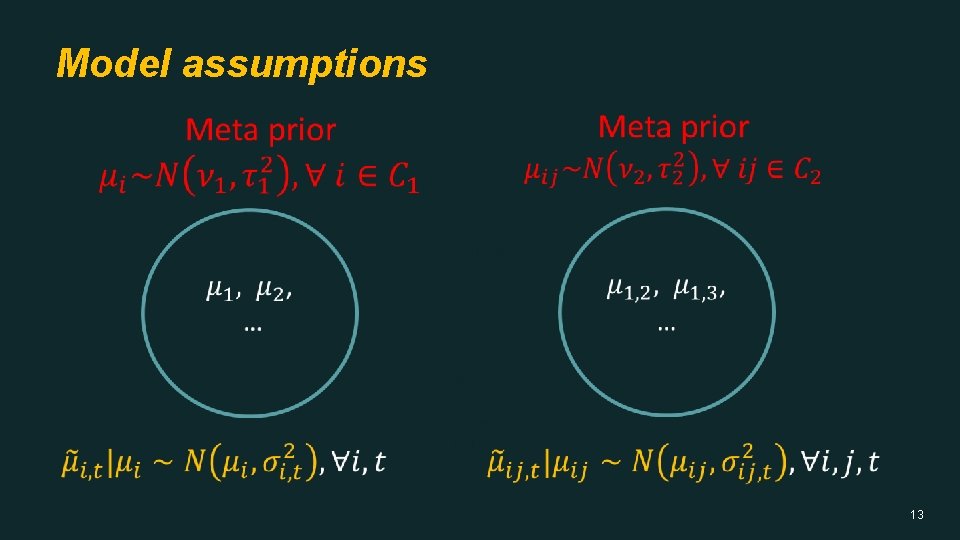

Model assumptions

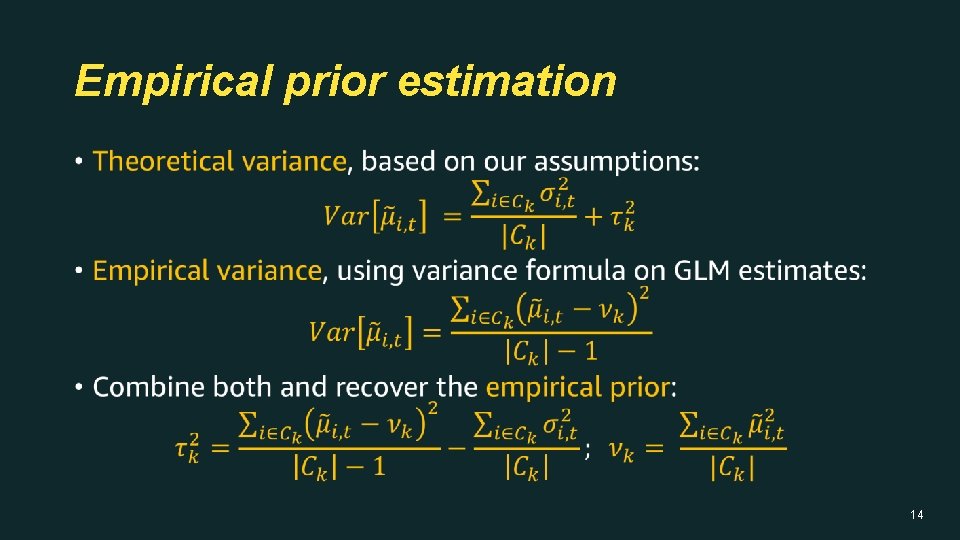

Empirical prior estimation

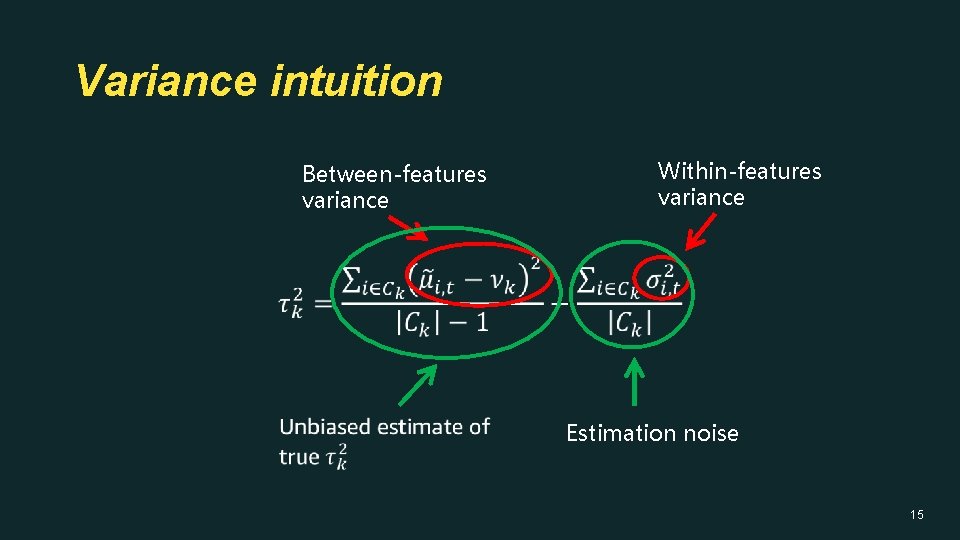

Variance intuition
• Between-features variance
• Within-features variance
• Estimation noise

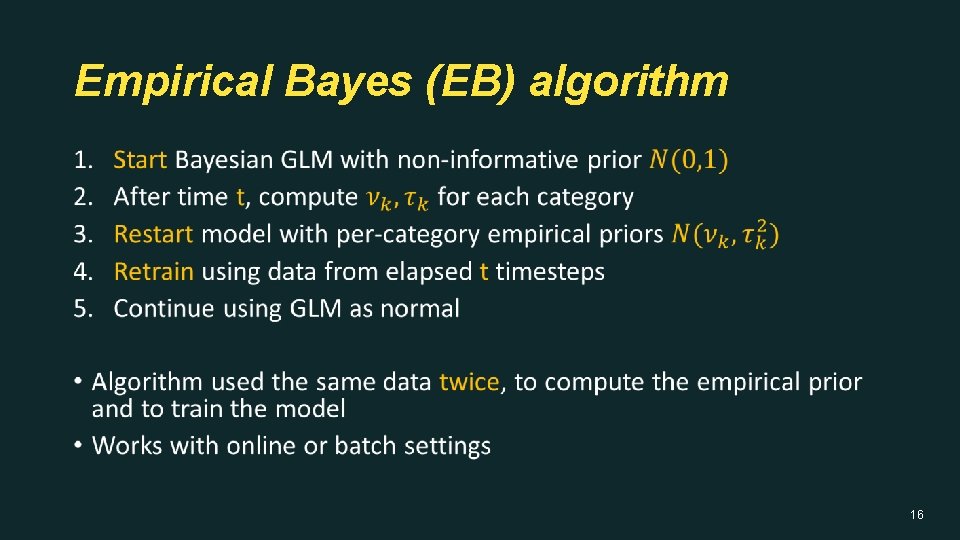
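One standard way to turn this variance intuition into a prior estimate is method-of-moments empirical Bayes, sketched below under assumptions because the slide's exact estimator is in the image. Within a feature group, the observed spread of the learned weight means is treated as the prior variance of the true weights plus their average estimation noise (posterior variance), so subtracting the noise leaves the prior variance. The prior mean is the group's average learned weight. The names `empirical_prior` and `min_var`, the example numbers, and the first-order vs. second-order grouping are hypothetical.

```python
from statistics import fmean, variance


def empirical_prior(means, variances, groups, min_var=1e-6):
    """Method-of-moments empirical prior per feature group.

    means, variances: dict feature -> learned posterior mean / variance.
    groups: dict feature -> group label.
    Returns dict group -> (prior_mean, prior_var).
    """
    members = {}
    for feature, group in groups.items():
        members.setdefault(group, []).append(feature)

    prior = {}
    for group, feats in members.items():
        m = [means[f] for f in feats]
        # Prior mean: the group's average learned weight.
        prior_mean = fmean(m)
        # Observed spread of the means (sample variance; 0 for one feature).
        spread = variance(m) if len(m) > 1 else 0.0
        # Average estimation noise carried by each mean.
        noise = fmean([variances[f] for f in feats])
        # Assume spread ~ prior variance + noise; floor keeps the result positive.
        prior[group] = (prior_mean, max(spread - noise, min_var))
    return prior


# Hypothetical learned weights after an initial training period.
means = {"image 1": 0.5, "image 2": -0.1, "title 1": 0.2, "title 2": 0.4,
         "image 1 AND title 1": 0.05, "image 2 AND title 2": -0.05}
variances = {f: (0.2 if " AND " in f else 0.01) for f in means}
groups = {f: ("second-order" if " AND " in f else "first-order") for f in means}

prior = empirical_prior(means, variances, groups)
# prior["first-order"] is about (0.25, 0.06); prior["second-order"] is (0.0, 1e-06)
```

In this toy example the second-order estimates are noisier than their spread, so their prior variance is floored and those weights are pulled strongly toward the group mean, while first-order features get a wider prior. A separate prior per group is the mechanism the deck credits with decoupling learning rates.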

Empirical Bayes (EB) algorithm

Experiments and Results

Experimental setup

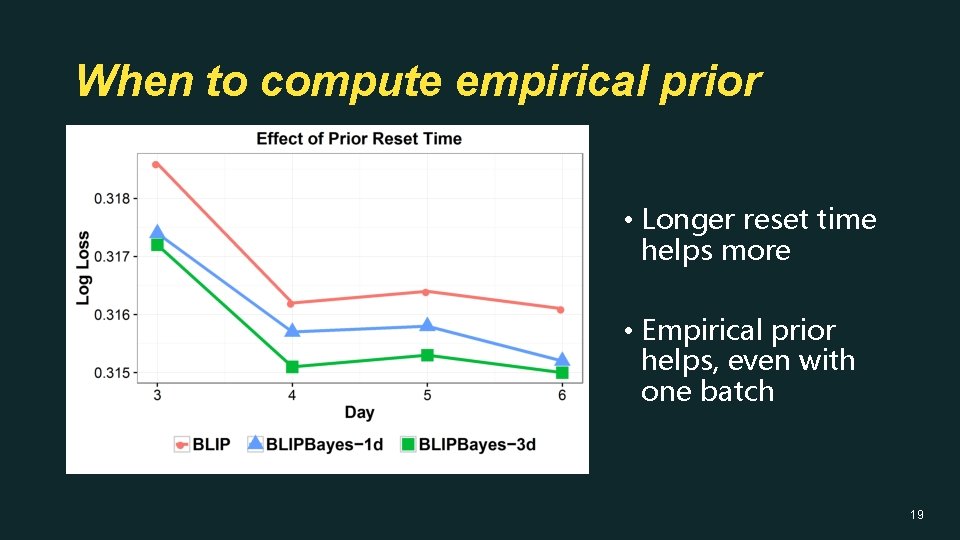

When to compute empirical prior
• Longer reset time helps more
• Empirical prior helps, even with one batch

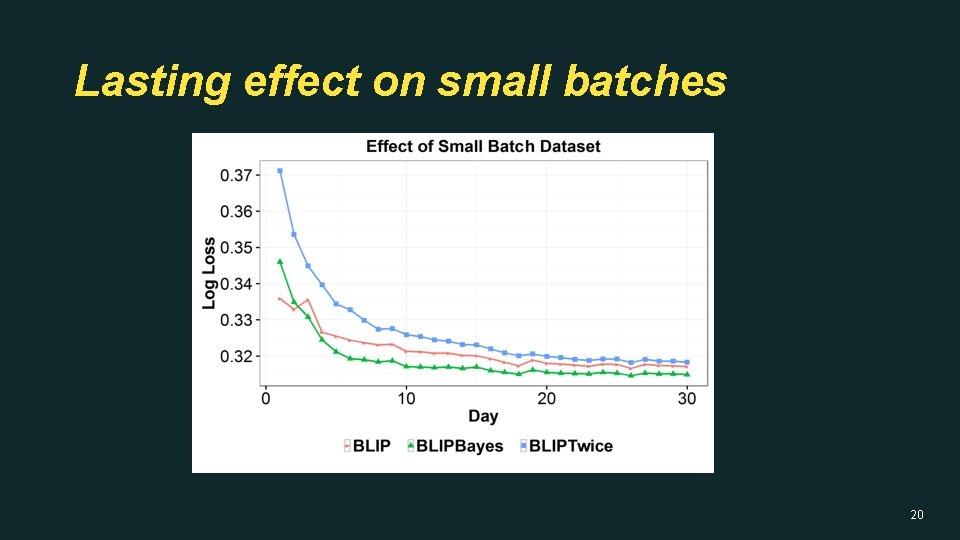

Lasting effect on small batches

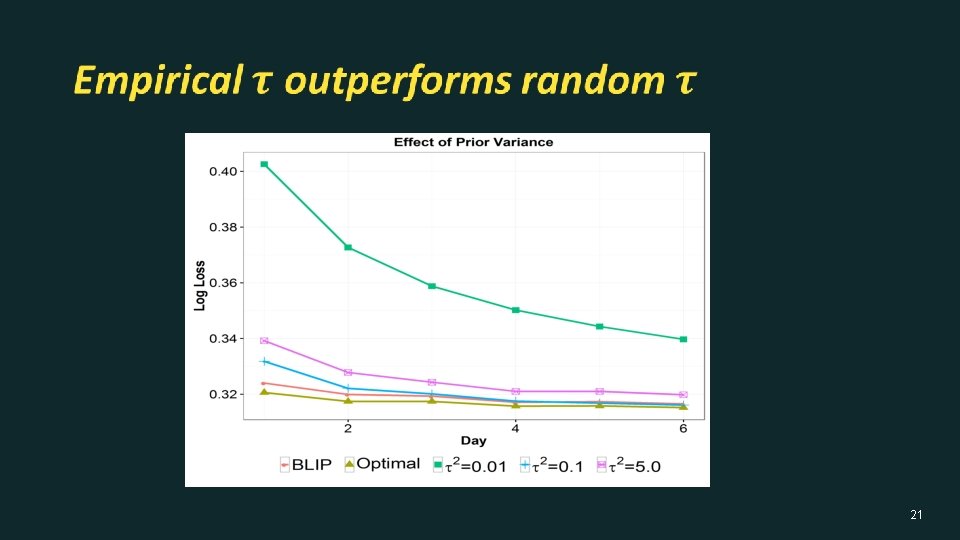

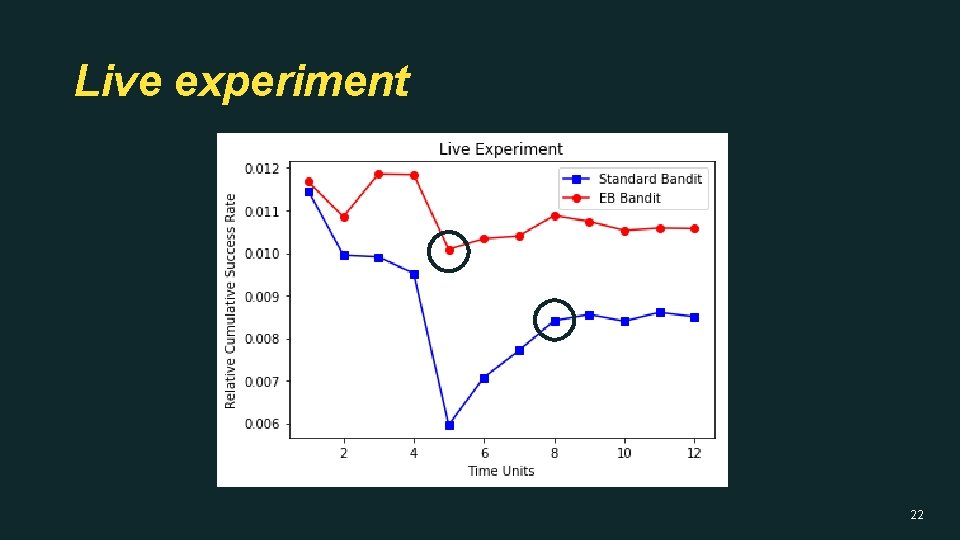

Live experiment

Discussion

Takeaways
• Empirical Bayes prior helps, mostly on low/medium traffic
• Promising, as low-traffic cases are the hardest to optimize
• Effectively decouples learning rates
• Model converges faster
• Works for any feature grouping
• Applies to contextual and personalization use cases

Thank you! Questions? Comments?
