Data-Awareness and Low Latency on the Enterprise Grid

- Slides: 46

Data-Awareness and Low Latency on the Enterprise Grid: Getting the Most out of Your Grid with Enterprise IMDG

Shay Hassidim, Deputy CTO, Oct 2007

Overall Presentation Goal

- Understand the Space-Based Architecture model and its 4 verbs.
- Understand the data contention challenge and the latency challenge with Enterprise Grid based applications.
- Understand why a typical In-Memory Data Grid can't solve these problems and why the Enterprise IMDG can.

GigaSpaces in a Nutshell

- Founded in 2000
- Founder of the Israeli Association of Grid Technologies (IGT), an OGF affiliate
- Provides infrastructure software for applications characterized by:
  - High-volume transaction processing
  - Very low latency requirements
  - Real-time analytics
- Product: eXtreme Application Platform (XAP); version 6.0 was released a few months ago
  - Enterprise In-Memory Data Grid (caching)
  - Application Service Grid
- Customer base: about 2000 deployments around the world
  - Financial services
  - Telecom
  - Defense and government
- Presence:
  - US: NY (HQ), San Francisco, Atlanta
  - EMEA: UK, France, Germany, Israel (R&D)
  - APAC: Japan, Singapore, Hong Kong

About myself: Shay Hassidim

- B.Sc. in Electrical, Computer & Telecommunications Engineering, with a focus on neural networks and artificial intelligence, Ben-Gurion University, graduated 1994
- Object and multi-dimensional DBMS expert
- Extensive knowledge of object-oriented and distributed systems
- Consultant for telecom, healthcare, defense and finance projects
- Technical skills: MATLAB, C, C++, .NET, PowerBuilder, Visual Basic, Java, XML, CORBA, J2EE, ODMG, JDO, Hibernate, SQL, JMS, JMX, IDE, GUI, Jini, ODBMS, RDBMS, JavaSpaces
- In the past:
  - Sirius Technologies Israel: VMDB Applications & Tools team leader
  - Versant Corp. US: Tools Lead Architect, R&D
- Since 2003: GigaSpaces VP Product Management (based in Israel)
- Since 2007: GigaSpaces Deputy CTO (based in NY)

GigaSpaces: Technical Overview

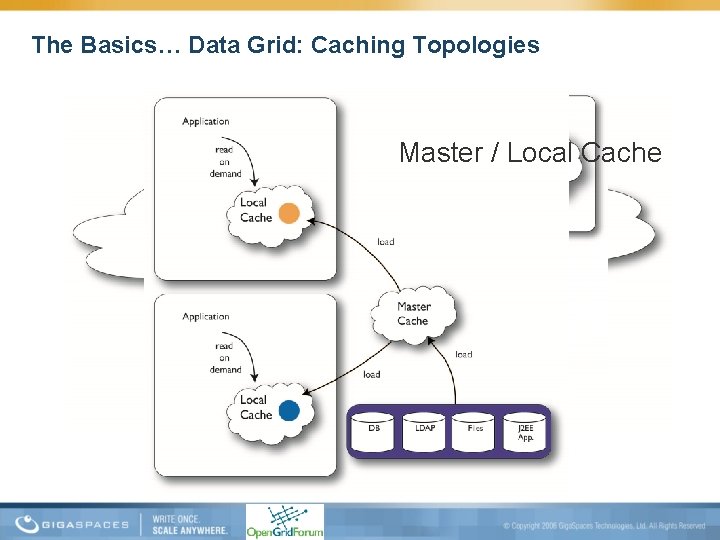

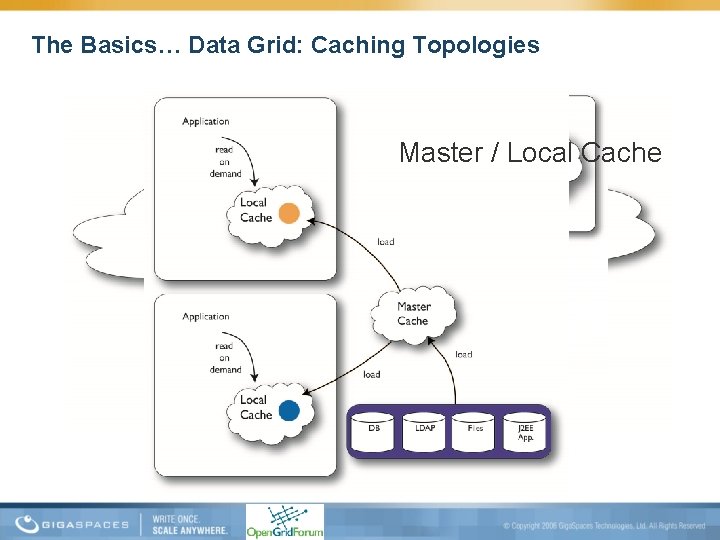

The Basics… Data Grid: Caching Topologies

- Master / Local Cache
- Replicated Cache
- Partitioned Cache

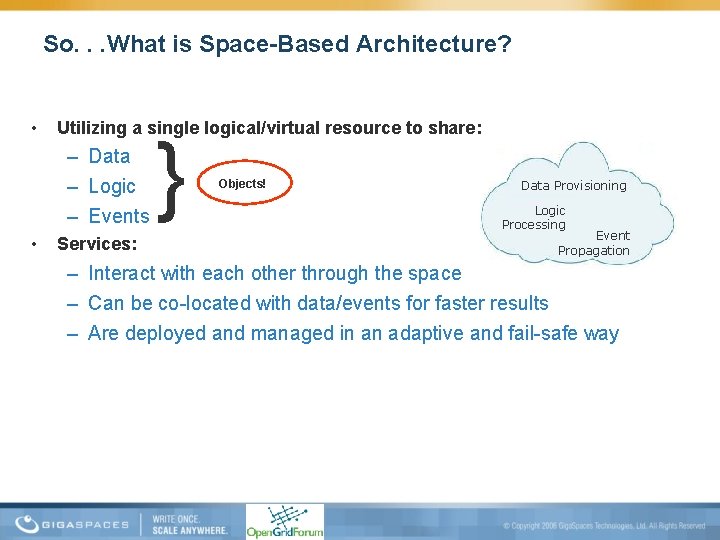

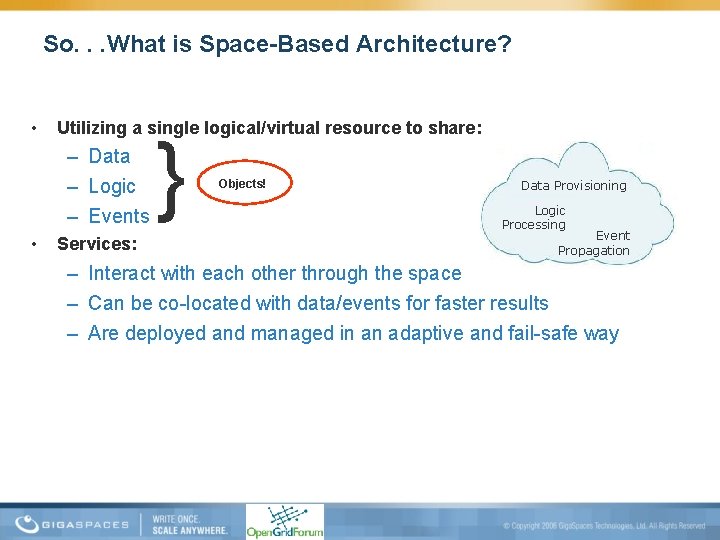

So… What is Space-Based Architecture?

- Utilizing a single logical/virtual resource (the space) to share data, logic and events, all of them as objects:
  - Data provisioning
  - Logic processing
  - Event propagation
- Services:
  - Interact with each other through the space
  - Can be co-located with data/events for faster results
  - Are deployed and managed in an adaptive and fail-safe way

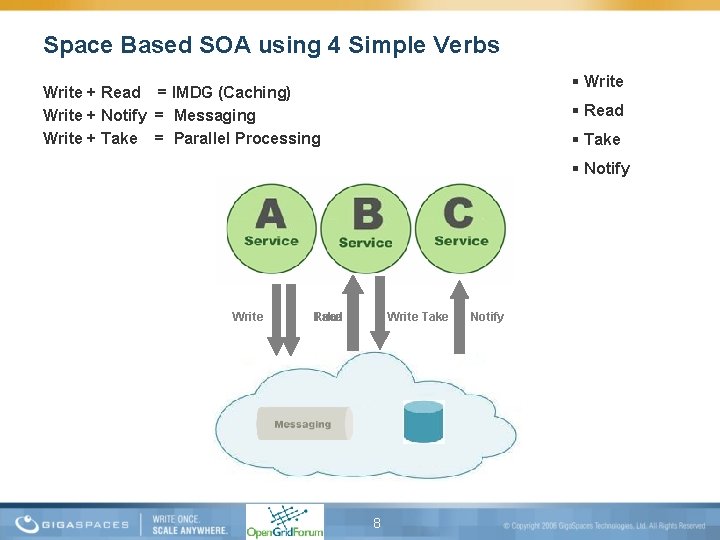

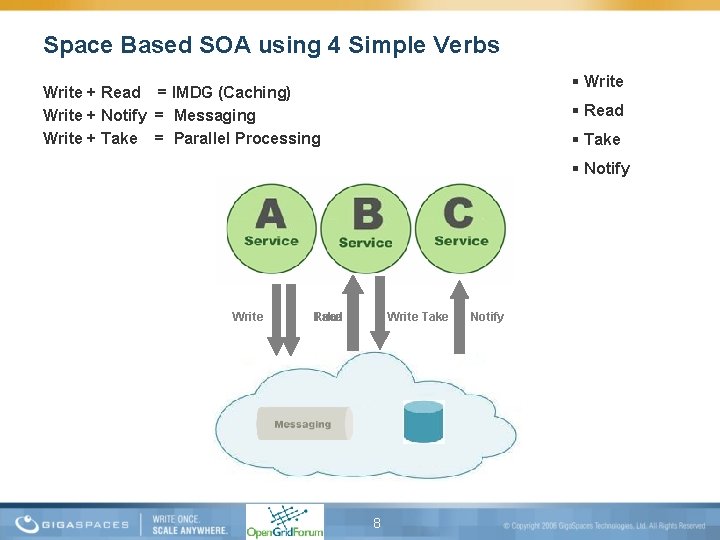

Space-Based SOA Using 4 Simple Verbs: Write, Read, Take, Notify

- Write + Read = IMDG (caching)
- Write + Notify = Messaging
- Write + Take = Parallel processing
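The three verb combinations can be illustrated with a toy, single-JVM space. This is a minimal sketch under stated assumptions, not the GigaSpaces or JavaSpaces API: the `MiniSpace` class, its predicate-based templates and the `onNotify` method name are hypothetical, and a real space adds leases, transactions, blocking timeouts and distribution.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Predicate;

/** Hypothetical in-memory space illustrating the four verbs (not a real product API). */
class MiniSpace<T> {
    private final List<T> entries = new ArrayList<>();
    private final List<Predicate<T>> templates = new ArrayList<>();
    private final List<Consumer<T>> callbacks = new ArrayList<>();

    /** Write: store an entry, then call every subscriber whose template matches it. */
    public synchronized void write(T entry) {
        entries.add(entry);
        for (int i = 0; i < templates.size(); i++) {
            if (templates.get(i).test(entry)) {
                callbacks.get(i).accept(entry);
            }
        }
    }

    /** Read: return the first matching entry and leave it in the space (null if none). */
    public synchronized T read(Predicate<T> template) {
        for (T e : entries) {
            if (template.test(e)) {
                return e;
            }
        }
        return null;
    }

    /** Take: return the first matching entry and remove it from the space (null if none). */
    public synchronized T take(Predicate<T> template) {
        Iterator<T> it = entries.iterator();
        while (it.hasNext()) {
            T e = it.next();
            if (template.test(e)) {
                it.remove();
                return e;
            }
        }
        return null;
    }

    /** Notify: register a callback for entries written later that match the template. */
    public synchronized void onNotify(Predicate<T> template, Consumer<T> callback) {
        templates.add(template);
        callbacks.add(callback);
    }
}
```

In this sketch, `write` followed by `read` behaves like a cache lookup, `write` with a registered `onNotify` callback behaves like publish/subscribe, and `write` followed by `take` hands each entry to exactly one worker, which is the basis of the parallel-processing pattern.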

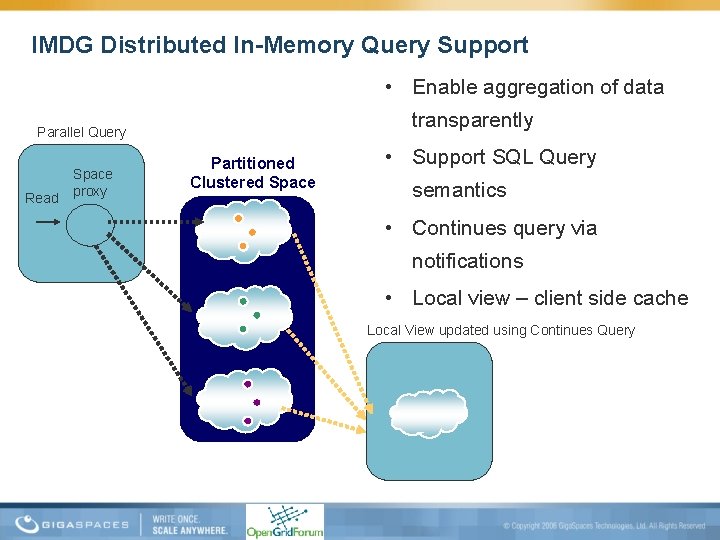

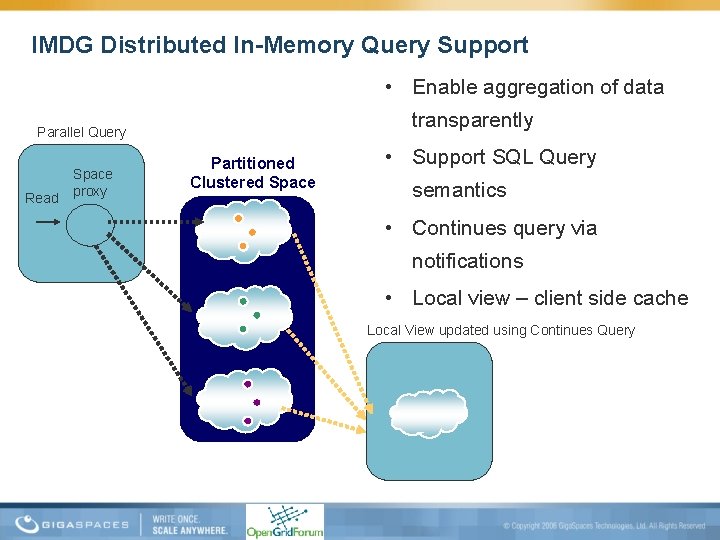

IMDG Distributed In-Memory Query Support

- Enables transparent aggregation of data: a read issued through the space proxy runs as a parallel query across the partitioned clustered space
- Supports SQL query semantics
- Continuous query via notifications
- Local view: a client-side cache that is kept up to date using a continuous query

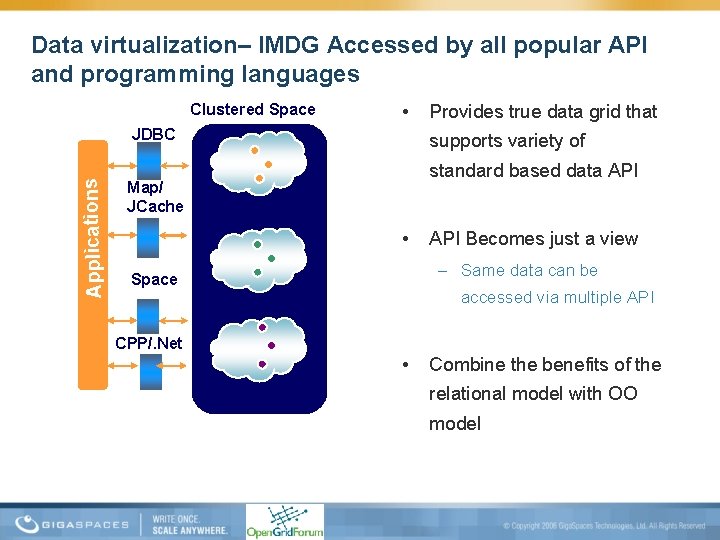

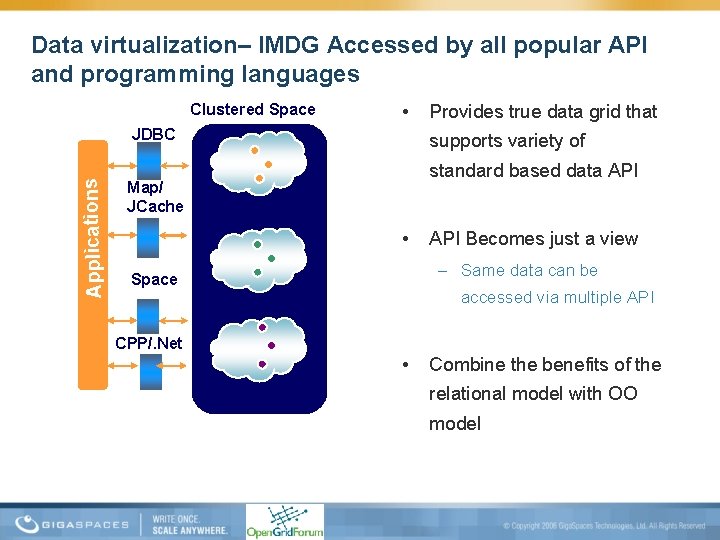

Data Virtualization: IMDG Accessed by All Popular APIs and Programming Languages

- Provides a true data grid that supports a variety of standards-based data APIs
- The API becomes just a view: the same data can be accessed via multiple APIs
- Combines the benefits of the relational model with the OO model

Diagram labels: applications reach the clustered space through the JDBC, Map/JCache, Space and C++/.NET APIs.

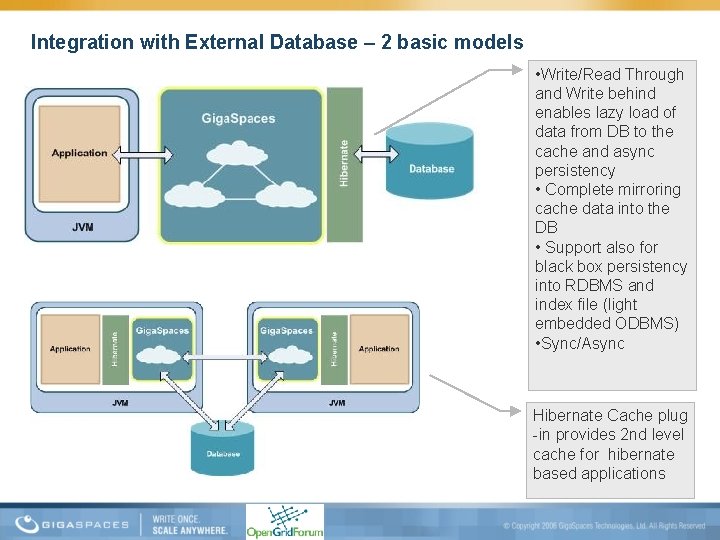

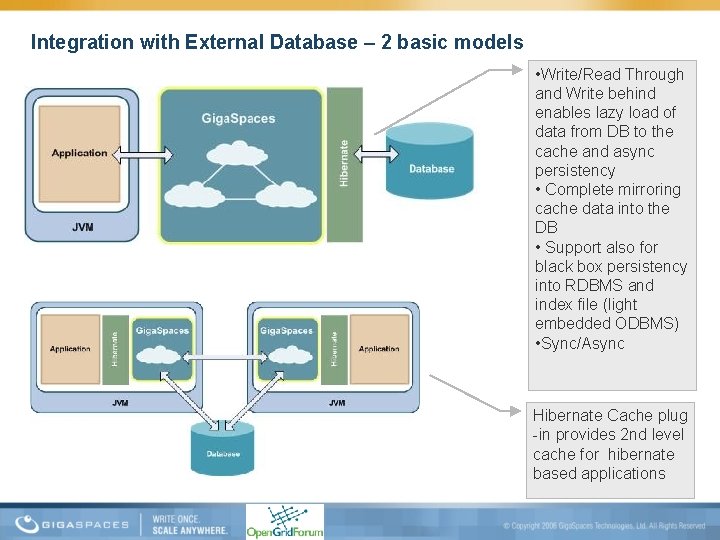

Integration with External Database: 2 Basic Models

- Write-through/read-through and write-behind: enable lazy loading of data from the DB into the cache, and asynchronous persistency
- Complete mirroring of cache data into the DB
- Also supports black-box persistency into an RDBMS and into an index file (a light embedded ODBMS)
- The sync/async Hibernate cache plug-in provides a second-level cache for Hibernate-based applications
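The read-through and write-behind behavior described above can be sketched as follows. This is an illustrative toy, assuming a plain `Map` as a stand-in for the external database; `WriteBehindCache`, `flush` and `dbReads` are hypothetical names, and in a real deployment the flush would run asynchronously on a background thread rather than being called by hand.

```java
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.Map;
import java.util.Queue;

/** Hypothetical cache showing read-through (lazy load) and write-behind (deferred persistency). */
class WriteBehindCache {
    private final Map<String, String> memory = new HashMap<>();
    private final Map<String, String> database;          // stand-in for the external DB
    private final Queue<String> dirtyKeys = new ArrayDeque<>();
    int dbReads = 0;                                      // counts lazy loads, for illustration

    WriteBehindCache(Map<String, String> database) {
        this.database = database;
    }

    /** Read-through: serve from memory; on a miss, load lazily from the database. */
    String get(String key) {
        String value = memory.get(key);
        if (value == null && database.containsKey(key)) {
            value = database.get(key);
            dbReads++;
            memory.put(key, value);
        }
        return value;
    }

    /** Write-behind: update memory immediately and queue the key for later persistency. */
    void put(String key, String value) {
        memory.put(key, value);
        dirtyKeys.add(key);
    }

    /** Persist all queued updates to the database; returns the number of writes performed. */
    int flush() {
        int writes = 0;
        String key;
        while ((key = dirtyKeys.poll()) != null) {
            database.put(key, memory.get(key));
            writes++;
        }
        return writes;
    }
}
```

The caller of `put` never waits for the database, which is the latency benefit of write-behind; the cost is a window in which the database lags behind memory.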

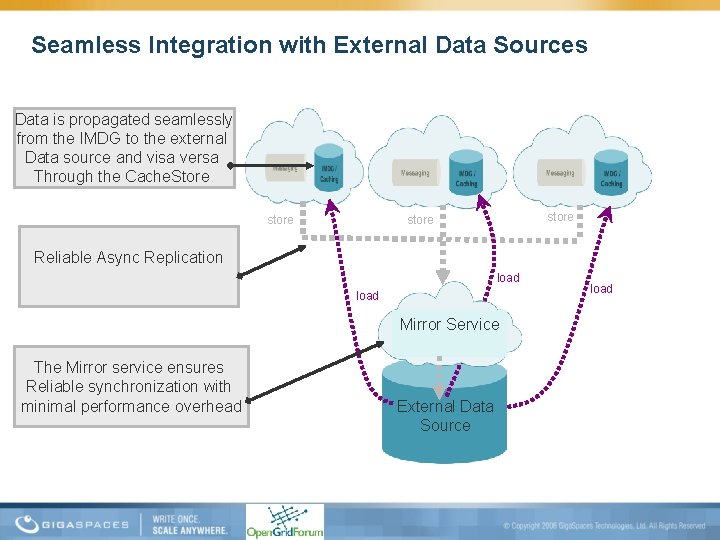

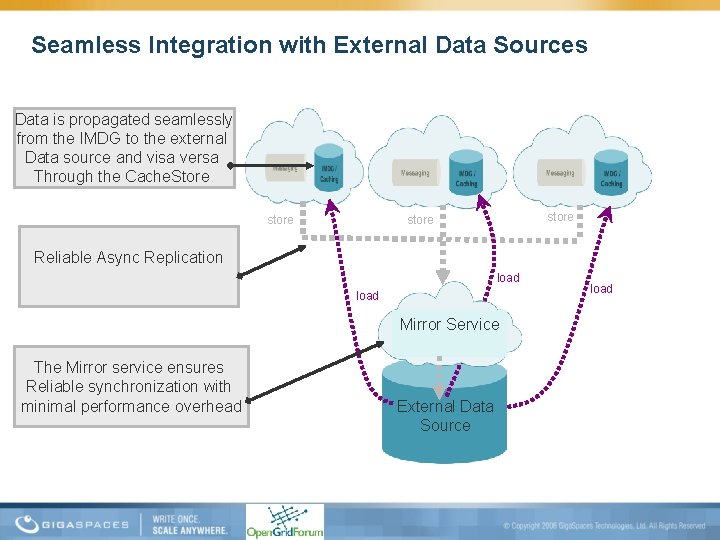

Seamless Integration with External Data Sources

- Data is propagated seamlessly from the IMDG to the external data source and vice versa, through the CacheStore (load and store operations).
- The Mirror Service ensures reliable synchronization with minimal performance overhead, using reliable asynchronous replication.

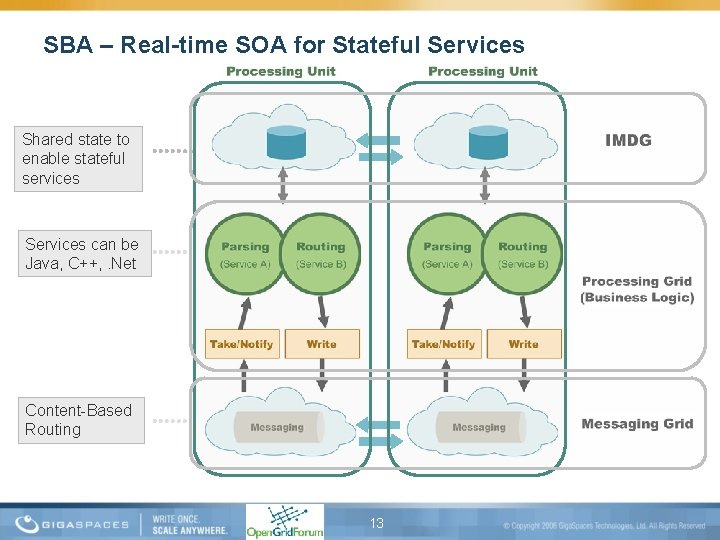

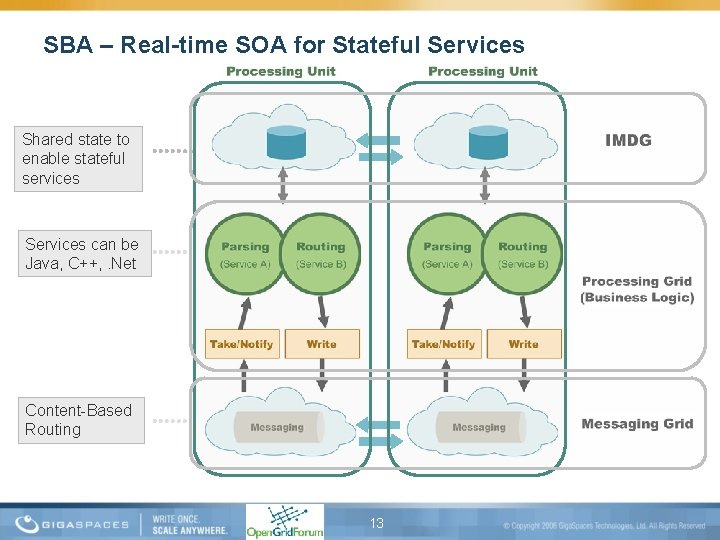

SBA: Real-Time SOA for Stateful Services

- Shared state to enable stateful services
- Services can be written in Java, C++ or .NET
- Content-based routing

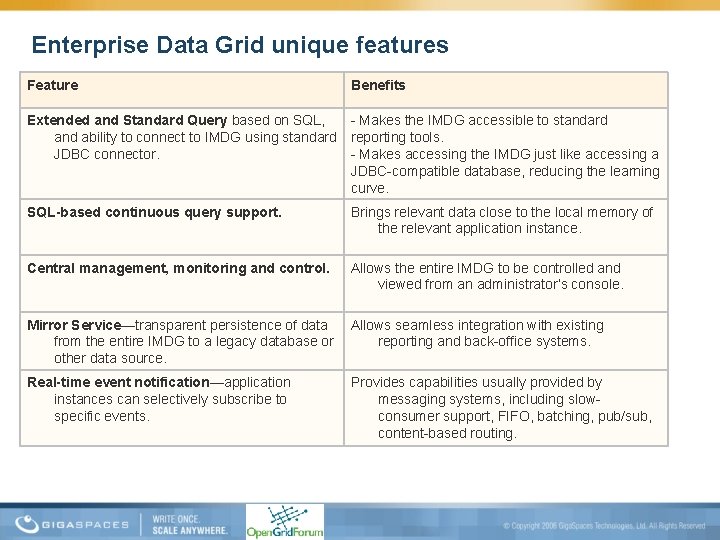

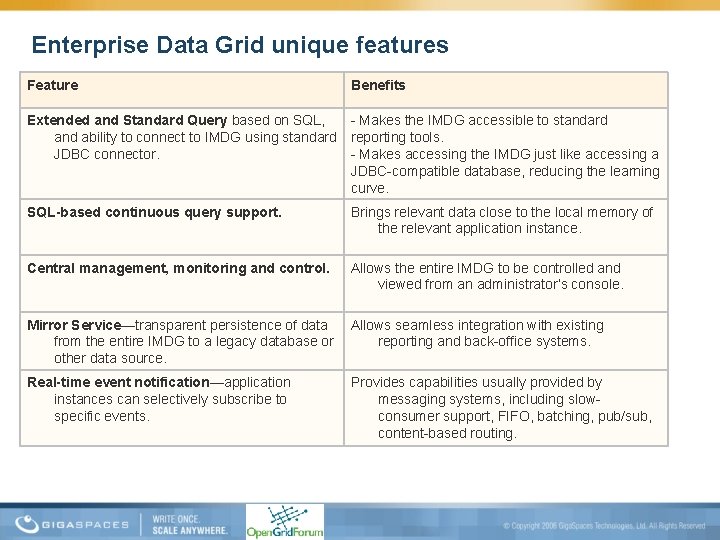

Enterprise Data Grid Unique Features

| Feature | Benefits |
| --- | --- |
| Extended and standard query based on SQL, and the ability to connect to the IMDG using a standard JDBC connector. | Makes the IMDG accessible to standard reporting tools. Makes accessing the IMDG just like accessing a JDBC-compatible database, reducing the learning curve. |
| SQL-based continuous query support. | Brings relevant data close to the local memory of the relevant application instance. |
| Central management, monitoring and control. | Allows the entire IMDG to be controlled and viewed from an administrator's console. |
| Mirror Service: transparent persistence of data from the entire IMDG to a legacy database or other data source. | Allows seamless integration with existing reporting and back-office systems. |
| Real-time event notification: application instances can selectively subscribe to specific events. | Provides capabilities usually provided by messaging systems, including slow-consumer support, FIFO, batching, pub/sub and content-based routing. |

GigaSpaces Solution for the Enterprise Grid

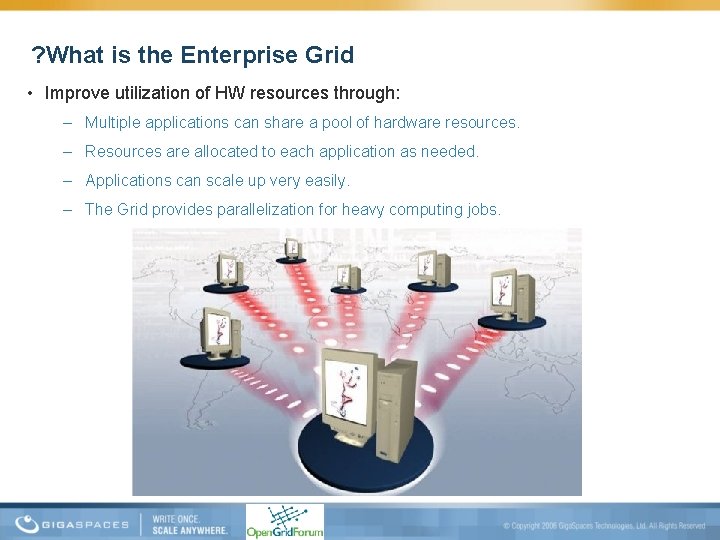

What is the Enterprise Grid?

- Improves utilization of hardware resources:
  - Multiple applications can share a pool of hardware resources.
  - Resources are allocated to each application as needed.
  - Applications can scale up very easily.
  - The Grid provides parallelization for heavy computing jobs.

Great, But…

- What about stateful applications?
  - The data contention challenge
- How can I bring front-office applications to the grid?
  - The latency challenge

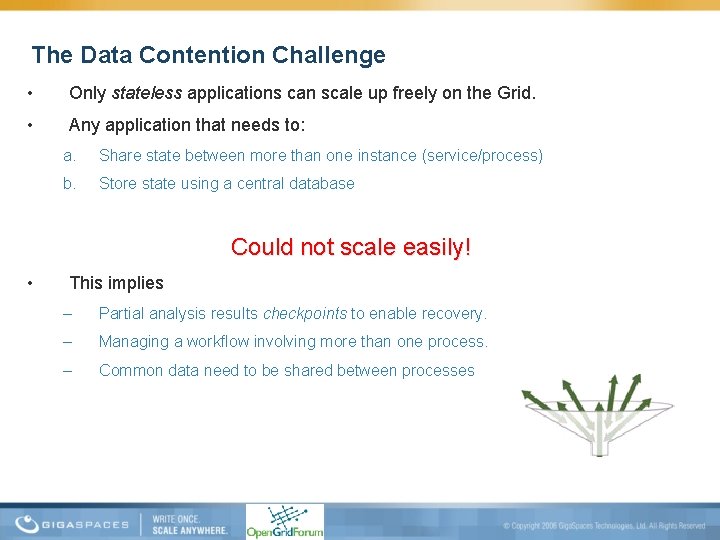

The Data Contention Challenge

- Only stateless applications can scale up freely on the Grid.
- Any application that needs to
  - share state between more than one instance (service/process), or
  - store state using a central database

  cannot scale easily!
- This affects applications that involve:
  - Checkpoints of partial analysis results to enable recovery.
  - Managing a workflow involving more than one process.
  - Common data that needs to be shared between processes.

The Latency Challenge

- The Enterprise Grid is designed for batch applications:
  - Each client request is submitted as a job.
  - Hardware resources are allocated.
  - Relevant software instances (service/process) are scheduled to run on the resources and perform the work.
- This is impractical for low-latency environments! Why?
  - An interactive application receives thousands of client requests per second, each of which needs to be fulfilled within milliseconds.
  - It is impossible to respond fast enough with a "job" approach.
  - Throughput would be severely limited by the need to schedule and launch large numbers of application instances.

Three-Stage Approach to the Solution

1. In-Memory Data Grid (IMDG)
2. Data-Aware Grid using SLA-driven containers
3. Adding front-office applications to the Grid using Declarative Space-Based Architecture (SBA)

In-Memory Data Grid (IMDG) (Stage 1)

- Data is stored in the memory of numerous physical machines instead of, or alongside, a database.
  - Eliminates I/O, network and CPU load.
  - Partitions the data and moves it closer to the application.

However, an IMDG in a distributed enterprise environment is only a partial solution!

Data-Aware Grid Using SLA-Driven Containers (Stage 2)

Common wisdom holds that it is much easier to bring the business logic to the data than to bring the data to the business logic. But not all IMDGs support co-locality of data and business logic! This results in:

- Unnecessary overhead caused by remote calls from business logic to IMDG instances.
- Data duplication, because business logic elements that use the same data are not necessarily concentrated around the relevant IMDG instance.
- And worst of all, data contention, because several business logic elements might access the same IMDG instance, leading to exactly the problem the IMDG was meant to solve!

Requirements for a Data-Aware Grid

- The Enterprise Grid must know which data is stored on which IMDG instances.
- There must be a way to guarantee data affinity: tasks must always be executed with the relevant data coupled to them.

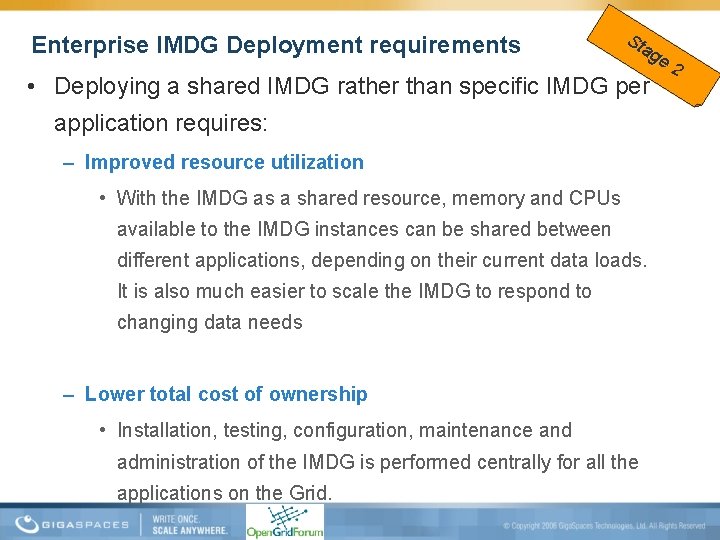

Enterprise IMDG Deployment Requirements (Stage 2)

Deploying a shared IMDG, rather than a specific IMDG per application, provides:

- Improved resource utilization
  - With the IMDG as a shared resource, the memory and CPUs available to the IMDG instances can be shared between different applications, depending on their current data loads. It is also much easier to scale the IMDG in response to changing data needs.
- Lower total cost of ownership
  - Installation, testing, configuration, maintenance and administration of the IMDG are performed centrally for all the applications on the Grid.

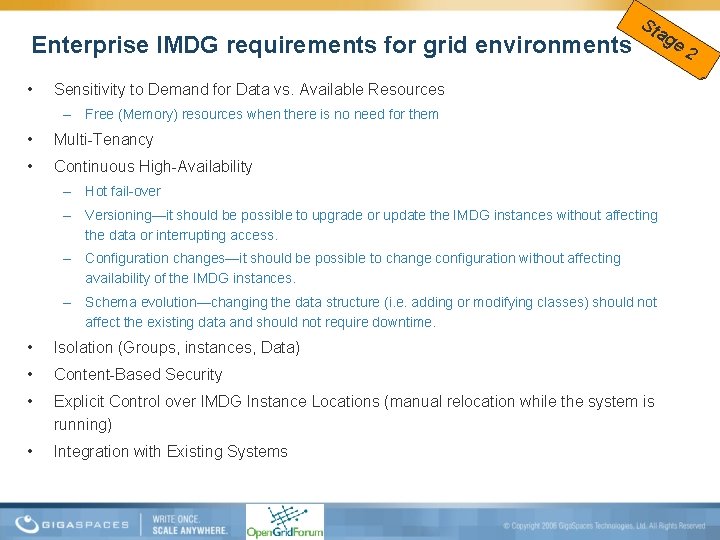

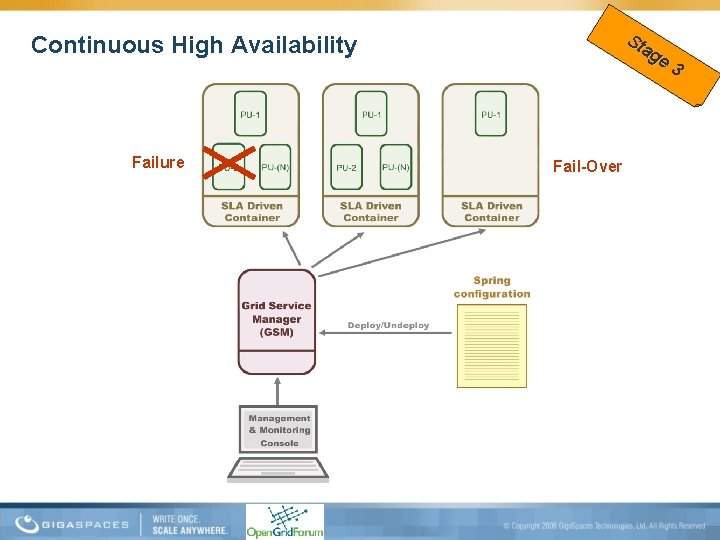

Enterprise IMDG Requirements for Grid Environments (Stage 2)

- Sensitivity to demand for data vs. available resources
  - Free (memory) resources when there is no need for them
- Multi-tenancy
- Continuous high availability
  - Hot fail-over
  - Versioning: it should be possible to upgrade or update the IMDG instances without affecting the data or interrupting access.
  - Configuration changes: it should be possible to change configuration without affecting availability of the IMDG instances.
  - Schema evolution: changing the data structure (i.e. adding or modifying classes) should not affect the existing data and should not require downtime.
- Isolation (groups, instances, data)
- Content-based security
- Explicit control over IMDG instance locations (manual relocation while the system is running)
- Integration with existing systems

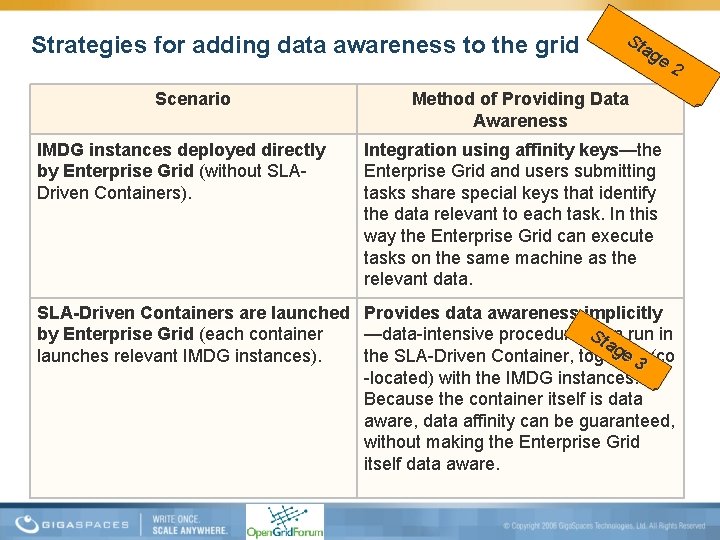

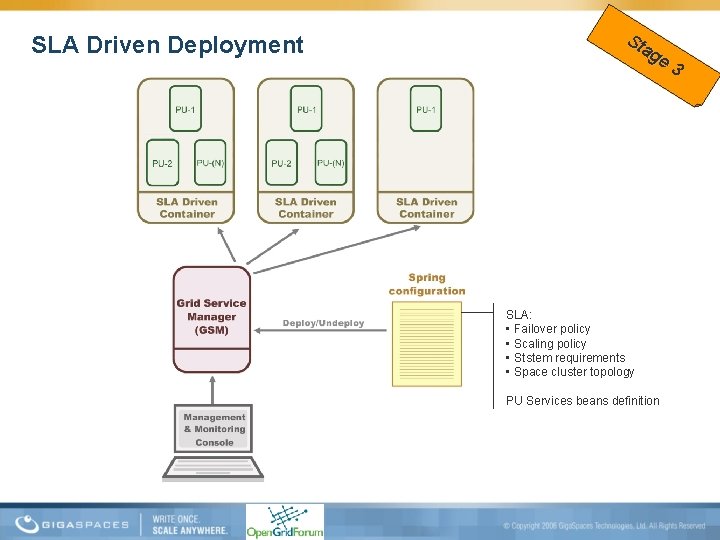

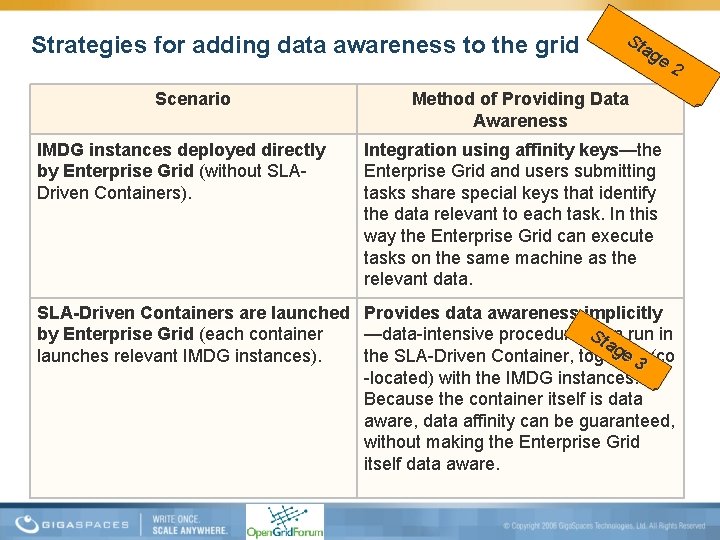

Strategies for Adding Data Awareness to the Grid

| Stage | Scenario | Method of Providing Data Awareness |
| --- | --- | --- |
| Stage 2 | IMDG instances are deployed directly by the Enterprise Grid (without SLA-Driven Containers). | Integration using affinity keys: the Enterprise Grid and users submitting tasks share special keys that identify the data relevant to each task. In this way the Enterprise Grid can execute tasks on the same machine as the relevant data. |
| Stage 3 | SLA-Driven Containers are launched by the Enterprise Grid (each container launches the relevant IMDG instances). | Provides data awareness implicitly: data-intensive procedures can run in the SLA-Driven Container, together (co-located) with the IMDG instances. Because the container itself is data aware, data affinity can be guaranteed without making the Enterprise Grid itself data aware. |

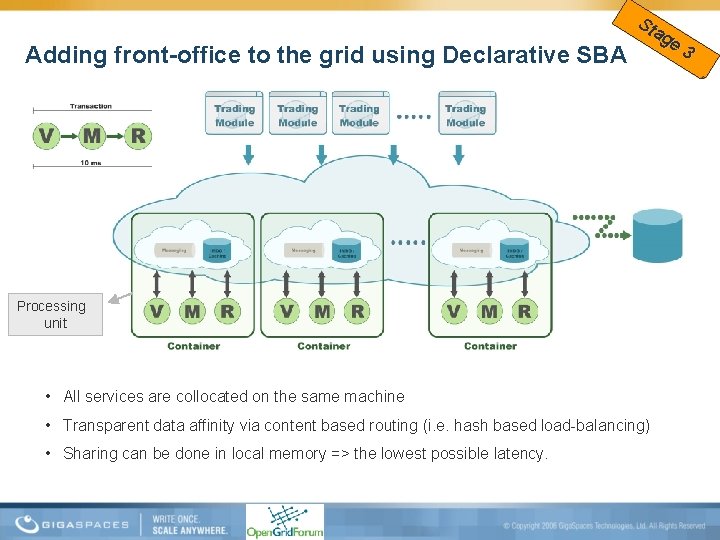

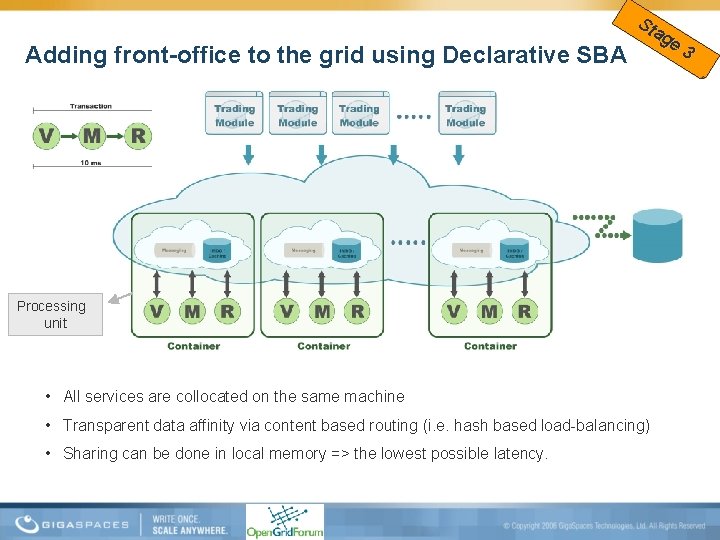

Adding Front-Office Applications to the Grid Using Declarative SBA (Stage 3)

Processing unit:

- All services are co-located on the same machine
- Transparent data affinity via content-based routing (i.e. hash-based load balancing)
- Sharing can be done in local memory, which gives the lowest possible latency
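Hash-based content routing can be sketched in a few lines. This is an assumption-laden illustration, not the product's routing algorithm: `ContentRouter` and `partitionFor` are hypothetical names, and the sketch simply maps the hash of a routing value onto a fixed number of partitions so that entries with equal routing values always land on the same partition.

```java
/** Hypothetical router: maps a routing value to one of N partitions by its hash code. */
class ContentRouter {
    private final int partitions;

    ContentRouter(int partitions) {
        if (partitions <= 0) {
            throw new IllegalArgumentException("partitions must be positive");
        }
        this.partitions = partitions;
    }

    /** Equal routing values always yield the same partition index in [0, partitions). */
    int partitionFor(Object routingValue) {
        // floorMod keeps the result non-negative even when hashCode() is negative.
        return Math.floorMod(routingValue.hashCode(), partitions);
    }
}
```

Because the partition is derived only from the entry's content, a task and the data it needs can be sent to the same partition without any central lookup, which is what makes the affinity transparent to the caller.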

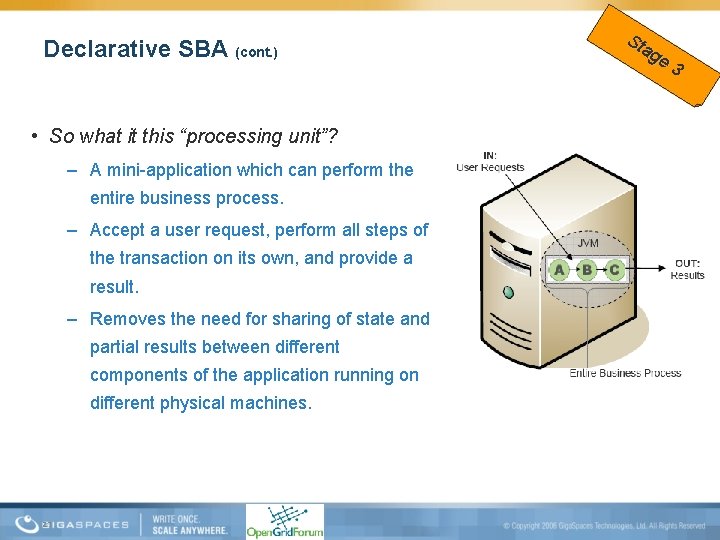

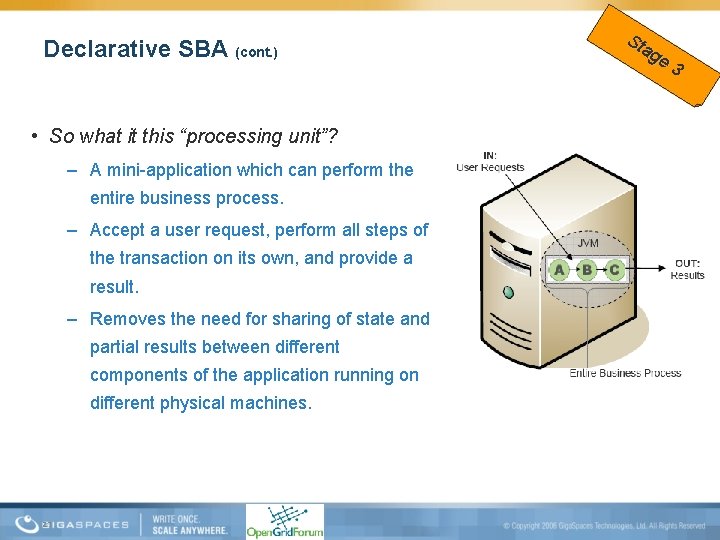

Declarative SBA (cont.) (Stage 3)

So what is this "processing unit"?

- A mini-application that can perform the entire business process.
- It accepts a user request, performs all steps of the transaction on its own, and provides a result.
- It removes the need to share state and partial results between components of the application running on different physical machines.

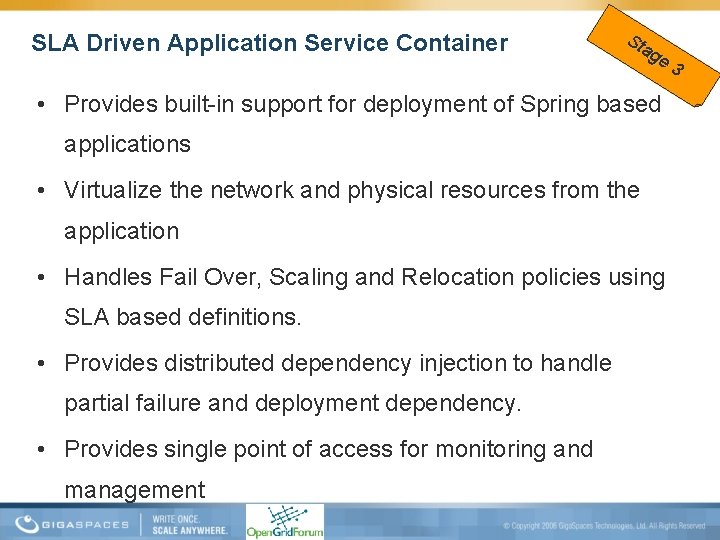

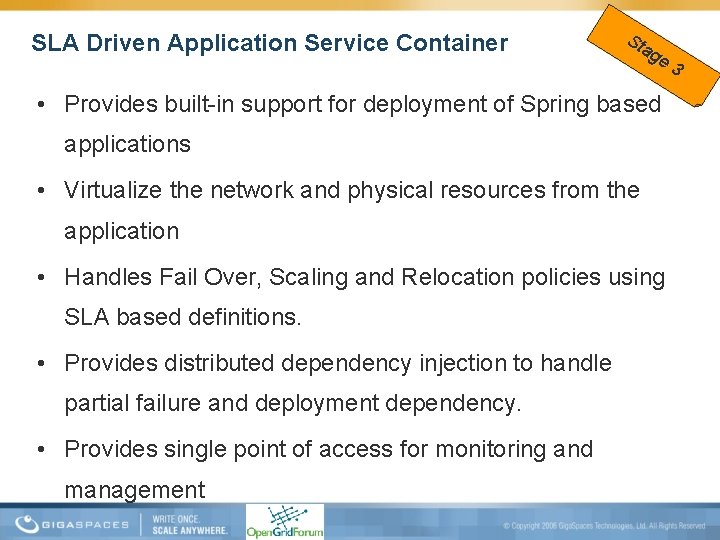

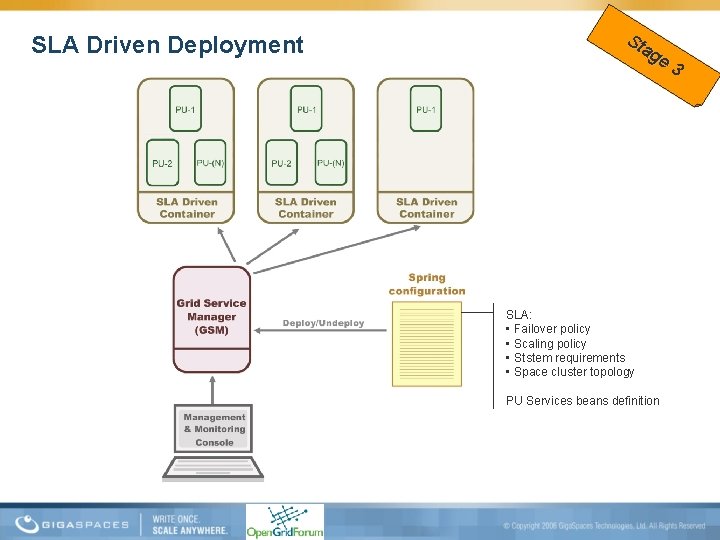

SLA-Driven Application Service Container (Stage 3)

- Provides built-in support for deployment of Spring-based applications
- Virtualizes the network and physical resources, hiding them from the application
- Handles fail-over, scaling and relocation policies using SLA-based definitions
- Provides distributed dependency injection to handle partial failure and deployment dependencies
- Provides a single point of access for monitoring and management

SLA-Driven Deployment (Stage 3)

- SLA:
  - Failover policy
  - Scaling policy
  - System requirements
  - Space cluster topology
- PU services: beans definition

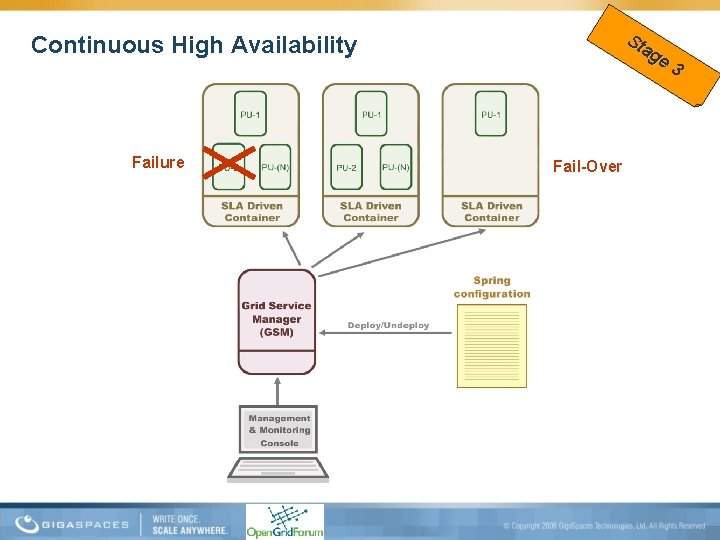

Continuous High Availability (Stage 3)

Diagram labels: Failure, Fail-Over.

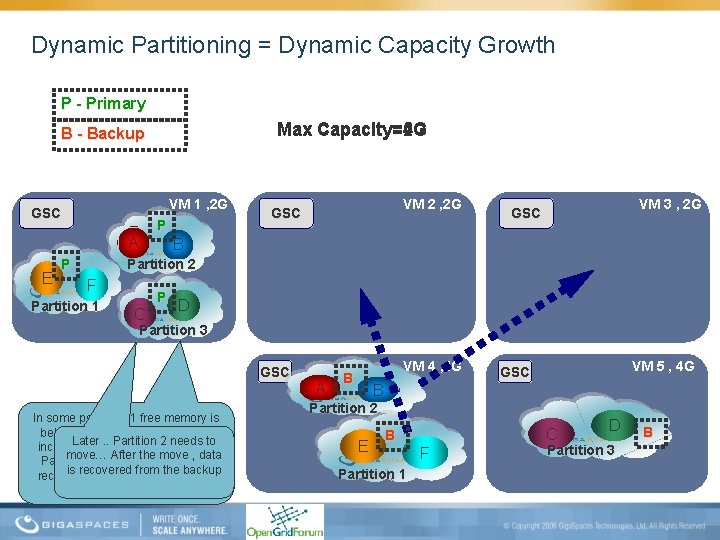

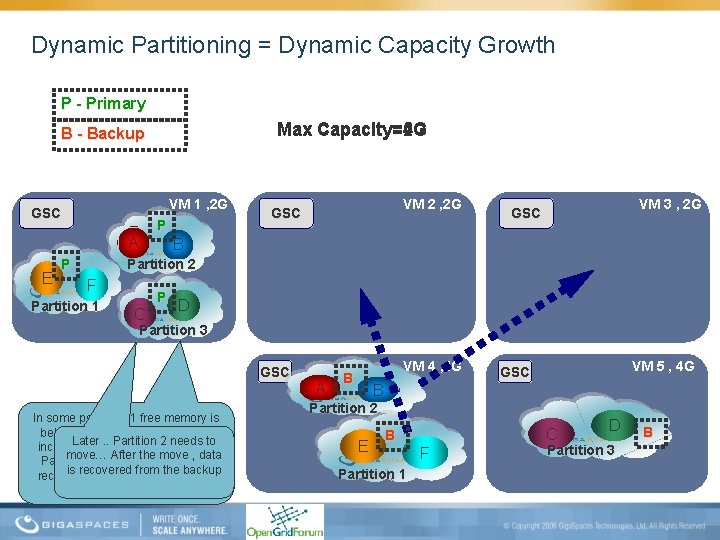

Dynamic Partitioning = Dynamic Capacity Growth

Diagram: VM 1, VM 2 and VM 3 (2 G each) and VM 4 and VM 5 (4 G each) run GSCs that host the primary (P) and backup (B) copies of Partitions 1 to 3; the maximum capacity is shown as 2 G, 4 G and 6 G.

- At some point VM 1 free memory drops below 20%. It is about time to increase the capacity: let's move Partition 1 to another GSC and recover the data from the running backup!
- Later, Partition 2 needs to move. After the move, the data is recovered from the backup.

A Closer Look at OpenSpaces and Declarative SBA Development

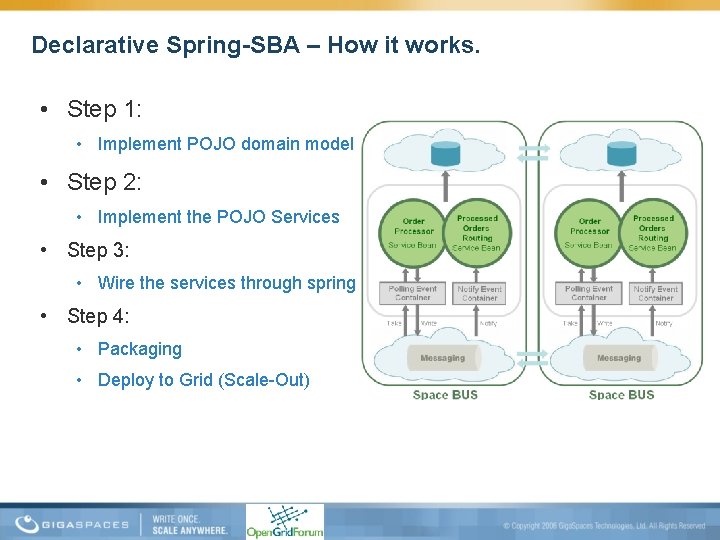

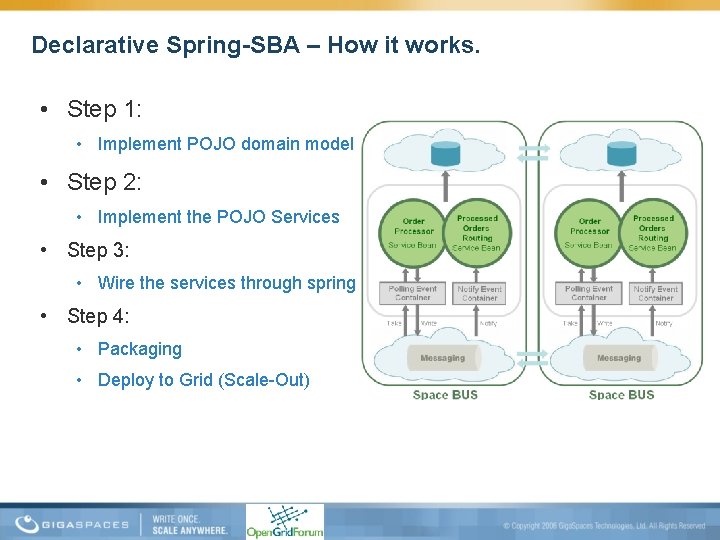

Declarative Spring-SBA: How It Works

- Step 1: Implement the POJO domain model
- Step 2: Implement the POJO services
- Step 3: Wire the services through Spring
- Step 4: Package and deploy to the Grid (scale-out)

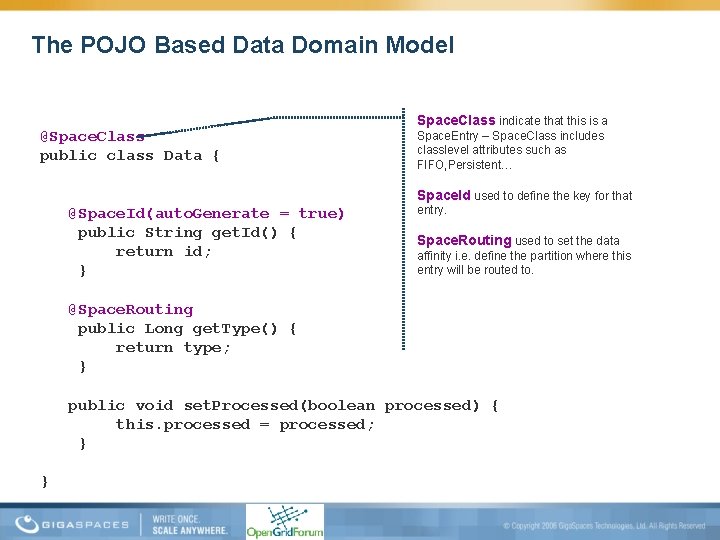

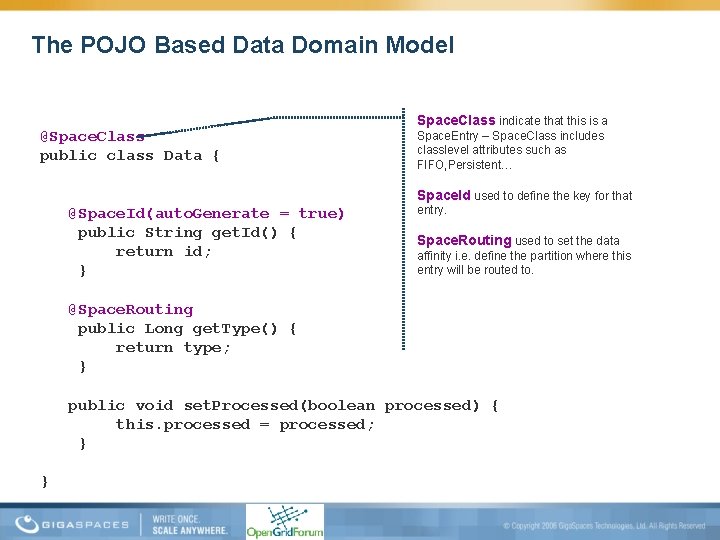

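The POJO-Based Data Domain Model

`@SpaceClass` indicates that this is a space entry; it carries class-level attributes such as FIFO and Persistent. `@SpaceId` defines the key for the entry. `@SpaceRouting` sets the data affinity, i.e. it defines the partition to which the entry is routed. The slide shows only the annotated accessors; the backing fields below are filled in so that the class compiles.

    import com.gigaspaces.annotation.pojo.SpaceClass;
    import com.gigaspaces.annotation.pojo.SpaceId;
    import com.gigaspaces.annotation.pojo.SpaceRouting;

    @SpaceClass
    public class Data {
        // Backing fields (not shown on the slide)
        private String id;
        private Long type;
        private boolean processed;

        @SpaceId(autoGenerate = true)  // key of the entry, generated by the space
        public String getId() { return id; }

        @SpaceRouting                  // value used to choose the target partition
        public Long getType() { return type; }

        public void setProcessed(boolean processed) { this.processed = processed; }
    }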

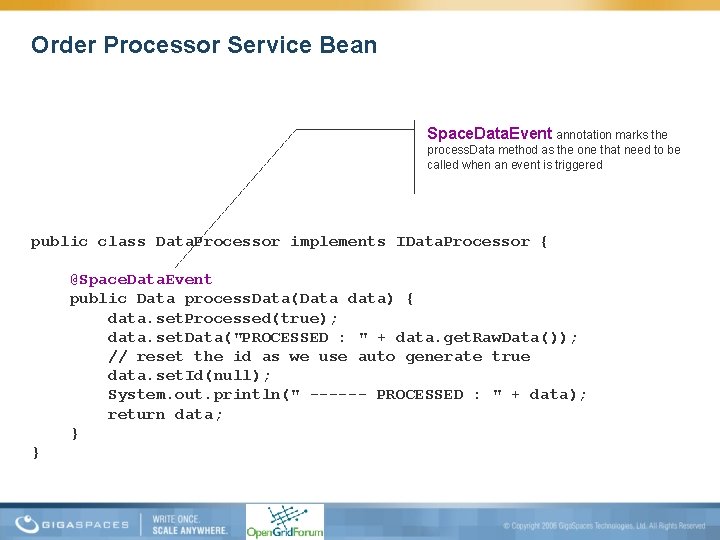

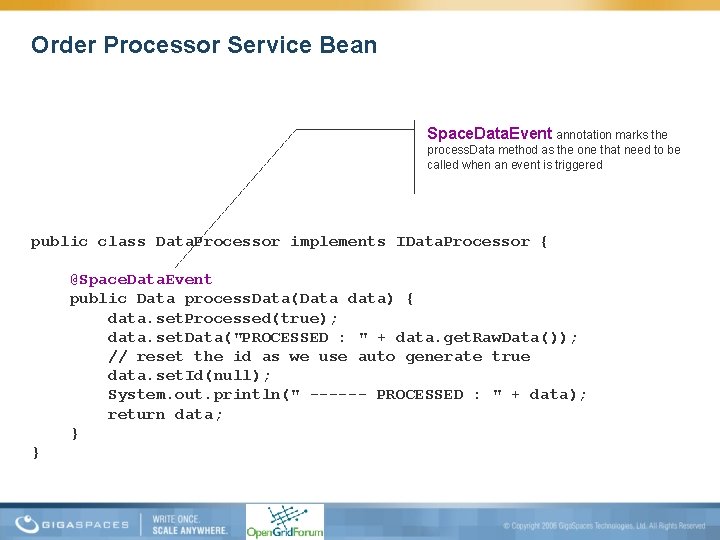

Order Processor Service Bean

The `@SpaceDataEvent` annotation marks `processData` as the method to call when an event is triggered.

    import org.openspaces.events.adapter.SpaceDataEvent;

    public class DataProcessor implements IDataProcessor {

        @SpaceDataEvent
        public Data processData(Data data) {
            data.setProcessed(true);
            data.setData("PROCESSED : " + data.getRawData());
            // reset the id, as the id is auto-generated (autoGenerate = true)
            data.setId(null);
            System.out.println(" ------ PROCESSED : " + data);
            return data;
        }
    }

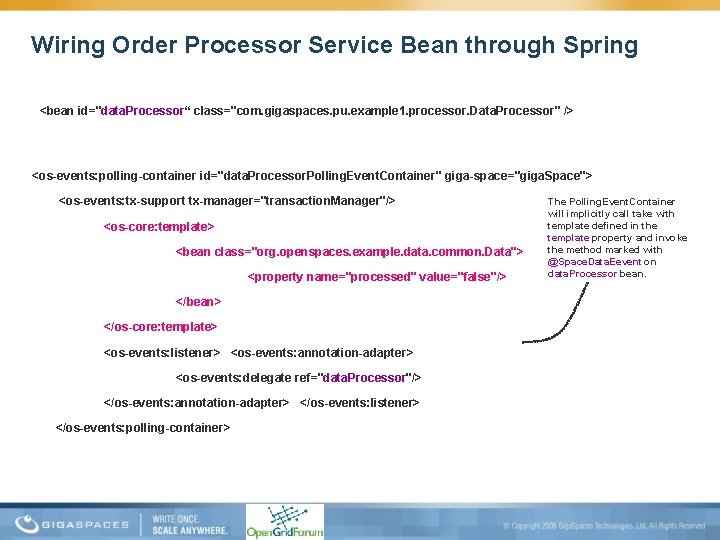

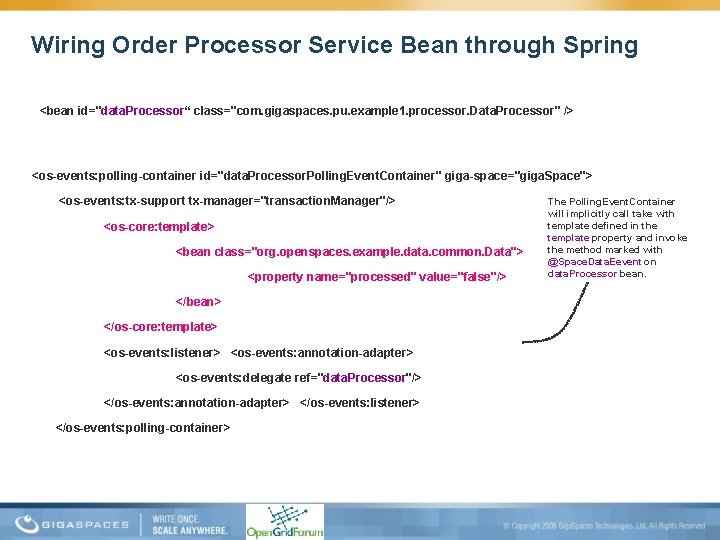

Wiring the Order Processor Service Bean through Spring

    <bean id="dataProcessor" class="com.gigaspaces.pu.example1.processor.DataProcessor" />

    <os-events:polling-container id="dataProcessorPollingEventContainer" giga-space="gigaSpace">
        <os-events:tx-support tx-manager="transactionManager"/>
        <os-core:template>
            <bean class="org.openspaces.example.data.common.Data">
                <property name="processed" value="false"/>
            </bean>
        </os-core:template>
        <os-events:listener>
            <os-events:annotation-adapter>
                <os-events:delegate ref="dataProcessor"/>
            </os-events:annotation-adapter>
        </os-events:listener>
    </os-events:polling-container>

The polling event container implicitly calls take with the template defined in the template property, and invokes the method marked with `@SpaceDataEvent` on the `dataProcessor` bean.
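What the polling container does on each pass can be sketched without any framework. This is a simplified illustration, not the OpenSpaces implementation: `PollingSketch`, `pollOnce` and the use of a plain `List` as the space are hypothetical, the template is expressed as a predicate, and writing the listener's return value back to the space is an assumption of this sketch.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.Predicate;
import java.util.function.UnaryOperator;

/** Hypothetical stand-in for one pass of a polling event container. */
class PollingSketch {

    /** Minimal entry type with the two properties used by the slide's example. */
    static final class Data {
        final String rawData;
        final boolean processed;

        Data(String rawData, boolean processed) {
            this.rawData = rawData;
            this.processed = processed;
        }
    }

    /**
     * Takes every entry matching the template out of the space, hands each one to the
     * listener, and writes non-null results back. Returns the number of entries taken.
     */
    static int pollOnce(List<Data> space, Predicate<Data> template, UnaryOperator<Data> listener) {
        List<Data> taken = new ArrayList<>();
        Iterator<Data> it = space.iterator();
        while (it.hasNext()) {
            Data d = it.next();
            if (template.test(d)) {
                it.remove();          // "take": the entry leaves the space
                taken.add(d);
            }
        }
        for (Data d : taken) {
            Data result = listener.apply(d);
            if (result != null) {
                space.add(result);    // write the processed entry back
            }
        }
        return taken.size();
    }
}
```

A template of `d -> !d.processed` plays the role of the `processed = false` template in the XML: once an entry has been processed and written back, it no longer matches, so it is not picked up again on the next pass.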

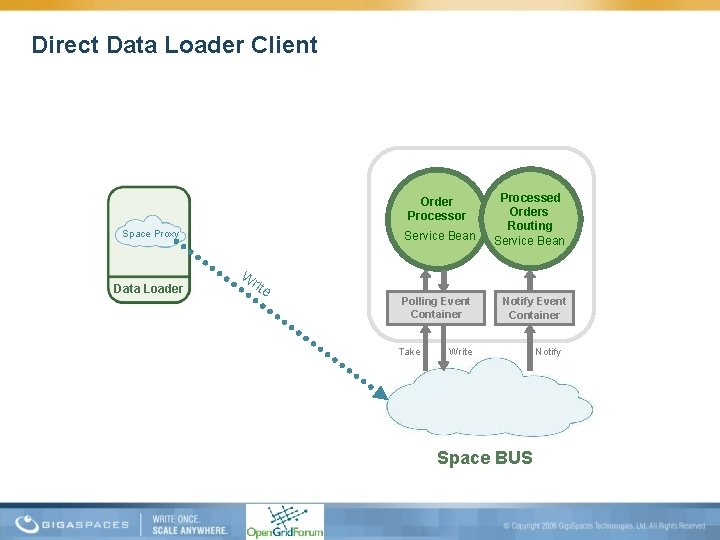

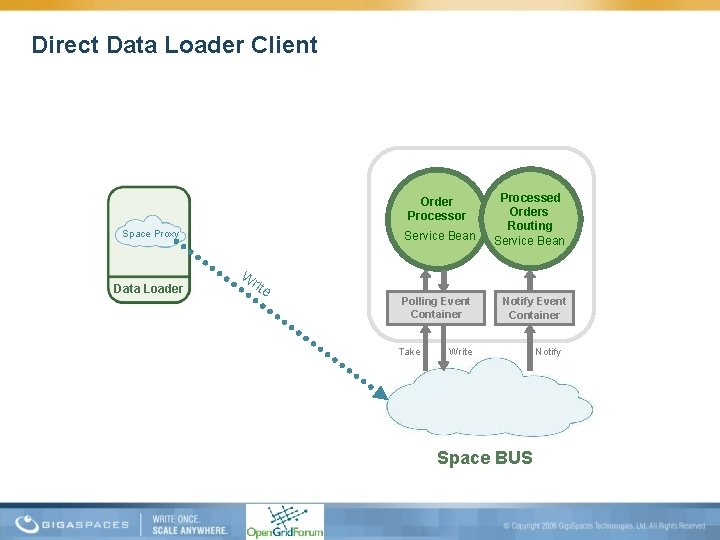

Direct Data Loader Client (diagram)

Diagram labels: Data Loader client, space proxy, Write, Space bus, Polling Event Container (Take), Order Processor service bean, Write, Notify Event Container (Notify), Processed Orders Routing service bean.

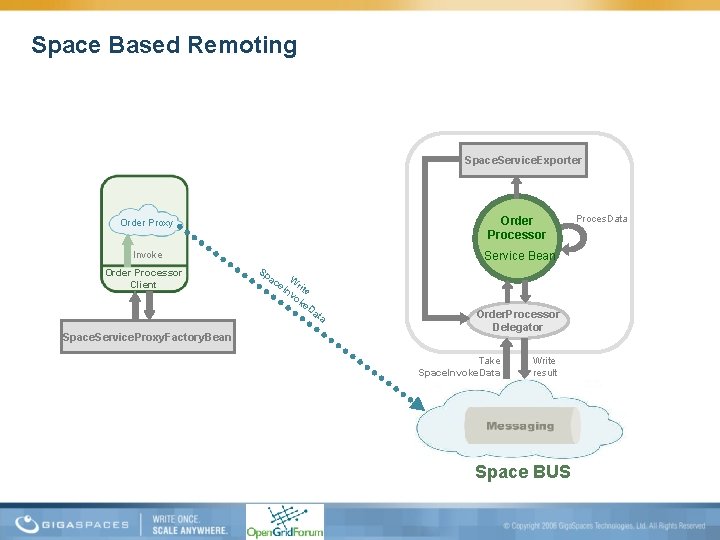

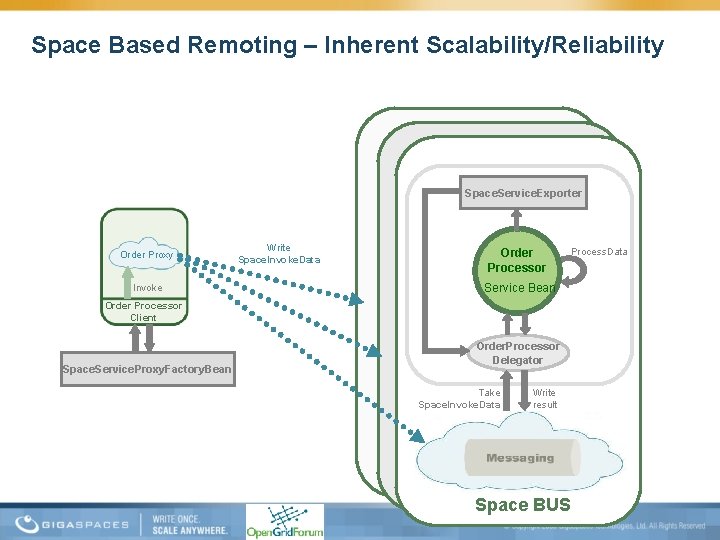

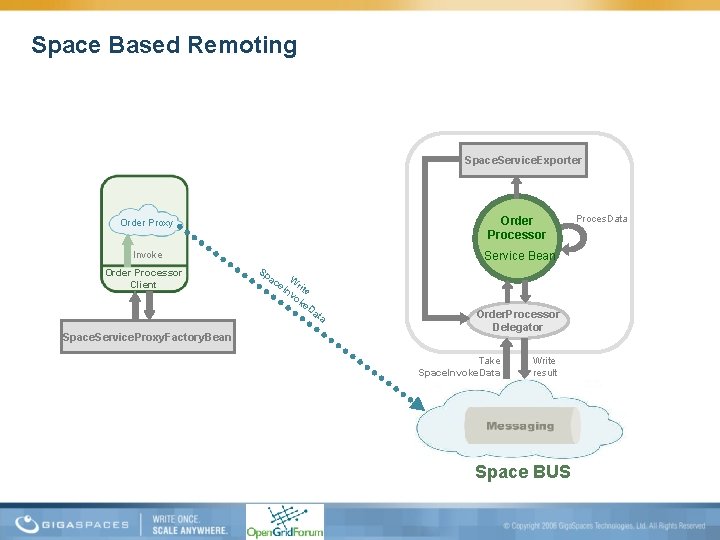

Space-Based Remoting (diagram)

Diagram labels: Order Processor client, order proxy (SpaceServiceProxyFactoryBean), Invoke, Write SpaceInvokeData, Space bus, Take SpaceInvokeData, SpaceServiceExporter, OrderProcessor delegator, ProcessData, Order Processor service bean, Write result.

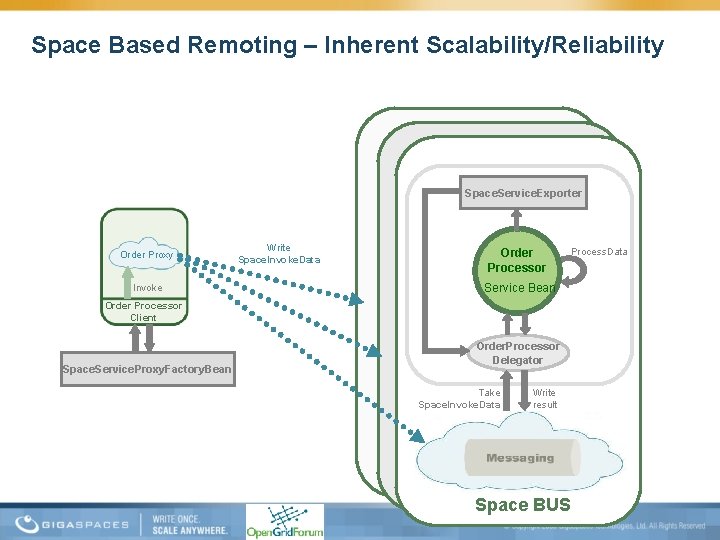

Space-Based Remoting: Inherent Scalability/Reliability (diagram)

Diagram labels: Order Processor client, order proxy (SpaceServiceProxyFactoryBean), Invoke, Write SpaceInvokeData, Space bus, Take SpaceInvokeData, SpaceServiceExporter, OrderProcessor delegator, ProcessData, Order Processor service bean, Write result.

Looking into the Future… Many Enhancements!

- Enhance performance
  - Built-in InfiniBand support: Voltaire, Cisco
- Enhance database integration
  - Enhance the Space Mirror support (async persistency)
- Enhance partnership and integration with grid vendors
  - DataSynapse, Platform Computing, Sun Grid Engine, Microsoft Compute Cluster Server
- Enhance C++ and .NET support
  - Performance optimization; first goal: same performance as Java
  - Support for complex object mapping

Conclusions and Summary

- A typical IMDG won't help you
  - You need a Data-Aware Enterprise IMDG to solve the data contention and latency challenges.
  - Data affinity needs its twin: co-locality of data and business logic.
- The Enterprise IMDG co-locates the data with the business logic
  - Using a self-sufficient, autonomic processing unit deployed into an SLA-based container that scales via the Enterprise Grid.
- The Enterprise IMDG brings the front office into the grid
  - Makes the grid a utility model for a wide spectrum of applications across the organization.

Case Studies

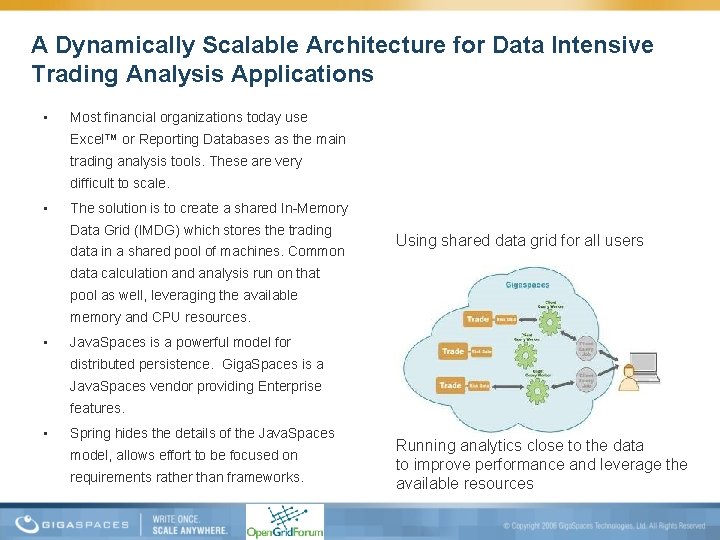

A Dynamically Scalable Architecture for Data-Intensive Trading Analysis Applications

- Most financial organizations today use Excel™ or reporting databases as their main trading analysis tools. These are very difficult to scale.
- The solution is to create a shared In-Memory Data Grid (IMDG) that stores the trading data in a shared pool of machines. Common data calculation and analysis run on that pool as well, leveraging the available memory and CPU resources.
- JavaSpaces is a powerful model for distributed persistence. GigaSpaces is a JavaSpaces vendor providing enterprise features.
- Spring hides the details of the JavaSpaces model, allowing effort to be focused on requirements rather than frameworks.

Diagram callouts: using a shared data grid for all users; running analytics close to the data to improve performance and leverage the available resources.

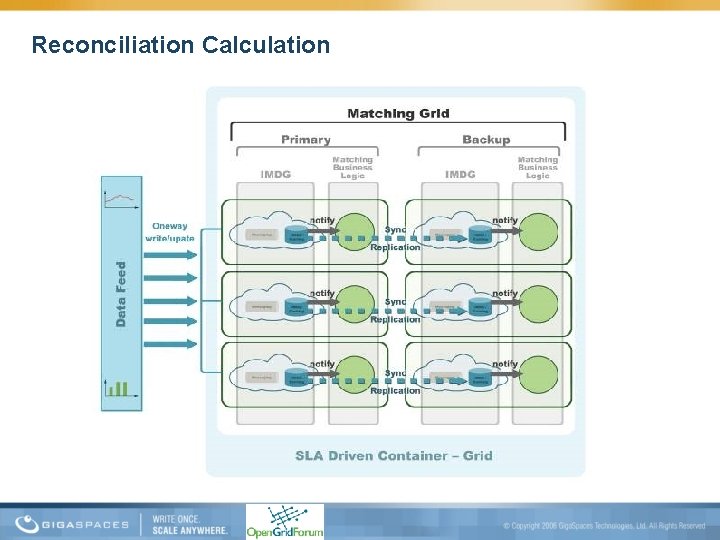

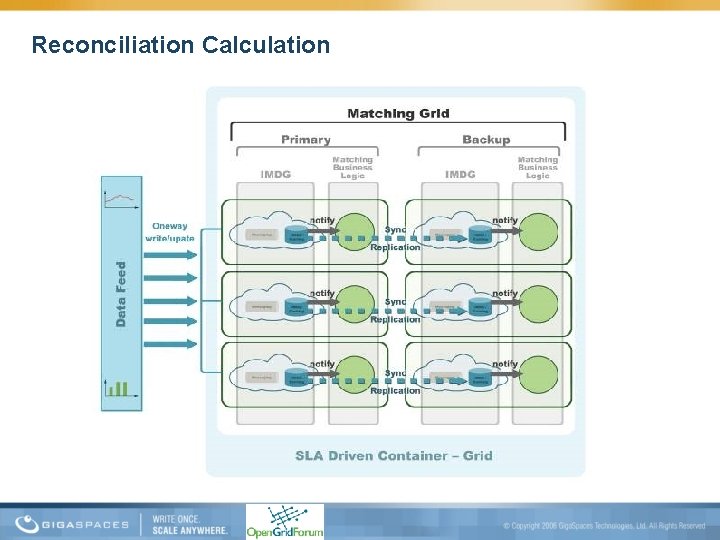

Reconciliation Calculation

Questions?

Thank You! shay@gigaspaces.com