CS 489/698 Big Data Infrastructure

CS 489/698 Big Data Infrastructure (Winter 2016)

Week 11: Analyzing Graphs, Redux (1/2)

March 22, 2016

Jimmy Lin, David R. Cheriton School of Computer Science, University of Waterloo

These slides are available at http://lintool.github.io/bigdata-2016w/

This work is licensed under a Creative Commons Attribution-Noncommercial-Share Alike 3.0 United States license. See http://creativecommons.org/licenses/by-nc-sa/3.0/us/ for details.

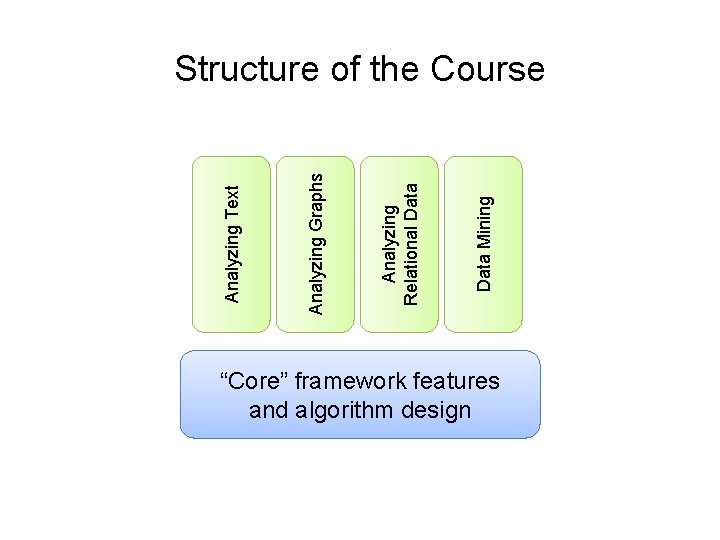

Structure of the Course (figure): “Core” framework features and algorithm design; Analyzing Text; Analyzing Graphs; Analyzing Relational Data; Data Mining

Characteristics of Graph Algorithms
- Parallel graph traversals
  - Local computations
  - Message passing along graph edges
- Iterations

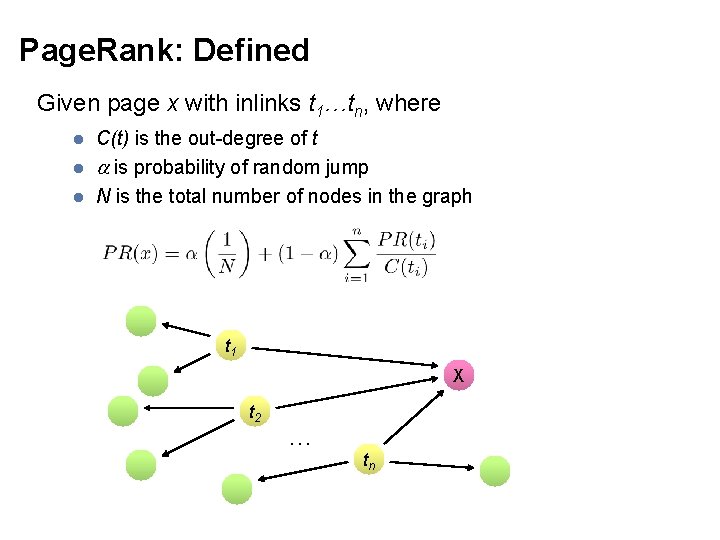

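PageRank: Defined

Given page $x$ with inlinks $t_1 \ldots t_n$, where
- $C(t)$ is the out-degree of $t$
- $\alpha$ is the probability of random jump
- $N$ is the total number of nodes in the graph

$$P(x) = \alpha \left( \frac{1}{N} \right) + (1 - \alpha) \sum_{i=1}^{n} \frac{P(t_i)}{C(t_i)}$$

(Figure: inlinks $t_1, t_2, \ldots, t_n$ pointing to $X$)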
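As a worked example of the definition, here is a minimal Python sketch of the update for a single page. It assumes the usual form of the formula (random-jump term plus damped sum of inlink contributions, written out in the docstring); the function name and the numbers are hypothetical and chosen only for illustration.

```python
def pagerank_update(inlink_ranks, inlink_outdegrees, alpha, n_nodes):
    """Assumed form: P(x) = alpha * (1/N) + (1 - alpha) * sum_i P(t_i) / C(t_i)."""
    # Each inlink t_i contributes its rank divided evenly over its out-links.
    contributed = sum(p / c for p, c in zip(inlink_ranks, inlink_outdegrees))
    return alpha / n_nodes + (1 - alpha) * contributed


# Hypothetical numbers: page x has two inlinks in a 5-node graph.
# t1 has rank 0.2 and out-degree 2; t2 has rank 0.2 and out-degree 3.
new_rank = pagerank_update([0.2, 0.2], [2, 3], alpha=0.15, n_nodes=5)
print(round(new_rank, 4))
```

With alpha = 0.15 the random-jump term is 0.15 / 5 = 0.03, and the remaining mass comes from the two inlinks.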

![PageRank in MapReduce](http://slidetodoc.com/presentation_image_h2/f6c4659e993b22781d061f7d6dbbf8c4/image-5.jpg)

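PageRank in MapReduce

Adjacency lists: n1 [n2, n4]; n2 [n3, n5]; n3 [n4]; n4 [n5]; n5 [n1, n2, n3]

Map: each node sends PageRank mass to the nodes on its adjacency list (n1 to n2, n4; n2 to n3, n5; n3 to n4; n4 to n5; n5 to n1, n2, n3).

Reduce: mass is grouped by destination node (n1 through n5), and each node is written back out with its adjacency list.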
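The map and reduce steps on this five-node graph can be sketched in plain Python. This is a simplified simulation, not Hadoop code: it assumes no random jump and no dangling nodes, and the names `map_phase`, `reduce_phase`, and `iterate` are hypothetical.

```python
from collections import defaultdict

# Adjacency lists from the slide.
GRAPH = {
    "n1": ["n2", "n4"],
    "n2": ["n3", "n5"],
    "n3": ["n4"],
    "n4": ["n5"],
    "n5": ["n1", "n2", "n3"],
}


def map_phase(node, rank, neighbors):
    # Pass the graph structure along so the reducer can write it back out.
    yield node, ("structure", neighbors)
    # Divide this node's PageRank mass evenly among its out-links.
    for target in neighbors:
        yield target, ("mass", rank / len(neighbors))


def reduce_phase(node, values):
    neighbors, total = [], 0.0
    for kind, payload in values:
        if kind == "structure":
            neighbors = payload
        else:
            total += payload
    return node, total, neighbors


def iterate(ranks):
    # The dictionary of lists stands in for the shuffle (group by key).
    shuffled = defaultdict(list)
    for node, neighbors in GRAPH.items():
        for key, value in map_phase(node, ranks[node], neighbors):
            shuffled[key].append(value)
    return {node: reduce_phase(node, vals)[1] for node, vals in shuffled.items()}


ranks = iterate({node: 0.2 for node in GRAPH})
```

Note that the adjacency lists travel through the shuffle on every iteration, which is the "needless graph shuffling" cost of this formulation.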

MapReduce Sucks
- Java verbosity
- Hadoop task startup time
- Stragglers
- Needless graph shuffling
- Checkpointing at each iteration

Characteristics of Graph Algorithms
- Parallel graph traversals
  - Local computations
  - Message passing along graph edges
- Iterations

Spark to the rescue?

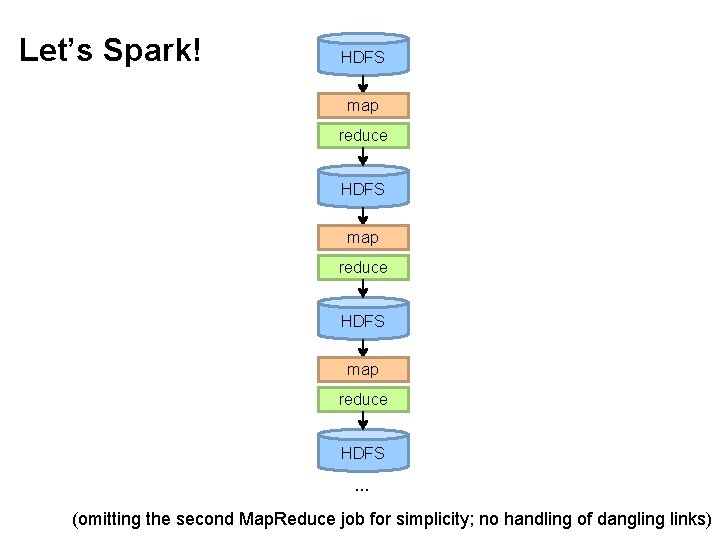

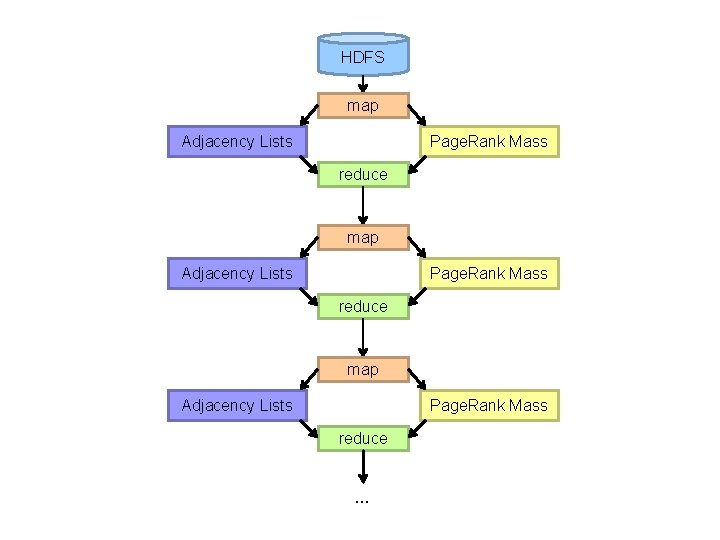

Let’s Spark! HDFS, map, reduce, HDFS, … (omitting the second MapReduce job for simplicity; no handling of dangling links)

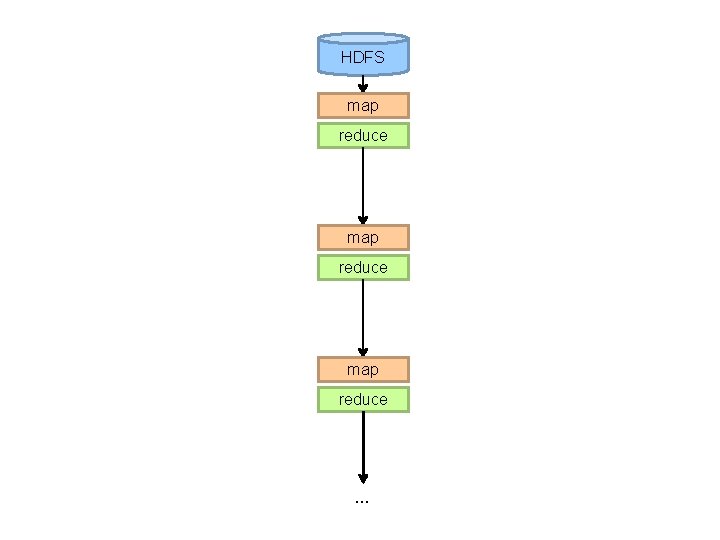

HDFS map reduce …

Dataflow (figure): HDFS, map, Adjacency Lists + PageRank Mass, reduce, …

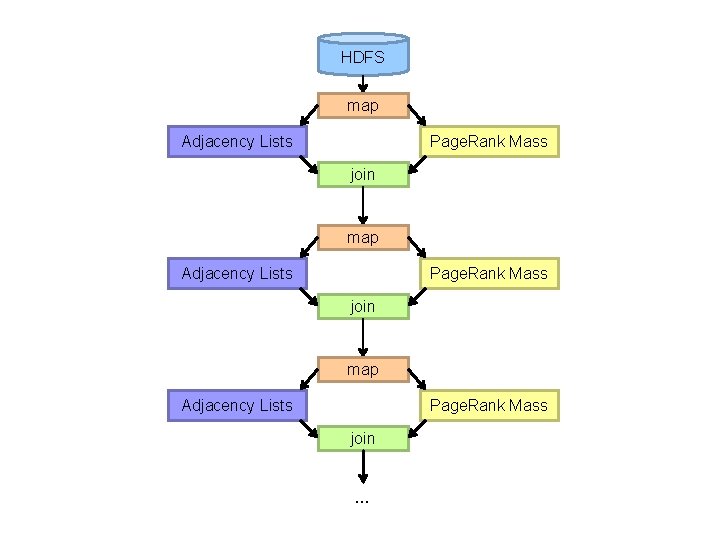

Dataflow (figure): HDFS, map, Adjacency Lists + PageRank Mass, join, …

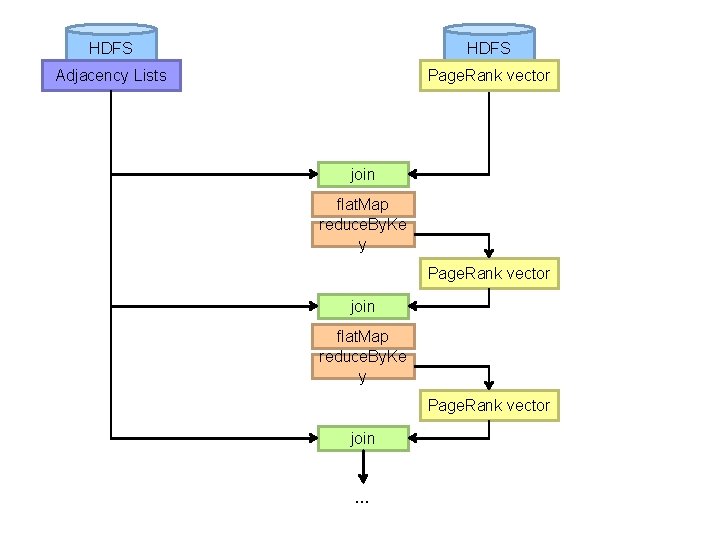

Dataflow (figure): HDFS, Adjacency Lists + PageRank vector, join, flatMap, reduceByKey, PageRank vector, join, …

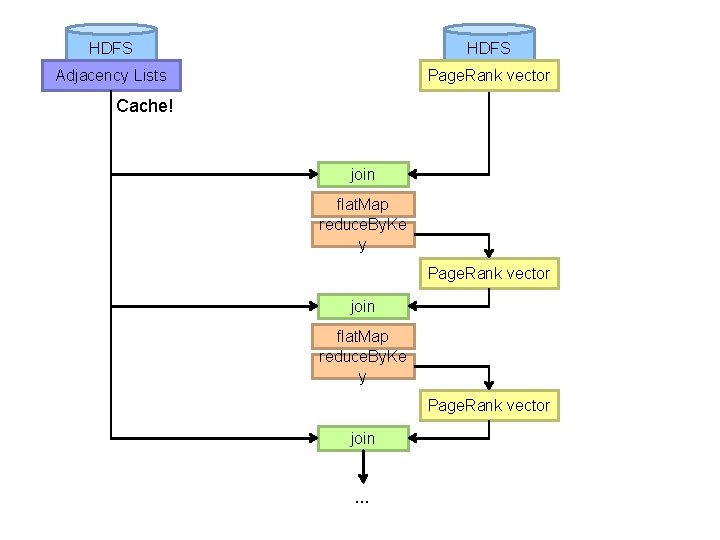

Dataflow (figure): HDFS, Adjacency Lists (Cache!) + PageRank vector, join, flatMap, reduceByKey, PageRank vector, join, …
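The join, flatMap, reduceByKey loop can be imitated in plain Python to show why caching helps: the adjacency lists are loaded once and stay put, and only the PageRank vector is rebuilt each iteration. This is a sketch under the same simplifications as the slides (no random jump, no dangling links). The helpers `join`, `flat_map`, and `reduce_by_key` are local stand-ins written for this example, not calls into the Spark API.

```python
def join(left, right):
    # Inner join of two dictionaries on their keys.
    return {key: (left[key], right[key]) for key in left if key in right}


def flat_map(records, fn):
    # Apply fn to every record and flatten the resulting lists.
    return [out for record in records for out in fn(record)]


def reduce_by_key(pairs, fn):
    acc = {}
    for key, value in pairs:
        acc[key] = fn(acc[key], value) if key in acc else value
    return acc


# Loaded once and kept in memory for every iteration ("Cache!").
links = {
    "n1": ["n2", "n4"],
    "n2": ["n3", "n5"],
    "n3": ["n4"],
    "n4": ["n5"],
    "n5": ["n1", "n2", "n3"],
}
ranks = {node: 1.0 / len(links) for node in links}


def spread(record):
    _node, (neighbors, rank) = record
    return [(target, rank / len(neighbors)) for target in neighbors]


for _ in range(10):
    contribs = flat_map(join(links, ranks).items(), spread)
    ranks = reduce_by_key(contribs, lambda a, b: a + b)
```

Only `ranks` is reassigned inside the loop; `links` is never reshuffled or rewritten.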

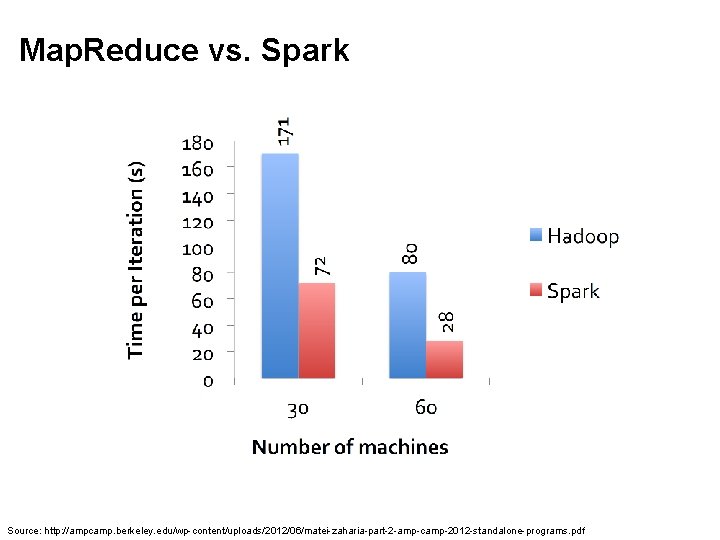

MapReduce vs. Spark Source: http://ampcamp.berkeley.edu/wp-content/uploads/2012/06/matei-zaharia-part-2-amp-camp-2012-standalone-programs.pdf

MapReduce Sucks
- Java verbosity
- Hadoop task startup time
- Stragglers
- Needless graph shuffling
- Checkpointing at each iteration

What have we fixed?

Characteristics of Graph Algorithms
- Parallel graph traversals
  - Local computations
  - Message passing along graph edges
- Iterations

Big Data Processing in a Nutshell
- Lessons learned so far:
  - Partition
  - Replicate
  - Reduce cross-partition communication
- What makes MapReduce/Spark fast?

Characteristics of Graph Algorithms
- Parallel graph traversals
  - Local computations
  - Message passing along graph edges
- Iterations

What’s the issue? Obvious solution: keep “neighborhoods” together!

Simple Partitioning Techniques
- Hash partitioning
- Range partitioning on some underlying linearization
  - Web pages: lexicographic sort of domain-reversed URLs
  - Social networks: sort by demographic characteristics
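The two techniques can be compared on a toy web graph. The sketch below is illustrative only: the host names and links are made up, `zlib.crc32` is used as a deterministic stand-in for a hash partitioner, and the helper names are hypothetical. It counts how many edges cross partition boundaries under each scheme.

```python
import zlib


def reverse_domain(host):
    # "www.uwaterloo.ca" -> "ca.uwaterloo.www", so pages of one site sort together.
    return ".".join(reversed(host.split(".")))


def hash_partition(key, num_partitions):
    # Deterministic hash; ignores any locality in the key.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def range_partition(sorted_keys, num_partitions):
    # Cut the sorted sequence into contiguous, equally sized ranges.
    size = -(-len(sorted_keys) // num_partitions)  # ceiling division
    return {key: i // size for i, key in enumerate(sorted_keys)}


def cut_edges(edges, assignment):
    # Edges whose endpoints land in different partitions need communication.
    return sum(1 for u, v in edges if assignment[u] != assignment[v])


# Hypothetical hosts and links, for illustration only.
hosts = ["www.uwaterloo.ca", "cs.uwaterloo.ca", "www.example.com", "blog.example.com"]
edges = [
    ("www.uwaterloo.ca", "cs.uwaterloo.ca"),
    ("cs.uwaterloo.ca", "www.uwaterloo.ca"),
    ("www.example.com", "blog.example.com"),
    ("blog.example.com", "www.uwaterloo.ca"),
]

ordered = sorted(hosts, key=reverse_domain)
by_range = range_partition(ordered, 2)
by_hash = {host: hash_partition(host, 2) for host in hosts}

print("range cut:", cut_edges(edges, by_range))
print("hash cut:", cut_edges(edges, by_hash))
```

Because most links stay within a site, sorting by reversed domain keeps those links inside one partition; a hash partitioner has no such guarantee.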

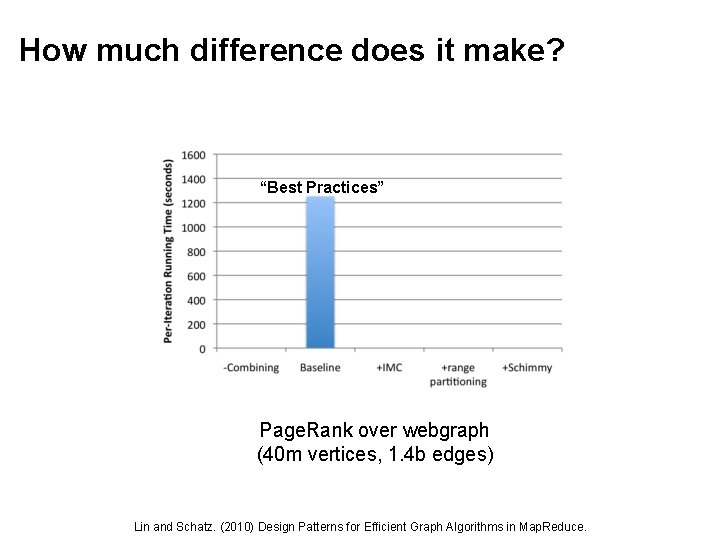

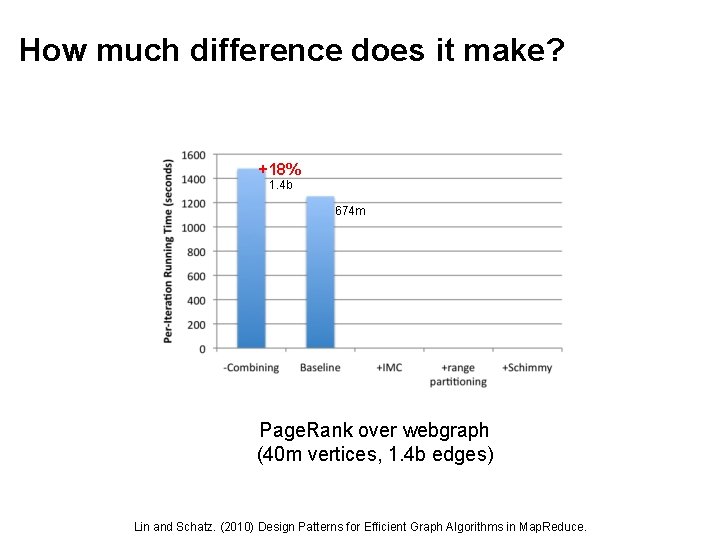

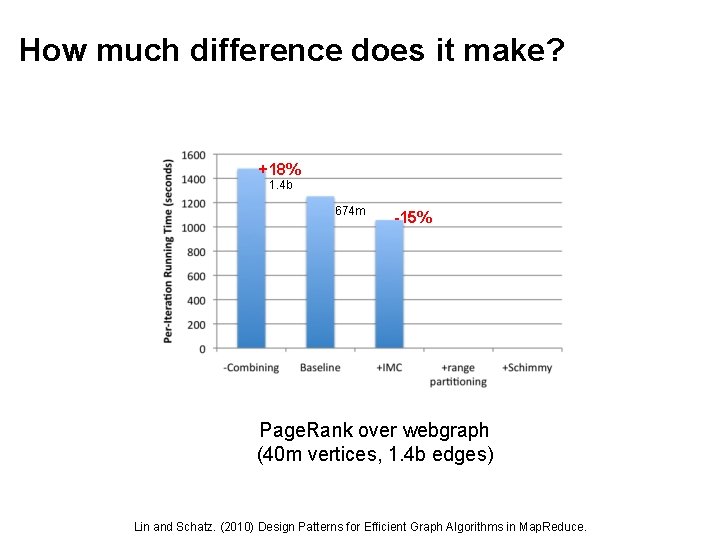

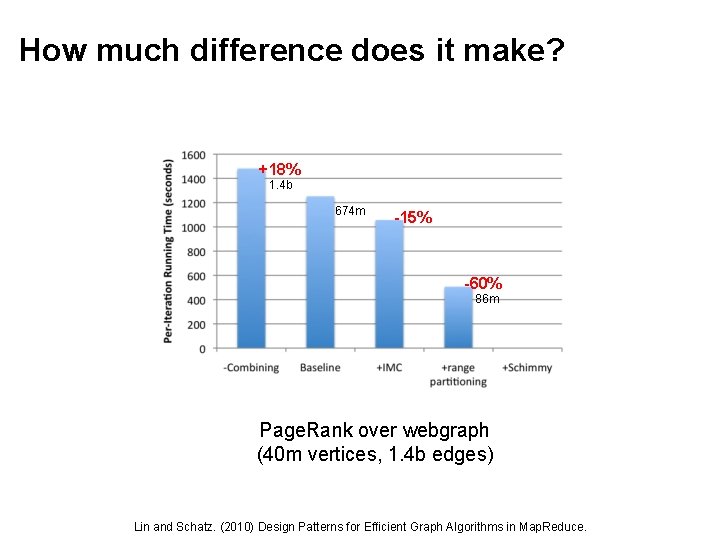

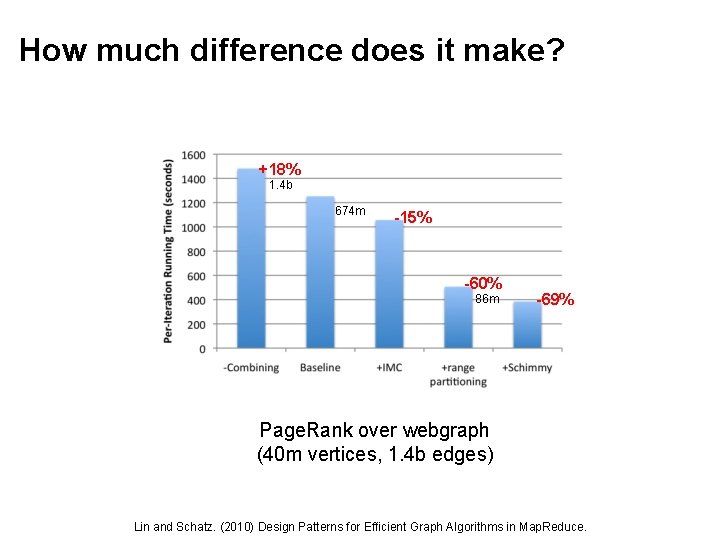

How much difference does it make? “Best Practices” (chart labels: +18%, 1.4b, 674m, -15%, -60%, 86m, -69%) PageRank over webgraph (40m vertices, 1.4b edges) Lin and Schatz. (2010) Design Patterns for Efficient Graph Algorithms in MapReduce.

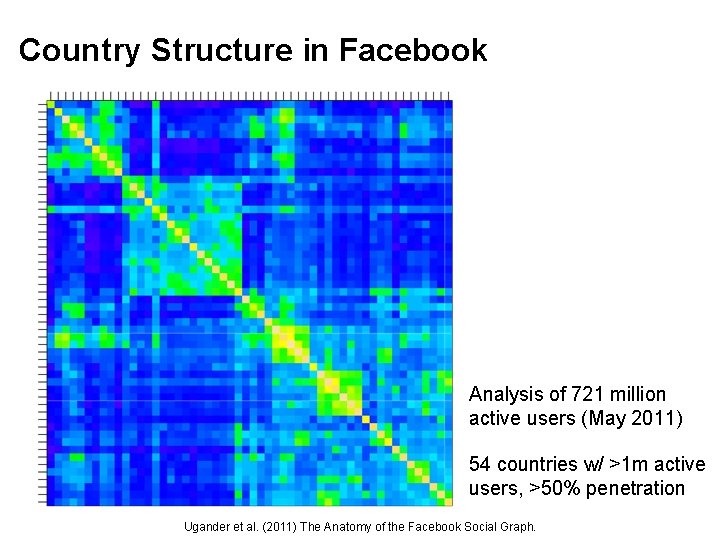

Country Structure in Facebook: Analysis of 721 million active users (May 2011); 54 countries w/ >1m active users, >50% penetration. Ugander et al. (2011) The Anatomy of the Facebook Social Graph.

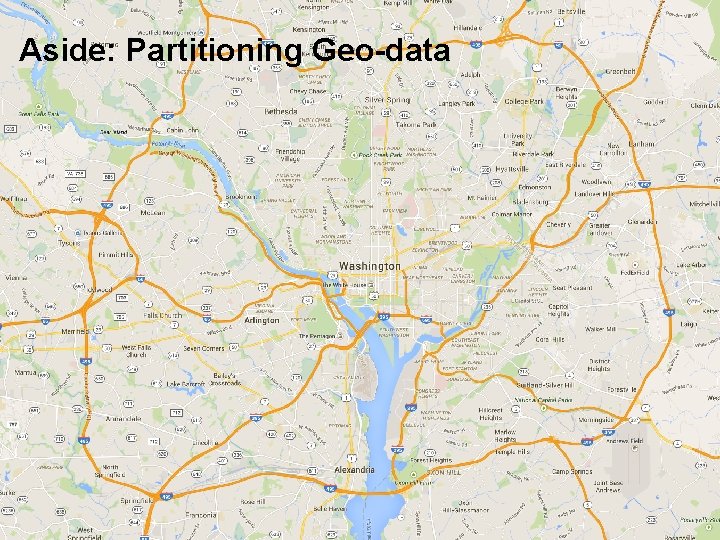

Aside: Partitioning Geo-data

Geo-data = regular graph

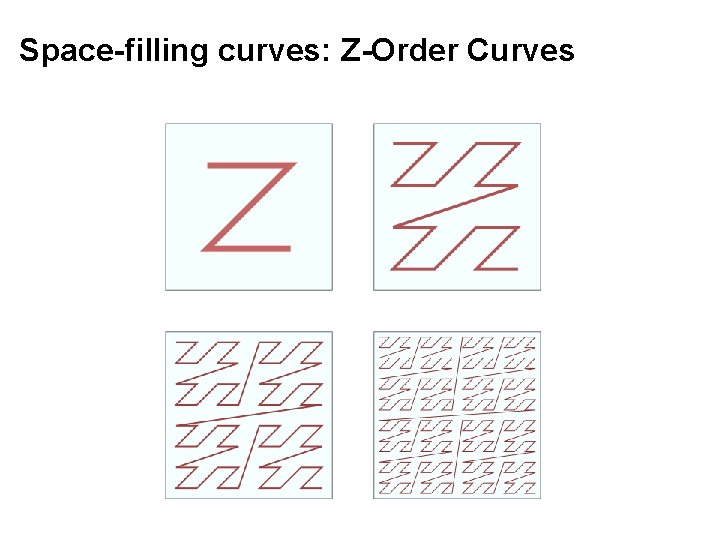

Space-filling curves: Z-Order Curves
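A Z-order (Morton) index is obtained by interleaving the bits of the two coordinates. The sketch below is a minimal illustration; the function name and the choice to put x in the even bit positions are assumptions, since the slide does not fix a convention.

```python
def z_order(x, y, bits=16):
    """Interleave the low `bits` bits of x and y (x in even positions, y in odd)."""
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (2 * i)
        code |= ((y >> i) & 1) << (2 * i + 1)
    return code


# Sorting grid cells by their Z-order index gives a linearization
# that can then be range partitioned.
cells = [(x, y) for y in range(2) for x in range(2)]
ordered_cells = sorted(cells, key=lambda cell: z_order(*cell))
```

Cells that are close in the plane tend to be close in this ordering, although the curve makes occasional long jumps between quadrants.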

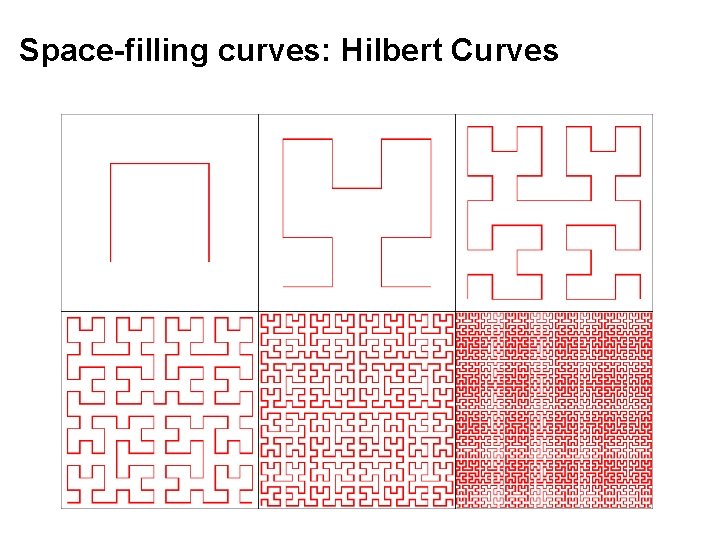

Space-filling curves: Hilbert Curves
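For comparison, here is a sketch of the well-known iterative conversion from grid coordinates to a position along a Hilbert curve. It assumes an n-by-n grid where n is a power of two; the function name is hypothetical and the orientation of the curve is one of several equivalent conventions.

```python
def hilbert_index(n, x, y):
    """Position of cell (x, y) along a Hilbert curve over an n x n grid (n a power of 2)."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if (x & s) else 0
        ry = 1 if (y & s) else 0
        d += s * s * ((3 * rx) ^ ry)
        # Rotate or flip the quadrant so the sub-curve has the right orientation.
        if ry == 0:
            if rx == 1:
                x = n - 1 - x
                y = n - 1 - y
            x, y = y, x
        s //= 2
    return d


# Walk a 4 x 4 grid in Hilbert order.
grid = [(x, y) for x in range(4) for y in range(4)]
walk = sorted(grid, key=lambda cell: hilbert_index(4, *cell))
```

Unlike the Z-order curve, every pair of consecutive cells in this walk is adjacent in the grid, which is why Hilbert ordering tends to preserve locality better.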

Source: http://www.flickr.com/photos/fusedforces/4324320625/

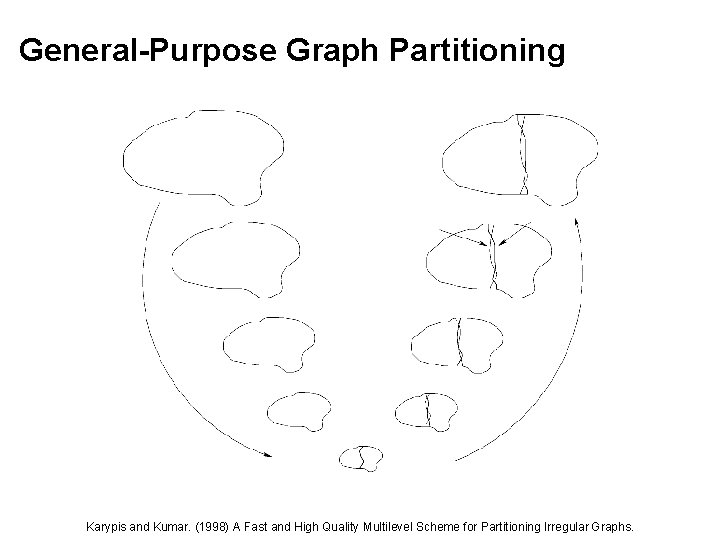

General-Purpose Graph Partitioning Karypis and Kumar. (1998) A Fast and High Quality Multilevel Scheme for Partitioning Irregular Graphs.

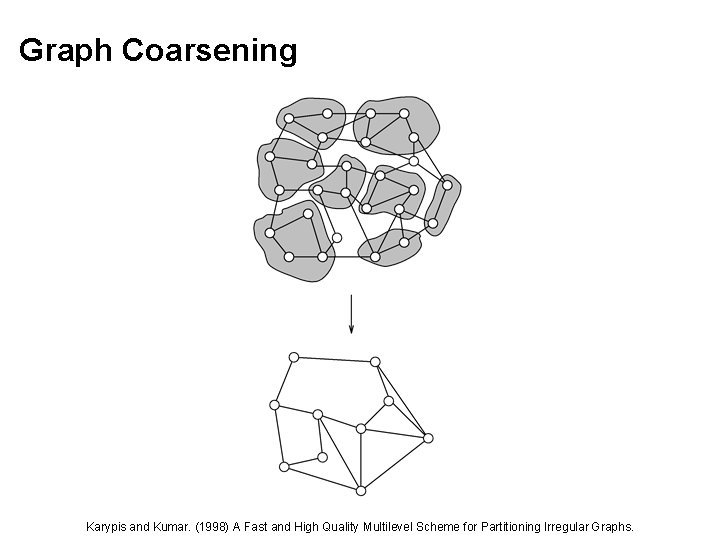

Graph Coarsening Karypis and Kumar. (1998) A Fast and High Quality Multilevel Scheme for Partitioning Irregular Graphs.
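One coarsening step can be sketched as follows. This is a simplified greedy variant in the spirit of heavy-edge matching (heaviest edges are collapsed first), not the exact procedure from the cited paper; the function name, data layout, and example weights are assumptions for illustration.

```python
def coarsen(edges):
    """edges: {(u, v): weight} for an undirected graph with integer node ids, u < v.

    Returns (mapping, coarse_edges), where mapping sends each node to its
    coarse node (a tuple of the original nodes merged into it).
    """
    matched = {}
    # Greedy matching: visit edges from heaviest to lightest.
    for (u, v), _weight in sorted(edges.items(), key=lambda kv: (-kv[1], kv[0])):
        if u not in matched and v not in matched:
            matched[u] = matched[v] = (u, v)

    nodes = {node for edge in edges for node in edge}
    mapping = {node: matched.get(node, (node,)) for node in nodes}

    coarse_edges = {}
    for (u, v), weight in edges.items():
        cu, cv = mapping[u], mapping[v]
        if cu != cv:  # edges inside a merged pair disappear
            key = (min(cu, cv), max(cu, cv))
            coarse_edges[key] = coarse_edges.get(key, 0) + weight
    return mapping, coarse_edges


# Hypothetical weighted 4-cycle: 1-2 and 3-4 are the heavy edges.
mapping, coarse_edges = coarsen({(1, 2): 5, (2, 3): 1, (3, 4): 4, (1, 4): 1})
```

Collapsing heavy edges first hides them inside coarse nodes, so a partition of the small graph cuts only the light edges that remain.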

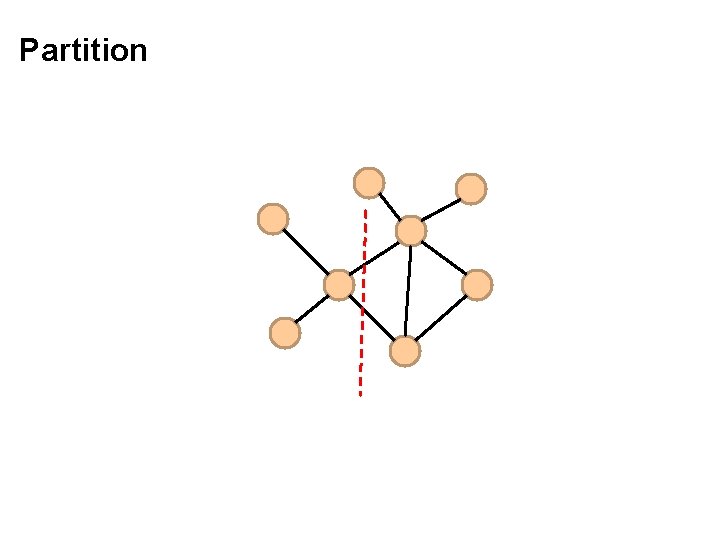

Partition

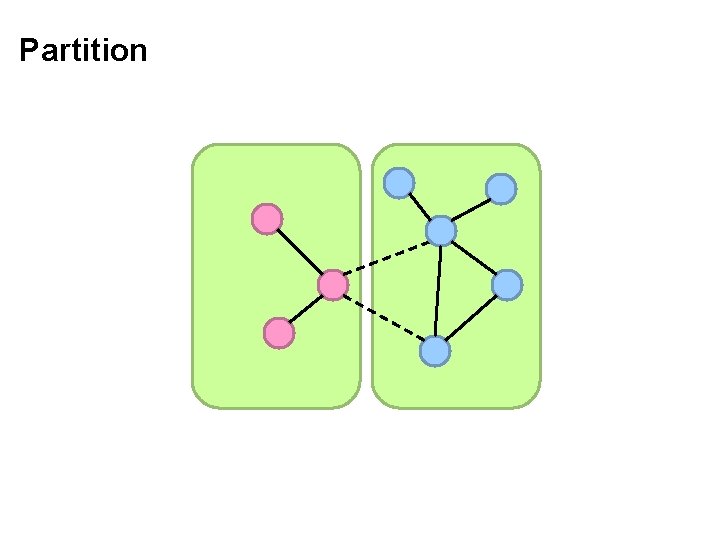

Partition

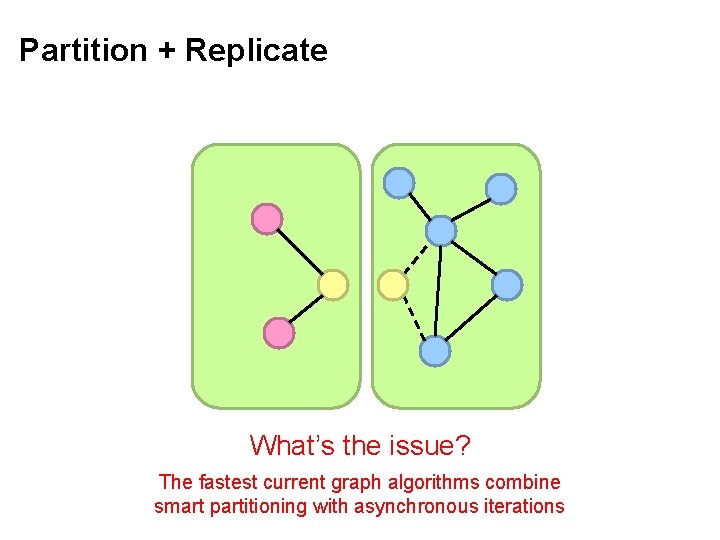

Partition + Replicate: What’s the issue? The fastest current graph algorithms combine smart partitioning with asynchronous iterations.

Graph Processing Frameworks Source: Wikipedia (Waste container)

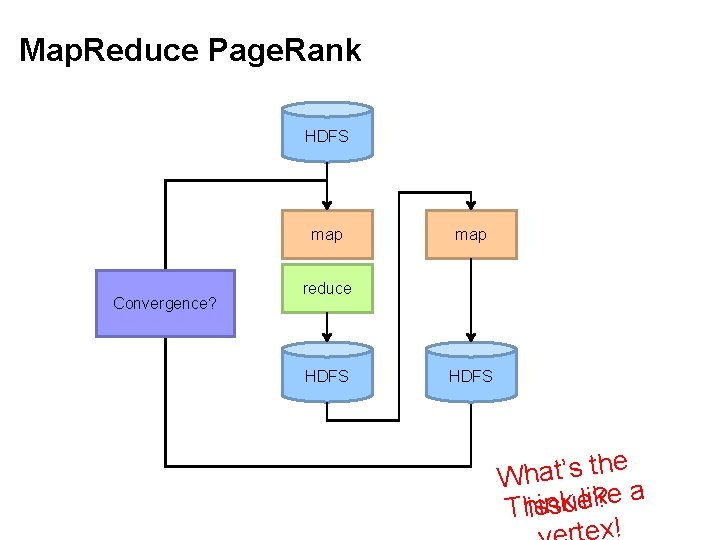

MapReduce PageRank HDFS map Convergence? map reduce HDFS he t s ’ t a h W a e k i l ? e k u n i s h T is x!

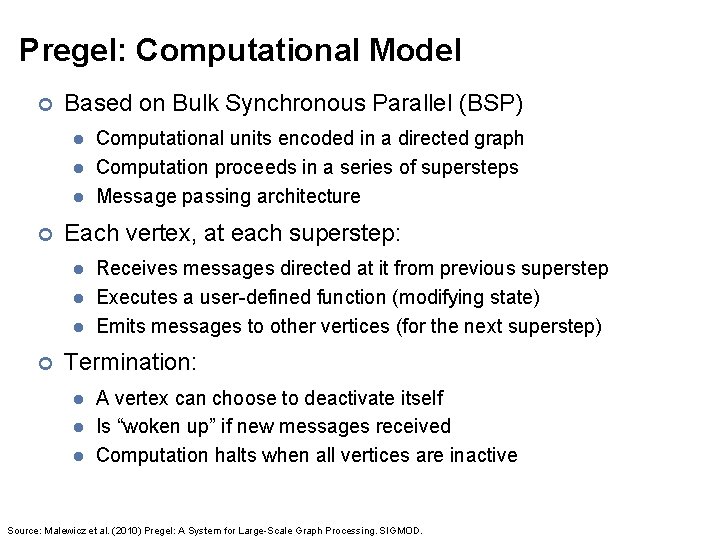

Pregel: Computational Model
- Based on Bulk Synchronous Parallel (BSP)
  - Computational units encoded in a directed graph
  - Computation proceeds in a series of supersteps
  - Message passing architecture
- Each vertex, at each superstep:
  - Receives messages directed at it from previous superstep
  - Executes a user-defined function (modifying state)
  - Emits messages to other vertices (for the next superstep)
- Termination:
  - A vertex can choose to deactivate itself
  - Is “woken up” if new messages received
  - Computation halts when all vertices are inactive

Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.
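The superstep loop described above can be sketched as a tiny single-process simulator. This is an illustration of the model only, not Pregel's implementation: `run_supersteps` and `propagate_max` are hypothetical names, and the example (propagating the maximum value around a three-vertex ring) is made up.

```python
def run_supersteps(values, out_edges, compute, max_supersteps=50):
    inbox = {v: [] for v in values}
    active = set(values)
    superstep = 0
    while superstep < max_supersteps:
        # A halted vertex is woken up if it has received messages.
        to_run = active | {v for v, msgs in inbox.items() if msgs}
        if not to_run:
            break  # all vertices inactive and no messages in flight
        outbox = {v: [] for v in values}
        active = set()
        for v in sorted(to_run):
            new_value, outgoing, halt = compute(superstep, values[v], inbox[v], out_edges[v])
            values[v] = new_value
            for target, msg in outgoing:
                outbox[target].append(msg)
            if not halt:
                active.add(v)
        inbox = outbox  # barrier: messages become visible in the next superstep
        superstep += 1
    return values, superstep


def propagate_max(superstep, value, messages, neighbors):
    best = max([value] + messages)
    changed = superstep == 0 or best > value
    outgoing = [(n, best) for n in neighbors] if changed else []
    return best, outgoing, True  # always vote to halt; new messages reactivate


values, steps = run_supersteps(
    {"a": 3, "b": 6, "c": 2},
    {"a": ["b"], "b": ["c"], "c": ["a"]},
    propagate_max,
)
```

Every vertex votes to halt after each superstep, so the computation ends as soon as no vertex has anything new to report.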

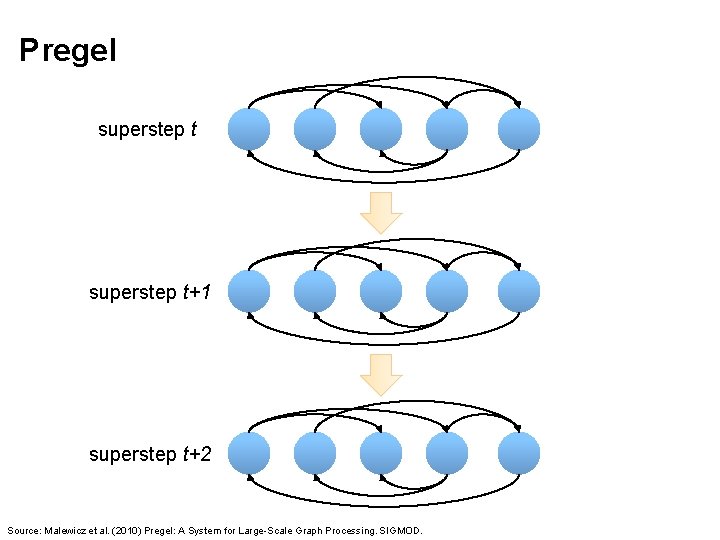

Pregel superstep t+1 superstep t+2 Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.

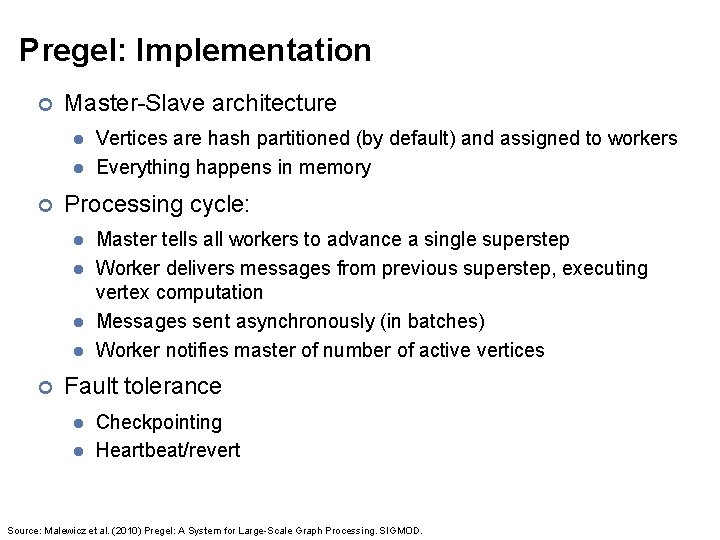

Pregel: Implementation
- Master-Slave architecture
  - Vertices are hash partitioned (by default) and assigned to workers
  - Everything happens in memory
- Processing cycle:
  - Master tells all workers to advance a single superstep
  - Worker delivers messages from previous superstep, executing vertex computation
  - Messages sent asynchronously (in batches)
  - Worker notifies master of number of active vertices
- Fault tolerance
  - Checkpointing
  - Heartbeat/revert

Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.

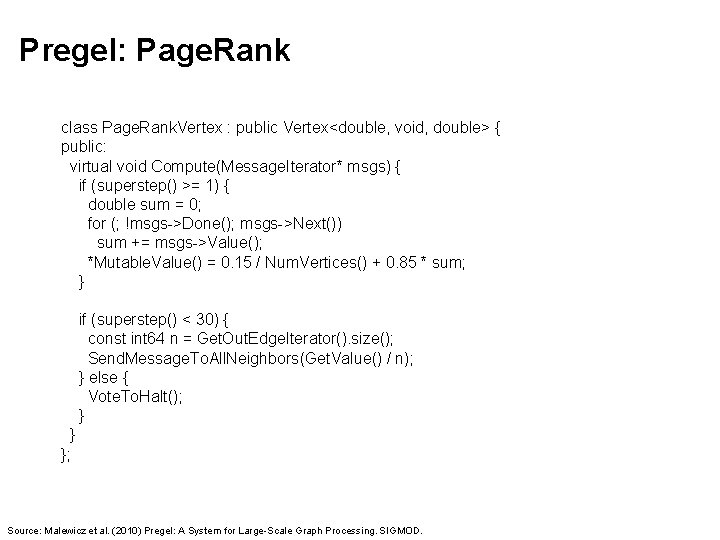

Pregel: PageRank

    class PageRankVertex : public Vertex<double, void, double> {
     public:
      virtual void Compute(MessageIterator* msgs) {
        if (superstep() >= 1) {
          double sum = 0;
          for (; !msgs->Done(); msgs->Next())
            sum += msgs->Value();
          *MutableValue() = 0.15 / NumVertices() + 0.85 * sum;
        }
        if (superstep() < 30) {
          const int64 n = GetOutEdgeIterator().size();
          SendMessageToAllNeighbors(GetValue() / n);
        } else {
          VoteToHalt();
        }
      }
    };

Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.

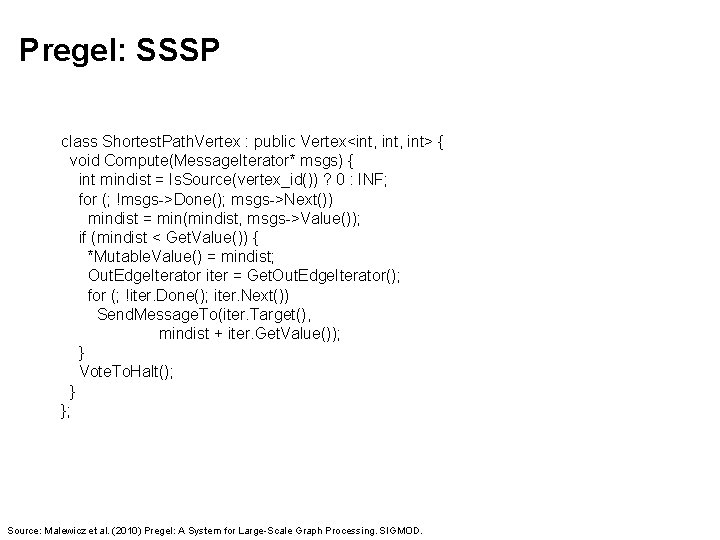

Pregel: SSSP

    class ShortestPathVertex : public Vertex<int, int> {
      void Compute(MessageIterator* msgs) {
        int mindist = IsSource(vertex_id()) ? 0 : INF;
        for (; !msgs->Done(); msgs->Next())
          mindist = min(mindist, msgs->Value());
        if (mindist < GetValue()) {
          *MutableValue() = mindist;
          OutEdgeIterator iter = GetOutEdgeIterator();
          for (; !iter.Done(); iter.Next())
            SendMessageTo(iter.Target(), mindist + iter.GetValue());
        }
        VoteToHalt();
      }
    };

Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.
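To see the vertex program's logic run end to end, here is a small Python simulation of the same idea: each vertex takes the minimum of its incoming distances and, if that improves its value, relaxes its out-edges. It is a sketch rather than a port. One deliberate difference is that the source is seeded with an initial message of 0 instead of checking an `IsSource` predicate, and the graph and function name are hypothetical.

```python
INF = float("inf")


def sssp(edges, source):
    """edges: {u: [(v, weight), ...]}; every vertex must appear as a key."""
    dist = {v: INF for v in edges}
    inbox = {v: [] for v in edges}
    inbox[source].append(0)  # seed the source with distance 0
    while any(inbox.values()):
        outbox = {v: [] for v in edges}
        for v, msgs in inbox.items():
            if not msgs:
                continue  # halted vertex with no messages stays inactive
            mindist = min(msgs)
            if mindist < dist[v]:
                dist[v] = mindist
                for target, weight in edges[v]:
                    outbox[target].append(mindist + weight)
        inbox = outbox  # superstep barrier
    return dist


# Hypothetical weighted graph.
graph = {
    "a": [("b", 1), ("c", 4)],
    "b": [("c", 2)],
    "c": [("d", 1)],
    "d": [],
}
dist = sssp(graph, "a")
```

Vertex c is first reached at distance 4 via a, then improved to 3 via b one superstep later, which in turn triggers a second update at d.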

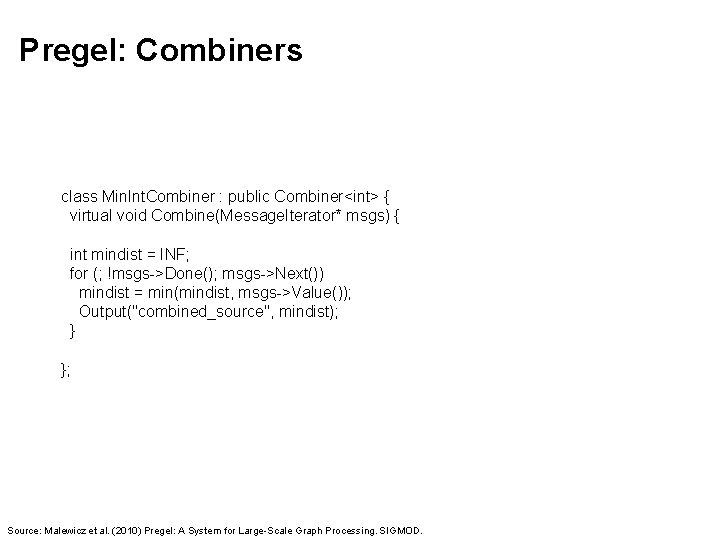

Pregel: Combiners

    class MinIntCombiner : public Combiner<int> {
      virtual void Combine(MessageIterator* msgs) {
        int mindist = INF;
        for (; !msgs->Done(); msgs->Next())
          mindist = min(mindist, msgs->Value());
        Output("combined_source", mindist);
      }
    };

Source: Malewicz et al. (2010) Pregel: A System for Large-Scale Graph Processing. SIGMOD.
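The effect of a min combiner can be shown in a few lines of Python: messages bound for the same vertex are collapsed into one before they leave the worker. The function name and the sample messages are hypothetical.

```python
def combine_min(outgoing):
    """outgoing: list of (target_vertex, distance) messages produced on one worker."""
    combined = {}
    for target, distance in outgoing:
        combined[target] = min(distance, combined.get(target, distance))
    return combined


# Three messages shrink to two: only the smallest distance per target is sent.
combined = combine_min([("c", 4), ("c", 3), ("d", 5)])
```

This is safe for shortest paths because the receiving vertex only ever uses the minimum of its messages.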

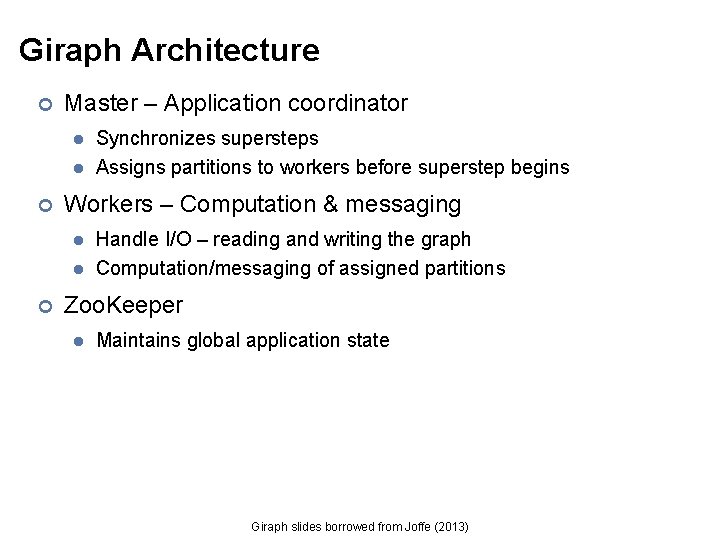

Giraph Architecture
- Master – Application coordinator
  - Synchronizes supersteps
  - Assigns partitions to workers before superstep begins
- Workers – Computation & messaging
  - Handle I/O – reading and writing the graph
  - Computation/messaging of assigned partitions
- ZooKeeper
  - Maintains global application state

Giraph slides borrowed from Joffe (2013)

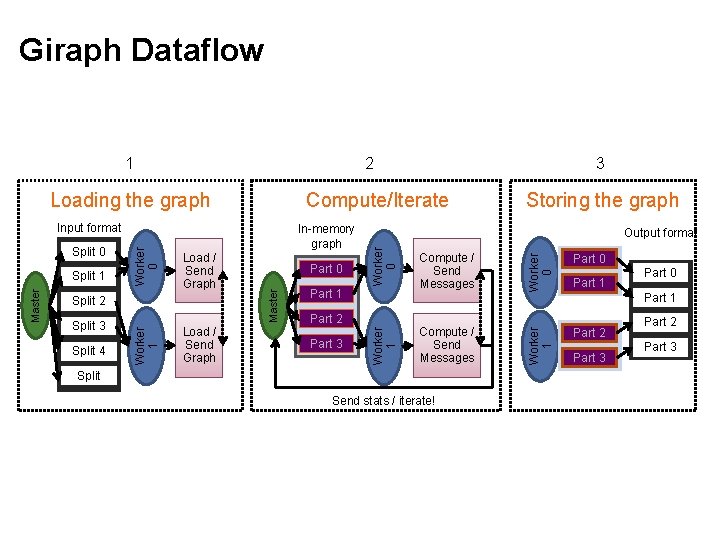

Giraph Dataflow (figure)
1. Loading the graph: the input format is divided into splits (Split 0 to Split 4); the master hands splits to workers (Worker 0, Worker 1), which load the graph and send vertices to their partitions (Load / Send Graph).
2. Compute/Iterate: each worker holds its partitions of the in-memory graph (Part 0 to Part 3), computes and sends messages (Compute / Send Messages), then reports to the master (Send stats / iterate!).
3. Storing the graph: workers write their partitions (Part 0 to Part 3) through the output format.

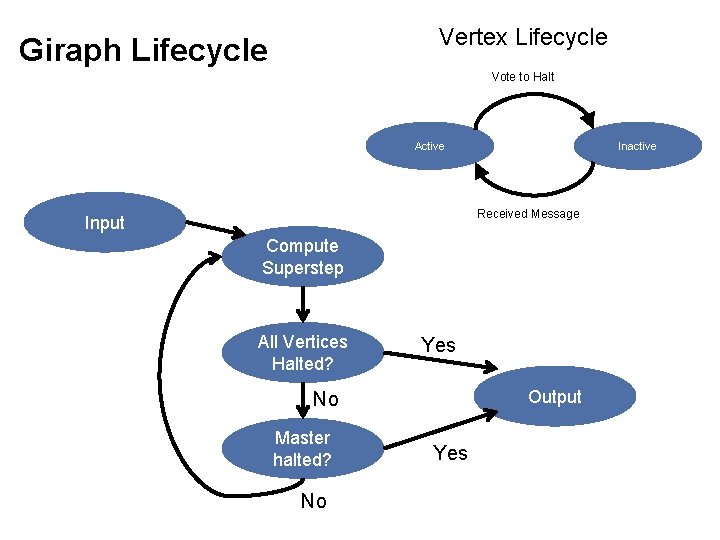

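Vertex Lifecycle (figure): Active becomes Inactive on Vote to Halt; Inactive becomes Active on Received Message.

Giraph Lifecycle (figure): Input, then Compute Superstep, then All Vertices Halted? If no, compute another superstep. If yes, Master halted? If no, compute another superstep. If yes, Output.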

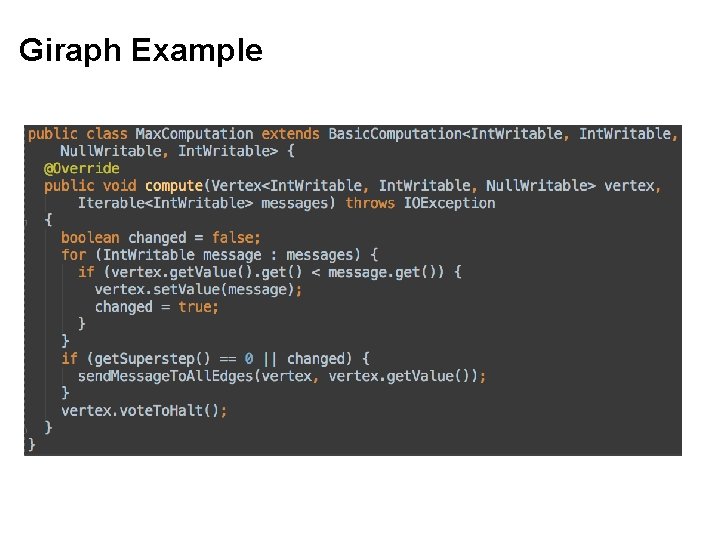

Giraph Example

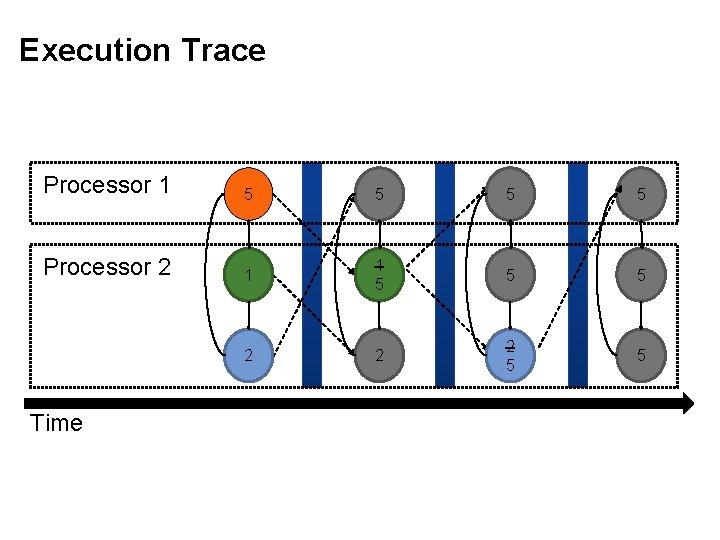

Execution Trace (figure): vertex values held on Processor 1 and Processor 2, plotted against Time.

Graph Processing Frameworks Source: Wikipedia (Waste container)

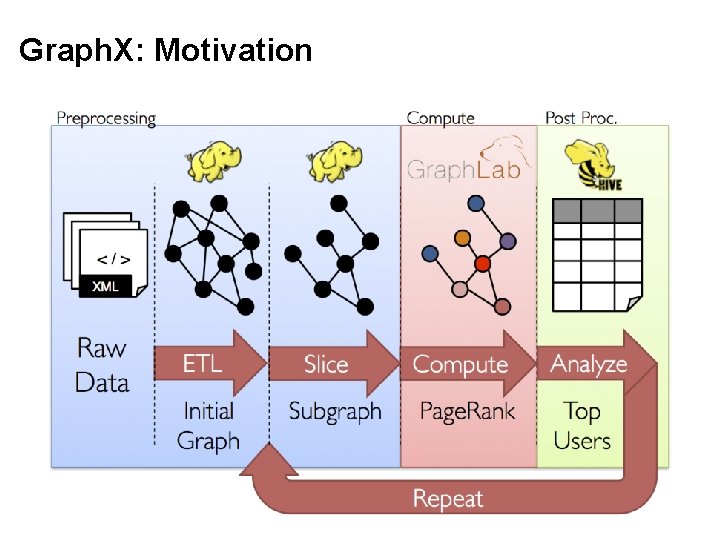

GraphX: Motivation

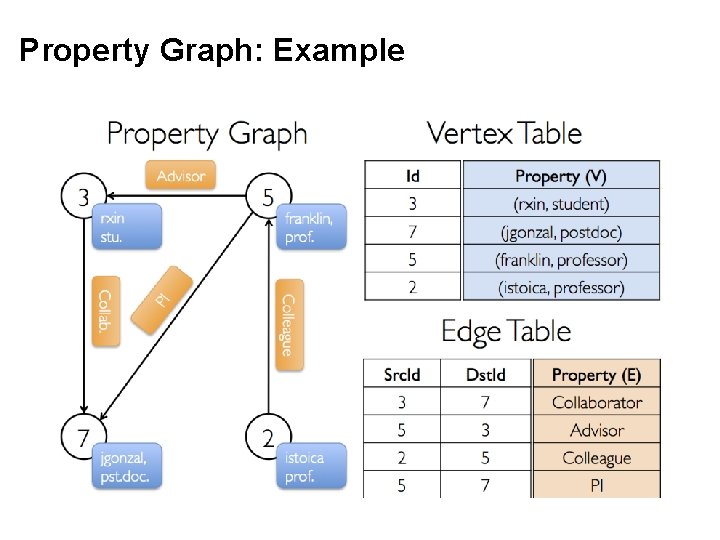

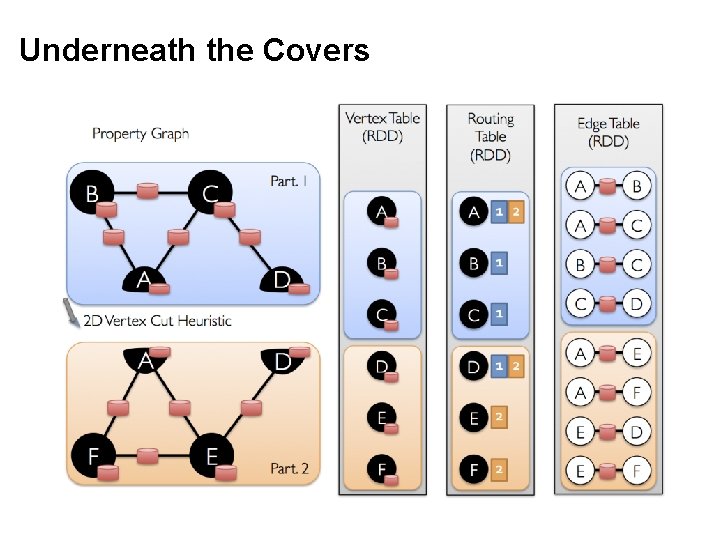

GraphX = Spark for Graphs
- Integration of record-oriented and graph-oriented processing
- Extends RDDs to Resilient Distributed Property Graphs
- Property graphs:
  - Present different views of the graph (vertices, edges, triplets)
  - Support map-like operations
  - Support distributed Pregel-like aggregations
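The three views and a Pregel-like aggregation can be mimicked with ordinary Python collections. This sketch only illustrates the idea of a property graph; it does not use or reproduce the GraphX API, and the helper names `triplets` and `aggregate_messages`, along with the sample data, are hypothetical.

```python
# Vertex view: id -> property. Edge view: (src id, dst id, property).
vertices = {1: "alice", 2: "bob", 3: "carol"}
edges = [(1, 2, "follows"), (3, 2, "follows"), (2, 1, "follows")]


def triplets(vertices, edges):
    # Triplet view: each edge joined with the properties of both endpoints.
    return [((src, vertices[src]), (dst, vertices[dst]), prop) for src, dst, prop in edges]


def aggregate_messages(vertices, edges, send, merge):
    # For every triplet, `send` emits (target id, message) pairs;
    # `merge` combines messages that arrive at the same vertex.
    inbox = {}
    for src, dst, prop in triplets(vertices, edges):
        for target, msg in send(src, dst, prop):
            inbox[target] = merge(inbox[target], msg) if target in inbox else msg
    return inbox


# In-degree: every edge sends a 1 to its destination, and messages are summed.
in_degree = aggregate_messages(
    vertices, edges,
    send=lambda src, dst, prop: [(dst[0], 1)],
    merge=lambda a, b: a + b,
)
```

The same send/merge shape covers one round of PageRank or shortest paths by changing what is sent along each triplet.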

Property Graph: Example

Underneath the Covers

Questions? Source: Wikipedia (Japanese rock garden)
