Big Data Analytics and Image Processing
Divya Spandana Marneni

Agenda
• What is Big Data and image processing
• Why analyze big images
• Complexity involved in processing
• Hadoop image processing framework
• Image retrieval in big data
• Feature extraction
• Applications

What is Big Data
• Huge amounts of data, on the order of terabytes or petabytes.
• Difficult to analyze using traditional data management tools.
• Characterized by Volume, Variety, and Velocity.
• Can be structured, unstructured, or semi-structured.
Some figures
• Facebook ingests 500 TB of new data every day.
• A Boeing 737 generates 240 TB of flight data during a single flight across the US.

Big Data in Image Processing
• 80 percent of big data consists of images and videos.
• Sources
Ø Social networking sites, surveillance cameras, satellite images, web image collections, medical data, drones.
• Big images could mean two things:
Ø A single large image. Ex: giga-pixel and tera-pixel images - http://360gigapixels.com/london-320-gigapixel-panorama/
Ø A dataset with a large number of small images. Ex: the millions of images hosted by sites like Flickr and Instagram.

Why analyze?
• Analyzing the data yields insights.
• Identify patterns and general trends.
• Improve operational efficiency.
• Gain revenue and competitive advantage.
• Predict the future and take the necessary steps.
• Better decision making.
• Scope for new products and services.

Complexity involved in processing
• Requires a lot of computational power, network bandwidth, and storage.
• Complexity also lies in developing efficient algorithms that scale.
• The relations among multimedia data and the underlying topics are more complex.
• Visualizing complex relations is crucial to understanding the implications of the data.
• When the amount of data scales to terabytes or petabytes, processing by traditional methods fails.

Approaches in processing big images
Programming constructs
• Streaming
• Block processing
• Parallel for-loops
• GPU arrays
• Distributed arrays
• MapReduce
Platforms
• Desktop (multicore, GPU)
• Clusters
• Cloud computing
• Hadoop
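As a rough illustration of the block processing construct, the sketch below walks over an image tile by tile, so only one tile has to be examined at a time. The image here is a plain nested list of grayscale values, and `iter_blocks` and `block_means` are hypothetical helper names rather than functions from any library.

```python
def iter_blocks(image, block_h, block_w):
    """Yield (top, left, block) for each tile of a 2-D list of pixel values."""
    height = len(image)
    width = len(image[0]) if height else 0
    for top in range(0, height, block_h):
        for left in range(0, width, block_w):
            # Tiles on the right and bottom edges may be smaller than requested.
            block = [row[left:left + block_w] for row in image[top:top + block_h]]
            yield top, left, block


def block_means(image, block_h, block_w):
    """Compute the mean intensity of every tile, keyed by its top-left corner."""
    means = {}
    for top, left, block in iter_blocks(image, block_h, block_w):
        pixels = [value for row in block for value in row]
        means[(top, left)] = sum(pixels) / len(pixels)
    return means


# A tiny 4x4 "image" stands in for a very large file read piece by piece.
image = [
    [0, 0, 10, 10],
    [0, 0, 10, 10],
    [20, 20, 30, 30],
    [20, 20, 30, 30],
]
result = block_means(image, 2, 2)
```

Because each tile is handled independently, the same loop body could in principle be handed to parallel workers, which is the idea behind the parallel for-loop and MapReduce constructs in the list.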

Hadoop
• This framework provides a platform for:
Ø Intensive data processing
Ø Distributed storage
• Ability to store and process huge amounts of any kind of data, quickly.
• Computing power
• Fault tolerance
• Flexibility
• Low cost
• Scalability

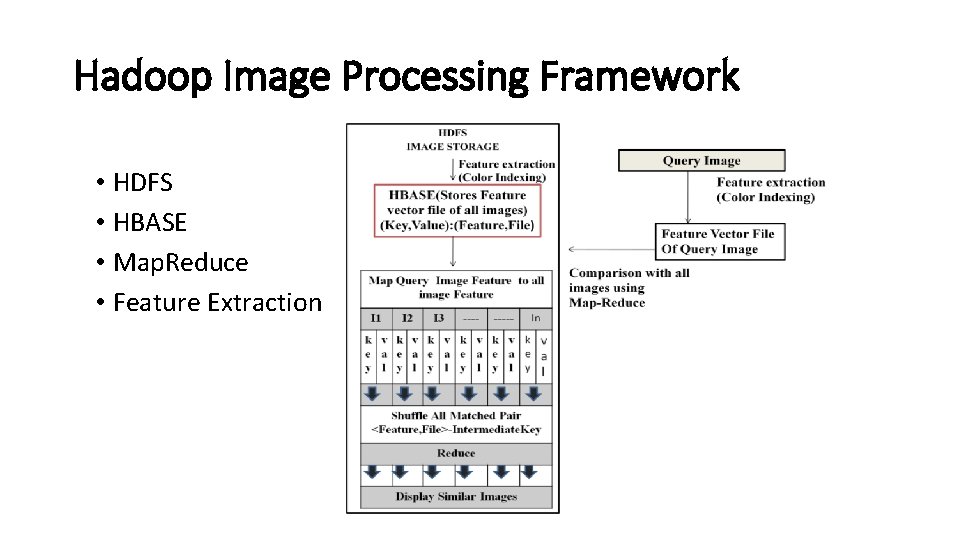

Hadoop Image Processing Framework
• HDFS
• HBase
• MapReduce
• Feature extraction

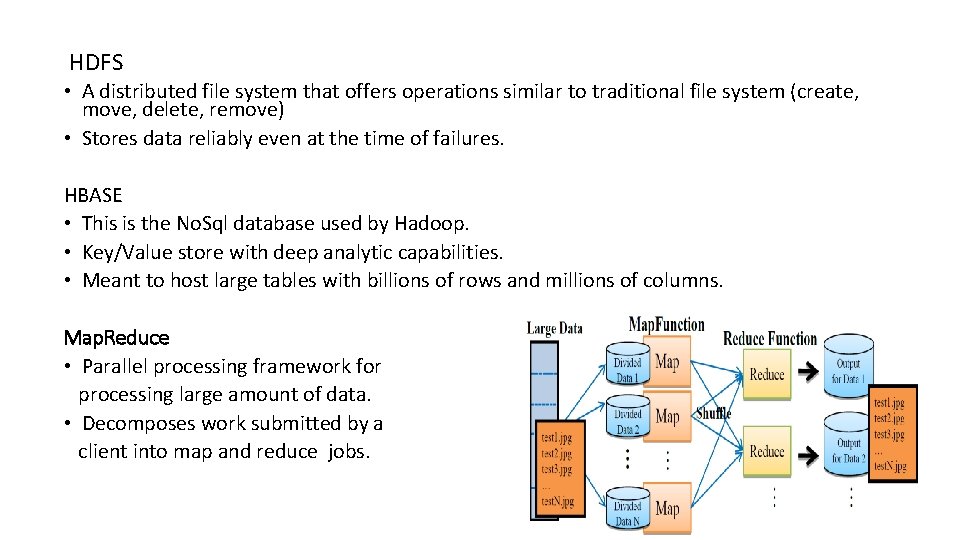

HDFS
• A distributed file system that offers operations similar to a traditional file system (create, move, delete, remove).
• Stores data reliably even in the event of failures.
HBase
• The NoSQL database used by Hadoop.
• Key/value store with deep analytic capabilities.
• Meant to host large tables with billions of rows and millions of columns.
MapReduce
• Parallel processing framework for processing large amounts of data.
• Decomposes a job submitted by a client into map and reduce tasks.

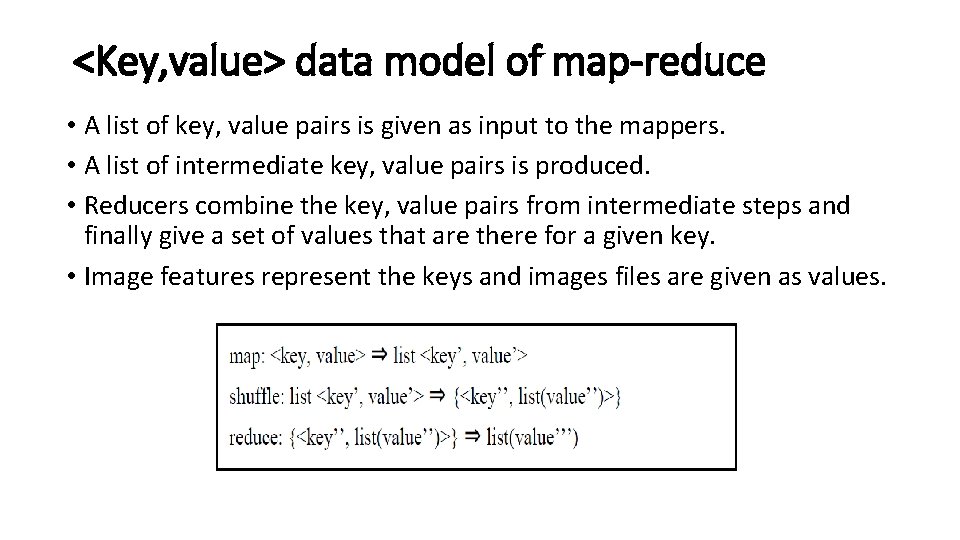

<Key, value> data model of MapReduce
• A list of <key, value> pairs is given as input to the mappers.
• The mappers produce a list of intermediate <key, value> pairs.
• Reducers combine the intermediate <key, value> pairs and output the set of values associated with each key.
• Image features serve as the keys and image files as the values.
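The <key, value> flow can be imitated in a few lines of plain Python. This is only an in-memory sketch of the data model, not the Hadoop API. The function names, the file names, and the color labels used as features are all made up for illustration.

```python
from collections import defaultdict


def map_phase(records, mapper):
    """Apply the mapper to every input (key, value) pair and collect its output pairs."""
    intermediate = []
    for key, value in records:
        intermediate.extend(mapper(key, value))
    return intermediate


def shuffle(intermediate):
    """Group intermediate values by key, as happens between map and reduce."""
    groups = defaultdict(list)
    for key, value in intermediate:
        groups[key].append(value)
    return groups


def reduce_phase(groups, reducer):
    """Call the reducer once per key with all values gathered for that key."""
    return {key: reducer(key, values) for key, values in sorted(groups.items())}


def feature_mapper(file_name, feature):
    # Emit the image feature as the key and the image file as the value.
    return [(feature, file_name)]


def collect_reducer(feature, file_names):
    # Return every file that shares this feature.
    return sorted(file_names)


# Hypothetical input: (file name, dominant color label) pairs.
records = [("a.jpg", "red"), ("b.jpg", "blue"), ("c.jpg", "red")]
index = reduce_phase(shuffle(map_phase(records, feature_mapper)), collect_reducer)
```

Here `index` maps each feature to the list of image files that carry it, which mirrors the last bullet above.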

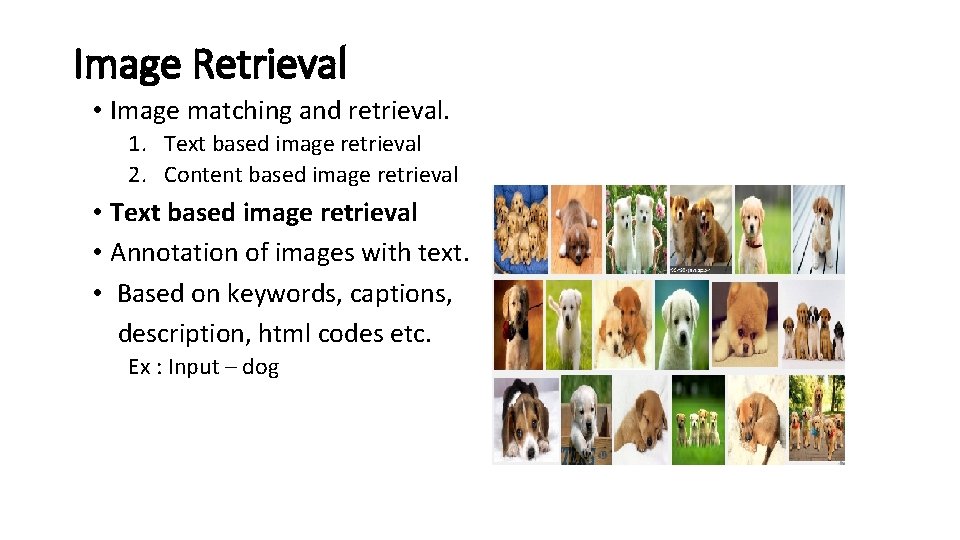

Image Retrieval
• Image matching and retrieval.
1. Text-based image retrieval
2. Content-based image retrieval
• Text-based image retrieval
Ø Annotation of images with text.
Ø Based on keywords, captions, descriptions, HTML code, etc.
Ex: Input – dog
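One simple way to picture text-based retrieval is a keyword index built from the annotations. The sketch below assumes each image has a short caption. The captions, file names, and the helpers `build_keyword_index` and `search` are invented for illustration.

```python
from collections import defaultdict


def build_keyword_index(annotations):
    """Map every lower-cased word of a caption to the set of images that use it."""
    index = defaultdict(set)
    for file_name, text in annotations.items():
        for word in text.lower().split():
            index[word].add(file_name)
    return index


def search(index, query):
    """Return the images whose captions contain every word of the query."""
    words = query.lower().split()
    if not words:
        return []
    matches = set(index.get(words[0], set()))
    for word in words[1:]:
        matches &= index.get(word, set())
    return sorted(matches)


# Hypothetical annotations attached to three images.
annotations = {
    "img1.jpg": "brown dog running on grass",
    "img2.jpg": "cat sleeping on sofa",
    "img3.jpg": "dog and cat playing",
}
index = build_keyword_index(annotations)
hits = search(index, "dog")
```

The quality of the result depends entirely on the annotations, which is the limitation that content-based retrieval tries to avoid.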

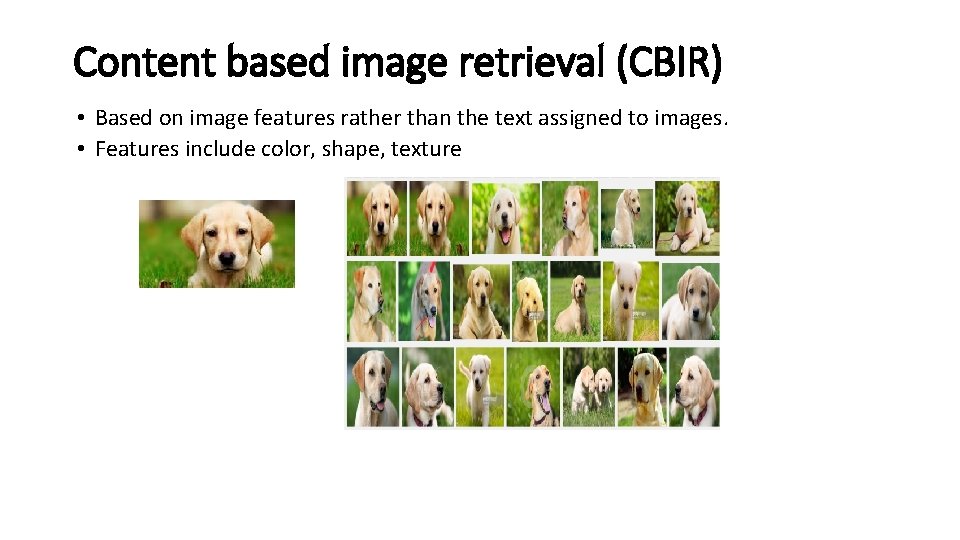

Content-based image retrieval (CBIR)
• Based on image features rather than the text assigned to images.
• Features include color, shape, and texture.

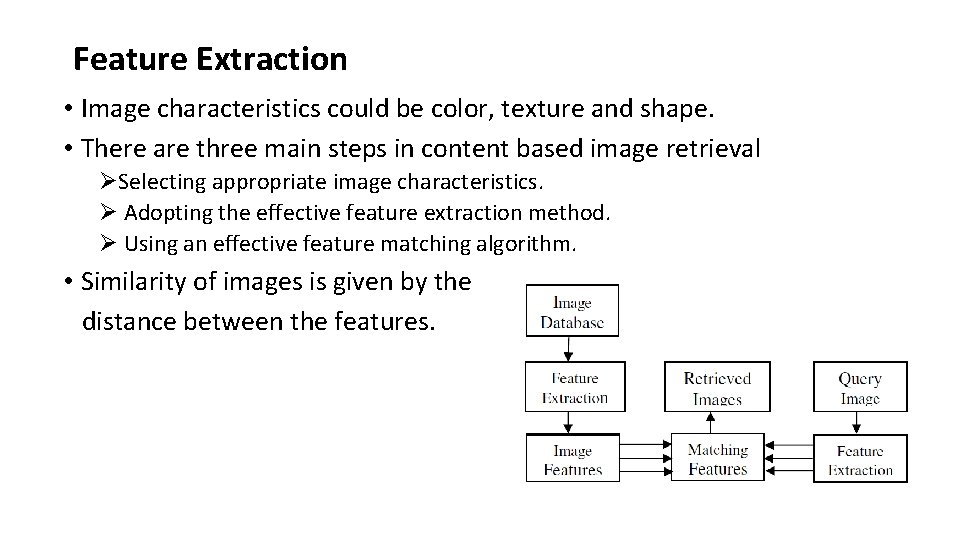

Feature Extraction
• Image characteristics could be color, texture, and shape.
• There are three main steps in content-based image retrieval:
Ø Selecting appropriate image characteristics.
Ø Adopting an effective feature extraction method.
Ø Using an effective feature matching algorithm.
• The similarity of images is given by the distance between their features.

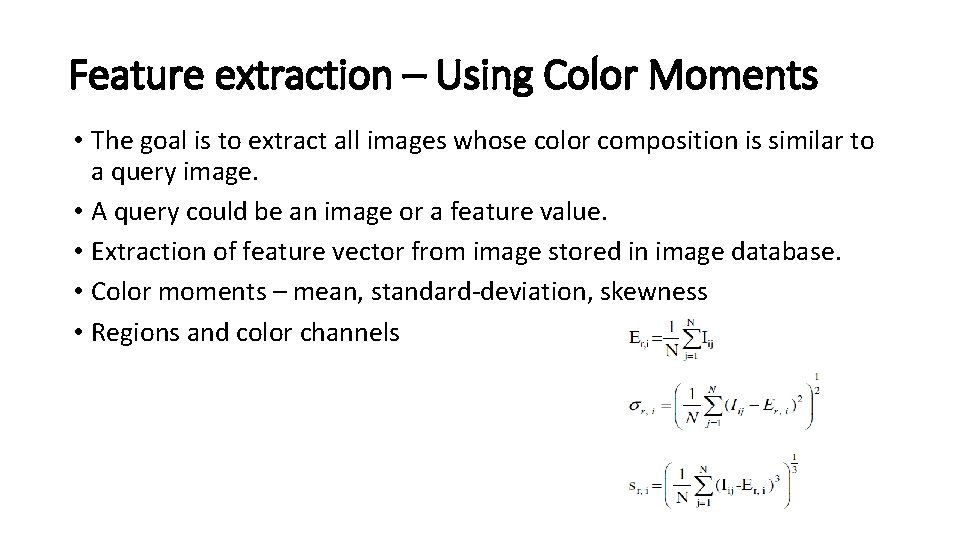

Feature extraction – Using Color Moments
• The goal is to retrieve all images whose color composition is similar to that of a query image.
• A query could be an image or a feature value.
• A feature vector is extracted from each image stored in the image database.
• Color moments: mean, standard deviation, skewness.
• Moments are computed over regions and color channels.
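The three color moments can be computed per channel as sketched below. The sketch assumes the usual definitions (mean, square root of the second central moment, cube root of the third central moment). `color_moments` is a hypothetical helper, and pixels are plain three-value tuples.

```python
import math


def color_moments(pixels):
    """Return one (mean, std, skewness) tuple per channel for a list of 3-value pixels."""
    n = len(pixels)
    moments = []
    for channel in zip(*pixels):  # regroup the pixel tuples into per-channel sequences
        mean = sum(channel) / n
        variance = sum((v - mean) ** 2 for v in channel) / n
        third = sum((v - mean) ** 3 for v in channel) / n
        std = math.sqrt(variance)
        # Signed cube root, so a negative third moment gives negative skewness.
        skew = math.copysign(abs(third) ** (1.0 / 3.0), third)
        moments.append((mean, std, skew))
    return moments


# Four made-up pixels; the channel order is whatever the color space defines.
pixels = [(0, 10, 5), (0, 20, 5), (0, 30, 5), (8, 20, 5)]
moments = color_moments(pixels)
```

With three channels this gives nine numbers per image or region, which is what makes color moments a compact feature.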

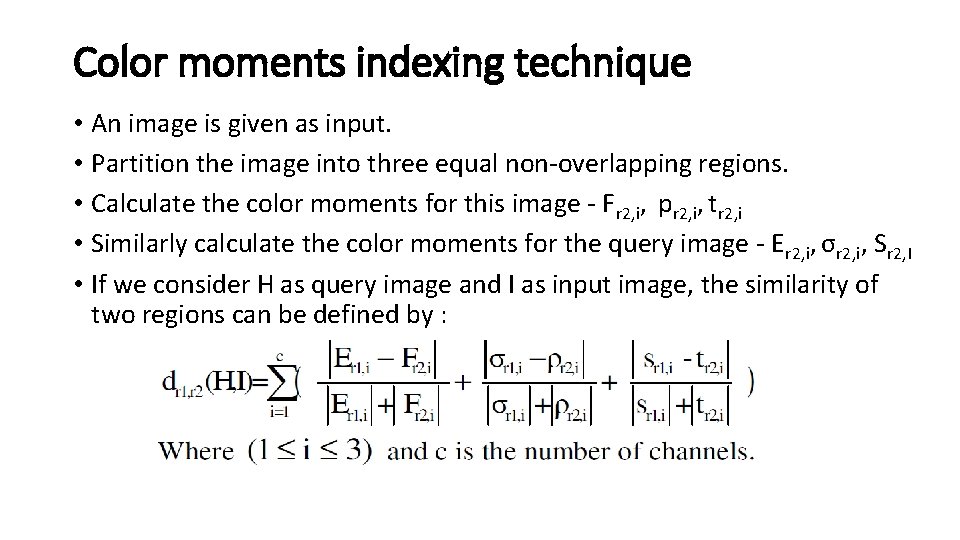

Color moments indexing technique
• An image is given as input.
• Partition the image into three equal non-overlapping regions.
• Calculate the color moments (mean, standard deviation, skewness) for this image: $F_{r2,i}$, $p_{r2,i}$, $t_{r2,i}$
• Similarly, calculate the color moments for the query image: $E_{r2,i}$, $\sigma_{r2,i}$, $S_{r2,i}$
• If we consider H as the query image and I as the input image, the similarity of two regions can be defined by:
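A possible reading of the region-based comparison is sketched below, under several assumptions that are not confirmed by the slide: the three regions are taken as horizontal bands, the region distance is a weighted sum of absolute differences of the three moments over all channels, all weights default to 1, and the image distance is the sum over corresponding regions. All function names are hypothetical.

```python
def channel_moments(values):
    """Mean, standard deviation, and skewness (signed cube root of the third moment)."""
    n = len(values)
    mean = sum(values) / n
    std = (sum((v - mean) ** 2 for v in values) / n) ** 0.5
    third = sum((v - mean) ** 3 for v in values) / n
    skew = abs(third) ** (1.0 / 3.0) * (1.0 if third >= 0 else -1.0)
    return (mean, std, skew)


def region_moments(image):
    """Split the rows into three horizontal bands; return moments[region][channel]."""
    height = len(image)  # assumption: the image has at least three rows
    bounds = [height * k // 3 for k in range(4)]
    result = []
    for k in range(3):
        pixels = [p for row in image[bounds[k]:bounds[k + 1]] for p in row]
        result.append([channel_moments(ch) for ch in zip(*pixels)])
    return result


def region_distance(region_h, region_i, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of absolute moment differences over all color channels."""
    total = 0.0
    for moments_h, moments_i in zip(region_h, region_i):
        for w, a, b in zip(weights, moments_h, moments_i):
            total += w * abs(a - b)
    return total


def image_distance(image_h, image_i, weights=(1.0, 1.0, 1.0)):
    """Sum the distances of corresponding regions (an assumed combination rule)."""
    pairs = zip(region_moments(image_h), region_moments(image_i))
    return sum(region_distance(rh, ri, weights) for rh, ri in pairs)


# Two tiny 3x2 images: one with a red, green, and blue band, one all black.
query = [
    [(255, 0, 0), (255, 0, 0)],
    [(0, 255, 0), (0, 255, 0)],
    [(0, 0, 255), (0, 0, 255)],
]
black = [[(0, 0, 0), (0, 0, 0)] for _ in range(3)]
same = image_distance(query, query)
different = image_distance(query, black)
```

A smaller value means the two images are more alike, so `same` is zero and `different` is positive. The weights let one moment or channel count more than another.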

Color moments technique - continued
• The total similarity between two images H and I is given by:
• The color feature vectors for the query image and the input image are given by:
Ø Query image –
Ø Input image –
• The distance between these features is given by the Canberra distance:
• We calculate the color moments for all images in the dataset and apply the above formula to get the similarity values “d”.
• Store them in an array.
• The array is sorted in ascending order, and the first element of d corresponds to the most similar image.
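The ranking step can be sketched as follows, using the standard definition of the Canberra distance, the sum over components of |x_k - y_k| / (|x_k| + |y_k|). The feature vectors and file names are made up, and terms where both components are zero are skipped by convention.

```python
def canberra_distance(x, y):
    """Canberra distance between two equal-length feature vectors."""
    total = 0.0
    for a, b in zip(x, y):
        denominator = abs(a) + abs(b)
        if denominator:  # a 0/0 term contributes nothing
            total += abs(a - b) / denominator
    return total


def rank_by_similarity(query_vector, dataset):
    """Return (distance, file name) pairs sorted so the most similar image comes first."""
    d = [(canberra_distance(query_vector, vector), name)
         for name, vector in dataset.items()]
    d.sort()  # ascending order: smallest distance first
    return d


# Hypothetical feature vectors for a query and three stored images.
query = [1.0, 2.0, 3.0]
dataset = {
    "near.jpg": [1.0, 2.0, 4.0],
    "same.jpg": [1.0, 2.0, 3.0],
    "far.jpg": [3.0, 0.0, 9.0],
}
ranking = rank_by_similarity(query, dataset)
```

Each term of the Canberra distance is normalized by the magnitude of its components, so features on very different scales (for example a mean and a skewness value) contribute comparably.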

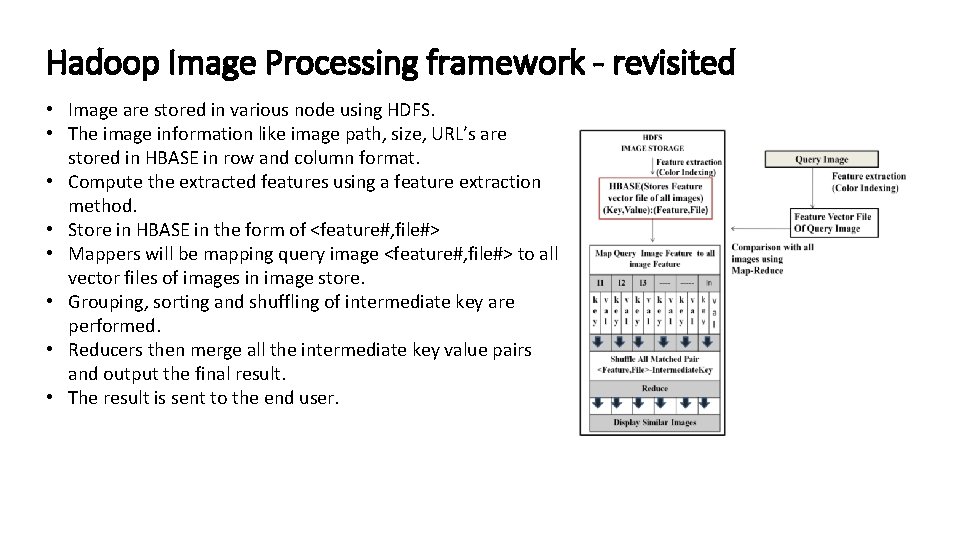

Hadoop Image Processing framework - revisited
• Images are stored on various nodes using HDFS.
• Image information such as image path, size, and URLs is stored in HBase in row and column format.
• Compute the features using a feature extraction method.
• Store them in HBase in the form <feature#, file#>.
• Mappers match the query image's <feature#, file#> against the feature vectors of all images in the image store.
• Grouping, sorting, and shuffling of intermediate keys are performed.
• Reducers then merge all the intermediate key-value pairs and output the final result.
• The result is sent to the end user.
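The retrieval flow can be imitated in plain Python to show how the work divides between mappers and a reducer. This is a single-process sketch, not Hadoop or HBase code. The splits stand in for feature records spread over nodes, and the function names, file identifiers, and the `top_k` parameter are assumptions made for illustration.

```python
def canberra(x, y):
    """Canberra distance; terms where both components are zero are skipped."""
    return sum(abs(a - b) / (abs(a) + abs(b)) for a, b in zip(x, y) if abs(a) + abs(b))


def mapper(query_vector, split):
    """Emit one (distance, file id) pair per stored feature vector in this split."""
    for file_id, vector in split:
        yield canberra(query_vector, vector), file_id


def reducer(pairs, top_k):
    """Merge the intermediate pairs, sort by distance, and keep the best matches."""
    return sorted(pairs)[:top_k]


# Hypothetical feature store, already divided into two splits (one per mapper).
splits = [
    [("img1", [10.0, 2.0]), ("img2", [50.0, 9.0])],
    [("img3", [11.0, 2.0]), ("img4", [10.0, 2.0])],
]
query_vector = [10.0, 2.0]

intermediate = []
for split in splits:  # on a cluster, each split would be handled by its own mapper
    intermediate.extend(mapper(query_vector, split))

result = reducer(intermediate, top_k=2)
```

Each mapper only needs the query vector and its own split, so the distance computations can run in parallel without communication until the merge step.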

Applications
• Searching and browsing large image and video archives. Ex: Google and Yahoo
• Photo and video sharing. Ex: YouTube and Flickr
• Image retrieval in the healthcare domain to assist decision making. Ex: optical biopsy system images
• Processing of pictures from surveillance cameras for face detection.

References
• R. Datta, D. Joshi, J. Li, J. Z. Wang, "Image retrieval: ideas, influences, and trends of the new age", ACM Computing Surveys 40(2), 2008, pp. 1-60.
• S. Mangijao Singh, K. Hemachandran, "Content-Based Image Retrieval using Color Moment and Gabor Texture Feature", IJCSI International Journal of Computer Science Issues, Vol. 9, Issue 5, No 1, September 2012, ISSN (Online): 1694-0814.
• Christopher Crick, Sridhar Vemula, "Hadoop Image Processing Framework".
• Seyyed Mojtaba Banaei, Hossein Kardan Moghaddam, "Hadoop and Its Role in Modern Image Processing".
• YAO Qing-An, ZHENG Hong, XU Zhong-Yu, WU Qiong, LI Zi-Wei, and Yun Lifen, "Massive Medical Images Retrieval System Based on Hadoop".

Thank you.