CS 489/698 Big Data Infrastructure (Winter 2016)
Week 3: From MapReduce to Spark (2/2)
January 21, 2016

Jimmy Lin
David R. Cheriton School of Computer Science
University of Waterloo

These slides are available at http://lintool.github.io/bigdata-2016w/

This work is licensed under a Creative Commons Attribution-Noncommercial-Share Alike 3.0 United States license. See http://creativecommons.org/licenses/by-nc-sa/3.0/us/ for details.

The datacenter is the computer! What’s the instruction set? Source: Google

![MapReduce](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-3.jpg)

MapReduce
Input: List[(K1, V1)]
map f: (K1, V1) ⇒ List[(K2, V2)]
Intermediate: List[(K2, V2)]
reduce g: (K2, Iterable[V2]) ⇒ List[(K3, V3)]
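The two signatures above can be sketched in a few lines of plain Python. This is a single-process illustration only, not how Hadoop executes a job; the names `map_reduce`, `wc_map`, and `wc_reduce` are made up for this example.

```python
from collections import defaultdict


def map_reduce(records, f, g):
    """Apply map function f, group values by key, then apply reduce function g."""
    # Map phase: each (k1, v1) record yields a list of (k2, v2) pairs.
    intermediate = [pair for k1, v1 in records for pair in f(k1, v1)]

    # Shuffle phase: bring together all values that share the same k2.
    groups = defaultdict(list)
    for k2, v2 in intermediate:
        groups[k2].append(v2)

    # Reduce phase: keys are visited in sorted order; each yields (k3, v3) pairs.
    return [out for k2 in sorted(groups) for out in g(k2, groups[k2])]


def wc_map(doc_id, text):
    # f: (K1, V1) => List[(K2, V2)]
    return [(word, 1) for word in text.split()]


def wc_reduce(word, counts):
    # g: (K2, Iterable[V2]) => List[(K3, V3)]
    return [(word, sum(counts))]


docs = [(1, "a b a"), (2, "b c")]
word_counts = map_reduce(docs, wc_map, wc_reduce)
```

The only "instructions" the programmer supplies are `f` and `g`; grouping and ordering belong to the framework.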

![Spark](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-4.jpg)

Spark
- map: RDD[T] ⇒ RDD[U], with f: (T) ⇒ U
- filter: RDD[T] ⇒ RDD[T], with f: (T) ⇒ Boolean
- flatMap: RDD[T] ⇒ RDD[U], with f: (T) ⇒ TraversableOnce[U]
- mapPartitions: RDD[T] ⇒ RDD[U], with f: (Iterator[T]) ⇒ Iterator[U]
- groupByKey: RDD[(K, V)] ⇒ RDD[(K, Iterable[V])]
- reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
- aggregateByKey: RDD[(K, V)] ⇒ RDD[(K, U)], with seqOp: (U, V) ⇒ U, combOp: (U, U) ⇒ U
- sort: RDD[(K, V)] ⇒ RDD[(K, V)]
- join: RDD[(K, V)], RDD[(K, W)] ⇒ RDD[(K, (V, W))]
- cogroup: RDD[(K, V)], RDD[(K, W)] ⇒ RDD[(K, (Iterable[V], Iterable[W]))]

And more!

What’s an RDD?
Resilient Distributed Dataset (RDD) = immutable = partitioned
Wait, so how do you actually do anything?
Developers define transformations on RDDs
Framework keeps track of lineage

Spark Word Count

    val textFile = sc.textFile(args.input())
    textFile
      .flatMap(line => tokenize(line))
      .map(word => (word, 1))
      .reduceByKey(_ + _)
      .saveAsTextFile(args.output())

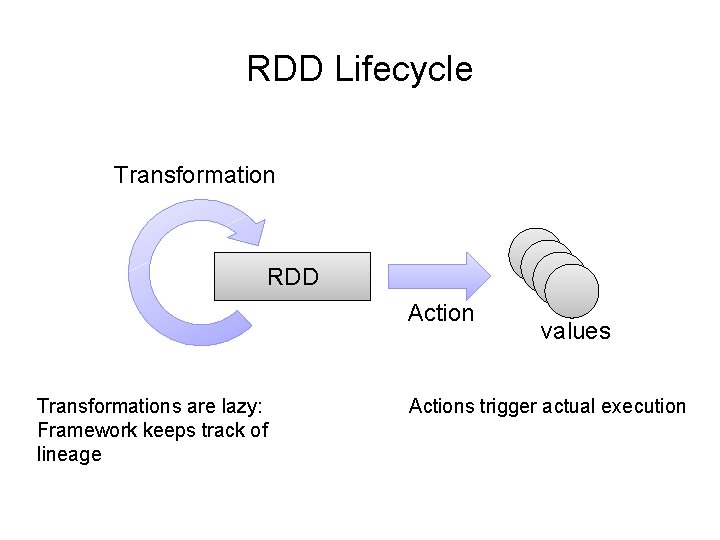

RDD Lifecycle
RDD → Transformation → RDD → Action → values
Transformations are lazy: framework keeps track of lineage
Actions trigger actual execution
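A toy model of this lifecycle, in plain Python rather than Spark: transformations only extend a recorded lineage, and nothing runs until an action is called. `LazyDataset` and `noisy_upper` are hypothetical names for this sketch and are not part of any Spark API.

```python
class LazyDataset:
    """Toy stand-in for an RDD: immutable, records lineage, computes only on an action."""

    def __init__(self, source, lineage=()):
        self._source = source          # zero-argument function that produces an iterator
        self.lineage = tuple(lineage)  # names of the steps that lead to this dataset

    # Transformations: each returns a new dataset and executes nothing.
    def map(self, f):
        return LazyDataset(lambda: (f(x) for x in self._source()),
                           self.lineage + ("map",))

    def filter(self, p):
        return LazyDataset(lambda: (x for x in self._source() if p(x)),
                           self.lineage + ("filter",))

    def flat_map(self, f):
        return LazyDataset(lambda: (y for x in self._source() for y in f(x)),
                           self.lineage + ("flat_map",))

    # Action: triggers actual execution by replaying the lineage.
    def collect(self):
        return list(self._source())


calls = []  # records when the mapped function actually runs


def noisy_upper(word):
    calls.append(word)
    return word.upper()


lines = LazyDataset(lambda: iter(["a b", "c"]), lineage=("source",))
words = lines.flat_map(lambda line: line.split(" ")).map(noisy_upper)

calls_before_action = len(calls)  # nothing has executed yet
result = words.collect()          # the action forces evaluation
```

Because `words` only holds a recipe, the framework is free to decide how and when to run it.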

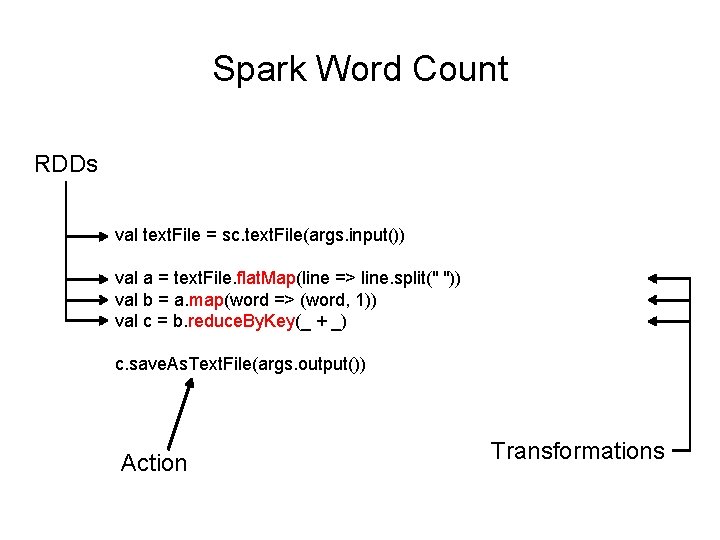

Spark Word Count

    val textFile = sc.textFile(args.input())           // RDD
    val a = textFile.flatMap(line => line.split(" "))  // RDD (transformation)
    val b = a.map(word => (word, 1))                   // RDD (transformation)
    val c = b.reduceByKey(_ + _)                       // RDD (transformation)
    c.saveAsTextFile(args.output())                    // action

![RDDs and Lineage](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-9.jpg)

RDDs and Lineage

    textFile: RDD[String]        (on HDFS)
      .flatMap(line => line.split(" "))
    a: RDD[String]
      .map(word => (word, 1))
    b: RDD[(String, Int)]
      .reduceByKey(_ + _)
    c: RDD[(String, Int)]
      Action!

Remember, transformations are lazy!

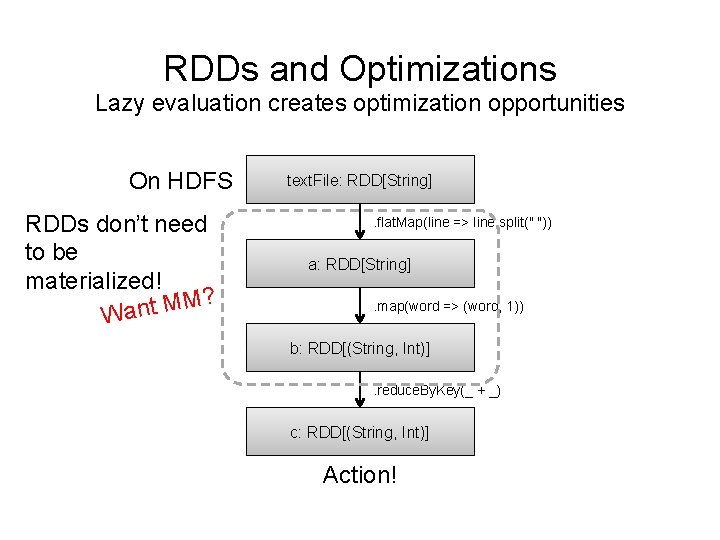

RDDs and Optimizations
Lazy evaluation creates optimization opportunities
RDDs don’t need to be materialized!
Want MM?

    textFile: RDD[String]        (on HDFS)
      .flatMap(line => line.split(" "))
    a: RDD[String]
      .map(word => (word, 1))
    b: RDD[(String, Int)]
      .reduceByKey(_ + _)
    c: RDD[(String, Int)]
      Action!
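One way to see why intermediate RDDs need not be materialized: when steps are chained as generators, each record flows through every step before the next record is read. The sketch below is plain Python and only an analogy for pipelining; `flat_map`, `map_each`, `split`, and `pair` are names chosen for this example.

```python
def flat_map(f, items):
    for x in items:
        for y in f(x):
            yield y


def map_each(f, items):
    for x in items:
        yield f(x)


trace = []  # order in which the user functions actually run


def split(line):
    trace.append(("split", line))
    return line.split(" ")


def pair(word):
    trace.append(("pair", word))
    return (word, 1)


lines = ["a b", "c"]
# No list of words is ever built; the two steps are interleaved record by record.
pairs = list(map_each(pair, flat_map(split, iter(lines))))
```

The trace shows the second line is split only after the words of the first line have already been paired.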

![RDDs and Caching](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-11.jpg)

RDDs and Caching
RDDs can be materialized in memory!

    textFile: RDD[String] ✗      (on HDFS)
      .flatMap(line => line.split(" "))
    a: RDD[String]               ← Cache it!
      .map(word => (word, 1))
    b: RDD[(String, Int)]
      .reduceByKey(_ + _)
    c: RDD[(String, Int)]
      Action!

Fault tolerance?
Spark works even if the RDDs are partially …
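A rough sketch of the caching idea and its fault-tolerance story, in plain Python: a cached dataset is served from memory, and if the in-memory copy is lost it is rebuilt from its lineage. `CachedStage` and `evict` are hypothetical names for illustration, not Spark calls.

```python
class CachedStage:
    """Toy model of a cached RDD: keeps results in memory, recomputes from lineage if lost."""

    def __init__(self, compute):
        self._compute = compute  # the lineage: how to rebuild the data from its source
        self._cache = None       # in-memory copy, filled on first use
        self.runs = 0            # how many times the lineage was actually replayed

    def get(self):
        if self._cache is None:
            self.runs += 1
            self._cache = list(self._compute())
        return self._cache

    def evict(self):
        """Simulate losing the in-memory copy (for example, a failed worker)."""
        self._cache = None


lines = ["a b", "c"]
a = CachedStage(lambda: (w for line in lines for w in line.split(" ")))

first = a.get()
second = a.get()
runs_while_cached = a.runs  # the second read did not recompute anything

a.evict()
third = a.get()
runs_after_loss = a.runs    # the lost data was rebuilt by replaying the lineage
```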

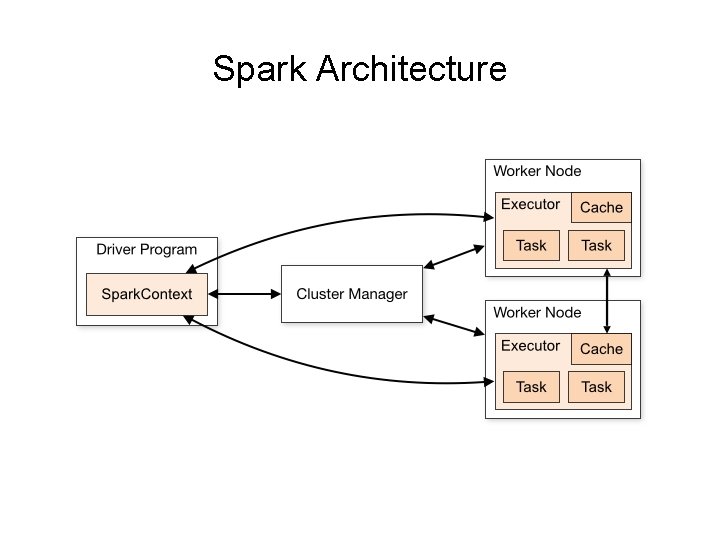

Spark Architecture

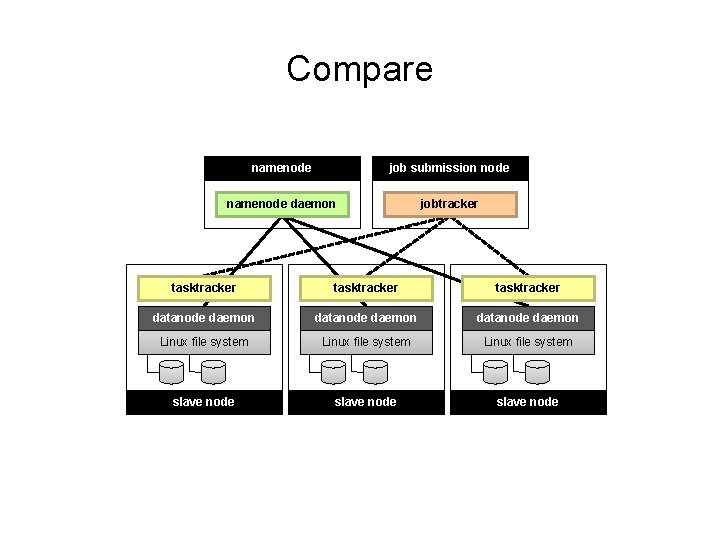

Compare
Diagram labels (Hadoop cluster): namenode (namenode daemon); job submission node (jobtracker); slave node (tasktracker, datanode daemon, Linux file system); …

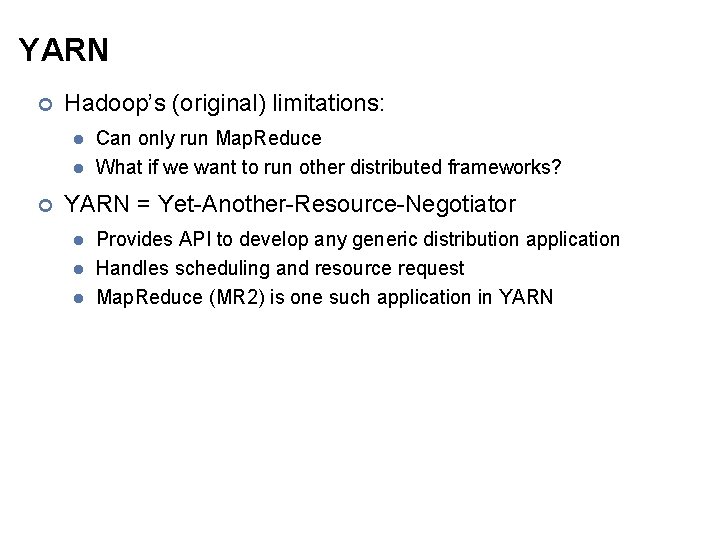

YARN
- Hadoop’s (original) limitations:
  - Can only run MapReduce
  - What if we want to run other distributed frameworks?
- YARN = Yet-Another-Resource-Negotiator
  - Provides API to develop any generic distributed application
  - Handles scheduling and resource requests
  - MapReduce (MR2) is one such application in YARN

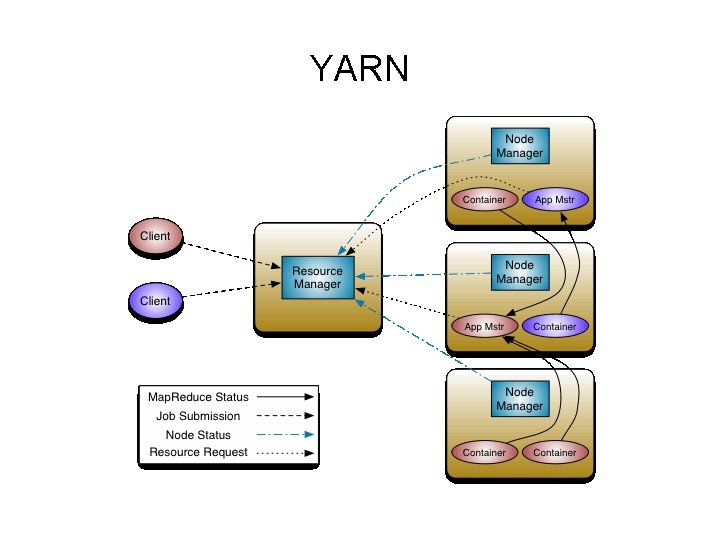

YARN

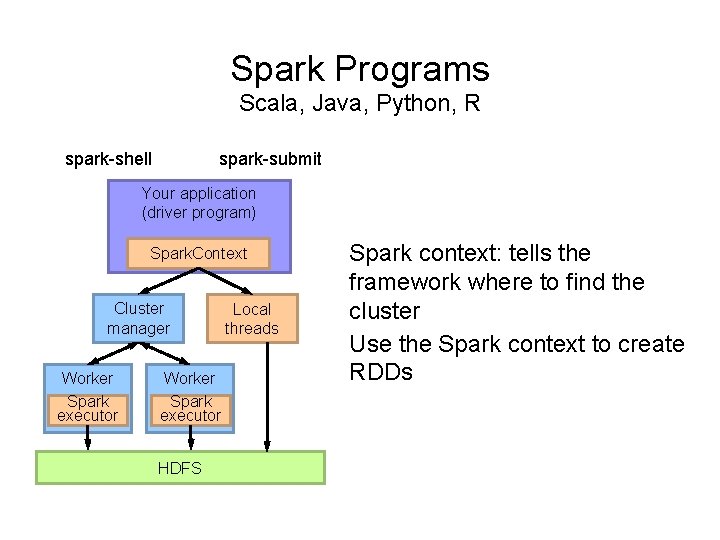

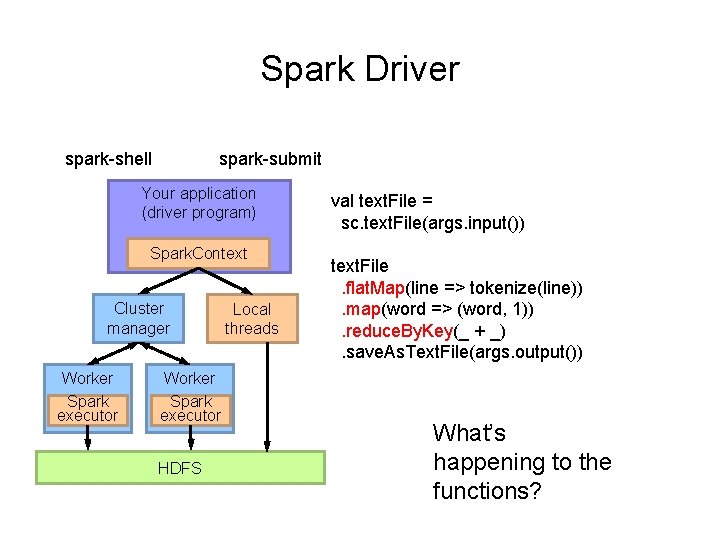

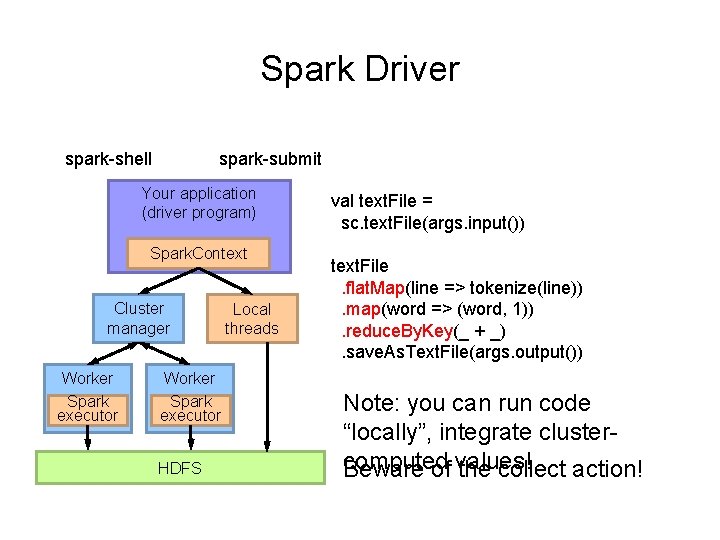

Spark Programs
Scala, Java, Python, R
Diagram labels: spark-shell, spark-submit; Your application (driver program) with SparkContext; Local threads; Cluster manager; Worker (Spark executor); HDFS
Spark context: tells the framework where to find the cluster
Use the Spark context to create RDDs

Spark Driver
Diagram labels: spark-shell, spark-submit; Your application (driver program) with SparkContext; Local threads; Cluster manager; Worker (Spark executor); HDFS

    val textFile = sc.textFile(args.input())
    textFile
      .flatMap(line => tokenize(line))
      .map(word => (word, 1))
      .reduceByKey(_ + _)
      .saveAsTextFile(args.output())

What’s happening to the functions?

Spark Driver
Diagram labels: spark-shell, spark-submit; Your application (driver program) with SparkContext; Local threads; Cluster manager; Worker (Spark executor); HDFS

    val textFile = sc.textFile(args.input())
    textFile
      .flatMap(line => tokenize(line))
      .map(word => (word, 1))
      .reduceByKey(_ + _)
      .saveAsTextFile(args.output())

Note: you can run code “locally”, integrate cluster-computed values!
Beware of the collect action!

![Spark Transformations](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-19.jpg)

Spark Transformations
- map: RDD[T] ⇒ RDD[U], with f: (T) ⇒ U
- filter: RDD[T] ⇒ RDD[T], with f: (T) ⇒ Boolean
- flatMap: RDD[T] ⇒ RDD[U], with f: (T) ⇒ TraversableOnce[U]
- mapPartitions: RDD[T] ⇒ RDD[U], with f: (Iterator[T]) ⇒ Iterator[U]
- groupByKey: RDD[(K, V)] ⇒ RDD[(K, Iterable[V])]
- reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
- aggregateByKey: RDD[(K, V)] ⇒ RDD[(K, U)], with seqOp: (U, V) ⇒ U, combOp: (U, U) ⇒ U
- sort: RDD[(K, V)] ⇒ RDD[(K, V)]
- join: RDD[(K, V)], RDD[(K, W)] ⇒ RDD[(K, (V, W))]
- cogroup: RDD[(K, V)], RDD[(K, W)] ⇒ RDD[(K, (Iterable[V], Iterable[W]))]

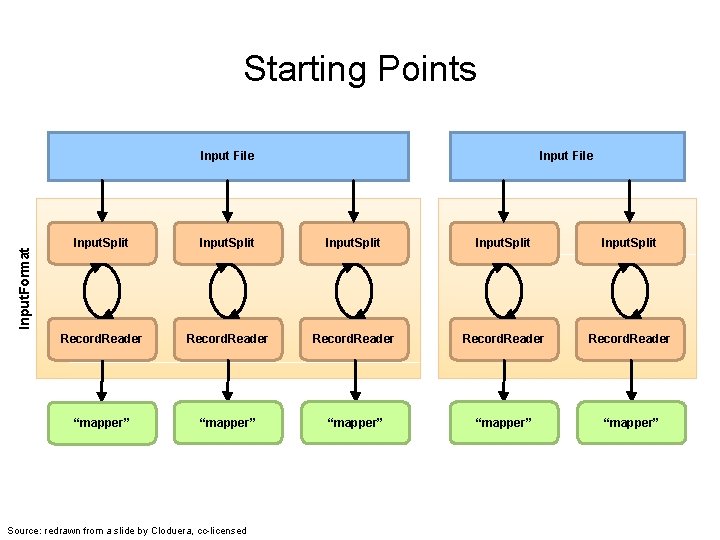

Starting Points
Diagram labels: Input File, InputFormat, InputSplit, RecordReader, “mapper”
Source: redrawn from a slide by Cloudera, cc-licensed

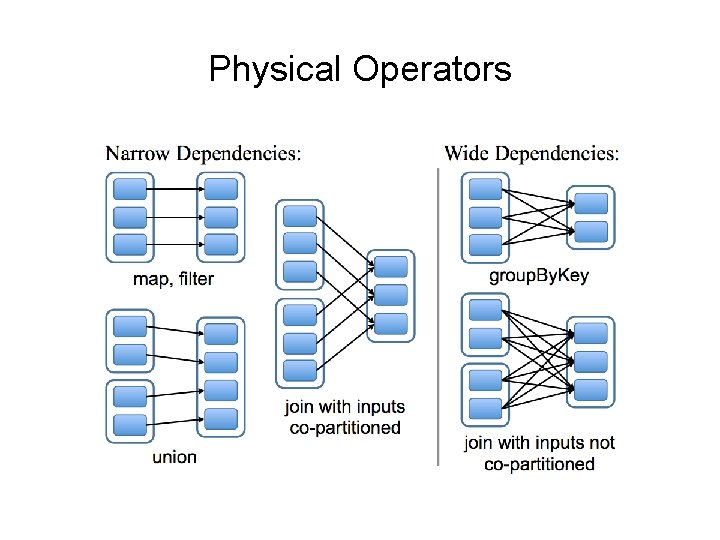

Physical Operators

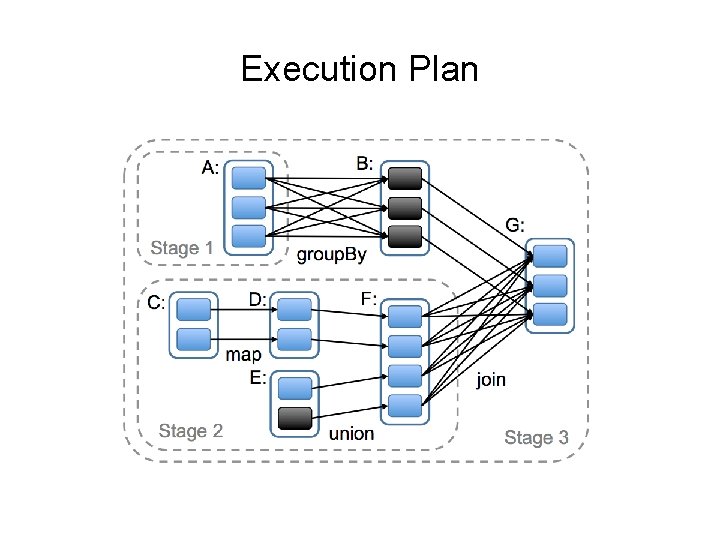

Execution Plan

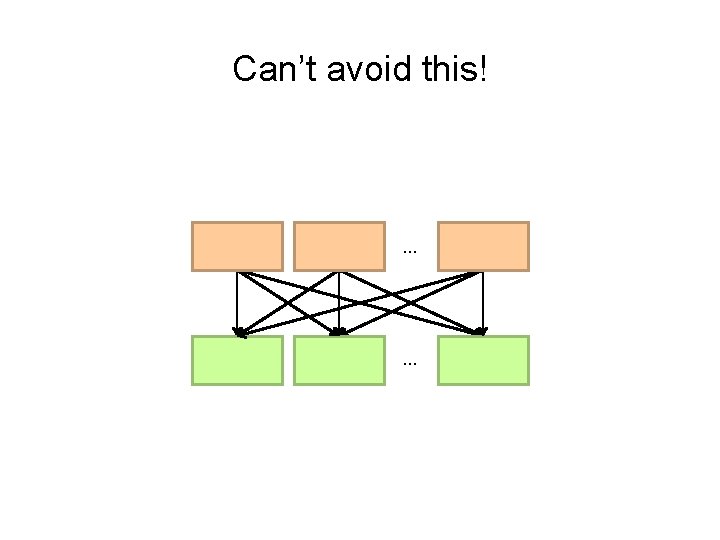

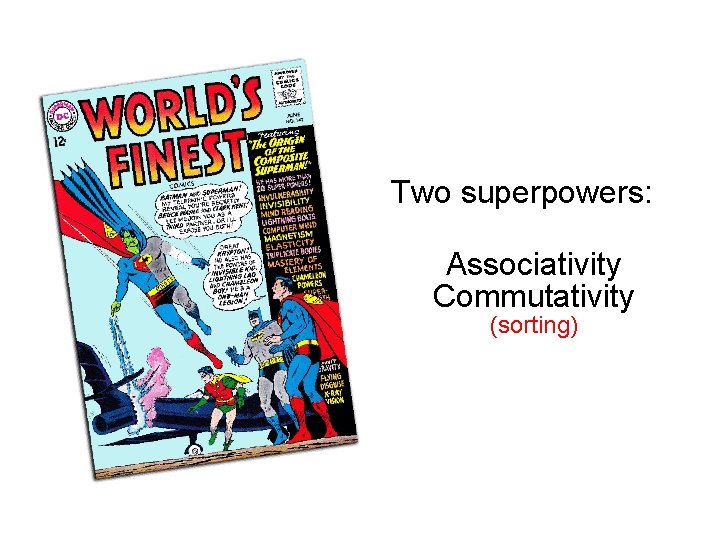

Can’t avoid this! … …

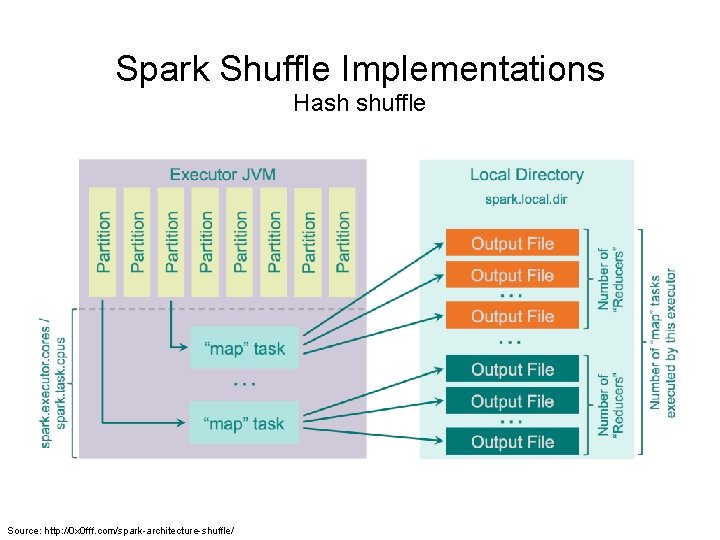

Spark Shuffle Implementations
Hash shuffle
Source: http://0x0fff.com/spark-architecture-shuffle/

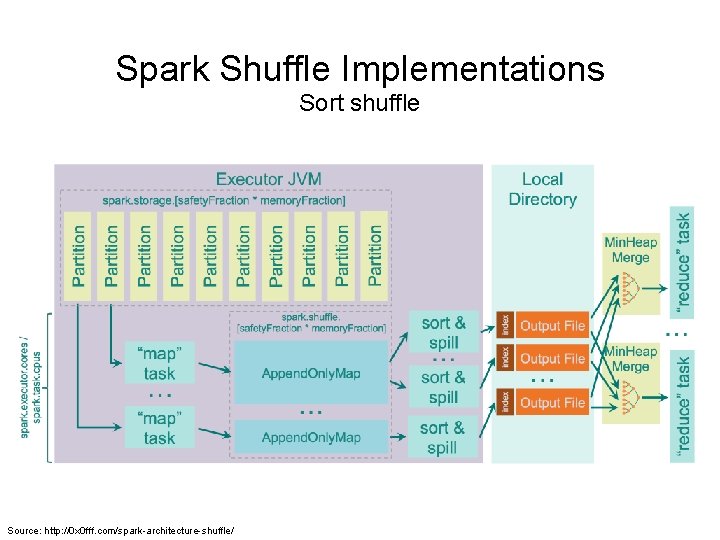

Spark Shuffle Implementations
Sort shuffle
Source: http://0x0fff.com/spark-architecture-shuffle/

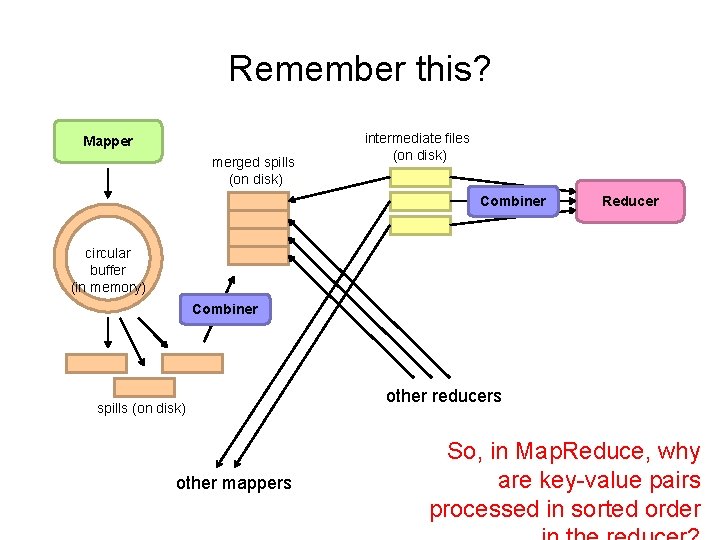

Remember this?
Diagram labels: Mapper; circular buffer (in memory); spills (on disk); Combiner; merged spills (on disk); intermediate files (on disk); Combiner; Reducer; other mappers; other reducers
So, in MapReduce, why are key-value pairs processed in sorted order?

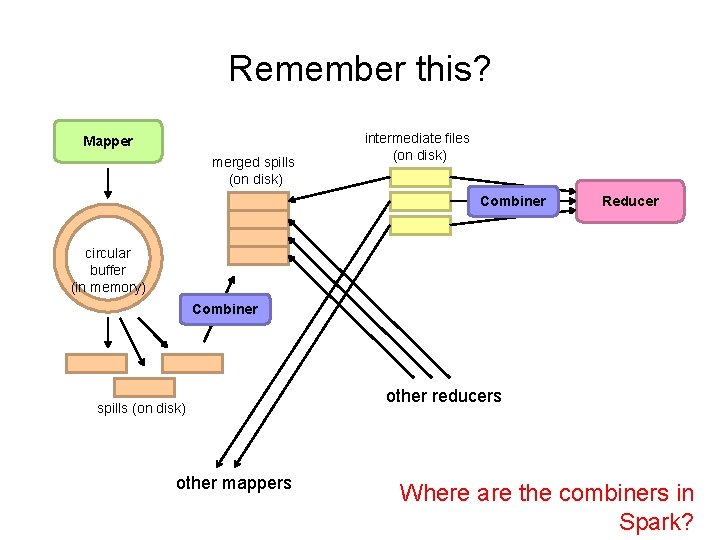

Remember this?
Diagram labels: Mapper; circular buffer (in memory); spills (on disk); Combiner; merged spills (on disk); intermediate files (on disk); Combiner; Reducer; other mappers; other reducers
Where are the combiners in Spark?

![Reduce-like Operations](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-28.jpg)

Reduce-like Operations
- reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
- aggregateByKey: RDD[(K, V)] ⇒ RDD[(K, U)], with seqOp: (U, V) ⇒ U, combOp: (U, U) ⇒ U

How can we optimize?
What happened to combiners?
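One reading of the `seqOp`/`combOp` pair is that it plays the role of a combiner: values are folded locally within each partition first, and only the per-key partial results cross the network. The plain-Python sketch below assumes this reading; `aggregate_by_key` here is a toy function over lists of partitions, not the Spark method itself, and `zero` is assumed to be an immutable value.

```python
def aggregate_by_key(partitions, zero, seq_op, comb_op):
    """Fold values per key inside each partition (seq_op), then merge the partials (comb_op).

    Returns the merged result and the number of records that would be shuffled.
    """
    partials = []
    for part in partitions:
        acc = {}
        for k, v in part:
            # seq_op: (U, V) => U, applied locally, before any data movement.
            acc[k] = seq_op(acc.get(k, zero), v)
        partials.append(acc)

    merged = {}
    for acc in partials:
        for k, u in acc.items():
            # comb_op: (U, U) => U, applied to partial results from different partitions.
            merged[k] = comb_op(merged[k], u) if k in merged else u

    shuffled_records = sum(len(acc) for acc in partials)
    return merged, shuffled_records


partitions = [
    [("a", 1), ("b", 1), ("a", 1)],
    [("a", 1), ("c", 1)],
]
counts, shuffled = aggregate_by_key(partitions, 0,
                                    lambda u, v: u + v,
                                    lambda x, y: x + y)
input_records = sum(len(p) for p in partitions)
```

Here five input records shrink to four shuffled partials; on skewed real data the reduction is usually far larger.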

Spark #wins
Richer operators
RDD abstraction supports optimizations (pipelining, caching, etc.)
Scala, Java, Python, R bindings

Algorithm design, redux Source: Wikipedia (Mahout)

Two superpowers:
Associativity
Commutativity
(sorting)
What follows… very basic category theory…

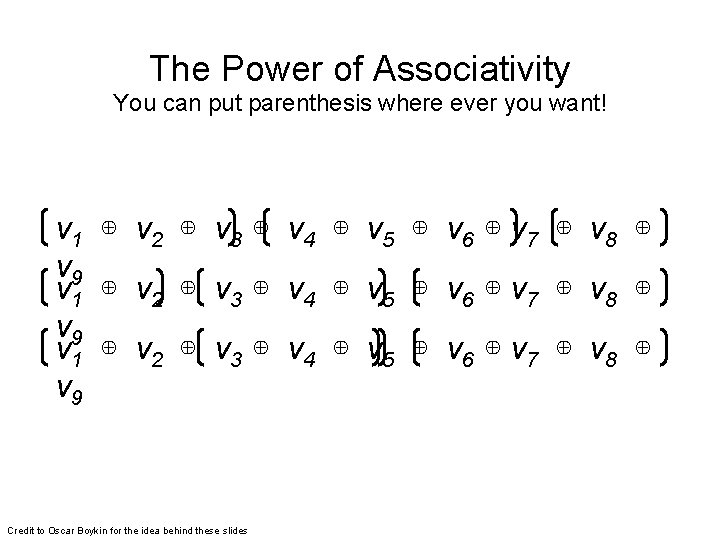

The Power of Associativity
You can put parentheses wherever you want!
$v_1 \oplus v_2 \oplus v_3 \oplus v_4 \oplus v_5 \oplus v_6 \oplus v_7 \oplus v_8 \oplus v_9$
Credit to Oscar Boykin for the idea behind these slides

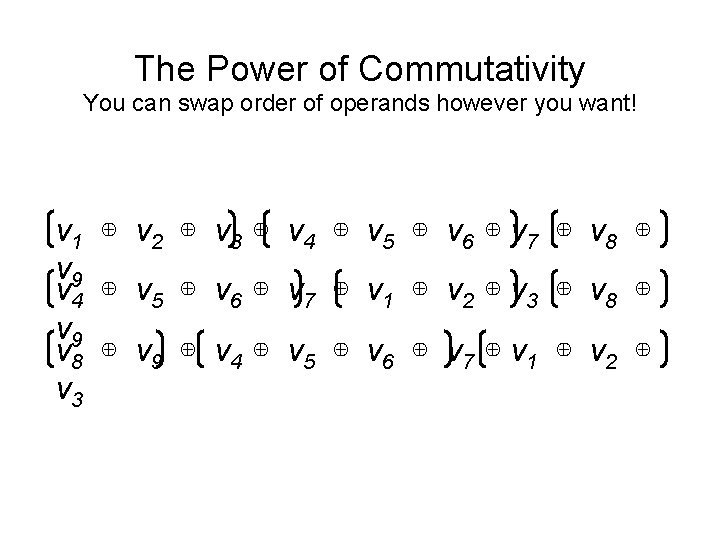

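The Power of Commutativity
You can swap order of operands however you want!
$v_1 \oplus v_2 \oplus v_3 \oplus v_4 \oplus v_5 \oplus v_6 \oplus v_7 \oplus v_8 \oplus v_9$
$v_4 \oplus v_5 \oplus v_6 \oplus v_7 \oplus v_1 \oplus v_2 \oplus v_3 \oplus v_8 \oplus v_9$
$v_8 \oplus v_9 \oplus v_4 \oplus v_5 \oplus v_6 \oplus v_7 \oplus v_1 \oplus v_2 \oplus v_3$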

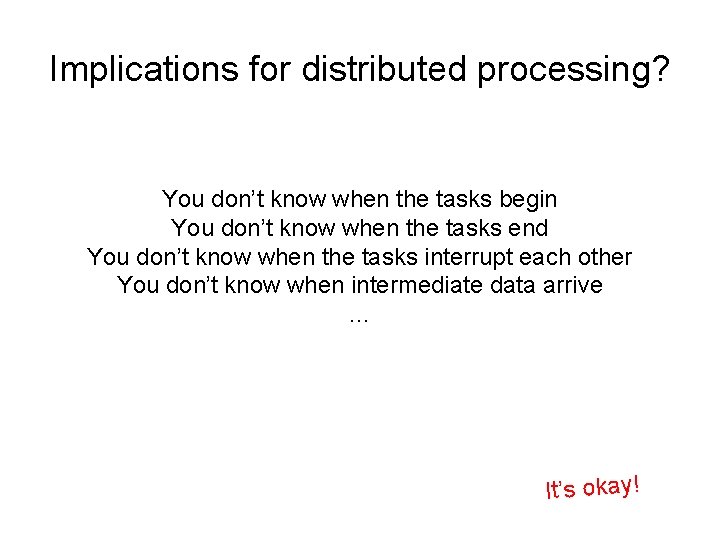

Implications for distributed processing?
- You don’t know when the tasks begin
- You don’t know when the tasks end
- You don’t know when the tasks interrupt each other
- You don’t know when intermediate data arrive
- …

It’s okay!
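A small check of why it is okay, using integer addition as a stand-in for ⊕: regrouping the operands (associativity) or reordering them (commutativity) leaves the result unchanged, so partial results can be produced and merged in whatever order tasks happen to finish. The chunk boundaries and the shuffle seed below are arbitrary choices for this sketch.

```python
import random
from functools import reduce
from operator import add

values = list(range(1, 10))  # stands in for v1 .. v9

# Baseline: one task folds everything left to right.
sequential = reduce(add, values)

# Associativity: split into arbitrary chunks, reduce each chunk, then reduce the partials.
chunks = [values[0:2], values[2:7], values[7:9]]
regrouped = reduce(add, [reduce(add, chunk) for chunk in chunks])

# Commutativity: operands may arrive in any order.
shuffled = values[:]
random.Random(0).shuffle(shuffled)
reordered = reduce(add, shuffled)

# Counterexample: subtraction is not associative, so grouping changes the answer.
sub_left = (10 - 4) - 3
sub_right = 10 - (4 - 3)
```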

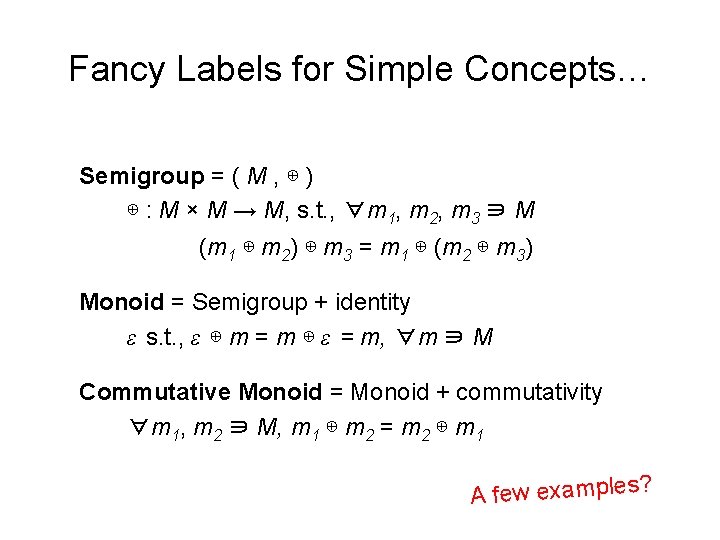

Fancy Labels for Simple Concepts…
Semigroup = $(M, \oplus)$ with $\oplus : M \times M \to M$, s.t. $\forall m_1, m_2, m_3 \in M$: $(m_1 \oplus m_2) \oplus m_3 = m_1 \oplus (m_2 \oplus m_3)$
Monoid = Semigroup + identity $\varepsilon$ s.t. $\varepsilon \oplus m = m \oplus \varepsilon = m$, $\forall m \in M$
Commutative Monoid = Monoid + commutativity: $\forall m_1, m_2 \in M$, $m_1 \oplus m_2 = m_2 \oplus m_1$
A few examples?
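A few examples, sketched in plain Python. The `Monoid` class and the instance names are invented for this illustration; the laws themselves are not enforced by the code, only assumed.

```python
from dataclasses import dataclass
from functools import reduce
from typing import Any, Callable


@dataclass(frozen=True)
class Monoid:
    identity: Any                    # the element epsilon
    op: Callable[[Any, Any], Any]    # the associative operation

    def fold(self, items):
        """Combine all items, starting from the identity."""
        return reduce(self.op, items, self.identity)


# Commutative monoids: operand order does not matter.
int_sum = Monoid(0, lambda a, b: a + b)
int_max = Monoid(float("-inf"), max)
set_union = Monoid(frozenset(), lambda a, b: a | b)

# A monoid that is NOT commutative: list concatenation.
list_concat = Monoid([], lambda a, b: a + b)

total = int_sum.fold([1, 2, 3])
largest = int_max.fold([3, 9, 2])
union = set_union.fold([frozenset({1}), frozenset({2}), frozenset({1})])
joined = list_concat.fold([[1], [2], [3]])
```

The identity element is what makes folding an empty partition well defined.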

![Back to these…](http://slidetodoc.com/presentation_image/9cf2e6838592a3f722dea37dfd566519/image-36.jpg)

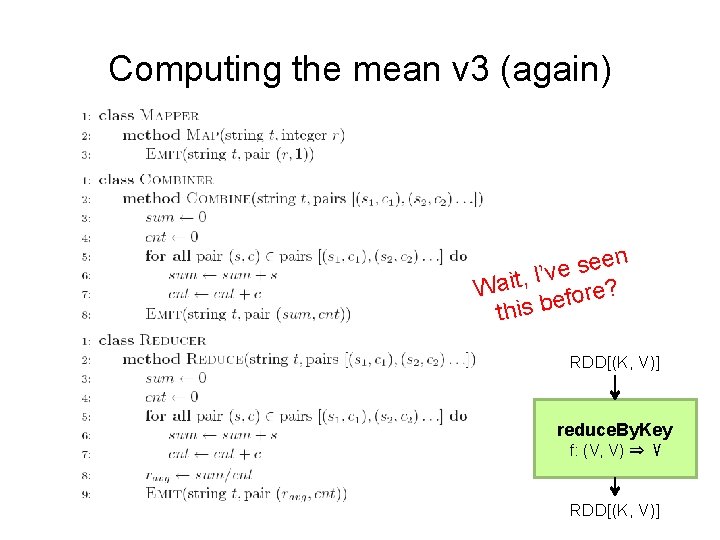

Back to these…
- reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
- aggregateByKey: RDD[(K, V)] ⇒ RDD[(K, U)], with seqOp: (U, V) ⇒ U, combOp: (U, U) ⇒ U

Wait, I’ve seen this before?

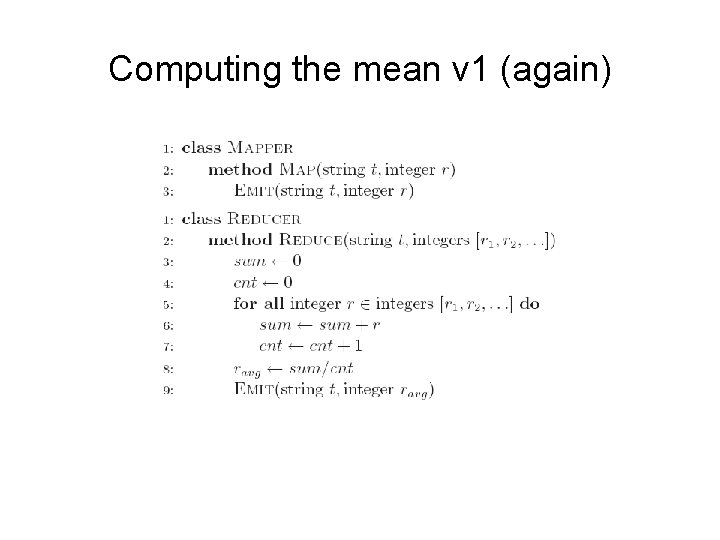

Computing the mean v1 (again)

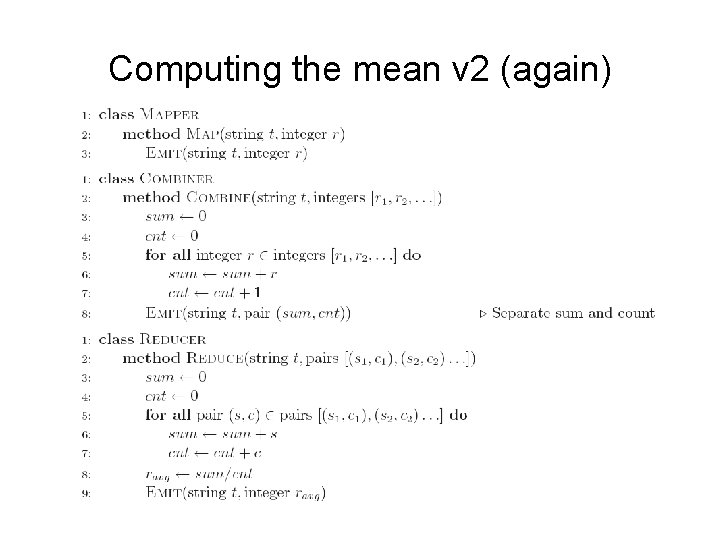

Computing the mean v2 (again)

Computing the mean v3 (again)
Wait, I’ve seen this before?
reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
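The slide's figure is not reproduced in this transcript, so the following is a guess at the idea rather than the slide's exact code: the mean itself is not associative, but (sum, count) pairs under element-wise addition form a commutative monoid, so they fit `reduceByKey`. The sketch is plain Python; `reduce_by_key` and `add_pairs` are illustrative names and the readings are made-up data.

```python
def reduce_by_key(pairs, f):
    """Combine all values that share a key with the binary function f."""
    out = {}
    for k, v in pairs:
        out[k] = f(out[k], v) if k in out else v
    return out


def add_pairs(x, y):
    # (sum, count) + (sum, count): associative and commutative.
    return (x[0] + y[0], x[1] + y[1])


readings = [("a", 1.0), ("a", 3.0), ("b", 10.0), ("a", 8.0)]

# Lift each value v to (v, 1), reduce, and divide only at the very end.
sum_counts = reduce_by_key([(k, (v, 1)) for k, v in readings], add_pairs)
means = {k: s / n for k, (s, n) in sum_counts.items()}

# Averaging partial averages gives the wrong answer for key "a".
mean_of_means = ((1.0 + 3.0) / 2 + 8.0) / 2
```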

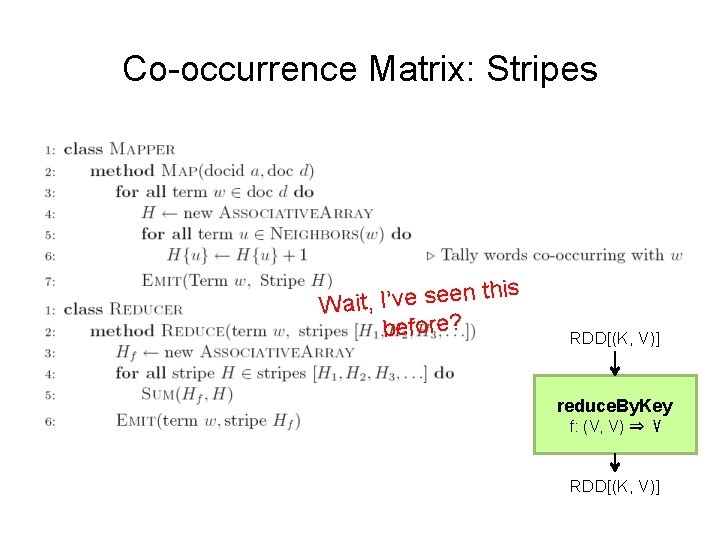

Co-occurrence Matrix: Stripes
Wait, I’ve seen this before?
reduceByKey: RDD[(K, V)] ⇒ RDD[(K, V)], with f: (V, V) ⇒ V
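A sketch of why stripes fit the same pattern, assuming each stripe is a map from co-occurring word to count: merging two stripes is an element-wise sum, which is associative and commutative. This is plain Python with made-up data; `merge_stripes` and `reduce_by_key` are illustrative names.

```python
from collections import Counter


def merge_stripes(x, y):
    """Element-wise sum of two stripes (maps from neighbor word to count)."""
    merged = Counter(x)
    merged.update(y)  # Counter.update adds counts instead of replacing them
    return merged


def reduce_by_key(pairs, f):
    out = {}
    for k, v in pairs:
        out[k] = f(out[k], v) if k in out else v
    return out


stripes = [
    ("a", {"b": 1, "c": 2}),
    ("d", {"a": 1}),
    ("a", {"b": 3}),
]

cooccurrence = {k: dict(v) for k, v in reduce_by_key(stripes, merge_stripes).items()}

# Arrival order does not matter, because the merge is commutative.
reversed_order = {k: dict(v)
                  for k, v in reduce_by_key(reversed(stripes), merge_stripes).items()}
```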

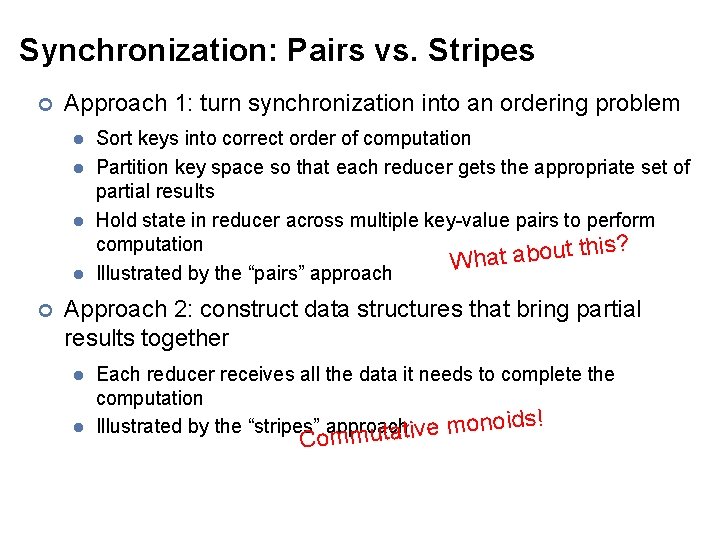

Synchronization: Pairs vs. Stripes
- Approach 1: turn synchronization into an ordering problem
  - Sort keys into correct order of computation
  - Partition key space so that each reducer gets the appropriate set of partial results
  - Hold state in reducer across multiple key-value pairs to perform computation
  - Illustrated by the “pairs” approach
  - What about this?
- Approach 2: construct data structures that bring partial results together
  - Each reducer receives all the data it needs to complete the computation
  - Illustrated by the “stripes” approach
  - Commutative monoids!

f(B|A): “Pairs”
(a, *) → 32 (reducer holds this value in memory)
(a, b1) → 3 becomes (a, b1) → 3 / 32
(a, b2) → 12 becomes (a, b2) → 12 / 32
(a, b3) → 7 becomes (a, b3) → 7 / 32
(a, b4) → 1 becomes (a, b4) → 1 / 32
…

For this to work:
- Must emit extra (a, *) for every bn in mapper
- Must make sure all a’s get sent to same reducer (use partitioner)
- Must make sure (a, *) comes first (define sort order)
- Must hold state in reducer across different key-value pairs
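The reducer-side logic can be sketched as follows, in plain Python on a single reducer's input. It relies on the sort order placing (a, *) before every (a, b); here that happens because `"*"` sorts before letters in ASCII. The partitioner is not modeled, the counts are the ones on the slide (the elided entries stay elided), and `relative_frequencies` is a name made up for this sketch.

```python
def relative_frequencies(sorted_pairs):
    """Expects ((a, b), count) records sorted so that (a, '*') precedes every (a, b)."""
    marginal = None  # state held in the reducer across key-value pairs
    out = []
    for (a, b), count in sorted_pairs:
        if b == "*":
            marginal = count  # total count for a, seen first thanks to the sort order
        else:
            out.append(((a, b), count / marginal))
    return out


# Records as they might arrive before sorting.
counts = [
    (("a", "b2"), 12),
    (("a", "*"), 32),
    (("a", "b1"), 3),
    (("a", "b4"), 1),
    (("a", "b3"), 7),
]

ordered = sorted(counts)  # framework-based sorting sequences the computation
freqs = relative_frequencies(ordered)
```

Without the sort, the reducer would see some (a, b) records before it knows the marginal and would have to buffer them.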

Two superpowers:
Associativity
Commutativity
(sorting)

Because you can’t avoid this… … … And sort-based shuffling is pretty efficient!

The datacenter is the computer! What’s the instruction set? Source: Google

Algorithm design in a nutshell…
Exploit associativity and commutativity via commutative monoids (if you can)
Exploit framework-based sorting to sequence computations (if you can’t)
Source: Wikipedia (Walnut)

Questions?
Remember: Assignment 2 due next Tuesday at 8:30 am
Source: Wikipedia (Japanese rock garden)
