www.pwc.com
Big Data Analytics Learning Lab
UN Data Innovation Lab 4
University of Nairobi
March 13-14, 2017

Agenda
I. Introduction to Big Data: What it is and why it matters
II. Big Data Analytics: Putting Big Data to work
III. Creating a Big Data-Enabled Organization: Bringing Big Data Analytics home
IV. Case Study: ‘Nowcasting’ economic activity in Colombia

01 Introduction to Big Data: What it is and Why it Matters

What is Big Data?
“Big Data” exceeds the capacity of traditional analytics and information management paradigms across what is known as the 4 V’s: Volume, Variety, Velocity, and Veracity.
• Veracity (Uncertainty of Data): With exponential increases of data from unfiltered and constantly flowing data sources, data quality often suffers and new methods must find ways to “sift” through junk to find meaning.
• Velocity (Analysis of Streaming Data): The speed at which data is generated and used. New data is being created every second, and in some cases it may need to be analyzed just as quickly.
• Variety (Different Forms of Data): Represents the diversity of the data. Data sets vary by type (e.g. social networking, media, text) and in how well they are structured.
• Volume (Scale of Data): Reflects the size of a data set. New information is generated daily and in some cases hourly, creating data sets that are measured in terabytes and petabytes.

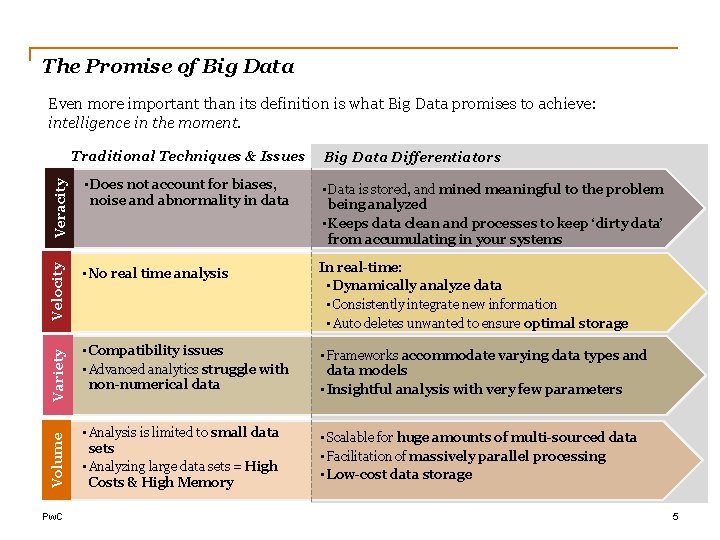

The Promise of Big Data
Even more important than its definition is what Big Data promises to achieve: intelligence in the moment.
Veracity
Traditional Techniques & Issues:
• Do not account for biases, noise and abnormality in data
Big Data Differentiators:
• Data is stored and mined in ways meaningful to the problem being analyzed
• Keeps data clean, with processes that keep ‘dirty data’ from accumulating in your systems
Velocity
Traditional Techniques & Issues:
• No real-time analysis
Big Data Differentiators (in real time):
• Dynamically analyze data
• Consistently integrate new information
• Automatically delete unwanted data to ensure optimal storage
Variety
Traditional Techniques & Issues:
• Compatibility issues
• Advanced analytics struggle with non-numerical data
Big Data Differentiators:
• Frameworks accommodate varying data types and data models
• Insightful analysis with very few parameters
Volume
Traditional Techniques & Issues:
• Analysis is limited to small data sets
• Analyzing large data sets = High Costs & High Memory
Big Data Differentiators:
• Scalable for huge amounts of multi-sourced data
• Facilitation of massively parallel processing
• Low-cost data storage

Types of Big Data
Variety is the most distinctive aspect of Big Data. New technologies and new types of data have driven much of the evolution around Big Data.
• Media: Images, videos, audio, Flash, live streams, podcasts, etc.
• Docs: XLS, PDF, CSV, email, Word, PPT, HTML5, plain text, XML, JSON, etc.
• Public Web: Government, weather, competitive, traffic, regulatory, compliance, health care services, economic, census, public finance, stock, OSINT, the World Bank, SEC/Edgar, Wikipedia, IMDb, etc.
• Archive: Archives of scanned documents, statements, insurance forms, medical records and customer correspondence, paper archives, and print stream files that contain original systems of record between organizations and their customers.
• Social Media: Twitter, LinkedIn, Facebook, Tumblr, Blog, SlideShare, YouTube, Google+, Instagram, Flickr, Pinterest, Vimeo, WordPress, IM, RSS, Review, Chatter, Jive, Yammer, etc.
• Sensor Data: Medical devices, smart electric meters, car sensors, road cameras, satellites, traffic recording devices, processors found within vehicles, video games, cable boxes, assembly lines, office buildings, cell towers, jet engines, air conditioning units, refrigerators, trucks, farm machinery, etc.
• Machine Log Data: Event logs, server data, application logs, business process logs, audit logs, call detail records (CDRs), mobile location, mobile app usage, clickstream data, etc.
• Business Apps: Project management, marketing automation, productivity, CRM, ERP, content management systems, HR, storage, talent management, procurement, expense management, Google Docs, intranets, portals, etc.

“Single sources of data are no longer sufficient to cope with the increasingly complicated problems in many policy arenas.” (1)
Big data “is notable not because of its size, but because of its relationality to other data. Due to efforts to mine and aggregate data, Big Data is fundamentally networked.” (2)
(1) M. Milakovich, “Anticipatory Government: Integrating big data for Smaller Government,” in Oxford Internet Institute “Internet, Politics, Policy 2012” Conference, Oxford, 2012.
(2) D. Boyd and K. Crawford, “Six Provocations for big data,” in A Decade in Internet Time: Symposium on the Dynamics of the Internet and Society, 2011.

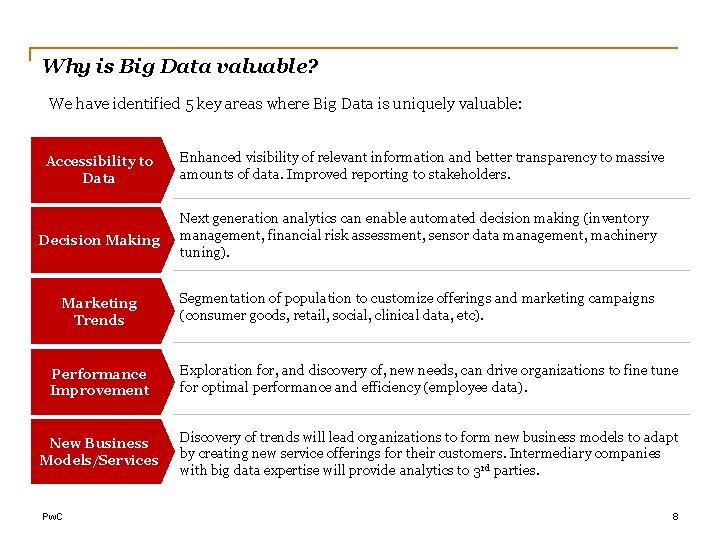

Why is Big Data valuable?
We have identified 5 key areas where Big Data is uniquely valuable:
• Accessibility to Data: Enhanced visibility of relevant information and better transparency to massive amounts of data. Improved reporting to stakeholders.
• Decision Making: Next generation analytics can enable automated decision making (inventory management, financial risk assessment, sensor data management, machinery tuning).
• Marketing Trends: Segmentation of population to customize offerings and marketing campaigns (consumer goods, retail, social, clinical data, etc.).
• Performance Improvement: Exploration for, and discovery of, new needs can drive organizations to fine-tune for optimal performance and efficiency (employee data).
• New Business Models/Services: Discovery of trends will lead organizations to form new business models to adapt by creating new service offerings for their customers. Intermediary companies with big data expertise will provide analytics to 3rd parties.

$1 Trillion
One study estimated the potential value of big data in U.S. health care, European public sector administration, global personal location data, U.S. retail, and global manufacturing to be over $1 trillion (U.S. dollars) per year. (1)
$41 Billion
Another study estimated the value of big data in the areas of customer intelligence, supply chain intelligence, performance improvements, fraud detection, and quality and risk management to be $41 billion per year in the UK alone. (2)
(1) J. Manyika, M. Chui, B. Brown, J. Bughin, R. Dobbs, C. Roxburgh and A. H. Byers, “Big data: The next frontier for innovation, competition, and productivity,” McKinsey & Company, 2011.
(2) Centre for Economics and Business Research, “Data equity: unlocking the value of big data,” SAS, 2012.

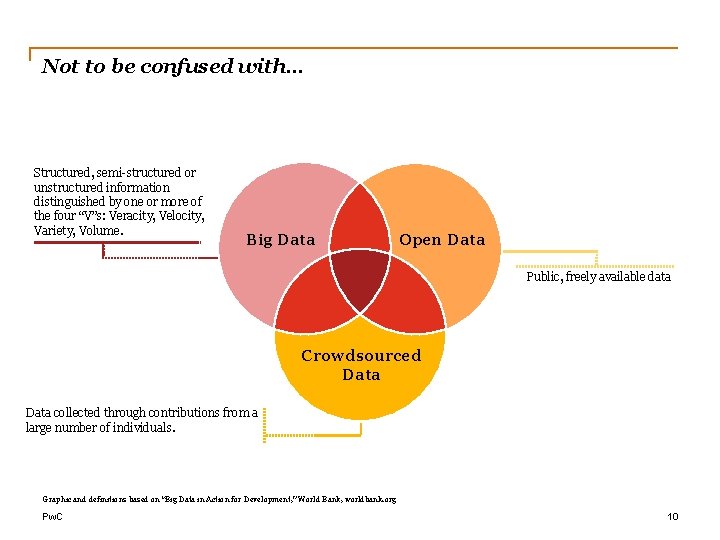

Not to be confused with…
• Big Data: Structured, semi-structured or unstructured information distinguished by one or more of the four “V”s: Veracity, Velocity, Variety, Volume.
• Open Data: Public, freely available data.
• Crowdsourced Data: Data collected through contributions from a large number of individuals.
Graphic and definitions based on “Big Data in Action for Development,” World Bank, worldbank.org

02 Big Data Analytics: Putting Big Data to Work

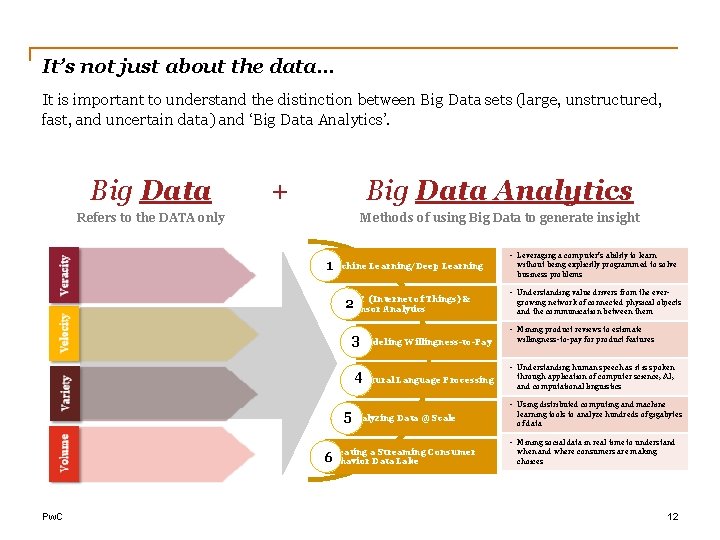

It’s not just about the data…
It is important to understand the distinction between Big Data sets (large, unstructured, fast, and uncertain data) and ‘Big Data Analytics’.
Big Data: Refers to the DATA only.
Big Data Analytics: Methods of using Big Data to generate insight.
1. Machine Learning/Deep Learning: Leveraging a computer’s ability to learn without being explicitly programmed to solve business problems.
2. IoT (Internet of Things) Sensor Analytics: Understanding value drivers from the ever-growing network of connected physical objects and the communication between them.
3. Modeling Willingness-to-Pay: Mining product reviews to estimate willingness-to-pay for product features.
4. Natural Language Processing: Understanding human speech as it is spoken through application of computer science, AI, and computational linguistics.
5. Analyzing Data @ Scale: Using distributed computing and machine learning tools to analyze hundreds of gigabytes of data.
6. Creating a Streaming Consumer Behavior Data Lake: Mining social data in real time to understand when and where consumers are making choices.

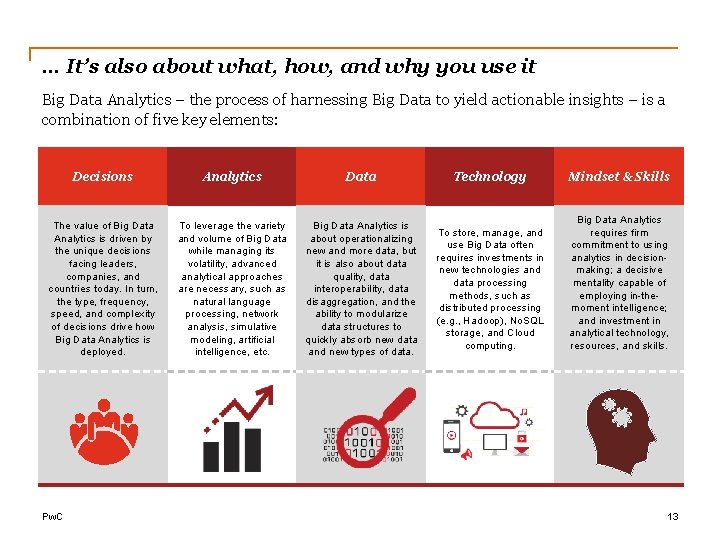

… It’s also about what, how, and why you use it
Big Data Analytics, the process of harnessing Big Data to yield actionable insights, is a combination of five key elements:
• Decisions: The value of Big Data Analytics is driven by the unique decisions facing leaders, companies, and countries today. In turn, the type, frequency, speed, and complexity of decisions drive how Big Data Analytics is deployed.
• Analytics: To leverage the variety and volume of Big Data while managing its volatility, advanced analytical approaches are necessary, such as natural language processing, network analysis, simulative modeling, artificial intelligence, etc.
• Data: Big Data Analytics is about operationalizing new and more data, but it is also about data quality, data interoperability, data disaggregation, and the ability to modularize data structures to quickly absorb new data and new types of data.
• Technology: To store, manage, and use Big Data often requires investments in new technologies and data processing methods, such as distributed processing (e.g., Hadoop), NoSQL storage, and Cloud computing.
• Mindset & Skills: Big Data Analytics requires firm commitment to using analytics in decision-making; a decisive mentality capable of employing in-the-moment intelligence; and investment in analytical technology, resources, and skills.

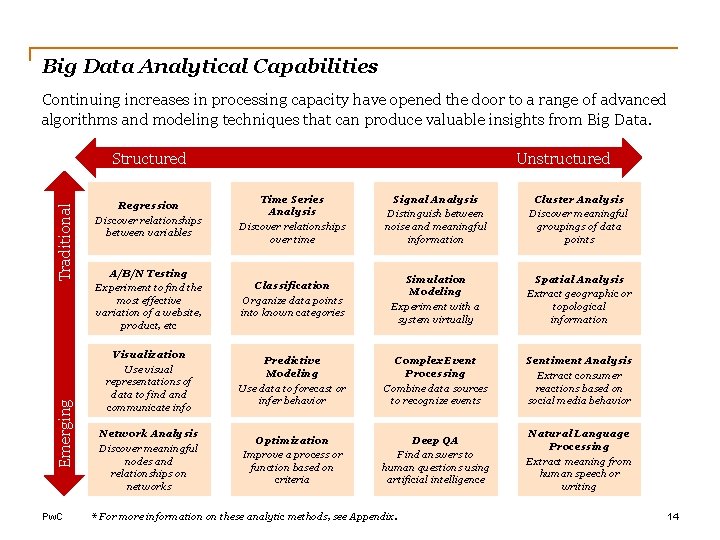

Big Data Analytical Capabilities
Continuing increases in processing capacity have opened the door to a range of advanced algorithms and modeling techniques that can produce valuable insights from Big Data.
(Chart axes: Traditional to Emerging; Structured to Unstructured)
• Regression: Discover relationships between variables
• Time Series Analysis: Discover relationships over time
• Signal Analysis: Distinguish between noise and meaningful information
• Cluster Analysis: Discover meaningful groupings of data points
• A/B/N Testing: Experiment to find the most effective variation of a website, product, etc.
• Classification: Organize data points into known categories
• Simulation Modeling: Experiment with a system virtually
• Spatial Analysis: Extract geographic or topological information
• Visualization: Use visual representations of data to find and communicate info
• Predictive Modeling: Use data to forecast or infer behavior
• Complex Event Processing: Combine data sources to recognize events
• Sentiment Analysis: Extract consumer reactions based on social media behavior
• Network Analysis: Discover meaningful nodes and relationships on networks
• Optimization: Improve a process or function based on criteria
• Deep QA: Find answers to human questions using artificial intelligence
• Natural Language Processing: Extract meaning from human speech or writing
* For more information on these analytic methods, see Appendix.

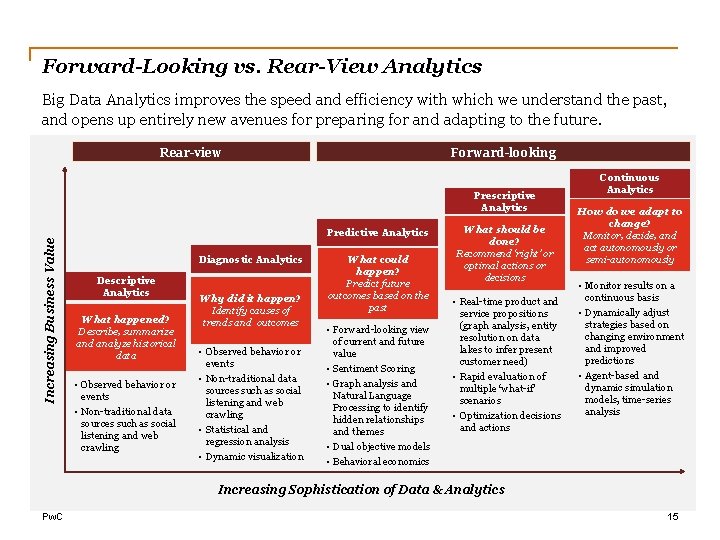

Forward-Looking vs. Rear-View Analytics
Big Data Analytics improves the speed and efficiency with which we understand the past, and opens up entirely new avenues for preparing for and adapting to the future.
The levels below run from rear-view to forward-looking, with increasing business value and increasing sophistication of data and analytics.
Descriptive Analytics: What happened? Describe, summarize and analyze historical data
• Observed behavior or events
• Non-traditional data sources such as social listening and web crawling
Diagnostic Analytics: Why did it happen? Identify causes of trends and outcomes
• Observed behavior or events
• Non-traditional data sources such as social listening and web crawling
• Statistical and regression analysis
• Dynamic visualization
Predictive Analytics: What could happen? Predict future outcomes based on the past
• Forward-looking view of current and future value
• Sentiment scoring
• Graph analysis and Natural Language Processing to identify hidden relationships and themes
• Dual objective models
• Behavioral economics
Prescriptive Analytics: What should be done? Recommend ‘right’ or optimal actions or decisions
• Real-time product and service propositions (graph analysis, entity resolution on data lakes to infer present customer need)
• Rapid evaluation of multiple ‘what-if’ scenarios
• Optimization decisions and actions
Continuous Analytics: How do we adapt to change? Monitor, decide, and act autonomously or semi-autonomously
• Monitor results on a continuous basis
• Dynamically adjust strategies based on changing environment and improved predictions
• Agent-based and dynamic simulation models, time-series analysis

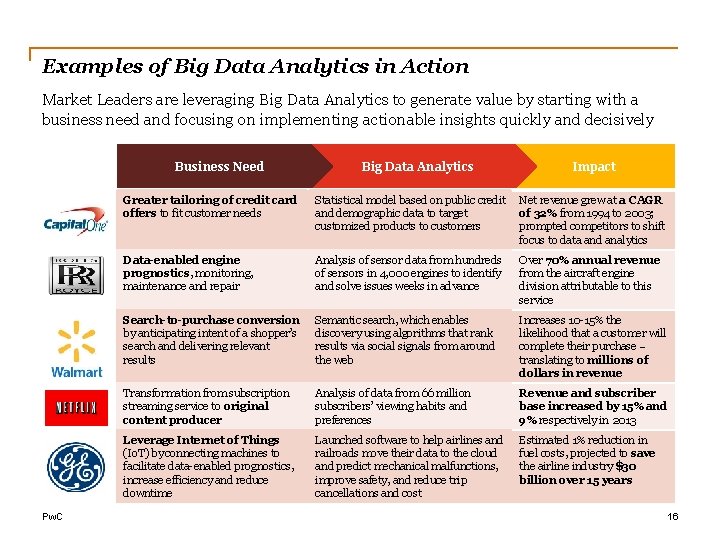

Examples of Big Data Analytics in Action
Market leaders are leveraging Big Data Analytics to generate value by starting with a business need and focusing on implementing actionable insights quickly and decisively.
Example 1
• Business Need: Greater tailoring of credit card offers to fit customer needs
• Data and Analytics: Statistical model based on public credit and demographic data to target customized products to customers
• Impact: Net revenue grew at a CAGR of 32% from 1994 to 2003; prompted competitors to shift focus to data and analytics
Example 2
• Business Need: Data-enabled engine prognostics, monitoring, maintenance and repair
• Data and Analytics: Analysis of sensor data from hundreds of sensors in 4,000 engines to identify and solve issues weeks in advance
• Impact: Over 70% of annual revenue from the aircraft engine division attributable to this service
Example 3
• Business Need: Search-to-purchase conversion by anticipating the intent of a shopper’s search and delivering relevant results
• Data and Analytics: Semantic search, which enables discovery using algorithms that rank results via social signals from around the web
• Impact: Increases by 10-15% the likelihood that a customer will complete their purchase, translating to millions of dollars in revenue
Example 4
• Business Need: Transformation from subscription streaming service to original content producer
• Data and Analytics: Analysis of data from 66 million subscribers’ viewing habits and preferences
• Impact: Revenue and subscriber base increased by 15% and 9% respectively in 2013
Example 5
• Business Need: Leverage the Internet of Things (IoT) by connecting machines to facilitate data-enabled prognostics, increase efficiency and reduce downtime
• Data and Analytics: Launched software to help airlines and railroads move their data to the cloud and predict mechanical malfunctions, improve safety, and reduce trip cancellations and cost
• Impact: Estimated 1% reduction in fuel costs, projected to save the airline industry $30 billion over 15 years

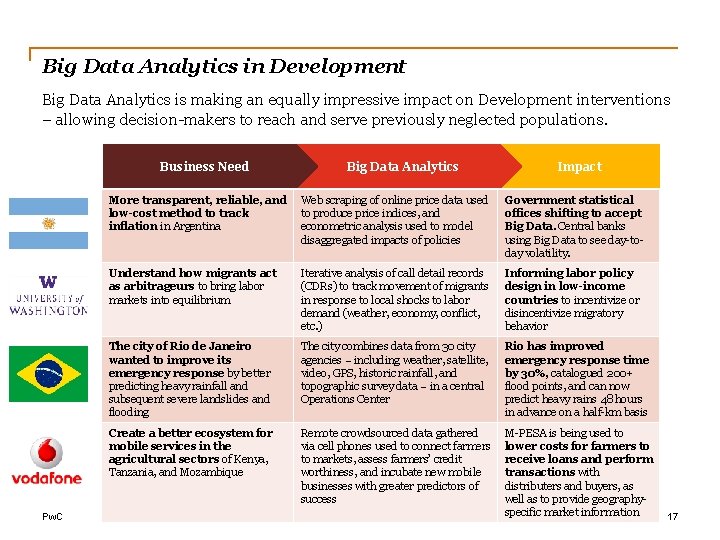

Big Data Analytics in Development
Big Data Analytics is making an equally impressive impact on Development interventions, allowing decision-makers to reach and serve previously neglected populations.
Example 1
• Business Need: More transparent, reliable, and low-cost method to track inflation in Argentina
• Data and Analytics: Web scraping of online price data used to produce price indices, and econometric analysis used to model disaggregated impacts of policies
• Impact: Government statistical offices shifting to accept Big Data. Central banks using Big Data to see day-to-day volatility.
Example 2
• Business Need: Understand how migrants act as arbitrageurs to bring labor markets into equilibrium
• Data and Analytics: Iterative analysis of call detail records (CDRs) to track movement of migrants in response to local shocks to labor demand (weather, economy, conflict, etc.)
• Impact: Informing labor policy design in low-income countries to incentivize or disincentivize migratory behavior
Example 3
• Business Need: The city of Rio de Janeiro wanted to improve its emergency response by better predicting heavy rainfall and subsequent severe landslides and flooding
• Data and Analytics: The city combines data from 30 city agencies, including weather, satellite, video, GPS, historic rainfall, and topographic survey data, in a central Operations Center
• Impact: Rio has improved emergency response time by 30%, catalogued 200+ flood points, and can now predict heavy rains 48 hours in advance on a half-km basis
Example 4
• Business Need: Create a better ecosystem for mobile services in the agricultural sectors of Kenya, Tanzania, and Mozambique
• Data and Analytics: Remote crowdsourced data gathered via cell phones used to connect farmers to markets, assess farmers’ creditworthiness, and incubate new mobile businesses with greater predictors of success
• Impact: M-PESA is being used to lower costs for farmers to receive loans and perform transactions with distributors and buyers, as well as to provide geography-specific market information

03 Creating a Big Data-Enabled Organization: Bringing Big Data home

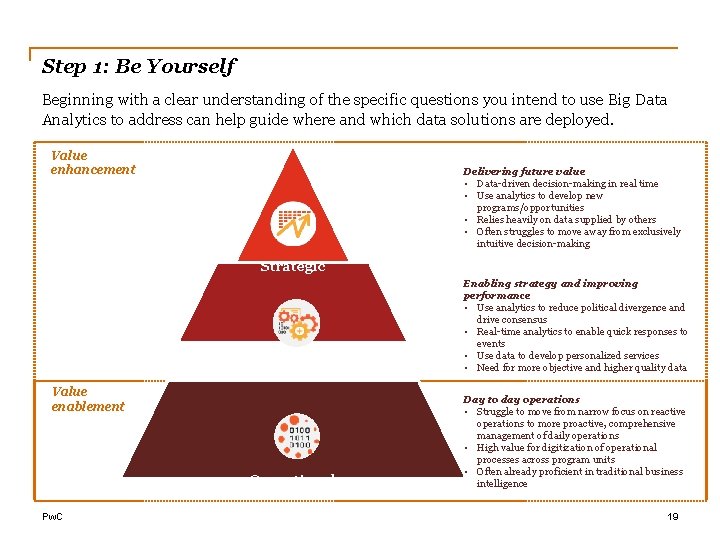

Step 1: Be Yourself
Beginning with a clear understanding of the specific questions you intend to use Big Data Analytics to address can help guide where and which data solutions are deployed.
The levels below run from value enhancement (top) to value enablement (bottom).
Strategic: Delivering future value
• Data-driven decision-making in real time
• Use analytics to develop new programs/opportunities
• Relies heavily on data supplied by others
• Often struggles to move away from exclusively intuitive decision-making
Tactical: Enabling strategy and improving performance
• Use analytics to reduce political divergence and drive consensus
• Real-time analytics to enable quick responses to events
• Use data to develop personalized services
• Need for more objective and higher quality data
Operational: Day-to-day operations
• Struggle to move from narrow focus on reactive operations to more proactive, comprehensive management of daily operations
• High value for digitization of operational processes across program units
• Often already proficient in traditional business intelligence

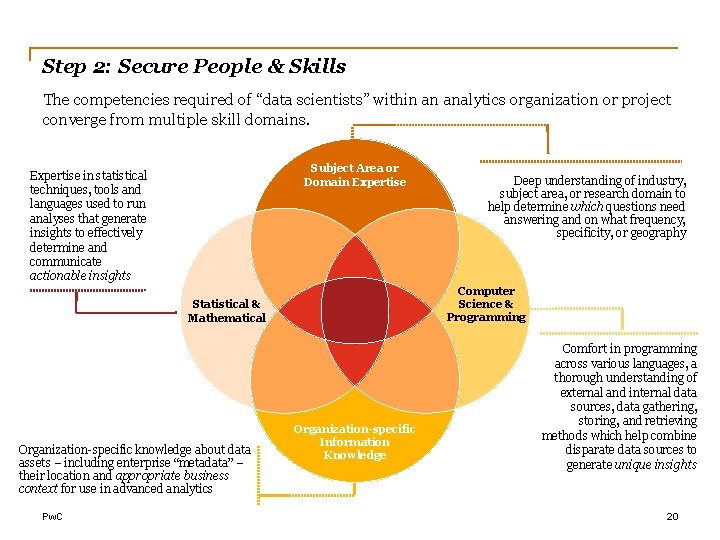

Step 2: Secure People & Skills
The competencies required of “data scientists” within an analytics organization or project converge from multiple skill domains.
• Subject Area or Domain: Deep understanding of industry, subject area, or research domain to help determine which questions need answering and on what frequency, specificity, or geography.
• Statistical & Mathematical: Expertise in statistical techniques, tools and languages used to run analyses that generate insights to effectively determine and communicate actionable insights.
• Computer Science & Programming: Comfort in programming across various languages, a thorough understanding of external and internal data sources, and of data gathering, storing, and retrieving methods which help combine disparate data sources to generate unique insights.
• Organization-specific Information Knowledge: Organization-specific knowledge about data assets, including enterprise “metadata”, their location and appropriate business context for use in advanced analytics.

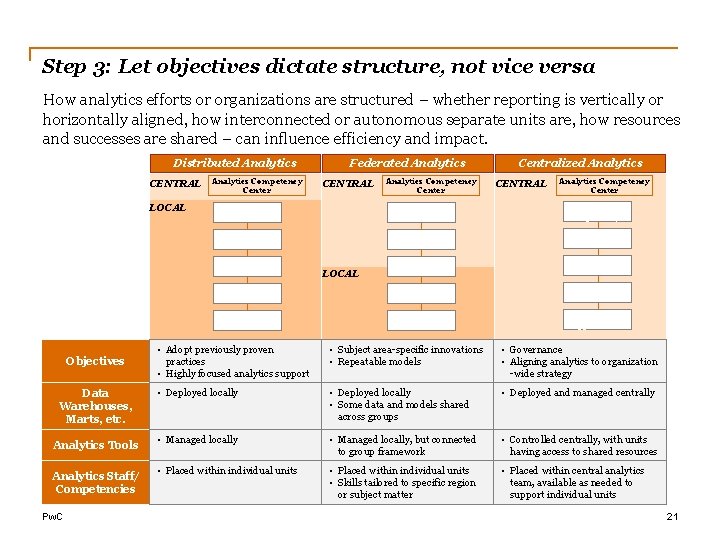

Step 3: Let objectives dictate structure, not vice versa
How analytics efforts or organizations are structured (whether reporting is vertically or horizontally aligned, how interconnected or autonomous separate units are, how resources and successes are shared) can influence efficiency and impact.
Models compared: Distributed Analytics, Federated Analytics, Centralized Analytics.
Diagram components: Analytics Competency Center, Metadata Repository, ETL, Data Warehouse, Data Mart, BI Applications, at CENTRAL and LOCAL levels.
Objectives
• Adopt previously proven practices
• Highly focused analytics support
• Subject area-specific innovations
• Repeatable models
• Governance
• Aligning analytics to organization-wide strategy
Data Warehouses, Marts, etc.
• Deployed locally
• Some data and models shared across groups
• Deployed and managed centrally
Analytics Tools
• Managed locally, but connected to group framework
• Controlled centrally, with units having access to shared resources
Analytics Staff/Competencies
• Placed within individual units
• Skills tailored to specific region or subject matter
• Placed within central analytics team, available as needed to support individual units

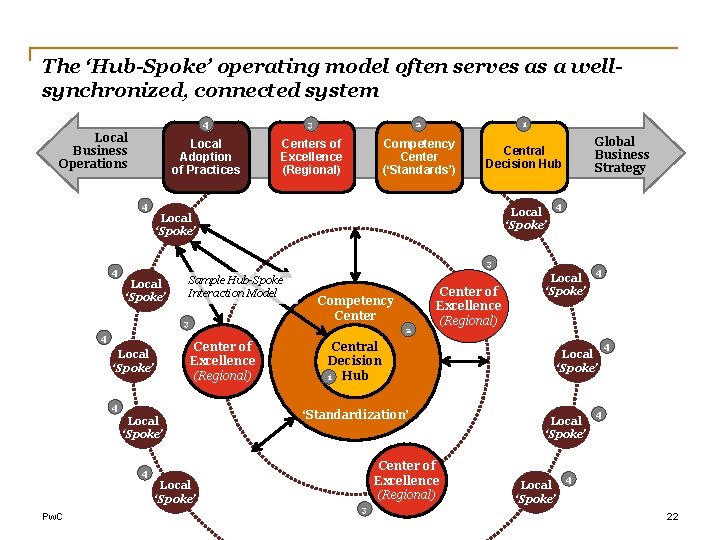

The ‘Hub-Spoke’ operating model often serves as a well-synchronized, connected system
[Figure: Sample Hub-Spoke Interaction Model, spanning Global Business Strategy to Local Business Operations]
1. Central Decision Hub (‘Standardization’)
2. Competency Center (‘Standards’)
3. Centers of Excellence (Regional)
4. Local ‘Spokes’ (Local Adoption of Practices)

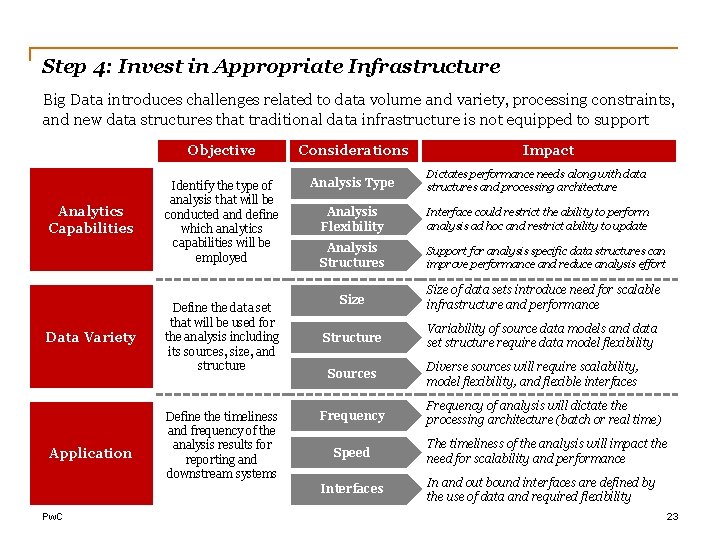

Step 4: Invest in Appropriate Infrastructure
Big Data introduces challenges related to data volume and variety, processing constraints, and new data structures that traditional data infrastructure is not equipped to support.
Analytics Capabilities
Objective: Identify the type of analysis that will be conducted and define which analytics capabilities will be employed.
Considerations and impact:
• Analysis Type: Dictates performance needs along with data structures and processing architecture
• Analysis Flexibility: Interface could restrict the ability to perform analysis ad hoc and restrict ability to update
• Analysis Structures: Support for analysis-specific data structures can improve performance and reduce analysis effort
Data Variety
Objective: Define the data set that will be used for the analysis, including its sources, size, and structure.
Considerations and impact:
• Size of data sets introduces the need for scalable infrastructure and performance
• Structure: Variability of source data models and data set structure requires data model flexibility
• Sources: Diverse sources will require scalability, model flexibility, and flexible interfaces
Application
Objective: Define the timeliness and frequency of the analysis results for reporting and downstream systems.
Considerations and impact:
• Frequency of analysis will dictate the processing architecture (batch or real time)
• Speed: The timeliness of the analysis will impact the need for scalability and performance
• Interfaces: Inbound and outbound interfaces are defined by the use of data and required flexibility

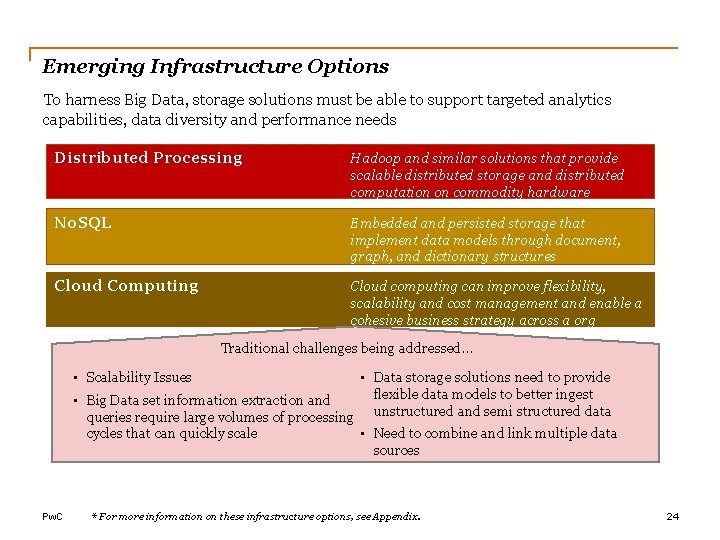

Emerging Infrastructure Options
To harness Big Data, storage solutions must be able to support targeted analytics capabilities, data diversity and performance needs.
• Distributed Processing: Hadoop and similar solutions that provide scalable distributed storage and distributed computation on commodity hardware
• NoSQL: Embedded and persisted storage that implements data models through document, graph, and dictionary structures
• Cloud Computing: Cloud computing can improve flexibility, scalability and cost management and enable a cohesive business strategy across an organization
Traditional challenges being addressed…
• Scalability issues
• Big Data set information extraction and queries require large volumes of processing cycles that can quickly scale
• Data storage solutions need to provide flexible data models to better ingest unstructured and semi-structured data
• Need to combine and link multiple data sources
* For more information on these infrastructure options, see Appendix.

Summary: Key Guiding Principles for developing a best-in-class analytics organization
Guiding Principles (Illustrative, May be Customized)
1. Establish the Analytics organization as an objective advisor for insight generation.
2. Ensure responsiveness to business needs by balancing ‘consolidation’ with ‘distribution’ of analytics functionality where it makes sense.
3. Innovate, invest in, and build new analytics capabilities, and gradually push them out to the business as user sophistication matures (e.g., data visualization).
4. Prioritize strategic business value delivery over tactical outputs.
5. Ensure adequate attention to user experience.
6. Focus on speed, accuracy, and reusability.
7. Optimize and manage work-flow to achieve maximum resource efficiency.
8. Allow distributed analytics where it makes sense, but tightly govern and ensure cataloguing.
9. Ensure a consistent feedback loop of all outputs that are created.

04 Case Study: ‘Nowcasting’ Economic Activity in Colombia

Situation
In Colombia, the leading economic indicators used to analyze economic activity have an average lag of 10 weeks. This presents challenges for the well-timed design of economic policy and monitoring of economic shocks or trends. The Colombian Ministry of Finance looked for coincident indicators that could allow tracking the short-term trends of economic activity.
Characteristics of Data Needed:
• Real-time
• Highly disaggregated by sector, geography, etc.
• Statistically correlated with key economic trends (consumption, GDP, etc.)
• Drawn from a sample robust enough to be representative of the economy as a whole

Group Discussion
What Big Data sources could the Colombian Ministry of Finance potentially use to reliably approximate sectorial economic activity in real-time?

Brainstorming Breakout
In groups of 3-4, take five to ten minutes to brainstorm how the Ministry could approach answering the following questions:
• What data should it consider using?
• Is this data the Ministry already has available, or will this require the Ministry to acquire an entirely new source of data?
• How does the cost of acquiring this data, whether by its own collection or through an external data partnership, compare to the expected benefits of using it?
• If this data is new to the Ministry, what entities may already have this data in their possession?
• How might the Ministry ensure its staff have the skills necessary to acquire, manage, and use this data? Is this data uniquely complex such that it may require more advanced or entirely new skillsets?
• What should the Ministry consider in the way of data storage and security? How extensively may it be required to overhaul data storage infrastructure to accommodate using this data?

Solution
Based on web searches performed by Google users, Google Trends (GT) provides daily information about the query volume for a given search term in a given geographic region. For Colombia, GT data are available at the departmental level and also for the largest municipalities.
The Colombian Administrative Department for National Statistics (DANE, for its acronym in Spanish) combined indexes built using GT data with its own official economic activity data (both at the aggregate level and at the sectorial level), both of which are publicly available, to construct leading indicators that determine, in real time, the short-term trend of different economic sectors, as well as their turning points.
In some sense, the GT data takes the place of traditional consumer-sentiment surveys. For example, using data for a certain keyword (such as the brand of a certain product) might be justified if a drop or surge in web searches for that keyword could be linked to a fall or increase in its demand and, therefore, to lower or higher production in the sector producing that product.
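One way to picture the idea of combining a search-based index with lagged official data is the minimal sketch below. It is not DANE's actual method: it simply fits a straight line between a search index and an official indicator over the periods where both exist, then applies that line to the latest search value, for which no official figure has been published yet. All numbers are synthetic, and `fit_line`, `search_index`, and `official_index` are hypothetical names chosen for illustration.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares fit of y = a + b * x; returns (a, b)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = my - b * mx
    return a, b


# Synthetic values, for illustration only (not real Google Trends or DANE data).
search_index = [40.0, 45.0, 50.0, 55.0, 60.0, 65.0]   # available up to the current period
official_index = [100.0, 102.0, 104.5, 106.0, 108.5]  # published with a lag: one period short

# Fit on the overlapping history, then estimate the not-yet-published period.
overlap = len(official_index)
a, b = fit_line(search_index[:overlap], official_index)
nowcast = a + b * search_index[-1]
print(f"Estimated official index for the current period: {nowcast:.1f}")
```

A real application would need many more observations, seasonal adjustment, and out-of-sample validation before such an estimate could be trusted.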

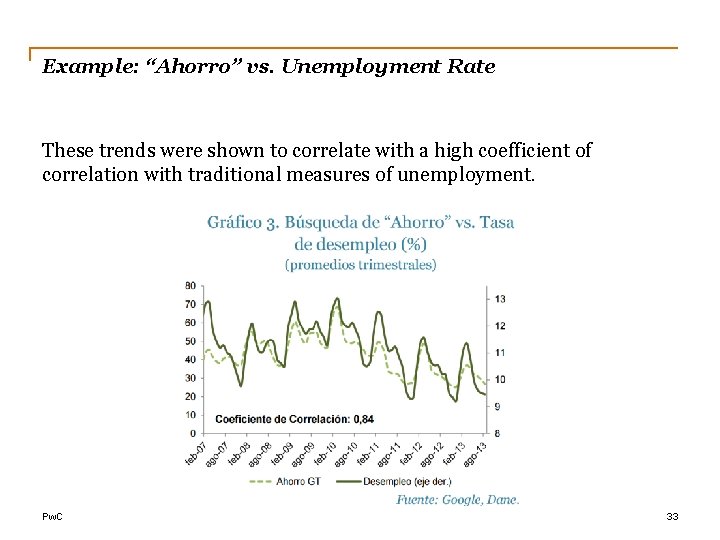

Example: “Ahorro” vs. Unemployment Rate
Ahorro: savings

Search trends for “ahorro” were shown to be highly correlated with traditional measures of unemployment.
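A correlation like this is commonly summarized with Pearson's r. The slide does not say which measure was used, so that choice is an assumption here. The sketch below computes r for two short series; the values are synthetic and the names `pearson`, `ahorro_searches`, and `unemployment_rate` are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    cov = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    var_x = sum((xi - mx) ** 2 for xi in x)
    var_y = sum((yi - my) ** 2 for yi in y)
    return cov / sqrt(var_x * var_y)


# Synthetic monthly values, for illustration only.
ahorro_searches = [52.0, 55.0, 61.0, 58.0, 66.0, 70.0]
unemployment_rate = [9.8, 10.1, 10.9, 10.4, 11.3, 11.9]

r = pearson(ahorro_searches, unemployment_rate)
print(f"Pearson r = {r:.3f}")
```

A value of r close to +1 or -1 indicates a strong linear relationship; it does not by itself show that one series causes the other.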

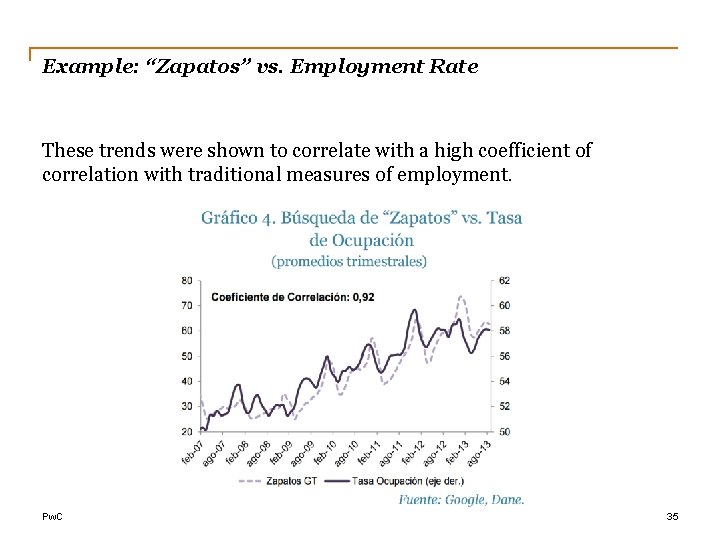

Example: “Zapatos” vs. Employment Rate
Zapatos: shoes

Search trends for “zapatos” were shown to be highly correlated with traditional measures of employment.

Find Out More
• Melanie Thomas Armstrong, Leading Partner, International Public Sector, +1 (202) 320-7098, Melanie.Thomas.Armstrong@us.pwc.com
• Jean Young, Managing Director, International Public Sector Data Analytics, +1 (703) 918-1001, Jean.M.Young@us.pwc.com
• Bill Stephens, Director, International Public Sector Data Analytics, +1 (703) 635-0800, William.L.Stephens.Jr@us.pwc.com
• Mariola Pogacnik, Director, United Nations & International Public Sector, +1 (646) 471-5467, Mariola.Pogacnik@us.pwc.com
• Ashraf Faramawi, Manager, International Public Sector Data Analytics, +1 (202) 271-5711, Ashraf.Faramawi@us.pwc.com
• Jared Nyarumba, Manager, Data Analytics, Africa, +254 710 623 426, Jared.Nyarumba@ke.pwc.com
This publication has been prepared for general guidance on matters of interest only, and does not constitute professional advice. You should not act upon the information contained in this publication without obtaining specific professional advice. No representation or warranty (express or implied) is given as to the accuracy or completeness of the information contained in this publication, and, to the extent permitted by law, PricewaterhouseCoopers LLP, its members, employees and agents do not accept or assume any liability, responsibility or duty of care for any consequences of you or anyone else acting, or refraining to act, in reliance on the information contained in this publication or for any decision based on it.
© 2017 PricewaterhouseCoopers LLP. All rights reserved. In this document, “PwC” refers to PricewaterhouseCoopers LLP which is a member firm of PricewaterhouseCoopers International Limited, each member firm of which is a separate legal entity.

Appendix

Emerging Data Storage and Infrastructure Options

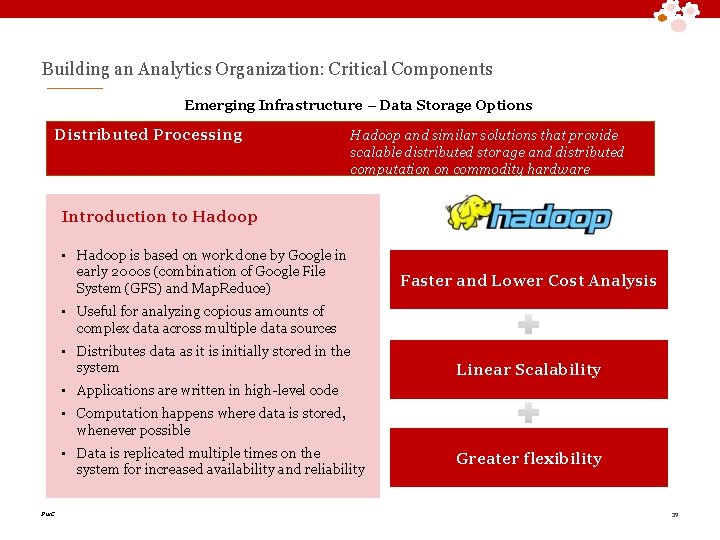

Building an Analytics Organization: Critical Components
Emerging Infrastructure: Data Storage Options
Distributed Processing: Hadoop and similar solutions that provide scalable distributed storage and distributed computation on commodity hardware
Introduction to Hadoop
• Hadoop is based on work done by Google in the early 2000s (a combination of Google File System (GFS) and MapReduce)
• Useful for analyzing copious amounts of complex data across multiple data sources
• Distributes data as it is initially stored in the system
• Applications are written in high-level code
• Computation happens where data is stored, whenever possible
• Data is replicated multiple times on the system for increased availability and reliability
Key benefits: Faster and Lower Cost Analysis; Linear Scalability; Greater Flexibility
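To illustrate the MapReduce programming model mentioned above, the sketch below runs a word count in a single Python process. It does not use Hadoop or any Hadoop API; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical, and a real cluster would run the map and reduce steps in parallel on the nodes that hold the data.

```python
from collections import defaultdict


def map_phase(document):
    """Map step: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Shuffle step: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce step: combine the values for one key into a single result."""
    return key, sum(values)


def word_count(documents):
    """Run map, shuffle, and reduce sequentially over a list of documents."""
    pairs = (pair for doc in documents for pair in map_phase(doc))
    grouped = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    docs = ["big data analytics", "Big Data in development"]
    print(word_count(docs))
```

Because each document is mapped independently and each key is reduced independently, both steps can be spread across many machines, which is what lets this model scale by adding nodes.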

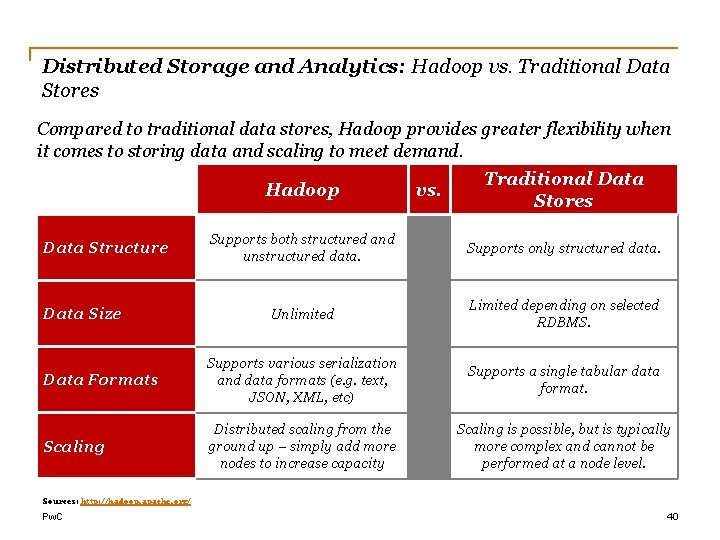

Distributed Storage and Analytics: Hadoop vs. Traditional Data Stores
Compared to traditional data stores, Hadoop provides greater flexibility when it comes to storing data and scaling to meet demand.
• Data Structure. Hadoop: Supports both structured and unstructured data. Traditional data stores: Support only structured data.
• Data Size. Hadoop: Unlimited. Traditional data stores: Limited depending on selected RDBMS.
• Data Formats. Hadoop: Supports various serialization and data formats (e.g. text, JSON, XML, etc.). Traditional data stores: Support a single tabular data format.
• Scaling. Hadoop: Distributed scaling from the ground up; simply add more nodes to increase capacity. Traditional data stores: Scaling is possible, but is typically more complex and cannot be performed at a node level.
Sources: http://hadoop.apache.org/

Building an Analytics Organization: Critical Components
Emerging Infrastructure: Data Storage Options
NoSQL: Embedded and persisted storage that implements data models through document, graph, and dictionary structures
NoSQL Storage Types (in order of increasing data complexity)
• Key-Value Store. Pros: Simplicity & Scalability. Cons: Lack of advanced features/queries.
• Columnar Store. Pros: Scalability & Flexibility. Cons: Complexity.
• Document Store. Pros: Easy to Use. Cons: Scalability.
• Graph Store. Pros: Graph Joins. Cons: Flexibility.
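The four storage types differ mainly in the shape of the data they hold. As a rough sketch, the snippet below mimics each shape with plain Python structures; it does not use any actual NoSQL product, and all names and values are made up for illustration.

```python
# Key-value store: opaque values looked up by a single key.
key_value = {"user:1": "Amina", "user:2": "Brian"}

# Columnar store: values of the same column are kept together,
# which makes column-wide aggregates cheap.
columnar = {"name": ["Amina", "Brian"], "age": [31, 45]}

# Document store: self-describing, possibly nested records.
documents = [
    {"_id": 1, "name": "Amina", "skills": ["statistics", "python"]},
    {"_id": 2, "name": "Brian", "skills": ["sql"]},
]

# Graph store: nodes and the relationships between them.
graph = {"Amina": {"Brian"}, "Brian": {"Amina", "Chen"}, "Chen": {"Brian"}}


def column_average(column):
    """Aggregate over one column without touching the others."""
    return sum(column) / len(column)


def find_by_skill(docs, skill):
    """Query documents on a nested field."""
    return [doc["name"] for doc in docs if skill in doc.get("skills", [])]


def friends_of_friends(network, person):
    """Two-hop traversal: people connected to a person's direct connections."""
    direct = network.get(person, set())
    second = set()
    for friend in direct:
        second |= network.get(friend, set())
    return second - direct - {person}


print(key_value["user:1"])
print(column_average(columnar["age"]))
print(find_by_skill(documents, "python"))
print(friends_of_friends(graph, "Amina"))
```

The trade-off visible even at this scale is that the key-value lookup is trivially simple but cannot answer the richer questions that the document and graph shapes support.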

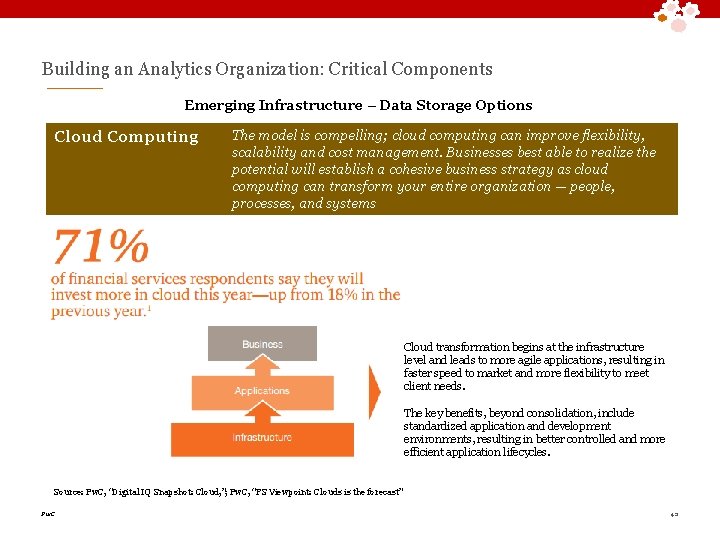

Building an Analytics Organization: Critical Components
Emerging Infrastructure: Data Storage Options
Cloud Computing
The model is compelling; cloud computing can improve flexibility, scalability and cost management. Businesses best able to realize the potential will establish a cohesive business strategy, as cloud computing can transform your entire organization: people, processes, and systems.
Cloud transformation begins at the infrastructure level and leads to more agile applications, resulting in faster speed to market and more flexibility to meet client needs. The key benefits, beyond consolidation, include standardized application and development environments, resulting in better controlled and more efficient application lifecycles.
Source: PwC, “Digital IQ Snapshot: Cloud”; PwC, “FS Viewpoint: Clouds is the forecast”

Text Mining and Natural Language Processing

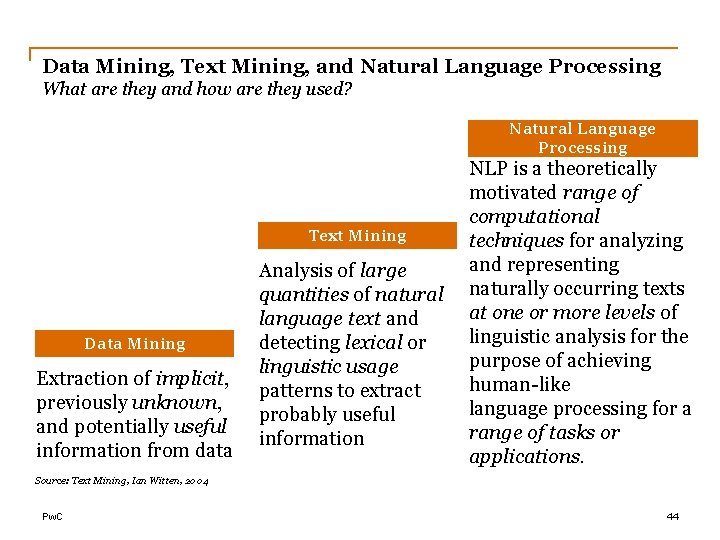

Data Mining, Text Mining, and Natural Language Processing
What are they and how are they used?

• Data Mining: extraction of implicit, previously unknown, and potentially useful information from data
• Text Mining: analysis of large quantities of natural language text and detection of lexical or linguistic usage patterns to extract probably useful information
• Natural Language Processing: NLP is a theoretically motivated range of computational techniques for analyzing and representing naturally occurring texts at one or more levels of linguistic analysis for the purpose of achieving human-like language processing for a range of tasks or applications

Source: Text Mining, Ian Witten, 2004

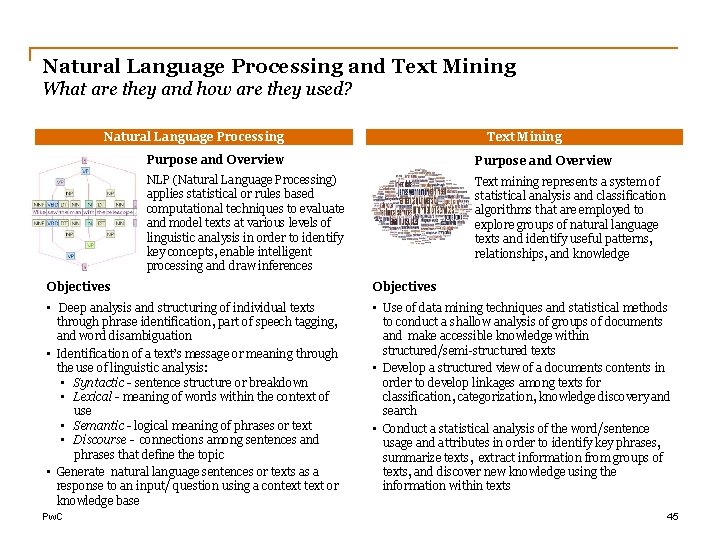

Natural Language Processing and Text Mining
What are they and how are they used?

Natural Language Processing

Purpose and Overview: NLP (Natural Language Processing) applies statistical or rules-based computational techniques to evaluate and model texts at various levels of linguistic analysis in order to identify key concepts, enable intelligent processing, and draw inferences.

Objectives:
• Deep analysis and structuring of individual texts through phrase identification, part-of-speech tagging, and word disambiguation
• Identification of a text’s message or meaning through the use of linguistic analysis:
  • Syntactic: sentence structure or breakdown
  • Lexical: meaning of words within the context of use
  • Semantic: logical meaning of phrases or text
  • Discourse: connections among sentences and phrases that define the topic
• Generation of natural language sentences or texts as a response to an input or question, using a context or knowledge base

Text Mining

Purpose and Overview: Text mining represents a system of statistical analysis and classification algorithms that are employed to explore groups of natural language texts and identify useful patterns, relationships, and knowledge.

Objectives:
• Use data mining techniques and statistical methods to conduct a shallow analysis of groups of documents and make the knowledge within structured/semi-structured texts accessible
• Develop a structured view of a document’s contents in order to develop linkages among texts for classification, categorization, knowledge discovery, and search
• Conduct a statistical analysis of word/sentence usage and attributes in order to identify key phrases, summarize texts, extract information from groups of texts, and discover new knowledge using the information within texts
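The contrast between structuring an individual text and statistically analyzing a group of texts can be sketched in a few lines. This is a rough illustration using only the Python standard library; the regular expressions, the stop-word list, and the function names are assumptions made for the example and are far simpler than what the toolkits on the following slides provide.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stop-word list.
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "was"}


def split_sentences(text):
    """Structure one text: split after sentence-ending punctuation."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(sentence):
    """Structure one sentence: lower-cased word tokens."""
    return re.findall(r"[a-z0-9']+", sentence.lower())


def term_counts(documents):
    """Shallow statistical view over a group of documents: term frequencies."""
    counts = Counter()
    for doc in documents:
        for sentence in split_sentences(doc):
            counts.update(t for t in tokenize(sentence) if t not in STOP_WORDS)
    return counts
```

`split_sentences` and `tokenize` correspond to the per-text structuring on the NLP side, while `term_counts` corresponds to the word-usage statistics on the text mining side; `term_counts(docs).most_common(5)` would surface candidate key terms.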

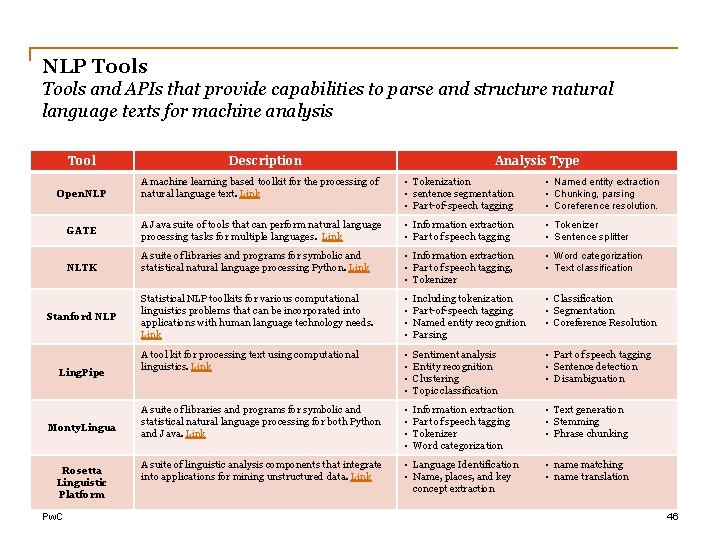

NLP Tools
APIs that provide capabilities to parse and structure natural language texts for machine analysis

| Tool | Description | Analysis Type |
| --- | --- | --- |
| OpenNLP | A machine learning based toolkit for the processing of natural language text. | Tokenization; sentence segmentation; part-of-speech tagging; named entity extraction; chunking; parsing; coreference resolution |
| GATE | A Java suite of tools that can perform natural language processing tasks for multiple languages. | Information extraction; part-of-speech tagging; tokenizer; sentence splitter |
| NLTK | A suite of libraries and programs for symbolic and statistical natural language processing in Python. | Information extraction; part-of-speech tagging; tokenizer; word categorization; text classification |
| Stanford NLP | Statistical NLP toolkits for various computational linguistics problems that can be incorporated into applications with human language technology needs. | Tokenization; part-of-speech tagging; named entity recognition; parsing; classification; segmentation; coreference resolution |
| LingPipe | A tool kit for processing text using computational linguistics. | Sentiment analysis; entity recognition; clustering; topic classification; part-of-speech tagging; sentence detection; disambiguation |
| MontyLingua | A suite of libraries and programs for symbolic and statistical natural language processing for both Python and Java. | Information extraction; part-of-speech tagging; tokenizer; word categorization; text generation; stemming; phrase chunking |
| Rosetta Linguistic Platform | A suite of linguistic analysis components that integrate into applications for mining unstructured data. | Language identification; name, place, and key concept extraction; name matching; name translation |

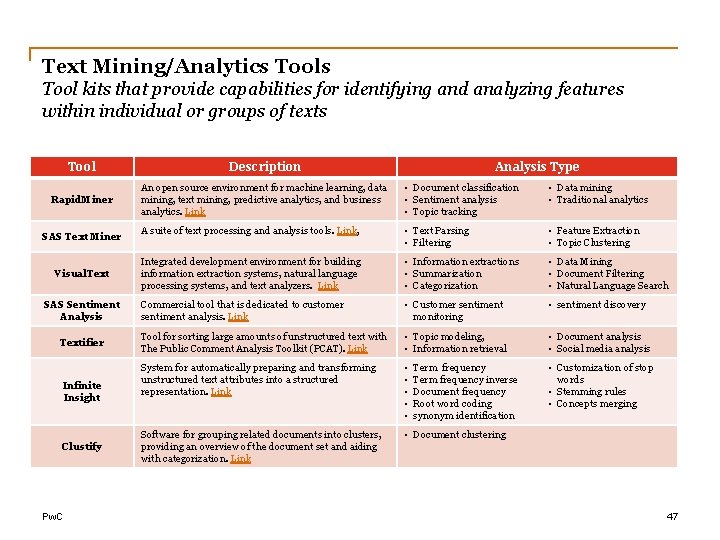

Text Mining/Analytics
Tool kits that provide capabilities for identifying and analyzing features within individual or groups of texts

| Tool | Description | Analysis Type |
| --- | --- | --- |
| RapidMiner | An open source environment for machine learning, data mining, text mining, predictive analytics, and business analytics. | Document classification; sentiment analysis; topic tracking; data mining; traditional analytics |
| SAS Text Miner | A suite of text processing and analysis tools. | Text parsing; filtering; feature extraction; topic clustering |
| VisualText | Integrated development environment for building information extraction systems, natural language processing systems, and text analyzers. | Information extraction; summarization; categorization; data mining; document filtering; natural language search |
| SAS Sentiment Analysis | Commercial tool that is dedicated to customer sentiment analysis. | Customer sentiment monitoring; sentiment discovery |
| Textifier | Tool for sorting large amounts of unstructured text with the Public Comment Analysis Toolkit (PCAT). | Topic modeling; information retrieval; document analysis; social media analysis |
| Infinite Insight | System for automatically preparing and transforming unstructured text attributes into a structured representation. | Term frequency-inverse document frequency; root word coding; synonym identification; customization of stop words; stemming rules; concepts merging |
| Clustify | Software for grouping related documents into clusters, providing an overview of the document set and aiding with categorization. | Document clustering |
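Term frequency-inverse document frequency, one of the analysis types listed above, can be sketched as follows. This is a minimal version of one common formulation (relative term frequency multiplied by the natural log of the inverse document frequency); commercial tools may weight or smooth the score differently, and the function name and sample tokens are hypothetical.

```python
import math
from collections import Counter


def tf_idf(tokenized_docs):
    """Return one {term: score} dict per document.

    tf  = count of the term in the document / number of tokens in the document
    idf = log(number of documents / number of documents containing the term)
    """
    n_docs = len(tokenized_docs)
    doc_freq = Counter()
    for tokens in tokenized_docs:
        doc_freq.update(set(tokens))  # count each term once per document

    scores = []
    for tokens in tokenized_docs:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores
```

A term that appears in every document scores zero, while a term concentrated in a single document scores highest, which is why the measure is useful for picking out the words that distinguish one text from the rest of a collection.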

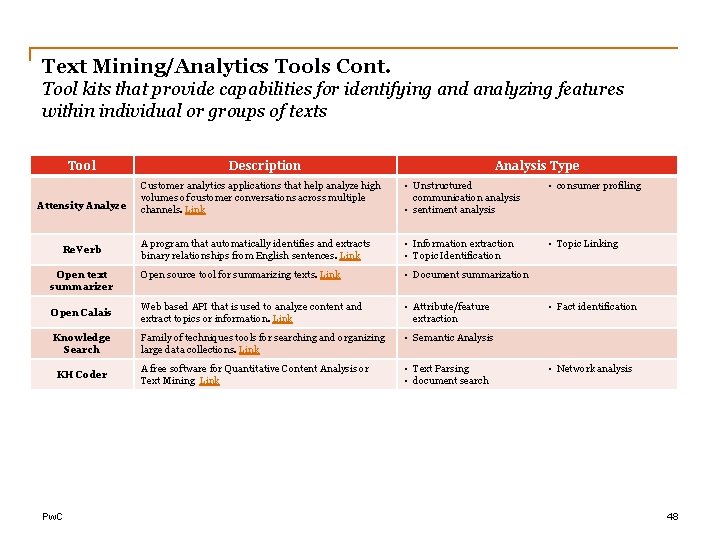

Text Mining/Analytics Tools Cont.
Tool kits that provide capabilities for identifying and analyzing features within individual or groups of texts

| Tool | Description | Analysis Type |
| --- | --- | --- |
| Attensity Analyze | Customer analytics applications that help analyze high volumes of customer conversations across multiple channels. | Unstructured communication analysis; sentiment analysis; consumer profiling |
| ReVerb | A program that automatically identifies and extracts binary relationships from English sentences. | Information extraction; topic identification; topic linking |
| Open Text Summarizer | Open source tool for summarizing texts. | Document summarization |
| Open Calais | Web-based API that is used to analyze content and extract topics or information. | Attribute/feature extraction |
| Knowledge Search | Family of techniques and tools for searching and organizing large data collections. | Semantic analysis |
| KH Coder | Free software for quantitative content analysis or text mining. | Text parsing; document search |

Further analysis types listed on the slide: fact identification; network analysis

Resources: Tutorials, Tools, Applications, and Research Groups

Link and Description: Text Mining Overview Text Mining Activities Text Mining Tutorials and Overviews Text Mining Process NLP Introduction NLP Overview NLP Concepts http://ai.cs.washington.edu/projects/open-information-extraction Research Groups and Papers http://www.nactem.ac.uk/index.php http://nlp.stanford.edu/ http://research.microsoft.com/en-us/groups/nlp/ NLP Toolkit List NLP Tools and Data Sets Text Mining Tools by Function Text Mining Tools

DeepQA, Image Analytics, and Audio Analytics

DeepQA Overview and Introduction

What is DeepQA?
• DeepQA forms the core of Watson, the open domain question analysis and answering system
• The DeepQA stack comprises a set of search, NLP, learning, and scoring algorithms
• DeepQA operates on a distributed computing infrastructure that leverages Map/Reduce and the Unstructured Information Management Architecture (UIMA)

What is the target problem set?
• Understanding the meaning and context of human language
• Searching and retrieving information from a large library of unstructured information
• Identifying accurate and precise answers to questions that are complex and must be sourced from a large knowledge set
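The Map/Reduce pattern mentioned above can be illustrated with a single-process word count. This is only a sketch of the programming model (map, shuffle, reduce); it is not Hadoop's API and says nothing about how DeepQA actually distributes its work. All function names are hypothetical.

```python
from collections import defaultdict
from itertools import chain


def map_phase(document):
    """Map: emit a (key, value) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values collected for one key."""
    return key, sum(values)


def word_count(documents):
    # In a real cluster each map_phase call could run on a different node,
    # because no call depends on any other document.
    mapped = chain.from_iterable(map_phase(d) for d in documents)
    return dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
```

Because each map call and each reduce call touches only its own input, the same logic scales out by adding nodes, which is the property the distributed infrastructure relies on.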

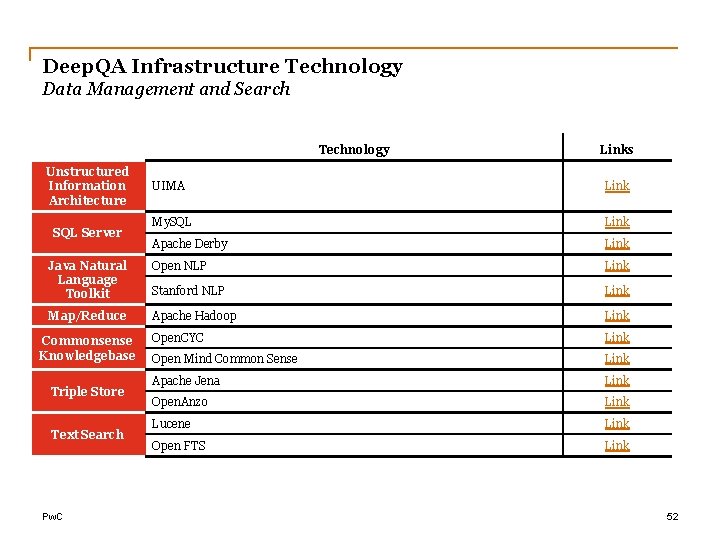

DeepQA Infrastructure Technology
Data Management and Search

| Technology | Links |
| --- | --- |
| Unstructured Information Architecture | UIMA |
| SQL Server | MySQL; Apache Derby |
| Java Natural Language Toolkit | OpenNLP; Stanford NLP |
| Map/Reduce | Apache Hadoop |
| Commonsense Knowledgebase | OpenCYC; Open Mind Common Sense |
| Triple Store | Apache Jena; OpenAnzo |
| Text Search | Lucene; Open FTS |

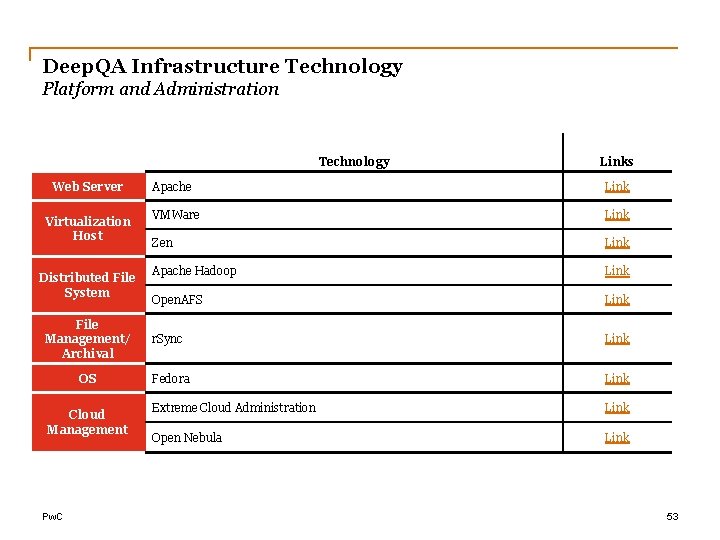

DeepQA Infrastructure Technology
Platform and Administration

| Technology | Links |
| --- | --- |
| Web Server | Apache |
| Virtualization Host | VMware; Xen |
| Distributed File System | Apache Hadoop; OpenAFS |
| File Management/Archival | rsync |
| OS | Fedora |
| Cloud Management | Extreme Cloud Administration; OpenNebula |

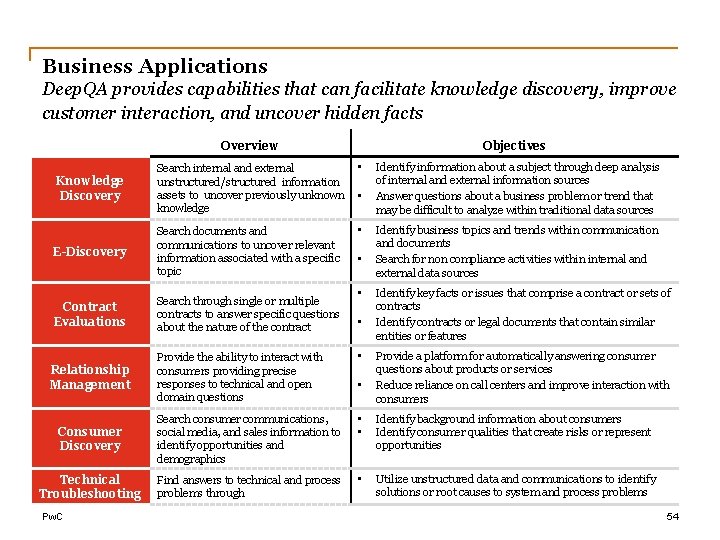

Business Applications
DeepQA provides capabilities that can facilitate knowledge discovery, improve customer interaction, and uncover hidden facts

| Application | Overview | Objectives |
| --- | --- | --- |
| Knowledge Discovery | Search internal and external unstructured/structured information assets to uncover previously unknown knowledge | Identify information about a subject through deep analysis of internal and external information sources; answer questions about a business problem or trend that may be difficult to analyze within traditional data sources |
| E-Discovery | Search documents and communications to uncover relevant information associated with a specific topic | Identify business topics and trends within communication and documents; search for non-compliance activities within internal and external data sources |
| Contract Evaluations | Search through single or multiple contracts to answer specific questions about the nature of the contract | Identify key facts or issues that comprise a contract or sets of contracts; identify contracts or legal documents that contain similar entities or features |
| Consumer Discovery | Search consumer communications, social media, and sales information to identify opportunities and demographics | Identify background information about consumers; identify consumer qualities that create risks or represent opportunities |
| Technical Troubleshooting | Find answers to technical and process problems through | Utilize unstructured data and communications to identify solutions or root causes to system and process problems |
| Relationship Management | Provide the ability to interact with consumers, providing precise responses to technical and open domain questions | Provide a platform for automatically answering consumer questions about products or services; reduce reliance on call centers and improve interaction with consumers |

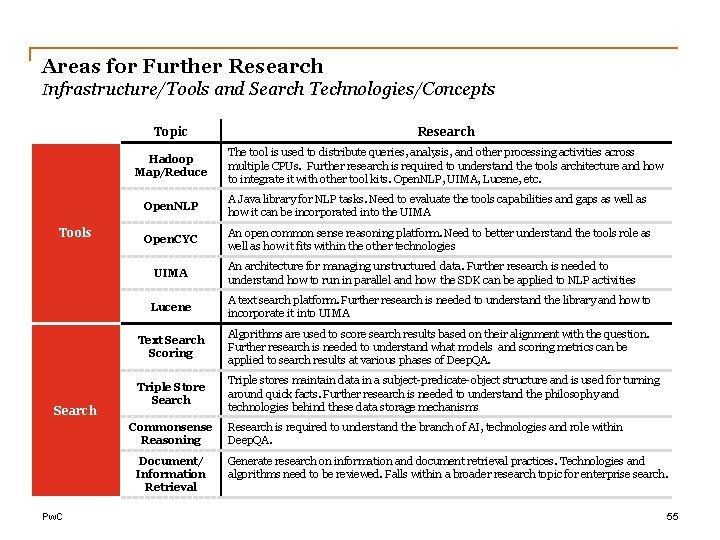

Areas for Further Research
Infrastructure/Tools and Search Technologies/Concepts

| Area | Topic | Research |
| --- | --- | --- |
| Tools | Hadoop Map/Reduce | The tool is used to distribute queries, analysis, and other processing activities across multiple CPUs. Further research is required to understand the tool's architecture and how to integrate it with other tool kits (OpenNLP, UIMA, Lucene, etc.). |
| Tools | OpenNLP | A Java library for NLP tasks. Need to evaluate the tool's capabilities and gaps as well as how it can be incorporated into UIMA. |
| Tools | OpenCYC | An open common sense reasoning platform. Need to better understand the tool's role as well as how it fits within the other technologies. |
| Tools | UIMA | An architecture for managing unstructured data. Further research is needed to understand how to run it in parallel and how the SDK can be applied to NLP activities. |
| Search | Lucene | A text search platform. Further research is needed to understand the library and how to incorporate it into UIMA. |
| Search | Text Search Scoring | Algorithms are used to score search results based on their alignment with the question. Further research is needed to understand what models and scoring metrics can be applied to search results at various phases of DeepQA. |
| Search | Triple Store Search | Triple stores maintain data in a subject-predicate-object structure and are used for turning around quick facts. Further research is needed to understand the philosophy and technologies behind these data storage mechanisms. |
| Search | Commonsense Reasoning | Research is required to understand the branch of AI, its technologies, and its role within DeepQA. |
| Search | Document/Information Retrieval | Generate research on information and document retrieval practices. Technologies and algorithms need to be reviewed. Falls within a broader research topic for enterprise search. |
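The subject-predicate-object structure of a triple store, and why it suits quick fact lookups, can be sketched with a small in-memory class. This is an illustrative toy, not the API of Apache Jena, OpenAnzo, or any other product; the class name, method names, and sample facts are hypothetical.

```python
class TripleStore:
    """Minimal in-memory store of (subject, predicate, object) facts."""

    def __init__(self):
        self._triples = set()

    def add(self, subject, predicate, obj):
        self._triples.add((subject, predicate, obj))

    def query(self, subject=None, predicate=None, obj=None):
        """Return all triples matching the pattern; None acts as a wildcard."""
        pattern = (subject, predicate, obj)
        return sorted(
            triple for triple in self._triples
            if all(p is None or p == v for p, v in zip(pattern, triple))
        )


# Example usage with made-up facts.
store = TripleStore()
store.add("Nairobi", "capital_of", "Kenya")
store.add("Bogota", "capital_of", "Colombia")
store.add("Kenya", "located_in", "Africa")
capitals = store.query(predicate="capital_of")
```

A question such as "What is the capital of Kenya?" reduces to the pattern `query(predicate="capital_of", obj="Kenya")`, which is the kind of fast fact retrieval the slide refers to. Production triple stores add indexing and a query language on top of the same idea.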

Areas for Further Research
Machine Learning and Natural Language Processing

| Area | Topic | Description |
| --- | --- | --- |
| Machine Learning | MetaLearners | Research the concept and how meta-learners are used to evaluate learning models and assign a confidence score based on the learning models that are used to rank search results |
| Machine Learning | Question Classification | Identify techniques and models that can be employed to analyze and classify questions |
| Machine Learning | Search Ranking Models | Research which models are available for ranking search results based on the various search and recall techniques that are employed for a question |
| NLP | Logical Form Analysis | Research how SNA is used to discover logical relationships within text and produce an understanding of the information within the text |
| NLP | Semantic Structure Analysis | Identify tools and algorithms that are employed to uncover semantic relationships within texts/phrases and how these relationships can be applied to extract relevant information for question analysis and search |
| NLP | Relationship Analysis | Research techniques and tools for uncovering temporal, geospatial, and spatial relationships within a knowledge set |
| NLP | Feature Extraction | Evaluate tools and algorithms that are used to extract features of entities from text and identify methods for structuring the data for search |
| NLP | Phrase Analysis | Identify algorithms and tools that can be applied to extract key phrases from text based on a search context |

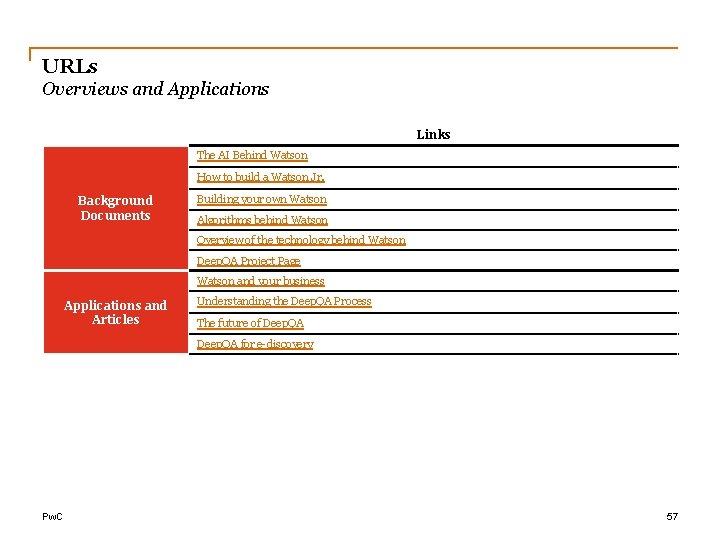

URLs: Overviews and Applications

Background Documents:
• The AI Behind Watson
• How to build a Watson Jr.
• Building your own Watson
• Algorithms behind Watson
• Overview of the technology behind Watson
• DeepQA Project Page

Applications and Articles:
• Watson and your business
• Understanding the DeepQA Process
• The future of DeepQA for e-discovery

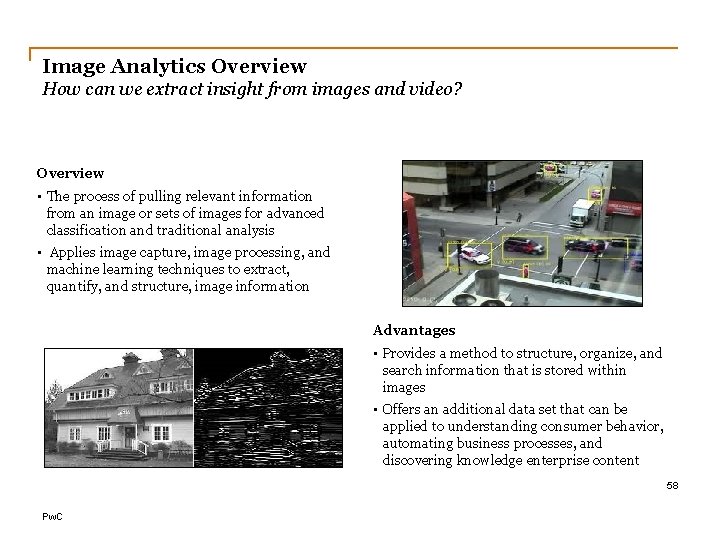

Image Analytics Overview
How can we extract insight from images and video?

Overview
• The process of pulling relevant information from an image or sets of images for advanced classification and traditional analysis
• Applies image capture, image processing, and machine learning techniques to extract, quantify, and structure image information

Advantages
• Provides a method to structure, organize, and search information that is stored within images
• Offers an additional data set that can be applied to understanding consumer behavior, automating business processes, and discovering knowledge in enterprise content
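Quantifying and structuring image information can be as simple as turning pixels into a number that traditional analytics can use. The sketch below assumes an image is already available as rows of (R, G, B) tuples and uses the common luma weights 0.299/0.587/0.114 for grayscale conversion; the function names and the cutoff value are hypothetical, and a real pipeline would rely on a library such as those on the tools slide.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) pixels to rows of 0-255 gray levels."""
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for r, g, b in row]
        for row in rgb_image
    ]


def threshold(gray_image, cutoff):
    """Binary mask: 1 where the pixel is at least as bright as the cutoff."""
    return [[1 if px >= cutoff else 0 for px in row] for row in gray_image]


def foreground_fraction(mask):
    """A single structured feature: share of pixels flagged in the mask."""
    pixels = [px for row in mask for px in row]
    return sum(pixels) / len(pixels)
```

The resulting fraction is an ordinary numeric column that can be joined to other data sets, which is the sense in which image analytics offers an additional data set for analysis.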

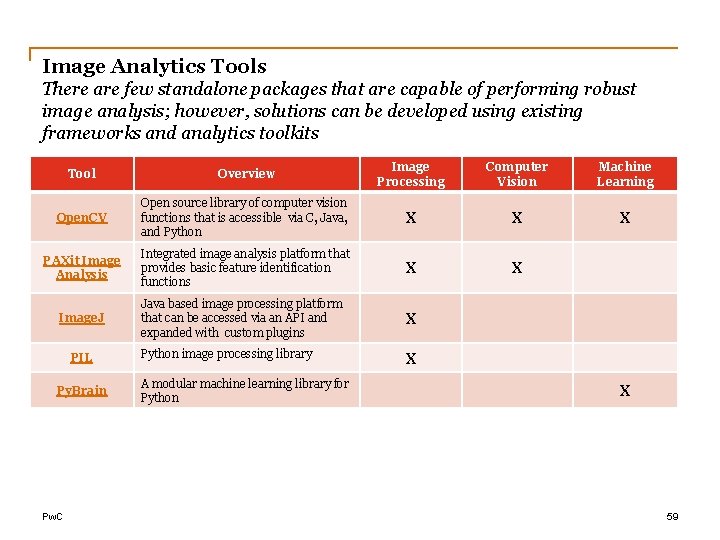

Image Analytics Tools
There are few standalone packages that are capable of performing robust image analysis; however, solutions can be developed using existing frameworks and analytics toolkits

| Tool | Overview | Image Processing | Computer Vision | Machine Learning |
| --- | --- | --- | --- | --- |
| OpenCV | Open source library of computer vision functions that is accessible via C, Java, and Python | X | X | X |
| PAXit Image Analysis | Integrated image analysis platform that provides basic feature identification functions | X | X | |
| ImageJ | Java-based image processing platform that can be accessed via an API and expanded with custom plugins | X | | |
| PIL | Python image processing library | X | | |
| PyBrain | A modular machine learning library for Python | | | X |

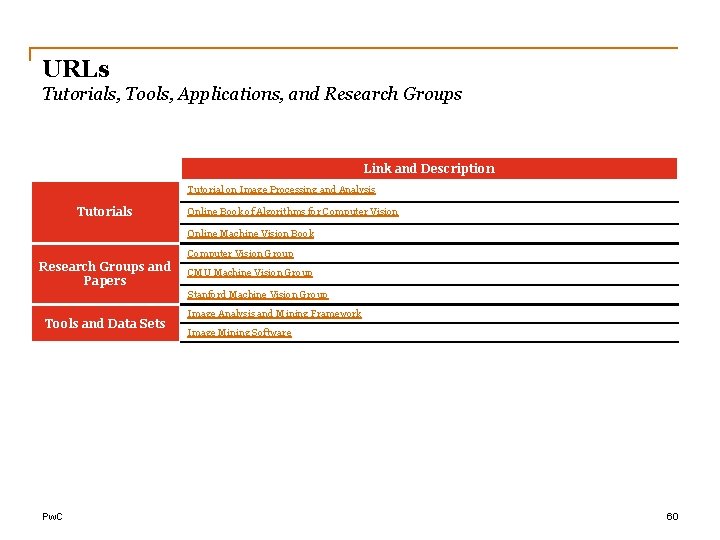

URLs: Tutorials, Tools, Applications, and Research Groups

Tutorials:
• Tutorial on Image Processing and Analysis
• Online Book of Algorithms for Computer Vision
• Online Machine Vision Book

Research Groups and Papers:
• Computer Vision Group
• CMU Machine Vision Group
• Stanford Machine Vision Group

Tools and Data Sets:
• Image Analysis and Mining Framework
• Image Mining Software

Audio Analytics Overview
How can we extract insight from audio and voice media?

Overview
• The process of capturing audio and analyzing its features to extract the content and context of an event
• Applies speech analysis and signal processing principles to structure audio information for analysis via NLP or traditional analytics techniques

Advantages
• Provides a method for identifying events or common patterns within sound bites
• Offers a way of capturing not only the content and topics within a conversation, but also the emotions and context

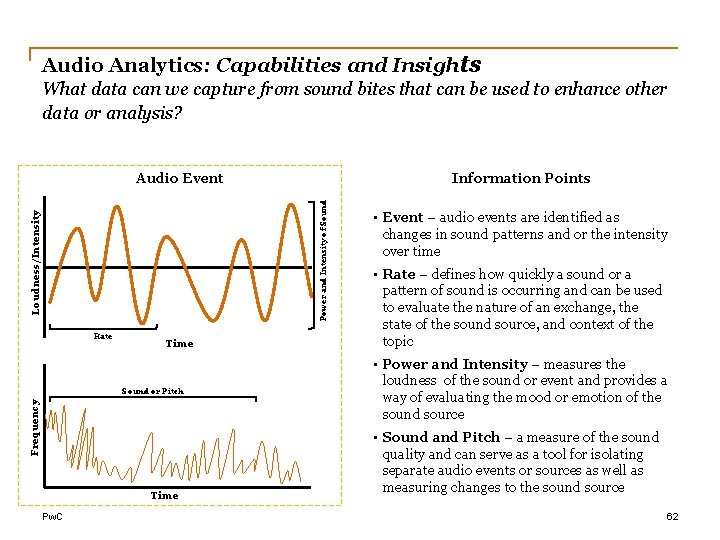

Audio Analytics: Capabilities and Insights
What data can we capture from sound bites that can be used to enhance other data or analysis?

[Figure: information points plotted as loudness/intensity over time and frequency over time, marking audio events, rate, power and intensity of sound, and sound or pitch]

• Event: audio events are identified as changes in sound patterns and/or intensity over time
• Rate: defines how quickly a sound or a pattern of sound is occurring and can be used to evaluate the nature of an exchange, the state of the sound source, and the context of the topic
• Power and Intensity: measures the loudness of the sound or event and provides a way of evaluating the mood or emotion of the sound source
• Sound and Pitch: a measure of the sound quality that can serve as a tool for isolating separate audio events or sources as well as measuring changes to the sound source
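Two of the information points above can be approximated with very simple signal measures. The sketch below uses root-mean-square amplitude as a stand-in for power and intensity, and zero-crossing rate as a crude proxy for pitch; both choices, the function names, and the synthetic sine-wave input are assumptions made for illustration rather than the method of any tool named in this deck.

```python
import math


def rms(samples):
    """Root-mean-square amplitude: a simple loudness/intensity measure."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def zero_crossing_rate(samples):
    """Share of adjacent sample pairs that change sign: rises with pitch."""
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    return crossings / (len(samples) - 1)


def frame_features(samples, frame_size):
    """Per-frame (loudness, pitch proxy); jumps between frames suggest events."""
    frames = [
        samples[i:i + frame_size]
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]
    return [(rms(f), zero_crossing_rate(f)) for f in frames]


def sine(freq_hz, sample_rate, n, amplitude=1.0):
    """Synthetic test tone so the example needs no audio file."""
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        for i in range(n)
    ]
```

Running `frame_features` over a quiet low tone followed by a loud high tone shows both measures jumping at the boundary, which is how a change in sound pattern or intensity would be flagged as an audio event.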

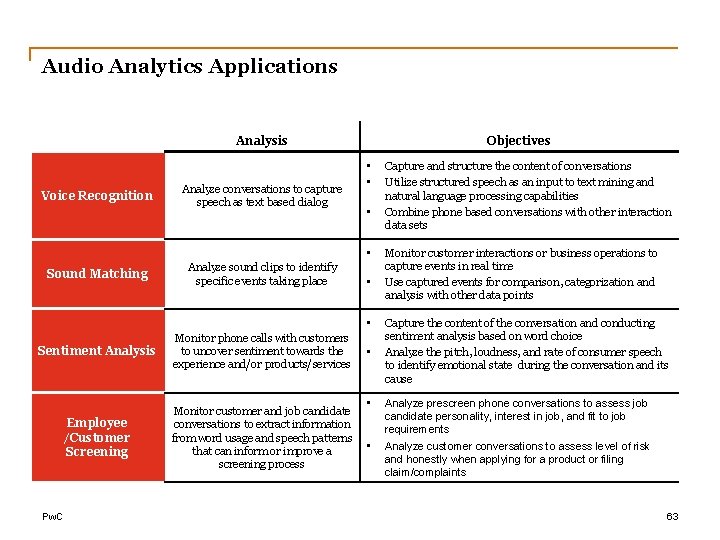

Audio Analytics Applications

| Analysis | Overview | Objectives |
| --- | --- | --- |
| Voice Recognition | Analyze conversations to capture speech as text-based dialog | Capture and structure the content of conversations; utilize structured speech as an input to text mining and natural language processing capabilities; combine phone-based conversations with other interaction data sets |
| Sound Matching | Analyze sound clips to identify specific events taking place | Monitor customer interactions or business operations to capture events in real time; use captured events for comparison, categorization, and analysis with other data points |
| Sentiment Analysis | Monitor phone calls with customers to uncover sentiment towards the experience and/or products/services | Capture the content of the conversation and conduct sentiment analysis based on word choice; analyze the pitch, loudness, and rate of consumer speech to identify emotional state during the conversation and its cause |
| Employee/Customer Screening | Monitor customer and job candidate conversations to extract information from word usage and speech patterns that can inform or improve a screening process | Analyze prescreen phone conversations to assess job candidate personality, interest in the job, and fit to job requirements; analyze customer conversations to assess level of risk and honesty when applying for a product or filing claims/complaints |

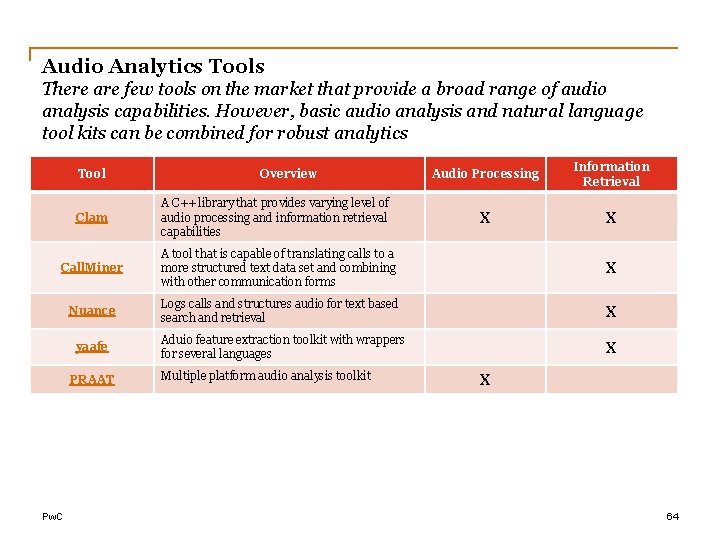

Audio Analytics Tools
There are few tools on the market that provide a broad range of audio analysis capabilities. However, basic audio analysis and natural language tool kits can be combined for robust analytics

| Tool | Overview | Audio Processing | Information Retrieval |
| --- | --- | --- | --- |
| Clam | A C++ library that provides varying levels of audio processing and information retrieval capabilities | X | X |
| CallMiner | A tool that is capable of translating calls to a more structured text data set and combining it with other communication forms | | X |
| Nuance | Logs calls and structures audio for text-based search and retrieval | | X |
| yaafe | Audio feature extraction toolkit with wrappers for several languages | X | |
| PRAAT | Multiple-platform audio analysis toolkit | X | |

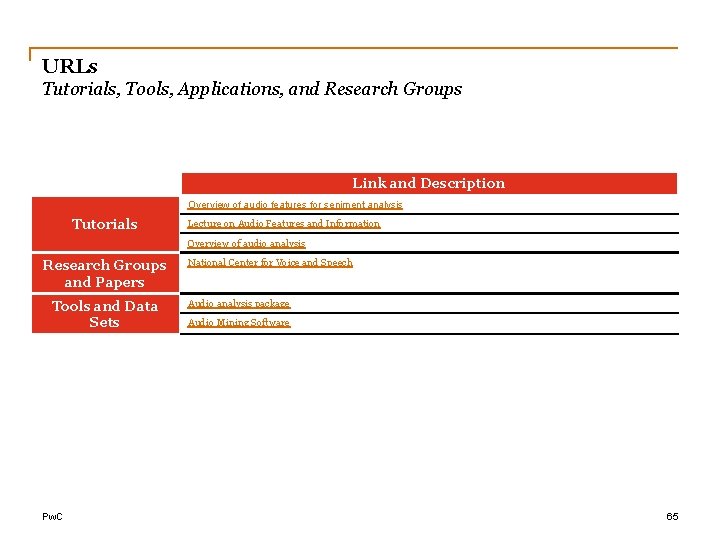

URLs: Tutorials, Tools, Applications, and Research Groups

Tutorials:
• Overview of audio features for sentiment analysis
• Lecture on Audio Features and Information
• Overview of audio analysis

Research Groups and Papers:
• National Center for Voice and Speech

Tools and Data Sets:
• Audio analysis package
• Audio Mining Software

Social Network Analysis

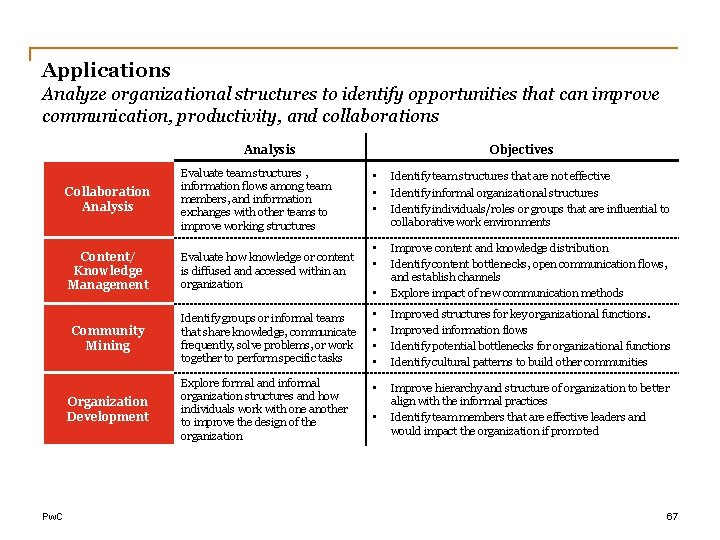

Applications
Analyze organizational structures to identify opportunities that can improve communication, productivity, and collaboration

| Analysis | Overview | Objectives |
| --- | --- | --- |
| Collaboration Analysis | Evaluate team structures, information flows among team members, and information exchanges with other teams to improve working structures | Identify team structures that are not effective; identify informal organizational structures; identify individuals/roles or groups that are influential to collaborative work environments |
| Content/Knowledge Management | Evaluate how knowledge or content is diffused and accessed within an organization | Improve content and knowledge distribution; identify content bottlenecks, open communication flows, and establish channels; explore impact of new communication methods |
| Community Mining | Identify groups or informal teams that share knowledge, communicate frequently, solve problems, or work together to perform specific tasks | Improve structures for key organizational functions and improve information flows; identify potential bottlenecks for organizational functions; identify cultural patterns to build other communities |
| Organization Development | Explore formal and informal organization structures and how individuals work with one another to improve the design of the organization | Improve hierarchy and structure of the organization to better align with informal practices; identify team members that are effective leaders and would impact the organization if promoted |

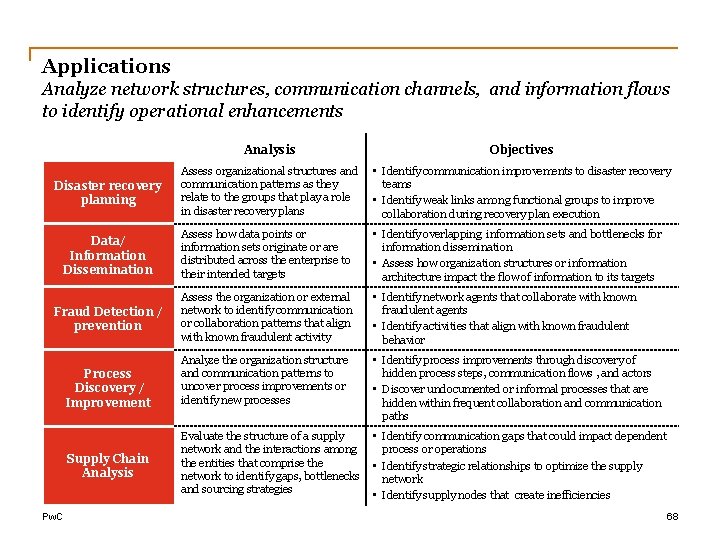

Applications
Analyze network structures, communication channels, and information flows to identify operational enhancements

| Analysis | Overview | Objectives |
| --- | --- | --- |
| Disaster Recovery Planning | Assess organizational structures and communication patterns as they relate to the groups that play a role in disaster recovery plans | Identify communication improvements to disaster recovery teams; identify weak links among functional groups to improve collaboration during recovery plan execution |
| Data/Information Dissemination | Assess how data points or information sets originate or are distributed across the enterprise to their intended targets | Identify overlapping information sets and bottlenecks for information dissemination; assess how organization structures or information architecture impact the flow of information to its targets |
| Fraud Detection/Prevention | Assess the organization or external network to identify communication or collaboration patterns that align with known fraudulent activity | Identify network agents that collaborate with known fraudulent agents; identify activities that align with known fraudulent behavior |
| Process Discovery/Improvement | Analyze the organization structure and communication patterns to uncover process improvements or identify new processes | Identify process improvements through discovery of hidden process steps, communication flows, and actors; discover undocumented or informal processes that are hidden within frequent collaboration and communication paths |
| Supply Chain Analysis | Evaluate the structure of a supply network and the interactions among the entities that comprise the network to identify gaps, bottlenecks, and sourcing strategies | Identify communication gaps that could impact dependent processes or operations; identify strategic relationships to optimize the supply network; identify supply nodes that create inefficiencies |

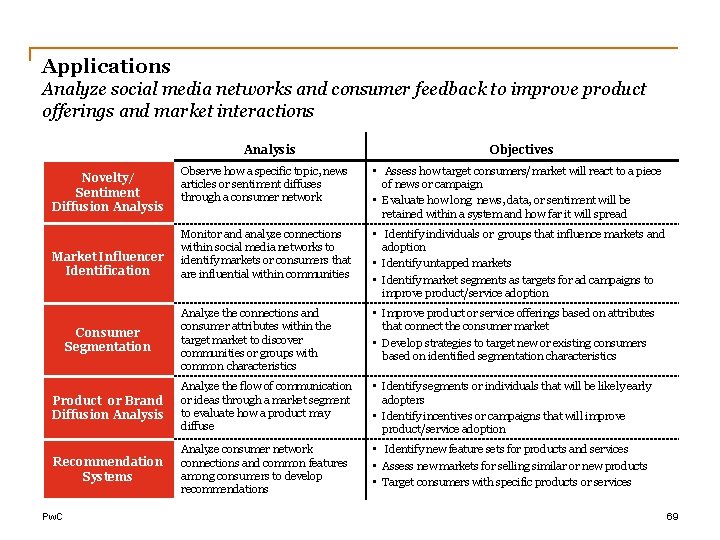

Applications
Analyze social media networks and consumer feedback to improve product offerings and market interactions

| Analysis | Overview | Objectives |
| --- | --- | --- |
| Novelty/Sentiment Diffusion Analysis | Observe how a specific topic, news article, or sentiment diffuses through a consumer network | Assess how target consumers/markets will react to a piece of news or a campaign; evaluate how long news, data, or sentiment will be retained within a system and how far it will spread |
| Market Influencer Identification | Monitor and analyze connections within social media networks to identify markets or consumers that are influential within communities | Identify individuals or groups that influence markets and adoption; identify untapped markets; identify market segments as targets for ad campaigns to improve product/service adoption |
| Consumer Segmentation | Analyze the connections and consumer attributes within the target market to discover communities or groups with common characteristics | Improve product or service offerings based on attributes that connect the consumer market; develop strategies to target new or existing consumers based on identified segmentation characteristics |
| Product or Brand Diffusion Analysis | Analyze the flow of communication or ideas through a market segment to evaluate how a product may diffuse | Identify segments or individuals that are likely to be early adopters; identify incentives or campaigns that will improve product/service adoption |
| Recommendation Systems | Analyze consumer network connections and common features among consumers to develop recommendations | Identify new feature sets for products and services; assess new markets for selling similar or new products; target consumers with specific products or services |
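Two of the analyses above, influencer identification and diffusion, map onto basic graph measures. The sketch below uses degree centrality as one simple proxy for influence and breadth-first search to count how many hops a message needs to reach each consumer; the measures chosen, the function names, and the sample network are assumptions for illustration, and it assumes an undirected network with at least two nodes.

```python
from collections import deque


def build_graph(edges):
    """Undirected adjacency sets from a list of (a, b) connections."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def degree_centrality(graph):
    """Share of the other nodes each node is directly connected to."""
    n = len(graph)
    return {node: len(nbrs) / (n - 1) for node, nbrs in graph.items()}


def hops_from(graph, source):
    """Breadth-first search: hops needed for news to reach each node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in graph[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist
```

The node with the highest centrality is a candidate market influencer, and the largest hop count from a seed consumer gives a rough sense of how far a piece of news has to travel. The libraries listed on the tools slides provide these and many richer measures.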

Tools
Social network analysis plug-ins and APIs for development/scripting languages and data analysis tools

| Tool | Overview |
| --- | --- |
| SNAP | A general purpose network analysis and graph mining library for C++. |
| Statnet | A package for R that provides capabilities for social network statistical analysis. |
| libSNA, graphTool, networkX | Python libraries for network analysis and manipulation. |
| JUNG | Java package for network analysis and modeling. |
| NodeXL | Excel plug-in that provides an easy-to-use and interactive interface to explore and visualize networks. |

Capability columns on the slide: Network Analysis, Network Visual, Network Manipulation. All five entries are marked for Network Analysis; three further marks fall under the other two columns.

Tools
Proprietary and open source social network analysis interactive application suites

| Tool | Overview | Network Analysis | Network Visual | Network Manipulation |
| --- | --- | --- | --- | --- |
| Gephi | Interactive open source platform for network analysis and visualization. | X | X | X |
| Ucinet | Commercial social network analysis tool with separate visualization component. | X | X | |
| Graphviz | Open source graph visualization package. | | X | |
| NetMiner | Proprietary package that provides the ability to develop and implement custom algorithms. | X | X | X |
| kxen SNA | Network analysis package that provides predictive analytics and customer MDM integration. | X | X | X |
| ProM | Open source package for mining business process networks. | X | X | X |
| Cytoscape | Open source tool for network modeling and analysis. Can connect to external data sources. | X | X | X |
| Network Workbench | Large-scale network analysis, modeling, and visualization toolkit for biomedical, social science, and physics research. | X | X | X |

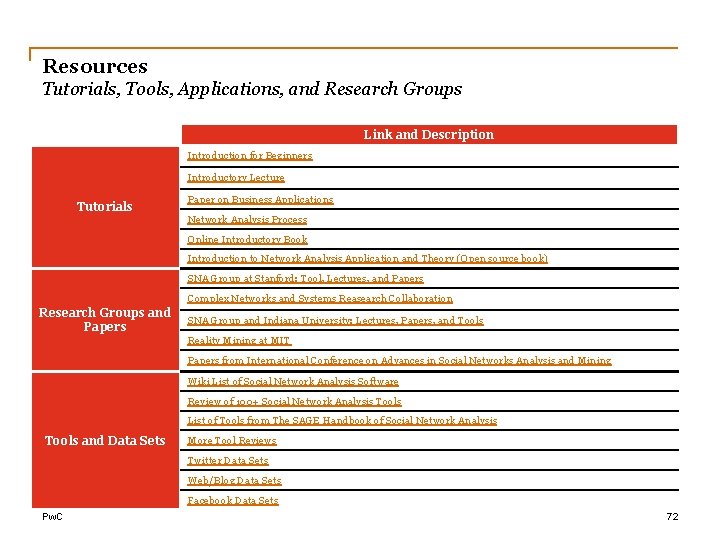

Resources: Tutorials, Tools, Applications, and Research Groups

Tutorials:
• Introduction for Beginners
• Introductory Lecture
• Paper on Business Applications
• Network Analysis Process
• Online Introductory Book
• Introduction to Network Analysis Application and Theory (open source book)

Research Groups and Papers:
• SNA Group at Stanford: Tools, Lectures, and Papers
• Complex Networks and Systems Research Collaboration
• SNA Group at Indiana University: Lectures, Papers, and Tools
• Reality Mining at MIT
• Papers from International Conference on Advances in Social Networks Analysis and Mining

Tools and Data Sets:
• Wiki List of Social Network Analysis Software
• Review of 100+ Social Network Analysis Tools
• List of Tools from The SAGE Handbook of Social Network Analysis
• More Tool Reviews
• Twitter Data Sets
• Web/Blog Data Sets
• Facebook Data Sets

Additional Case Studies

Example 1
Advanced natural language processing and deep question-answering technology are being applied to address clinical decision-making

Memorial Sloan-Kettering Cancer Center
• Memorial Sloan-Kettering Cancer Center is applying DeepQA technology (technology that relies on advanced analytics powered by IBM’s Watson) to develop a decision-support application for cancer treatment
• Doctors will be able to generate and evaluate hypotheses on evidence and treatment, and the Cancer Center will be able to better identify and personalize cancer therapies for individual patients

WellPoint and Cedars-Sinai
• WellPoint and the Cedars-Sinai Samuel Oschin Comprehensive Cancer Institute will work together to help improve patient care and support physicians in their efforts to make the most informed, personalized treatment decisions possible.
• It is estimated that new clinical research and medical information doubles every five years, and nowhere is this knowledge advancing more quickly than in the complex area of cancer care.
• The WellPoint health care solutions will use DeepQA technology to draw from vast libraries of information including medical evidence-based scientific and health care data, and clinical insights from institutions like Cedars-Sinai.

Source: Memorial Sloan-Kettering Cancer Institute Press Release, March 2012; WellPoint Press Release, December 2011

Example 2
Large volumes of real-time sensor data are empowering individuals to take more control of their health

Quantified Health: P4 Medicine (Predictive, Preventive, Personalized, Participatory)
• Non-invasive wearable sensors are creating a new ‘Quantified Health’ movement and one of the fastest growing sectors in the tech industry, let alone in the field of Big Data Analytics
• The number of connected industrial and medical devices is projected to reach 16 billion by 2015
• The mHealth market is estimated to reach a value of $23 billion by 2017

Source: Bruce Bigelow, Big Data, Big Biology, and the ‘Tipping Point’ in Quantified Health: Takeaways from Xconomy’s On-the-Record Dinner, Xconomy, April 26, 2012

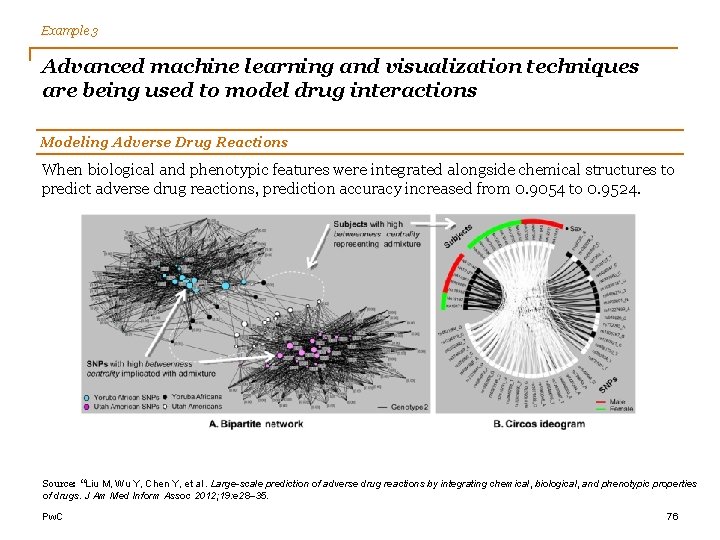

Example 3
Advanced machine learning and visualization techniques are being used to model drug interactions

Modeling Adverse Drug Reactions
When biological and phenotypic features were integrated alongside chemical structures to predict adverse drug reactions, prediction accuracy increased from 0.9054 to 0.9524.

Source: Liu M, Wu Y, Chen Y, et al. Large-scale prediction of adverse drug reactions by integrating chemical, biological, and phenotypic properties of drugs. J Am Med Inform Assoc 2012; 19: e28–35.

Other Examples
Companies in other sectors are also pursuing various applications of ‘Big Data’ and ‘Smart Analytics’.

Allianz
• Allianz is ‘mashing’ satellite data, third-party street-level data, images, and other internal data to better understand risk concentrations and manage concentration risk in commercial property insurance
• Data layers shown on the slide: location, satellite data, map data, property-specific data

Hartford Steam Boiler
• Hartford Steam Boiler is using sensors and real-time sensor data to monitor assets, reduce losses, and manage risks better
• Hartford Steam Boiler has been able to manage concentration risks and reduce losses, having one of the lowest combined ratios for a commercial insurer

Procter & Gamble
• Procter & Gamble is investing in analytics talent for quicker decision making, with the CIO planning to increase fourfold the number of staff with expertise in business analytics
• Executives are using big data to uncover what is currently going on in their business, to understand why, to predict future performance, and to understand what actions P&G should take

Source: “Procter & Gamble – Business Sphere and Decision Cockpits”, Ravi Kalakota, Practical Analytics Wordpress, Feb. 2012; mskcc.org/cancercare; eWeek.com, Healthcare IT News, IBM Watson to Aid Sloan-Kettering With Cancer Research, March 2012

Big Data Analytics Technology & Vendor Mappings

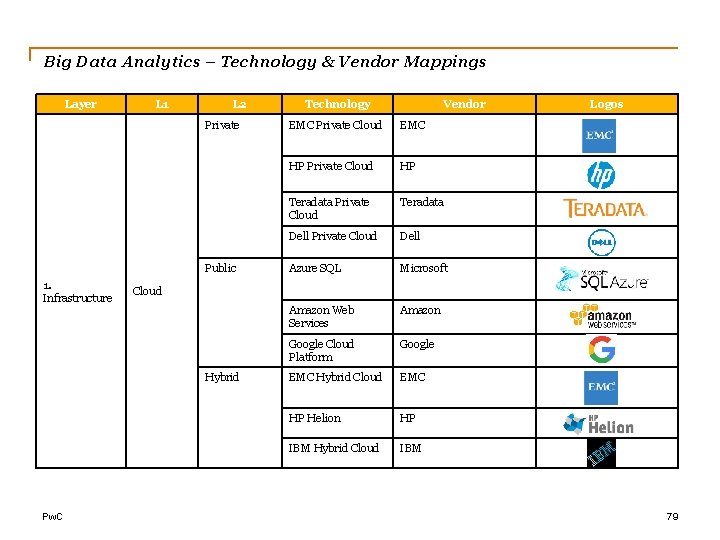

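Big Data Analytics – Technology & Vendor Mappings

| Layer | L1 | L2 | Technology | Vendor |
| --- | --- | --- | --- | --- |
| 1. Infrastructure | Cloud | Private | EMC Private Cloud | EMC |
| 1. Infrastructure | Cloud | Private | HP Private Cloud | HP |
| 1. Infrastructure | Cloud | Private | Teradata Private Cloud | Teradata |
| 1. Infrastructure | Cloud | Private | Dell Private Cloud | Dell |
| 1. Infrastructure | Cloud | Public | Azure SQL | Microsoft |
| 1. Infrastructure | Cloud | Public | Amazon Web Services | Amazon |
| 1. Infrastructure | Cloud | Public | Google Cloud Platform | Google |
| 1. Infrastructure | Cloud | Hybrid | EMC Hybrid Cloud | EMC |
| 1. Infrastructure | Cloud | Hybrid | HP Helion | HP |
| 1. Infrastructure | Cloud | Hybrid | IBM Hybrid Cloud | IBM |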
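A layer-to-vendor mapping like the infrastructure slide is easier to query when held as plain records. The sketch below is one possible representation, assuming the Private/Public/Hybrid grouping implied by the product names on the slide; the helper names `technologies_by_category` and `vendors_in_category` are illustrative only.

```python
from collections import defaultdict

# (L2 category, technology, vendor) rows transcribed from the infrastructure slide.
# The category assignment is an assumption based on the product names.
INFRASTRUCTURE_ROWS = [
    ("Private", "EMC Private Cloud", "EMC"),
    ("Private", "HP Private Cloud", "HP"),
    ("Private", "Teradata Private Cloud", "Teradata"),
    ("Private", "Dell Private Cloud", "Dell"),
    ("Public", "Azure SQL", "Microsoft"),
    ("Public", "Amazon Web Services", "Amazon"),
    ("Public", "Google Cloud Platform", "Google"),
    ("Hybrid", "EMC Hybrid Cloud", "EMC"),
    ("Hybrid", "HP Helion", "HP"),
    ("Hybrid", "IBM Hybrid Cloud", "IBM"),
]


def technologies_by_category(rows):
    """Group technology names by L2 category, preserving slide order."""
    grouped = defaultdict(list)
    for category, technology, _vendor in rows:
        grouped[category].append(technology)
    return dict(grouped)


def vendors_in_category(rows, category):
    """Return the distinct vendors listed under one L2 category, in slide order."""
    vendors = []
    for row_category, _technology, vendor in rows:
        if row_category == category and vendor not in vendors:
            vendors.append(vendor)
    return vendors


print(technologies_by_category(INFRASTRUCTURE_ROWS)["Public"])
print(vendors_in_category(INFRASTRUCTURE_ROWS, "Hybrid"))
```

The same record shape (category, technology, vendor) would extend to the other layers in this mapping, with an extra field for the layer name.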

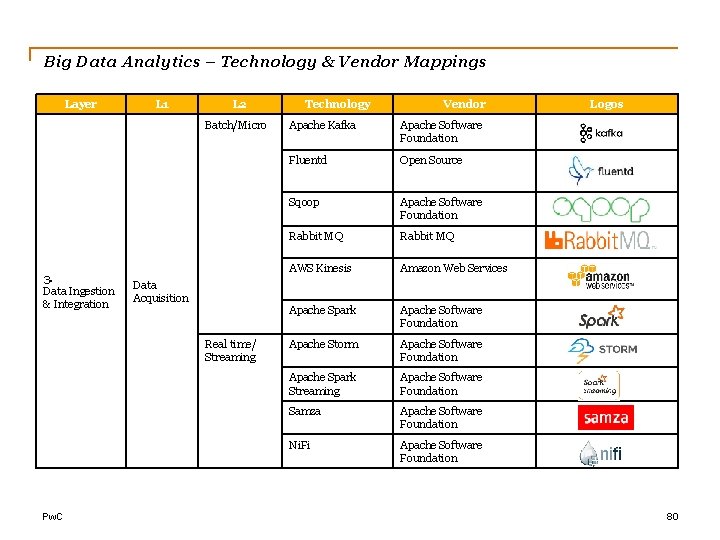

Big Data Analytics – Technology & Vendor Mappings

Layer: 3. Data Ingestion & Integration; L1: Data Acquisition; L2: Batch/Micro, Real time/Streaming

| Technology | Vendor |
| --- | --- |
| Apache Kafka | Apache Software Foundation |
| Fluentd | Open Source |
| Sqoop | Apache Software Foundation |
| RabbitMQ | |
| AWS Kinesis | Amazon Web Services |
| Apache Spark | Apache Software Foundation |
| Apache Storm | Apache Software Foundation |
| Apache Spark Streaming | Apache Software Foundation |
| Samza | Apache Software Foundation |
| NiFi | Apache Software Foundation |

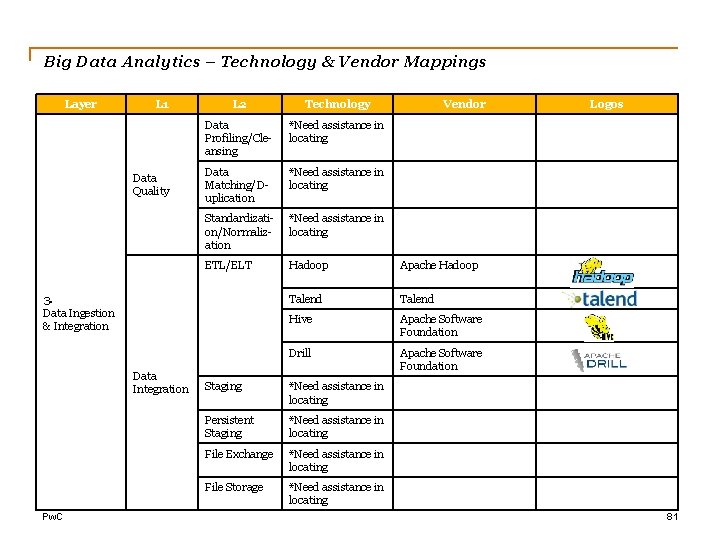

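Big Data Analytics – Technology & Vendor Mappings

| Layer | L1 | L2 | Technology | Vendor |
| --- | --- | --- | --- | --- |
| 3. Data Ingestion & Integration | Data Quality | Data Profiling/Cleansing | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Quality | Data Matching/Duplication | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Quality | Standardization/Normalization | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Integration | ETL/ELT | Hadoop | Apache Hadoop |
| 3. Data Ingestion & Integration | Data Integration | ETL/ELT | Talend | |
| 3. Data Ingestion & Integration | Data Integration | ETL/ELT | Hive | Apache Software Foundation |
| 3. Data Ingestion & Integration | Data Integration | ETL/ELT | Drill | Apache Software Foundation |
| 3. Data Ingestion & Integration | Data Integration | Staging | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Integration | Persistent Staging | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Integration | File Exchange | *Need assistance in locating | |
| 3. Data Ingestion & Integration | Data Integration | File Storage | *Need assistance in locating | |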

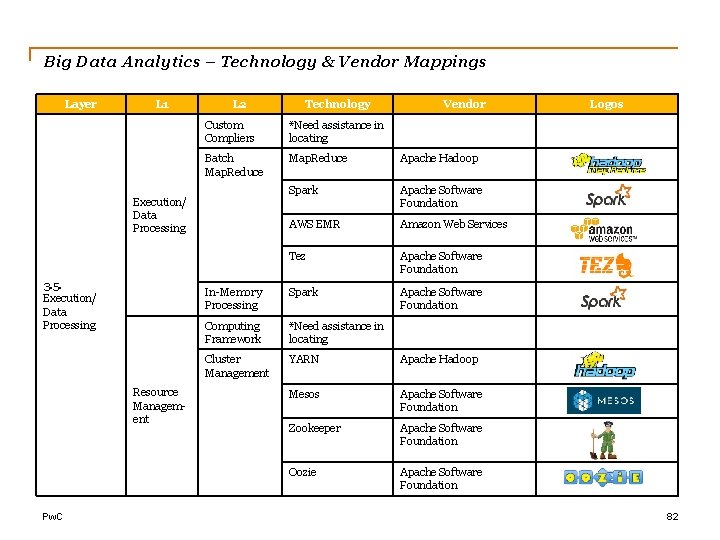

Big Data Analytics – Technology & Vendor Mappings

Layer: 3. 5. Execution/Data Processing; L1: Execution/Data Processing, Resource Management

| L2 | Technology | Vendor |
| --- | --- | --- |
| Custom Compilers | *Need assistance in locating | |
| Batch | MapReduce | Apache Hadoop |
| Batch | Spark | Apache Software Foundation |
| Batch | AWS EMR | Amazon Web Services |
| Batch | Tez | Apache Software Foundation |
| In-Memory Processing | Spark | Apache Software Foundation |
| Computing Framework | *Need assistance in locating | |
| Cluster Management | YARN | Apache Hadoop |
| Cluster Management | Mesos | Apache Software Foundation |
| Cluster Management | Zookeeper | Apache Software Foundation |
| Cluster Management | Oozie | Apache Software Foundation |

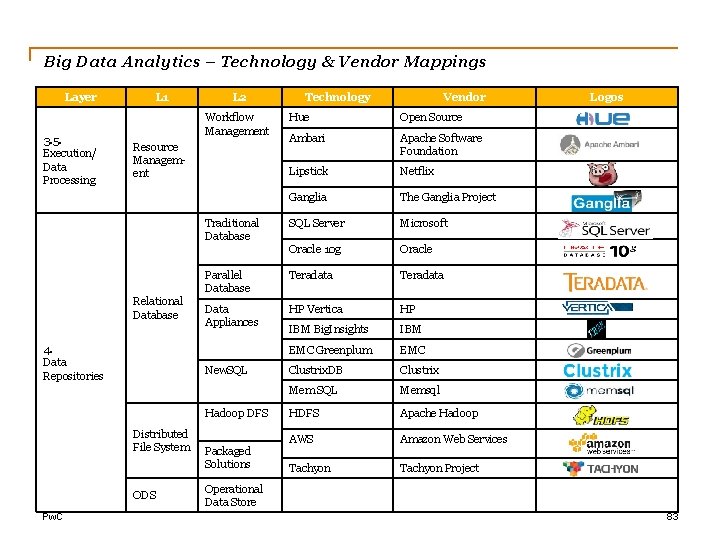

Big Data Analytics – Technology & Vendor Mappings

Layers: 3. 5. Execution/Data Processing; 4. Data Repositories
L1: Resource Management; Relational Database; Distributed File System; ODS (Operational Data Store)
L2: Workflow Management; Traditional Database; NewSQL; Hadoop DFS; Packaged Solutions

| Technology | Vendor |
| --- | --- |
| Hue | Open Source |
| Ambari | Apache Software Foundation |
| Lipstick | Netflix |
| Ganglia | The Ganglia Project |
| SQL Server | Microsoft |
| Oracle 10g | Oracle |
| Parallel Database | Teradata |
| Data Appliances | |
| HP Vertica | HP |
| IBM BigInsights | IBM |
| EMC Greenplum | EMC |
| ClustrixDB | Clustrix |
| MemSQL | MemSQL |
| HDFS | Apache Hadoop |
| AWS | Amazon Web Services |
| Tachyon Project | |

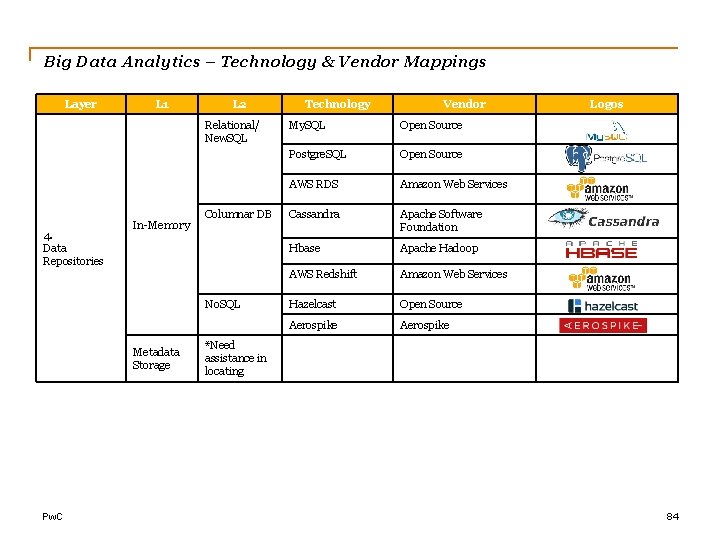

Big Data Analytics – Technology & Vendor Mappings

Layer: 4. Data Repositories; categories (L1/L2): Relational/NewSQL, In-Memory, Columnar DB, NoSQL, Metadata Storage

| Technology | Vendor |
| --- | --- |
| MySQL | Open Source |
| PostgreSQL | Open Source |
| AWS RDS | Amazon Web Services |
| Cassandra | Apache Software Foundation |
| HBase | Apache Hadoop |
| AWS Redshift | Amazon Web Services |
| Hazelcast | Open Source |
| Aerospike | *Need assistance in locating |

Big Data Analytics – Technology & Vendor Mappings

Layer: 4. Data Repositories; L1: NoSQL

| L2 | Technology | Vendor |
| --- | --- | --- |
| Key Value | Redis | Open Source |
| Key Value | Riak | Basho |
| Key Value | AWS DynamoDB | Amazon Web Services |
| Column Store | Cassandra | Apache Software Foundation |
| Column Store | HBase | Apache Hadoop |
| Column Store | AWS Redshift | Amazon Web Services |
| Graph Database | Neo4j | Neo Technology |
| Graph Database | OrientDB | Orient Technologies |
| Graph Database | ArangoDB | Open Source |

Document Database entries (technology and vendor not separated in the source): MongoDB, Inc.; Elastic; Couchbase

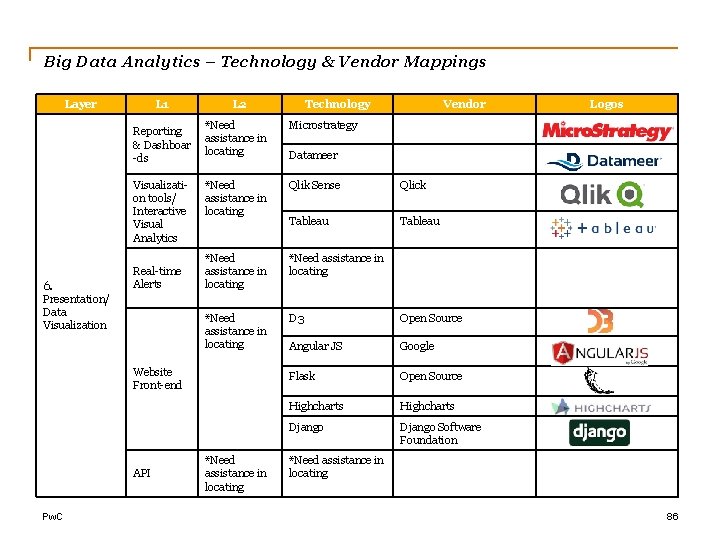

Big Data Analytics – Technology & Vendor Mappings

Layer: 6. Presentation/Data Visualization
L1: Reporting & Dashboards; Visualization tools/Interactive Visual Analytics; Real-time Alerts
L2: Website Front-end; API

| Technology | Vendor |
| --- | --- |
| Microstrategy | *Need assistance in locating |
| Qlik Sense | Qlik |
| Tableau | *Need assistance in locating |
| D3 | Open Source |
| AngularJS | Google |
| Flask | Open Source |
| Highcharts | |
| | Django Software Foundation |
| Datameer | *Need assistance in locating |

Remaining entries on this slide are marked *Need assistance in locating.
