Bag of Tricks for Image Classification with Convolutional Neural Networks

Tong He, Zhi Zhang, Hang Zhang, Zhongyue Zhang, Junyuan Xie, Mu Li (Amazon Web Services)

Motivation
• Training procedure refinements are often mentioned only briefly, or are visible only in source code, yet they affect the final model accuracy
• Examine a collection of training procedure and model architecture refinements that barely change computational complexity

1. Outline
• Set up a baseline training procedure
• Tricks useful for efficient training
• Model architecture tweaks for ResNet
• 4 training procedure refinements
• Experiments for transfer learning

![2. Baseline Training Procedure](http://slidetodoc.com/presentation_image_h/2e51520ad02752989986bf5654567473/image-4.jpg)

2. Baseline Training Procedure
• Follow a widely used implementation [1] of ResNet
• Data augmentation, weight initialization, optimization
• E.g. Xavier algorithm for initialization, Nesterov Accelerated Gradient (NAG) descent, batch size of 256, initial lr = 0.1
• [1] S. Gross and M. Wilber. Training and investigating residual nets.

3.1 Efficient Training

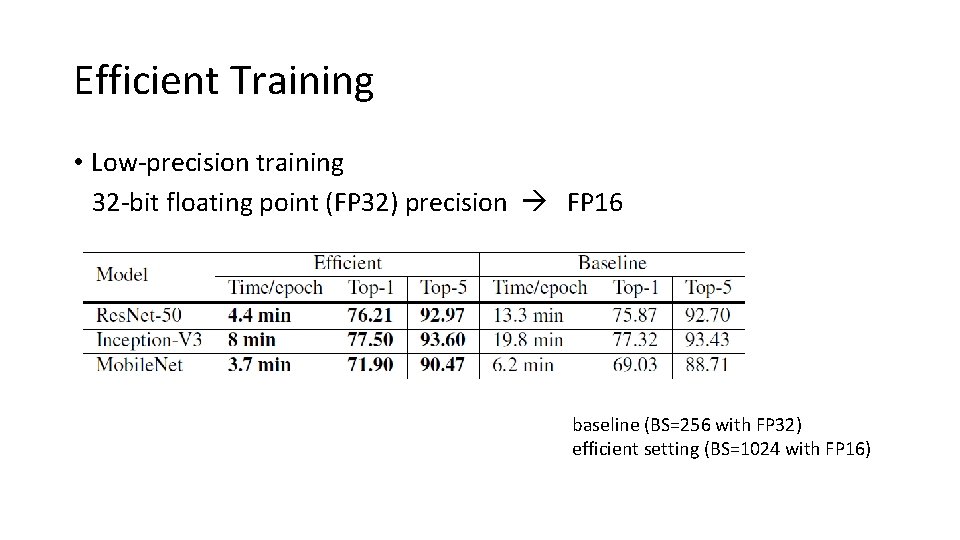

Efficient Training
• Low-precision training: 32-bit floating point (FP32) precision vs. FP16
• Baseline: BS=256 with FP32
• Efficient setting: BS=1024 with FP16
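The conclusion slide names linear learning-rate scaling and learning-rate warmup among the efficient-training tricks. The sketch below shows one plausible way to move from the baseline (batch size 256, initial lr = 0.1) to the batch size 1024 setting. The function names, the zero-based batch index, and the warmup length of 5 batches are illustrative assumptions, not values taken from the slides.

```python
def scaled_lr(batch_size, base_lr=0.1, base_batch_size=256):
    """Linear scaling rule: the learning rate grows in proportion to batch size."""
    return base_lr * batch_size / base_batch_size


def warmup_lr(batch_idx, warmup_batches, target_lr):
    """Ramp the learning rate linearly from 0 up to target_lr, then hold it."""
    if batch_idx >= warmup_batches:
        return target_lr
    return target_lr * batch_idx / warmup_batches


# Efficient setting from the slide: batch size 1024 instead of 256.
lr_1024 = scaled_lr(1024)

# Hypothetical warmup over the first 5 batches (real runs warm up far longer).
schedule = [warmup_lr(i, 5, lr_1024) for i in range(7)]
```

With these assumptions the scaled learning rate is four times the baseline value, and `schedule` rises from 0 to that value before staying flat.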

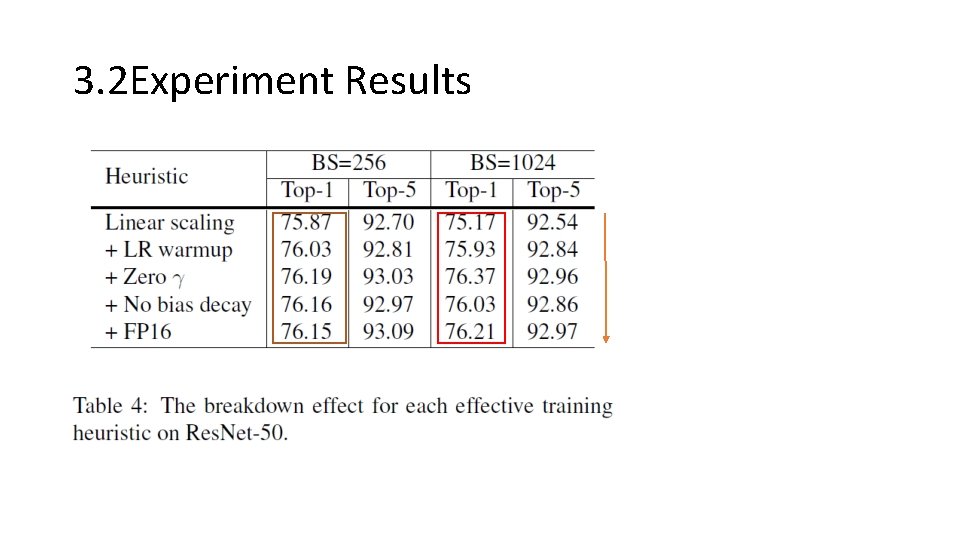

3.2 Experiment Results

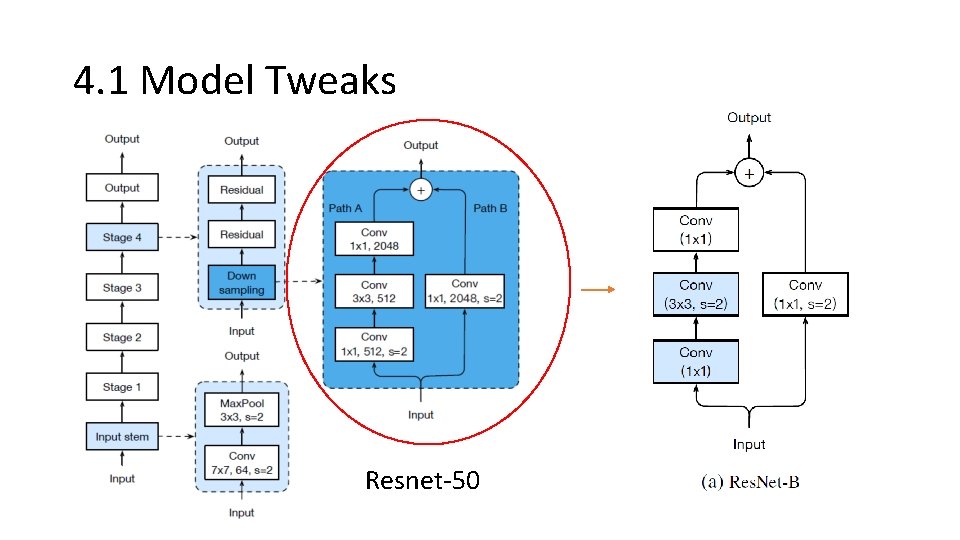

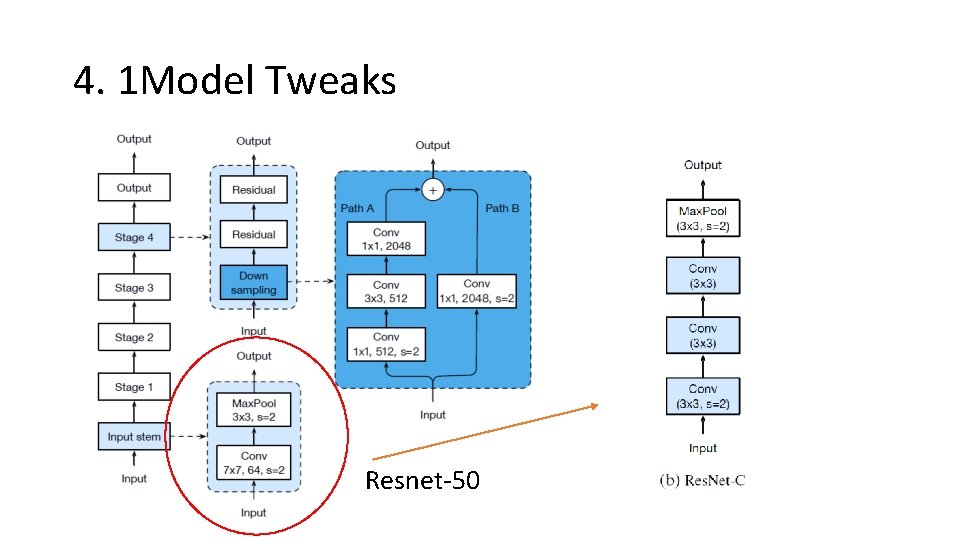

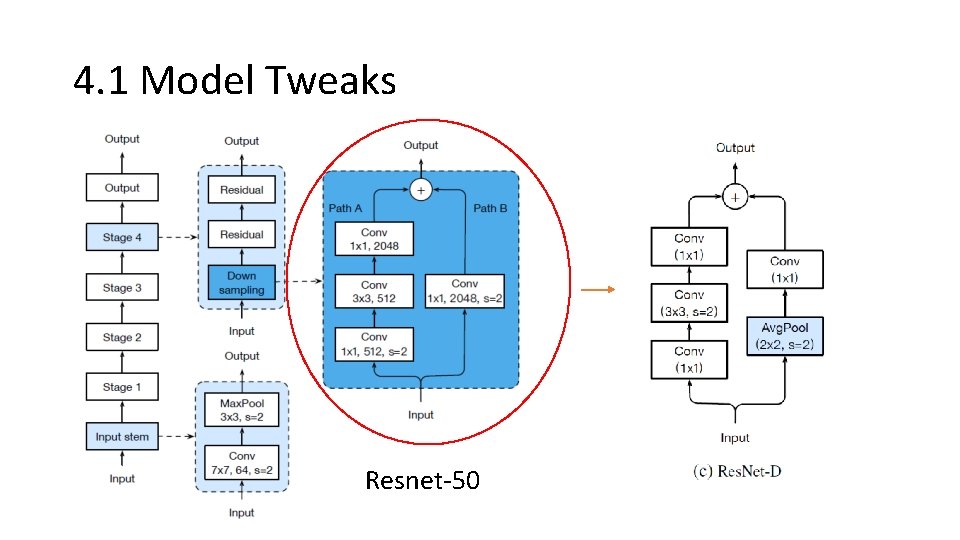

4.1 Model Tweaks (ResNet-50)

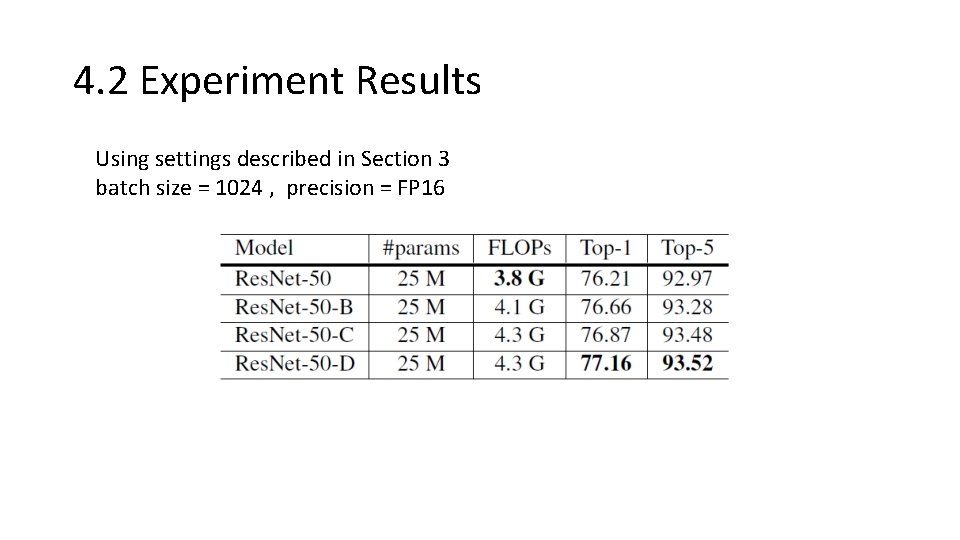

4.2 Experiment Results
• Using the settings described in Section 3: batch size = 1024, precision = FP16

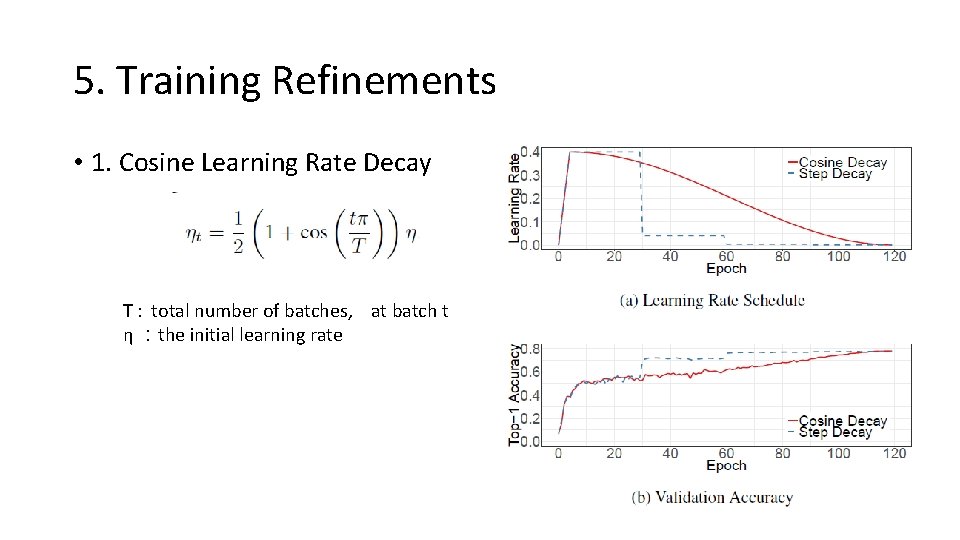

5. Training Refinements
• 1. Cosine Learning Rate Decay
• T: total number of batches; t: the current batch
• η: the initial learning rate
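As a minimal sketch of the schedule, assuming the usual cosine form η_t = ½ (1 + cos(tπ / T)) η with warmup ignored, the learning rate at batch t could be computed as follows. The function name and the example values (T = 100, η = 0.4) are illustrative assumptions.

```python
import math


def cosine_lr(t, total_batches, initial_lr):
    """Cosine decay: starts at initial_lr for t = 0 and reaches 0 at t = total_batches."""
    return 0.5 * (1.0 + math.cos(math.pi * t / total_batches)) * initial_lr


# Hypothetical run with T = 100 batches and an initial learning rate of 0.4.
lrs = [cosine_lr(t, 100, 0.4) for t in range(101)]
```

The decay is slow at the start and at the end of training and roughly linear in the middle, which is the shape this schedule is usually chosen for.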

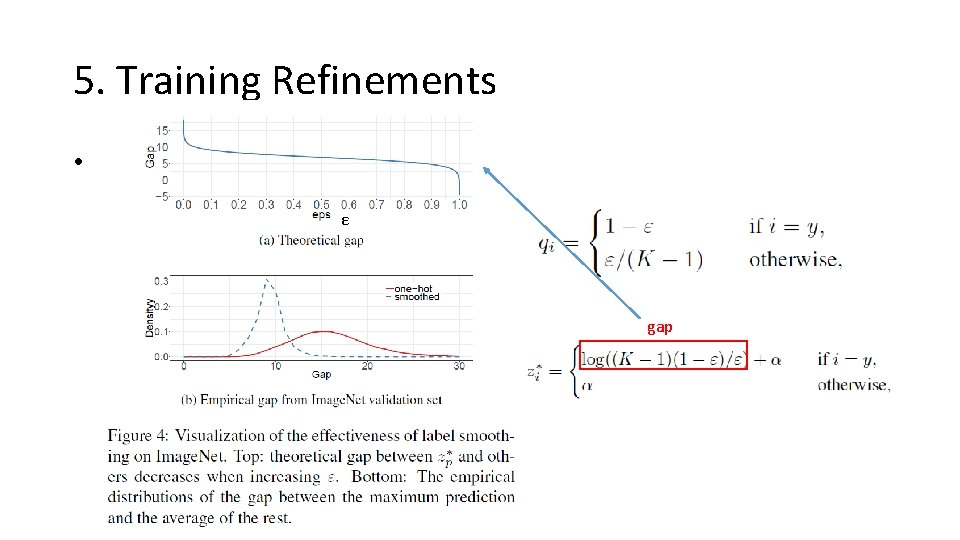

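5. Training Refinements
• 2. Label Smoothing
• p: predicted probabilities; ε: a small constant
• Minimize the negative cross entropy loss
• Gap between the output for the true class and the outputs for the other classes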
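A minimal sketch of label smoothing, assuming the common formulation in which the true class receives probability 1 − ε and the remaining ε is spread evenly over the other K − 1 classes. The helper names and the example logits are hypothetical.

```python
import math


def smooth_labels(true_class, num_classes, eps=0.1):
    """Target distribution q: 1 - eps on the true class, eps / (K - 1) elsewhere."""
    off_value = eps / (num_classes - 1)
    return [1.0 - eps if i == true_class else off_value for i in range(num_classes)]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(q, logits):
    """Cross entropy -sum_i q_i * log(p_i), with p = softmax(logits)."""
    p = softmax(logits)
    return -sum(qi * math.log(pi) for qi, pi in zip(q, p))


# Hypothetical 5-class example with the true class at index 2.
q = smooth_labels(true_class=2, num_classes=5, eps=0.1)
loss = cross_entropy(q, [0.5, -1.0, 3.0, 0.0, 1.2])
```

Because every class keeps a small non-zero target, the loss no longer rewards pushing the true-class logit arbitrarily far above the others.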

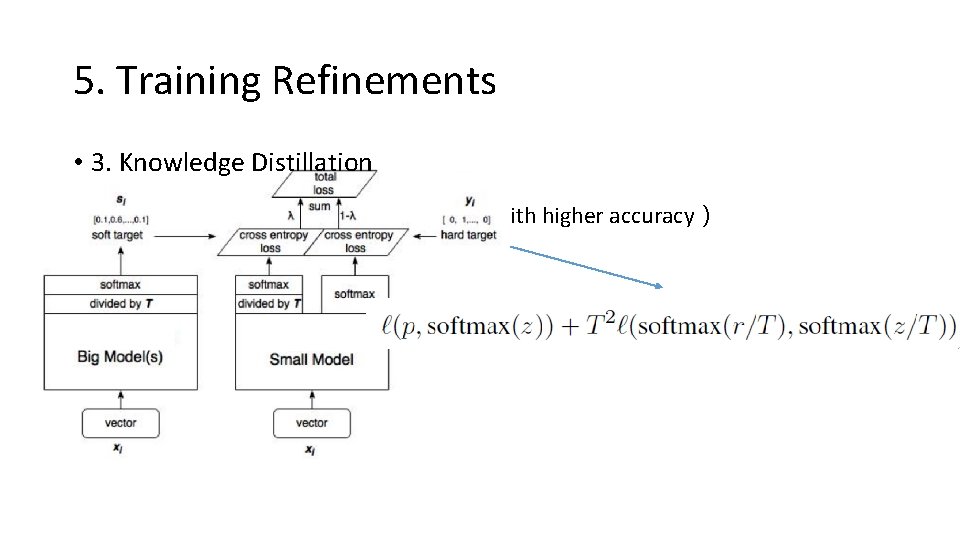

5. Training Refinements
• 3. Knowledge Distillation
• Teacher model (pre-trained model with higher accuracy): ResNet-152
• Student model: ResNet-50
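One common way to write the distillation objective adds a temperature-softened term to the ordinary cross entropy: ℓ(p, softmax(z)) + T² ℓ(softmax(r / T), softmax(z / T)), where z are the student outputs, r the teacher outputs, and p the true distribution. The sketch below assumes that form; the function names and example logits are hypothetical, and the default temperature of 20 mirrors the T = 20 listed in the experiment settings.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits / temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(target, predicted):
    """Cross entropy -sum_i target_i * log(predicted_i), skipping zero targets."""
    return -sum(t * math.log(p) for t, p in zip(target, predicted) if t > 0)


def distillation_loss(true_class, student_logits, teacher_logits, temperature=20.0):
    """Hard-label loss plus T^2 times the softened teacher-student cross entropy."""
    one_hot = [1.0 if i == true_class else 0.0 for i in range(len(student_logits))]
    hard = cross_entropy(one_hot, softmax(student_logits))
    soft = cross_entropy(softmax(teacher_logits, temperature),
                         softmax(student_logits, temperature))
    return hard + temperature ** 2 * soft


# Hypothetical 3-class example: the student roughly agrees with the teacher.
loss = distillation_loss(0, [4.0, 0.5, -1.0], [5.0, 0.0, 0.0])
```

The T² factor keeps the gradient scale of the softened term comparable to the hard-label term when the temperature is large.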

![5. Training Refinements • 4. Mixup Training](http://slidetodoc.com/presentation_image_h/2e51520ad02752989986bf5654567473/image-15.jpg)

5. Training Refinements
• 4. Mixup Training
• λ ∈ [0, 1] is a random number drawn from the Beta(α, α) distribution
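A minimal sketch of mixup, assuming the standard formulation in which two examples and their labels are blended with the same weight: x̂ = λ x_i + (1 − λ) x_j and ŷ = λ y_i + (1 − λ) y_j. The function name, the toy two-element inputs, and the fixed seed are illustrative assumptions.

```python
import random


def mixup(x_i, y_i, x_j, y_j, alpha=0.2, rng=random):
    """Blend two (input, label) pairs with a weight drawn from Beta(alpha, alpha)."""
    lam = rng.betavariate(alpha, alpha)
    x_mix = [lam * a + (1.0 - lam) * b for a, b in zip(x_i, x_j)]
    y_mix = [lam * a + (1.0 - lam) * b for a, b in zip(y_i, y_j)]
    return x_mix, y_mix, lam


# Toy example: two "images" of two pixels each, with one-hot labels.
rng = random.Random(0)  # fixed seed only to make the sketch reproducible
x_mix, y_mix, lam = mixup([1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], rng=rng)
```

With a small α such as 0.2, the Beta distribution concentrates near 0 and 1, so most mixed examples stay close to one of the two originals.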

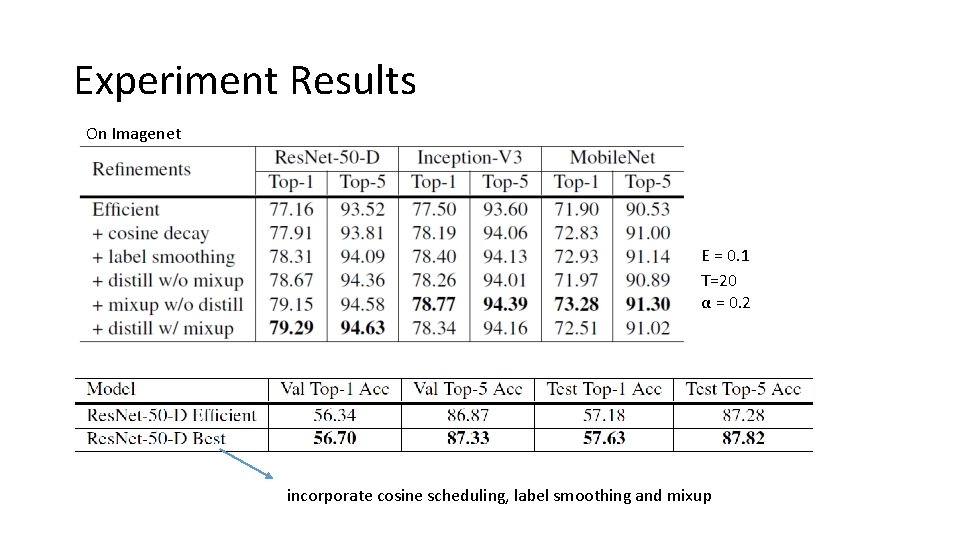

Experiment Results on ImageNet
• ε = 0.1 for label smoothing
• T = 20 for knowledge distillation
• α = 0.2 for mixup
• Incorporate cosine scheduling, label smoothing and mixup

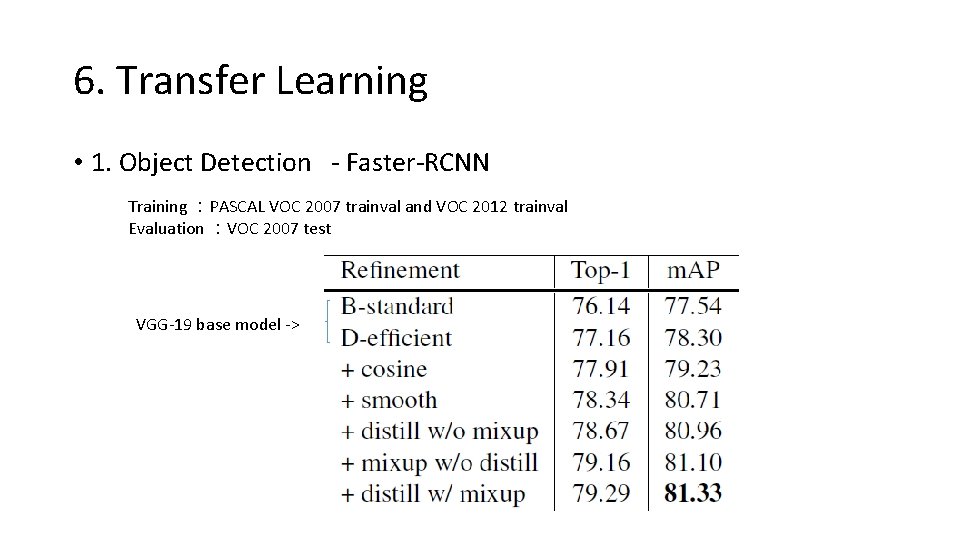

6. Transfer Learning
• 1. Object Detection: Faster-RCNN
• Training: PASCAL VOC 2007 trainval and VOC 2012 trainval
• Evaluation: VOC 2007 test
• VGG-19 base model ->

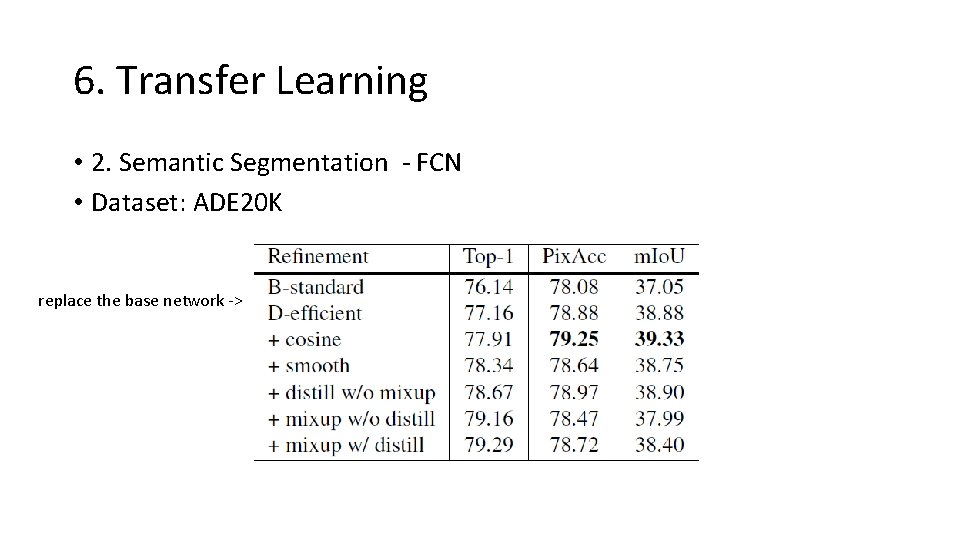

6. Transfer Learning
• 2. Semantic Segmentation: FCN
• Dataset: ADE20K
• Replace the base network ->

7. Conclusion
+ Tricks: linear scaling learning rate, learning rate warmup, zero γ, no bias decay, low precision…
+ Transfer learning
- More experiments?
- Tricks on other models?
