ARABIC SENTIMENT CLASSIFICATION A HYBRID APPROACH MARIAM BILTAWI

ARABIC SENTIMENT CLASSIFICATION: A HYBRID APPROACH MARIAM BILTAWI, GHAZI AL-NAYMAT, SARA TEDMORI

AGENDA • Definition • Categories of Sentiment Classification • Related work • Proposed Approach • Experimental Results • Conclusion

DEFINITION • Sentiment analysis: refers to the task of analyzing unstructured natural language such as; comments and reviews of people, for the purpose of classifying their polarity orientation as being either positive or negative.

CATEGORIES OF SENTIMENT ANALYSIS • Lexicon-based • Corpus-based • Hybrid

CATEGORIES OF SENTIMENT ANALYSIS • Hybrid • Lexicon-based approaches were used to annotate the corpus and then this corpus is used with any machine learning algorithms. • Lexicon-base approached were used to annotate a portion of the corpus and then depending on this portion the rest of the corpus is annotated using a machine learning algorithm/s

INSPIRATION • Mudinas, Andrius, Dell Zhang, and Mark Levene. "Combining lexicon and learning based approaches for concept-level sentiment analysis. " In Proceedings of the First International Workshop on Issues of Sentiment Discovery and Opinion Mining, p. 5. ACM, 2012. • The main idea is to represent the review for the corpusbased approach in the same way it is seen in lexiconbased approach

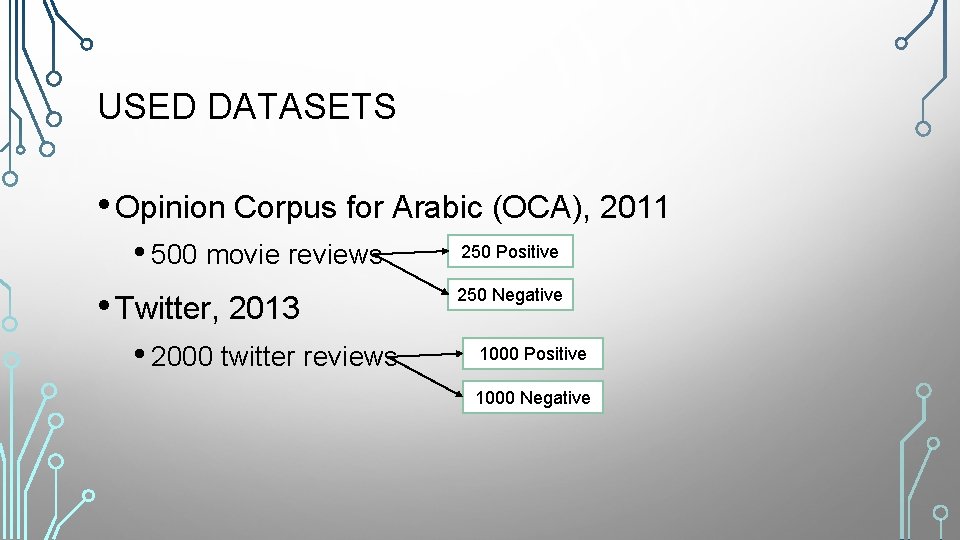

USED DATASETS • Opinion Corpus for Arabic (OCA), 2011 • 500 movie reviews • Twitter, 2013 • 2000 twitter reviews 250 Positive 250 Negative 1000 Positive 1000 Negative

USED LEXICONS • Emoji lexicon • Negation words lexicon • Polarity words lexicon

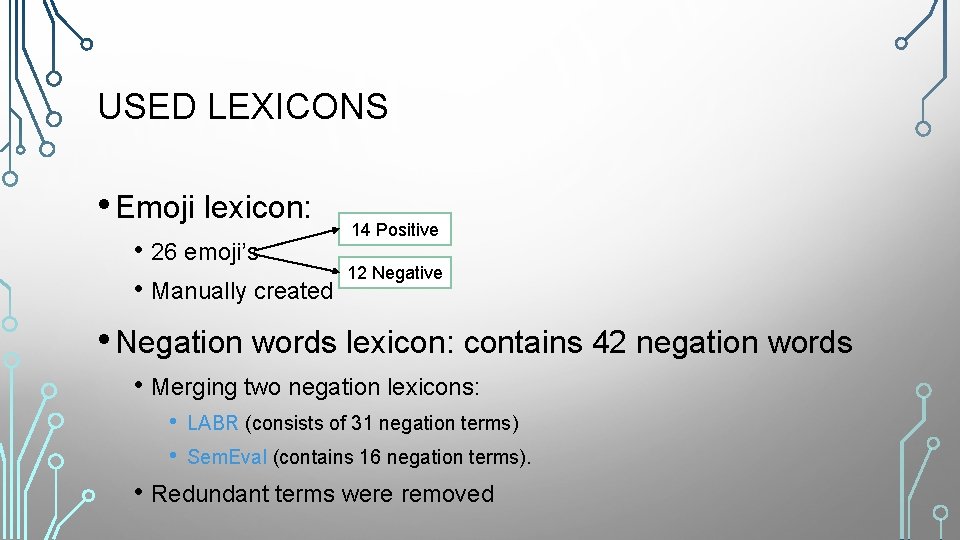

USED LEXICONS • Emoji lexicon: • 26 emoji’s • Manually created 14 Positive 12 Negative • Negation words lexicon: contains 42 negation words • Merging two negation lexicons: • • LABR (consists of 31 negation terms) Sem. Eval (contains 16 negation terms). • Redundant terms were removed

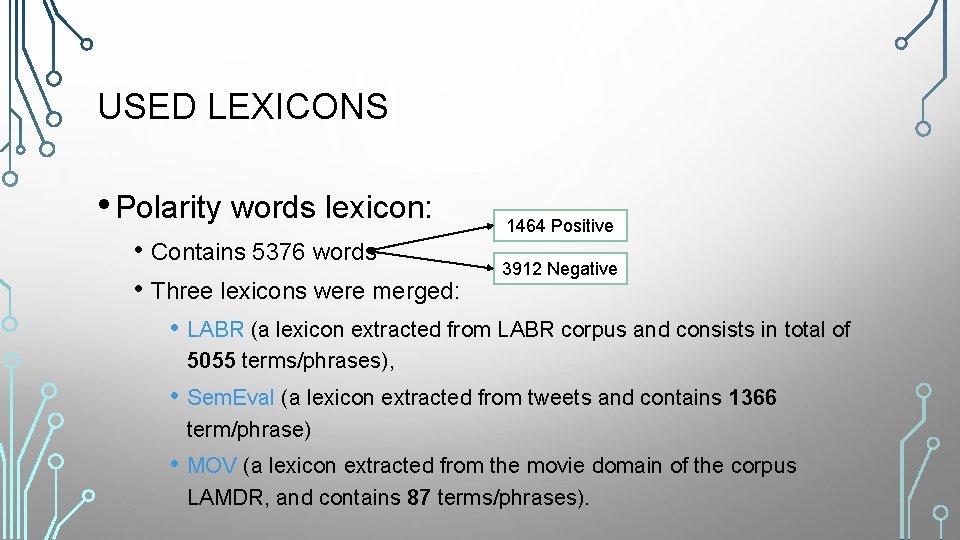

USED LEXICONS • Polarity words lexicon: • Contains 5376 words • Three lexicons were merged: 1464 Positive 3912 Negative • LABR (a lexicon extracted from LABR corpus and consists in total of 5055 terms/phrases), • Sem. Eval (a lexicon extracted from tweets and contains 1366 term/phrase) • MOV (a lexicon extracted from the movie domain of the corpus LAMDR, and contains 87 terms/phrases).

USED LEXICONS • Polarity words lexicon: • When merging these lexicons: • only single words were taken into consideration, • A number of preprocessing steps were applied on each word: • • • Punctuations were removed including hashtags, • Redundant word were eliminated. Diacritical marks were removed. Normalized by removing tatweel, and replacing all forms of Alif ( آ ، ﺇ ، )ﺃ with the bare Alif ( )ﺍ , and replacing ta’ ( )ﺓ with the ha’ ( )ﻩ.

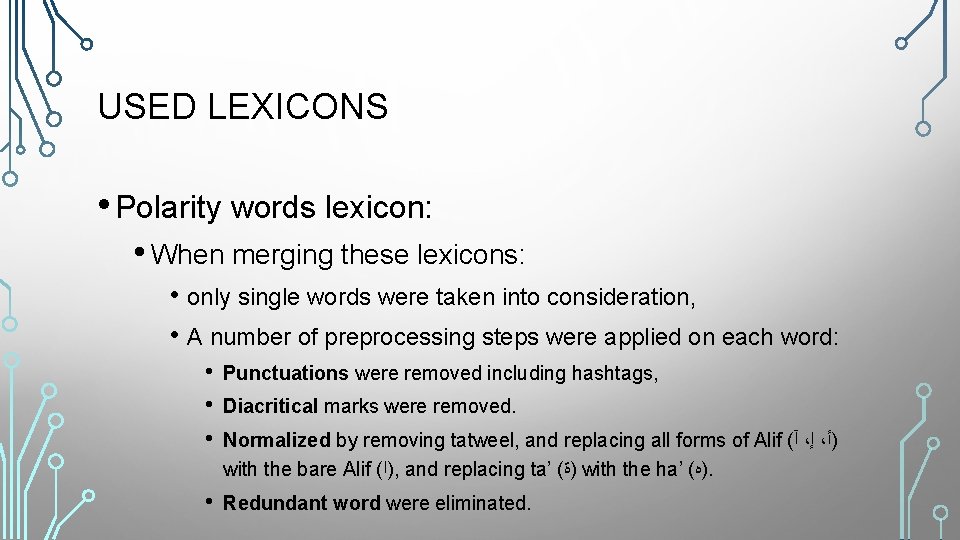

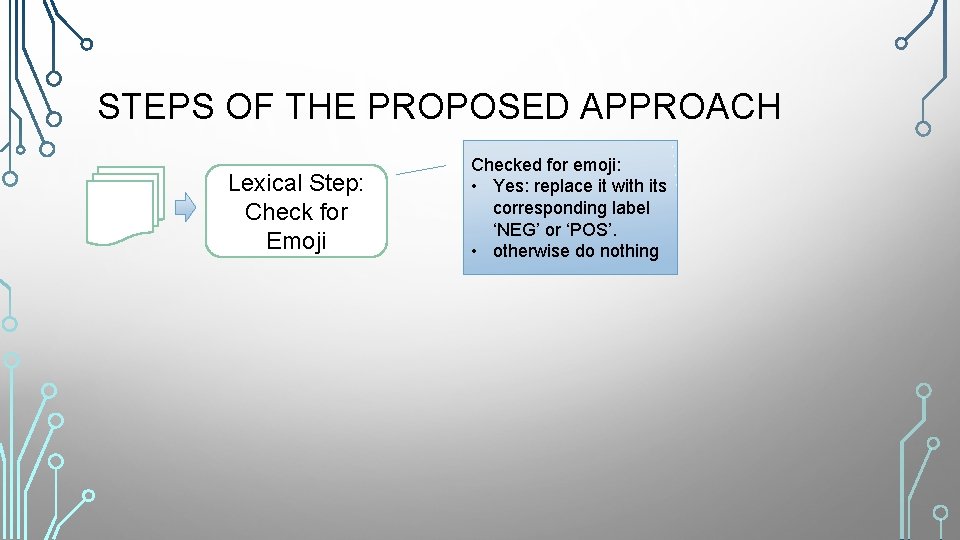

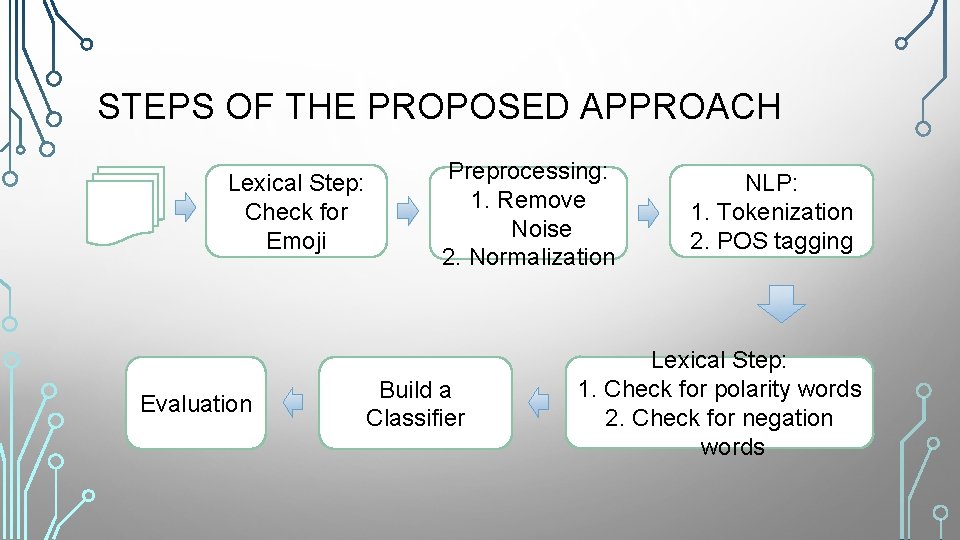

STEPS OF THE PROPOSED APPROACH Lexical Step: Check for Emoji Checked for emoji: • Yes: replace it with its corresponding label ‘NEG’ or ‘POS’. • otherwise do nothing

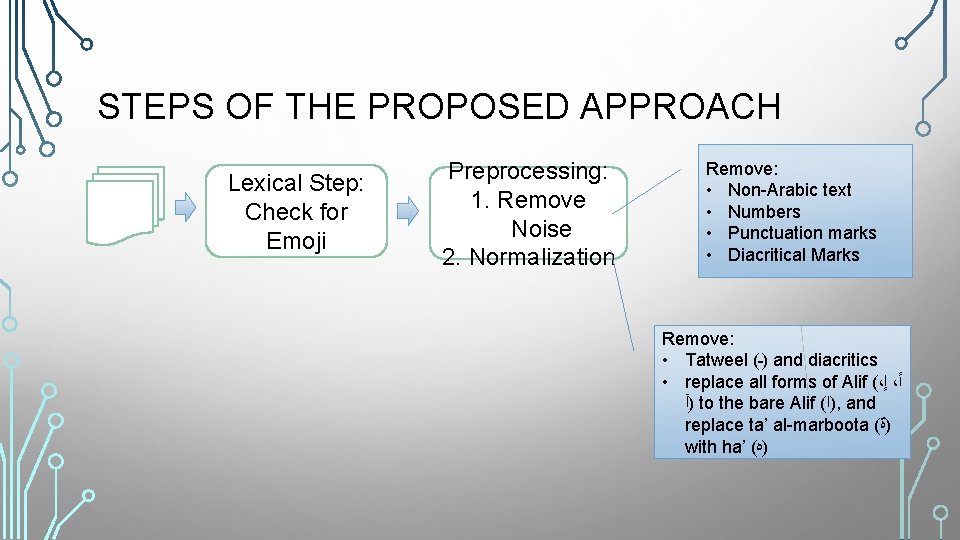

STEPS OF THE PROPOSED APPROACH Lexical Step: Check for Emoji Preprocessing: 1. Remove Noise 2. Normalization Remove: • Non-Arabic text • Numbers • Punctuation marks • Diacritical Marks Remove: • Tatweel ( )ـ and diacritics • replace all forms of Alif (، ﺇ ، ﺃ )آ to the bare Alif ( )ﺍ , and replace ta’ al-marboota ( )ﺓ with ha’ ( )ﻩ

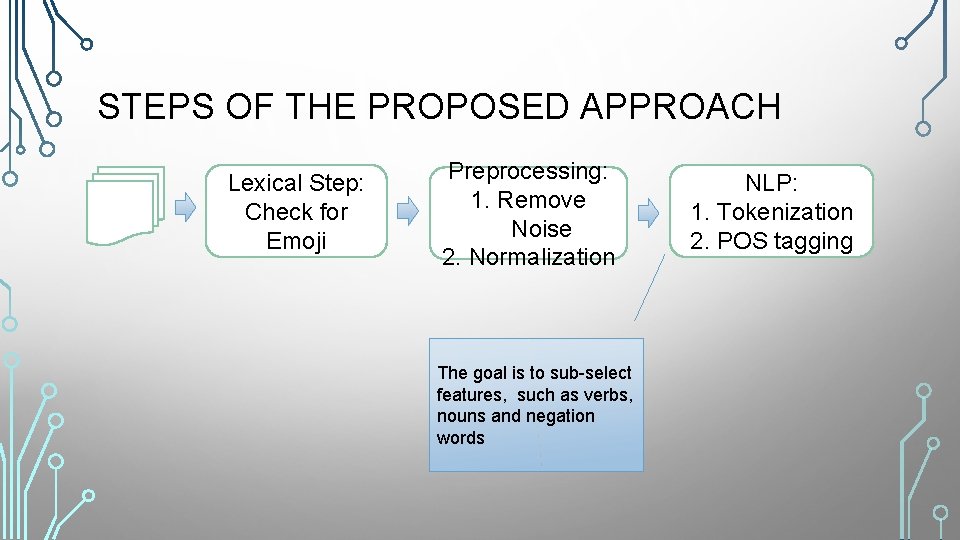

STEPS OF THE PROPOSED APPROACH Lexical Step: Check for Emoji Preprocessing: 1. Remove Noise 2. Normalization The goal is to sub-select features, such as verbs, nouns and negation words NLP: 1. Tokenization 2. POS tagging

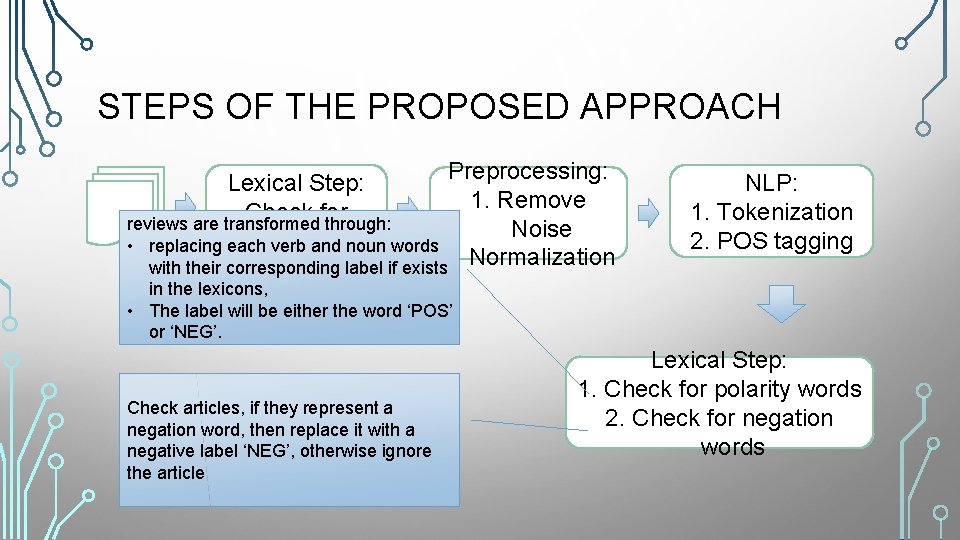

STEPS OF THE PROPOSED APPROACH Preprocessing: 1. Remove Noise with their corresponding label if exists 2. Normalization Lexical Step: Check for reviews are transformed through: • replacing each. Emoji verb and noun words NLP: 1. Tokenization 2. POS tagging in the lexicons, • The label will be either the word ‘POS’ or ‘NEG’. Check articles, if they represent a negation word, then replace it with a negative label ‘NEG’, otherwise ignore the article Lexical Step: 1. Check for polarity words 2. Check for negation words

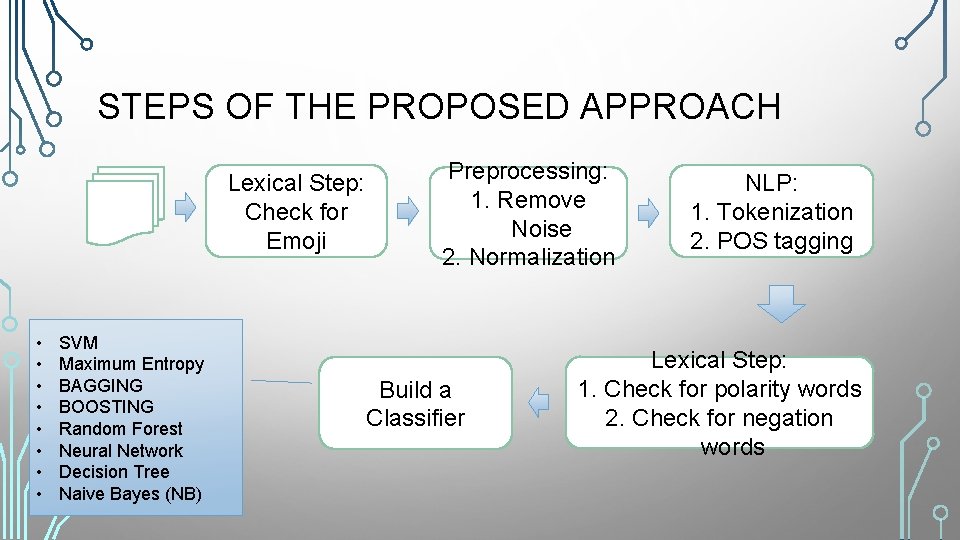

STEPS OF THE PROPOSED APPROACH Lexical Step: Check for Emoji • • SVM Maximum Entropy BAGGING BOOSTING Random Forest Neural Network Decision Tree Naive Bayes (NB) Preprocessing: 1. Remove Noise 2. Normalization Build a Classifier NLP: 1. Tokenization 2. POS tagging Lexical Step: 1. Check for polarity words 2. Check for negation words

STEPS OF THE PROPOSED APPROACH Lexical Step: Check for Emoji Evaluation Preprocessing: 1. Remove Noise 2. Normalization Build a Classifier NLP: 1. Tokenization 2. POS tagging Lexical Step: 1. Check for polarity words 2. Check for negation words

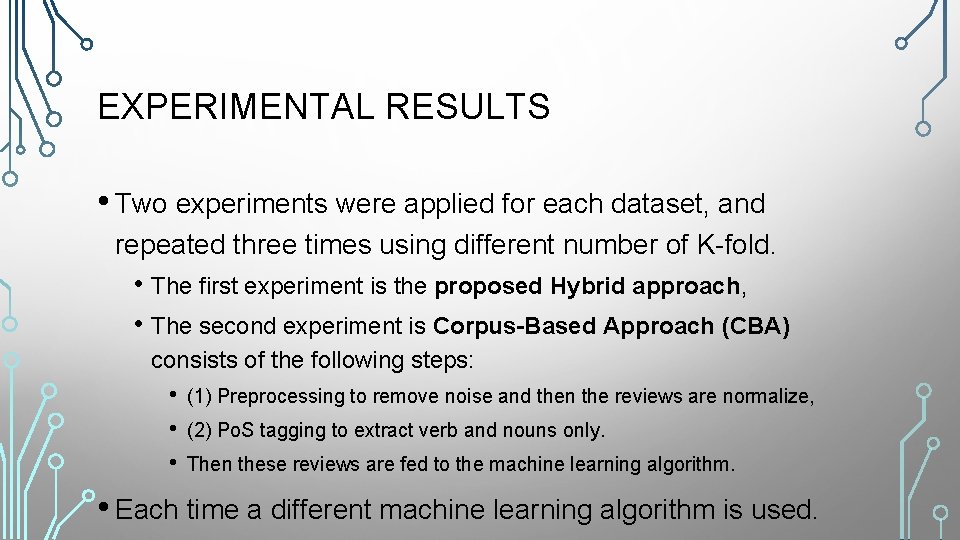

EXPERIMENTAL RESULTS • Two experiments were applied for each dataset, and repeated three times using different number of K-fold. • The first experiment is the proposed Hybrid approach, • The second experiment is Corpus-Based Approach (CBA) consists of the following steps: • • • (1) Preprocessing to remove noise and then the reviews are normalize, (2) Po. S tagging to extract verb and nouns only. Then these reviews are fed to the machine learning algorithm. • Each time a different machine learning algorithm is used.

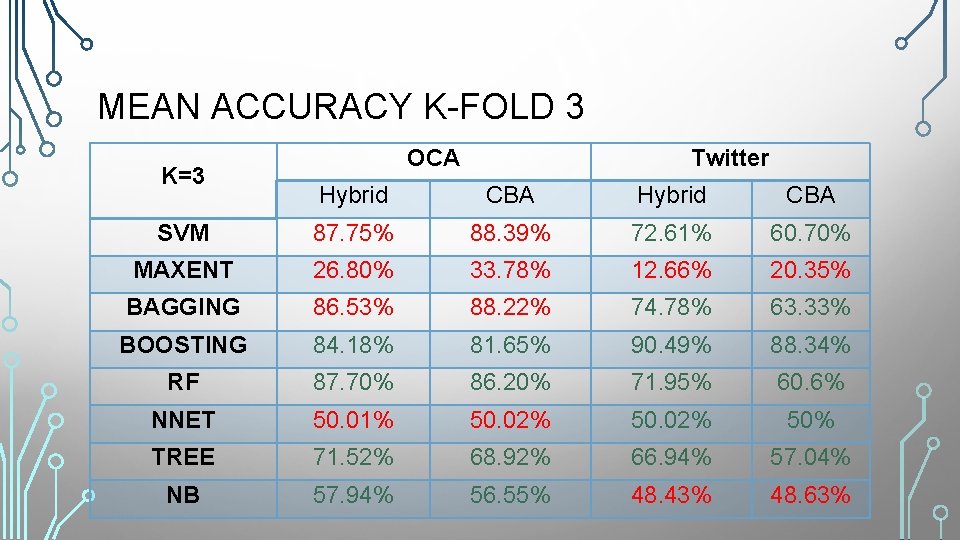

MEAN ACCURACY K-FOLD 3 K=3 OCA Twitter Hybrid CBA SVM 87. 75% 88. 39% 72. 61% 60. 70% MAXENT 26. 80% 33. 78% 12. 66% 20. 35% BAGGING 86. 53% 88. 22% 74. 78% 63. 33% BOOSTING 84. 18% 81. 65% 90. 49% 88. 34% RF 87. 70% 86. 20% 71. 95% 60. 6% NNET 50. 01% 50. 02% 50% TREE 71. 52% 68. 92% 66. 94% 57. 04% NB 57. 94% 56. 55% 48. 43% 48. 63%

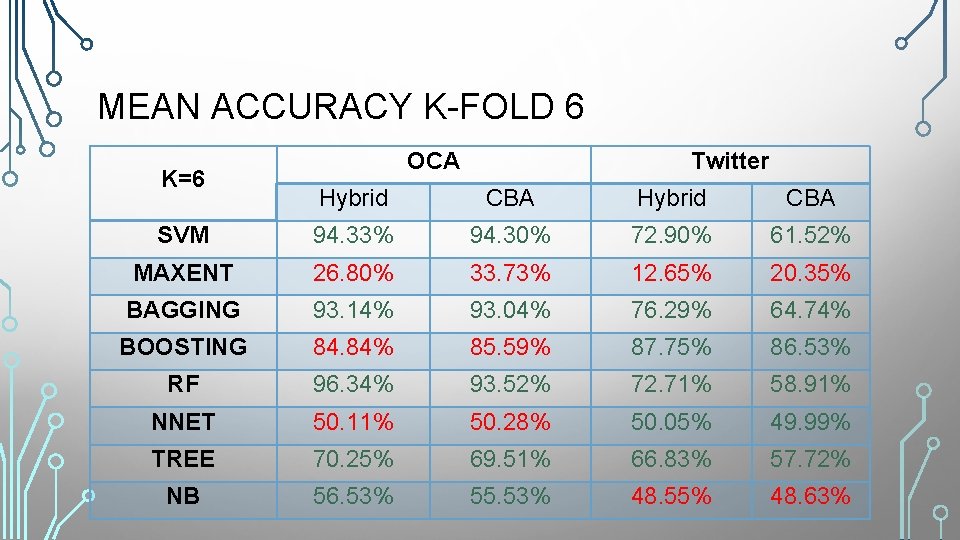

MEAN ACCURACY K-FOLD 6 K=6 OCA Twitter Hybrid CBA SVM 94. 33% 94. 30% 72. 90% 61. 52% MAXENT 26. 80% 33. 73% 12. 65% 20. 35% BAGGING 93. 14% 93. 04% 76. 29% 64. 74% BOOSTING 84. 84% 85. 59% 87. 75% 86. 53% RF 96. 34% 93. 52% 72. 71% 58. 91% NNET 50. 11% 50. 28% 50. 05% 49. 99% TREE 70. 25% 69. 51% 66. 83% 57. 72% NB 56. 53% 55. 53% 48. 55% 48. 63%

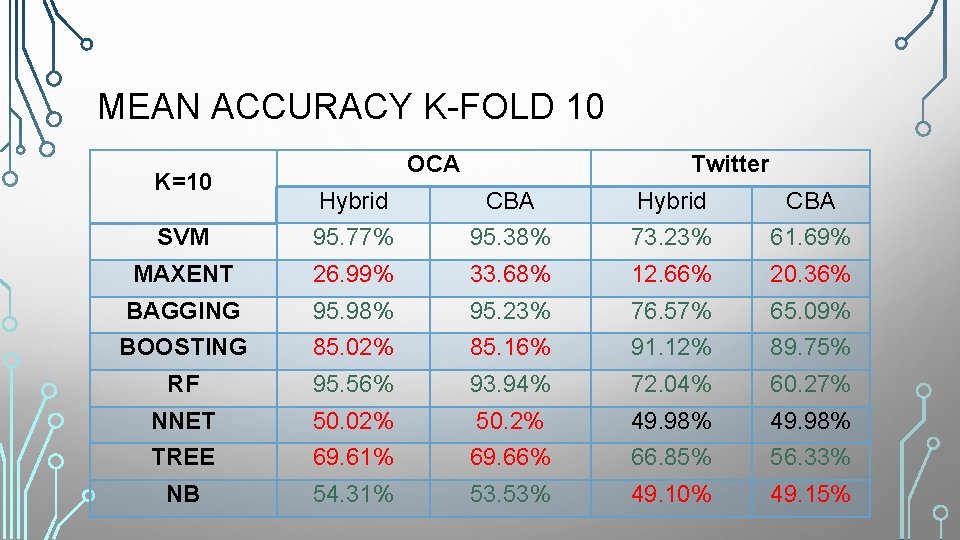

MEAN ACCURACY K-FOLD 10 K=10 OCA Twitter Hybrid CBA SVM 95. 77% 95. 38% 73. 23% 61. 69% MAXENT 26. 99% 33. 68% 12. 66% 20. 36% BAGGING 95. 98% 95. 23% 76. 57% 65. 09% BOOSTING 85. 02% 85. 16% 91. 12% 89. 75% RF 95. 56% 93. 94% 72. 04% 60. 27% NNET 50. 02% 50. 2% 49. 98% TREE 69. 61% 69. 66% 66. 85% 56. 33% NB 54. 31% 53. 53% 49. 10% 49. 15%

DISCUSSION • The accuracy is dependent on the used dataset • The used lexicons have more polarity words used by twitter users, while having only a small number of polarity words conducted from the movie domain. • The reason that NB was not performing well in this experiment is because the most frequent words were not selected when the NB classifier was built.

CONCLUSION • The hybrid approach outperformed the corpus-based approach, and the highest accuracy reached 96. 34% using random forest with 6 -fold cross-validation.

QUESTIONS

THANK YOU

- Slides: 26