
A Scalable, Content Addressable Network. Sylvia Ratnasamy (UC Berkeley Dissertation 2002), Paul Francis, Mark Handley, Richard Karp, Scott Shenker. Slides by the authors above, with additions/modifications by Michael Isaacs (UCSB) and Tony Hannan (Georgia Tech).

Outline • Introduction • Design • Improvements • Evaluation • Related Work • Conclusion

Internet-scale hash tables • Hash tables – essential building block in software systems • Internet-scale distributed hash tables – equally valuable to large-scale distributed systems • peer-to-peer systems – Napster, Gnutella, Groove, FreeNet, MojoNation… • large-scale storage management systems – Publius, OceanStore, PAST, Farsite, CFS… • mirroring on the Web

Content-Addressable Network (CAN) • CAN: Internet-scale hash table • Interface – insert(key, value) – value = retrieve(key) • Properties – scalable – operationally simple – good performance • Related systems: Chord, Plaxton/Tapestry…

Problem Scope • provide the hash table interface • scalability • robustness • performance

Not in scope • security • anonymity • higher level primitives – keyword searching – mutable content


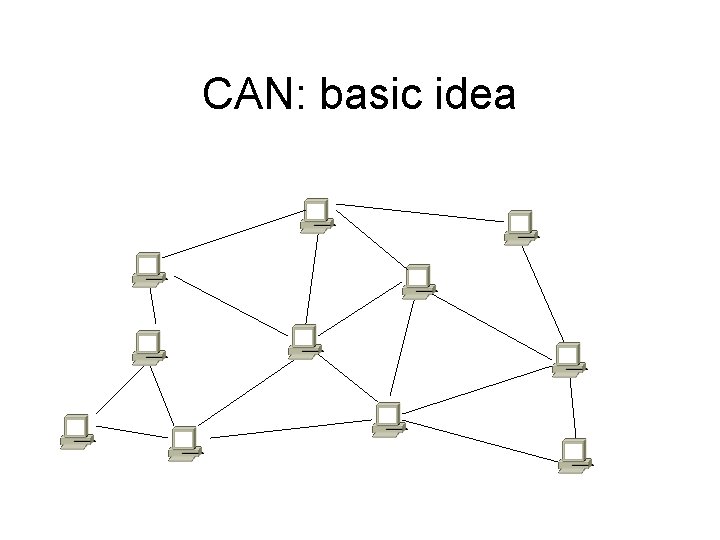

CAN: basic idea

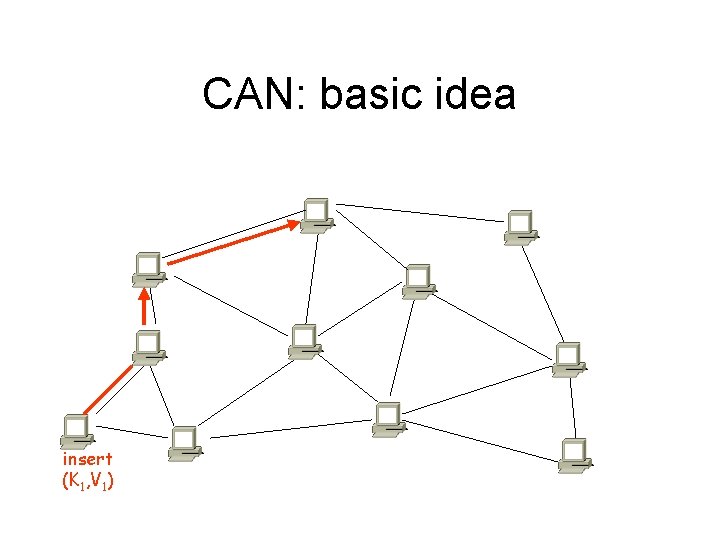

CAN: basic idea insert(K1, V1)

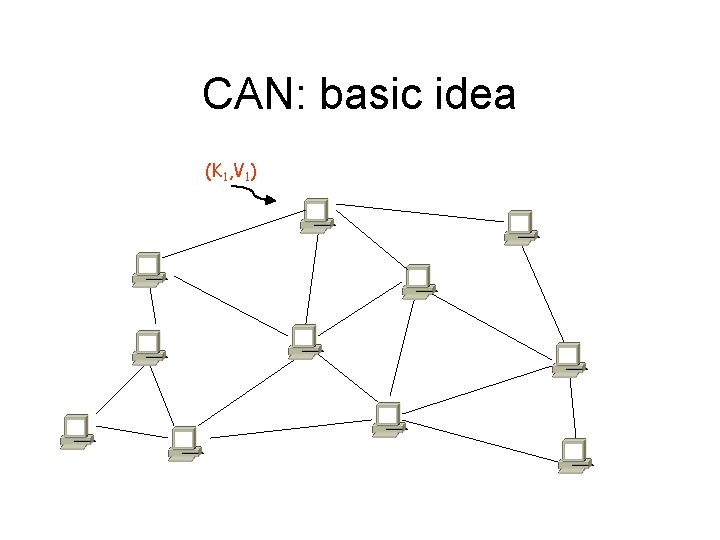

CAN: basic idea (K1, V1)

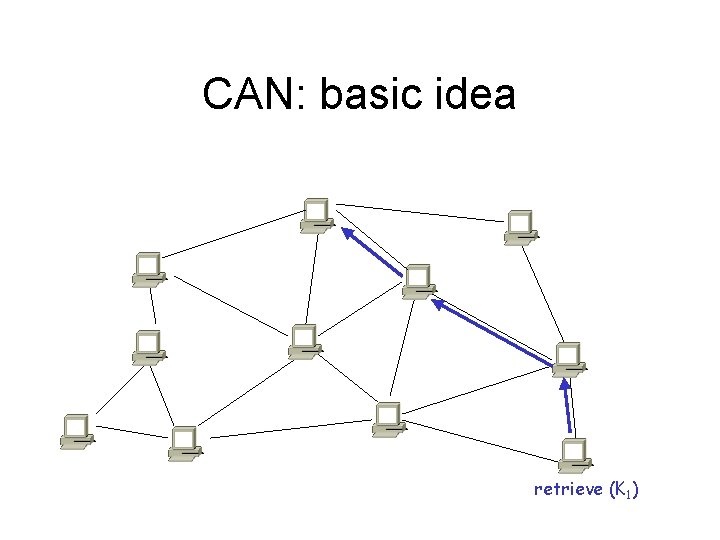

CAN: basic idea retrieve(K1)

CAN: solution • Virtual Cartesian coordinate space • Entire space is partitioned amongst all the nodes – every node “owns” a zone in the overall space • Abstraction – can store data at “points” in the space – can route from one “point” to another • Point = node that owns the enclosing zone

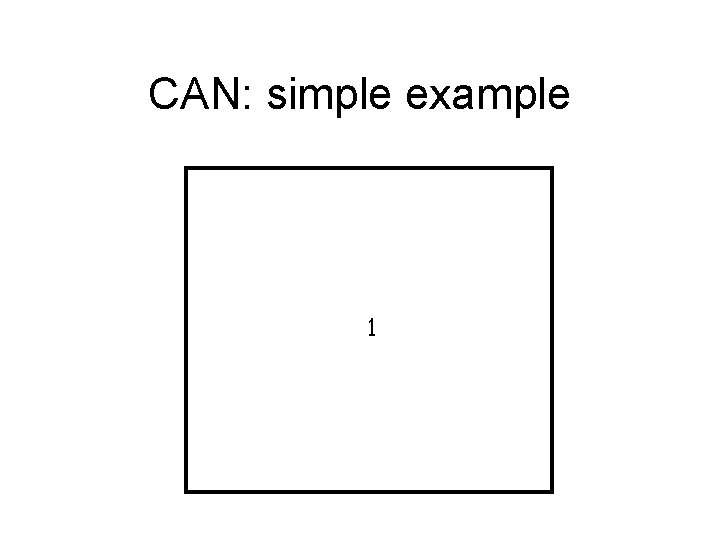

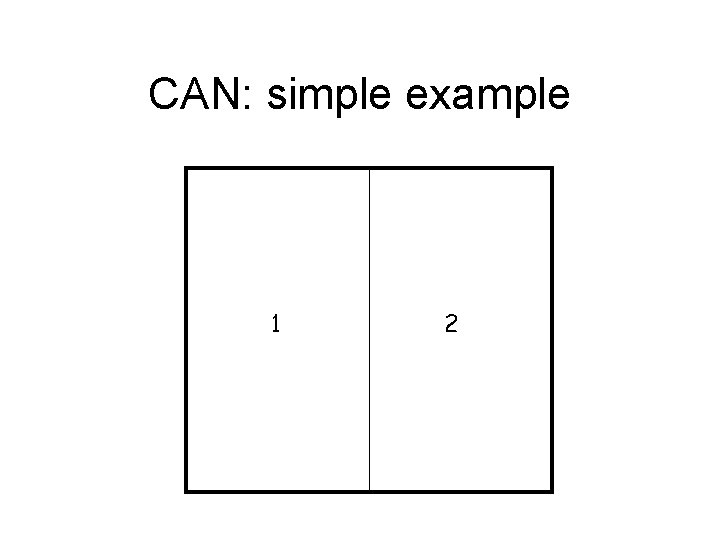

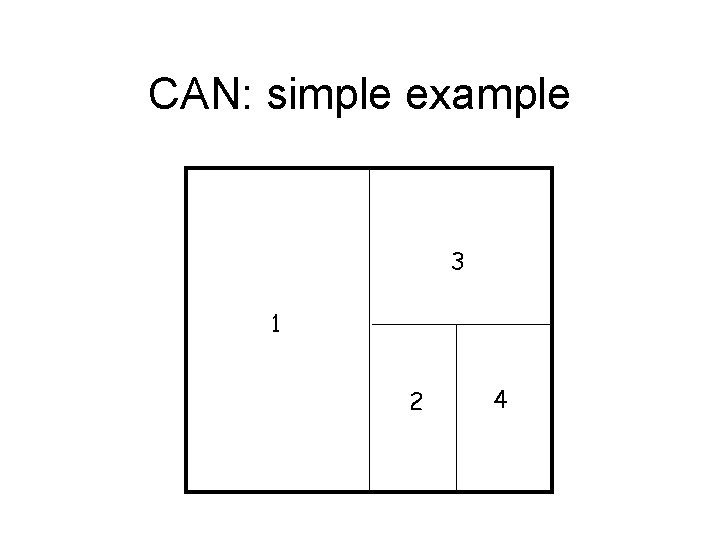

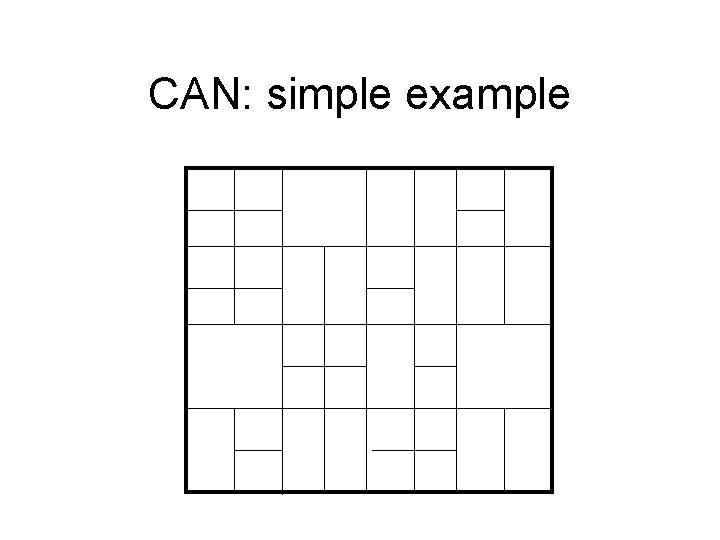

CAN: simple example (figure: nodes 1 and 2)

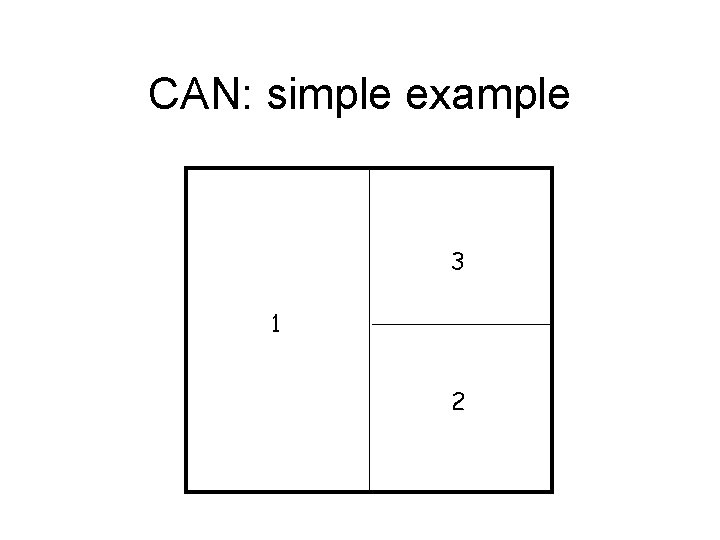

CAN: simple example (figure: nodes 1, 2, 3 and 4, then node I)

CAN: simple example • node I::insert(K, V)

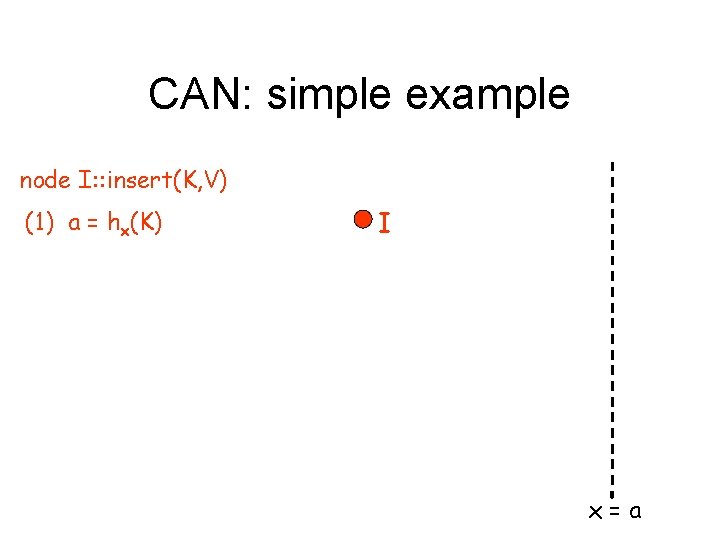

CAN: simple example • node I::insert(K, V) – (1) a = h_x(K) (figure: x = a)

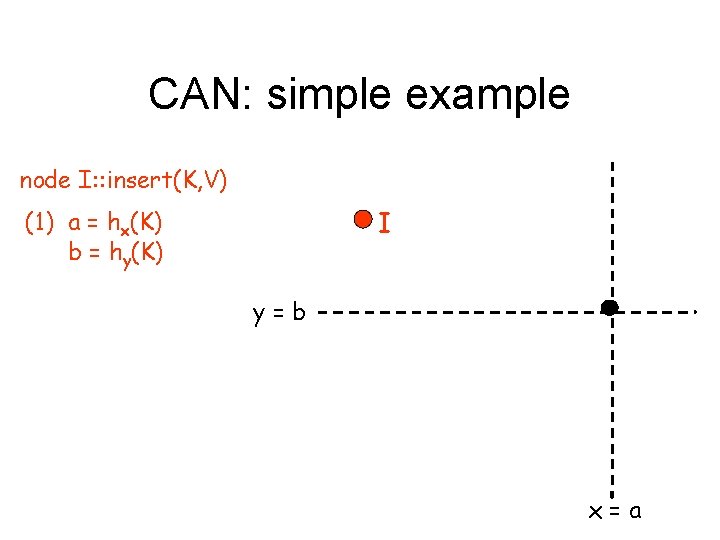

CAN: simple example • node I::insert(K, V) – (1) a = h_x(K), b = h_y(K) (figure: x = a, y = b)

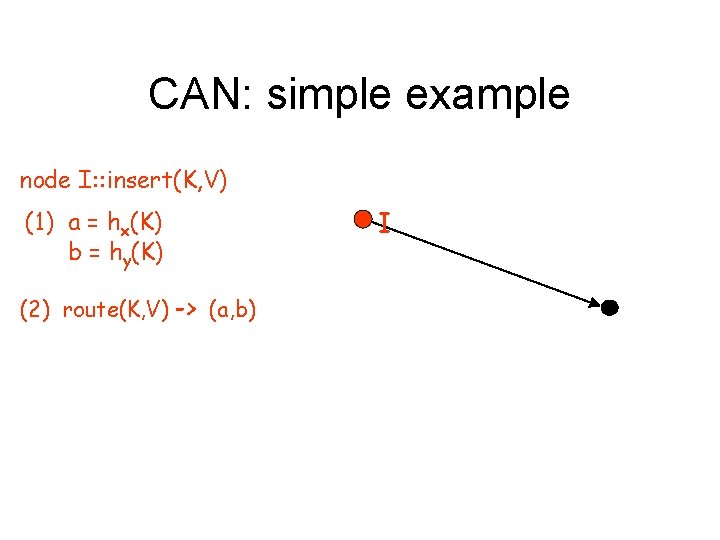

CAN: simple example • node I::insert(K, V) – (1) a = h_x(K), b = h_y(K) – (2) route (K, V) to the point (a, b)

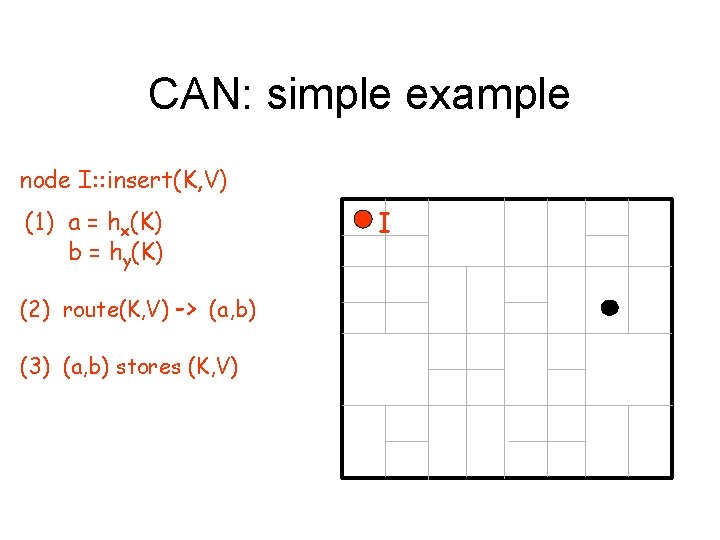

CAN: simple example • node I::insert(K, V) – (1) a = h_x(K), b = h_y(K) – (2) route (K, V) to the point (a, b) – (3) the node that owns (a, b) stores (K, V)

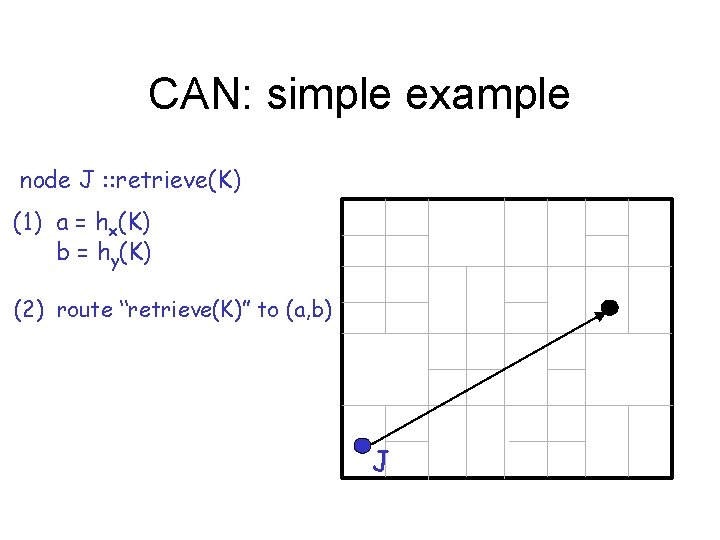

CAN: simple example • node J::retrieve(K) – (1) a = h_x(K), b = h_y(K) – (2) route “retrieve(K)” to (a, b)
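The insert and retrieve steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the per-axis hash `h` (built on SHA-256), the fixed four-quadrant `zones` layout, and the direct `owner` lookup that stands in for routing are all hypothetical and not taken from the slides.

```python
import hashlib

def h(key, axis):
    """Stand-in for h_x / h_y: hash a key to a coordinate in [0, 1)."""
    digest = hashlib.sha256(f"{axis}:{key}".encode()).digest()
    return int.from_bytes(digest[:6], "big") / 2**48

def key_to_point(key):
    # (a, b) = (h_x(K), h_y(K))
    return (h(key, "x"), h(key, "y"))

class Zone:
    """Half-open rectangle [x0, x1) x [y0, y1) owned by one node."""
    def __init__(self, x0, x1, y0, y1):
        self.x0, self.x1, self.y0, self.y1 = x0, x1, y0, y1
        self.store = {}

    def contains(self, point):
        x, y = point
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1

# Assumed layout: four nodes, each owning one quadrant of the unit square.
zones = [Zone(0, .5, 0, .5), Zone(.5, 1, 0, .5),
         Zone(0, .5, .5, 1), Zone(.5, 1, .5, 1)]

def owner(point):
    # Stand-in for routing: look the owning zone up directly.
    return next(z for z in zones if z.contains(point))

def insert(key, value):
    owner(key_to_point(key)).store[key] = value

def retrieve(key):
    return owner(key_to_point(key)).store.get(key)
```

Because both operations hash the key to the same point, a retrieve issued from any node reaches the zone that the insert wrote to.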

CAN • Data stored in the CAN is addressed by name (i.e. key), not location (i.e. IP address)

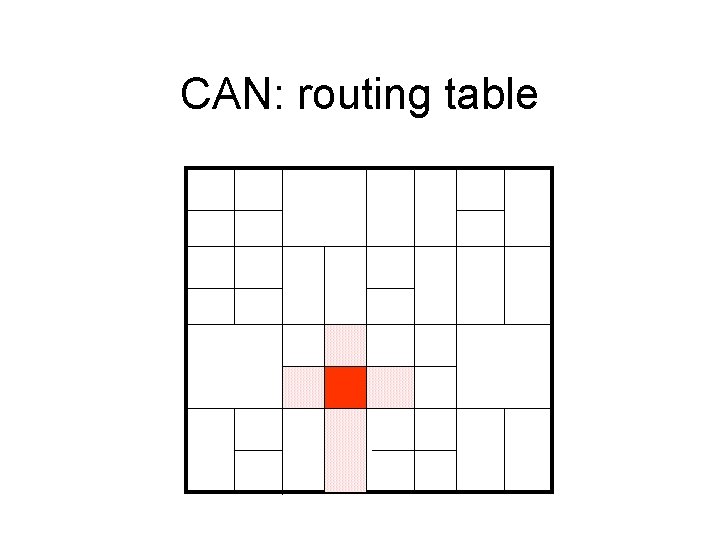

CAN: routing table

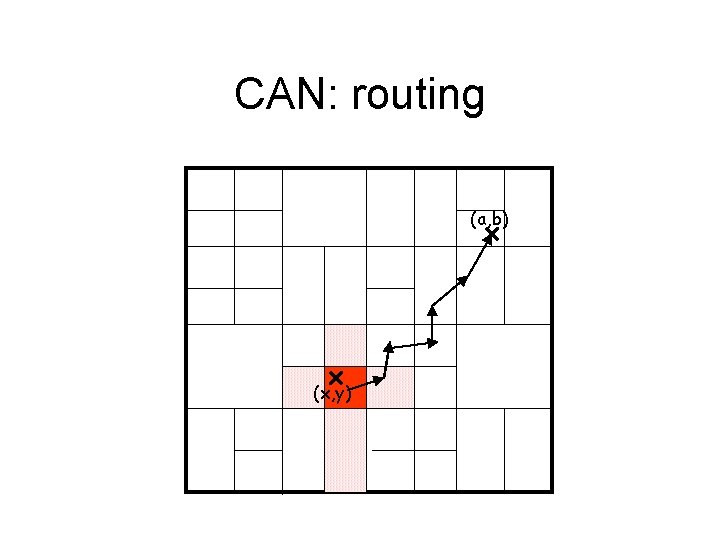

CAN: routing (a, b) (x, y)

CAN: routing • A node only maintains state for its immediate neighboring nodes
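A rough sketch of neighbor-only greedy forwarding is shown below. It assumes a hypothetical 4x4 grid of equal zones with one node per zone (no wrap-around), and forwards to the neighbor whose zone center is closest to the destination point; a neighbor that owns the destination is preferred so that points lying exactly on a zone boundary are still delivered. None of these details come from the slides.

```python
import math

SIDE = 4  # assumed layout: a 4x4 grid of equal zones, one node per zone

def center(cell):
    i, j = cell
    return ((i + 0.5) / SIDE, (j + 0.5) / SIDE)

def owns(cell, point):
    # The zone containing `point`, clamped so that 1.0 maps to the last cell.
    return cell == (min(int(point[0] * SIDE), SIDE - 1),
                    min(int(point[1] * SIDE), SIDE - 1))

def neighbors(cell):
    # A node only knows the zones that share an edge with its own.
    i, j = cell
    candidates = [(i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)]
    return [(a, b) for a, b in candidates if 0 <= a < SIDE and 0 <= b < SIDE]

def route(start, dest):
    """Return the list of zones visited from `start` to the owner of `dest`."""
    path = [start]
    while not owns(path[-1], dest):
        nxt = min(neighbors(path[-1]),
                  key=lambda c: (not owns(c, dest), math.dist(center(c), dest)))
        path.append(nxt)
    return path
```

For example, `route((0, 0), (0.9, 0.9))` walks from the bottom-left zone to the top-right zone using only per-node neighbor lists.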

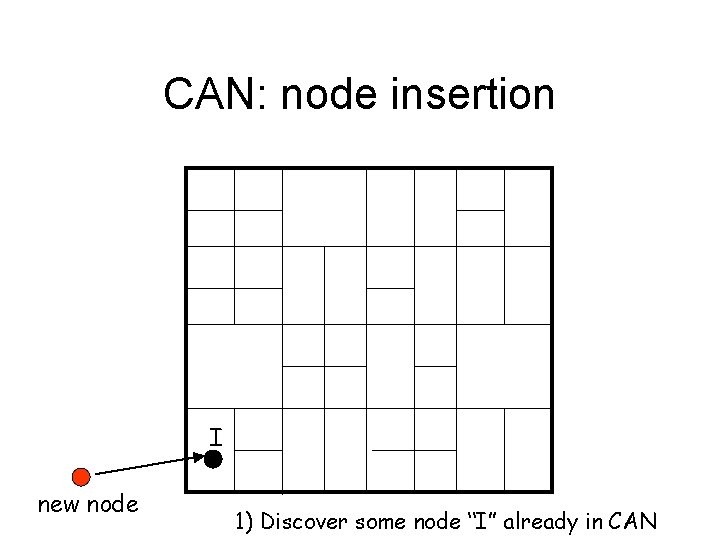

CAN: node insertion 1) The new node discovers some node “I” already in the CAN

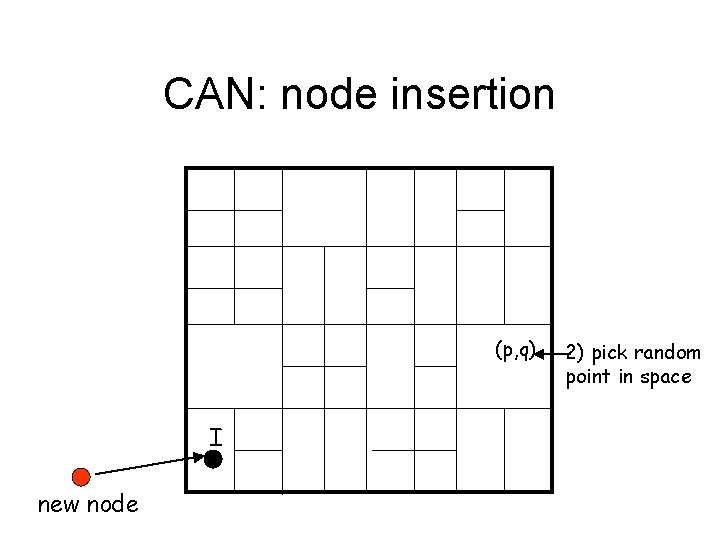

CAN: node insertion 2) The new node picks a random point (p, q) in the space

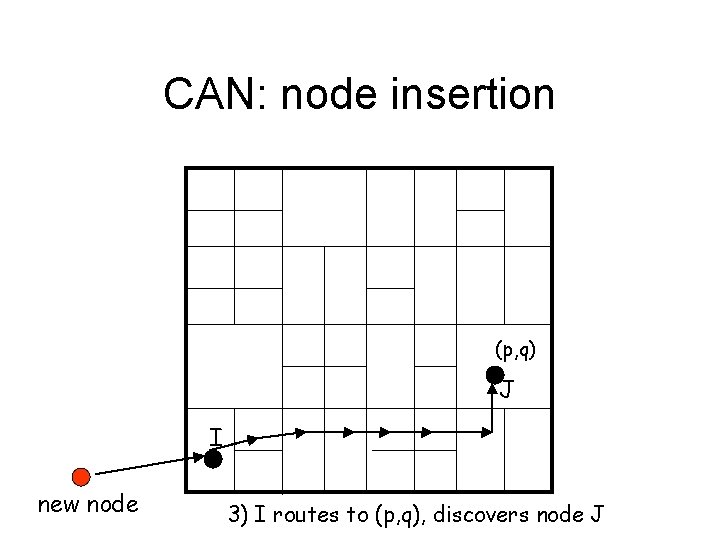

CAN: node insertion 3) I routes to (p, q) and discovers node J, the owner of that point

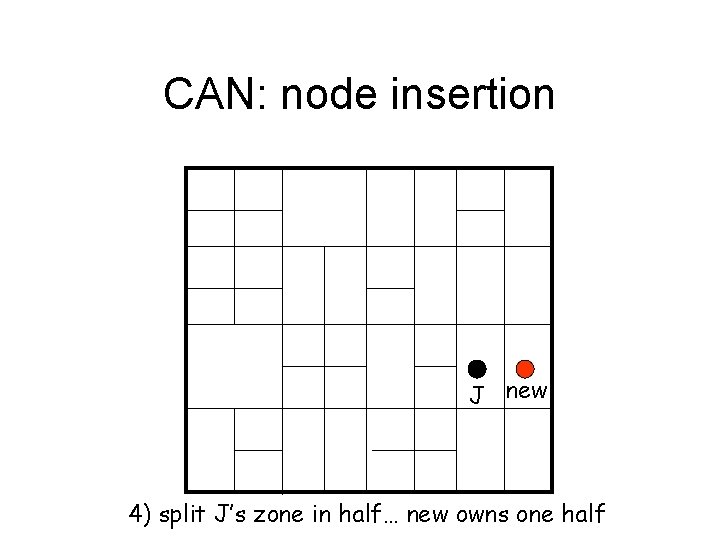

CAN: node insertion 4) Split J’s zone in half; the new node owns one half

CAN: node insertion Inserting a new node affects only a single other node and its immediate neighbors
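The split in step 4 might look like the sketch below. The `Zone` class, the rule of splitting along the longer side (x first on ties), and handing the upper half to the new node are assumptions made for illustration; the slides only say that J's zone is split in half.

```python
from dataclasses import dataclass, field

@dataclass
class Zone:
    x0: float
    x1: float
    y0: float
    y1: float
    # key -> (point, value); the point is kept so data can follow the split
    store: dict = field(default_factory=dict)

    def contains(self, point):
        x, y = point
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1

    def split(self):
        """Halve this zone and return the half handed to the joining node."""
        if (self.x1 - self.x0) >= (self.y1 - self.y0):
            mid = (self.x0 + self.x1) / 2
            new = Zone(mid, self.x1, self.y0, self.y1)
            self.x1 = mid
        else:
            mid = (self.y0 + self.y1) / 2
            new = Zone(self.x0, self.x1, mid, self.y1)
            self.y1 = mid
        # Hand over the (key, value) pairs whose points now lie in the new half.
        for key in [k for k, (p, _) in self.store.items() if new.contains(p)]:
            new.store[key] = self.store.pop(key)
        return new
```

Only J and the new node change their zones here; in a full system both would also refresh their neighbor lists, which is the "immediate neighbors" part of the cost.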

CAN: node failures • Need to repair the space – recover database • state updates • use replication, rebuild database from replicas – repair routing • takeover algorithm

CAN: takeover algorithm • Nodes periodically send update messages to their neighbors • An absent update message indicates a failure – Each node sends a recover message to the takeover node – The takeover node takes over the failed node's zone
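Detecting a failure from missing update messages can be sketched as a simple timeout check. The function name, the `last_update` bookkeeping, and the timeout value are hypothetical; the slides do not specify how long a node waits before declaring a neighbor failed.

```python
def failed_neighbors(last_update, now, timeout):
    """Return neighbors whose last update message is older than `timeout`.

    last_update maps a neighbor id to the time its latest update arrived.
    """
    return sorted(n for n, t in last_update.items() if now - t > timeout)
```

A node would run this check periodically and send a recover message for each neighbor it reports.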

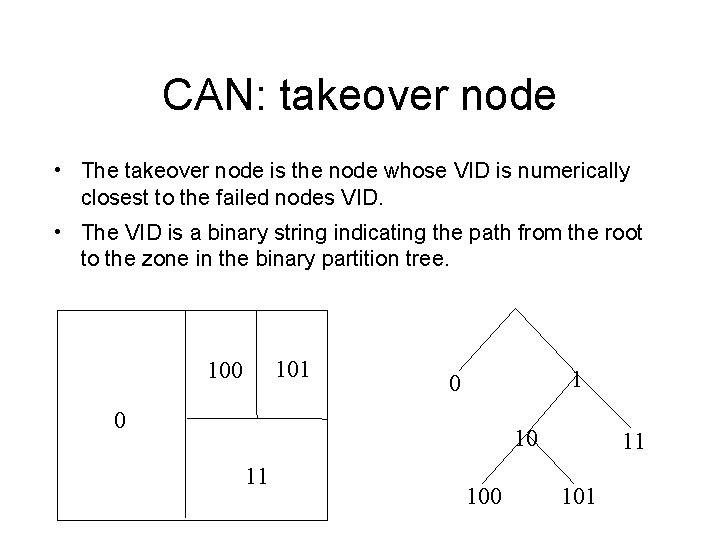

CAN: takeover node • The takeover node is the node whose VID is numerically closest to the failed node's VID. • The VID is a binary string indicating the path from the root to the zone in the binary partition tree. (figure: binary partition tree with VID labels 0, 1, 10, 11, 100, 101)

CAN: VID predecessor / successor • To handle multiple neighbors failing simultaneously, each node maintains its predecessor and successor in the VID ordering (idea borrowed from Chord). • This guarantees that the takeover node can always be found.

CAN: node failures Only the failed node’s immediate neighbors are required for recovery

Basic Design recap • Basic CAN – completely distributed – self-organizing – nodes only maintain state for their immediate neighbors


Design Improvement Objectives • Reduce latency of CAN routing – Reduce CAN path length – Reduce per-hop latency (IP length/latency per CAN hop) • Improve CAN robustness – data availability – routing

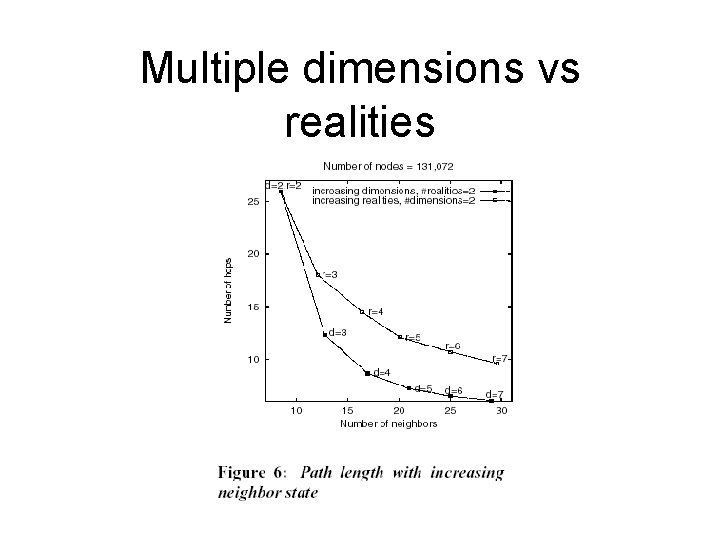

Design Improvement Tradeoffs • Routing performance and system robustness vs. increased per-node state and system complexity

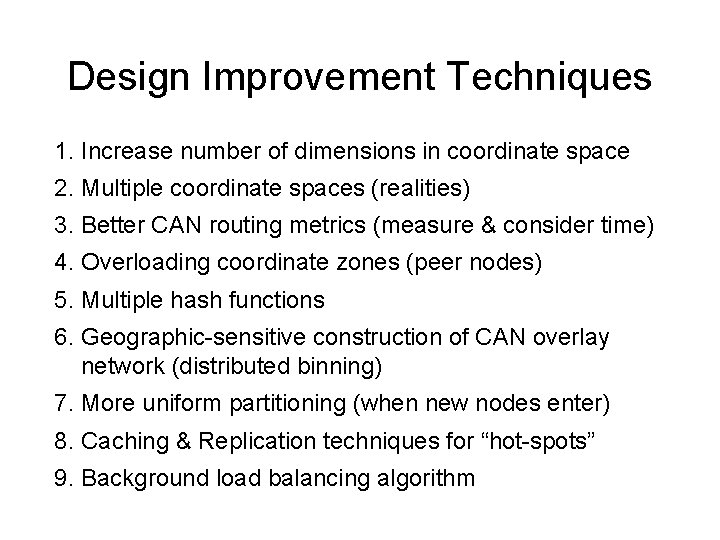

Design Improvement Techniques 1. Increase number of dimensions in coordinate space 2. Multiple coordinate spaces (realities) 3. Better CAN routing metrics (measure & consider time) 4. Overloading coordinate zones (peer nodes) 5. Multiple hash functions 6. Geographic-sensitive construction of CAN overlay network (distributed binning) 7. More uniform partitioning (when new nodes enter) 8. Caching & Replication techniques for “hot-spots” 9. Background load balancing algorithm

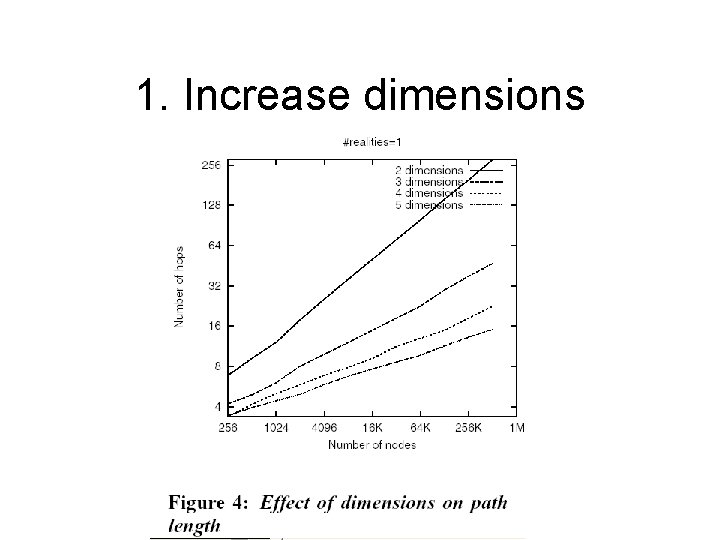

1. Increase dimensions

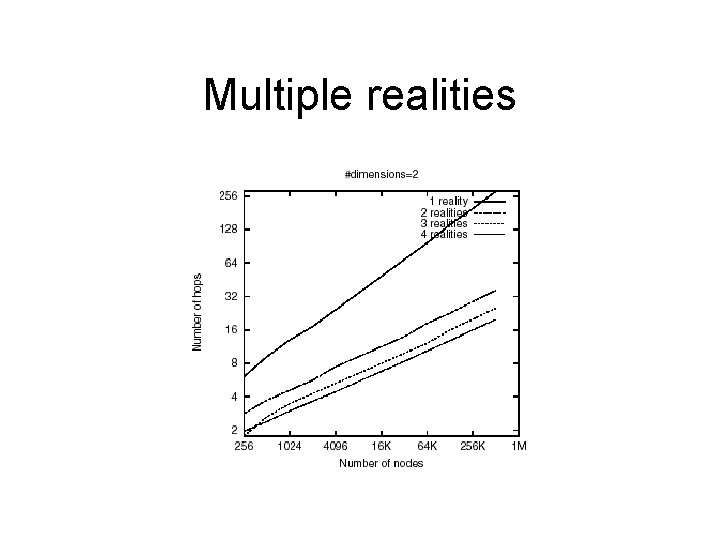

2. Multiple realities • Multiple (r) coordinate spaces • Each node maintains a different zone in each reality • Contents of hash table replicated on each reality • Routing chooses neighbor closest in any reality (can make large jumps towards target) • Advantages: – Greater data availability – Fewer hops

Multiple realities

Multiple dimensions vs realities

3. Time-sensitive routing • Each node measures round-trip time (RTT) to each of its neighbors • Routing message is forwarded to the neighbor with the maximum ratio of progress to RTT • Advantage: – reduces per-hop latency (25–40%, depending on the number of dimensions)
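The forwarding rule above can be sketched as follows. The representation of neighbors as `(name, coords, rtt_ms)` tuples and the use of Euclidean distance to measure progress are assumptions for illustration.

```python
import math

def pick_next_hop(current, dest, neighbors):
    """Pick the neighbor with the largest progress-to-RTT ratio.

    neighbors is a list of (name, coords, rtt_ms) tuples. Returns the chosen
    name, or None if no neighbor is closer to dest than the current node.
    """
    here = math.dist(current, dest)
    best, best_ratio = None, 0.0
    for name, coords, rtt in neighbors:
        progress = here - math.dist(coords, dest)
        if progress > 0 and progress / rtt > best_ratio:
            best, best_ratio = name, progress / rtt
    return best
```

With a far but slow neighbor and a nearer but fast one, the ratio can favor the fast one even though it makes less progress per hop.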

4. Zone overloading • Each node maintains a list of “peers” in the same zone • A zone is not split until it holds MAXPEERS nodes • For each neighboring zone, only the node with the lowest RTT is kept as a neighbor • Replication or splitting of data across peers • Advantages – Reduced per-hop latency – Reduced path length (because sharing zones has the same effect as reducing the total number of nodes) – Improved fault tolerance (a zone is vacant only when all of its nodes fail)

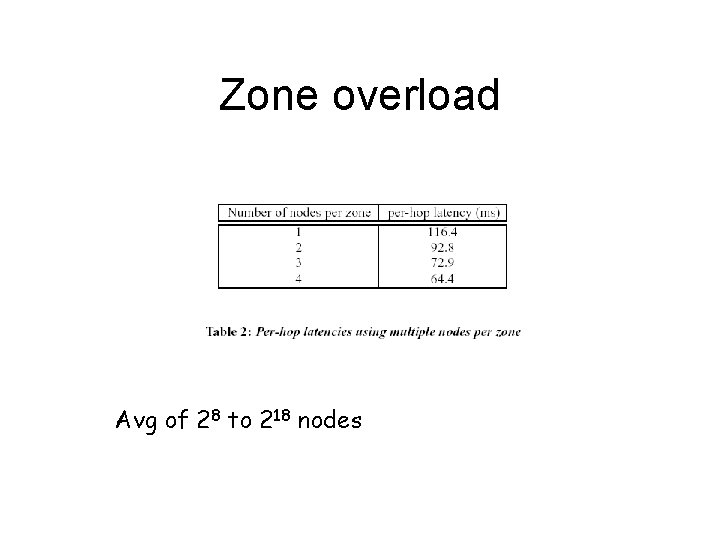

Zone overload (figure: average over 2^8 to 2^18 nodes)

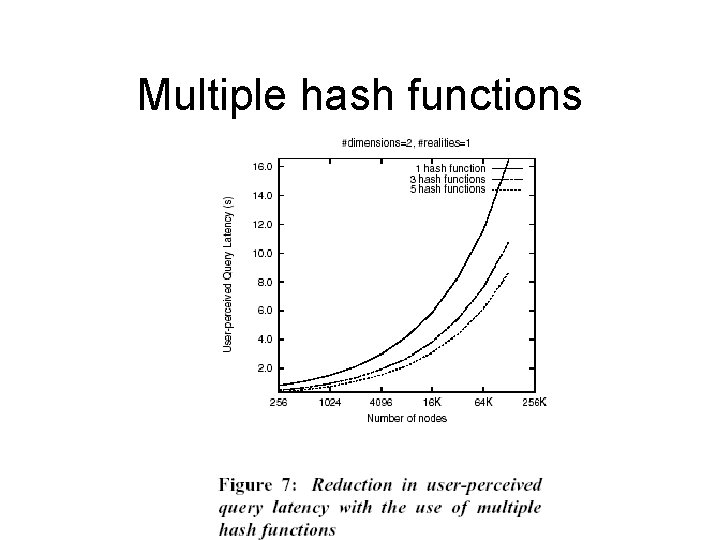

5. Multiple hash functions • (key, value) mapped to “k” different points (and nodes) • Parallel query execution on “k” paths • Or choose the closest one in the space • Advantages: – improve data availability – reduce path length
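A minimal sketch of mapping one key to k points and picking the closest one is given below. Deriving the k hash functions by prefixing an index to the key before SHA-256, and measuring closeness with Euclidean distance in a unit square, are assumptions made for this example.

```python
import hashlib
import math

def point_for(key, i):
    """Hypothetical i-th hash function: map a key to a point in [0, 1)^2."""
    digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
    x = int.from_bytes(digest[:6], "big") / 2**48
    y = int.from_bytes(digest[6:12], "big") / 2**48
    return (x, y)

def replica_points(key, k):
    # The (key, value) pair would be stored at every one of these points.
    return [point_for(key, i) for i in range(k)]

def closest_replica(key, k, requester):
    # Query only the replica point nearest to the requesting node.
    return min(replica_points(key, k), key=lambda p: math.dist(p, requester))
```

Sending the query to all `replica_points` in parallel instead would correspond to the parallel-execution variant.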

Multiple hash functions

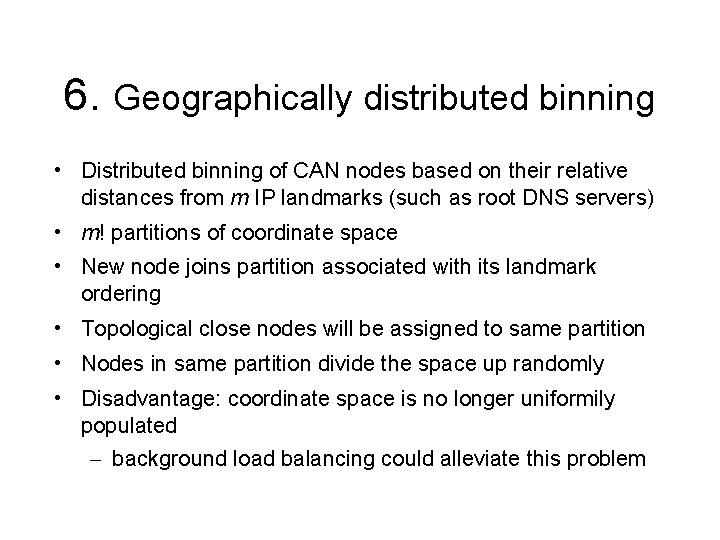

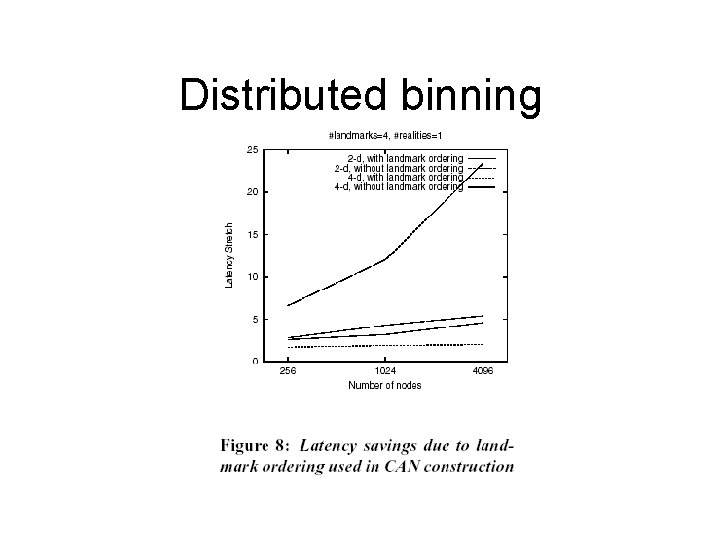

6. Geographically distributed binning • Distributed binning of CAN nodes based on their relative distances from m IP landmarks (such as root DNS servers) • m! partitions of coordinate space • New node joins partition associated with its landmark ordering • Topologically close nodes will be assigned to the same partition • Nodes in the same partition divide the space up randomly • Disadvantage: coordinate space is no longer uniformly populated – background load balancing could alleviate this problem
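The landmark ordering that selects one of the m! partitions can be sketched as below. The landmark names, the RTT values, and the choice to number partitions by lexicographic rank of the ordering are all hypothetical.

```python
from itertools import permutations

def landmark_ordering(rtts):
    """Order landmarks by increasing measured RTT (rtts: landmark -> ms)."""
    return tuple(sorted(rtts, key=rtts.get))

def bin_index(rtts):
    """Map a landmark ordering to one of the m! partitions (assumed numbering)."""
    all_orderings = sorted(permutations(sorted(rtts)))
    return all_orderings.index(landmark_ordering(rtts))
```

Two nodes with similar RTT profiles produce the same ordering and therefore land in the same partition.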

Distributed binning

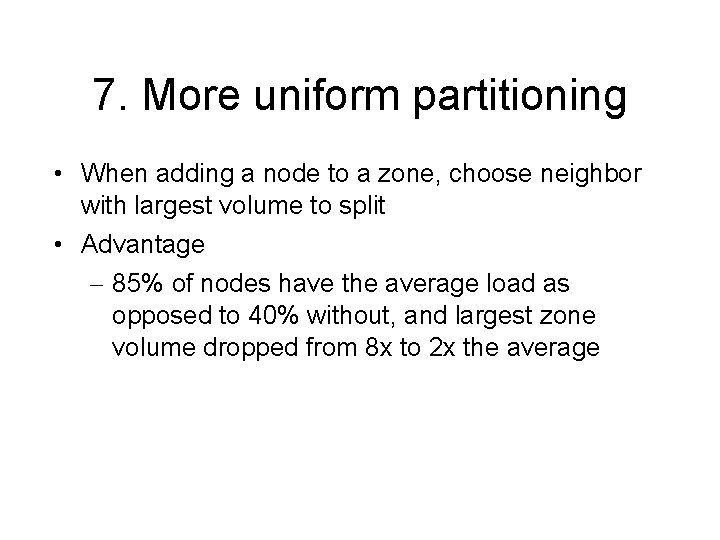

7. More uniform partitioning • When adding a node to a zone, choose the neighbor with the largest volume to split • Advantage – 85% of nodes have the average load, as opposed to 40% without, and the largest zone volume drops from 8x to 2x the average
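The volume comparison can be sketched as follows. Zones are represented here as tuples of per-dimension `(lo, hi)` bounds, which is an assumption, and the target zone itself is included in the comparison alongside its neighbors, which is also an assumption about how the rule is applied.

```python
def volume(zone):
    """Volume of a zone given as ((lo, hi), (lo, hi), ...) per dimension."""
    v = 1.0
    for lo, hi in zone:
        v *= hi - lo
    return v

def zone_to_split(target, neighbor_zones):
    # Split whichever of the target zone and its neighbors is largest.
    return max([target, *neighbor_zones], key=volume)
```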

8. Caching & Replication • A node can cache values of keys it has recently requested. • A node can push out “hot” keys to neighbors.

9. Load balancing • Merge and split zones to balance loads


Evaluation Metrics 1. Path length 2. Neighbor state 3. Latency 4. Volume 5. Routing fault tolerance 6. Data availability

CAN: scalability • For a uniformly partitioned space with n nodes and d dimensions – per node, number of neighbors is 2d – average routing path is O(d * n^(1/d)) hops • Simulations show that the above results hold in practice (Transit-Stub topology, generated with GT-ITM) • Can scale the network without increasing per-node state
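To get a feel for these scaling terms, the short sketch below evaluates them for concrete n and d. The constant factor 1/4 in the path length is taken from the average quoted in the CAN paper and should be treated as an assumption here; the function names are illustrative.

```python
def neighbors_per_node(d):
    # Two neighbors (one on each side) along each of the d dimensions.
    return 2 * d

def avg_path_hops(n, d):
    # Assumed average for a uniformly partitioned space: (d / 4) * n^(1/d).
    return (d / 4) * n ** (1 / d)
```

Raising d shortens paths sharply for a fixed n, at the cost of a linear increase in neighbor state.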

CAN: latency • latency stretch = (CAN routing delay) / (IP routing delay) • To reduce latency, apply design improvements: – increase dimensions (d) – multiple nodes per zone (peer nodes) (p) – multiple “realities”, i.e. coordinate spaces (r) – multiple hash functions (k) – use RTT-weighted routing – distributed binning – uniform partitioning – caching & replication (for “hot spots”)

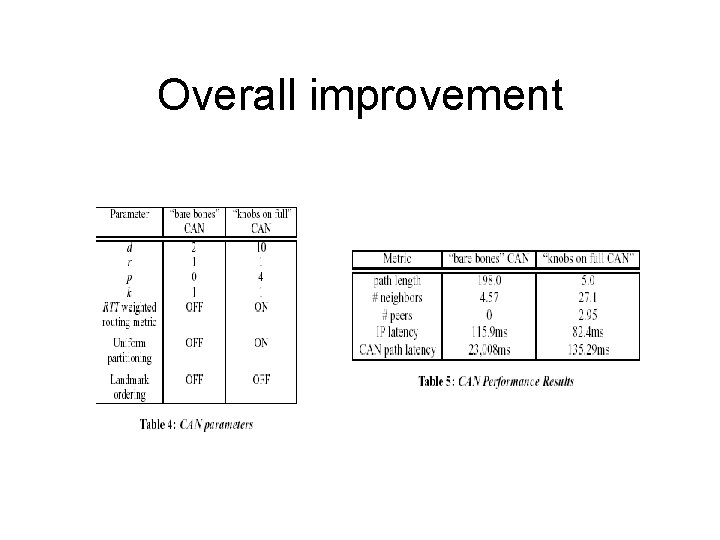

Overall improvement

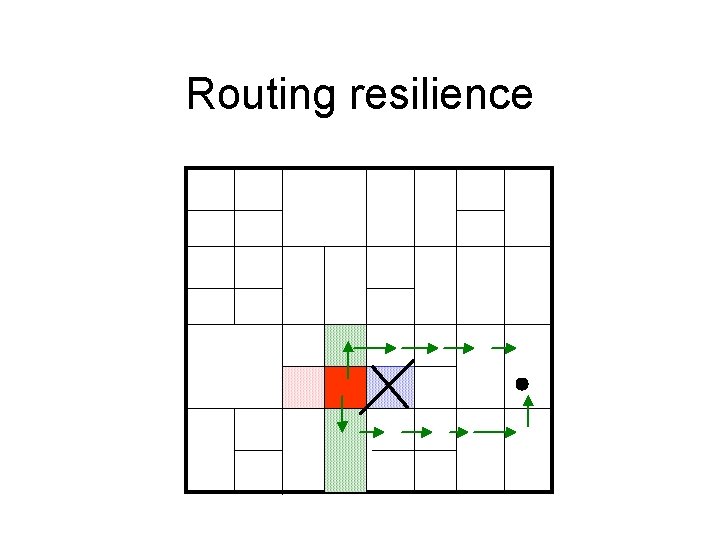

CAN: Robustness • Completely distributed – no single point of failure • Not exploring database recovery • Resilience of routing – can route around trouble

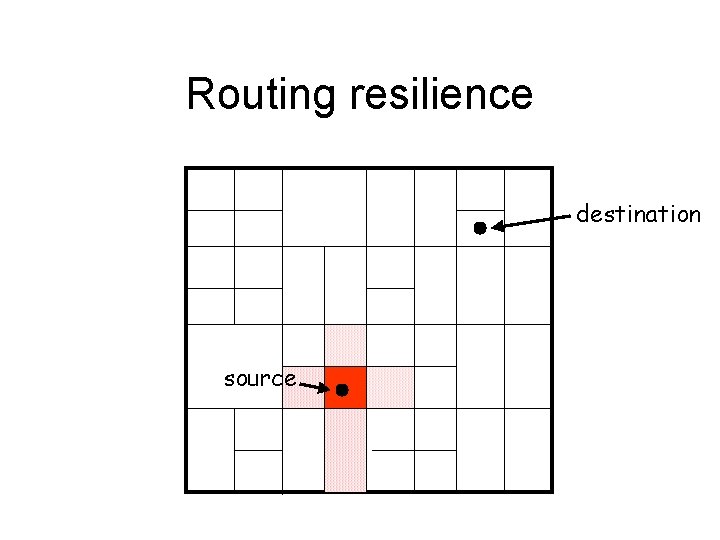

Routing resilience (figure: source and destination)

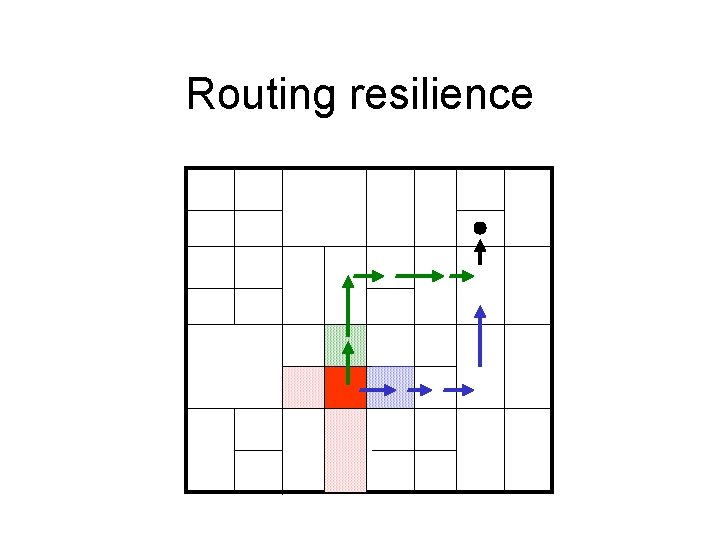

Routing resilience

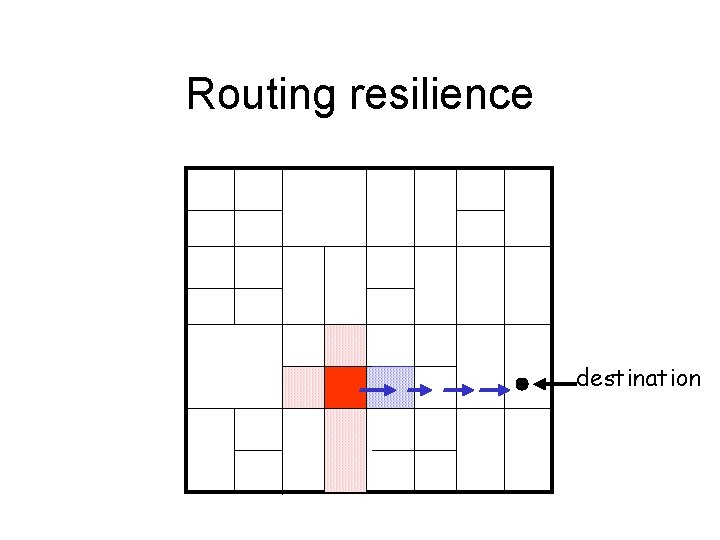

Routing resilience (figure: destination)

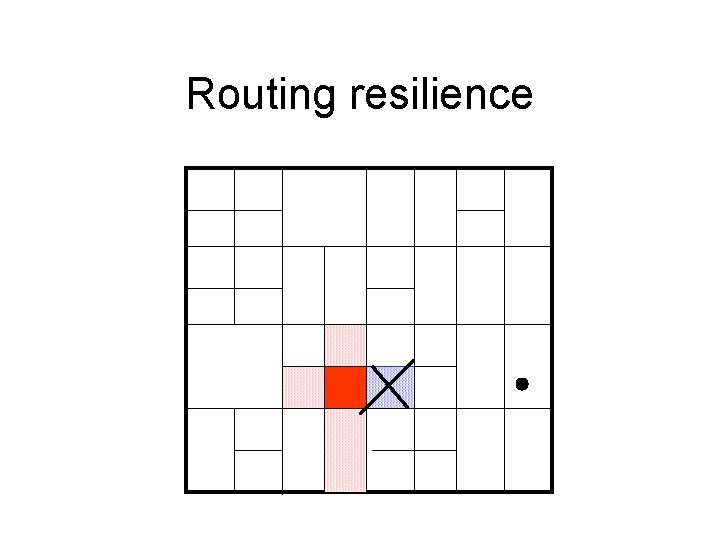

Routing resilience

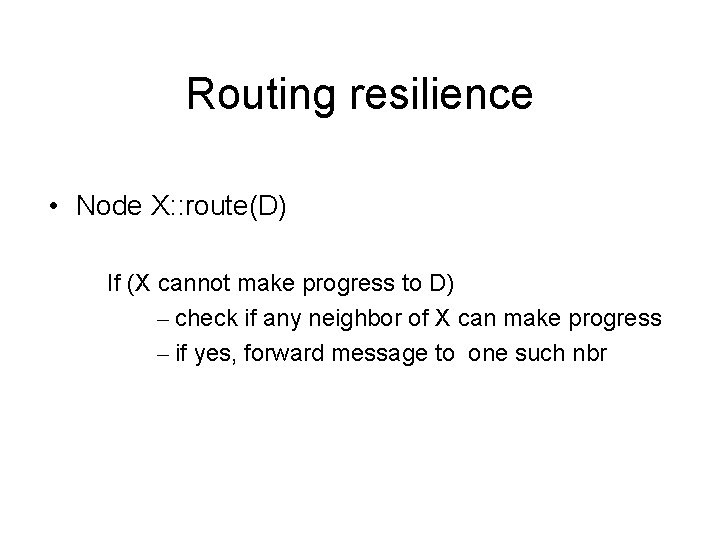

Routing resilience • Node X::route(D) • If X cannot make progress to D – check if any neighbor of X can make progress – if yes, forward message to one such neighbor
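The fallback rule above can be sketched as a single forwarding step. The data layout (`coords`, `nbrs`, `alive`), the Euclidean progress measure, and the reading of "a neighbor can make progress" as "it has a live neighbor closer to D than X is" are assumptions made for this illustration.

```python
import math

def route_step(x, dest, coords, nbrs, alive):
    """Return the next hop from node x toward the point dest, or None.

    coords: node -> (x, y); nbrs: node -> list of neighbor ids;
    alive: set of nodes that are currently reachable.
    """
    def dist(n):
        return math.dist(coords[n], dest)

    live = [n for n in nbrs[x] if n in alive]
    closer = [n for n in live if dist(n) < dist(x)]
    if closer:
        return min(closer, key=dist)  # normal greedy forwarding
    # X cannot make progress: look for a live neighbor that can.
    for n in live:
        if any(m in alive and m != x and dist(m) < dist(x) for m in nbrs[n]):
            return n
    return None
```

In the usage below, X's only closer neighbor A has failed, so the message detours through B, whose neighbor C is closer to the destination than X.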

Routing resilience


Related Algorithms • Distance Vector (DV) and Link State (LS) in IP routing – require widespread dissemination of topology – not well suited to a dynamic topology such as file sharing • Hierarchical routing algorithms – too centralized, single points of stress • Other DHTs – Plaxton, Tapestry, Pastry, Chord

Related Systems • DNS • OceanStore (uses Tapestry) • Publius: Web-publishing system with high anonymity • P2P file sharing systems – Napster, Gnutella, KaZaA, Freenet


Conclusion: Purpose • CAN – an Internet-scale hash table – potential building block in Internet applications

Conclusion: Scalability • O(d) per-node state • O(d * n^(1/d)) path length • For d = (log n)/2, path length and per-node state are O(log n), like other DHTs
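The O(log n) claim follows from a short substitution, worked out below. The derivation assumes the logarithm is base 2; any other base only changes the constant.

```latex
\begin{align*}
d = \tfrac{1}{2}\log_2 n
  \;&\Longrightarrow\;
  n^{1/d} = n^{2/\log_2 n}
          = \left(2^{\log_2 n}\right)^{2/\log_2 n}
          = 2^{2} = 4, \\
\text{path length} &= O\!\left(d \cdot n^{1/d}\right)
                    = O\!\left(4 \cdot \tfrac{1}{2}\log_2 n\right)
                    = O(\log n), \\
\text{per-node state} &= O(d) = O(\log n).
\end{align*}
```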

Conclusion: Latency • With the main design improvements, CAN routing latency is less than twice the IP path latency

Conclusion: Robustness • Decentralized, can route around trouble • With certain design improvements (replication), high data availability

Weaknesses / Future Work • Security – Denial of service attacks • hard to combat because a malicious node can act as a malicious server as well as a malicious client • Mutable content • Search
