Using Active Learning to Label Large Email Corpora

Ted Markowitz, Pace University CSIS DPS & IBM T. J. Watson Research Ctr., 05/04/07

Quick History
• Email-related research suggested by Dr. Chuck Tappert's work with MS student Ian Stuart
• Decided to approach IBM Research's SpamGuru anti-spam group for joint research
• Started P/T onsite at IBM in 11/05
• Dr. Richard Segal of IBM Research generously agreed to act as adjunct advisor

Research Motivation
• Assumption: Ongoing training and testing of anti-spam tools require large, fresh databases (corpora) of labeled (spam vs. good) messages
• Problem: How do we accurately label large numbers of examples, potentially millions, without manually examining every one?

Building Email Corpora
• Accurate training & testing of anti-spam tools require:
– truly random, i.e., unbiased, samples
– sufficient # of examples to measure low (< 0.1%) error rates
– reasonable distributions of spam vs. good mail examples which represent the target operating environment
• However, most existing email testing corpora are:
– Rather small (just a few thousand messages)
– Very narrowly focused in type and content
– Aging rapidly and growing more and more stale over time

Building Email Corpora (cont.)
• Email and spam are constantly evolving
• Building large, current and diverse bodies of examples is time-consuming and expensive
• Result: Just a few relatively small and aging email corpora are used over and over again

One Potential Approach
• Machine Learning (ML) methods can help to build corpus labelers which learn how to label
• Research in semi-supervised learning (SSL) has shown it's possible to accurately learn by bootstrapping, i.e., using relatively few labeled examples and lots of unlabeled examples

Active Learning
• Active Learning (AL) is one form of SSL
• While some ML is passive (e.g., the learner is only given labeled examples), AL is proactive
• The Active Learner component directs attention to the particular areas it wants information about and asks a teacher who knows all the labels

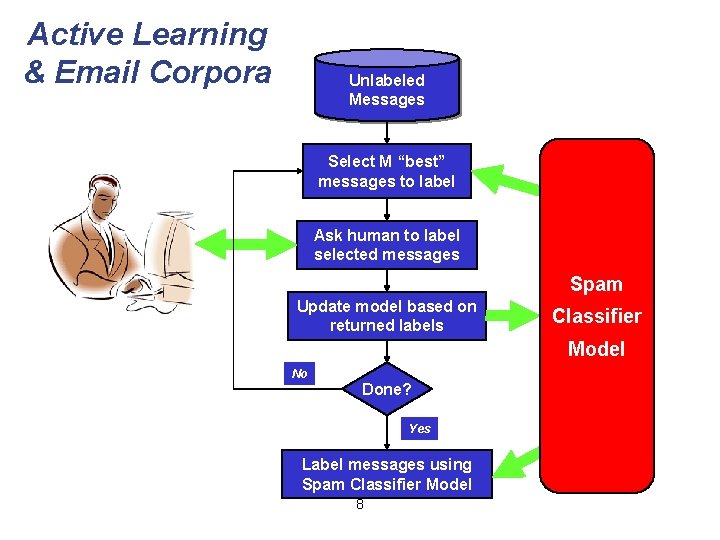

Active Learning & Email Corpora
[Flow diagram: Unlabeled Messages → Select M "best" messages to label → Ask human to label selected messages → Update Spam Classifier Model based on returned labels → Done? If No, return to selection; if Yes, label messages using the Spam Classifier Model]
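The loop in the diagram can be sketched in a few lines of Python. This is only an illustrative toy, not the SpamGuru labeler: each message is reduced to a single hypothetical integer score, the "model" is a threshold, the `teacher` callable stands in for the human, and "Done?" is assumed to be a fixed budget of rounds. All function names here are made up for the example.

```python
from typing import Callable, List, Sequence, Tuple

Message = int  # assumption: one integer "spamminess" score per message
Label = int    # 1 = spam, 0 = good


def fit_threshold(labeled: Sequence[Tuple[Message, Label]]) -> float:
    """Toy model: split halfway between the highest-scoring known good
    message and the lowest-scoring known spam message.
    Requires at least one labeled example of each class."""
    max_good = max(x for x, y in labeled if y == 0)
    min_spam = min(x for x, y in labeled if y == 1)
    return (max_good + min_spam) / 2


def select_best(unlabeled: Sequence[Message], threshold: float, m: int) -> List[Message]:
    """Select the M messages the toy model is least sure about,
    i.e. those closest to the current threshold."""
    return sorted(unlabeled, key=lambda x: abs(x - threshold))[:m]


def label_corpus(
    pool: Sequence[Message],
    seed: Sequence[Tuple[Message, Label]],
    teacher: Callable[[Message], Label],
    m: int,
    rounds: int,
):
    labeled = list(seed)        # a few labeled examples to bootstrap from
    unlabeled = list(pool)
    threshold = fit_threshold(labeled)
    for _ in range(rounds):     # "Done?" is a fixed round budget in this sketch
        if not unlabeled:
            break
        batch = select_best(unlabeled, threshold, m)
        for msg in batch:       # ask the teacher to label the selected messages
            labeled.append((msg, teacher(msg)))
        chosen = set(batch)
        unlabeled = [x for x in unlabeled if x not in chosen]
        threshold = fit_threshold(labeled)  # update model from returned labels
    # Label everything that is left using the learned model.
    machine = [(msg, int(msg > threshold)) for msg in unlabeled]
    return threshold, labeled, machine
```

With a pool of scores 1 to 98, a seed of one good and one spam message, and a simulated teacher that calls anything above 30 spam, four rounds of four queries are enough for this toy model to label the remaining messages consistently with the teacher.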

Active Learning (cont.)
• Basic Challenge: Minimize the total cost of teacher queries required to achieve a target error rate; often this simply means asking the fewest queries
• Research Question: How does one selectively choose an optimal set of queries for the teacher during each update cycle?

Selective Sampling
• Uncertainty Sampling† (US) is one selective sampling technique for choosing the most informative examples
• US is based on the premise that the learner learns fastest by asking first about the examples it is itself most uncertain about
† "A Sequential Algorithm for Training Text Classifiers", D. D. Lewis & W. A. Gale, ACM SIGIR '94
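As a rough illustration of the idea, the sketch below ranks messages by how close the classifier's estimated spam probability is to 0.5. The scoring formula, the function names, and the message IDs are assumptions made for this example; the slides do not say which uncertainty measure the labelers use.

```python
from typing import Dict, List


def uncertainty(p_spam: float) -> float:
    """Assumed measure: 1.0 when p_spam is 0.5 (the model cannot decide),
    falling to 0.0 when p_spam is 0 or 1 (the model is certain)."""
    return 1.0 - abs(2.0 * p_spam - 1.0)


def most_uncertain(scores: Dict[str, float], m: int) -> List[str]:
    """Return the IDs of the m messages with the highest uncertainty.
    Every message is scored, which is why plain US gets costly on large corpora."""
    return sorted(scores, key=lambda msg_id: uncertainty(scores[msg_id]), reverse=True)[:m]


# Hypothetical spam probabilities from the current model.
scores = {"a": 0.02, "b": 0.48, "c": 0.97, "d": 0.60, "e": 0.50}
queries = most_uncertain(scores, 2)  # the two messages to show the teacher
```

Here messages "e" and "b" would be sent to the teacher first, because the model's estimates for them sit closest to the decision boundary.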

Uncertainty Sampling (cont.)
• Minimizing total uncertainty over all examples is computationally expensive: O(n)
• Can you reduce the # of questions asked in each cycle and still learn accurately?
• Is picking just the most uncertain examples always the best learning strategy?
• Can other knowledge be brought to bear in selecting the best questions?

Research Hypothesis
• Hypothesis: It should be possible to achieve close to full US accuracy while asking fewer, better questions
• Focused on development of Approximate Uncertainty Sampling (AUS) labelers
– Compromise between speed of learning, # of questions asked & computational resources
– Computational complexity is O(m log(n)) vs. O(n) for original Uncertainty Sampling algorithm
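The slides do not describe how AUS reaches O(m log(n)), so the following is not the AUS algorithm. It is only one generic way to avoid rescoring all n messages in every cycle: keep the messages in a priority queue ordered by their last-known (possibly stale) uncertainty, and rescore just a small multiple of m candidates from the top. The class name, the `oversample` parameter, and the uncertainty formula are all assumptions for illustration.

```python
import heapq
from typing import Callable, Hashable, Iterable, List


def uncertainty(p_spam: float) -> float:
    """Assumed measure: 1.0 at p_spam = 0.5, 0.0 at p_spam = 0 or 1."""
    return 1.0 - abs(2.0 * p_spam - 1.0)


class LazyUncertaintyQueue:
    """Hypothetical helper: messages ordered by stale uncertainty scores."""

    def __init__(self, ids: Iterable[Hashable], score: Callable[[Hashable], float]):
        # One full scoring pass up front; heapq is a min-heap, so negate.
        self._heap = [(-uncertainty(score(i)), i) for i in ids]
        heapq.heapify(self._heap)

    def __len__(self) -> int:
        return len(self._heap)

    def select(self, m: int, score: Callable[[Hashable], float], oversample: int = 2) -> List[Hashable]:
        """Pop the top m * oversample candidates, rescore only those with the
        current model, return the m most uncertain, and push the rest back.
        Each pop or push costs O(log n), so one call is O(m log n) heap work
        when oversample is a small constant."""
        k = min(len(self._heap), m * oversample)
        candidates = [heapq.heappop(self._heap)[1] for _ in range(k)]
        fresh = sorted(((uncertainty(score(i)), i) for i in candidates), reverse=True)
        for u, i in fresh[m:]:
            heapq.heappush(self._heap, (-u, i))
        return [i for _, i in fresh[:m]]
```

The trade-off is that a message whose uncertainty rose sharply after a model update can be missed if its stale score keeps it deep in the queue, which is one sense in which such a scheme is only approximate.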

Research Approach
1. Construct competing AL/US-based labelers
2. Compare them by:
– Accuracy (% correct, FPs & FNs)
– # of teacher queries required to hit error rates
– Relative sample sizes
– Overall performance & resource usage
3. Select best labelers and refine them
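The accuracy part of such a comparison could be computed along these lines. The sketch assumes the usual anti-spam convention that a false positive is good mail labeled as spam and a false negative is spam labeled as good; the function name and the 1 = spam, 0 = good encoding are illustrative choices, not taken from the testbench.

```python
from typing import Dict, Sequence


def compare_labels(predicted: Sequence[int], gold: Sequence[int]) -> Dict[str, float]:
    """Compare a labeler's output against gold-standard labels (1 = spam, 0 = good)."""
    if len(predicted) != len(gold):
        raise ValueError("predicted and gold must have the same length")
    if not gold:
        raise ValueError("cannot score an empty corpus")
    pairs = list(zip(predicted, gold))
    false_pos = sum(1 for p, g in pairs if p == 1 and g == 0)  # good mail called spam
    false_neg = sum(1 for p, g in pairs if p == 0 and g == 1)  # spam called good
    correct = sum(1 for p, g in pairs if p == g)
    return {
        "accuracy": correct / len(pairs),
        "false_positives": false_pos,
        "false_negatives": false_neg,
    }
```

Running each candidate labeler over the same gold-labeled corpus and recording these numbers alongside the count of teacher queries gives a like-for-like basis for step 2.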

Research Infrastructure
• Built a Java labeler testbench for comparing labeler variations on the IBM SpamGuru codebase
• Developed and tested several Uncertainty Sampling-based labelers
• Used the gold-standard, labeled 92K-message TREC 2005 Enron mail corpus to simulate the teacher
• Built a GUI front-end (CSI) to support human teacher interaction with labelers

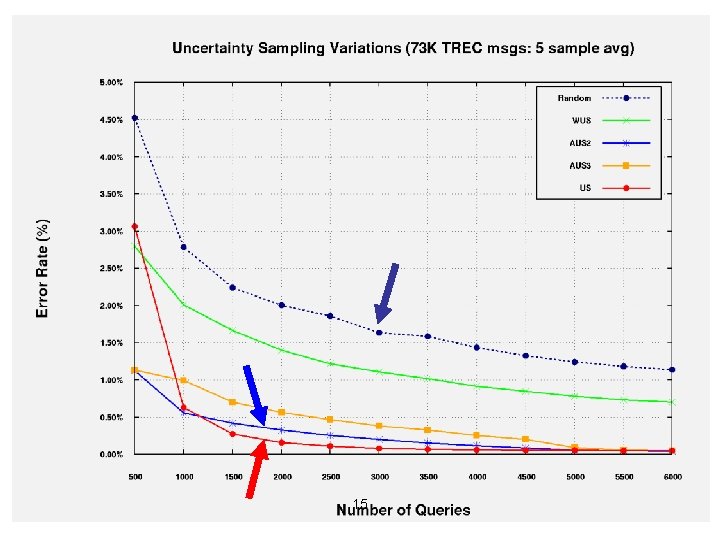


Benefits of AUS
• Nearly as effective as vanilla US, but with lower computational complexity: O(m log(n))
• Reduced computational cost allows AUS to be applied to labeling larger datasets
• AUS makes it possible to update the learned model more frequently
• AUS is applicable to any AL/US-based solution, not just email corpus labeling

Ongoing Work
• Determine why selective sampling of queries using simple unsupervised clustering (AUS 3 & AUS 4) didn't produce better results
• Develop enhanced clustering versions to attempt to improve AUS performance

