The Anatomy of a Large-Scale Hypertextual Web Search Engine

Sergey Brin, Lawrence Page
CS Department, Stanford University

Presented by Md. Abdus Salam
CSE Department, University of Texas at Arlington

Introduction

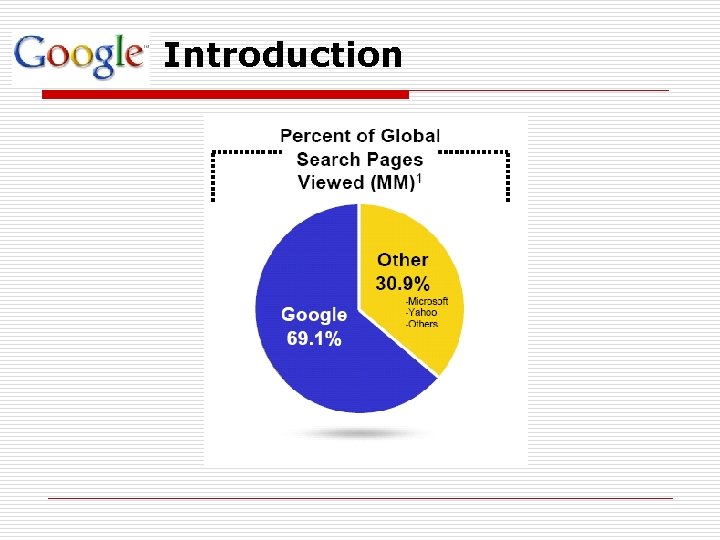


Introduction

- Google's mission: to organize the world's information and make it universally accessible and useful
- Scaling with the web
  - Improved search quality
  - Academic search engine research

System Features

- Google uses the link structure of the Web to calculate a quality ranking for each web page, called PageRank.
  - PageRank is a trademark of Google. The PageRank process has been patented.
- Google utilizes links to improve search results.

PageRank

- PageRank is a link analysis algorithm that assigns a numerical weighting to each Web page, with the purpose of "measuring" its relative importance.
- It is based on the map of hyperlinks.
- It is an excellent way to prioritize the results of web keyword searches.

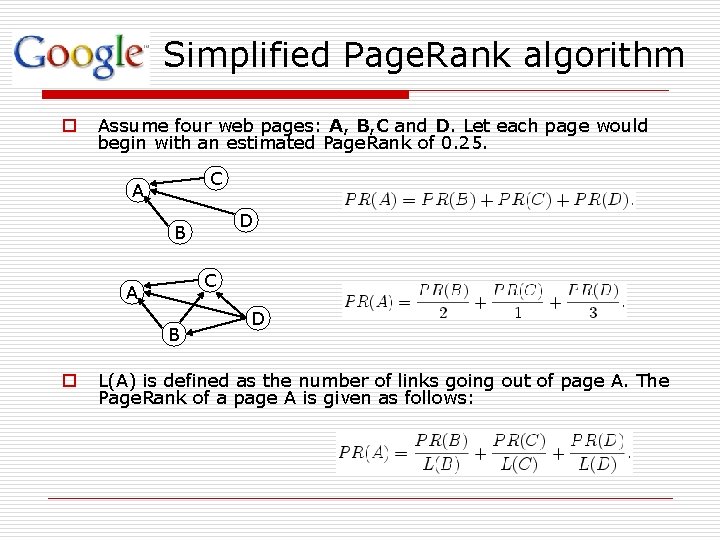

Simplified PageRank algorithm

- Assume four web pages: A, B, C and D. Let each page begin with an estimated PageRank of 0.25.
- L(A) is defined as the number of links going out of page A. The PageRank of a page A is given as follows:

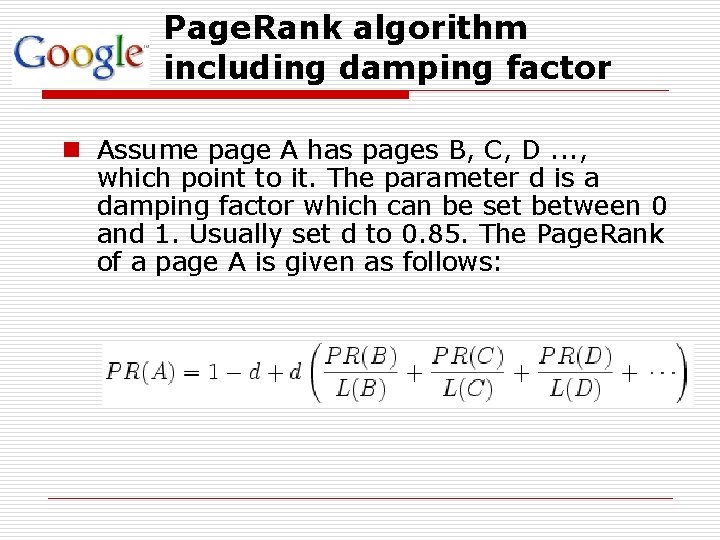

PageRank algorithm including damping factor

- Assume page A has pages B, C, D, ..., which point to it. The parameter d is a damping factor which can be set between 0 and 1; it is usually set to 0.85. The PageRank of a page A is given as follows:
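For reference, this is the formula as stated in Brin and Page's paper, rewritten here with the L(·) notation used on the previous slide for outbound link counts (the paper writes C(·) and names the inbound pages T_1, ..., T_n). It is a reconstruction, since the slide's own rendering of the formula is not preserved:

$$
PR(A) = (1 - d) + d \left( \frac{PR(B)}{L(B)} + \frac{PR(C)}{L(C)} + \frac{PR(D)}{L(D)} + \cdots \right)
$$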

Intuitive Justification

- A "random surfer" is given a web page at random and keeps clicking on links, never hitting "back", but eventually gets bored and starts on another random page.
  - The probability that the random surfer visits a page is its PageRank.
  - The damping factor d is the probability that, at each page, the surfer keeps following links; with probability 1 - d the surfer gets bored and requests another random page.
- A page can have a high PageRank
  - if there are many pages that point to it,
  - or if there are some pages that point to it and have a high PageRank.
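The random-surfer model can be made concrete with a small iterative computation. The following is a minimal sketch, not the production algorithm: it repeatedly applies the paper's formula PR(p) = (1 - d) + d * sum(PR(q) / L(q)) over the pages q that link to p. The four-page link graph, the function name `pagerank`, and the fixed iteration count are assumptions made for illustration, and the sketch assumes every page has at least one outgoing link.

```python
def pagerank(links, d=0.85, iterations=50):
    """Iteratively apply PR(p) = (1 - d) + d * sum(PR(q) / L(q)).

    links maps each page to the list of pages it links to.
    Assumption: every page has at least one outgoing link.
    """
    pages = list(links)
    # Start from a uniform estimate (0.25 each for four pages).
    rank = {page: 1.0 / len(pages) for page in pages}
    for _ in range(iterations):
        new_rank = {}
        for page in pages:
            # Each linking page q passes on PR(q) split evenly over its L(q) links.
            inbound = sum(
                rank[src] / len(out) for src, out in links.items() if page in out
            )
            new_rank[page] = (1 - d) + d * inbound
        rank = new_rank
    return rank


# Hypothetical link graph: A links to B and C, B to C, C to A, D to C.
links = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(links)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this graph C collects links from three pages and ends up ranked highest, while D, which nothing links to, keeps only the baseline value 1 - d.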

Anchor Text

- <A href="http://www.yahoo.com/">Yahoo!</A>
- The text of a hyperlink (anchor text) is associated not only with the page that the link is on, but also with the page the link points to.
  - Anchors often provide more accurate descriptions of web pages than the pages themselves.
  - Anchors may exist for documents which cannot be indexed by a text-based search engine, such as images, programs, and databases.
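As an illustration of associating anchor text with the page a link points to, here is a minimal sketch using Python's standard `html.parser`. The class name `AnchorCollector` and the base URL are hypothetical; the original system used its own parser, so this only shows the idea.

```python
from collections import defaultdict
from html.parser import HTMLParser
from urllib.parse import urljoin


class AnchorCollector(HTMLParser):
    """Collect anchor text keyed by the URL each link points to."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.anchors = defaultdict(list)  # target URL -> list of anchor texts
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self._href = urljoin(self.base_url, href)
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.anchors[self._href].append("".join(self._text).strip())
            self._href = None


collector = AnchorCollector("http://example.com/index.html")
collector.feed('<p>Try <A href="http://www.yahoo.com/">Yahoo!</A> today.</p>')
collector.close()
print(dict(collector.anchors))
```

The text "Yahoo!" is stored under the target URL, so it can describe that page even if the page itself was never crawled.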

Other Features

- Google has location information for all hits.
- Google keeps track of some visual presentation details such as the font size of words.
  - Words in a larger or bolder font are weighted higher than other words.
- The full raw HTML of pages is available in a repository.

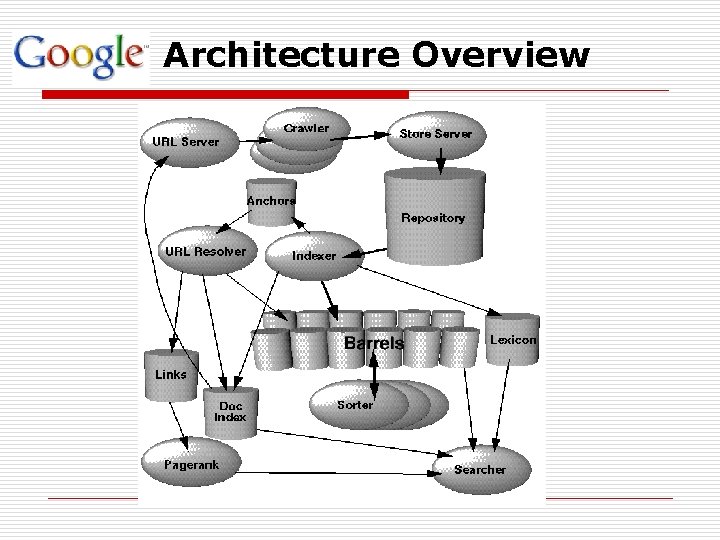

Architecture Overview

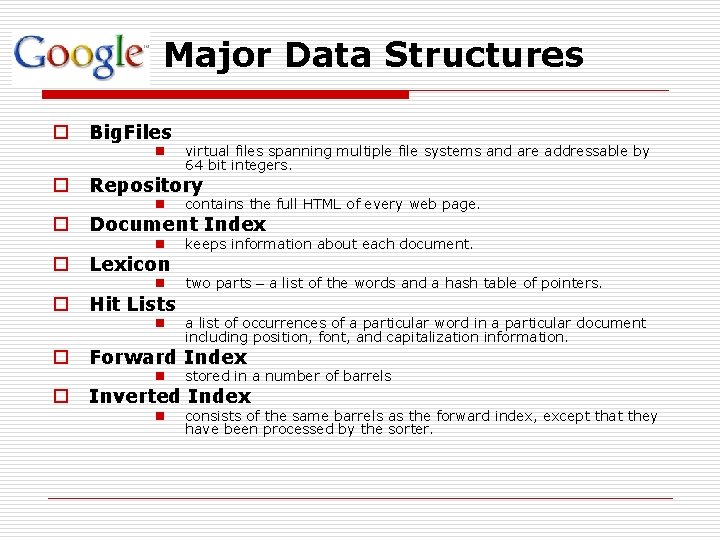

Major Data Structures

- BigFiles
  - virtual files spanning multiple file systems, addressable by 64-bit integers.
- Repository
  - contains the full HTML of every web page.
- Document Index
  - keeps information about each document.
- Lexicon
  - two parts: a list of the words and a hash table of pointers.
- Hit Lists
  - a list of occurrences of a particular word in a particular document, including position, font, and capitalization information.
- Forward Index
  - stored in a number of barrels.
- Inverted Index
  - consists of the same barrels as the forward index, except that they have been processed by the sorter.

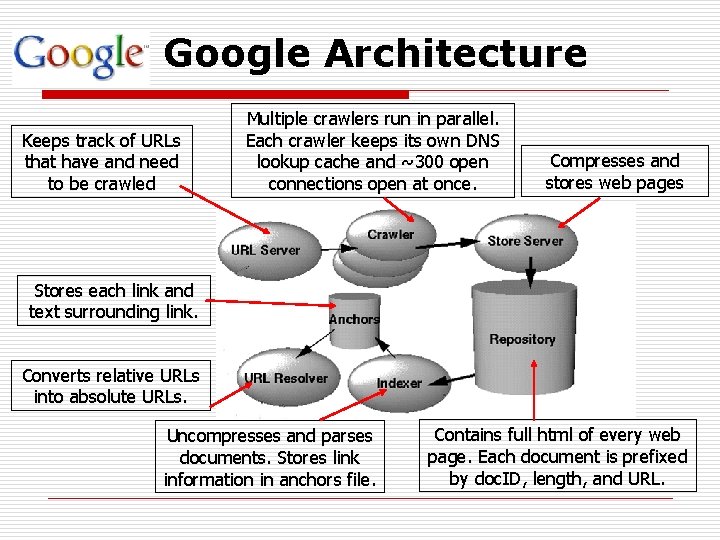

Google Architecture

- URL Server: keeps track of URLs that have been crawled and that still need to be crawled.
- Crawlers: multiple crawlers run in parallel. Each crawler keeps its own DNS lookup cache and ~300 connections open at once.
- Store Server: compresses and stores web pages.
- Anchors: stores each link and the text surrounding the link.
- URL Resolver: converts relative URLs into absolute URLs.
- Indexer: uncompresses and parses documents. Stores link information in the anchors file.
- Repository: contains the full HTML of every web page. Each document is prefixed by docID, length, and URL.

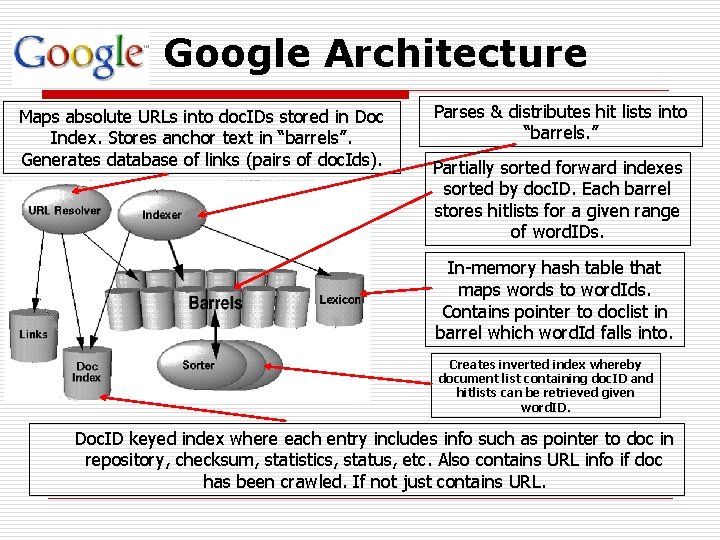

Google Architecture

- URL Resolver: maps absolute URLs into docIDs stored in the Doc Index. Stores anchor text in "barrels". Generates a database of links (pairs of docIDs).
- Indexer: parses documents and distributes hit lists into "barrels".
- Barrels: partially sorted forward indexes, sorted by docID. Each barrel stores hit lists for a given range of wordIDs.
- Lexicon: in-memory hash table that maps words to wordIDs. Contains a pointer to the doclist in the barrel that the wordID falls into.
- Sorter: creates the inverted index, whereby the document list containing docIDs and hit lists can be retrieved given a wordID.
- Doc Index: docID-keyed index where each entry includes information such as a pointer to the document in the repository, checksum, statistics, status, etc. It also contains URL info if the document has been crawled; if not, it contains just the URL.
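The URL resolution step described above (relative URL to absolute URL to docID, plus a database of docID pairs) can be sketched as follows. The class `UrlResolver` and its in-memory dictionary are hypothetical simplifications; the actual system stored this data on disk.

```python
from urllib.parse import urljoin


class UrlResolver:
    """Toy resolver: assigns docIDs to URLs and records links as docID pairs."""

    def __init__(self):
        self.doc_ids = {}  # absolute URL -> docID
        self.links = []    # (source docID, target docID) pairs

    def doc_id(self, url):
        # A new URL gets the next unused integer as its docID.
        return self.doc_ids.setdefault(url, len(self.doc_ids))

    def add_link(self, source_url, href):
        target_url = urljoin(source_url, href)  # relative -> absolute
        pair = (self.doc_id(source_url), self.doc_id(target_url))
        self.links.append(pair)
        return pair


resolver = UrlResolver()
print(resolver.add_link("http://a.example/x/index.html", "../y.html"))
print(resolver.add_link("http://a.example/y.html", "http://www.yahoo.com/"))
print(resolver.doc_ids)
```

A link database of this shape is what a PageRank computation needs as input: only the pairs of docIDs matter, not the URL strings.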

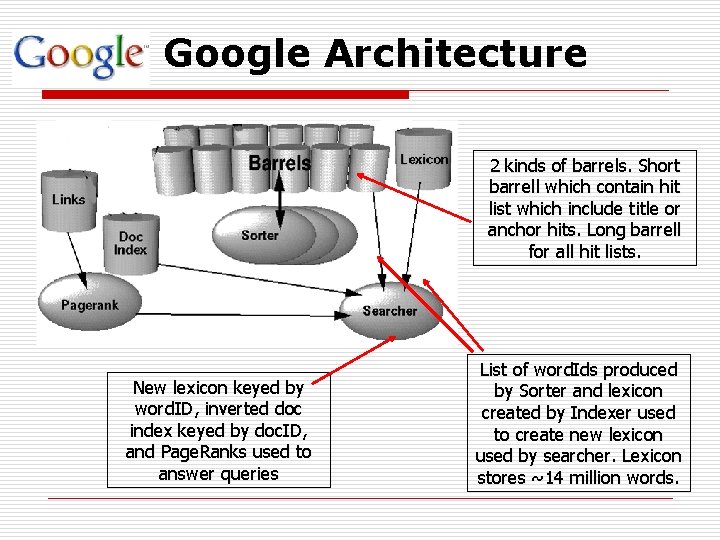

Google Architecture

- Barrels: there are two kinds of barrels. Short barrels contain hit lists that include title or anchor hits; long barrels hold all hit lists.
- Searcher: uses the new lexicon keyed by wordID, the inverted doc index keyed by docID, and PageRanks to answer queries.
- DumpLexicon: the list of wordIDs produced by the Sorter and the lexicon created by the Indexer are used to create the new lexicon used by the searcher. The lexicon stores ~14 million words.

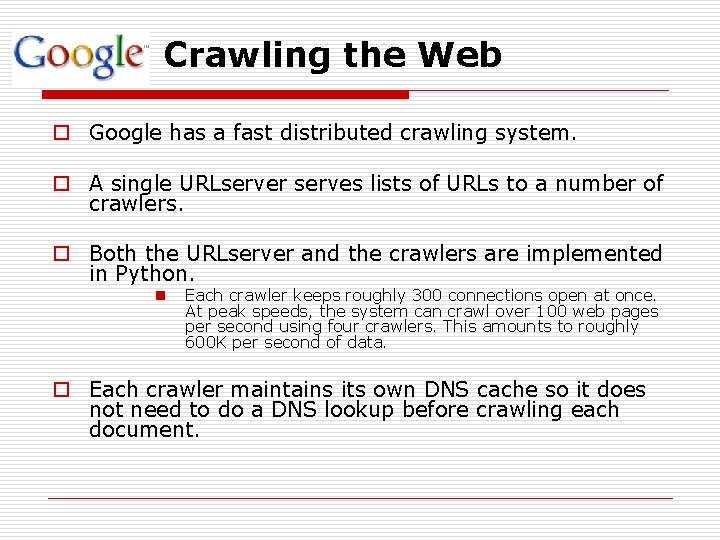

Crawling the Web

- Google has a fast distributed crawling system.
- A single URLserver serves lists of URLs to a number of crawlers.
- Both the URLserver and the crawlers are implemented in Python.
  - Each crawler keeps roughly 300 connections open at once. At peak speeds, the system can crawl over 100 web pages per second using four crawlers. This amounts to roughly 600K per second of data.
- Each crawler maintains its own DNS cache so it does not need to do a DNS lookup before crawling each document.
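The per-crawler DNS cache can be illustrated with a small sketch. The class `CachingResolver` and the stand-in `fake_lookup` function are hypothetical; a real crawler would pass an actual lookup function such as `socket.gethostbyname` instead.

```python
class CachingResolver:
    """Remember host -> address results so each host is looked up only once."""

    def __init__(self, lookup):
        self._lookup = lookup  # function that performs the real DNS lookup
        self._cache = {}
        self.misses = 0        # number of times the real lookup was needed

    def resolve(self, host):
        if host not in self._cache:
            self.misses += 1
            self._cache[host] = self._lookup(host)
        return self._cache[host]


def fake_lookup(host):
    # Stand-in for a network lookup, using documentation-only addresses.
    return {"www.example.com": "192.0.2.10"}.get(host, "192.0.2.99")


resolver = CachingResolver(fake_lookup)
for host in ["www.example.com", "www.example.com", "www.example.org"]:
    resolver.resolve(host)
print(resolver.misses)
```

Three documents were requested but only two lookups were made, which is the saving the slide refers to when many pages come from the same host.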

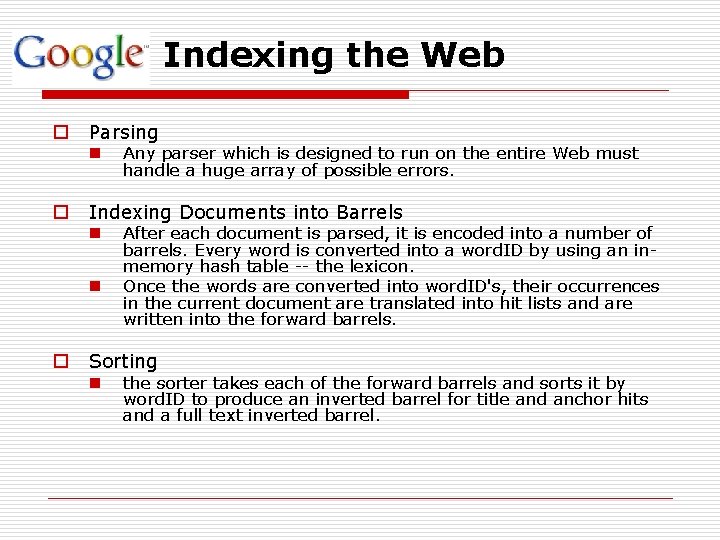

Indexing the Web

- Parsing
  - Any parser which is designed to run on the entire Web must handle a huge array of possible errors.
- Indexing Documents into Barrels
  - After each document is parsed, it is encoded into a number of barrels.
  - Every word is converted into a wordID by using an in-memory hash table, the lexicon.
  - Once the words are converted into wordIDs, their occurrences in the current document are translated into hit lists and are written into the forward barrels.
- Sorting
  - The sorter takes each of the forward barrels and sorts it by wordID to produce an inverted barrel for title and anchor hits and a full-text inverted barrel.
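The path from parsed document to inverted index can be sketched in a few lines. This is a deliberately simplified model: the function names are hypothetical, a "hit" here records only the word position (the real hits also encode font and capitalization), and a single dictionary stands in for the barrels.

```python
from collections import defaultdict

lexicon = {}  # word -> wordID, kept in memory


def word_id(word):
    # Unknown words are assigned the next free wordID.
    return lexicon.setdefault(word, len(lexicon))


def index_document(doc_id, text, forward):
    """Forward index entry: docID -> {wordID: hit list of positions}."""
    hits = defaultdict(list)
    for position, word in enumerate(text.lower().split()):
        hits[word_id(word)].append(position)
    forward[doc_id] = dict(hits)


def invert(forward):
    """Re-sort the forward index by wordID: wordID -> [(docID, hit list), ...]."""
    inverted = defaultdict(list)
    for doc_id in sorted(forward):
        for wid, hit_list in forward[doc_id].items():
            inverted[wid].append((doc_id, hit_list))
    return dict(inverted)


forward = {}
index_document(1, "web search engine", forward)
index_document(2, "search the web", forward)
inverted = invert(forward)
print(inverted[lexicon["web"]])
```

The forward index answers "which words are in this document", while the inverted index answers "which documents contain this word", which is the lookup a query needs.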

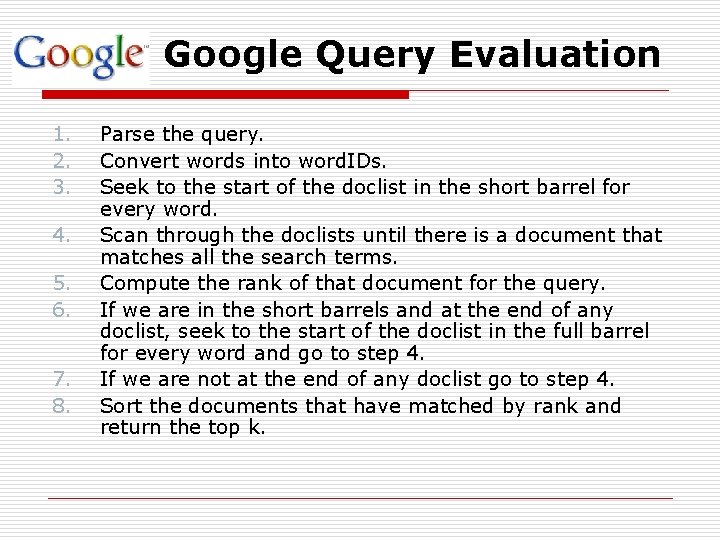

Google Query Evaluation

1. Parse the query.
2. Convert words into wordIDs.
3. Seek to the start of the doclist in the short barrel for every word.
4. Scan through the doclists until there is a document that matches all the search terms.
5. Compute the rank of that document for the query.
6. If we are in the short barrels and at the end of any doclist, seek to the start of the doclist in the full barrel for every word and go to step 4.
7. If we are not at the end of any doclist, go to step 4.
8. Sort the documents that have matched by rank and return the top k.
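The steps above can be approximated by the following sketch. It is a simplification under stated assumptions: doclists are plain Python lists of docIDs, set intersection replaces the sequential scan of steps 4 to 7, and the `rank` callable and the sample barrels are made up for illustration.

```python
def evaluate(query_word_ids, short_barrel, full_barrel, rank, k=10):
    """Return the top-k docIDs that contain every query word.

    Barrels map wordID -> doclist. The short barrel is consulted first,
    then the full barrel, mirroring steps 3 to 7 of the slide.
    """
    if not query_word_ids:
        return []
    scores = {}
    for barrel in (short_barrel, full_barrel):
        doclists = [set(barrel.get(wid, ())) for wid in query_word_ids]
        # A document matches when it appears in the doclist of every word.
        for doc_id in set.intersection(*doclists):
            if doc_id not in scores:
                scores[doc_id] = rank(doc_id)
    # Step 8: sort by rank (highest first) and keep the top k.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))[:k]


# Hypothetical data: wordID -> docIDs, and a stand-in rank per document.
short_barrel = {0: [1, 4], 1: [4]}
full_barrel = {0: [1, 2, 4], 1: [2, 4, 7]}
doc_rank = {1: 0.2, 2: 0.9, 4: 0.5, 7: 0.1}
print(evaluate([0, 1], short_barrel, full_barrel, doc_rank.get, k=2))
```

Document 4 matches in the short barrel and document 2 only in the full barrel; both are returned, ordered by their rank.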

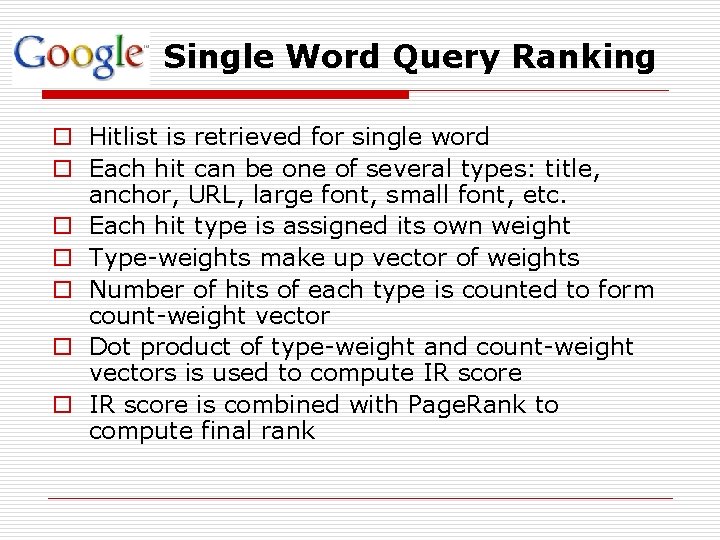

Single Word Query Ranking

- The hit list is retrieved for the single word.
- Each hit can be one of several types: title, anchor, URL, large font, small font, etc.
- Each hit type is assigned its own weight.
- The type-weights make up a vector of weights.
- The number of hits of each type is counted to form the count-weight vector.
- The dot product of the type-weight and count-weight vectors is used to compute the IR score.
- The IR score is combined with PageRank to compute the final rank.
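A minimal sketch of this scoring scheme follows. All numbers are invented: the actual type-weights, the way counts taper into count-weights, and the way the IR score is combined with PageRank are not given on the slide, so `TYPE_WEIGHTS`, `COUNT_CAP`, and the linear mix in `final_rank` are assumptions for illustration only.

```python
from collections import Counter

# Hypothetical type-weights; the real values are not given in the source.
TYPE_WEIGHTS = {"title": 8.0, "anchor": 6.0, "url": 4.0, "large_font": 2.0, "plain": 1.0}
COUNT_CAP = 5  # assumed: count-weights stop growing after a few hits


def count_weight(count):
    # Linear at first, then flat, so repeating a word many times gains nothing.
    return min(count, COUNT_CAP)


def ir_score(hit_types):
    """Dot product of the type-weight vector and the count-weight vector."""
    counts = Counter(hit_types)
    return sum(TYPE_WEIGHTS[t] * count_weight(c) for t, c in counts.items())


def final_rank(hit_types, page_rank, alpha=0.5):
    # Assumed combination: a simple weighted sum of IR score and PageRank.
    return alpha * ir_score(hit_types) + (1 - alpha) * page_rank


hits = ["title", "plain", "plain", "anchor"]
print(ir_score(hits))
print(final_rank(hits, page_rank=2.0))
```

Capping the count-weight is one way to keep a page from winning simply by repeating the query word hundreds of times.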

Multi-word Query Ranking

- Similar to single-word ranking, except that the proximity of words in a document must now be analyzed.
- Hits occurring closer together are weighted higher than those farther apart.
- Each proximity relation is classified into 1 of 10 bins ranging from a "phrase match" to "not even close".
- Each type and proximity pair has a type-prox weight.
- Counts are converted into count-weights.
- The dot product of the count-weights and type-prox weights is used to compute the IR score.
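To make the idea of proximity bins concrete, here is a small sketch. The bin boundaries are an assumption (one bin per word of distance, with everything at distance 10 or more falling into the last bin); the source only states that there are 10 bins ranging from "phrase match" to "not even close".

```python
def proximity_bin(pos_a, pos_b, bins=10):
    """Map the distance between two hits to a bin from 0 to bins - 1.

    Bin 0 means the words are adjacent (a phrase match);
    the last bin means they are "not even close".
    """
    distance = max(abs(pos_a - pos_b), 1)
    return min(distance, bins) - 1


def closest_bin(positions_a, positions_b):
    # Use the best (smallest) bin over all pairs of hits for the two words.
    return min(proximity_bin(a, b) for a in positions_a for b in positions_b)


print(proximity_bin(3, 4))
print(proximity_bin(0, 50))
print(closest_bin([1, 20], [18, 40]))
```

Each (hit type, bin) pair would then be looked up in a table of type-prox weights, in the same way the single-word case looks up type-weights.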

Scalability

- Cluster architecture combined with Moore's Law makes for high scalability. At the time of writing:
  - ~24 million documents indexed in one week
  - ~518 million hyperlinks indexed
  - Four crawlers collected 100 documents/sec

Key Optimization Techniques

- Each crawler maintains its own DNS lookup cache.
- flex is used to generate a lexical analyzer with its own stack for parsing documents.
- The indexing phase is parallelized.
- The lexicon is kept in memory.
- The repository is compressed.
- Hit lists use a compact encoding to save space.
- The indexer is optimized to be just faster than the crawler, so that crawling is the bottleneck.
- The document index is updated in bulk.
- Critical data structures are placed on local disk.
- The overall architecture is designed to avoid disk seeks wherever possible.

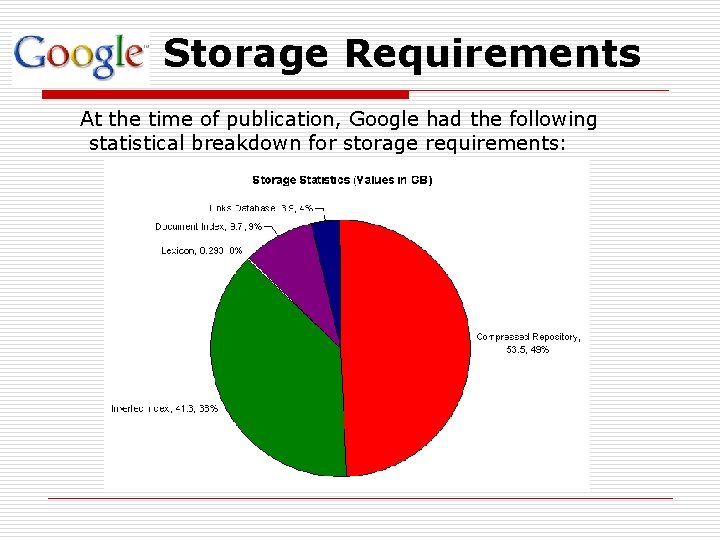

Storage Requirements At the time of publication, Google had the following statistical breakdown for storage requirements:

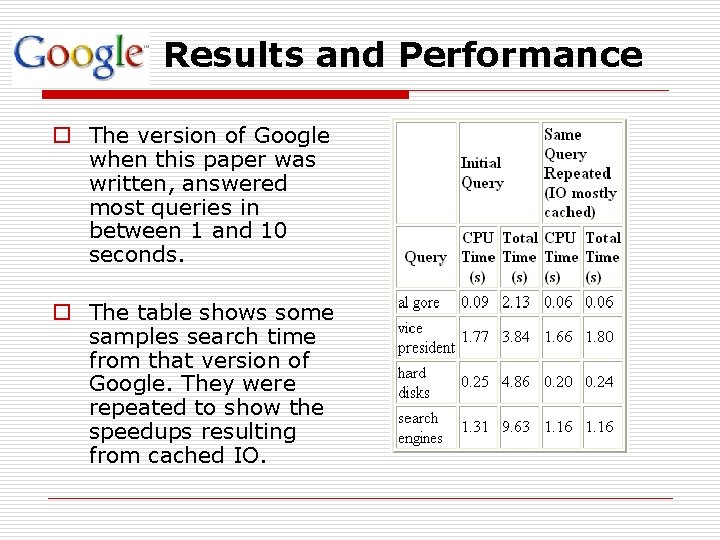

Results and Performance

- The version of Google described in the paper answered most queries in between 1 and 10 seconds.
- The table shows some sample search times from that version of Google. The queries were repeated to show the speedups resulting from cached IO.

Conclusion

- Google is designed to be a scalable search engine.
- The primary goal is to provide high-quality search results over a rapidly growing World Wide Web.
- Google employs a number of techniques to improve search quality, including PageRank, anchor text, and proximity information.
- Google is a complete architecture for gathering web pages, indexing them, and performing search queries over them.

Google bomb

- Because of PageRank, a page will be ranked higher if the sites that link to that page use consistent anchor text.
- A Google bomb is created if a large number of sites link to the page in this manner.
- Example: the search term "more evil than Satan himself" returned the Microsoft homepage as the top result.

Problems

- All Shopping, All the Time: when searching for "flowers", more than 90% of the top results are online florists.
- Skewed Synonyms: if "apple" is searched, the top results are related to Apple computers.
- Book Learning: people are implicitly pushed toward information stored in articles and away from information stored in books.

The Future

"The ultimate search engine would understand exactly what you mean and give back exactly what you want." - Larry Page

Web Search for a Planet: The Google Cluster Architecture

Luiz André Barroso, Jeffrey Dean, Urs Hölzle
Google

Presented by Md. Abdus Salam
CSE Department, University of Texas at Arlington

Basic Cluster Design Insights

- Reliability is provided in software rather than in server-class hardware.
- Commodity PCs are used to build a high-end computing cluster at low-end prices.
- Example:
  - $278,000: 176 x 2 GHz Xeon, 176 GB RAM, 7 TB HDD
  - $758,000: 8 x 2 GHz Xeon, 64 GB RAM, 8 TB HDD
- The design is tailored for best aggregate request throughput, not peak server response time, and relies on parallelizing individual requests.
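The price comparison in the example can be worked through with a few lines of arithmetic. The figures are the ones on the slide; the dictionary layout and the helper `usd_per` are only an illustrative way to organize them.

```python
# Figures from the slide: a rack of commodity PCs versus one high-end server.
rack = {"price_usd": 278_000, "cpus": 176, "ram_gb": 176}
server = {"price_usd": 758_000, "cpus": 8, "ram_gb": 64}


def usd_per(config, resource):
    """Price divided by the amount of a given resource."""
    return config["price_usd"] / config[resource]


for resource in ("cpus", "ram_gb"):
    rack_cost = usd_per(rack, resource)
    server_cost = usd_per(server, resource)
    print(
        f"{resource}: rack ${rack_cost:,.0f}, server ${server_cost:,.0f}, "
        f"ratio {server_cost / rack_cost:.1f}x"
    )
```

Per CPU the high-end server costs roughly 60 times as much as the commodity rack, and per GB of RAM roughly 7.5 times as much, which is the basis for preferring price/performance over peak performance.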

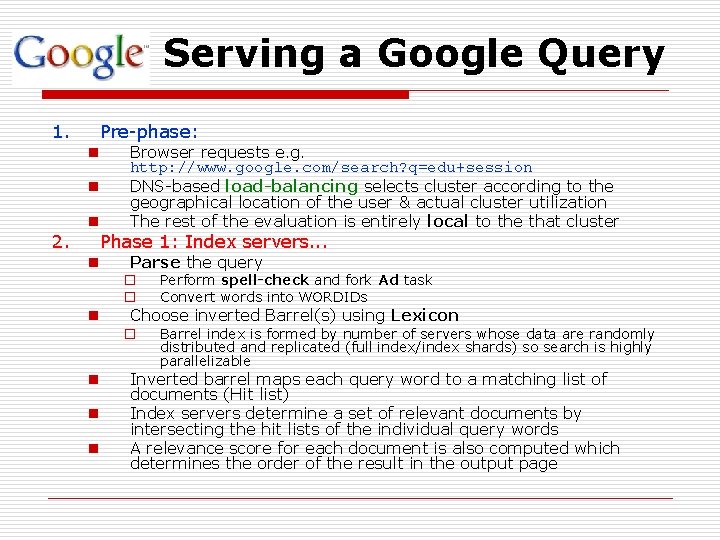

Serving a Google Query

1. Pre-phase:
   - The browser sends a request, e.g. http://www.google.com/search?q=edu+session
   - DNS-based load balancing selects a cluster according to the geographical location of the user and actual cluster utilization.
   - The rest of the evaluation is entirely local to that cluster.
   - Parse the query.
2. Phase 1: Index servers
   - Perform spell-check and fork the ad task.
   - Convert words into wordIDs.
   - The barrel index is formed by a number of servers whose data are randomly distributed and replicated (full index/index shards), so search is highly parallelizable.
   - Choose inverted barrel(s) using the lexicon.
   - The inverted barrel maps each query word to a matching list of documents (hit list).
   - Index servers determine a set of relevant documents by intersecting the hit lists of the individual query words.
   - A relevance score is also computed for each document, which determines the order of the results on the output page.
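The parallel lookup across index shards in phase 1 can be sketched as follows. This is an illustration under assumptions: each shard is modeled as an in-memory dictionary holding the inverted index for its own subset of documents, threads stand in for separate index servers, and the function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def search_shard(shard, word_ids):
    """Intersect the doclists of all query words within one shard."""
    doclists = [set(shard.get(wid, ())) for wid in word_ids]
    return set.intersection(*doclists) if doclists else set()


def search_index(shards, word_ids):
    """Query every shard in parallel and merge the partial results."""
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        partials = pool.map(lambda shard: search_shard(shard, word_ids), shards)
        return sorted(set().union(*partials))


# Two hypothetical shards, each mapping wordID -> docIDs for its own documents.
shards = [
    {0: [1, 3], 1: [3]},
    {0: [10, 12], 1: [12, 14]},
]
print(search_index(shards, [0, 1]))
```

Because every document lives in exactly one shard, each shard can intersect its hit lists independently and the merge step is a plain union.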

Serving a Google Query

3. Phase 2: Document servers
   - For each docID, compute the actual title, URL, and a query-specific document summary (the context of the matched words).
   - Document servers handle this step. Documents are also randomly distributed and replicated, so this step is highly parallelizable as well.
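A query-specific summary can be pictured as a window of text around the first matched word. The function below is a hypothetical keyword-in-context sketch, not the method used by the document servers, whose details the slide does not describe.

```python
def summarize(text, query_words, window=3):
    """Return the words surrounding the first query-word match in text."""
    words = text.split()
    wanted = {word.lower() for word in query_words}
    for i, word in enumerate(words):
        # Ignore trailing punctuation when comparing against the query.
        if word.lower().strip(".,!?") in wanted:
            start = max(0, i - window)
            return " ".join(words[start : i + window + 1])
    # No match: fall back to the opening words of the document.
    return " ".join(words[: 2 * window + 1])


text = "Stanford offers an evening edu session for new students"
print(summarize(text, ["session"], window=2))
```

Since each summary depends only on one document and the query, the work can be spread across document servers in the same way the index lookup is spread across shards.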

Cluster Design Principles

- Software reliability
- Use replication for better request throughput and availability
- Price/performance beats peak performance
- Using commodity PCs reduces the cost of computation

Bonus

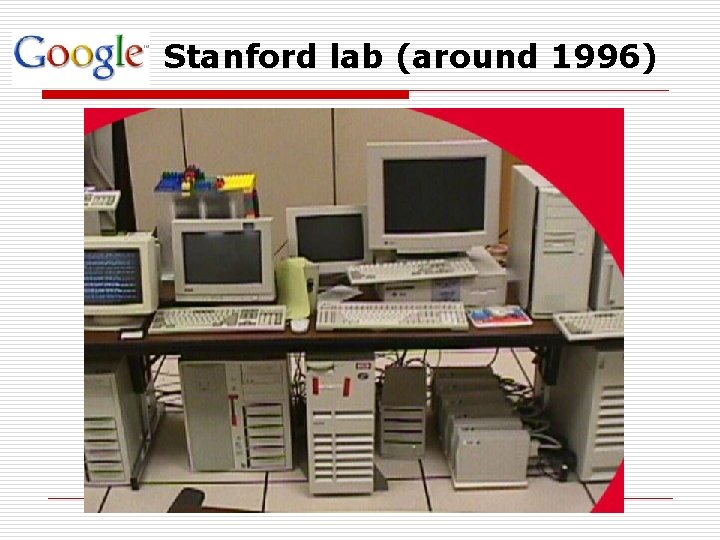

Stanford lab (around 1996)

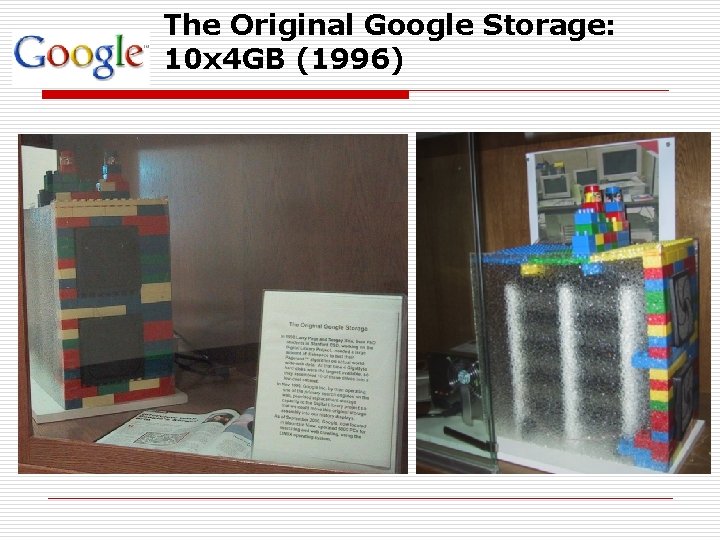

The Original Google Storage: 10 x 4 GB (1996)

Google San Francisco (2004)

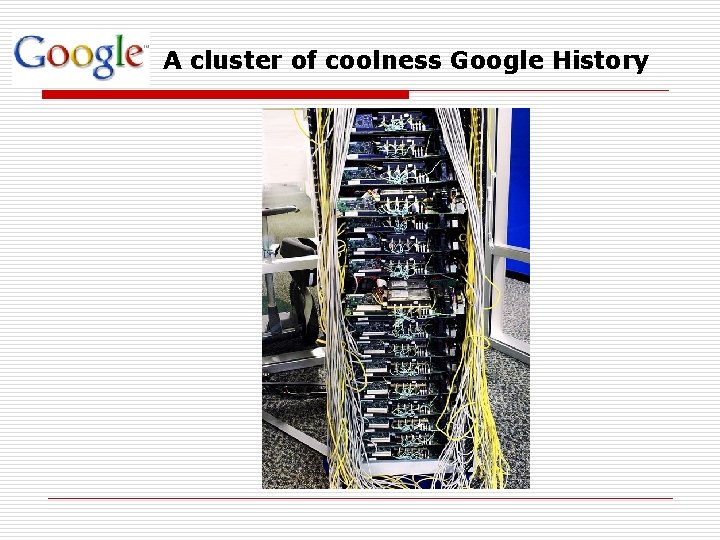

A cluster of coolness Google History

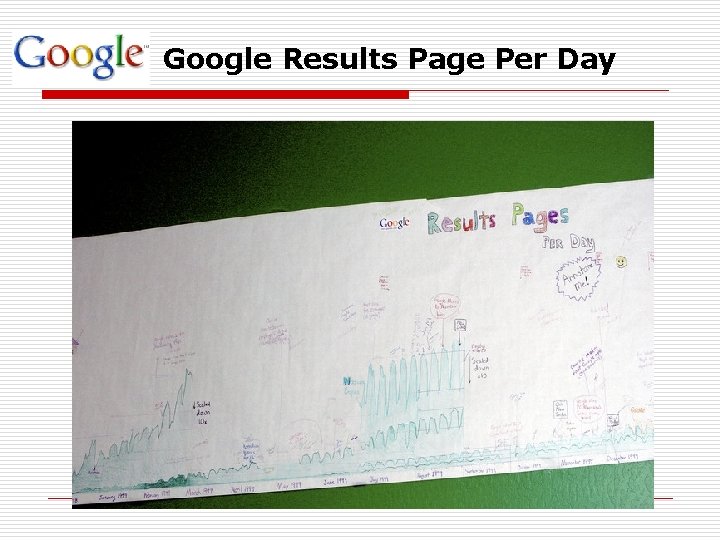

Google Results Page Per Day

References

- Sergey Brin, Lawrence Page: The Anatomy of a Large-Scale Hypertextual Web Search Engine; 1998
- Luiz André Barroso, Jeffrey Dean and Urs Hoelzle: Web Search for a Planet: The Google Cluster Architecture; 2003
- http://www-db.stanford.edu/pub/voy/museum/pictures
- www.e-mental.com/dvorka/ppt/googleClusterInnards.ppt
- www.cis.temple.edu/~vasilis/Courses/CIS664/Papers/Angoogle.ppt
- www.cs.uvm.edu/~xwu/kdd/PageRank.ppt

Thanks!
