The Anatomy of a Large-Scale Hypertextual Web Search Engine

The Anatomy of a Large-Scale Hypertextual Web Search Engine • By Sergey Brin and Lawrence Page • Presented by Joshua Haley, Zeyad Zainal, Michael Lopez, Michael Galletti, Britt Phillips, and Jeff Masson

Searching in the 90’s • Search engine technology had to deal with huge growth. • Web pages indexed, 1994 v. 1997: 110 K in 1994, 2 M in 1997 • Queries per day, 1994 v. 1997: 1.5 K in 1994, 20 M in 1997

Google Will Scale • They wanted a search engine that: – Has fast crawling capabilities – Uses storage space efficiently – Processes indexes fast – Handles queries fast • They had to deal with scaling difficulties – Disk speeds and OS robustness were not scaling as well as hardware performance and cost

The Google Goals • Improve Search Quality – Remove Junk Results (Prioritizing of Results) • Academic Search Engine Research – Create Literature on the subject of Databases • Gather Usage Data – Databases of usage data can support research • Support Novel Research Activities on Web Data

System Features • Two important features that help Google produce high-precision results: – PageRank – Anchor Text

PageRank • Graph structure of hyperlinks hadn’t been used by other search engines • Graph of 518 million hyperlinks • Text matching using page titles performs well after pages are prioritized by PageRank • Similar results when looking at entire pages

PageRank Formula • Links from different pages are not counted equally • $PR(A) = (1 - d) + d \left( \frac{PR(T_1)}{C(T_1)} + \cdots + \frac{PR(T_n)}{C(T_n)} \right)$ – $A$: the page being ranked – $T_1 \ldots T_n$: pages linking to $A$ – $C(T_i)$: number of links going out of page $T_i$ – $d$: “damping factor”, between 0 and 1 (usually set to 0.85) • PageRank for 26 million pages can be calculated in a few hours
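The formula on this slide can be evaluated by simple repeated substitution. The following is a minimal sketch, not the authors' implementation: the function name `pagerank`, the toy graph, the starting rank of 1.0, and the fixed iteration count are all assumptions made for illustration, and d = 0.85 is assumed as the damping factor.

```python
def pagerank(links, d=0.85, iterations=50):
    """Iterate PR(A) = (1 - d) + d * sum(PR(T) / C(T)) over pages T linking to A.

    links maps each page to the pages it links to (hypothetical input format).
    """
    out_links = {page: set(targets) for page, targets in links.items()}
    pages = set(out_links)
    for targets in out_links.values():
        pages |= targets

    ranks = {page: 1.0 for page in pages}  # assumed starting value
    for _ in range(iterations):
        new_ranks = {}
        for page in pages:
            # Each linking page T contributes PR(T) divided by its outlink count C(T).
            incoming = sum(
                ranks[src] / len(targets)
                for src, targets in out_links.items()
                if page in targets
            )
            new_ranks[page] = (1 - d) + d * incoming
        ranks = new_ranks
    return ranks


# Toy web of three pages: A links to B and C, B links to C, C links to A.
toy_web = {"A": ["B", "C"], "B": ["C"], "C": ["A"]}
ranks = pagerank(toy_web)
```

In this toy graph C collects links from both other pages and ends up ranked highest, while B, which receives only half of A's weight, ends up lowest.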

Intuitive Justification • A page can have a high PageRank if many pages link to it • Or if a page with a high PageRank links to it (e.g., Yahoo News) – A high-quality page would not link to a page that is low quality or whose link is broken • PageRank handles both cases by propagating weights through the link structure

Anchor Text • Anchors often provide more accurate descriptions of a page than the page itself. • Anchors exist for documents that aren’t text-based (e.g., images, videos, etc.) • Google indexed more than 259 million anchors from just 24 million pages.
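The idea of crediting anchor text to the page a link points to can be sketched as follows. This is an illustrative assumption about the data flow, not the system's actual code: the function `index_anchors`, the tuple format, and the example URLs are all hypothetical.

```python
from collections import defaultdict


def index_anchors(links):
    """Map each anchor word to the set of pages that links using that word point to.

    links is an iterable of (source_url, target_url, anchor_text) tuples
    (a hypothetical format chosen for this sketch).
    """
    anchor_index = defaultdict(set)
    for _source, target, text in links:
        for word in text.lower().split():
            # The word is credited to the link's target, not to the page containing the link.
            anchor_index[word].add(target)
    return anchor_index


links = [
    ("http://a.example/", "http://img.example/cat.png", "photo of a cat"),
    ("http://b.example/", "http://img.example/cat.png", "cute cat picture"),
    ("http://b.example/", "http://news.example/", "daily news"),
]
anchor_index = index_anchors(links)
```

Here the image `cat.png` contains no text of its own, yet a lookup for "cat" still finds it through the anchors that describe it.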

Other Features • Words in larger or bolder fonts are weighted more heavily than other words

Related Work

Early Search Engines • The World Wide Web Worm (WWWW) – One of the first web search engines (developed 1994) – Had a database of 300,000 multimedia objects • Some early search engines retrieved results by post-processing the results of other search engines.

Information Retrieval • The science of searching for documents or information within documents and for metadata about documents. • Most research is on small collections of scientific papers or news stories on a related topic. • Text Retrieval Conference is the primary benchmark for information retrieval – Uses the “Very Large Corpus”, a small and well controlled collection for their benchmarks – Very Large Corpus benchmark is only 20 GB

Information Retrieval • Methods that work well on Text Retrieval Conference benchmarks often don’t produce good results on the web – Ex: A search for “Bill Clinton” could return a page that only says “Bill Clinton Sucks” and has a picture of him. Brin and Page believe that a search for “Bill Clinton” should return reasonable results because there is so much information on the topic. • The standard information retrieval work needs to be extended to deal effectively with the web

Differences Between the Web and Well Controlled Collections • Documents differ internally in their language, vocabulary, type or format, and may even be machine generated. • External meta information is information that can be inferred about a document but is not contained within it. – Ex: reputation of the source, update frequency, quality, popularity, etc. • A page like Yahoo needs to be treated differently than an article or web page that receives one view every ten years.

Differences Between the Web and Well Controlled Collections • There is no control over what people can put on the web • Some companies manipulate search engines to route traffic for profit • Metadata efforts have largely failed with web search engines because metadata that is not shown to the user is easily abused to manipulate rankings, so a user can be returned a web page that has nothing to do with the query.

System Anatomy • High-level discussion of architecture • Descriptions of data structures – Repository – Lexicon – Hit Lists – Forward and Inverted Indices • Major applications – Crawling – Indexing – Searching

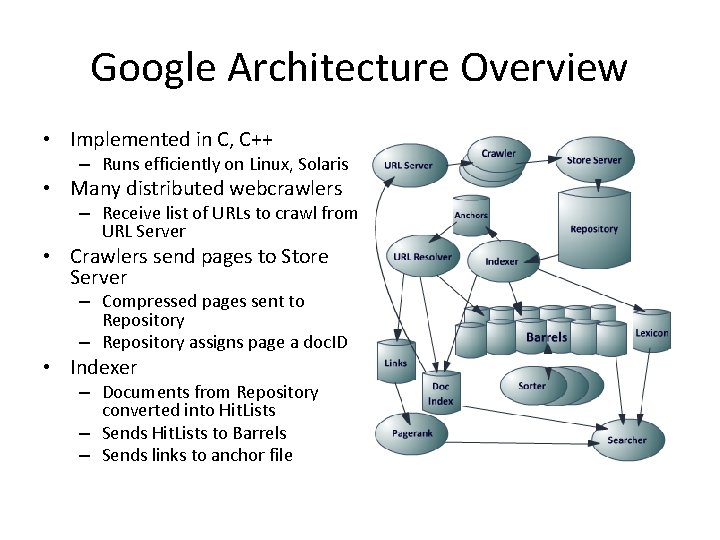

Google Architecture Overview • Implemented in C, C++ – Runs efficiently on Linux, Solaris • Many distributed web crawlers – Receive list of URLs to crawl from URL Server • Crawlers send pages to Store Server – Compressed pages sent to Repository – Repository assigns page a docID • Indexer – Documents from Repository converted into hit lists – Sends hit lists to Barrels – Sends links to anchor file

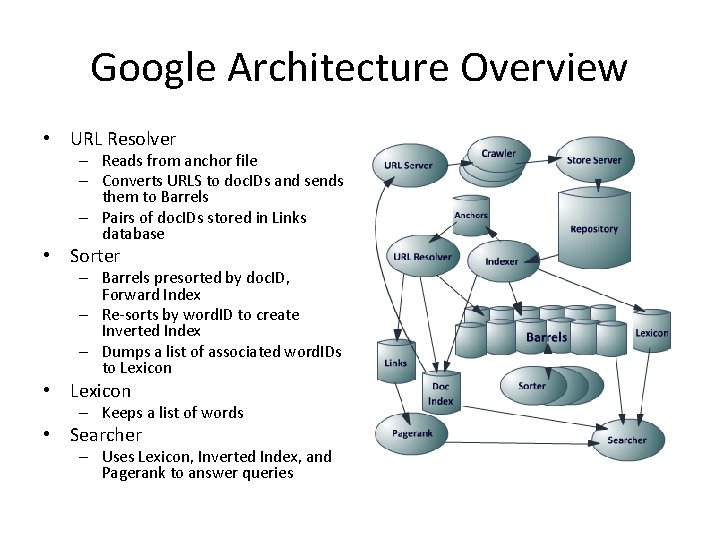

Google Architecture Overview • URL Resolver – Reads from anchor file – Converts URLs to docIDs and sends them to Barrels – Pairs of docIDs stored in Links database • Sorter – Barrels presorted by docID (Forward Index) – Re-sorts by wordID to create Inverted Index – Dumps a list of associated wordIDs to Lexicon • Lexicon – Keeps a list of words • Searcher – Uses Lexicon, Inverted Index, and PageRank to answer queries

Repository • BigFiles – Virtual files spanning multiple file systems – Operating systems did not provide enough for system needs • Repository – Contains full HTML of every web page – Compression decision • Bzip offers 4:1 compression • Zlib offers 3:1, is faster – Opted for speed over ratio – Repository: 53.5 GB compressed = 147.8 GB uncompressed – Record layout: sync, length, compressed packet; the uncompressed packet holds docID, ecode, urllen, pagelen, url, page • Repository access – No additional data structures necessary – Reduces complexity – Can rebuild all data structures from Repository
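The record layout on this slide (a sync marker and length, followed by a zlib-compressed packet holding docID, ecode, urllen, pagelen, url, and page) can be sketched with Python's standard `struct` and `zlib` modules. The field widths, byte order, sync value, and function names below are assumptions chosen for illustration; the slide does not specify them.

```python
import struct
import zlib

SYNC = b"\xde\xad\xbe\xef"   # hypothetical sync marker
PACKET_HEADER = "<IHHI"       # assumed widths: docID, ecode, urllen, pagelen
HEADER_SIZE = struct.calcsize(PACKET_HEADER)


def pack_record(doc_id, ecode, url, page):
    """Build one repository record: sync, compressed length, compressed packet."""
    url_bytes = url.encode("utf-8")
    page_bytes = page.encode("utf-8")
    header = struct.pack(PACKET_HEADER, doc_id, ecode, len(url_bytes), len(page_bytes))
    compressed = zlib.compress(header + url_bytes + page_bytes)
    return SYNC + struct.pack("<I", len(compressed)) + compressed


def unpack_record(record):
    """Reverse pack_record and return (doc_id, ecode, url, page)."""
    if record[:4] != SYNC:
        raise ValueError("missing sync marker")
    (length,) = struct.unpack("<I", record[4:8])
    packet = zlib.decompress(record[8:8 + length])
    doc_id, ecode, url_len, page_len = struct.unpack(PACKET_HEADER, packet[:HEADER_SIZE])
    url_end = HEADER_SIZE + url_len
    url = packet[HEADER_SIZE:url_end].decode("utf-8")
    page = packet[url_end:url_end + page_len].decode("utf-8")
    return doc_id, ecode, url, page


html = "<html><body>" + "hello web " * 200 + "</body></html>"
record = pack_record(7, 0, "http://example.com/", html)
restored = unpack_record(record)
```

Because each record carries everything needed to recover the document, other data structures can in principle be rebuilt by scanning the records in sequence, which is the property the slide highlights.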

Document Index and Lexicon • Document Index – Stores information about each document – Fixed-width ISAM (Index Sequential Access Mode) ordered by docID – Information includes: • Status • Pointer into Repository • Checksum • Various statistics – Record fetching • Document points to docinfo file with URL and title if previously crawled • Otherwise points to URL in URLlist • docID Allocation – File of all document checksums paired with docIDs • Sorted by checksum – Find docID • 1. Checksum of URL is computed • 2. Binary search over file – May be done in batches • Lexicon – Capable of existing in main memory of a machine – Holds 14 million words • Linked list of words • Hash table of pointers
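The two-step docID lookup described on this slide (compute the URL's checksum, then binary-search a checksum-sorted file) can be sketched in memory. CRC-32 is used here only as a stand-in, since the slide does not name the checksum function; the helper names and the in-memory list standing in for the file are likewise assumptions.

```python
import bisect
import zlib


def url_checksum(url):
    """Stand-in checksum (CRC-32); the actual checksum function is not specified."""
    return zlib.crc32(url.encode("utf-8"))


def build_checksum_table(urls):
    """Assign docIDs in input order and return (checksum, docID) pairs sorted by checksum."""
    return sorted((url_checksum(url), doc_id) for doc_id, url in enumerate(urls))


def find_doc_id(table, url):
    """Binary-search the sorted table for the URL's checksum; None if absent."""
    target = url_checksum(url)
    # (target, -1) sorts before any real entry with the same checksum.
    i = bisect.bisect_left(table, (target, -1))
    if i < len(table) and table[i][0] == target:
        return table[i][1]
    return None


urls = ["http://a.example/", "http://b.example/", "http://c.example/"]
table = build_checksum_table(urls)
doc_id = find_doc_id(table, "http://b.example/")
```

A batch lookup could sort the query checksums first and merge them against the table in a single pass, which is one way to read the slide's note that the search may be done in batches.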

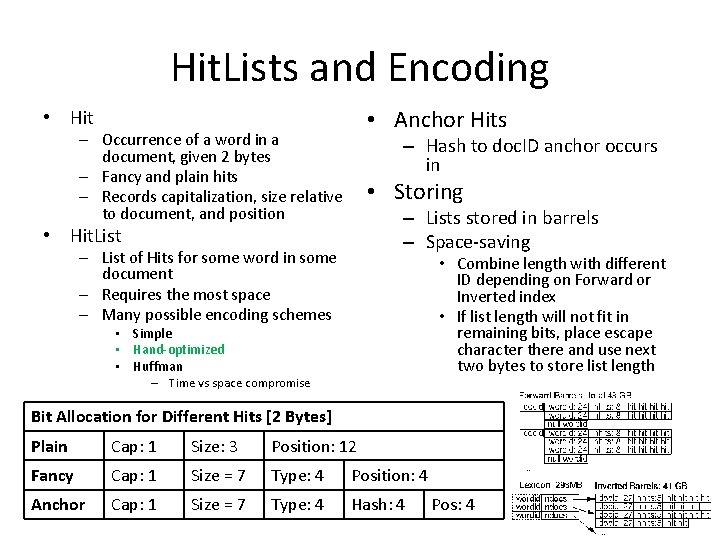

Hit Lists and Encoding • Hit – Occurrence of a word in a document, given 2 bytes – Fancy and plain hits – Records capitalization, font size relative to document, and position • Anchor hits – Include a hash of the docID the anchor occurs in • Hit list – List of hits for some word in some document – Requires the most space – Many possible encoding schemes • Simple • Hand-optimized • Huffman – Hand-optimized encoding chosen as a time vs. space compromise • Storing – Lists stored in barrels – Space-saving • Length combined with the wordID in the forward index and the docID in the inverted index • If list length will not fit in remaining bits, place escape code there and use next two bytes to store list length • Bit allocation for different hits [2 bytes] – Plain: cap 1, size 3, position 12 – Fancy: cap 1, size = 7, type 4, position 8 – Anchor: cap 1, size = 7, type 4, hash 4, position 4
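The plain-hit layout from this slide (1 capitalization bit, 3 size bits, 12 position bits, 16 bits in total) can be illustrated with bit shifts. The ordering of the fields within the two bytes, the clamping of large positions to 4095, and the function names are assumptions for this sketch.

```python
def pack_plain_hit(capitalized, size, position):
    """Pack a plain hit into 16 bits: cap (1 bit) | size (3 bits) | position (12 bits).

    The field order within the 16 bits is an assumption of this sketch.
    """
    if not 0 <= size <= 6:
        raise ValueError("size must be 0-6; the value 7 marks fancy and anchor hits")
    if position < 0:
        raise ValueError("position must be non-negative")
    position = min(position, 4095)  # assumed: clamp to the largest 12-bit value
    return (int(capitalized) << 15) | (size << 12) | position


def unpack_plain_hit(hit):
    """Return (capitalized, size, position) from a packed 16-bit plain hit."""
    return bool(hit >> 15), (hit >> 12) & 0b111, hit & 0xFFF


hit = pack_plain_hit(True, 3, 42)
fields = unpack_plain_hit(hit)
```

Reserving size 7 as a marker is what lets fancy and anchor hits reuse the same two bytes with a different split of the remaining 12 bits.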

Forward and Inverted Indices • Forward Index – 64 barrels • Each one corresponds to a range of wordIDs – Words in documents broken up into ranges • docID is recorded into appropriate barrel • List of wordIDs with hit lists follows • wordIDs stored relative to barrel starting index – Fit in 24 bits, leaving 8 for list length – System requires more storage for duplicate docIDs • However, coding complexity greatly reduced • Inverted Index – Created after barrels go through Sorter – For each valid wordID there is a pointer from Lexicon into corresponding barrel – Points to docList of docIDs and matching hit lists • Represents every document in which a particular word appears • docList Ordering – Sort by docID • Quick merging for multi-word queries – Sort by ranking of occurrence • One-word queries trivial • Answers to multi-word queries likely near start of list • Merging is difficult • Development is difficult – Compromise! • Keep two sets of barrels: one for title and anchor hits, one for all hits
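The step from forward index to inverted index amounts to re-sorting postings by wordID, and keeping each docList ordered by docID is what makes multi-word merging quick. The sketch below uses plain dictionaries instead of barrels; the data shapes and function names are assumptions for illustration.

```python
from collections import defaultdict


def invert(forward_index):
    """Turn {docID: {wordID: hits}} into {wordID: [(docID, hits), ...]} ordered by docID."""
    postings = [
        (word_id, doc_id, hits)
        for doc_id, words in forward_index.items()
        for word_id, hits in words.items()
    ]
    # Re-sorting by wordID (then docID) mirrors the Sorter's role.
    postings.sort(key=lambda posting: (posting[0], posting[1]))
    inverted = defaultdict(list)
    for word_id, doc_id, hits in postings:
        inverted[word_id].append((doc_id, hits))
    return dict(inverted)


def intersect(doclist_a, doclist_b):
    """Merge two docID-sorted docLists, returning the docIDs present in both."""
    i = j = 0
    common = []
    while i < len(doclist_a) and j < len(doclist_b):
        a, b = doclist_a[i][0], doclist_b[j][0]
        if a == b:
            common.append(a)
            i += 1
            j += 1
        elif a < b:
            i += 1
        else:
            j += 1
    return common


forward = {1: {10: [3], 20: [5]}, 2: {10: [1]}, 3: {20: [2], 10: [7]}}
inverted = invert(forward)
both = intersect(inverted[10], inverted[20])
```

The merge advances through each list once, which is why docID ordering suits multi-word queries; a list ordered by rank would need a different and harder merge, as the slide notes.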

Crawling The Web

Crawling • Accessing millions of web pages and logging data • DNS caching for increased performance • Email from web admins • Unpredictable bugs • Copyright problems • robots.txt
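As one concrete illustration of the robots.txt item, Python's standard `urllib.robotparser` module can check whether a crawler is allowed to fetch a URL. This is a present-day sketch, not part of the original crawler; the rules, the user-agent name `examplebot`, and the URLs are made up for the example.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt, parsed from memory so no network access is needed.
rules = """User-agent: *
Disallow: /private/
""".splitlines()

parser = RobotFileParser()
parser.parse(rules)

# "examplebot" is a hypothetical crawler name.
blocked = parser.can_fetch("examplebot", "http://example.com/private/page.html")
allowed = parser.can_fetch("examplebot", "http://example.com/index.html")
```

A crawler would run a check like this before each request and skip any URL for which `can_fetch` returns `False`.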

Indexing the Web • Parsing HTML data – Handle wide variety of errors • Encoding to barrels – Turning words into wordIDs – Hashing all the data • Sorting data recursively – Bucket sort

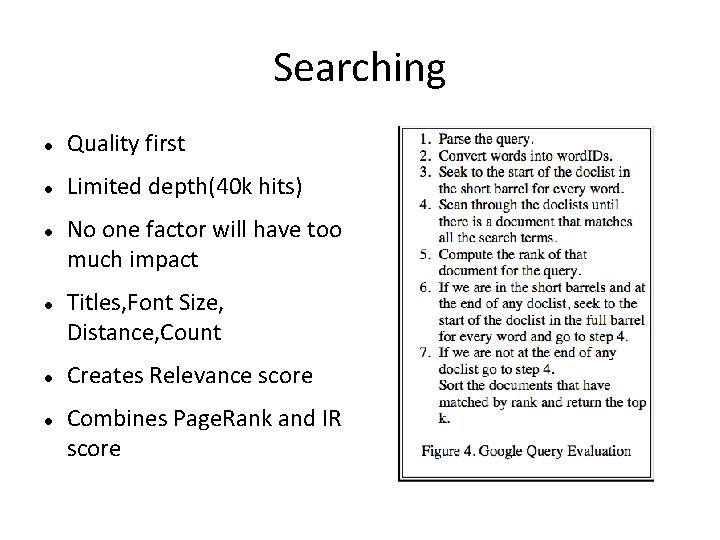

Searching • Quality first • Limited depth (40 K hits) • No one factor will have too much impact – Titles, font size, distance, count • Creates relevance score – Combines PageRank and IR score
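One way to picture how a relevance score keeps any single factor from dominating is to cap the contribution of hit counts, weight hits by type, and then blend the result with PageRank. Every number and name below (the type weights, the cap, the blend weight, the function names) is a hypothetical choice for this sketch; the slide does not give the actual values or the combining function.

```python
# Hypothetical weights per hit type; higher means a more important kind of hit.
TYPE_WEIGHTS = {"title": 8.0, "anchor": 6.0, "large_font": 3.0, "plain": 1.0}


def count_weight(count, cap=5):
    """Grow linearly with the hit count, then stop growing at the cap."""
    return float(min(count, cap))


def ir_score(hit_counts):
    """Dot product of capped count weights with type weights."""
    return sum(TYPE_WEIGHTS[kind] * count_weight(n) for kind, n in hit_counts.items())


def final_score(hit_counts, pagerank, pr_weight=0.5):
    """Blend the IR score with PageRank (a linear blend is assumed here)."""
    return (1 - pr_weight) * ir_score(hit_counts) + pr_weight * pagerank


keyword_stuffed = ir_score({"plain": 100})
titled = ir_score({"title": 1})
score = final_score({"title": 1, "plain": 3}, pagerank=2.0)
```

With the cap in place, a page repeating a word 100 times in plain text scores no higher than one repeating it 5 times, and lower than a page with a single title hit.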

User Feedback • User input vital to improved search results • Verified users can evaluate results and send their ratings back • Adjust ranking system • Verify that old results are still valid

Results and Performance

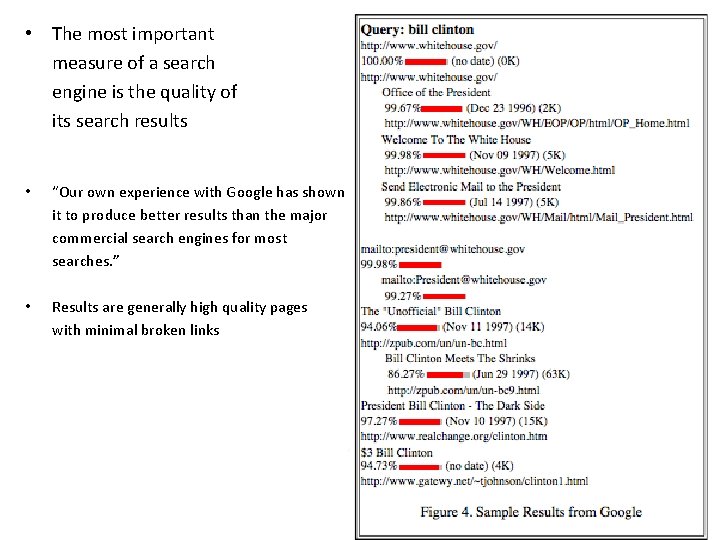

• The most important measure of a search engine is the quality of its search results • “Our own experience with Google has shown it to produce better results than the major commercial search engines for most searches.” • Results are generally high-quality pages with minimal broken links

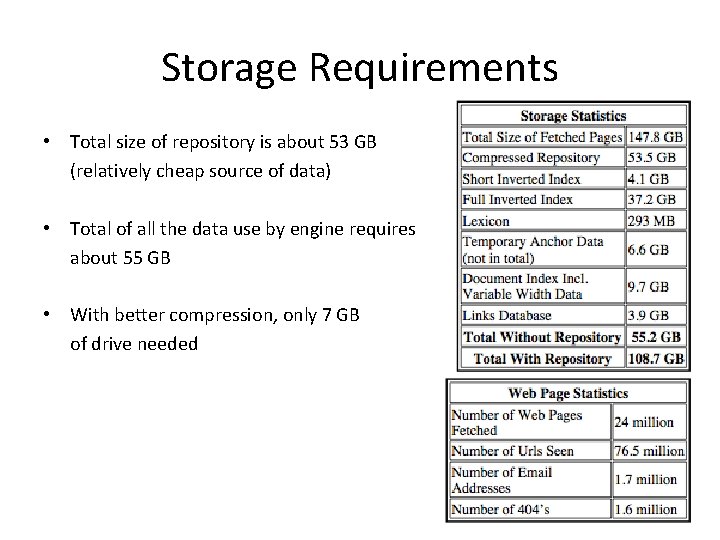

Storage Requirements • Total size of repository is about 53 GB (relatively cheap source of data) • All the other data used by the engine requires about 55 GB • With better encoding and compression, a high-quality search engine might fit on a 7 GB drive

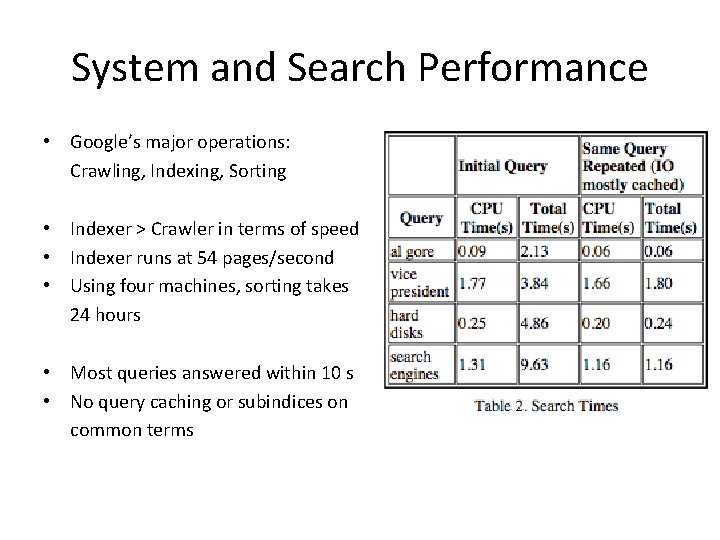

System and Search Performance • Google’s major operations: crawling, indexing, sorting • Indexer runs faster than the crawler • Indexer runs at 54 pages/second • Using four machines, sorting takes about 24 hours • Most queries answered within 10 seconds • No query caching or subindices on common terms

Conclusions • Google is designed to be a scalable search engine, providing high-quality search results. • Future work: – Query caching, smart disk allocation, subindices – Smart algorithms to decide which old web pages should be recrawled and which new ones should be crawled – Using proxy caches to build search databases, and adding boolean operators, negation, and stemming – Support for user context and result summarization

• High Quality Search: Users want high-quality results without being frustrated and wasting time. Google returns higher-quality search results than current commercial search engines. Link structure analysis determines the quality of pages; link descriptions determine relevance. • Scalable Architecture: Google is efficient in both space and time. It had to overcome bottlenecks in CPU, memory access, memory capacity, and disk I/O during various operations. Crawling, indexing, and sorting are efficient enough to build an index of 24 million pages in less than a week.
