Robust Network Compressive Sensing Lili Qiu UT Austin NSF Workshop Nov. 12, 2014

Network Matrices and Applications
• Network matrices
  – Traffic matrix
  – Loss matrix
  – Delay matrix
  – Channel State Information (CSI) matrix
  – RSS matrix

Q: How to fill in missing values in a matrix?

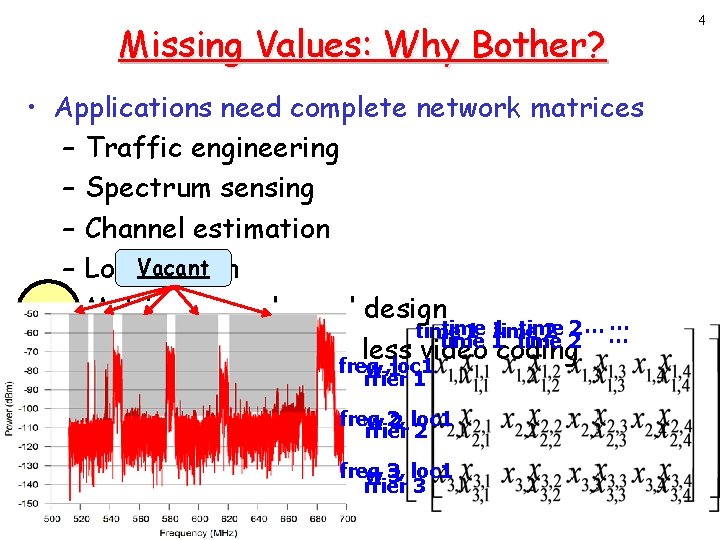

Missing Values: Why Bother?
• Applications need complete network matrices
  – Traffic engineering
  – Spectrum sensing
  – Channel estimation
  – Localization
  – Multi-access channel design
  – Network coding, wireless video coding
  – Anomaly detection
  – Data aggregation
  – …
[Figure labels: time 1, time 2, …; flow 1 to 3; link 1 to 3; router; freq 1 to 3, loc 1; subcarrier 1 to 3; Vacant]

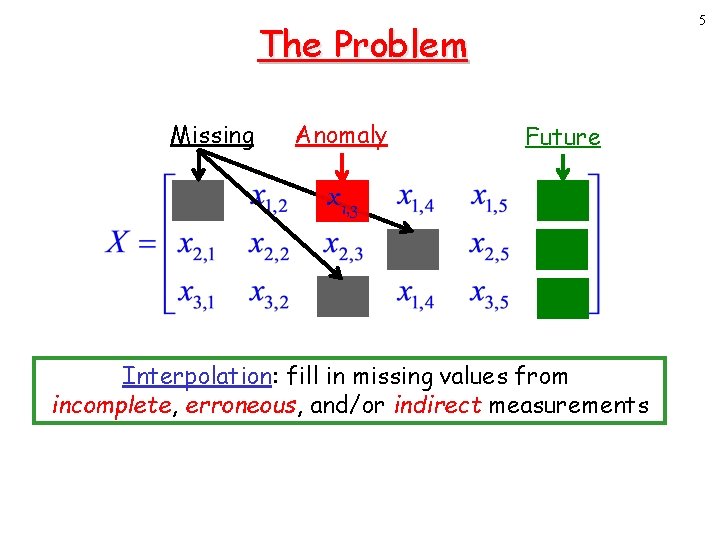

The Problem
[Figure labels: Missing, Anomaly, Future; entry $x_{1,3}$]
Interpolation: fill in missing values from incomplete, erroneous, and/or indirect measurements

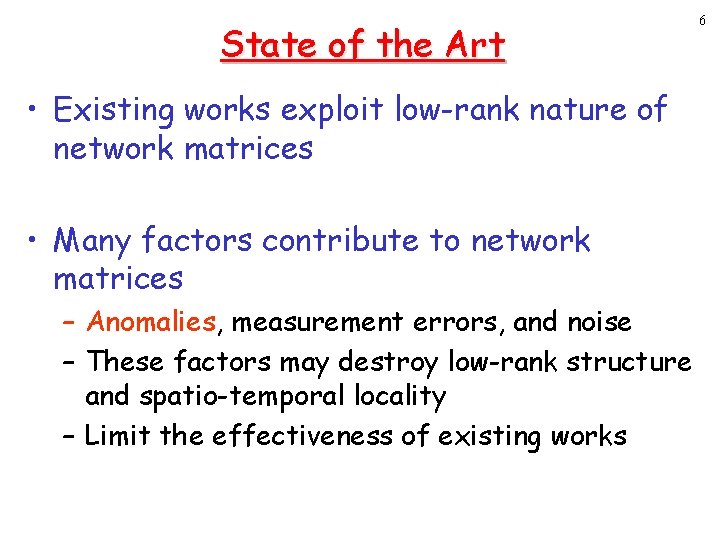

State of the Art
• Existing works exploit the low-rank nature of network matrices
• Many factors contribute to network matrices
  – Anomalies, measurement errors, and noise
  – These factors may destroy low-rank structure and spatio-temporal locality
  – Limit the effectiveness of existing works

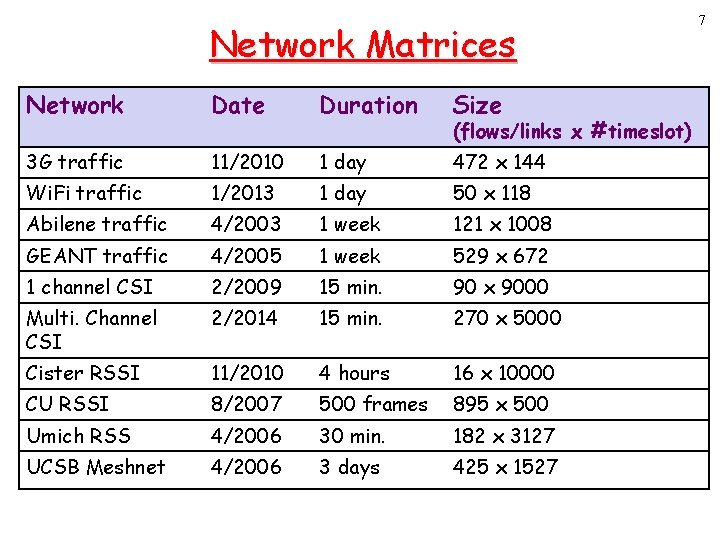

Network Matrices

| Network | Date | Duration | Size (flows/links x #timeslots) |
|---|---|---|---|
| 3G traffic | 11/2010 | 1 day | 472 x 144 |
| WiFi traffic | 1/2013 | 1 day | 50 x 118 |
| Abilene traffic | 4/2003 | 1 week | 121 x 1008 |
| GEANT traffic | 4/2005 | 1 week | 529 x 672 |
| 1 channel CSI | 2/2009 | 15 min. | 90 x 9000 |
| Multi-channel CSI | 2/2014 | 15 min. | 270 x 5000 |
| Cister RSSI | 11/2010 | 4 hours | 16 x 10000 |
| CU RSSI | 8/2007 | 500 frames | 895 x 500 |
| Umich RSS | 4/2006 | 30 min. | 182 x 3127 |
| UCSB Meshnet | 4/2006 | 3 days | 425 x 1527 |

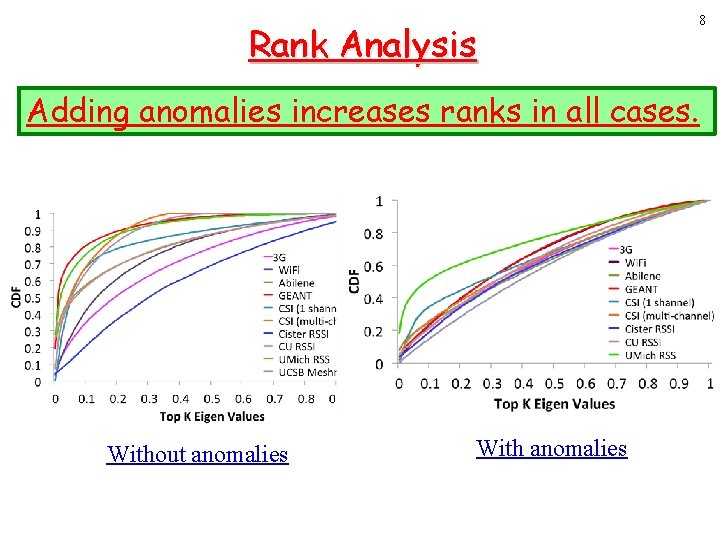

Rank Analysis
Adding anomalies increases ranks in all cases.
[Figure panels: Without anomalies; With anomalies]
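The effect can be reproduced on synthetic data. The sketch below is only an illustration, not the analysis behind the slide: the matrix sizes, the spike magnitude, the 95% energy threshold, and the helper name `energy_rank` are all assumptions. It measures rank as the number of singular values needed to capture a given share of the total energy.

```python
import numpy as np


def energy_rank(M, energy=0.95):
    """Smallest k such that the top-k singular values hold `energy` of the total energy."""
    s = np.linalg.svd(M, compute_uv=False)
    cum = np.cumsum(s ** 2)
    # searchsorted returns the first index whose cumulative energy reaches the target
    return int(np.searchsorted(cum, energy * cum[-1]) + 1)


rng = np.random.default_rng(0)
m, n, r = 40, 60, 3

# Exactly rank-3 "clean" matrix (hypothetical stand-in for a network matrix)
clean = rng.standard_normal((m, r)) @ rng.standard_normal((r, n))

# Inject 30 large spikes at distinct random positions (hypothetical anomaly model)
with_anomalies = clean.copy()
idx = rng.choice(m * n, size=30, replace=False)
rows, cols = np.unravel_index(idx, clean.shape)
with_anomalies[rows, cols] += 20.0

rank_clean = energy_rank(clean)
rank_anom = energy_rank(with_anomalies)
print("energy rank without anomalies:", rank_clean)
print("energy rank with anomalies:   ", rank_anom)
```

Each isolated spike contributes its own singular direction, which is why a handful of anomalies is enough to spread the energy well beyond the original low-rank subspace.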

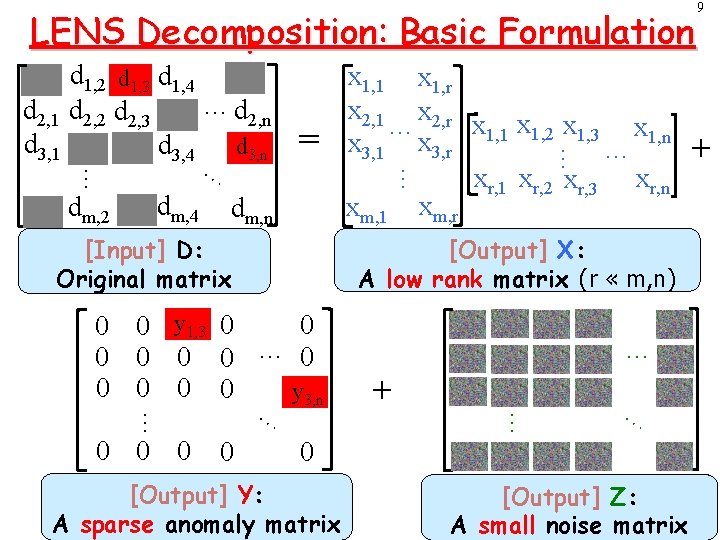

LENS Decomposition: Basic Formulation
$D = X + Y + Z$
• [Input] D: original matrix, $m \times n$ with entries $d_{i,j}$
• [Output] X: a low-rank matrix ($r \ll m, n$), drawn as the product of an $m \times r$ factor and an $r \times n$ factor
• [Output] Y: a sparse anomaly matrix (mostly zeros, with a few nonzero entries such as $y_{1,3}$ and $y_{3,n}$)
• [Output] Z: a small noise matrix

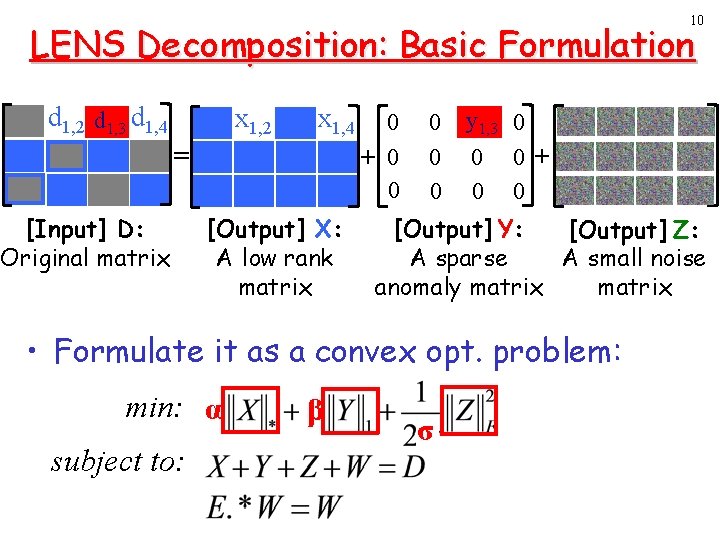

LENS Decomposition: Basic Formulation
$D = X + Y + Z$
• [Input] D: original matrix
• [Output] X: a low-rank matrix
• [Output] Y: a sparse anomaly matrix
• [Output] Z: a small noise matrix
• Formulate it as a convex optimization problem with weights α, β, and σ
  min: [objective not recovered from the slide image]
  subject to: [constraint not recovered from the slide image]
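One convex program consistent with the three outputs and the weights α, β, σ is sketched below. It rests on assumptions that the slide text does not confirm: a nuclear-norm penalty for the low-rank $X$, an $\ell_1$ penalty for the sparse $Y$, a squared Frobenius penalty for the small $Z$, and the placement of σ as $1/(2\sigma)$.

```latex
\begin{aligned}
\min_{X,\,Y,\,Z}\quad & \alpha \,\|X\|_{*} \;+\; \beta \,\|Y\|_{1} \;+\; \frac{1}{2\sigma}\,\|Z\|_{F}^{2} \\
\text{subject to}\quad & X + Y + Z = D
\end{aligned}
```

Here $\|X\|_{*}$ is the sum of the singular values of $X$, $\|Y\|_{1}$ is the sum of the absolute values of the entries of $Y$, and $\|Z\|_{F}^{2}$ is the sum of the squared entries of $Z$. Each term is convex, so the whole program is convex.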

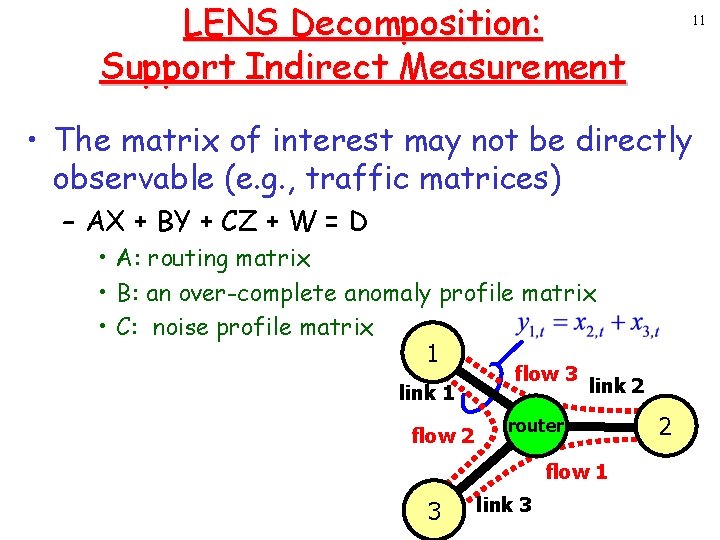

LENS Decomposition: Support Indirect Measurement
• The matrix of interest may not be directly observable (e.g., traffic matrices)
  – $AX + BY + CZ + W = D$
• A: routing matrix
• B: an over-complete anomaly profile matrix
• C: noise profile matrix
[Figure: example topology with routers, links 1 to 3, and flows 1 to 3]
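A toy instance of the measurement equation may help fix the shapes. Everything concrete below is hypothetical: the 3-link, 3-flow routing matrix, the traffic values, the choice `B = A` (anomalies occur per flow and are seen through the same routing), `C` as the identity, and `W` set to zero.

```python
import numpy as np

# Hypothetical routing matrix: rows are links, columns are flows.
# A[l, f] = 1 when flow f crosses link l.
A = np.array([[1, 1, 0],
              [0, 1, 1],
              [1, 0, 1]], dtype=float)

# Flow-level traffic matrix X (flows x time slots); not directly observable.
X = np.array([[1, 2],
              [3, 4],
              [5, 6]], dtype=float)

# Sparse anomaly matrix Y: one spike on flow 2 in the second time slot.
Y = np.zeros_like(X)
Y[1, 1] = 10.0

B = A                 # assumption: anomalies are observed through the same routing
C = np.eye(3)         # assumption: noise enters each link measurement directly
Z = np.zeros((3, 2))  # no noise in this toy example
W = np.zeros((3, 2))  # remaining term of the slide's equation, set to zero here

# Link-level measurements D (links x time slots)
D = A @ X + B @ Y + C @ Z + W
print(D)
```

The spike on a single flow shows up on every link that the flow crosses, which is why the anomaly has to be modeled in flow space rather than removed directly from the link measurements.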

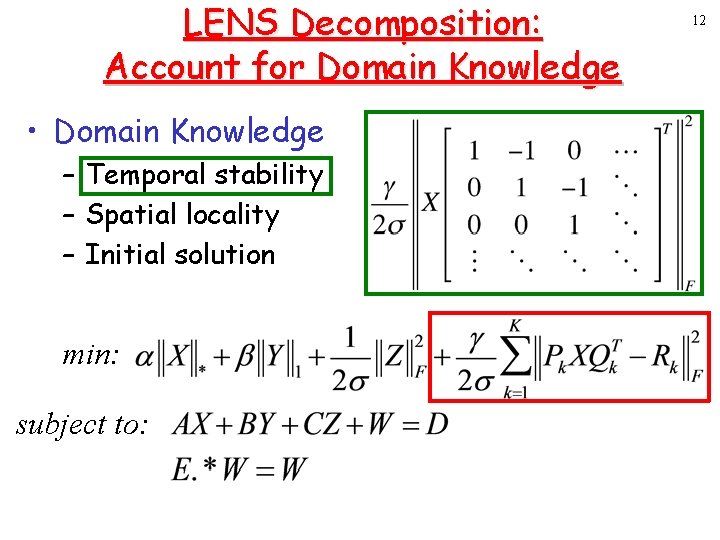

LENS Decomposition: Account for Domain Knowledge
• Domain knowledge
  – Temporal stability
  – Spatial locality
  – Initial solution
min: [objective not recovered from the slide image]
subject to: [constraints not recovered from the slide image]
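Temporal stability is often encoded as a penalty on the change between adjacent time slots. The sketch below assumes that form; the slide's actual objective is not recoverable from the text, and the names `temporal_operator` and `temporal_penalty` are hypothetical.

```python
import numpy as np


def temporal_operator(n):
    """(n-1) x n first-difference operator: row t has -1 at column t and +1 at column t+1."""
    T = np.zeros((n - 1, n))
    idx = np.arange(n - 1)
    T[idx, idx] = -1.0
    T[idx, idx + 1] = 1.0
    return T


def temporal_penalty(X):
    """Squared Frobenius norm of X @ T.T: sum of squared changes between adjacent time slots."""
    X = np.asarray(X, dtype=float)
    T = temporal_operator(X.shape[1])
    return float(np.sum((X @ T.T) ** 2))


# Rows are flows or links, columns are time slots.
steady = np.ones((2, 5))                          # no change over time
bursty = np.array([[0.0, 1.0, 0.0, 1.0, 0.0]])    # flips in every slot

print(temporal_penalty(steady), temporal_penalty(bursty))
```

A trace that is stable over time gets a small penalty, so weighting this term more heavily pushes the estimate toward temporally smooth rows.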

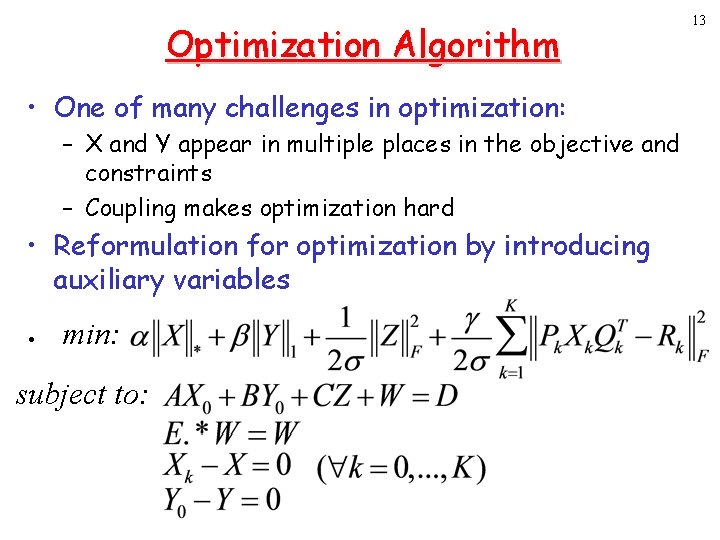

Optimization Algorithm
• One of many challenges in optimization:
  – X and Y appear in multiple places in the objective and constraints
  – Coupling makes optimization hard
• Reformulation for optimization by introducing auxiliary variables
min: [objective not recovered from the slide image]
subject to: [constraints not recovered from the slide image]

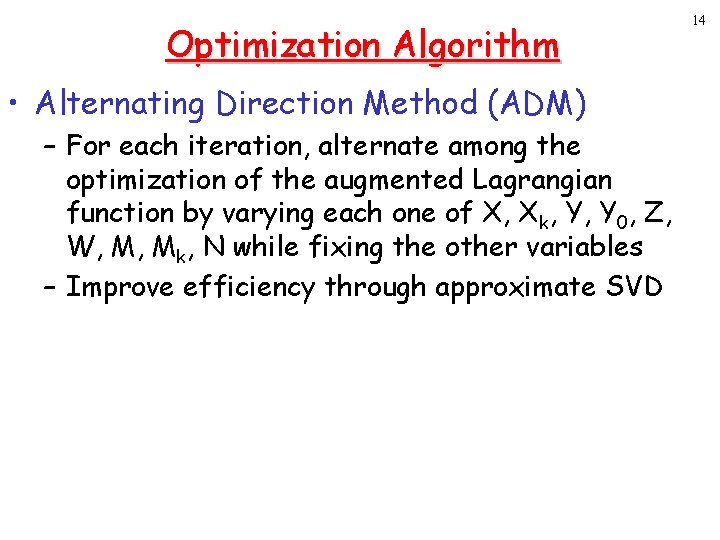

Optimization Algorithm
• Alternating Direction Method (ADM)
  – In each iteration, minimize the augmented Lagrangian function over one of $X$, $X_k$, $Y$, $Y_0$, $Z$, $W$, $M$, $M_k$, $N$ at a time while fixing the other variables
  – Improve efficiency through approximate SVD
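The alternating idea can be illustrated on a much simpler problem. The sketch below is not the ADM of the slide: it has no auxiliary variables, no Lagrange multipliers, no missing entries, and no indirect measurements. It assumes a fully observed $D$ and an objective of the form $\alpha\|X\|_* + \beta\|Y\|_1 + \frac{1}{2\sigma}\|D - X - Y\|_F^2$, and it applies plain alternating minimization, where each block update has a closed form. The function names and parameter values are hypothetical.

```python
import numpy as np


def svt(M, tau):
    """Singular value thresholding: proximal operator of tau * nuclear norm."""
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt


def soft(M, tau):
    """Entrywise soft-thresholding: proximal operator of tau * l1 norm."""
    return np.sign(M) * np.maximum(np.abs(M) - tau, 0.0)


def objective(D, X, Y, alpha, beta, sigma):
    Z = D - X - Y
    nuclear = np.linalg.svd(X, compute_uv=False).sum()
    return alpha * nuclear + beta * np.abs(Y).sum() + (Z ** 2).sum() / (2.0 * sigma)


def decompose(D, alpha, beta, sigma, iters=50):
    """Alternate exact minimization over X (Y fixed) and over Y (X fixed)."""
    D = np.asarray(D, dtype=float)
    X = np.zeros_like(D)
    Y = np.zeros_like(D)
    history = [objective(D, X, Y, alpha, beta, sigma)]
    for _ in range(iters):
        X = svt(D - Y, alpha * sigma)   # low-rank block
        Y = soft(D - X, beta * sigma)   # sparse block
        history.append(objective(D, X, Y, alpha, beta, sigma))
    return X, Y, D - X - Y, history


# Synthetic data: rank-2 matrix, a few spikes, and small dense noise.
rng = np.random.default_rng(1)
low_rank = rng.standard_normal((20, 2)) @ rng.standard_normal((2, 30))
spikes = np.zeros((20, 30))
spikes[rng.integers(0, 20, 8), rng.integers(0, 30, 8)] = 15.0
D = low_rank + spikes + 0.01 * rng.standard_normal((20, 30))

X, Y, Z, history = decompose(D, alpha=1.0, beta=0.2, sigma=1.0)
print("objective: start %.2f, end %.2f" % (history[0], history[-1]))
```

Because each step solves its block exactly, the objective can never increase from one update to the next. The full method on the slide needs the augmented Lagrangian because its variables are tied together by constraints that this simplified version drops.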

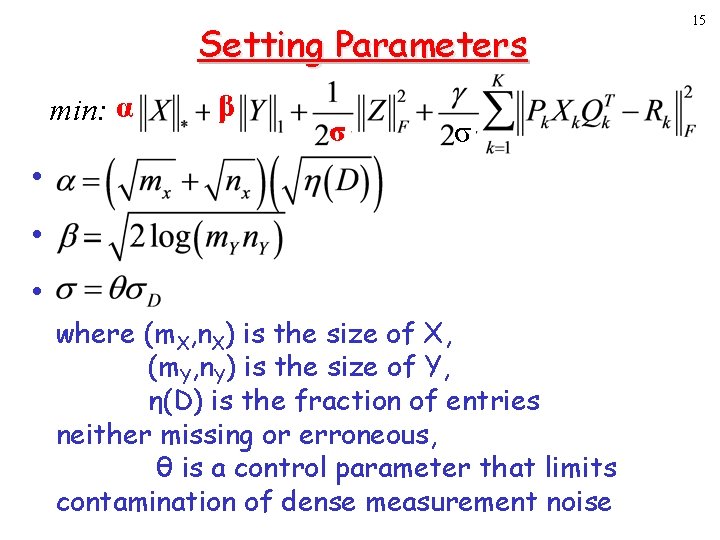

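Setting Parameters
min: [objective with weights α, β, σ; not recovered from the slide image]
• α, β, σ: [closed-form settings not recovered from the slide image]
where $(m_X, n_X)$ is the size of X, $(m_Y, n_Y)$ is the size of Y, η(D) is the fraction of entries neither missing nor erroneous, and θ is a control parameter that limits contamination of dense measurement noise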

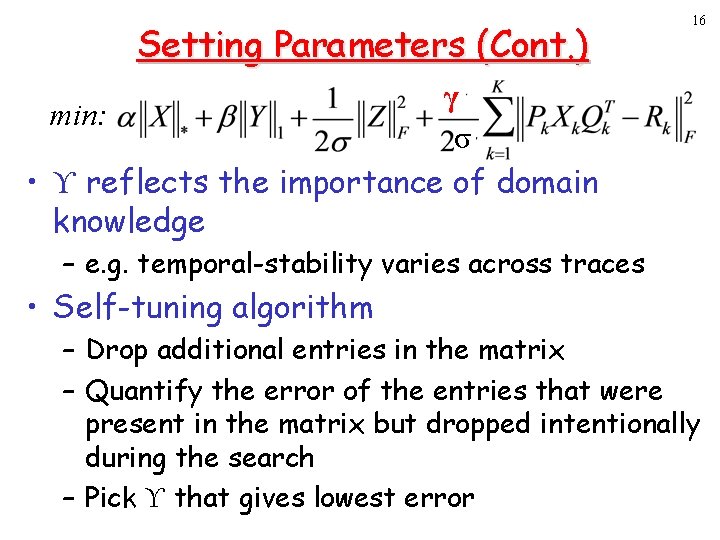

Setting Parameters (Cont.)
min: [objective not recovered from the slide image]
• γ reflects the importance of domain knowledge
  – e.g., temporal stability varies across traces
• Self-tuning algorithm
  – Drop additional entries in the matrix
  – Quantify the error on the entries that were present in the matrix but dropped intentionally during the search
  – Pick the γ that gives the lowest error
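The three self-tuning steps can be sketched as a hold-out search. This is an illustration under assumptions: `interpolate` is a hypothetical callback standing in for whatever interpolation routine is being tuned, `holdout_frac` and `seed` are invented parameters, and the error measure (absolute error normalized by the held-out true values) is one plausible choice.

```python
import numpy as np


def self_tune_gamma(D, observed, candidates, interpolate, holdout_frac=0.1, seed=0):
    """Pick the gamma with the lowest error on intentionally dropped entries.

    interpolate(D_masked, mask, gamma) must return a full matrix estimate.
    """
    D = np.asarray(D, dtype=float)
    observed = np.asarray(observed, dtype=bool)
    rng = np.random.default_rng(seed)

    # Step 1: drop additional entries among those that are actually observed.
    obs_idx = np.flatnonzero(observed)
    n_hold = max(1, int(holdout_frac * obs_idx.size))
    held = rng.choice(obs_idx, size=n_hold, replace=False)
    train = observed.ravel().copy()
    train[held] = False
    train = train.reshape(D.shape)
    truth = D.ravel()[held]

    best_gamma, best_err = None, np.inf
    for gamma in candidates:
        est = np.asarray(interpolate(np.where(train, D, 0.0), train, gamma))
        # Step 2: error on the entries that were present but dropped on purpose.
        err = np.abs(est.ravel()[held] - truth).sum() / np.abs(truth).sum()
        # Step 3: keep the gamma with the lowest error.
        if err < best_err:
            best_gamma, best_err = gamma, err
    return best_gamma, best_err


# Toy check with a stand-in interpolator that fills the matrix with gamma itself.
def toy_interpolate(D_masked, mask, gamma):
    return np.full(D_masked.shape, float(gamma))


D_toy = np.full((6, 8), 5.0)
best, err = self_tune_gamma(D_toy, np.ones((6, 8), dtype=bool), [0, 5, 10], toy_interpolate)
print(best, err)
```

The search only ever scores entries whose true values are known, so it needs no ground truth beyond the measurements already in hand.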

![Algorithms Compared](http://slidetodoc.com/presentation_image_h/2c816f58c194df3305a35ecfb9b89a87/image-17.jpg)

Algorithms Compared

| Algorithm | Description |
|---|---|
| Baseline | Baseline estimate via rank-2 approximation |
| SVD-base | SRSVD with baseline removal |
| SVD-base+KNN | Apply KNN after SVD-base |
| SRMF [SIGCOMM 09] | Sparsity Regularized Matrix Factorization |
| SRMF+KNN [SIGCOMM 09] | Hybrid of SRMF and KNN |
| LENS | Robust network compressive sensing |

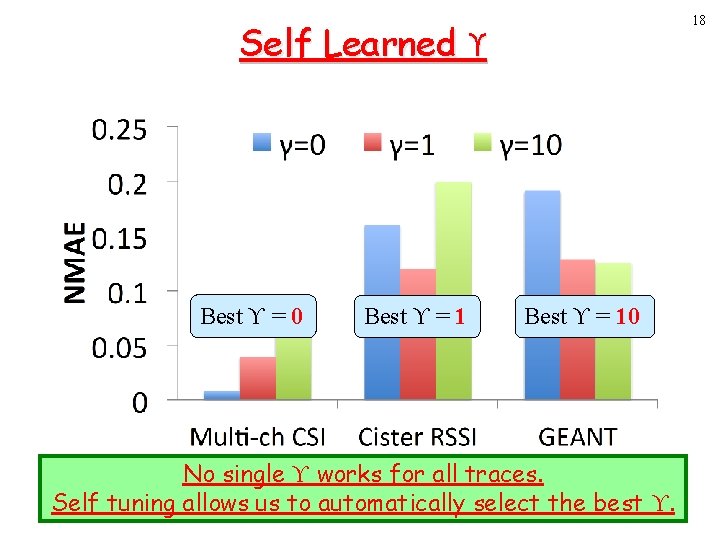

Self Learned γ
[Figure panels: Best γ = 0; Best γ = 10]
No single γ works for all traces. Self tuning allows us to automatically select the best γ.

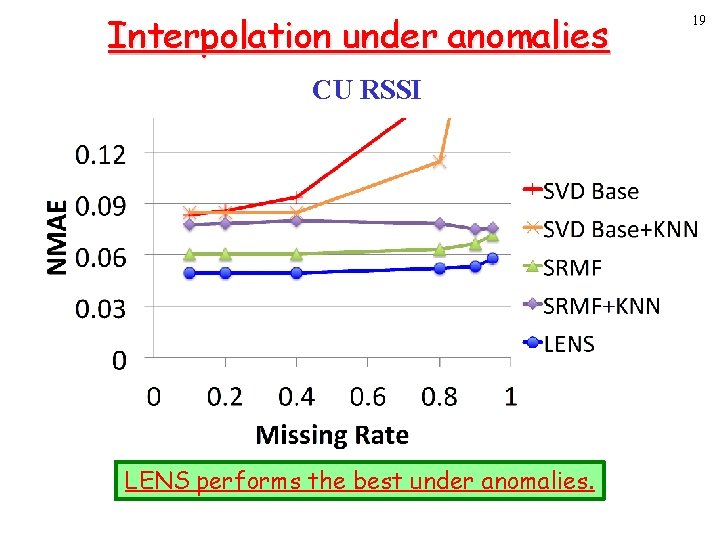

Interpolation under anomalies
[Figure: CU RSSI]
LENS performs the best under anomalies.

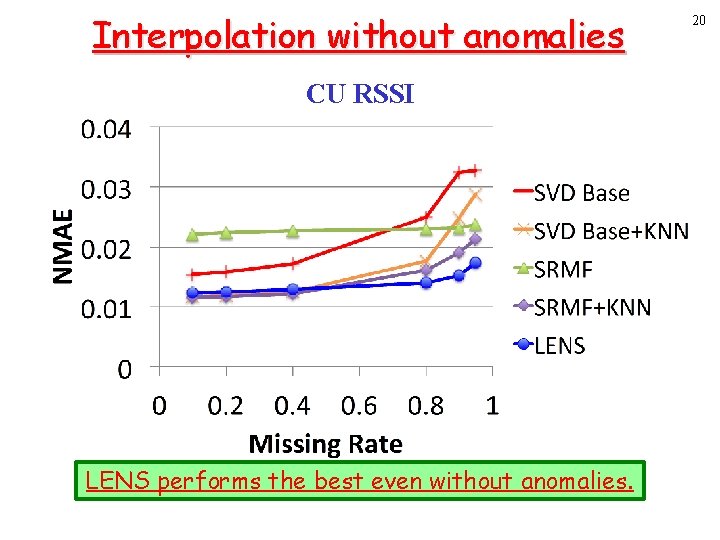

Interpolation without anomalies
[Figure: CU RSSI]
LENS performs the best even without anomalies.

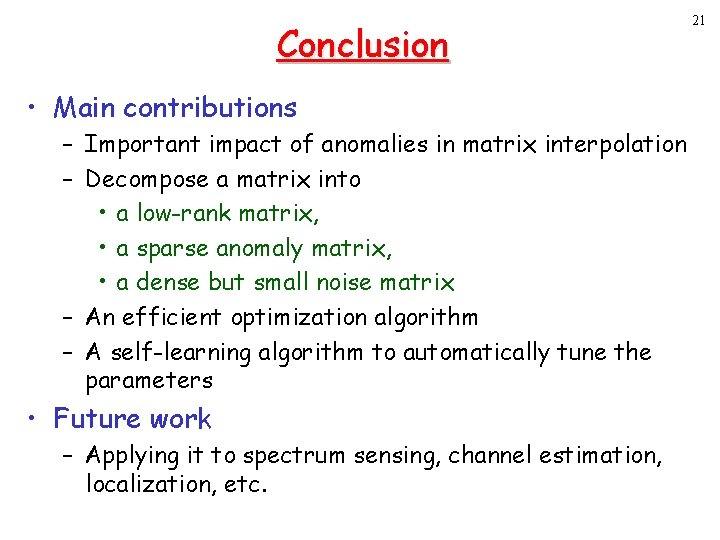

Conclusion
• Main contributions
  – Important impact of anomalies in matrix interpolation
  – Decompose a matrix into
    • a low-rank matrix,
    • a sparse anomaly matrix,
    • a dense but small noise matrix
  – An efficient optimization algorithm
  – A self-learning algorithm to automatically tune the parameters
• Future work
  – Applying it to spectrum sensing, channel estimation, localization, etc.

Thank you!

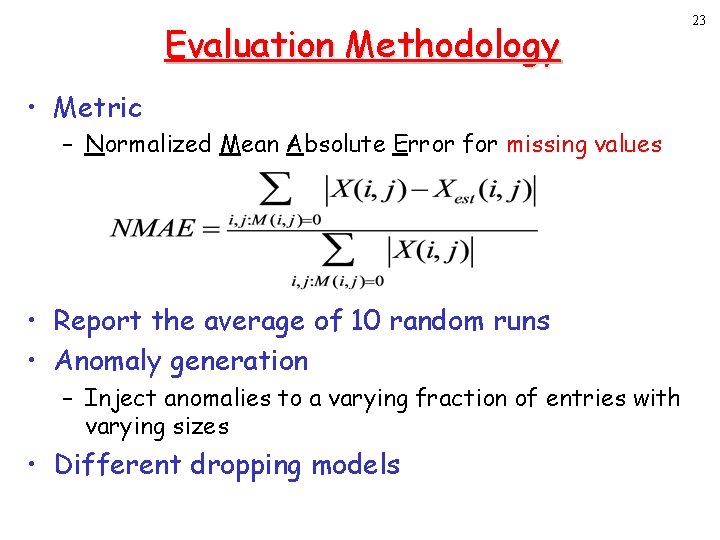

Evaluation Methodology
• Metric
  – Normalized Mean Absolute Error for missing values
• Report the average of 10 random runs
• Anomaly generation
  – Inject anomalies into a varying fraction of entries with varying sizes
• Different dropping models
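The slide names the metric without spelling it out. The sketch below assumes a common definition: the sum of absolute errors over the missing entries, divided by the sum of absolute true values over the same entries. The function name `nmae` and the example values are hypothetical.

```python
import numpy as np


def nmae(D_true, D_est, missing):
    """Normalized Mean Absolute Error restricted to the entries flagged as missing."""
    D_true = np.asarray(D_true, dtype=float)
    D_est = np.asarray(D_est, dtype=float)
    missing = np.asarray(missing, dtype=bool)
    denom = np.abs(D_true[missing]).sum()
    if denom == 0:
        raise ValueError("true values on the missing entries sum to zero")
    return float(np.abs(D_true[missing] - D_est[missing]).sum() / denom)


D_true = np.array([[1.0, 2.0], [3.0, 4.0]])
D_est = np.array([[1.0, 3.0], [3.0, 2.0]])
missing = np.array([[False, True], [False, True]])

score = nmae(D_true, D_est, missing)
print(score)
```

Only the missing entries contribute, so an interpolator gets no credit for reproducing values it was given.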

Summary of Other Results
• The improvement of LENS increases with anomaly sizes and the number of anomalies.
• LENS consistently performs the best under different dropping models.
• LENS yields the lowest prediction error.
• LENS achieves higher anomaly detection accuracy.
