Robust Declassification

Steve Zdancewic, Andrew Myers

Cornell University

CSFW 14

Information Flow Security

Information flow policies are a natural way to specify precise, system-wide, multi-level security requirements. Enforcement mechanisms are often too restrictive: they prevent desired policies. Information flow controls provide declassification mechanisms to accommodate intentional leaks. But this makes end-to-end system behavior hard to understand.

Declassification

Declassification (downgrading) is the intentional release of confidential information. A policy governs use of the declassification operation.

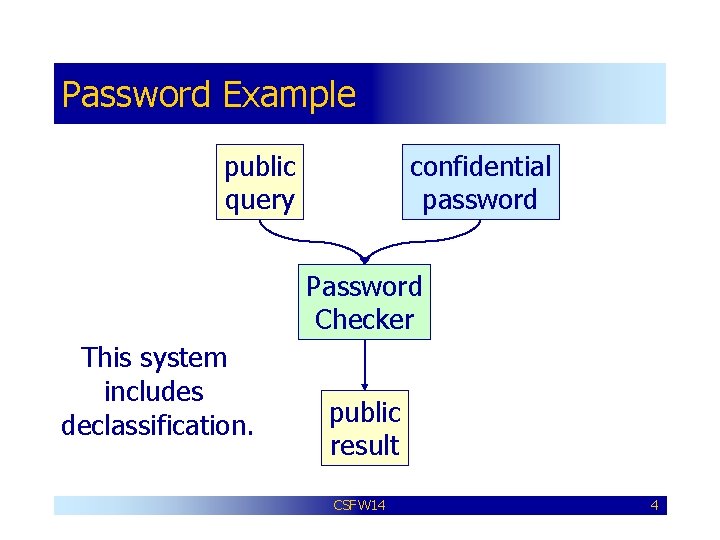

Password Example

A password checker takes a public query and a confidential password and produces a public result. This system includes declassification.

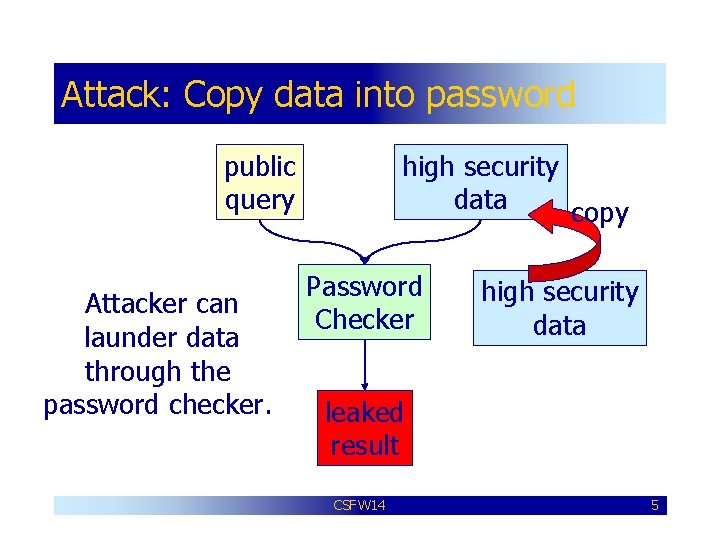

Attack: Copy data into password

The attacker copies high-security data into the password and then issues public queries, so the result leaks the high-security data. The attacker can launder data through the password checker.
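The laundering attack can be sketched in a few lines of Python. This is only an illustration: the class `PasswordChecker`, the function `launder`, and the assumption that the attacker can overwrite the stored password are hypothetical names and choices, not part of the slides' formal model.

```python
from typing import Optional, Sequence


class PasswordChecker:
    """Toy checker: holds a confidential password, answers public queries."""

    def __init__(self, password: str) -> None:
        self.password = password  # confidential field

    def check(self, guess: str) -> bool:
        # The declassification: one bit about the password becomes public.
        return guess == self.password


def launder(checker: PasswordChecker, secret: str,
            candidates: Sequence[str]) -> Optional[str]:
    """Recover `secret` from public query results only."""
    # Assumed attack transition: copy high-security data into the password.
    checker.password = secret
    # Public queries: each result is a declassified bit about the secret.
    for guess in candidates:
        if checker.check(guess):
            return guess
    return None


checker = PasswordChecker("hunter2")
leaked = launder(checker, "attack at dawn", ["retreat", "attack at dawn"])
```

The attacker never reads `secret` directly after the copy; everything it learns comes through the checker's intended release channel, which is the abuse the talk sets out to rule out.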

Robust Declassification

Goal: Formalize the intuition that an attacker should not be able to abuse the downgrade mechanisms provided by the system to cause more information to be declassified than intended.

How to Proceed?

• Characterize what information is declassified.
• Make a distinction between “intentional” and “unintentional” information flow.
• Explore some of the consequences of robust declassification.

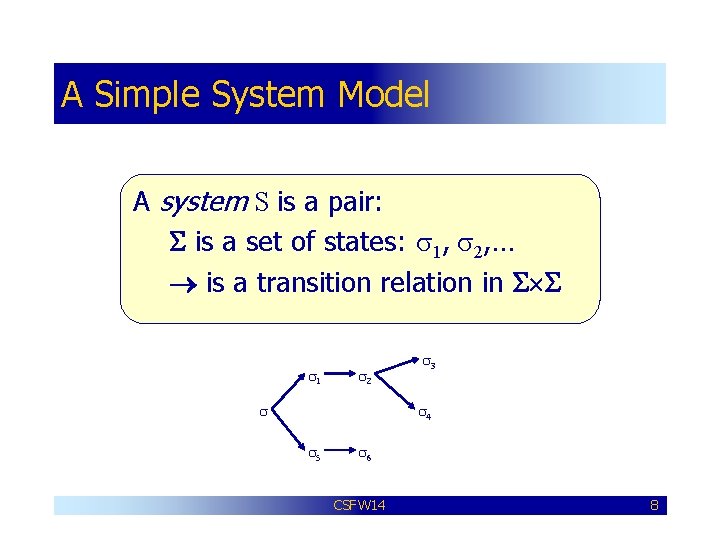

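A Simple System Model

A system $S$ is a pair $(\Sigma, \mapsto)$:

• $\Sigma$ is a set of states: $s_1, s_2, \ldots$
• $\mapsto$ is a transition relation in $\Sigma \times \Sigma$

(Diagram: example states $s, s_1, \ldots, s_6$ connected by transitions.)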

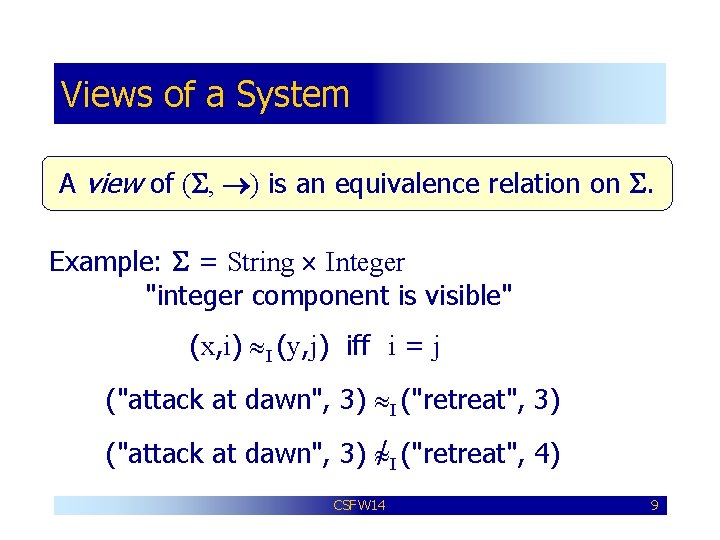

Views of a System

A view of $(\Sigma, \mapsto)$ is an equivalence relation on $\Sigma$.

Example: $\Sigma = \text{String} \times \text{Integer}$

"integer component is visible": $(x, i) \approx_I (y, j)$ iff $i = j$

$(\text{"attack at dawn"}, 3) \approx_I (\text{"retreat"}, 3)$

$(\text{"attack at dawn"}, 3) \not\approx_I (\text{"retreat"}, 4)$

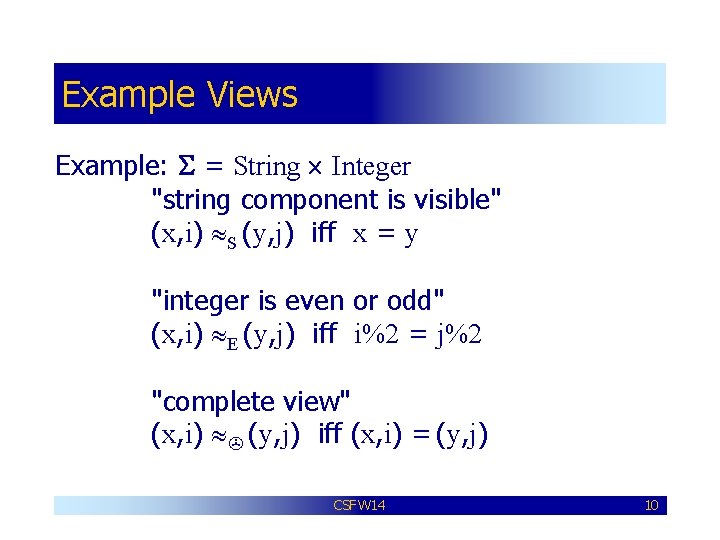

Example Views

Example: $\Sigma = \text{String} \times \text{Integer}$

"string component is visible": $(x, i) \approx_S (y, j)$ iff $x = y$

"integer is even or odd": $(x, i) \approx_E (y, j)$ iff $i \bmod 2 = j \bmod 2$

"complete view": $(x, i) \approx_\top (y, j)$ iff $(x, i) = (y, j)$
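One way to experiment with these views is to represent each one as a function that returns the part of a state the observer can see; two states are related exactly when the function returns equal values. The names `view_I`, `view_S`, `view_E`, `view_top`, and `equivalent` below are illustrative assumptions, not notation from the talk.

```python
# A state is a (string, integer) pair, as in the slides' example.

def view_I(state):
    """Integer component is visible."""
    return state[1]

def view_S(state):
    """String component is visible."""
    return state[0]

def view_E(state):
    """Only the parity of the integer is visible."""
    return state[1] % 2

def view_top(state):
    """Complete view: the whole state is visible."""
    return state

def equivalent(view, s, t):
    """s and t are related by the view iff they look the same through it."""
    return view(s) == view(t)

dawn3 = ("attack at dawn", 3)
retreat3 = ("retreat", 3)
retreat4 = ("retreat", 4)
```

Representing a view by a function guarantees that the induced relation is reflexive, symmetric, and transitive, so every such function really is a view in the sense defined above.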

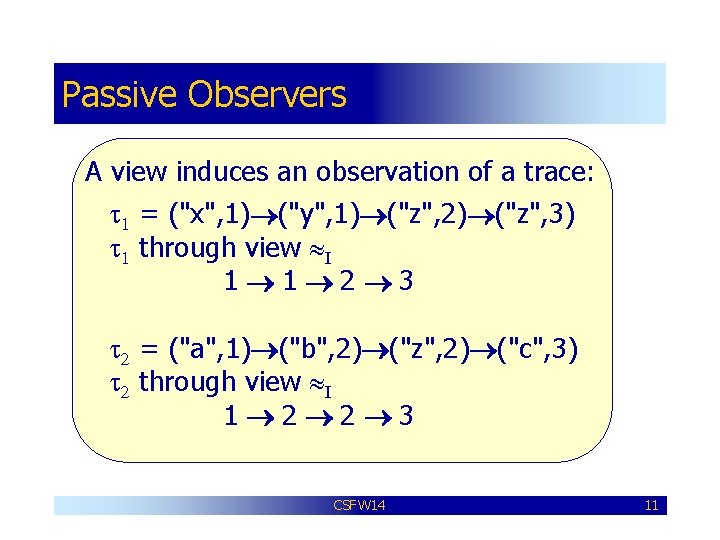

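Passive Observers

A view induces an observation of a trace:

$t_1 = (\text{"x"}, 1) \mapsto (\text{"y"}, 1) \mapsto (\text{"z"}, 2) \mapsto (\text{"z"}, 3)$

$t_1$ through view $\approx_I$: 1 1 2 3

$t_2 = (\text{"a"}, 1) \mapsto (\text{"b"}, 2) \mapsto (\text{"z"}, 2) \mapsto (\text{"c"}, 3)$

$t_2$ through view $\approx_I$: 1 2 2 3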
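The two observations can be reproduced with a short Python sketch. The helper names `observe` and `destutter` are assumptions made for illustration; the stutter-collapsing step anticipates the stutter equivalence used in the backup slides on observational equivalence.

```python
from itertools import groupby

def view_I(state):
    """Integer component is visible."""
    return state[1]

def observe(view, trace):
    """What a passive observer sees of each state along a trace."""
    return [view(state) for state in trace]

def destutter(observation):
    """Collapse runs of identical consecutive observations into one."""
    return [value for value, _ in groupby(observation)]

t1 = [("x", 1), ("y", 1), ("z", 2), ("z", 3)]
t2 = [("a", 1), ("b", 2), ("z", 2), ("c", 3)]

obs1 = observe(view_I, t1)   # [1, 1, 2, 3]
obs2 = observe(view_I, t2)   # [1, 2, 2, 3]
```

The raw observations differ, but `destutter(obs1)` and `destutter(obs2)` are both `[1, 2, 3]`, so an observer who cannot count repeated observations cannot tell the two traces apart.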

Observational Equivalence

The induced observational equivalence is $S[\approx]$:

$s \; S[\approx] \; s'$ if the traces from $s$ look the same as the traces from $s'$ through the view $\approx$.
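For a small finite system, the induced equivalence can be approximated by enumerating traces up to a fixed length. The sketch below is a bounded approximation under stated assumptions: transitions are stored in a dictionary from a state to its set of successors, observations are compared up to stuttering, and the names `observations`, `obs_equivalent`, `leaky`, and `quiet` are hypothetical.

```python
from itertools import groupby

def observations(trans, view, start, depth=4):
    """De-stuttered observations of all traces from `start` with <= depth steps."""
    frontier, seen = [(start,)], set()
    for _ in range(depth + 1):
        # Record what each current trace looks like through the view.
        seen.update(tuple(k for k, _ in groupby(map(view, tr))) for tr in frontier)
        # Extend every trace by one transition.
        frontier = [tr + (nxt,) for tr in frontier for nxt in trans.get(tr[-1], ())]
    return seen

def obs_equivalent(trans, view, s, t, depth=4):
    """Bounded check of whether s and t are related by S[view]."""
    return observations(trans, view, s, depth) == observations(trans, view, t, depth)

# States are (secret, output) pairs; the observer sees only the output.
view = lambda state: state[1]
leaky = {(0, None): {(0, 0)}, (1, None): {(1, 1)}}   # output reveals the secret
quiet = {(0, None): {(0, 7)}, (1, None): {(1, 7)}}   # output ignores the secret
```

In `leaky`, the states `(0, None)` and `(1, None)` look identical through `view` but are not observationally equivalent, because watching the system evolve reveals the secret; in `quiet` they remain equivalent.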

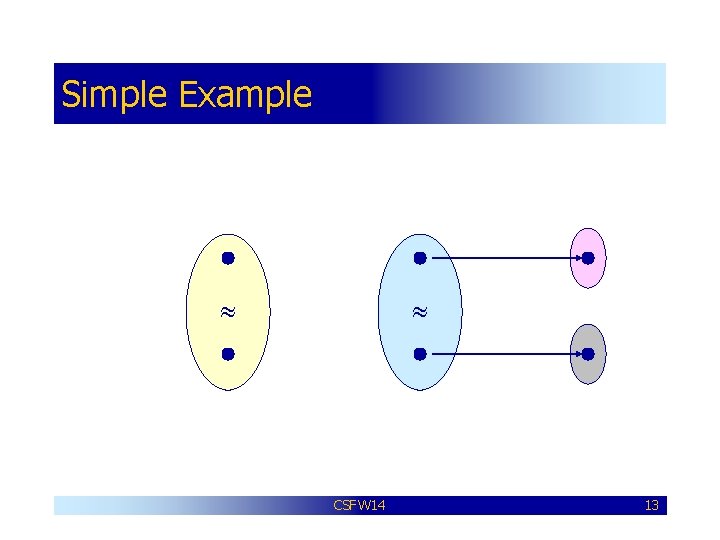

Simple Example

Simple Example: $S[\approx]$

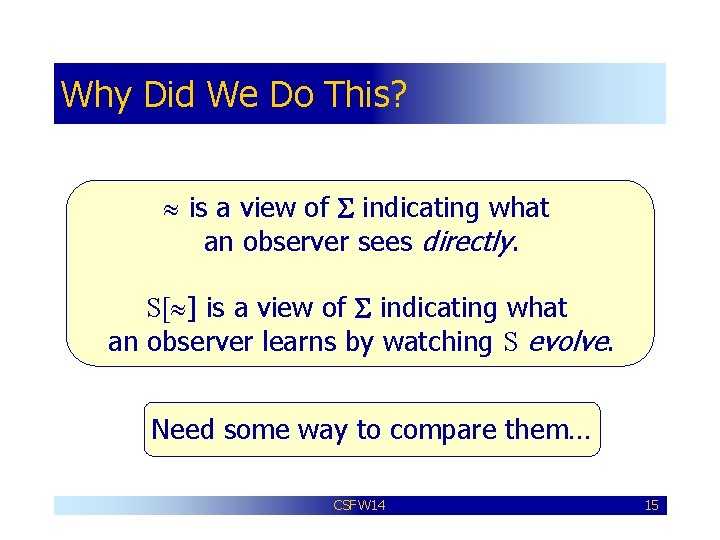

Why Did We Do This?

$\approx$ is a view of $S$ indicating what an observer sees directly.

$S[\approx]$ is a view of $S$ indicating what an observer learns by watching $S$ evolve.

Need some way to compare them…

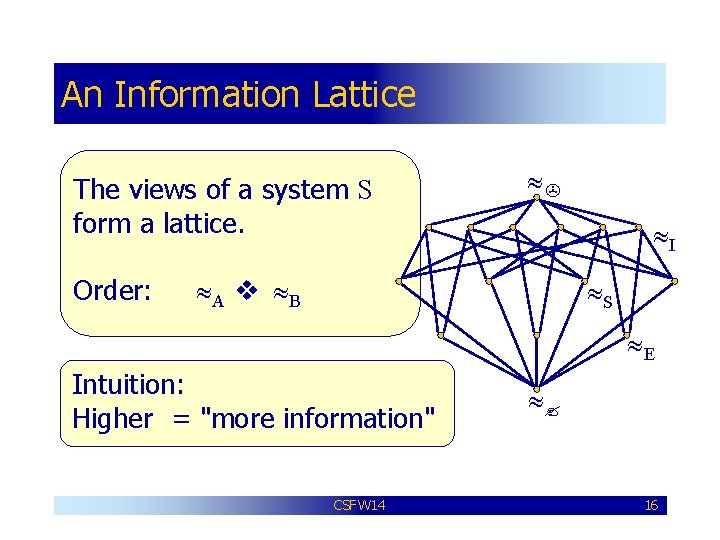

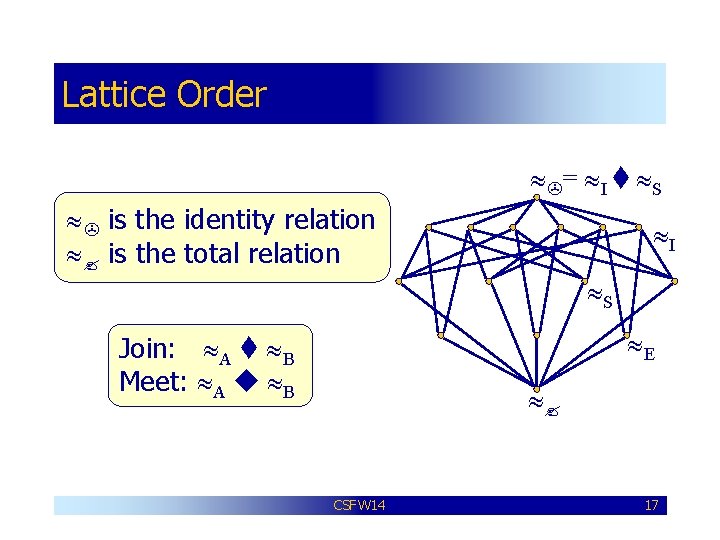

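An Information Lattice

The views of a system $S$ form a lattice.

Order: $\approx_A \sqsubseteq \approx_B$

(Diagram: the example views $\approx_I$, $\approx_S$, and $\approx_E$ placed in the lattice.)

Intuition: Higher = "more information"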

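Lattice Order

$\top$ is the identity relation; $\bot$ is the total relation.

(Diagram: $\approx_I$, $\approx_S$, and $\approx_E$ between $\top$ and $\bot$.)

Join: $\approx_A \sqcup \approx_B$

Meet: $\approx_A \sqcap \approx_B$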
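The ordering can be checked mechanically on a finite state set. The sketch below assumes the usual refinement order on equivalence relations, which matches the "higher = more information" intuition: one view is below another when every pair of states the higher view identifies is also identified by the lower one. Under that assumption the join is the intersection of the two relations, obtained here by observing both views at once. The names `leq`, `join`, `top`, and `bottom` are illustrative, and the meet is omitted because it needs a transitive closure.

```python
from itertools import product

def leq(states, view_a, view_b):
    """view_a is below view_b: states view_b identifies, view_a identifies too."""
    return all(view_a(s) == view_a(t)
               for s, t in product(states, repeat=2)
               if view_b(s) == view_b(t))

def join(view_a, view_b):
    """Least upper bound: see through both views at once."""
    return lambda state: (view_a(state), view_b(state))

states = list(product(["attack at dawn", "retreat"], [1, 2, 3, 4]))
view_I = lambda state: state[1]        # integer visible
view_S = lambda state: state[0]        # string visible
view_E = lambda state: state[1] % 2    # parity visible
top = lambda state: state              # identity relation
bottom = lambda state: None            # total relation

both = join(view_I, view_S)
```

On this state set, `view_E` is below `view_I`, `view_I` and `view_S` are incomparable, and their join carries exactly as much information as `top`.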

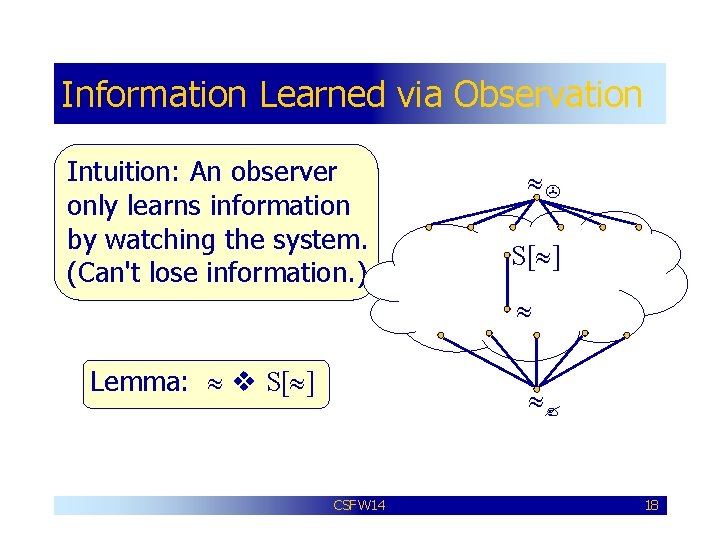

Information Learned via Observation

Intuition: An observer only learns information by watching the system. (Can't lose information.)

Lemma: $\approx \; \sqsubseteq \; S[\approx]$

Natural Security Condition

Definition: A system $S$ is $\approx$-secure whenever $\approx \; = S[\approx]$.

Closely related to standard definitions of non-interference style properties.
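A bounded check of this condition on a finite system might look like the following sketch. It assumes transitions are stored as a dictionary of successor sets, compares observations up to stuttering, and only explores traces up to a fixed depth, so it is an approximation rather than a proof. Because every observation starts with the view of the initial state, observational equivalence already implies equivalence under the view, and only the other direction needs testing. The names `observations`, `is_secure`, `leaky`, and `constant` are hypothetical.

```python
from itertools import groupby

def observations(trans, view, start, depth=4):
    """De-stuttered observations of all traces from `start` with <= depth steps."""
    frontier, seen = [(start,)], set()
    for _ in range(depth + 1):
        seen.update(tuple(k for k, _ in groupby(map(view, tr))) for tr in frontier)
        frontier = [tr + (nxt,) for tr in frontier for nxt in trans.get(tr[-1], ())]
    return seen

def is_secure(states, trans, view, depth=4):
    """True if states that look alike also evolve alike (bounded check)."""
    return all(
        observations(trans, view, s, depth) == observations(trans, view, t, depth)
        for s in states for t in states
        if view(s) == view(t)
    )

# States are (secret, output) pairs; the observer sees only the output.
view = lambda state: state[1]
states = [(h, out) for h in (0, 1) for out in (None, 0, 1, 7)]
leaky = {(h, None): {(h, h)} for h in (0, 1)}      # copies the secret to the output
constant = {(h, None): {(h, 7)} for h in (0, 1)}   # output independent of the secret
```

Here `is_secure(states, leaky, view)` is false, since watching the system distinguishes the two secrets, while `is_secure(states, constant, view)` is true.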

Declassification

Declassification is intentional leakage of information. This implies that $\approx \; \neq S[\approx]$.

We want to characterize unintentional declassification.

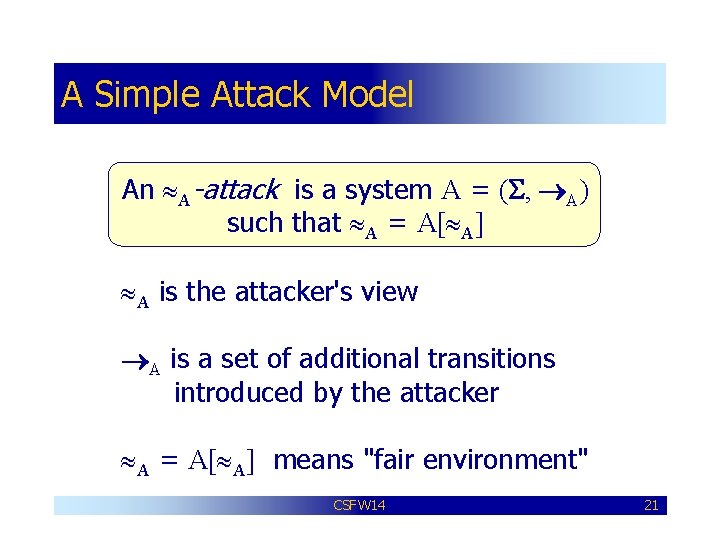

A Simple Attack Model

An $A$-attack is a system $A = (\Sigma, \mapsto_A)$ such that $\approx_A \; = A[\approx_A]$.

• $\approx_A$ is the attacker's view.
• $\mapsto_A$ is a set of additional transitions introduced by the attacker.
• $\approx_A \; = A[\approx_A]$ means "fair environment".

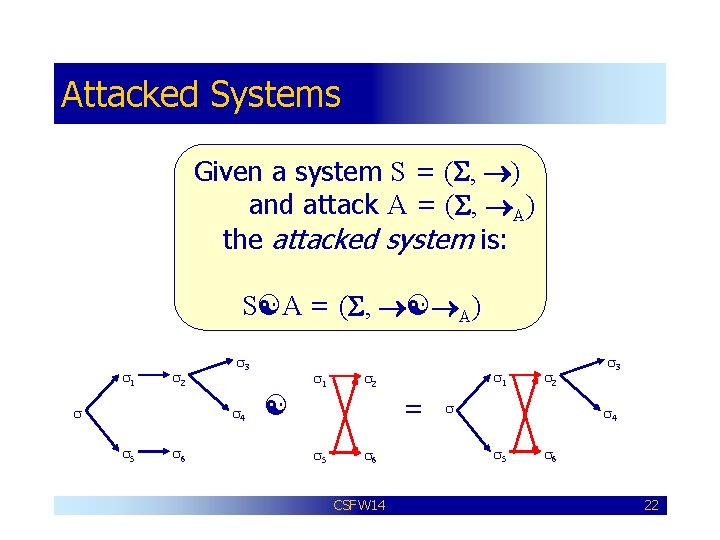

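Attacked Systems

Given a system $S = (\Sigma, \mapsto)$ and attack $A = (\Sigma, \mapsto_A)$, the attacked system is:

$S \cup A = (\Sigma, \; \mapsto \cup \mapsto_A)$

(Diagram: the transition graphs of $S$ and $A$ over the states $s, s_1, \ldots, s_6$ combine into the graph of $S \cup A$.)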

More Intuition

$S[\approx]$ describes the information intentionally declassified by the system: a specification for how $S$ ought to behave.

$(S \cup A)[\approx_A]$ describes the information obtained by an attack $A$.

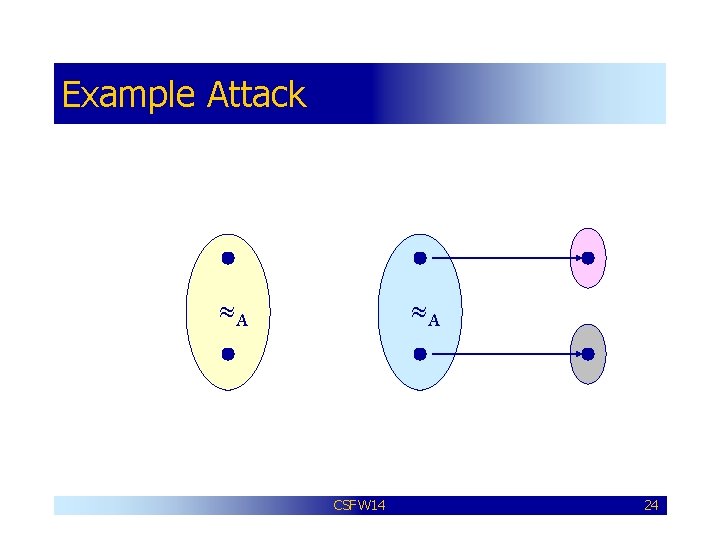

Example Attack

(Diagram: the attacker's view $\approx_A$ and the attack transitions $\mapsto_A$.)

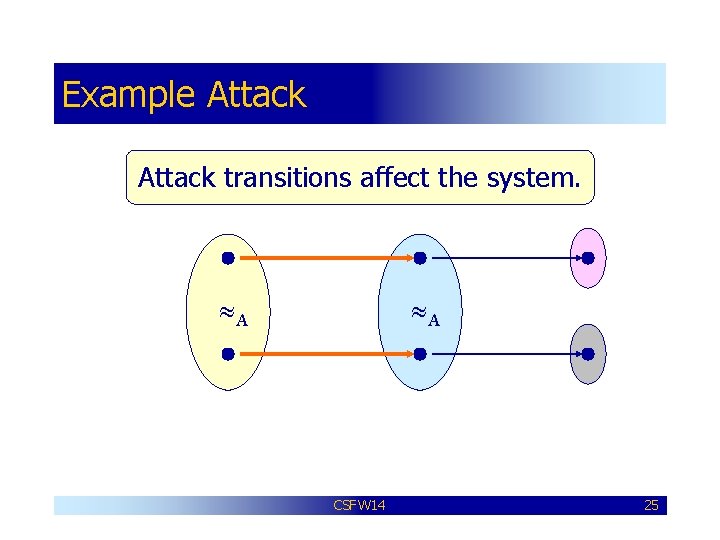

Example

Attack transitions affect the system.

(Diagram: the attacker's view $\approx_A$ and the attack transitions $\mapsto_A$.)

Example

Attacked system may reveal more.

(Diagram compares $(S \cup A)[\approx_A]$, $S[\approx]$, and $S[\approx_A]$.)

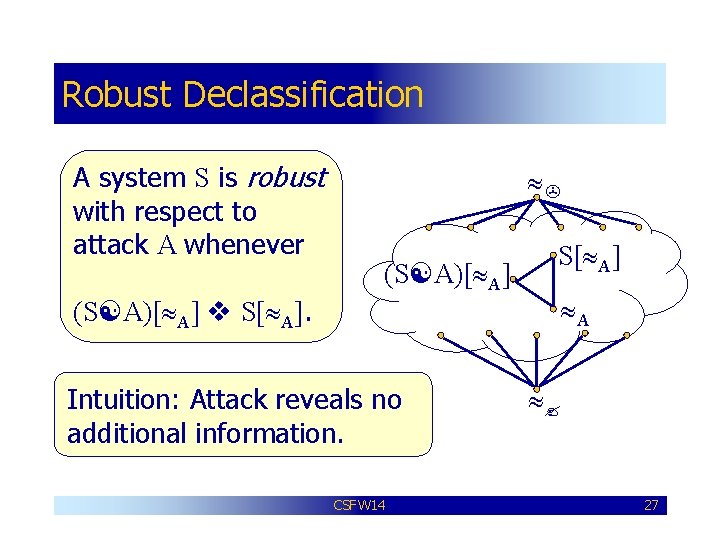

Robust Declassification

A system $S$ is robust with respect to attack $A$ whenever $(S \cup A)[\approx_A] \sqsubseteq S[\approx_A]$.

(Diagram: $\approx_A$, $(S \cup A)[\approx_A]$, and $S[\approx_A]$.)

Intuition: Attack reveals no additional information.
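The robustness condition can be tested on a toy version of the password checker. The sketch below rests on several assumptions: states are triples (secret bit, password bit, result), the checker compares the password against a fixed public guess of 0, the attacker sees only the result, the attack copies the secret into the password, and traces are explored only to a bounded depth. The names `observations`, `union`, `is_robust`, `checker`, and `copy_attack` are hypothetical.

```python
from itertools import groupby, product

def observations(trans, view, start, depth=4):
    """De-stuttered observations of all traces from `start` with <= depth steps."""
    frontier, seen = [(start,)], set()
    for _ in range(depth + 1):
        seen.update(tuple(k for k, _ in groupby(map(view, tr))) for tr in frontier)
        frontier = [tr + (nxt,) for tr in frontier for nxt in trans.get(tr[-1], ())]
    return seen

def union(system, attack):
    """Attacked system: same states, transitions of both."""
    keys = set(system) | set(attack)
    return {s: set(system.get(s, ())) | set(attack.get(s, ())) for s in keys}

def is_robust(states, system, attack, view, depth=4):
    """States indistinguishable when watching S stay so when watching S with A."""
    attacked = union(system, attack)
    for s, t in product(states, repeat=2):
        alike = observations(system, view, s, depth) == observations(system, view, t, depth)
        still = observations(attacked, view, s, depth) == observations(attacked, view, t, depth)
        if alike and not still:
            return False
    return True

bits = (0, 1)
states = [(h, p, out) for h in bits for p in bits for out in (None, True, False)]
attacker_view = lambda state: state[2]
# Checker: declassify whether the password equals the public guess 0.
checker = {(h, p, None): {(h, p, p == 0)} for h in bits for p in bits}
# Attack: copy the secret into the password (invisible to the attacker).
copy_attack = {(h, p, None): {(h, h, None)} for h in bits for p in bits}
```

With these definitions `is_robust(states, checker, copy_attack, attacker_view)` is false: the states `(0, 1, None)` and `(1, 1, None)` are indistinguishable when observing the checker alone, but after the copy the result depends on the secret. With an empty attack, `is_robust(states, checker, {}, attacker_view)` is true.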

Secure Systems are Robust

Theorem: If $S$ is $\approx_A$-secure then $S$ is $A$-robust with respect to all $A$-attacks.

Intuition: $S$ doesn't leak any information to the $\approx_A$-observer, so there are no declassifications to exploit.

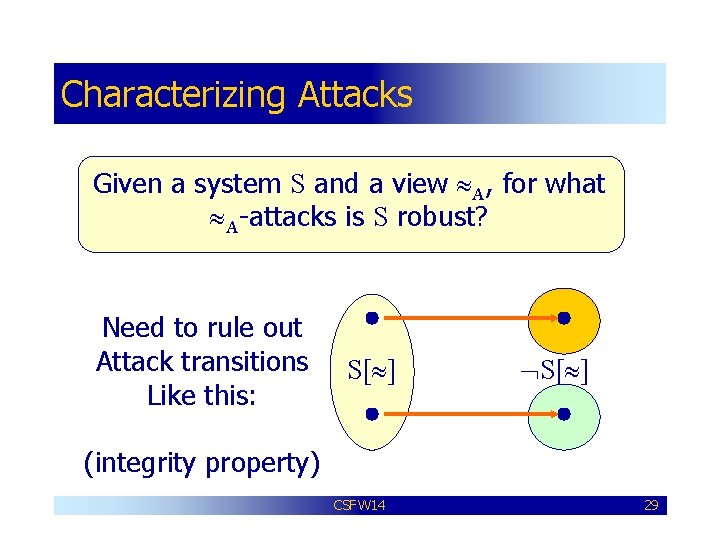

Characterizing Attacks

Given a system $S$ and a view $\approx_A$, for what $A$-attacks is $S$ robust?

Need to rule out attack transitions like the one in the diagram, which is drawn against $S[\approx]$ (integrity property).

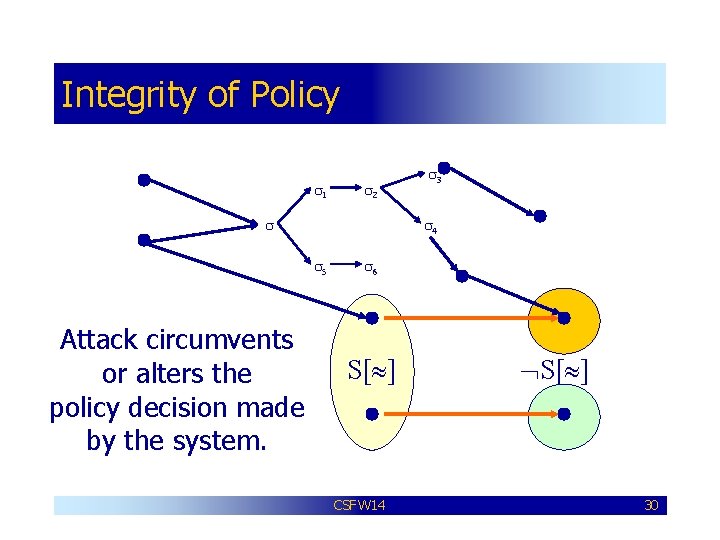

Integrity of Policy

Attack circumvents or alters the policy decision made by the system.

(Diagram: states $s, s_1, \ldots, s_6$ grouped by $S[\approx]$.)

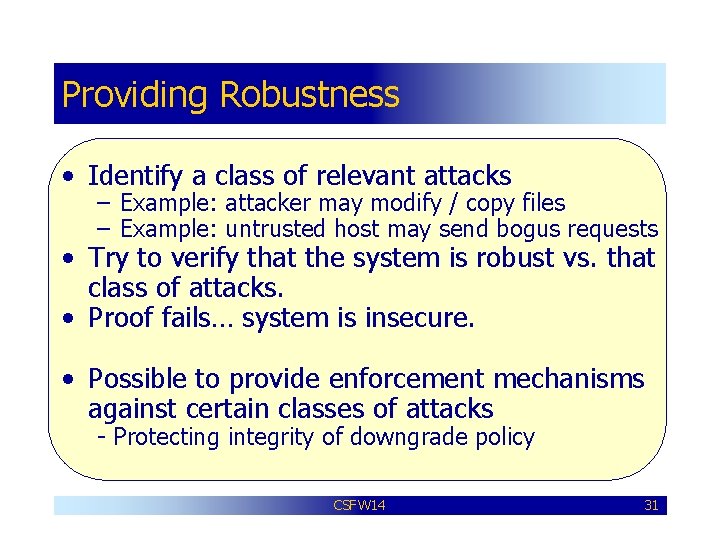

Providing Robustness

• Identify a class of relevant attacks.
  – Example: attacker may modify or copy files
  – Example: untrusted host may send bogus requests
• Try to verify that the system is robust against that class of attacks.
• If the proof fails, the system is insecure.
• It is possible to provide enforcement mechanisms against certain classes of attacks.
  – Protecting integrity of downgrade policy

Future Work

This is a simple model: extend the definition of robustness to better models.

How does robustness fit in with intransitive non-interference?

We're putting the idea of robustness into practice in a distributed version of Jif.

Conclusions

It’s critical to prevent downgrading mechanisms in a system from being abused.

Robustness is an attempt to capture this idea in a formal way.

This suggests that integrity and confidentiality are linked in systems with declassification.

Correction to Paper

A bug-fixed version of the paper is available at http://www.cs.cornell.edu/zdance

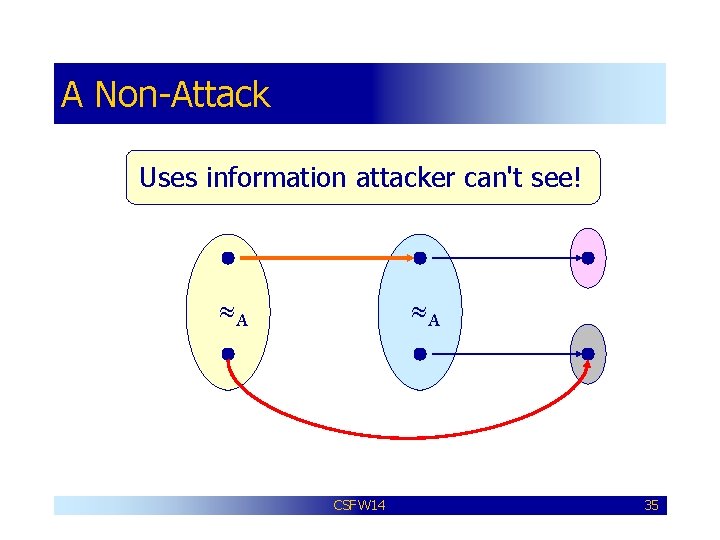

A Non-Attack

Uses information attacker can't see!

(Diagram: the attacker's view $\approx_A$ and the proposed transitions $\mapsto_A$.)

Observational Equivalence

$s \; S[\approx] \; s'$?

Are $s$ and $s'$ observationally equivalent with respect to $\approx$?

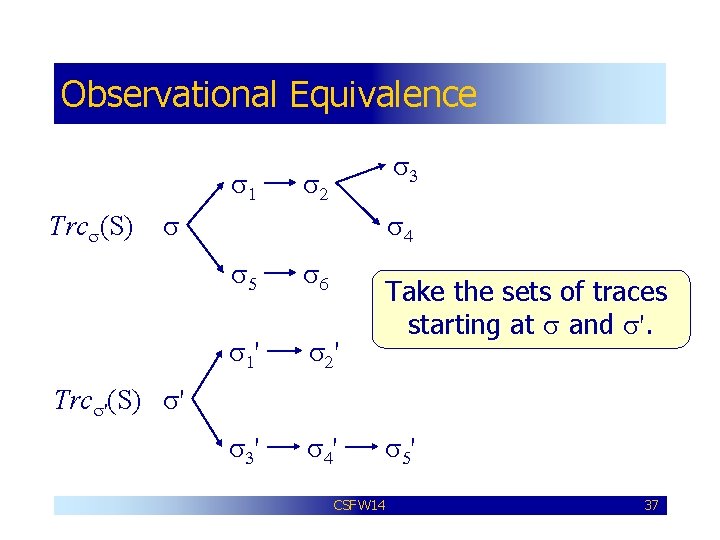

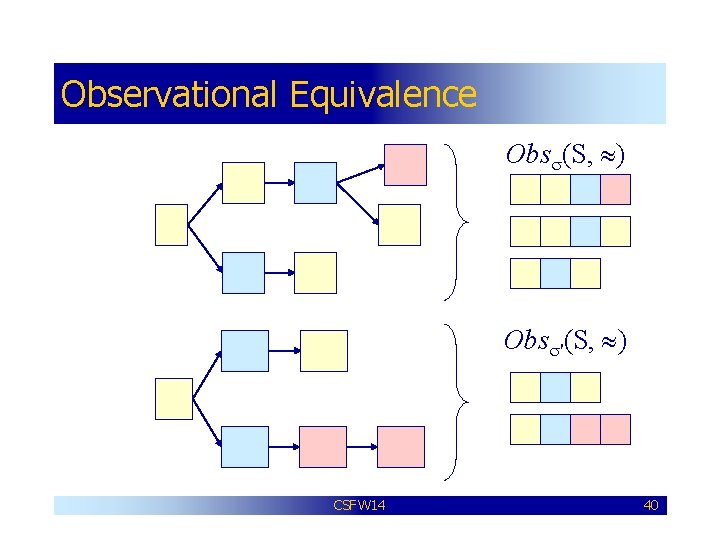

Observational Equivalence

Take the sets of traces starting at $s$ and $s'$: $\mathrm{Trc}_s(S)$ and $\mathrm{Trc}_{s'}(S)$.

(Diagram: traces from $s$ through $s_1, \ldots, s_6$ and from $s'$ through $s_1', \ldots, s_5'$.)

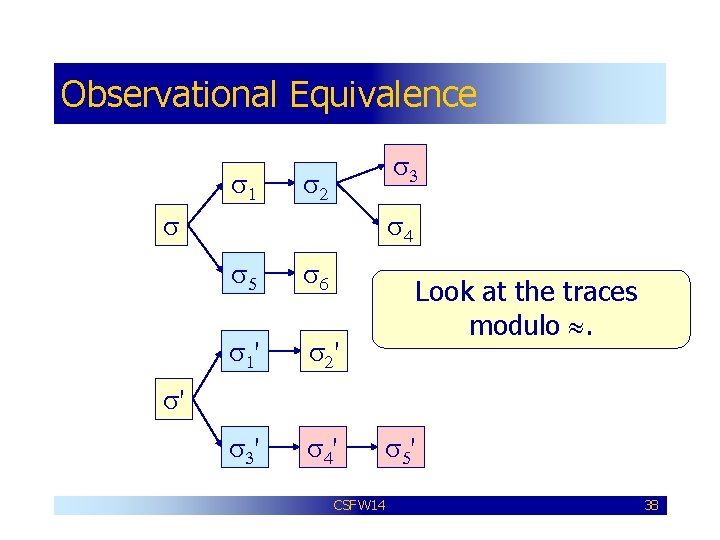

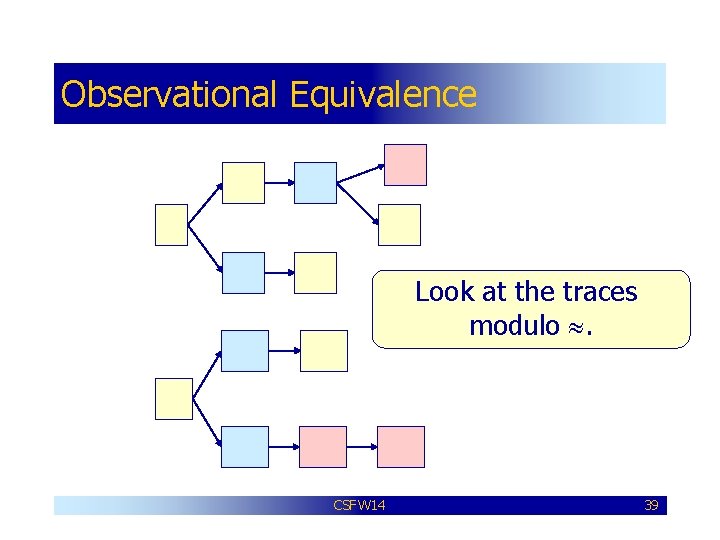

Observational Equivalence

Look at the traces modulo $\approx$.

(Diagram: traces from $s$ through $s_1, \ldots, s_6$ and from $s'$ through $s_1', \ldots, s_5'$.)

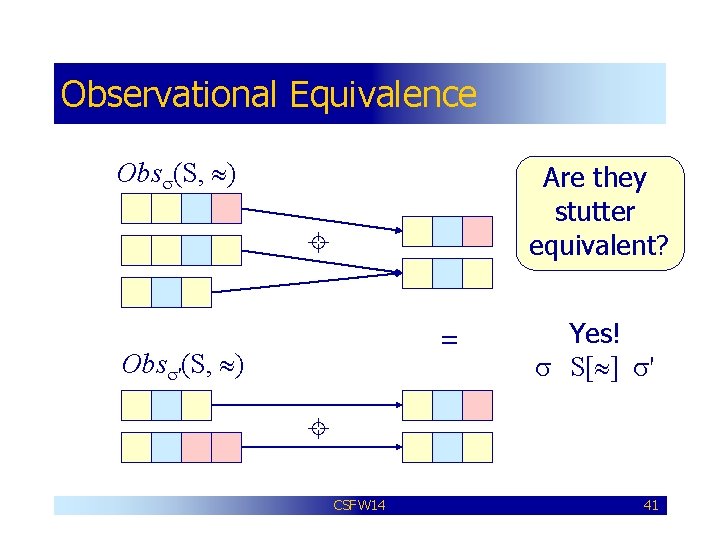

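Observational Equivalence

The traces modulo $\approx$ give the observation sets $\mathrm{Obs}_s(S, \approx)$ and $\mathrm{Obs}_{s'}(S, \approx)$.

(Diagram: traces from $s$ through $s_1, \ldots, s_6$ and from $s'$ through $s_1', \ldots, s_5'$.)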

Observational Equivalence

Are they stutter equivalent? Is $\mathrm{Obs}_s(S, \approx) = \mathrm{Obs}_{s'}(S, \approx)$?

Yes! So $s \; S[\approx] \; s'$.
