Probabilistic Inference
- Main objective: Understanding how probabilistic inference works.
- Students should be able to solve probabilistic inference problems by themselves.
- Discussion of classical problems.

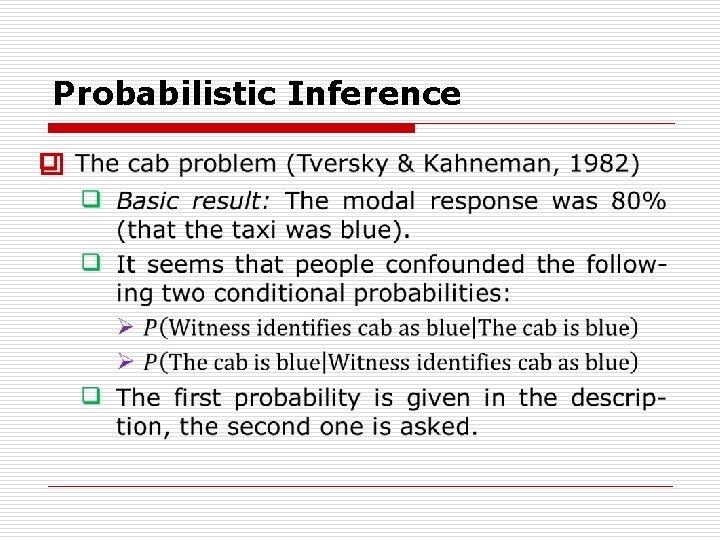

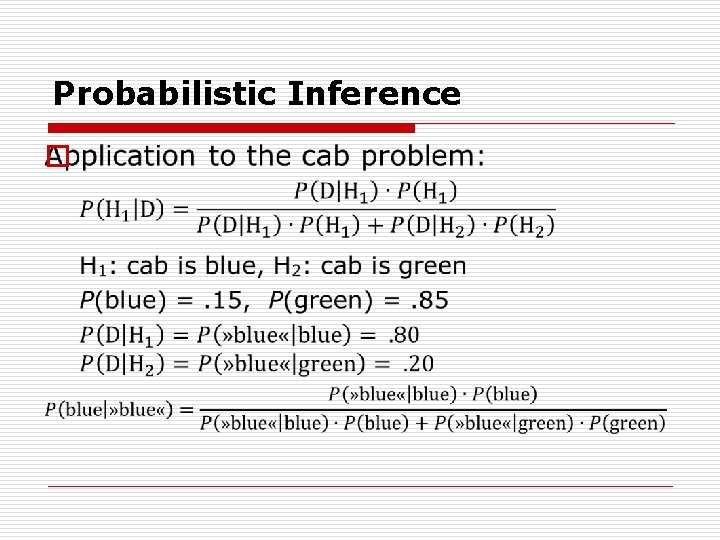

Probabilistic Inference: The cab problem (Tversky & Kahneman, 1982)

A cab was involved in a hit-and-run accident at night. Two cab companies, the Green and the Blue, operate in the city. You are given the following data:
- 85% of the cabs in the city are Green (G) and 15% are Blue (B).
- A witness identified the cab as Blue (»B«). The court tested the reliability of the witness under the same circumstances that existed on the night of the accident and concluded that the witness correctly identified each one of the two colors 80% of the time and failed 20% of the time.

What is the probability that the cab involved in the accident was Blue rather than Green?


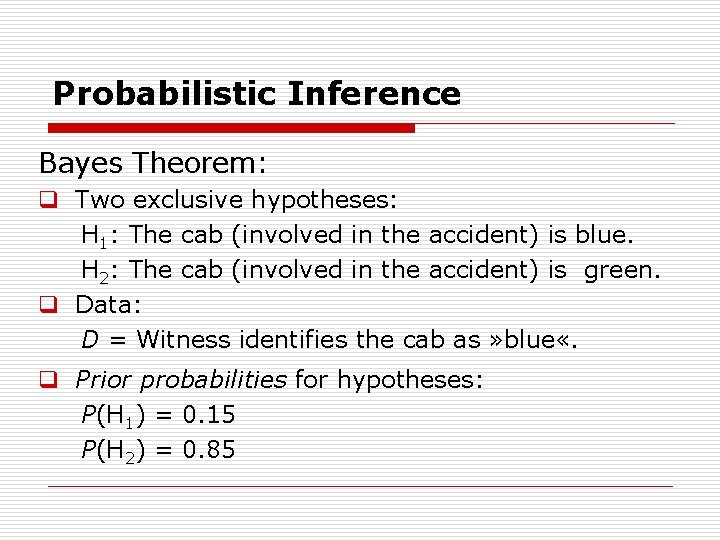

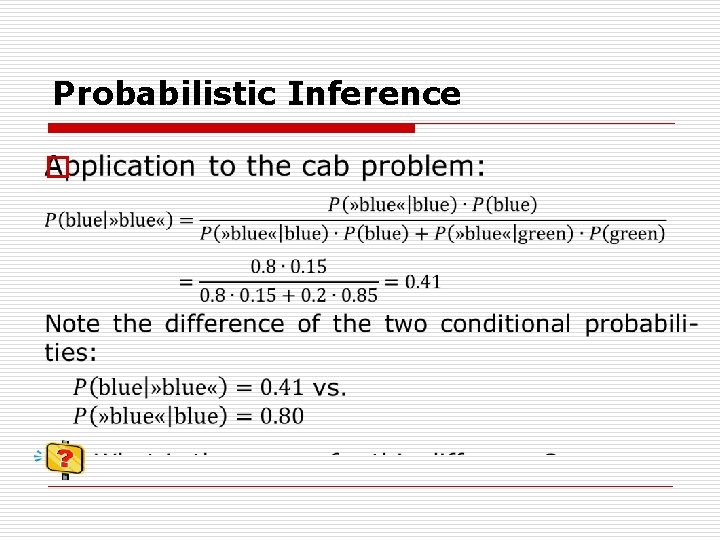

Probabilistic Inference: Bayes Theorem
- Two exclusive hypotheses:
  - H1: The cab (involved in the accident) is blue.
  - H2: The cab (involved in the accident) is green.
- Data: D = Witness identifies the cab as »blue«.
- Prior probabilities for the hypotheses: P(H1) = 0.15, P(H2) = 0.85

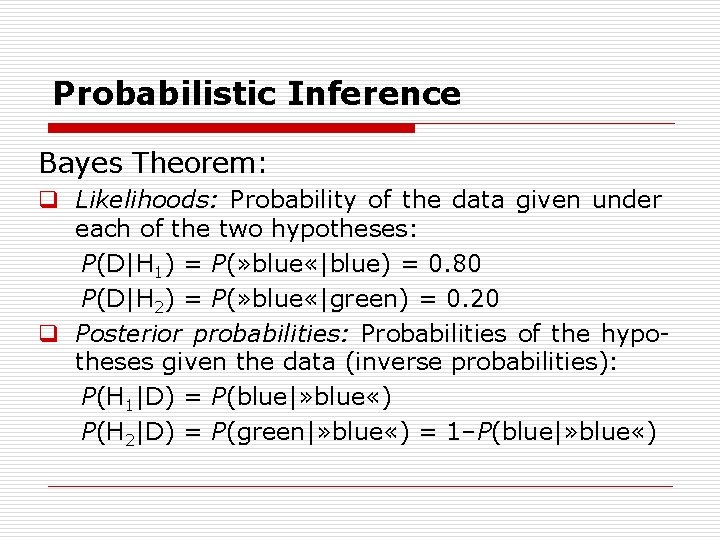

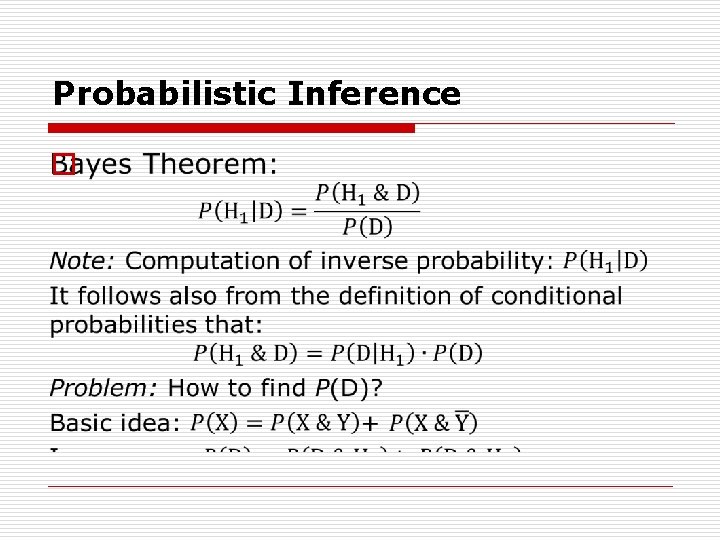

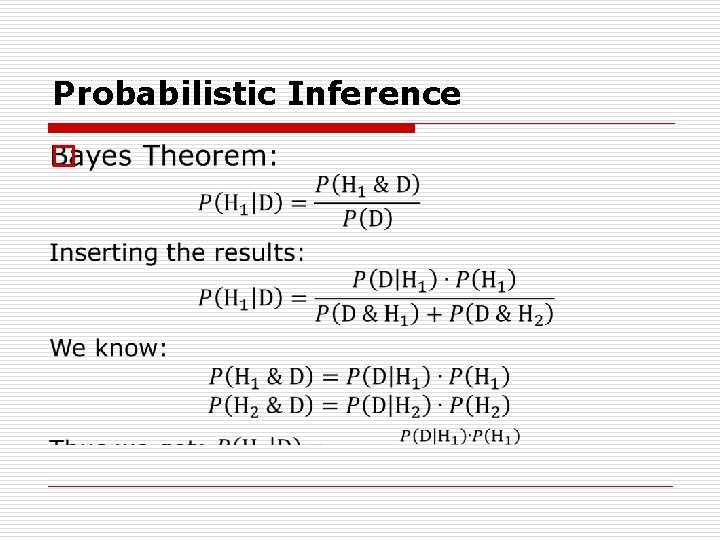

Probabilistic Inference: Bayes Theorem
- Likelihoods: Probability of the data under each of the two hypotheses:
  - P(D|H1) = P(»blue«|blue) = 0.80
  - P(D|H2) = P(»blue«|green) = 0.20
- Posterior probabilities: Probabilities of the hypotheses given the data (inverse probabilities):
  - P(H1|D) = P(blue|»blue«)
  - P(H2|D) = P(green|»blue«) = 1 - P(blue|»blue«)
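The posterior for the cab problem follows from the priors and likelihoods listed above. The following is a minimal sketch of that calculation; the function name `posterior` and the dictionary layout are illustrative choices, not part of the slides.

```python
# Cab problem: posterior probabilities via Bayes' theorem.
prior = {"blue": 0.15, "green": 0.85}        # P(H1), P(H2)
likelihood = {"blue": 0.80, "green": 0.20}   # P(»blue« | H)

def posterior(prior, likelihood):
    """Return P(H | D) for every hypothesis H."""
    joint = {h: prior[h] * likelihood[h] for h in prior}  # P(H, D)
    evidence = sum(joint.values())                        # P(D)
    return {h: joint[h] / evidence for h in joint}

post = posterior(prior, likelihood)
print(round(post["blue"], 3))   # 0.414
print(round(post["green"], 3))  # 0.586
```

Although the witness is right 80% of the time, the low base rate of Blue cabs keeps P(blue|»blue«) below one half.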


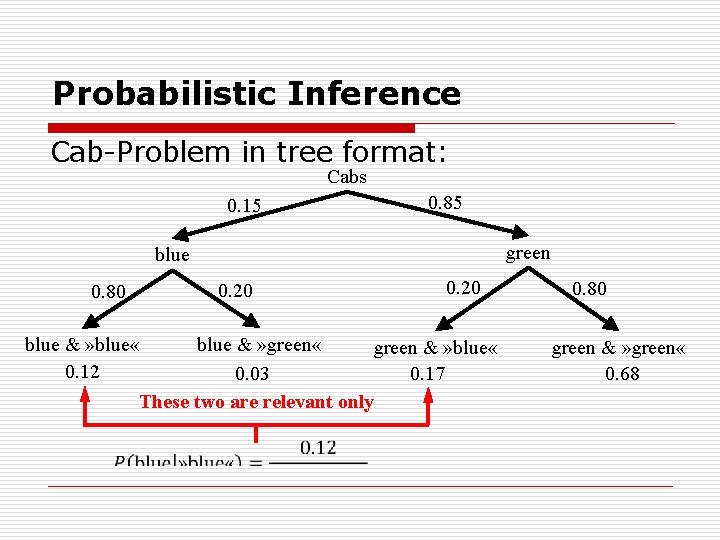

Probabilistic Inference: Cab Problem in tree format
- Cabs
  - blue (0.15)
    - »blue« (0.80): blue & »blue« = 0.12
    - »green« (0.20): blue & »green« = 0.03
  - green (0.85)
    - »blue« (0.20): green & »blue« = 0.17
    - »green« (0.80): green & »green« = 0.68

Only the two branches ending in »blue« (0.12 and 0.17) are relevant.

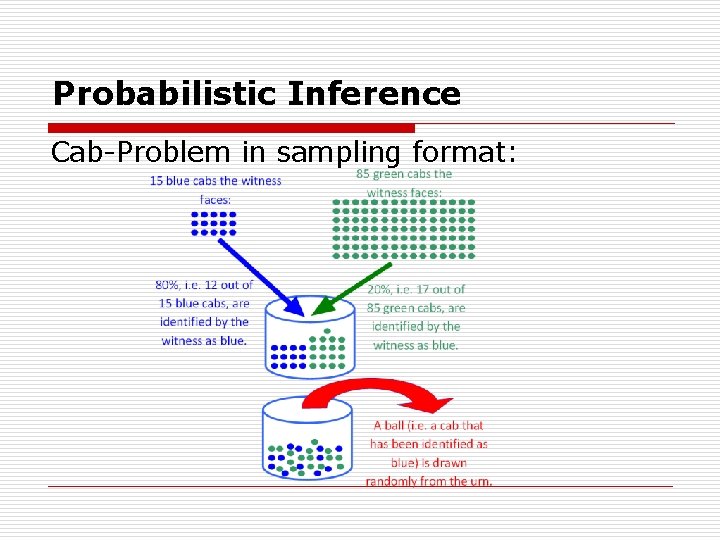

Probabilistic Inference Cab Problem in sampling format:

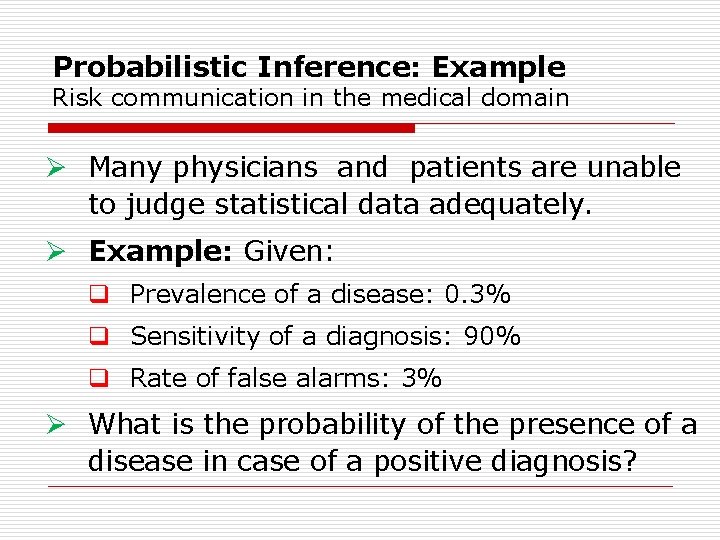
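One way to read the sampling format is as repeated draws of a cab together with a witness report. The simulation below is a sketch under that assumption (the slide's figure may present the idea differently); the function name, trial count, and seed are arbitrary illustrative choices.

```python
import random

def simulate_cab_problem(n_trials=100_000, seed=0):
    """Estimate P(blue | witness says »blue«) by sampling."""
    rng = random.Random(seed)
    said_blue = 0
    blue_and_said_blue = 0
    for _ in range(n_trials):
        is_blue = rng.random() < 0.15   # 15% of the cabs are Blue
        correct = rng.random() < 0.80   # witness is right 80% of the time
        says_blue = is_blue if correct else not is_blue
        if says_blue:
            said_blue += 1
            blue_and_said_blue += is_blue
    return blue_and_said_blue / said_blue

estimate = simulate_cab_problem()
print(f"{estimate:.2f}")  # close to the exact value 12/29 (about 0.41)
```

Only the trials in which the witness says »blue« enter the estimate, which mirrors the two relevant branches of the tree.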

Probabilistic Inference: Example

Risk communication in the medical domain
- Many physicians and patients are unable to judge statistical data adequately.
- Example: Given:
  - Prevalence of a disease: 0.3%
  - Sensitivity of a diagnosis: 90%
  - Rate of false alarms: 3%
- What is the probability of the presence of a disease in case of a positive diagnosis?
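The question can be answered with the same Bayes computation as in the cab problem. The sketch below assumes that the "rate of false alarms" means P(positive | no disease); the function name is illustrative.

```python
def positive_predictive_value(prevalence, sensitivity, false_alarm_rate):
    """Return P(disease | positive diagnosis)."""
    true_positives = prevalence * sensitivity               # P(disease, positive)
    false_positives = (1 - prevalence) * false_alarm_rate   # P(no disease, positive)
    return true_positives / (true_positives + false_positives)

ppv = positive_predictive_value(prevalence=0.003, sensitivity=0.90, false_alarm_rate=0.03)
print(f"{ppv:.3f}")  # 0.083
```

In terms of frequencies: of 10,000 people, 30 have the disease and 27 of them test positive, while about 299 of the 9,970 healthy people also test positive, so only about 27 of 326 positive diagnoses (roughly 8%) indicate the disease.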

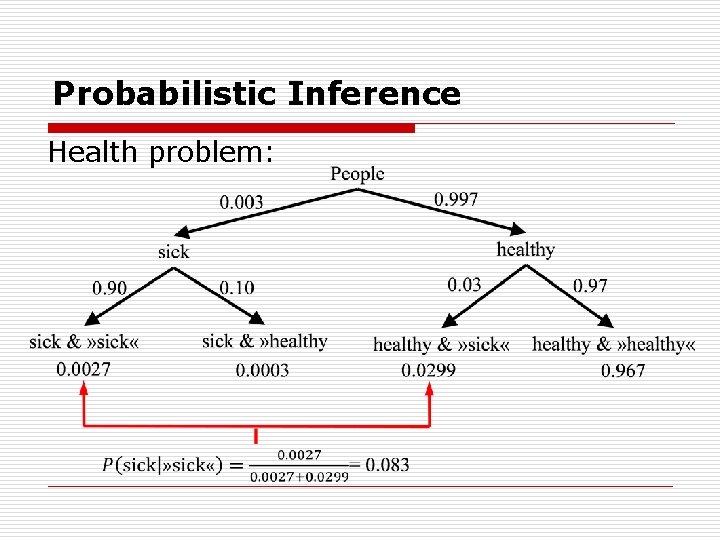

Probabilistic Inference Health problem:

Probabilistic Inference: Bayes Theorem: Summary
- Bayes Theorem as a device for updating probabilities: The prior probability P(H) is updated by incorporating new evidence given by the likelihood P(D|H).
- The result is the posterior P(H|D) [the updated prior] that takes the evidence into account.
- Bayes Theorem as a device for computing inverse probabilities: P(D|H) given, P(H|D) required.
- Trees as a convenient method for representation.


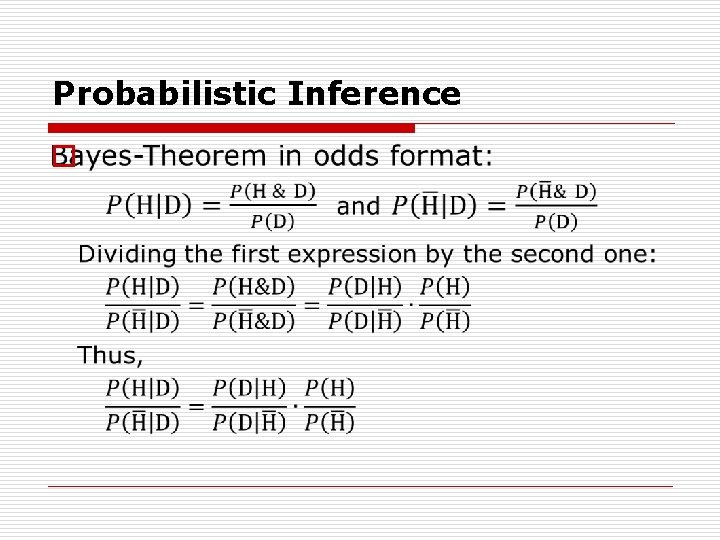

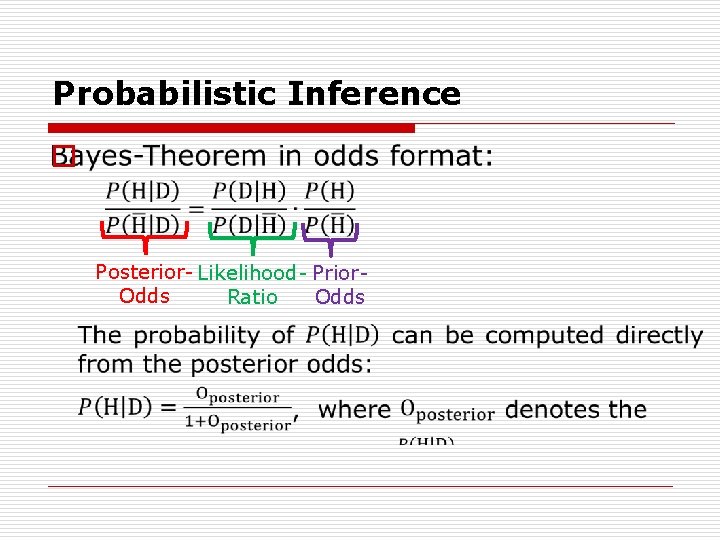

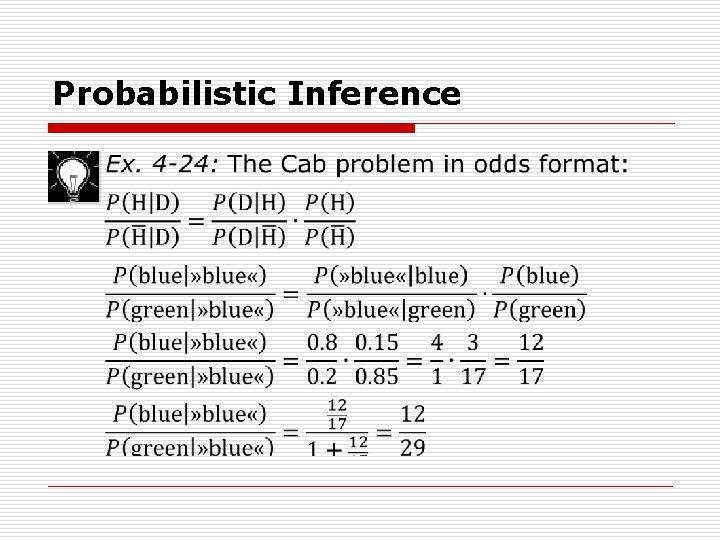

Probabilistic Inference: Bayes theorem in odds format

Posterior odds = Likelihood ratio × Prior odds:

P(H1|D) / P(H2|D) = [P(D|H1) / P(D|H2)] × [P(H1) / P(H2)]
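Applied to the cab problem, the odds format multiplies the prior odds of blue versus green by the likelihood ratio of the witness report. The following sketch uses exact fractions; the helper names are illustrative.

```python
from fractions import Fraction

def posterior_odds(prior_odds, likelihood_ratio):
    """Posterior odds = likelihood ratio x prior odds."""
    return likelihood_ratio * prior_odds

def odds_to_probability(odds):
    """Convert odds in favour of H1 into P(H1)."""
    return odds / (1 + odds)

prior_odds = Fraction(15, 85)        # P(H1) / P(H2)
likelihood_ratio = Fraction(80, 20)  # P(D|H1) / P(D|H2)

odds = posterior_odds(prior_odds, likelihood_ratio)
print(odds)                       # 12/17
print(odds_to_probability(odds))  # 12/29
```

The normalizing constant P(D) never has to be computed, because it cancels in the ratio.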


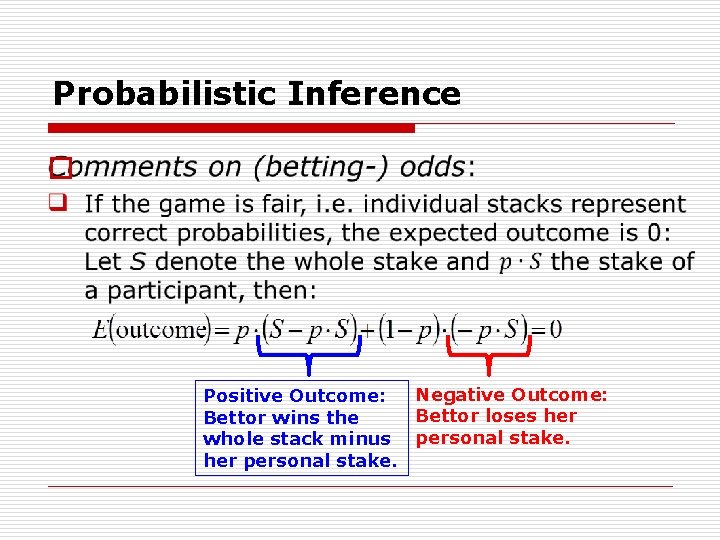

Probabilistic Inference
- Negative outcome: Bettor loses her personal stake.
- Positive outcome: Bettor wins the whole stack minus her personal stake.


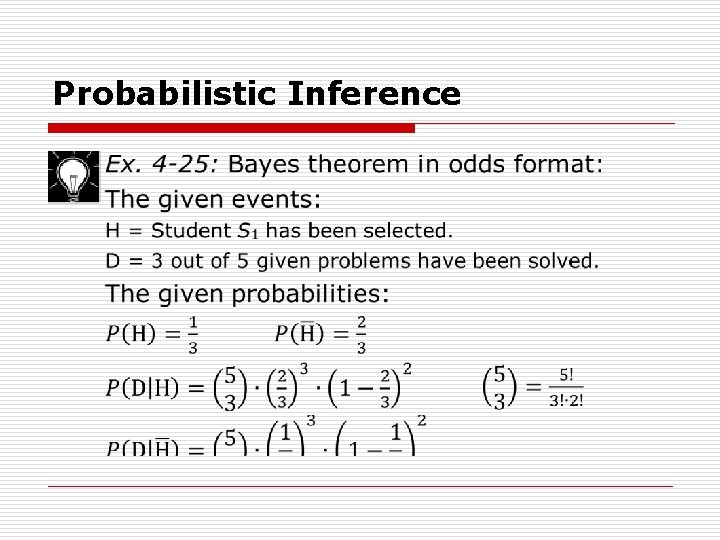

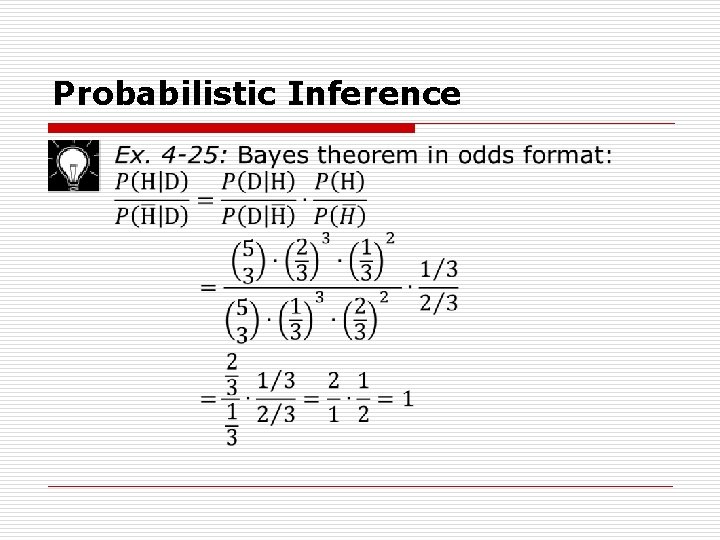

Probabilistic Inference: Ex. 4-25: Bayes theorem in odds format
- Instructor M has two students, S1 and S2, who do their exercises regularly.
- S1 is able to solve 2/3 of the problems, whereas S2 solves on average 1/3 of the problems.
- M randomly selects one of the two students using the following procedure: He rolls a fair die; if the number of points is smaller than 3, he selects S1, otherwise S2. Thus S1 is selected with p = 1/3.
- The selected student receives 5 problems from the pool of exercises. She solves 3 of the 5 problems.

What is the probability that the selected student was S1?

Probabilistic Inference: Ex. 4-25: Bayes theorem in odds format

Comment: One might argue that the presented formulation of the problem does not determine a unique probability model. One further assumption is required: The probability of a solution is determined entirely by the student’s ability to solve the problem, as given by the probabilities in the problem formulation. This precludes the influence of factors other than the student’s ability.
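Under the assumption stated in the comment, and additionally assuming that the five problems are solved independently of each other, the number of solved problems is binomially distributed. The sketch below works through Ex. 4-25 in odds format on that basis; the function name is illustrative.

```python
from fractions import Fraction
from math import comb

def binomial_likelihood(k, n, p):
    """P(k successes in n independent trials with success probability p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

# Prior odds S1 : S2 from the die roll (S1 is selected with p = 1/3).
prior_odds = Fraction(1, 3) / Fraction(2, 3)

# Likelihood ratio for "3 of 5 problems solved".
likelihood_ratio = (
    binomial_likelihood(3, 5, Fraction(2, 3))
    / binomial_likelihood(3, 5, Fraction(1, 3))
)

post_odds = prior_odds * likelihood_ratio
p_s1 = post_odds / (1 + post_odds)

print(likelihood_ratio)  # 2
print(post_odds)         # 1
print(p_s1)              # 1/2
```

The binomial coefficient cancels in the likelihood ratio, which is one reason the odds format keeps the arithmetic short.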


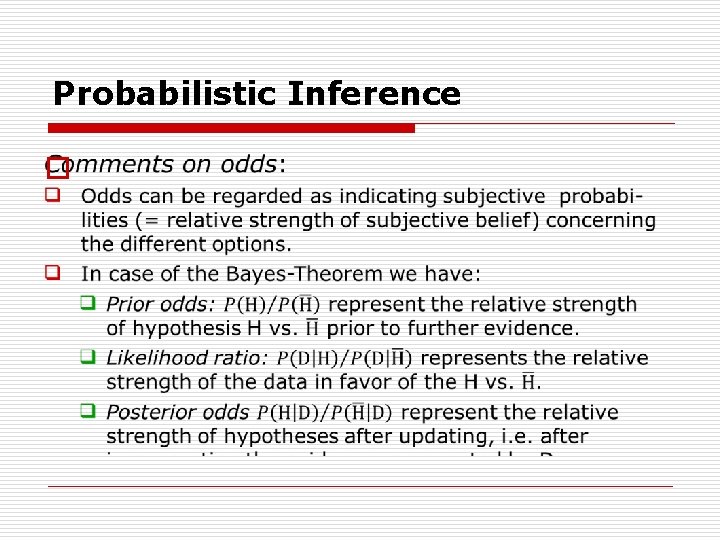

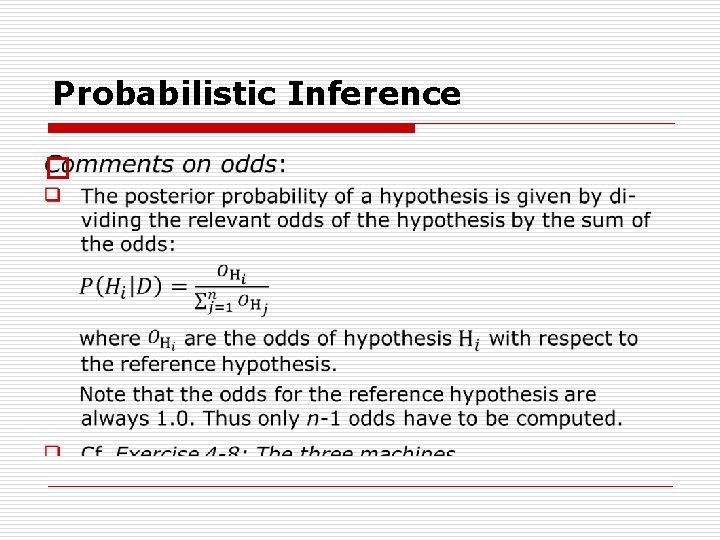

Probabilistic Inference: Comments on odds
- Ex. 4-25 illustrates that Bayes Theorem in odds format can be convenient because it considerably simplifies the computation.
- Problem: How to work with odds in case of more than two alternatives?
- Solution: Use one of the hypotheses as the reference hypothesis and then compute the odds of the other hypotheses with respect to this reference hypothesis.
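The reference-hypothesis approach can be sketched as follows. The example extends Ex. 4-25 with a hypothetical third student S3 (solving 1/2 of the problems) and hypothetical equal priors; neither is part of the original exercise, and the function name is an illustrative choice.

```python
from fractions import Fraction

def posteriors_from_reference_odds(priors, likelihoods, reference):
    """Compute odds of every hypothesis against a reference, then normalize."""
    ref_weight = priors[reference] * likelihoods[reference]  # must be non-zero
    odds = {h: priors[h] * likelihoods[h] / ref_weight for h in priors}
    total = sum(odds.values())
    return odds, {h: o / total for h, o in odds.items()}

# Hypothetical setup: three equally likely students, 3 of 5 problems solved.
priors = {"S1": Fraction(1, 3), "S2": Fraction(1, 3), "S3": Fraction(1, 3)}
solve_prob = {"S1": Fraction(2, 3), "S2": Fraction(1, 3), "S3": Fraction(1, 2)}
# The binomial coefficient is the same for all hypotheses and cancels in the odds.
likelihoods = {s: p**3 * (1 - p) ** 2 for s, p in solve_prob.items()}

odds, post = posteriors_from_reference_odds(priors, likelihoods, reference="S2")
print(odds["S1"], odds["S2"], odds["S3"])
print(post["S1"], post["S2"], post["S3"])
```

The reference hypothesis always has odds 1, and dividing each odds value by the sum of all odds recovers the posterior probabilities.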


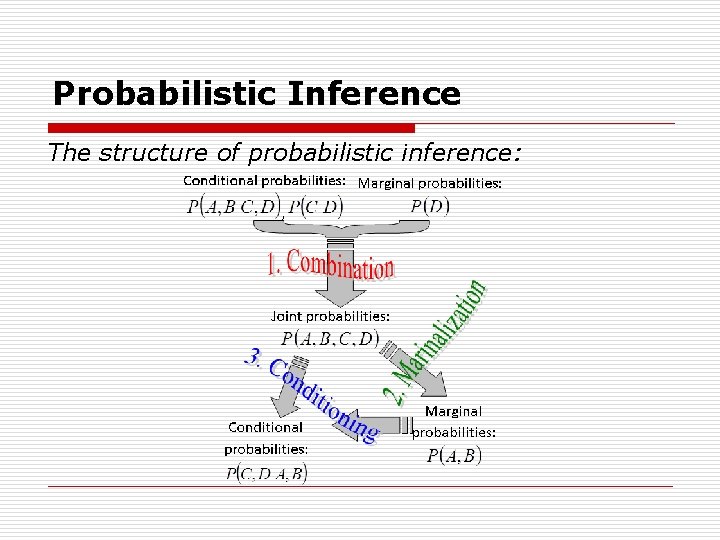

Probabilistic Inference: The structure of probabilistic inference
- Bayes Theorem represents just one specific form of probabilistic inference within a more general framework. In the following, this more general approach will be characterized.
- There are three types of probabilities: joint, marginal, and conditional probabilities.
- There are three types of probabilistic operations:
  - Combination of probabilistic information,
  - Marginalization,
  - Conditioning on specific events.


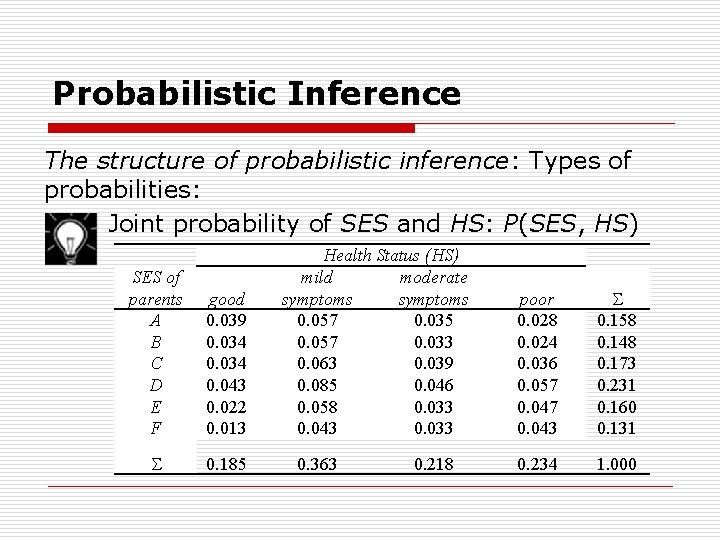

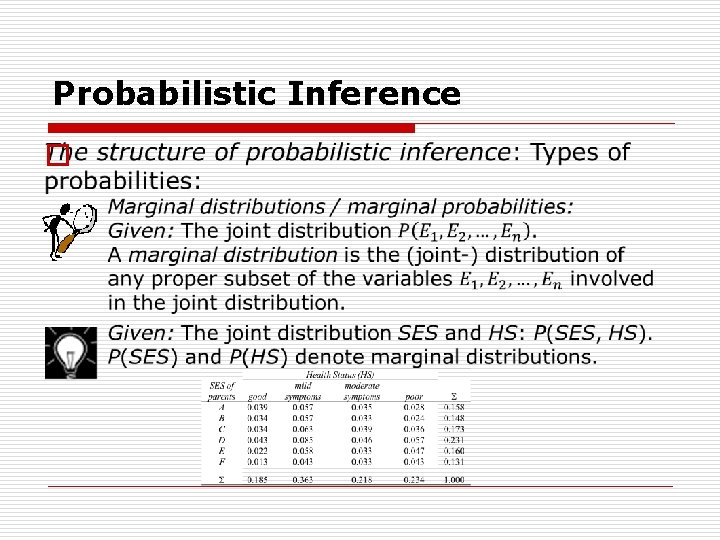

Probabilistic Inference: The structure of probabilistic inference: Types of probabilities

Joint probability of SES and HS: P(SES, HS)

| SES of parents | good | mild symptoms | moderate symptoms | poor | P(SES) |
|---|---|---|---|---|---|
| A | 0.039 | 0.057 | 0.035 | 0.028 | 0.158 |
| B | 0.034 | 0.057 | 0.033 | 0.024 | 0.148 |
| C | 0.034 | 0.063 | 0.039 | 0.036 | 0.173 |
| D | 0.043 | 0.085 | 0.046 | 0.057 | 0.231 |
| E | 0.022 | 0.058 | 0.033 | 0.047 | 0.160 |
| F | 0.013 | 0.043 | 0.033 | 0.043 | 0.131 |
| P(HS) | 0.185 | 0.363 | 0.218 | 0.234 | 1.000 |

Columns: Health Status (HS).

Probabilistic Inference: The structure of probabilistic inference: Types of probabilities

Principle (Joint distributions): The joint distribution contains the complete probability information about the underlying random variables. Consequently, any question concerning probabilistic information about the underlying events can be answered by using the joint distribution.

Two problems:
1. Complexity in case of many variables.
2. Availability of the desired information: For example, the stochastic dependence of two or more random variables cannot be read off directly from the joint distribution.

Probabilistic Inference: The structure of probabilistic inference: Types of probabilities

Complexity of joint distributions: Given: 100 variables with two outcomes each. The table of the joint distribution contains 2^100 entries: 2^100 ≈ 1.27 × 10^30. Even if each entry of the table takes only one bit, more than 100 billion (10^11) exabytes are required (1 exabyte = 8 × 10^18 bits). As of 2016, the total disk space of all computers on earth was estimated to comprise about 2500 exabytes.
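The size estimate can be checked with a few lines of arithmetic. The sketch below uses the slide's figures (one bit per entry, 1 exabyte = 8 × 10^18 bits, about 2500 exabytes of disk space worldwide); the variable names are illustrative.

```python
n_variables = 100
entries = 2 ** n_variables       # one table entry per joint outcome
bits_per_exabyte = 8 * 10 ** 18  # 10^18 bytes of 8 bits each
world_disk_exabytes = 2500       # estimate quoted on the slide (2016)

exabytes_needed = entries / bits_per_exabyte
print(f"{entries:.3e}")                                # 1.268e+30
print(f"{exabytes_needed:.3e}")                        # 1.585e+11
print(f"{exabytes_needed / world_disk_exabytes:.1e}")  # 6.3e+07
```

Even at one bit per entry, the table would need tens of millions of times the quoted worldwide disk space.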


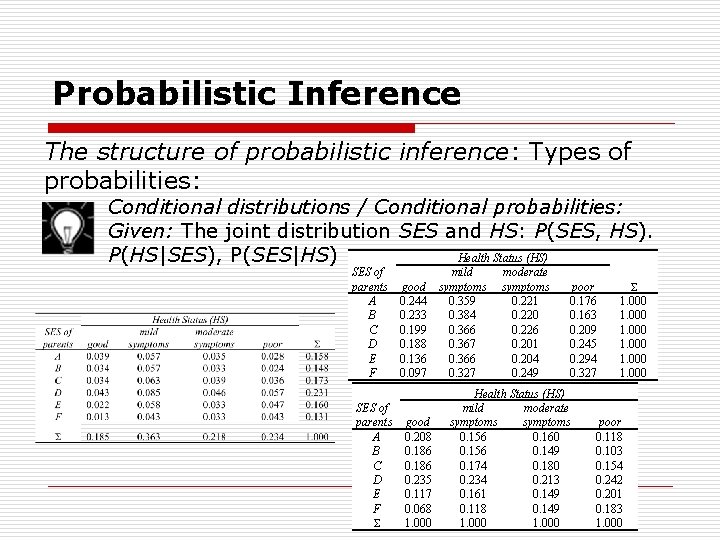

Probabilistic Inference: The structure of probabilistic inference: Types of probabilities

Conditional distributions / conditional probabilities: Given the joint distribution of SES and HS, P(SES, HS), the conditional distributions are P(HS|SES) and P(SES|HS).

P(HS|SES) (each row sums to 1):

| SES of parents | good | mild symptoms | moderate symptoms | poor |
|---|---|---|---|---|
| A | 0.244 | 0.359 | 0.221 | 0.176 |
| B | 0.233 | 0.384 | 0.220 | 0.163 |
| C | 0.199 | 0.366 | 0.226 | 0.209 |
| D | 0.188 | 0.367 | 0.201 | 0.245 |
| E | 0.136 | 0.366 | 0.204 | 0.294 |
| F | 0.097 | 0.327 | 0.249 | 0.327 |

P(SES|HS) (each column sums to 1):

| SES of parents | good | mild symptoms | moderate symptoms | poor |
|---|---|---|---|---|
| A | 0.208 | 0.156 | 0.160 | 0.118 |
| B | 0.186 | 0.156 | 0.149 | 0.103 |
| C | 0.186 | 0.174 | 0.180 | 0.154 |
| D | 0.235 | 0.234 | 0.213 | 0.242 |
| E | 0.117 | 0.161 | 0.149 | 0.201 |
| F | 0.068 | 0.118 | 0.149 | 0.183 |
| Sum | 1.000 | 1.000 | 1.000 | 1.000 |

Columns: Health Status (HS).

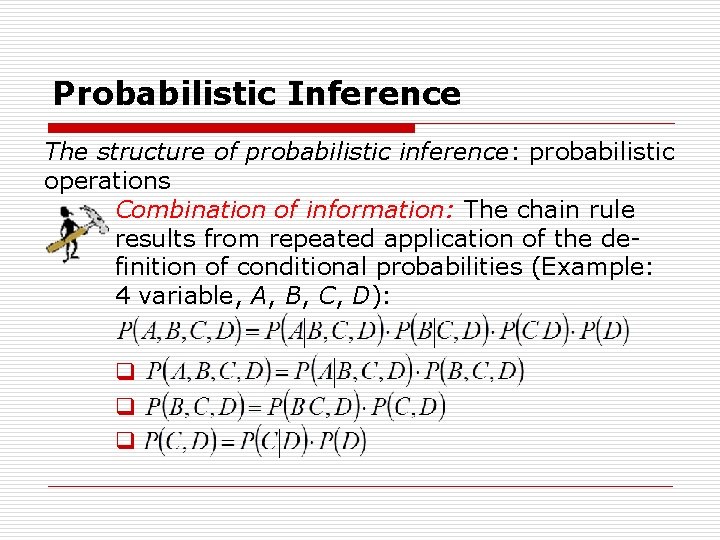

Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Combination of information: The chain rule results from repeated application of the definition of conditional probabilities (example: 4 variables A, B, C, D).
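Written out for the four variables, repeated application of the definition P(X, Y) = P(X) P(Y|X) gives the steps below. This is the standard derivation; the ordering A, B, C, D is one of several equally valid choices and is assumed here for illustration.

```latex
\begin{align*}
P(A,B)     &= P(A)\,P(B \mid A) \\
P(A,B,C)   &= P(A,B)\,P(C \mid A,B)
            = P(A)\,P(B \mid A)\,P(C \mid A,B) \\
P(A,B,C,D) &= P(A,B,C)\,P(D \mid A,B,C)
            = P(A)\,P(B \mid A)\,P(C \mid A,B)\,P(D \mid A,B,C)
\end{align*}
```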

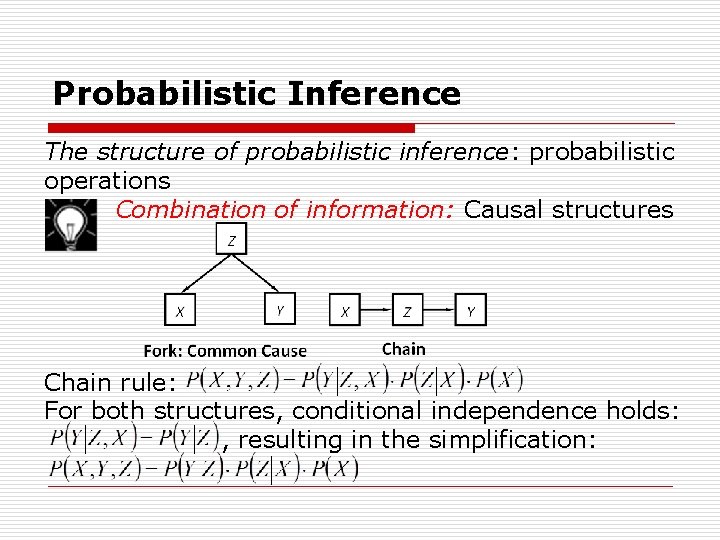

Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Combination of information: Causal structures

Chain rule: For both structures, a conditional independence holds, which simplifies the chain-rule factorization.

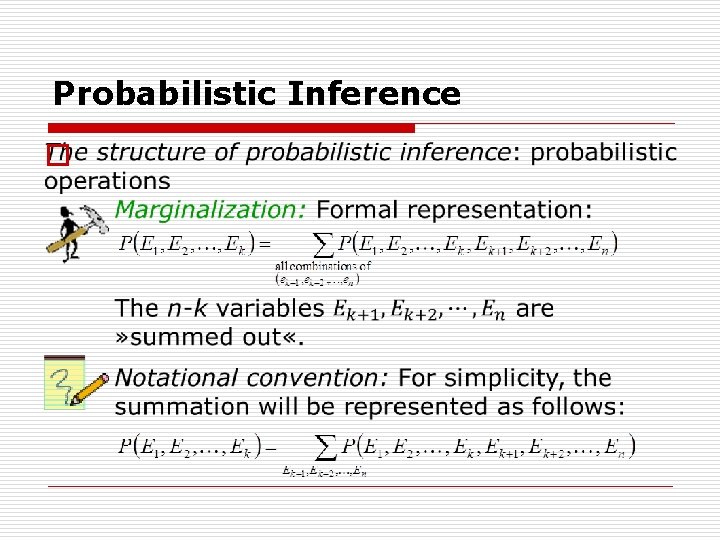

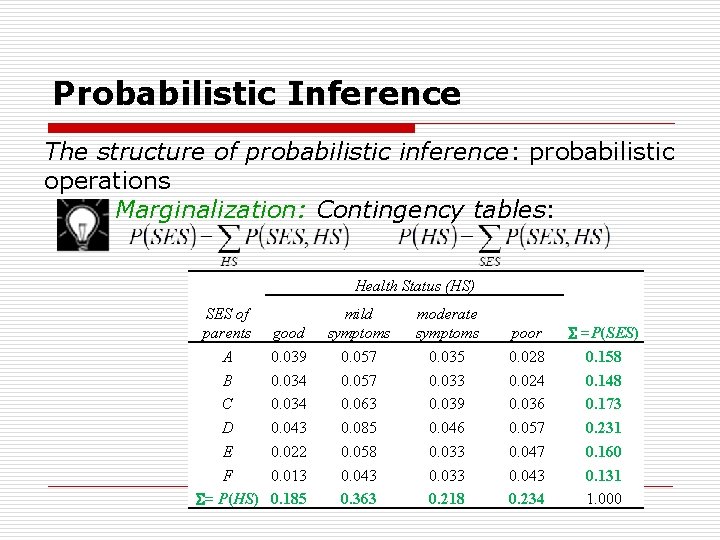

Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Marginalization: The operation of marginalization consists of a summation performed on the joint distribution. The summation is taken over all combinations of values of those variables that do not appear in the resulting marginal distribution (these variables are »summed out« of the distribution). The result of marginalization is a marginal distribution.


Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Marginalization: Contingency tables:

| SES of parents | good | mild symptoms | moderate symptoms | poor | = P(SES) |
|---|---|---|---|---|---|
| A | 0.039 | 0.057 | 0.035 | 0.028 | 0.158 |
| B | 0.034 | 0.057 | 0.033 | 0.024 | 0.148 |
| C | 0.034 | 0.063 | 0.039 | 0.036 | 0.173 |
| D | 0.043 | 0.085 | 0.046 | 0.057 | 0.231 |
| E | 0.022 | 0.058 | 0.033 | 0.047 | 0.160 |
| F | 0.013 | 0.043 | 0.033 | 0.043 | 0.131 |
| = P(HS) | 0.185 | 0.363 | 0.218 | 0.234 | 1.000 |

Columns: Health Status (HS).
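Marginalization of the SES × HS table amounts to row and column sums. The sketch below assumes the cell values as they appear in the SES × HS tables of this section; because those values are rounded to three decimals, a computed marginal can differ from the printed one in the last digit.

```python
HS_LEVELS = ["good", "mild", "moderate", "poor"]

# Joint distribution P(SES, HS): one row per SES class, columns in HS_LEVELS order.
joint = {
    "A": [0.039, 0.057, 0.035, 0.028],
    "B": [0.034, 0.057, 0.033, 0.024],
    "C": [0.034, 0.063, 0.039, 0.036],
    "D": [0.043, 0.085, 0.046, 0.057],
    "E": [0.022, 0.058, 0.033, 0.047],
    "F": [0.013, 0.043, 0.033, 0.043],
}

# Sum HS out of the joint distribution to obtain P(SES).
p_ses = {ses: sum(row) for ses, row in joint.items()}

# Sum SES out of the joint distribution to obtain P(HS).
p_hs = {
    hs: sum(row[j] for row in joint.values())
    for j, hs in enumerate(HS_LEVELS)
}

for ses, p in p_ses.items():
    print(ses, round(p, 3))
for hs, p in p_hs.items():
    print(hs, round(p, 3))
```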

Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning:
- Application of the definition of conditional probability.
- Conditioning on a specific combination of values of the conditioning variables (simpler). Why simpler?

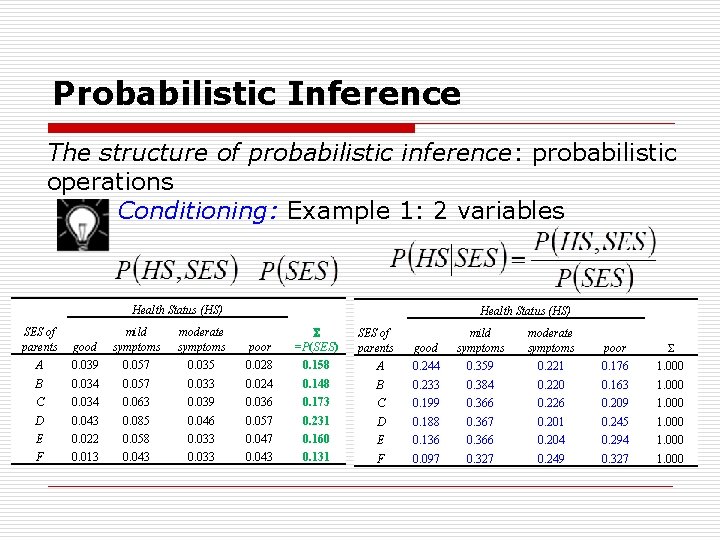

Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning: Example 1: 2 variables

Joint distribution P(SES, HS) with the marginal P(SES):

| SES of parents | good | mild symptoms | moderate symptoms | poor | = P(SES) |
|---|---|---|---|---|---|
| A | 0.039 | 0.057 | 0.035 | 0.028 | 0.158 |
| B | 0.034 | 0.057 | 0.033 | 0.024 | 0.148 |
| C | 0.034 | 0.063 | 0.039 | 0.036 | 0.173 |
| D | 0.043 | 0.085 | 0.046 | 0.057 | 0.231 |
| E | 0.022 | 0.058 | 0.033 | 0.047 | 0.160 |
| F | 0.013 | 0.043 | 0.033 | 0.043 | 0.131 |

Conditional distribution P(HS|SES):

| SES of parents | good | mild symptoms | moderate symptoms | poor | Sum |
|---|---|---|---|---|---|
| A | 0.244 | 0.359 | 0.221 | 0.176 | 1.000 |
| B | 0.233 | 0.384 | 0.220 | 0.163 | 1.000 |
| C | 0.199 | 0.366 | 0.226 | 0.209 | 1.000 |
| D | 0.188 | 0.367 | 0.201 | 0.245 | 1.000 |
| E | 0.136 | 0.366 | 0.204 | 0.294 | 1.000 |
| F | 0.097 | 0.327 | 0.249 | 0.327 | 1.000 |

Columns: Health Status (HS).

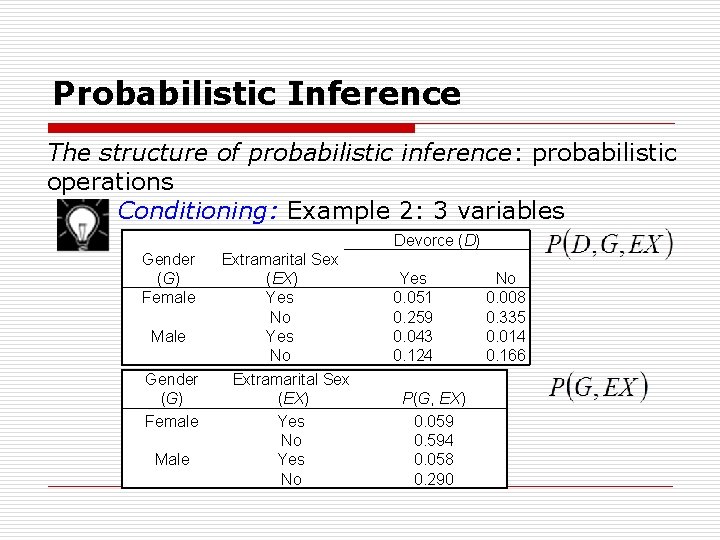

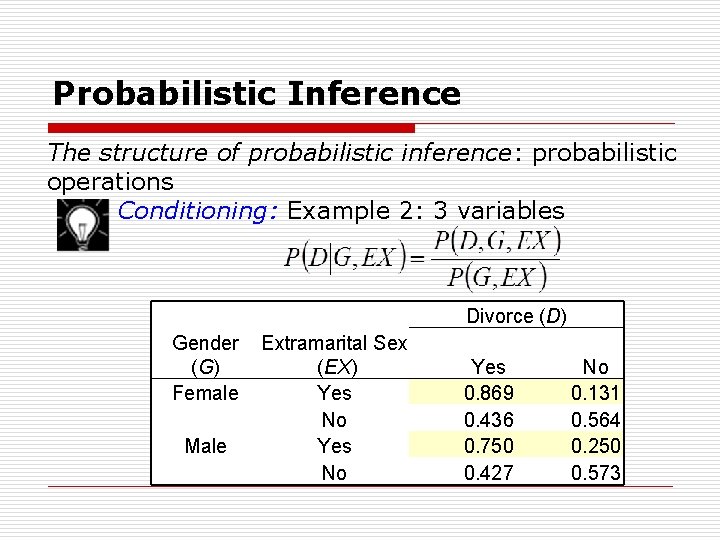

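Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning: Example 2: 3 variables

Joint distribution of Divorce (D), Gender (G), and Extramarital Sex (EX), with the marginal P(G, EX):

| Divorce (D) | Female, EX = Yes | Female, EX = No | Male, EX = Yes | Male, EX = No |
|---|---|---|---|---|
| Yes | 0.051 | 0.259 | 0.043 | 0.124 |
| No | 0.008 | 0.335 | 0.014 | 0.166 |
| P(G, EX) | 0.059 | 0.594 | 0.058 | 0.290 |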

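Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning: Example 2: 3 variables

Conditional distribution of Divorce (D) given Gender (G) and Extramarital Sex (EX):

| Divorce (D) | Female, EX = Yes | Female, EX = No | Male, EX = Yes | Male, EX = No |
|---|---|---|---|---|
| Yes | 0.869 | 0.436 | 0.750 | 0.427 |
| No | 0.131 | 0.564 | 0.250 | 0.573 |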

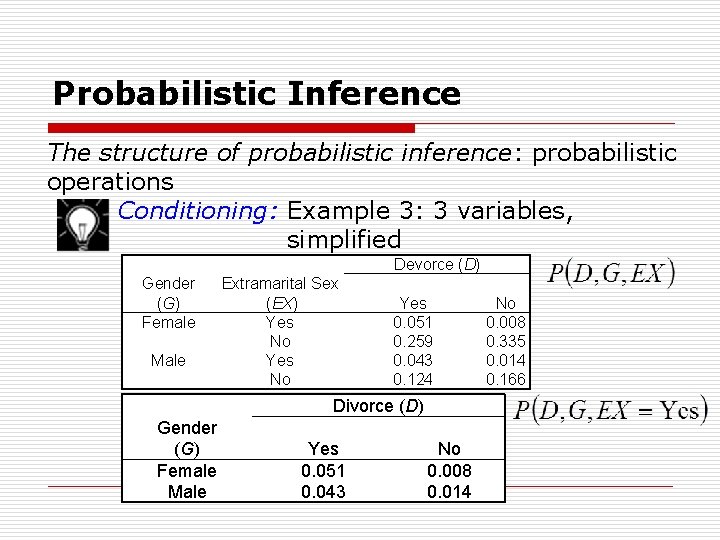

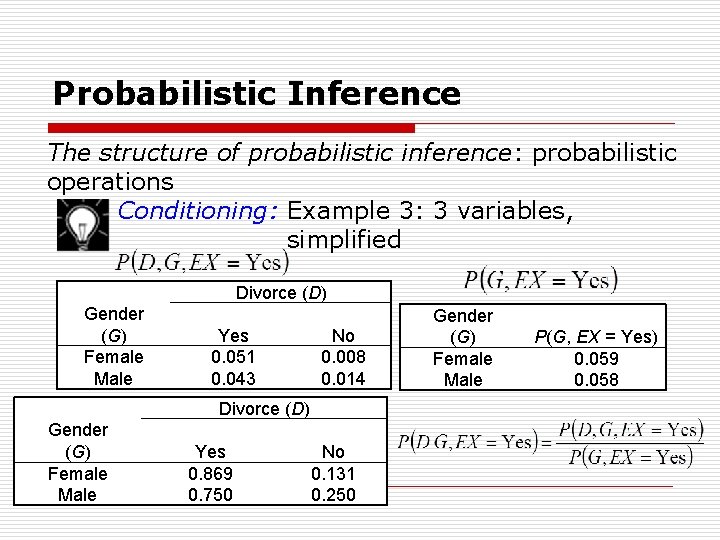

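Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning: Example 3: 3 variables, simplified

Joint distribution of Divorce (D), Gender (G), and Extramarital Sex (EX):

| Divorce (D) | Female, EX = Yes | Female, EX = No | Male, EX = Yes | Male, EX = No |
|---|---|---|---|---|
| Yes | 0.051 | 0.259 | 0.043 | 0.124 |
| No | 0.008 | 0.335 | 0.014 | 0.166 |

Part of the table with EX = Yes:

| Divorce (D) | Female | Male |
|---|---|---|
| Yes | 0.051 | 0.043 |
| No | 0.008 | 0.014 |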

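Probabilistic Inference: The structure of probabilistic inference: Probabilistic operations

Conditioning: Example 3: 3 variables, simplified

Part of the joint table with EX = Yes, with the marginal P(G, EX = Yes):

| Divorce (D) | Female | Male |
|---|---|---|
| Yes | 0.051 | 0.043 |
| No | 0.008 | 0.014 |
| P(G, EX = Yes) | 0.059 | 0.058 |

Conditional distribution of Divorce (D) given Gender (G) and EX = Yes:

| Divorce (D) | Female | Male |
|---|---|---|
| Yes | 0.869 | 0.750 |
| No | 0.131 | 0.250 |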
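Conditioning on the specific value EX = Yes only requires the matching slice of the joint table, which is what makes this variant simpler. The sketch below illustrates the three steps (select, marginalize, divide); the function name and the key layout are illustrative. Because the table entries are rounded, the results can differ from the printed conditionals in the third decimal.

```python
# Joint distribution P(D, G, EX), keyed by (divorce, gender, extramarital_sex).
joint = {
    ("yes", "female", "yes"): 0.051, ("no", "female", "yes"): 0.008,
    ("yes", "female", "no"): 0.259,  ("no", "female", "no"): 0.335,
    ("yes", "male", "yes"): 0.043,   ("no", "male", "yes"): 0.014,
    ("yes", "male", "no"): 0.124,    ("no", "male", "no"): 0.166,
}

def condition_on_ex(joint, ex_value):
    """Return P(D | G, EX = ex_value) using only the matching slice."""
    # Step 1: keep only the cells with the requested EX value.
    piece = {(d, g): p for (d, g, ex), p in joint.items() if ex == ex_value}
    # Step 2: sum D out of the slice to obtain P(G, EX = ex_value).
    p_g = {}
    for (d, g), p in piece.items():
        p_g[g] = p_g.get(g, 0.0) + p
    # Step 3: divide every cell by its normalizing constant.
    return {(d, g): p / p_g[g] for (d, g), p in piece.items()}

cond = condition_on_ex(joint, "yes")
for (d, g), p in sorted(cond.items()):
    print(f"P(D={d} | G={g}, EX=yes) = {p:.3f}")
```

Only four of the eight cells are touched, and only two normalizing constants are needed instead of the full marginal P(G, EX).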

Probabilistic Inference The structure of probabilistic inference:

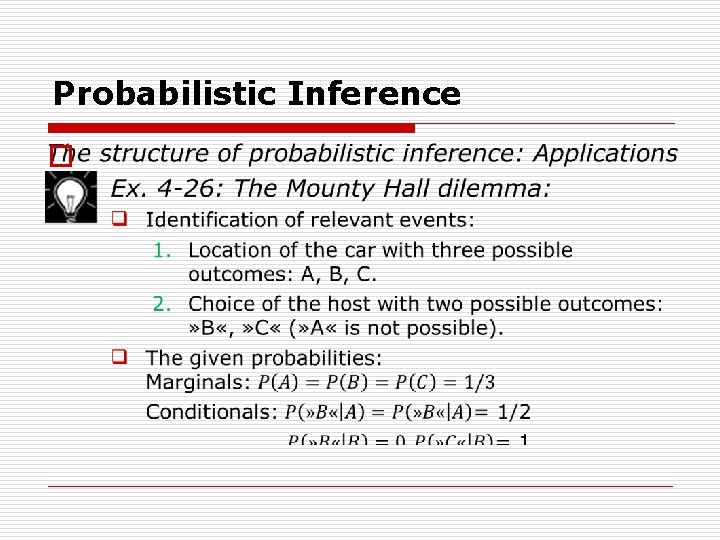

Probabilistic Inference: The structure of probabilistic inference: Applications

Ex. 4-26: The Monty Hall dilemma: Suppose you're on a game show, and you're given the choice of three doors (A, B, or C): Behind one door is a car; behind the others, goats. You pick a door, say Door A, and the host, who knows what's behind the doors, opens another door, say Door C, which has a goat. He then says to you, »Do you want to pick Door B?« Is it to your advantage to switch your choice?

Comment: The problem is not completely determined since it does not specify how the host chooses between Doors B and C in case the car is behind Door A.
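The effect of the unspecified host behaviour can be made explicit with a parameter. In the sketch below, `q` is a hypothetical name for the probability that the host opens Door C when the car is behind Door A; the contestant has picked Door A and the host has opened Door C.

```python
from fractions import Fraction

def posterior_after_host_opens_c(q):
    """Posterior over the car's location, given that the host opened Door C.

    q = P(host opens C | car behind A); the contestant picked Door A.
    """
    prior = Fraction(1, 3)
    # P(host opens C | car location): forced to open C if the car is behind B,
    # never opens C if the car is behind C.
    likelihood = {"A": q, "B": Fraction(1), "C": Fraction(0)}
    joint = {door: prior * lik for door, lik in likelihood.items()}
    evidence = sum(joint.values())
    return {door: p / evidence for door, p in joint.items()}

for q in (Fraction(0), Fraction(1, 2), Fraction(1)):
    post = posterior_after_host_opens_c(q)
    print(q, post["A"], post["B"])
# 0 0 1
# 1/2 1/3 2/3
# 1 1/2 1/2
```

With a host who chooses at random (q = 1/2), switching wins with probability 2/3. For any q, P(B|»C«) = 1/(1 + q) is at least 1/2, so switching is never a disadvantage.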


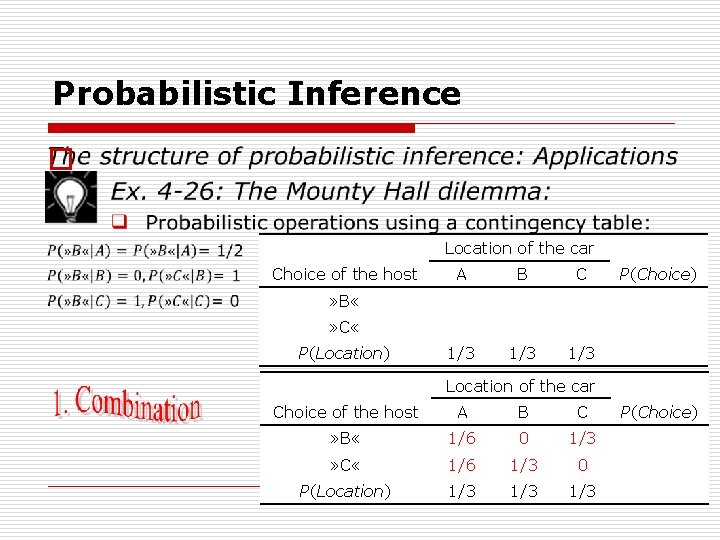

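Probabilistic Inference

Contingency table of the host's choice (rows) and the location of the car (columns), starting from the prior P(Location) = 1/3 for each door and filled with the joint probabilities:

| Choice of the host | A | B | C |
|---|---|---|---|
| »B« | 1/6 | 0 | 1/3 |
| »C« | 1/6 | 1/3 | 0 |
| P(Location) | 1/3 | 1/3 | 1/3 |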

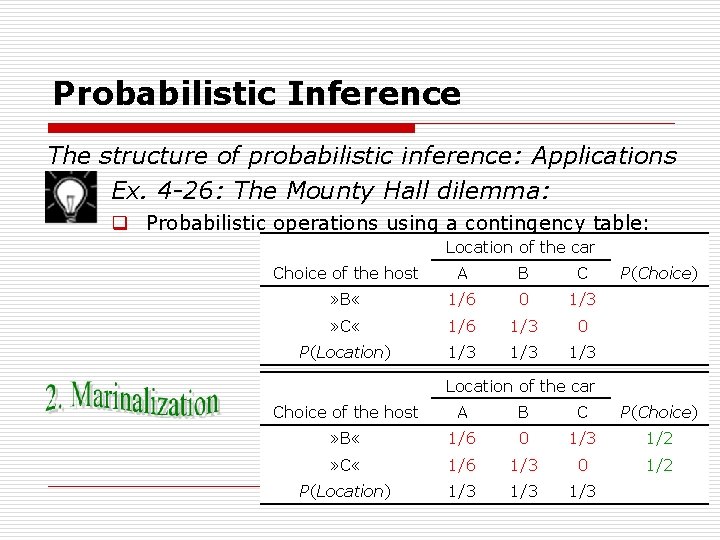

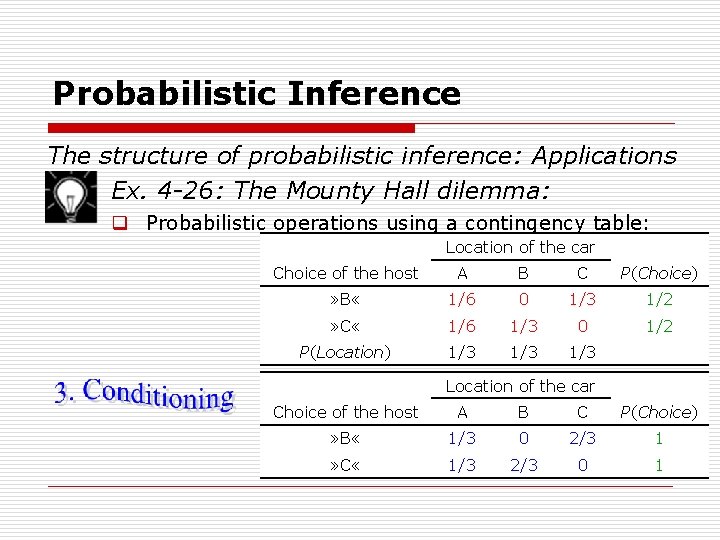

Probabilistic Inference: The structure of probabilistic inference: Applications

Ex. 4-26: The Monty Hall dilemma: Probabilistic operations using a contingency table.

Marginalization over the location of the car (columns) yields P(Choice):

| Choice of the host | A | B | C | P(Choice) |
|---|---|---|---|---|
| »B« | 1/6 | 0 | 1/3 | 1/2 |
| »C« | 1/6 | 1/3 | 0 | 1/2 |
| P(Location) | 1/3 | 1/3 | 1/3 | |

Probabilistic Inference: The structure of probabilistic inference: Applications

Ex. 4-26: The Monty Hall dilemma: Probabilistic operations using a contingency table.

Conditioning on the host's choice (dividing each row of the joint table by P(Choice)) yields the location of the car given the choice of the host:

| Choice of the host | A | B | C | Sum |
|---|---|---|---|---|
| »B« | 1/3 | 0 | 2/3 | 1 |
| »C« | 1/3 | 2/3 | 0 | 1 |
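The two table operations can be reproduced with exact fractions. The sketch below starts from the joint table of host choice and car location (which assumes a host who chooses at random when the car is behind Door A); the variable names are illustrative.

```python
from fractions import Fraction as F

# Joint distribution P(Choice, Location): outer key = door opened by the host,
# inner key = location of the car. The contestant has picked Door A.
joint = {
    "B": {"A": F(1, 6), "B": F(0), "C": F(1, 3)},
    "C": {"A": F(1, 6), "B": F(1, 3), "C": F(0)},
}

# Marginalization: sum over locations (rows) and over choices (columns).
p_choice = {choice: sum(row.values()) for choice, row in joint.items()}
p_location = {loc: sum(joint[choice][loc] for choice in joint) for loc in "ABC"}

# Conditioning: divide each row by its marginal P(Choice).
p_location_given_choice = {
    choice: {loc: p / p_choice[choice] for loc, p in row.items()}
    for choice, row in joint.items()
}

print(p_choice["C"])                      # 1/2
print(p_location_given_choice["C"]["A"])  # 1/3
print(p_location_given_choice["C"]["B"])  # 2/3
```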

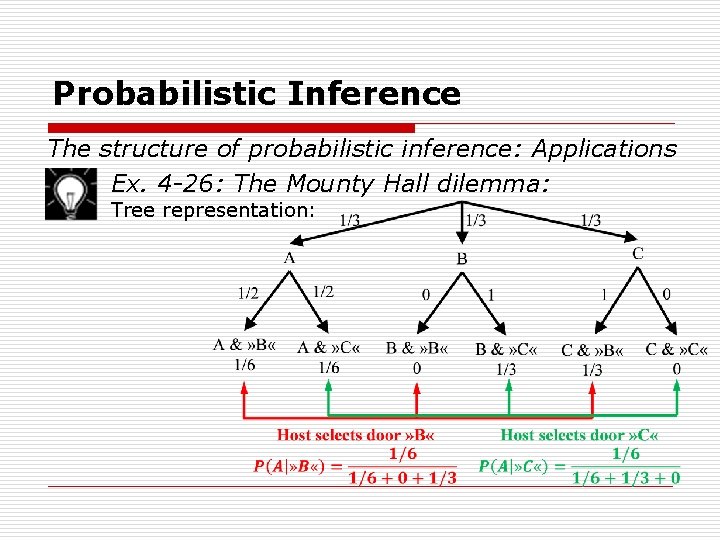

Probabilistic Inference: The structure of probabilistic inference: Applications

Ex. 4-26: The Monty Hall dilemma: Tree representation:

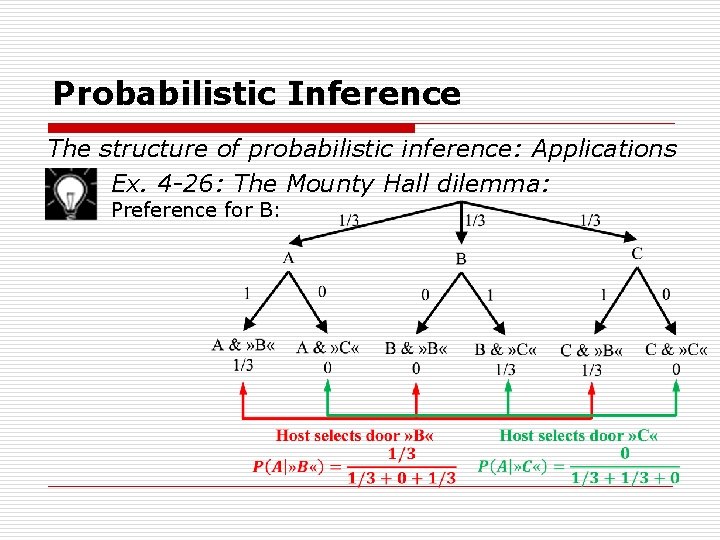

Probabilistic Inference: The structure of probabilistic inference: Applications

Ex. 4-26: The Monty Hall dilemma: Preference for B:

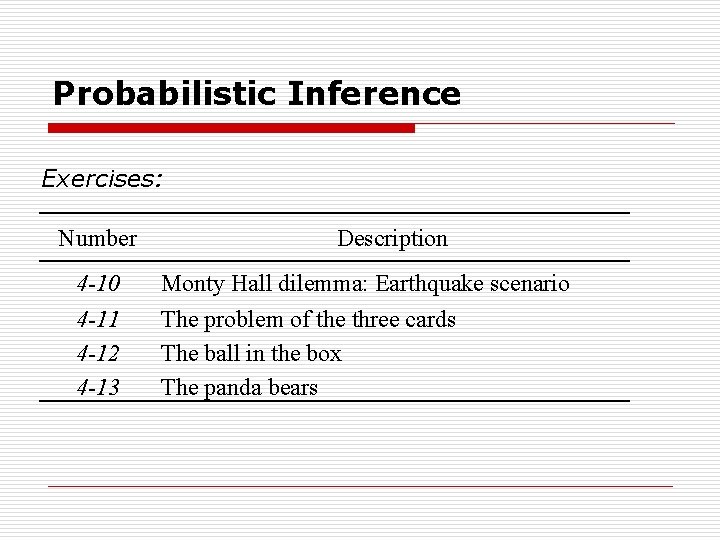

Probabilistic Inference: Exercises

| Number | Description |
|---|---|
| 4-10 | Monty Hall dilemma: Earthquake scenario |
| 4-11 | The problem of the three cards |
| 4-12 | The ball in the box |
| 4-13 | The panda bears |
