Offline & Computing, Tommaso Boccali (INFN Pisa)


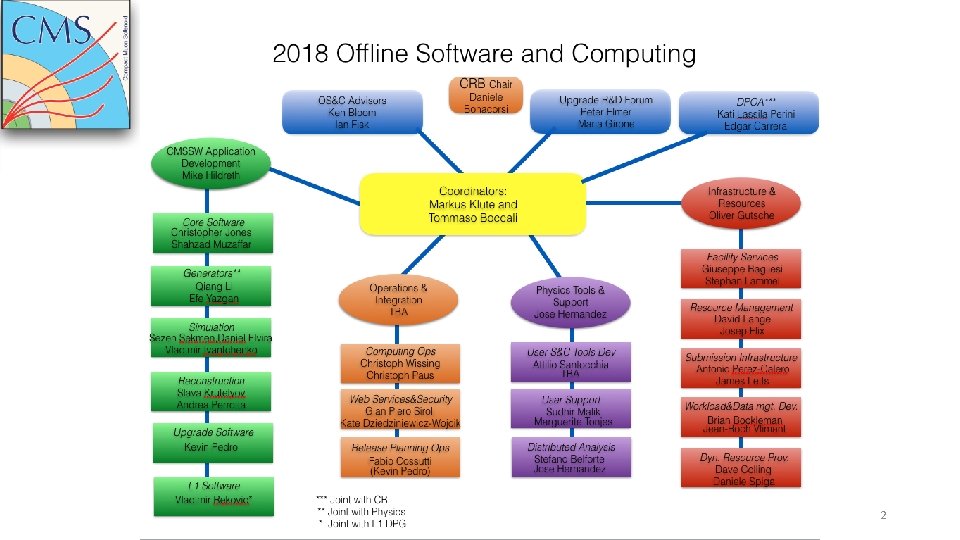


WHAT is the Offline and Computing Project doing?
- We are in charge of the CMS data from the moment it leaves the DAQ at the Cessy site and enters CERN/IT
- We are in charge of
  - Data custody (earthquake-solid)
  - Data processing from DAQ channels to physics-enabling objects (from 0101001 to jets, particles, leptons, …)
  - Periodic data reprocessing
  - Delivery of data to users for analyses
- A parallel path is followed for Monte Carlo events
  - From Monte Carlo generators (our DAQ…) to Geant4 (our detector…) to analysis-ready datasets

HOW do we do it?
- A simple calculation for a typical Run II year (say 2018)
  - CMS collects 10 billion collision events, typically 1 MB/ev
  - You want to be earthquake-solid, so you want 2 copies on tape: 20 PB/y
  - You need to process them at least a couple of times; processing takes ~20 sec/ev
    - You need 200 billion CPU seconds (you need 15,000 CPU cores)
  - You want almost 2 MC events per data event (and they cost more, ~50 sec/ev)
    - Another 70,000 cores, another 40 PB
  - You do much more (reconstruction of previous years, simulation of future detectors)
    - Double the previous numbers
  - You need to run user analyses
    - Add another 50%
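The estimate above can be reproduced with a few lines of arithmetic. This is only a sketch: the event counts, event size, and per-event times are the ones quoted on the slide, while the number of processing passes (2, for "a couple of times"), the assumption that cores run all year at 100% efficiency, and every function and variable name are illustrative choices, not CMS accounting rules.

```python
# Back-of-the-envelope resource estimate for one Run II year.
# Inputs come from the slide; PASSES and the "cores busy all year
# at 100% efficiency" model are assumptions made for this sketch.

SECONDS_PER_YEAR = 365 * 24 * 3600
MB_PER_PB = 1e9
PASSES = 2        # assumption: "a couple of times"
TAPE_COPIES = 2   # two copies on tape, as on the slide


def tape_pb(n_events, mb_per_event=1.0, copies=TAPE_COPIES):
    """Tape volume in PB for n_events stored `copies` times."""
    return n_events * mb_per_event * copies / MB_PER_PB


def cores(n_events, sec_per_event, passes=PASSES):
    """Cores needed to finish all passes within one year."""
    return n_events * sec_per_event * passes / SECONDS_PER_YEAR


data_events = 10e9             # 10 billion collision events
mc_events = 2 * data_events    # ~2 MC events per data event

data_tape = tape_pb(data_events)
mc_tape = tape_pb(mc_events)
data_cores = cores(data_events, 20)   # ~20 sec/ev for data
mc_cores = cores(mc_events, 50)       # ~50 sec/ev for MC

# "Double the previous numbers", then "add another 50%".
total_cores = (data_cores + mc_cores) * 2 * 1.5

print(f"data: {data_tape:.0f} PB tape, {data_cores:,.0f} cores")
print(f"MC:   {mc_tape:.0f} PB tape, {mc_cores:,.0f} cores")
print(f"total cores after scaling: {total_cores:,.0f}")
```

Under these assumptions the sketch gives about 12,700 cores for data and about 63,400 for MC, somewhat below the 15,000 and 70,000 quoted on the slide; the difference presumably covers less-than-perfect CPU efficiency, which this sketch ignores.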

All in all …
- CMS computing requests for 2018 are roughly
  - CPU = 200,000 computing cores
  - Disk = 150 PB
  - Tape = 200 PB
- How to handle this?
  - A single huge center (but you lose earthquake-solidity); also, political considerations
  - Distributed computing
    - Nice idea, but how?
    - MONARC, GRID, eventually Clouds, etc. …
    - This is the story of the last 20 years

Today - WLCG
- 200+ sites
- Totals exceeding
  - 800,000 computing cores
  - 500 PB disk
  - 500 PB tape
- WLCG provides the middleware to the experiments, governs its deployment and evolution, and reports to committees and review panels
- It is in some sense an additional LHC experiment, and CMS is also part of it

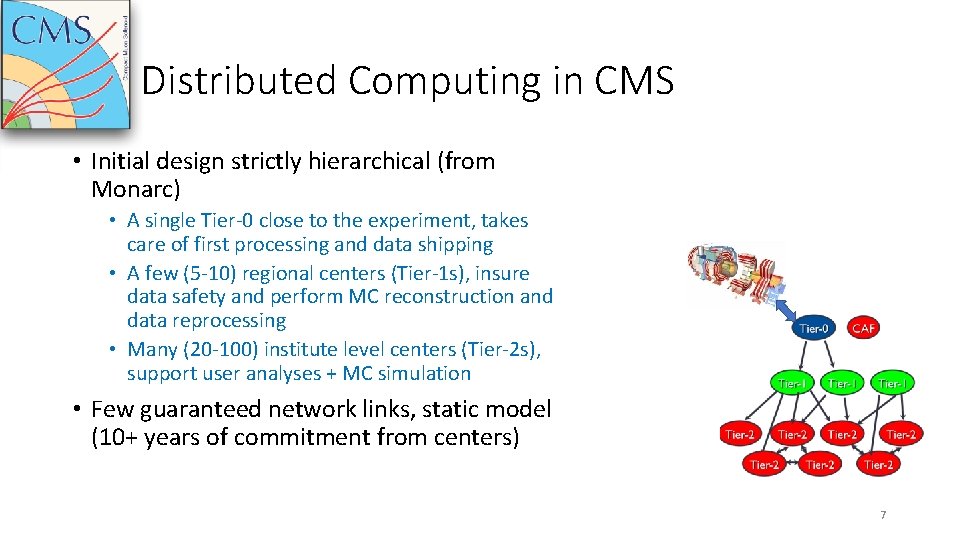

Distributed Computing in CMS
- Initial design strictly hierarchical (from MONARC)
  - A single Tier-0 close to the experiment, takes care of first processing and data shipping
  - A few (5-10) regional centers (Tier-1s), ensure data safety and perform MC reconstruction and data reprocessing
  - Many (20-100) institute-level centers (Tier-2s), support user analyses + MC simulation
- Few guaranteed network links, static model (10+ years of commitment from centers)

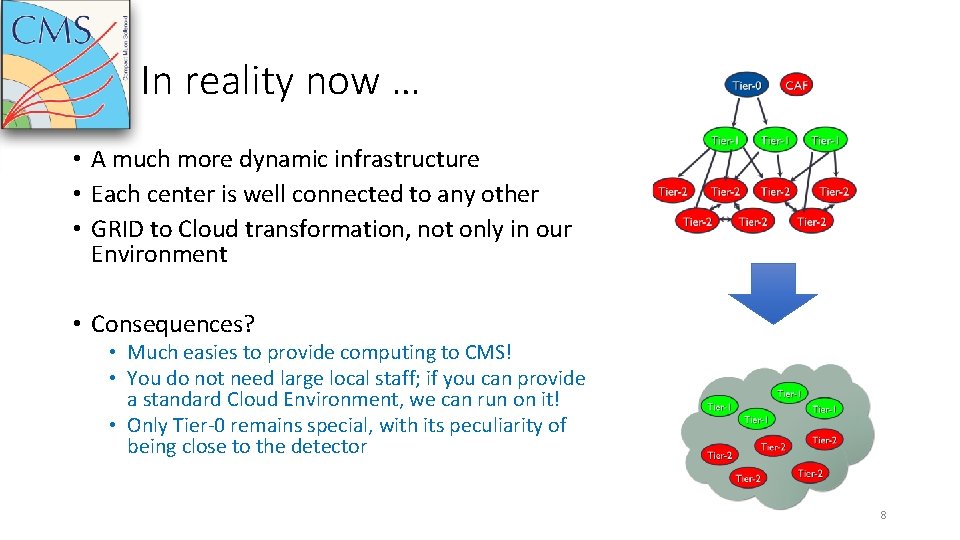

In reality now …
- A much more dynamic infrastructure
  - Each center is well connected to any other
  - GRID to Cloud transformation, not only in our environment
- Consequences?
  - Much easier to provide computing to CMS!
  - You do not need a large local staff; if you can provide a standard Cloud environment, we can run on it!
- Only Tier-0 remains special, with its peculiarity of being close to the detector

What we can use today (well, tomorrow early morning…)
- At the resource level, CMS is more or less advanced in the utilization of
  - Standard GRID centers (easy…)
  - Institute Cloud infrastructures (OpenStack, OpenNebula, VMware, …)
    - DODAS
  - Commercial Clouds (Google, Amazon, …)
    - HepCloud
  - HPC systems
- With some specific effort, many of them can be used
- So, building and maintaining a Tier-2 is not the only option!

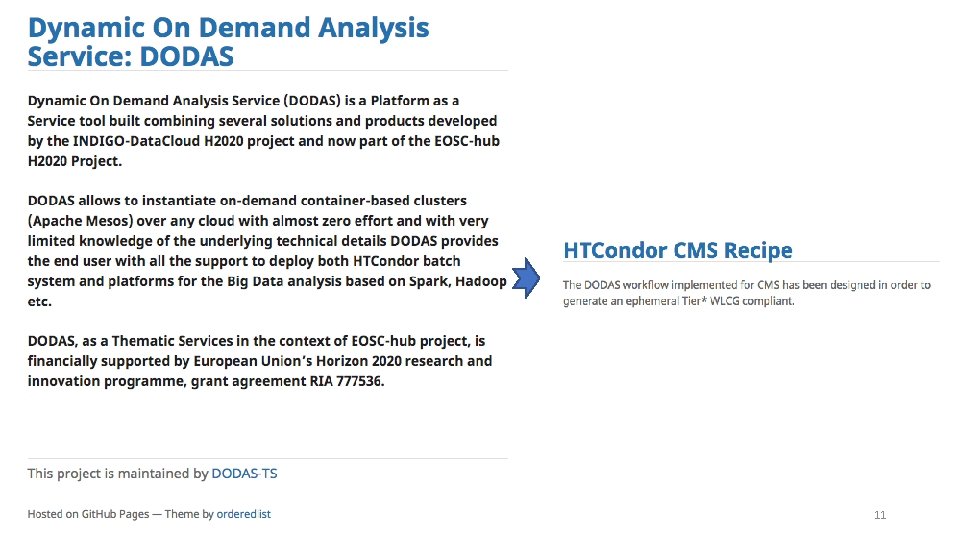

Institute level Cloud infrastructures
- We provide a tool, called DODAS, which can run a “Tier-2/3 on demand” given an allocation on industry-standard Cloud platforms
- It is *really* 1 click and go.
  - It deploys compute nodes and services like squid caches and XRootD caches
  - After deployment (30 min), it can be used directly for both analysis and production, transparently
- This is the easiest way to provide resources to CMS: using what you might already have in your institutions
- It works equally well if you have a Cloud allocation / a grant from a commercial Cloud provider: you can build a virtual center of 1 to 1000s of computing cores, lasting from a few hours to months


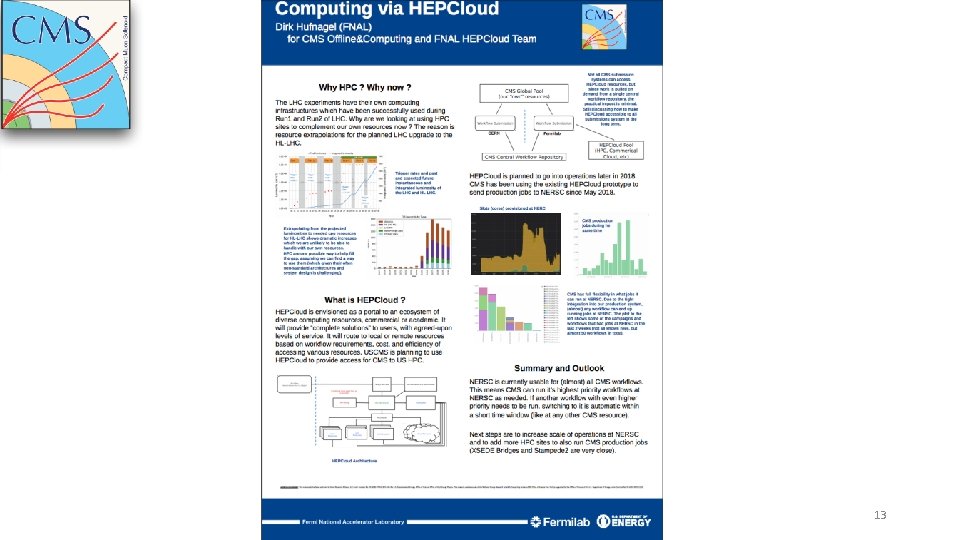

Commercial Clouds
- One way to provide resources to CMS (instead of building a Tier-2) is to provide access “in your name” to commercial cloud resources (Amazon, Google, Rackspace, T-Systems, …)
- Fermilab is the most advanced with its HepCloud:
  - Hides the commercial part behind its services
  - Invisible to the experiment (we like it!)
- DODAS is also a possibility, depending on how much local effort you want to put into the deployment
  - DODAS is easier but could be less optimal, being a catch-all solution


HPC systems
- They are expected to be more and more important for High Energy Physics computing
- There is experience, but currently each HPC system is unique and needs unique developments
- Also, some are friendly
  - Networking allowed with the outside world; x86_64 architectures; provide CVMFS and virtualization, …
- Others are not
  - Exotic architectures; network segregation; …
- What is the situation in your countries? Are there HPC centers available? We would love to work with you to check the usability and help you prepare a utilization proposal!

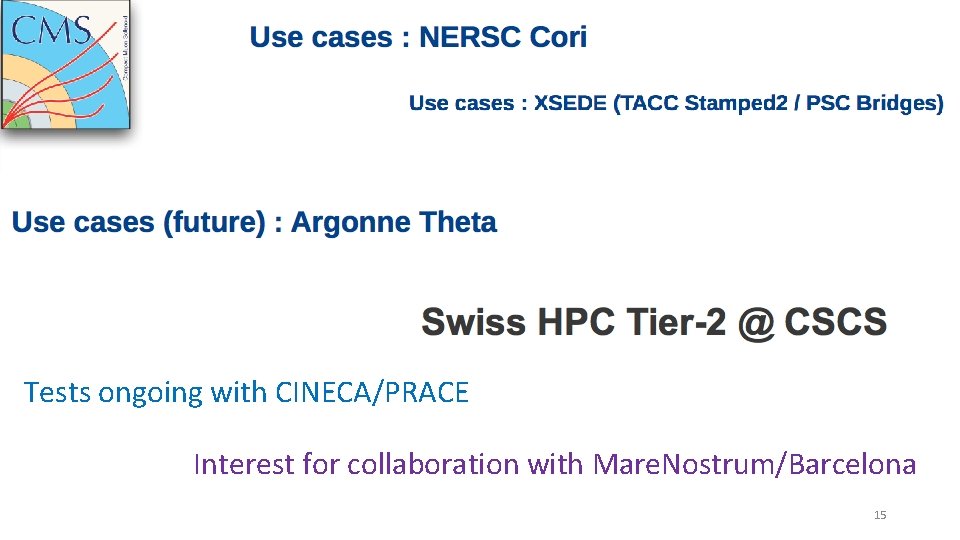

- Tests ongoing with CINECA/PRACE
- Interest in collaboration with MareNostrum/Barcelona

SW development
- SW development is essential to CMS
- Our SW is anything but static
  - O(2-10) releases per week
- Adopting new technologies at a fast pace
  - C++11, C++14, C++17
  - CUDA available in the standard SW environment
  - TensorFlow and Keras available in the standard SW environment
  - Big effort from CMSSW to support new technologies
  - Lowers the barrier for users testing ideas/algorithms
- CMS loves to slightly change the detector each year
  - 2017: pixels
  - 2018: HCAL Endcap
  - …

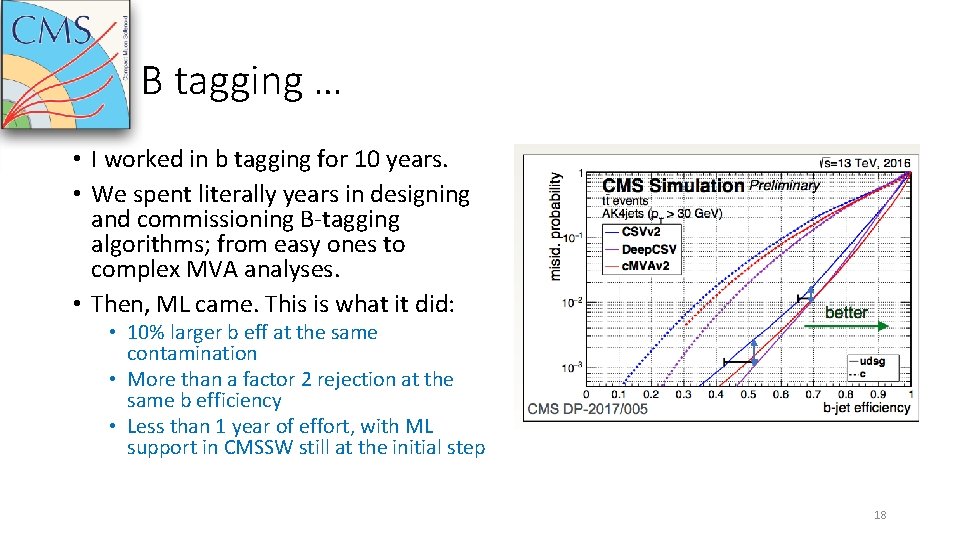

SW development
- During 2004-2010, huge effort on software, also detector driven
  - People building a detector wanted to get the best performance out of it, hence were spending effort on SW
- From 2010 on, data analysis started to feel more exciting
  - Effort on software much lower
- There is a lot of room for physicists / CS to contribute and take ownership of MAJOR parts of the code
  - Reconstruction algorithms, including Machine Learning
  - Code optimization, refactoring, re-engineering
  - Adoption of new tools
    - Algebra packages, toolkits, …
- There are oceans of opportunities for someone who is computer savvy and has a decent physics background
- A very fast example …

B tagging …
- I worked in b tagging for 10 years.
- We spent literally years designing and commissioning b-tagging algorithms, from easy ones to complex MVA analyses.
- Then, ML came. This is what it did:
  - 10% larger b efficiency at the same contamination
  - More than a factor 2 in rejection at the same b efficiency
  - Less than 1 year of effort, with ML support in CMSSW still at the initial stage

Service Tool development
- CMS Computing is a complex machine
  - It has to move PBs of files weekly
  - It has to process ~1 M jobs every day
  - It handles 500 unique users per week
- We need a lot of infrastructure
  - Data Management
  - Workload management
  - Analysis Infrastructure
  - Databases, web services, monitoring tools, …
- We feel we are deeply understaffed for most of the tasks
  - Many of them are at “survival support level”; we would love an injection of new forces and ideas

Computing Operations
- Every day in CMS
  - LHC delivers ~0.5 /fb (300 TB of RAW data)
    - We need to process it and store it safely
  - PPD operators submit
    - Release validation samples
    - Production workflows
    - Data reprocessing
  - Users submit 100s of analysis tasks
- Coverage is 24x7 (yes, LHC does not stop at nights or weekends…)
- Each of these needs data movement, monitoring, failure recovery, …
- You would be surprised by the number of FTEs involved here (surprise)

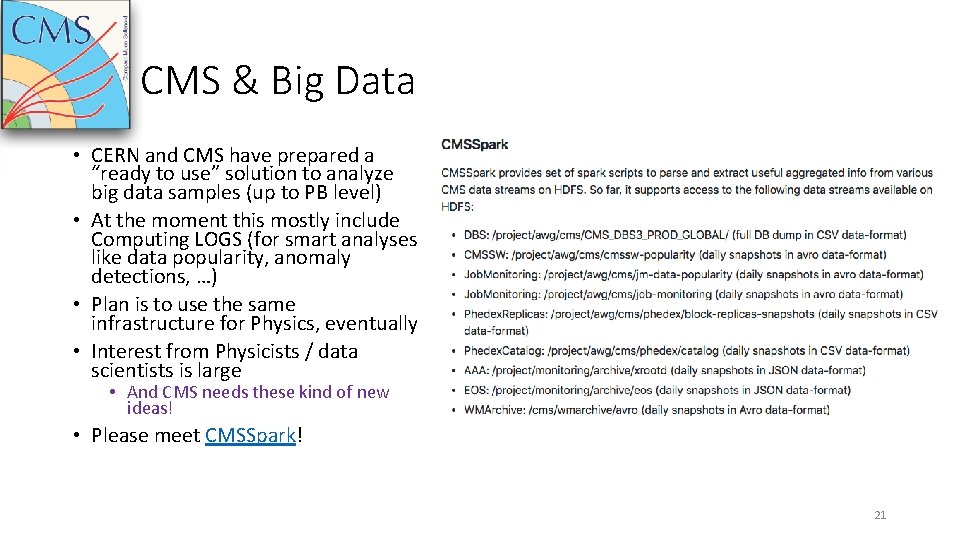

CMS & Big Data
- CERN and CMS have prepared a “ready to use” solution to analyze big data samples (up to PB level)
- At the moment this mostly includes computing LOGS (for smart analyses like data popularity, anomaly detection, …)
- The plan is to use the same infrastructure for physics, eventually
- Interest from physicists / data scientists is large
  - And CMS needs these kinds of new ideas!
- Please meet CMSSpark!
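To give a flavour of what a "data popularity" analysis on computing logs means, here is a toy sketch in plain Python. It does not use CMSSpark or Spark at all, and the record layout, dataset names, and user names are hypothetical, invented only for illustration; a real analysis would run on the HDFS/Spark infrastructure over the actual log schema.

```python
from collections import Counter

# Hypothetical access-log records: (dataset, user, files read).
# Names and fields are illustrative only, not a real CMS log schema.
access_log = [
    ("/DatasetA/Run2018/AOD", "user1", 12),
    ("/DatasetB/Run2018/MINIAOD", "user2", 3),
    ("/DatasetA/Run2018/AOD", "user3", 7),
    ("/DatasetB/Run2018/MINIAOD", "user1", 5),
    ("/DatasetA/Run2018/AOD", "user1", 1),
]


def popularity(records):
    """Return {dataset: (number of accesses, number of distinct users)}."""
    accesses = Counter()
    users = {}
    for dataset, user, _n_files in records:
        accesses[dataset] += 1
        users.setdefault(dataset, set()).add(user)
    return {d: (accesses[d], len(users[d])) for d in accesses}


# Rank datasets from most to least accessed.
ranking = sorted(
    popularity(access_log).items(),
    key=lambda item: item[1][0],
    reverse=True,
)

for dataset, (n_accesses, n_users) in ranking:
    print(f"{dataset}: {n_accesses} accesses by {n_users} users")
```

A ranking of this kind is what would feed decisions such as which datasets to keep on disk and which to leave on tape only.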

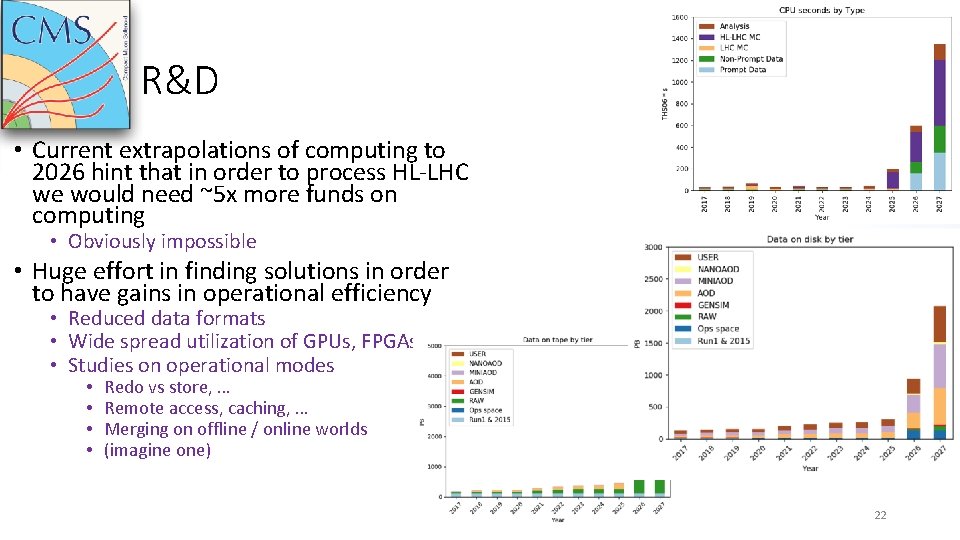

R&D
- Current extrapolations of computing to 2026 hint that in order to process HL-LHC data we would need ~5x more funds for computing
  - Obviously impossible
- Huge effort in finding solutions in order to gain operational efficiency
  - Reduced data formats
  - Widespread utilization of GPUs, FPGAs, …
  - Studies on operational modes
    - Redo vs store, …
    - Remote access, caching, …
    - Merging of offline / online worlds (imagine one)

Opportunities …
- Opportunities are there in practically all the areas
- CMS is trying to lower the threshold for resource utilization
  - No longer limited to “build a Tier-2 for us”
- SW development (physics and services)
  - Oceans of opportunities, ranging from code curation to the utilization of novel technologies in basically all the fields
- Operations
  - Even during Long Shutdown! Computing never stops (indeed periods w/o data taking are usually the busiest!)
- Short / long range R&D
  - Dive into the CMS Big Data (computing AND physics)
  - Modelling, access to new technologies
  - Test of completely new ideas (always welcome!!!)

… And rewards!
- We spoke about the EPR system last time, not covered here …
- The tasks in O+C are usually high-visibility ones
  - If you provide a resource OR take care of a service
- R&D tasks are very attractive for data scientists
  - Easy access to Keras/TF _AND_ a huge amount of data in a single place
  - An HDFS/Spark cluster already configured and CMS-enabled
- SW, operations, …
  - All are challenging environments, with high return in publications / conferences (CMS brought / proposed > 60 talks to the last CHEP!)
- At FA level, we are very interested in listening and helping with
  - Participation in EU projects
  - Utilization of / collaboration with local facilities (HPC, clouds, …)

- Slides: 24