Highlights from O+C week & status of operations
Tommaso Boccali – INFN Pisa

Outline
- O+C Spring 18 week
  - Some sparse highlights
- Computing operations for 2018
  - Status on the day of first beams
- Status from last CRB
- AOB

Spring 18 O+C week
- A dense week: 8 half-days packed with talks
  - In many cases, we would have profited from (a lot) more time
- Focus on both short term (Run 2) and long term (Run 3-4)
- Agenda at https://indico.cern.ch/event/711343/
- Some highlights here (which also partially cover the status of the 2018 run)

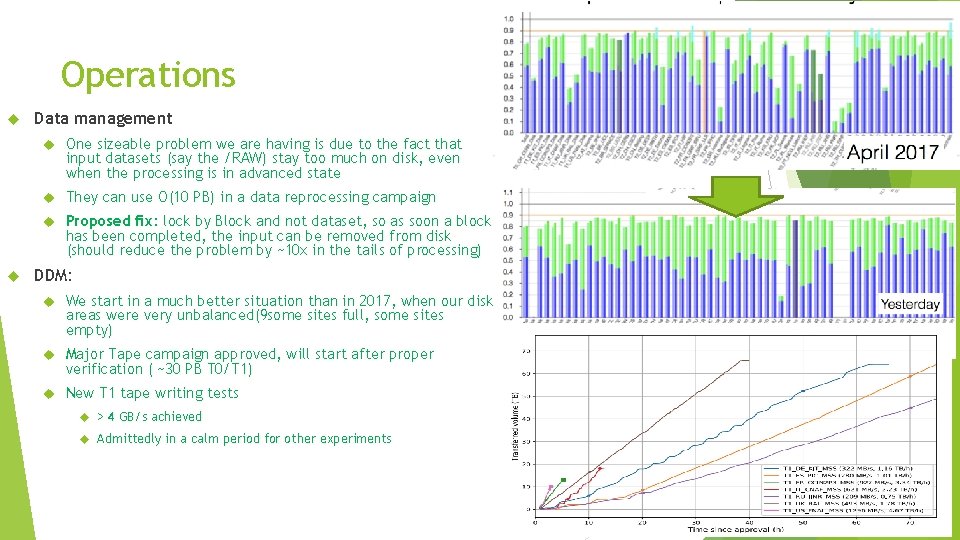

Operations
- Data management
  - One sizeable problem: input datasets (e.g. the /RAW) stay on disk too long, even when processing is at an advanced stage
    - They can occupy O(10 PB) in a data reprocessing campaign
  - Proposed fix: lock by block rather than by dataset, so that as soon as a block has been completed its input can be removed from disk (should reduce the problem by ~10x in the tails of processing)
- DDM
  - We start in a much better situation than in 2017, when our disk areas were very unbalanced (some sites full, some sites empty)
  - Major tape campaign approved; will start after proper verification (~30 PB T0/T1)
  - New T1 tape writing tests: > 4 GB/s achieved
    - Admittedly in a calm period for other experiments
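To illustrate why locking by block helps in the tails of processing, here is a minimal sketch. The class names, the dataset name, and the block sizes are hypothetical and are not taken from the CMS data management tools; the sketch only compares how much input disk space could be released under each locking policy.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    name: str
    size_tb: float
    processed: bool = False


@dataclass
class Dataset:
    name: str
    blocks: list = field(default_factory=list)


def releasable_dataset_level(dataset):
    """Dataset-level lock: nothing can be freed until every block is processed."""
    if all(b.processed for b in dataset.blocks):
        return sum(b.size_tb for b in dataset.blocks)
    return 0.0


def releasable_block_level(dataset):
    """Block-level lock: each completed block can be freed immediately."""
    return sum(b.size_tb for b in dataset.blocks if b.processed)


# Hypothetical input dataset: 10 blocks of 1 TB each, 9 of them already processed.
raw = Dataset(
    "/Hypothetical/Run2017X/RAW",
    [Block(f"block{i}", 1.0, processed=(i < 9)) for i in range(10)],
)

dataset_level_tb = releasable_dataset_level(raw)
block_level_tb = releasable_block_level(raw)
print(f"dataset-level lock frees {dataset_level_tb} TB")
print(f"block-level lock frees {block_level_tb} TB")
```

In this toy case a single unfinished block keeps the whole input pinned under dataset-level locking, while block-level locking already frees the nine completed blocks.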

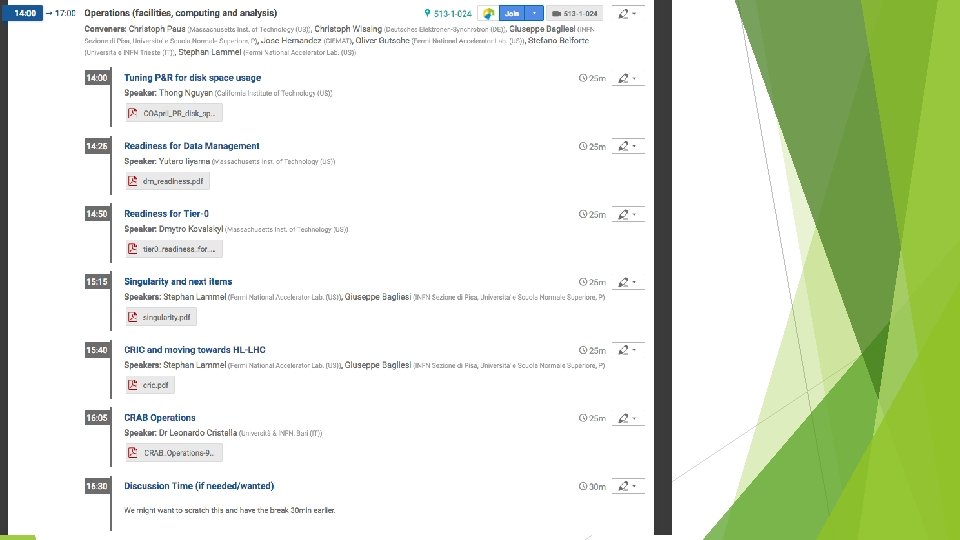

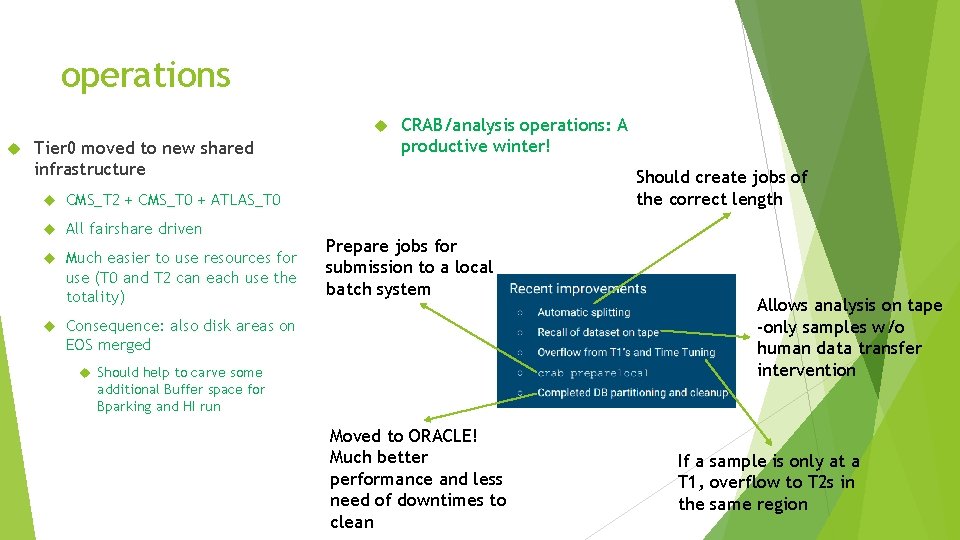

Operations
- Tier-0 moved to new shared infrastructure
  - CMS_T2 + CMS_T0 + ATLAS_T0
  - All fairshare driven
  - Much easier to use resources (T0 and T2 can each use the totality)
  - Consequence: disk areas on EOS are also merged
    - Should help to carve out some additional buffer space for B parking and the HI run
- CRAB/analysis operations: a productive winter!
  - Should create jobs of the correct length
  - Prepares jobs for submission to a local batch system
  - Allows analysis on tape-only samples without human intervention in the data transfer
  - Moved to ORACLE! Much better performance and less need for downtimes to clean
  - If a sample is only at a T1, overflow to T2s in the same region
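The overflow rule for samples hosted only at a T1 can be sketched as follows. The site names and the site-to-region map are hypothetical placeholders, and the function is an illustration of the rule as stated on the slide, not the actual CRAB logic.

```python
# Hypothetical site-to-region map; real regional groupings may differ.
SITE_REGION = {
    "T1_AA_Alpha": "AA",
    "T2_AA_Beta": "AA",
    "T2_AA_Gamma": "AA",
    "T2_BB_Delta": "BB",
}


def eligible_sites(sample_sites, site_region=SITE_REGION):
    """Return the sites where jobs reading this sample may run.

    If the sample is hosted only at T1 sites, jobs may also overflow
    to the T2 sites in the same region(s).
    """
    sites = set(sample_sites)
    only_at_t1 = bool(sites) and all(s.startswith("T1_") for s in sites)
    if only_at_t1:
        regions = {site_region[s] for s in sites}
        sites |= {
            s for s, region in site_region.items()
            if s.startswith("T2_") and region in regions
        }
    return sorted(sites)


t1_only = eligible_sites(["T1_AA_Alpha"])
with_t2_copy = eligible_sites(["T1_AA_Alpha", "T2_BB_Delta"])
print(t1_only)
print(with_t2_copy)
```

A sample that already has a T2 replica is left alone; only T1-only samples gain the extra same-region T2 sites.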

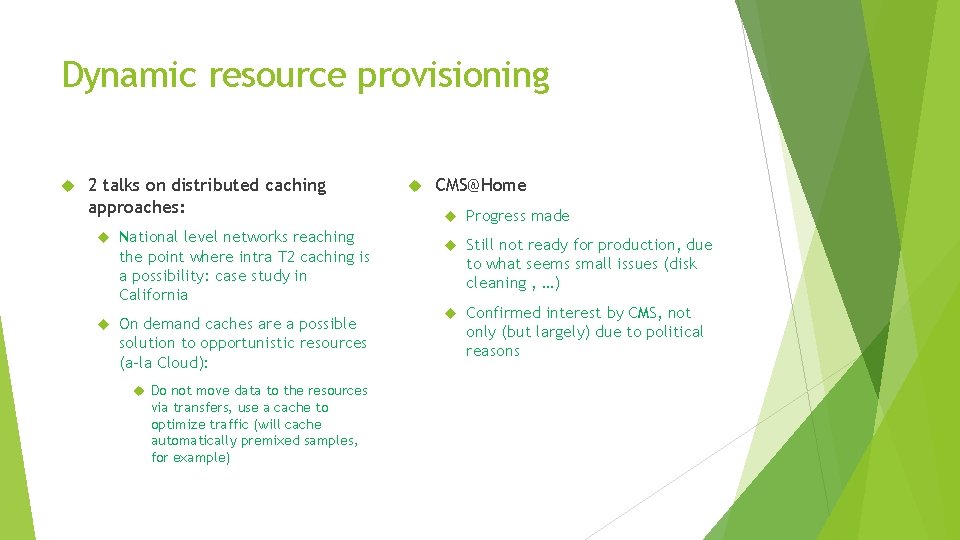

Dynamic resource provisioning
- 2 talks on distributed caching approaches
  - National-level networks are reaching the point where intra-T2 caching is a possibility: case study in California
  - On-demand caches are a possible solution for opportunistic resources (à la Cloud): do not move data to the resources via transfers, use a cache to optimize traffic (it will automatically cache premixed samples, for example)
- CMS@Home
  - Progress made
  - Still not ready for production, due to what seem to be small issues (disk cleaning, …)
  - Confirmed interest by CMS, not only (but largely) for political reasons
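A minimal sketch of the on-demand cache idea: a read-through cache with least-recently-used eviction, placed in front of a remote source. The class, the file names, and the `fake_remote` function are hypothetical; the production caching layers discussed in the talks are not modelled here.

```python
from collections import OrderedDict


class OnDemandCache:
    """Read-through LRU cache: fetch remotely on a miss, serve locally on a hit."""

    def __init__(self, fetch_remote, capacity):
        self.fetch_remote = fetch_remote  # callable: filename -> data
        self.capacity = capacity          # maximum number of cached files
        self.store = OrderedDict()
        self.remote_reads = 0

    def read(self, filename):
        if filename in self.store:
            # Cache hit: mark the file as most recently used.
            self.store.move_to_end(filename)
            return self.store[filename]
        # Cache miss: fetch from the remote source and keep a local copy.
        self.remote_reads += 1
        data = self.fetch_remote(filename)
        self.store[filename] = data
        if len(self.store) > self.capacity:
            # Evict the least recently used file.
            self.store.popitem(last=False)
        return data


def fake_remote(filename):
    """Stand-in for a wide-area read (hypothetical)."""
    return f"contents of {filename}"


cache = OnDemandCache(fake_remote, capacity=2)
# 100 jobs: each reads the same premixed file plus its own unique input.
for job in range(100):
    cache.read("premix_library.root")
    cache.read(f"signal_{job}.root")

print(f"{cache.remote_reads} remote reads for 200 file reads")
```

The frequently reused premixed file stays cached without any explicit placement decision, which is the behaviour the slide alludes to.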

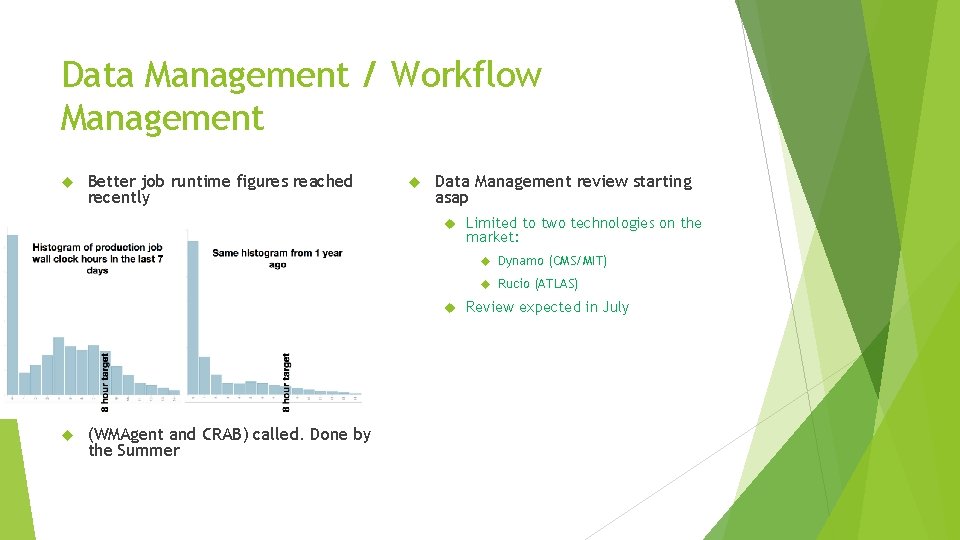

Data Management / Workflow Management
- Better job runtime figures reached recently
- Data Management review starting asap
  - Limited to two technologies on the market: Dynamo (CMS/MIT) and Rucio (ATLAS)
- Workflow review of our tools (WMAgent and CRAB) called
  - Done by the summer
  - Review expected in July

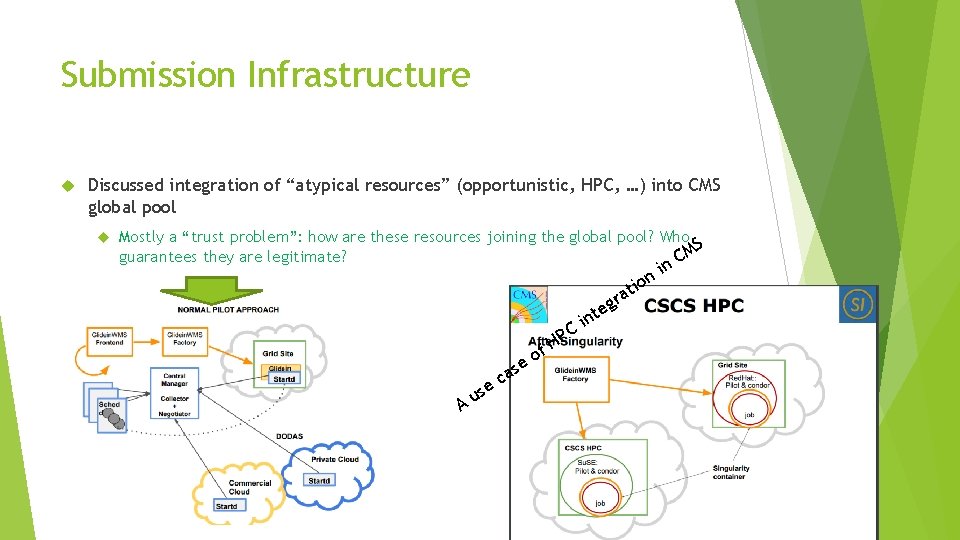

Submission Infrastructure
- Discussed integration of “atypical resources” (opportunistic, HPC, …) into the CMS global pool
- Mostly a “trust problem”: how are these resources joining the global pool? Who guarantees they are legitimate?

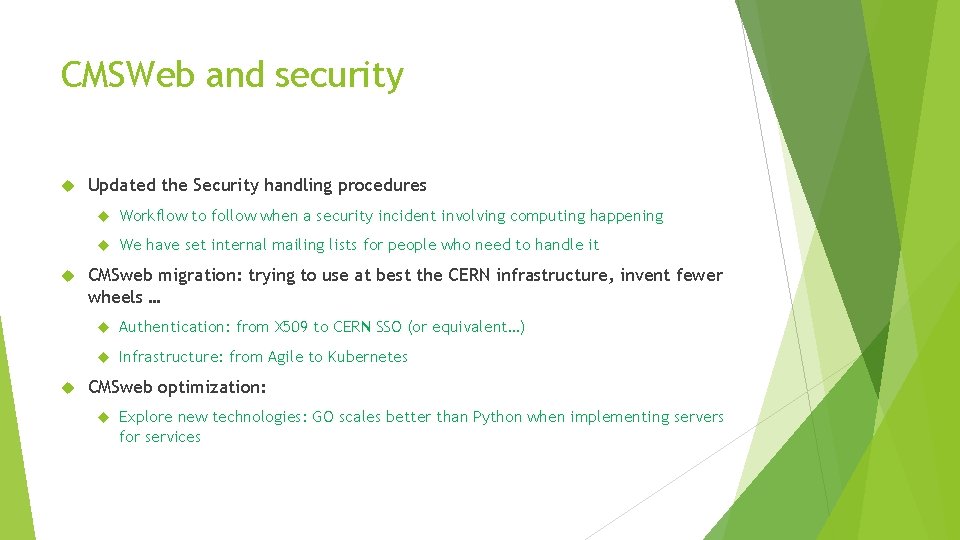

CMSWeb and security
- Updated the security handling procedures
  - Workflow to follow when a security incident involving computing happens
  - We have set up internal mailing lists for the people who need to handle it
- CMSweb migration: trying to make the best use of the CERN infrastructure, reinventing fewer wheels …
  - Authentication: from X.509 to CERN SSO (or equivalent…)
  - Infrastructure: from Agile to Kubernetes
- CMSweb optimization
  - Explore new technologies: Go scales better than Python when implementing servers for services

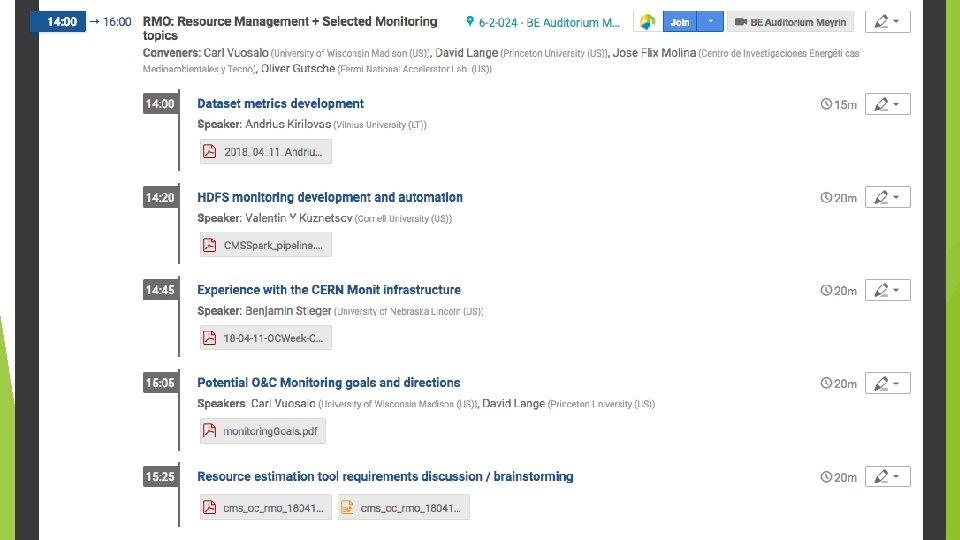

Resource Management & Monitoring
- RMO: see talk by David later today
- Monitoring: CMS is not in an optimal situation; many efforts, but:
  - Manpower in projects is missing
  - A global coordination is missing
- Proposal is to identify and fund a task in DM&WM, which
  - Sets the guidelines
  - Helps in implementation
- This does not immediately solve the problem, but is an attempt at unifying the approach
- Some manpower could come during LS2 from “repositioning” cat-A in-kind effort from operations

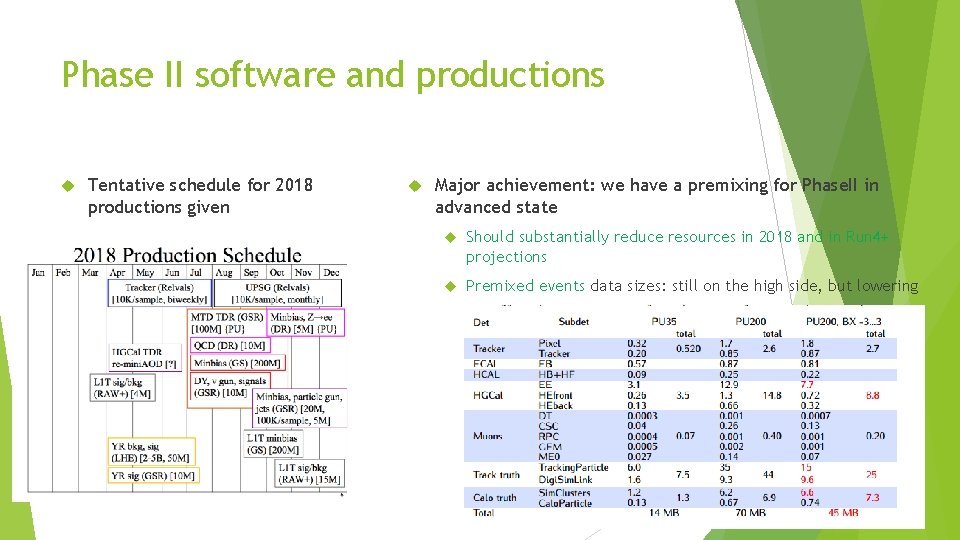

Phase II software and productions
- Tentative schedule for 2018 productions given
- Major achievement: premixing for Phase II is in an advanced state
  - Should substantially reduce resources in 2018 and in Run 4+ projections
  - Premixed event data sizes: still on the high side, but decreasing

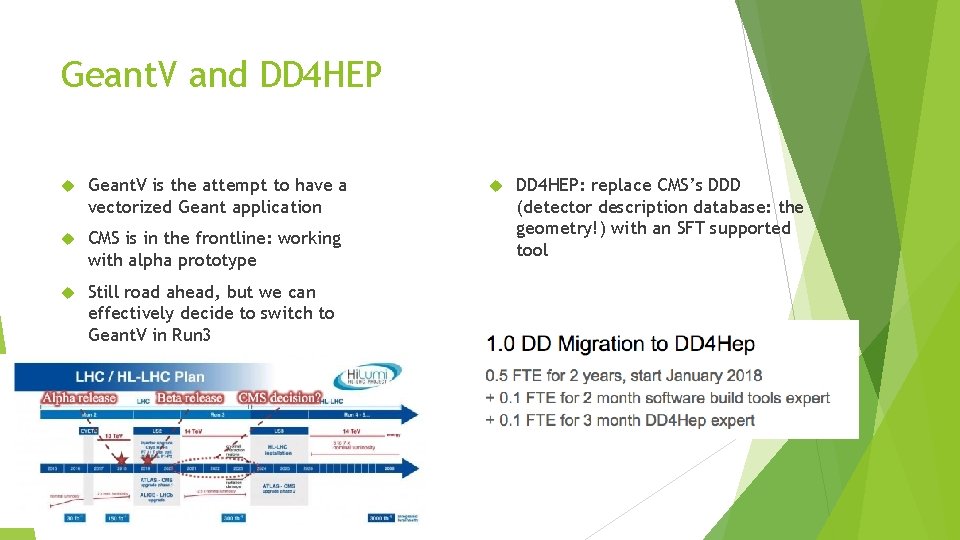

GeantV and DD4hep
- GeantV is the attempt to have a vectorized Geant application
  - CMS is in the frontline: working with the alpha prototype
  - Still road ahead, but we can effectively decide to switch to GeantV in Run 3
- DD4hep: replace CMS’s DDD (detector description database: the geometry!) with an SFT-supported tool

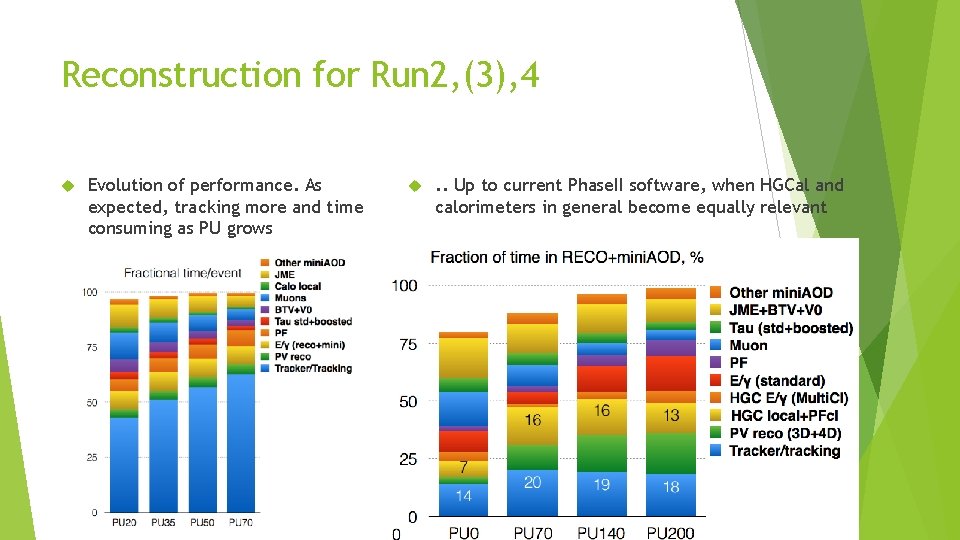

Reconstruction for Run 2, (3), 4
- Evolution of performance
- As expected, tracking becomes more and more time consuming as PU grows …
- … up to the current Phase II software, where HGCal and calorimeters in general become equally relevant

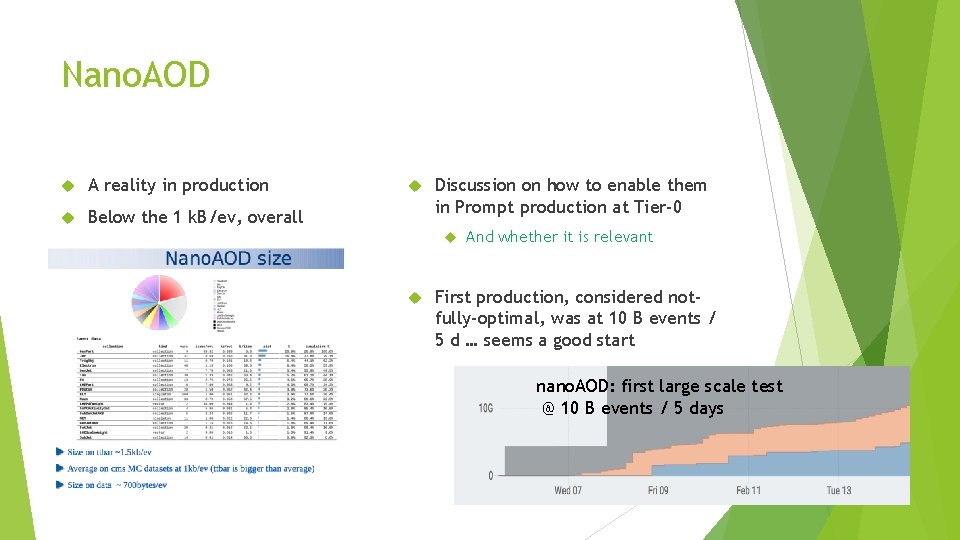

NanoAOD
- A reality in production
- Below 1 kB/event overall
- Discussion on how to enable it in Prompt production at Tier-0
  - And whether it is relevant
- First production, considered not fully optimal, ran at 10 B events / 5 d … seems a good start
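For scale, the figures quoted for the first NanoAOD production can be turned into rates with a few lines of arithmetic. The sketch assumes decimal units (1 kB = 1000 bytes, 1 TB = 10^12 bytes) and treats "below 1 kB/event" as an upper bound, so the resulting size is a ceiling and not a measured value.

```python
# Figures quoted on the slide.
events = 10_000_000_000        # 10 B events
days = 5                       # processed in 5 days
max_event_size_bytes = 1000    # "below 1 kB/event", used as an upper bound

SECONDS_PER_DAY = 86_400

events_per_day = events / days
events_per_second = events / (days * SECONDS_PER_DAY)
max_total_size_tb = events * max_event_size_bytes / 1e12

print(f"{events_per_day:.1e} events/day")
print(f"{events_per_second:.0f} events/s")
print(f"at most {max_total_size_tb:.0f} TB for the full sample")
```

This gives about 2 B events per day, roughly 23 thousand events per second sustained, and a full sample that fits within 10 TB.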

Now, what for 2018 data taking?
- Tier-0: to be commissioned, but
  - Should allow for more flexibility when overflowing to Tier-2
  - Disk reorganization should leave more margin for buffers
- DDM: situation overall seems OK (below 60% occupancy)
- 2018 disk still not very different from 2017: we still need to receive most of the new disk
- Expected PU is ~45, while resources were budgeted for ~35
  - Essentially, more Tier-0 needed
- New ideas in CMS can make it even more difficult
  - B physics parking
  - HI “stretched goals”

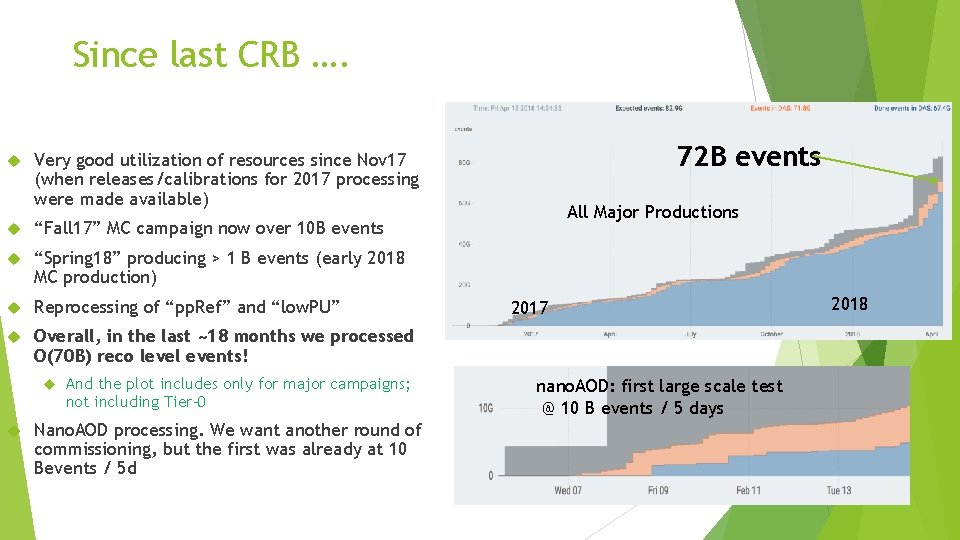

Since last CRB …
- “Fall 17” MC campaign now over 10 B events
- “Spring 18” (early 2018 MC production) producing > 1 B events
- Reprocessing of “ppRef” and “lowPU”
- Overall, in the last ~18 months we processed O(70 B) reco-level events (72 B events)
- Very good utilization of resources since Nov 17 (when releases/calibrations for 2017 processing were made available)
- The plot includes only the major campaigns; it does not include Tier-0
- NanoAOD processing: we want another round of commissioning, but the first was already at 10 B events / 5 d
- Plot: All Major Productions, 2017 to 2018, annotated with the first large-scale NanoAOD test @ 10 B events / 5 days

- Slides: 24