More on Classic Synchronization Problems Monitors and Deadlock

More on Classic Synchronization Problems, Monitors, and Deadlock CSCI 3753 Operating Systems Spring 2005 Prof. Rick Han

Announcements
• PA #2 is coming, assigned Tuesday night
• Midterm is tentatively Thursday, March 10
• Read chapters 9 and 10
• HW #3 is due Friday, Feb. 25, a week+ from now
  – submitting graphics: .doc OK? (will post an answer)
  – extra office hours Thursday 1 pm (post this)
• TA finished regrading some HWs that were cut off by Moodle
• Slides on synchronization are online
• User- vs. kernel-level threads slides coming soon

From last time...
• We discussed semaphores
• Deadlock
• Classic synchronization problems
  – Bounded Buffer Producer/Consumer Problem
  – First Readers/Writers Problem
  – Dining Philosophers Problem

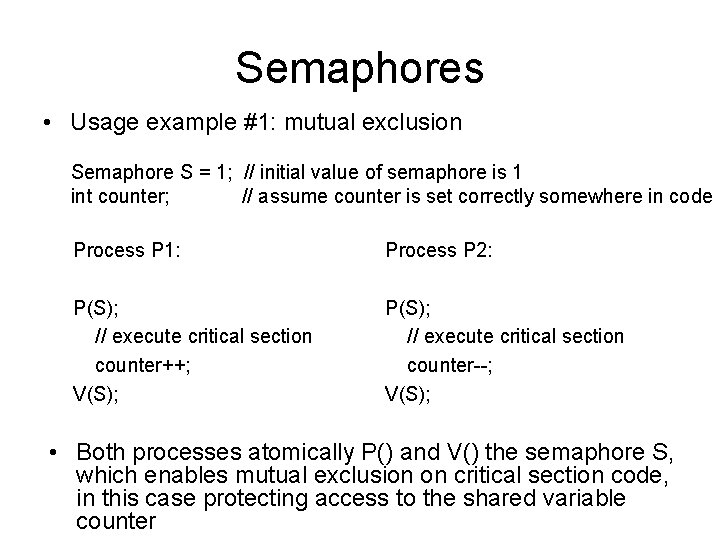

Semaphores
• Usage example #1: mutual exclusion

    Semaphore S = 1;  // initial value of semaphore is 1
    int counter;      // assume counter is set correctly somewhere in code

    Process P1:
      P(S);
      // execute critical section
      counter++;
      V(S);

    Process P2:
      P(S);
      // execute critical section
      counter--;
      V(S);

• Both processes atomically P() and V() the semaphore S, which enables mutual exclusion on critical section code, in this case protecting access to the shared variable counter

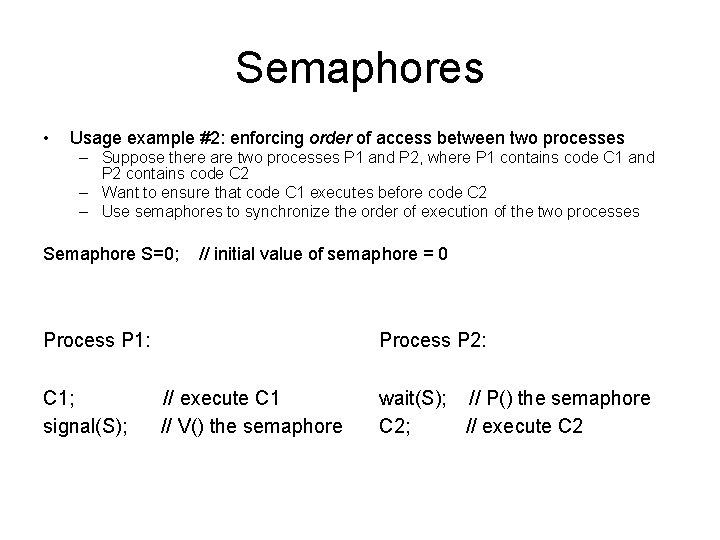

Semaphores
• Usage example #2: enforcing order of access between two processes
  – Suppose there are two processes P1 and P2, where P1 contains code C1 and P2 contains code C2
  – Want to ensure that code C1 executes before code C2
  – Use semaphores to synchronize the order of execution of the two processes

    Semaphore S = 0;  // initial value of semaphore = 0

    Process P1:
      C1;             // execute C1
      signal(S);      // V() the semaphore

    Process P2:
      wait(S);        // P() the semaphore
      C2;             // execute C2

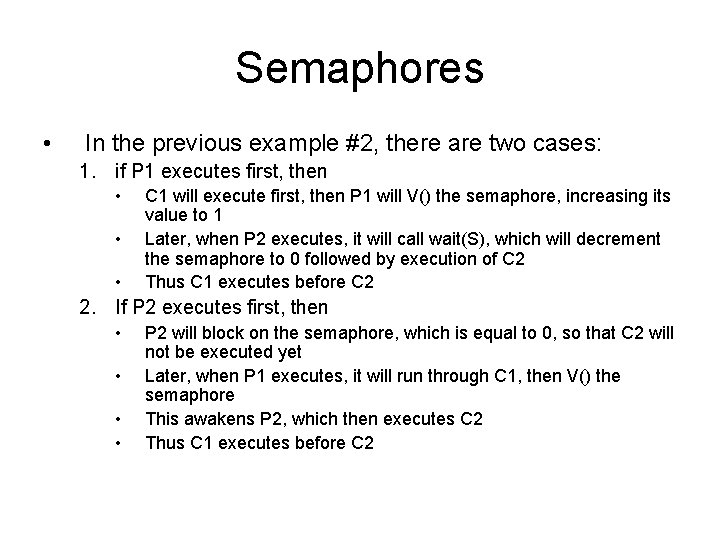

Semaphores
• In the previous example #2, there are two cases:
  1. If P1 executes first, then
     • C1 will execute first, then P1 will V() the semaphore, increasing its value to 1
     • Later, when P2 executes, it will call wait(S), which will decrement the semaphore to 0, followed by execution of C2
     • Thus C1 executes before C2
  2. If P2 executes first, then
     • P2 will block on the semaphore, which is equal to 0, so that C2 will not be executed yet
     • Later, when P1 executes, it will run through C1, then V() the semaphore
     • This awakens P2, which then executes C2
     • Thus C1 executes before C2
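The two cases above can be tried out with a small sketch. It uses Python's `threading.Semaphore` as a stand-in for the slide's semaphore S (an assumption for illustration, since the slides use pseudocode); the helper name `run_in_order` is hypothetical. P2 is deliberately started first to exercise case 2.

```python
import threading

def run_in_order():
    """Run C1 in P1 and C2 in P2; a semaphore initialized to 0 forces C1 first."""
    s = threading.Semaphore(0)      # Semaphore S = 0, as on the slide
    trace = []

    def p1():
        trace.append("C1")          # C1
        s.release()                 # V(S) / signal(S)

    def p2():
        s.acquire()                 # P(S) / wait(S): blocks until P1 signals
        trace.append("C2")          # C2

    # Start P2 first on purpose: it must still wait for P1's signal.
    t2 = threading.Thread(target=p2)
    t1 = threading.Thread(target=p1)
    t2.start()
    t1.start()
    t1.join()
    t2.join()
    return trace

print(run_in_order())               # ['C1', 'C2']
```

Whichever thread the scheduler runs first, C2 cannot be appended until P1 has released the semaphore.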

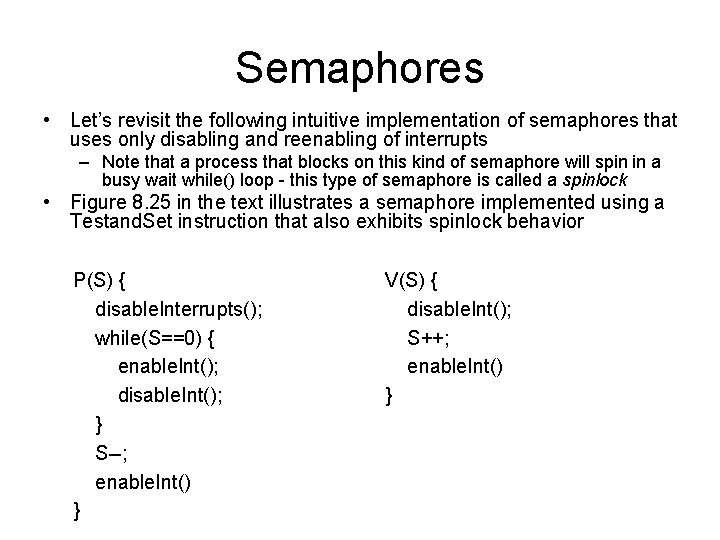

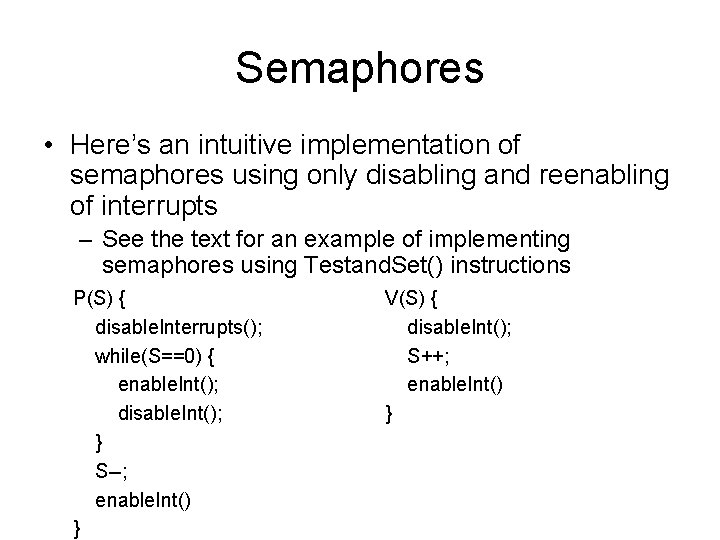

Semaphores
• Let’s revisit the following intuitive implementation of semaphores that uses only disabling and reenabling of interrupts
  – Note that a process that blocks on this kind of semaphore will spin in a busy-wait while() loop; this type of semaphore is called a spinlock
• Figure 8.25 in the text illustrates a semaphore implemented using a TestandSet instruction that also exhibits spinlock behavior

    P(S) {
      disableInterrupts();
      while(S==0) {
        enableInt();
        disableInt();
      }
      S--;
      enableInt();
    }

    V(S) {
      disableInt();
      S++;
      enableInt();
    }

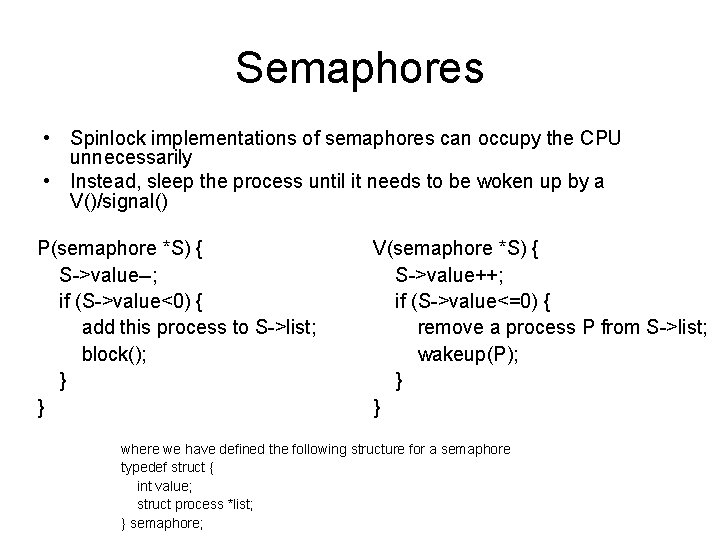

Semaphores
• Spinlock implementations of semaphores can occupy the CPU unnecessarily
• Instead, sleep the process until it needs to be woken up by a V()/signal()

    P(semaphore *S) {
      S->value--;
      if (S->value<0) {
        add this process to S->list;
        block();
      }
    }

    V(semaphore *S) {
      S->value++;
      if (S->value<=0) {
        remove a process P from S->list;
        wakeup(P);
      }
    }

  where we have defined the following structure for a semaphore

    typedef struct {
      int value;
      struct process *list;
    } semaphore;

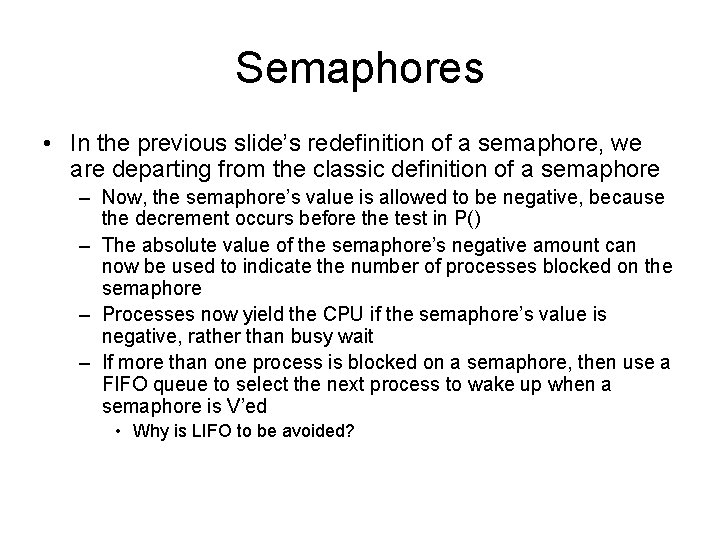

Semaphores • In the previous slide’s redefinition of a semaphore, we are departing from the classic definition of a semaphore – Now, the semaphore’s value is allowed to be negative, because the decrement occurs before the test in P() – The absolute value of the semaphore’s negative amount can now be used to indicate the number of processes blocked on the semaphore – Processes now yield the CPU if the semaphore’s value is negative, rather than busy wait – If more than one process is blocked on a semaphore, then use a FIFO queue to select the next process to wake up when a semaphore is V’ed • Why is LIFO to be avoided?
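A minimal sketch of the blocking semaphore described above, written in Python for illustration. The class name `BlockingSemaphore`, the internal `_guard` lock (which stands in for whatever makes P() and V() atomic), and the use of `threading.Event` for block()/wakeup() are all assumptions, not part of the slides. The value goes negative, and its absolute value equals the number of blocked callers, which are woken in FIFO order.

```python
import threading
import time
from collections import deque

class BlockingSemaphore:
    def __init__(self, value=1):
        self.value = value
        self._waiting = deque()           # S->list, kept in FIFO order
        self._guard = threading.Lock()    # makes P() and V() atomic

    def P(self):
        with self._guard:
            self.value -= 1               # decrement before the test
            if self.value >= 0:
                return
            ticket = threading.Event()
            self._waiting.append(ticket)  # add this caller to S->list
        ticket.wait()                     # block(), outside the guard

    def V(self):
        with self._guard:
            self.value += 1
            if self.value <= 0:
                self._waiting.popleft().set()   # wakeup(P), oldest waiter first

def demo():
    s = BlockingSemaphore(1)
    s.P()                                 # main thread holds the semaphore
    order = []

    def worker(name):
        s.P()
        order.append(name)
        s.V()

    threads = []
    for i, name in enumerate(["A", "B"], start=1):
        t = threading.Thread(target=worker, args=(name,))
        t.start()
        while s.value != -i:              # wait until this worker is queued
            time.sleep(0.001)
        threads.append(t)
    s.V()                                 # wakes A, which then wakes B
    for t in threads:
        t.join()
    return order, s.value

print(demo())                             # (['A', 'B'], 1)
```

With a LIFO list instead of the FIFO `deque`, a steady stream of new arrivals could keep the oldest waiter at the bottom indefinitely, which is one answer to the question on the slide.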

Deadlock
• Semaphores provide synchronization, but can introduce more complicated higher-level problems like deadlock
  – two processes deadlock when each wants a resource that has been locked by the other process
  – e.g. P1 wants resource R2 locked by process P2 with semaphore S2, while P2 wants resource R1 locked by process P1 with semaphore S1

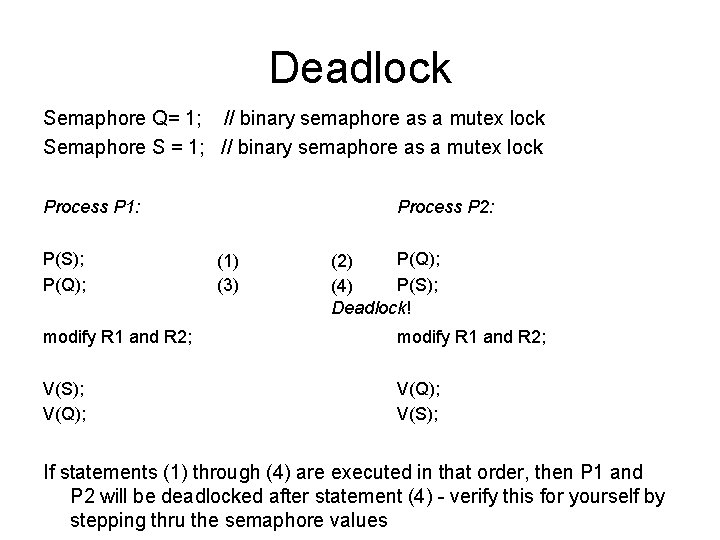

Deadlock

    Semaphore Q = 1;  // binary semaphore as a mutex lock
    Semaphore S = 1;  // binary semaphore as a mutex lock

    Process P1:                  Process P2:
      P(S);   (1)                  P(Q);   (2)
      P(Q);   (3)                  P(S);   (4)  Deadlock!
      modify R1 and R2;            modify R1 and R2;
      V(S);                        V(Q);
      V(Q);                        V(S);

If statements (1) through (4) are executed in that order, then P1 and P2 will be deadlocked after statement (4): P1 blocks at (3) because P2 holds Q, and P2 blocks at (4) because P1 holds S. Verify this for yourself by stepping through the semaphore values.

Deadlock • In the previous example, – Each process will sleep on the other process’s semaphore – the V() signalling statements will never get executed, so there is no way to wake up the two processes from within those two processes – there is no rule prohibiting an application programmer from P()’ing Q before S, or vice versa - the application programmer won’t have enough information to decide on the proper order – in general, with N processes sharing N semaphores, the potential for deadlock grows
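The interleaving (1) through (4) can be forced deterministically in a small sketch. This is an illustration only: it assumes Python's `threading.Semaphore` for S and Q, uses barriers to pin down the order of statements, and uses a timeout on the second P() so the demonstration terminates instead of hanging. The function names are hypothetical. The second function shows that when both processes P() the semaphores in the same order, both finish.

```python
import threading

def opposite_order_demo(timeout=0.2):
    """P1 does P(S) then P(Q); P2 does P(Q) then P(S). Neither gets its second lock."""
    S = threading.Semaphore(1)
    Q = threading.Semaphore(1)
    both_hold_one = threading.Barrier(2)
    both_tried = threading.Barrier(2)
    got_second = {}

    def process(name, first, second):
        first.acquire()                   # statements (1) and (2)
        both_hold_one.wait()              # now each process holds one semaphore
        # Statements (3) and (4): would block forever without the timeout.
        got_second[name] = second.acquire(timeout=timeout)
        both_tried.wait()                 # keep holding 'first' until the peer has tried
        if got_second[name]:
            second.release()
        first.release()

    t1 = threading.Thread(target=process, args=("P1", S, Q))
    t2 = threading.Thread(target=process, args=("P2", Q, S))
    t1.start(); t2.start()
    t1.join(); t2.join()
    return got_second

def same_order_demo():
    """Both processes P(S) before P(Q), so a circular wait cannot form."""
    S = threading.Semaphore(1)
    Q = threading.Semaphore(1)
    done = []

    def process(name):
        S.acquire()
        Q.acquire()
        done.append(name)                 # modify R1 and R2
        Q.release()
        S.release()

    threads = [threading.Thread(target=process, args=(n,)) for n in ("P1", "P2")]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(done)

print(opposite_order_demo())              # both values are False
print(same_order_demo())                  # ['P1', 'P2']
```

Agreeing on a single global acquisition order is one common convention for avoiding this kind of deadlock; the slides note that an application programmer may not always have enough information to choose one.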

Deadlock
• Other examples:
  – A programmer mistakenly follows a P() with a second P() instead of a V(), e.g.

        P(mutex)
        critical section
        P(mutex)    <---- this causes a deadlock, should have been a V()

  – A programmer forgets and omits the P(mutex) or V(mutex). Can cause deadlock if V(mutex) is omitted. Can violate mutual exclusion if P(mutex) is omitted.
  – A programmer reverses the order of P() and V(), e.g.

        V(mutex)
        critical section
        P(mutex)    <---- this violates mutual exclusion, but is not an example of deadlock

Classic Synchronization Problems • Bounded Buffer Producer-Consumer Problem • Readers-Writers Problem – First Readers Problem • Dining Philosophers Problem • These are not just abstract problems – They are representative of several classes of synchronization problems commonly encountered when trying to synchronize access to shared resources among multiple processes

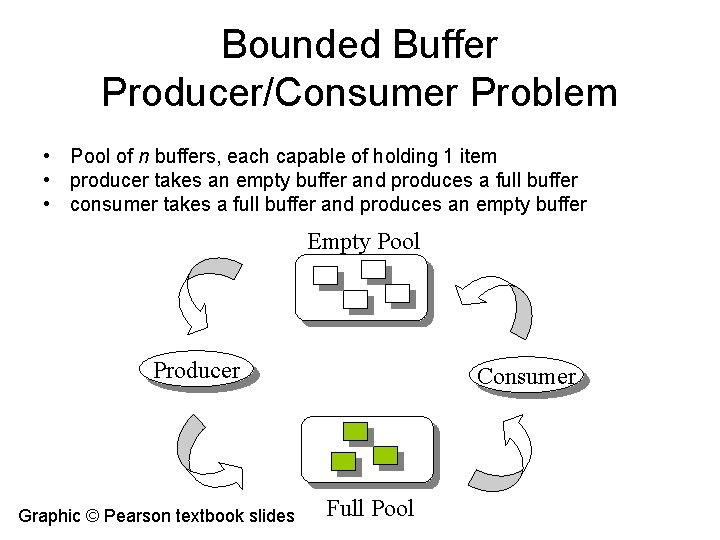

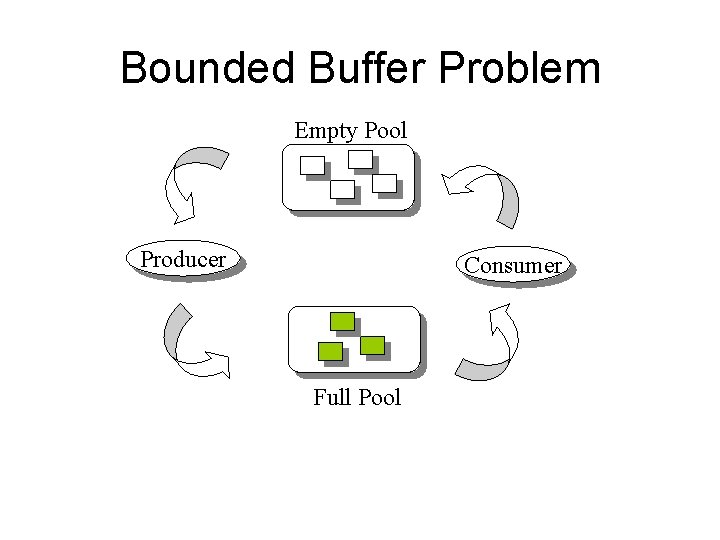

Bounded Buffer Producer/Consumer Problem
• Pool of n buffers, each capable of holding 1 item
• producer takes an empty buffer and produces a full buffer
• consumer takes a full buffer and produces an empty buffer
[Figure: Producer and Consumer moving buffers between the Empty Pool and the Full Pool. Graphic © Pearson textbook slides]

Bounded Buffer Producer/Consumer Problem
• Synchronization setup:
  – Use a mutex semaphore to protect access to buffer manipulation, mutex_init = 1
  – Use two counting semaphores full and empty to keep track of the number of full and empty buffers, where the values of full + empty = n
    • full_init = 0
    • empty_init = n

Bounded Buffer Producer/Consumer Problem
• Why do we need counting semaphores? Why do we need two of them? Consider the following:

    Producer:
    while(1) {
      // need code here to keep track of number of empty buffers
      P(mutex)
      obtain empty buffer and add next item, creating a full buffer
      V(mutex)
      ...
    }

    Consumer:
    while(1) {
      ...
      P(mutex)
      remove item from full buffer, create an empty buffer
      V(mutex)
      ...
    }

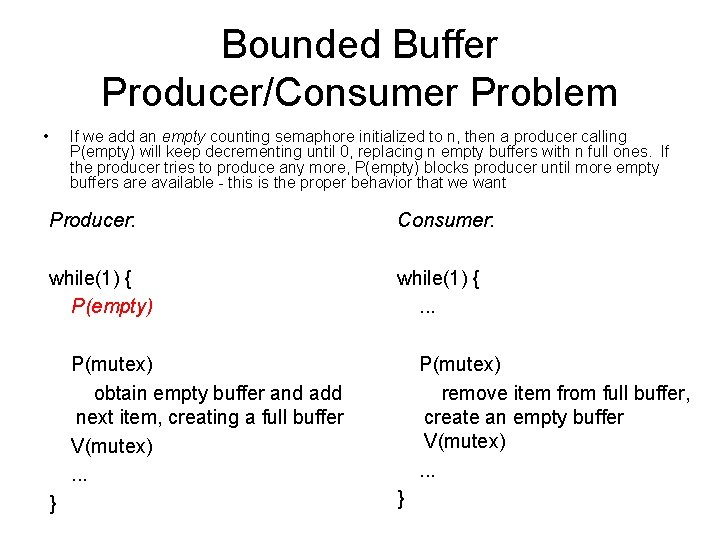

Bounded Buffer Producer/Consumer Problem
• If we add an empty counting semaphore initialized to n, then a producer calling P(empty) will keep decrementing until 0, replacing n empty buffers with n full ones. If the producer tries to produce any more, P(empty) blocks the producer until more empty buffers are available. This is the behavior we want.

    Producer:
    while(1) {
      P(empty)
      P(mutex)
      obtain empty buffer and add next item, creating a full buffer
      V(mutex)
      ...
    }

    Consumer:
    while(1) {
      ...
      P(mutex)
      remove item from full buffer, create an empty buffer
      V(mutex)
      ...
    }

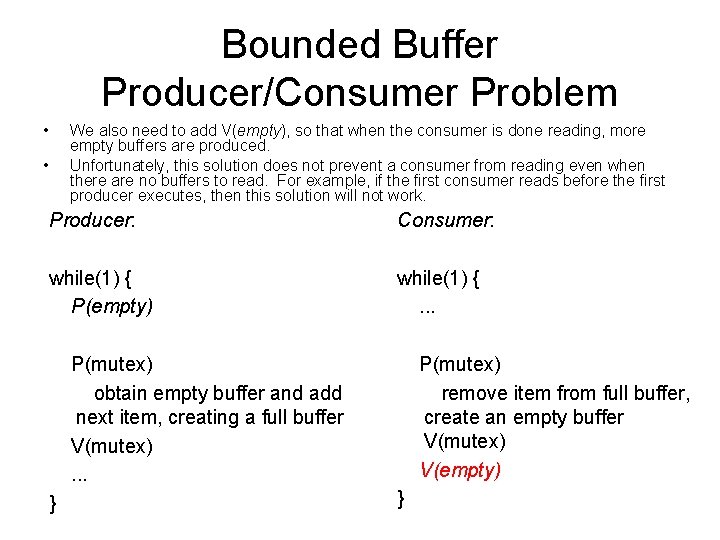

Bounded Buffer Producer/Consumer Problem
• We also need to add V(empty), so that when the consumer is done reading, more empty buffers are produced.
• Unfortunately, this solution does not prevent a consumer from reading even when there are no buffers to read. For example, if the first consumer reads before the first producer executes, then this solution will not work.

    Producer:
    while(1) {
      P(empty)
      P(mutex)
      obtain empty buffer and add next item, creating a full buffer
      V(mutex)
      ...
    }

    Consumer:
    while(1) {
      ...
      P(mutex)
      remove item from full buffer, create an empty buffer
      V(mutex)
      V(empty)
    }

Bounded Buffer Producer/Consumer Problem
• So add a second counting semaphore full, initially set to 0
• Verify for yourself that this solution won’t deadlock, synchronizes properly with mutual exclusion, prevents a producer from writing to a full buffer, and prevents a consumer from reading from an empty buffer

    Producer:
    while(1) {
      P(empty)
      P(mutex)
      obtain empty buffer and add next item, creating a full buffer
      V(mutex)
      V(full)
    }

    Consumer:
    while(1) {
      P(full)
      P(mutex)
      remove item from full buffer, create an empty buffer
      V(mutex)
      V(empty)
    }
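The final producer/consumer structure above can be run as a sketch. It assumes Python's `threading.Semaphore` for mutex, empty, and full, and models the buffer pool as a `deque`; the function name `run_bounded_buffer` and the `max_fill` bookkeeping are illustrative additions used only to check that the buffer never holds more than n items.

```python
import threading
from collections import deque

def run_bounded_buffer(n_slots=3, n_items=20):
    mutex = threading.Semaphore(1)        # mutex_init = 1
    empty = threading.Semaphore(n_slots)  # empty_init = n
    full = threading.Semaphore(0)         # full_init = 0
    buffer = deque()
    consumed = []
    max_fill = 0

    def producer():
        nonlocal max_fill
        for item in range(n_items):
            empty.acquire()               # P(empty): wait for an empty buffer
            mutex.acquire()               # P(mutex)
            buffer.append(item)           # fill a buffer
            max_fill = max(max_fill, len(buffer))
            mutex.release()               # V(mutex)
            full.release()                # V(full): one more full buffer

    def consumer():
        for _ in range(n_items):
            full.acquire()                # P(full): wait for a full buffer
            mutex.acquire()               # P(mutex)
            item = buffer.popleft()       # empty a buffer
            mutex.release()               # V(mutex)
            empty.release()               # V(empty): one more empty buffer
            consumed.append(item)

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return consumed, max_fill

items, peak = run_bounded_buffer()
print(items == list(range(20)), peak <= 3)   # True True
```

Note that each side waits on its counting semaphore before taking mutex; reversing that order lets a process block while holding mutex.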

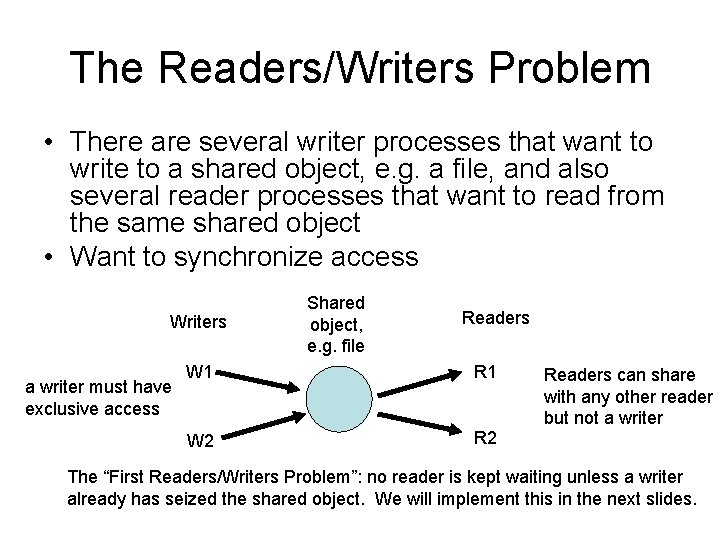

The Readers/Writers Problem
• There are several writer processes that want to write to a shared object, e.g. a file, and also several reader processes that want to read from the same shared object
• Want to synchronize access
  – a writer must have exclusive access
  – readers can share with any other reader but not a writer
• The “First Readers/Writers Problem”: no reader is kept waiting unless a writer has already seized the shared object. We will implement this in the next slides.
[Figure: writers W1, W2 and reader R1 accessing a shared object, e.g. a file]

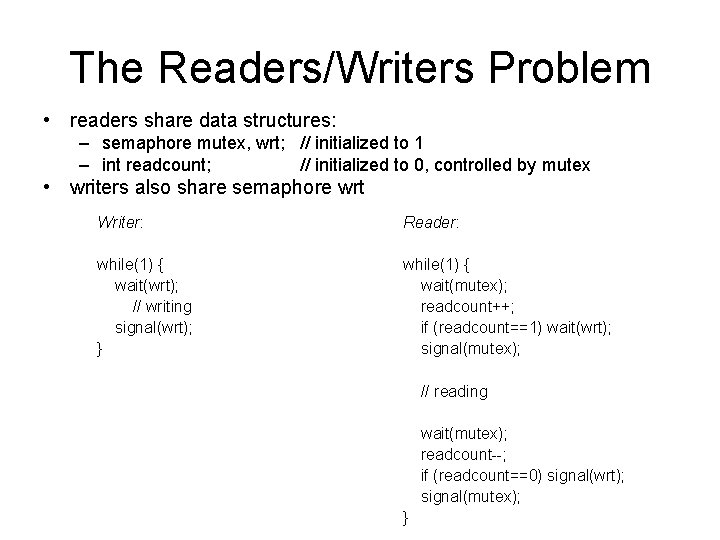

The Readers/Writers Problem
• readers share data structures:
  – semaphore mutex, wrt; // initialized to 1
  – int readcount; // initialized to 0, controlled by mutex
• writers also share semaphore wrt

    Writer:
    while(1) {
      wait(wrt);
      // writing
      signal(wrt);
    }

    Reader:
    while(1) {
      wait(mutex);
      readcount++;
      if (readcount==1) wait(wrt);
      signal(mutex);
      // reading
      wait(mutex);
      readcount--;
      if (readcount==0) signal(wrt);
      signal(mutex);
    }

The Readers/Writers Problem
• If multiple writers seek to write, then the write semaphore wrt provides mutual exclusion
• If the 1st reader tries to read while a writer is writing, then the 1st reader blocks on wrt
  – if subsequent readers try to read while a writer is writing, they block on mutex
• If the 1st reader reads and there are no writers, then the 1st reader grabs the write lock and continues reading; the last reader to finish eventually releases the write lock
  – if a writer tries to write while the 1st reader is reading, then the writer blocks on the write lock wrt
  – if a 2nd or any subsequent reader tries to read while the 1st reader is reading, then it falls through and is allowed to read
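The cases above can be checked with a short sketch of the first readers/writers solution. It assumes Python's `threading.Semaphore` for mutex and wrt; the class and method names are hypothetical. The usage lines run in a single thread and use a non-blocking `acquire` to probe whether a writer could get in.

```python
import threading

class ReadersWriters:
    def __init__(self):
        self.mutex = threading.Semaphore(1)   # protects readcount
        self.wrt = threading.Semaphore(1)     # the write lock
        self.readcount = 0

    def start_read(self):
        self.mutex.acquire()                  # wait(mutex)
        self.readcount += 1
        if self.readcount == 1:
            self.wrt.acquire()                # 1st reader grabs the write lock
        self.mutex.release()                  # signal(mutex)

    def end_read(self):
        self.mutex.acquire()
        self.readcount -= 1
        if self.readcount == 0:
            self.wrt.release()                # last reader releases the write lock
        self.mutex.release()

    def start_write(self):
        self.wrt.acquire()                    # wait(wrt)

    def end_write(self):
        self.wrt.release()                    # signal(wrt)

rw = ReadersWriters()
rw.start_read()                               # 1st reader takes wrt
rw.start_read()                               # 2nd reader falls through
print(rw.wrt.acquire(blocking=False))         # False: a writer would have to wait
rw.end_read()
rw.end_read()                                 # last reader out releases wrt
print(rw.wrt.acquire(blocking=False))         # True: a writer can now proceed
```

Because readers keep falling through while any reader is active, a continuous stream of readers can hold wrt indefinitely.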


Synchronization
• Suppose we have the following sequence of interleaving, where the brackets [value] denote the local value of counter in either the producer’s or the consumer’s process. Let counter = 5 initially.

    // counter++                       // counter--
    (1) reg1 = counter;     [5]        (2) reg2 = counter;     [5]
    (3) reg1 = reg1 + 1;    [6]        (4) reg2 = reg2 - 1;    [4]
    (5) counter = reg1;                (6) counter = reg2;

• At the end, counter = 4. But if steps (5) and (6) were reversed, then counter = 6!
• The value of the shared variable counter changes depending upon an arbitrary order of writes, which is very undesirable
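The interleaving above can be replayed step by step. The sketch below is a plain single-threaded simulation (no real concurrency): the hypothetical function `run_interleaving` executes the six numbered steps in whatever order it is given and returns the final value of counter.

```python
def run_interleaving(order, counter=5):
    """Execute steps (1)..(6) from the slide in the given order; return final counter."""
    state = {"counter": counter, "reg1": None, "reg2": None}

    def step(n):
        if n == 1:
            state["reg1"] = state["counter"]      # (1) reg1 = counter
        elif n == 3:
            state["reg1"] = state["reg1"] + 1     # (3) reg1 = reg1 + 1
        elif n == 5:
            state["counter"] = state["reg1"]      # (5) counter = reg1
        elif n == 2:
            state["reg2"] = state["counter"]      # (2) reg2 = counter
        elif n == 4:
            state["reg2"] = state["reg2"] - 1     # (4) reg2 = reg2 - 1
        elif n == 6:
            state["counter"] = state["reg2"]      # (6) counter = reg2
        else:
            raise ValueError(f"unknown step {n}")

    for n in order:
        step(n)
    return state["counter"]

print(run_interleaving([1, 2, 3, 4, 5, 6]))   # 4: the interleaving on the slide
print(run_interleaving([1, 2, 3, 4, 6, 5]))   # 6: steps (5) and (6) reversed
print(run_interleaving([1, 3, 5, 2, 4, 6]))   # 5: no interleaving, the intended result
```

Only the third ordering, where counter++ completes before counter-- begins, gives the value 5 that one increment and one decrement should produce.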

Critical Section
• Some kernel data structures could be subject to race conditions, e.g. access to the list of open files
• Kernel developer must ensure that no such race conditions occur
• User or kernel developer identifies critical sections in code where each process accesses shared variables
  – access to critical sections is controlled by special entry and exit code

    while(1) {
      entry section
      critical section (manipulate common vars)
      exit section
      remainder code
    }

Critical Section
• Critical section access should satisfy multiple properties
  – mutual exclusion
    • if process Pi is executing in its critical section, then no other processes can be executing in their critical sections
  – progress
    • if no process is executing in its critical section and some processes wish to enter their critical sections, then only those processes that are not executing in their remainder sections can participate in the decision on which will enter its critical section next
    • this selection cannot be postponed indefinitely
  – bounded waiting
    • there exists a bound, or limit, on the number of times other processes can enter their critical sections after a process X has made a request to enter its critical section and before that request is granted

Critical Section • How do we protect access to critical sections? – want to prevent another process from executing while current process is modifying this variable - mutual exclusion – disable interrupts • before entering critical section, disable interrupts • after exiting critical section, reenable interrupts • This provides mutual exclusion

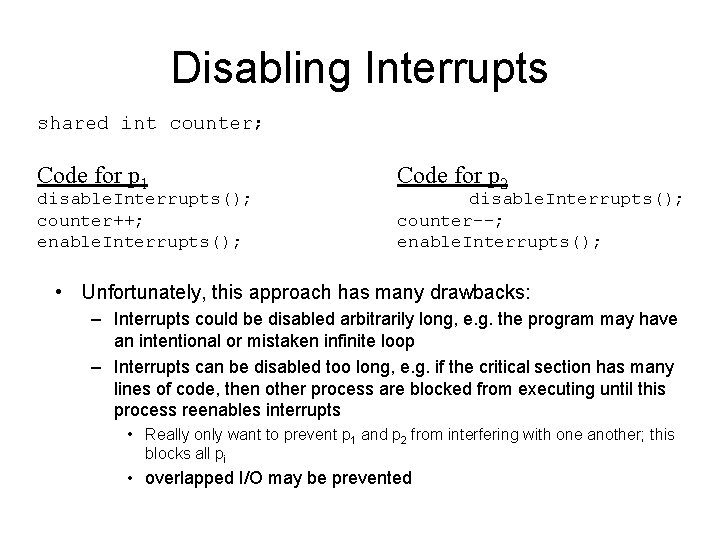

Disabling Interrupts

    shared int counter;

    Code for p1:
      disableInterrupts();
      counter++;
      enableInterrupts();

    Code for p2:
      disableInterrupts();
      counter--;
      enableInterrupts();

• Unfortunately, this approach has many drawbacks:
  – Interrupts could be disabled arbitrarily long, e.g. the program may have an intentional or mistaken infinite loop
  – Interrupts can be disabled too long, e.g. if the critical section has many lines of code, then other processes are blocked from executing until this process reenables interrupts
    • Really only want to prevent p1 and p2 from interfering with one another; this blocks all pi
    • overlapped I/O may be prevented

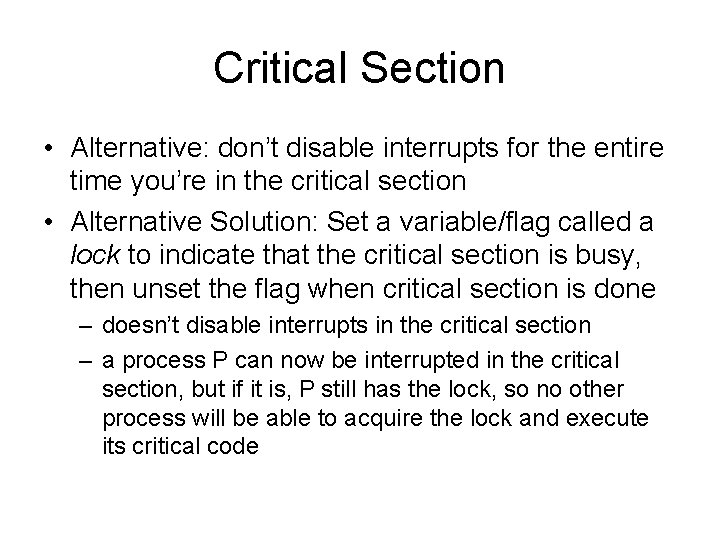

Critical Section • Alternative: don’t disable interrupts for the entire time you’re in the critical section • Alternative Solution: Set a variable/flag called a lock to indicate that the critical section is busy, then unset the flag when critical section is done – doesn’t disable interrupts in the critical section – a process P can now be interrupted in the critical section, but if it is, P still has the lock, so no other process will be able to acquire the lock and execute its critical code

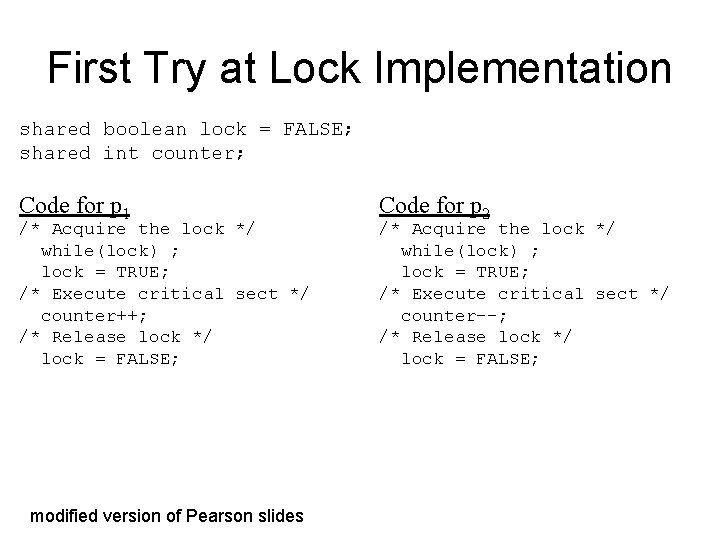

First Try at Lock Implementation

    shared boolean lock = FALSE;
    shared int counter;

    Code for p1:
      /* Acquire the lock */
      while(lock) ;
      lock = TRUE;
      /* Execute critical sect */
      counter++;
      /* Release lock */
      lock = FALSE;

    Code for p2:
      /* Acquire the lock */
      while(lock) ;
      lock = TRUE;
      /* Execute critical sect */
      counter--;
      /* Release lock */
      lock = FALSE;

(modified version of Pearson slides)

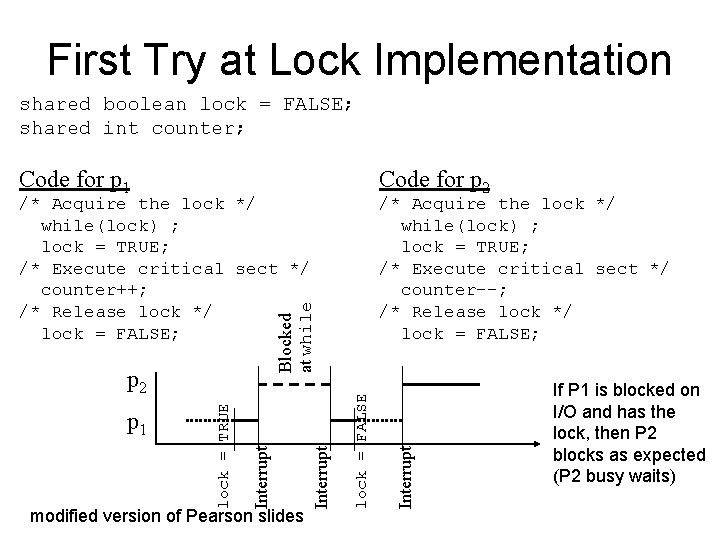

First Try at Lock Implementation
(same shared variables and code for p1 and p2 as on the previous slide; modified version of Pearson slides)
[Figure: timeline of p1 and p2. p1 is interrupted while lock = TRUE, and p2 is blocked at its while loop until a later interrupt after lock = FALSE]
If P1 is blocked on I/O and has the lock, then P2 blocks as expected (P2 busy waits)

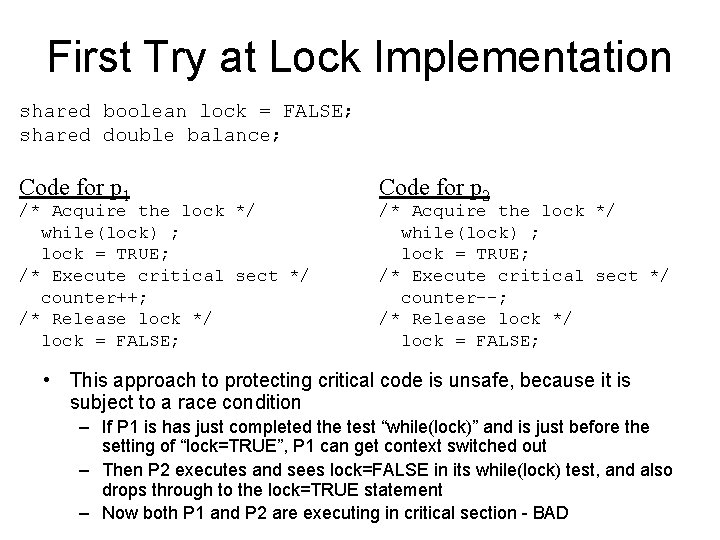

First Try at Lock Implementation

    shared boolean lock = FALSE;
    shared int counter;

    Code for p1:
      /* Acquire the lock */
      while(lock) ;
      lock = TRUE;
      /* Execute critical sect */
      counter++;
      /* Release lock */
      lock = FALSE;

    Code for p2:
      /* Acquire the lock */
      while(lock) ;
      lock = TRUE;
      /* Execute critical sect */
      counter--;
      /* Release lock */
      lock = FALSE;

• This approach to protecting critical code is unsafe, because it is subject to a race condition
  – If P1 has just completed the test “while(lock)” and has not yet executed “lock = TRUE”, P1 can get context switched out
  – Then P2 executes and sees lock = FALSE in its while(lock) test, and also drops through to the lock = TRUE statement
  – Now both P1 and P2 are executing in the critical section - BAD

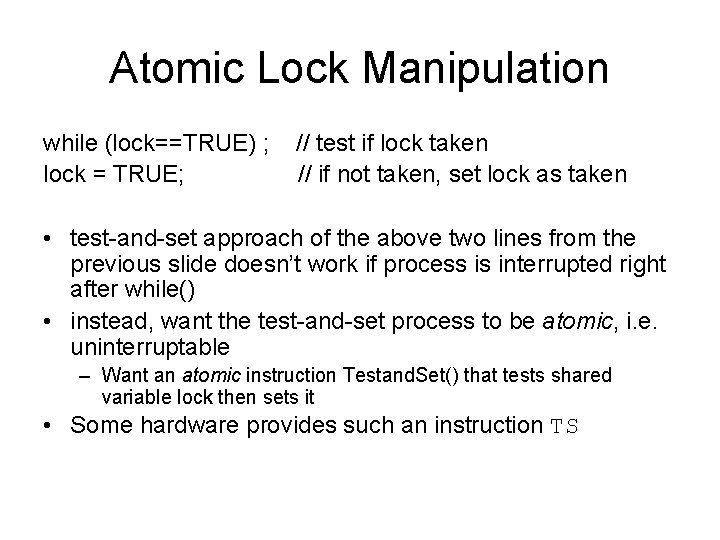

Atomic Lock Manipulation

    while (lock==TRUE) ;   // test if lock taken
    lock = TRUE;           // if not taken, set lock as taken

• the test-then-set approach of the above two lines from the previous slide doesn’t work if the process is interrupted right after while()
• instead, want the test-and-set process to be atomic, i.e. uninterruptible
  – Want an atomic instruction TestandSet() that tests the shared variable lock and then sets it
• Some hardware provides such an instruction, TS

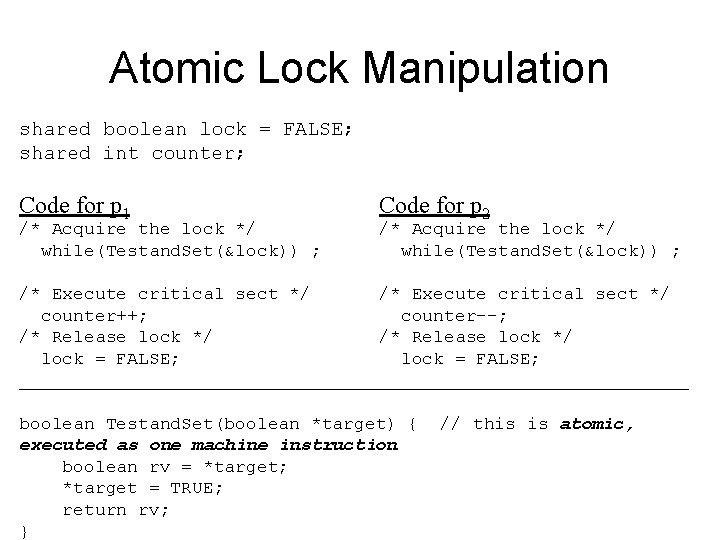

Atomic Lock Manipulation

    shared boolean lock = FALSE;
    shared int counter;

    Code for p1:
      /* Acquire the lock */
      while(TestandSet(&lock)) ;
      /* Execute critical sect */
      counter++;
      /* Release lock */
      lock = FALSE;

    Code for p2:
      /* Acquire the lock */
      while(TestandSet(&lock)) ;
      /* Execute critical sect */
      counter--;
      /* Release lock */
      lock = FALSE;

    // this is atomic, executed as one machine instruction
    boolean TestandSet(boolean *target) {
      boolean rv = *target;
      *target = TRUE;
      return rv;
    }

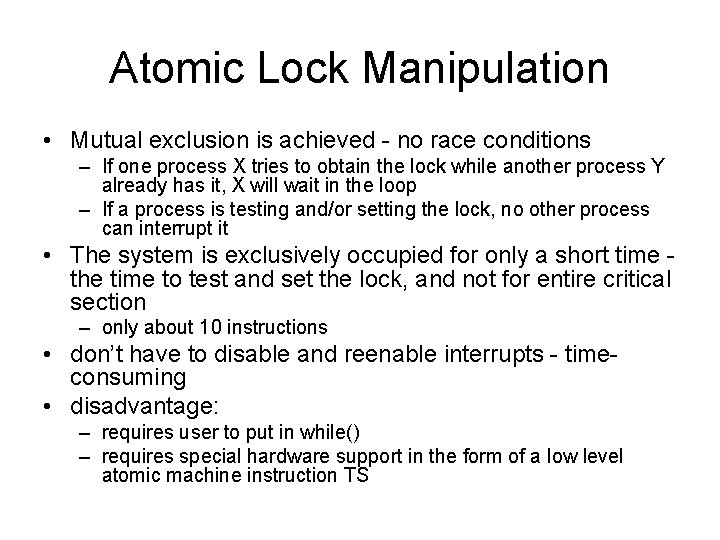

Atomic Lock Manipulation
• Mutual exclusion is achieved - no race conditions
  – If one process X tries to obtain the lock while another process Y already has it, X will wait in the loop
  – If a process is testing and/or setting the lock, no other process can interrupt it
• The system is exclusively occupied for only a short time (the time to test and set the lock), and not for the entire critical section
  – only about 10 instructions
  – don’t have to disable and reenable interrupts, which is time-consuming
• Disadvantages:
  – requires the user to put in the while() loop
  – requires special hardware support in the form of a low-level atomic machine instruction TS
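A minimal sketch of a test-and-set spinlock follows. Python has no TS instruction, so the hypothetical class `AtomicFlag` emulates the hardware's atomicity with an internal `threading.Lock`; that emulation, the class and function names, and the `time.sleep(0)` calls (used to yield while spinning and to invite a context switch inside the critical section) are all assumptions made for illustration.

```python
import threading
import time

class AtomicFlag:
    """Emulates a memory cell with an atomic test-and-set operation."""
    def __init__(self):
        self.value = False
        self._hw = threading.Lock()       # stands in for hardware atomicity

    def test_and_set(self):
        with self._hw:                    # read and write happen as one step
            old = self.value
            self.value = True
            return old

    def clear(self):
        with self._hw:
            self.value = False            # lock = FALSE

def run_counter(n_threads=2, n_iters=200):
    lock = AtomicFlag()
    counter = 0

    def worker():
        nonlocal counter
        for _ in range(n_iters):
            while lock.test_and_set():    # spin until the old value was FALSE
                time.sleep(0)             # yield the CPU while busy waiting
            tmp = counter                 # critical section: a deliberately
            time.sleep(0)                 # non-atomic read-modify-write
            counter = tmp + 1
            lock.clear()                  # release the lock

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter

print(run_counter())                      # 400
```

Replacing `test_and_set()` with a separate read followed by a write would reintroduce the race from the first lock attempt, and updates to `counter` could be lost.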

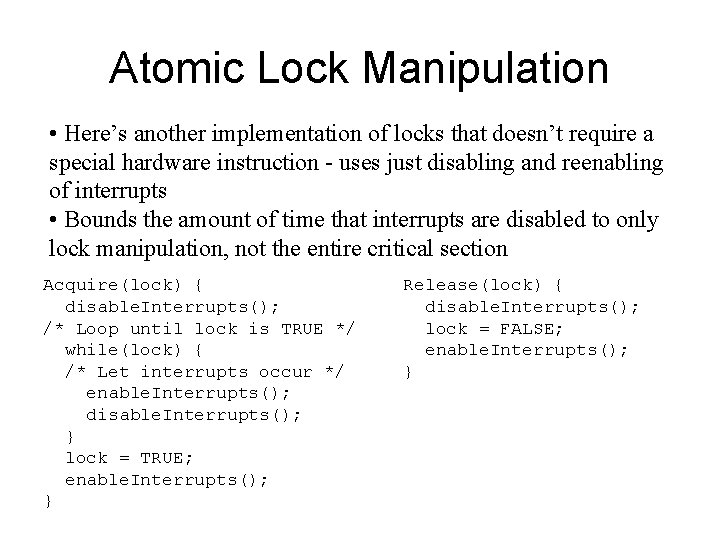

Atomic Lock Manipulation
• Here’s another implementation of locks that doesn’t require a special hardware instruction; it uses just disabling and reenabling of interrupts
• Bounds the amount of time that interrupts are disabled to only lock manipulation, not the entire critical section

    Acquire(lock) {
      disableInterrupts();
      /* Loop while lock is TRUE, i.e. until it becomes FALSE */
      while(lock) {
        /* Let interrupts occur */
        enableInterrupts();
        disableInterrupts();
      }
      lock = TRUE;
      enableInterrupts();
    }

    Release(lock) {
      disableInterrupts();
      lock = FALSE;
      enableInterrupts();
    }

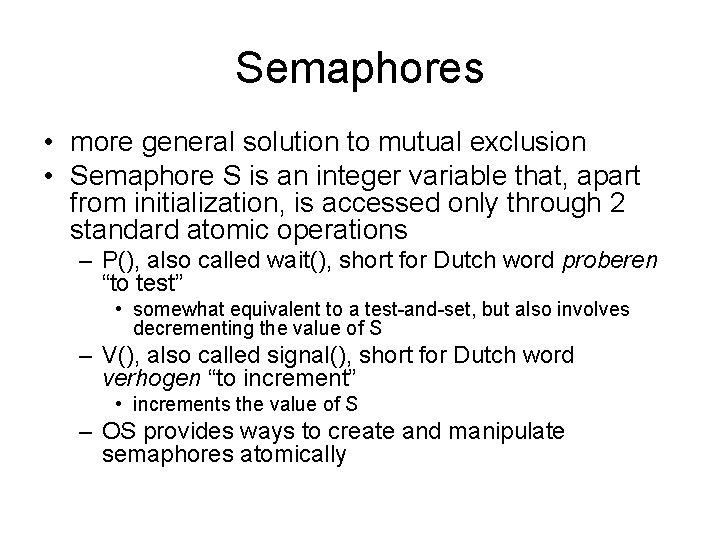

Semaphores • more general solution to mutual exclusion • Semaphore S is an integer variable that, apart from initialization, is accessed only through 2 standard atomic operations – P(), also called wait(), short for Dutch word proberen “to test” • somewhat equivalent to a test-and-set, but also involves decrementing the value of S – V(), also called signal(), short for Dutch word verhogen “to increment” • increments the value of S – OS provides ways to create and manipulate semaphores atomically

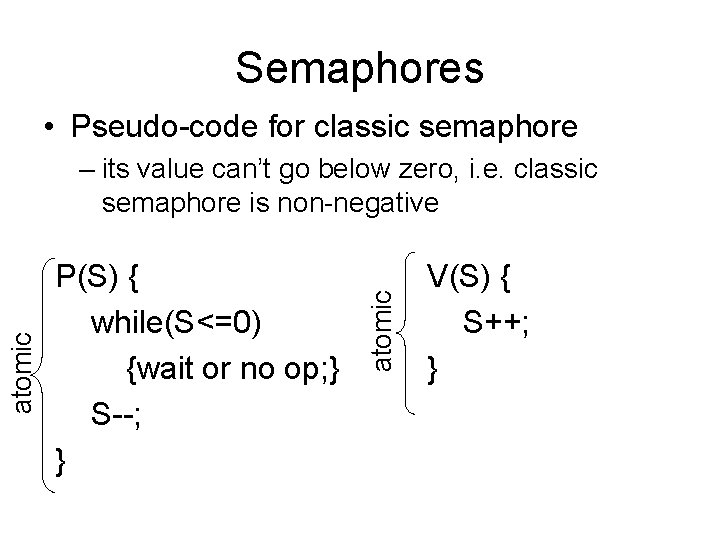

Semaphores
• Pseudo-code for classic semaphore

    P(S) {                      // atomic
      while(S<=0) { wait or no-op; }
      S--;
    }

    V(S) {                      // atomic
      S++;
    }

  – its value can’t go below zero, i.e. the classic semaphore is non-negative

Semaphores
• Here’s an intuitive implementation of semaphores using only disabling and reenabling of interrupts
  – See the text for an example of implementing semaphores using TestandSet() instructions

    P(S) {
      disableInterrupts();
      while(S==0) {
        enableInt();
        disableInt();
      }
      S--;
      enableInt();
    }

    V(S) {
      disableInt();
      S++;
      enableInt();
    }

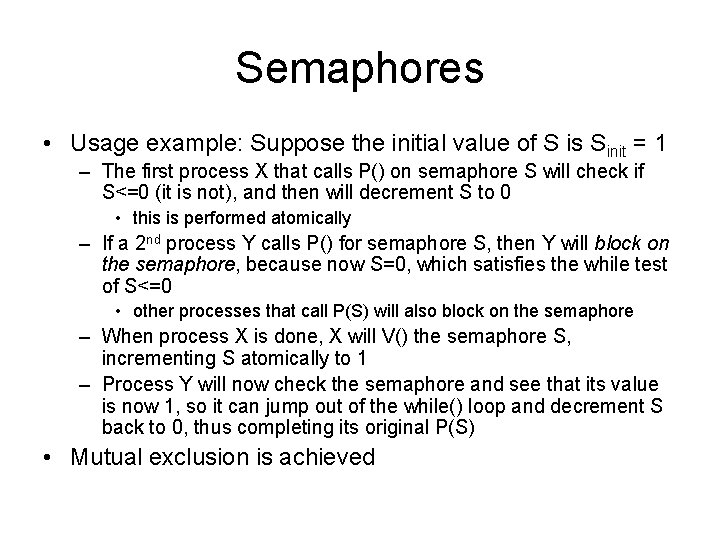

Semaphores
• Usage example: Suppose the initial value of S is S_init = 1
  – The first process X that calls P() on semaphore S will check if S<=0 (it is not), and then will decrement S to 0
    • this is performed atomically
  – If a 2nd process Y calls P() for semaphore S, then Y will block on the semaphore, because now S=0, which satisfies the while test of S<=0
    • other processes that call P(S) will also block on the semaphore
  – When process X is done, X will V() the semaphore S, incrementing S atomically to 1
  – Process Y will now check the semaphore and see that its value is now 1, so it can jump out of the while() loop and decrement S back to 0, thus completing its original P(S)
• Mutual exclusion is achieved

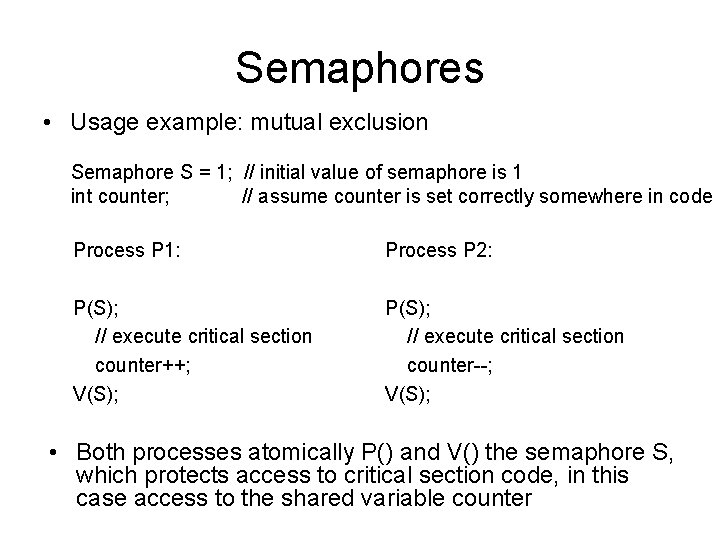

Semaphores
• Usage example: mutual exclusion

    Semaphore S = 1;  // initial value of semaphore is 1
    int counter;      // assume counter is set correctly somewhere in code

    Process P1:
      P(S);
      // execute critical section
      counter++;
      V(S);

    Process P2:
      P(S);
      // execute critical section
      counter--;
      V(S);

• Both processes atomically P() and V() the semaphore S, which protects access to critical section code, in this case access to the shared variable counter

Semaphores • The previous example showed how to use the semaphore as a binary semaphore – its initial value was set to 1 – a binary semaphore is also called a mutex lock, i. e. it can be used to provide mutual exclusion on some piece of critical code • A semaphore can also be used more generally as a counting semaphore – its initial value is n, e. g. n=10 – the value of the semaphore is used to store the value of a shared variable, or is used to keep track of the number of processes allowed to access some shared resource
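The second use of a counting semaphore mentioned above, limiting how many processes may access a shared resource at once, can be sketched as follows. The example assumes Python's `threading.Semaphore`; the function name `run_limited`, the `guard` lock, and the `peak` bookkeeping are illustrative additions that only measure how many workers were inside at the same time.

```python
import threading
import time

def run_limited(n_allowed=3, n_workers=8):
    slots = threading.Semaphore(n_allowed)   # counting semaphore, initial value n
    guard = threading.Lock()                 # protects the bookkeeping below
    inside = 0
    peak = 0

    def worker():
        nonlocal inside, peak
        slots.acquire()                      # P(): take one of the n slots
        with guard:
            inside += 1
            peak = max(peak, inside)
        time.sleep(0.01)                     # use the shared resource
        with guard:
            inside -= 1
        slots.release()                      # V(): give the slot back

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return peak

print(run_limited() <= 3)                    # True
```

With `n_allowed=1` the same code degenerates to the binary semaphore (mutex) case from the previous example.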

Bounded Buffer Problem
[Figure: Producer and Consumer moving buffers between the Empty Pool and the Full Pool]

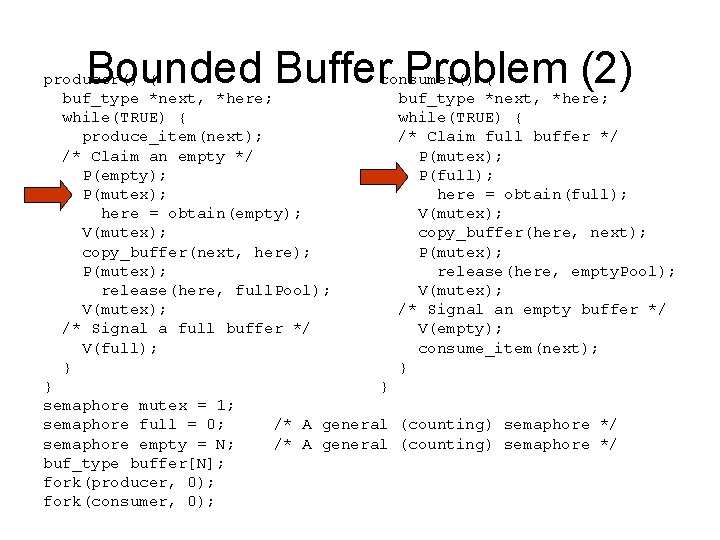

Bounded Buffer Problem (2)

    producer() {
      buf_type *next, *here;
      while(TRUE) {
        produce_item(next);
        /* Claim an empty */
        P(empty);
        P(mutex);
          here = obtain(empty);
        V(mutex);
        copy_buffer(next, here);
        P(mutex);
          release(here, fullPool);
        V(mutex);
        /* Signal a full buffer */
        V(full);
      }
    }

    consumer() {
      buf_type *next, *here;
      while(TRUE) {
        /* Claim full buffer */
        P(mutex);
        P(full);
          here = obtain(full);
        V(mutex);
        copy_buffer(here, next);
        P(mutex);
          release(here, emptyPool);
        V(mutex);
        /* Signal an empty buffer */
        V(empty);
        consume_item(next);
      }
    }

    semaphore mutex = 1;
    semaphore full = 0;    /* A general (counting) semaphore */
    semaphore empty = N;   /* A general (counting) semaphore */
    buf_type buffer[N];
    fork(producer, 0);
    fork(consumer, 0);

Note that the consumer here takes mutex before full. If no buffer is full, the consumer blocks on full while holding mutex, and the producer can then never obtain mutex to fill one. Compare the order used in Bounded Buffer Problem (3).

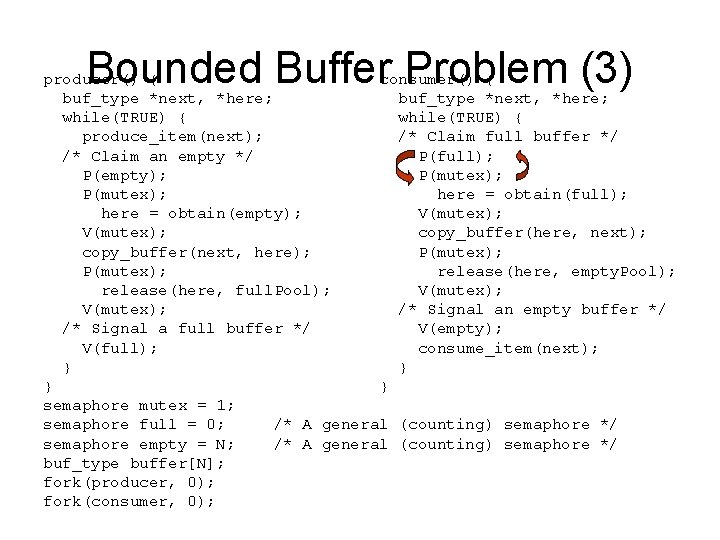

Bounded Buffer Problem (3)

    producer() {
      buf_type *next, *here;
      while(TRUE) {
        produce_item(next);
        /* Claim an empty */
        P(empty);
        P(mutex);
          here = obtain(empty);
        V(mutex);
        copy_buffer(next, here);
        P(mutex);
          release(here, fullPool);
        V(mutex);
        /* Signal a full buffer */
        V(full);
      }
    }

    consumer() {
      buf_type *next, *here;
      while(TRUE) {
        /* Claim full buffer */
        P(full);
        P(mutex);
          here = obtain(full);
        V(mutex);
        copy_buffer(here, next);
        P(mutex);
          release(here, emptyPool);
        V(mutex);
        /* Signal an empty buffer */
        V(empty);
        consume_item(next);
      }
    }

    semaphore mutex = 1;
    semaphore full = 0;    /* A general (counting) semaphore */
    semaphore empty = N;   /* A general (counting) semaphore */
    buf_type buffer[N];
    fork(producer, 0);
    fork(consumer, 0);

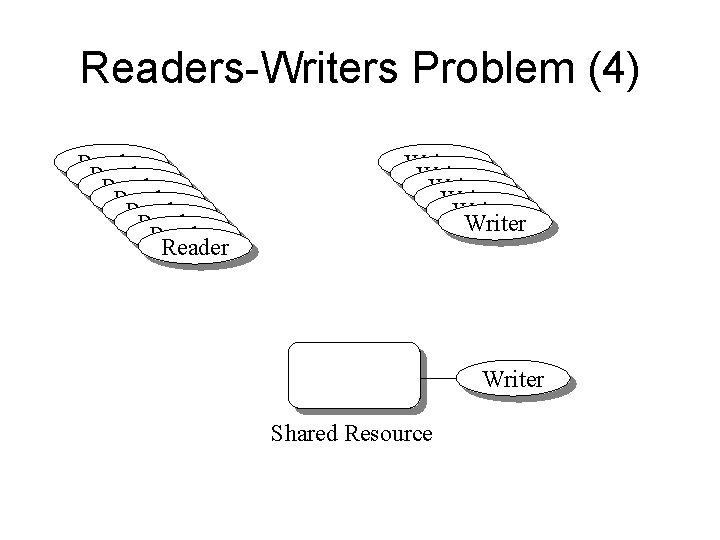

Readers-Writers Problem
[Figure: Writers and Readers]

Readers-Writers Problem (2)
[Figure: two Readers and two Writers around a Shared Resource]

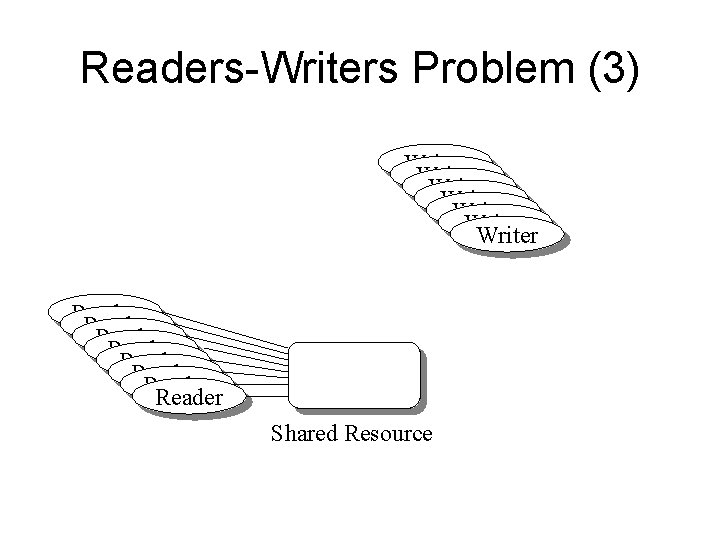

Readers-Writers Problem (3)
[Figure: two Writers and two Readers around a Shared Resource]

Readers-Writers Problem (4)
[Figure: two Readers and two Writers around a Shared Resource]

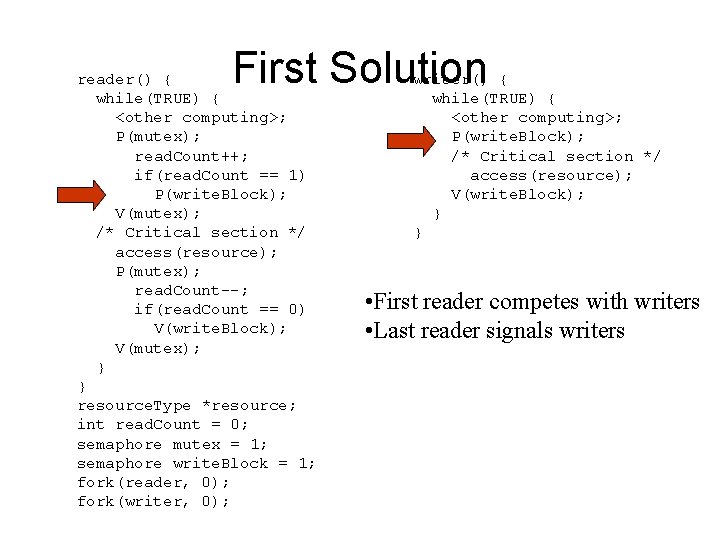

First Solution

    reader() {
      while(TRUE) {
        <other computing>;
        P(mutex);
          readCount++;
          if(readCount == 1)
            P(writeBlock);
        V(mutex);
        /* Critical section */
        access(resource);
        P(mutex);
          readCount--;
          if(readCount == 0)
            V(writeBlock);
        V(mutex);
      }
    }

    writer() {
      while(TRUE) {
        <other computing>;
        P(writeBlock);
        /* Critical section */
        access(resource);
        V(writeBlock);
      }
    }

    resourceType *resource;
    int readCount = 0;
    semaphore mutex = 1;
    semaphore writeBlock = 1;
    fork(reader, 0);
    fork(writer, 0);

• First reader competes with writers
• Last reader signals writers

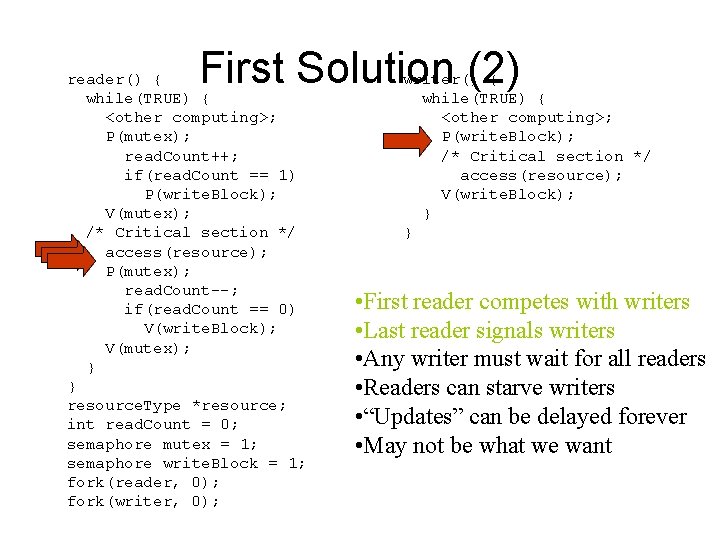

First Solution (2)
(same reader() and writer() code as on the previous slide)
• First reader competes with writers
• Last reader signals writers
• Any writer must wait for all readers
• Readers can starve writers
• “Updates” can be delayed forever
• May not be what we want

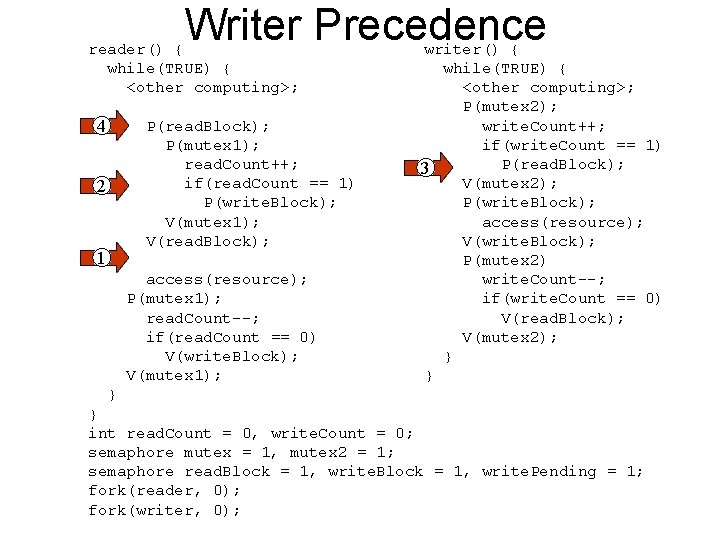

Writer Precedence

    reader() {
      while(TRUE) {
        <other computing>;
        P(readBlock);
          P(mutex1);
            readCount++;
            if(readCount == 1)
              P(writeBlock);
          V(mutex1);
        V(readBlock);
        access(resource);
        P(mutex1);
          readCount--;
          if(readCount == 0)
            V(writeBlock);
        V(mutex1);
      }
    }

    writer() {
      while(TRUE) {
        <other computing>;
        P(mutex2);
          writeCount++;
          if(writeCount == 1)
            P(readBlock);
        V(mutex2);
        P(writeBlock);
          access(resource);
        V(writeBlock);
        P(mutex2);
          writeCount--;
          if(writeCount == 0)
            V(readBlock);
        V(mutex2);
      }
    }

    int readCount = 0, writeCount = 0;
    semaphore mutex1 = 1, mutex2 = 1;
    semaphore readBlock = 1, writeBlock = 1, writePending = 1;
    fork(reader, 0);
    fork(writer, 0);

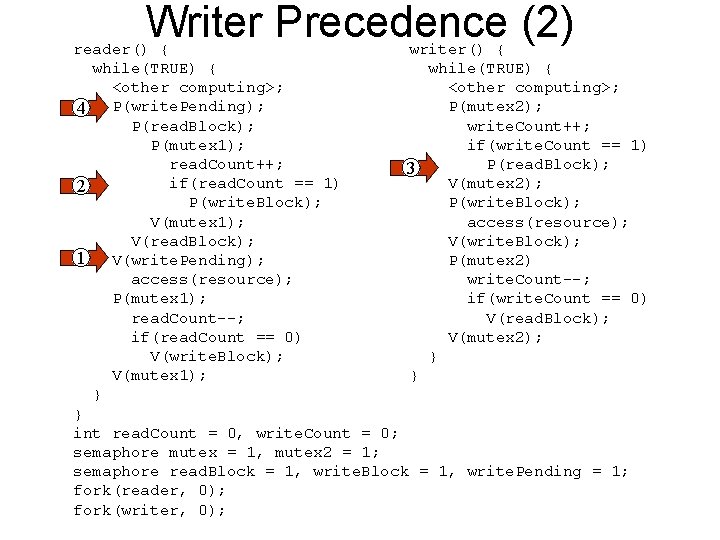

Writer Precedence (2)

    reader() {
      while(TRUE) {
        <other computing>;
        P(writePending);
          P(readBlock);
            P(mutex1);
              readCount++;
              if(readCount == 1)
                P(writeBlock);
            V(mutex1);
          V(readBlock);
        V(writePending);
        access(resource);
        P(mutex1);
          readCount--;
          if(readCount == 0)
            V(writeBlock);
        V(mutex1);
      }
    }

    writer() {
      while(TRUE) {
        <other computing>;
        P(mutex2);
          writeCount++;
          if(writeCount == 1)
            P(readBlock);
        V(mutex2);
        P(writeBlock);
          access(resource);
        V(writeBlock);
        P(mutex2);
          writeCount--;
          if(writeCount == 0)
            V(readBlock);
        V(mutex2);
      }
    }

    int readCount = 0, writeCount = 0;
    semaphore mutex1 = 1, mutex2 = 1;
    semaphore readBlock = 1, writeBlock = 1, writePending = 1;
    fork(reader, 0);
    fork(writer, 0);

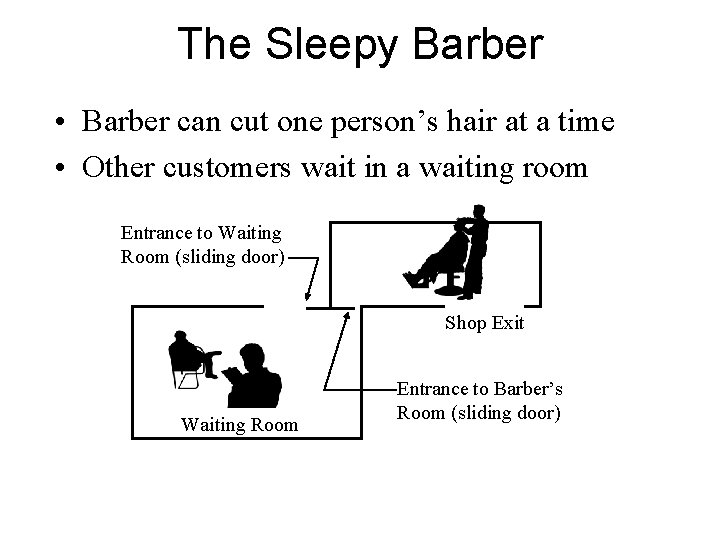

The Sleepy Barber
• Barber can cut one person’s hair at a time
• Other customers wait in a waiting room
[Figure: barber shop layout showing the Entrance to Waiting Room (sliding door), the Waiting Room, the Entrance to Barber’s Room (sliding door), and the Shop Exit]

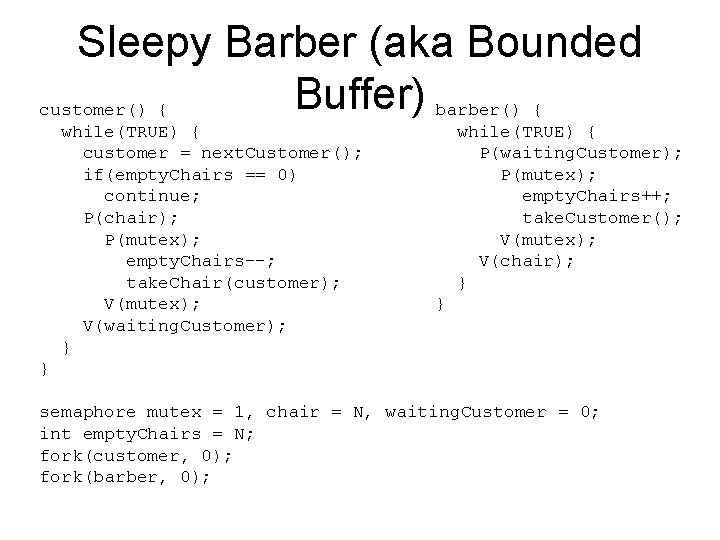

Sleepy Barber (aka Bounded Buffer)

    customer() {
      while(TRUE) {
        customer = nextCustomer();
        if(emptyChairs == 0)
          continue;
        P(chair);
        P(mutex);
          emptyChairs--;
          takeChair(customer);
        V(mutex);
        V(waitingCustomer);
      }
    }

    barber() {
      while(TRUE) {
        P(waitingCustomer);
        P(mutex);
          emptyChairs++;
          takeCustomer();
        V(mutex);
        V(chair);
      }
    }

    semaphore mutex = 1, chair = N, waitingCustomer = 0;
    int emptyChairs = N;
    fork(customer, 0);
    fork(barber, 0);

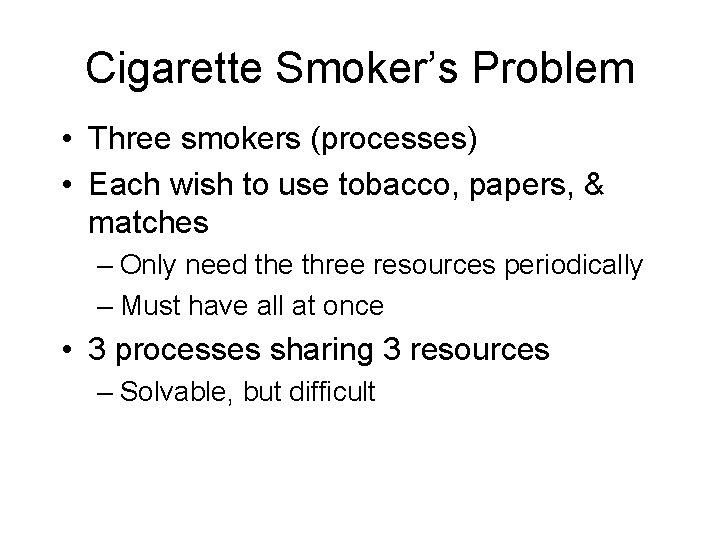

Cigarette Smoker’s Problem
• Three smokers (processes)
• Each wishes to use tobacco, papers, and matches
  – Only need the three resources periodically
  – Must have all at once
• 3 processes sharing 3 resources
  – Solvable, but difficult

Implementing Semaphores • Minimize effect on the I/O system • Processes are only blocked on their own critical sections (not critical sections that they should not care about) • If disabling interrupts, be sure to bound the time they are disabled

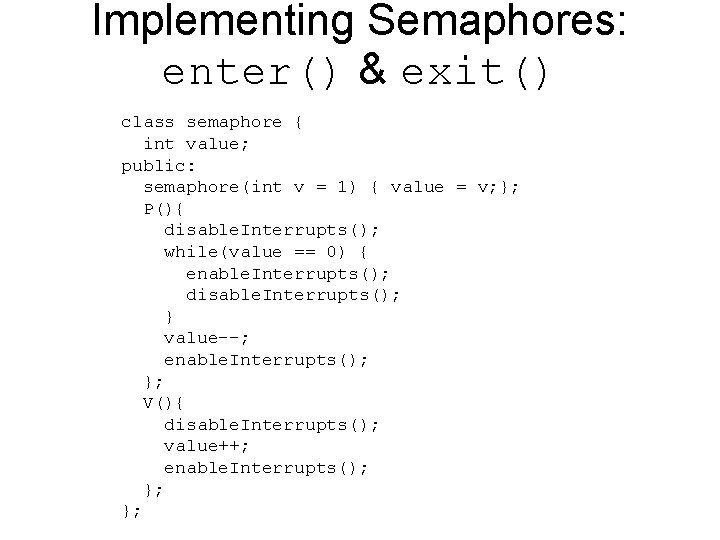

Implementing Semaphores: enter() & exit()

    class semaphore {
      int value;
    public:
      semaphore(int v = 1) { value = v; }
      void P() {
        disableInterrupts();
        while(value == 0) {
          /* Let interrupts occur while waiting */
          enableInterrupts();
          disableInterrupts();
        }
        value--;
        enableInterrupts();
      }
      void V() {
        disableInterrupts();
        value++;
        enableInterrupts();
      }
    };


Implementing Semaphores: Test and Set Instruction
• TS(m): [Reg_i = memory[m]; memory[m] = TRUE;]
[Figure: (a) Before executing TS: primary memory location m holds FALSE. (b) After executing TS: data register R3 holds FALSE, the condition code (CC) register shows =0, and memory location m holds TRUE.]

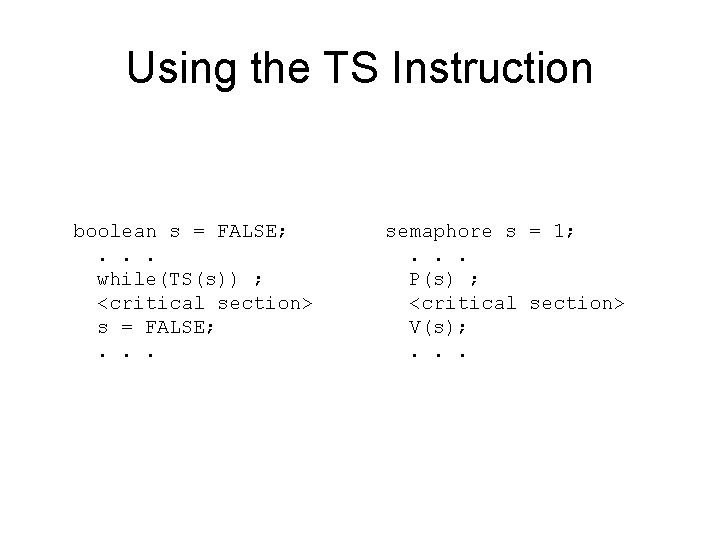

Using the TS Instruction

    boolean s = FALSE;            semaphore s = 1;
    ...                           ...
    while(TS(s)) ;                P(s);
    <critical section>            <critical section>
    s = FALSE;                    V(s);
    ...                           ...

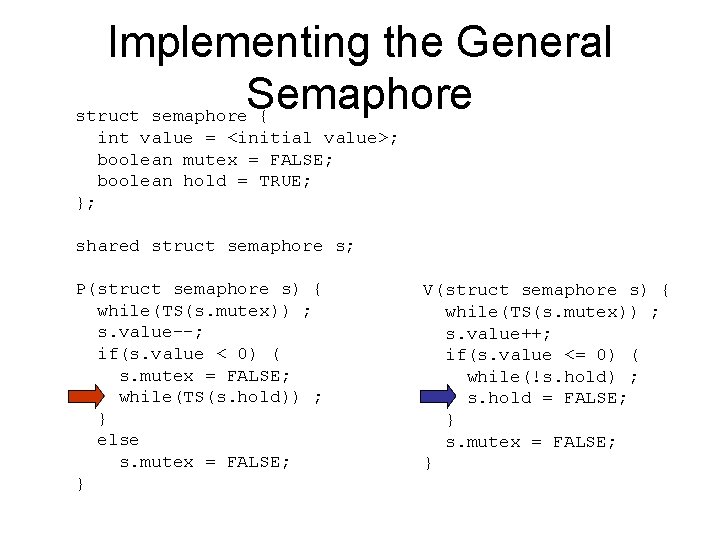

Implementing the General Semaphore

    struct semaphore {
      int value = <initial value>;
      boolean mutex = FALSE;
      boolean hold = TRUE;
    };

    shared struct semaphore s;

    P(struct semaphore s) {
      while(TS(s.mutex)) ;
      s.value--;
      if(s.value < 0) {
        s.mutex = FALSE;
        while(TS(s.hold)) ;
      }
      else
        s.mutex = FALSE;
    }

    V(struct semaphore s) {
      while(TS(s.mutex)) ;
      s.value++;
      if(s.value <= 0) {
        while(!s.hold) ;
        s.hold = FALSE;
      }
      s.mutex = FALSE;
    }

Active vs Passive Semaphores
• A process can dominate the semaphore
  – Performs V operation, but continues to execute
  – Performs another P operation before releasing the CPU
  – Called a passive implementation of V
• Active implementation calls the scheduler as part of the V operation
  – Changes the semantics of the semaphore!
  – Causes people to rethink solutions

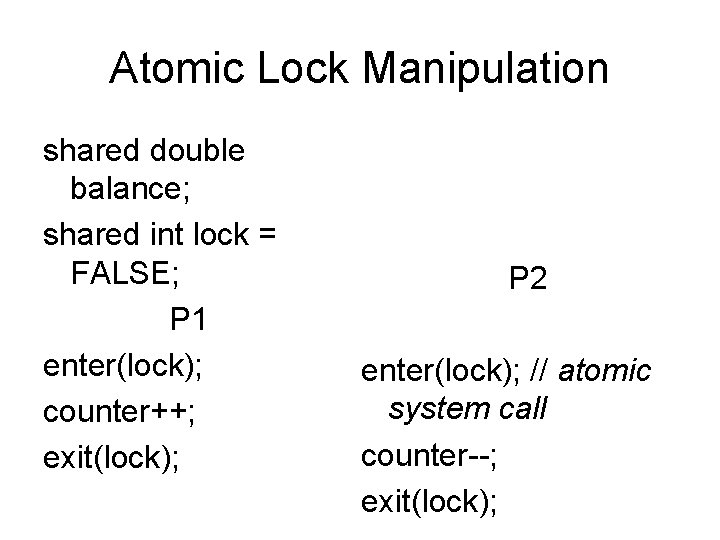

Atomic Lock Manipulation

    shared int counter;
    shared int lock = FALSE;

    P1:
      enter(lock);   // atomic system call
      counter++;
      exit(lock);

    P2:
      enter(lock);   // atomic system call
      counter--;
      exit(lock);

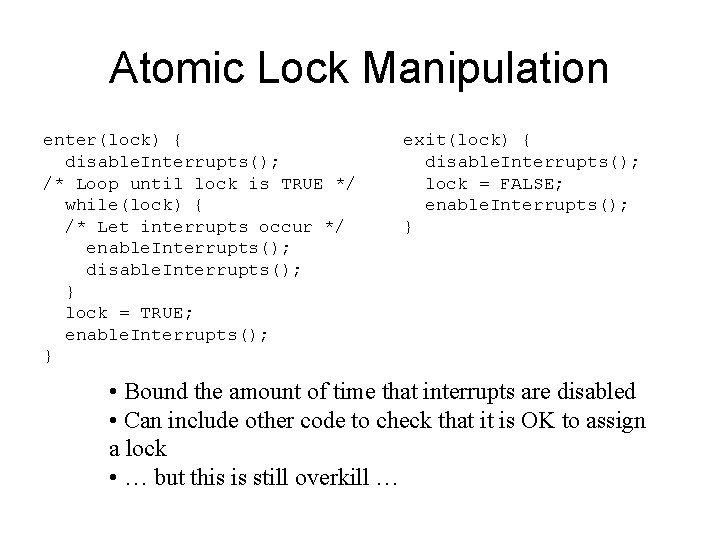

Atomic Lock Manipulation

    enter(lock) {
      disableInterrupts();
      /* Loop while lock is TRUE, i.e. until it becomes FALSE */
      while(lock) {
        /* Let interrupts occur */
        enableInterrupts();
        disableInterrupts();
      }
      lock = TRUE;
      enableInterrupts();
    }

    exit(lock) {
      disableInterrupts();
      lock = FALSE;
      enableInterrupts();
    }

• Bound the amount of time that interrupts are disabled
• Can include other code to check that it is OK to assign a lock
• … but this is still overkill …


Critical Section

    do {
      flag[i] = TRUE;
      turn = j;
      while (flag[j] && turn==j) ;
      critical section
      flag[i] = FALSE;
      remainder section
    } while (TRUE);

Critical Section • Solution: instead disable only on entry and exit. Use a shared variable to control access to another shared variable. – this introduces complexity - what if you’re interrupted while having the lock?
