Basic Synchronization Principles


Cooperating Processes

- Independent process
  - Cannot affect or be affected by the other processes in the system
  - Does not share any data with other processes
- Interacting process
  - Can affect or be affected by the other processes
  - Shares data with other processes
- We focus on processes that interact through physically or logically shared memory

Concurrency

- Threads/processes that logically appear to be executing in parallel
- Value of concurrency: speed and economics
- But there are few widely accepted concurrent programming languages (Java is an exception)
- Few concurrent programming paradigms
  - Each problem requires careful consideration
  - There is no common model
- OS tools to support concurrency tend to be "low level"

Synchronization

- Need to ensure that interacting processes/threads begin to execute a designated block of code at the same logical time
- Using shared resources may cause problems
  - Deadlock
  - Critical sections
  - Nondeterminacy (no assurance that repeating a parallel program will produce the same results)

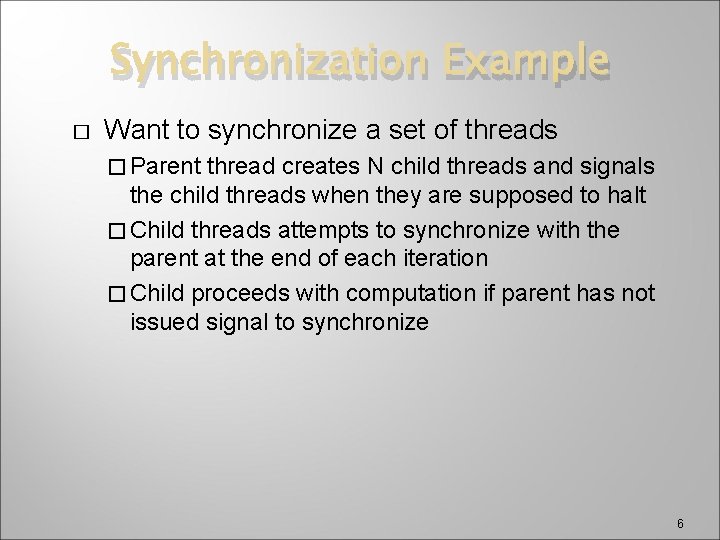

Synchronization Example

- Want to synchronize a set of threads
  - The parent thread creates N child threads and signals the child threads when they are supposed to halt
  - Each child thread attempts to synchronize with the parent at the end of each iteration
  - A child proceeds with its computation if the parent has not issued the signal to synchronize

Synchronizing Multiple Threads with a Shared Variable

[Flowchart. Parent: Initialize, CreateThread(…), Wait runTime seconds, runFlag = FALSE, Exit. Child thread: test runFlag?; if TRUE, do Thread Work and test again; if FALSE, Terminate.]

The Critical-Section Problem

- n processes all compete to use some shared data
- Each process has a code segment, called its critical section, in which the shared data is accessed
- Problem: ensure that when one process is executing in its critical section, no other process is allowed to execute in its critical section. This property is called mutual exclusion

Traffic Intersections

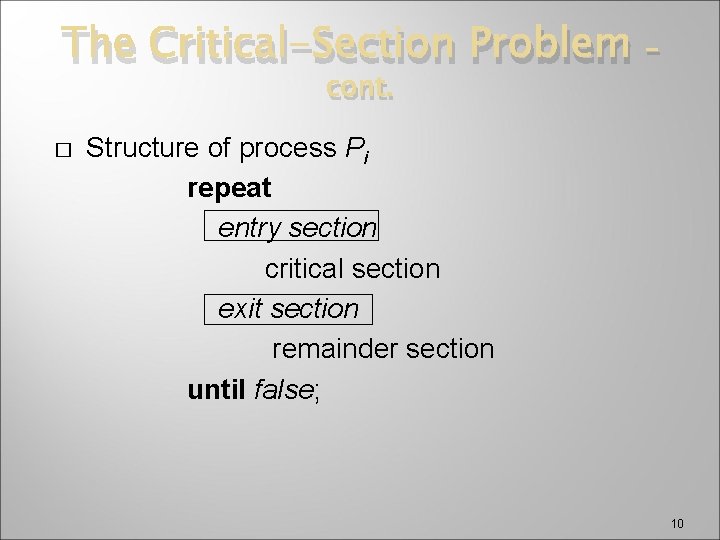

The Critical-Section Problem (cont.)

Structure of process Pi:

    repeat
        entry section
        critical section
        exit section
        remainder section
    until false;

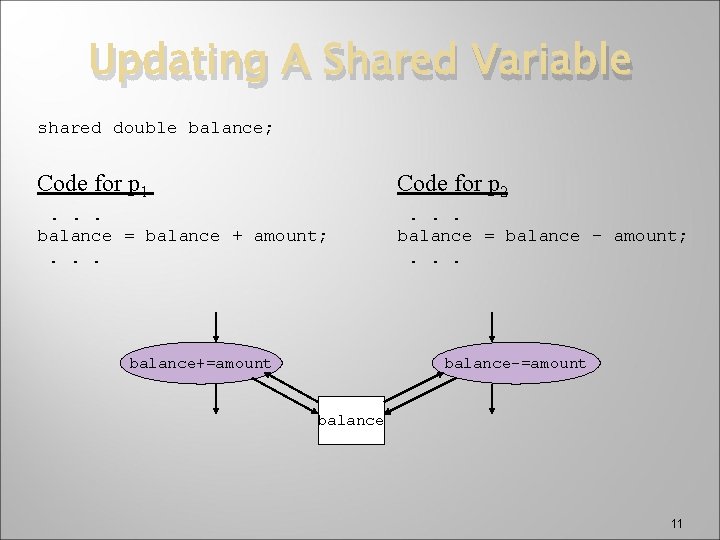

Updating A Shared Variable

    shared double balance;

    Code for p1                      Code for p2
    . . .                            . . .
    balance = balance + amount;      balance = balance - amount;
    . . .                            . . .

[Figure: p1 (balance += amount) and p2 (balance -= amount) both operate on the shared variable balance]

Updating A Shared Variable (cont.)

    Execution of p1                  Execution of p2
    …                                …
    load  R1, balance                load  R1, balance
    load  R2, amount                 load  R2, amount
    <Timer interrupt>                sub   R1, R2
    add   R1, R2                     store R1, balance
    store R1, balance                …
    …

Race Condition

- When several processes access and manipulate the same data concurrently, there is a race among the processes
- The outcome of the execution depends on the particular order in which the accesses take place
- This is called a race condition
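The lost update described above can be reproduced deterministically. The following is a minimal sketch, not the slides' own code: it assumes each process is reduced to three atomic steps (load, add or subtract, store), folds the `load R2, amount` step into a constant, and uses hypothetical names (`run`, `all_outcomes`, `AMOUNT`). It replays every interleaving of the two processes and collects the possible final balances.

```python
from itertools import combinations

AMOUNT = 10.0  # assumed value of the shared variable "amount"


def run(schedule, balance=100.0):
    """Replay one interleaving.

    schedule is a string of '1' and '2'; each character lets that process
    execute its next machine step (load, add/sub, store).
    """
    memory = {"balance": balance}
    reg = {"1": 0.0, "2": 0.0}   # each process has its own copy of R1
    pc = {"1": 0, "2": 0}        # next step of each process
    for p in schedule:
        if pc[p] == 0:           # load R1, balance
            reg[p] = memory["balance"]
        elif pc[p] == 1:         # p1: add R1, R2   p2: sub R1, R2
            reg[p] += AMOUNT if p == "1" else -AMOUNT
        else:                    # store R1, balance
            memory["balance"] = reg[p]
        pc[p] += 1
    return memory["balance"]


def all_outcomes():
    """Try all 20 interleavings of two three-step processes."""
    outcomes = set()
    for slots in combinations(range(6), 3):   # positions used by p1
        schedule = "".join("1" if i in slots else "2" for i in range(6))
        outcomes.add(run(schedule))
    return outcomes


print(sorted(all_outcomes()))
```

Only one of the three possible results reflects both updates; the other two lose either the deposit or the withdrawal, which is what makes the program nondeterminate.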

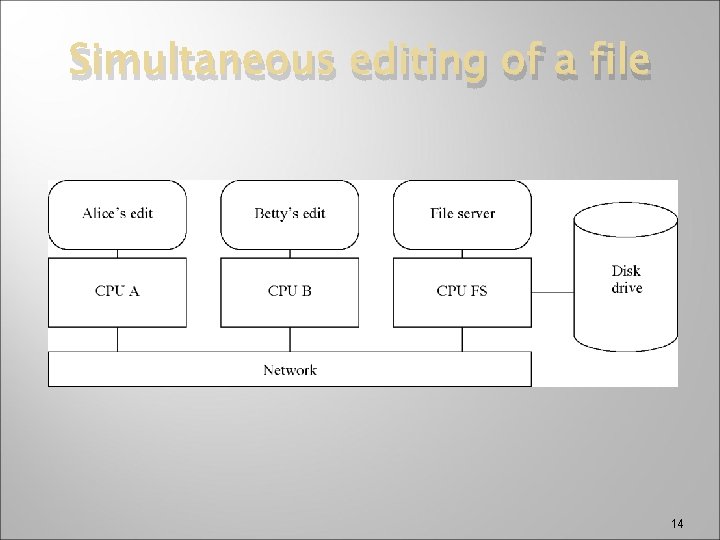

Simultaneous editing of a file

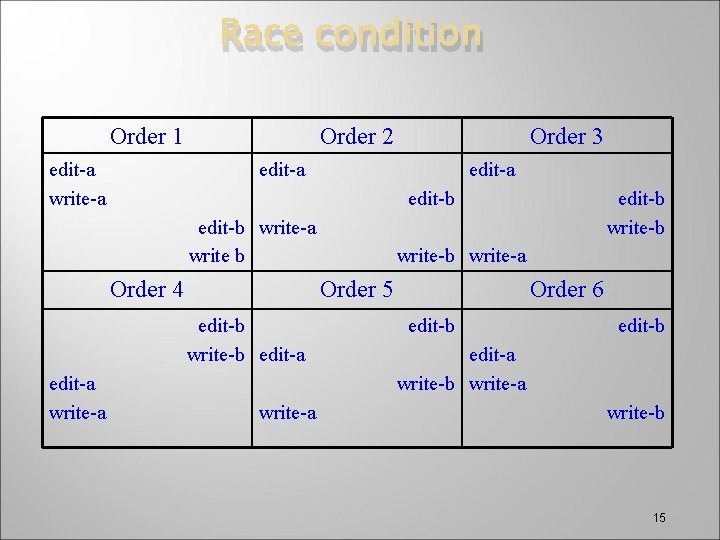

Race condition

[Figure: the possible orderings (Order 1 to Order 6) of the operations edit-a, write-a, edit-b, and write-b, for example Order 2: edit-a, edit-b, write-a, write-b and Order 5: edit-b, write-b, edit-a, write-a]

Critical Sections

- Mutual exclusion: only one process can be in the critical section at a time
- There is a race to execute critical sections
- The sections may be defined by different code in different processes
  - Cannot easily be detected with static analysis
- Without mutual exclusion, the results of multiple executions are not determinate
- Need an OS mechanism so the programmer can resolve races

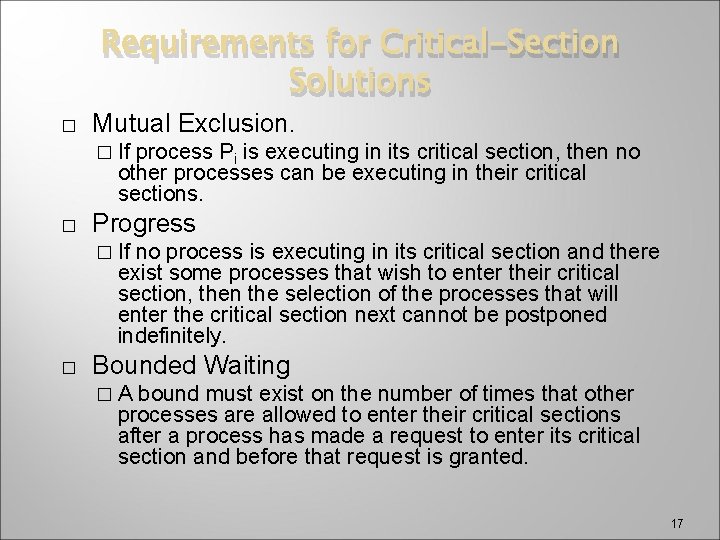

Requirements for Critical-Section Solutions

- Mutual Exclusion
  - If process Pi is executing in its critical section, then no other process can be executing in its critical section
- Progress
  - If no process is executing in its critical section and there exist some processes that wish to enter their critical sections, then the selection of the process that will enter the critical section next cannot be postponed indefinitely
- Bounded Waiting
  - A bound must exist on the number of times that other processes are allowed to enter their critical sections after a process has made a request to enter its critical section and before that request is granted

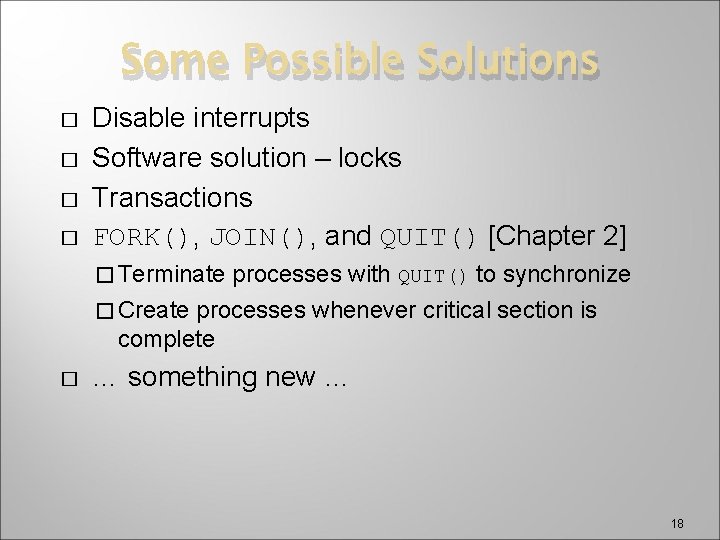

Some Possible Solutions

- Disable interrupts
- Software solution: locks
- Transactions
- FORK(), JOIN(), and QUIT() [Chapter 2]
  - Terminate processes with QUIT() to synchronize
  - Create processes whenever a critical section is complete
- … something new …

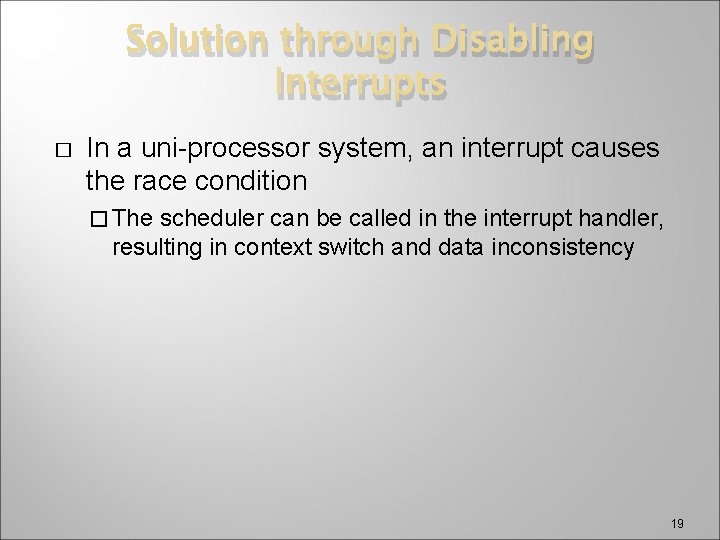

Solution through Disabling Interrupts

- In a uniprocessor system, an interrupt causes the race condition
  - The scheduler can be called in the interrupt handler, resulting in a context switch and data inconsistency

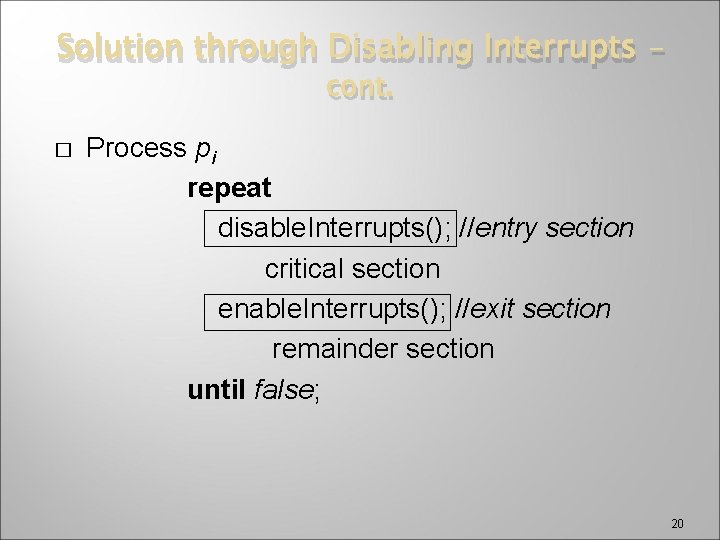

Solution through Disabling Interrupts (cont.)

Process pi:

    repeat
        disableInterrupts();    // entry section
        critical section
        enableInterrupts();     // exit section
        remainder section
    until false;

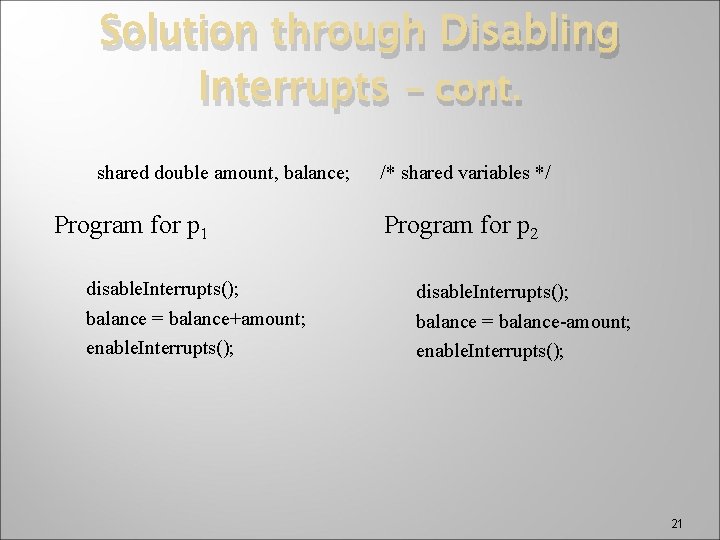

Solution through Disabling Interrupts (cont.)

    shared double amount, balance;    /* shared variables */

    Program for p1                    Program for p2
    disableInterrupts();              disableInterrupts();
    balance = balance + amount;       balance = balance - amount;
    enableInterrupts();               enableInterrupts();

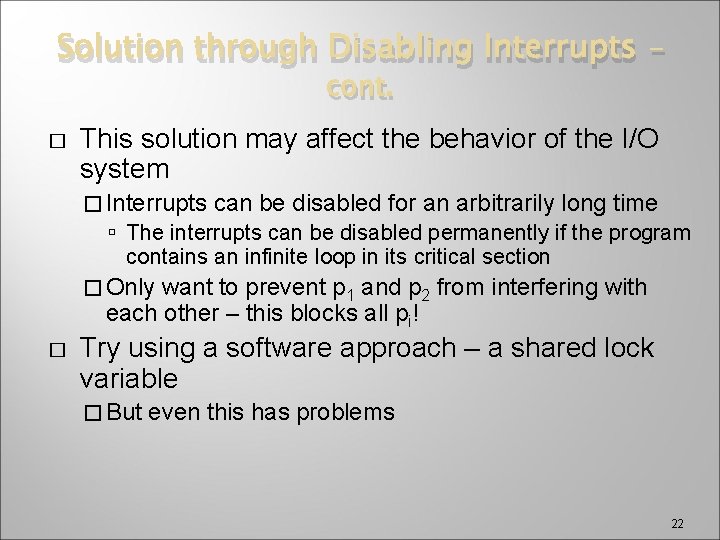

Solution through Disabling Interrupts (cont.)

- This solution may affect the behavior of the I/O system
- Interrupts can be disabled for an arbitrarily long time
  - Interrupts can be disabled permanently if the program contains an infinite loop in its critical section
- We only want to prevent p1 and p2 from interfering with each other, but this blocks all pi!
- Try using a software approach: a shared lock variable
  - But even this has problems

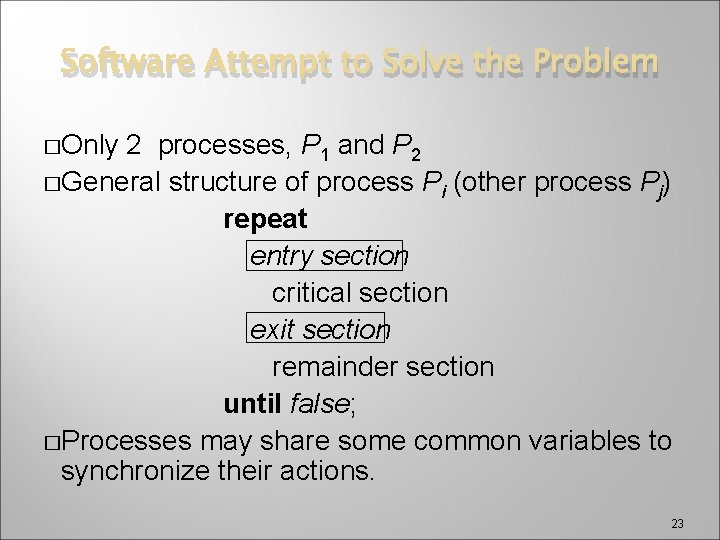

Software Attempt to Solve the Problem

- Only 2 processes, P1 and P2
- General structure of process Pi (other process Pj)

    repeat
        entry section
        critical section
        exit section
        remainder section
    until false;

- Processes may share some common variables to synchronize their actions

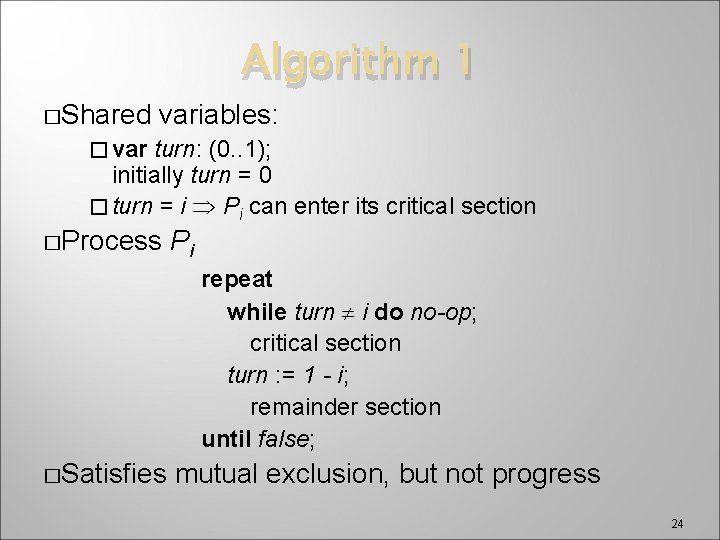

Algorithm 1

- Shared variables:
  - var turn: (0..1); initially turn = 0
  - turn = i ⇒ Pi can enter its critical section
- Process Pi

    repeat
        while turn ≠ i do no-op;
        critical section
        turn := 1 - i;
        remainder section
    until false;

- Satisfies mutual exclusion, but not progress

Algorithm 2

- Shared variables
  - var flag: array [0..1] of boolean; initially flag[0] = flag[1] = false
  - flag[i] = true ⇒ Pi ready to enter its critical section
- Process Pi (note that j represents the other process)

    repeat
        flag[i] := true;
        while flag[j] do no-op;
        critical section
        flag[i] := false;
        remainder section
    until false;

- Satisfies mutual exclusion, but not the progress requirement

Algorithm 2, version 2

- Shared variables
  - var flag: array [0..1] of boolean; initially flag[0] = flag[1] = false
  - flag[i] = true ⇒ Pi ready to enter its critical section
- Process Pi

    repeat
        while flag[j] do no-op;
        flag[i] := true;
        critical section
        flag[i] := false;
        remainder section
    until false;

- Does not satisfy mutual exclusion

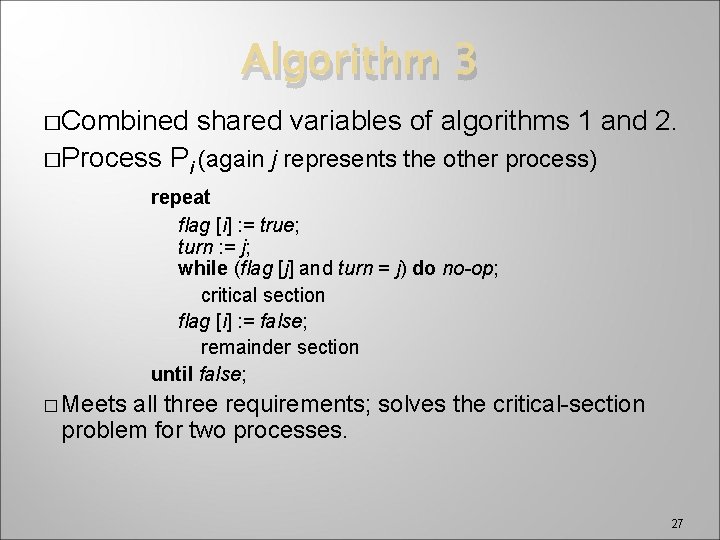

Algorithm 3

- Combines the shared variables of algorithms 1 and 2
- Process Pi (again, j represents the other process)

    repeat
        flag[i] := true;
        turn := j;
        while (flag[j] and turn = j) do no-op;
        critical section
        flag[i] := false;
        remainder section
    until false;

- Meets all three requirements; solves the critical-section problem for two processes
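One way to gain confidence in the mutual-exclusion claim for Algorithm 3 is to explore every reachable state of the two processes. The sketch below is an illustration under stated assumptions, not part of the slides: each pseudocode statement is modeled as one atomic step, the remainder section is skipped, and the names `step`, `reachable_states`, `mutual_exclusion_holds`, and `never_stuck` are hypothetical.

```python
from collections import deque

# A state is (pc, flag, turn): pc[i] is the next statement of process i.
#   pc 0: flag[i] := true      pc 1: turn := j
#   pc 2: busy-wait test       pc 3: inside the critical section


def step(state, i):
    """Return the state reached when process i executes one statement."""
    pc, flag, turn = list(state[0]), list(state[1]), state[2]
    j = 1 - i
    if pc[i] == 0:
        flag[i] = True
        pc[i] = 1
    elif pc[i] == 1:
        turn = j
        pc[i] = 2
    elif pc[i] == 2:
        if not (flag[j] and turn == j):   # wait condition is false: enter
            pc[i] = 3
    else:                                 # leave the critical section
        flag[i] = False
        pc[i] = 0
    return (tuple(pc), tuple(flag), turn)


def reachable_states():
    """Breadth-first search over all interleavings of the two processes."""
    start = ((0, 0), (False, False), 0)
    seen = {start}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for i in (0, 1):
            nxt = step(state, i)
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def mutual_exclusion_holds():
    """True if no reachable state has both processes in the critical section."""
    return all(state[0] != (3, 3) for state in reachable_states())


def never_stuck():
    """True if in every reachable state at least one process can move."""
    return all(any(step(s, i) != s for i in (0, 1)) for s in reachable_states())


print(mutual_exclusion_holds(), never_stuck())
```

The same explorer can be pointed at Algorithm 2, version 2 by swapping the order of the test and the flag assignment in `step`; the state with both program counters in the critical section then becomes reachable.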

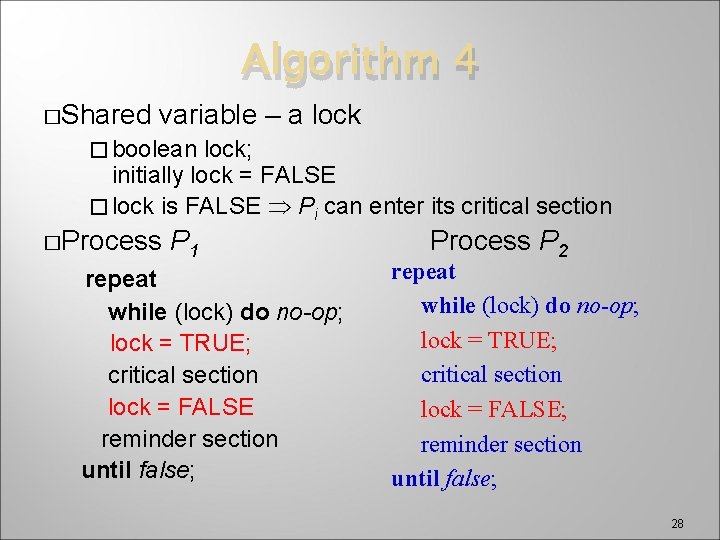

Algorithm 4

- Shared variable: a lock
  - boolean lock; initially lock = FALSE
  - lock is FALSE ⇒ Pi can enter its critical section

    Process P1                       Process P2
    repeat                           repeat
        while (lock) do no-op;           while (lock) do no-op;
        lock = TRUE;                     lock = TRUE;
        critical section                 critical section
        lock = FALSE;                    lock = FALSE;
        remainder section                remainder section
    until false;                     until false;

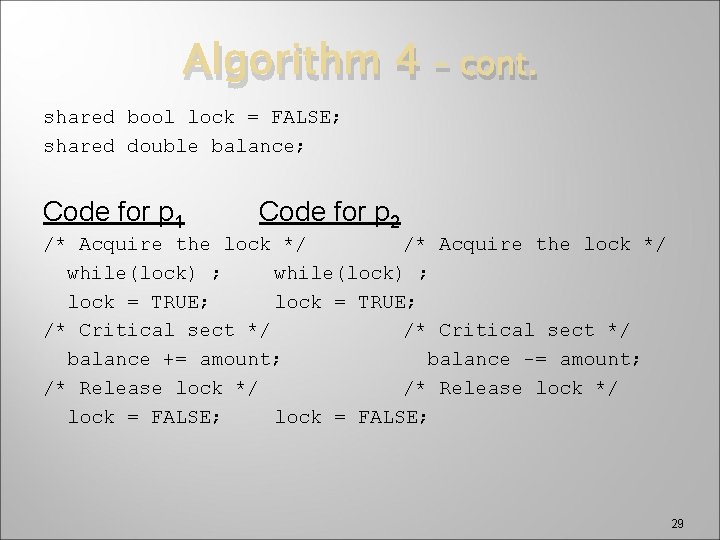

Algorithm 4 (cont.)

    shared bool lock = FALSE;
    shared double balance;

    Code for p1                      Code for p2
    /* Acquire the lock */           /* Acquire the lock */
    while(lock) ;                    while(lock) ;
    lock = TRUE;                     lock = TRUE;
    /* Critical sect */              /* Critical sect */
    balance += amount;               balance -= amount;
    /* Release lock */               /* Release lock */
    lock = FALSE;                    lock = FALSE;

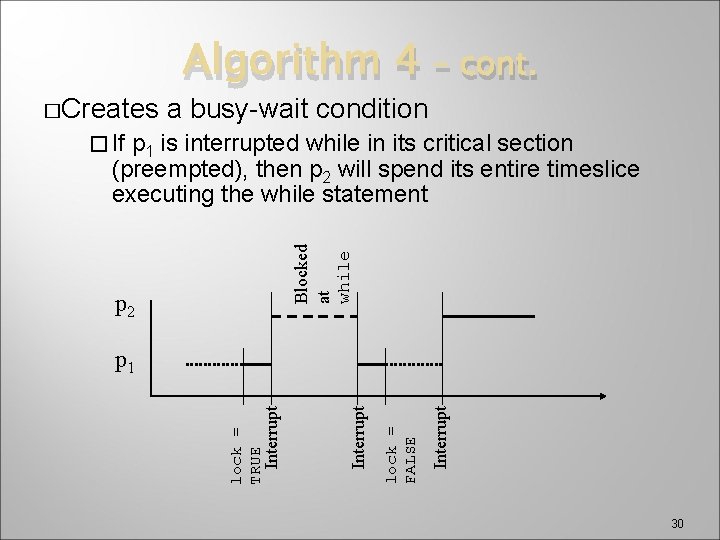

Algorithm 4 (cont.)

- Creates a busy-wait condition
  - If p1 is interrupted while in its critical section (preempted), then p2 will spend its entire timeslice executing the while statement

[Timing diagram of p1 and p2 with the labels: lock = TRUE, Interrupt, Blocked at while, Interrupt, lock = FALSE, Interrupt]

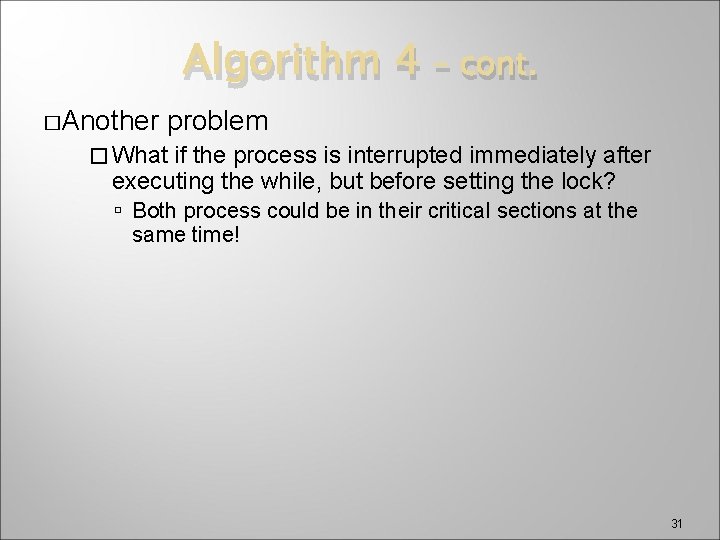

Algorithm 4 (cont.)

- Another problem
  - What if the process is interrupted immediately after executing the while, but before setting the lock? Both processes could be in their critical sections at the same time!

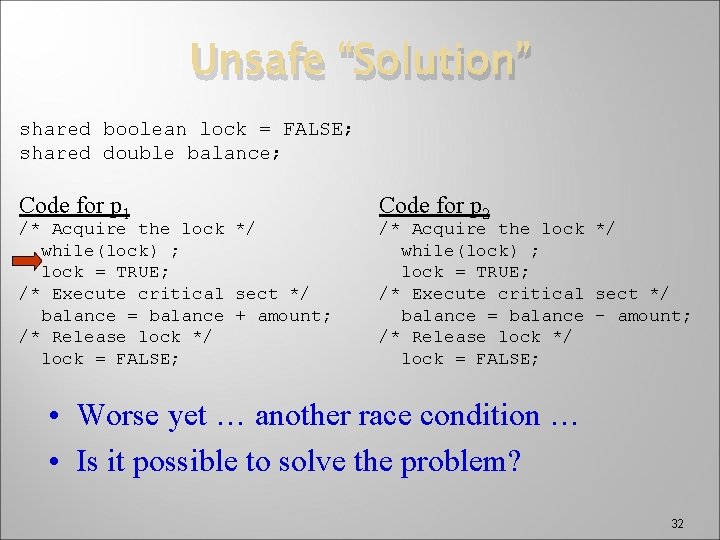

Unsafe "Solution"

    shared boolean lock = FALSE;
    shared double balance;

    Code for p1                      Code for p2
    /* Acquire the lock */           /* Acquire the lock */
    while(lock) ;                    while(lock) ;
    lock = TRUE;                     lock = TRUE;
    /* Execute critical sect */      /* Execute critical sect */
    balance = balance + amount;      balance = balance - amount;
    /* Release lock */               /* Release lock */
    lock = FALSE;                    lock = FALSE;

- Worse yet … another race condition …
- Is it possible to solve the problem?
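The race in the lock-variable approach can be made concrete by controlling exactly where each process is preempted. This is a minimal sketch, assuming Python generators as stand-ins for processes (each `yield` is a point where the scheduler may switch); `process`, `run`, and the `inside` counter are hypothetical names introduced for illustration.

```python
def process(name, shared, trace):
    """One pass through: acquire lock, critical section, release lock."""
    while shared["lock"]:        # while(lock) ;
        yield                    # busy wait: lose the CPU, then retest
    yield                        # preempted after the test, before the set
    shared["lock"] = True        # lock = TRUE;
    shared["inside"] += 1        # now in the critical section
    trace.append((name, shared["inside"]))
    yield                        # preempted inside the critical section
    shared["inside"] -= 1
    shared["lock"] = False       # lock = FALSE;


def run(schedule):
    """Run the named processes in the given order, one step per entry.

    Returns the largest number of processes that were ever inside the
    critical section at the same time.
    """
    shared = {"lock": False, "inside": 0}
    trace = []
    procs = {n: process(n, shared, trace) for n in ("p1", "p2")}
    for name in schedule:
        next(procs[name], None)  # None: ignore a process that has finished
    return max((count for _, count in trace), default=0)


# p1 tests the lock, is interrupted, p2 tests the lock, then both set it.
print(run(["p1", "p2", "p1", "p2"]))
# p1 completes its test-and-set sequence before p2 gets to run its test.
print(run(["p1", "p1", "p2", "p1", "p2", "p2"]))
```

The first schedule puts both processes in the critical section at once; the second behaves correctly, which is why this kind of bug can survive casual testing.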

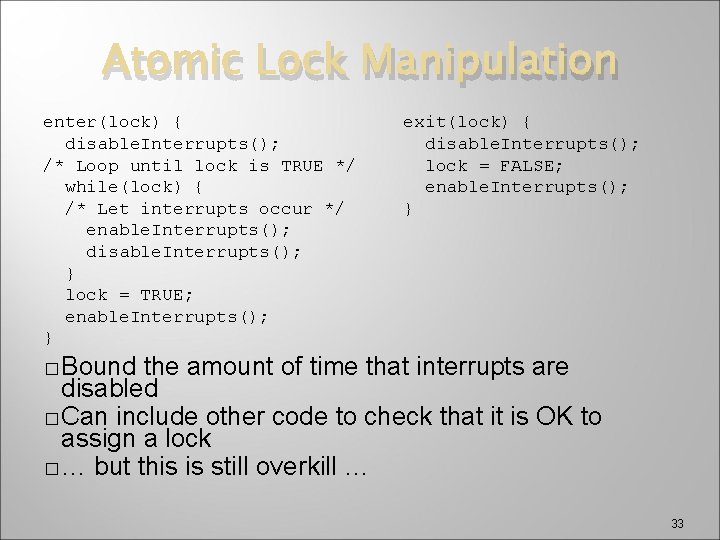

Atomic Lock Manipulation

    enter(lock) {
        disableInterrupts();
        /* Loop until lock is FALSE */
        while(lock) {
            /* Let interrupts occur */
            enableInterrupts();
            disableInterrupts();
        }
        lock = TRUE;
        enableInterrupts();
    }

    exit(lock) {
        disableInterrupts();
        lock = FALSE;
        enableInterrupts();
    }

- Bounds the amount of time that interrupts are disabled
- Can include other code to check that it is OK to assign a lock
- … but this is still overkill …

Deadlock

- Another, more subtle problem
- Two or more processes/threads get into a state where each is controlling a resource needed by the other

Fig 8-9: Deadlocked Pirates

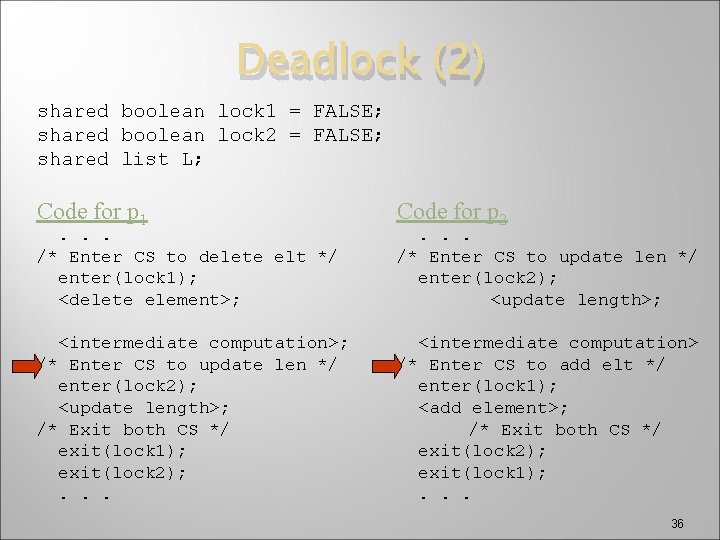

Deadlock (2)

    shared boolean lock1 = FALSE;
    shared boolean lock2 = FALSE;
    shared list L;

    Code for p1                      Code for p2
    . . .                            . . .
    /* Enter CS to delete elt */     /* Enter CS to update len */
    enter(lock1);                    enter(lock2);
    <delete element>;                <update length>;
    <intermediate computation>;      <intermediate computation>
    /* Enter CS to update len */     /* Enter CS to add elt */
    enter(lock2);                    enter(lock1);
    <update length>;                 <add element>;
    /* Exit both CS */               /* Exit both CS */
    exit(lock1);                     exit(lock2);
    exit(lock2);                     exit(lock1);
    . . .                            . . .

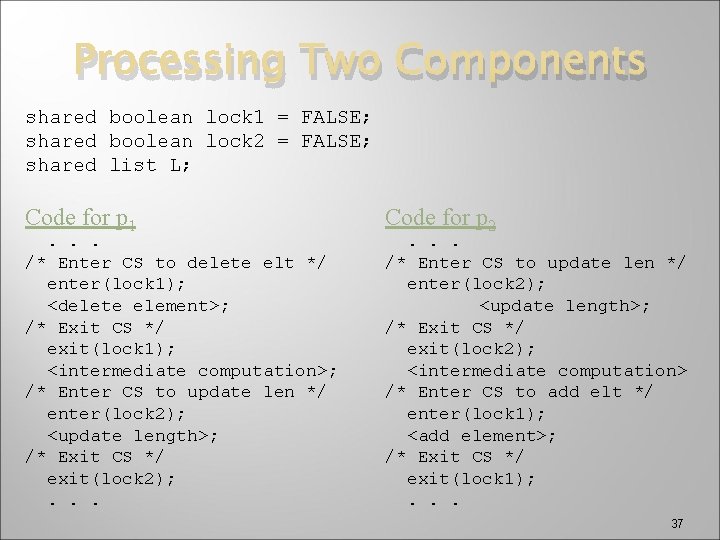

Processing Two Components

    shared boolean lock1 = FALSE;
    shared boolean lock2 = FALSE;
    shared list L;

    Code for p1                      Code for p2
    . . .                            . . .
    /* Enter CS to delete elt */     /* Enter CS to update len */
    enter(lock1);                    enter(lock2);
    <delete element>;                <update length>;
    /* Exit CS */                    /* Exit CS */
    exit(lock1);                     exit(lock2);
    <intermediate computation>;      <intermediate computation>
    /* Enter CS to update len */     /* Enter CS to add elt */
    enter(lock2);                    enter(lock1);
    <update length>;                 <add element>;
    /* Exit CS */                    /* Exit CS */
    exit(lock2);                     exit(lock1);
    . . .                            . . .

Transactions

- A transaction is a list of operations
  - When the system begins to execute the list, it must execute all of them without interruption, or
  - It must not execute any at all
- Example: list manipulator
  - Add or delete an element from a list
  - Adjust the list descriptor, e.g., length
- Too heavyweight; need something simpler

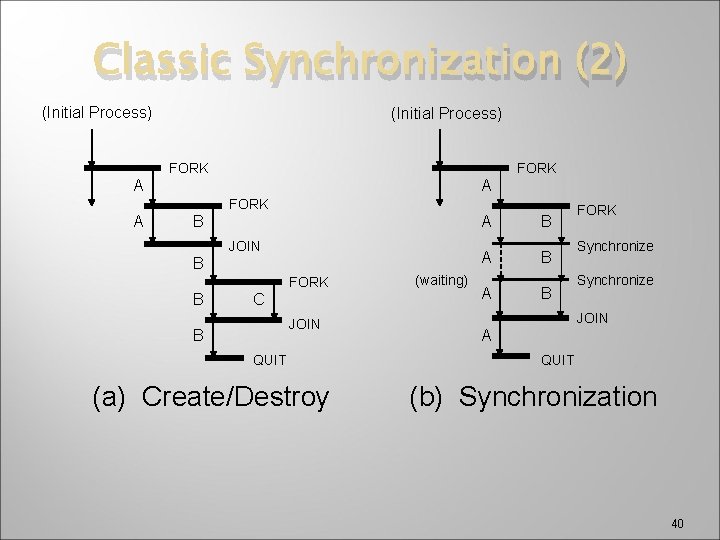

Classic Solution

- Recall the mechanisms for creating and destroying processes
  - FORK(), JOIN(), and QUIT()
- May be used to synchronize concurrent processes
- Adding a synchronization operator is a modern approach

Classic Synchronization (2)

[Figure with two panels. (a) Create/Destroy: starting from an initial process, processes A, B, and C are created and destroyed with FORK, JOIN, and QUIT each time they synchronize. (b) Synchronization: A and B are created once with FORK, wait at a Synchronize operation, and end with JOIN and QUIT.]

Classic Solution (cont.)

- Process creation and destruction tend to be costly operations
  - Considerable manipulation of process descriptors, protection mechanisms, and memory management mechanisms
- A synchronization operation can be thought of as a resource request, and can be implemented much more efficiently
- Contemporary operating systems use synchronization mechanisms: semaphores

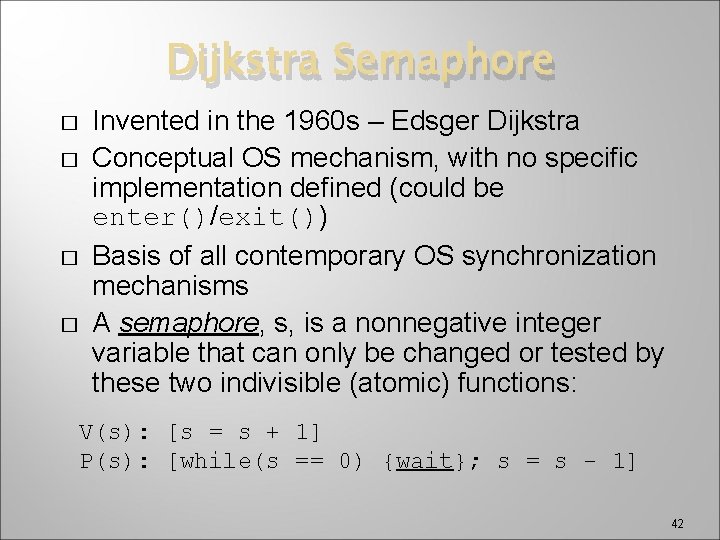

Dijkstra Semaphore

- Invented in the 1960s by Edsger Dijkstra
- Conceptual OS mechanism, with no specific implementation defined (could be enter()/exit())
- Basis of all contemporary OS synchronization mechanisms
- A semaphore, s, is a nonnegative integer variable that can only be changed or tested by these two indivisible (atomic) functions:

    V(s): [s = s + 1]
    P(s): [while(s == 0) {wait}; s = s - 1]
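The abstract definition of P and V can be modeled directly. The sketch below is one possible realization, not the mechanism the slides prescribe: it assumes a `threading.Condition` supplies the indivisibility that the square brackets denote, and the class name `Semaphore` with methods `P` and `V` is chosen here only to mirror the notation.

```python
import threading


class Semaphore:
    """Nonnegative counter changed only by the atomic operations P and V."""

    def __init__(self, value=1):
        if value < 0:
            raise ValueError("a semaphore value must be nonnegative")
        self._value = value
        self._cond = threading.Condition()   # provides the atomicity

    def P(self):
        """P(s): [while(s == 0) {wait}; s = s - 1]"""
        with self._cond:
            while self._value == 0:
                self._cond.wait()            # sleep instead of spinning
            self._value -= 1

    def V(self):
        """V(s): [s = s + 1]"""
        with self._cond:
            self._value += 1
            self._cond.notify()              # let one waiting P retest

    @property
    def value(self):
        with self._cond:
            return self._value


s = Semaphore(0)
signaller = threading.Timer(0.05, s.V)       # another thread signals later
signaller.start()
s.P()                                        # blocks until V has run
signaller.join()
print(s.value)
```

Python's standard library already offers `threading.Semaphore` with `acquire` and `release`; the class above exists only to show how little the definition requires.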

Semaphore

- Dijkstra was Dutch, so
  - P: short for proberen, "to test"
  - V: short for verhogen, "to increment"
- P is the wait operation
- V is the signal operation
- Note that some texts use P and V; others use wait and signal

Traffic Intersections

A Semaphore

Semaphores

- Two types of semaphores
  - Binary semaphore: can only be either 0 or 1
    - Can also be true or false
  - Counting semaphore: can be any nonnegative integer

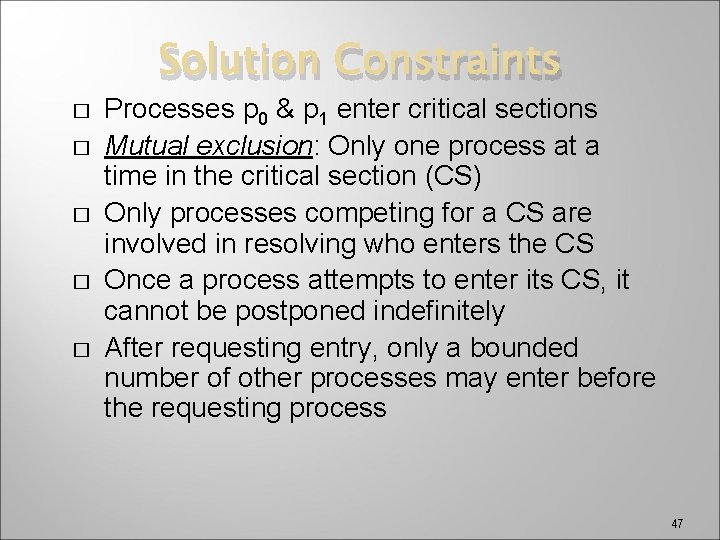

Solution Constraints

- Processes p0 and p1 enter critical sections
- Mutual exclusion: only one process at a time in the critical section (CS)
- Only processes competing for a CS are involved in resolving who enters the CS
- Once a process attempts to enter its CS, it cannot be postponed indefinitely
- After requesting entry, only a bounded number of other processes may enter before the requesting process

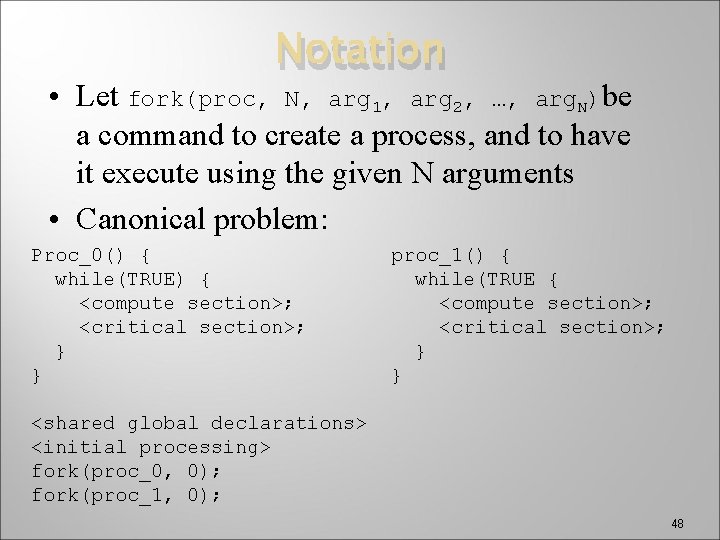

Notation

- Let fork(proc, N, arg1, arg2, …, argN) be a command to create a process and to have it execute using the given N arguments
- Canonical problem:

    proc_0() {
        while(TRUE) {
            <compute section>;
            <critical section>;
        }
    }

    proc_1() {
        while(TRUE) {
            <compute section>;
            <critical section>;
        }
    }

    <shared global declarations>
    <initial processing>
    fork(proc_0, 0);
    fork(proc_1, 0);

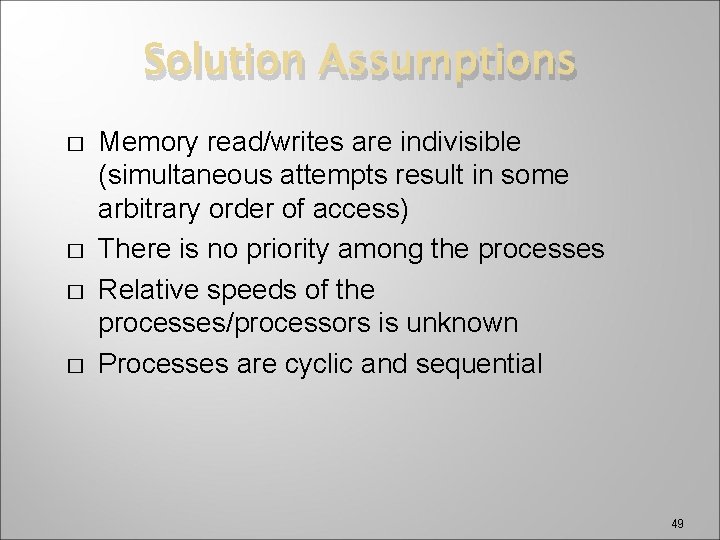

Solution Assumptions

- Memory reads/writes are indivisible (simultaneous attempts result in some arbitrary order of access)
- There is no priority among the processes
- The relative speeds of the processes/processors are unknown
- Processes are cyclic and sequential

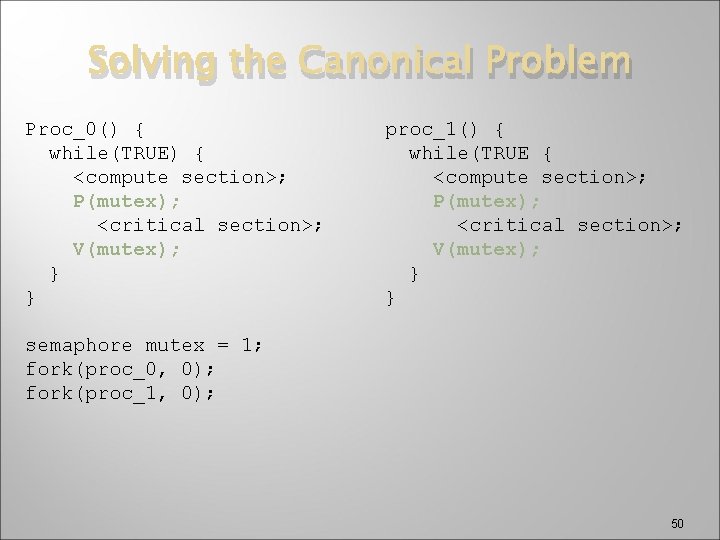

Solving the Canonical Problem

    proc_0() {
        while(TRUE) {
            <compute section>;
            P(mutex);
            <critical section>;
            V(mutex);
        }
    }

    proc_1() {
        while(TRUE) {
            <compute section>;
            P(mutex);
            <critical section>;
            V(mutex);
        }
    }

    semaphore mutex = 1;
    fork(proc_0, 0);
    fork(proc_1, 0);

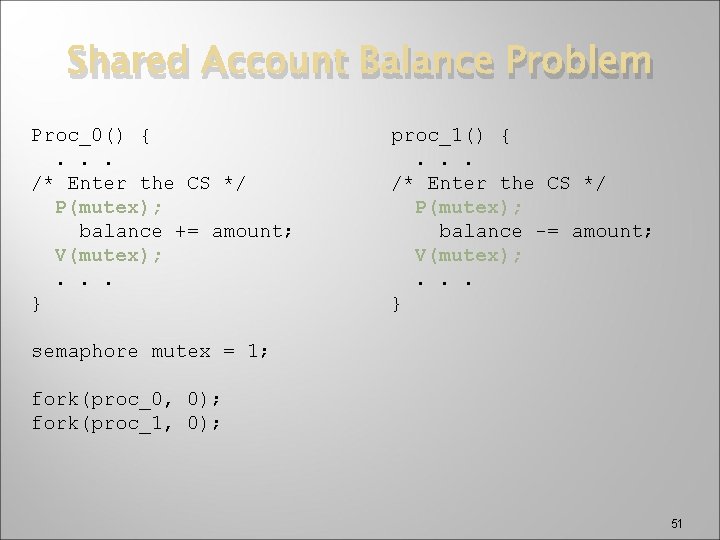

Shared Account Balance Problem

    proc_0() {
        . . .
        /* Enter the CS */
        P(mutex);
        balance += amount;
        V(mutex);
        . . .
    }

    proc_1() {
        . . .
        /* Enter the CS */
        P(mutex);
        balance -= amount;
        V(mutex);
        . . .
    }

    semaphore mutex = 1;
    fork(proc_0, 0);
    fork(proc_1, 0);
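The account-balance program translates almost line for line into threads. This is a hedged sketch rather than the slides' own implementation: it assumes Python's `threading.Semaphore` as the semaphore (its `acquire` and `release` play the roles of P and V), and the wrapper `run_transfers` and the iteration count are illustrative choices.

```python
import threading


def run_transfers(iterations=5000, amount=1):
    """Run a depositing and a withdrawing thread; return the final balance."""
    state = {"balance": 0}
    mutex = threading.Semaphore(1)           # semaphore mutex = 1;

    def proc_0():
        for _ in range(iterations):
            mutex.acquire()                  # P(mutex): enter the CS
            state["balance"] += amount
            mutex.release()                  # V(mutex): leave the CS

    def proc_1():
        for _ in range(iterations):
            mutex.acquire()                  # P(mutex)
            state["balance"] -= amount
            mutex.release()                  # V(mutex)

    threads = [threading.Thread(target=proc_0),
               threading.Thread(target=proc_1)]
    for t in threads:                        # fork(proc_0, 0); fork(proc_1, 0);
        t.start()
    for t in threads:
        t.join()
    return state["balance"]


print(run_transfers())
```

Because every update happens between P(mutex) and V(mutex), the deposits and withdrawals cancel exactly regardless of how the threads are scheduled.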

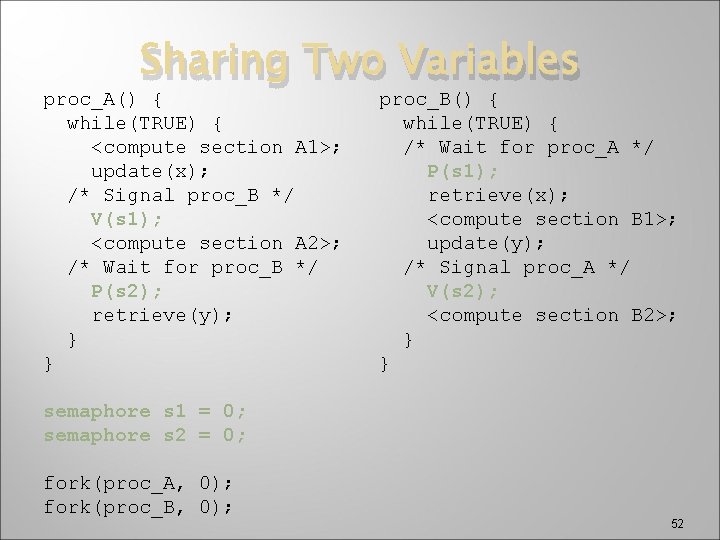

Sharing Two Variables

    proc_A() {
        while(TRUE) {
            <compute section A1>;
            update(x);
            /* Signal proc_B */
            V(s1);
            <compute section A2>;
            /* Wait for proc_B */
            P(s2);
            retrieve(y);
        }
    }

    proc_B() {
        while(TRUE) {
            /* Wait for proc_A */
            P(s1);
            retrieve(x);
            <compute section B1>;
            update(y);
            /* Signal proc_A */
            V(s2);
            <compute section B2>;
        }
    }

    semaphore s1 = 0;
    semaphore s2 = 0;
    fork(proc_A, 0);
    fork(proc_B, 0);
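The two-semaphore handshake can be exercised with a pair of threads. In this sketch, which rests on assumptions not in the slides, `update(x)` writes the round number, proc_B's computation squares it, and the helper `run_exchange` with its `rounds` parameter is hypothetical; `threading.Semaphore` stands in for the semaphores s1 and s2.

```python
import threading


def run_exchange(rounds=5):
    """Let proc_A and proc_B exchange x and y for a fixed number of rounds."""
    s1 = threading.Semaphore(0)              # semaphore s1 = 0;
    s2 = threading.Semaphore(0)              # semaphore s2 = 0;
    shared = {"x": None, "y": None}
    seen_by_a, seen_by_b = [], []

    def proc_a():
        for k in range(rounds):
            shared["x"] = k                  # update(x)
            s1.release()                     # V(s1): signal proc_B
            s2.acquire()                     # P(s2): wait for proc_B
            seen_by_a.append(shared["y"])    # retrieve(y)

    def proc_b():
        for _ in range(rounds):
            s1.acquire()                     # P(s1): wait for proc_A
            x = shared["x"]                  # retrieve(x)
            seen_by_b.append(x)
            shared["y"] = x * x              # update(y)
            s2.release()                     # V(s2): signal proc_A

    threads = [threading.Thread(target=proc_a),
               threading.Thread(target=proc_b)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seen_by_a, seen_by_b


print(run_exchange())
```

Both semaphores start at 0, so neither side can read a variable before the other side has written it; the semaphores are used here for signalling rather than for mutual exclusion.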

The Driver-Controller Interface

- The semaphore principle is logically used with the busy and done flags in a controller
- The driver signals the controller with a V(busy), then waits for completion with P(done)
- The controller waits for work with P(busy), then announces completion with V(done)
- See Fig 8.17, page 310

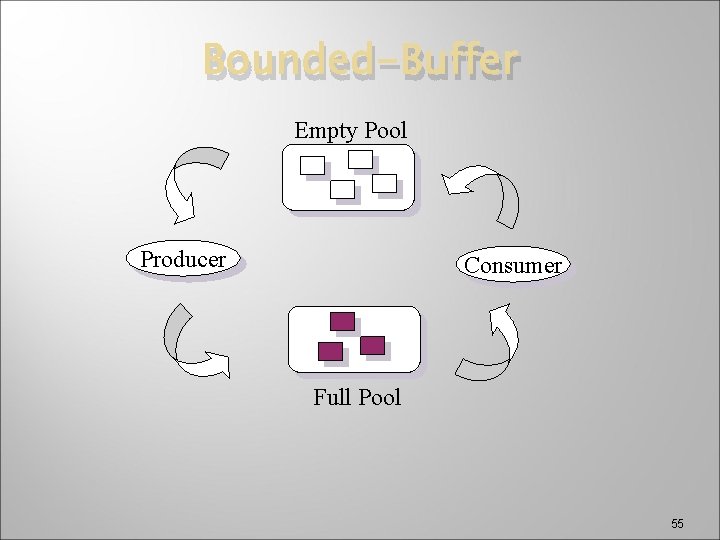

Bounded Buffer Problem

- Two processes executing concurrently using shared memory
- One produces information (the producer), the other uses the information (the consumer)
- The processes communicate through a fixed number of buffers
  - The producer obtains a buffer from the pool of empty buffers, fills it with info, and places it in the pool of full buffers
  - The consumer obtains a buffer from the pool of full buffers, gets the info, and places it in the pool of empty buffers
- Need to ensure that neither process uses the wrong pool

Bounded-Buffer

[Figure: a Producer and a Consumer connected through an Empty Pool and a Full Pool of buffers]

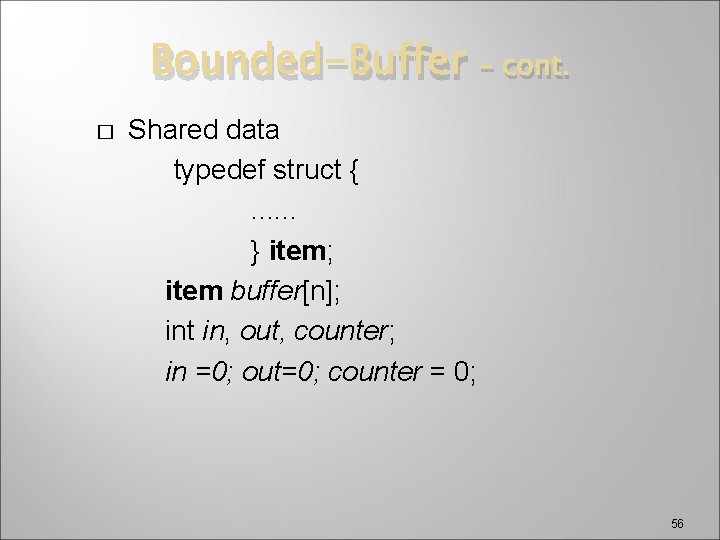

Bounded-Buffer (cont.)

Shared data:

    typedef struct {. . .} item;
    item buffer[n];
    int in, out, counter;
    in = 0;
    out = 0;
    counter = 0;

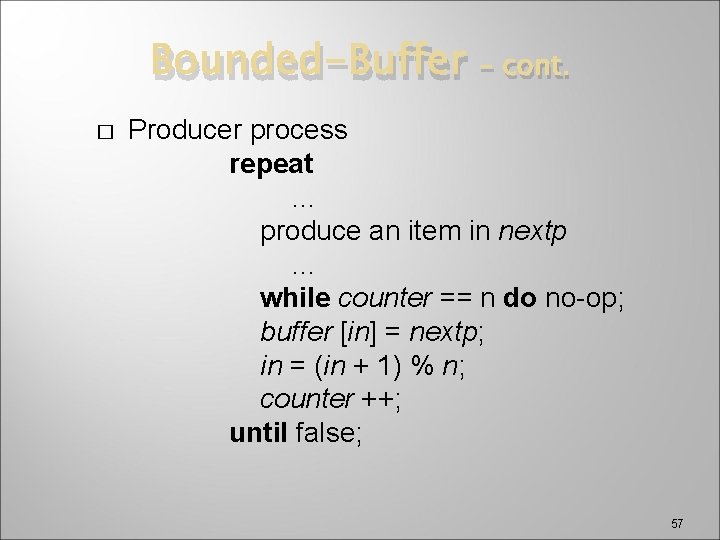

Bounded-Buffer (cont.)

Producer process:

    repeat
        …
        produce an item in nextp
        …
        while counter == n do no-op;
        buffer[in] = nextp;
        in = (in + 1) % n;
        counter++;
    until false;

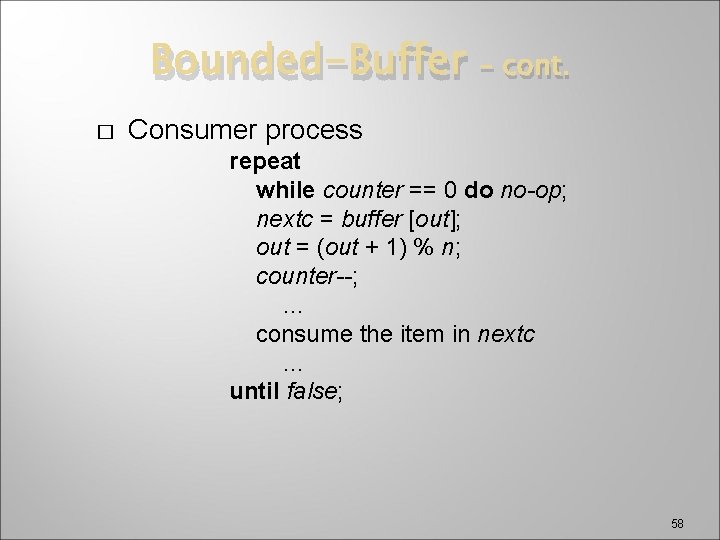

Bounded-Buffer (cont.)

Consumer process:

    repeat
        while counter == 0 do no-op;
        nextc = buffer[out];
        out = (out + 1) % n;
        counter--;
        …
        consume the item in nextc
        …
    until false;

Bounded-Buffer (cont.)

- If we let the producer and consumer processes run concurrently, we may get a wrong result
- Note the simple-thread program
  - Each thread is working properly
  - However, the total balance is not kept

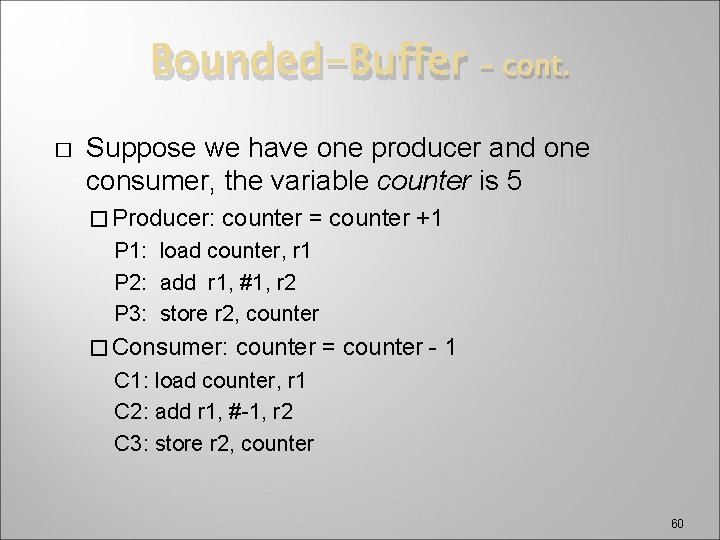

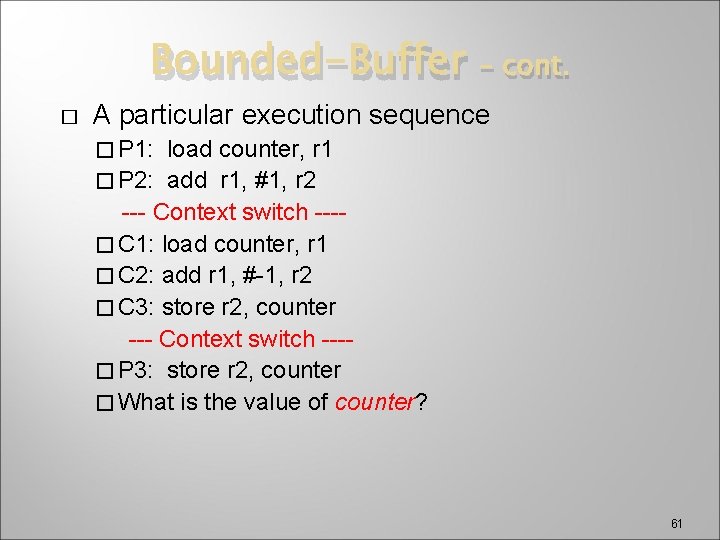

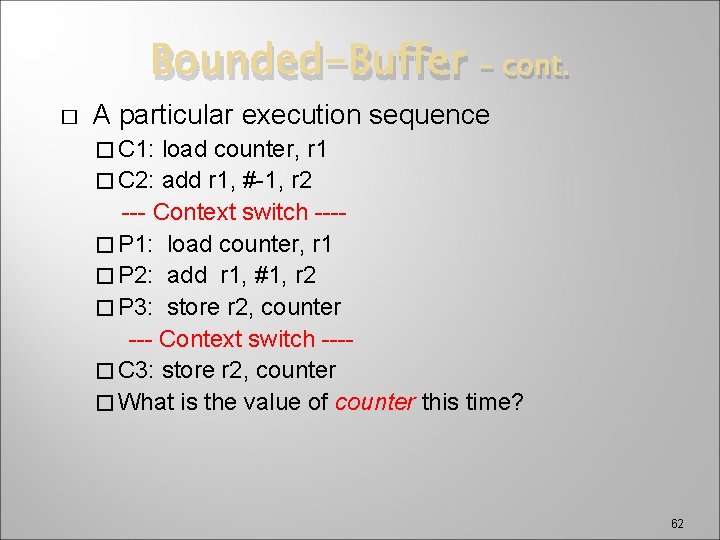

Bounded-Buffer (cont.)

- Suppose we have one producer and one consumer, and the variable counter is 5
- Producer: counter = counter + 1

    P1: load  counter, r1
    P2: add   r1, #1, r2
    P3: store r2, counter

- Consumer: counter = counter - 1

    C1: load  counter, r1
    C2: add   r1, #-1, r2
    C3: store r2, counter

Bounded-Buffer (cont.)

- A particular execution sequence

    P1: load  counter, r1
    P2: add   r1, #1, r2
    --- Context switch ---
    C1: load  counter, r1
    C2: add   r1, #-1, r2
    C3: store r2, counter
    --- Context switch ---
    P3: store r2, counter

- What is the value of counter?

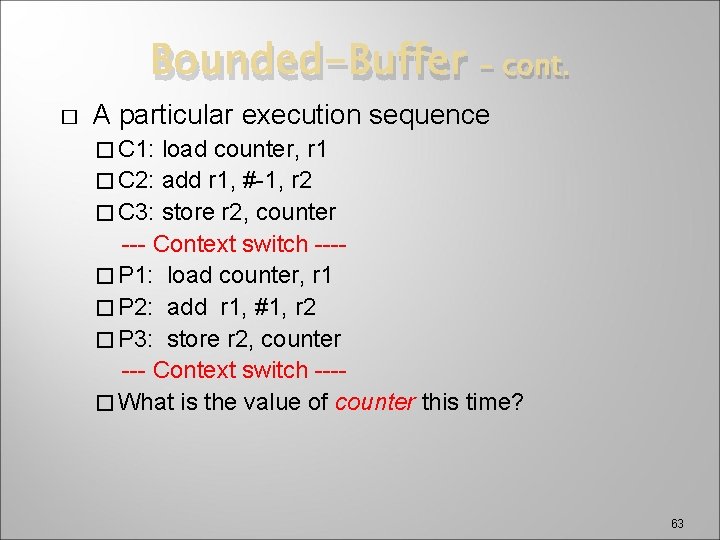

Bounded-Buffer (cont.)

- A particular execution sequence

    C1: load  counter, r1
    C2: add   r1, #-1, r2
    --- Context switch ---
    P1: load  counter, r1
    P2: add   r1, #1, r2
    P3: store r2, counter
    --- Context switch ---
    C3: store r2, counter

- What is the value of counter this time?

Bounded-Buffer (cont.)

- A particular execution sequence

    C1: load  counter, r1
    C2: add   r1, #-1, r2
    C3: store r2, counter
    --- Context switch ---
    P1: load  counter, r1
    P2: add   r1, #1, r2
    P3: store r2, counter
    --- Context switch ---

- What is the value of counter this time?
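The three execution sequences can be replayed mechanically to answer the questions on the slides. This sketch assumes that registers are saved and restored on every context switch, so the producer and the consumer each see their own r1 and r2; the function `replay` and the string labels are illustrative names.

```python
def replay(sequence, counter=5):
    """Execute the labeled instructions in order and return counter.

    Each label is 'P1'..'P3' (producer) or 'C1'..'C3' (consumer).
    """
    regs = {"P": {}, "C": {}}                # private registers per process
    for label in sequence:
        who, step = label[0], label[1]
        r = regs[who]
        if step == "1":                      # load counter, r1
            r["r1"] = counter
        elif step == "2":                    # add r1, #1 or #-1, r2
            r["r2"] = r["r1"] + (1 if who == "P" else -1)
        else:                                # store r2, counter
            counter = r["r2"]
    return counter


first = replay(["P1", "P2", "C1", "C2", "C3", "P3"])
second = replay(["C1", "C2", "P1", "P2", "P3", "C3"])
third = replay(["C1", "C2", "C3", "P1", "P2", "P3"])
print(first, second, third)
```

Starting from counter = 5, the first sequence ends at 6, the second at 4, and only the third, in which neither process is interrupted between its load and its store, ends at the correct value 5.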

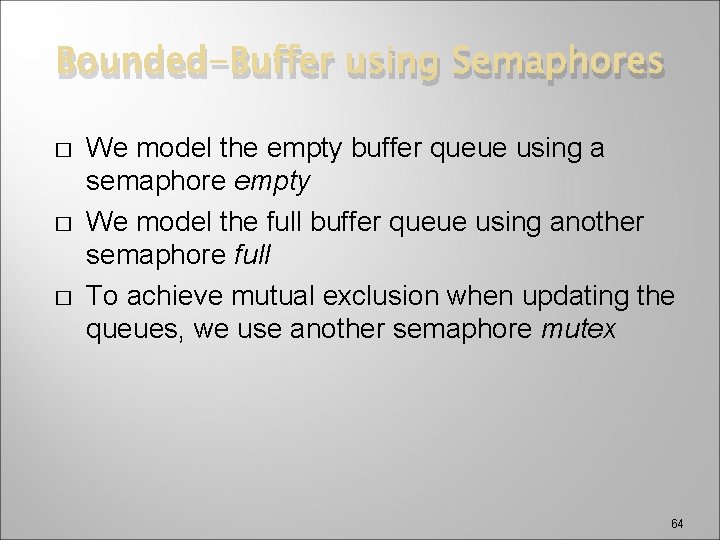

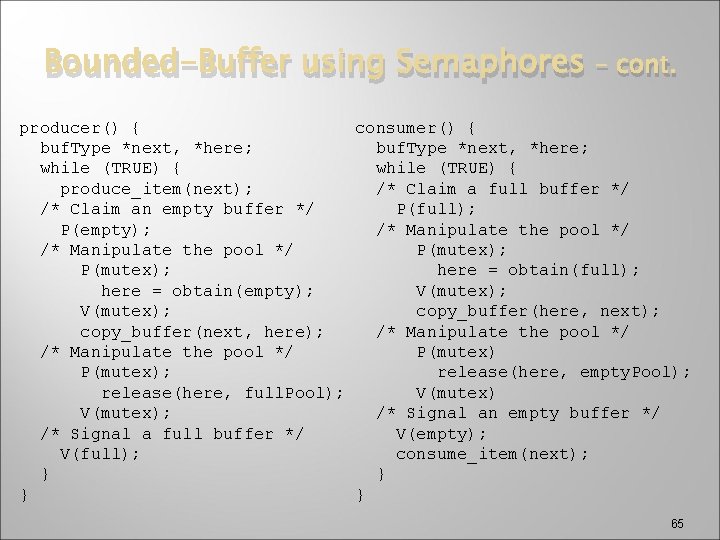

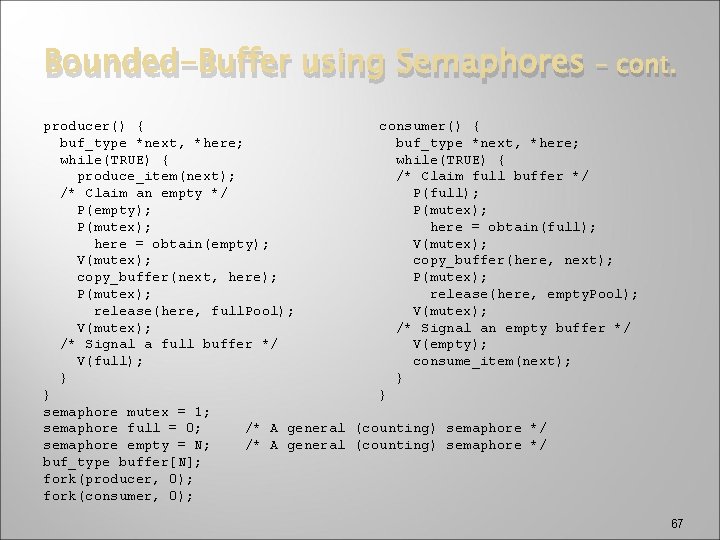

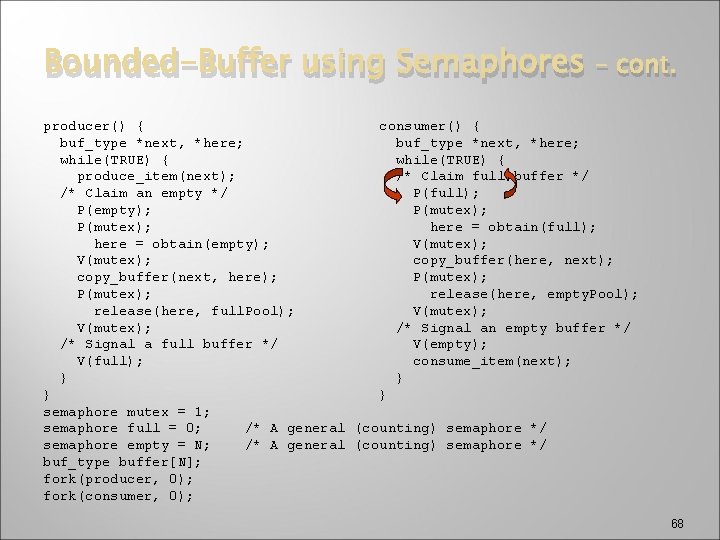

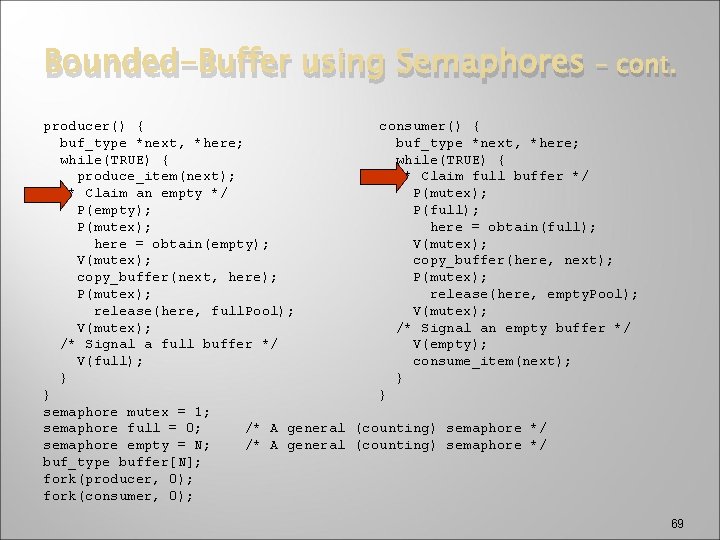

Bounded-Buffer using Semaphores

- We model the empty buffer queue using a semaphore empty
- We model the full buffer queue using another semaphore full
- To achieve mutual exclusion when updating the queues, we use another semaphore mutex

Bounded-Buffer using Semaphores (cont.)

    producer() {
        bufType *next, *here;
        while(TRUE) {
            produce_item(next);
            /* Claim an empty buffer */
            P(empty);
            /* Manipulate the pool */
            P(mutex);
            here = obtain(empty);
            V(mutex);
            copy_buffer(next, here);
            /* Manipulate the pool */
            P(mutex);
            release(here, fullPool);
            V(mutex);
            /* Signal a full buffer */
            V(full);
        }
    }

    consumer() {
        bufType *next, *here;
        while(TRUE) {
            /* Claim a full buffer */
            P(full);
            /* Manipulate the pool */
            P(mutex);
            here = obtain(full);
            V(mutex);
            copy_buffer(here, next);
            /* Manipulate the pool */
            P(mutex);
            release(here, emptyPool);
            V(mutex);
            /* Signal an empty buffer */
            V(empty);
            consume_item(next);
        }
    }

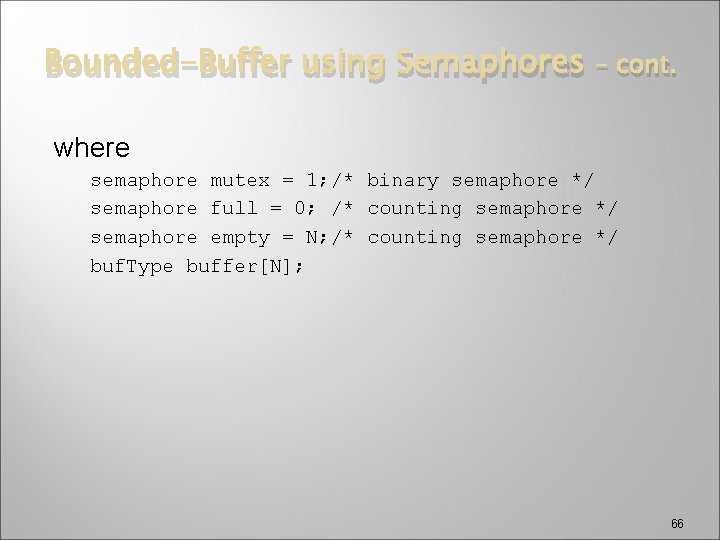

Bounded-Buffer using Semaphores (cont.)

The producer() and consumer() procedures are completed by the shared declarations and the calls that start the two processes:

    semaphore mutex = 1;    /* binary semaphore */
    semaphore full = 0;     /* a general (counting) semaphore */
    semaphore empty = N;    /* a general (counting) semaphore */
    bufType buffer[N];
    fork(producer, 0);
    fork(consumer, 0);

Bounded-Buffer using Semaphores (cont.)

The same program with the first two P operations of the consumer switched; the producer, the declarations, and the fork calls are unchanged:

    consumer() {
        bufType *next, *here;
        while(TRUE) {
            /* Claim full buffer */
            P(mutex);
            P(full);
            here = obtain(full);
            V(mutex);
            copy_buffer(here, next);
            P(mutex);
            release(here, emptyPool);
            V(mutex);
            /* Signal an empty buffer */
            V(empty);
            consume_item(next);
        }
    }

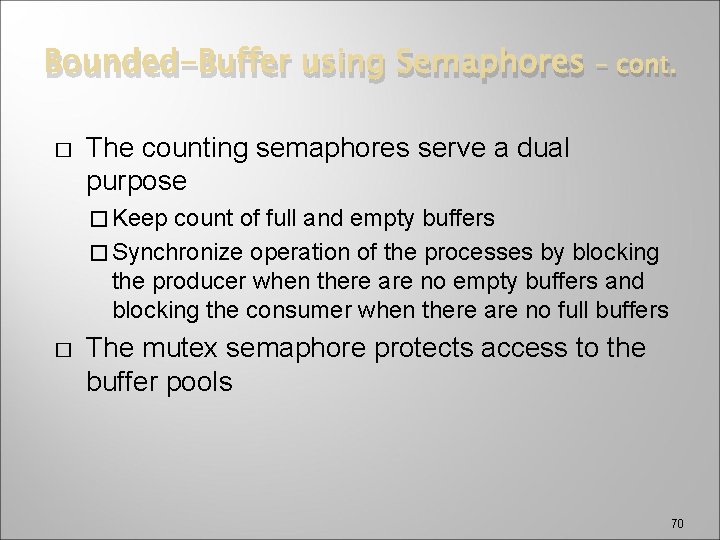

Bounded-Buffer using Semaphores (cont.)

- The counting semaphores serve a dual purpose
  - They keep count of the full and empty buffers
  - They synchronize the operation of the processes by blocking the producer when there are no empty buffers and blocking the consumer when there are no full buffers
- The mutex semaphore protects access to the buffer pools

Bounded-Buffer using Semaphores (cont.)

- The order of appearance of the P operations is significant
- If the first two P operations were switched, then either process could obtain the mutex semaphore and then block on the empty/full semaphore. The other process could not proceed, so the result is deadlock
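The semaphore-based producer and consumer can be tried out with threads. The following is a simplified sketch, not the slides' program: it assumes a single `deque` of filled slots in place of the separate empty and full buffer pools, uses `threading.Semaphore` for empty, full, and mutex, and introduces the hypothetical helper `run_bounded_buffer`, which also records the largest number of occupied slots it observed.

```python
import threading
from collections import deque


def run_bounded_buffer(items, n=3):
    """Pass items through an n-slot buffer; return (received, max_occupied)."""
    empty = threading.Semaphore(n)           # semaphore empty = N;
    full = threading.Semaphore(0)            # semaphore full = 0;
    mutex = threading.Semaphore(1)           # semaphore mutex = 1;
    pool = deque()                           # filled slots, oldest first
    received = []
    stats = {"max_occupied": 0}

    def producer():
        for item in items:
            empty.acquire()                  # P(empty): claim an empty slot
            mutex.acquire()                  # P(mutex): manipulate the pool
            pool.append(item)
            stats["max_occupied"] = max(stats["max_occupied"], len(pool))
            mutex.release()                  # V(mutex)
            full.release()                   # V(full): signal a full slot

    def consumer():
        for _ in range(len(items)):
            full.acquire()                   # P(full): claim a full slot
            mutex.acquire()                  # P(mutex): manipulate the pool
            item = pool.popleft()
            mutex.release()                  # V(mutex)
            empty.release()                  # V(empty): signal an empty slot
            received.append(item)            # consume the item

    threads = [threading.Thread(target=producer),
               threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received, stats["max_occupied"]


print(run_bounded_buffer(list(range(10))))
```

Note that each thread performs P on its counting semaphore before P(mutex). Reversing that order in either thread reintroduces the deadlock described above, because a thread could then block on the counting semaphore while holding mutex.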

The Readers-Writers Problem � A resource is shared among readers and writers �A reader process can share the resource with any other reader process but not with any writer process � A writer process requires exclusive access to the resource whenever it acquires any access to the resource 72

Readers-Writers Problem Writers Readers 73

Readers-Writers Problem (2) Reader Reader Writer Writer Shared Resource 74

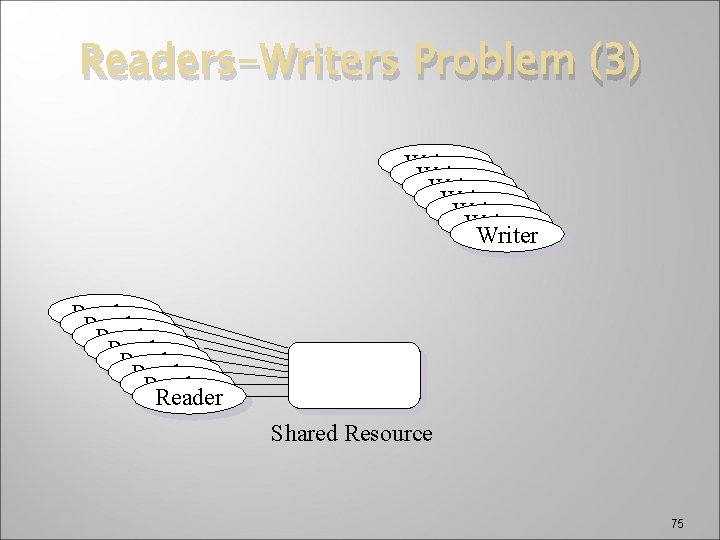

Readers-Writers Problem (3) Writer Writer Reader Reader Shared Resource 75

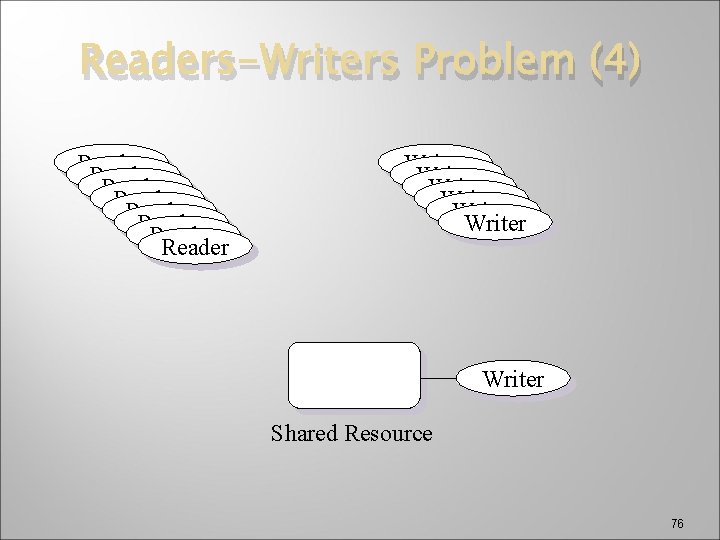

Readers-Writers Problem (4) Reader Reader Writer Writer Shared Resource 76

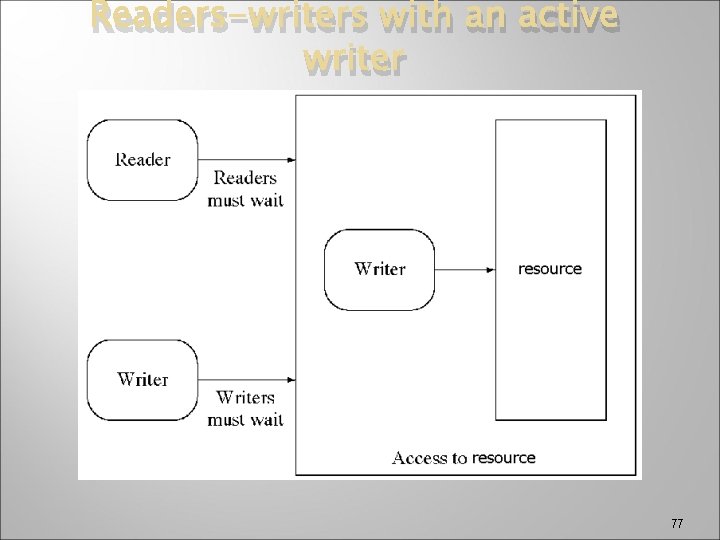

Readers-writers with an active writer 77

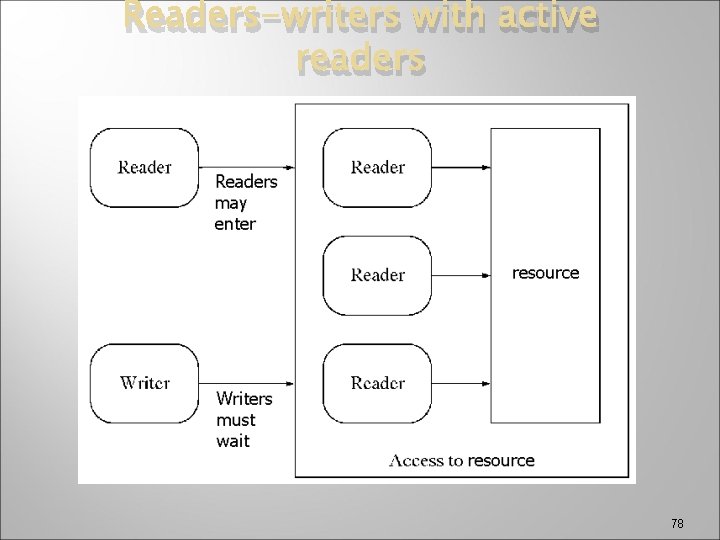

Readers-writers with active readers 78

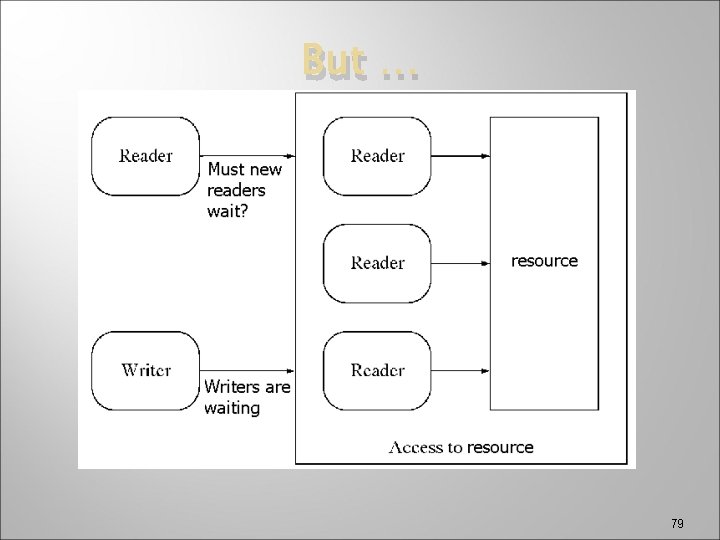

But … 79

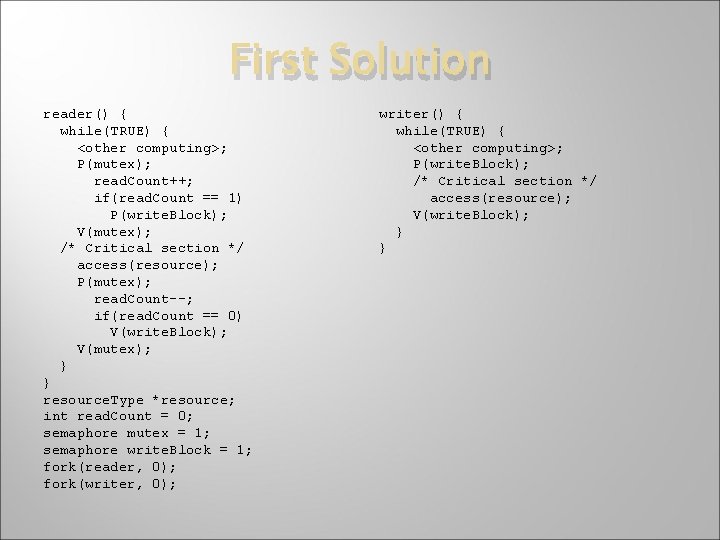

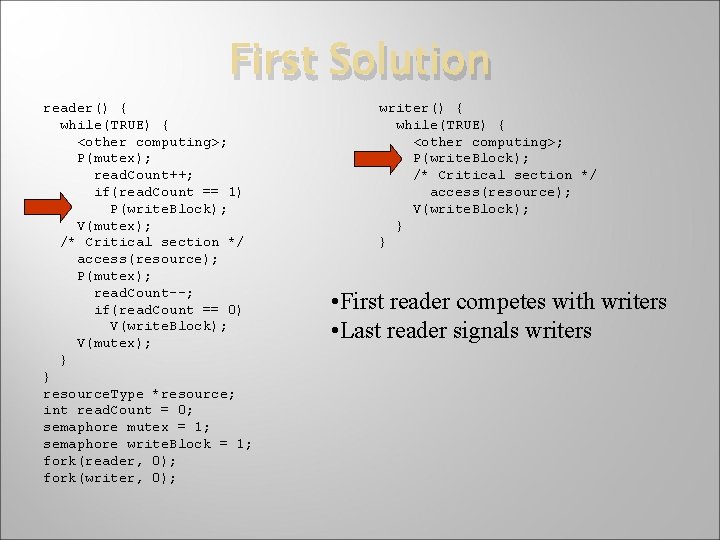

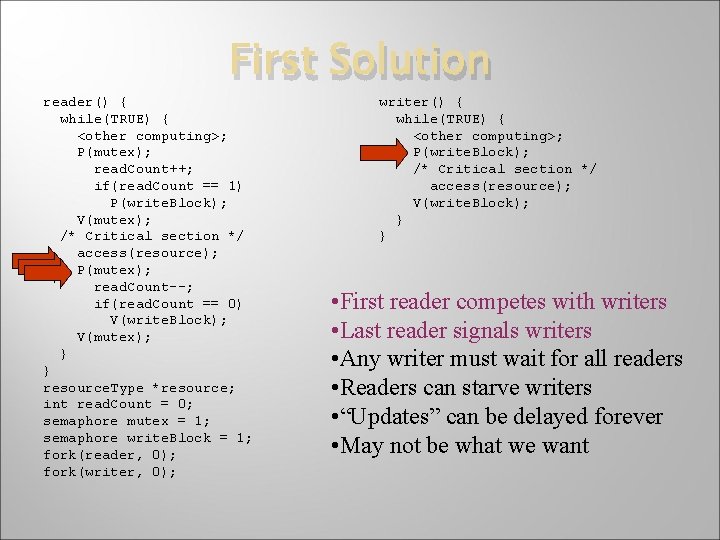

First Solution

    reader() {
        while(TRUE) {
            <other computing>;
            P(mutex);
            readCount++;
            if(readCount == 1)
                P(writeBlock);
            V(mutex);
            /* Critical section */
            access(resource);
            P(mutex);
            readCount--;
            if(readCount == 0)
                V(writeBlock);
            V(mutex);
        }
    }

    writer() {
        while(TRUE) {
            <other computing>;
            P(writeBlock);
            /* Critical section */
            access(resource);
            V(writeBlock);
        }
    }

    resourceType *resource;
    int readCount = 0;
    semaphore mutex = 1;
    semaphore writeBlock = 1;
    fork(reader, 0);
    fork(writer, 0);

- The first reader competes with writers
- The last reader signals writers
- Any writer must wait for all readers
- Readers can starve writers
- "Updates" can be delayed forever
- May not be what we want
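The first readers-writers solution can be run as a small experiment. This is a sketch under assumptions that go beyond the slides: the shared resource is modeled as two counters that a writer always increments together, a reader reports a "torn" read if it ever sees them differ, loops are finite so the program terminates, and `run_readers_writers` is a hypothetical helper. `threading.Semaphore` is used because it may be released by a different thread than the one that acquired it, which the last-reader logic requires.

```python
import threading


def run_readers_writers(n_readers=4, rounds=50):
    """Return (final value written, number of inconsistent reads seen)."""
    mutex = threading.Semaphore(1)           # protects read_count
    write_block = threading.Semaphore(1)     # held by a writer or by readers
    state = {"read_count": 0, "a": 0, "b": 0}
    torn = []

    def reader():
        for _ in range(rounds):
            mutex.acquire()                  # P(mutex)
            state["read_count"] += 1
            if state["read_count"] == 1:
                write_block.acquire()        # first reader competes with writers
            mutex.release()                  # V(mutex)
            if state["a"] != state["b"]:     # critical section: read
                torn.append((state["a"], state["b"]))
            mutex.acquire()                  # P(mutex)
            state["read_count"] -= 1
            if state["read_count"] == 0:
                write_block.release()        # last reader signals writers
            mutex.release()                  # V(mutex)

    def writer():
        for _ in range(rounds):
            write_block.acquire()            # P(writeBlock)
            state["a"] += 1                  # critical section: update
            state["b"] += 1
            write_block.release()            # V(writeBlock)

    threads = [threading.Thread(target=reader) for _ in range(n_readers)]
    threads.append(threading.Thread(target=writer))
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return state["a"], len(torn)


print(run_readers_writers())
```

The experiment checks exclusion only. It does not exhibit writer starvation, which needs a continuous stream of overlapping readers rather than a fixed number of rounds.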

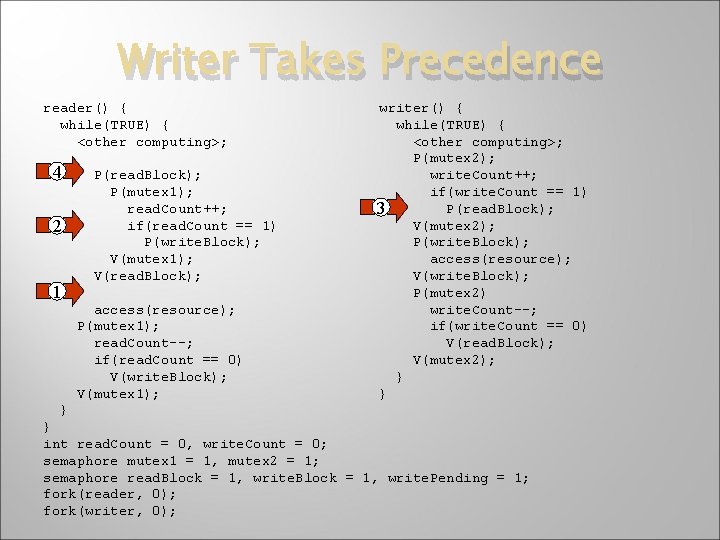

Writer Takes Precedence

    reader() {
        while(TRUE) {
            <other computing>;
            P(readBlock);
            P(mutex1);
            readCount++;
            if(readCount == 1)
                P(writeBlock);
            V(mutex1);
            V(readBlock);
            access(resource);
            P(mutex1);
            readCount--;
            if(readCount == 0)
                V(writeBlock);
            V(mutex1);
        }
    }

    writer() {
        while(TRUE) {
            <other computing>;
            P(mutex2);
            writeCount++;
            if(writeCount == 1)
                P(readBlock);
            V(mutex2);
            P(writeBlock);
            access(resource);
            V(writeBlock);
            P(mutex2);
            writeCount--;
            if(writeCount == 0)
                V(readBlock);
            V(mutex2);
        }
    }

    int readCount = 0, writeCount = 0;
    semaphore mutex1 = 1, mutex2 = 1;
    semaphore readBlock = 1, writePending = 1, writeBlock = 1;
    fork(reader, 0);
    fork(writer, 0);

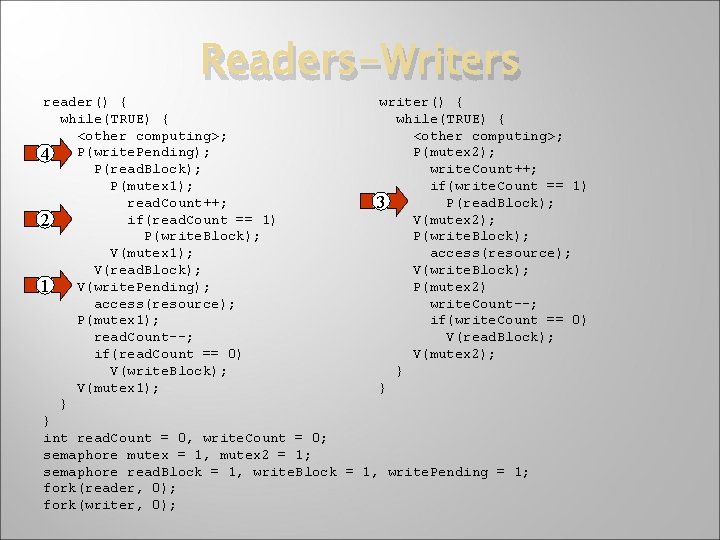

Readers-Writers

    reader() {
        while(TRUE) {
            <other computing>;
            P(writePending);
            P(readBlock);
            P(mutex1);
            readCount++;
            if(readCount == 1)
                P(writeBlock);
            V(mutex1);
            V(readBlock);
            V(writePending);
            access(resource);
            P(mutex1);
            readCount--;
            if(readCount == 0)
                V(writeBlock);
            V(mutex1);
        }
    }

    writer() {
        while(TRUE) {
            <other computing>;
            P(mutex2);
            writeCount++;
            if(writeCount == 1)
                P(readBlock);
            V(mutex2);
            P(writeBlock);
            access(resource);
            V(writeBlock);
            P(mutex2);
            writeCount--;
            if(writeCount == 0)
                V(readBlock);
            V(mutex2);
        }
    }

    int readCount = 0, writeCount = 0;
    semaphore mutex1 = 1, mutex2 = 1;
    semaphore readBlock = 1, writePending = 1, writeBlock = 1;
    fork(reader, 0);
    fork(writer, 0);

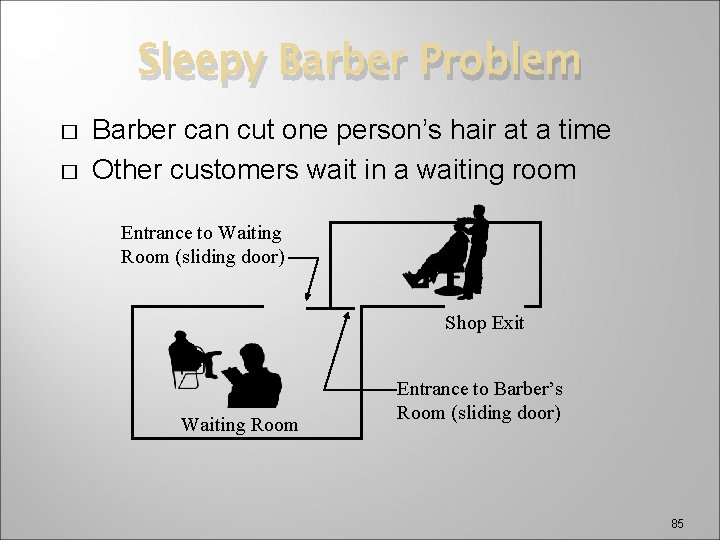

Sleepy Barber Problem

- The barber can cut one person's hair at a time
- Other customers wait in a waiting room

[Figure: shop layout showing the entrance to the waiting room (sliding door), the waiting room, the entrance to the barber's room (sliding door), and the shop exit]

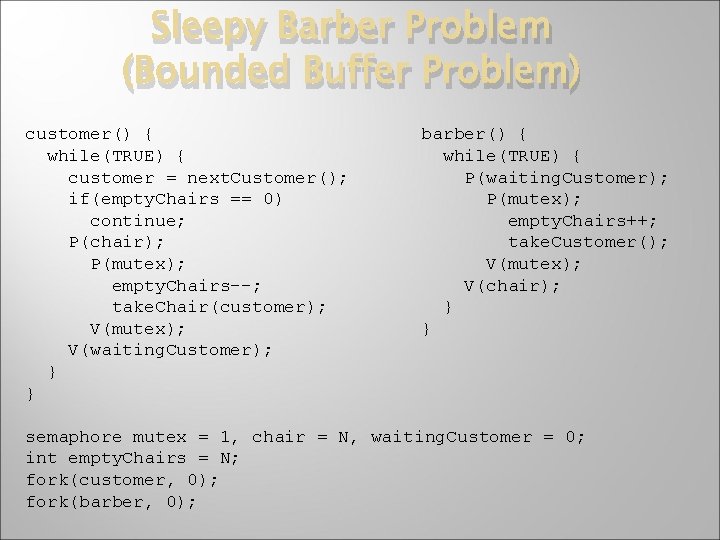

Sleepy Barber Problem (Bounded Buffer Problem)

    customer() {
        while(TRUE) {
            customer = nextCustomer();
            if(emptyChairs == 0)
                continue;
            P(chair);
            P(mutex);
            emptyChairs--;
            takeChair(customer);
            V(mutex);
            V(waitingCustomer);
        }
    }

    barber() {
        while(TRUE) {
            P(waitingCustomer);
            P(mutex);
            emptyChairs++;
            takeCustomer();
            V(mutex);
            V(chair);
        }
    }

    semaphore mutex = 1, chair = N, waitingCustomer = 0;
    int emptyChairs = N;
    fork(customer, 0);
    fork(barber, 0);

Dining-Philosophers Problem

    while(TRUE) {
        think();
        eat();
    }

- Shared data

    semaphore chopstick[5];    (each initially 1)

Dining-Philosophers Problem (cont.)

- Philosopher i:

    repeat
        P(chopstick[i]);
        P(chopstick[(i+1) mod 5]);
        …
        eat
        …
        V(chopstick[i]);
        V(chopstick[(i+1) mod 5]);
        …
        think
        …
    until false;

Dining-Philosophers Problem (cont.)

- Problem with this solution
  - If all philosophers pick up the chopstick on their right at the same time, then deadlock occurs and they all starve
- In the next chapter we will look at techniques to solve this problem

Cigarette Smokers' Problem

- Three smokers (processes)
- Each wishes to use tobacco, papers, and matches
  - Each has an unlimited supply of one
  - Only need the three resources periodically
  - Must have all at once
- One agent (a fourth process)
  - Has an unlimited amount of all three
- 3 processes sharing 3 resources
  - Solvable, but difficult

Cigarette Smokers' Problem (cont.)

- Due to S. S. Patil in 1971
- The agent and smokers share a table. The agent randomly generates two ingredients and places these on the table. Once the ingredients are taken from the table by one smoker, the agent supplies another two. Meanwhile, each smoker waits for the two ingredients it is missing. The smoker who has the remaining ingredient then makes and smokes a cigarette for a while, and goes back to the table to wait for the next ingredients.

Cigarette Smokers' Problem (cont.)

- The problem here is that if semaphores are used for each ingredient, then deadlock will occur
  - One smoker has tobacco; a second has matches
  - The agent puts matches and paper on the table
  - The smoker with matches grabs the paper, while the smoker with tobacco gets the matches
  - Since neither smoker has both ingredients, the agent is not signaled
  - DEADLOCK!

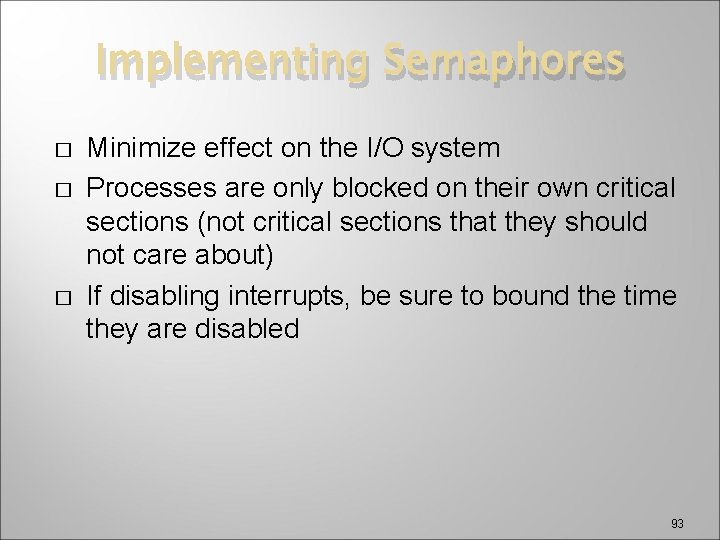

Implementing Semaphores

- Minimize the effect on the I/O system
- Processes are only blocked on their own critical sections (not on critical sections that they should not care about)
- If disabling interrupts, be sure to bound the time they are disabled

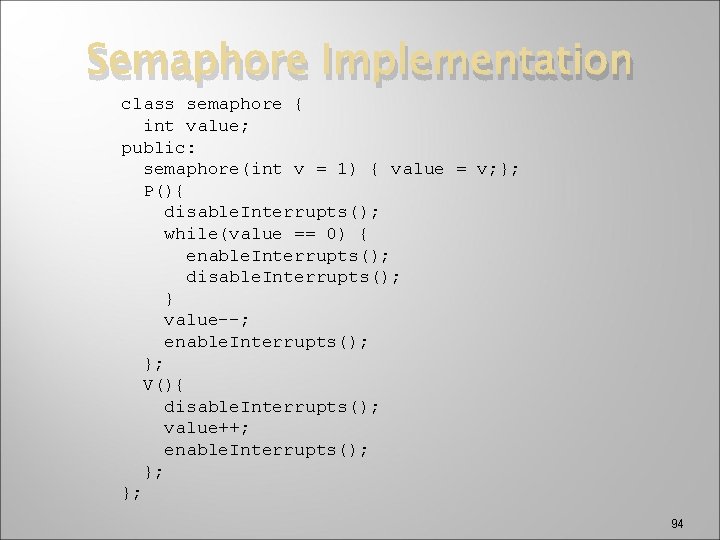

Semaphore Implementation

    class semaphore {
        int value;
    public:
        semaphore(int v = 1) { value = v; }
        void P() {
            disableInterrupts();
            while(value == 0) {
                /* Let interrupts occur while waiting */
                enableInterrupts();
                disableInterrupts();
            }
            value--;
            enableInterrupts();
        }
        void V() {
            disableInterrupts();
            value++;
            enableInterrupts();
        }
    };

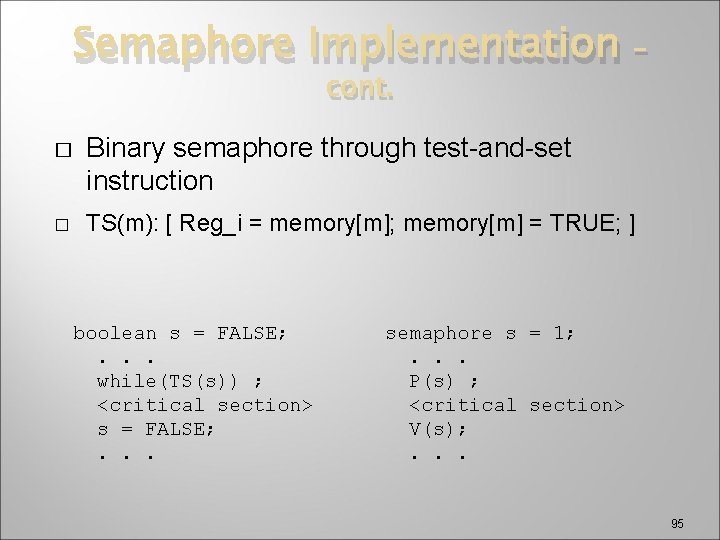

Semaphore Implementation (cont.)

- Binary semaphore through the test-and-set instruction

    TS(m): [Reg_i = memory[m]; memory[m] = TRUE;]

    Using test-and-set               Equivalent semaphore code
    boolean s = FALSE;               semaphore s = 1;
    . . .                            . . .
    while(TS(s)) ;                   P(s);
    <critical section>               <critical section>
    s = FALSE;                       V(s);
    . . .                            . . .

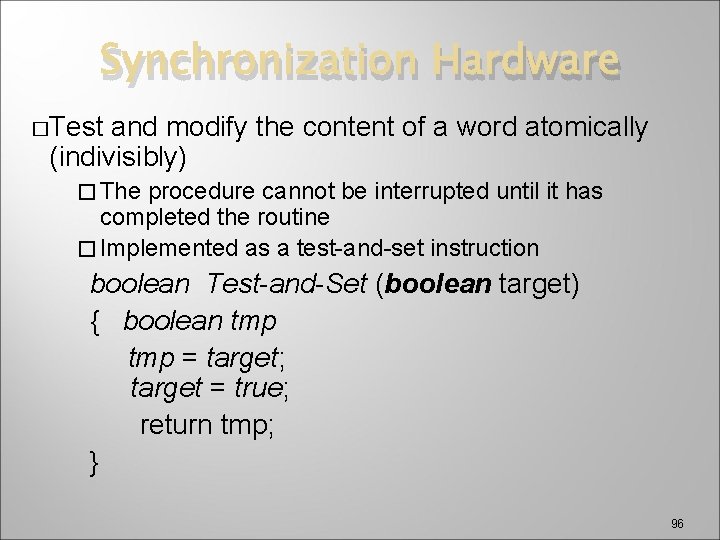

Synchronization Hardware

- Test and modify the content of a word atomically (indivisibly)
  - The procedure cannot be interrupted until it has completed the routine
  - Implemented as a test-and-set instruction; target is passed by reference so that the caller's word is modified

    boolean Test-and-Set(boolean &target) {
        boolean tmp = target;
        target = true;
        return tmp;
    }

Test and Set Instruction (cont.)

- TS(m): [Reg_i = memory[m]; memory[m] = TRUE;]

[Figure: (a) Before executing TS: memory location m in primary memory holds FALSE; the data register R3 and the condition code (CC) register hold earlier contents. (b) After executing TS: R3 holds FALSE, the CC register shows =0, and m holds TRUE.]

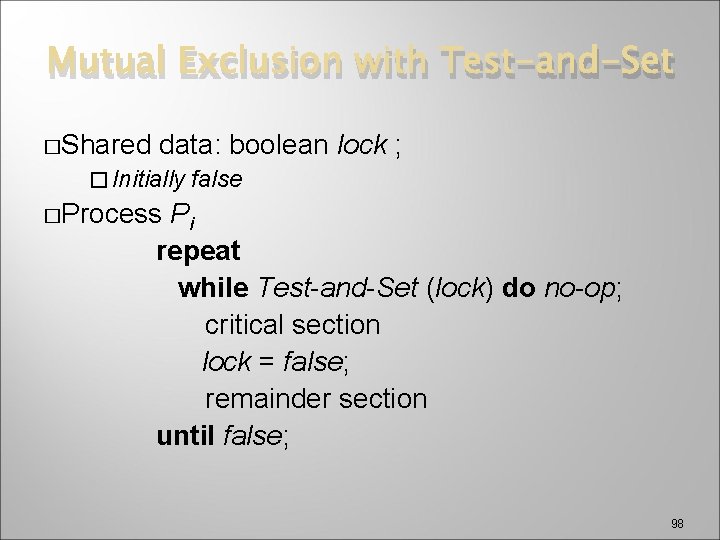

Mutual Exclusion with Test-and-Set

- Shared data: boolean lock;
  - Initially false
- Process Pi

    repeat
        while Test-and-Set(lock) do no-op;
        critical section
        lock = false;
        remainder section
    until false;
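The test-and-set spin lock can be imitated in software to see the entry and exit sections at work. This sketch makes several assumptions that are not in the slides: an internal `threading.Lock` stands in for the hardware guarantee that the instruction is indivisible, `time.sleep(0)` replaces the no-op so a spinning thread gives up the CPU, and the names `TestAndSetWord` and `run_spinlock` are hypothetical.

```python
import threading
import time


class TestAndSetWord:
    """A boolean memory word with an indivisible test-and-set operation."""

    def __init__(self):
        self._value = False                  # initially false
        self._atomic = threading.Lock()      # models hardware atomicity only

    def test_and_set(self):
        """Return the old value and set the word to True in one step."""
        with self._atomic:
            old = self._value
            self._value = True
            return old

    def clear(self):
        """lock = false;"""
        with self._atomic:
            self._value = False


def run_spinlock(n_threads=3, iterations=200):
    """Increment a shared counter under the spin lock; return the total."""
    lock = TestAndSetWord()
    state = {"counter": 0}

    def worker():
        for _ in range(iterations):
            while lock.test_and_set():       # entry section: spin
                time.sleep(0)                # assumed stand-in for no-op
            state["counter"] += 1            # critical section
            lock.clear()                     # exit section

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return state["counter"]


print(run_spinlock())
```

Unlike the plain lock variable of Algorithm 4, the test and the set cannot be separated by a context switch here, so exactly one spinning thread sees the old value False and enters.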

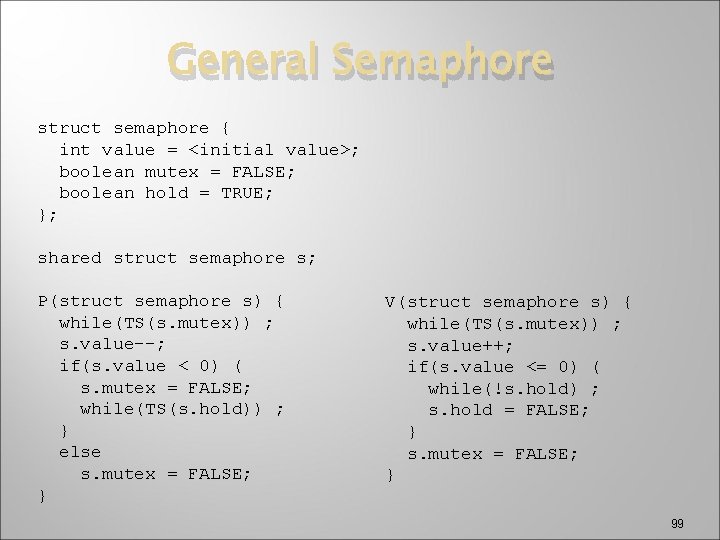

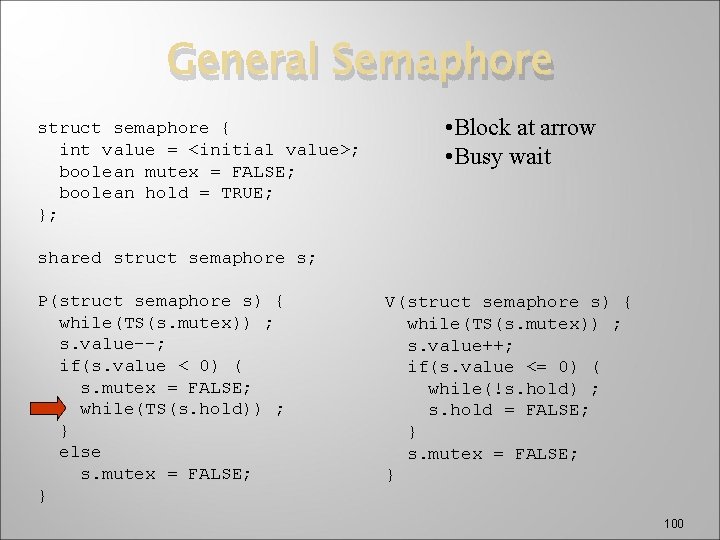

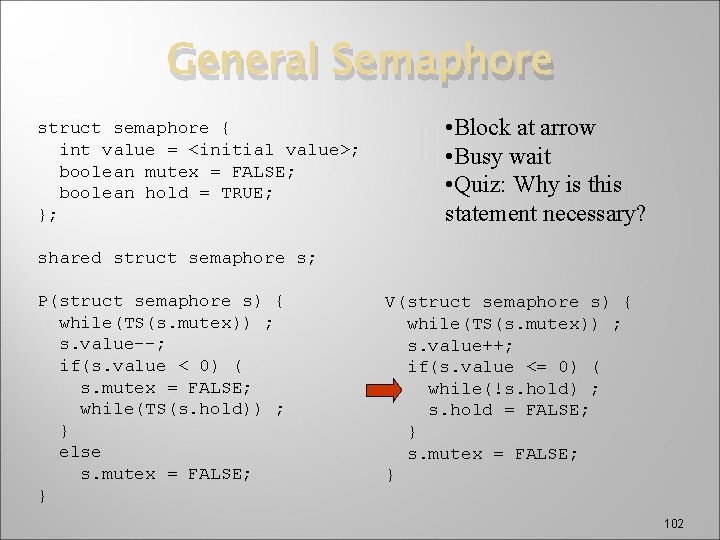

General Semaphore struct semaphore { int value = <initial value>; boolean mutex = FALSE; boolean hold = TRUE; }; shared struct semaphore s; P(struct semaphore s) { while(TS(s. mutex)) ; s. value--; if(s. value < 0) ( s. mutex = FALSE; while(TS(s. hold)) ; } else s. mutex = FALSE; } V(struct semaphore s) { while(TS(s. mutex)) ; s. value++; if(s. value <= 0) ( while(!s. hold) ; s. hold = FALSE; } s. mutex = FALSE; } 99

General Semaphore (same struct, P, and V code as the previous slide, with two annotations)

• Block at arrow: the arrow on the slide marks the busy-waiting statement while(TS(s.hold)) ; in P
• Busy wait: a process blocked there keeps spinning until a V operation sets s.hold to FALSE

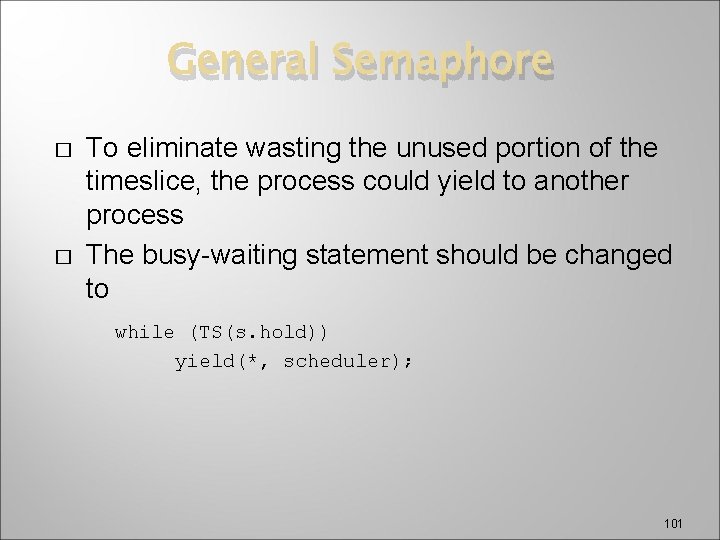

General Semaphore

• To avoid wasting the unused portion of its timeslice, the waiting process could yield to another process
• The busy-waiting statement should be changed to

    while(TS(s.hold)) yield(*, scheduler);

General Semaphore (same struct, P, and V code again)

• Block at arrow
• Busy wait
• Quiz: Why is the statement while(!s.hold) ; in V necessary?

General Semaphore

• A race condition can occur in which a thread is still blocked in the P procedure, yet the V procedure finds s.hold already FALSE, because an earlier V has released a waiter that has not yet executed its TS(s.hold)
• This happens when consecutive V operations occur before any blocked thread completes its P operation
• Without the while, the second V would simply set s.hold to FALSE again, and the result of one of the V operations could be lost
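The general semaphore, including the yield and the `while(!s.hold)` guard discussed above, can be sketched in C++ as follows. This is a translation under assumptions, not the slides' own implementation: `TS` is modelled with `std::atomic<bool>::exchange`, `std::this_thread::yield()` stands in for `yield(*, scheduler)`, and `GeneralSemaphore` and `max_inside` are hypothetical names.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// TS(m) sketched with an atomic exchange: returns the old value, leaves m TRUE.
inline bool TS(std::atomic<bool>& m) { return m.exchange(true); }

struct GeneralSemaphore {
    int value;                       // protected by mutex
    std::atomic<bool> mutex{false};  // FALSE = free
    std::atomic<bool> hold{true};    // FALSE = one wakeup is pending

    explicit GeneralSemaphore(int initial) : value(initial) {}

    void P() {
        while (TS(mutex)) std::this_thread::yield();
        --value;
        if (value < 0) {
            mutex.store(false);
            while (TS(hold)) std::this_thread::yield();  // block until a V arrives
        } else {
            mutex.store(false);
        }
    }

    void V() {
        while (TS(mutex)) std::this_thread::yield();
        ++value;
        if (value <= 0) {
            // Wait until the previous wakeup has been consumed; otherwise
            // this V would overwrite it and one wakeup would be lost.
            while (!hold.load()) std::this_thread::yield();
            hold.store(false);
        }
        mutex.store(false);
    }
};

// Hypothetical check: the largest number of threads seen between P and V.
int max_inside(int initial, int threads, int rounds) {
    GeneralSemaphore s(initial);
    std::atomic<int> inside{0}, peak{0};
    std::vector<std::thread> pool;
    for (int t = 0; t < threads; ++t) {
        pool.emplace_back([&] {
            for (int i = 0; i < rounds; ++i) {
                s.P();
                int now = ++inside;
                int seen = peak.load();
                while (now > seen && !peak.compare_exchange_weak(seen, now)) {}
                --inside;
                s.V();
            }
        });
    }
    for (auto& th : pool) th.join();
    return peak.load();
}
```

With an initial value of 1 the peak should never exceed 1; with an initial value of 2, two threads can release back to back, which is the situation the `while(!hold)` guard is there for.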

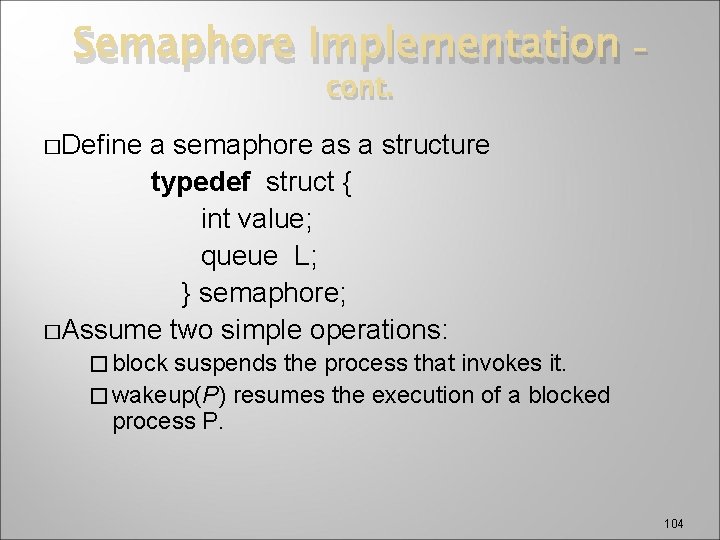

Semaphore Implementation – cont.

• Define a semaphore as a structure

    typedef struct {
        int value;
        queue L;
    } semaphore;

• Assume two simple operations:
  – block suspends the process that invokes it.
  – wakeup(P) resumes the execution of a blocked process P.

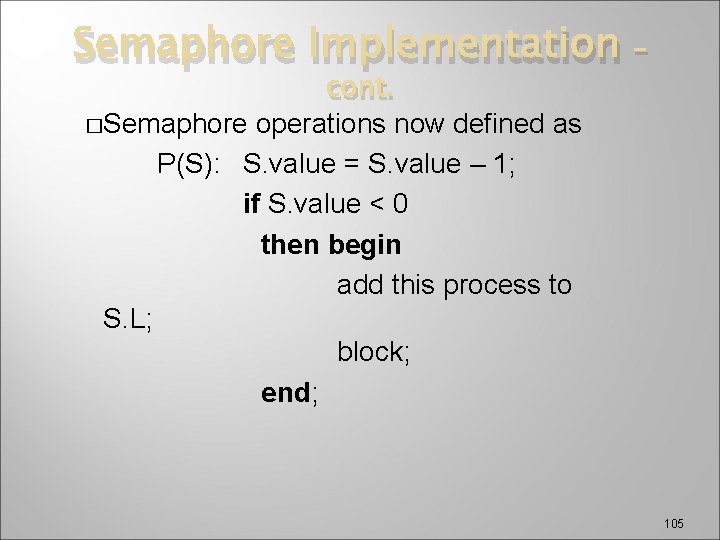

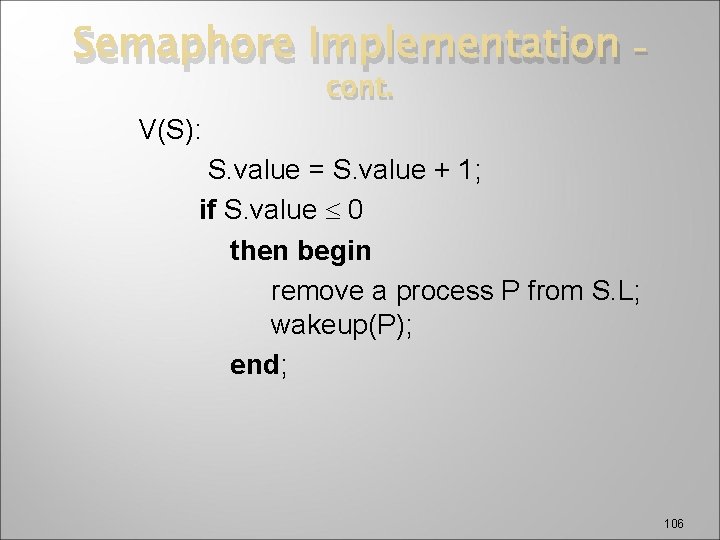

Semaphore Implementation – cont.

• Semaphore operations now defined as

    P(S):
        S.value = S.value - 1;
        if S.value < 0 then begin
            add this process to S.L;
            block;
        end;

Semaphore Implementation – cont.

    V(S):
        S.value = S.value + 1;
        if S.value <= 0 then begin
            remove a process P from S.L;
            wakeup(P);
        end;
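A blocking semaphore along the lines of the P(S) and V(S) definitions can be sketched with a mutex and a condition variable. Several things here are assumptions rather than part of the slides: the condition variable's wait set stands in for the queue S.L (so wakeup order is not guaranteed to be FIFO), the `wakeups` counter is added to cope with spurious wakeups, and `BlockingSemaphore` and `count_with_semaphore` are hypothetical names.

```cpp
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

class BlockingSemaphore {
    int value;                   // a negative value counts the blocked threads
    int wakeups = 0;             // wakeups issued by V and not yet consumed
    std::mutex m;
    std::condition_variable cv;  // its wait set plays the role of S.L
public:
    explicit BlockingSemaphore(int initial) : value(initial) {}

    void P() {
        std::unique_lock<std::mutex> guard(m);
        --value;
        if (value < 0) {
            // "add this process to S.L; block;"
            cv.wait(guard, [this] { return wakeups > 0; });
            --wakeups;
        }
    }

    void V() {
        std::lock_guard<std::mutex> guard(m);
        ++value;
        if (value <= 0) {
            // "remove a process P from S.L; wakeup(P);"
            ++wakeups;
            cv.notify_one();
        }
    }
};

// Hypothetical driver: the semaphore guards a plain int counter.
int count_with_semaphore(int threads, int rounds) {
    BlockingSemaphore s(1);
    int counter = 0;
    std::vector<std::thread> pool;
    for (int t = 0; t < threads; ++t) {
        pool.emplace_back([&] {
            for (int i = 0; i < rounds; ++i) {
                s.P();
                ++counter;  // critical section
                s.V();
            }
        });
    }
    for (auto& th : pool) th.join();
    return counter;
}
```

Unlike the busy-waiting versions, a thread that finds the semaphore unavailable sleeps inside `cv.wait` and uses no CPU time until a V operation wakes it.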

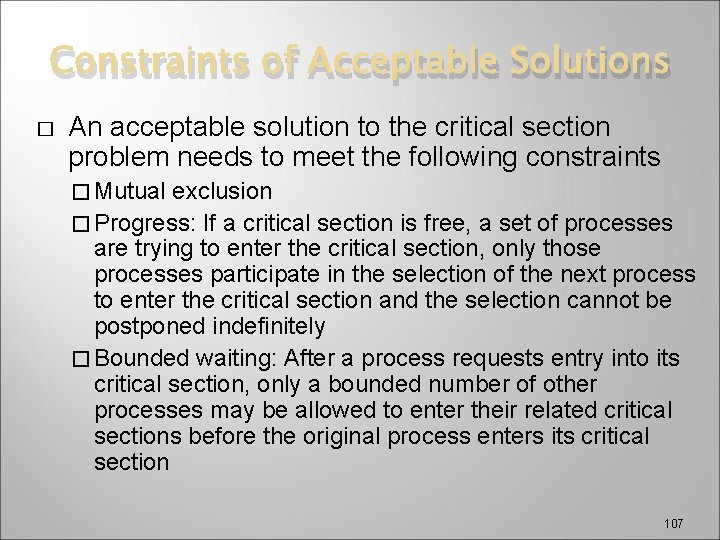

Constraints of Acceptable Solutions

• An acceptable solution to the critical section problem needs to meet the following constraints
  – Mutual exclusion
  – Progress: If a critical section is free and a set of processes is trying to enter it, only those processes participate in the selection of the next process to enter the critical section, and the selection cannot be postponed indefinitely
  – Bounded waiting: After a process requests entry into its critical section, only a bounded number of other processes may be allowed to enter their related critical sections before the original process enters its critical section

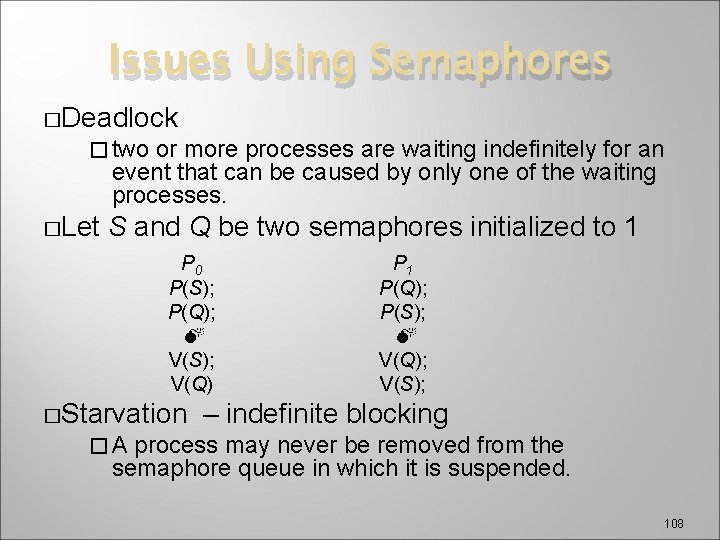

Issues Using Semaphores

• Deadlock – two or more processes are waiting indefinitely for an event that can be caused by only one of the waiting processes.
• Let S and Q be two semaphores initialized to 1

    P0          P1
    P(S);       P(Q);
    P(Q);       P(S);
    V(S);       V(Q);
    V(Q);       V(S);

• Starvation – indefinite blocking. A process may never be removed from the semaphore queue in which it is suspended.
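The P0/P1 interleaving that deadlocks can be reproduced without hanging the program by giving the second acquisition a timeout. The sketch below makes several assumptions: `std::timed_mutex` stands in for the binary semaphores S and Q, the 50 ms timeout is arbitrary, and `DeadlockReport` and `try_opposite_order` are hypothetical names. Two counters force the bad interleaving so the outcome does not depend on scheduling.

```cpp
#include <atomic>
#include <chrono>
#include <mutex>
#include <thread>

struct DeadlockReport {
    bool p0_got_second;  // did P0 obtain Q?
    bool p1_got_second;  // did P1 obtain S?
};

DeadlockReport try_opposite_order() {
    std::timed_mutex S, Q;         // stand-ins for semaphores initialized to 1
    std::atomic<int> holding{0};   // threads that hold their first semaphore
    std::atomic<int> finished{0};  // threads whose second attempt is over
    DeadlockReport report{false, false};

    auto process = [&](std::timed_mutex& first, std::timed_mutex& second,
                       bool& got_second) {
        first.lock();  // P(first)
        ++holding;
        // Force the bad interleaving: wait until both hold their first one.
        while (holding.load() < 2) std::this_thread::yield();
        // P(second), but give up after a timeout instead of waiting forever.
        got_second = second.try_lock_for(std::chrono::milliseconds(50));
        ++finished;
        // Keep holding `first` until both attempts are over.
        while (finished.load() < 2) std::this_thread::yield();
        if (got_second) second.unlock();
        first.unlock();  // V(first)
    };

    std::thread p0([&] { process(S, Q, report.p0_got_second); });
    std::thread p1([&] { process(Q, S, report.p1_got_second); });
    p0.join();
    p1.join();
    return report;
}
```

Both attempts fail, because each process waits for a semaphore that only the other one can release. Without the timeout, both would wait indefinitely.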

Issues Using Semaphores

• A semaphore value is a consumable resource
• The P operation can be considered a resource request operation
• A process/thread blocks if it requests a positive semaphore value but the semaphore is zero
  – When a process/thread encounters a zero-valued semaphore, it moves to the blocked state
• The V operation can be considered a resource release operation
  – A process/thread moves from blocked to ready when it detects a positive value of the semaphore

Active and Passive Semaphores

• There is a subtle problem related to semaphore implementation
• A process can dominate the semaphore
  – Performs V operation, but continues to execute
  – Performs another P operation before releasing the CPU
  – Called a passive implementation of V
• Bounded waiting may not be satisfied when the passive V operation is used, in which the implementation increments the semaphore with no opportunity for a context switch

Active and Passive Semaphores – cont.

• An active implementation calls the scheduler as part of the V operation
  – Changes the semantics of the semaphore!
• In contrast to a passive implementation, an active V implementation calls yield or a similar procedure after incrementing the semaphore, so that a context switch can occur
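The difference between the two V variants can be sketched with a single flag. This is an illustration under assumptions: `Semaphore` and `count_with` are hypothetical names, the semaphore uses the non-negative "block on zero" view of the value, and `std::this_thread::yield()` is only a hint to the operating system's scheduler, so it approximates "calls the scheduler" rather than guaranteeing a context switch.

```cpp
#include <condition_variable>
#include <mutex>
#include <thread>

class Semaphore {
    int value;
    std::mutex m;
    std::condition_variable cv;
    bool active;  // true: V yields after incrementing; false: passive V
public:
    Semaphore(int initial, bool active_v) : value(initial), active(active_v) {}

    void P() {
        std::unique_lock<std::mutex> guard(m);
        cv.wait(guard, [this] { return value > 0; });  // block while zero
        --value;
    }

    void V() {
        {
            std::lock_guard<std::mutex> guard(m);
            ++value;
        }
        cv.notify_one();
        // Active V: give a waiting thread the chance to run before this
        // thread can loop around and perform another P.
        if (active) std::this_thread::yield();
    }
};

// Hypothetical driver: two threads repeatedly do P, increment, V.
int count_with(bool active_v, int rounds) {
    Semaphore s(1, active_v);
    int counter = 0;
    auto body = [&] {
        for (int i = 0; i < rounds; ++i) {
            s.P();
            ++counter;  // critical section
            s.V();
        }
    };
    std::thread a(body), b(body);
    a.join();
    b.join();
    return counter;
}
```

Both variants preserve mutual exclusion, so the final count is the same. What changes is how likely one thread is to reacquire the semaphore immediately after releasing it.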
