Migratory File Services for Batch-Pipelined Workloads John Bent

Migratory File Services for Batch-Pipelined Workloads

John Bent, Douglas Thain, Andrea Arpaci-Dusseau, Remzi Arpaci-Dusseau, and Miron Livny

WiND and Condor Projects

6 May 2003

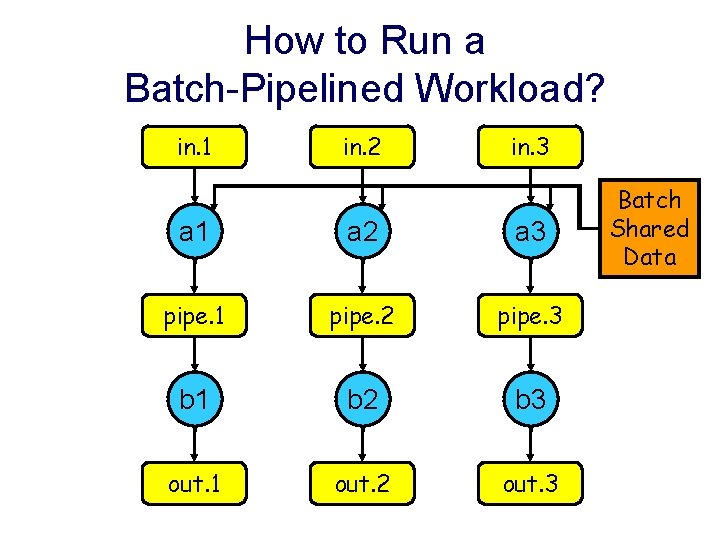

How to Run a Batch-Pipelined Workload?

[Diagram: three parallel pipelines. Labels: inputs in.1 to in.3, jobs a1 to a3, pipe.1 to pipe.3, jobs b1 to b3, outputs out.1 to out.3, and "Batch Shared Data".]

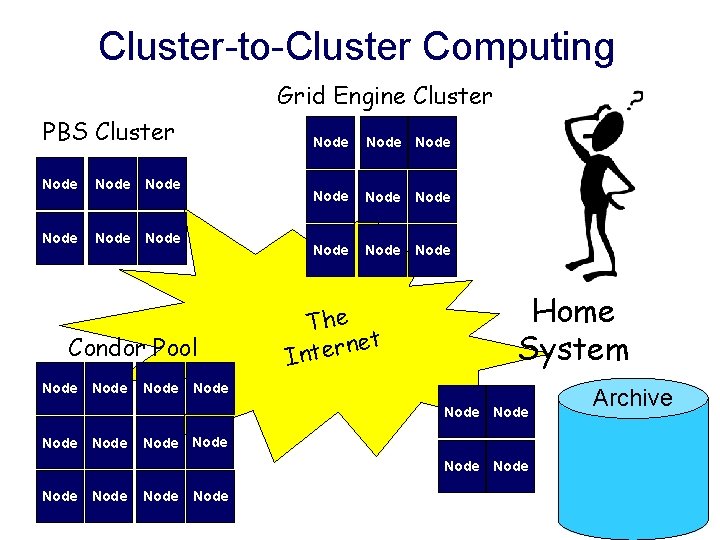

Cluster-to-Cluster Computing

[Diagram: a home system and an archive connected through the Internet to a Grid Engine cluster, a PBS cluster, and a Condor pool, each made up of nodes.]

How to Run a Batch-Pipelined Workload?

- “Remote I/O”
  - Submit jobs to a remote batch system.
  - Let all I/O come directly home.
  - Inefficient if re-use is common.
  - (But perfect if no data sharing!)
- “FTP-Net”
  - User finds remote clusters.
  - Manually stages data in.
  - Submits jobs, deals with failures.
  - Pulls data out.
  - Lather, rinse, repeat.
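The trade-off between the two approaches can be made concrete with a toy model of wide-area traffic. This is only a sketch: the function names and the sample sizes (100 jobs, 1 GB of shared batch data, 10 MB of private data per job) are hypothetical and do not come from the slides.

```python
def wan_bytes_remote_io(jobs, batch_bytes, private_bytes):
    """Remote I/O: every job pulls the shared batch data and its own data from home."""
    return jobs * (batch_bytes + private_bytes)


def wan_bytes_staged(jobs, batch_bytes, private_bytes, clusters=1):
    """Staging: shared batch data crosses the wide area once per cluster,
    private data still crosses once per job."""
    return clusters * batch_bytes + jobs * private_bytes


# Hypothetical workload, chosen only to illustrate the effect of re-use.
JOBS = 100
BATCH = 1_000_000_000      # bytes of batch-shared input
PRIVATE = 10_000_000       # bytes of per-job input

remote = wan_bytes_remote_io(JOBS, BATCH, PRIVATE)
staged = wan_bytes_staged(JOBS, BATCH, PRIVATE)
print(f"remote I/O: {remote:,} bytes, staged: {staged:,} bytes")
```

In this model the two approaches move the same number of bytes when `batch_bytes` is zero, which matches the remark that remote I/O is fine when nothing is shared.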

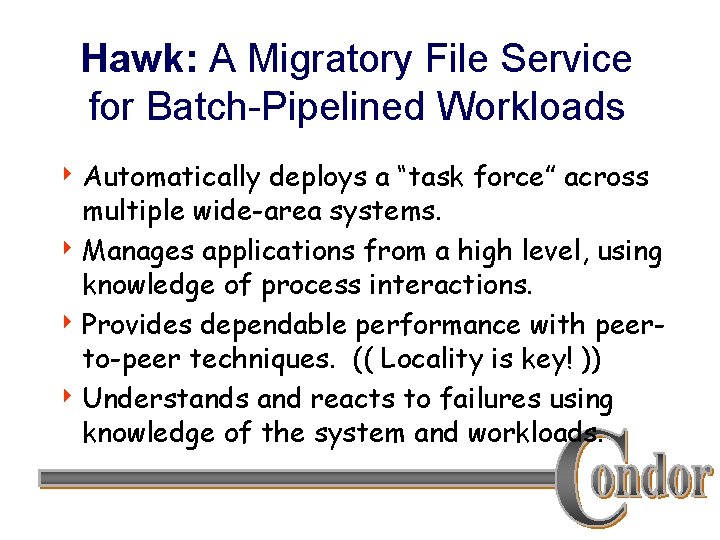

Hawk: A Migratory File Service for Batch-Pipelined Workloads

- Automatically deploys a “task force” across multiple wide-area systems.
- Manages applications from a high level, using knowledge of process interactions.
- Provides dependable performance with peer-to-peer techniques. (Locality is key!)
- Understands and reacts to failures using knowledge of the system and workloads.

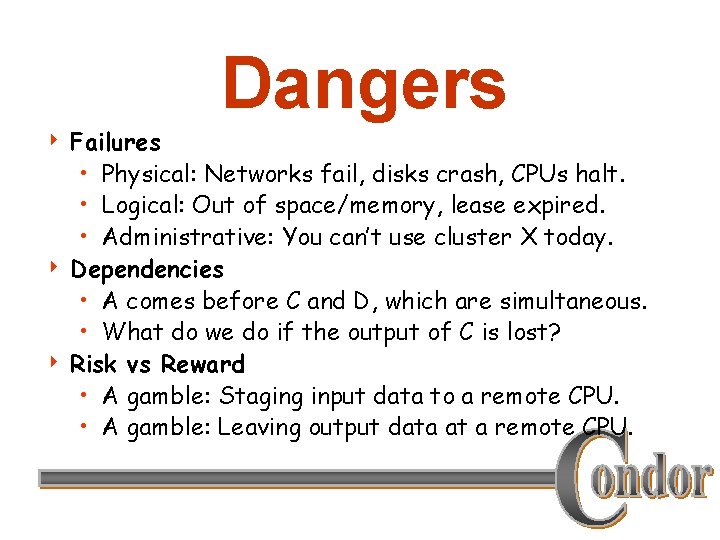

Dangers

- Failures
  - Physical: Networks fail, disks crash, CPUs halt.
  - Logical: Out of space/memory, lease expired.
  - Administrative: You can’t use cluster X today.
- Dependencies
  - A comes before C and D, which are simultaneous.
  - What do we do if the output of C is lost?
- Risk vs Reward
  - A gamble: Staging input data to a remote CPU.
  - A gamble: Leaving output data at a remote CPU.

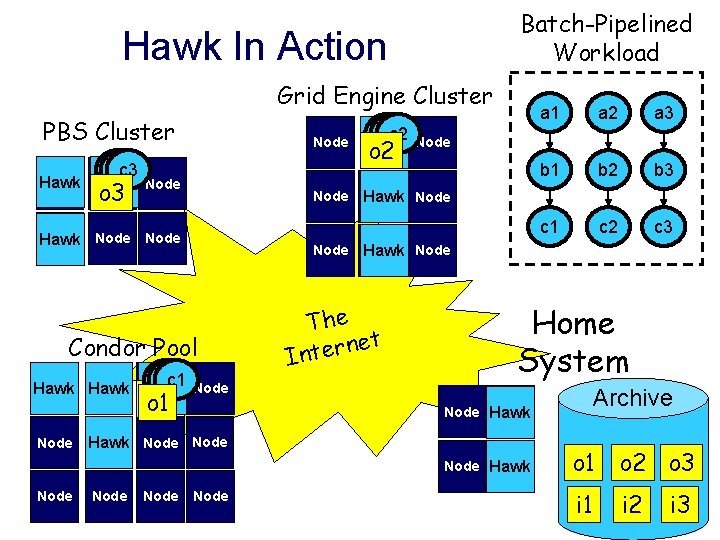

Hawk In Action

[Diagram: a batch-pipelined workload (jobs a1 to a3, b1 to b3, c1 to c3, with inputs i1 to i3 and outputs o1 to o3) spread from the home system and archive, across the Internet, onto Hawk-managed nodes in a Grid Engine cluster, a PBS cluster, and a Condor pool.]

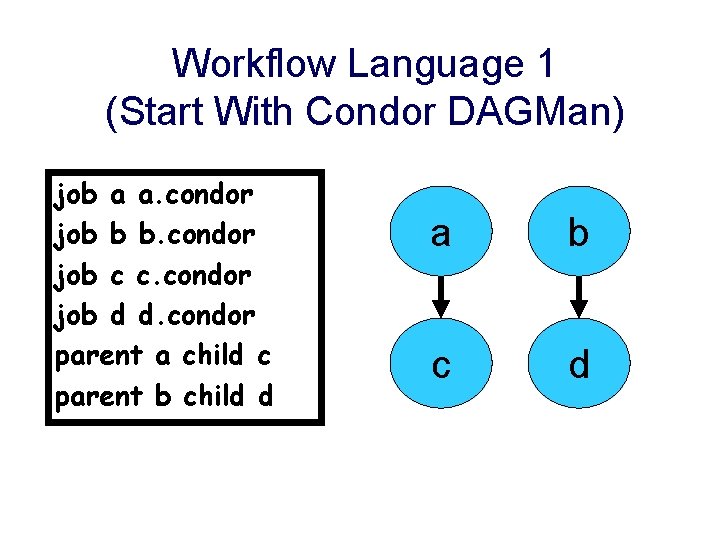

Workflow Language 1 (Start With Condor DAGMan)

    job a a.condor
    job b b.condor
    job c c.condor
    job d d.condor
    parent a child c
    parent b child d

[Diagram: jobs a, b, c, and d.]
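As a rough illustration of what the `parent`/`child` lines imply for scheduling, the sketch below groups the four jobs into waves that can run concurrently. It uses Python's standard `graphlib` module rather than DAGMan itself; the `run_order` helper is a hypothetical name introduced here.

```python
from graphlib import TopologicalSorter

# Each job maps to the set of jobs it must wait for, taken from the slide:
# "parent a child c" and "parent b child d".
DEPENDENCIES = {
    "a": set(),
    "b": set(),
    "c": {"a"},
    "d": {"b"},
}


def run_order(dependencies):
    """Return the jobs in waves; jobs within one wave have no mutual dependency."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose parents are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = run_order(DEPENDENCIES)
print(waves)
```

With these dependencies, `a` and `b` form the first wave and `c` and `d` the second.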

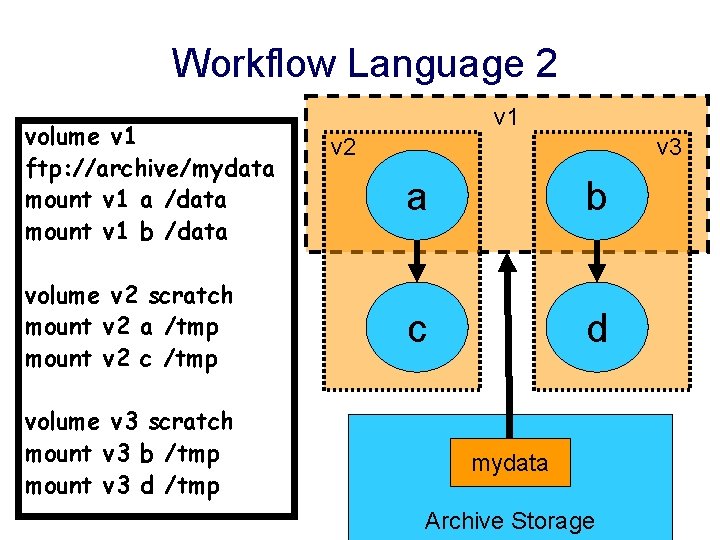

Workflow Language 2

    volume v1 ftp://archive/mydata
    mount v1 a /data
    mount v1 b /data
    volume v2 scratch
    mount v2 a /tmp
    mount v2 c /tmp
    volume v3 scratch
    mount v3 b /tmp
    mount v3 d /tmp

[Diagram: volume v1 holds mydata from archive storage and is mounted by jobs a and b; scratch volume v2 links a and c; scratch volume v3 links b and d.]
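To show how the `volume` and `mount` statements could be read into a data structure, here is a minimal parser sketch. It assumes each statement sits on its own line with whitespace-separated fields, as reconstructed from the slide; the `Volume` class and `parse_volumes` function are hypothetical names and not part of Hawk.

```python
from dataclasses import dataclass, field


@dataclass
class Volume:
    name: str
    source: str                                  # "scratch" or a URL
    mounts: dict = field(default_factory=dict)   # job name -> mount point


def parse_volumes(text):
    """Parse 'volume NAME SOURCE' and 'mount NAME JOB PATH' statements."""
    volumes = {}
    for line in text.splitlines():
        words = line.split()
        if not words:
            continue
        if words[0] == "volume":
            _, name, source = words
            volumes[name] = Volume(name, source)
        elif words[0] == "mount":
            _, name, job, path = words
            volumes[name].mounts[job] = path
        else:
            raise ValueError(f"unknown statement: {line!r}")
    return volumes


WORKFLOW = """\
volume v1 ftp://archive/mydata
mount v1 a /data
mount v1 b /data
volume v2 scratch
mount v2 a /tmp
mount v2 c /tmp
volume v3 scratch
mount v3 b /tmp
mount v3 d /tmp
"""

volumes = parse_volumes(WORKFLOW)
for vol in volumes.values():
    print(vol.name, vol.source, vol.mounts)
```

Keeping the mounts per volume makes it easy to ask which jobs share a volume, which is the information the later fault-handling slides rely on.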

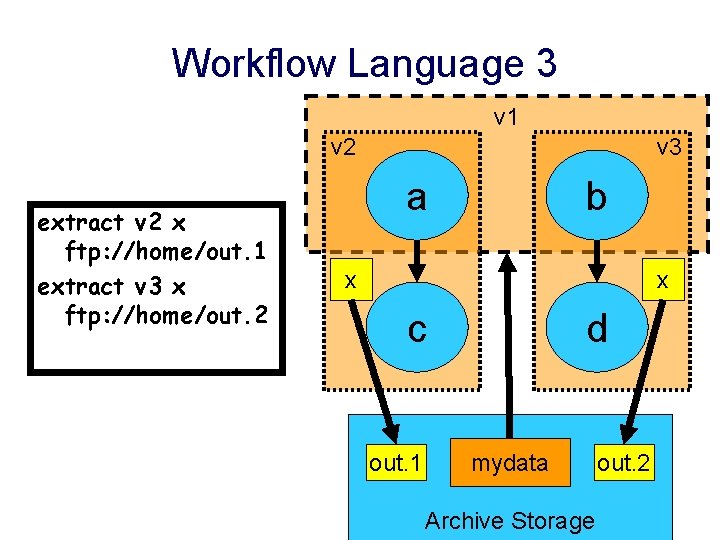

Workflow Language 3

    extract v2 x ftp://home/out.1
    extract v3 x ftp://home/out.2

[Diagram: volumes v1, v2, and v3 with jobs a, b, c, and d; file x is extracted from v2 to out.1 and from v3 to out.2; mydata sits in archive storage.]

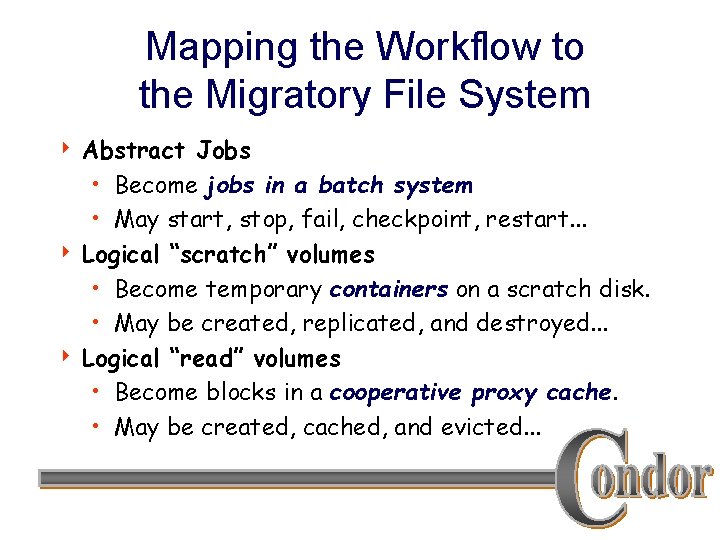

Mapping the Workflow to the Migratory File System

- Abstract jobs
  - Become jobs in a batch system.
  - May start, stop, fail, checkpoint, restart...
- Logical “scratch” volumes
  - Become temporary containers on a scratch disk.
  - May be created, replicated, and destroyed...
- Logical “read” volumes
  - Become blocks in a cooperative proxy cache.
  - May be created, cached, and evicted...

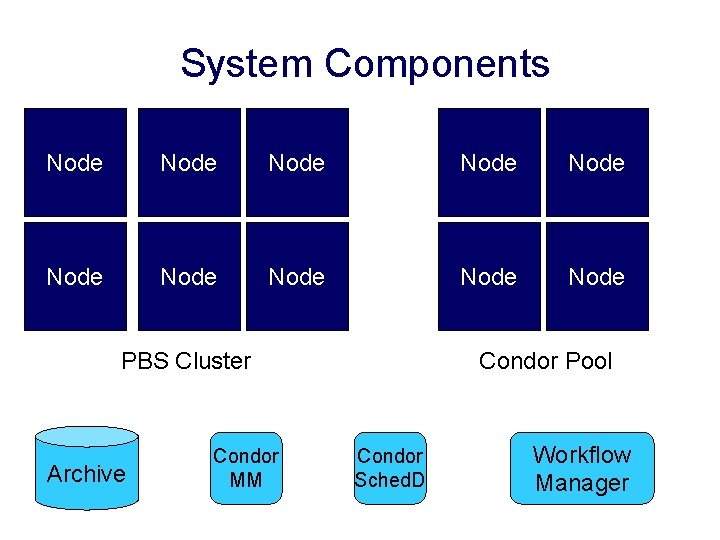

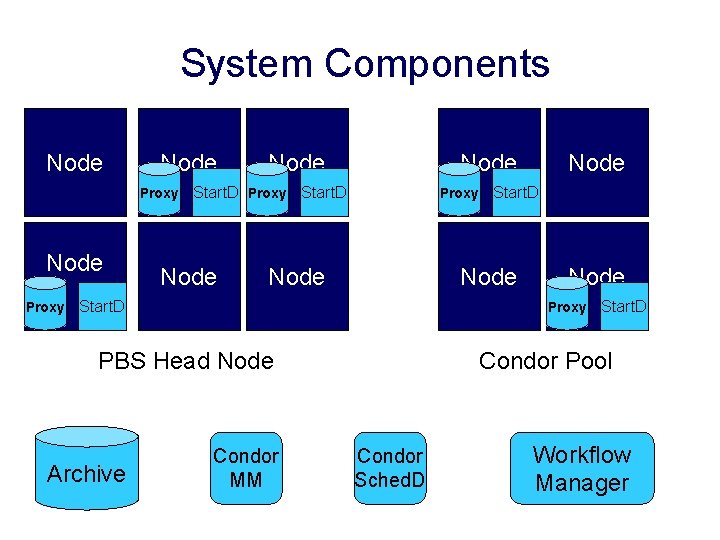

System Components

[Diagram: nodes in a PBS cluster and in a Condor pool, the archive, the Condor MM, the Condor SchedD, and the workflow manager.]

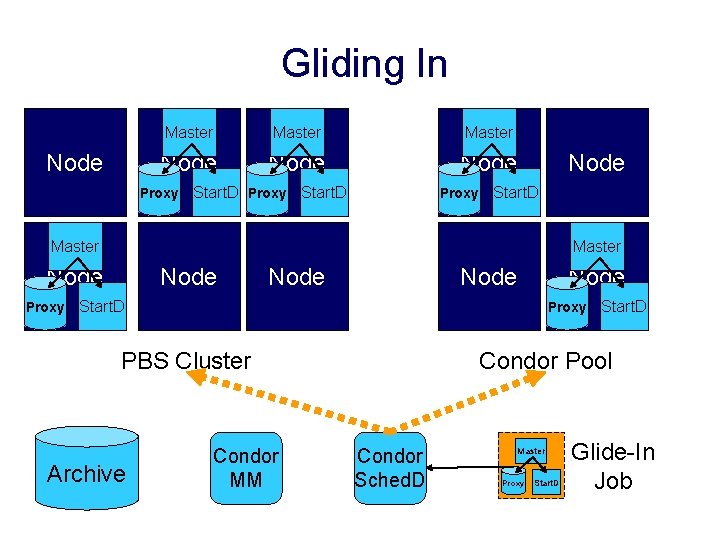

Gliding In

[Diagram: a glide-in job places a Master, a Proxy, and a StartD on nodes of the PBS cluster and the Condor pool; the archive, Condor MM, and Condor SchedD are also shown.]

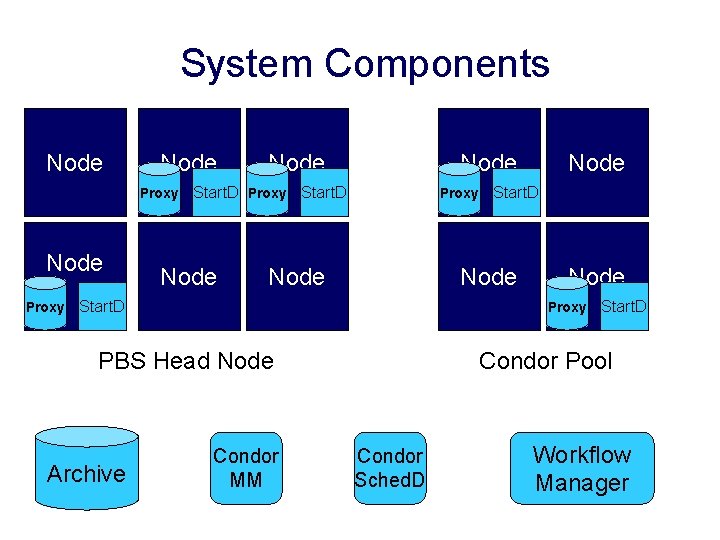

System Components

[Diagram: each node now runs a Proxy and a StartD; also shown are the PBS head node, the Condor pool, the archive, the Condor MM, the Condor SchedD, and the workflow manager.]

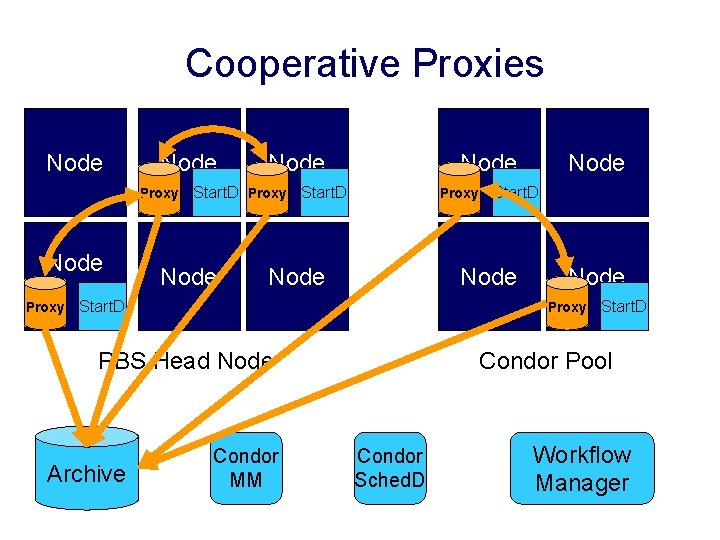

Cooperative Proxies

[Diagram: the same system view, with a Proxy and a StartD on each node, the PBS head node, the Condor pool, the archive, the Condor MM, the Condor SchedD, and the workflow manager.]

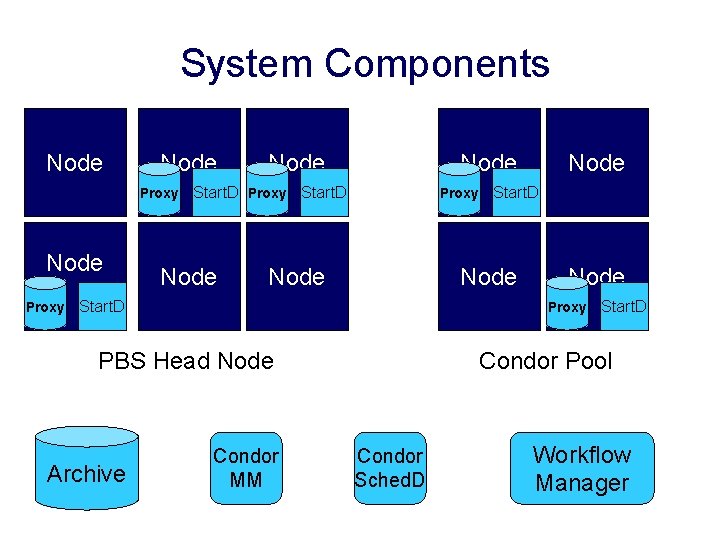


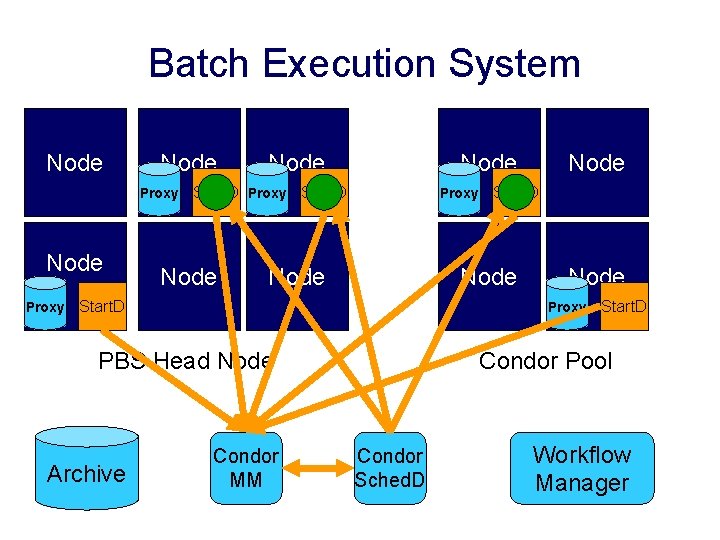

Batch Execution System

[Diagram: the same system view, with a Proxy and a StartD on each node, the PBS head node, the Condor pool, the archive, the Condor MM, the Condor SchedD, and the workflow manager.]


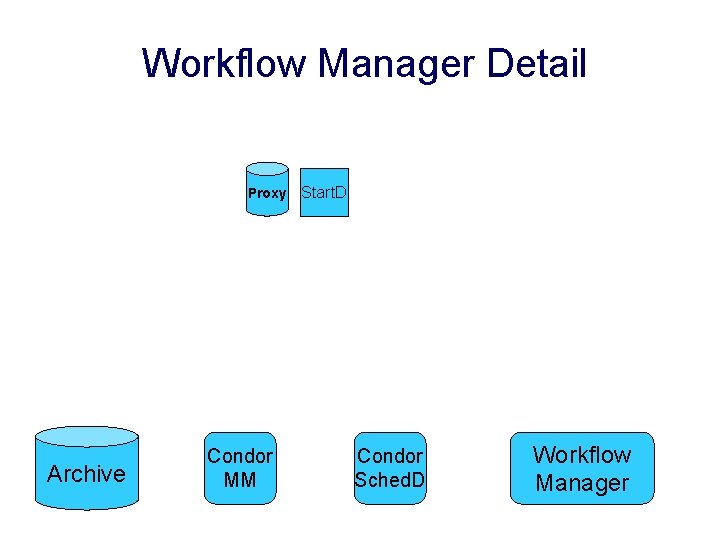

Workflow Manager Detail

[Diagram: Proxy, StartD, archive, Condor MM, Condor SchedD, and workflow manager.]

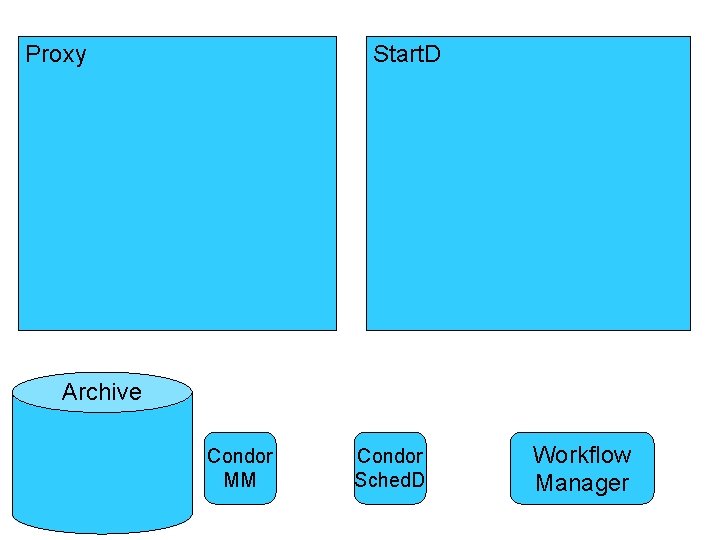

[Diagram: Proxy, StartD, archive, Condor MM, Condor SchedD, and workflow manager.]

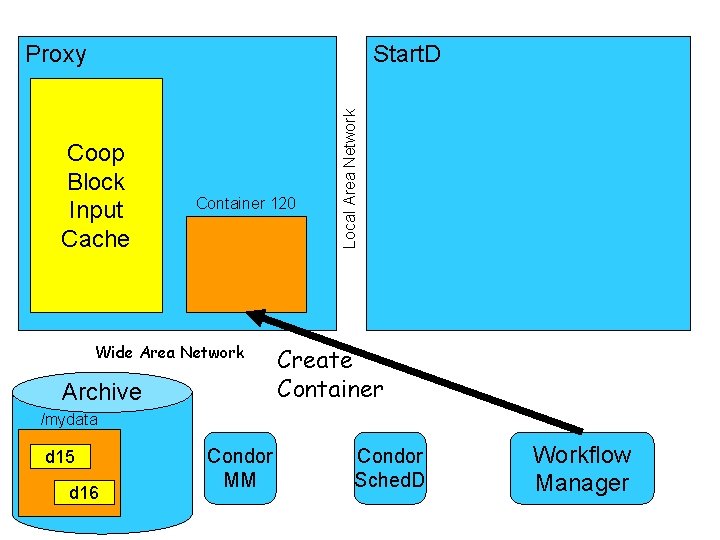

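Create Container

[Diagram: on the local area network, the Proxy holds a Coop Block Input Cache and the newly created Container 120, next to the StartD. Across the wide area network, the archive holds /mydata with files d15 and d16. Also shown: Condor MM, Condor SchedD, and workflow manager.]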

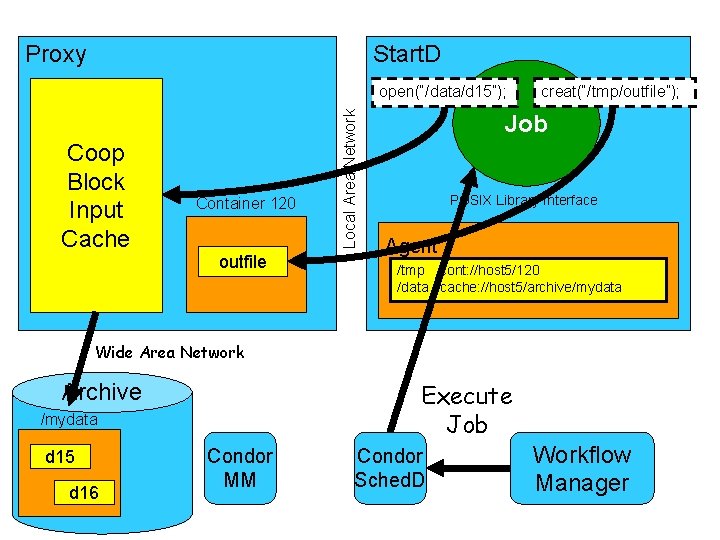

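Execute Job

- The job issues open("/data/d15"); and creat("/tmp/outfile"); through the POSIX library interface, which leads to the agent.
- The agent maps /tmp to cont://host5/120.
- The agent maps /data to cache://host5/archive/mydata.

[Diagram: on the local area network, the Proxy holds the Coop Block Input Cache and Container 120, which now contains outfile, next to the StartD and the job. Across the wide area network, the archive holds /mydata with files d15 and d16. Also shown: Condor MM, Condor SchedD, and workflow manager.]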
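The agent's path mapping can be sketched as a prefix lookup. The two mount entries are copied from the slide; the `translate` function, the longest-prefix rule, and the way the remaining path is appended to the URL are assumptions made for illustration, not a description of the actual agent.

```python
import posixpath

# Mount table as shown on the slide.
MOUNTS = {
    "/tmp": "cont://host5/120",
    "/data": "cache://host5/archive/mydata",
}


def translate(path, mounts=MOUNTS):
    """Rewrite a logical path to a URL under the volume that holds it.

    Returns None when the path is not under any mount point, in which case
    the call would go to the local filesystem unchanged (assumed behavior).
    """
    path = posixpath.normpath(path)
    # Try longer mount points first so that nested mounts resolve correctly.
    for mount_point in sorted(mounts, key=len, reverse=True):
        if path == mount_point or path.startswith(mount_point + "/"):
            return mounts[mount_point] + path[len(mount_point):]
    return None


print(translate("/data/d15"))
print(translate("/tmp/outfile"))
print(translate("/etc/hosts"))
```

Matching on `mount_point + "/"` rather than on the bare prefix keeps a path such as `/tmpfoo` from being mistaken for something under `/tmp`.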

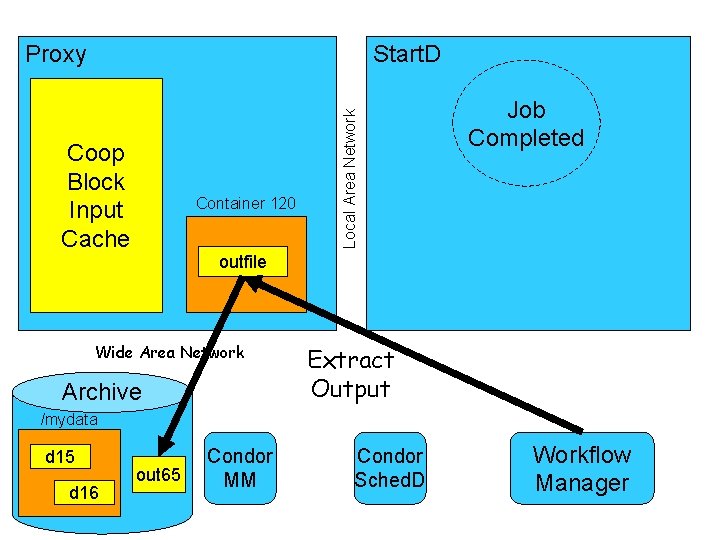

Extract Output

[Diagram: the job has completed. The outfile in Container 120 is extracted across the wide area network to the archive, which now holds /mydata (d15, d16) and out65. Also shown: StartD, Proxy with Coop Block Input Cache, Condor MM, Condor SchedD, and workflow manager.]

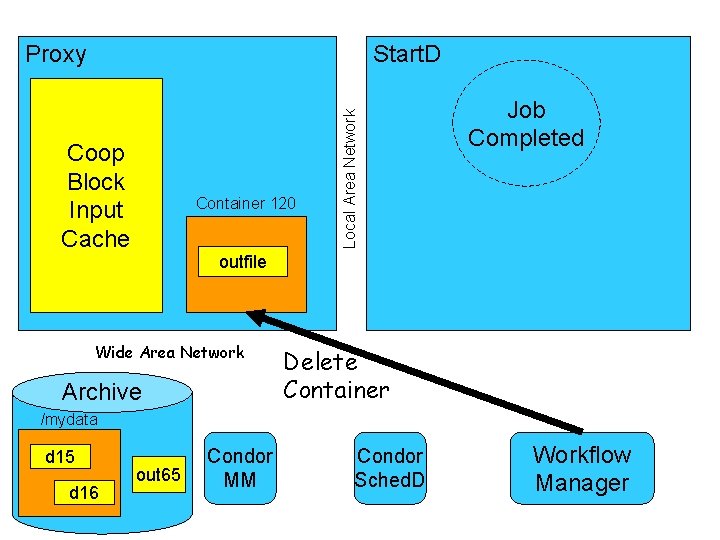

Delete Container

[Diagram: the job has completed and Container 120, still holding outfile, is being deleted. The archive holds /mydata (d15, d16) and out65. Also shown: StartD, Proxy with Coop Block Input Cache, Condor MM, Condor SchedD, and workflow manager.]

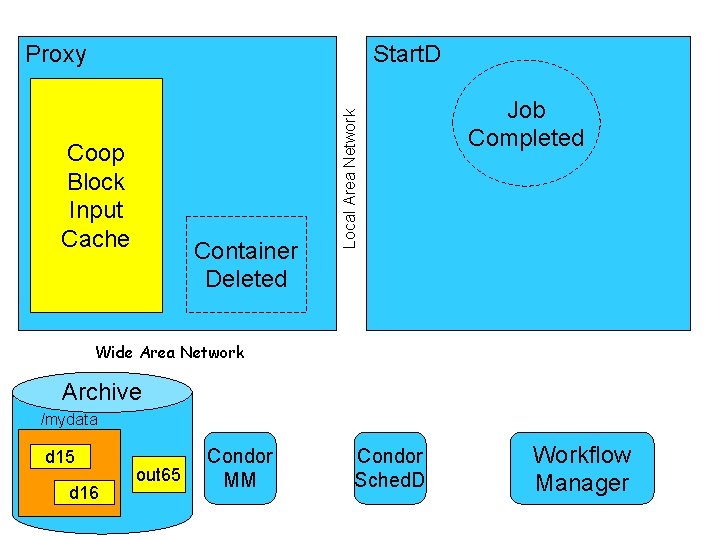

Container Deleted

[Diagram: the job has completed and the container is gone. The archive holds /mydata (d15, d16) and out65. Also shown: StartD, Proxy with Coop Block Input Cache, Condor MM, Condor SchedD, and workflow manager.]

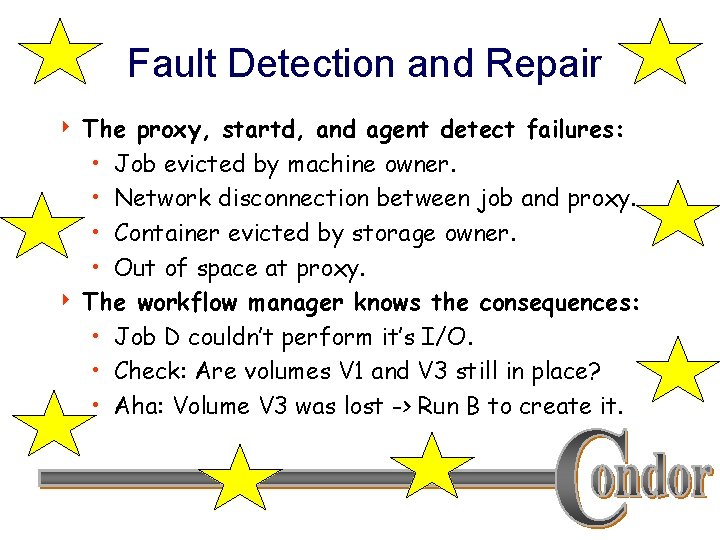

Fault Detection and Repair

- The proxy, startd, and agent detect failures:
  - Job evicted by machine owner.
  - Network disconnection between job and proxy.
  - Container evicted by storage owner.
  - Out of space at proxy.
- The workflow manager knows the consequences:
  - Job D couldn’t perform its I/O.
  - Check: Are volumes V1 and V3 still in place?
  - Aha: Volume V3 was lost -> Run B to create it.
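The reasoning in the second group of bullets can be sketched as a small planning function. This is an illustration under stated assumptions, not the workflow manager's actual algorithm: the `plan_repair` name and its arguments are hypothetical, the volume lists are illustrative (D's list follows the check named on the slide), and a lost read volume is assumed to need no job because it can be fetched from the archive again.

```python
def plan_repair(failed_job, uses, producer, present):
    """Return the jobs to run, in order, so that failed_job can be retried.

    uses:     job -> list of volumes the job mounts
    producer: scratch volume -> job that creates it (read volumes have none)
    present:  set of volumes that are still in place
    """
    plan = []

    def ensure_inputs(job):
        for volume in uses.get(job, []):
            maker = producer.get(volume)
            # Skip volumes that survive, have no producing job, or are the
            # job's own output.
            if volume in present or maker is None or maker == job:
                continue
            if maker not in plan:
                ensure_inputs(maker)   # the maker's inputs may be lost too
                plan.append(maker)

    ensure_inputs(failed_job)
    plan.append(failed_job)
    return plan


# Illustrative tables; D's volumes follow the check on the slide (V1 and V3).
USES = {"a": ["v1", "v2"], "b": ["v1", "v3"], "c": ["v2"], "d": ["v1", "v3"]}
PRODUCER = {"v2": "a", "v3": "b"}

print(plan_repair("d", USES, PRODUCER, present={"v1"}))
```

Because the helper recurses into the producing job first, a chain of lost volumes is repaired from the earliest missing stage onward.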

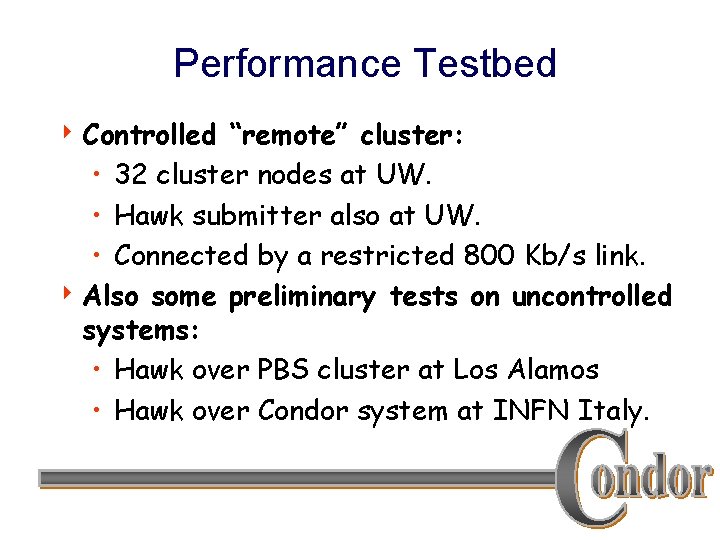

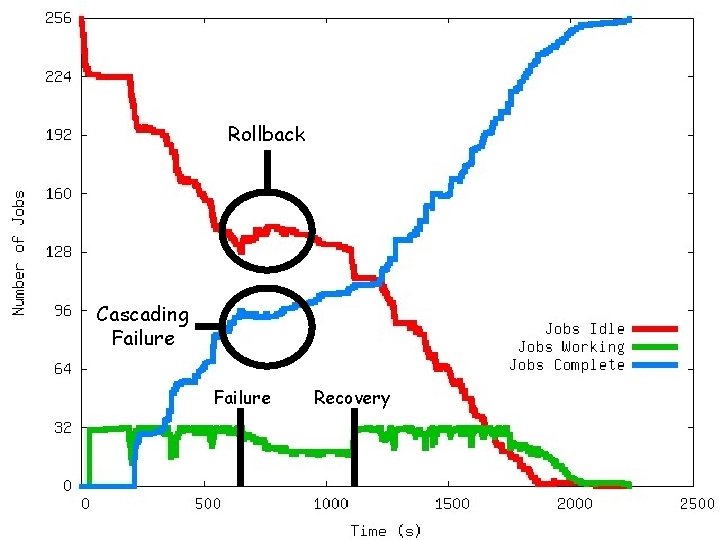

Performance Testbed

- Controlled “remote” cluster:
  - 32 cluster nodes at UW.
  - Hawk submitter also at UW.
  - Connected by a restricted 800 Kb/s link.
- Also some preliminary tests on uncontrolled systems:
  - Hawk over PBS cluster at Los Alamos.
  - Hawk over Condor system at INFN Italy.

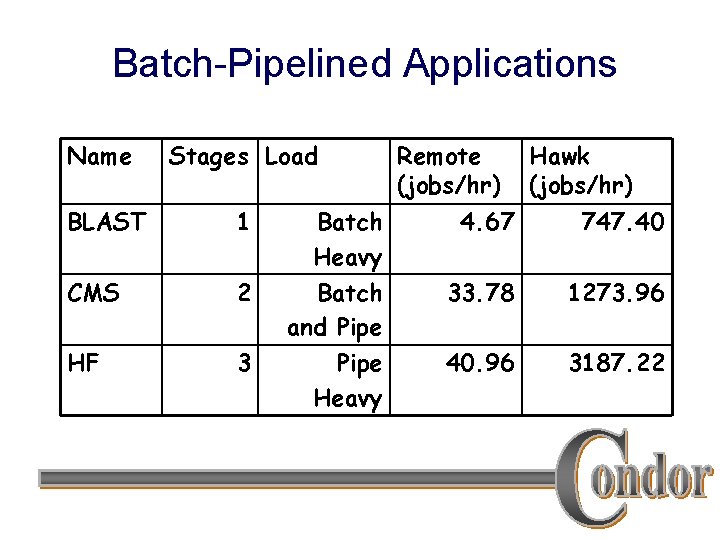

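Batch-Pipelined Applications

| Name | Stages | Load | Remote (jobs/hr) | Hawk (jobs/hr) |
|-------|--------|----------------------|------------------|----------------|
| BLAST | 1 | Batch Heavy | 4.67 | 747.40 |
| CMS | 2 | Batch and Pipe Heavy | 33.78 | 1273.96 |
| HF | 3 | | 40.96 | 3187.22 |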
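The throughput figures can be turned into speedup ratios with a few lines of arithmetic. The sketch assumes the six numbers read as one (Remote, Hawk) pair per application, in the order BLAST, CMS, HF; the `speedups` helper is a hypothetical name.

```python
# Throughput in jobs/hr as (Remote, Hawk), under the pairing assumption above.
THROUGHPUT = {
    "BLAST": (4.67, 747.40),
    "CMS": (33.78, 1273.96),
    "HF": (40.96, 3187.22),
}


def speedups(table):
    """Ratio of Hawk throughput to remote I/O throughput for each application."""
    return {name: hawk / remote for name, (remote, hawk) in table.items()}


ratios = speedups(THROUGHPUT)
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
```

Under that pairing, Hawk completes roughly 160, 38, and 78 times as many jobs per hour as remote I/O for BLAST, CMS, and HF respectively.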

Rollback Cascading Failure Recovery

A Little Bit of Philosophy

- Most systems build from the bottom up:
  - “This disk must have five nines, or else!”
- MFS works from the top down:
  - “If this disk fails, we know what to do.”
- By working from the top down, we finesse many of the hard problems in traditional filesystems.

Future Work

- Integration with Stork
- P2P Aspects: Discovery & Replication
- Optional Knowledge: Size & Time
- Delegation and Disconnection
- Names, names:
  - Hawk – A migratory file service.
  - Hawkeye – A system monitoring tool.

[Image labels: job, data, ?]

Feeling overwhelmed? Let Hawk juggle your work!

- Slides: 32