MAP decoding: The BCJR algorithm

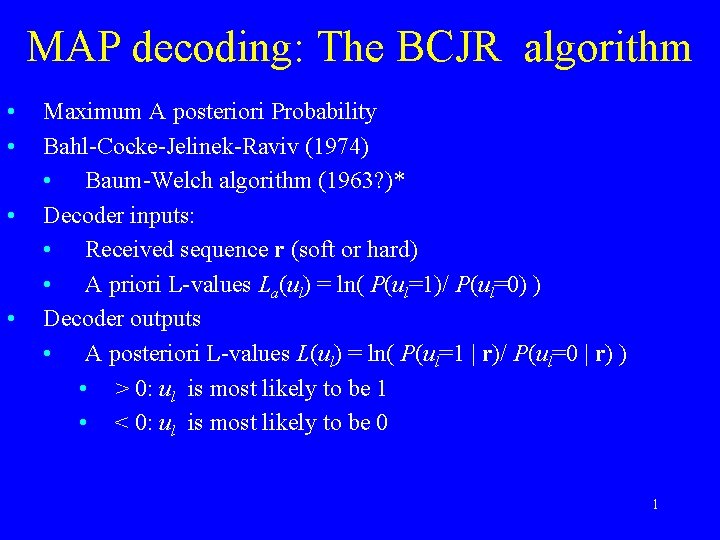

MAP decoding: The BCJR algorithm
• Maximum A posteriori Probability
• Bahl-Cocke-Jelinek-Raviv (1974)
  • Baum-Welch algorithm (1963?)*
• Decoder inputs:
  • Received sequence $\mathbf{r}$ (soft or hard)
  • A priori L-values $L_a(u_l) = \ln \frac{P(u_l = 1)}{P(u_l = 0)}$
• Decoder outputs:
  • A posteriori L-values $L(u_l) = \ln \frac{P(u_l = 1 \mid \mathbf{r})}{P(u_l = 0 \mid \mathbf{r})}$
    • $L(u_l) > 0$: $u_l$ is most likely to be 1
    • $L(u_l) < 0$: $u_l$ is most likely to be 0
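The L-value definition above can be illustrated with a few lines of Python. This is a minimal sketch; the function names are chosen here for illustration and do not come from the slides.

```python
import math

def l_value(p1: float) -> float:
    """L-value ln(P(u=1) / P(u=0)) of a binary symbol with P(u=1) = p1."""
    return math.log(p1 / (1.0 - p1))

def prob_one(l: float) -> float:
    """Invert the L-value: P(u=1) = 1 / (1 + exp(-L))."""
    return 1.0 / (1.0 + math.exp(-l))

def hard_decision(l: float) -> int:
    """Decide 1 for a positive L-value and 0 otherwise."""
    return 1 if l > 0 else 0
```

The sign of the L-value gives the hard decision and its magnitude gives the reliability: `l_value(0.5)` is 0 (no information), while probabilities close to 0 or 1 give large negative or positive values.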

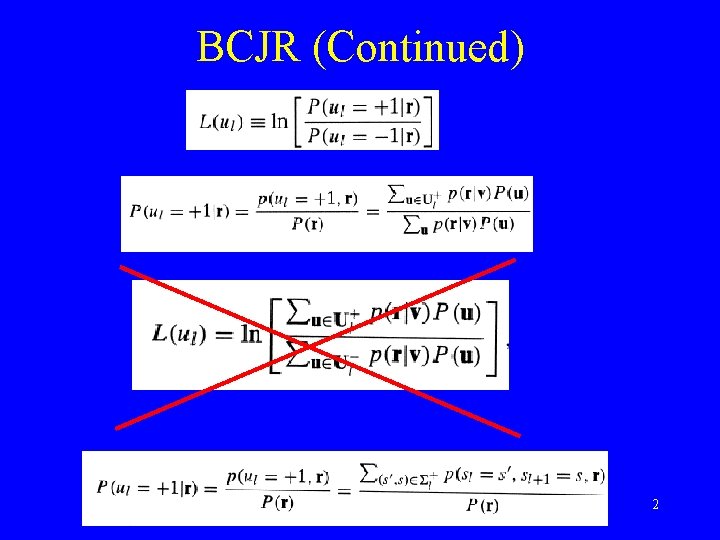

BCJR (Continued)

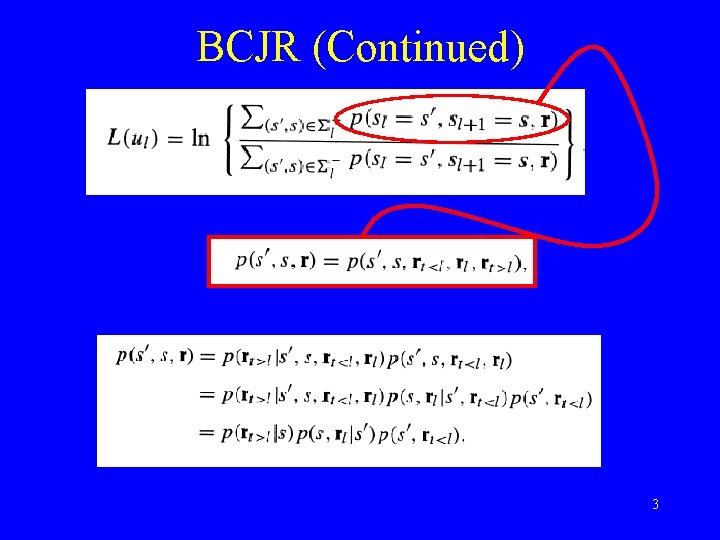

BCJR (Continued)

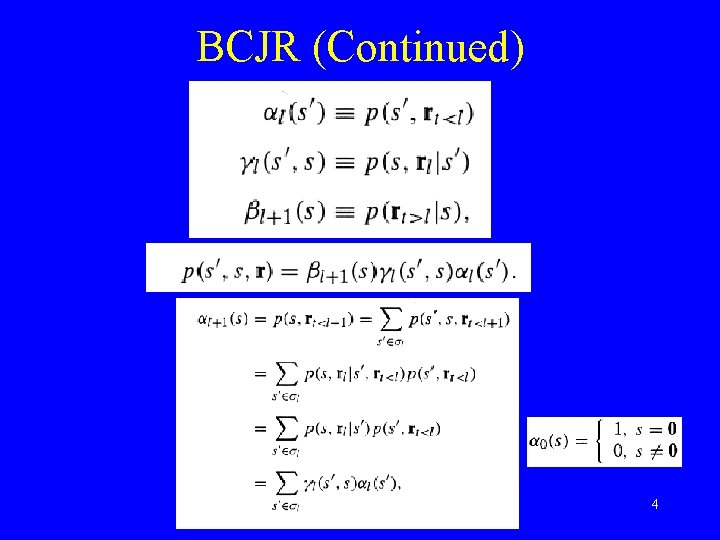

BCJR (Continued)

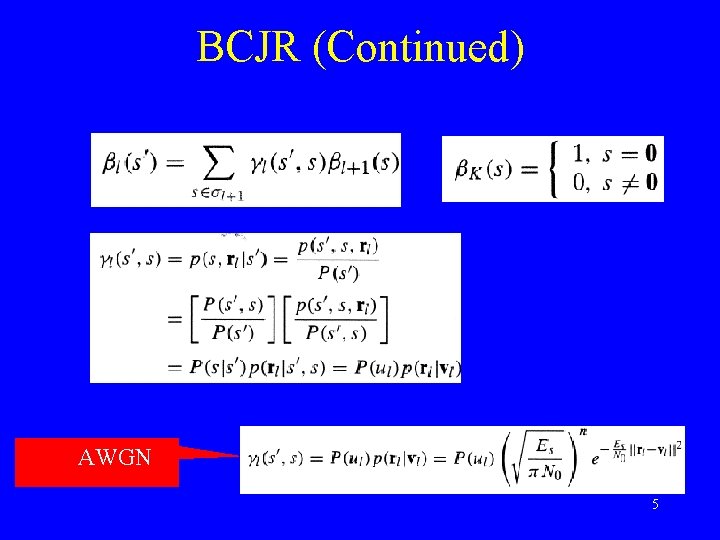

BCJR (Continued): AWGN

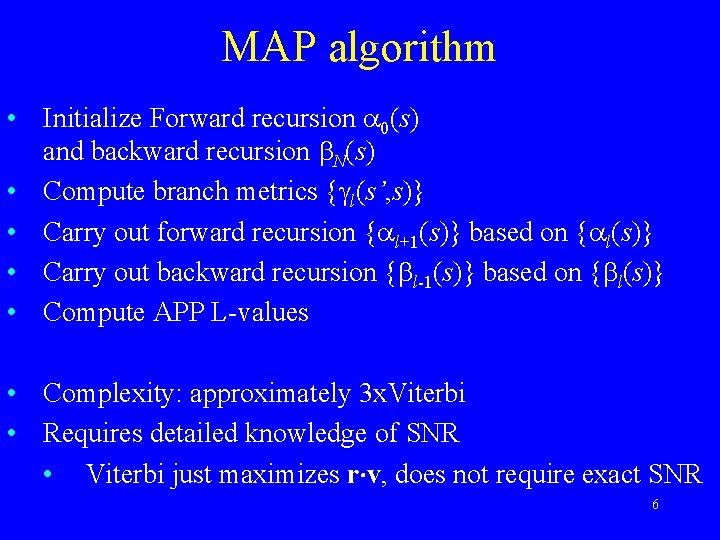

MAP algorithm
• Initialize forward recursion $\alpha_0(s)$ and backward recursion $\beta_N(s)$
• Compute branch metrics $\{\gamma_l(s', s)\}$
• Carry out forward recursion $\{\alpha_{l+1}(s)\}$ based on $\{\alpha_l(s)\}$
• Carry out backward recursion $\{\beta_{l-1}(s)\}$ based on $\{\beta_l(s)\}$
• Compute APP L-values
• Complexity: approximately 3 × Viterbi
• Requires detailed knowledge of SNR
  • Viterbi just maximizes $\mathbf{r} \cdot \mathbf{v}$, does not require exact SNR
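The steps listed above can be sketched in Python for a small terminated code. Everything specific here is an assumption made for illustration, not taken from the slides: the 4-state feedforward encoder with generators $1+D+D^2$ and $1+D^2$, the BPSK mapping 0 → -1 and 1 → +1, and a branch metric equal to the prior times $\exp(-\frac{E_s}{N_0}\lVert \mathbf{r}_l - \mathbf{v}_l \rVert^2)$ with received values normalized to unit symbol energy.

```python
import math

def branches():
    """Branches (prev_state, next_state, input_bit, output_bits) of the assumed
    4-state feedforward encoder with generators 1+D+D^2 and 1+D^2."""
    out = []
    for s in range(4):
        u1, u2 = s >> 1, s & 1      # u1: previous input, u2: the one before it
        for u in (0, 1):
            out.append((s, (u << 1) | u1, u, (u ^ u1 ^ u2, u ^ u2)))
    return out

def encode(info):
    """Encode info bits plus two termination zeros; BPSK map 0 -> -1, 1 -> +1."""
    s, symbols = 0, []
    for u in list(info) + [0, 0]:
        u1, u2 = s >> 1, s & 1
        symbols.append((2.0 * (u ^ u1 ^ u2) - 1.0, 2.0 * (u ^ u2) - 1.0))
        s = (u << 1) | u1
    return symbols

def bcjr(r, es_n0, n_info, la=None):
    """Return the APP L-values of the first n_info bits of a terminated trellis."""
    br, n = branches(), len(r)
    la = la if la is not None else [0.0] * n
    # Branch metrics: prior times Gaussian likelihood (common constants dropped).
    gamma = []
    for l in range(n):
        p1 = 1.0 / (1.0 + math.exp(-la[l]))
        row = []
        for (_, _, u, v) in br:
            dist = sum((ri - (2 * vi - 1)) ** 2 for ri, vi in zip(r[l], v))
            row.append((p1 if u else 1.0 - p1) * math.exp(-es_n0 * dist))
        gamma.append(row)
    # Initialization: the encoder starts and ends in state 0.
    alpha = [[0.0] * 4 for _ in range(n + 1)]
    beta = [[0.0] * 4 for _ in range(n + 1)]
    alpha[0][0] = beta[n][0] = 1.0
    # Forward recursion.
    for l in range(n):
        for b, (sp, s, _, _) in enumerate(br):
            alpha[l + 1][s] += gamma[l][b] * alpha[l][sp]
    # Backward recursion.
    for l in range(n - 1, -1, -1):
        for b, (sp, s, _, _) in enumerate(br):
            beta[l][sp] += gamma[l][b] * beta[l + 1][s]
    # APP L-values: ratio of the joint probabilities of u=1 and u=0 branches.
    llr = []
    for l in range(n_info):
        num = den = 0.0
        for b, (sp, s, u, _) in enumerate(br):
            joint = alpha[l][sp] * gamma[l][b] * beta[l + 1][s]
            if u:
                num += joint
            else:
                den += joint
        llr.append(math.log(num / den))
    return llr
```

For example, `bcjr(encode([1, 0, 1, 1, 0]), 1.0, 5)` returns five L-values whose signs reproduce the information bits. The sketch does not normalize the forward and backward metrics, so it is only suitable for short blocks; longer blocks underflow, which is one motivation for working in the log domain.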

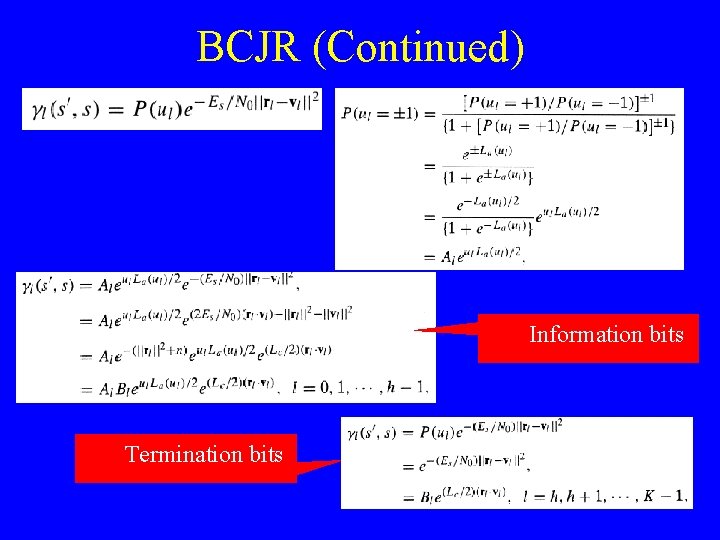

BCJR (Continued)
[Figure labels: information bits, termination bits]

BCJR (Continued)

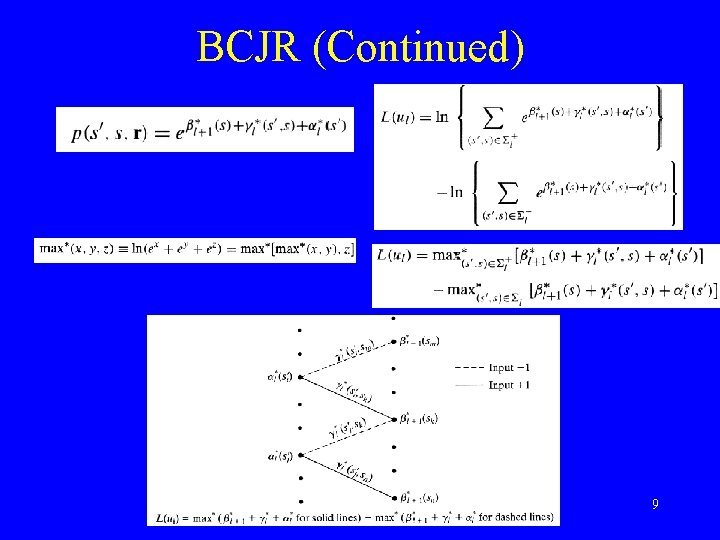

BCJR (Continued)

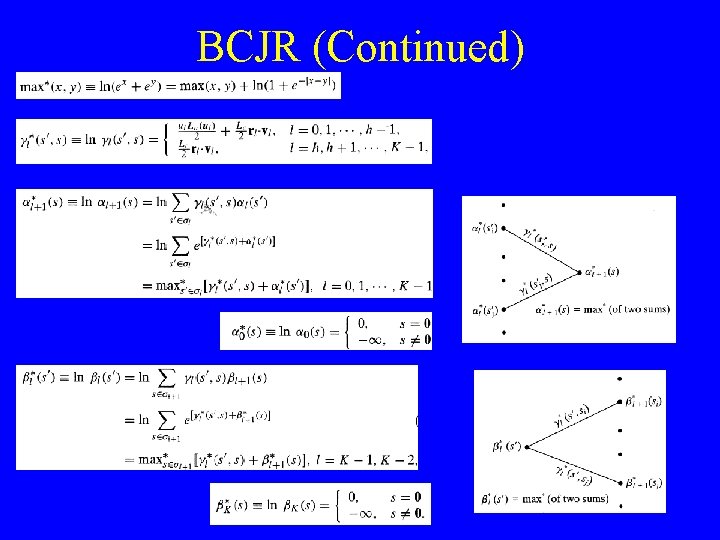

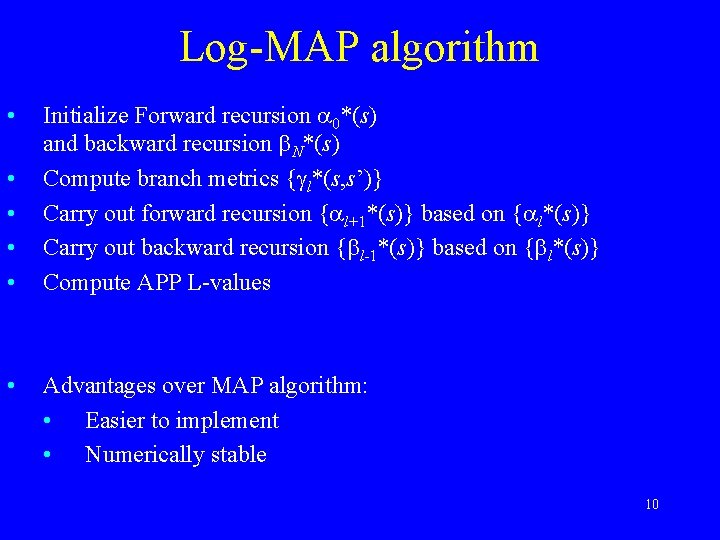

Log-MAP algorithm
• Initialize forward recursion $\alpha_0^*(s)$ and backward recursion $\beta_N^*(s)$
• Compute branch metrics $\{\gamma_l^*(s', s)\}$
• Carry out forward recursion $\{\alpha_{l+1}^*(s)\}$ based on $\{\alpha_l^*(s)\}$
• Carry out backward recursion $\{\beta_{l-1}^*(s)\}$ based on $\{\beta_l^*(s)\}$
• Compute APP L-values
• Advantages over MAP algorithm:
  • Easier to implement
  • Numerically stable
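In the log domain, sums of probabilities become applications of the max* operation, $\max{}^*(a, b) = \ln(e^a + e^b) = \max(a, b) + \ln(1 + e^{-|a-b|})$. A minimal Python sketch of max* and of one forward recursion step follows; the dictionary layout for the branch metrics is an assumption made here for illustration.

```python
import math

NEG_INF = float("-inf")

def max_star(a, b):
    """ln(exp(a) + exp(b)), computed as max plus a correction term."""
    if a == NEG_INF:
        return b
    if b == NEG_INF:
        return a
    return max(a, b) + math.log1p(math.exp(-abs(a - b)))

def forward_step(alpha_star, gamma_star, n_states):
    """One log-domain forward step.

    alpha_star: list of log forward metrics at time l
    gamma_star: dict {(prev_state, state): log branch metric}
    Returns the list of log forward metrics at time l+1.
    """
    nxt = [NEG_INF] * n_states
    for (sp, s), g in gamma_star.items():
        nxt[s] = max_star(nxt[s], alpha_star[sp] + g)
    return nxt
```

Unreachable states carry the metric `-inf`, which `max_star` handles explicitly so that no `inf - inf` expression is ever evaluated.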

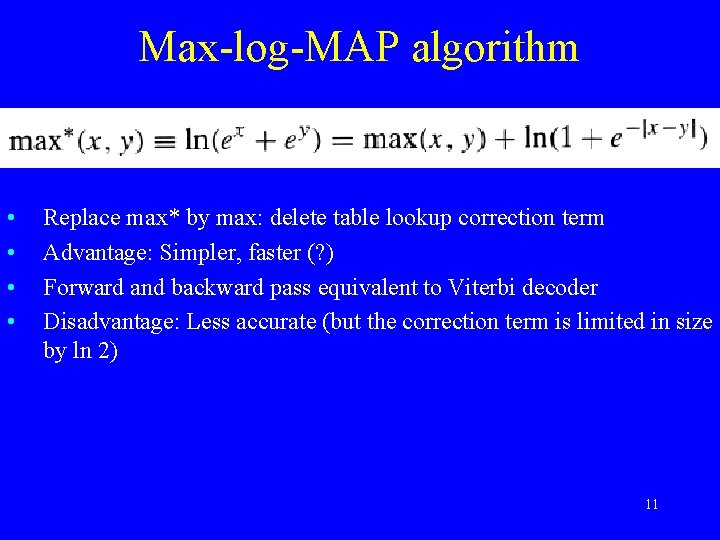

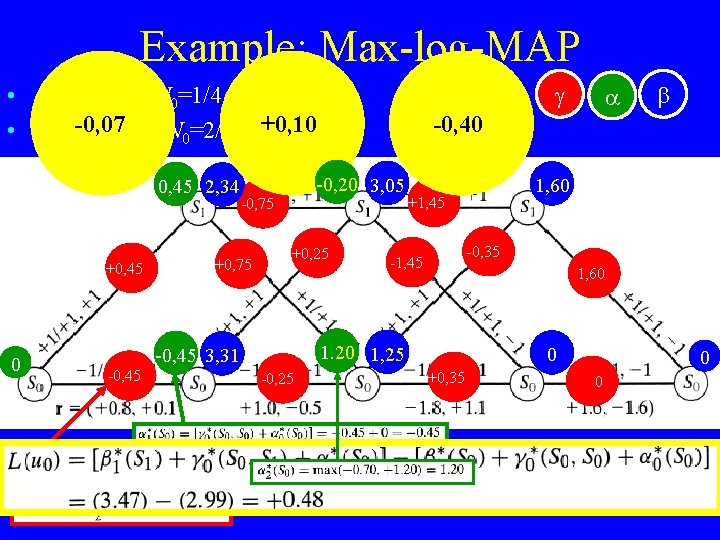

Max-log-MAP algorithm
• Replace max* by max: delete the table-lookup correction term
• Advantage: simpler, faster (?)
  • Forward and backward pass equivalent to Viterbi decoder
• Disadvantage: less accurate (but the correction term is limited in size by ln 2)
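The size of the dropped term and the resulting add-compare-select step can be sketched as follows. The function names and the dictionary layout for the branch metrics are assumptions made for illustration.

```python
import math

def correction(a, b):
    """Term dropped by max-log-MAP: max*(a, b) - max(a, b) = ln(1 + e^-|a-b|)."""
    return math.log1p(math.exp(-abs(a - b)))

def acs_step(alpha_star, gamma_star, n_states):
    """Max-log forward step: add, compare, select, as in a Viterbi decoder."""
    nxt = [float("-inf")] * n_states
    for (sp, s), g in gamma_star.items():
        nxt[s] = max(nxt[s], alpha_star[sp] + g)
    return nxt
```

The correction is largest, exactly ln 2, when the two arguments are equal, and it decays quickly as they move apart: `correction(0.0, 5.0)` is already below 0.01.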

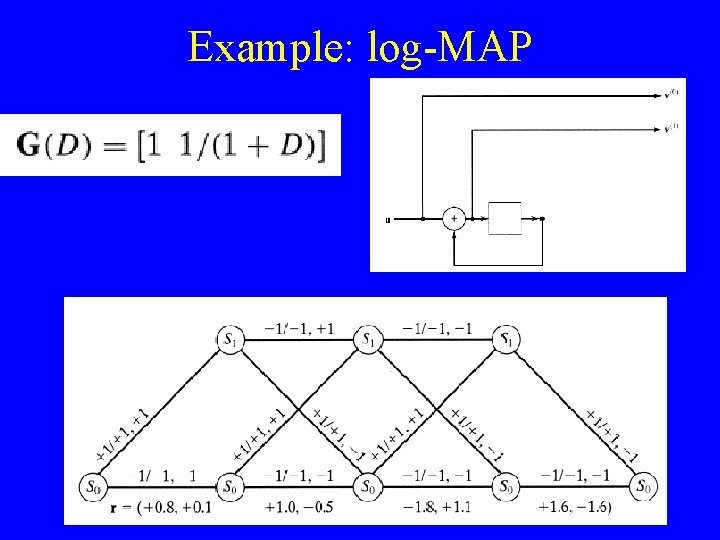

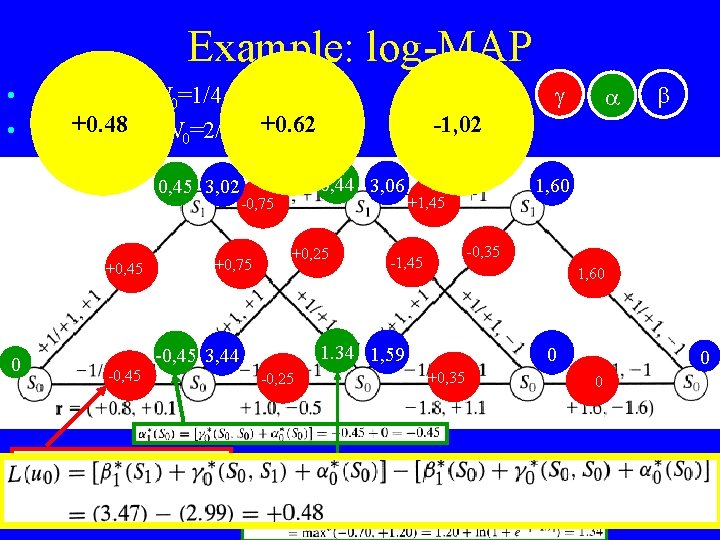

Example: log-MAP

Example: log-MAP
• Assume $E_s/N_0 = 1/4 = -6.02$ dB
• $R = 3/8$, so $E_b/N_0 = 2/3 = -1.76$ dB
[Figure: trellis annotated with the numerical metric values for this example]
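The SNR figures in this example follow from $E_b/N_0 = (E_s/N_0)/R$. A short check in Python (variable names are chosen here for illustration):

```python
import math

def to_db(x: float) -> float:
    """Convert a power ratio to decibels."""
    return 10.0 * math.log10(x)

es_n0 = 1 / 4           # symbol SNR assumed in the example
rate = 3 / 8            # code rate, including the termination bits
eb_n0 = es_n0 / rate    # SNR per information bit

print(f"Es/N0 = {to_db(es_n0):.2f} dB")   # -6.02 dB
print(f"Eb/N0 = {to_db(eb_n0):.2f} dB")   # -1.76 dB
```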

Example: Max-log-MAP
• Assume $E_s/N_0 = 1/4 = -6.02$ dB
• $R = 3/8$, so $E_b/N_0 = 2/3 = -1.76$ dB
[Figure: trellis annotated with the numerical metric values for this example]

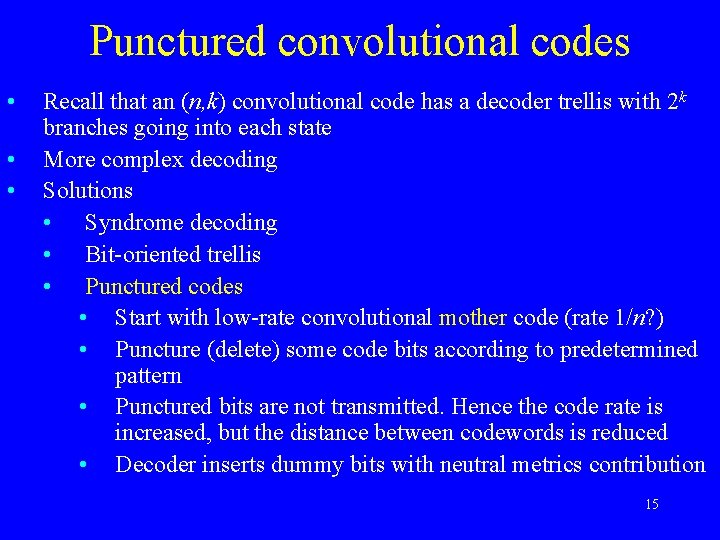

Punctured convolutional codes
• Recall that an (n, k) convolutional code has a decoder trellis with $2^k$ branches going into each state
• More complex decoding
• Solutions
  • Syndrome decoding
  • Bit-oriented trellis
  • Punctured codes
    • Start with a low-rate convolutional mother code (rate 1/n?)
    • Puncture (delete) some code bits according to a predetermined pattern
    • Punctured bits are not transmitted. Hence the code rate is increased, but the distance between codewords is reduced
    • Decoder inserts dummy bits with neutral metric contribution
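Puncturing and the insertion of neutral dummy values at the decoder can be sketched as below. The pattern `[1, 1, 1, 0]` (keep three of every four mother-code bits, giving rate 2/3 from rate 1/2) is an illustrative assumption and not necessarily the pattern used in the slides; the neutral value 0.0 assumes soft BPSK inputs, where 0 is equally far from -1 and +1.

```python
def puncture(coded, pattern):
    """Keep only the coded symbols whose pattern entry is 1 (pattern repeats)."""
    return [c for i, c in enumerate(coded) if pattern[i % len(pattern)]]

def depuncture(received, pattern, total_len, neutral=0.0):
    """Re-insert a neutral value at every punctured position."""
    it = iter(received)
    return [next(it) if pattern[i % len(pattern)] else neutral
            for i in range(total_len)]

pattern = [1, 1, 1, 0]                       # hypothetical period-4 pattern
coded = [0.9, -1.1, 1.0, 0.8, -0.7, -1.2, 1.1, 0.9]
sent = puncture(coded, pattern)              # 6 of the 8 symbols are sent
restored = depuncture(sent, pattern, len(coded))
```

After depuncturing, the sequence has the length the mother-code decoder expects, so the same trellis decoder can be reused unchanged.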

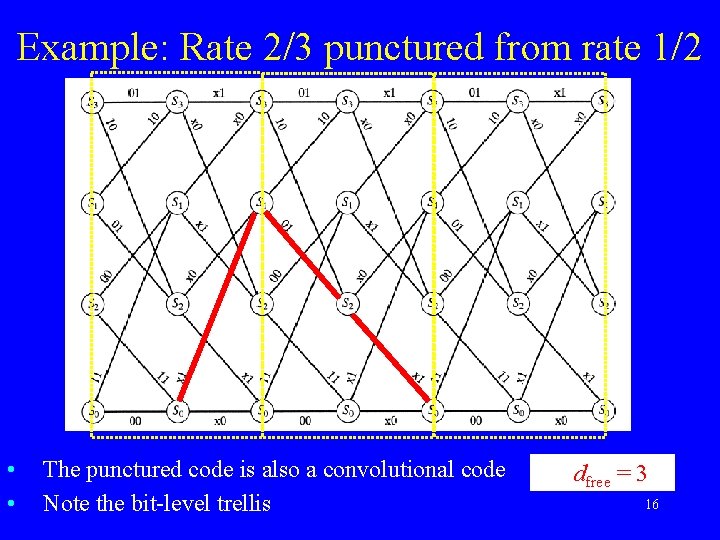

Example: Rate 2/3 punctured from rate 1/2
• The punctured code is also a convolutional code
• Note the bit-level trellis
• $d_{\text{free}} = 3$

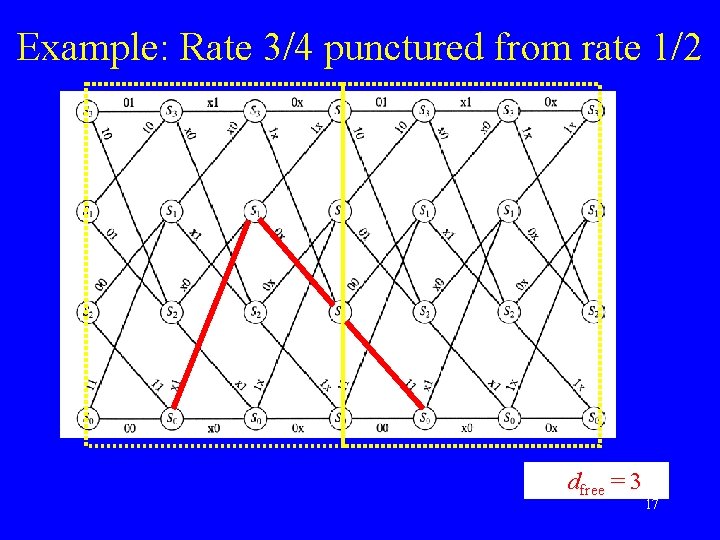

Example: Rate 3/4 punctured from rate 1/2
• $d_{\text{free}} = 3$

More on punctured convolutional codes
• Rate-compatible punctured convolutional codes
  • For applications that need to support several code rates
    • For example, adaptive coding
  • Sequence of codes obtained by repeated puncturing
  • Advantage: one decoder can decode all codes in the family
  • Disadvantage: resulting codes may be sub-optimum
• Puncturing patterns
  • Usually periodic puncturing maps
  • Found by computer search
  • Care must be exercised to avoid catastrophic encoders
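Rate compatibility means that each higher-rate pattern only deletes bits that the previous pattern still transmits. A small sketch that checks this property and computes the resulting rates follows; the pattern family is a made-up illustration, not a searched or optimized one, and it has not been checked for catastrophic behavior.

```python
from fractions import Fraction

def punctured_rate(pattern, mother_rate=Fraction(1, 2)):
    """Rate after puncturing: info bits per period / transmitted bits per period."""
    return mother_rate * len(pattern) / sum(pattern)

def is_rate_compatible(family):
    """True if every pattern only deletes bits kept by the preceding pattern."""
    return all(hi <= lo
               for prev, nxt in zip(family, family[1:])
               for lo, hi in zip(prev, nxt))

family = [                          # hypothetical period-8 patterns
    [1, 1, 1, 1, 1, 1, 1, 1],       # rate 1/2 (mother code)
    [1, 1, 1, 0, 1, 1, 1, 0],       # rate 2/3
    [1, 1, 1, 0, 1, 0, 1, 0],       # rate 4/5
]
rates = [punctured_rate(p) for p in family]
```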

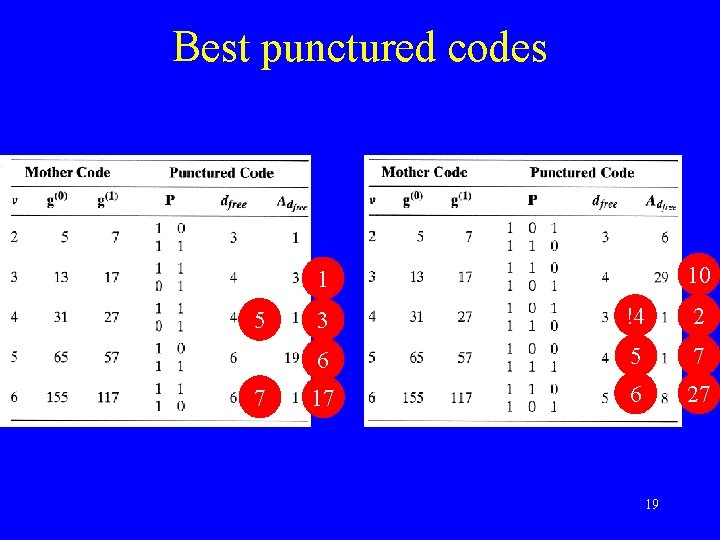

Best punctured codes
[Table of best punctured codes]

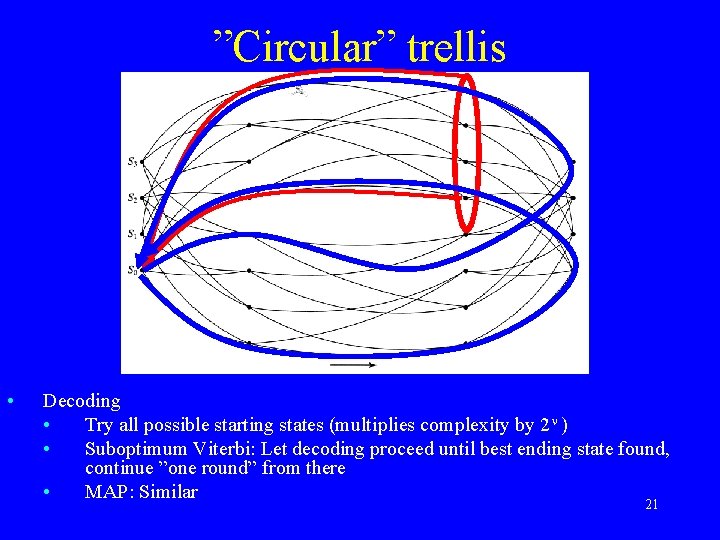

Tailbiting convolutional codes
• Purpose: avoid the terminating tail (rate loss)
  • without distance loss?
  • Cannot avoid distance loss completely
• Tailbiting codes: paths through trellis
  • Codewords can start in any state
    • Gives $2^{\nu}$ times as many codewords ($\nu$: encoder memory, so the trellis has $2^{\nu}$ states)
  • But each codeword must end in the same state that it started from
    • …Gives $2^{-\nu}$ times as many codewords
• Tailbiting codes increasingly popular for moderate-length purposes
• DVB: Turbo codes with tailbiting component codes

"Circular" trellis
• Decoding
  • Try all possible starting states (multiplies complexity by $2^{\nu}$, the number of states)
  • Suboptimum Viterbi: let decoding proceed until the best ending state is found, then continue "one round" from there
  • MAP: similar
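The first option, trying every starting state, can be sketched as a Viterbi search that is repeated once per state and restricted to paths that end where they started. The 4-state feedforward encoder with generators $1+D+D^2$ and $1+D^2$, the BPSK mapping 0 → -1 and 1 → +1, and the correlation metric are assumptions made for this illustration. The sketch expects at least two trellis sections.

```python
def best_path_for_state(r, s0):
    """Viterbi search restricted to paths that start and end in state s0.
    Returns (correlation metric, decoded info bits)."""
    neg_inf = float("-inf")
    metric = [neg_inf] * 4
    metric[s0] = 0.0
    paths = [[] for _ in range(4)]
    for r0, r1 in r:
        new_metric = [neg_inf] * 4
        new_paths = [[] for _ in range(4)]
        for s in range(4):
            if metric[s] == neg_inf:
                continue                      # state not reachable yet
            u1, u2 = s >> 1, s & 1
            for u in (0, 1):
                v0, v1 = u ^ u1 ^ u2, u ^ u2
                m = metric[s] + r0 * (2 * v0 - 1) + r1 * (2 * v1 - 1)
                nxt = (u << 1) | u1
                if m > new_metric[nxt]:
                    new_metric[nxt], new_paths[nxt] = m, paths[s] + [u]
        metric, paths = new_metric, new_paths
    return metric[s0], paths[s0]

def tailbiting_decode(r):
    """Try every starting state and keep the best tailbiting path."""
    return max(best_path_for_state(r, s0) for s0 in range(4))[1]
```

The outer loop over starting states is what multiplies the complexity by the number of states; the suboptimum wrap-around approach in the list above avoids this loop at the cost of optimality.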

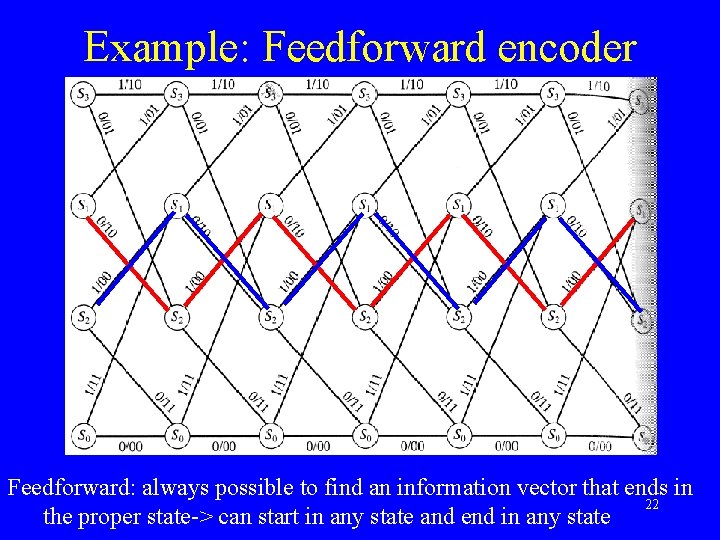

Example: Feedforward encoder
• Feedforward: it is always possible to find an information vector that ends in the proper state, so the encoder can start in any state and end in any state
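For a feedforward encoder the state is simply the most recent information bits, so tailbiting is obtained by preloading the shift register with the last bits of the block. A minimal sketch, assuming a memory-2 encoder with generators $1+D+D^2$ and $1+D^2$ (chosen here for illustration) and a block of at least two bits:

```python
def tailbiting_encode(info):
    """Return (codeword bits, start state, end state) for a memory-2 encoder."""
    # Preload the register with the last two info bits so that start == end.
    state = (info[-1] << 1) | info[-2]
    start = state
    codeword = []
    for u in info:
        u1, u2 = state >> 1, state & 1
        codeword += [u ^ u1 ^ u2, u ^ u2]
        state = (u << 1) | u1
    return codeword, start, state
```

No termination bits are appended, so a block of L information bits produces exactly 2L code bits and the rate stays at 1/2.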

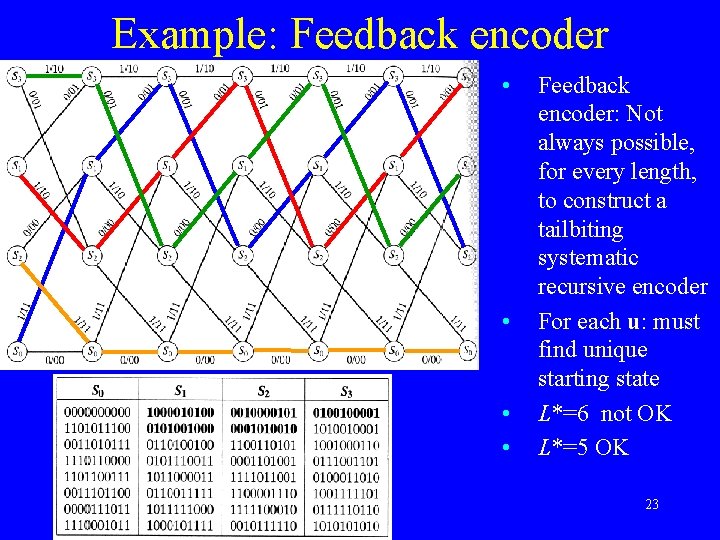

Example: Feedback encoder
• Feedback encoder: not always possible, for every length, to construct a tailbiting systematic recursive encoder
• For each u: must find a unique starting state
• $L^* = 6$: not OK
• $L^* = 5$: OK
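The dependence on the block length can be checked by brute force. The sketch below assumes a recursive register with feedback polynomial $1+D+D^2$; this polynomial is an illustrative choice and is not confirmed to be the encoder shown on the slide. Its zero-input state sequence has period 3, so block lengths that are multiples of 3 do not give a unique starting state.

```python
from itertools import product

def step(state, u):
    """Advance the assumed feedback register (polynomial 1 + D + D^2) by one bit."""
    a1, a2 = state >> 1, state & 1
    a = u ^ a1 ^ a2                 # feedback bit entering the register
    return (a << 1) | a1

def start_states(info):
    """All starting states from which the encoder returns to the same state."""
    valid = []
    for s0 in range(4):
        s = s0
        for u in info:
            s = step(s, u)
        if s == s0:
            valid.append(s0)
    return valid

def length_ok(n):
    """True if every information vector of length n has a unique start state."""
    return all(len(start_states(u)) == 1 for u in product((0, 1), repeat=n))
```

Under this assumption `length_ok(5)` holds, while for length 6 the all-zero vector admits all four starting states and the vector `(1, 0, 0, 0, 0, 0)` admits none.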

Tailbiting codes as block codes
• Tailbiting codes are block codes

Suggested exercises
• 12.27-12.39
