
Lifelong Machine Learning
Bing Liu
University of Illinois at Chicago
liub@uic.edu

Introduction (Chen and Liu, 2016, book)
- Classic Machine Learning (ML) paradigm: isolated single-task learning
  - Given a dataset, run an ML algorithm to build a model, e.g., SVM, CRF, neural nets, topic modeling, ...
  - Without considering previously learned knowledge
- Weaknesses of "isolated learning"
  - Knowledge learned is not retained or accumulated
  - Needs a large number of training examples
  - Suitable only for well-defined & narrow tasks

Humans never learn in isolation
- We retain knowledge learned in the past and use it to learn more knowledge
  - We learn effectively from a few or no examples
  - Our knowledge learned in the past enables us to learn new things with little data or effort.
- Nobody has ever given me 2000 training documents and asked me to build a classifier
  - Without accumulated knowledge, I could not do it.
  - E.g., if someone gave me 2000 training docs in Arabic (no translation), it would be impossible for me.

Lifelong machine learning (LML)
- Statistical ML is getting increasingly mature
- Time to work on Lifelong Machine Learning
  - Retain/accumulate knowledge learned in the past & use it to help future learning
  - Become more knowledgeable & better at learning
- Chatbots and physical robots need LML in their interactions with humans and environments.
  - Without LML, true AI is unlikely.
- We need a paradigm shift to LML.

A Motivating Example (Liu, 2012, 2015)
- My interest in LML came from my experiences in a sentiment analysis startup.
- Sentiment analysis (SA)
  - Sentiment and target: "The screen is great, but the voice quality is poor."
    - Positive about the screen but negative about the voice quality
  - Extensive knowledge sharing across tasks/domains
    - Sentiment expressions & product features (aspects)

Knowledge Shared Across Domains
- After working on many SA projects for clients, I realized that
  - there is a lot of concept sharing across domains;
  - as we see more and more application domains, fewer and fewer things are new.
- (1) It is easy to see the sharing of sentiment words,
  - e.g., good, bad, poor, terrible, etc.
- (2) There is also a great deal of sharing of product features.

Sharing of Product Features
- Product features overlap a great deal across domains
  - Every product review domain has the aspect price
  - Most electronic products share the aspect battery
  - Many also share the aspect screen
  - ...
- It is rather "silly" not to exploit such sharing in SA to make it much more effective.

What does it mean for learning?
- How to systematically exploit such sharing?
  - Retain/accumulate knowledge learned in the past
  - Leverage the knowledge for new task learning
- I.e., lifelong machine learning (LML)
- This leads to our work
  - Lifelong topic modeling (Chen and Liu 2014a, b)
  - Lifelong sentiment classification (Chen et al. 2015)
  - Others

LML is Useful in General
- Such sharing and relatedness is everywhere.
  - E.g., NLP is particularly suitable for LML
    - Words/phrases: same meaning across domains
    - Sentences: same syntax in all domains/fields
- It is hard to imagine:
  - Humans having to learn everything from scratch whenever we face a new problem or environment.
  - If that were the case, intelligence would be unlikely.

Definition of LML (Thrun 1995; Chen and Liu, 2016, book)
- The learner has performed learning on a sequence of tasks, from 1 to N.
- When faced with the (N+1)th task, it uses the relevant knowledge in its knowledge base (KB) to help learn the (N+1)th task.
- After learning the (N+1)th task, the KB is updated with the learned results from the (N+1)th task.

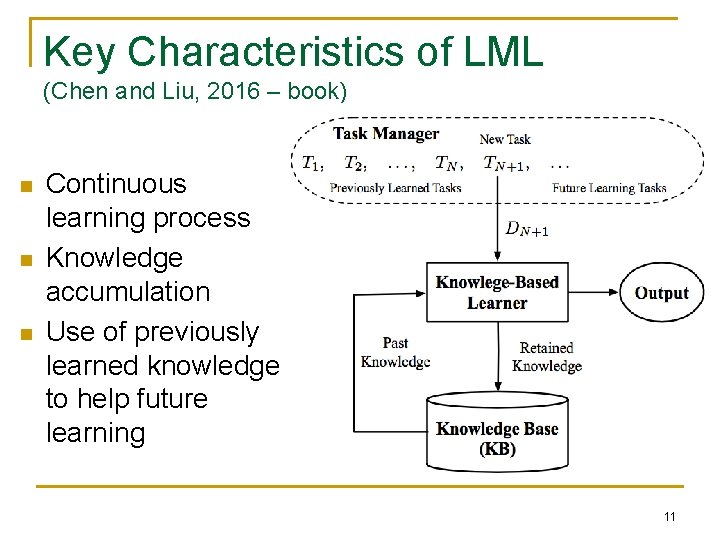
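The three-step definition can be sketched as a loop over tasks. This is a minimal illustration, not an implementation from the book. The `KnowledgeBase` class, its `relevant` and `update` methods, and the toy learner are hypothetical. `relevant` simply returns everything, because the definition does not say how relevance is decided.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


@dataclass
class KnowledgeBase:
    """Hypothetical KB: stores the learned result of each past task by name."""
    entries: Dict[str, Any] = field(default_factory=dict)

    def relevant(self, task_name: str) -> Dict[str, Any]:
        # Placeholder relevance test: return every past result.
        # A real LML system would select only knowledge relevant to task_name.
        return dict(self.entries)

    def update(self, task_name: str, result: Any) -> None:
        self.entries[task_name] = result


def lifelong_learn(tasks: List[Tuple[str, Any]],
                   learn: Callable[[Any, Dict[str, Any]], Any],
                   kb: KnowledgeBase) -> Dict[str, Any]:
    models = {}
    for name, data in tasks:
        prior = kb.relevant(name)   # knowledge from tasks 1..N
        model = learn(data, prior)  # learn task N+1 with help from the KB
        kb.update(name, model)      # retain the result for future tasks
        models[name] = model
    return models


def toy_learn(data: List[float], prior: Dict[str, Any]) -> float:
    """Toy learner: the task mean, blended 50/50 with the mean of past results."""
    own = sum(data) / len(data)
    if not prior:
        return own
    past = sum(prior.values()) / len(prior)
    return 0.5 * own + 0.5 * past


kb = KnowledgeBase()
models = lifelong_learn([("t1", [1.0, 3.0]), ("t2", [4.0]), ("t3", [6.0, 8.0])], toy_learn, kb)
print(models)  # {'t1': 2.0, 't2': 3.0, 't3': 4.75}
```

The learner is passed in as a function so that the same retain-and-reuse loop could wrap any task learner.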

Key Characteristics of LML (Chen and Liu, 2016, book)
- Continuous learning process
- Knowledge accumulation
- Use of previously learned knowledge to help future learning

Two Types of Knowledge
- Global knowledge: assume there is a global latent structure among tasks that is shared by all tasks (Bou Ammar et al., 2014; Ruvolo and Eaton, 2013b; Tanaka and Yamamura, 1997; Thrun, 1996b; Wilson et al., 2007)
  - The global structure can be learned & used in new task learning.
  - These methods grew out of multi-task learning.
- Local knowledge: the new task uses relevant pieces of past knowledge based on its needs (Chen and Liu, 2014a, b; Chen et al., 2015; Fei et al., 2016; Liu et al., 2016; Shu et al., 2016)

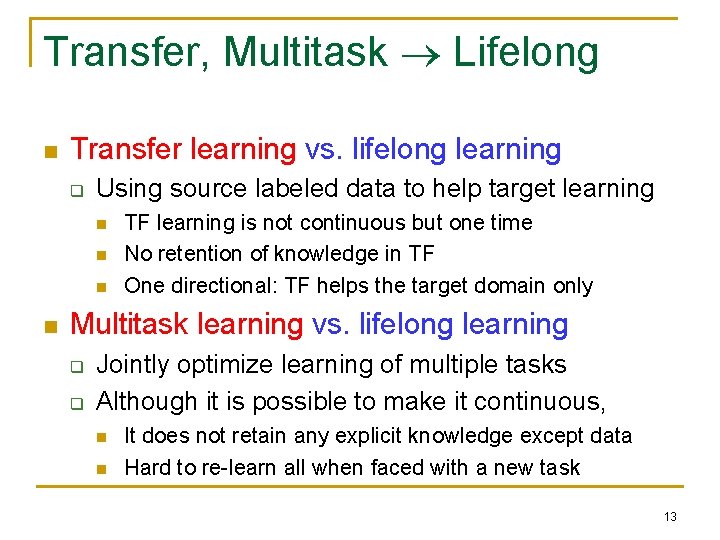

Transfer and Multitask vs. Lifelong Learning
- Transfer learning vs. lifelong learning
  - Transfer (TF) learning uses labeled source data to help target learning
  - TF learning is not continuous but one-time
  - No retention of knowledge in TF
  - One-directional: TF helps the target domain only
- Multitask learning vs. lifelong learning
  - Multitask learning jointly optimizes the learning of multiple tasks
  - Although it is possible to make it continuous,
    - it does not retain any explicit knowledge except the data
    - it is hard to re-learn all tasks whenever a new task arrives

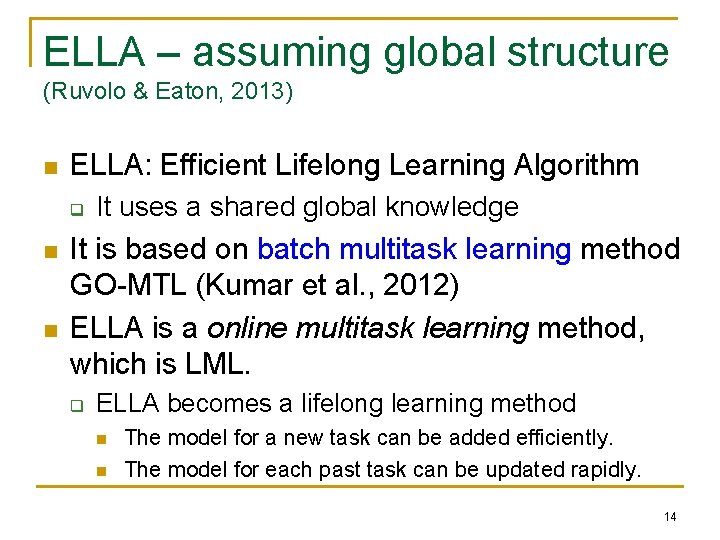

ELLA: assuming a global structure (Ruvolo & Eaton, 2013)
- ELLA: Efficient Lifelong Learning Algorithm
  - It uses shared global knowledge
- It is based on the batch multitask learning method GO-MTL (Kumar et al., 2012)
- ELLA is an online multitask learning method, which makes it an LML method.
  - ELLA becomes a lifelong learning method:
    - The model for a new task can be added efficiently.
    - The model for each past task can be updated rapidly.

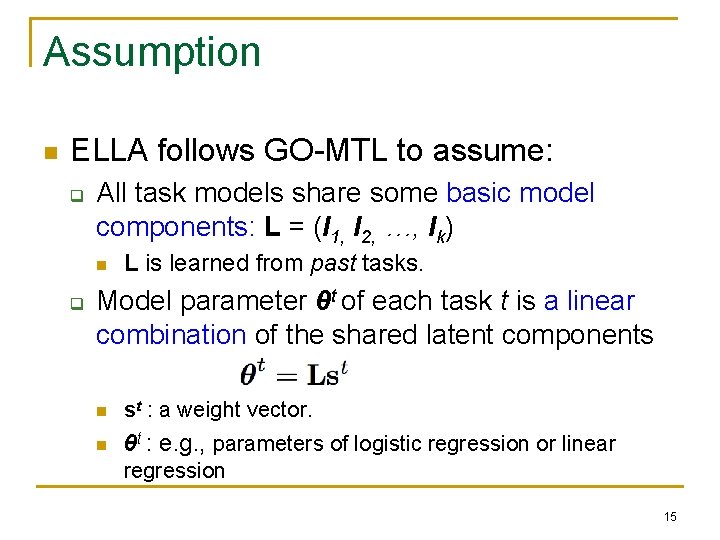

Assumption
- ELLA follows GO-MTL in assuming:
  - All task models share some basic model components: $L = (l_1, l_2, \ldots, l_k)$
    - $L$ is learned from past tasks.
  - The model parameter $\theta_t$ of each task $t$ is a linear combination of the shared latent components: $\theta_t = L s_t$
    - $s_t$: a weight vector
    - $\theta_t$: e.g., the parameters of logistic regression or linear regression

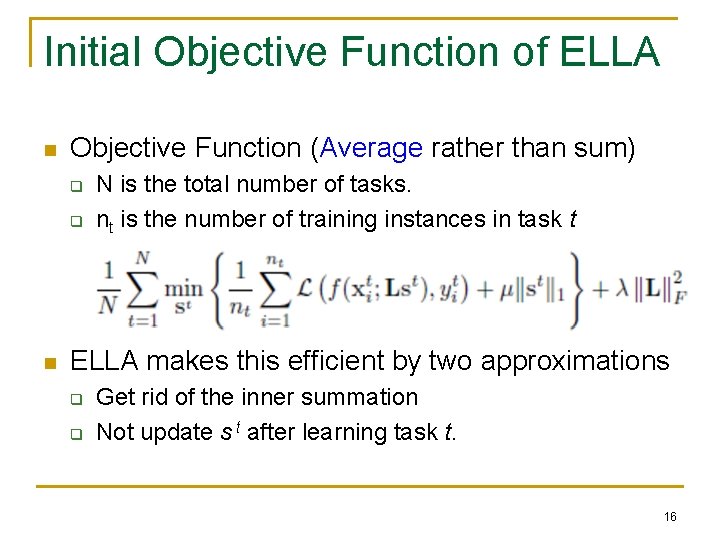
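The shared-basis assumption $\theta_t = L s_t$ can be illustrated with NumPy. This is a minimal sketch that assumes random shared components and a hand-picked weight vector $s_t$. In ELLA both $L$ and $s_t$ are learned, and this snippet does not learn either of them.

```python
import numpy as np

rng = np.random.default_rng(0)
d, k = 5, 3                        # feature dimension, number of shared components
L = rng.normal(size=(d, k))        # shared basis: columns are l_1, ..., l_k
s_t = np.array([0.0, 1.5, -0.5])   # task-specific weights (often sparse)
theta_t = L @ s_t                  # theta_t = 1.5 * l_2 - 0.5 * l_3


def predict_proba(X: np.ndarray, theta: np.ndarray) -> np.ndarray:
    """Logistic regression with parameters theta: P(y = 1 | x) = sigmoid(x . theta)."""
    return 1.0 / (1.0 + np.exp(-(X @ theta)))


X = rng.normal(size=(4, d))        # four toy instances of task t
probs = predict_proba(X, theta_t)
```

Because $s_t$ here puts zero weight on $l_1$, task $t$ uses only two of the three shared components. Sparse $s_t$ is how tasks can share some components but not others.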

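Initial Objective Function of ELLA
- Objective function (average rather than sum over tasks):
$$e_N(L) = \frac{1}{N}\sum_{t=1}^{N}\min_{s_t}\left\{\frac{1}{n_t}\sum_{i=1}^{n_t}\mathcal{L}\left(f\left(x_i^{(t)}; L s_t\right), y_i^{(t)}\right) + \mu\lVert s_t\rVert_1\right\} + \lambda\lVert L\rVert_F^2$$
  - $N$ is the total number of tasks.
  - $n_t$ is the number of training instances in task $t$.
  - $\mathcal{L}$ is a loss function, $f(x; \theta)$ is the task model, and $\mu$, $\lambda$ are regularization weights.
- ELLA makes this efficient through two approximations:
  - Get rid of the inner summation
  - Do not update $s_t$ after learning task $t$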
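To make the objective concrete, the sketch below evaluates it for a given basis $L$ and given task vectors $s_t$, assuming squared loss and linear models. For each task it takes the average loss plus $\mu\lVert s_t\rVert_1$. It then averages over tasks and adds $\lambda\lVert L\rVert_F^2$. It does not perform the inner minimization over $s_t$, and it does not implement ELLA's two approximations. The function name `ella_objective` is hypothetical.

```python
import numpy as np
from typing import List, Tuple


def ella_objective(L: np.ndarray, S: List[np.ndarray],
                   tasks: List[Tuple[np.ndarray, np.ndarray]],
                   mu: float, lam: float) -> float:
    """Evaluate the initial ELLA-style objective for GIVEN L and s_t (no inner min).

    L: (d, k) shared basis; S: one (k,) vector s_t per task;
    tasks: one (X_t, y_t) pair per task, X_t of shape (n_t, d).
    Squared loss with linear models f(x; theta) = x . theta is assumed.
    """
    total = 0.0
    for s_t, (X_t, y_t) in zip(S, tasks):
        theta_t = L @ s_t
        avg_loss = np.mean((X_t @ theta_t - y_t) ** 2)  # (1/n_t) * inner sum
        total += avg_loss + mu * np.sum(np.abs(s_t))    # + mu * ||s_t||_1
    return total / len(tasks) + lam * np.sum(L ** 2)    # average over N tasks + lam * ||L||_F^2


L = np.eye(2)
S = [np.array([1.0, 0.0]), np.array([0.0, 2.0])]
tasks = [(np.array([[1.0, 0.0], [0.0, 1.0]]), np.array([1.0, 0.0])),
         (np.array([[1.0, 1.0]]), np.array([1.0]))]
value = ella_objective(L, S, tasks, mu=0.1, lam=0.01)  # (0.1 + 1.2) / 2 + 0.02 = 0.67
```

The inner sum over $n_t$ instances is what makes re-optimizing every past task expensive. This is why ELLA approximates it away.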

Lifelong Sentiment Classification (Chen, Ma, and Liu 2015)
- "I bought a cellphone a few days ago. It is such a nice phone. The touch screen is really cool. The voice quality is great too. ..."
- Goal: classify docs or sentences as + or -.
  - We would need to manually label a lot of training data for each domain, which is highly labor-intensive
- Can we avoid labeling for every domain?

A Simple Lifelong Learning Method
- If we have worked on a large number of past domains with their training data D:
  - Build a classifier using D and test it on the new domain
  - Note: using only one past/source domain, as in transfer learning, is not enough.
- In many cases, this improves accuracy by as much as 19 percentage points (= 80% - 61%). Why?
- In some other cases, it is not so good, e.g., it works poorly for toy reviews. Why? "toy"
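The simple method pools the labeled data of all past domains, trains once, and applies the result to the new domain. It might look like the sketch below. The slide does not name the classifier, so multinomial Naive Bayes with add-one smoothing is assumed here. The domains and reviews are made-up toy data.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

Doc = Tuple[List[str], str]  # (tokens, label) with label in {"+", "-"}


def train_nb(docs: List[Doc]):
    """Collect per-class word counts and document counts."""
    word_counts = {"+": Counter(), "-": Counter()}
    doc_counts = Counter()
    for tokens, label in docs:
        word_counts[label].update(tokens)
        doc_counts[label] += 1
    return word_counts, doc_counts


def classify(model, tokens: List[str], alpha: float = 1.0) -> str:
    word_counts, doc_counts = model
    vocab = set(word_counts["+"]) | set(word_counts["-"])
    total_docs = sum(doc_counts.values())
    scores = {}
    for c in ("+", "-"):
        denom = sum(word_counts[c].values()) + alpha * len(vocab)
        score = math.log(doc_counts[c] / total_docs)
        for w in tokens:
            if w in vocab:  # ignore words never seen in any past domain
                score += math.log((word_counts[c][w] + alpha) / denom)
        scores[c] = score
    return max(scores, key=scores.get)


# Pool the labeled data D of all past domains, then apply the model to a new domain.
past_domains: Dict[str, List[Doc]] = {
    "camera": [(["great", "picture"], "+"), (["poor", "battery"], "-")],
    "phone": [(["great", "screen"], "+"), (["terrible", "voice"], "-")],
}
D = [doc for docs in past_domains.values() for doc in docs]
model = train_nb(D)
print(classify(model, ["great", "price"]))     # +
print(classify(model, ["terrible", "price"]))  # -
```

Pooling helps when sentiment words such as "great" and "terrible" carry the same polarity across domains. It cannot help with words whose polarity is domain-dependent.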

Lifelong Sentiment Classification (Chen, Ma and Liu, 2015)
- It adopts a Bayesian optimization framework for LML using stochastic gradient descent
- Objective function for each positive review $d_i$: $P(+ \mid d_i) - P(- \mid d_i)$ (and $P(- \mid d_i) - P(+ \mid d_i)$ for each negative review)
- Lifelong learning uses
  - Word counts from the past data as priors
  - Penalty terms to deal with domain-dependent sentiment words and the reliability of knowledge
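One way to see how past word counts act as priors is to add them to the current domain's counts inside a Naive Bayes model, then evaluate the per-review objective $P(+ \mid d_i) - P(- \mid d_i)$. This is a simplified sketch under that assumption. LSC also optimizes the counts with stochastic gradient descent and adds penalty terms, and neither is shown here. All names and counts are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Set

Counts = Dict[str, Counter]


def posterior(tokens: List[str], counts: Counts, class_prior: Dict[str, float],
              vocab: Set[str], alpha: float = 1.0) -> Dict[str, float]:
    """P(c | d) under multinomial Naive Bayes with effective counts counts[c][w]."""
    log_scores = {}
    for c in ("+", "-"):
        denom = sum(counts[c][w] for w in vocab) + alpha * len(vocab)
        score = math.log(class_prior[c])
        for w in tokens:
            score += math.log((counts[c][w] + alpha) / denom)
        log_scores[c] = score
    m = max(log_scores.values())  # subtract the max for numerical stability
    z = sum(math.exp(v - m) for v in log_scores.values())
    return {c: math.exp(v - m) / z for c, v in log_scores.items()}


def doc_objective(tokens, label, counts, class_prior, vocab) -> float:
    """P(label | d) - P(other label | d): the per-review term on the slide."""
    p = posterior(tokens, counts, class_prior, vocab)
    other = "-" if label == "+" else "+"
    return p[label] - p[other]


current = {"+": Counter({"nice": 1}), "-": Counter({"broken": 1})}  # new domain
past = {"+": Counter({"great": 5, "nice": 2}), "-": Counter({"poor": 4, "broken": 3})}
with_past = {c: current[c] + past[c] for c in ("+", "-")}  # past counts act as priors
vocab = {"nice", "broken", "great", "poor"}
prior = {"+": 0.5, "-": 0.5}

print(doc_objective(["great"], "+", current, prior, vocab))    # 0.0: "great" unseen in new domain
print(doc_objective(["great"], "+", with_past, prior, vocab))  # positive
```

With only the new domain's counts, the positive review "great" is not separated from the negative class at all. With the past counts added, its objective becomes positive.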

Knowledge Base
- Two types of previous knowledge
  - Document-level knowledge
  - Domain-level knowledge


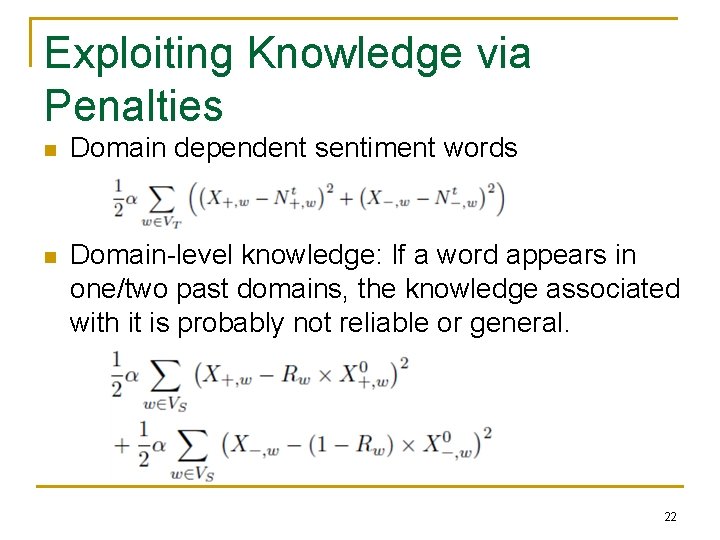

Exploiting Knowledge via Penalties
- Domain-dependent sentiment words
- Domain-level knowledge: if a word appears in only one or two past domains, the knowledge associated with it is probably not reliable or general.

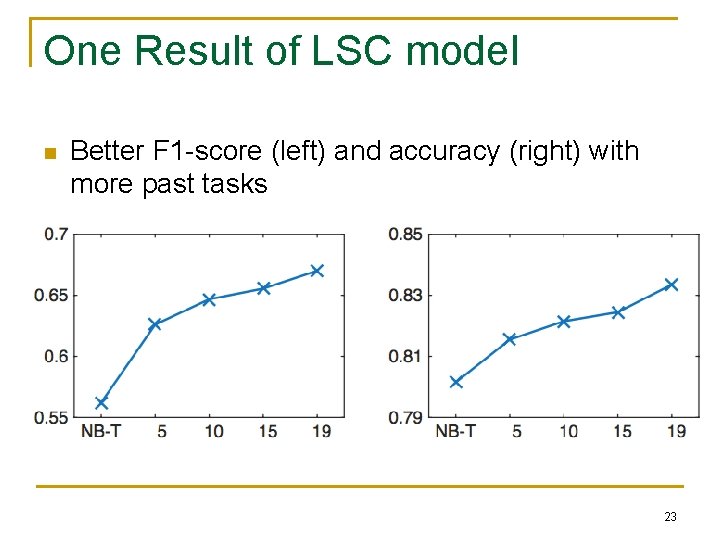
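The domain-level reliability idea can be sketched as a support filter: keep a word's polarity only if at least three past domains agree on it. The threshold of three follows the remark about one or two domains. The input format `{domain: {word: polarity}}` and the function name are hypothetical. LSC itself uses this knowledge through penalty terms rather than a hard filter.

```python
from collections import defaultdict
from typing import Dict


def reliable_words(domain_polarity: Dict[str, Dict[str, str]],
                   min_domains: int = 3) -> Dict[str, str]:
    """Keep words whose majority polarity is supported by >= min_domains past domains."""
    support = defaultdict(lambda: {"+": 0, "-": 0})
    for polarities in domain_polarity.values():
        for word, pol in polarities.items():
            support[word][pol] += 1
    reliable = {}
    for word, counts in support.items():
        best = max(counts, key=counts.get)
        if counts[best] >= min_domains:
            reliable[word] = best
    return reliable


domains = {
    "camera": {"good": "+", "bulky": "-"},
    "phone": {"good": "+", "cheap": "+"},
    "laptop": {"good": "+", "cheap": "-"},  # "cheap" is domain-dependent
    "book": {"good": "+"},
}
print(reliable_words(domains))  # {'good': '+'}
```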

One Result of the LSC Model
- Better F1-score (left) and accuracy (right) with more past tasks

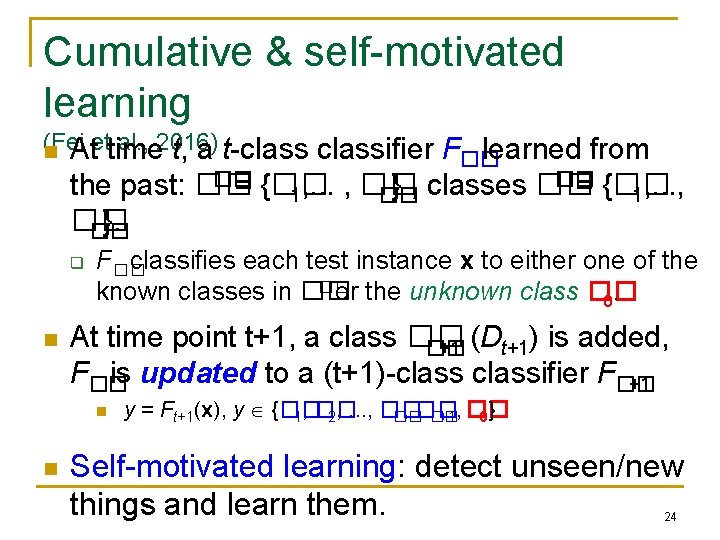

Cumulative & self-motivated learning (Fei et al., 2016)
- At time $t$, a $t$-class classifier $F_t$ has been learned from the past datasets $D^t = \{D_1, \ldots, D_t\}$ with classes $Y^t = \{l_1, \ldots, l_t\}$.
  - $F_t$ classifies each test instance $x$ into either one of the $t$ known classes in $Y^t$ or the unknown class $l_0$.
- At time point $t+1$, a new class $l_{t+1}$ (with data $D_{t+1}$) is added, and $F_t$ is updated to a $(t+1)$-class classifier $F_{t+1}$:
  - $y = F_{t+1}(x)$, $y \in \{l_1, l_2, \ldots, l_t, l_{t+1}, l_0\}$
- Self-motivated learning: detect unseen/new things and learn them.

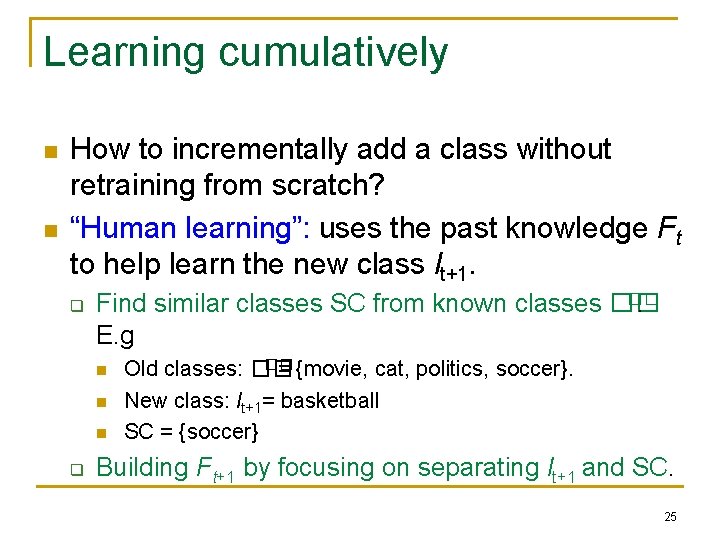

Learning cumulatively
- How can we incrementally add a class without retraining from scratch?
- "Human learning": use the past knowledge $F_t$ to help learn the new class $l_{t+1}$.
  - Find similar classes SC among the known classes $Y^t$, e.g.,
    - Old classes: $Y^t$ = {movie, cat, politics, soccer}
    - New class: $l_{t+1}$ = basketball
    - SC = {soccer}
  - Build $F_{t+1}$ by focusing on separating $l_{t+1}$ from SC.
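One way to find the similar classes SC is to compare class centroids. The sketch below assumes that each class is summarized by a centroid vector. It uses cosine similarity with a hypothetical threshold of 0.5. Fei et al. (2016) may select SC differently, and the toy feature dimensions are made up.

```python
import numpy as np
from typing import Dict, List


def similar_classes(new_centroid: np.ndarray, centroids: Dict[str, np.ndarray],
                    threshold: float = 0.5) -> List[str]:
    """Known classes whose centroid has cosine similarity > threshold with the new class."""
    sc = []
    for name, c in centroids.items():
        cos = float(new_centroid @ c / (np.linalg.norm(new_centroid) * np.linalg.norm(c)))
        if cos > threshold:
            sc.append(name)
    return sc


# Toy features: [sports, ball, film, animal, vote] (made up for illustration)
centroids = {
    "movie": np.array([0.0, 0.0, 1.0, 0.0, 0.0]),
    "cat": np.array([0.0, 0.0, 0.0, 1.0, 0.0]),
    "politics": np.array([0.0, 0.0, 0.0, 0.0, 1.0]),
    "soccer": np.array([1.0, 1.0, 0.0, 0.0, 0.0]),
}
basketball = np.array([1.0, 0.8, 0.0, 0.0, 0.1])
print(similar_classes(basketball, centroids))  # ['soccer']
```

Once SC is found, $F_{t+1}$ would be trained mainly to separate basketball from soccer rather than from all old classes. This is much cheaper than retraining on every class.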

Open Classification (Fei and Liu, 2016)
- Detect unseen-class docs (classes not in training)
- Traditional classification makes the closed-world assumption:
  - Classes in testing have been seen in training, i.e., there are no new classes in the test data
  - Not true in most real-life environments: new data may contain unseen-class documents
- We need open (world) classification
  - Detect the unseen class of documents

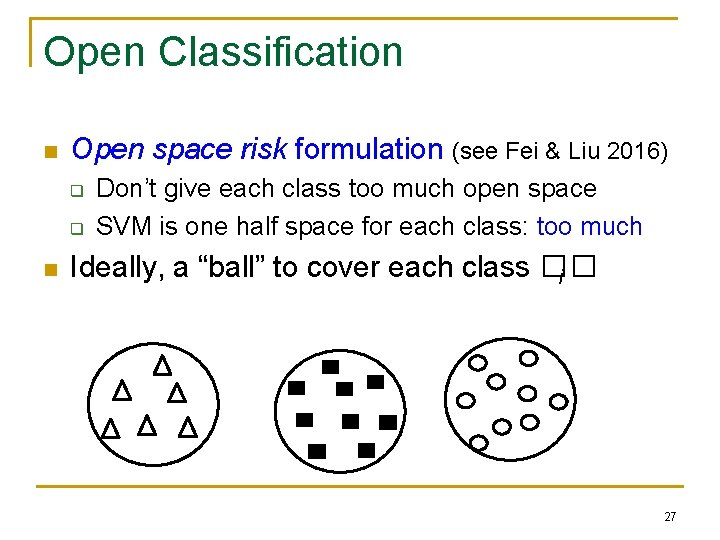

Open Classification
- Open space risk formulation (see Fei & Liu 2016)
  - Don't give each class too much open space
  - SVM gives each class a half space: too much
  - Ideally, a "ball" covers each class $l_i$

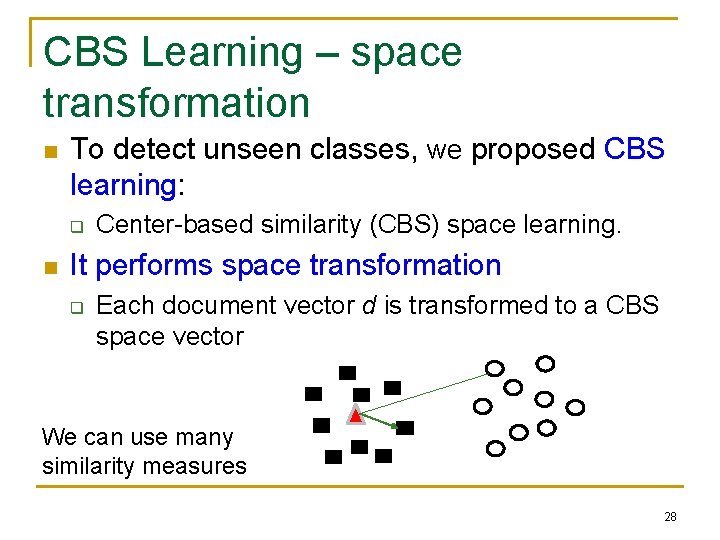

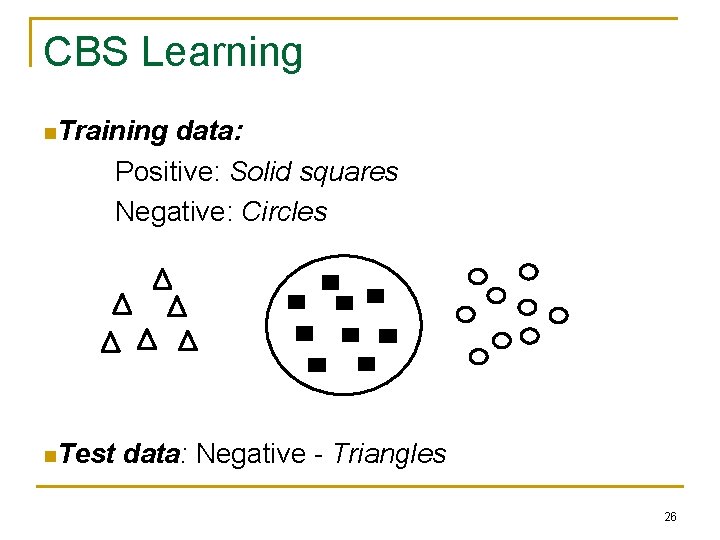
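The "ball" intuition can be sketched as follows. Each class gets a center and a radius, and a test point that falls outside every ball is assigned to the unknown class $l_0$. This only illustrates bounding the open space. It is not the open space risk formulation of Fei & Liu (2016). The mean center, the 95% distance quantile used as the radius, and the label `"l0"` are all assumptions.

```python
import numpy as np


def fit_balls(X: np.ndarray, y: np.ndarray, quantile: float = 0.95):
    """For each class: center = mean, radius = quantile of training distances to the center."""
    balls = {}
    for label in np.unique(y):
        pts = X[y == label]
        center = pts.mean(axis=0)
        dists = np.linalg.norm(pts - center, axis=1)
        balls[label] = (center, np.quantile(dists, quantile))
    return balls


def open_predict(balls, x: np.ndarray, unknown: str = "l0"):
    """Nearest class whose ball contains x; otherwise the unknown class."""
    best, best_dist = unknown, np.inf
    for label, (center, radius) in balls.items():
        dist = np.linalg.norm(x - center)
        if dist <= radius and dist < best_dist:
            best, best_dist = label, dist
    return best


X = np.array([[0, 0], [1, 0], [0, 1], [10, 10], [11, 10], [10, 11]], dtype=float)
y = np.array(["a", "a", "a", "b", "b", "b"])
balls = fit_balls(X, y)
print(open_predict(balls, np.array([0.3, 0.3])))  # a
print(open_predict(balls, np.array([5.0, 5.0])))  # l0 (outside both balls)
```

A half-space classifier would have assigned the point (5, 5) to one of the two known classes. The balls leave it unclaimed.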

CBS Learning: space transformation
- To detect unseen classes, we proposed CBS learning:
  - Center-based similarity (CBS) space learning
- It performs a space transformation
  - Each document vector d is transformed into a CBS space vector
  - We can use many similarity measures

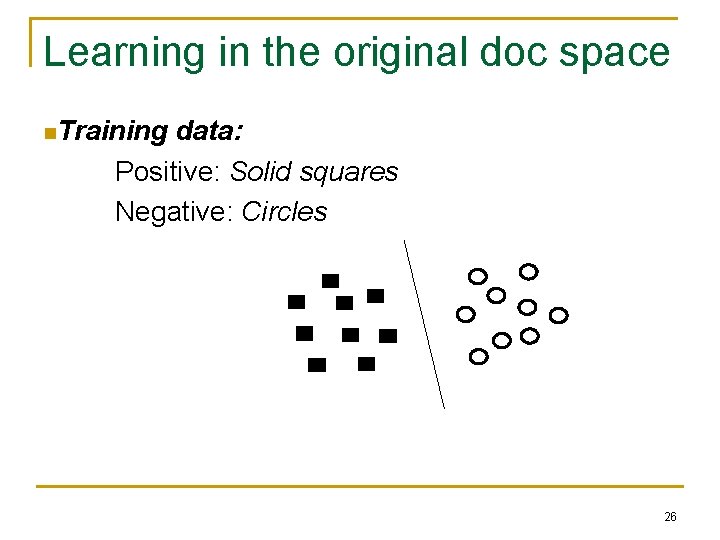
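A CBS transformation can be sketched as mapping a document vector to a short vector of similarities with a class center. Cosine similarity and two distance-based similarities are assumed here for illustration. The actual set of measures and document representations in CBS learning may differ. Documents of the positive class map close to (1, 1, 1). Documents far from the center, including negatives from classes never seen in training, map to low values, so a classifier trained in CBS space can reject them.

```python
import numpy as np


def cbs_vector(d: np.ndarray, center: np.ndarray) -> np.ndarray:
    """Map a (nonzero) document vector d to similarities with a class center."""
    cos = d @ center / (np.linalg.norm(d) * np.linalg.norm(center))
    euclid_sim = 1.0 / (1.0 + np.linalg.norm(d - center))   # 1 when d == center
    manhattan_sim = 1.0 / (1.0 + np.abs(d - center).sum())  # 1 when d == center
    return np.array([cos, euclid_sim, manhattan_sim])


center = np.array([1.0, 1.0, 0.0])                    # e.g., mean of positive training docs
near = cbs_vector(np.array([1.0, 0.9, 0.1]), center)  # a positive-looking doc
far = cbs_vector(np.array([0.0, 0.0, 5.0]), center)   # unlike anything seen in training
```

Cosine similarity is undefined for a zero vector, so empty documents would need separate handling.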

Learning in the original doc space
- Training data: positive: solid squares; negative: circles

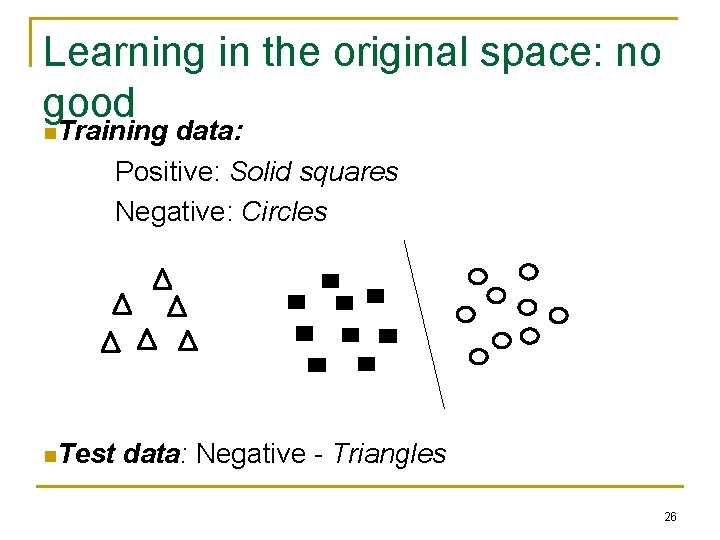

Learning in the original space: no good
- Training data: positive: solid squares; negative: circles
- Test data: negative: triangles

CBS Learning
- Training data: positive: solid squares; negative: circles
- Test data: negative: triangles

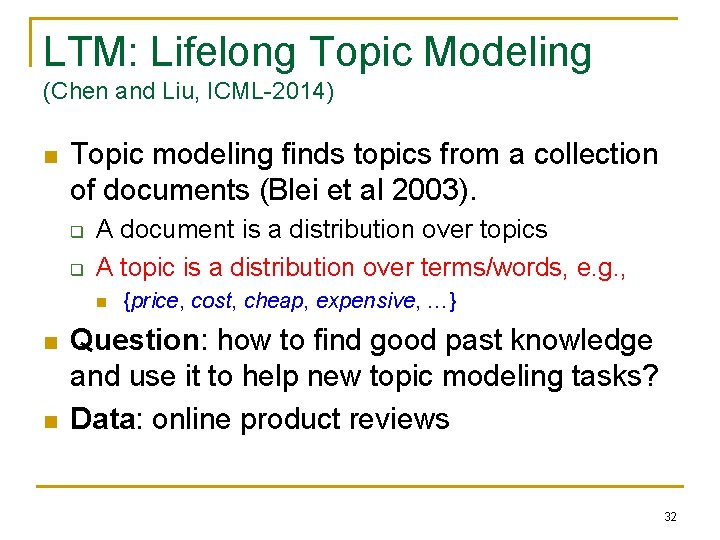

LTM: Lifelong Topic Modeling (Chen and Liu, ICML-2014)
- Topic modeling finds topics in a collection of documents (Blei et al. 2003).
  - A document is a distribution over topics
  - A topic is a distribution over terms/words, e.g.,
    - {price, cost, cheap, expensive, ...}
- Question: how do we find good past knowledge and use it to help new topic modeling tasks?
- Data: online product reviews

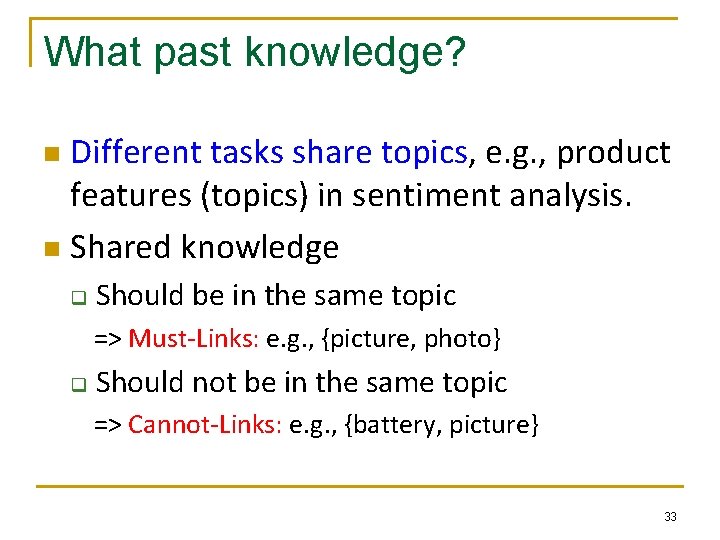

What past knowledge?
- Different tasks share topics, e.g., product features (topics) in sentiment analysis.
- Shared knowledge
  - Should be in the same topic => Must-Links: e.g., {picture, photo}
  - Should not be in the same topic => Cannot-Links: e.g., {battery, picture}

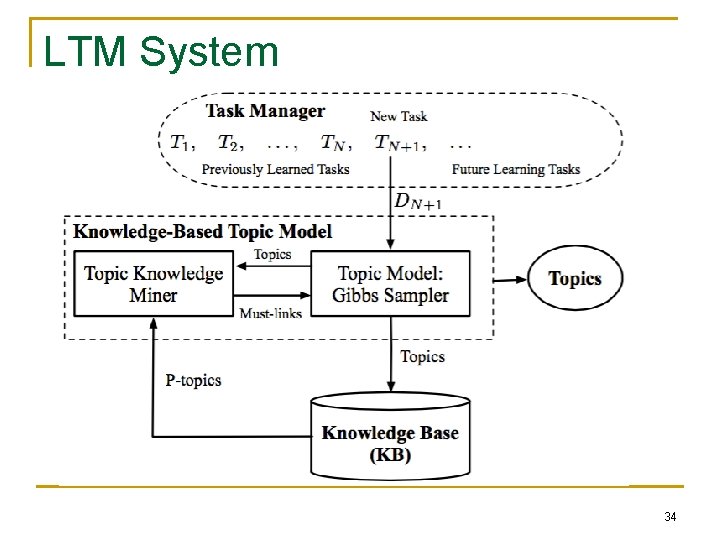

LTM System

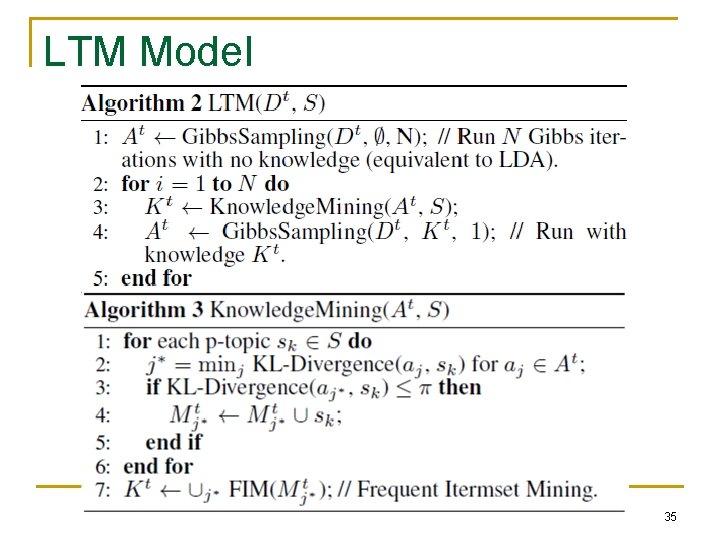

LTM Model

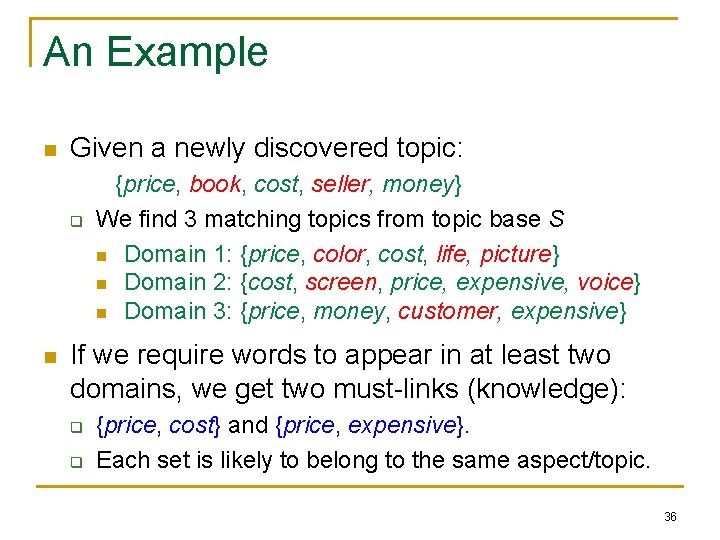

An Example
- Given a newly discovered topic:
  - {price, book, cost, seller, money}
- We find 3 matching topics in the topic base S
  - Domain 1: {price, color, cost, life, picture}
  - Domain 2: {cost, screen, price, expensive, voice}
  - Domain 3: {price, money, customer, expensive}
- If we require words to appear together in at least two domains, we get two must-links (knowledge):
  - {price, cost} and {price, expensive}
  - Each set is likely to belong to the same aspect/topic.

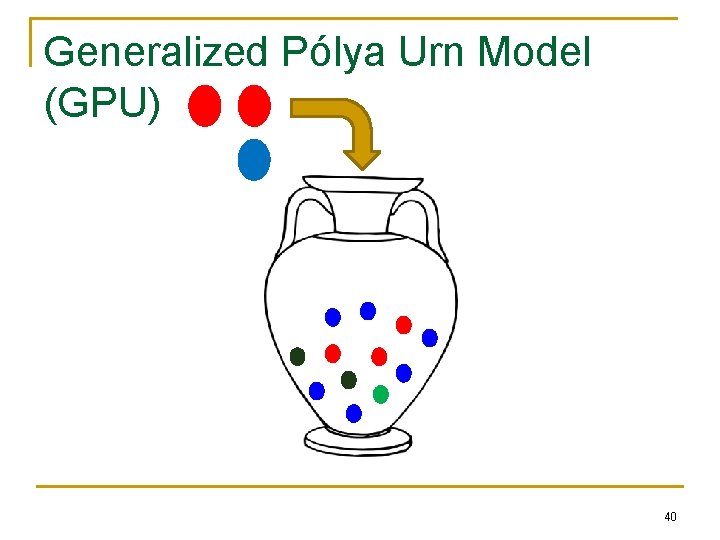

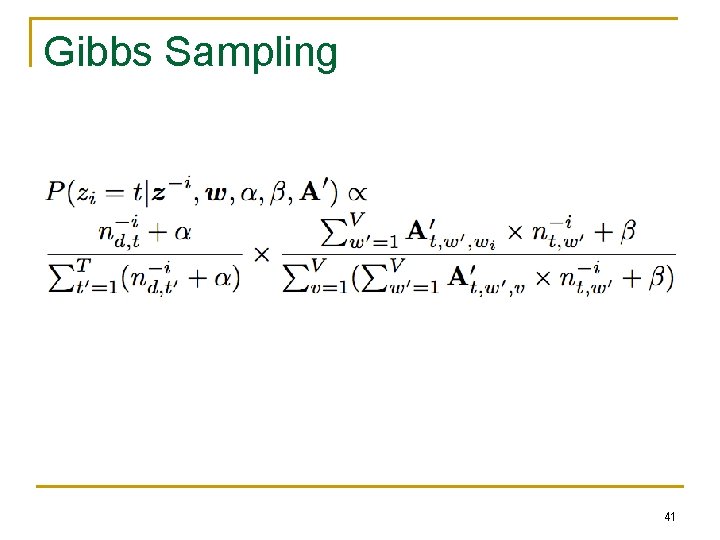
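The must-links in this example can be reproduced with a small pairwise count. A word pair becomes a must-link if it appears together in at least `min_support` matching topics. This is a simplified stand-in for frequent itemset mining over the matching topics, and the function name is hypothetical.

```python
from collections import Counter
from itertools import combinations
from typing import List, Tuple


def mine_must_links(matching_topics: List[List[str]],
                    min_support: int = 2) -> List[Tuple[str, str]]:
    """Word pairs that co-occur in at least min_support matching topics."""
    support = Counter()
    for topic in matching_topics:
        support.update(combinations(sorted(set(topic)), 2))  # each pair once per topic
    return sorted(pair for pair, n in support.items() if n >= min_support)


matching = [
    ["price", "color", "cost", "life", "picture"],     # Domain 1
    ["cost", "screen", "price", "expensive", "voice"],  # Domain 2
    ["price", "money", "customer", "expensive"],        # Domain 3
]
print(mine_must_links(matching))  # [('cost', 'price'), ('expensive', 'price')]
```

Pairs such as {cost, expensive} occur in only one domain (Domain 2), so they fall below the support threshold.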

Model Inference: Gibbs Sampling
- How do we use the must-link knowledge?
  - e.g., {price, cost} & {price, expensive}
- Model inference is based on the
  - Generalized Pólya Urn Model (GPU)
- Idea
  - When assigning a topic t to a word w, e.g., price,
  - also assign a fraction of t to the words (e.g., cost and expensive) that share must-links with w.

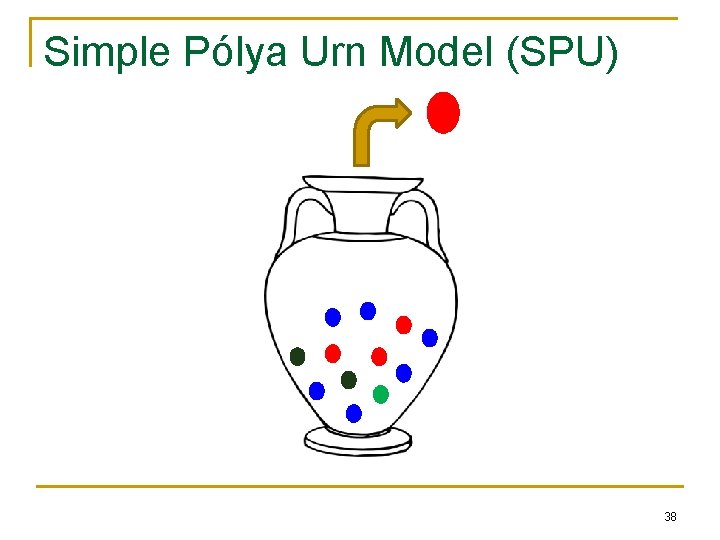

Simple Pólya Urn Model (SPU)

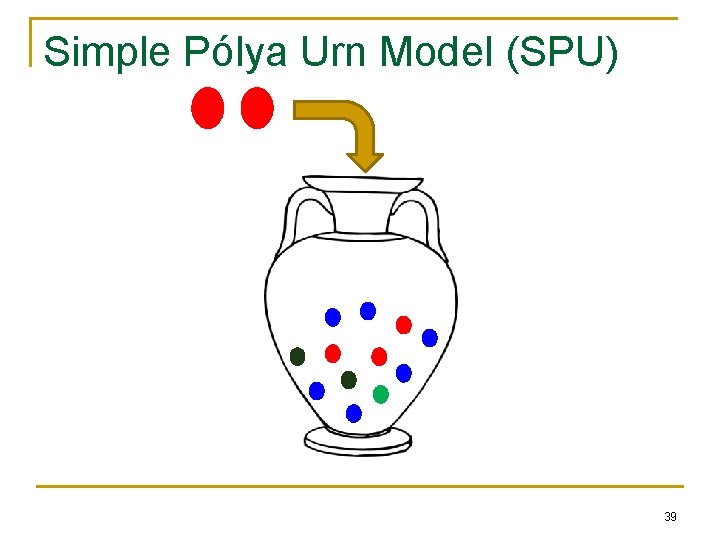

Simple Pólya Urn Model (SPU)

Generalized Pólya Urn Model (GPU)
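The GPU idea can be sketched as an update on topic-word counts. Assigning topic t to word w adds 1 to w's count under t, and adds a fractional amount `mu` to each word that is must-linked with w. Setting `mu = 0` recovers the simple Pólya urn, where the drawn ball is put back plus one more of the same color. The promotion amount 0.3 and the helper names are hypothetical. LTM's actual promotion scheme is not reproduced here.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def build_must_links(pairs: Iterable[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Symmetric lookup: word -> words it is must-linked with."""
    links: Dict[str, List[str]] = defaultdict(list)
    for a, b in pairs:
        links[a].append(b)
        links[b].append(a)
    return links


def gpu_assign(counts, topic, word, must_links, mu=0.3, sign=1.0):
    """Add (sign=+1) or remove (sign=-1) one assignment of `topic` to `word`.

    The word itself gets a full count; each must-linked word gets `mu`.
    With mu = 0 this reduces to the simple Polya urn update.
    """
    counts[topic][word] += sign * 1.0
    for other in must_links.get(word, []):
        counts[topic][other] += sign * mu


links = build_must_links([("price", "cost"), ("price", "expensive")])
counts = defaultdict(lambda: defaultdict(float))  # counts[topic][word]
gpu_assign(counts, 0, "price", links)             # topic 0 assigned to "price"
print(dict(counts[0]))  # {'price': 1.0, 'cost': 0.3, 'expensive': 0.3}
```

A Gibbs sampler would call the update with `sign=-1.0` to remove a word's current assignment before resampling its topic, then call it with `sign=1.0` for the new topic.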

Gibbs Sampling

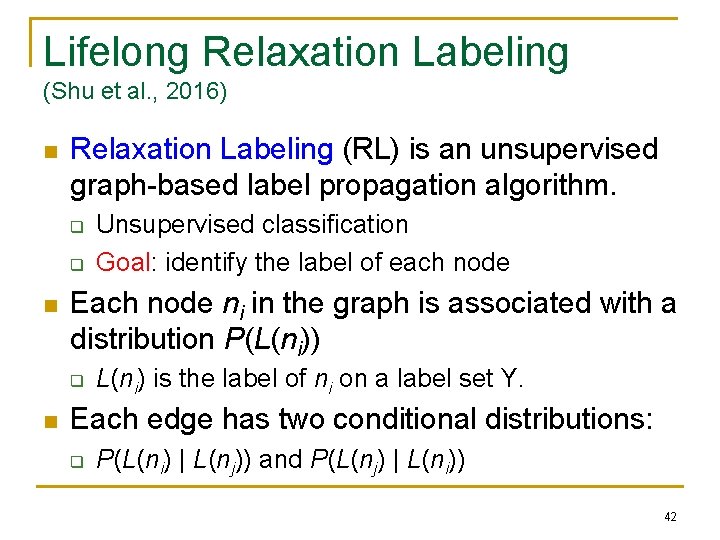

Lifelong Relaxation Labeling (Shu et al., 2016)
- Relaxation Labeling (RL) is an unsupervised graph-based label propagation algorithm.
  - Unsupervised classification
  - Goal: identify the label of each node
- Each node $n_i$ in the graph is associated with a distribution $P(L(n_i))$
  - $L(n_i)$ is the label of $n_i$ from a label set $Y$.
- Each edge has two conditional distributions:
  - $P(L(n_i) \mid L(n_j))$ and $P(L(n_j) \mid L(n_i))$

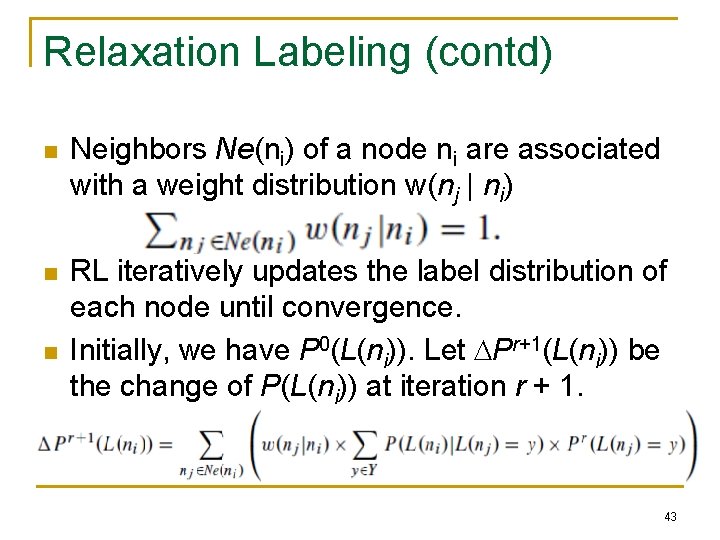

Relaxation Labeling (contd)
- The neighbors $Ne(n_i)$ of a node $n_i$ are associated with a weight distribution $w(n_j \mid n_i)$
- RL iteratively updates the label distribution of each node until convergence.
- Initially, we have $P^0(L(n_i))$. Let $\Delta P^{r+1}(L(n_i))$ be the change of $P(L(n_i))$ at iteration $r + 1$.

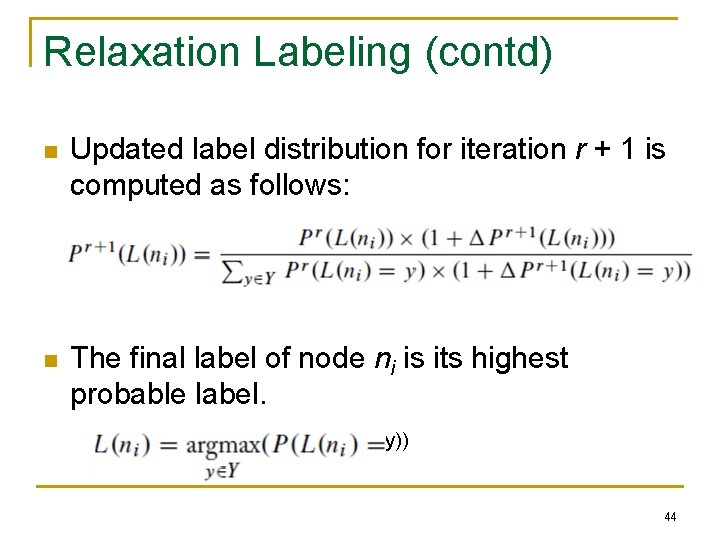

Relaxation Labeling (contd)
- The updated label distribution for iteration $r + 1$ is computed as follows:
- The final label of node $n_i$ is its most probable label: $L(n_i) = \arg\max_{y \in Y} P(L(n_i) = y)$

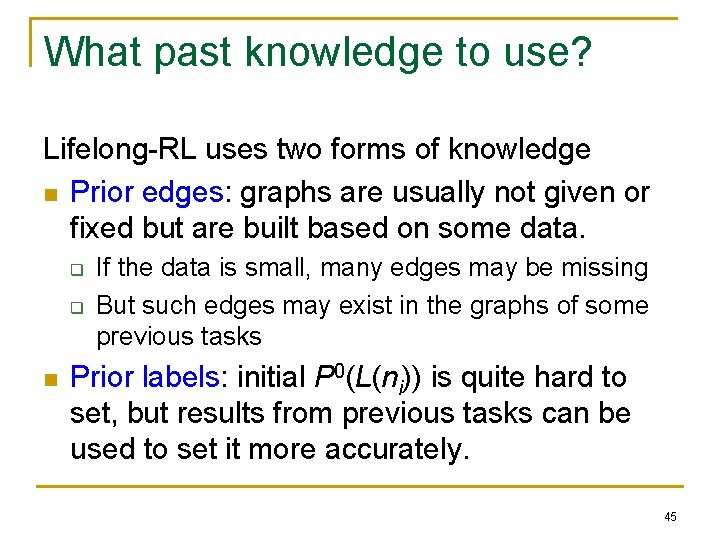
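The iterative update can be sketched in NumPy. The code assumes one common form of the relaxation labeling update, which may differ in detail from the formula in the figure: $\Delta P^{r+1}(L(n_i)=y) = \sum_{n_j \in Ne(n_i)} w(n_j \mid n_i) \sum_{y' \in Y} P(L(n_i)=y \mid L(n_j)=y')\, P^r(L(n_j)=y')$, and $P^{r+1}(L(n_i)=y) \propto P^r(L(n_i)=y)\,(1 + \Delta P^{r+1}(L(n_i)=y))$, normalized over $y \in Y$. The data layout (dicts keyed by node-index pairs) is hypothetical.

```python
import numpy as np


def relaxation_labeling(P0, neighbors, weights, cond, n_iter=50, tol=1e-6):
    """Synchronous relaxation labeling.

    P0: (n, |Y|) array of initial distributions P^0(L(n_i)).
    neighbors: list of neighbor index lists Ne(n_i).
    weights: dict (i, j) -> w(n_j | n_i).
    cond: dict (i, j) -> (|Y|, |Y|) matrix M, M[a, b] = P(L(n_i)=a | L(n_j)=b).
    """
    P = P0.astype(float).copy()
    for _ in range(n_iter):
        new_P = np.empty_like(P)
        for i in range(P.shape[0]):
            delta = np.zeros(P.shape[1])          # Delta P^{r+1}(L(n_i) = y) for all y
            for j in neighbors[i]:
                delta += weights[(i, j)] * (cond[(i, j)] @ P[j])
            unnorm = P[i] * (1.0 + delta)
            new_P[i] = unnorm / unnorm.sum()      # normalize over the label set Y
        if np.abs(new_P - P).max() < tol:
            return new_P
        P = new_P
    return P


same_label = np.array([[0.9, 0.1], [0.1, 0.9]])  # neighbors tend to share labels
P0 = np.array([[0.9, 0.1], [0.5, 0.5]])          # node 1 starts undecided
P = relaxation_labeling(P0, [[1], [0]],
                        {(0, 1): 1.0, (1, 0): 1.0},
                        {(0, 1): same_label, (1, 0): same_label})
labels = P.argmax(axis=1)                        # final label = most probable label
```

In this example the confident node 0 pulls the undecided node 1 toward label 0.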

What past knowledge to use?
- Lifelong-RL uses two forms of knowledge
  - Prior edges: graphs are usually not given or fixed but are built from some data.
    - If the data is small, many edges may be missing
    - But such edges may exist in the graphs of some previous tasks
  - Prior labels: the initial $P^0(L(n_i))$ is quite hard to set, but results from previous tasks can be used to set it more accurately.

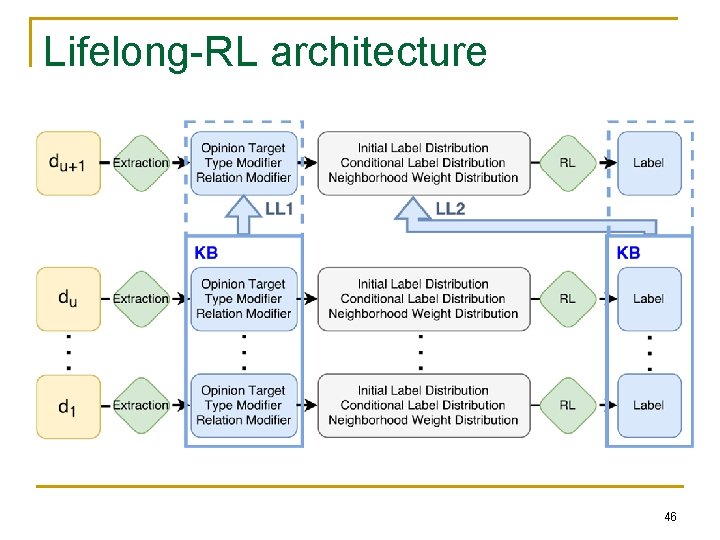
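The two forms of knowledge could be injected roughly as follows. An edge from a previous task's graph is added when both of its endpoints occur in the current graph. $P^0$ for a node is the average of its label distributions from previous tasks, or uniform if the node is new. Both rules, the function names, and the entity/aspect labels in the example are assumptions for illustration, not the exact Lifelong-RL procedure.

```python
from typing import Dict, Iterable, List, Set, Tuple

Edge = Tuple[str, str]


def add_prior_edges(current_edges: Iterable[Edge], past_graphs: List[Set[Edge]],
                    nodes: Set[str]) -> Set[Edge]:
    """Add edges from previous tasks' graphs whose endpoints both exist now."""
    edges = set(current_edges)
    for graph in past_graphs:
        for u, v in graph:
            if u in nodes and v in nodes:
                edges.add((u, v))
    return edges


def prior_labels(nodes: Iterable[str], past_results: List[Dict[str, Dict[str, float]]],
                 labels: List[str]) -> Dict[str, Dict[str, float]]:
    """P^0 per node: mean of past label distributions, else uniform."""
    P0 = {}
    for n in nodes:
        seen = [r[n] for r in past_results if n in r]
        if seen:
            P0[n] = {y: sum(dist[y] for dist in seen) / len(seen) for y in labels}
        else:
            P0[n] = {y: 1.0 / len(labels) for y in labels}
    return P0


nodes = {"screen", "apple", "battery"}
edges = add_prior_edges({("screen", "battery")},
                        [{("apple", "screen"), ("apple", "iphone")}], nodes)
P0 = prior_labels(sorted(nodes),
                  [{"apple": {"entity": 0.8, "aspect": 0.2}},
                   {"apple": {"entity": 1.0, "aspect": 0.0}}],
                  ["entity", "aspect"])
```

The edge ("apple", "iphone") is skipped because "iphone" does not occur in the current graph.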

Lifelong-RL architecture

Conclusions
- Introduced LML & discussed some current work
  - Understanding of LML is very limited
  - Current research focuses on only one type of task
- IMHO: without accumulating the learned knowledge and using that knowledge to learn more,
  - Artificial General Intelligence (AGI) is unlikely.
- As statistical machine learning becomes increasingly mature,
  - we should go for a paradigm shift to LML.

LML Challenges (Chen and Liu 2016, book)
- There are many challenges for LML, e.g.,
  - Correctness of knowledge
  - Applicability of knowledge
  - Knowledge representation and reasoning
  - Learning with tasks of multiple types
  - Self-motivated learning
  - Compositional learning
  - Learning in interaction with humans & systems

Thank you Q&A
