Improving File System Reliability with I/O Shepherding
Haryadi S. Gunawi, Vijayan Prabhakaran+, Swetha Krishnan, Andrea C. Arpaci-Dusseau, Remzi H. Arpaci-Dusseau
University of Wisconsin - Madison

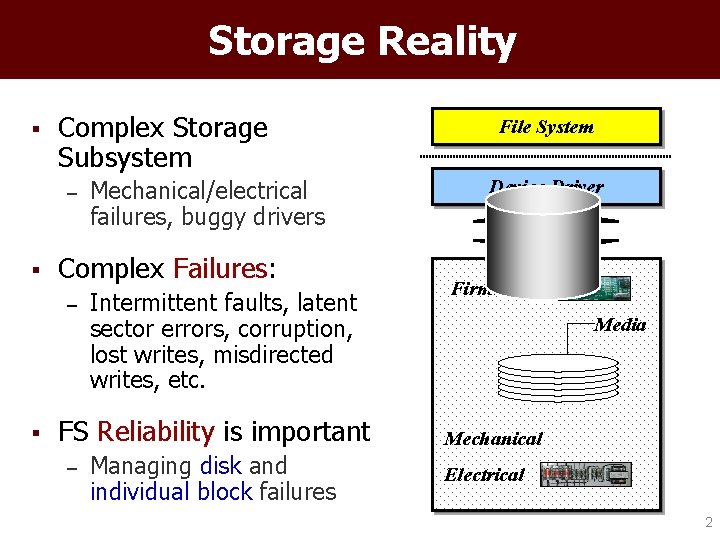

Storage Reality
§ Complex storage subsystem
  – Mechanical/electrical failures, buggy drivers
§ Complex failures
  – Intermittent faults, latent sector errors, corruption, lost writes, misdirected writes, etc.
§ FS reliability is important
  – Managing disk and individual block failures
[Figure: storage stack layers: File System, Device Driver, Transport, Firmware, Media, Mechanical, Electrical]

File System Reality
§ Good news:
  – Rich literature
    • Checksum, parity, mirroring
    • Versioning, physical/logical identity
  – Important for both single-disk and multiple-disk settings
§ Bad news:
  – File system reliability is broken [SOSP '05]
    • Unlike other components (performance, consistency)
    • Reliability approaches are hard to understand and evolve

Broken FS Reliability
§ Lack of good reliability strategy
  – No remapping, checksumming, redundancy
  – Existing strategy is coarse-grained
    • Mount read-only, panic, retry
§ Inconsistent policies
  – Different techniques in similar failure scenarios
§ Bugs
  – Ignored write failures
Let's fix them! With current framework? Not so easy …

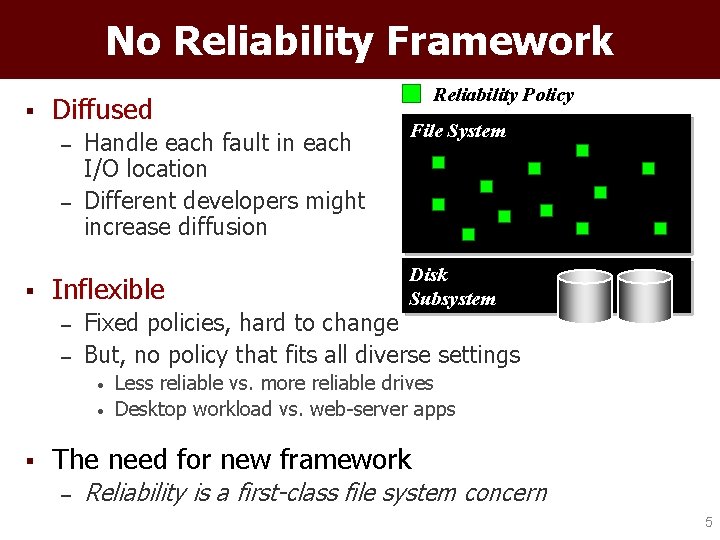

No Reliability Framework
§ Diffused
  – Handle each fault in each I/O location
  – Different developers might increase diffusion
§ Inflexible
  – Fixed policies, hard to change
  – But, no policy that fits all diverse settings
    • Less reliable vs. more reliable drives
    • Desktop workload vs. web-server apps
§ The need for new framework
  – Reliability is a first-class file system concern
[Figure: reliability policy code spread between the File System and the Disk Subsystem]

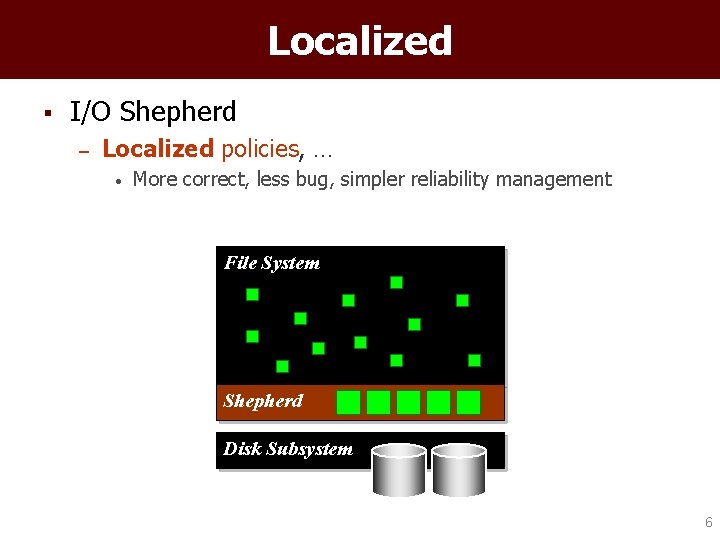

Localized
§ I/O Shepherd
  – Localized policies, …
    • More correct, fewer bugs, simpler reliability management
[Figure: File System, Shepherd, Disk Subsystem]

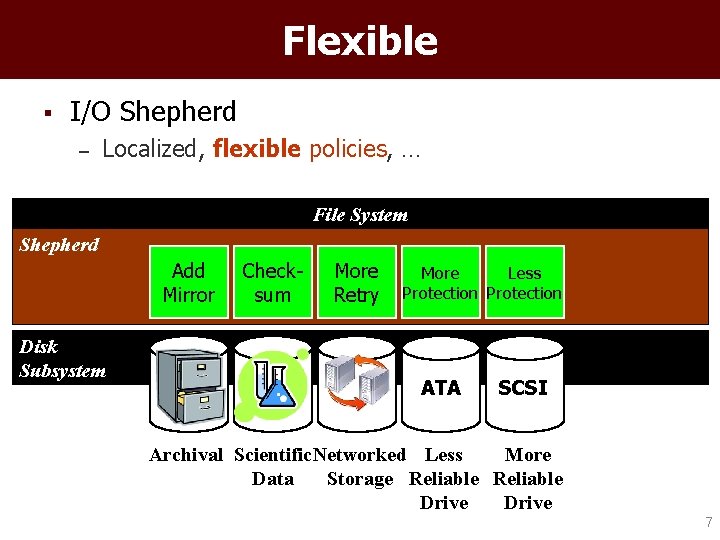

Flexible
§ I/O Shepherd
  – Localized, flexible policies, …
[Figure: File System, Shepherd, Disk Subsystem. Policies shown: Add Mirror, Checksum, More Retry, More Protection, Less Protection. Settings shown: ATA, SCSI, Archival, Scientific Data, Networked Storage, Less Reliable Drive, More Reliable Drive]

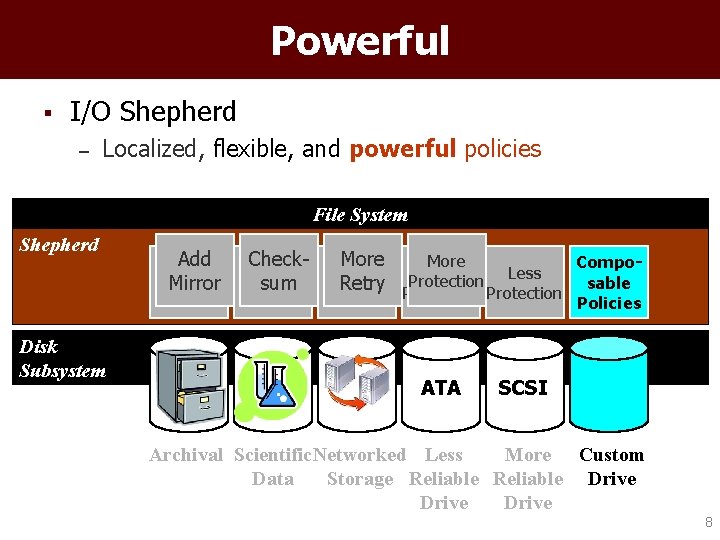

Powerful
§ I/O Shepherd
  – Localized, flexible, and powerful policies
[Figure: File System, Shepherd, Disk Subsystem. Policies shown: Add Mirror, Checksum, More Retry, More Protection, Less Protection, Composable Policies, Custom. Settings shown: ATA, SCSI, Archival, Scientific Data, Networked Storage, Less Reliable Drive, More Reliable Drive]

Outline
§ Introduction
§ I/O Shepherd Architecture
§ Implementation
§ Evaluation
§ Conclusion

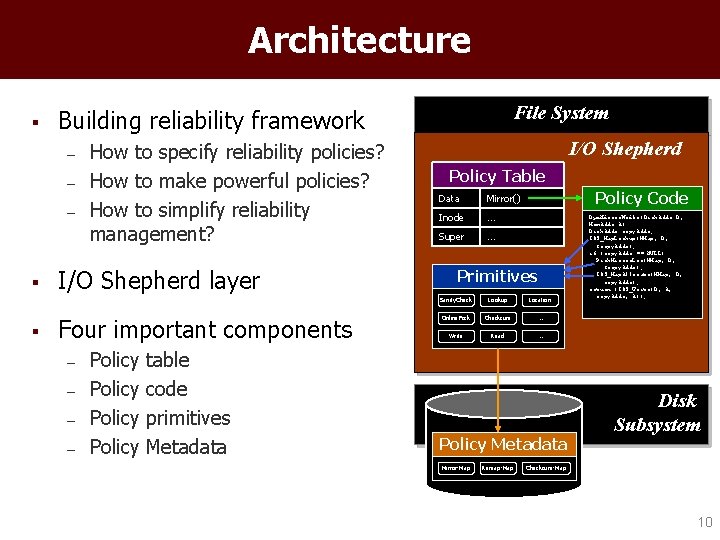

Architecture
§ Building reliability framework
  – How to specify reliability policies?
  – How to make powerful policies?
  – How to simplify reliability management?
§ I/O Shepherd layer
§ Four important components
  – Policy table, policy code, policy primitives, policy metadata
[Figure: the I/O Shepherd sits between the File System and the Disk Subsystem. Policy Table: Data → Mirror(), Inode → …, Super → …. Policy Primitives: SanityCheck, Lookup Location, OnlineFsck, Checksum, Write, Read, …. Policy Metadata: Mirror-Map, Remap-Map, Checksum-Map]
Policy Code:

    DynMirrorWrite(DiskAddr D, MemAddr A) {
        DiskAddr copyAddr;
        IOS_MapLookup(MMap, D, &copyAddr);
        if (copyAddr == NULL) {
            PickMirrorLoc(MMap, D, &copyAddr);
            IOS_MapAllocate(MMap, D, copyAddr);
        }
        return (IOS_Write(D, A, copyAddr, A));
    }

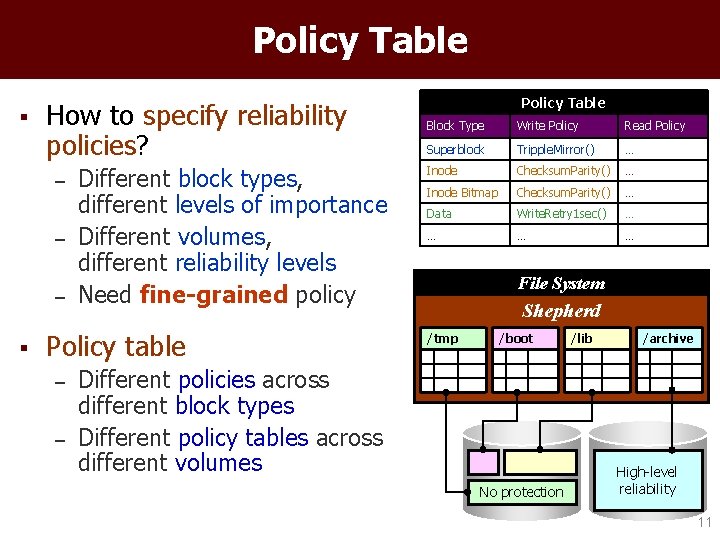

Policy Table
§ How to specify reliability policies?
  – Different block types, different levels of importance
  – Different volumes, different reliability levels
  – Need fine-grained policy
§ Policy table
  – Different policies across different block types
  – Different policy tables across different volumes

| Block Type | Write Policy | Read Policy |
|---|---|---|
| Superblock | TripleMirror() | … |
| Inode | ChecksumParity() | … |
| Inode Bitmap | ChecksumParity() | … |
| Data | WriteRetry1sec() | … |
| … | | |

[Figure: File System and Shepherd with volumes /tmp, /boot, /lib, /archive ranging from "No protection" to "High-level reliability"]
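
The policy table can be pictured as a lookup from block type to a policy routine, with one table per volume. The sketch below is only an illustration in Python, not CrookFS code: `PolicyTable`, `plain_write`, `triple_mirror_write`, the fixed mirror offsets, and the dictionary used as a disk are all assumptions made for the example.

```python
from typing import Callable, Dict

Disk = Dict[int, bytes]  # toy disk: block address -> contents
WritePolicy = Callable[[Disk, int, bytes], bool]


def plain_write(disk: Disk, addr: int, data: bytes) -> bool:
    """No protection: issue the write once."""
    disk[addr] = data
    return True


def triple_mirror_write(disk: Disk, addr: int, data: bytes) -> bool:
    """Write the block and two copies at fixed, hypothetical offsets."""
    for offset in (0, 1000, 2000):
        disk[addr + offset] = data
    return True


class PolicyTable:
    """Maps a block type to its write policy; one table per volume."""

    def __init__(self, write_policies: Dict[str, WritePolicy],
                 default: WritePolicy):
        self.write_policies = write_policies
        self.default = default

    def write(self, disk: Disk, block_type: str, addr: int,
              data: bytes) -> bool:
        # Fall back to the default policy for block types not listed.
        policy = self.write_policies.get(block_type, self.default)
        return policy(disk, addr, data)


# Different tables for different volumes (volume names taken from the slide).
tables = {
    "/tmp": PolicyTable({}, plain_write),
    "/archive": PolicyTable({"superblock": triple_mirror_write}, plain_write),
}
```

Writing a superblock through `tables["/archive"]` stores three copies, while the same write through `tables["/tmp"]` stores one. The file system code issuing the write does not change between the two cases.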

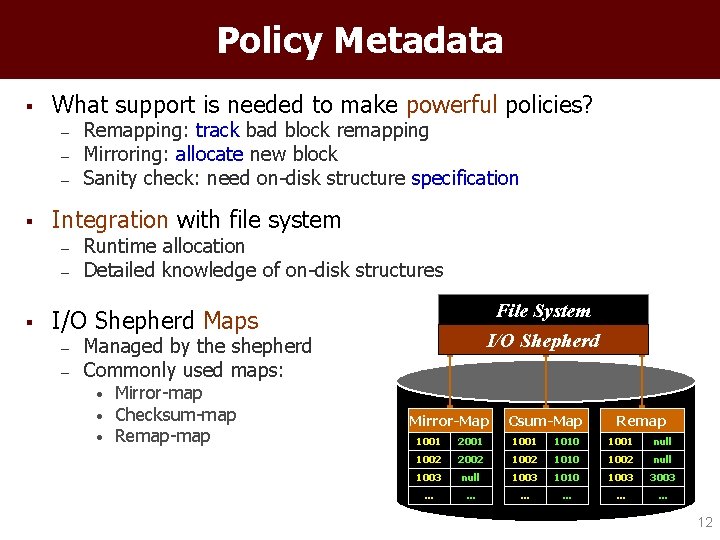

Policy Metadata
§ What support is needed to make powerful policies?
  – Remapping: track bad block remapping
  – Mirroring: allocate new block
  – Sanity check: need on-disk structure specification
§ Integration with file system
  – Runtime allocation
  – Detailed knowledge of on-disk structures
§ I/O Shepherd Maps
  – Managed by the shepherd
  – Commonly used maps:
    • Mirror-map
    • Checksum-map
    • Remap-map
[Figure: File System and I/O Shepherd with example Mirror-Map, Csum-Map, and Remap-Map entries for blocks 1001, 1002, 1003, … (e.g. mirror 1001→2001 and 1002→2002, remap 1003→3003)]

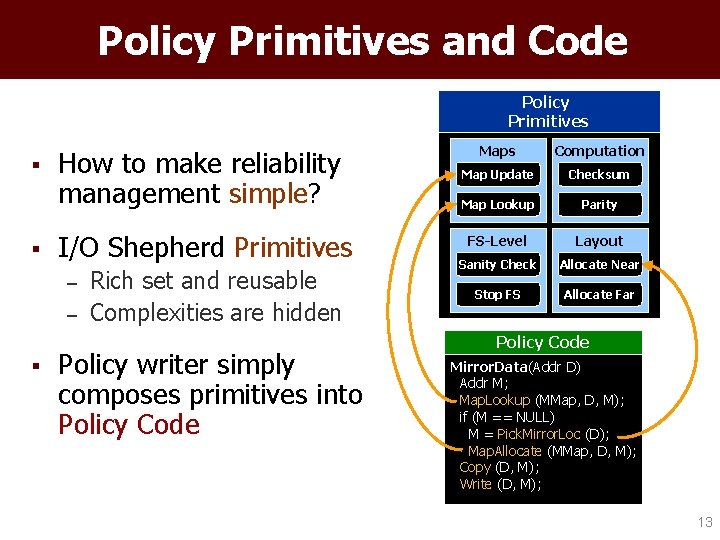

Policy Primitives and Code
§ How to make reliability management simple?
§ I/O Shepherd Primitives
  – Rich set and reusable
  – Complexities are hidden
§ Policy writer simply composes primitives into Policy Code

| Policy Primitives | |
|---|---|
| Maps: Map Update, Map Lookup | Computation: Checksum, Parity |
| FS-Level: Sanity Check, Stop FS | Layout: Allocate Near, Allocate Far |

Policy Code:

    MirrorData(Addr D)
        Addr M;
        MapLookup(MMap, D, M);
        if (M == NULL)
            M = PickMirrorLoc(D);
            MapAllocate(MMap, D, M);
        Copy(D, M);
        Write(D, M);

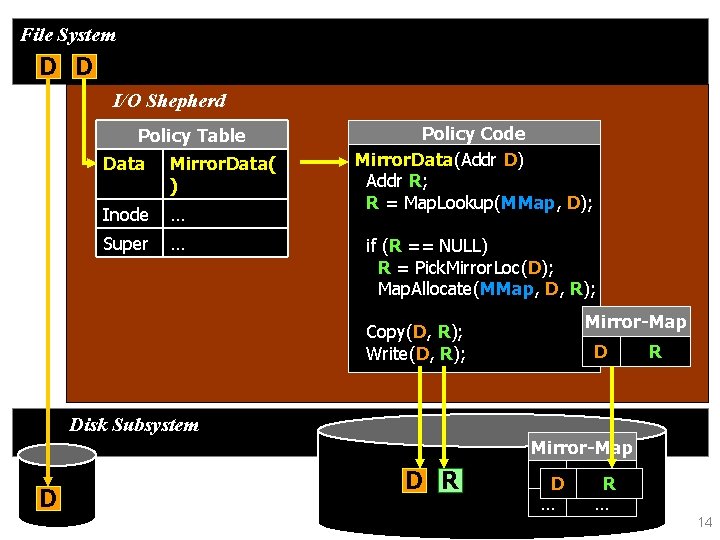

[Figure: walkthrough of MirrorData. The File System writes block D; the shepherd's Policy Table (Data → MirrorData(), Inode → …, Super → …) selects the policy; the Mirror-Map entry for D changes from NULL to R; the Disk Subsystem receives both D and R]
Policy Code:

    MirrorData(Addr D)
        Addr R;
        R = MapLookup(MMap, D);
        if (R == NULL)
            R = PickMirrorLoc(D);
            MapAllocate(MMap, D, R);
        Copy(D, R);
        Write(D, R);
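
As a rough runnable counterpart to the MirrorData pseudocode, the following Python sketch keeps the mirror-map and the disk in dictionaries. The class name `ToyShepherd` and the fixed-offset `pick_mirror_loc` are assumptions for illustration; the real primitives allocate blocks through the file system.

```python
class ToyShepherd:
    """In-memory stand-in for the shepherd's mirror-map and the disk."""

    def __init__(self, mirror_base=1000):
        self.disk = {}          # block address -> data
        self.mirror_map = {}    # primary address -> mirror address
        self.mirror_base = mirror_base

    def pick_mirror_loc(self, d):
        # Hypothetical placement rule: mirror lives at a fixed offset.
        return d + self.mirror_base

    def mirror_data(self, d, data):
        """Write `data` to block d and to its mirror, allocating the
        mirror location on first use (mirrors the slide's MirrorData)."""
        r = self.mirror_map.get(d)          # MapLookup
        if r is None:
            r = self.pick_mirror_loc(d)     # PickMirrorLoc
            self.mirror_map[d] = r          # MapAllocate
        self.disk[d] = data                 # Write(D, R): primary ...
        self.disk[r] = data                 # ... and mirror copy
        return r
```

Calling `mirror_data` twice on the same block reuses the mirror location recorded by the first call, so repeating the policy does not allocate a second mirror.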

Summary
§ Interposition simplifies reliability management
  – Localized policies
  – Simple and extensible policies
§ Challenge: Keeping new data and metadata consistent

Outline
§ Introduction
§ I/O Shepherd Architecture
§ Implementation
  – Consistency Management
§ Evaluation
§ Conclusion

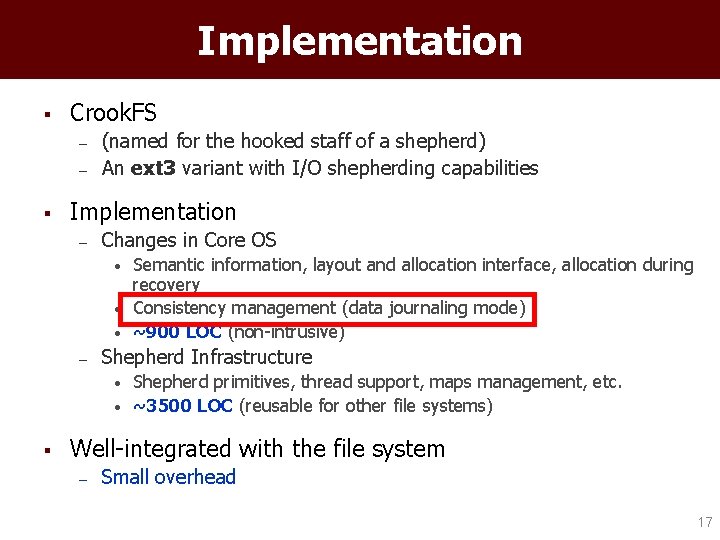

Implementation
§ CrookFS
  – (named for the hooked staff of a shepherd)
  – An ext3 variant with I/O shepherding capabilities
§ Implementation
  – Changes in Core OS
    • Semantic information, layout and allocation interface, allocation during recovery
    • Consistency management (data journaling mode)
    • ~900 LOC (non-intrusive)
  – Shepherd Infrastructure
    • Shepherd primitives, thread support, maps management, etc.
    • ~3500 LOC (reusable for other file systems)
§ Well-integrated with the file system
  – Small overhead

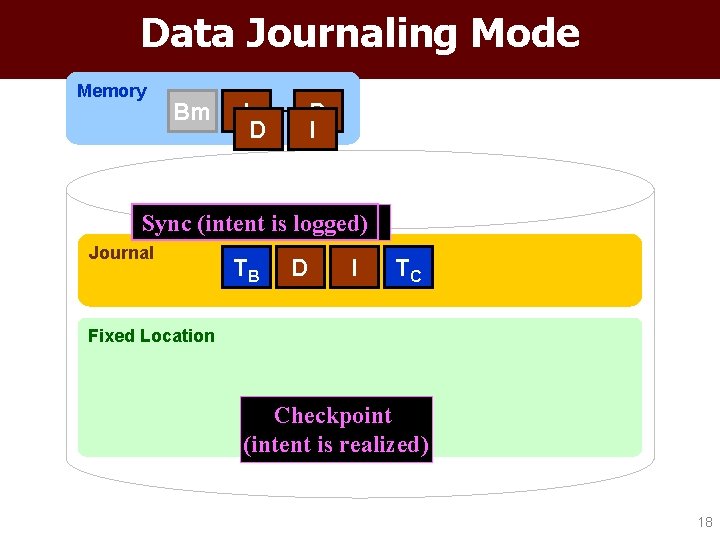

Data Journaling Mode
[Figure: data journaling timeline across Memory (Bm, I, D), Journal (TB, D, I, TC), and Fixed Location. Sync: intent is logged. Checkpoint: intent is realized. Tx Release follows the checkpoint]

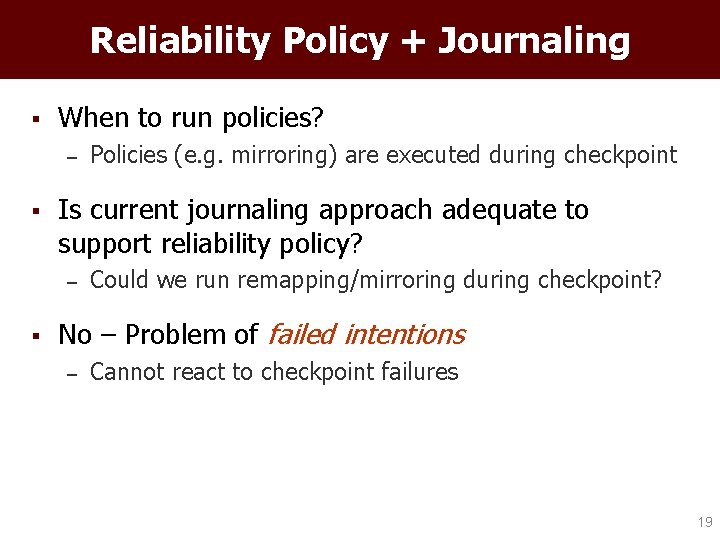

Reliability Policy + Journaling
§ When to run policies?
  – Policies (e.g. mirroring) are executed during checkpoint
§ Is current journaling approach adequate to support reliability policy?
  – Could we run remapping/mirroring during checkpoint?
§ No
  – Problem of failed intentions
  – Cannot react to checkpoint failures

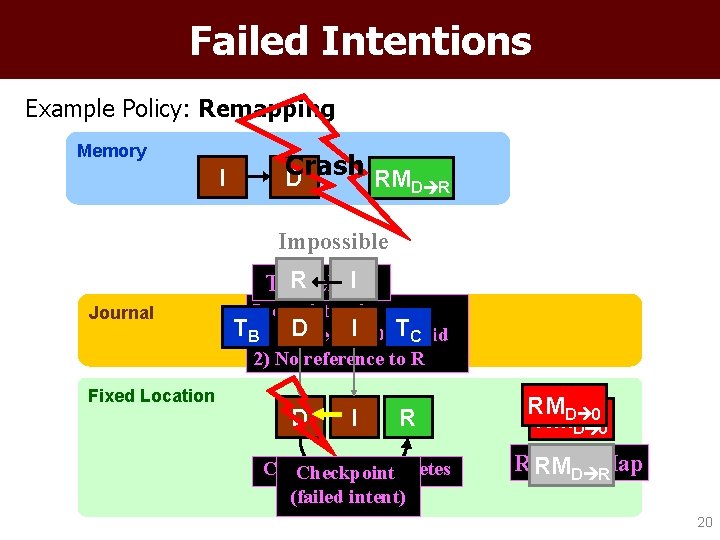

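Failed Intentions
Example Policy: Remapping
[Figure: Memory (I, D, remap-map entry D→R), Journal (TB, D, I, TC), Fixed Location (D, I, R), and the on-disk Remap-Map (entry for D still 0). The checkpoint write of D fails (failed intent) and D is remapped to R; the checkpoint completes, the transaction is released, and a crash follows, so replaying the intent is impossible]
Inconsistencies:
1) Pointer invalid
2) No reference to R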

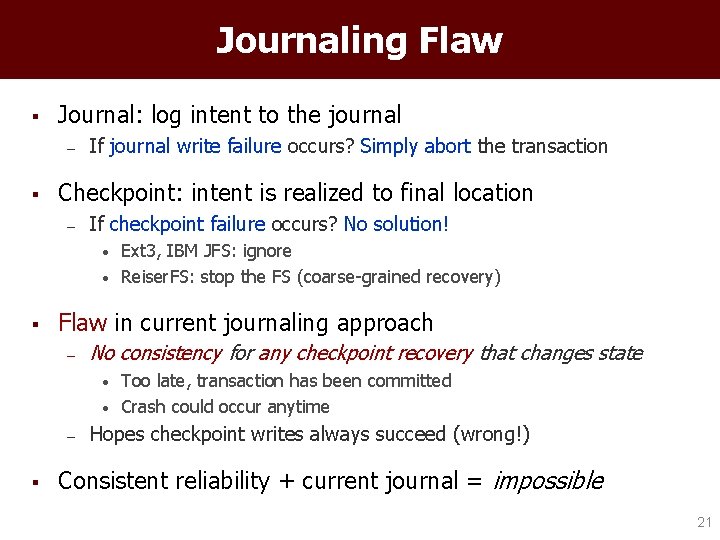

Journaling Flaw
§ Journal: log intent to the journal
  – If journal write failure occurs? Simply abort the transaction
§ Checkpoint: intent is realized to final location
  – If checkpoint failure occurs? No solution!
    • Ext3, IBM JFS: ignore
    • ReiserFS: stop the FS (coarse-grained recovery)
§ Flaw in current journaling approach
  – No consistency for any checkpoint recovery that changes state
    • Too late, transaction has been committed
    • Crash could occur anytime
  – Hopes checkpoint writes always succeed (wrong!)
§ Consistent reliability + current journal = impossible
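
The failed-intention problem can be reproduced in a few lines. The Python sketch below is a toy model, not the ext3 checkpoint path: block addresses are strings, a single spare block `R` is assumed, and the function names are invented for the example. It shows that when a checkpoint write is remapped and the remap entry exists only in memory, a crash leaves the data unreachable.

```python
def checkpoint_with_remap(tx_blocks, bad_addrs, spare_addr):
    """Checkpoint a committed transaction, remapping failed writes.

    Returns (on_disk, remap_in_memory). The remap entry is created during
    checkpoint, so it was never part of the committed transaction.
    Toy limitation: one spare block, so at most one bad address is handled.
    """
    on_disk = {}
    remap_in_memory = {}
    for addr, data in tx_blocks.items():
        if addr in bad_addrs:
            remap_in_memory[addr] = spare_addr   # failed intent: D goes to R
            on_disk[spare_addr] = data
        else:
            on_disk[addr] = data
    return on_disk, remap_in_memory


def read_after_recovery(on_disk, remap_on_disk, addr):
    """Read a block using only state that survived the crash."""
    return on_disk.get(remap_on_disk.get(addr, addr))


tx = {"D": b"data", "I": b"inode"}
on_disk, remap = checkpoint_with_remap(tx, bad_addrs={"D"}, spare_addr="R")

# Crash after the transaction is released: the in-memory remap is lost.
lost = read_after_recovery(on_disk, {}, "D")
# If the remap entry had reached disk, D would still be readable.
kept = read_after_recovery(on_disk, remap, "D")
```

Here `lost` is `None` while `kept` is the original data, which matches the two inconsistencies on the previous slide: the pointer to D is invalid and nothing references R.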

Chained Transactions
§ Contains all recent changes (e.g. modified shepherd's metadata)
§ "Chained" with previous transaction
§ Rule: Only after the chained transaction commits, can we release the previous transaction
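
The release rule above can be sketched as a small state machine. This is a minimal Python model under assumed names (`Tx`, `Journal`, `chain`, `release`), not the CrookFS journaling code. It only captures the ordering constraint: the previous transaction stays in the journal until its chained transaction has committed.

```python
class Tx:
    """A journal transaction: a named set of block updates."""

    def __init__(self, name, updates):
        self.name = name
        self.updates = dict(updates)
        self.committed = False
        self.chained = None   # transaction chained to this one, if any


class Journal:
    def __init__(self):
        self.held = []        # committed transactions not yet released

    def commit(self, tx):
        tx.committed = True
        self.held.append(tx)

    def chain(self, prev, updates):
        """Create a chained transaction carrying changes made while
        checkpointing `prev` (e.g. a new remap-map entry)."""
        ctx = Tx("C" + prev.name, updates)
        prev.chained = ctx
        return ctx

    def release(self, tx):
        """Release tx only after its chained transaction has committed."""
        if tx.chained is not None and not tx.chained.committed:
            return False
        self.held.remove(tx)
        return True

    def replayable(self):
        """Names of transactions that recovery could still replay."""
        return [tx.name for tx in self.held]
```

In this model, a crash before the chained transaction commits still finds the original transaction in the journal, so the checkpoint and its policy can be replayed. A crash afterwards finds the chained transaction, which carries the shepherd's metadata changes.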

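Chained Transactions
Example Policy: Remapping
[Figure: Memory (I, D, remap-map entry D→R), Journal (TB, D, I, TC, followed by a chained transaction TB … TC holding the remap-map update), Fixed Location (D, I, R). Old: Tx release after checkpoint completes. New: Tx release after CTx commits]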

Summary
§ Chained Transactions
  – Handles failed-intentions
  – Works for all policies
  – Minimal changes in the journaling layer
§ Repeatable across crashes
  – Idempotent policy
    • An important property for consistency in multiple crashes

Outline
§ Introduction
§ I/O Shepherd Architecture
§ Implementation
§ Evaluation
§ Conclusion

Evaluation
§ Flexible
  – Change ext3 to all-stop or more-retry policies
§ Fine-Grained
  – Implement gracefully-degrade RAID [TOS '05]
§ Composable
  – Perform multiple lines of defense
§ Simple
  – Craft 8 policies in a simple manner

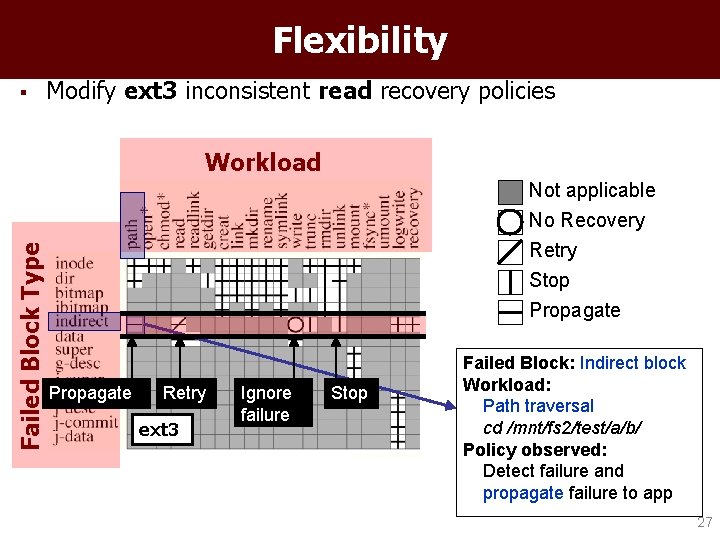

Flexibility
§ Modify ext3 inconsistent read recovery policies
[Figure: ext3 read recovery policy grid, Failed Block Type vs. Workload. Legend: Not applicable, No Recovery, Retry, Stop, Propagate. Callouts: Retry, Ignore failure, Stop]
Example cell:
  – Failed Block: Indirect block
  – Workload: Path traversal (cd /mnt/fs2/test/a/b/)
  – Policy observed: Detect failure and propagate failure to app

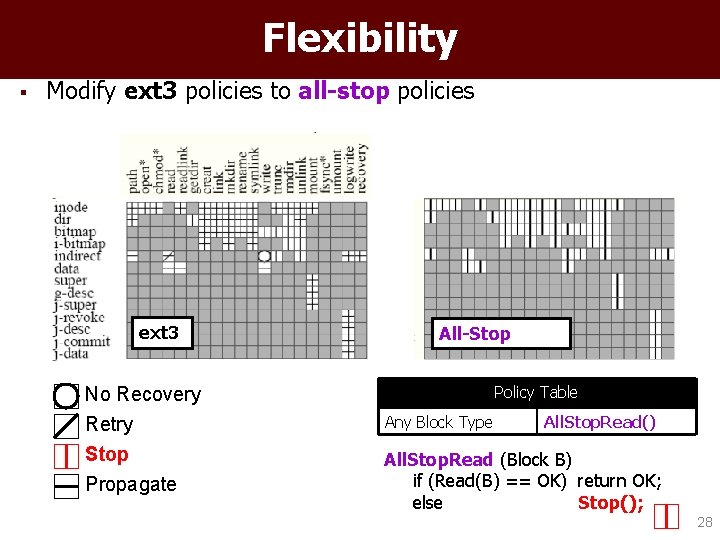

Flexibility
§ Modify ext3 policies to all-stop policies
[Figure: recovery policy grids for ext3 and All-Stop. Legend: No Recovery, Retry, Stop, Propagate]
Policy Table: Any Block Type → AllStopRead()

    AllStopRead(Block B)
        if (Read(B) == OK)
            return OK;
        else
            Stop();

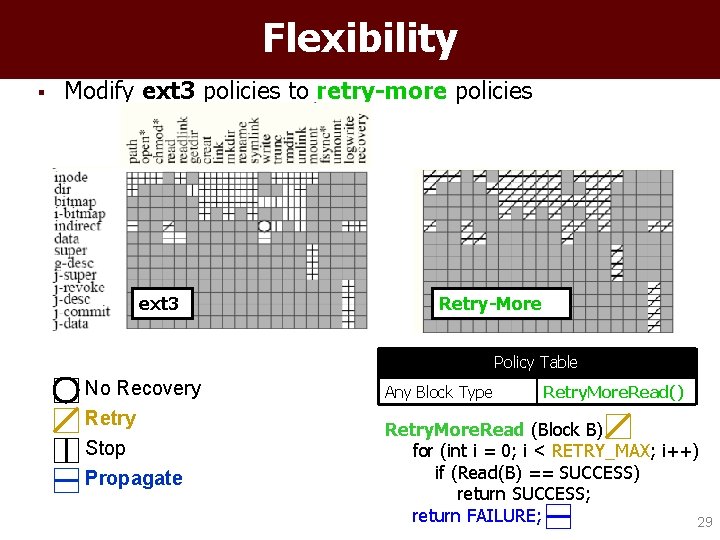

Flexibility
§ Modify ext3 policies to retry-more policies
[Figure: recovery policy grids for ext3 and Retry-More. Legend: No Recovery, Retry, Stop, Propagate]
Policy Table: Any Block Type → RetryMoreRead()

    RetryMoreRead(Block B)
        for (int i = 0; i < RETRY_MAX; i++)
            if (Read(B) == SUCCESS)
                return SUCCESS;
        return FAILURE;
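
To see the retry-more policy run, the pseudocode can be paired with a simulated disk that fails a set number of reads before succeeding. The sketch is in Python; `FlakyDisk` and the value of `RETRY_MAX` are assumptions for the example, since the slide does not give the limit.

```python
RETRY_MAX = 3  # hypothetical limit; the slide leaves the value unspecified


class FlakyDisk:
    """Simulated disk whose reads fail a fixed number of times first."""

    def __init__(self, failures_before_success):
        self.remaining_failures = failures_before_success
        self.attempts = 0

    def read(self, block):
        self.attempts += 1
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            return False   # transient read failure
        return True


def retry_more_read(disk, block, retry_max=RETRY_MAX):
    """Retry the read up to retry_max times; report failure only after
    every attempt has failed."""
    for _ in range(retry_max):
        if disk.read(block):
            return True
    return False
```

A disk that fails twice is recovered on the third attempt. A disk that fails five times exhausts the three attempts and the failure is returned to the caller.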

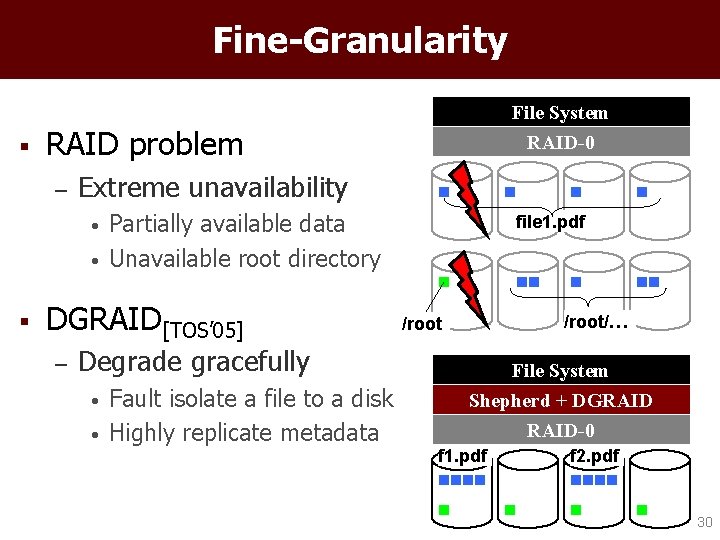

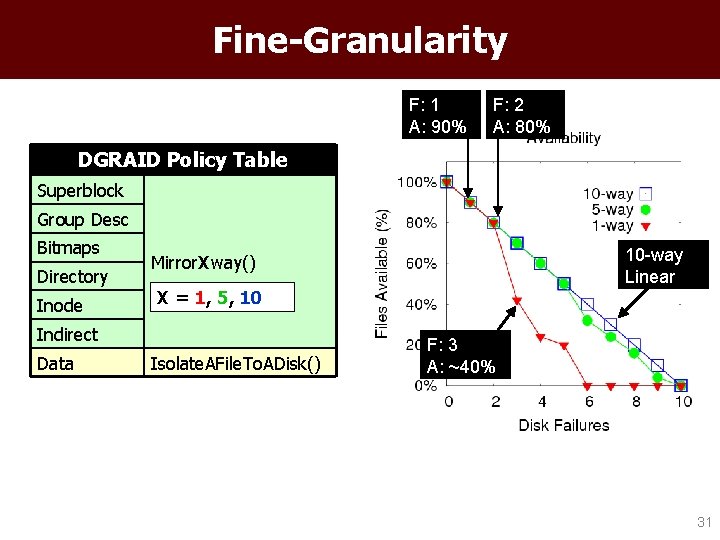

Fine-Granularity
§ RAID problem
  – Extreme unavailability
    • Partially available data
    • Unavailable root directory
§ DGRAID [TOS '05]
  – Degrade gracefully
    • Fault isolate a file to a disk
    • Highly replicate metadata
[Figure: File System on RAID-0 (file1.pdf, /root/…, /root) compared with File System + Shepherd + DGRAID-0 (f1.pdf, f2.pdf)]
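
The benefit of isolating a file to a disk can be shown with a toy placement model. The Python sketch below is not the DGRAID implementation: the two layout functions and the round-robin assignment are assumptions chosen only to contrast striping with fault isolation, and metadata replication is left out.

```python
def striped_layout(files, n_disks):
    """RAID-0 style: block i of every file goes to disk i % n_disks."""
    return {name: [i % n_disks for i in range(nblocks)]
            for name, nblocks in files.items()}


def isolated_layout(files, n_disks):
    """Fault isolation: all blocks of a file live on a single disk
    (files are assigned to disks round-robin, a hypothetical choice)."""
    return {name: [idx % n_disks] * nblocks
            for idx, (name, nblocks) in enumerate(files.items())}


def available_files(layout, failed_disks):
    """A file is available only if none of its blocks sit on a failed disk."""
    failed = set(failed_disks)
    return sorted(name for name, disks in layout.items()
                  if not set(disks) & failed)


files = {"f1.pdf": 4, "f2.pdf": 4}   # file name -> number of blocks
after_stripe = available_files(striped_layout(files, 2), {0})
after_isolate = available_files(isolated_layout(files, 2), {0})
```

With two disks and disk 0 failed, the striped layout loses both files because each has blocks on the failed disk. The isolated layout still serves `f2.pdf`.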

Fine-Granularity
[Figure: plot annotations F: 1, A: 90%; F: 2, A: 80%; F: 3, A: ~40%; series labels X = 1, 5, 10, 10-way, Linear]
DGRAID Policy Table
  – Block types: Superblock, Group Desc, Bitmaps, Directory, Inode, Indirect, Data
  – Policies: MirrorXway() with X = 1, 5, 10; IsolateAFileToADisk()

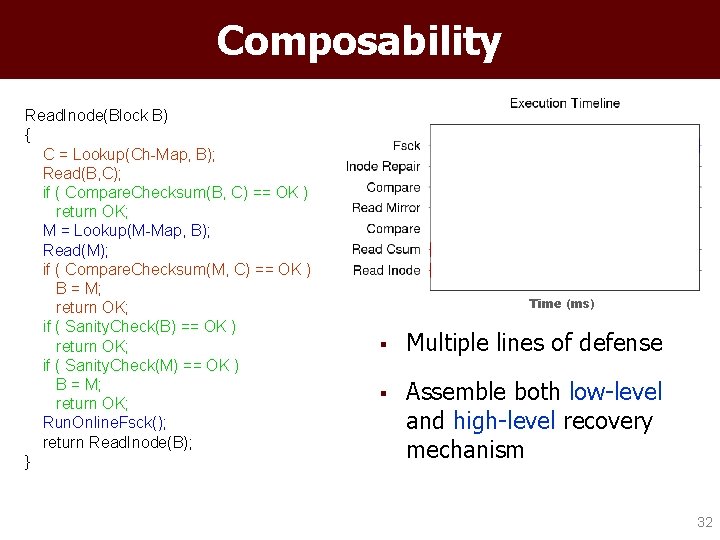

Composability
§ Multiple lines of defense
§ Assemble both low-level and high-level recovery mechanisms

    ReadInode(Block B) {
        C = Lookup(Ch-Map, B);
        Read(B, C);
        if (CompareChecksum(B, C) == OK)
            return OK;
        M = Lookup(M-Map, B);
        Read(M);
        if (CompareChecksum(M, C) == OK) {
            B = M;
            return OK;
        }
        if (SanityCheck(B) == OK)
            return OK;
        if (SanityCheck(M) == OK) {
            B = M;
            return OK;
        }
        RunOnlineFsck();
        return ReadInode(B);
    }

[Figure: plot with axis Time (ms)]
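
The layered read path can be tried out with a small Python sketch. It is a simplification under stated assumptions: CRC32 from `zlib` stands in for the checksum primitive, `sanity_check` is a made-up structural test (an inode block must start with the bytes `INODE`), and the final online-fsck step is replaced by returning `None`.

```python
import zlib


def checksum(data):
    """Stand-in checksum primitive (CRC32)."""
    return zlib.crc32(data)


def sanity_check(data):
    """Hypothetical structural check on an inode block."""
    return data.startswith(b"INODE")


def read_inode(disk, block, csum_map, mirror_map):
    """Return a trustworthy copy of `block`, trying each line of defense
    in the slide's order: checksum(primary), checksum(mirror),
    sanity(primary), sanity(mirror)."""
    primary = disk.get(block)
    mirror = disk.get(mirror_map.get(block))
    expected = csum_map.get(block)

    for copy in (primary, mirror):
        if copy is not None and expected is not None \
                and checksum(copy) == expected:
            return copy
    for copy in (primary, mirror):
        if copy is not None and sanity_check(copy):
            return copy
    # The slide runs an online fsck and retries here; the sketch gives up.
    return None
```

A corrupted primary is repaired from the mirror when the mirror matches the stored checksum. If the stored checksum itself is stale, the sanity check still accepts a structurally valid copy.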

Simplicity
§ Writing reliability policy is simple
  – Implement 8 policies
    • Using reusable primitives
  – Complex one < 80 LOC

| Policy | LOC |
|---|---|
| Propagate | 8 |
| Sanity Check | 10 |
| Reboot | 15 |
| Retry | 15 |
| Mirroring | 18 |
| Parity | 28 |
| Multiple Lines of Defense | 39 |
| D-GRAID | 79 |

Conclusion
§ Modern storage failures are complex
  – Not only fail-stop, but also exhibit individual block failures
§ FS reliability framework does not exist
  – Scattered policy code: can't expect much reliability
  – Journaling + Block Failures → Failed intentions (Flaw)
§ I/O Shepherding
  – Powerful
    • Deploy disk-level, RAID-level, FS-level policies
  – Flexible
    • Reliability as a function of workload and environment
  – Consistent
    • Chained-transactions

ADvanced Systems Laboratory
www.cs.wisc.edu/adsl
Thanks to: I/O Shepherd's shepherd, Frans Kaashoek
Scholarship Sponsor:
Research Sponsor:

Extra Slides

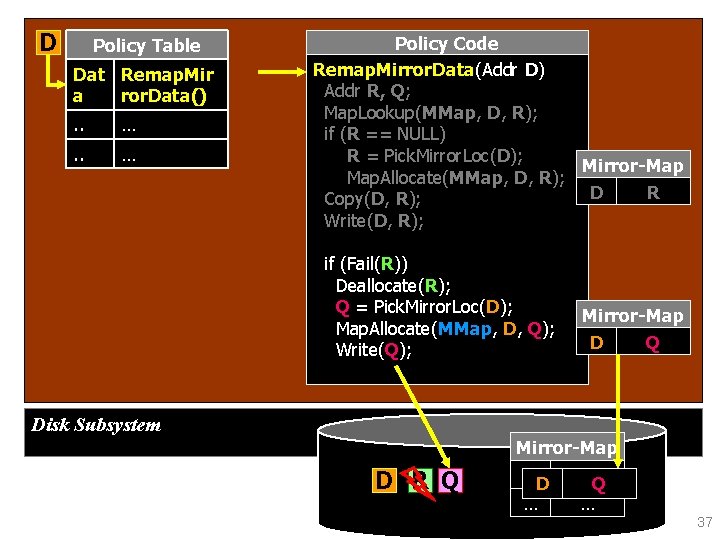

[Figure: walkthrough of RemapMirrorData. Policy Table: Data → RemapMirrorData(), …. The Mirror-Map entry for D goes from NULL to R, then to Q after the write to R fails; the Disk Subsystem ends up holding D and Q]
Policy Code:

    RemapMirrorData(Addr D)
        Addr R, Q;
        MapLookup(MMap, D, R);
        if (R == NULL)
            R = PickMirrorLoc(D);
            MapAllocate(MMap, D, R);
        Copy(D, R);
        Write(D, R);
        if (Fail(R))
            Deallocate(R);
            Q = PickMirrorLoc(D);
            MapAllocate(MMap, D, Q);
            Write(Q);
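
A runnable approximation of RemapMirrorData is sketched below in Python. It rests on several assumptions: `ToyDisk` simulates write failures through a set of bad block addresses, mirror locations come from an explicit free list rather than from a `PickMirrorLoc` primitive, and failures of the primary write or of the second mirror write are not handled.

```python
class ToyDisk:
    """Disk whose writes fail for addresses listed in bad_blocks."""

    def __init__(self, bad_blocks=()):
        self.blocks = {}
        self.bad = set(bad_blocks)

    def write(self, addr, data):
        if addr in self.bad:
            return False
        self.blocks[addr] = data
        return True


def remap_mirror_data(disk, mirror_map, free_list, d, data):
    """Mirror block d; if the mirror write fails, drop that location and
    remap the mirror to a fresh one. Returns the final mirror address."""
    r = mirror_map.get(d)                 # MapLookup
    if r is None:
        r = free_list.pop(0)              # stand-in for PickMirrorLoc
        mirror_map[d] = r                 # MapAllocate
    disk.write(d, data)                   # primary copy (result ignored here)
    if not disk.write(r, data):           # Fail(R)
        q = free_list.pop(0)              # pick a new mirror location
        mirror_map[d] = q                 # replaces the entry for R
        disk.write(q, data)
    return mirror_map[d]
```

With block 2001 marked bad and a free list of `[2001, 2002]`, mirroring block 1001 ends with the mirror-map pointing at 2002 and no data stored at 2001.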

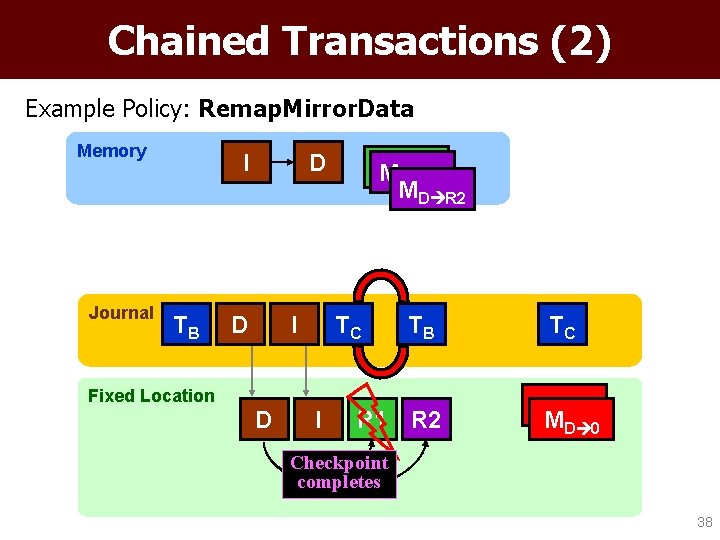

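Chained Transactions (2)
Example Policy: RemapMirrorData
[Figure: Memory, Journal (transactions delimited by TB and TC), and Fixed Location (D, I, R1, R2), with the mirror-map entry for D moving from R1 to R2 and the on-disk entry initially 0; ends with "Checkpoint completes"]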

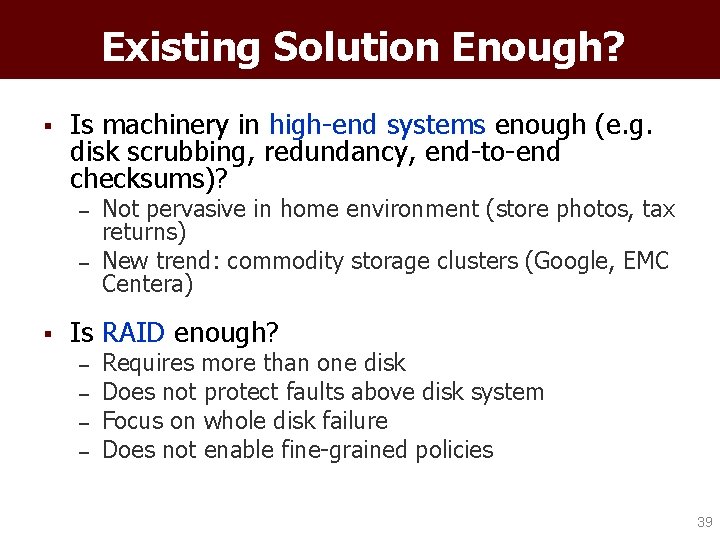

Existing Solution Enough?
§ Is machinery in high-end systems enough (e.g. disk scrubbing, redundancy, end-to-end checksums)?
  – Not pervasive in home environment (store photos, tax returns)
  – New trend: commodity storage clusters (Google, EMC Centera)
§ Is RAID enough?
  – Requires more than one disk
  – Does not protect against faults above the disk system
  – Focuses on whole-disk failure
  – Does not enable fine-grained policies
