
Classroom Assessment Reliability

Classroom Assessment Reliability
• Reliability = Assessment Consistency.
  – Consistency within teachers across students.
  – Consistency within teachers over multiple occasions for students.
  – Consistency across teachers for the same students.
  – Consistency across teachers across students.

Three Types of Reliability
• Stability reliability.
• Alternate form reliability.
• Internal consistency reliability.

Stability Reliability
• Concerned with the question: Are assessment results consistent over time (over occasions)?
• Think of some examples where stability reliability might be important.
• Why might test results NOT be consistent over time?

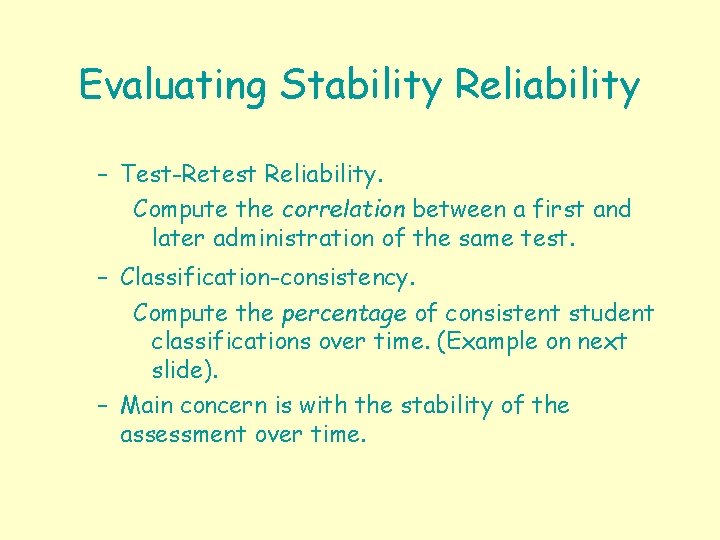

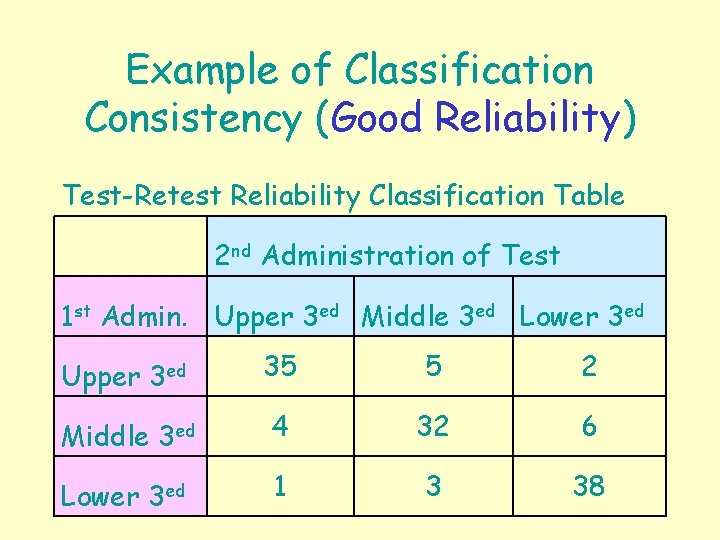

Evaluating Stability Reliability
– Test-Retest Reliability. Compute the correlation between a first and later administration of the same test.
– Classification-consistency. Compute the percentage of consistent student classifications over time. (Example on next slide).
– Main concern is with the stability of the assessment over time.
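
The test-retest computation can be sketched as a Pearson correlation between two administrations. This is a minimal illustration only: the scores below are invented, and `pearson_r` is a hypothetical helper name, not something from the slides.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squares around the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)

# Hypothetical scores for six students (invented for illustration).
first_admin = [78, 85, 62, 90, 71, 55]
second_admin = [80, 83, 65, 92, 68, 58]

test_retest_r = pearson_r(first_admin, second_admin)
print(round(test_retest_r, 2))
```

A value near 1 suggests students keep roughly the same relative standing across occasions; a value near 0 suggests the results are not stable.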


Example of Classification Consistency (Good Reliability)
Test-Retest Reliability Classification Table (rows: 1st administration; columns: 2nd administration of test)

| 1st Admin. | Upper 3rd | Middle 3rd | Lower 3rd |
|---|---|---|---|
| Upper 3rd | 35 | 5 | 2 |
| Middle 3rd | 4 | 32 | 6 |
| Lower 3rd | 1 | 3 | 38 |

Example of Classification Consistency (Poor Reliability)
Test-Retest Reliability Classification Table (rows: 1st administration; columns: 2nd administration of test)

| 1st Admin. | Upper 3rd | Middle 3rd | Lower 3rd |
|---|---|---|---|
| Upper 3rd | 13 | 15 | 4 |
| Middle 3rd | 10 | 24 | 8 |
| Lower 3rd | 11 | 10 | 18 |
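
The percentage of consistent classifications in the two tables above can be worked out by summing the diagonal (students placed in the same third both times) and dividing by the total. The sketch below uses the counts from the slides; the function name `classification_consistency` is an assumption made for illustration.

```python
def classification_consistency(table):
    """Fraction of students classified the same way on both occasions.

    `table` is a square list of rows: rows are first-administration
    categories, columns are second-administration categories.
    """
    total = sum(sum(row) for row in table)
    consistent = sum(table[i][i] for i in range(len(table)))
    return consistent / total

# Counts taken from the two example slides.
good = [[35, 5, 2], [4, 32, 6], [1, 3, 38]]
poor = [[13, 15, 4], [10, 24, 8], [11, 10, 18]]

print(f"{classification_consistency(good):.1%}")  # 83.3% (105 of 126)
print(f"{classification_consistency(poor):.1%}")  # 48.7% (55 of 113)
```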

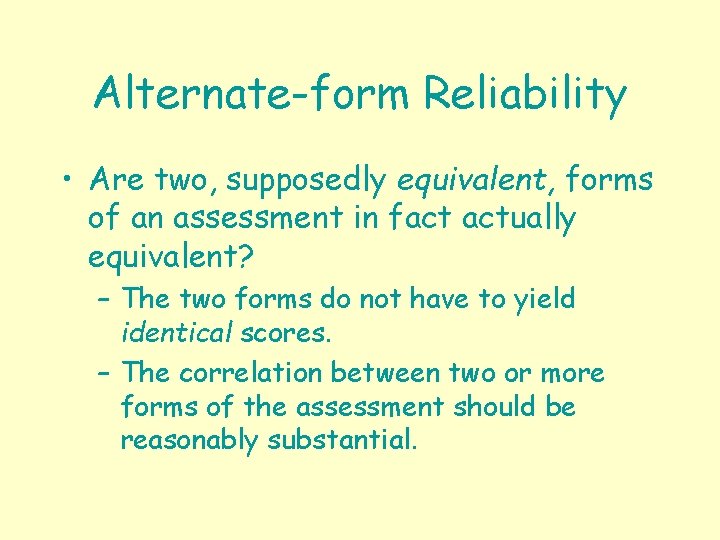

Alternate-form Reliability
• Are two supposedly equivalent forms of an assessment actually equivalent?
  – The two forms do not have to yield identical scores.
  – The correlation between two or more forms of the assessment should be reasonably substantial.

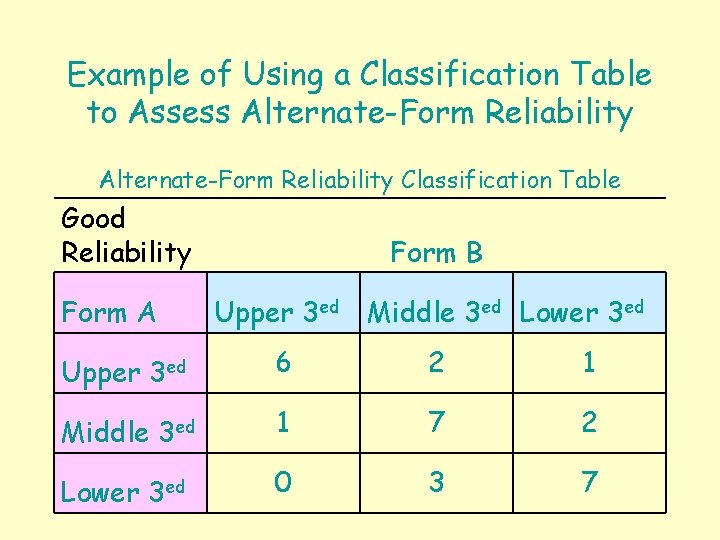

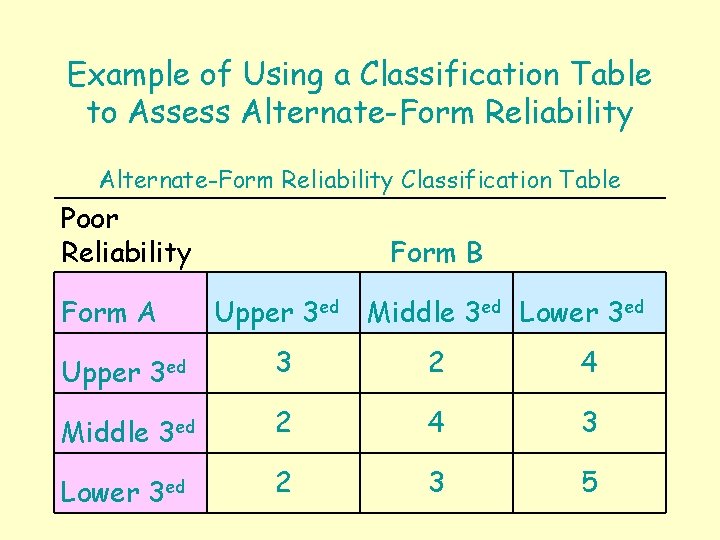

Evaluating Alternate-form Reliability
• Administer two forms of the assessment to the same individuals and correlate the results.
• Determine the extent to which the same students are classified the same way by the two forms.
• Alternate-form reliability is established by evidence, not by proclamation.

Example of Using a Classification Table to Assess Alternate-Form Reliability
Classification Table: Good Reliability (Form A vs. Form B)

| | Upper 3rd | Middle 3rd | Lower 3rd |
|---|---|---|---|
| Upper 3rd | 6 | 2 | 1 |
| Middle 3rd | 1 | 7 | 2 |
| Lower 3rd | 0 | 3 | 7 |

Example of Using a Classification Table to Assess Alternate-Form Reliability
Classification Table: Poor Reliability (Form A vs. Form B)

| | Upper 3rd | Middle 3rd | Lower 3rd |
|---|---|---|---|
| Upper 3rd | 3 | 2 | 4 |
| Middle 3rd | 2 | 4 | 3 |
| Lower 3rd | 2 | 3 | 5 |
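
A classification table like the ones above is built by cross-tabulating each student's category on the two forms. The sketch below shows one way to do that. The student labels are invented, and `cross_tabulate` and `CATEGORIES` are hypothetical names used only for this illustration.

```python
from collections import Counter

CATEGORIES = ["upper", "middle", "lower"]

def cross_tabulate(form_a, form_b):
    """Count students for every (Form A category, Form B category) pair."""
    counts = Counter(zip(form_a, form_b))
    return [[counts[(a, b)] for b in CATEGORIES] for a in CATEGORIES]

# Hypothetical classifications for eight students (invented data).
form_a = ["upper", "upper", "middle", "middle", "lower", "lower", "middle", "upper"]
form_b = ["upper", "middle", "middle", "middle", "lower", "middle", "lower", "upper"]

table = cross_tabulate(form_a, form_b)
agreement = sum(table[i][i] for i in range(len(CATEGORIES))) / len(form_a)

print(table)      # [[2, 1, 0], [0, 2, 1], [0, 1, 1]]
print(agreement)  # 0.625
```

Applying the same diagonal-over-total calculation to the slide tables gives 20 of 29 students (about 69%) classified the same way in the good-reliability example and 12 of 28 (about 43%) in the poor-reliability example.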

Internal Consistency Reliability
• Concerned with the extent to which the items (or components) of an assessment function consistently.
• To what extent do the items in an assessment measure a single attribute?
• For example, consider a math problem-solving test. To what extent does reading comprehension play a role? What is being measured?

Evaluating Internal Consistency Reliability
• Split-Half Correlations.
• Kuder-Richardson Formula 20 (KR-20).
  – Used with binary-scored (dichotomous) items.
  – Average of all possible split-half correlations.
• Cronbach’s Coefficient Alpha.
  – Similar to KR-20, except used with non-binary scored (polytomous) items (e.g., items that measure attitude).
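
The two coefficients can be sketched with their standard textbook formulas: KR-20 uses the item proportions correct (p) and incorrect (1 - p), and coefficient alpha uses the item variances. The data below are invented, and population variances are used throughout. That is an assumption of this sketch, since some texts use sample variances instead.

```python
from statistics import pvariance

def cronbach_alpha(scores):
    """Coefficient alpha; `scores` has one row per student, one column per item."""
    k = len(scores[0])
    item_vars = [pvariance([row[j] for row in scores]) for j in range(k)]
    total_var = pvariance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

def kr20(scores):
    """KR-20 for items scored 0 (wrong) or 1 (right)."""
    k = len(scores[0])
    n = len(scores)
    p = [sum(row[j] for row in scores) / n for j in range(k)]
    sum_pq = sum(pj * (1 - pj) for pj in p)
    total_var = pvariance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum_pq / total_var)

# Hypothetical right/wrong responses: five students, four items.
binary = [[1, 1, 1, 1], [1, 1, 1, 0], [1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 0]]
# Hypothetical 1-5 attitude ratings: five students, three items.
likert = [[4, 5, 4], [3, 3, 2], [5, 5, 5], [2, 1, 2], [3, 4, 3]]

print(round(kr20(binary), 2))  # 0.8
print(round(cronbach_alpha(likert), 2))
```

On binary items the two functions give the same value, because the variance of a 0/1 item equals p(1 - p).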

Reliability: Components of an Observation
• O = T + E
• Observation = True Status + Error.

Standard Error of Measurement
• Provides an index of the reliability of an individual’s score.
• The standard deviation of the theoretical distribution of errors (i.e., the E’s).
• The more reliable a test, the smaller the SEM.
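
The link between reliability and the SEM can be illustrated with the common textbook formula SEM = SD × √(1 − reliability). The slides do not state this formula, so treat it as an assumption of this sketch. The standard deviation and reliability values below are invented.

```python
from math import sqrt

def standard_error_of_measurement(sd, reliability):
    """SEM from the score standard deviation and a reliability coefficient."""
    if not 0 <= reliability <= 1:
        raise ValueError("reliability must be between 0 and 1")
    return sd * sqrt(1 - reliability)

# Hypothetical test with a score standard deviation of 10 points.
sem_high = standard_error_of_measurement(10, 0.91)
sem_low = standard_error_of_measurement(10, 0.75)

print(round(sem_high, 2))  # 3.0
print(round(sem_low, 2))   # 5.0

# A rough band of one SEM around an observed score of 80.
observed = 80
print(round(observed - sem_high, 1), round(observed + sem_high, 1))  # 77.0 83.0
```

With the same spread of scores, the more reliable test (0.91) yields the smaller SEM, which matches the last bullet above.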

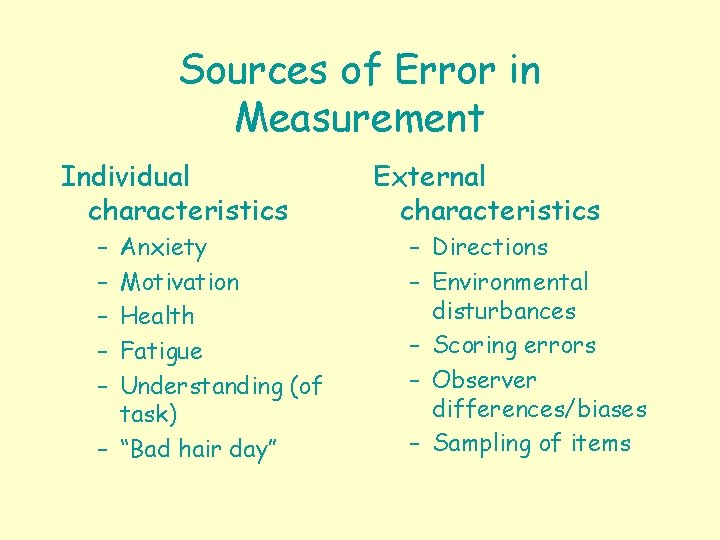

Sources of Error in Measurement
• Individual characteristics
  – Anxiety
  – Motivation
  – Health
  – Fatigue
  – Understanding (of task)
  – “Bad hair day”
• External characteristics
  – Directions
  – Environmental disturbances
  – Scoring errors
  – Observer differences/biases
  – Sampling of items

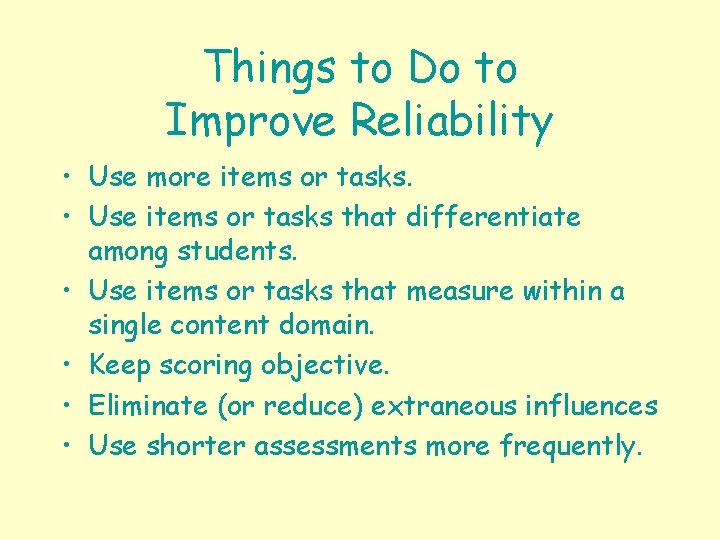

Things to Do to Improve Reliability
• Use more items or tasks.
• Use items or tasks that differentiate among students.
• Use items or tasks that measure within a single content domain.
• Keep scoring objective.
• Eliminate (or reduce) extraneous influences.
• Use shorter assessments more frequently.
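
One standard way to see why more items tend to help is the Spearman-Brown prediction formula. The slides do not name it, so it is offered here only as an illustration. It assumes the added items are comparable to the existing ones, and the starting reliability of 0.60 is invented.

```python
def spearman_brown(reliability, length_factor):
    """Predicted reliability when test length is multiplied by `length_factor`."""
    return (length_factor * reliability) / (1 + (length_factor - 1) * reliability)

# Hypothetical test with reliability 0.60.
doubled = spearman_brown(0.60, 2)    # twice as many comparable items
halved = spearman_brown(0.60, 0.5)   # half as many items

print(round(doubled, 2))  # 0.75
print(round(halved, 2))   # 0.43
```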
