
Replication

• Improves reliability.
• Improves availability (what good is a reliable system if it is not available?).
• Must be transparent and create the illusion of a single copy.

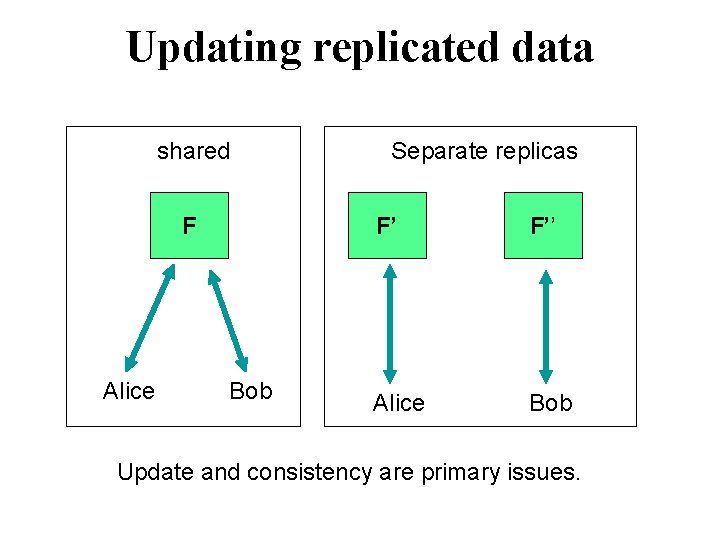

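Updating replicated data

When Alice and Bob share a single file F, they read and update the same copy. When each of them works on a separate replica (F′ and F″), updates made at one replica must reach the other. Update propagation and consistency are therefore the primary issues.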

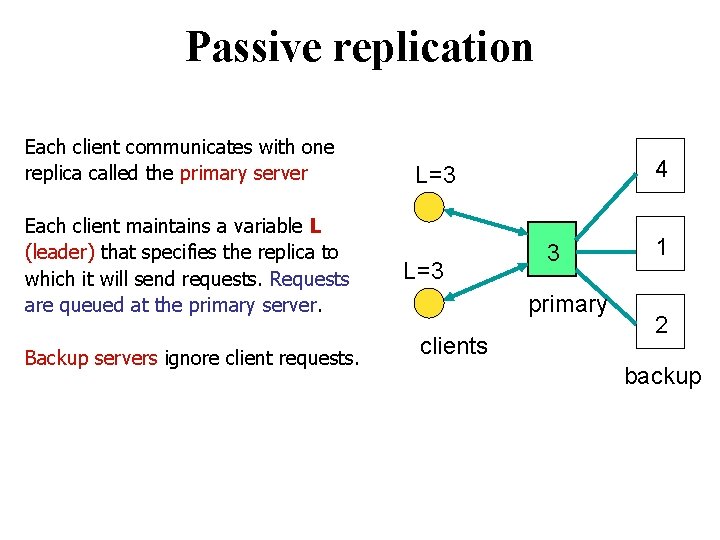

Passive replication

Each client communicates with one replica, called the primary server. Each client maintains a variable L (leader) that specifies the replica to which it sends requests. Requests are queued at the primary server, and backup servers ignore client requests. (Figure: replicas 1 to 4. Replica 3 is the primary, so the clients have L = 3, and the other replicas are backups.)

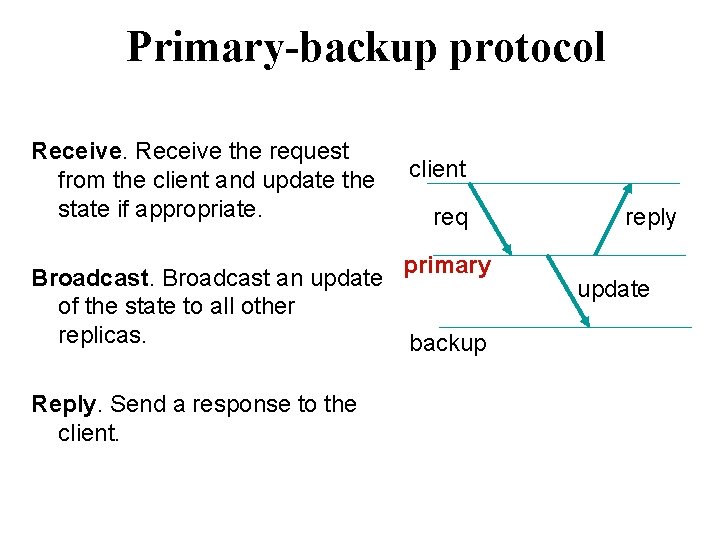

Primary-backup protocol

The primary handles each client request in three steps:
1. Receive the request from the client and update the state if appropriate.
2. Broadcast an update of the state to all other replicas (the backups).
3. Reply: send a response to the client.
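The three steps can be sketched as a minimal in-memory model. It assumes synchronous, reliable delivery and a key-value state. The classes `Replica` and `Primary` and their methods are hypothetical, not part of any system described in these slides.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """A replica holding a key-value state (hypothetical model)."""
    name: str
    state: dict = field(default_factory=dict)

    def apply_update(self, key, value):
        # A backup installs the state update broadcast by the primary.
        self.state[key] = value


@dataclass
class Primary(Replica):
    backups: list = field(default_factory=list)

    def handle_request(self, key, value):
        # Step 1: receive the request and update the local state.
        self.state[key] = value
        # Step 2: broadcast the state update to all other replicas.
        for backup in self.backups:
            backup.apply_update(key, value)
        # Step 3: send a response to the client.
        return ("ok", key, value)


backups = [Replica("1"), Replica("2"), Replica("4")]
primary = Primary("3", backups=backups)
print(primary.handle_request("x", 5))   # ('ok', 'x', 5)
print([b.state for b in backups])       # [{'x': 5}, {'x': 5}, {'x': 5}]
```

The primary broadcasts before it replies. As a result, a backup that later takes over already holds every update the client was told about.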

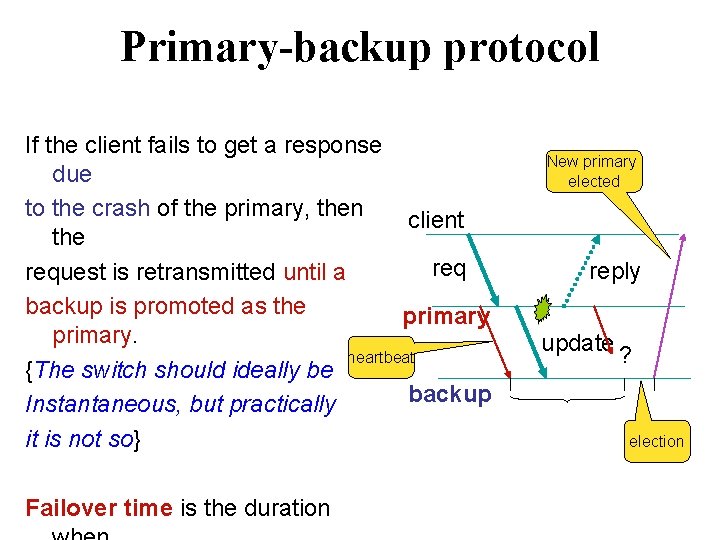

Primary-backup protocol

If the client fails to get a response because the primary has crashed, the request is retransmitted until a backup is promoted to primary. The backups monitor the primary through heartbeat messages and, after the crash, hold an election to choose the new primary. The switch should ideally be instantaneous, but in practice it is not. Failover time is the duration of this switch.
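A sketch of the client side follows, assuming a crashed primary simply never replies. Several details are hypothetical:
• the election rule (promote the live replica with the smallest id);
• the `max_attempts` bound;
• all names.

In this model the client learns the new leader L directly from the election. A real system would detect the crash through heartbeats and would take some time to fail over.

```python
class Server:
    def __init__(self, sid):
        self.sid = sid
        self.alive = True
        self.is_primary = False
        self.log = []

    def handle(self, request):
        # A crashed server never replies, and backups ignore client requests.
        if not self.alive or not self.is_primary:
            return None
        self.log.append(request)
        return f"done:{request}"


def elect(servers):
    """Hypothetical election rule: promote the live server with the smallest id."""
    new_primary = min((s for s in servers.values() if s.alive), key=lambda s: s.sid)
    for s in servers.values():
        s.is_primary = s is new_primary
    return new_primary.sid


def send_with_retry(servers, leader, request, max_attempts=5):
    """Retransmit the request until a primary replies; return (reply, L, attempts)."""
    for attempt in range(1, max_attempts + 1):
        reply = servers[leader].handle(request)
        if reply is not None:
            return reply, leader, attempt
        # No response: model the failover by running the election and
        # updating the client's leader variable L.
        leader = elect(servers)
    raise TimeoutError("no primary available")


servers = {i: Server(i) for i in range(1, 5)}
servers[3].is_primary = True
servers[3].alive = False                    # the primary crashes
print(send_with_retry(servers, 3, "w1"))    # ('done:w1', 1, 2)
```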

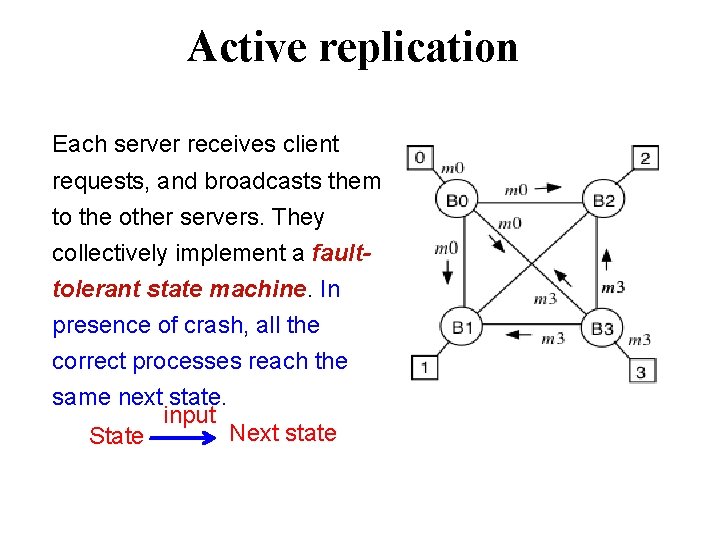

Active replication

Each server receives client requests and broadcasts them to the other servers. Together, the servers implement a fault-tolerant state machine: given the current state and an input, every correct process reaches the same next state, even in the presence of crashes.
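The state-machine view can be illustrated with a deterministic transition function. The bank-account state and the `apply` function are hypothetical examples. Replicas that start in the same state and apply the same inputs in the same order end in the same state. A different order of the same inputs can lead to a different state.

```python
def apply(state, request):
    """Deterministic transition: (state, input) -> next state."""
    op, amount = request
    if op == "deposit":
        return state + amount
    if op == "withdraw" and amount <= state:
        return state - amount
    return state  # a rejected request leaves the state unchanged


def run_replica(initial, requests):
    state = initial
    for request in requests:
        state = apply(state, request)
    return state


requests = [("deposit", 100), ("withdraw", 30), ("withdraw", 500)]
# Three replicas apply the same inputs in the same order.
print({run_replica(0, requests) for _ in range(3)})   # {70}
# The same inputs in a different order lead to a different state.
print(run_replica(0, [("withdraw", 30), ("deposit", 100), ("withdraw", 500)]))  # 100
```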

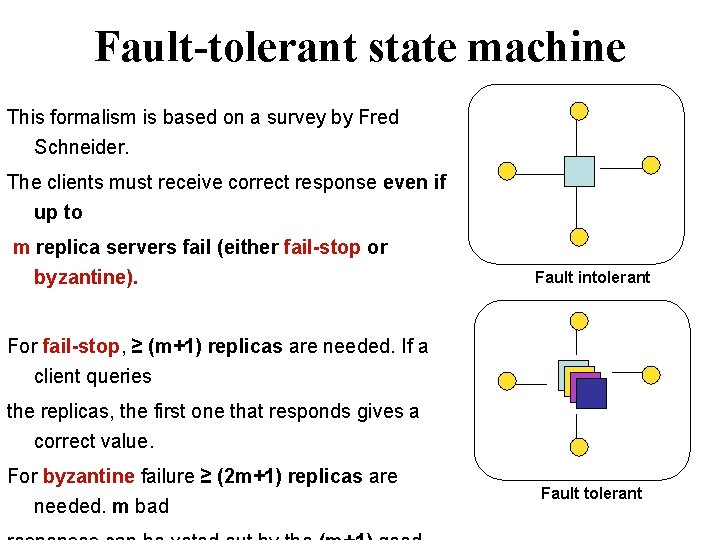

Fault-tolerant state machine

This formalism is based on a survey by Fred Schneider. Clients must receive a correct response even if up to m replica servers fail (either fail-stop or Byzantine). A single server is fault-intolerant; a group of replicas can be made fault-tolerant.
• For fail-stop failures, at least m+1 replicas are needed. If a client queries the replicas, the first one that responds gives a correct value.
• For Byzantine failures, at least 2m+1 replicas are needed. The client accepts a value only if m+1 replicas report it, so the m bad replicas cannot outvote the m+1 correct ones.
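The replica counts and the client-side rules can be written down directly. This sketch assumes that a crashed replica is modeled as `None` (no reply) and that a Byzantine replica may return any value. The function names are hypothetical.

```python
from collections import Counter


def replicas_needed(m, byzantine):
    """Replicas needed so clients get a correct answer despite m faulty servers."""
    return 2 * m + 1 if byzantine else m + 1


def fail_stop_read(responses):
    """Fail-stop: crashed replicas stay silent, so the first reply is correct."""
    for response in responses:
        if response is not None:  # None models a crashed replica
            return response
    raise RuntimeError("all replicas crashed")


def byzantine_read(responses, m):
    """Byzantine: accept a value only if at least m+1 replicas report it."""
    value, count = Counter(responses).most_common(1)[0]
    if count < m + 1:
        raise RuntimeError("no value was reported by m+1 replicas")
    return value


print(replicas_needed(2, byzantine=False), replicas_needed(2, byzantine=True))  # 3 5
print(fail_stop_read([None, None, 42]))           # 42
print(byzantine_read([42, 7, 42, 13, 42], m=2))   # 42
```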

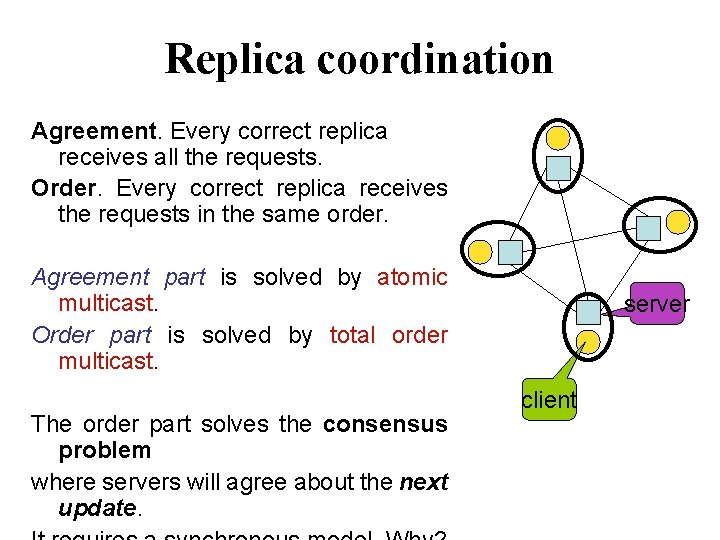

Replica coordination

• Agreement: every correct replica receives all the requests.
• Order: every correct replica receives the requests in the same order.

The agreement part is solved by atomic multicast, and the order part by total order multicast. The order part amounts to solving the consensus problem, in which the servers agree on the next update.

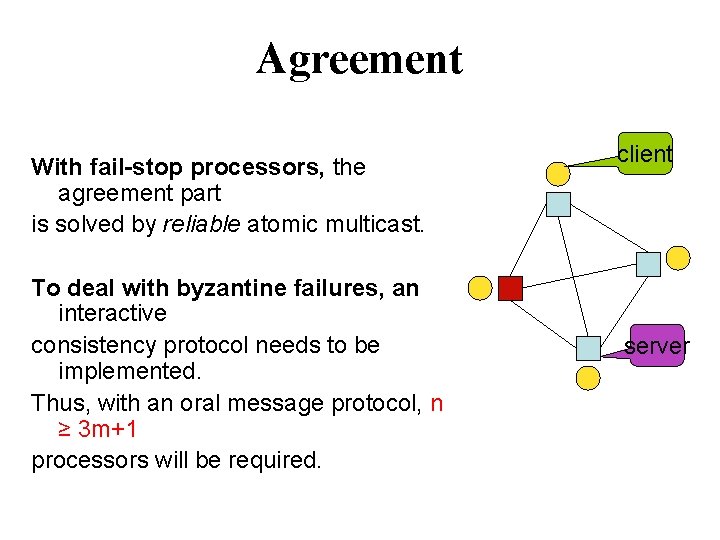

Agreement

With fail-stop processors, the agreement part is solved by reliable atomic multicast. Byzantine failures require an interactive consistency protocol. With an oral message protocol, n ≥ 3m+1 processors are required.

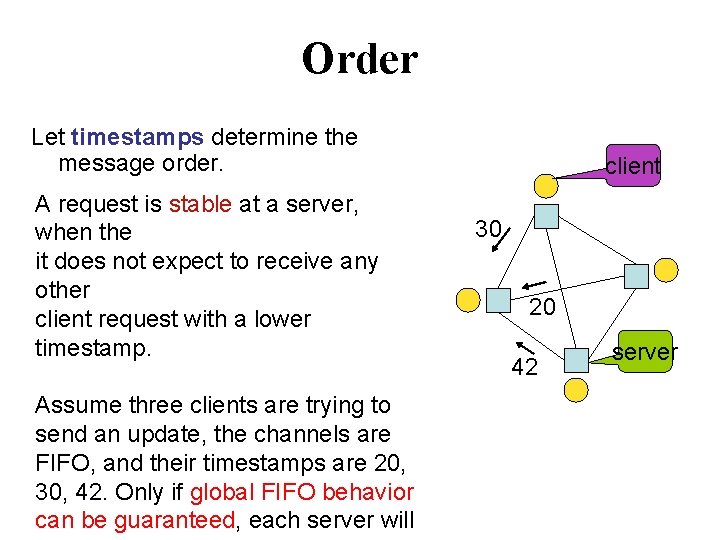

Order

Let timestamps determine the message order. A request is stable at a server when the server does not expect to receive any other client request with a lower timestamp. Assume three clients are trying to send an update, the channels are FIFO, and the timestamps are 20, 30, and 42. Only if global FIFO behavior can be guaranteed will each server …

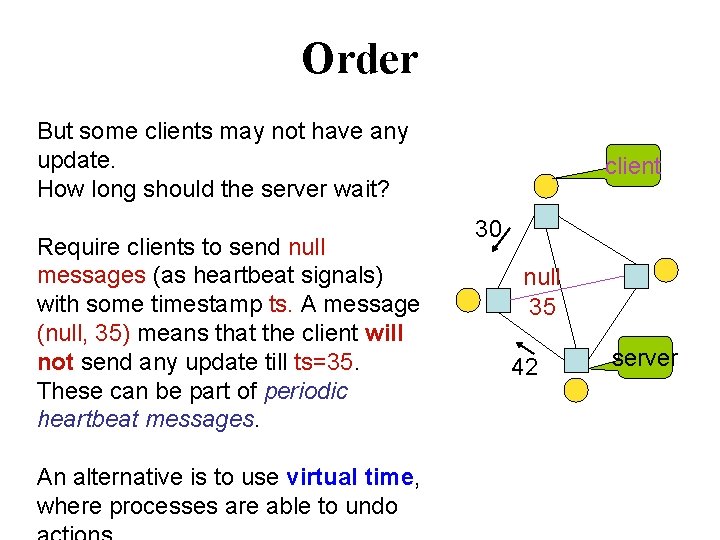

Order

But some clients may not have any update. How long should the server wait? One option is to require clients to send null messages (as heartbeat signals) with some timestamp ts. The message (null, 35) means that the client will not send any update until ts = 35. These null messages can be part of periodic heartbeat messages. An alternative is to use virtual time, where processes are able to undo …
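The following sketch shows a server that delivers requests in timestamp order only once they are stable, treating null messages as promises. It assumes FIFO channels, a fixed and known set of clients, and unique timestamps. The class and method names are hypothetical. The scenario loosely follows the figure: updates with timestamps 20, 30, and 42, plus a null message with timestamp 35. Which client sends which message is an assumption.

```python
import heapq


class OrderingServer:
    def __init__(self, clients):
        self.latest = {c: 0 for c in clients}  # highest timestamp seen per client
        self.pending = []                      # min-heap of (ts, client, update)
        self.delivered = []

    def receive(self, client, ts, update=None):
        # update=None models a null message: no update from this client until ts.
        self.latest[client] = ts
        if update is not None:
            heapq.heappush(self.pending, (ts, client, update))
        self._deliver_stable()

    def _deliver_stable(self):
        # With FIFO channels, client c only sends timestamps above latest[c],
        # so a request is stable once every client has reached its timestamp.
        horizon = min(self.latest.values())
        while self.pending and self.pending[0][0] <= horizon:
            ts, _client, update = heapq.heappop(self.pending)
            self.delivered.append((ts, update))


server = OrderingServer(["a", "b", "c"])
server.receive("b", 30, "u30")
server.receive("a", 20, "u20")
print(server.delivered)   # [] (client c has not been heard from yet)
server.receive("c", 35)   # the null message (null, 35)
server.receive("a", 42, "u42")
print(server.delivered)   # [(20, 'u20'), (30, 'u30')]
```

The update `u42` stays pending until clients b and c each send something (an update or a null message) with a timestamp of at least 42.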

What is replica consistency?

Consistency models define a contract between the data manager and the clients regarding the responses to read and write operations.

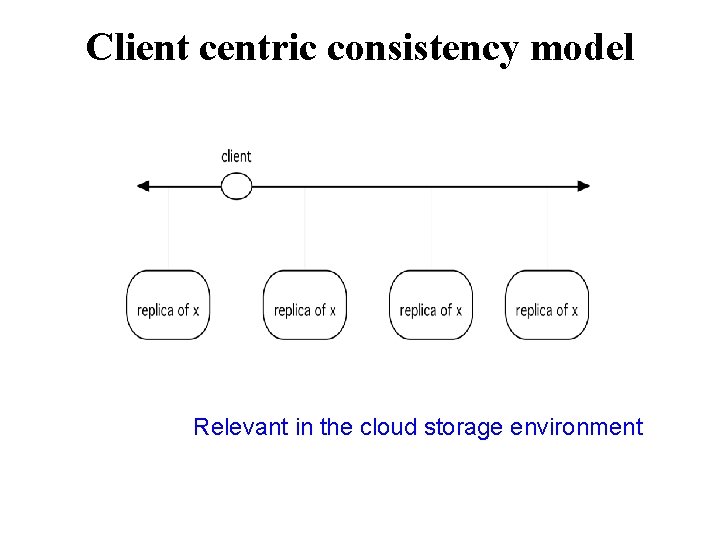

Replica consistency

• Data-centric: the client communicates with the same replica.
• Client-centric: the client communicates with different replicas at different times. This may be the case with mobile clients.

Data-centric consistency models

1. Strict consistency
2. Linearizability
3. Sequential consistency
4. Causal consistency
5. Eventual consistency (as in DNS)
6. Weak consistency

There are many other models.

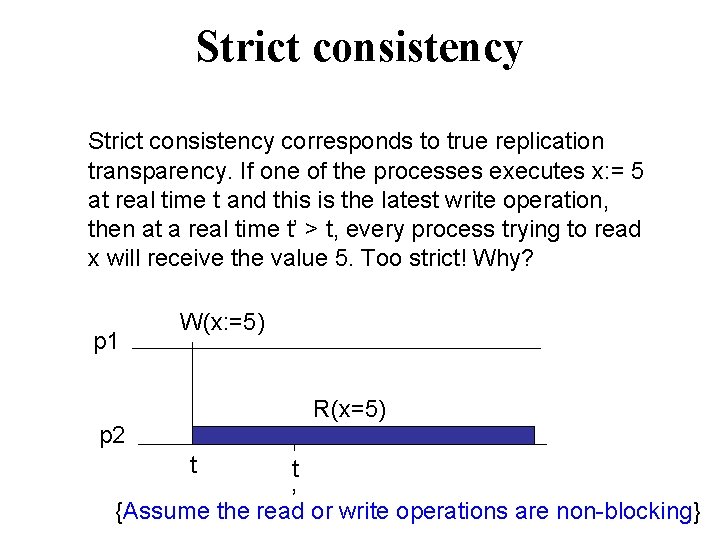

Strict consistency

Strict consistency corresponds to true replication transparency. Suppose one of the processes executes x := 5 at real time t, and this is the latest write operation. Then at any real time t′ > t, every process trying to read x will receive the value 5. (Figure: p1 performs W(x:=5) at time t; p2 performs R(x=5) at time t′. Assume that read and write operations are non-blocking.) This is too strict! Why?

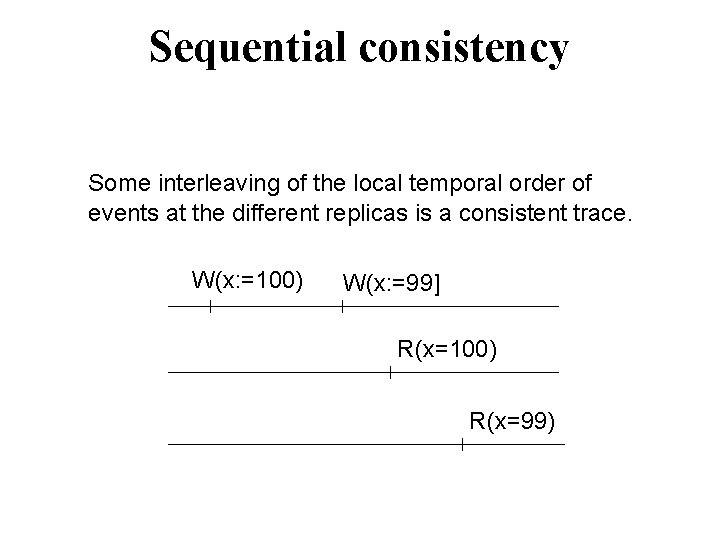

Sequential consistency

Some interleaving of the local temporal order of events at the different replicas is a consistent trace. (Figure: operations W(x:=100), W(x:=99), R(x=100), and R(x=99).)
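Sequential consistency can be checked by brute force on small traces:
1. Enumerate every interleaving that preserves each process's program order.
2. Look for one interleaving in which every read returns the latest write.

The assignment of operations to processes below is a hypothetical example, because the figure is not reproduced here. The search is exponential and only meant for tiny traces.

```python
def interleavings(histories):
    """Yield every merge of the per-process histories that keeps program order."""
    if all(not h for h in histories):
        yield []
        return
    for i, h in enumerate(histories):
        if h:
            rest = histories[:i] + [h[1:]] + histories[i + 1:]
            for tail in interleavings(rest):
                yield [h[0]] + tail


def legal(trace, initial=0):
    """A sequential trace is legal if every read returns the latest write."""
    memory = {}
    for kind, var, value in trace:
        if kind == "W":
            memory[var] = value
        elif memory.get(var, initial) != value:
            return False
    return True


def sequentially_consistent(histories):
    return any(legal(trace) for trace in interleavings(histories))


W, R = "W", "R"
ok = [[(W, "x", 100)], [(W, "x", 99)],
      [(R, "x", 100), (R, "x", 99)], [(R, "x", 100), (R, "x", 99)]]
bad = [[(W, "x", 100)], [(W, "x", 99)],
       [(R, "x", 100), (R, "x", 99)], [(R, "x", 99), (R, "x", 100)]]
print(sequentially_consistent(ok), sequentially_consistent(bad))  # True False
```

In `bad`, the third process sees 100 before 99 while the fourth sees 99 before 100. No single interleaving can satisfy both.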

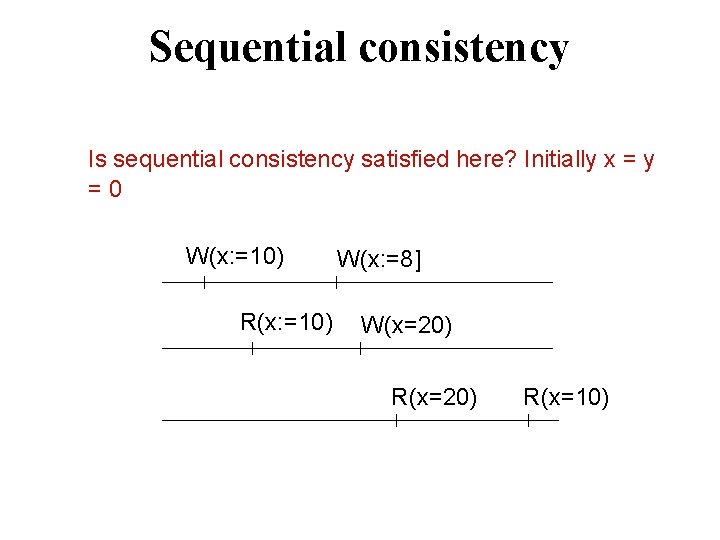

Sequential consistency

Is sequential consistency satisfied here? Initially x = y = 0. (Figure: operations W(x:=10), R(x=10), W(x:=8), W(x:=20), and R(x=10).)

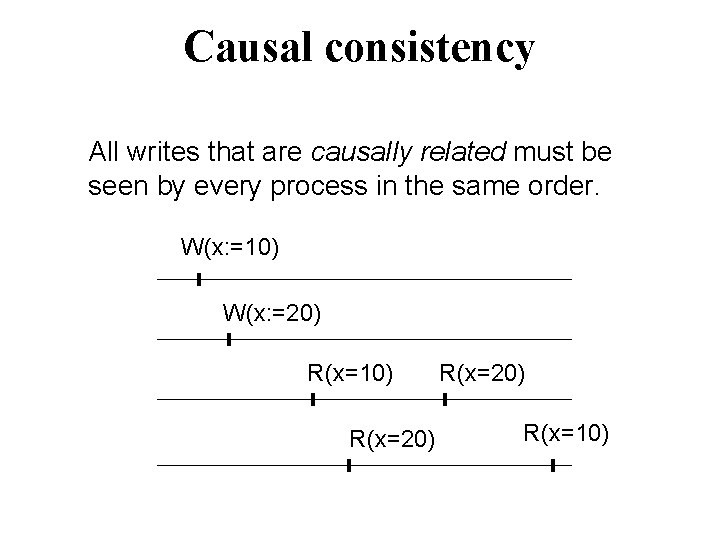

Causal consistency

All writes that are causally related must be seen by every process in the same order. (Figure: operations W(x:=10), W(x:=20), R(x=10), R(x=20), and R(x=10).)

Linearizability is a correctness criterion for concurrent objects (Herlihy & Wing, ACM TOPLAS 1990). It provides the illusion that each operation on the object takes effect in zero time, and that the results are “equivalent to” some legal sequential computation.

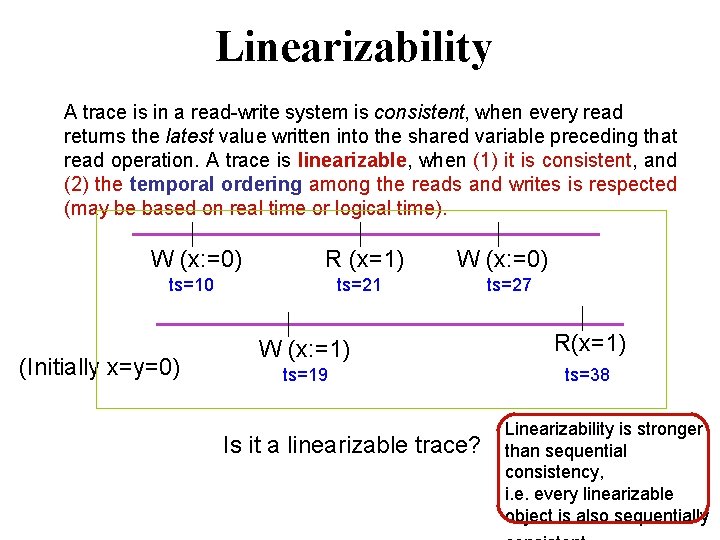

Linearizability

A trace in a read-write system is consistent when every read returns the latest value written into the shared variable preceding that read operation. A trace is linearizable when (1) it is consistent, and (2) the temporal ordering among the reads and writes is respected. This ordering may be based on real time or on logical time. (Figure, initially x = y = 0: operations W(x:=0), R(x=1), W(x:=0), W(x:=1), and R(x=1), tagged with timestamps ts = 10, 19, 21, 27, and 38.) Is it a linearizable trace?

Linearizability is stronger than sequential consistency, i.e., every linearizable object is also sequentially consistent.
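A brute-force check of the two conditions follows. It assumes each operation is given as a real-time interval (start, end) rather than a single timestamp. Condition (1): some total order of the operations must be legal. Condition (2): that order must place an operation before any operation that started after it finished. The intervals are hypothetical. The `stale_read` trace would pass a sequential-consistency check (the read can be ordered before the write), but it is not linearizable.

```python
from itertools import permutations


def legal(order, initial=0):
    """Condition (1): every read returns the latest preceding write."""
    memory = {}
    for _start, _end, kind, var, value in order:
        if kind == "W":
            memory[var] = value
        elif memory.get(var, initial) != value:
            return False
    return True


def respects_real_time(order):
    """Condition (2): if b ended before a started, a must not precede b."""
    return all(
        not (b[1] < a[0])
        for i, a in enumerate(order)
        for b in order[i + 1:]
    )


def linearizable(ops):
    """ops: (start, end, kind, var, value); exponential, for tiny traces only."""
    return any(respects_real_time(o) and legal(o) for o in permutations(ops))


overlapping = [(0, 10, "W", "x", 1), (5, 15, "R", "x", 1)]
stale_read = [(0, 10, "W", "x", 1), (20, 30, "R", "x", 0)]
print(linearizable(overlapping), linearizable(stale_read))  # True False
```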

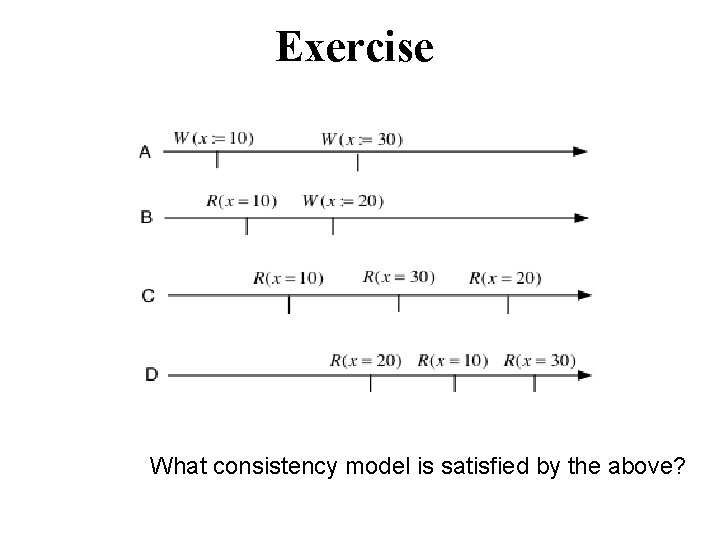

Exercise

What consistency model is satisfied by the trace above?

Implementing consistency models

Why are there so many consistency models? Each model has a use in some type of application. The cost of implementation (as measured by message complexity) decreases as the models become “weaker”.

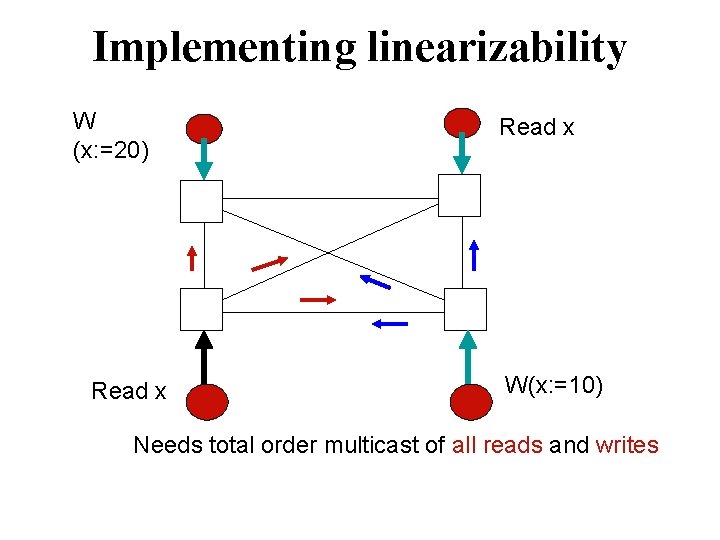

Implementing linearizability

(Figure: operations W(x:=20), Read x, and W(x:=10).) Implementing linearizability needs total order multicast of all reads and writes.

Implementing linearizability

• The total order multicast forces every process to accept and handle all reads and writes in the same temporal order.
• The peers update their copies in response to a write, but only send acknowledgments for reads. After all updates and acknowledgments are received, the local copy is returned to the client.

Implementing sequential consistency

Use total order broadcast for writes only. For reads, immediately return the local copy.
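The two implementations can be contrasted in a small simulation. Total order multicast is modeled by appending every message to every replica's queue in one global order, as a sequencer would. A real system would need a sequencer or a consensus protocol. Acknowledgments for reads are omitted, and all names are hypothetical. Under the sequential-consistency read, a replica that has not yet applied a write returns a stale value. Under the linearizable read it does not.

```python
class Replica:
    def __init__(self):
        self.x = 0
        self.queue = []  # totally ordered messages not yet applied

    def deliver_next(self):
        msg = self.queue.pop(0)
        kind, value = msg
        if kind == "W":
            self.x = value
        return msg

    def catch_up(self):
        while self.queue:
            self.deliver_next()


class TotalOrder:
    """Models total order multicast: every replica queues messages in one order."""
    def __init__(self, replicas):
        self.replicas = replicas

    def multicast(self, msg):
        for r in self.replicas:
            r.queue.append(msg)


def sc_read(replica):
    # Sequential consistency: writes are totally ordered, reads are local.
    return replica.x


def linearizable_read(order, replica):
    # Linearizability: the read is multicast too, and the replica answers only
    # after applying every message ahead of the read in the total order.
    marker = ("R", object())  # a unique token identifies this read
    order.multicast(marker)
    while replica.deliver_next() is not marker:
        pass
    return replica.x


r1, r2 = Replica(), Replica()
order = TotalOrder([r1, r2])
order.multicast(("W", 10))            # x := 10, totally ordered
r1.catch_up()                         # r1 has applied the write; r2 lags behind
print(sc_read(r1), sc_read(r2))       # 10 0
print(linearizable_read(order, r2))   # 10
```

The stale read at `r2` is permitted by sequential consistency. It is not permitted by linearizability, because the write had already completed.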

Eventual consistency

Eventual consistency only guarantees that all replicas eventually receive all updates, regardless of the order. The system does not provide replication transparency, but large-scale systems like Bayou allow this. Conflicting updates are resolved using occasional anti-entropy sessions that incrementally steer the system towards a consistent configuration.

Implementing eventual consistency

Updates are propagated via epidemic protocols. Server S1 randomly picks a neighboring server S2 and passes on the update.
• Case 1: S2 did not receive the update before. In this case, S2 accepts the update, and both S1 and S2 continue the process.
• Case 2: S2 already received the update from someone else. In that case, S1 loses interest in sending updates to S2: it reduces the probability of transmission to S2 to 1/p, where p is a tunable parameter.

There is always a finite probability that some servers do not receive the update.
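A simulation sketch of push-based epidemic propagation follows. It simplifies the per-peer rule above into the common rumor-mongering variant: after contacting a server that already has the update, S1 stops spreading with probability 1/p. The function name and parameters are hypothetical. Running the simulation repeatedly shows that some servers can be left without the update.

```python
import random


def rumor_mongering(n, p, seed=None):
    """Simulate push-based rumor mongering among servers 0..n-1 (n >= 2, p >= 1).

    Server 0 starts with the update. Returns the set of servers that
    eventually received it.
    """
    rng = random.Random(seed)
    informed = {0}
    active = [0]
    while active:
        s1 = active.pop(rng.randrange(len(active)))
        s2 = rng.choice([s for s in range(n) if s != s1])
        if s2 not in informed:
            # Case 1: S2 accepts the update, and both keep spreading it.
            informed.add(s2)
            active.extend([s1, s2])
        elif rng.random() >= 1 / p:
            # Case 2: S2 already had it; S1 stays interested with probability 1 - 1/p.
            active.append(s1)
    return informed


trials = [rumor_mongering(n=100, p=4, seed=s) for s in range(200)]
missed = sum(100 - len(t) for t in trials) / len(trials)
print(f"average servers missed per run: {missed:.2f}")
```

Raising p keeps servers interested longer. This lowers the chance of missed servers at the cost of more redundant messages.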

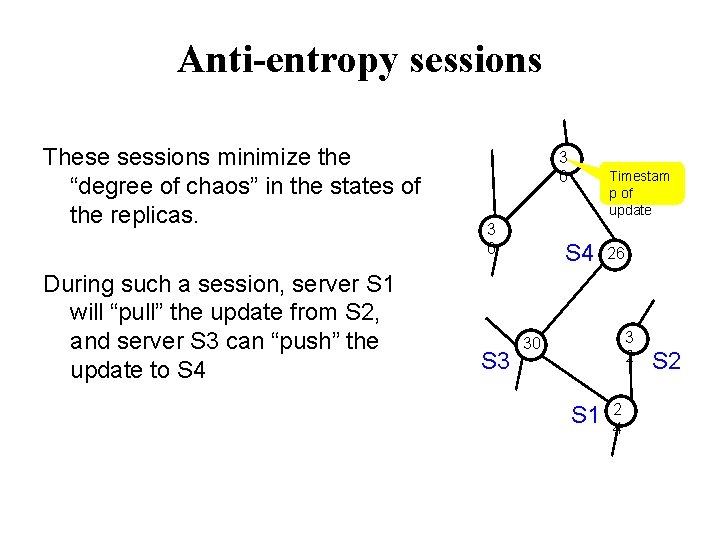

Anti-entropy sessions

These sessions minimize the “degree of chaos” in the states of the replicas. During such a session, server S1 will “pull” the update from S2, and server S3 can “push” the update to S4. (Figure: servers S1 to S4, each labeled with the timestamp of its latest update.)
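A sketch of pull and push between two servers follows. It assumes each server keeps only its latest update together with that update's timestamp, and that a larger timestamp means a more recent update. The timestamps are hypothetical values, because the figure's labels are not recoverable.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    value: str
    ts: int  # timestamp of the latest update this server holds


def pull(receiver, source):
    """receiver pulls source's update if it is more recent than its own."""
    if source.ts > receiver.ts:
        receiver.value, receiver.ts = source.value, source.ts


def push(source, receiver):
    """source pushes its update to receiver; the effect mirrors a pull."""
    pull(receiver, source)


def push_pull(a, b):
    """A session in both directions leaves a and b with the newer update."""
    pull(a, b)
    pull(b, a)


s1, s2 = Server("S1", "v24", 24), Server("S2", "v30", 30)
s3, s4 = Server("S3", "v32", 32), Server("S4", "v26", 26)
pull(s1, s2)        # S1 pulls from S2
push(s3, s4)        # S3 pushes to S4
push_pull(s1, s4)
print([(s.name, s.ts) for s in (s1, s2, s3, s4)])
# [('S1', 32), ('S2', 30), ('S3', 32), ('S4', 32)]
```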

Exercise

Let x and y be two shared variables.

Process P {initially x = 0}: x := 1; if y = 0 x := 2 fi; print x
Process Q {initially y = 0}: y := 1; if x = 0 y := 2 fi; print y

If sequential consistency is preserved, what are the possible values of the printouts? List all of them.

Client-centric consistency models

Client-centric consistency models are relevant in the cloud storage environment.

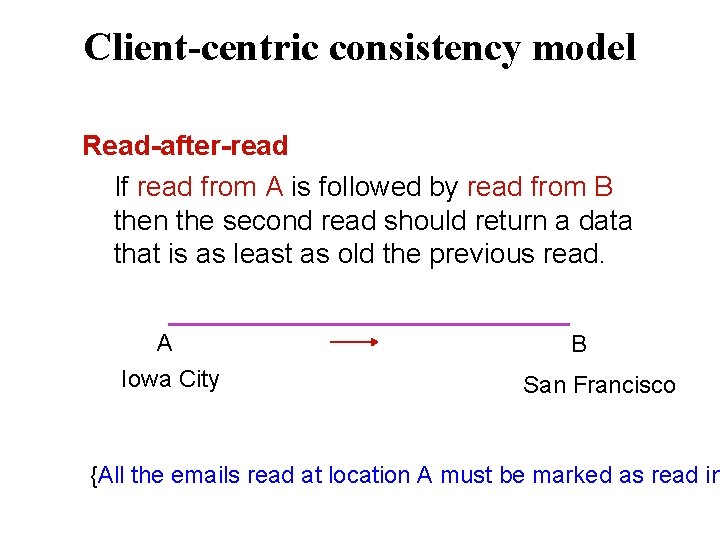

Client-centric consistency model: read-after-read

If a read from A is followed by a read from B, then the second read should return data that is at least as recent as the data returned by the first read. (Figure: A in Iowa City, B in San Francisco.) Example: all the emails read at location A must be marked as read in …

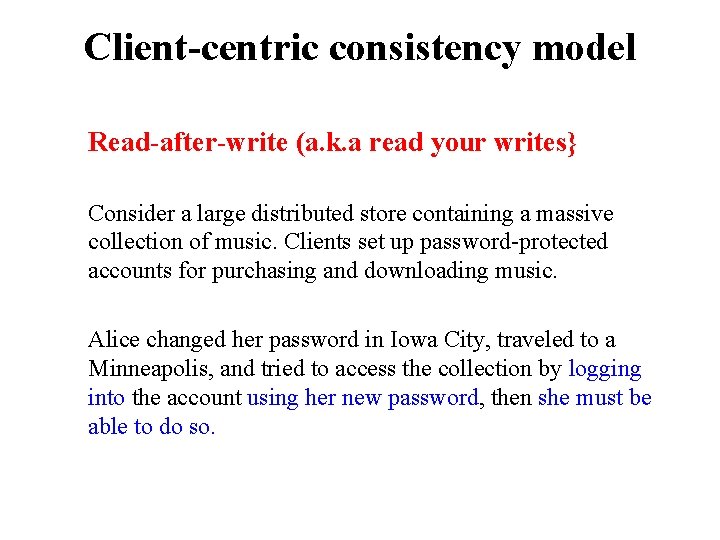

Client-centric consistency model: read-after-write (a.k.a. read-your-writes)

Consider a large distributed store containing a massive collection of music. Clients set up password-protected accounts for purchasing and downloading music. Suppose Alice changes her password in Iowa City, travels to Minneapolis, and tries to access the collection by logging into her account with her new password. She must be able to do so.

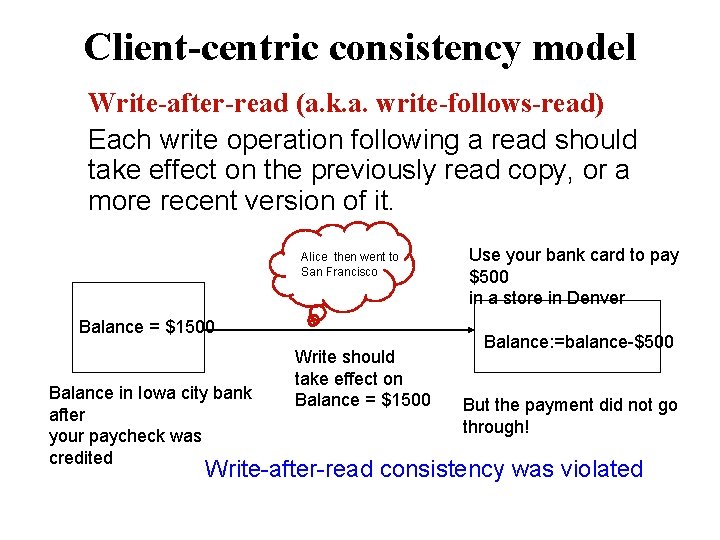

Client-centric consistency model: write-after-read (a.k.a. write-follows-read)

Each write operation following a read should take effect on the previously read copy, or on a more recent version of it. Example: after your paycheck was credited, the balance in your Iowa City bank is $1500. You then use your bank card to pay $500 in a store in Denver, and the write Balance := Balance - $500 should take effect on Balance = $1500. But the payment did not go through! Write-after-read consistency was violated.

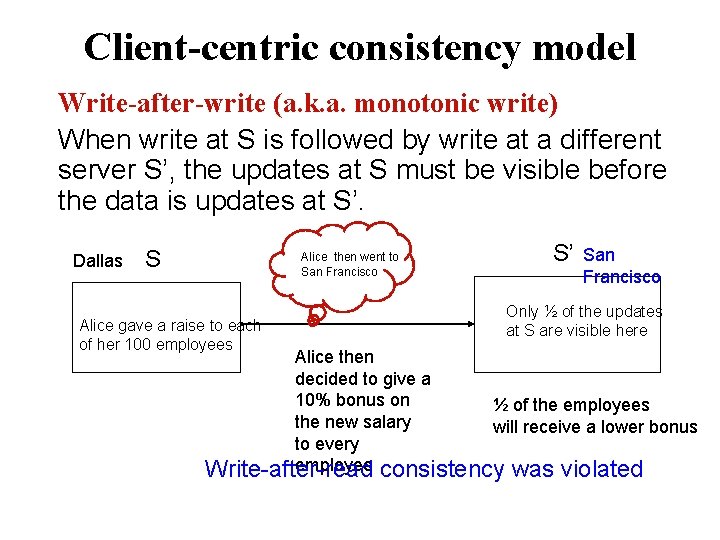

Client-centric consistency model: write-after-write (a.k.a. monotonic writes)

When a write at S is followed by a write at a different server S′, the updates at S must be visible before the data is updated at S′. Example: in Dallas (server S), Alice gave a raise to each of her 100 employees. She then went to San Francisco (server S′), where only ½ of the updates made at S are visible. There, she decided to give every employee a 10% bonus on the new salary. The ½ of the employees whose raise is not yet visible will receive a lower bonus. Write-after-write consistency was violated.

Implementing client-centric consistency

Each client session uses a read set RS and a write set WS. Before an operation at a different server is initiated, the appropriate RS or WS is fetched from another server.
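A sketch of read and write sets enforcing read-your-writes and monotonic reads follows. The sets hold write ids, and a server must apply every write in the client's RS and WS before serving the client. This sketch rests on several assumptions:
• Write ids are globally ordered, for example by timestamps.
• A shared `write_log` dictionary stands in for fetching the missing writes from another server.
• Both sets are synced for every operation, which is stricter than necessary.

All names are hypothetical.

```python
class Server:
    def __init__(self):
        self.applied = set()  # ids of the writes this server has applied
        self.data = {}        # key -> (write_id, value)

    def apply(self, write_id, key, value):
        self.applied.add(write_id)
        # Assumption: write ids are globally ordered (e.g. timestamps), so an
        # older write never overwrites a newer one.
        if key not in self.data or self.data[key][0] < write_id:
            self.data[key] = (write_id, value)


class Session:
    """Client-side read set RS and write set WS, both holding write ids."""

    def __init__(self, write_log):
        self.rs, self.ws = set(), set()
        self.write_log = write_log  # hypothetical stand-in: write_id -> (key, value)

    def _sync(self, server):
        # Before operating at a server, bring it every write in RS and WS it lacks.
        for write_id in sorted((self.rs | self.ws) - server.applied):
            server.apply(write_id, *self.write_log[write_id])

    def write(self, server, write_id, key, value):
        self._sync(server)
        server.apply(write_id, key, value)
        self.write_log[write_id] = (key, value)
        self.ws.add(write_id)

    def read(self, server, key):
        self._sync(server)
        write_id, value = server.data[key]
        self.rs.add(write_id)
        return value


iowa_city, minneapolis = Server(), Server()
alice = Session(write_log={})
alice.write(iowa_city, 1, "password", "new-secret")
print(alice.read(minneapolis, "password"))  # new-secret (read-your-writes)
```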

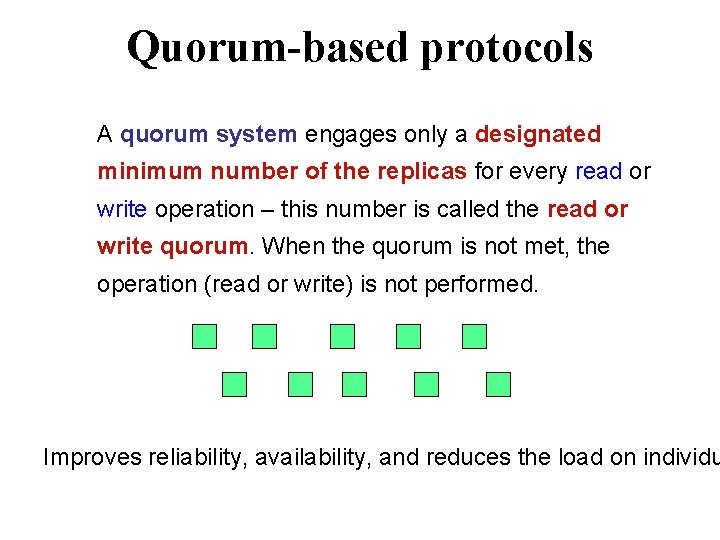

Quorum-based protocols

A quorum system engages only a designated minimum number of the replicas for every read or write operation. This number is called the read or write quorum. When the quorum is not met, the operation (read or write) is not performed. Quorums improve reliability and availability, and reduce the load on individual replicas.

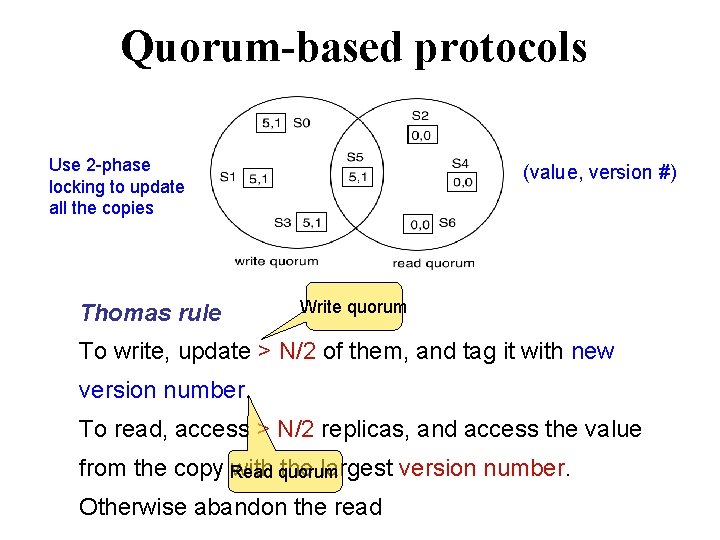

Quorum-based protocols

Use two-phase locking to update all the copies. Thomas rule: each copy stores a (value, version #) pair.
• Write quorum: to write, update more than N/2 of the copies and tag them with a new version number.
• Read quorum: to read, access more than N/2 replicas and take the value from the copy with the largest version number. Otherwise, abandon the read.

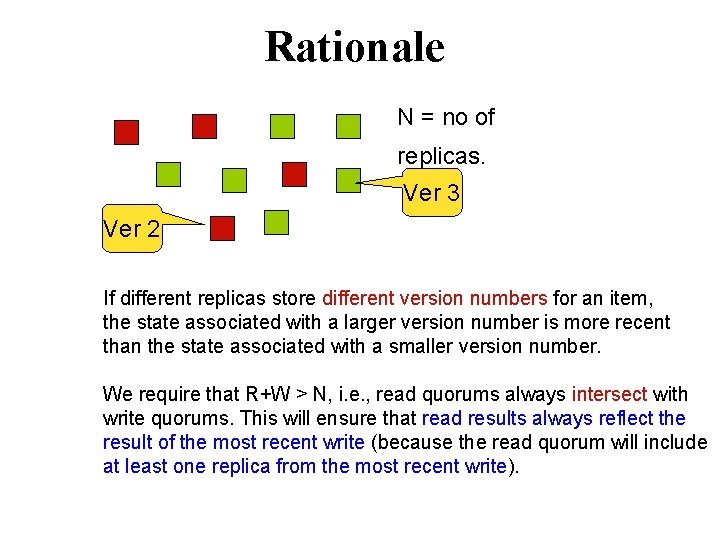

Rationale

Let N be the number of replicas. If different replicas store different version numbers for an item, the state associated with a larger version number is more recent than the state associated with a smaller one. We require that R + W > N, i.e., read quorums always intersect with write quorums. This ensures that read results always reflect the most recent write, because the read quorum will include at least one replica from the most recent write. (Figure: replicas holding Ver 3 and Ver 2.)

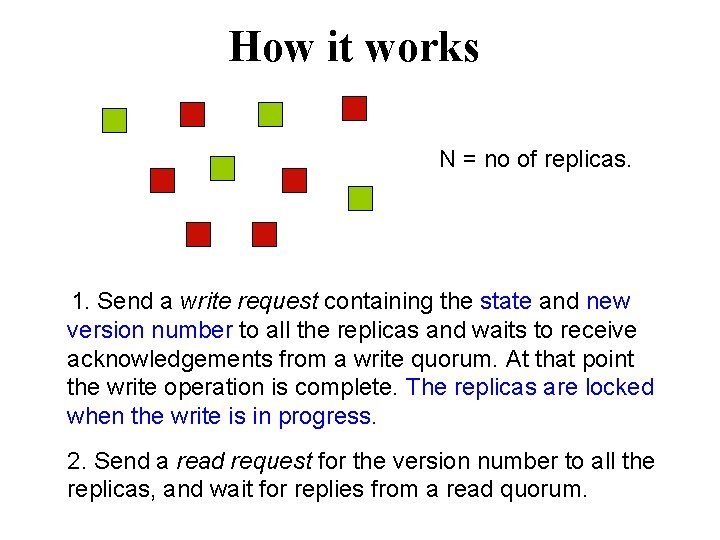

How it works

Let N be the number of replicas.
1. Write: send a write request containing the state and the new version number to all the replicas, and wait to receive acknowledgements from a write quorum. At that point the write operation is complete. The replicas are locked while the write is in progress.
2. Read: send a read request for the version number to all the replicas, and wait for replies from a read quorum.
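A sketch of the write and read steps with N = 5 and quorums W = R = 3, chosen at random for each operation. It assumes W > N/2, so any two write quorums intersect and the new version number can be taken from the write quorum itself. Locking and failures are omitted, and the names are hypothetical.

```python
import random


class Replica:
    def __init__(self):
        self.version, self.value = 0, None


def quorum_write(replicas, value, w, rng=random):
    """Tag the value with a new version number and store it at a write quorum."""
    quorum = rng.sample(replicas, w)
    # With W > N/2 every two write quorums intersect, so the quorum contains
    # the latest version and max + 1 is a fresh version number.
    new_version = max(r.version for r in quorum) + 1
    for r in quorum:
        r.version, r.value = new_version, value
    return new_version


def quorum_read(replicas, r_size, rng=random):
    """Read from a read quorum and return the copy with the largest version."""
    quorum = rng.sample(replicas, r_size)
    newest = max(quorum, key=lambda r: r.version)
    return newest.value, newest.version


rng = random.Random(7)
replicas = [Replica() for _ in range(5)]      # N = 5
quorum_write(replicas, "a", w=3, rng=rng)
quorum_write(replicas, "b", w=3, rng=rng)
# R + W = 6 > 5, so every read quorum overlaps the latest write quorum.
print({quorum_read(replicas, 3, rng) for _ in range(20)})   # {('b', 2)}
```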

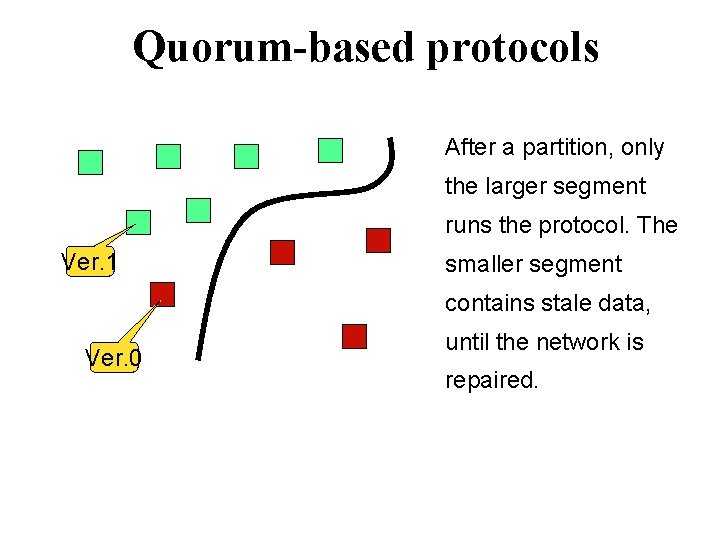

Quorum-based protocols

After a partition, only the larger segment runs the protocol. The smaller segment contains stale data until the network is repaired. (Figure: the larger segment holds Ver. 1; the smaller segment holds Ver. 0.)

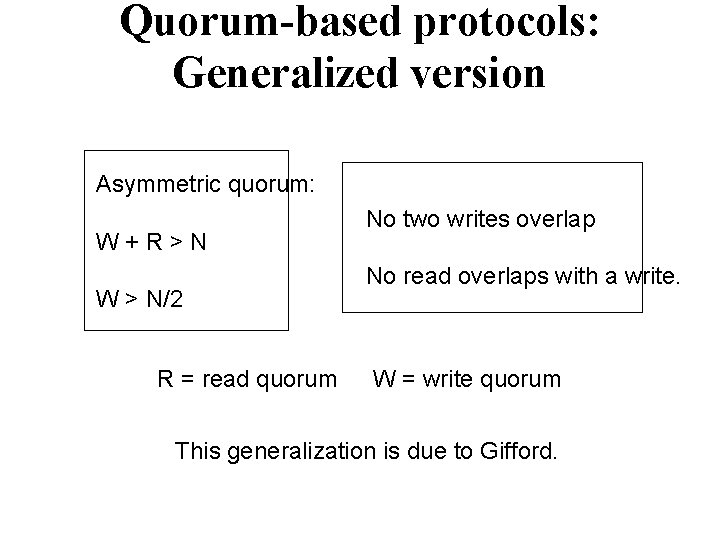

Quorum-based protocols: generalized version

Asymmetric quorum, with R = read quorum and W = write quorum:
• W > N/2, so no two writes overlap.
• W + R > N, so no read overlaps with a write.

This generalization is due to Gifford.
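The two conditions can be checked mechanically. The helper `always_intersect` (hypothetical) enumerates every a-subset and b-subset of N replicas. It confirms that such subsets always meet exactly when a + b > N, which is the pigeonhole argument behind both conditions.

```python
from itertools import combinations


def valid_quorum(n, r, w):
    """Gifford's conditions: W > N/2 and W + R > N."""
    return 2 * w > n and r + w > n


def always_intersect(n, a, b):
    """True if every a-subset of n replicas shares a replica with every b-subset."""
    replicas = range(n)
    return all(set(x) & set(y)
               for x in combinations(replicas, a)
               for y in combinations(replicas, b))


# The smallest valid quorums for N = 5, i.e. those with R + W = N + 1.
print([(r, w) for w in range(1, 6) for r in range(1, 6)
       if valid_quorum(5, r, w) and r + w == 6])          # [(3, 3), (2, 4), (1, 5)]
print(always_intersect(5, 3, 3), always_intersect(5, 2, 3))  # True False
```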

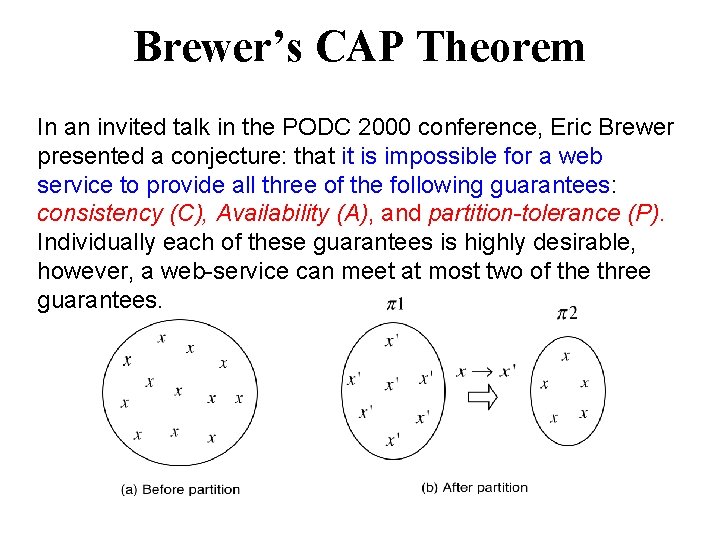

Brewer’s CAP theorem

In an invited talk at the PODC 2000 conference, Eric Brewer presented a conjecture. It states that a web service cannot provide all three of the following guarantees: consistency (C), availability (A), and partition tolerance (P). Individually, each of these guarantees is highly desirable; however, a web service can meet at most two of the three.

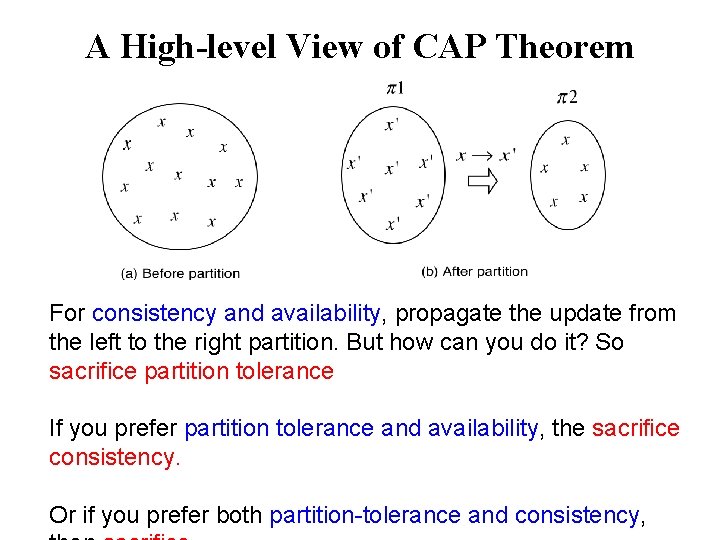

A high-level view of the CAP theorem

For consistency and availability, propagate the update from the left partition to the right partition. But how can you do it when the partitions cannot communicate? So sacrifice partition tolerance. If you prefer partition tolerance and availability, then sacrifice consistency. Or, if you prefer both partition tolerance and consistency, then sacrifice availability.

Amazon Dynamo

Amazon’s Dynamo is a highly scalable and highly available key-value storage system designed to support the implementation of its various e-commerce services. Dynamo serves tens of millions of customers at peak times using thousands of servers located across numerous data centers around the world. Dynamo uses a distributed hash table (DHT) to map its servers into a key space, using consistent hashing as is common in many P2P systems.
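A minimal consistent-hashing ring follows. It assumes MD5 ring positions and no virtual nodes, and is only an illustration, not Dynamo's code. A key is stored at the first server clockwise from the key's position and replicated at the next distinct servers on the ring. The server names are hypothetical.

```python
import bisect
import hashlib


def ring_position(name):
    """Map a server name or key to a point on a 128-bit ring."""
    return int(hashlib.md5(name.encode()).hexdigest(), 16)


class ConsistentHashRing:
    def __init__(self, servers):
        self.ring = sorted((ring_position(s), s) for s in servers)
        self.points = [point for point, _ in self.ring]

    def preference_list(self, key, n):
        """The first n servers met walking clockwise from the key (n <= #servers)."""
        start = bisect.bisect(self.points, ring_position(key))
        return [self.ring[(start + k) % len(self.ring)][1] for k in range(n)]


servers = ["SA", "SB", "SC", "SD", "SE", "SF", "SG", "SH"]
ring = ConsistentHashRing(servers)
print(ring.preference_list("K", 3))  # coordinator first, then two replicas
```

Removing a server only affects keys whose preference lists contain that server. This locality is why consistent hashing suits a large, changing set of servers.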

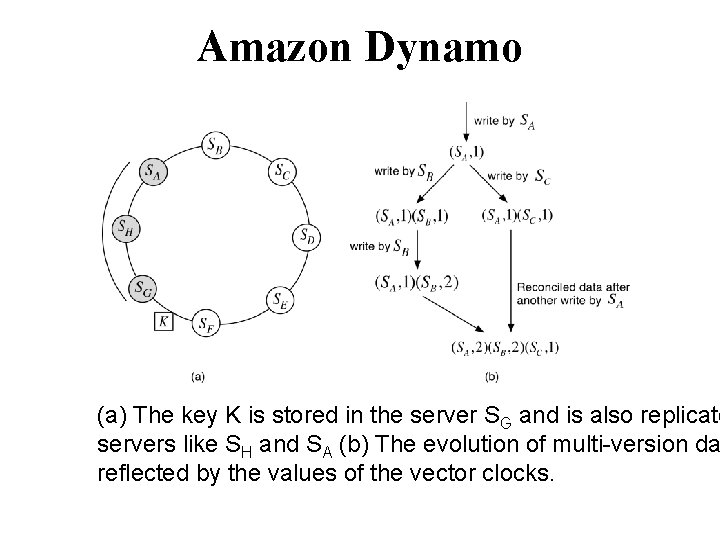

Amazon Dynamo

(a) The key K is stored in the server SG and is also replicated in servers such as SH and SA. (b) The evolution of multi-version data is reflected by the values of the vector clocks.
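The vector-clock comparisons can be sketched with dictionaries that map a server name to a counter. The version history below is hypothetical, because the figure is not reproduced. Two versions written through different servers after a common ancestor are concurrent, and a reconciled version merges both clocks.

```python
def increment(clock, node):
    """A new version written through `node`."""
    new = dict(clock)
    new[node] = new.get(node, 0) + 1
    return new


def descends(a, b):
    """True if version a has seen everything version b has (a >= b)."""
    return all(a.get(node, 0) >= count for node, count in b.items())


def concurrent(a, b):
    """Neither version descends from the other: a conflict to reconcile."""
    return not descends(a, b) and not descends(b, a)


def merge(a, b):
    """Element-wise maximum of two clocks."""
    return {n: max(a.get(n, 0), b.get(n, 0)) for n in a.keys() | b.keys()}


d1 = increment({}, "SG")    # {'SG': 1}
d2 = increment(d1, "SG")    # {'SG': 2}
d3 = increment(d2, "SH")    # {'SG': 2, 'SH': 1}
d4 = increment(d2, "SA")    # {'SG': 2, 'SA': 1}
print(descends(d3, d2), concurrent(d3, d4))     # True True
d5 = increment(merge(d3, d4), "SG")             # reconciled version
print(descends(d5, d3) and descends(d5, d4))    # True
```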

Amazon Dynamo

Multiple versions of data are, however, rare. In a 24-hour profile of the shopping cart service, 99.94% of requests saw exactly one version, and 0.00057% of requests saw two versions.
• Write: the coordinator generates the vector clock for the new version and sends it to the top T reachable nodes. If at least W nodes respond, the write is considered successful.
• Read: the coordinator sends a request for all existing versions to the top T reachable servers. If it receives R responses, the read is considered successful.

Dynamo uses a sloppy quorum: T, R, and W are limited to the first set of reachable non-faulty servers in the consistent hashing ring. Avoiding slow servers in this way speeds up read and write operations. Typically, (T, R, W) = (3, 2, 2).

Amazon Dynamo

Dynamo maintains the spirit of “always write”. When a designated server S is inaccessible or down, the write is directed to a different server S′ with a hint that this update is meant for S. S′ later delivers the update to S when S recovers (hinted handoff).

Service level agreement (SLA): quite stringent. A typical SLA requires that 99.9% of the read and write requests execute within 300 ms. Otherwise, customers lose interest and business suffers.
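A sketch of a sloppy-quorum write with hinted handoff follows, using the typical T = 3 and W = 2. It assumes the nodes are already listed in ring order, starting at the key's coordinator. A healthy node further along the ring stands in for a down node and keeps the update with a hint. The class and function names are hypothetical, and the read path and vector clocks are omitted.

```python
class Node:
    def __init__(self, name):
        self.name = name
        self.up = True
        self.store = {}   # updates for keys this node is responsible for
        self.hints = []   # (intended_node, key, value) kept for a down node


def sloppy_write(ring_order, key, value, t=3, w=2):
    """Write to the top t nodes, substituting healthy nodes for down ones."""
    spares = [n for n in ring_order[t:] if n.up]
    acks = 0
    for node in ring_order[:t]:
        if node.up:
            node.store[key] = value
        elif spares:
            spares.pop(0).hints.append((node, key, value))  # hinted replica
        else:
            continue  # no stand-in available: this replica is not written
        acks += 1
    return acks >= w  # successful once at least W nodes acknowledge


def hinted_handoff(node):
    """Deliver hinted updates to their intended nodes once they recover."""
    still_down = []
    for target, key, value in node.hints:
        if target.up:
            target.store[key] = value
        else:
            still_down.append((target, key, value))
    node.hints = still_down


a, b, c, d, e = (Node(x) for x in "ABCDE")
b.up = False
print(sloppy_write([a, b, c, d, e], "K", "v1"))   # True (A, C, and D acknowledge)
b.up = True
hinted_handoff(d)
print(b.store, d.hints)                           # {'K': 'v1'} []
```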
