Hidden Markov Model

- Slides: 15

Hidden Markov Model

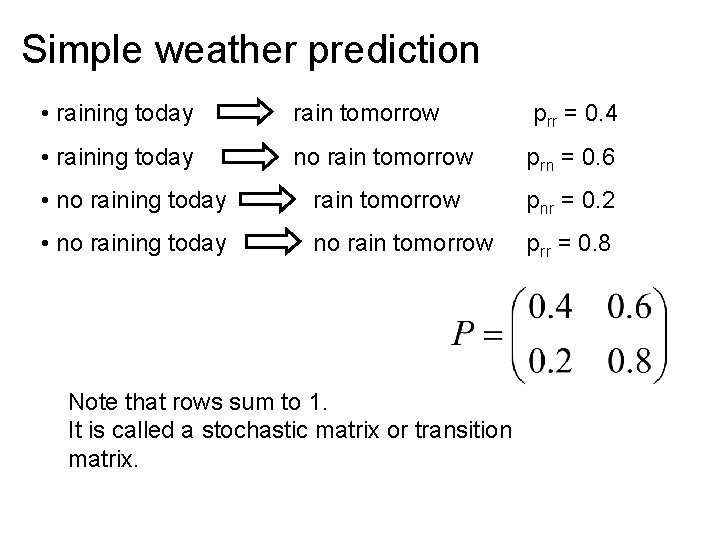

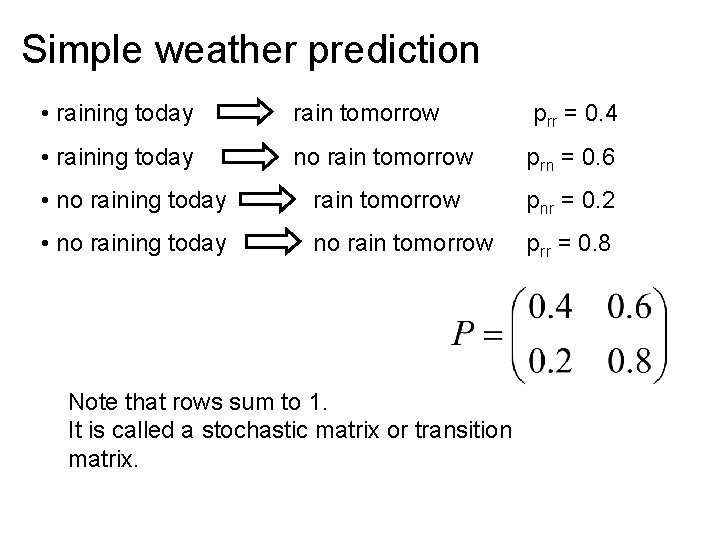

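Simple weather prediction

- Raining today, rain tomorrow: $p_{rr} = 0.4$
- Raining today, no rain tomorrow: $p_{rn} = 0.6$
- No rain today, rain tomorrow: $p_{nr} = 0.2$
- No rain today, no rain tomorrow: $p_{nn} = 0.8$

With today's weather indexing the rows and tomorrow's weather indexing the columns, these probabilities form the matrix $P = \begin{pmatrix} 0.4 & 0.6 \\ 0.2 & 0.8 \end{pmatrix}$. Note that each row sums to 1. Such a matrix is called a stochastic matrix or transition matrix.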
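
The row-sum property can be checked mechanically. The following is a minimal sketch in plain Python; the state order (0 = rain, 1 = no rain) and the helper name `is_row_stochastic` are assumptions made for illustration, not part of the slides.

```python
# Weather transition matrix; rows = today, columns = tomorrow.
# Assumed state order: 0 = rain, 1 = no rain.
P = [
    [0.4, 0.6],  # raining today: P(rain tomorrow), P(no rain tomorrow)
    [0.2, 0.8],  # no rain today: P(rain tomorrow), P(no rain tomorrow)
]


def is_row_stochastic(matrix, tol=1e-12):
    """Return True if every entry is non-negative and every row sums to 1."""
    return all(
        all(p >= 0 for p in row) and abs(sum(row) - 1.0) <= tol
        for row in matrix
    )


print(is_row_stochastic(P))  # True
```

A matrix that fails this check (for example, a row summing to 1.1) cannot be a valid transition matrix.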

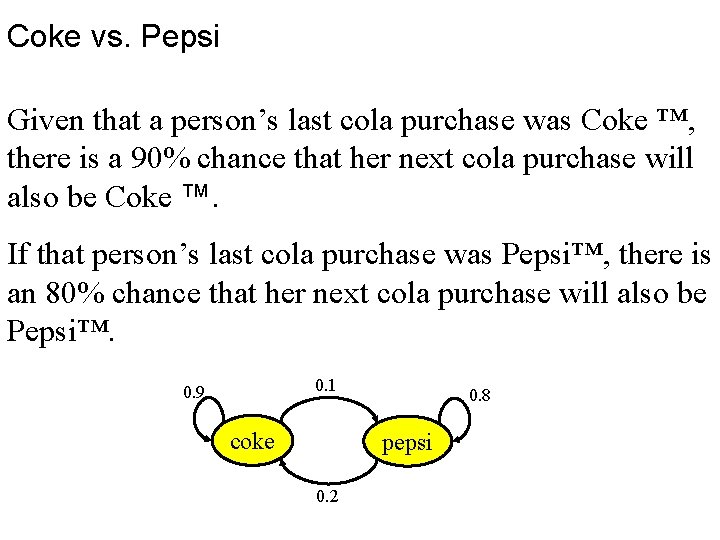

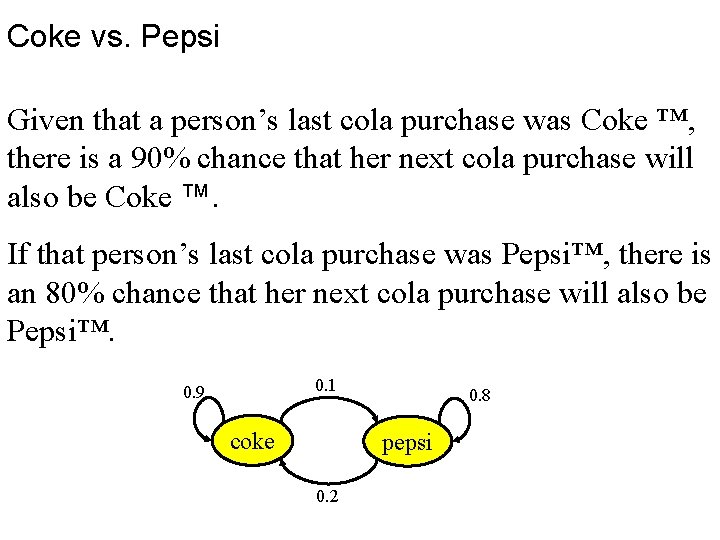

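Coke vs. Pepsi

Given that a person’s last cola purchase was Coke™, there is a 90% chance that her next cola purchase will also be Coke™. If that person’s last cola purchase was Pepsi™, there is an 80% chance that her next cola purchase will also be Pepsi™.

Transition probabilities (from the state diagram):

- Coke → Coke: 0.9
- Coke → Pepsi: 0.1
- Pepsi → Pepsi: 0.8
- Pepsi → Coke: 0.2

With rows for the last purchase and columns for the next purchase (Coke first, then Pepsi), the transition matrix is $P = \begin{pmatrix} 0.9 & 0.1 \\ 0.2 & 0.8 \end{pmatrix}$.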

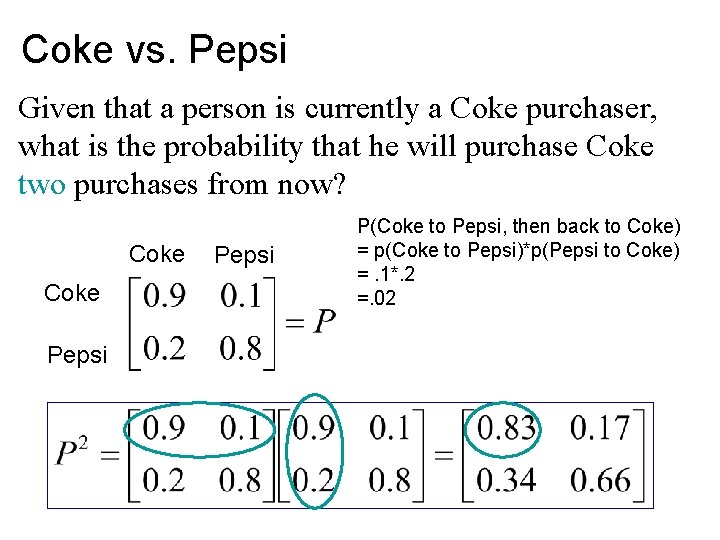

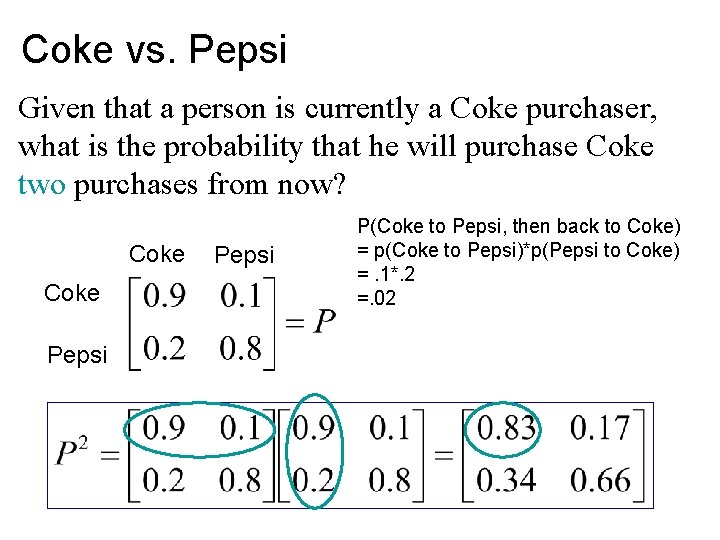

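Coke vs. Pepsi

Given that a person is currently a Coke purchaser, what is the probability that she will purchase Coke two purchases from now?

Two paths end at Coke after two purchases:

- P(Coke to Pepsi, then back to Coke) = P(Coke to Pepsi) × P(Pepsi to Coke) = 0.1 × 0.2 = 0.02
- P(Coke to Coke, then Coke again) = P(Coke to Coke) × P(Coke to Coke) = 0.9 × 0.9 = 0.81

The total probability is 0.02 + 0.81 = 0.83.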
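
The two-purchase question amounts to summing over every possible intermediate purchase. Here is a small sketch of that sum; the state indices `COKE = 0`, `PEPSI = 1` and the function name `two_step` are illustrative choices.

```python
COKE, PEPSI = 0, 1

# Rows = last purchase, columns = next purchase.
P = [
    [0.9, 0.1],  # from Coke
    [0.2, 0.8],  # from Pepsi
]


def two_step(P, start, end):
    """Sum P(start -> mid) * P(mid -> end) over every intermediate state."""
    return sum(P[start][mid] * P[mid][end] for mid in range(len(P)))


print(round(two_step(P, COKE, COKE), 4))  # 0.83
```

The sum has one term per path: Coke, Coke, Coke contributes 0.81 and Coke, Pepsi, Coke contributes 0.02.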

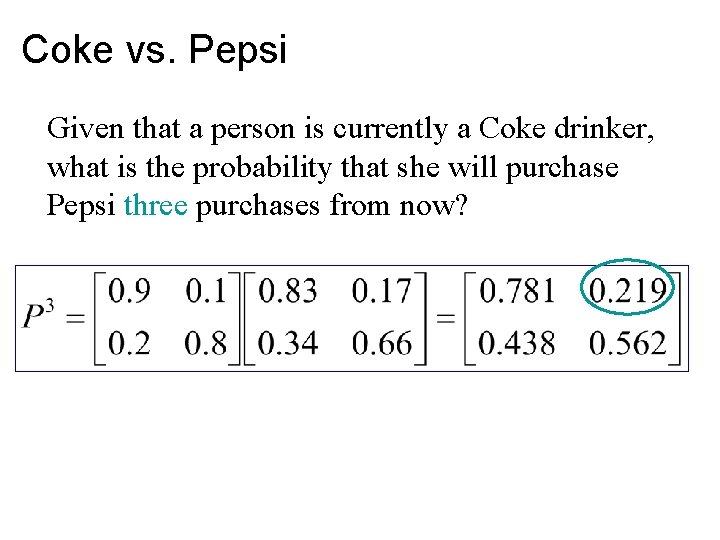

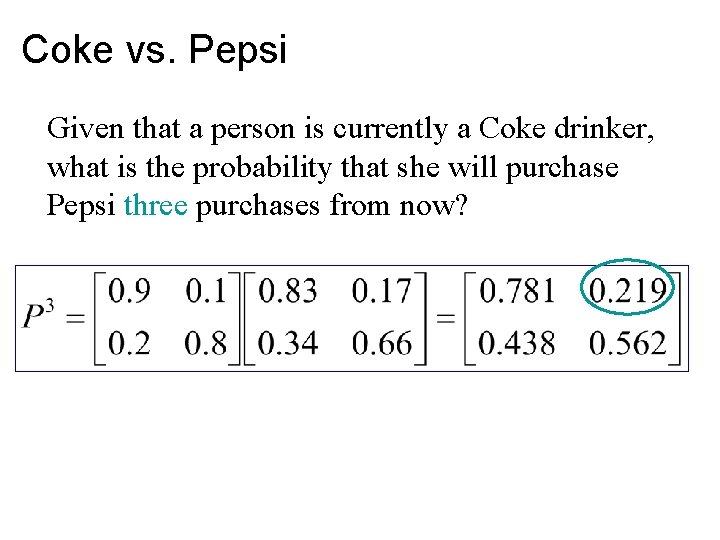

Coke vs. Pepsi Given that a person is currently a Coke drinker, what is the probability that she will purchase Pepsi three purchases from now?
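
For three or more purchases, enumerating paths by hand gets tedious. The n-step transition probabilities are the entries of the n-th power of the transition matrix. The sketch below uses hand-written helpers (`mat_mul`, `mat_pow`, both illustrative names) so that it needs only the standard library.

```python
COKE, PEPSI = 0, 1
P = [
    [0.9, 0.1],  # from Coke
    [0.2, 0.8],  # from Pepsi
]


def mat_mul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


def mat_pow(M, n):
    """Return M raised to the n-th power (n >= 0) by repeated multiplication."""
    size = len(M)
    result = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = mat_mul(result, M)
    return result


P3 = mat_pow(P, 3)
print(round(P3[COKE][PEPSI], 4))  # 0.219
```

So a current Coke drinker buys Pepsi three purchases from now with probability 0.219.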

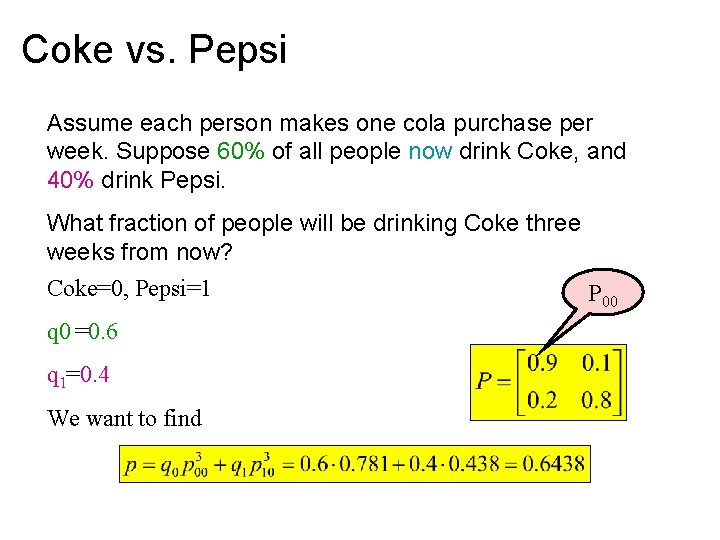

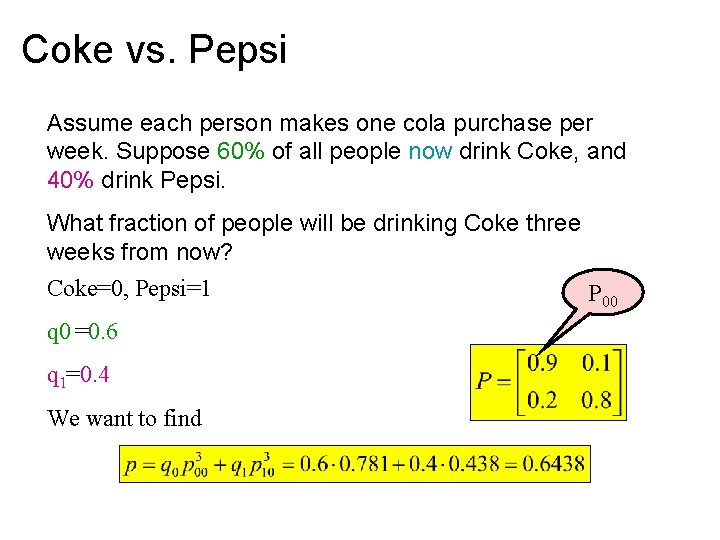

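Coke vs. Pepsi

Assume each person makes one cola purchase per week. Suppose 60% of all people now drink Coke, and 40% drink Pepsi. What fraction of people will be drinking Coke three weeks from now?

Let Coke = 0 and Pepsi = 1, with initial distribution $q_0 = 0.6$, $q_1 = 0.4$. We want to find $P(X_3 = 0) = q_0\, p_{00}^{(3)} + q_1\, p_{10}^{(3)}$, where $p_{ij}^{(3)}$ is the probability of moving from state $i$ to state $j$ in three steps. With $p_{00}^{(3)} = 0.781$ and $p_{10}^{(3)} = 0.438$, this gives $0.6 \times 0.781 + 0.4 \times 0.438 = 0.6438$.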
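
The same number can be obtained by pushing the population distribution forward one week at a time, without forming the three-step matrix. A minimal sketch, where `step` is an illustrative helper name:

```python
# State 0 = Coke, state 1 = Pepsi.
P = [
    [0.9, 0.1],
    [0.2, 0.8],
]
q = [0.6, 0.4]  # initial fractions of Coke and Pepsi drinkers


def step(dist, P):
    """Advance one week: new_dist[j] = sum over i of dist[i] * P[i][j]."""
    return [
        sum(dist[i] * P[i][j] for i in range(len(P)))
        for j in range(len(P[0]))
    ]


dist = q
for week in range(1, 4):
    dist = step(dist, P)
    print(week, [round(x, 4) for x in dist])
# week 3 prints [0.6438, 0.3562]
```

After three weeks, about 64.4% of people drink Coke.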

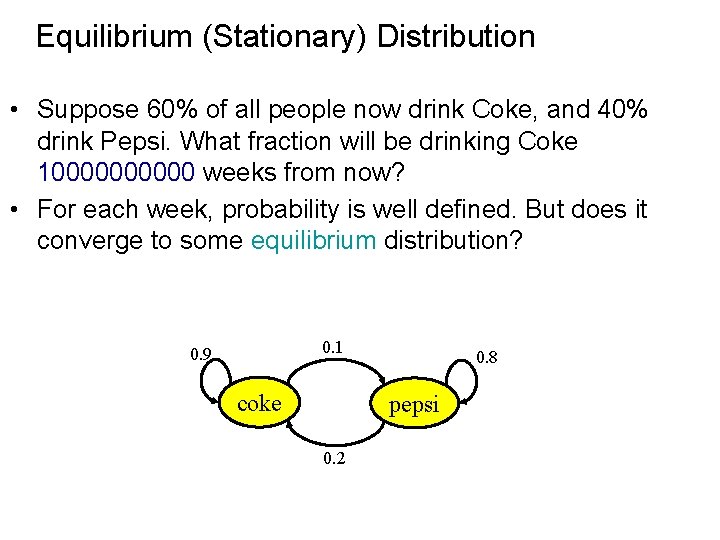

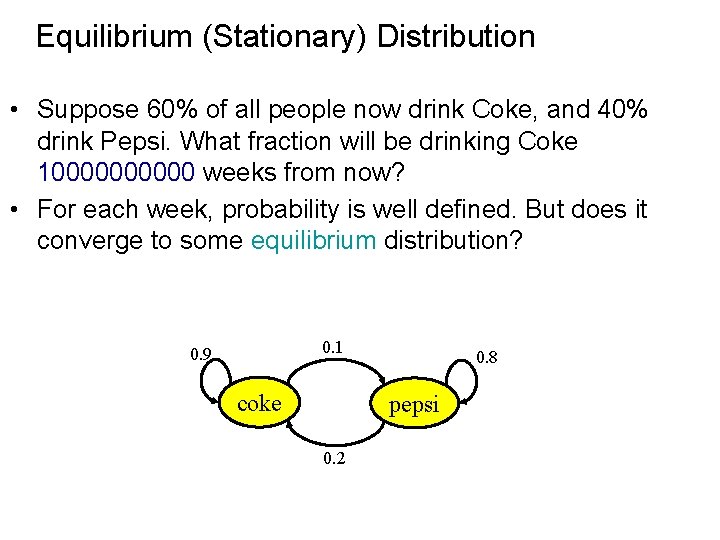

Equilibrium (Stationary) Distribution

- Suppose 60% of all people now drink Coke, and 40% drink Pepsi. What fraction will be drinking Coke 100000 weeks from now?
- For each week, the probability is well defined. But does it converge to some equilibrium distribution?
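
One way to explore the convergence question numerically is to keep applying the weekly update until the distribution stops changing. The sketch below does this from two different starting points; the tolerance, step limit, and function name are arbitrary choices made for illustration.

```python
# State 0 = Coke, state 1 = Pepsi.
P = [
    [0.9, 0.1],
    [0.2, 0.8],
]


def iterate_until_stable(P, start, tol=1e-12, max_steps=10_000):
    """Repeatedly apply dist <- dist * P until successive iterates differ by < tol."""
    dist = list(start)
    for _ in range(max_steps):
        nxt = [
            sum(dist[i] * P[i][j] for i in range(len(P)))
            for j in range(len(P))
        ]
        if max(abs(a - b) for a, b in zip(nxt, dist)) < tol:
            return nxt
        dist = nxt
    raise RuntimeError("distribution did not stabilise within max_steps")


from_survey = iterate_until_stable(P, [0.6, 0.4])
all_pepsi = iterate_until_stable(P, [0.0, 1.0])
print([round(x, 4) for x in from_survey])  # [0.6667, 0.3333]
print([round(x, 4) for x in all_pepsi])    # [0.6667, 0.3333]
```

For this chain both starting points settle on the same split, roughly two thirds Coke and one third Pepsi.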

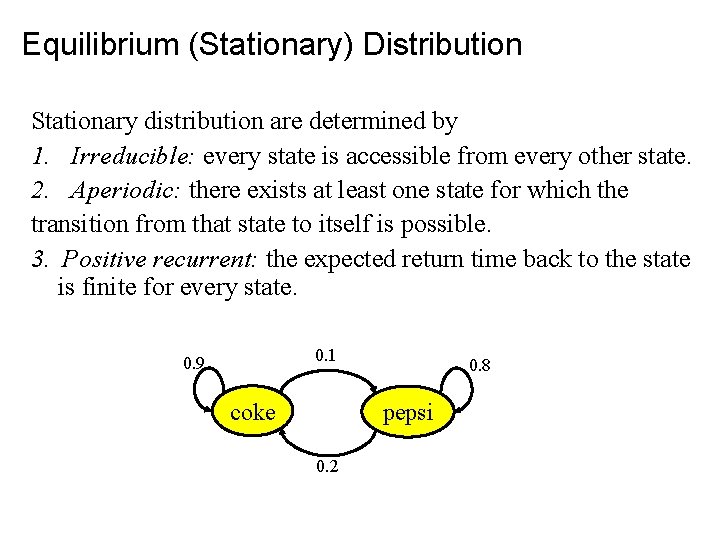

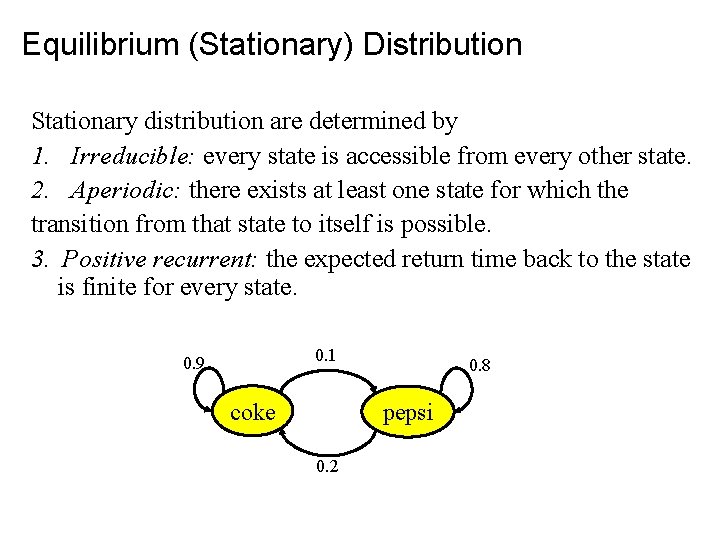

Equilibrium (Stationary) Distribution

A Markov chain has a unique stationary distribution, and converges to it from any starting distribution, if the chain is:

1. Irreducible: every state is accessible from every other state.
2. Aperiodic: returns to a state are not restricted to multiples of some period greater than 1. For an irreducible chain, it is enough that at least one state can transition to itself.
3. Positive recurrent: the expected return time back to the state is finite for every state.

The Coke/Pepsi chain satisfies all three conditions.

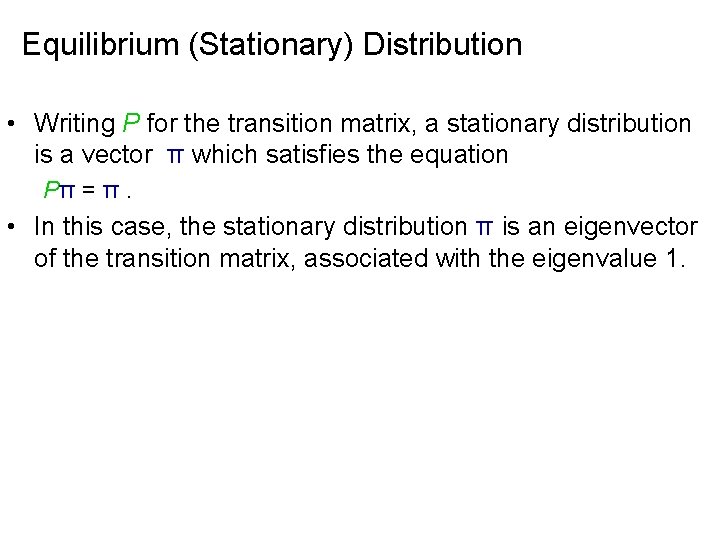

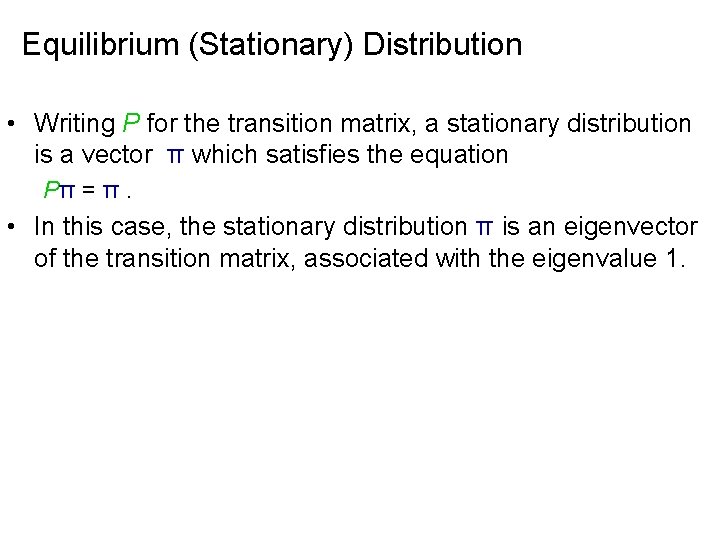

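Equilibrium (Stationary) Distribution

- Writing $P$ for the transition matrix (with rows summing to 1), a stationary distribution is a row vector $\pi$ with non-negative entries summing to 1 that satisfies the equation $\pi P = \pi$.
- In this case, the stationary distribution $\pi$ is a left eigenvector of the transition matrix, associated with the eigenvalue 1. (If $P$ is instead written so that its columns sum to 1, the same condition reads $P\pi = \pi$ with $\pi$ a column vector.)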
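
For a two-state chain the stationary equation can be solved by hand: balancing the flow between the two states gives $\pi_0\, p_{01} = \pi_1\, p_{10}$, so $\pi_0 = p_{10} / (p_{01} + p_{10})$. The sketch below applies this closed form to the Coke/Pepsi matrix (row-stochastic convention, exact fractions) and then checks the eigenvector equation directly. The function name is illustrative, and the formula assumes $p_{01} + p_{10} > 0$.

```python
from fractions import Fraction as F

# State 0 = Coke, state 1 = Pepsi; rows sum to 1.
P = [
    [F(9, 10), F(1, 10)],
    [F(1, 5), F(4, 5)],
]


def two_state_stationary(P):
    """Closed-form stationary distribution of a 2-state chain (needs p01 + p10 > 0)."""
    p01, p10 = P[0][1], P[1][0]
    return [p10 / (p01 + p10), p01 / (p01 + p10)]


pi = two_state_stationary(P)

# Left-multiply: (pi P)[j] = sum over i of pi[i] * P[i][j].
pi_P = [sum(pi[i] * P[i][j] for i in range(2)) for j in range(2)]

print(pi)          # [Fraction(2, 3), Fraction(1, 3)]
print(pi_P == pi)  # True
```

Using `Fraction` keeps the check exact, so the equality test is not affected by floating-point rounding.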

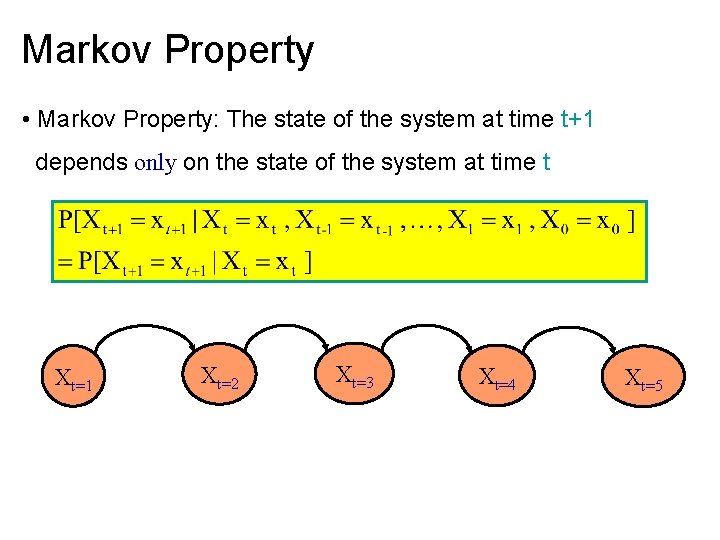

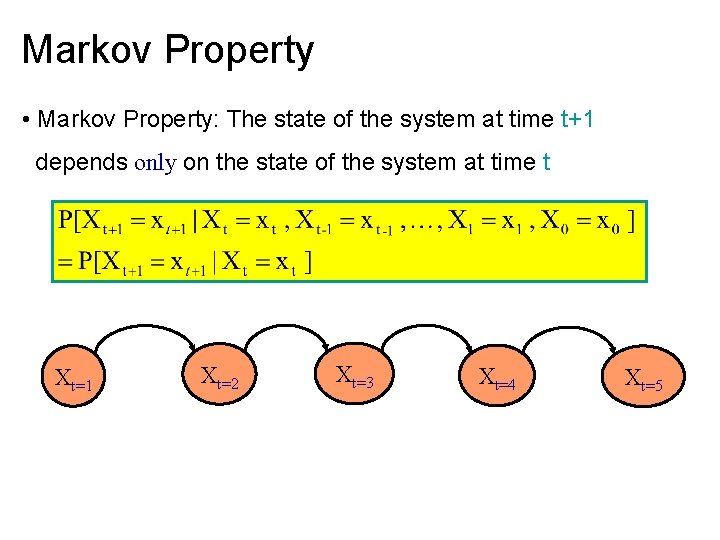

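Markov Property

- Markov Property: the state of the system at time $t+1$ depends only on the state of the system at time $t$: $P(X_{t+1} = x_{t+1} \mid X_t = x_t, \dots, X_1 = x_1) = P(X_{t+1} = x_{t+1} \mid X_t = x_t)$.

(Chain diagram: $X_{t=1} \to X_{t=2} \to X_{t=3} \to X_{t=4} \to X_{t=5}$)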

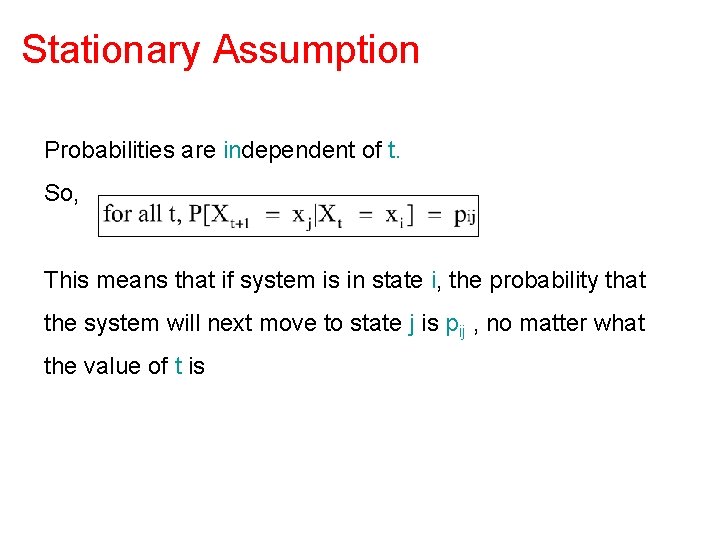

Stationary Assumption

Transition probabilities are independent of $t$, so $P(X_{t+1} = j \mid X_t = i) = p_{ij}$ for every $t$. This means that if the system is in state $i$, the probability that the system will next move to state $j$ is $p_{ij}$, no matter what the value of $t$ is.
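
In code, the Markov property and the stationary assumption together mean that a simulator needs only the current state and a single fixed matrix. The sketch below samples a path from the Coke/Pepsi chain used earlier in the slides; the seed, path length, and function name are arbitrary illustrative choices.

```python
import random

# State 0 = Coke, state 1 = Pepsi.
P = [
    [0.9, 0.1],
    [0.2, 0.8],
]


def simulate(P, start, n_steps, rng):
    """Sample a path; each step uses only the current state and the same P."""
    states = list(range(len(P)))
    path = [start]
    for _ in range(n_steps):
        current = path[-1]
        # The row P[current] gives the distribution of the next state.
        nxt = rng.choices(states, weights=P[current])[0]
        path.append(nxt)
    return path


rng = random.Random(0)  # fixed seed so the run is reproducible
path = simulate(P, start=0, n_steps=20_000, rng=rng)
print(path[:10])
print(path.count(0) / len(path))  # long-run fraction of Coke purchases
```

The loop never looks at the step index or at earlier states, which is exactly what the two assumptions permit.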

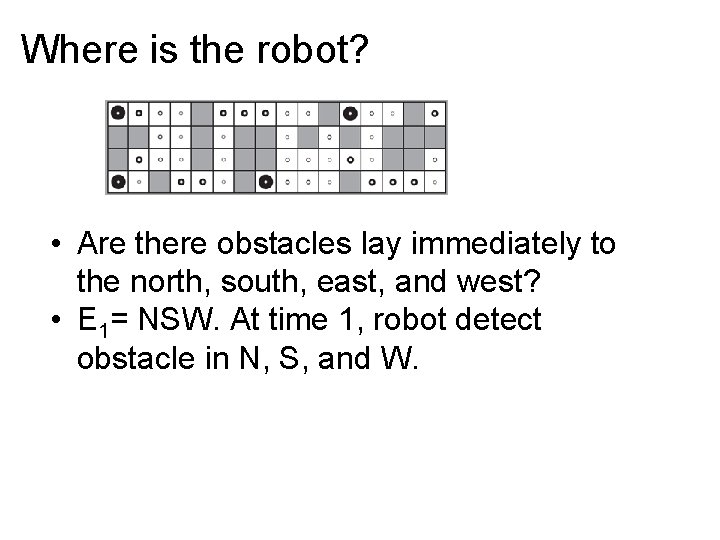

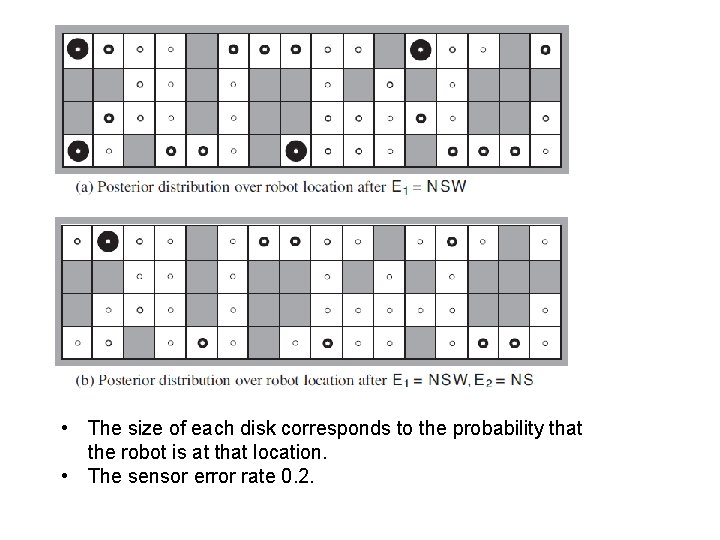

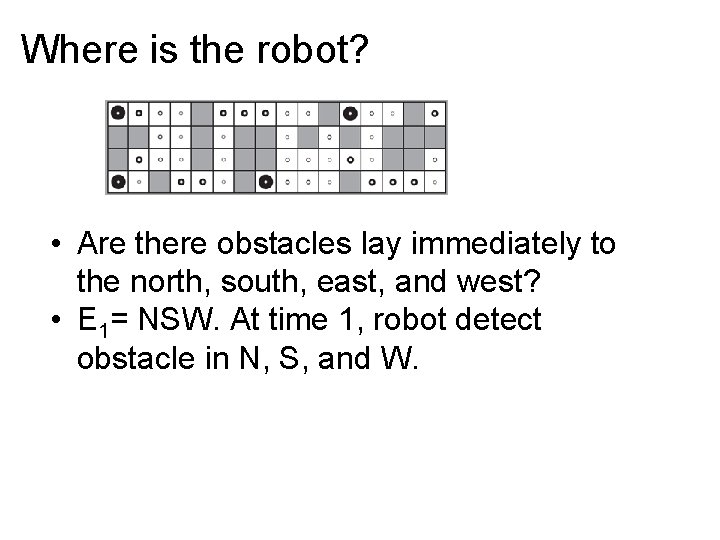

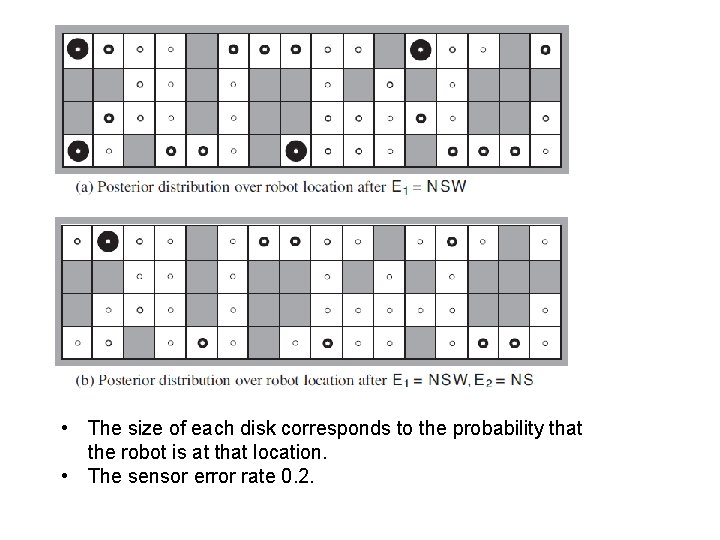

Where is the robot?

- Sensor reading: are there obstacles immediately to the north, south, east, and west?
- $E_1 = \text{NSW}$: at time 1, the robot detects obstacles to the north, south, and west.

- The size of each disk corresponds to the probability that the robot is at that location.
- The sensor error rate is 0.2.
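
The disk sizes are a belief over locations, updated from each sensor reading. The following is a rough sketch of one such update, not the slides' actual map or model. It assumes a small hypothetical grid, that each of the four direction bits is wrong independently with probability 0.2, and (for the optional move step) that the robot moves to a uniformly random free neighbour. All names are illustrative.

```python
GRID = [        # hypothetical map: '.' = free cell, '#' = obstacle
    "....",
    "#.#.",
    "....",
]
EPS = 0.2       # sensor error rate from the slide, assumed to apply per direction
DIRS = {"N": (-1, 0), "S": (1, 0), "W": (0, -1), "E": (0, 1)}


def blocked(r, c):
    """True if (r, c) lies outside the map or holds an obstacle."""
    inside = 0 <= r < len(GRID) and 0 <= c < len(GRID[0])
    return not inside or GRID[r][c] == "#"


FREE = [(r, c) for r in range(len(GRID)) for c in range(len(GRID[0]))
        if not blocked(r, c)]


def true_reading(cell):
    """Set of directions in which an error-free sensor would report an obstacle."""
    r, c = cell
    return {d for d, (dr, dc) in DIRS.items() if blocked(r + dr, c + dc)}


def likelihood(cell, evidence):
    """P(evidence | cell) when d of the 4 direction bits disagree with the map."""
    d = len(true_reading(cell) ^ set(evidence))
    return (1 - EPS) ** (4 - d) * EPS ** d


def update(belief, evidence):
    """Observation step: weight each cell by its likelihood, then normalise."""
    weighted = {cell: p * likelihood(cell, evidence) for cell, p in belief.items()}
    total = sum(weighted.values())
    return {cell: w / total for cell, w in weighted.items()}


def predict(belief):
    """Move step (assumed model): uniform random move to a free neighbour."""
    new = {cell: 0.0 for cell in FREE}
    for (r, c), p in belief.items():
        nbrs = [(r + dr, c + dc) for dr, dc in DIRS.values()
                if not blocked(r + dr, c + dc)] or [(r, c)]  # stay put if boxed in
        for n in nbrs:
            new[n] += p / len(nbrs)
    return new


belief = {cell: 1 / len(FREE) for cell in FREE}  # uniform prior over free cells
belief = update(belief, "NSW")                   # E_1 = NSW
for cell, p in sorted(belief.items(), key=lambda kv: -kv[1])[:3]:
    print(cell, round(p, 3))
```

On this toy map, two cells match the reading NSW exactly and share the largest probability, while cells whose surroundings disagree in more directions receive progressively less. Alternating `predict` and `update` over later readings gives the filtering behaviour the disks illustrate.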

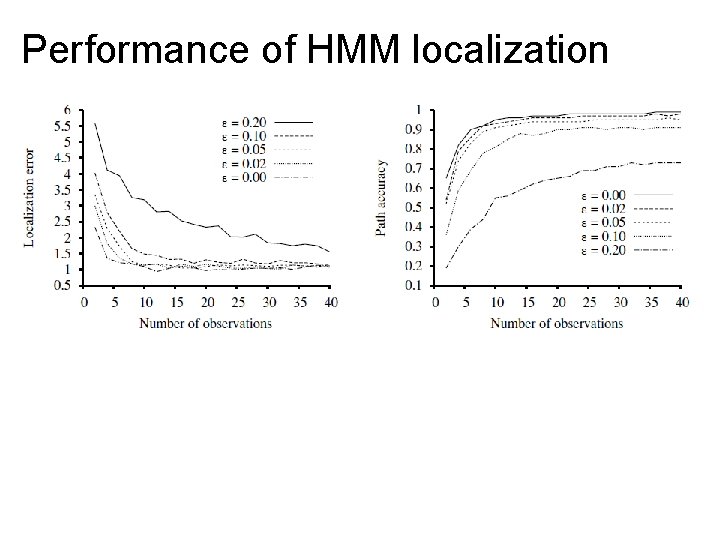

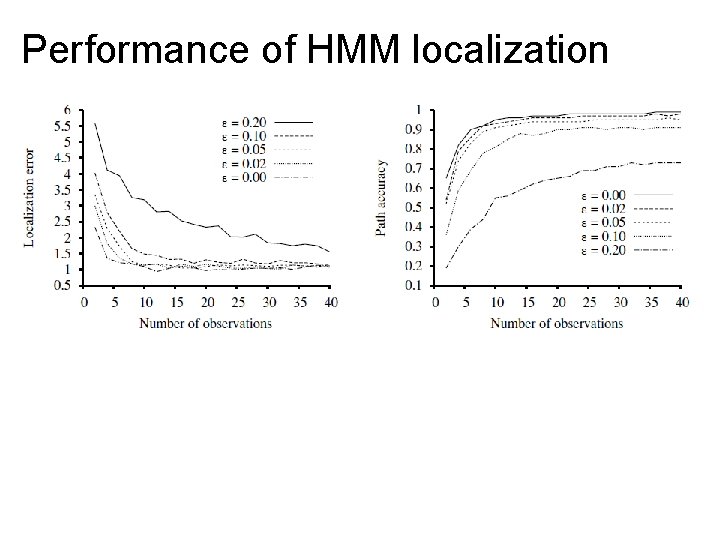

Performance of HMM localization

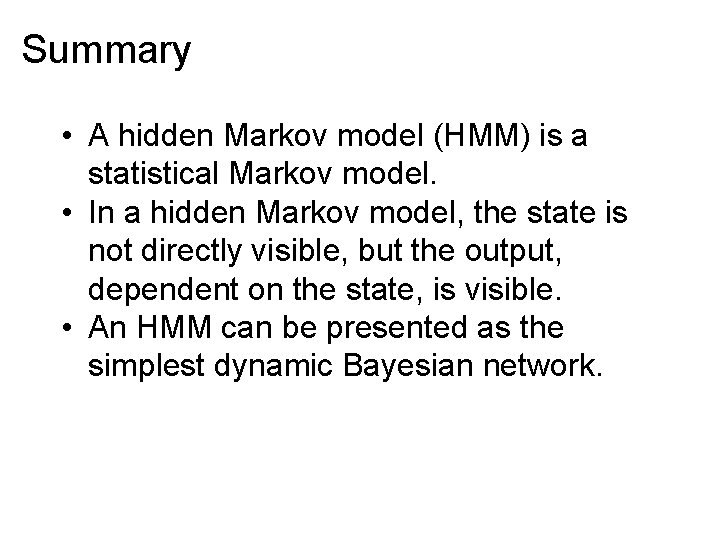

Summary

- A hidden Markov model (HMM) is a statistical Markov model in which the state of the system is hidden.
- In a hidden Markov model, the state is not directly visible, but the output, which depends on the state, is visible.
- An HMM can be represented as the simplest dynamic Bayesian network.