Deterministic Algorithms for Submodular Maximization Problems Moran Feldman

Moran Feldman, The Open University of Israel. Joint work with Niv Buchbinder.

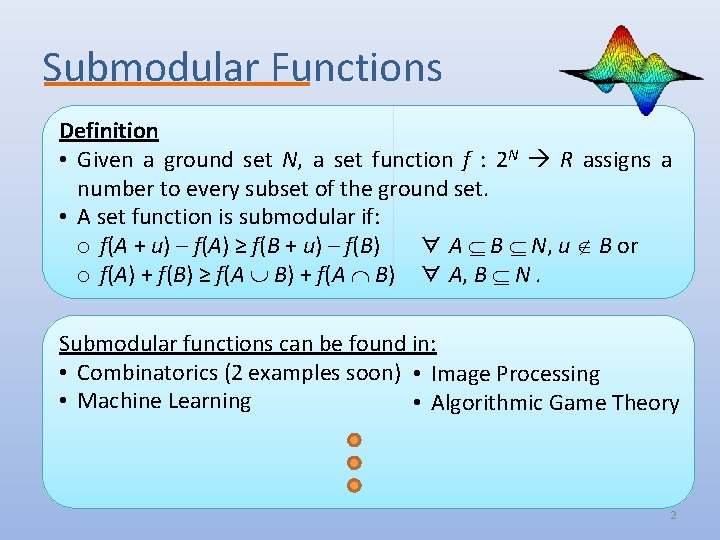

Submodular Functions
Definition
• Given a ground set $N$, a set function $f : 2^N \to \mathbb{R}$ assigns a number to every subset of the ground set.
• A set function is submodular if:
o $f(A + u) - f(A) \ge f(B + u) - f(B)$ for all $A \subseteq B \subseteq N$ and $u \notin B$, or
o $f(A) + f(B) \ge f(A \cup B) + f(A \cap B)$ for all $A, B \subseteq N$.
Submodular functions can be found in:
• Combinatorics (2 examples soon)
• Image Processing
• Machine Learning
• Algorithmic Game Theory
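The second form of the definition can be checked by brute force on a tiny ground set. The sketch below is only an illustration: the helper names and the two toy functions are assumptions, not part of the talk, and the check takes exponential time.

```python
from itertools import combinations

def subsets(ground):
    """Yield every subset of the ground set as a frozenset."""
    items = list(ground)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)

def is_submodular(f, ground):
    """Check f(A) + f(B) >= f(A | B) + f(A & B) for all pairs A, B."""
    all_sets = list(subsets(ground))
    return all(
        f(a) + f(b) >= f(a | b) + f(a & b)
        for a in all_sets
        for b in all_sets
    )

ground = {1, 2, 3}
capped_size = lambda s: min(len(s), 2)   # toy function: concave in |S|
squared_size = lambda s: len(s) ** 2     # toy function: convex in |S|

print(is_submodular(capped_size, ground))
print(is_submodular(squared_size, ground))
```

The capped size function passes the check, while the squared size fails already for $A = \{1\}$, $B = \{2\}$, since $1 + 1 < 4 + 0$.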

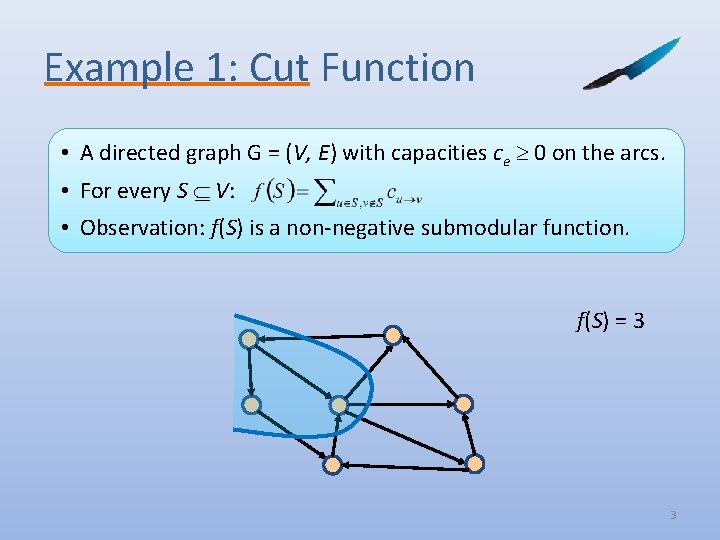

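Example 1: Cut Function
• A directed graph $G = (V, E)$ with capacities $c_e \ge 0$ on the arcs.
• For every $S \subseteq V$: $f(S) = \sum_{e \in \delta^+(S)} c_e$, where $\delta^+(S)$ is the set of arcs going from $S$ to $V \setminus S$.
• Observation: $f(S)$ is a non-negative submodular function.
• In the pictured example, $f(S) = 3$.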
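A minimal sketch of a directed cut function, assuming the graph is given as a dictionary from arcs to capacities. The graph below is a made-up example, not the one pictured on the slide.

```python
def cut_value(arcs, s):
    """Total capacity of the arcs leaving s.

    arcs maps (tail, head) pairs to non-negative capacities.
    """
    return sum(c for (u, v), c in arcs.items() if u in s and v not in s)

# Hypothetical 3-node graph used only for illustration.
arcs = {("a", "b"): 2, ("b", "c"): 1, ("c", "a"): 4, ("a", "c"): 1}

print(cut_value(arcs, {"a"}))
print(cut_value(arcs, {"a", "b"}))
```

Adding `b` to the empty set gains `cut_value(arcs, {"b"}) = 1`, while adding `b` to `{"a"}` changes the value from 3 to 2, a gain of -1. The marginal gain shrinks as the set grows, as submodularity requires, and it can be negative because cut functions are not monotone.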

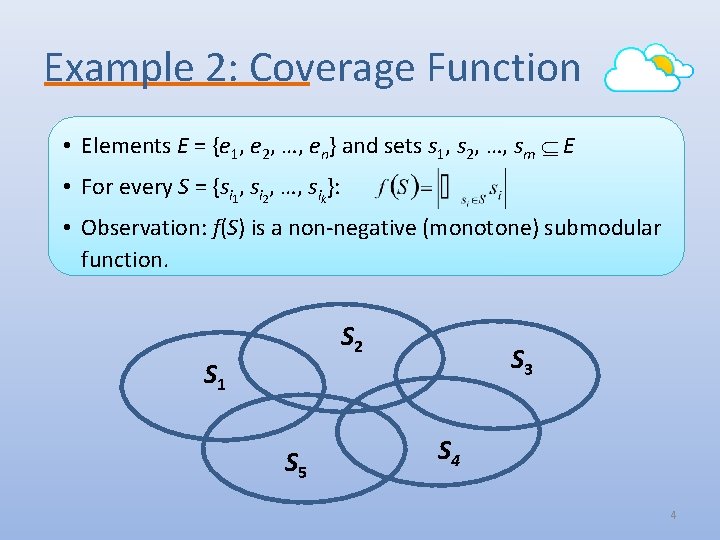

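Example 2: Coverage Function
• Elements $E = \{e_1, e_2, \dots, e_n\}$ and sets $s_1, s_2, \dots, s_m \subseteq E$.
• For every $S = \{s_{i_1}, s_{i_2}, \dots, s_{i_k}\}$: $f(S) = |s_{i_1} \cup s_{i_2} \cup \dots \cup s_{i_k}|$, the number of elements covered by the chosen sets.
• Observation: $f(S)$ is a non-negative (monotone) submodular function.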
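A small sketch of a coverage function, assuming the sets are stored in a dictionary keyed by name. The instance is hypothetical.

```python
def coverage(sets, chosen):
    """Number of elements covered by the union of the chosen sets."""
    covered = set()
    for name in chosen:
        covered |= sets[name]
    return len(covered)

# Hypothetical instance used only for illustration.
sets = {"s1": {1, 2}, "s2": {2, 3}, "s3": {3, 4, 5}}

gain_small = coverage(sets, {"s1", "s2"}) - coverage(sets, {"s1"})
gain_large = coverage(sets, {"s1", "s3", "s2"}) - coverage(sets, {"s1", "s3"})
print(gain_small, gain_large)
```

Here `s2` contributes one new element on top of `{s1}` but nothing on top of `{s1, s3}`, which is the diminishing-returns behaviour from the definition.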

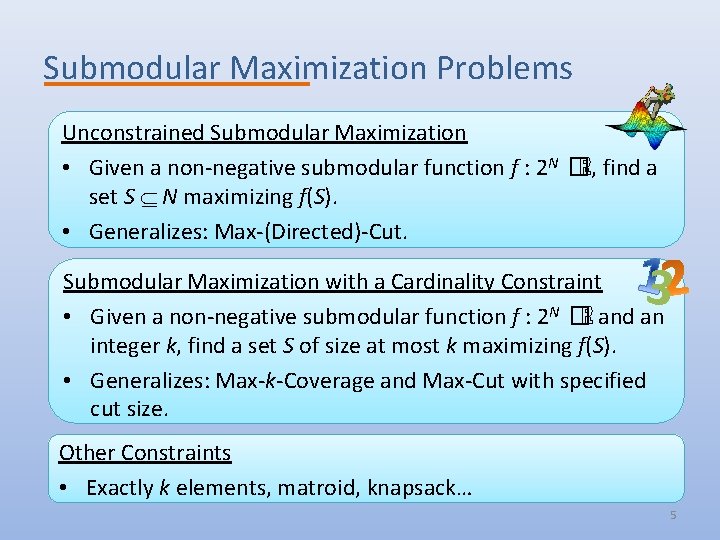

Submodular Maximization Problems
Unconstrained Submodular Maximization
• Given a non-negative submodular function $f : 2^N \to \mathbb{R}$, find a set $S \subseteq N$ maximizing $f(S)$.
• Generalizes: Max-(Directed)-Cut.
Submodular Maximization with a Cardinality Constraint
• Given a non-negative submodular function $f : 2^N \to \mathbb{R}$ and an integer $k$, find a set $S$ of size at most $k$ maximizing $f(S)$.
• Generalizes: Max-k-Coverage and Max-Cut with specified cut size.
Other Constraints
• Exactly $k$ elements, matroid, knapsack…

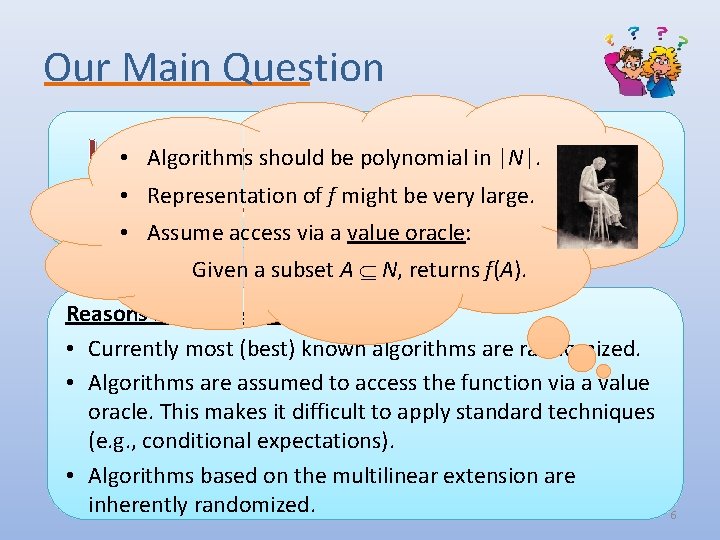

Our Main Question
Is randomization necessary for submodular maximization?
• Algorithms should be polynomial in $|N|$.
• The representation of $f$ might be very large.
• Assume access via a value oracle: given a subset $A \subseteq N$, it returns $f(A)$.
Reasons for “not necessary”
• Most approximation algorithms can be derandomized.
• “Not necessary” is the default …
Reasons for “necessary”
• Currently, most (best) known algorithms are randomized.
• Algorithms are assumed to access the function via a value oracle. This makes it difficult to apply standard techniques (e.g., conditional expectations).
• Algorithms based on the multilinear extension are inherently randomized.
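To make the value oracle model concrete, here is a minimal sketch in which the algorithm sees $f$ only through a callable that counts its queries. The class name, the brute-force search, and the toy function are assumptions for illustration. The point is that exhaustive search needs $2^{|N|}$ queries, which is why polynomially many queries is the benchmark.

```python
from itertools import combinations

class ValueOracle:
    """Wraps a set function and counts how many times it is queried."""

    def __init__(self, f):
        self._f = f
        self.queries = 0

    def __call__(self, subset):
        self.queries += 1
        return self._f(frozenset(subset))

def brute_force_max(oracle, ground):
    """Query every subset: exact, but uses 2^|N| oracle calls."""
    items = sorted(ground)
    candidates = (
        frozenset(c)
        for r in range(len(items) + 1)
        for c in combinations(items, r)
    )
    return max(candidates, key=oracle)

# Hypothetical toy function: non-negative, submodular, non-monotone.
oracle = ValueOracle(lambda s: len(s) * (3 - len(s)))
best = brute_force_max(oracle, {1, 2, 3})
print(sorted(best), oracle.queries)
```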

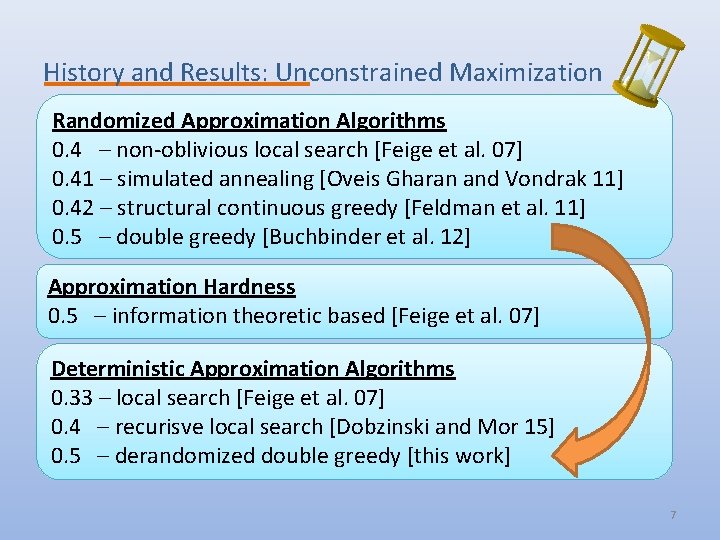

History and Results: Unconstrained Maximization
Randomized Approximation Algorithms
• 0.4 – non-oblivious local search [Feige et al. 07]
• 0.41 – simulated annealing [Oveis Gharan and Vondrak 11]
• 0.42 – structural continuous greedy [Feldman et al. 11]
• 0.5 – double greedy [Buchbinder et al. 12]
Approximation Hardness
• 0.5 – information theoretic based [Feige et al. 07]
Deterministic Approximation Algorithms
• 0.33 – local search [Feige et al. 07]
• 0.4 – recursive local search [Dobzinski and Mor 15]
• 0.5 – derandomized double greedy [this work]

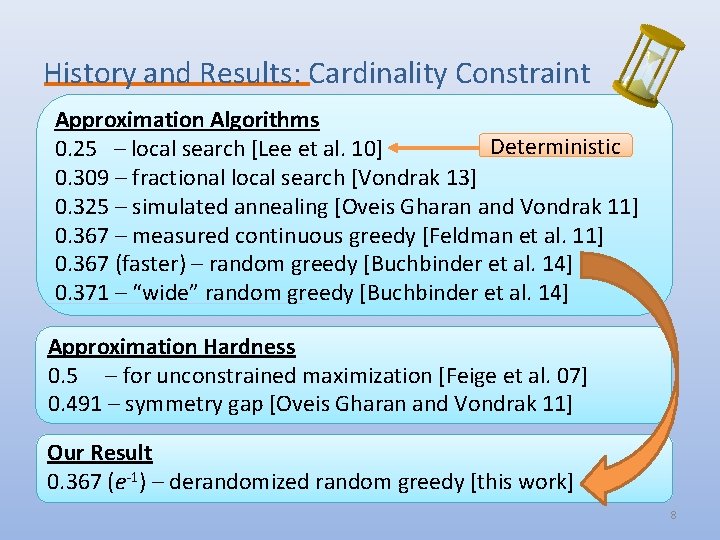

History and Results: Cardinality Constraint
Approximation Algorithms
• 0.25 – local search [Lee et al. 10] (deterministic)
• 0.309 – fractional local search [Vondrak 13]
• 0.325 – simulated annealing [Oveis Gharan and Vondrak 11]
• 0.367 – measured continuous greedy [Feldman et al. 11]
• 0.367 (faster) – random greedy [Buchbinder et al. 14]
• 0.371 – “wide” random greedy [Buchbinder et al. 14]
Approximation Hardness
• 0.5 – for unconstrained maximization [Feige et al. 07]
• 0.491 – symmetry gap [Oveis Gharan and Vondrak 11]
Our Result
• 0.367 ($e^{-1}$) – derandomized random greedy [this work]

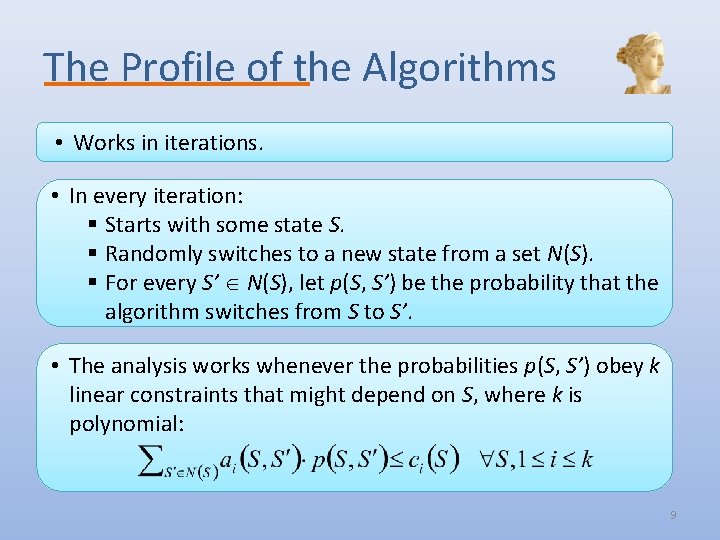

The Profile of the Algorithms
• Works in iterations.
• In every iteration:
§ Starts with some state $S$.
§ Randomly switches to a new state from a set $N(S)$.
§ For every $S' \in N(S)$, let $p(S, S')$ be the probability that the algorithm switches from $S$ to $S'$.
• The analysis works whenever the probabilities $p(S, S')$ obey $k$ linear constraints that might depend on $S$, where $k$ is polynomial:

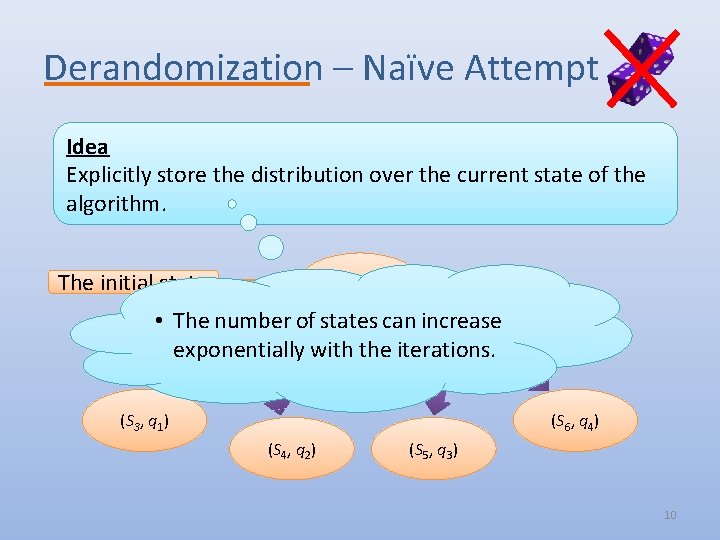

Derandomization – Naïve Attempt
Idea
• Explicitly store the distribution over the current state of the algorithm.
• The number of states can increase exponentially with the iterations.
Figure: (state, probability) pairs, starting from the initial state $(S_0, 1)$, then $(S_1, p)$ and $(S_2, 1 - p)$, then $(S_3, q_1)$, $(S_4, q_2)$, $(S_5, q_3)$ and $(S_6, q_4)$.
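The blow-up can be seen in a small sketch that stores the distribution explicitly as a dictionary from states to probabilities. The transition rule used here (add the current element with probability 1/2) is a hypothetical stand-in, not one of the algorithms from the talk.

```python
from collections import defaultdict
from fractions import Fraction

def step(dist, transitions):
    """Push {state: prob} through transitions(state) -> {next_state: prob}."""
    nxt = defaultdict(Fraction)
    for state, prob in dist.items():
        for new_state, p in transitions(state).items():
            if p > 0:  # only states reached with positive probability are stored
                nxt[new_state] += prob * p
    return dict(nxt)

def make_coin_flip(element):
    """Toy transition: add `element` to the state with probability 1/2."""
    half = Fraction(1, 2)
    return lambda state: {state | {element}: half, state: half}

dist = {frozenset(): Fraction(1)}  # the initial state (S_0, 1)
sizes = []
for element in range(5):
    dist = step(dist, make_coin_flip(element))
    sizes.append(len(dist))

print(sizes)
```

With full-support transitions the number of stored states doubles in every iteration, which is what the sparse transition probabilities are meant to avoid.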

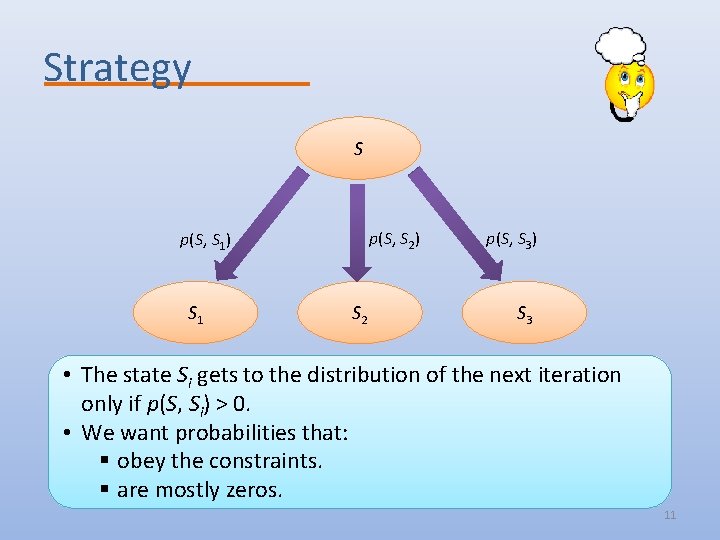

Strategy
Figure: the state $S$ moves to $S_1$, $S_2$ and $S_3$ with probabilities $p(S, S_1)$, $p(S, S_2)$ and $p(S, S_3)$.
• The state $S_i$ gets to the distribution of the next iteration only if $p(S, S_i) > 0$.
• We want probabilities that:
§ obey the constraints.
§ are mostly zeros.

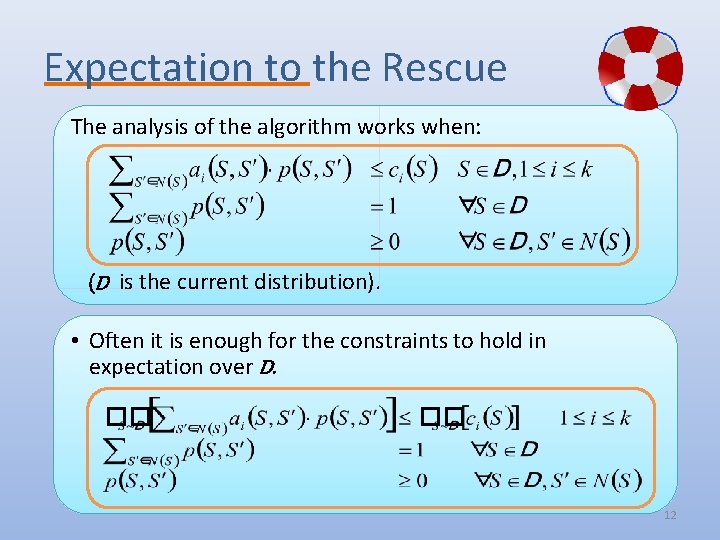

Expectation to the Rescue
• The analysis of the algorithm works when: ($D$ is the current distribution).
• Often it is enough for the constraints to hold in expectation over $D$.

Expectation to the Rescue (cont.)
Some Justifications
• We now require the analysis to work only for the expected output set.
• Can often follow from the linearity of the expectation.
• The new constraints are defined using multiple states (and their probabilities):
§ Not natural/accessible for the randomized algorithm.
• True for the two algorithms we derandomize.
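One generic way to write the per-state constraints and their relaxation in expectation is sketched below. The coefficient names $a_i(S, S')$ and $b_i(S)$ are assumed notation introduced only for this illustration. The exact constraints depend on the algorithm being derandomized and are not reproduced here.

```latex
% Per-state form: for every state S in the support of D and every i = 1, ..., k
\sum_{S' \in N(S)} a_i(S, S') \, p(S, S') \;\ge\; b_i(S)

% Relaxed form: for every i = 1, ..., k, in expectation over S drawn from D
\sum_{S \in \operatorname{supp}(D)} \Pr_D[S] \sum_{S' \in N(S)} a_i(S, S') \, p(S, S')
  \;\ge\; \sum_{S \in \operatorname{supp}(D)} \Pr_D[S] \, b_i(S)

% In both forms, p(S, .) remains a probability distribution for every S
\sum_{S' \in N(S)} p(S, S') = 1, \qquad p(S, S') \ge 0
```

The relaxed form has only $k$ constraints in total instead of $k$ per state, which is what later keeps the number of non-zero variables small.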

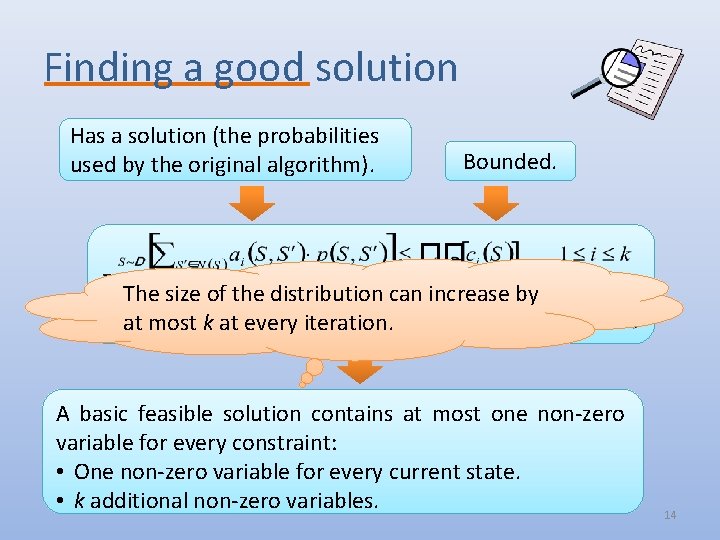

Finding a good solution
The LP:
• Has a solution (the probabilities used by the original algorithm).
• Is bounded.
A basic feasible solution contains at most one non-zero variable for every constraint:
• One non-zero variable for every current state.
• $k$ additional non-zero variables.
Hence, the size of the distribution can increase by at most $k$ at every iteration.
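The counting argument can be illustrated without an LP solver. The sketch below is not the procedure from the talk, which solves an LP; it is a swapped-in textbook support reduction. It takes any feasible non-negative solution $x$ of a system of equalities and shifts mass along null-space directions until the columns in the support are linearly independent. The result has at most as many non-zeros as there are constraints. Treating the $k$ expectation constraints as equalities fixed at their current values is a simplifying assumption, and the toy instance (2 states, 3 candidate next states each, $k = 1$) is hypothetical.

```python
from fractions import Fraction

def null_vector(cols):
    """Return non-zero c with sum_j c[j] * cols[j] = 0, or None if independent."""
    n, m = len(cols), len(cols[0])
    rows = [[Fraction(cols[j][i]) for j in range(n)] for i in range(m)]
    pivots = []  # pivots[r] is the pivot column of reduced row r
    for c in range(n):
        r = len(pivots)
        pr = next((i for i in range(r, m) if rows[i][c] != 0), None)
        if pr is None:  # column c depends on the pivot columns before it
            vec = [Fraction(0)] * n
            vec[c] = Fraction(1)
            for row_idx, pc in enumerate(pivots):
                vec[pc] = -rows[row_idx][c]
            return vec
        rows[r], rows[pr] = rows[pr], rows[r]
        piv = rows[r][c]
        rows[r] = [v / piv for v in rows[r]]
        for i in range(m):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
    return None

def sparsify(A, x):
    """Given x >= 0, return y >= 0 with A y = A x and independent support columns."""
    x = [Fraction(v) for v in x]
    while True:
        support = [j for j, v in enumerate(x) if v > 0]
        if not support:
            return x
        d = null_vector([[row[j] for row in A] for j in support])
        if d is None:
            return x
        if all(v <= 0 for v in d):
            d = [-v for v in d]  # make sure some coordinate decreases
        t = min(x[j] / dj for j, dj in zip(support, d) if dj > 0)
        for j, dj in zip(support, d):
            x[j] -= t * dj  # at least one coordinate hits exactly zero

# Variables: joint mass of (state, next state) pairs, 3 next states per state.
A = [
    [1, 1, 1, 0, 0, 0],  # total mass leaving state 1
    [0, 0, 0, 1, 1, 1],  # total mass leaving state 2
    [3, 1, 0, 2, 2, 5],  # one expectation constraint (k = 1), made-up coefficients
]
x = [Fraction(1, 6)] * 6  # a dense feasible solution with 6 non-zeros
y = sparsify(A, x)
print(sum(1 for v in y if v > 0))
```

In this instance the reduced solution keeps at most 3 non-zero variables: one per current state plus $k = 1$ extra, matching the bound on the slide.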

In Conclusion
Deterministic Algorithm
• Explicitly stores a distribution over states.
• In every iteration:
§ Uses the previous LP to calculate the probabilities to move from one state to another.
§ Calculates the distribution for the next iteration based on these probabilities.
Performance
• The analysis of the original (randomized) algorithm still works.
• The size of the distribution grows linearly in $k$, which gives a polynomial time algorithm.
• Sometimes the LP can be solved quickly, resulting in a quite fast algorithm.

Open Problems
• Derandomizing additional algorithms for submodular max. problems.
§ In particular, derandomizing algorithms involving the multilinear extension.
• Obtaining faster deterministic algorithms for the problems we considered.
• Using our technique to derandomize algorithms from other fields.
