Maximization Problems with Submodular Objective Functions Moran Feldman

Publication List
• Improved Approximations for k-Exchange Systems. Moran Feldman, Joseph (Seffi) Naor, Roy Schwartz and Justin Ward, ESA 2011.
• A Unified Continuous Greedy Algorithm for Submodular Maximization. Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz, FOCS 2011.
• A Tight Linear Time (1/2)-Approximation for Unconstrained Submodular Maximization. Niv Buchbinder, Moran Feldman, Joseph (Seffi) Naor and Roy Schwartz, FOCS 2012.

Outline
• Preliminaries – What is a submodular function?
• Unconstrained Submodular Maximization
• More Preliminaries
• Maximizing a Submodular Function over a Polytope Constraint

Set Functions
Definition
Given a ground set $N$, a set function $f : 2^N \to \mathbb{R}$ assigns a number to every subset of the ground set.
Intuition
• Consider a player participating in an auction on a set $N$ of elements.
• The utility of the player from buying a subset $N' \subseteq N$ of elements is given by a set function $f$.
Modular Functions
A set function is modular (linear) if there is a fixed utility $v_u$ for every element $u \in N$, and the utility of a subset $N' \subseteq N$ is given by:
$$f(N') = \sum_{u \in N'} v_u$$

Properties of Set Functions
Motivation
• Modularity is often too strong a property.
• Many weaker properties have been defined.
• A modular function with non-negative utilities $v_u$ has all these properties.
Normalization
• No utility is gained from the empty set.
• $f(\varnothing) = 0$.
Monotonicity
• More elements cannot give less utility.
• For every two sets $A \subseteq B \subseteq N$, $f(A) \le f(B)$.
Subadditivity
• Two sets of elements give at most as much utility together as separately.
• For every two sets $A, B \subseteq N$, $f(A) + f(B) \ge f(A \cup B)$.
• Intuition: I want to hang a single painting in the living room; a second painting is of no use to me.

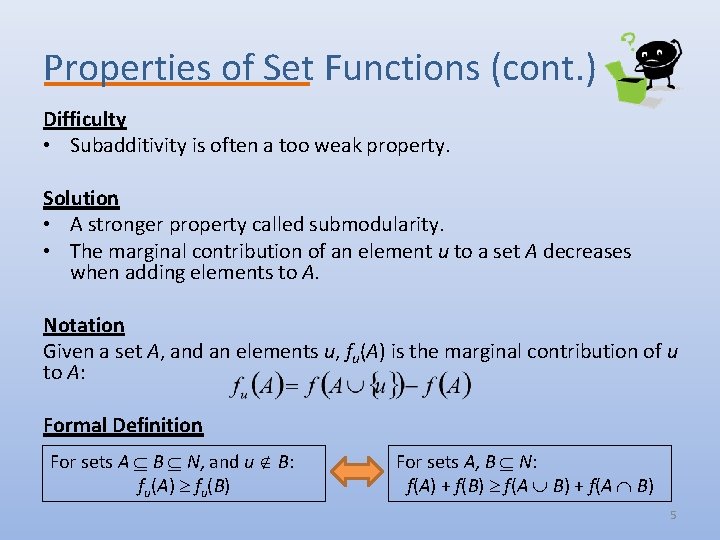

Properties of Set Functions (cont.)
Difficulty
• Subadditivity is often too weak a property.
Solution
• A stronger property called submodularity.
• The marginal contribution of an element $u$ to a set $A$ decreases when elements are added to $A$.
Notation
Given a set $A$ and an element $u$, $f_u(A)$ is the marginal contribution of $u$ to $A$:
$$f_u(A) = f(A \cup \{u\}) - f(A)$$
Formal Definition (two equivalent forms)
• For sets $A \subseteq B \subseteq N$ and $u \notin B$: $f_u(A) \ge f_u(B)$.
• For sets $A, B \subseteq N$: $f(A) + f(B) \ge f(A \cup B) + f(A \cap B)$.
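
The two formal definitions can be checked by brute force on a tiny ground set. The sketch below is only an illustration under stated assumptions: a set function is modeled as a Python callable on `frozenset`s, the helper names (`all_subsets`, `marginal`, `has_diminishing_returns`, `satisfies_lattice_inequality`) and the two toy functions are hypothetical, and the enumeration is exponential in $|N|$, so it is usable only for a handful of elements.

```python
from itertools import combinations


def all_subsets(ground):
    """Yield every subset of the ground set as a frozenset."""
    items = sorted(ground)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def marginal(f, u, A):
    """f_u(A) = f(A ∪ {u}) - f(A): the marginal contribution of u to A."""
    return f(A | {u}) - f(A)


def has_diminishing_returns(f, ground):
    """First definition: f_u(A) >= f_u(B) for all A ⊆ B ⊆ N and u ∉ B."""
    sets = list(all_subsets(ground))
    return all(
        marginal(f, u, A) >= marginal(f, u, B)
        for B in sets
        for A in sets
        if A <= B
        for u in ground
        if u not in B
    )


def satisfies_lattice_inequality(f, ground):
    """Second definition: f(A) + f(B) >= f(A ∪ B) + f(A ∩ B) for all A, B ⊆ N."""
    sets = list(all_subsets(ground))
    return all(f(A) + f(B) >= f(A | B) + f(A & B) for A in sets for B in sets)


def capped(S):
    """Concave function of |S|: submodular."""
    return min(len(S), 2)


def squared(S):
    """Convex function of |S|: not submodular."""
    return len(S) ** 2


N = {1, 2, 3}
print(has_diminishing_returns(capped, N), satisfies_lattice_inequality(capped, N))    # True True
print(has_diminishing_returns(squared, N), satisfies_lattice_inequality(squared, N))  # False False
```

Both checks agree on each toy function, as expected from the equivalence of the two definitions.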

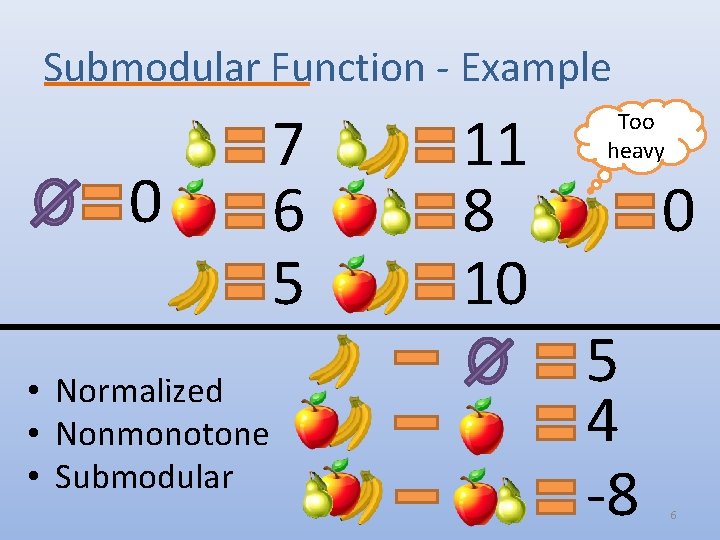

Submodular Function - Example
• Normalized
• Nonmonotone
• Submodular
[Figure: values of an example set function on subsets of a small ground set; one set is annotated "Too heavy".]

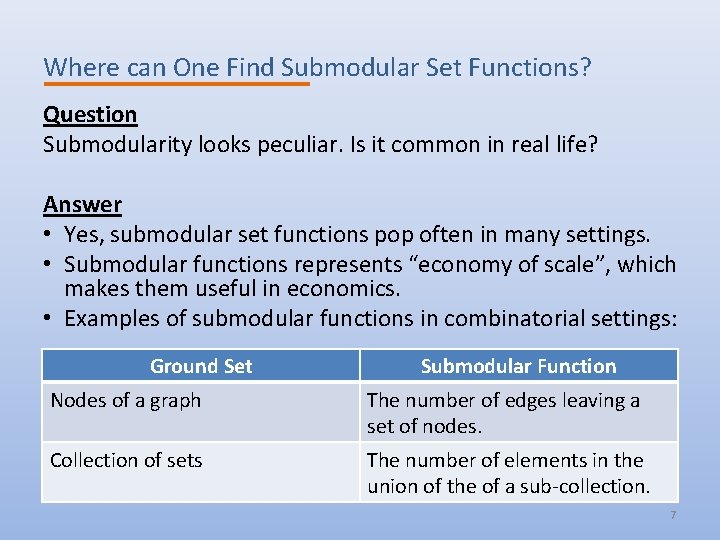

Where Can One Find Submodular Set Functions?
Question
Submodularity looks peculiar. Is it common in real life?
Answer
• Yes, submodular set functions pop up often in many settings.
• Submodular functions represent “economy of scale”, which makes them useful in economics.
• Examples of submodular functions in combinatorial settings:

| Ground Set | Submodular Function |
|---|---|
| Nodes of a graph | The number of edges leaving a set of nodes. |
| Collection of sets | The number of elements in the union of a sub-collection. |
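
A minimal sketch of the two table entries, assuming a directed graph given as an edge list and a collection given as a dict of Python sets (all names and data below are hypothetical). It brute-forces the diminishing-returns definition on the toy instances and also shows that the cut function is not monotone.

```python
from itertools import combinations


def cut_value(edges, S):
    """Number of directed edges (a, b) leaving S, i.e. a ∈ S and b ∉ S."""
    return sum(1 for a, b in edges if a in S and b not in S)


def coverage_value(collection, S):
    """Number of elements in the union of the sets named in S."""
    covered = set()
    for name in S:
        covered |= collection[name]
    return len(covered)


def has_diminishing_returns(f, ground):
    """Brute-force check of f(A ∪ {u}) - f(A) >= f(B ∪ {u}) - f(B) for A ⊆ B, u ∉ B."""
    items = sorted(ground)
    sets = [frozenset(c) for r in range(len(items) + 1) for c in combinations(items, r)]
    return all(
        f(A | {u}) - f(A) >= f(B | {u}) - f(B)
        for B in sets
        for A in sets
        if A <= B
        for u in items
        if u not in B
    )


# Hypothetical toy instances.
edges = [(1, 2), (2, 3), (3, 1), (1, 3)]
collection = {"a": {1, 2}, "b": {2, 3}, "c": {3, 4, 5}}

print(has_diminishing_returns(lambda S: cut_value(edges, S), {1, 2, 3}))               # True
print(has_diminishing_returns(lambda S: coverage_value(collection, S), set(collection)))  # True
print(cut_value(edges, {1}), cut_value(edges, {1, 2, 3}))  # 2 0: adding nodes can lower the cut
```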

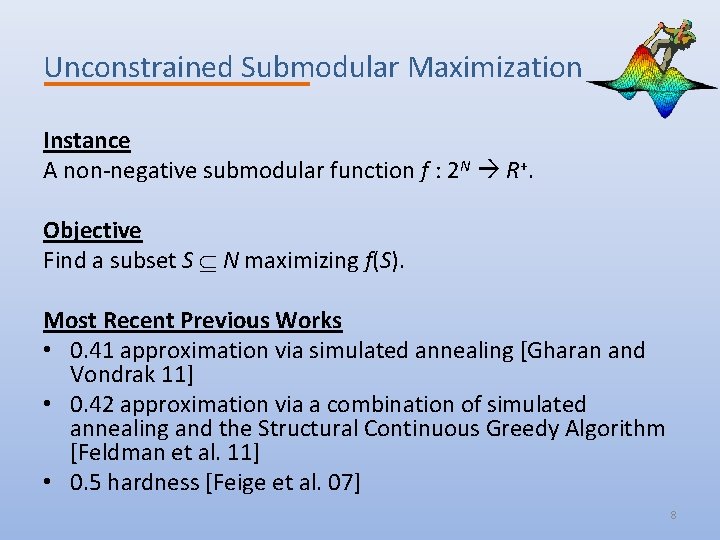

Unconstrained Submodular Maximization
Instance
A non-negative submodular function $f : 2^N \to \mathbb{R}^+$.
Objective
Find a subset $S \subseteq N$ maximizing $f(S)$.
Most Recent Previous Works
• 0.41 approximation via simulated annealing [Gharan and Vondrak 11]
• 0.42 approximation via a combination of simulated annealing and the Structural Continuous Greedy Algorithm [Feldman et al. 11]
• 0.5 hardness [Feige et al. 07]

The Players
• The algorithm we present manages two solutions (sets):
– X – Initially the empty set.
– Y – Initially the set of all elements ($N$).
• The elements are ordered in an arbitrary order: $u_1, u_2, \ldots, u_n$.
• The algorithm has $n$ iterations, one for each element. In iteration $i$ the algorithm decides whether to add $u_i$ to $X$ or to remove it from $Y$.
• The following notation is defined using these players ($X$ and $Y$ denote the sets at the start of iteration $i$):
– The contribution of adding $u_i$ to $X$: $a_i = f(X \cup \{u_i\}) - f(X)$.
– The contribution of removing $u_i$ from $Y$: $b_i = f(Y \setminus \{u_i\}) - f(Y)$.

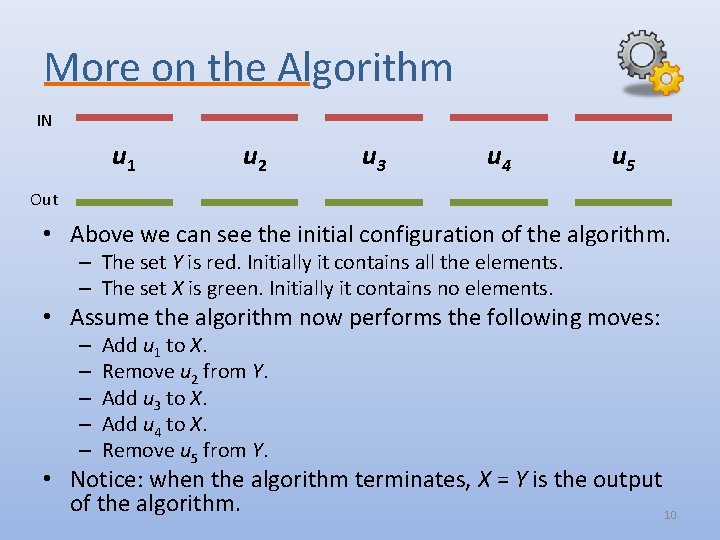

More on the Algorithm
[Figure: the elements $u_1, \ldots, u_5$ placed between an "In" side and an "Out" side.]
• The figure shows the initial configuration of the algorithm.
– The set Y is red. Initially it contains all the elements.
– The set X is green. Initially it contains no elements.
• Assume the algorithm now performs the following moves:
– Add $u_1$ to $X$.
– Remove $u_2$ from $Y$.
– Add $u_3$ to $X$.
– Add $u_4$ to $X$.
– Remove $u_5$ from $Y$.
• Notice: when the algorithm terminates, $X = Y$ is the output of the algorithm (here $X = Y = \{u_1, u_3, u_4\}$).

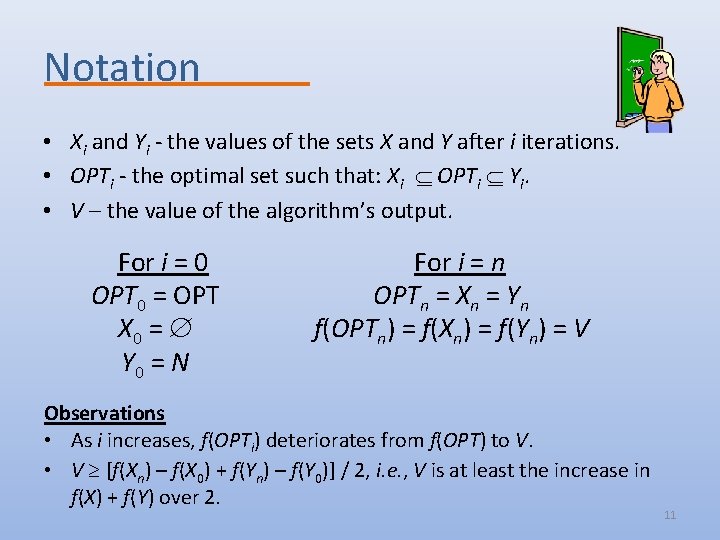

Notation
• $X_i$ and $Y_i$ - the values of the sets $X$ and $Y$ after $i$ iterations.
• $OPT_i$ - the optimal set such that $X_i \subseteq OPT_i \subseteq Y_i$.
• $V$ – the value of the algorithm’s output.
For $i = 0$: $OPT_0 = OPT$, $X_0 = \varnothing$, $Y_0 = N$.
For $i = n$: $OPT_n = X_n = Y_n$, so $f(OPT_n) = f(X_n) = f(Y_n) = V$.
Observations
• As $i$ increases, $f(OPT_i)$ deteriorates from $f(OPT)$ to $V$.
• $V \ge [f(X_n) - f(X_0) + f(Y_n) - f(Y_0)] / 2$, i.e., $V$ is at least half the increase in $f(X) + f(Y)$ (since $f$ is non-negative).

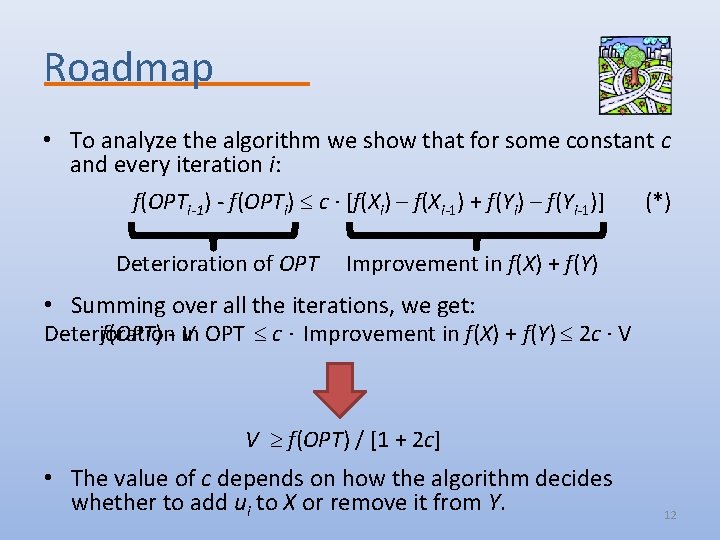

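Roadmap
• To analyze the algorithm we show that for some constant $c$ and every iteration $i$:
$$\underbrace{f(OPT_{i-1}) - f(OPT_i)}_{\text{deterioration of } OPT} \le c \cdot \underbrace{[f(X_i) - f(X_{i-1}) + f(Y_i) - f(Y_{i-1})]}_{\text{improvement in } f(X) + f(Y)} \qquad (*)$$
• Summing over all the iterations, we get:
$$\underbrace{f(OPT) - V}_{\text{deterioration in } OPT} \le c \cdot \underbrace{[f(X_n) - f(X_0) + f(Y_n) - f(Y_0)]}_{\text{improvement in } f(X) + f(Y)} \le 2c \cdot V \quad \Longrightarrow \quad V \ge \frac{f(OPT)}{1 + 2c}$$
• The value of $c$ depends on how the algorithm decides whether to add $u_i$ to $X$ or remove it from $Y$.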

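Deterministic Rule
If $a_i \ge b_i$
• then add $u_i$ to $X$,
• else remove $u_i$ from $Y$.
(A non-standard greedy algorithm.)
Lemma
Always, $a_i + b_i \ge 0$ (by submodularity, since $X_{i-1} \subseteq Y_{i-1} \setminus \{u_i\}$).
Lemma
If the algorithm adds $u_i$ to $X$ (respectively, removes $u_i$ from $Y$), and $OPT_{i-1} \ne OPT_i$, then the deterioration in $OPT$ is at most $b_i$ (respectively, $a_i$).
Proof
Since $OPT_{i-1} \ne OPT_i$, we know that $u_i \notin OPT_{i-1}$, so $OPT_{i-1} \subseteq Y_{i-1} \setminus \{u_i\}$. By submodularity:
$$f(OPT_{i-1}) - f(OPT_i) \le f(OPT_{i-1}) - f(OPT_{i-1} \cup \{u_i\}) \le f(Y_{i-1} \setminus \{u_i\}) - f(Y_{i-1}) = b_i$$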
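
A minimal Python sketch of the deterministic rule, assuming $f$ is given as a callable on `frozenset`s and the list `elements` fixes the order $u_1, \ldots, u_n$. The function name and the toy path instance are hypothetical.

```python
def deterministic_double_greedy(f, elements):
    """Deterministic rule: add u_i to X if a_i >= b_i, otherwise remove u_i from Y.

    f maps a frozenset to a number; `elements` fixes the (arbitrary) order u_1, ..., u_n.
    """
    X = frozenset()            # initially the empty set
    Y = frozenset(elements)    # initially the whole ground set N
    for u in elements:
        a = f(X | {u}) - f(X)  # a_i: contribution of adding u_i to X
        b = f(Y - {u}) - f(Y)  # b_i: contribution of removing u_i from Y
        if a >= b:
            X = X | {u}
        else:
            Y = Y - {u}
    # After the n iterations X and Y coincide; either one is the output.
    assert X == Y
    return X


# Hypothetical instance: directed cut of the path 1 -> 2 -> 3 -> 4 (non-negative, submodular).
path = [(1, 2), (2, 3), (3, 4)]


def cut(S):
    return sum(1 for a, b in path if a in S and b not in S)


S = deterministic_double_greedy(cut, [1, 2, 3, 4])
print(sorted(S), cut(S))  # [1, 3] 2
```

On this instance the run adds $u_1$, removes $u_2$, adds $u_3$ and removes $u_4$.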

Deterministic Rule - Analysis
• Assume $a_i \ge b_i$, and the algorithm adds $u_i$ to $X$ (the other case is analogous).
• Clearly $a_i \ge 0$ since $a_i + b_i \ge 0$.
• If $u_i \in OPT_{i-1}$, then:
– $f(OPT_{i-1}) - f(OPT_i) = 0$
– $f(X_i) - f(X_{i-1}) + f(Y_i) - f(Y_{i-1}) = a_i \ge 0$
– Inequality (*) holds for every non-negative $c$.
• If $u_i \notin OPT_{i-1}$, then:
– $f(OPT_{i-1}) - f(OPT_i) \le b_i$
– $f(X_i) - f(X_{i-1}) + f(Y_i) - f(Y_{i-1}) = a_i \ge b_i$
– Inequality (*) holds for $c = 1$.
• The approximation ratio is: $1 / [1 + 2c] = 1/3$.

Random Rule
1. If $b_i \le 0$, then add $u_i$ to $X$ and end the iteration.
2. If $a_i \le 0$, then remove $u_i$ from $Y$ and end the iteration.
3. With probability $a_i / (a_i + b_i)$ add $u_i$ to $X$; otherwise (with probability $b_i / (a_i + b_i)$), remove $u_i$ from $Y$.
Three cases: one for each line of the algorithm.
Case 1 ($b_i \le 0$)
• Clearly $a_i \ge 0$ since $a_i + b_i \ge 0$.
• Thus, $f(X_i) - f(X_{i-1}) + f(Y_i) - f(Y_{i-1}) = a_i \ge 0$.
• If $u_i \in OPT_{i-1}$, then $f(OPT_{i-1}) - f(OPT_i) = 0$.
• If $u_i \notin OPT_{i-1}$, then $f(OPT_{i-1}) - f(OPT_i) \le b_i \le 0$.
• Inequality (*) holds for every non-negative $c$.

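Random Rule - Analysis
Case 2 ($a_i \le 0$)
Analogous to the previous case.
Case 3 ($a_i, b_i > 0$)
The expected improvement is:
$$\mathbb{E}[f(X_i) - f(X_{i-1}) + f(Y_i) - f(Y_{i-1})] = \frac{a_i}{a_i + b_i} \cdot a_i + \frac{b_i}{a_i + b_i} \cdot b_i = \frac{a_i^2 + b_i^2}{a_i + b_i}$$
Assume, w.l.o.g., $u_i \in OPT_{i-1}$. Then $OPT$ deteriorates only when $u_i$ is removed from $Y$, and the expected deterioration in $OPT$ is:
$$\mathbb{E}[f(OPT_{i-1}) - f(OPT_i)] \le \frac{b_i}{a_i + b_i} \cdot a_i = \frac{a_i b_i}{a_i + b_i}$$
Inequality (*) holds in expectation for $c = 0.5$, since:
$$\frac{a_i b_i}{a_i + b_i} \le \frac{1}{2} \cdot \frac{a_i^2 + b_i^2}{a_i + b_i} \iff 0 \le (a_i - b_i)^2$$
The approximation ratio is: $1 / [1 + 2c] = 1/2$.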
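
The random rule can be sketched the same way. This is an illustrative Python version, assuming $f$ is a callable on `frozenset`s; the function name, the optional `rng` parameter (added so runs can be made reproducible) and the toy instance are hypothetical. On the single-edge cut below, $a_1 = b_1 = 1$, so line 3 flips a fair coin, and either outcome ends with value 1.

```python
import random


def randomized_double_greedy(f, elements, rng=None):
    """Random rule, applied to the elements in the given order u_1, ..., u_n."""
    if rng is None:
        rng = random.Random()
    X = frozenset()
    Y = frozenset(elements)
    for u in elements:
        a = f(X | {u}) - f(X)   # a_i
        b = f(Y - {u}) - f(Y)   # b_i
        if b <= 0:              # line 1
            X = X | {u}
        elif a <= 0:            # line 2
            Y = Y - {u}
        elif rng.random() < a / (a + b):  # line 3: add with probability a_i / (a_i + b_i)
            X = X | {u}
        else:                   # ... otherwise remove, with probability b_i / (a_i + b_i)
            Y = Y - {u}
    return X


# Hypothetical instance: undirected cut of the single edge {1, 2}.
def edge_cut(S):
    return 1 if len(S & {1, 2}) == 1 else 0


rng = random.Random(0)
outcomes = [randomized_double_greedy(edge_cut, [1, 2], rng) for _ in range(1000)]
print(sum(S == frozenset({1}) for S in outcomes) / len(outcomes))  # roughly 0.5
print(all(edge_cut(S) == 1 for S in outcomes))                      # True
```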

17

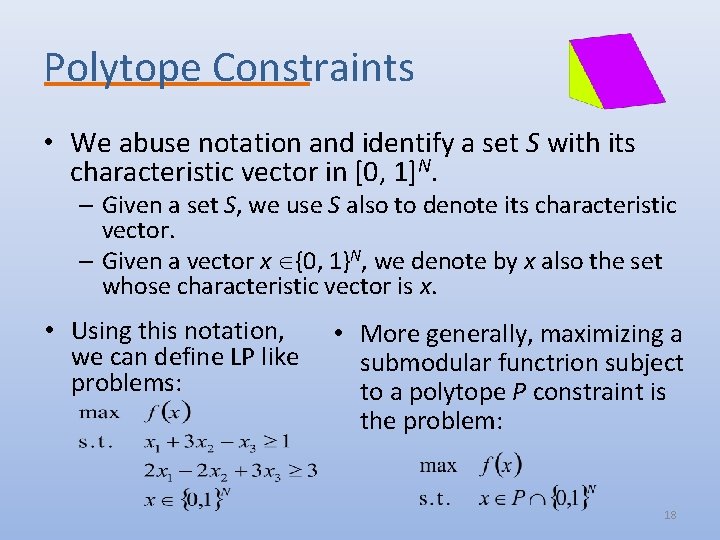

Polytope Constraints
• We abuse notation and identify a set $S$ with its characteristic vector in $[0, 1]^N$.
– Given a set $S$, we use $S$ also to denote its characteristic vector.
– Given a vector $x \in \{0, 1\}^N$, we denote by $x$ also the set whose characteristic vector is $x$.
• Using this notation, we can define LP-like problems, for example:
$$\max f(x) \quad \text{s.t.} \quad Ax \le b, \; x \in \{0, 1\}^N$$
• More generally, maximizing a submodular function subject to a polytope constraint $P$ is the problem:
$$\max f(x) \quad \text{s.t.} \quad x \in P \cap \{0, 1\}^N$$

Relaxation
• The last program:
– requires integer solutions.
– generalizes “integer programming”.
– is unlikely to have a reasonable approximation.
• We need to relax it.
– We replace the constraint $x \in \{0, 1\}^N$ with $x \in [0, 1]^N$.
– The difficulty is to extend the objective function to fractional vectors.
• The multilinear extension $F$ (a.k.a. extension by expectation) [Calinescu et al. 07].
– Given a vector $x \in [0, 1]^N$, let $R(x)$ denote a random set containing every element $u \in N$ with probability $x_u$ independently.
– $F(x) = \mathbb{E}[f(R(x))] = \sum_{S \subseteq N} f(S) \prod_{u \in S} x_u \prod_{u \notin S} (1 - x_u)$.
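
The definition of $F$ suggests two ways to evaluate it: exactly, by summing over all $2^{|N|}$ subsets, or approximately, by sampling $R(x)$. A sketch of both, assuming $x$ is a dict from elements to probabilities; the function names and the toy $f$ are hypothetical, and the exact version is only practical for tiny ground sets.

```python
import random
from itertools import combinations


def multilinear_exact(f, x):
    """F(x) = sum over S ⊆ N of f(S) * prod_{u in S} x_u * prod_{u not in S} (1 - x_u)."""
    elements = list(x)
    total = 0.0
    for r in range(len(elements) + 1):
        for combo in combinations(elements, r):
            S = frozenset(combo)
            prob = 1.0
            for u in elements:
                prob *= x[u] if u in S else 1.0 - x[u]
            total += prob * f(S)
    return total


def multilinear_sampled(f, x, samples=10_000, rng=None):
    """Monte Carlo estimate of F(x) = E[f(R(x))], with u ∈ R(x) independently w.p. x_u."""
    if rng is None:
        rng = random.Random()
    acc = 0.0
    for _ in range(samples):
        R = frozenset(u for u, p in x.items() if rng.random() < p)
        acc += f(R)
    return acc / samples


# Hypothetical example: f(S) = min(|S|, 1), i.e. "did we pick anything at all?"
def f(S):
    return min(len(S), 1)


x = {1: 0.5, 2: 0.5}
print(multilinear_exact(f, x))                           # 0.75 = 1 - 0.5 * 0.5
print(multilinear_sampled(f, x, rng=random.Random(1)))   # close to 0.75
print(multilinear_exact(f, {1: 1.0, 2: 0.0}))            # 1.0: F agrees with f on integral points
```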

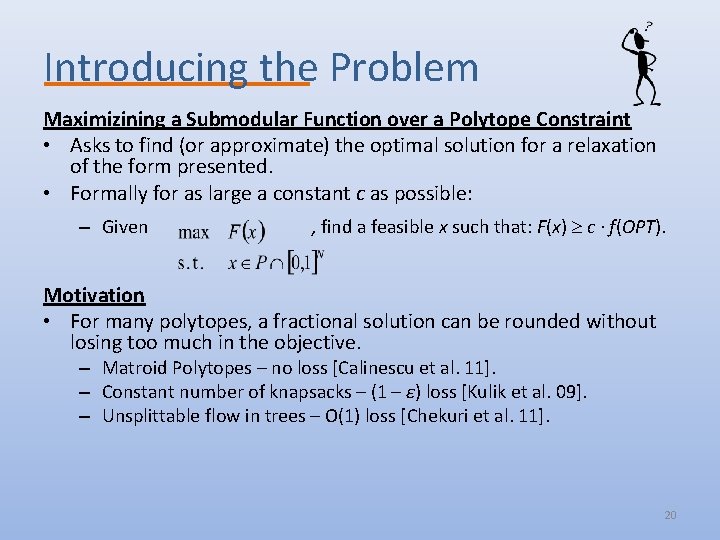

Introducing the Problem
Maximizing a Submodular Function over a Polytope Constraint
• Asks to find (or approximate) the optimal solution for a relaxation of the form presented.
• Formally, for as large a constant $c$ as possible:
– Given $f$ and $P$, find a feasible $x \in P \cap [0, 1]^N$ such that $F(x) \ge c \cdot f(OPT)$.
Motivation
• For many polytopes, a fractional solution can be rounded without losing too much in the objective.
– Matroid polytopes – no loss [Calinescu et al. 11].
– Constant number of knapsacks – $(1 - \varepsilon)$ loss [Kulik et al. 09].
– Unsplittable flow in trees – $O(1)$ loss [Chekuri et al. 11].

The Continuous Greedy Algorithm
The Algorithm [Vondrak 08]
• Let $\delta > 0$ be a small number.
1. Initialize: $y(0) \leftarrow \mathbf{0}$ and $t \leftarrow 0$.
2. While $t < 1$ do:
3. For every $u \in N$, let $w_u = F(y(t) \vee \mathbf{1}_u) - F(y(t))$, where $y(t) \vee \mathbf{1}_u$ is $y(t)$ with coordinate $u$ raised to 1.
4. Find a solution $x$ in $P \cap [0, 1]^N$ maximizing $w \cdot x$.
5. $y(t + \delta) \leftarrow y(t) + \delta \cdot x$.
6. Set $t \leftarrow t + \delta$.
7. Return $y(t)$.
Remark
• Calculation of $w_u$ can be tricky for some functions.
• Assuming one can evaluate $f$, the value of $w_u$ can be approximated via sampling.
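
A toy Python sketch of the continuous greedy, under several assumptions not in the slides: $F$ is evaluated exactly by enumeration instead of by sampling, the polytope is taken to be $P = \{x \in [0,1]^N : \sum_u x_u \le k\}$ so that step 4 reduces to picking the $k$ largest positive weights, and time advances in a fixed number of steps of size $\delta$. The helper names and the coverage instance are hypothetical.

```python
from itertools import combinations


def multilinear(f, y):
    """Exact multilinear extension F(y) by enumerating all subsets (tiny ground sets only)."""
    elements = list(y)
    total = 0.0
    for r in range(len(elements) + 1):
        for combo in combinations(elements, r):
            prob = 1.0
            for u in elements:
                prob *= y[u] if u in combo else 1.0 - y[u]
            total += prob * f(frozenset(combo))
    return total


def best_direction(w, k):
    """Linear-optimization oracle for the assumed polytope P = {x in [0,1]^N : sum(x) <= k}."""
    chosen = sorted((u for u in w if w[u] > 0), key=lambda u: w[u], reverse=True)[:k]
    return {u: 1.0 if u in chosen else 0.0 for u in w}


def continuous_greedy(f, elements, k, delta=0.01):
    y = {u: 0.0 for u in elements}               # y(0) = 0
    for _ in range(round(1 / delta)):            # t = 0, delta, 2 * delta, ... < 1
        base = multilinear(f, y)
        # w_u = F(y(t) ∨ 1_u) - F(y(t)): raise coordinate u to 1.
        w = {u: multilinear(f, {**y, u: 1.0}) - base for u in elements}
        x = best_direction(w, k)
        y = {u: y[u] + delta * x[u] for u in elements}  # y(t + delta) = y(t) + delta * x
    return y


# Hypothetical monotone instance: coverage with budget k = 1 (optimum value 3).
collection = {"a": {1, 2, 3}, "b": {1}, "c": {4}}


def coverage(S):
    return len(set().union(*(collection[s] for s in S)))


y = continuous_greedy(coverage, list(collection), k=1)
print({u: round(v, 2) for u, v in y.items()}, round(multilinear(coverage, y), 3))
```

The output $y$ is an average of the chosen vertices, so it lies in $P$; its value should be at least roughly $(1 - 1/e) \cdot 3$.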

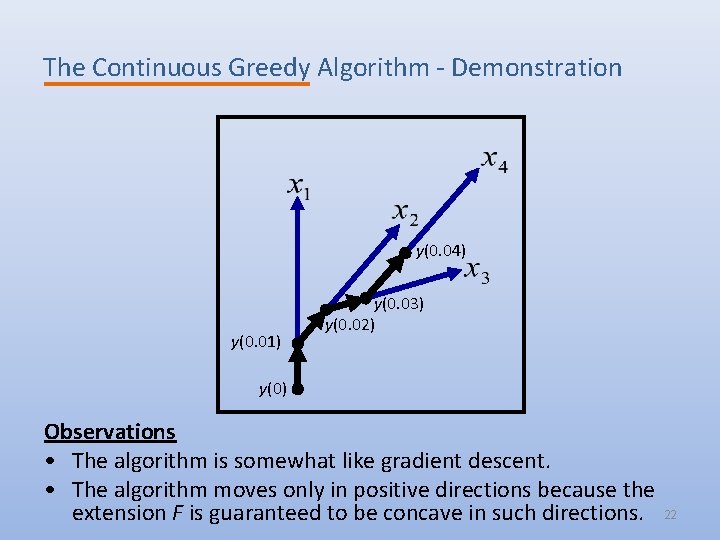

The Continuous Greedy Algorithm - Demonstration
[Figure: the trajectory $y(0), y(0.01), y(0.02), y(0.03), y(0.04)$ of the algorithm.]
Observations
• The algorithm is somewhat like gradient descent.
• The algorithm moves only in positive directions because the extension $F$ is guaranteed to be concave in such directions.

The Continuous Greedy Algorithm - Results
Theorem [Calinescu et al. 11]
• Assuming:
– $f$ is a normalized monotone submodular function.
– $P$ is a solvable polytope (linear functions can be maximized over $P$ efficiently).
• The continuous greedy algorithm gives a $1 - 1/e - o(n^{-1})$ approximation.
• By guessing the element of $OPT$ with the maximal marginal contribution, one can get an optimal $1 - 1/e$ approximation.
Proof Idea
• Show that $w \cdot x$ is large, using $w \cdot x \ge w \cdot OPT$.
• Show that the improvement in each iteration is at least $\delta \cdot w \cdot x$.
• Sum up the improvements over all the iterations.

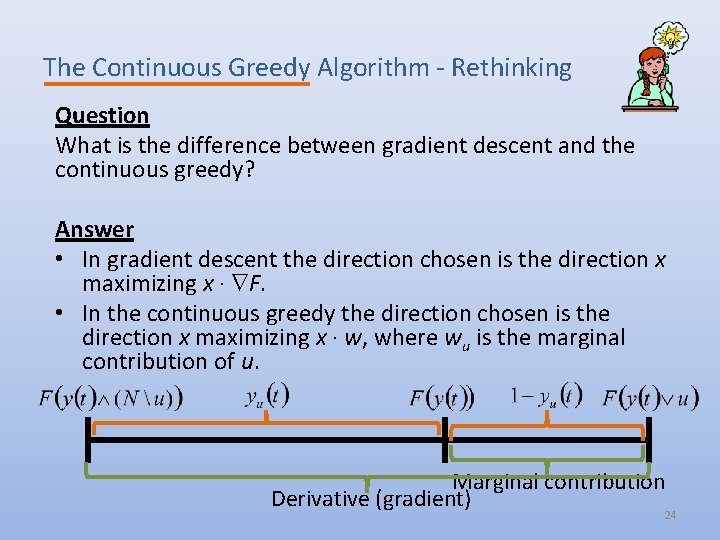

The Continuous Greedy Algorithm - Rethinking
Question
What is the difference between gradient descent and the continuous greedy?
Answer
• In gradient descent the direction chosen is the direction $x$ maximizing $x \cdot \nabla F$.
• In the continuous greedy the direction chosen is the direction $x$ maximizing $x \cdot w$, where $w_u$ is the marginal contribution of $u$.
Marginal contribution vs. derivative (gradient): since $F$ is multilinear, $w_u = (1 - y_u(t)) \cdot \partial F / \partial y_u$.
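
The relation between the two directions can be checked numerically. Because $F$ is linear in each coordinate, $F(y) = y_u \cdot F(y \vee \mathbf{1}_u) + (1 - y_u) \cdot F(y|_{y_u = 0})$, which rearranges to $w_u = (1 - y_u) \cdot \partial F / \partial y_u$. A sketch, assuming exact enumeration of $F$ and a hypothetical test function $f(S) = \min(|S|, 2)$; the helper names are also hypothetical.

```python
from itertools import combinations


def multilinear(f, y):
    """Exact multilinear extension F(y); evaluates the polynomial, so y may leave [0,1] slightly."""
    elements = list(y)
    total = 0.0
    for r in range(len(elements) + 1):
        for combo in combinations(elements, r):
            prob = 1.0
            for u in elements:
                prob *= y[u] if u in combo else 1.0 - y[u]
            total += prob * f(frozenset(combo))
    return total


def marginal_weight(f, y, u):
    """w_u = F(y ∨ 1_u) - F(y), the weight used by the continuous greedy."""
    return multilinear(f, {**y, u: 1.0}) - multilinear(f, y)


def partial_derivative(f, y, u, h=1e-4):
    """Central difference for dF/dy_u; exact up to rounding because F is linear in y_u."""
    up = multilinear(f, {**y, u: y[u] + h})
    down = multilinear(f, {**y, u: y[u] - h})
    return (up - down) / (2 * h)


def f(S):  # hypothetical submodular function
    return min(len(S), 2)


y = {1: 0.3, 2: 0.6, 3: 0.5}
for u in y:
    print(u, round(marginal_weight(f, y, u), 6), round((1 - y[u]) * partial_derivative(f, y, u), 6))
```

The two printed columns coincide, while $w_u$ itself differs from the plain partial derivative whenever $y_u > 0$.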

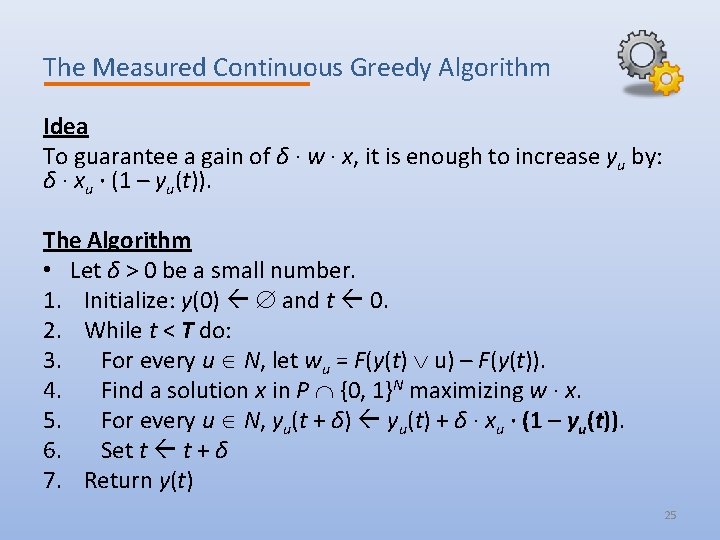

The Measured Continuous Greedy Algorithm
Idea
To guarantee a gain of $\delta \cdot w \cdot x$, it is enough to increase $y_u$ by $\delta \cdot x_u \cdot (1 - y_u(t))$.
The Algorithm
• Let $\delta > 0$ be a small number.
1. Initialize: $y(0) \leftarrow \mathbf{0}$ and $t \leftarrow 0$.
2. While $t < T$ do:
3. For every $u \in N$, let $w_u = F(y(t) \vee \mathbf{1}_u) - F(y(t))$.
4. Find a solution $x$ in $P \cap [0, 1]^N$ maximizing $w \cdot x$.
5. For every $u \in N$, $y_u(t + \delta) \leftarrow y_u(t) + \delta \cdot x_u \cdot (1 - y_u(t))$.
6. Set $t \leftarrow t + \delta$.
7. Return $y(t)$.
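
A toy sketch of the measured variant, under the same kind of assumptions: exact enumeration of $F$, a hypothetical cardinality polytope $P = \{x \in [0,1]^N : \sum_u x_u \le k\}$ (which is down-monotone), and a fixed step $\delta$. Compared with the plain continuous greedy, only the update in step 5 changes. The helper names and the directed-cut instance, which is non-monotone, are hypothetical.

```python
from itertools import combinations


def multilinear(f, y):
    """Exact multilinear extension F(y) by enumerating all subsets (tiny ground sets only)."""
    elements = list(y)
    total = 0.0
    for r in range(len(elements) + 1):
        for combo in combinations(elements, r):
            prob = 1.0
            for u in elements:
                prob *= y[u] if u in combo else 1.0 - y[u]
            total += prob * f(frozenset(combo))
    return total


def best_direction(w, k):
    """Oracle for the assumed down-monotone polytope P = {x in [0,1]^N : sum(x) <= k}.

    Because P is down-monotone, elements with non-positive weight are simply left at 0.
    """
    chosen = sorted((u for u in w if w[u] > 0), key=lambda u: w[u], reverse=True)[:k]
    return {u: 1.0 if u in chosen else 0.0 for u in w}


def measured_continuous_greedy(f, elements, k, T=1.0, delta=0.01):
    y = {u: 0.0 for u in elements}
    for _ in range(round(T / delta)):
        base = multilinear(f, y)
        w = {u: multilinear(f, {**y, u: 1.0}) - base for u in elements}
        x = best_direction(w, k)
        # Measured step: the increase of y_u is damped by the factor (1 - y_u(t)).
        y = {u: y[u] + delta * x[u] * (1.0 - y[u]) for u in elements}
    return y


# Hypothetical non-monotone instance: directed cut of the path 1 -> 2 -> 3 -> 4,
# at most k = 2 elements (optimum value 2, e.g. {1, 3}).
path = [(1, 2), (2, 3), (3, 4)]


def cut(S):
    return sum(1 for a, b in path if a in S and b not in S)


y = measured_continuous_greedy(cut, [1, 2, 3, 4], k=2)
print({u: round(v, 3) for u, v in y.items()}, round(multilinear(cut, y), 3))
```

Each coordinate stays below $1 - (1 - \delta)^{T/\delta} \approx 1 - e^{-T}$, the bound used in the analysis.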

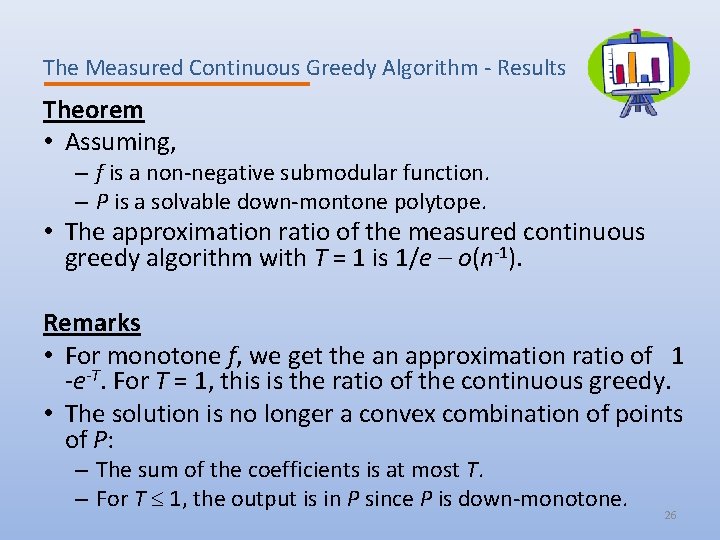

The Measured Continuous Greedy Algorithm - Results
Theorem
• Assuming:
– $f$ is a non-negative submodular function.
– $P$ is a solvable down-monotone polytope.
• The approximation ratio of the measured continuous greedy algorithm with $T = 1$ is $1/e - o(n^{-1})$.
Remarks
• For monotone $f$, we get an approximation ratio of $1 - e^{-T}$. For $T = 1$, this is the ratio of the continuous greedy.
• The solution is no longer a convex combination of points of $P$:
– The sum of the coefficients is at most $T$.
– For $T \le 1$, the output is in $P$ since $P$ is down-monotone.

The Measured Continuous Greedy Algorithm - Analysis
Helper Lemma
Given $x \in [0, 1]^N$ and $\lambda \in [0, 1]$, let $T_\lambda(x)$ be the set containing every element $u \in N$ such that $x_u \ge \lambda$.
• For every $x \in [0, 1]^N$, $F(x) \ge \int_0^1 f(T_\lambda(x)) \, d\lambda$.
Proof
Omitted due to time constraints.
Lemma 1
There is a good direction, i.e., $w \cdot x \ge e^{-t} \cdot f(OPT) - F(y(t))$.
Proof
• Notice that the increase in $y_u(t)$ is at most $\delta \cdot (1 - y_u(t))$.
• If $\delta$ is infinitely small, $y_u(t)$ is upper bounded by the solution of the following differential equation:
$$\frac{dy_u}{dt} = 1 - y_u(t), \qquad y_u(0) = 0$$
• For small $\delta$, $y_u(t)$ is almost upper bounded by $1 - e^{-t}$.

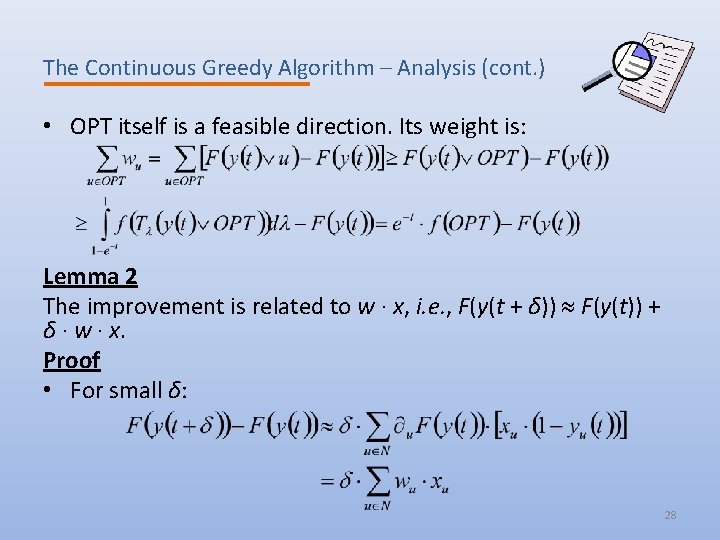

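The Measured Continuous Greedy Algorithm – Analysis (cont.)
• $OPT$ itself is a feasible direction. Its weight is:
$$w \cdot OPT = \sum_{u \in OPT} [F(y(t) \vee \mathbf{1}_u) - F(y(t))] \ge F(y(t) \vee \mathbf{1}_{OPT}) - F(y(t)) \ge e^{-t} \cdot f(OPT) - F(y(t))$$
where the last step applies the helper lemma: since $y_u(t) \lesssim 1 - e^{-t}$, we have $T_\lambda(y(t) \vee \mathbf{1}_{OPT}) = OPT$ for every $\lambda > 1 - e^{-t}$, and $f$ is non-negative.
Lemma 2
The improvement is related to $w \cdot x$, i.e., $F(y(t + \delta)) \ge F(y(t)) + \delta \cdot w \cdot x$.
Proof
• For small $\delta$:
$$F(y(t + \delta)) - F(y(t)) \approx \sum_{u \in N} \delta \cdot x_u \cdot (1 - y_u(t)) \cdot \frac{\partial F}{\partial y_u} = \delta \sum_{u \in N} x_u w_u = \delta \cdot w \cdot x$$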

Proof of the Theorem
• Combining the last two lemmata gives:
$$F(y(t + \delta)) - F(y(t)) \ge \delta \cdot [e^{-t} \cdot f(OPT) - F(y(t))]$$
• Let $g(t) = F(y(t))$. For small $\delta$ the last inequality becomes the differential equation:
$$\frac{dg}{dt} = e^{-t} \cdot f(OPT) - g(t), \qquad g(0) = 0$$
• The solution of this equation is $g(t) = t \cdot e^{-t} \cdot f(OPT)$.
• Hence, at time $t = 1$, the value of the solution is at least $g(1) = e^{-1} \cdot f(OPT)$.
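
A quick numerical sanity check of this step (not part of the original proof): iterating the discrete inequality with equality, i.e. an Euler scheme, should track the closed form $t \cdot e^{-t} \cdot f(OPT)$, and the closed form should satisfy the differential equation. The sketch normalizes $f(OPT) = 1$; the function names are hypothetical.

```python
import math


def euler_lower_bound(opt=1.0, t_end=1.0, delta=1e-3):
    """Iterate g(t + delta) = g(t) + delta * (e^{-t} * opt - g(t)) from g(0) = 0."""
    g, t = 0.0, 0.0
    for _ in range(round(t_end / delta)):
        g += delta * (math.exp(-t) * opt - g)
        t += delta
    return g


def closed_form(t, opt=1.0):
    """Claimed solution g(t) = t * e^{-t} * opt of dg/dt = e^{-t} * opt - g, g(0) = 0."""
    return t * math.exp(-t) * opt


def ode_residual(t, h=1e-5):
    """Central-difference dg/dt minus the right-hand side e^{-t} - g(t); should be ~0."""
    derivative = (closed_form(t + h) - closed_form(t - h)) / (2 * h)
    return derivative - (math.exp(-t) - closed_form(t))


print(euler_lower_bound(), closed_form(1.0), 1 / math.e)
print(max(abs(ode_residual(t)) for t in (0.2, 0.5, 1.0)))
```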

Result for Monotone Functions
• For non-monotone functions, the approximation ratio is $T \cdot e^{-T}$, which is maximized for $T = 1$.
• For monotone functions, the approximation ratio is $1 - e^{-T}$, which improves as $T$ increases.
• In general, the solution produced for $T > 1$:
– Is always within the cube $[0, 1]^N$.
– Might be outside the polytope.
• However, for some polytopes, somewhat larger values of $T$ can be used.

The Submodular Welfare Problem
Instance
• A set $P$ of $n$ players.
• A set $Q$ of $m$ items.
• A normalized monotone submodular utility function $w_j : 2^Q \to \mathbb{R}^+$ for each player $j$.
Objective
• Let $Q_j \subseteq Q$ denote the set of items the $j$th player gets.
• The utility of the $j$th player is $w_j(Q_j)$.
• Distribute the items among the players, maximizing the sum of utilities.

The Polytope
Problem Representation
• Each item is represented by $n$ elements in the ground set (each one corresponding to its assignment to a different player).
• The objective is the sum of the utility functions of the players, each applied to the items allocated to it.
• The polytope requires that every item be allocated to at most one player.
Analyzing a Single Constraint
• All constraints are of the form: $\sum_{i=1}^{n} x_i \le 1$.
• Let $t_i$ be the amount of time in which the algorithm increases $x_i$. Notice that $x_i = 1 - e^{-t_i}$.
• By definition, $\sum_{i=1}^{n} t_i \le T$, and it can be shown that for $T \le -n \cdot \ln(1 - n^{-1})$ it always holds that: $\sum_{i=1}^{n} (1 - e^{-t_i}) \le 1$.

Approximating the Submodular Welfare Problem
• Apply the measured continuous greedy until time $T = -n \cdot \ln(1 - 1/n)$.
• By the previous analysis, the solution produced will be in the polytope.
• The expected value of the solution is at least: $(1 - e^{-T}) \cdot f(OPT) = [1 - (1 - 1/n)^n] \cdot f(OPT)$.
• Round the solution using the natural rounding:
– Assign each item to player $j$ with probability equal to the corresponding variable, independently for different items.
• This approximation ratio is tight for every $n$ [Vondrak 06].
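
A small numeric sketch (helper names hypothetical) of the quantities on this slide and the previous one: the stopping time $T = -n \ln(1 - 1/n)$, the guarantee $1 - e^{-T}$, which equals $1 - (1 - 1/n)^n$, and the load $\sum_i (1 - e^{-t_i})$ on one item's constraint when $T$ is split evenly among its $n$ variables, which is the worst case by concavity of $1 - e^{-t}$.

```python
import math


def welfare_horizon(n):
    """Stopping time T = -n * ln(1 - 1/n) (n >= 2 players)."""
    return -n * math.log(1.0 - 1.0 / n)


def welfare_ratio(n):
    """Monotone guarantee 1 - e^{-T} at that stopping time."""
    return 1.0 - math.exp(-welfare_horizon(n))


def even_split_load(n):
    """Sum of x_i = 1 - e^{-t_i} over one constraint when t_i = T / n for every i."""
    return n * (1.0 - math.exp(-welfare_horizon(n) / n))


for n in (2, 3, 10, 100):
    print(n, round(welfare_horizon(n), 4), round(welfare_ratio(n), 4),
          round(1 - (1 - 1 / n) ** n, 4), round(even_split_load(n), 6))
```

The ratio decreases towards $1 - 1/e$ as $n$ grows, and the even-split load is exactly 1 (up to rounding), so the constraint is never violated.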

Open Problems
• The measured continuous greedy algorithm:
– Provides a tight approximation for monotone functions [Nemhauser and Wolsey 78].
– Is this also the case for non-monotone functions?
– The current approximation ratio of $e^{-1}$ is a natural-looking constant.
• Uniform Matroids
– The approximability depends on $k/n$.
• For $k/n = 0.5$, we have a 0.5 approximation.
• Recently, a hardness of a bit less than 0.5 was shown for $k/n$ approaching 0 [Gharan and Vondrak 11].
– What is the correct approximation ratio as a function of $k/n$?
– We know it is always possible to beat the $e^{-1}$ ratio.